Abstract

Introduction

The growing presence of artificial intelligence (AI) in health professions has created a need to investigate its potential benefits and challenges in medical education. This article presents findings from an AI learner training needs analysis survey at a U.S. medical school. It compares faculty and student experiences and perspectives on using generative AI (GAI) and other AI tools for undergraduate medical education, focusing on their respective knowledge and learning preferences.

Methods

Faculty and students were surveyed using an online cross-sectional survey design to assess their GAI experience, AI patterns of use, adoption readiness, and training preferences. Surveys contained 14 to 15 multiple-choice items, with 8 items including a write-in option. A total of 68 faculty and 506 students responded to the survey, with a 50% response rate for faculty and 30% for students. Statistical tests were used to determine whether students and faculty differed significantly in their GAI experience.

Results

We found that students were significantly more familiar with GAI than faculty (P < .001) but not significantly more experienced with GAI tools. There were no significant differences in frequency of use. Both groups considered AI tools and technology useful for personal, academic, research, and clinical applications. More than half of both groups were using AI for academic tasks. Both groups expressed concerns about the reliability of AI output, with faculty showing a much greater level of concern. Both groups identified several training formats as beneficial, with faculty preferring formal training (either online or in-person), followed by peer tutorials and self-study. On the other hand, students showed slightly greater interest in self-study than other formats.

Conclusion

Our findings will inform the design of two parallel structured AI training programs, focusing on faculty and student priorities, including hands-on skills practice, and emphasizing AI's ethical use, reliability, and limitations.

Introduction

AI is a rapidly expanding field with profound implications for medical education, clinical practice, and scientific research.1-3 In 2023, GAI tools such as ChatGPT suddenly became widely accessible to the public, quickly gaining popularity in higher education, medical education, and healthcare delivery.4-6 The adoption of GAI technologies is expected to significantly transform medical education, creating a “call to action” for the medical education community to acquire resources and implement plans to navigate these changes effectively.7 The disruptive impact of GAI tools such as ChatGPT, DALL-E, Perplexity, Gemini, and Copilot has invigorated the field of technology-enhanced learning.8,9 Additionally, AI technology applications designed for clinical diagnosis, research, and predictive analysis are emerging rapidly.10,11 However, little was known about the experience of medical school faculty and students with these tools or how they used them.

This study was designed to understand the current state, challenges, and attitudes associated with AI at 2 campuses of the New York Institute of Technology College of Osteopathic Medicine (NYITCOM): Old Westbury, NY, and Jonesboro, AR. In November 2023, the medical school established an AI Task Force (AITF) and requested a training needs assessment survey to better understand medical school faculty and student needs. The aim was to collect information to develop tailored AI training programs for faculty and students 12 and to improve the functional use of AI and GAI tools and technology to better prepare future medical professionals for an increasingly AI-integrated healthcare environment.

The primary research question was: “How and to what extent do faculty and students differ in their AI experience, patterns of use, adoption readiness, and training preferences?” The survey design compares perspectives from both faculty and students. Doing so allows us to understand the views of 2 distinct generational cohorts bound in a high-intensity, high-stakes teaching-learning paradigm.

By comparing student and faculty perspectives, this study aims to develop a curriculum that leverages the insights of stakeholder groups within the framework of standard competencies suggested by experts. This approach ensures that the curriculum includes the knowledge, skills, and abilities suggested by AI/GAI competencies, but also aligns with the practical expectations and real-world applications of its learners, maintaining relevance in the swiftly evolving field of AI in health professions education.

Methods

The Development of a Needs Assessment Survey

The first step in designing an effective training program is to assess learners' knowledge base, user experience, and learning preferences.13 The research team selected a survey design for the project based on this objective. Several factors precluded using an existing needs assessment survey. Literature searches located 7 survey research studies focusing on student or faculty AI perspectives14–20; only 3 published AI training needs assessment studies were conducted in a U.S. medical school.15,19,21 The reporting of this study conforms to the Defined Criteria to Report INnovations in Education.22 Additional information about the survey literature review is provided in the Supplemental File, Section 1.

A Review of Literature on AI Competencies

In traditional curriculum design, seasoned academics typically guide the development of objectives and content. While a thorough analysis of the AI competency literature is outside the scope of this article, a literature search revealed 8 publications regarding AI competencies for students and 2 for faculty. For medical students, experts suggest beginning with foundational AI literacy, including essential concepts (without delving into technical specifics), such as AI terminology and healthcare implications.23–27 Other critical areas include understanding ethics,23,24,26 evaluating AI outputs,23 ensuring data privacy,28,29 utilizing AI tools,30 applying AI in healthcare,24–26 analyzing workflows,24 and AI creation skills.30 Niño et al27 emphasize defining AI values and goals before beginning these activities, as well as medical science student study skills. Regarding instructional design, various strategies are recommended, including lectures, hands-on activities, learning communities, and dual pathways in computer science or AI.23,28–30

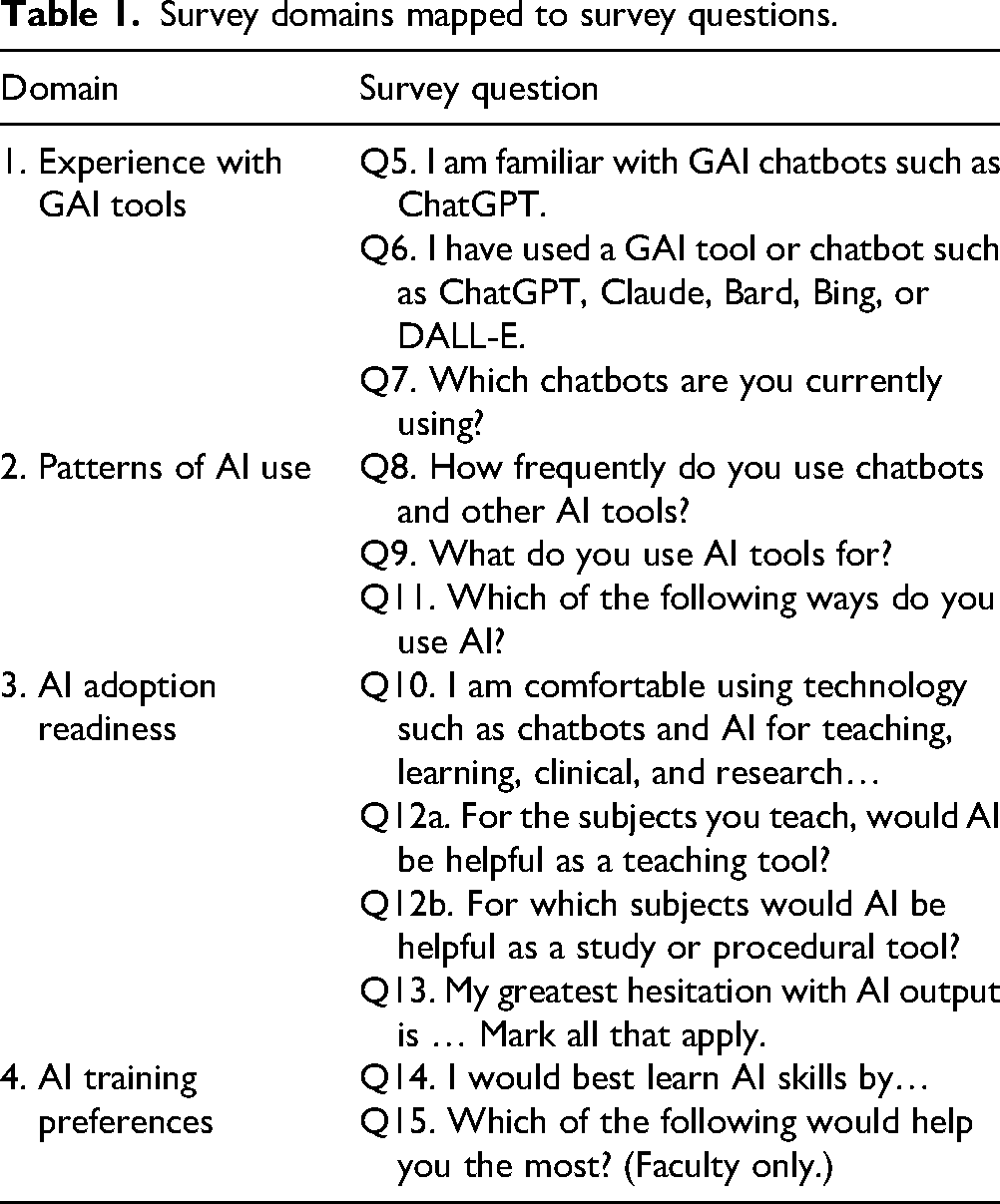

Following these literature reviews, we designed a survey in the style of a learner analysis following user experience design principles31 to capture input on stakeholder attitudes, experiences, and readiness levels regarding AI. It is important to note that this initial form of needs assessment, a learner analysis, did not aim to diagnose participants’ current AI competencies. Researchers LM and DN assisted with the initial research design. Researchers LM and MS designed 2 survey forms to compare faculty and student perceptions: (1) Medical Faculty AI Experience Survey 2024: 15 items, and (2) Medical Student AI Experience Survey 2024: 14 items. The instruments were revised using a Delphi process with the AITF and research team over 8 iterations, from which 4 domains emerged (Table 1).

Table 1. Survey domains mapped to survey questions.

Finally, VR, DN, and MS pilot-tested both forms to ensure they functioned effectively before electronic distribution. Additional discussion about the development of the survey domains is provided in the Supplemental File, Section 1.

Participants

The survey sample included all NYITCOM faculty and medical students from both campuses, a total of 1831 potential participants:

1696 medical students, all 4 levels: MS1, MS2, MS3, MS4.

135 faculty from both campuses (including 2 staff in administrative roles).

The reported response rates for each group—faculty and students—were calculated by dividing the number of completed surveys by the total sample population. Specifically, 68 faculty members and 506 students participated, resulting in 50% and 30% response rates, respectively.
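As a worked check of the calculation described above, using only the counts reported in this section (the rounding to one decimal place is ours, for illustration):

```latex
\[
\text{response rate} = \frac{\text{completed surveys}}{\text{total sample population}} \times 100\%
\]
\[
\text{Faculty: } \frac{68}{135} \times 100\% \approx 50.4\%,
\qquad
\text{Students: } \frac{506}{1696} \times 100\% \approx 29.8\%
\]
```

Rounded to whole percentages, these agree with the 50% and 30% response rates reported.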

Data Collection

To ensure data saturation, prior to distributing the survey, the research team set target response rates of 30% for students and >40% for the faculty group. These targets were met. Data collection took place from February through April 2024. The research team sent email invitations to all faculty and students in each email group. The invitations explained the survey's purpose, brief completion time, minimal risk to the participants, anonymous aggregated data reporting plan, and IRB exemption, provided by the NYITCOM IRB on January 16, 2024 (protocol No. IRB-2024-15). The email letters provided a direct access link to the electronic survey. Brief email reminders were sent 3 times over 12 weeks to all eligible participants, and the survey was announced during 2 courses. No participation incentives were offered. Survey responses were received and stored securely on Qualtrics servers. Additional details about the survey distribution are provided in the Supplemental File, Section 2.

Data Analysis

Author NG performed the statistical analysis with GraphPad and analyzed the results based on the completed survey responses. For survey questions Q5, Q6, Q8, and Q10, similar to Likert scale items, t-tests were used to assess the statistical significance of differences between group means. This method assumes a normal data distribution and is appropriate given the large sample size and normality test results, allowing for reliable group comparisons. Additional details about statistical analyses are provided in the Supplemental File, Section 3. DM and DN served as editors on the manuscript and the survey instrument.
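To illustrate the kind of group-mean comparison described above, the following is a minimal sketch of a two-sample t statistic for Likert-coded responses. It is not the authors' analysis, which was run in GraphPad. The choice of Welch's unequal-variance form is an assumption (the text does not state which t-test variant was used), the function name `welch_t` is hypothetical, and the response values are invented for illustration only. The sketch stops at the t statistic and degrees of freedom, since the Python standard library does not provide the t distribution needed for a P value.

```python
import math
from statistics import mean, variance


def welch_t(a, b):
    """Return (t, df) for Welch's unequal-variance two-sample t-test.

    Assumption: Welch's form is used here for illustration; the source
    does not specify which t-test variant was applied.
    """
    na, nb = len(a), len(b)
    # Squared standard error of each group mean (sample variance / n).
    se2_a = variance(a) / na
    se2_b = variance(b) / nb
    t = (mean(a) - mean(b)) / math.sqrt(se2_a + se2_b)
    # Welch-Satterthwaite approximation for the degrees of freedom.
    df = (se2_a + se2_b) ** 2 / (se2_a ** 2 / (na - 1) + se2_b ** 2 / (nb - 1))
    return t, df


# Hypothetical responses coded 1 (strongly disagree) to 5 (strongly agree).
# These values are NOT survey data from the study.
faculty = [2, 3, 3, 4, 4, 5, 3, 2]
students = [4, 4, 5, 3, 5, 4, 4, 5]

t, df = welch_t(students, faculty)
print(f"t = {t:.2f}, df = {df:.1f}")
```

A positive t here means the first group (students) has the higher mean. A 2-tailed P value would then be read from the t distribution with the computed degrees of freedom, for example in a statistics package.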

The sample captured a comprehensive perspective, including participants from all student levels and all faculty categories. Data saturation was achieved since there were no major changes in overall trends toward the end of data collection. The majority of survey responses were quantitative. Qualitative responses were analyzed to identify underlying themes. 32

The study's primary aim was to assess the AI/GAI training needs of undergraduate medical education faculty and students, focusing more on academic tasks for years 1 and 2. This study included a learner analysis and a review of the literature to consider the first stage of AI skills acquisition: fundamentals of AI/GAI, basic tools, policies, and best practices. For faculty, we were not focused on training them to teach the subject of AI.

Results

The results section includes 5 parts: (1) survey sample demographics, (2) GAI tool experience, (3) AI use patterns, (4) AI adoption readiness, and (5) AI training preferences.

Demographics

Faculty Demographics (Table 1a, Supplemental File, Section 4). The faculty survey received 68 responses (including 2 from staff with administrative roles), for a 50% response rate. The majority of faculty (64%) were above age 50 and male (55%). Faculty respondents were 40% basic science, 35% administration, 35% clinical, and 12% course leadership.

Student Respondent Demographics (Table 1b, Supplemental File, Section 4). The student survey received 506 responses from a sample of 1696, for a 30% response rate. The student response sample was distributed by academic cohort as follows:

MS1, cohort of 437, 329 responses; 75% response rate.

MS2, cohort of 393, 125 responses; 32% response rate.

MS3, cohort of 402, 21 responses; 5% response rate.

MS4, cohort of 428, 31 responses; 7% response rate.

MS1, off-cycle enrichment, cohort of 36, 0 responses; 0% response rate.

Nearly all students (91%) were 20 to 30 years of age, and 53% of the respondents identified as female. Most respondents (90%) were MS1 or MS2, 65% and 25%, respectively. Two MS3 respondents identified themselves as academic medical scholars taking an extra year to complete specific goals toward becoming medical educators.
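The cohort figures above can be cross-checked with a short script. This is a minimal sketch that uses only the cohort sizes and response counts reported in this section. The variable names and the helper `pct` are illustrative, and rounding to whole percentages is an assumption about how the reported values were derived.

```python
# Cohort sizes and completed responses as reported in the text:
# name -> (cohort size, number of responses)
cohorts = {
    "MS1": (437, 329),
    "MS2": (393, 125),
    "MS3": (402, 21),
    "MS4": (428, 31),
    "MS1 off-cycle enrichment": (36, 0),
}

total_invited = sum(size for size, _ in cohorts.values())
total_responses = sum(resp for _, resp in cohorts.values())


def pct(part, whole):
    """Percentage of `whole` represented by `part`, rounded to a whole number."""
    return round(100 * part / whole)


# Per-cohort response rate and each cohort's share of all student respondents.
summary = {
    name: {
        "response_rate": pct(resp, size),
        "share_of_respondents": pct(resp, total_responses),
    }
    for name, (size, resp) in cohorts.items()
}

for name, row in summary.items():
    print(f"{name}: rate {row['response_rate']}%, share {row['share_of_respondents']}%")
print(f"Total: {total_responses} of {total_invited} students")
```

The totals reproduce the 1696-student sample and the 506 responses, and the MS1 and MS2 shares reproduce the 65% and 25% figures.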

Domain 1: Experience With GAI Tools

The domain Experience with GAI Tools includes 3 themes: familiarity with GAI chatbots, use of GAI chatbots, and a listing of specific GAI chatbots used.

“I am familiar with GAI chatbots such as ChatGPT” (Figure 1). Student responses demonstrated greater familiarity with GAI tools than faculty (P < .001). Combining agree and strongly agree, 76% of students were familiar versus 57% of faculty.

Figure 1. Familiarity with GAI Chatbots.

“I have used a GAI tool or chatbot…” (Figure 2a). Roughly three-fifths of both groups agreed or strongly agreed that they had used GAI tools (faculty 62% and students 59%). A 2-tailed t-test indicated that there was no significant difference between groups.

Figure 2. (a) Prior GAI tool use. (b) Specific GAI tools.

“Which chatbots or GAI tools are you currently using? Mark all that apply” (Figure 2b). The most popular was ChatGPT by OpenAI (faculty 60%; students 49%). The second most popular was Copilot by Microsoft (faculty 21%; students 6%). A small percentage of respondents had tried Gemini by Google (faculty 11%; students 3%). Claude.ai by Anthropic was the least used (faculty 6%; students 2%). Many indicated they did not use GAI tools (faculty 29%; students 38%).

“Other” responses for “Which chatbots or GAI tools…” (Table 2, Supplemental File, Section 5). Two students and 2 faculty listed the image generator DALL-E and Perplexity.ai. Several other AI tools were listed by both groups, such as NotebookLM and You.ai. Some respondents from both groups reported using custom bots, tools specific for research, or “neural networks.”

Student “Other” Responses (Table 2). An additional 5% of students wrote in comments, categorized into 4 areas: study, communication, image generation/entertainment, and medical reasoning.

Five comments from faculty were recorded, including “just getting started with [AI],” “for image generation,” “seems to be too early in its developmental stages,” “for clinical cases,” and “not sure about copyright infringement.”

Table 2. Student AI usage for academics: Other responses.

*Sketchy is a microbiology study aid using pictures for mnemonics.

Domain 2: AI Patterns of Use

The domain AI Patterns of Use includes 3 themes: frequency of use, categories of use, such as “personal” or “academic,” and whether respondents used AI for specific academic tasks related to teaching, learning, or research.

“How frequently do you use chatbots and other AI tools?” (Figure 3a). Most respondents indicated they never or rarely used them (faculty 60%; students 77%). Among those who did use chatbots or other AI tools, nearly a quarter of the faculty (23%) used AI tools weekly, about 2.5 times the rate of students (9%). A subgroup of early adopters used AI tools at least several times a week or daily (faculty 17%; students 14%).

Figure 3. (a) Frequency of use by role. (b) AI usage by category.

“What do you use AI tools for? Mark all that apply” (Figure 3b). AI usage was grouped into 5 categories: personal, academic, research, clinical training, and clinical work with patients. Faculty reported higher personal use of AI (46%) than students (37%). Both groups used AI for academic medical education (faculty 37%; students 24%), but a greater percentage of faculty used AI for research than students (faculty 28%; students 12%). A higher percentage of students reported not using AI tools (faculty 37%; students 24%).

“Which of the following ways do you use AI? Mark all that apply” (Figures 1 and 2, Supplemental File, Section 5). More than half of both groups had used AI for academic tasks listed (faculty 56%; students 77%). AI was used for generating assessment questions (faculty 30%), drafting case scenarios (faculty 22%), editing papers (faculty 19%; students 13%), and research plans (faculty 17%; students 9%). Faculty also used AI for scientific research (9%) and lesson plans (8%). Students used AI to take notes or create study guides (6%) and practice patient encounters (3%).

Domain 3: Adoption Readiness

The domain AI Adoption Readiness includes 3 themes: comfort level with AI technology in education, AI's potential helpfulness as a teaching tool, and concerns about AI output.

“I am comfortable using technology such as chatbots and AI for teaching, learning, clinical, and research purposes” (Figure 3, Supplemental File, Section 5). Combining the responses for “strongly agree” and “agree,” more students (42%) were comfortable using AI than faculty (34%). A 2-tailed t-test indicated that there was no significant difference between groups.

Faculty and student perception: Utility of AI for various subjects (Table 3, Supplemental File, Section 5). When asked, “For the subjects you teach, would AI be helpful as a teaching tool? Mark all that apply,” faculty acknowledged AI's benefit for several common medical education subjects, with clinical medicine (34%), physiology (29%), and anatomy (22%) the most frequent choices. When asked, “For which subjects would AI be helpful as a study or procedural tool? Select all that apply,” students reported AI as beneficial to the entire range of choices, with pharmacology and biochemistry ranking the highest, 14% and 12%, respectively. Faculty and student write-in responses are provided in the Supplemental File, Tables 4 and 5, Section 5, with several responses indicating uncertainty regarding subjects for which AI would be beneficial.

Table 3. Student Write-in Comments for Figure 4: Hesitations About AI Output.

“My Greatest Hesitation with AI Output is … Mark all that apply” (Figure 4). Faculty registered more concern than students in all categories.

Figure 4. Faculty and student hesitations with AI output.

Student write-in comments: Hesitations about AI output (Table 3). Student write-in concerns focused on GAI and were grouped into 4 categories: Reliability, Over-Reliance, Societal Impacts, and Too Much Work.

Domain 4: AI Training Preferences

The domain AI training preferences includes 2 themes: faculty and student AI training preferences and faculty resource priorities.

“I would best learn AI skills by … Mark all that apply” (Figure 5). In terms of training, student responses were fairly evenly spread among most options, with self-study being rated highest (29%). Faculty rated formal online training the highest (59%) but indicated they would best learn AI from most of the key modalities.

Figure 5. Faculty and student training preferences.

“Which of the following would help you the most? Select all that apply” (Table 4: Faculty Resource Priorities). In order of preference, the 3 most popular items were “hands-on training with AI skills” (61%), the development of guidelines for academic integrity and use of AI (48%), and a dedicated, protected faculty development day to learn AI.

Table 4. Faculty resource priorities.

Discussion

Our training needs analysis incorporated both a literature review and a learner analysis. The literature review highlighted the dynamic and evolving nature of the field, emphasizing the importance of an AI training program grounded in recommended AI competencies and tailored to participant experiences and preferences. To complement this, we conducted an electronic survey to assess medical school faculty and students’ AI experience, usage patterns, and attitudes. This 2-phase approach yielded several valuable insights to guide the development of our training program.

Demographics

This study compared AI familiarity and usage between faculty and students. Most students’ responses (90%) were from MS1 and MS2, due to the MS3 and MS4 students completing off-campus clinical placements. In terms of age, as expected, faculty were comparatively older than students; most were from Baby Boomer and Millennial generations that were trained using a different set of non-AI technologies than the current Gen Z medical students. 33

Experience With GAI Tools

Students were significantly more familiar with GAI tools than faculty. Combining “agree” and “strongly agree,” 76% of students were familiar with GAI tools versus 57% of faculty (Figure 1). Our study found that 15% of our faculty strongly agreed they were familiar with AI. It is challenging to find comparable data; however, a 2024 Elsevier study of 3000 clinicians and researchers reported that 11% were very familiar with AI. 34 Although students self-assessed as more familiar with GAI tools (Figure 1), this may indicate more of an awareness than expertise: slightly more faculty reported having used GAI tools (faculty 62%; students 59%; Figure 2a). Furthermore, having some experience with a few AI tools does not necessarily indicate responsible use, nor does it imply students or faculty can appropriately apply AI in clinical practice.

Faculty, often from Baby Boomer or Generation X cohorts, typically have less technology exposure than Generation Z students, who are more adept with digital technologies. 35 This divide has substantial implications for both curriculum design and faculty development.

Key Strategies

Faculty development: Tailor training to enhance comfort with AI, starting with basic skills and gradually introducing more complex applications. This approach can reduce resistance and increase engagement among older faculty.

Student expectations: Utilize Generation Z's familiarity with digital tools to introduce AI-enhanced learning that meets their high expectations, focusing on personalized and ethical use of AI.

Intergenerational learning: Leverage the diverse strengths of each generation, encouraging collaborations that blend traditional expertise with innovative digital insights.

AI Patterns of Use

Our findings suggest that for both groups, the intensity of use followed a bell curve, with lower percentages at the “nonuse” and “frequent use” ends. This pattern is similar to that described by Rogers’ Innovation Adoption Curve.36 Both groups used AI to some degree for personal, teaching, and research purposes, but far fewer used it in clinical settings with patients (2%). When asked how they used AI for teaching or learning, 56% of faculty and 70% of students had used AI for the specific teaching/learning tasks listed, highlighting both the potential use of AI for these tasks and the need for further training. This finding is consistent with numerous studies highlighting various applications of AI in medical education.2,3,37

The “bell curve” distribution of AI use among faculty and students underscores the need for a curriculum that addresses varying levels of AI familiarity. To effectively meet the needs of all learners, the AI/GAI training curriculum cannot adopt a one-size-fits-all approach but must tailor its content and complexity to match the diverse starting points of its audience.

Strategic Curriculum Design

Tiered learning: Develop introductory, intermediate, and advanced modules to cater to users from novices to experienced practitioners. This ensures that each participant engages with material that matches their skill level.

Flexible pathways: Offer flexible learning paths that allow learners to choose content that best fits their current competence and progress at their own pace.

Incremental learning: Gradually increase the complexity of the material to build a solid foundation, ensuring that learners are adequately prepared for more advanced topics.

Hands-on applications: Incorporate practical activities that allow learners to apply AI concepts in real-world scenarios, enhancing the practicality of the training.

Supportive resources: Provide robust support through Supplemental Materials and forums to assist less experienced users and foster a learning community.

AI Adoption Readiness

Students reported being more comfortable with AI, but not significantly so. Both faculty and students listed almost all medical school subjects as useful for teaching or learning with AI. The faculty identified AI's potential for teaching clinical medicine, physiology, and anatomy. Since most student respondents were from MS1 and MS2, it is not surprising they identified pharmacology and biochemistry as subjects where AI could augment their learning. Our study also identified substantial concerns among faculty and medical students regarding the ethical implications of AI, critical thinking, privacy, and data integrity. Several publications corroborate the crucial nature of addressing these concerns.8,21,38,39

Training Preferences

The results suggest that faculty and student groups preferred slightly different training approaches. Faculty training programs should prioritize both formal training and hands-on experiences to support AI skill development, as related to their faculty roles, such as research or clinical practice. Faculty indicated that responsible use guidelines and a protected AI training day would help them the most. The design of a student AI training program could include a variety of learning modalities, including engaging self-paced learning modules such as prompt libraries, interactive tutorials, video lectures, and interactive case studies that emphasize AI tools and methods for study and exam preparation.

Limitations

Generalizability. This study was conducted at a single medical school, so the results are not easily generalizable. However, the results may serve as an illustrative example for other medical schools that hope to begin an AI training program designed for a “cooperative” faculty-student-AI-patient culture, allowing faculty and students to simultaneously improve their AI knowledge and skills.

Our study contributes to the literature by carefully describing the process of conducting this AI Needs Assessment and demonstrating several ways to analyze and present the survey data. The Supplemental File provides detailed information that could be used to replicate this study.

Most student responses (90%) were from MS1 and MS2. Fewer MS3 and MS4 participated because they were off-campus completing clinical rotations. Further, several GAI tools rapidly evolved during the 3 months of the survey data collection, coinciding with a period of user experimentation with AI technologies at the medical school. Finally, this study could have captured more of our rich conversations with faculty about AI for teaching and research work.

We did not perform an a priori power analysis to determine the optimal sample size for this study. The sampling design for our study was guided by the practical limitations inherent in distributing a voluntary survey within our medical education program. The survey was made available to all faculty and students at both campus sites. Future research should include a power analysis to ensure that the sample size is sufficient to detect significant effects.

In interpreting the survey results, especially for the question “What do you use AI tools for?”, it is important to recognize the differing implications for faculty and students. Faculty members often use AI/GAI for tasks such as creating curriculum materials, grading, or data interpretation, while students might use AI for generating assignments or study assistance. These usage distinctions are crucial for understanding AI's role within our institution and are considered in our analysis. For several survey items, tables were created for only either faculty or students.

Additionally, the relevance of certain options, like clinical interaction, varies between and within groups based on their clinical roles and exposure. Many basic science faculty members are not clinically active, which may affect their understanding of AI/GAI use in patient care responsibilities of medical students during clinical rotations.

Our study uses these data points as a starting layer for understanding engagement with AI, not as a definitive assessment of competence or readiness for clinical applications. We acknowledge the limitations in directly correlating current usage with appropriate or responsible use. Simply because AI tools are being utilized does not inherently imply they are being used effectively or ethically, nor does it guarantee that students are prepared to extend this use into clinical practice.

Sustainability and Future Directions

Using insights from the survey results and the AI competencies literature, the AI Task Force designed a training resource center entitled AI for Academics and launched a series of faculty workshops based on both expert advice and insights from our survey results. This faculty training program began with an AI orientation, guided experimentation with large language models, and AI limitations and ethical considerations. Additional sessions included AI for assessment, discussions about AI values and faculty hesitations about using AI, and how students study with AI. Protected time for learning AI skills was emphasized. Additional training will be customized to address AI/GAI skills for teaching, research, and clinical applications, such as imaging, evaluating AI output, and AI in therapeutics. To test AI for clinical settings, the medical school supported the trial use of specific AI tools designed for clinical environments, such as Clinicalkey.ai. 40 Therefore, since the time of the survey, respondents’ current understanding and/or usage of AI may have improved compared to survey outcomes.

For medical students, particularly those in the initial 2 years of medical school, we propose a training progression that begins with AI orientation and advances through ethics, tools, study skills, and research skills, and finally introduces AI applications in clinical settings.

Conclusion

This article presents the AI landscape at a single U.S. medical school at a specific time, exploring the dynamic process of adapting to AI technology, primarily in the first 2 years of curriculum. This study was designed to compare faculty and student responses to develop a training approach that would benefit both groups and gradually contribute to parallel and collaborative AI skill acquisition. With respect to our initial question about whether faculty were far behind the students in their AI experience and awareness, the answer was “no.” Most faculty and students have tried GAI or AI tools. Both groups had a substantial amount of concern about the validity of AI output, with faculty registering considerably more concern. We endeavored to stay as close to the ground as possible, seeking the real-world experience of stakeholders by conducting a learner analysis as well as a review of the AI competency literature for both faculty and students. We plan to use this study to create effective and differentiated AI training programs for faculty and medical students that address their underlying concerns about reliability, value, and effectiveness.

Supplemental Material

sj-docx-1-mde-10.1177_23821205251339226 - Supplemental material for A Training Needs Analysis for AI and Generative AI in Medical Education: Perspectives of Faculty and Students

Supplemental material, sj-docx-1-mde-10.1177_23821205251339226 for A Training Needs Analysis for AI and Generative AI in Medical Education: Perspectives of Faculty and Students by Lise McCoy, Natarajan Ganesan, Viswanathan Rajagopalan and Douglas McKell, Diego F. Niño, Mary Claire Swaim in Journal of Medical Education and Curricular Development

Supplemental Material

sj-docx-2-mde-10.1177_23821205251339226 - Supplemental material for A Training Needs Analysis for AI and Generative AI in Medical Education: Perspectives of Faculty and Students

Supplemental material, sj-docx-2-mde-10.1177_23821205251339226 for A Training Needs Analysis for AI and Generative AI in Medical Education: Perspectives of Faculty and Students by Lise McCoy, Natarajan Ganesan, Viswanathan Rajagopalan and Douglas McKell, Diego F. Niño, Mary Claire Swaim in Journal of Medical Education and Curricular Development

Footnotes

Acknowledgements

The NYITCOM AITF supported this survey research project.

Ethical Considerations

The Institutional Review Board at NYITCOM approved our study (Approval No.: IRB-2024-15) on January 16, 2024.

Consent to Participate

Participation was voluntary. Consent was voluntary. The survey invitation letters explained the survey's purpose, brief completion time, minimal risk to the participants, anonymous aggregated data reporting plan, and IRB exemption.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interest

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

We appreciate the journal's interest in our study and the value of data transparency in academic research. However, we must respectfully decline the request to share the Qualtrics survey data files associated with our research. The data collected includes sensitive responses from both students and faculty members, containing potentially identifiable information, and sharing these data was not part of the research plan submitted to the IRB.

Supplemental Material

Supplemental material for this article is available online.
