Abstract

BACKGROUND

While bedside teaching offers invaluable clinical experience, its availability is limited. Challenges such as a shortage of clinical placements and qualified teaching physicians, coupled with increasing medical student numbers, exacerbate this issue. Simulation-based learning encompasses varied educational values and has the potential to serve as an important tool in medical students’ education.

OBJECTIVES

In this study, we evaluate a new Clinical Decision Making Integrated Digital Simulation (CDMIDS) method that was developed in order to enhance the clinical decision-making competency and self-confidence of medical students early in their clinical training through practicing fundamental core skills.

METHODS

The study compares the pre- and postsimulation self-assessment questionnaire responses of 108 4th-year medical students who practiced a new clinical decision-making simulation methodology.

RESULTS

Results indicate a positive participant experience, with the simulation perceived as a valuable platform for practicing integrated bedside decision making. Notably, participants demonstrated a statistically significant increase in willingness to make clinical decisions. The simulation contributed to enhanced knowledge, professional skills, and self-confidence in clinical decision making.

CONCLUSION

The use of a CDMIDS method integrates clinical decision making as part of early medical school curriculum. Moreover, the method boosts learners’ professional confidence, self-directed learning, and additional experiences. The method is flexible and can be applied in any medical school, especially those with limited resources, by making specific, localized modifications.

Introduction

Traditional bedside teaching, while valuable, limits medical students’ exposure to diverse clinical cases and struggles to address the broader skill set required in modern healthcare.1,2 Growing student numbers and resource constraints necessitate innovative educational approaches to equip medical students with the competencies needed for complex patient care.3,4

Active learning methodologies, including simulation, have emerged as powerful tools in medical education.5 Simulation offers a versatile platform for developing essential clinical competencies, from critical thinking and problem solving to communication and teamwork.6,7 By providing immersive, repeatable, and personalized learning experiences, simulation can enhance student engagement and confidence.8 While the potential of simulation is substantial, its effective integration requires a structured approach grounded in educational theory to maximize its impact on both learner outcomes and patient care.9 Additional challenges include obtaining the dedicated resources necessary and technical difficulties, as well as the high cost of advanced simulation equipment, which can limit access and potentially exacerbate educational disparities.9,10

A decline in student engagement, evidenced by diminished systematic problem-solving abilities during nonmandatory oral examinations in the clinical years, prompted a reassessment of our medical education curriculum.11 These observations highlighted an important gap in medical students’ preparedness for the complexities of clinical practice.12 Recent trends, following the COVID-19 pandemic, along with broader medical educational reforms, have led to increased integration of clinical decision making into medical school curricula.13–16

The process of clinical decision making can be described through various decision-making models. The 2 primary models are analytical thinking and intuitive thinking. Intuitive thinking relies on pattern recognition, drawing on past experiences to quickly find similarities between the current situation and previous ones. In contrast, analytical thinking is a more systematic, deliberate approach, utilizing logic and reasoning to solve problems. Medical students, who are primarily trained in analytical thinking, depend on this method until they gain enough experience to develop their intuitive thinking skills.17 Advancements in simulation technology enable us to directly teach cognitive processes, such as clinical decision making, and to tailor them to the basic clinical skill levels of undergraduate medical students.18–20

To enhance both foundational clinical skills (such as medical knowledge, physical examination, differential diagnosis, and treatment planning), and higher-order competencies (such as clinical decision-making, interpersonal and interprofessional communication, and ethical reasoning), we developed the Clinical Decision Making Integrated Digital Simulation (CDMIDS)—an easy-to-deploy, low-cost simulation training program.21–23

This study presents this new training program and its evaluation. We assessed its impact on student self-assessment, learning outcomes, critical thinking skills, and clinical confidence. The study focused on the effectiveness of a resource-efficient simulation methodology that involved all medical students in clinical decision-making case studies.

Methods

Setting and participants

This prospective cohort simulation-based study was conducted at Ben-Gurion University of the Negev (BGU) in Beer Sheva, Israel, within the School of Medicine and in collaboration with Soroka University Medical Center and SimReC, the Research Center for Simulation in Healthcare. The CDMIDS training program was first implemented in the 4th year of our 6-year medical program, coinciding with the initiation of formal clinical training. Fourth-year medical students are required to undertake 2 primary clerkships—internal medicine and pediatrics—each lasting approximately 10 weeks. The study spanned 4 months over 2 distinct periods, following the completion of Internal Medicine clerkships during the 2nd semester. To facilitate the study, the class was divided into 2 groups with varying core rotations. These clerkships encompass the core medical competencies outlined in the training curriculum.

Program description and development

The simulation we constructed was based on clinical cases from internal inpatient departments. Of the 10 topics provided at the outset of the clerkship, 2 were randomly selected for simulation practice, which only took place during the final third of the clerkship. These topics were edited and structured by experienced practitioners to be presented in digital format for case-based simulation delivery. The cases were adjusted and adapted to form a learning module using our institutional license for QualtricsXM© web-based survey software, which is also adapted for mobile phone use. Given budgetary constraints, our approach relies on role-playing simulations conducted with a trained instructor. Each simulation encompasses the 6 core elements of clinical decision making: taking a medical history, physical examination, differential diagnosis, diagnostic tests, treatment planning, and discharge planning.

The simulation sessions were led by an instructor, a clinician with extensive experience in routine clinical teaching in inpatient departments. The instructor had completed a training workshop on simulation teaching that consisted of approximately seven 2-hour meetings and covered basic role-playing, practical experience, and feedback from a senior instructor.

Medical students engaged in two 2-hour simulation sessions per topic during their internal medicine rotation. Each simulation started by introducing the students to the steps needed to manage the case and a reminder that they were expected to do their best as 4th-year medical students. We emphasized that it was important to learn from their mistakes during practice with the simulator; therefore, during the training program they could act freely based on their level of knowledge and skills, and any mistakes would be used to promote learning. Students were required to perform all necessary actions, from conducting a thorough patient history to performing physical examinations or procedures on a training mannequin in a dedicated room equipped with basic medical supplies. This hands-on approach allowed for comprehensive skill development without the need for advanced simulation centers, despite their potential benefits.

Simulation sessions involved small groups of 10-12 medical students. A pair of students led each simulation element, while the remaining group members concurrently worked on the same element using their smartphones to document decision-making processes. This dynamic format maximized engagement and ensured that all the students actively participated in the entire simulation scenario. When students arrived at the simulation, they received an initial briefing that outlined the teaching method and objectives and aligned expectations with the group. The students followed the clinical case learning module, starting with taking a medical history. First, the students needed to collect information related to this step. For example, in our study, we first presented a general description of an 80-year-old patient who arrived at the emergency room in a wheelchair, accompanied by a family caregiver, due to significant abdominal pain and fever since the night before. Then, students were presented with tailored questions such as “What order of actions would you like to take now?” and chose from a list of reasonable options suited to the situation. In the next step, a specific, focused question appeared, for example: “You have decided to start with medical history taking, so please, what would you like to ask?” At this step, a pair of students moved to the center of the room for a simulation role-play with the instructor, while all the others answered the question themselves on their mobile phones at the same time as their peers performed the role-play. Next, we presented the students with all of the patient's medical history that had been collected, and the group members continued managing the case through physical examination, differential diagnosis, diagnostic tests, treatment planning, and discharge planning in a similar manner: for each skill, a different pair of students performed the role-play, while the others answered the questions on their mobile phones.
At the end of the process, which lasted about 50 min, and after a short break, the instructor led a feedback session. Giving and receiving feedback is an important feature of the training session, which supports professional development. Thus, the students received a full anonymous report of all the answers written by the group, and the instructor led an open discussion, focusing the feedback on 4 elements that we wanted to highlight: alignment with simulation objectives, comprehensive performance evaluation, decision-making analysis, and addressing emotional aspects.

Following the simulation session, we collected feedback that included 2 main motifs that were intertwined: the first focused on feedback and discussion on the students’ performance and experiences. Specific reference was made to cognitive biases that might affect their decision making. The second motif referred to expected evidence-based clinical management and decisions regarding the case. Following this, all students received anonymous digital results, allowing them to further assess their performance and compare it to the rest of the group. The CDMIDS training program for teaching through case-based simulation is presented in Figure 1.

Figure 1. The CDMIDS (Clinical Decision Making Integrated Digital Simulation) module flow chart, comprising 6 major components.

Program evaluation

The evaluation of the new CDMIDS training program was conducted using a self-report questionnaire to measure parameters that influence clinical decision making. The questionnaire in the present study was based on validated questionnaires from Harris et al, Heidari and Ebrahimi, and Macauley et al.24–26 To adapt this questionnaire to the needs of the present study, it was translated into Hebrew and back translated into English to validate the translation and to ensure cultural and linguistic equivalence. We compared the students’ responses before and after the simulation practice for each session.

Statistical analysis

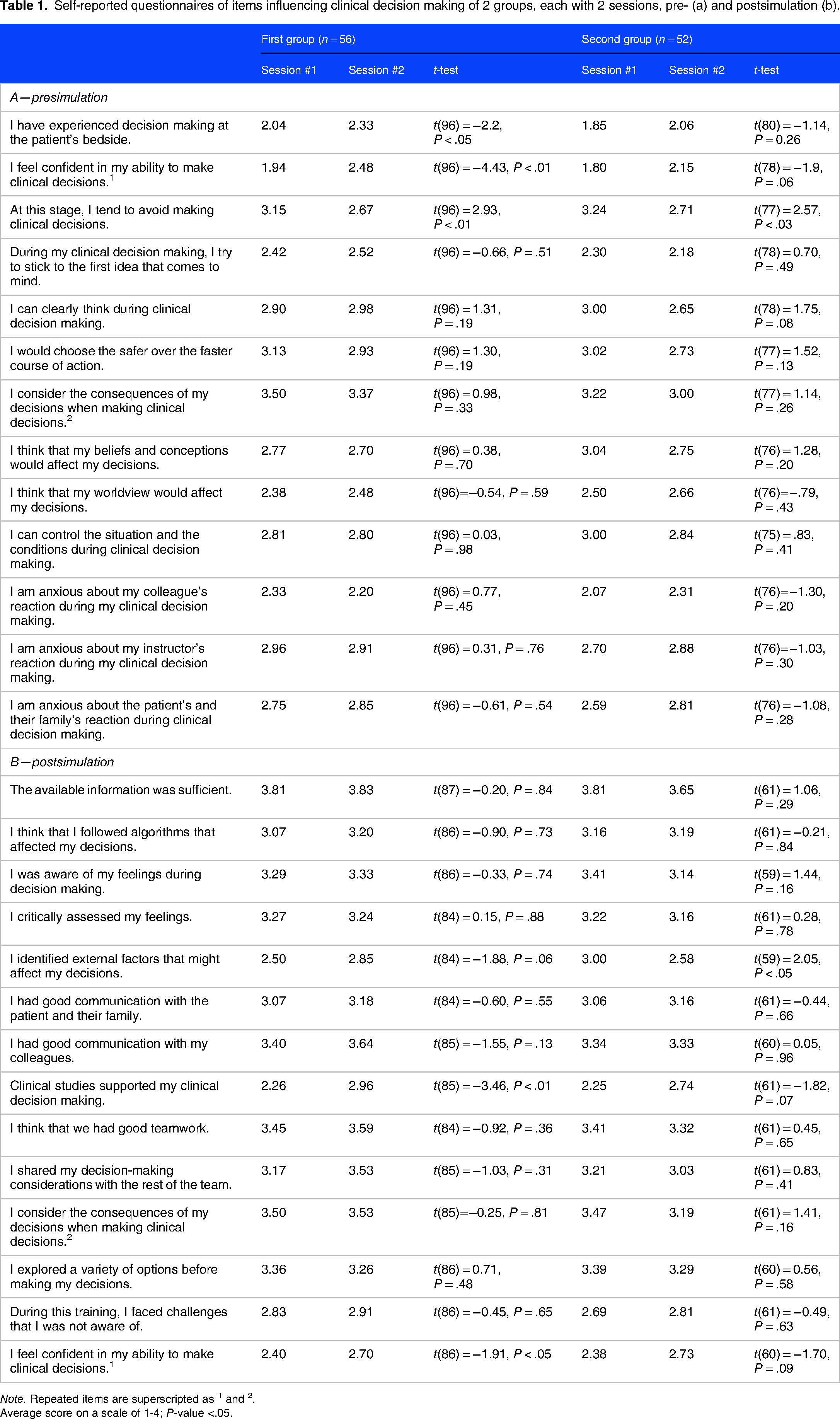

For analysis, we compared responses on two 4-point Likert scale questionnaires: a 13-item presimulation questionnaire and a 14-item postsimulation questionnaire. Two items were repeated in both questionnaires, the first measuring participants’ perception of the consequences of their decisions and the second measuring their confidence in their ability to make clinical decisions (a list of all items in both questionnaires is presented in Table 1). To analyze the data, we employed a paired samples t-test using Microsoft Excel. This statistical test was chosen to compare the mean differences between the 2 sessions and between the repeated items in the pre- and postsimulation questionnaires. We assumed equal variances between the 2 groups. The level of significance was set at α = .05. Following the 2 simulation sessions, students in both groups also responded to a 3-item questionnaire on a 4-point Likert scale to measure their perception after participating in the CDMIDS training program (the list of these items is presented in Table 2).
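
The paired comparison described above can be sketched in a few lines of Python. This is an illustration under stated assumptions only: the study itself used Microsoft Excel, the function name `paired_t_statistic` is hypothetical, and the Likert responses below are invented for the example and are not study data.

```python
import math
from statistics import mean, stdev


def paired_t_statistic(before, after):
    """Return (t, degrees_of_freedom) for a paired samples t-test.

    `before` and `after` hold one score per participant, in the same order.
    """
    if len(before) != len(after):
        raise ValueError("paired samples must have the same length")
    # Per-participant change between the two measurements.
    diffs = [a - b for a, b in zip(after, before)]
    n = len(diffs)
    if n < 2:
        raise ValueError("need at least two pairs")
    sd = stdev(diffs)  # sample standard deviation of the differences
    if sd == 0:
        raise ValueError("all differences are identical; t is undefined")
    # t = mean difference divided by its standard error.
    t = mean(diffs) / (sd / math.sqrt(n))
    return t, n - 1


# Hypothetical 4-point Likert responses (1-4) from eight participants.
pre = [2, 1, 2, 3, 2, 1, 2, 2]
post = [3, 2, 2, 3, 3, 2, 3, 2]

t_stat, df = paired_t_statistic(pre, post)
print(f"t = {t_stat:.2f}, df = {df}")
```

For these invented data the statistic is roughly 3.42 with 7 degrees of freedom, which exceeds the two-tailed critical value of about 2.365 at the .05 level. Libraries such as SciPy offer the same test, including the P value, through `scipy.stats.ttest_rel`.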

Table 1. Self-reported questionnaires of items influencing clinical decision making of 2 groups, each with 2 sessions, pre- (a) and postsimulation (b).

Note. Repeated items are superscripted as 1 and 2.

Average score on a scale of 1-4; P-value <.05.

Table 2. Questionnaire that compares the 2 groups of students following the 2 simulation sessions (average score on a scale of 1-4).

The reporting of this study conforms to the STROBE statement: guidelines for reporting observational studies.27

Study approval was given by the ethics committee of the School of Medicine at BGU (approval #49-2022). All the participants signed an informed consent form.

Results

A total of 108 4th-year medical students (52 men, 56 women) participated in the study. Although completing the questionnaires was not mandatory, 51 (92%) and 23 (43%) students completed them in the first and second simulation sessions, respectively.

Overall, though most differences between the 2 sessions were not statistically significant, students reported positive experiences in both sessions (Table 1). The results demonstrate a significant positive impact of clinical simulations on medical students’ confidence in clinical decision making. Students reported a substantial increase in their confidence in their ability to make clinical decisions following participation in the simulations. For example, the average score for the questionnaire statement, “I feel confident in my ability to make clinical decisions” among the first group increased from 1.94 in the first session to 2.48 in the second (P < .01). Similarly, the average score for the statement, “At this step, I tend to avoid making clinical decisions” decreased from 3.15 in the first session to 2.67 in the second (P < .01), indicating a reduced tendency to avoid decision making. Notably, students in the second group reported increased awareness of external factors influencing decision making, a factor that decreased from 3.00 in the first session to 2.58 in the second (P < .05).

The summary questionnaire (Table 2) addressed participants’ perception, with a high mean score of 3.38-3.57/4 on all 3 questions in both rounds, showing that the CDMIDS training program positively impacted students’ knowledge, skills, and confidence in clinical decision making.

Discussion

We created the CDMIDS as an accessible and cost-effective training program designed to enhance core competencies of patient care such as medical knowledge, interpersonal and communication skills, and clinical decision making for medical students.28 To evaluate the perception of students who used this tool, 2 standardized, comprehensive clinical cases were meticulously developed for the simulation. To enhance the realism of the scenarios, role-playing techniques were employed within a simulated clinical setting. Students were explicitly instructed to demonstrate each clinical skill in practice, ranging from obtaining a comprehensive medical history and conducting a thorough physical examination to formulating a differential diagnosis, diagnostic tests, treatment planning, and discharge planning.29,30 To mitigate potential frustration and embarrassment, prior to the simulation, students were provided with the necessary theoretical knowledge to effectively address the presented cases. The structure of the simulation, combined with the small group training format, may have contributed to the high levels of satisfaction observed among the participants who engaged in the simulation experience. The students demonstrated appropriate abilities and knowledge, and did well across all the stations throughout the case even though they were not informed in advance of the specific clinical topic that they would practice.

There was an additional emphasis on creating a comfortable and nonthreatening learning space for the group, to improve their skills in the tested elements.31 The reliance on personal smartphones, the delivery of case links via our software, and the mobility of both the instructor and equipment enabled a shift away from a highly equipped simulation lab. Any quiet room that can accommodate a learning group and the necessary equipment can serve as a suitable simulation environment, mirroring the adaptability and flexibility required in real-world healthcare settings. This situation allowed students to freely practice routine medical scenes as part of their internal medicine rotation. Moreover, in this pilot study, the students were assured that their performance would have no consequences on their final grades in the course, which contributed to lowering their stress levels. Specifically, they were told to do their best in each part of the scenario.32

As mentioned above, the feedback provided at the end of each simulation addressed the students’ experiences and impressions of their performance, using their failures and mistakes as precursors to success. Positive self-talk regarding an error helped them to focus on “learning” instead of “shaming.” Our findings of an increase in self-awareness of cognitive bias are encouraging, specifically, when considering the relatively short time devoted to the first official discourse on cognitive biases and their significance in decision making.33,34 However, this report for the questionnaire was selective, and monitoring this awareness during the following courses and groups is required.

The substantial difference in the questionnaire response rates between the 2 simulation sessions (92% vs 43%) might be explained by the human element in survey research.35 The initial enthusiasm and engagement of participants, as well as the role of instructors in encouraging participation, may have played a significant role in the higher response rate that occurred during the first session. The decline in response rates in the second session could be attributed to a decrease in these motivational factors. This finding emphasizes the necessity of designing improved strategies to maintain participant engagement in future research endeavors.

Nevertheless, similar trends were noticed for both simulation sessions, with 5 questions being statistically significant only in the first, which may be attributed to the declining response rate. The increased score for the statement “I feel confident in my ability to make clinical decisions” and decreased score for the statement “At this step, I tend to avoid making clinical decisions” indicate a change in the tendency to participate in clinical decision making following participation in the CDMIDS program. Another questionnaire statement, “I identified external factors that might affect my decisions,” was significant only in the second round, indicating greater awareness of possible cognitive biases in the decision-making process following the simulation. These distinctions illustrate the importance of intervention and practice with simulators to strengthen learners’ confidence.36

The advantages of this new simulation method are multifaceted and substantial. First, it offers an easy-to-deploy, low-cost approach to medical problem-solving training, encompassing all basic principles of patient care from history taking to follow-up. This holistic perspective fosters comprehensive clinical reasoning and bridges the gap between hospital and community medicine.

Second, like other simulation tools, this approach provides a safe and controlled environment that enhances learners’ confidence and clinical skills, particularly in physical examination basics. Moreover, the method's flexibility allows for adaptation to various learning settings and levels, while its low cost and minimal resource requirements make it highly accessible.

Unlike traditional simulation methods that often isolate specific clinical skills, the CDMIDS training program encourages a comprehensive understanding of patient care. By addressing complete clinical cases, students are challenged to make complex decisions, fostering a deeper understanding of the clinician's reality.37 The synergistic combination of all these features provides a resource-efficient simulation method in which all students can participate in clinical decision-making case studies.

We acknowledge that there are several limitations in this study. The highly instructor-dependent nature of the simulation method and incomplete questionnaire response rates may have influenced the study's outcomes. Furthermore, while the simulation provided a controlled environment for practicing decision making and managing cognitive biases, it cannot fully replicate the complexities and nuances of real-world clinical encounters, particularly the emotional and interpersonal dimensions inherent in inpatient care. Extended follow-up is necessary to assess the long-term impact of this methodology on clinical practice. Additionally, self-reported data on awareness and willingness may not accurately predict future clinical performance, necessitating further objective evaluation. The division of case management into 6 steps, while pedagogically convenient, does not always reflect the interconnected nature of clinical practice. Finally, the questionnaires we used to evaluate the CDMIDS measured only the participants’ perception, and future research will have to continue investigating additional features such as improvement in medical knowledge, interpersonal and communication capabilities, and clinical decision-making skills.

Conclusion

Key goals in the development of our CDMIDS training program included emphasizing learning intentions, fostering a classroom environment that tolerates and welcomes exploration, focusing on task challenges, and providing feedback to improve learnability. While the current study only evaluates medical students’ perception, it indicates that the students gained confidence in their clinical decision-making skills. The CDMIDS training program that was developed for this study will continue to serve medical students as a platform that boosts their professional confidence, motivates them to pursue further professional development, and allows them to practice clinical decision making in a safe environment.

Supplemental Material

Supplemental material, sj-docx-1-mde-10.1177_23821205241310077 for A Unique Simulation Methodology for Practicing Clinical Decision Making by Shimon Amar and Yuval Bitan in Journal of Medical Education and Curricular Development

Footnotes

Acknowledgments

We thank the Quality of Teaching and Learning Unit at Ben-Gurion University of the Negev for their support in conducting this study. This study was conducted as part of SimReC—The Research Center for Simulation in Healthcare at Ben-Gurion University of the Negev.

Author Contributions

Shimon Amar and Yuval Bitan developed the simulation method; Shimon Amar designed the clinical cases, while Yuval Bitan computerized them with QualtricsXM©; Shimon Amar was the primary author; Yuval Bitan performed the statistical analysis, and Shimon Amar performed significant revisions to the original manuscript. All authors reviewed the final manuscript.

Consent to Participate

The study was approved by the ethics committee of the School of Medicine at BGU (Approval No. 49-2022). All the participants signed an informed consent form.

DECLARATION OF CONFLICTING INTERESTS

The authors declare no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Ethics Approval

The study was approved by the ethics committee of the Faculty of Health Sciences at Ben-Gurion University of the Negev.

FUNDING

The authors received no financial support for the research, authorship, and/or publication of this article.

Supplemental Material

Supplemental material for this article is available online.
