Abstract

Objectives

Proficiency in medical writing and publishing is essential for medical researchers, yet it is rarely taught systematically. Workshops can play a valuable role in addressing this gap. However, there is a lack of systematic summaries of evidence on the evaluation of their impacts. So, in this systematic review, we aimed to evaluate all articles published on the impact of such workshops worldwide.

Methods

We searched Ovid EMBASE, Ovid Medline, ISI Web of Science, ERIC database, and grey literature with no language, time period, or geographical location limitations. Randomized controlled trials, cohort studies, before-after studies, surveys, and program evaluation and development studies were included. We performed a meta-analysis on data related to knowledge increase after the workshops and descriptively reported the evaluation of other articles that did not have sufficient data for a meta-analysis. All analyses were performed using Stata software, version 15.0.

Results

Of 23 040 reports, 222 articles underwent full-text review, leading to 45 articles reporting the impacts of workshops. Overall, the reports on the impact of such workshops were incomplete or lacked the necessary precision to draw acceptable conclusions. The workshops were sporadic, and researchers used their own method of assessment. Meta-analyses of the impact on knowledge showed increases in the mean and percentage of participants’ knowledge after the workshops, but these increases were not statistically significant.

Conclusion

In the absence of systematic academic courses on medical writing/publishing, workshops are conducted worldwide; however, reports on educational activities during such workshops, the methods of presentations, and their curricula are incomplete and vary. Their impact is not evaluated using standardized methods, and no valid and reliable measurement tools have been employed for these assessments.

Introduction

Proficiency in medical writing and familiarity with medical publishing standards are essential for publishing the findings of medical research. 1 Publication represents the final stage of research, and without it, all the prior stages of conceptualization, planning, data collection, analysis, and inference would be largely futile. 2

Even after credible research has been undertaken, success in publishing involves a number of challenges: knowing how to structure one's paper based on the recommendations of the international organizations of the field, 3 such as the International Committee of Medical Journal Editors; awareness of checklists for elements to be included in types of published articles4,5; knowledge of ethical issues such as authorship6,7; considering the target audience; choosing the target journal; using optimal English; and knowing how to deal with editors and peer reviewers. 8 Typically, postgraduate research-focused medical education tends to overlook these subjects. As a result, workshops could play a valuable role in addressing this gap. 9

Reports of sporadic workshops on aspects of medical writing/publishing suggest that they are mainly based on the prior experiences of the presenters. 9 Moreover, the impact of such workshops on the number of publications, or on the confidence and/or knowledge of participants over time, is not clear. Although some articles have demonstrated the usefulness of such workshops on the actual practice of participants,10–15 there is no consensus on whether such workshops can significantly change the research outputs of the participants. A systematic review of comparative studies of formalized, a priori-developed training programs in writing for scholarly publication has demonstrated that few such evaluations exist, highlighting a knowledge gap regarding their effectiveness. 16

The important field of optimal approaches to medical publishing—also called medical journalology—thus lacks systematic summaries of evidence on the evaluation of such workshops and their short- and long-term impacts. So, in this systematic review, we aimed to evaluate all articles published on the impact and effectiveness of medical writing/publishing workshops worldwide. We did not delve into workshops pertaining to nonmedical research or publishing in other disciplines because different fields of research or scientific publishing have their own unique specifications and issues. This report is part of a larger project with different research questions on various aspects of medical writing/publishing workshops, which have a shared search strategy and database review.

Methods

This systematic review is reported based on the PRISMA guideline. 17

Types of Studies

Randomized controlled trials, cohort studies comparing workshop participants to nonparticipants, before-after studies of workshop participants, surveys of the experience of workshop participants after the course, and program evaluation and program development studies were included.

Types of Participants

We included all the abovementioned studies in which the participants were medical students, graduate students, faculty members, or any other adult participants who were willing to learn medical writing, related ethical issues, and/or publication standards and nuances.

Types of Outcome Measures

Impact on knowledge and confidence in medical writing/publishing, from questionnaires completed by workshop participants,

Impact on the number of manuscript publications in peer-reviewed journals, from self-reports by participants or searching of the literature,

Impact on the number of abstract submissions to related conferences, from self-reports by participants or searching of the literature,

Impact on confidence in dealing with peer reviewers and journal editors, from participant self-report,

Impact on feedback received from peer reviewers and editors, from participant self-report,

Impact on skills in medical writing/publishing, from evaluation instruments or participants’ self-report.

Search Methods for Identification of Studies

Electronic searches

We searched Ovid EMBASE (from 1974 to October 2022) using the search terms and combinations in Appendix 1, and Ovid Medline from 1946 to October 2022 (Appendix 2). A librarian was involved in different stages of selecting the keywords and searching the related databases. Database-specific keywords were used for the search: MeSH terms were used to search Medline, and Emtree terms were used to search Embase. We also searched the ISI Web of Science database (from inception to October 2022) using the related keywords (Appendix 3). Another librarian familiar with the ERIC database, a specialized database for education, searched it using the related keywords. We did not limit the search to any specific language, time period, geographical location, or number of centers for performing the study.

Searching Other Resources

We also evaluated the reference lists of the eligible articles for any further published articles. To assess the grey literature, Google Scholar was also searched using the related keywords, and the first 100 hits of the Google Scholar search were reviewed for relevant articles.

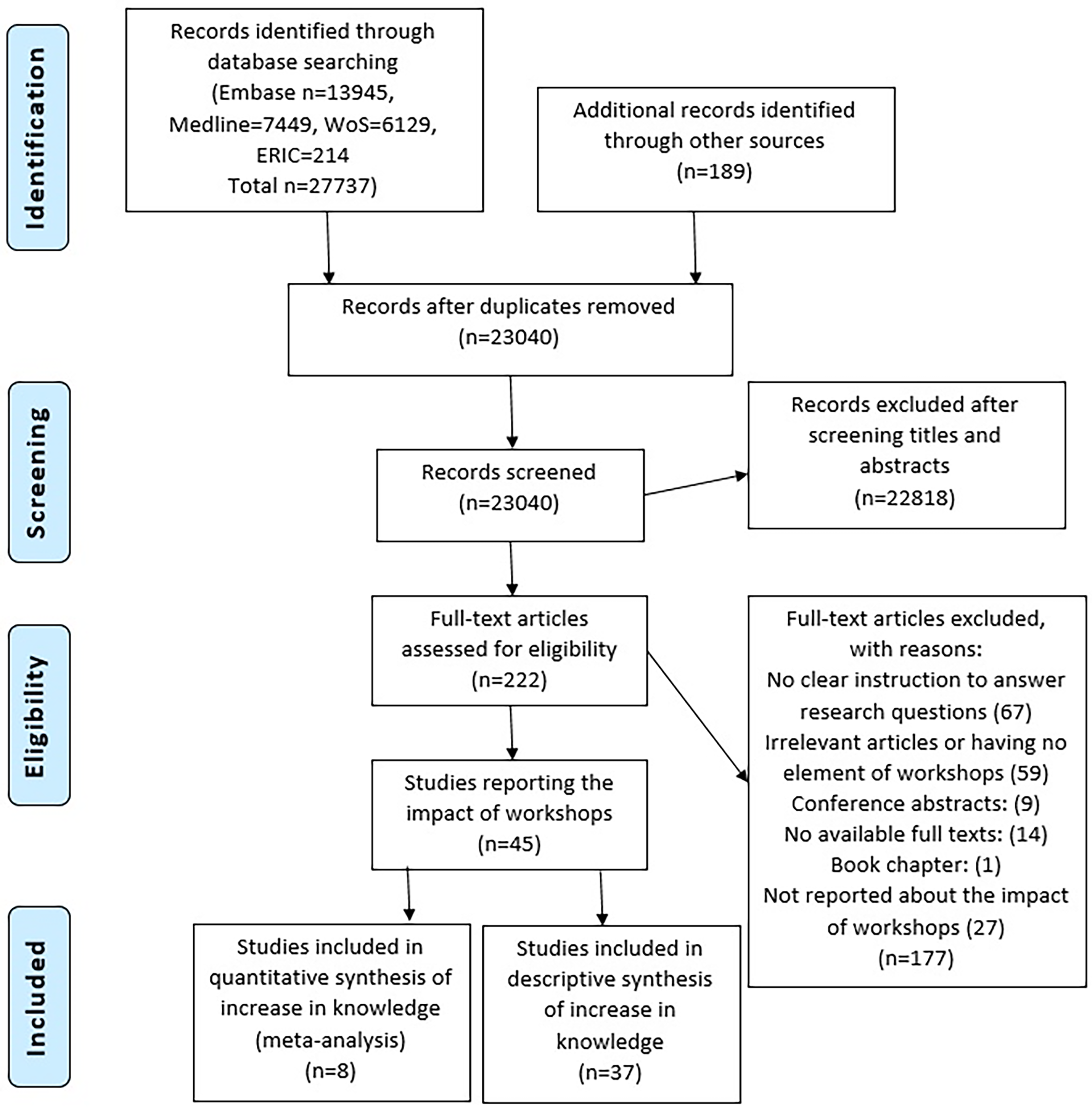

We also used a snowballing approach to reach experts in the field, including journal editors and researchers in the medical writing/publishing area. We directly emailed those we knew and posted an announcement in the forums of the World Association of Medical Editors and the Eastern Mediterranean Association of Medical Editors, asking for any related articles published in journals not indexed in the abovementioned databases and for any ongoing workshops with unpublished data. The PRISMA flow diagram shows the number of articles retrieved from different databases (Figure 1), and Appendix 4 shows the PRISMA checklist.

PRISMA flow diagram for the impact of medical writing/publishing workshops.

Data Collection and Analysis

Selection of studies

Multiple independent reviewers screened the titles and abstracts of the retrieved studies using the Covidence software, with each title and abstract screened by 2 independent reviewers. The full texts of the studies that passed the screening were also independently assessed by 2 reviewers against the eligibility criteria. Any disagreement between the reviewers was resolved by a third reviewer after a thorough discussion with the 2 original reviewers.

Data Extraction and Management

A Google sheet was prepared for extracting data from the included articles, and 2 reviewers independently extracted data from each article for the listed outcomes after piloting the process for one study. Differences between the reviewers regarding the data were resolved by a third reviewer with a thorough discussion with the previous 2 reviewers.

The gathered data were about the characteristics of the studies, such as the design, the aim of the study, the number of participants in each workshop, inclusion and exclusion criteria, method of recruitment of participants, type of possible intervention, any comparison with the intervention, and possible outcomes.

Dealing With Missing Data

We attempted to contact the authors of studies with missing data. We did not use strategies of imputation, deletion, or modeling to address missing data.

Certainty of Evidence

We could not use GRADE to check the certainty of evidence.

Data Synthesis

We planned to perform a meta-analysis if the data permitted; otherwise, a descriptive evaluation of the articles would be presented. For the meta-analyses, heterogeneity was examined using the χ2 test and graphical methods (forest plot). Funnel plots and the Begg and Egger tests were used to assess publication bias. In addition, the Metatarium command was used to estimate the effect size in the presence of studies missing because of publication bias. All analyses were performed using Stata statistical software (version 15.0, Stata Corp), and P values less than .05 were considered statistically significant. This study was not registered in PROSPERO.
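As an illustration only, the χ2 test for heterogeneity and the pooling step described above can be sketched in Python. The analyses in this review were run in Stata; the function name `pool_random_effects`, the choice of a DerSimonian-Laird random-effects model, and the input numbers below are assumptions made for this sketch and are not taken from the included studies.

```python
import math
from statistics import NormalDist


def pool_random_effects(effects, std_errors):
    """Pool study effects with inverse-variance weights.

    Assumption for this sketch: a DerSimonian-Laird random-effects model.
    Returns Cochran's Q (the chi-square heterogeneity statistic), its
    degrees of freedom, I^2, and the pooled estimate with a 95% CI.
    """
    k = len(effects)
    weights = [1.0 / se ** 2 for se in std_errors]  # fixed-effect weights
    total_w = sum(weights)
    fixed = sum(w * y for w, y in zip(weights, effects)) / total_w

    # Cochran's Q: weighted squared deviations from the fixed-effect estimate
    q = sum(w * (y - fixed) ** 2 for w, y in zip(weights, effects))
    df = k - 1
    i2 = max(0.0, (q - df) / q) * 100 if q > 0 else 0.0

    # Between-study variance (tau^2), truncated at zero
    c = total_w - sum(w ** 2 for w in weights) / total_w
    tau2 = max(0.0, (q - df) / c) if c > 0 else 0.0

    re_weights = [1.0 / (se ** 2 + tau2) for se in std_errors]
    pooled = sum(w * y for w, y in zip(re_weights, effects)) / sum(re_weights)
    se_pooled = math.sqrt(1.0 / sum(re_weights))
    z = pooled / se_pooled
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return {
        "q": q, "df": df, "i2": i2, "tau2": tau2,
        "pooled": pooled, "se": se_pooled,
        "ci": (pooled - 1.96 * se_pooled, pooled + 1.96 * se_pooled),
        "p": p_value,
    }


# Hypothetical mean differences and standard errors for 4 studies
result = pool_random_effects([0.2, 1.5, 2.4, 0.6], [0.3, 0.4, 0.5, 0.35])
print(result)
```

In this sketch, Q is compared with a χ2 distribution with k − 1 degrees of freedom; a Q well above its degrees of freedom points to heterogeneity between studies.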

Results

Our search in different databases led to 27 737 articles. We also retrieved 189 articles from other sources. After removing the duplicates, we could locate 23 040 unique reports. After screening the titles and abstracts, 222 articles underwent full-text review, which finally led to 45 articles reporting the impacts of workshops. Very few articles sufficiently reported their educational approaches. Some reported education through seminars or hands-on workshops, and some others reported tutor training approaches or educational lectures without further clarification.

Of the 45 articles that reported the impact, 21 reported increases, though measured differently, in the number of publications after the workshops.14,18–37 Eleven articles reported an increase in knowledge after the workshops.15,30,38–46 Seven articles reported increases in the confidence of the participants after the workshops.15,21,23,30,34,38,40 Six articles reported an increase in the satisfaction rate of the participants.14,30,40,47–49 Six articles reported on self-rated skills.30,38,39,44,46,50 Some articles reported more than one of these categories as indicators of the impact of such workshops. Table 1 shows the number of studies that assessed different outcomes, methods of assessment, and the outcomes assessed.

The Number of Studies that Assessed Different Outcomes, Methods of Assessment, and the Outcomes Assessed.

In 8 articles, the researchers assessed the impact of the workshops or training sessions by evaluating the assignments done by the participants or by subjective assessment of the manuscripts written by the participants.28,35,41,44,45,47,48,51 Each workshop used its own measures to evaluate impact. Examples include manuscript grading by the instructors and 2 additional faculty members 28 and assessment of each written project by 14 reviewers using 3 different evaluation methods. 44 The latter consisted of grading with a structured rubric, a feedback form, and free-form comments on the written assessment. 44

Some studies measured success through the quality of one final written assessment.47,48 However, the grading of this assessment varied from a single instructor determining the grade of each assessment using the Six-Subgroup Quality Scale to ensure all manuscripts were marked consistently 47 to focusing on standardized parameters to determine quality. 48

Some authors used multiple-choice and free-form questions before and after training sessions to evaluate the knowledge learned.41,45

A few studies used structured checklists or scoring systems to retrieve consistent marking from graders.35,44,51 Only one study used single-blind academics to grade final assessments. 51

Weekly assessments were used in one study to assess progress throughout the workshop. Participants of this study reported the lowest average final writing assessment grade, though, because many of the final manuscripts required major revisions. 44

Some studies focused on 1 to 3 hands-on exercises, where participants wrote their own research papers or writing assignments with guided feedback.28,35,41,51 On average, participants received a grade of 70.8% or higher on their written assessments in 4 studies.28,35,48,51 Participants were able to generate publications using this method in 2 of these studies.28,35

Studies that evaluated participants’ knowledge before the workshop or training sessions found that participants lacked knowledge of reference styles, manuscript drafting, academic writing, and publishing, as well as the required structure and content of articles.41,48 Self-reported and assessed evaluations found that participants’ skills and knowledge had increased by the end of the session.41,44,45,47 Multiple-choice questionnaires and the Six-Subgroup Quality Scale were the most common measures for evaluating skills gained.41,45,47 Only one of these studies reported skills self-assessed by participants. 41 Skills that improved included knowledge of publishing and writing guidelines, critical thinking, and writing quality and ability. In one study, mentors used standardized forms to report the soft skills they observed participants achieve over the course of the workshop. 44

Mentorship was used as a tool to train participants in some articles.28,35,44,47 Other studies used lectures and/or group discussions to train participants.41,45,48,51 While most of the articles evaluated the impact of in-person learning, only 2 articles evaluated the impact of online workshops. Both of these received positive feedback from participants regarding the online structure of the workshops.45,47

A majority of these studies found that participants were satisfied with the feedback, content, and/or structure of the courses.28,35,41,45,47,48 Suggestions for improvements included the use of more examples, more practice with hands-on exercises, and longer teaching periods.41,48

Effect of Medical Writing Workshops on the Increase in Knowledge

The only category that provided sufficient articles with rich quantitative data to conduct a meta-analysis was the one reporting on knowledge increase. Of the 11 articles in this category, 3 did not report sufficient data to be included in the meta-analysis.42,43,46 We reached out to the authors for the relevant data but did not receive any response. Of the remaining 8 studies, 4 reported the mean change in knowledge after the educational intervention and the other 4 reported the percentage change in knowledge. So, 2 separate meta-analyses were done.

SD was not reported in 2 studies.30,38 We again emailed the authors requesting the related SD but did not receive any reply. So, the SD was estimated using the 4-sigma rule, dividing the range of scores by 4. The SD of the mean difference was estimated using the formula $SD_{\text{diff}}^{2} = SD_{\text{before}}^{2} + SD_{\text{after}}^{2} - 2 \times R \times SD_{\text{before}} \times SD_{\text{after}}$, where the correlation coefficient (R) was assumed to be 0.7. 52
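The two estimation steps above can be sketched as follows. This is a minimal illustration, not the authors' Stata code; the function names and the example score range are assumptions chosen for the sketch, and only the range/4 rule, the variance formula, and R = 0.7 come from the text.

```python
import math


def sd_from_range(minimum, maximum):
    """Approximate an unreported SD as range / 4 (the 4-sigma rule)."""
    return (maximum - minimum) / 4.0


def sd_of_mean_difference(sd_before, sd_after, r=0.7):
    """SD of a before-after difference for correlated measurements.

    Variance = SD_before^2 + SD_after^2 - 2 * R * SD_before * SD_after,
    with R = 0.7 as assumed in the text.
    """
    variance = sd_before ** 2 + sd_after ** 2 - 2 * r * sd_before * sd_after
    return math.sqrt(variance)


# Hypothetical example: scores spanning the full 1-to-5 scale at both time points
sd_pre = sd_from_range(1, 5)   # 1.0
sd_post = sd_from_range(1, 5)  # 1.0
print(sd_of_mean_difference(sd_pre, sd_post))
```

A higher assumed R shrinks the SD of the difference, so the choice of 0.7 directly affects the weight each study receives in the pooled estimate.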

Effect of Medical Writing Workshops or Educational Sessions on the Mean Changes of Knowledge

The meta-analysis of the 4 studies15,30,38,44 showed a mean increase of 1.13 on a 5-point scale from 1 to 5 (95% CI: −0.66 to 2.92; P = .22). In other words, the increase in participants’ knowledge after medical writing workshops or educational sessions was not statistically significant. Figure 2 shows the related forest plot.
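As a rough consistency check, the reported P value can be recovered from the pooled estimate and its confidence interval. The sketch below assumes a symmetric, normal-approximation (Wald) interval, which the text does not state explicitly; the function name `p_from_ci` is hypothetical.

```python
from statistics import NormalDist


def p_from_ci(estimate, lower, upper, level=0.95):
    """Back-calculate the standard error, z statistic, and two-sided P value
    from a point estimate and its confidence interval, assuming the
    interval is estimate +/- z_crit * SE."""
    z_crit = NormalDist().inv_cdf(1 - (1 - level) / 2)
    se = (upper - lower) / (2 * z_crit)
    z = estimate / se
    p = 2 * (1 - NormalDist().cdf(abs(z)))
    return se, z, p


se, z, p = p_from_ci(1.13, -0.66, 2.92)
print(f"SE = {se:.3f}, z = {z:.2f}, P = {p:.2f}")
```

Because the interval spans zero, the two-sided P value must exceed .05 under this approximation, which agrees with the nonsignificant result reported above.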

Forest plot of the effect of medical writing workshops or educational sessions on the mean changes in knowledge.

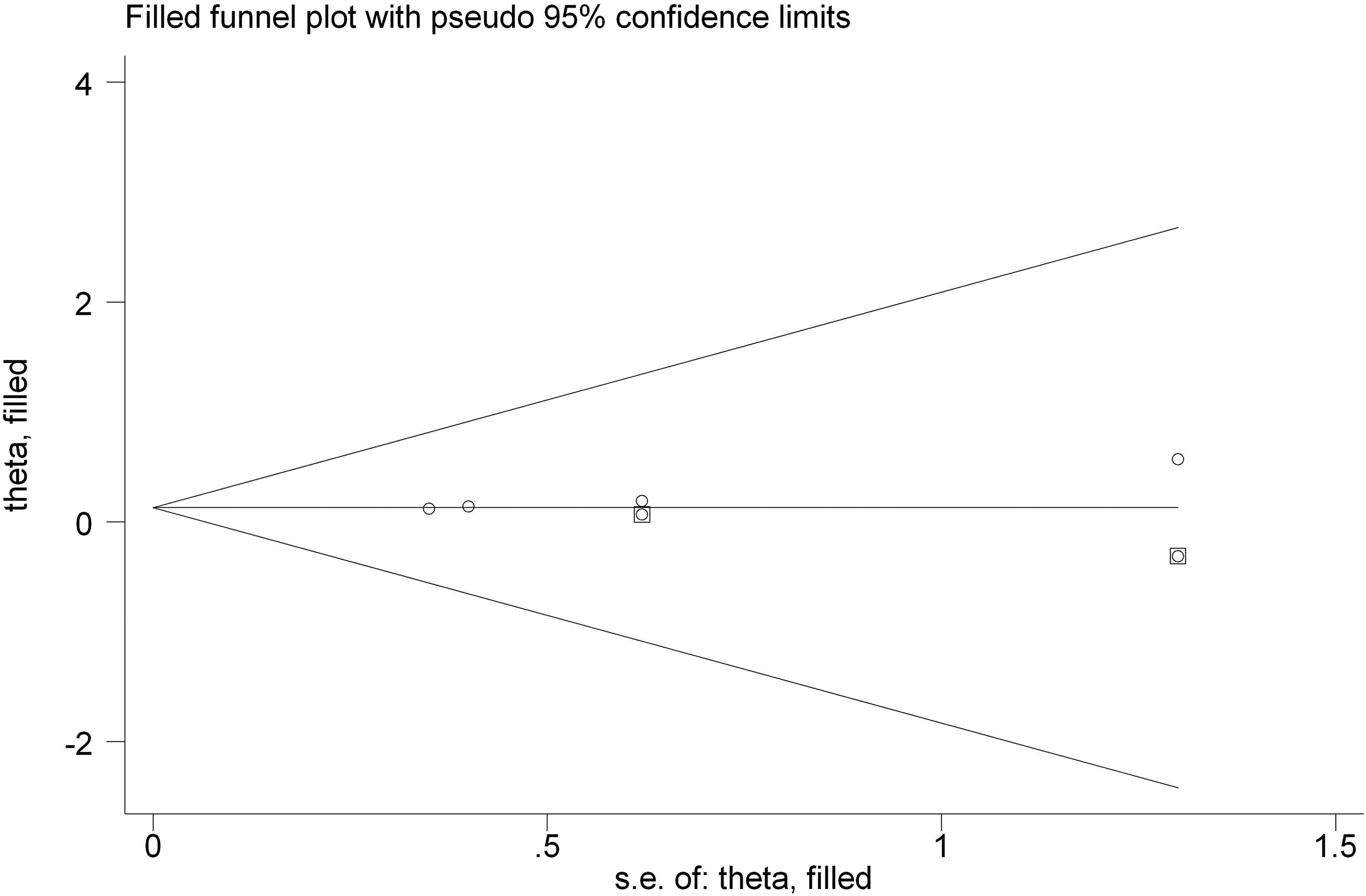

Figure 3 shows the funnel plot, which did not suggest publication bias. Neither the Begg test (P = .68) nor the Egger test (P = .99) showed evidence of publication bias.

Funnel plot for evaluation of publication bias in the effect of medical writing workshops or educational sessions on the mean changes of knowledge.

Effect of Medical Writing Workshops or Educational Sessions on the Percent Changes in Knowledge

Meta-analysis of the remaining 4 studies,39–41,45 which reported the percentage increase in knowledge after the workshops, showed a percent change of 15.2% (95% CI: −32% to 62%; P = .52), which was not statistically significant. Figure 4 shows the related forest plot. The Egger test did not indicate publication bias (P = .09), whereas the Begg test did (P = .03). Figure 5 shows the related funnel plot. To evaluate the publication bias further, we used the Metatarium command of the Stata software to estimate the adjusted percent change in the knowledge level after adding the possible missing studies that could account for the publication bias (Figure 6). With these possible missing studies included, the final adjusted percent change in the knowledge level was 13% (95% CI: −30% to 56%; P = .56). We did not formally rate the certainty of evidence using GRADE because the evidence, drawn mostly from single-arm studies, would already be rated as very low certainty on the basis of study design alone.

Forest plot of the effect of medical writing workshops on the percent of change in knowledge.

Funnel plot for evaluation of publication bias in the effect of medical writing workshops on the percent of change in knowledge.

The results of adding possible missing studies using the metatarium command of STATA software.

Discussion

Overall, the reports on the impact of medical writing/publishing workshops or training sessions in the articles were incomplete or lacked the necessary precision to draw consistent conclusions. The workshops were sporadic, and researchers used their own method of assessment. Some studies reported that instructors graded the participants’ work and assessed each written project, while others evaluated the quality of final written tasks as an indicator of the impact of their workshops. Researcher-made multiple-choice questions and forms were used in different articles. Overall, the authors believed the participants were satisfied with the content and administration of the workshops and considered this to be a positive impact of their workshops.

The only impact for which some articles provided analyzable data was an increase in knowledge. Our meta-analyses showed increases in the mean and percentage of participants’ knowledge after medical writing/publishing workshops, but the observed changes were not statistically significant. Considering the lack of a systematic course for teaching medical writing and publishing and the relatively low resources used by workshops compared with a full course, any increase in the knowledge of participants after the workshops is important and worth investing in. The statistical nonsignificance reported here concerns the mean or percentage knowledge increase across a group of participants and does not necessarily reflect what happens at the individual level, where at least some people may experience an important increase in knowledge.

The lack of systematic training in medical writing and publishing standards means that many clinicians are never taught how to write for publication at any stage of their career 53 and may commit publication ethics misconduct because of lack of knowledge,54,55 rather than fraud. 56 This formal training gap leads to the use of alternative methods, such as mentoring programs, to train applicants. Pololi and colleagues reported the effect of a Collaborative Mentoring Program on the writing and publishing abilities of 18 assistant professors, who were each able to publish one scholarly publication after the program 19; however, the long-term impact of such programs, the quality of the manuscripts, the quality of the intervention, and the quality of the journals in which manuscripts were published are not clear. 16

Workshops, an integral component of nonsystematic training programs, play a pivotal role in teaching medical writing and publishing but might not cover all the preferences of peer reviewers. They can give advice that is likely to help with common issues but won't address every peer reviewer's unique preferences. Our investigation revealed that the impact of such workshops on the sustainability of learning and the enhancement of participants’ knowledge and confidence remains unverified. This finding aligns with the results reported by Galipeau and colleagues, who conducted a systematic review to investigate whether training in writing for scholarly publication, journal editing, or manuscript peer review improves the quality of health research reporting. Their analysis also indicated that the included studies were generally small and inconclusive regarding the effects of such training for authors. Furthermore, these studies were deemed of questionable validity and susceptible to misinterpretation. Like theirs, our findings underscore the existing gaps in creating an evidence-based understanding of how to improve the scientific quality of research output for authors. 16

Strengths and Limitations

The primary strength of our study is its uniqueness, being the first systematic exploration of articles focusing specifically on the impact of medical writing/publishing workshops to date. Additionally, it represents the first meta-analysis in this field, contributing to the establishment of evidence-based literature that can guide the organization of such workshops in the future. Our study's strength is further underscored by the exhaustive search conducted across various databases, utilizing a comprehensive list of database-specific keywords. The inclusion of grey literature and a snowballing approach to identify all relevant articles adds significance to our findings.

However, an unavoidable limitation of this study is the incomplete reporting of findings in individual studies and the lack of information on how workshop presenters delivered their educational materials. This limitation impedes our ability to offer a robust recommendation regarding the optimal approach for conducting such workshops.

Another limitation of this study was that we could not check for the certainty of evidence. Using the GRADE approach to certainty of evidence, observational studies begin as low-quality evidence and can be rated down to very low because of a number of issues, including the risk of bias. The available studies were mostly single arm (ie, with no concurrent control group that did not receive the intervention). Any such studies would be rated down to very low simply because of the study design, before considering any further limitations due to the risk of bias in the conduct of the studies. Since GRADE does not have a category for “very, very low certainty,” there was no need to address additional limitations in the conduct of the studies. Despite these limitations, our study contributes valuable insights to the existing body of knowledge on medical writing and publishing workshops.

Conclusions

In the absence of academic courses on medical writing/publishing, workshops are conducted worldwide; however, reports on their educational activities, presentation methods, and curricula are often incomplete and vary. Their impact is mostly not evaluated using standardized methods, and no valid and reliable tools have been employed for these assessments.

The definition of impact varies in different workshops, ranging from changes in knowledge to confidence in writing skills, participant satisfaction, and subjective measurements. Although workshops could lead to an increase in participants’ knowledge, statistically significant differences are often not observed.

There is a need for a standardized measurement tool for the evaluation of such workshops. Academic teaching courses with defined curricula are recommended for graduate students and medical researchers who need to publish their studies, although they may not be suitable for practitioners whose roles do not give them the flexibility to leave bedside care in order to participate in such courses. This approach can address the current gaps and enhance the effectiveness of medical writing/publishing education.

Supplemental Material

Supplemental material files sj-pdf-1-mde-10.1177_23821205241269378, sj-pdf-2-mde-10.1177_23821205241269378, sj-pdf-3-mde-10.1177_23821205241269378, and sj-pdf-4-mde-10.1177_23821205241269378 for Impact of Performing Medical Writing/Publishing Workshops: A Systematic Survey and Meta-Analysis by Behrooz Astaneh, Ream Abdullah, Vala Astaneh, Sana Gupta, Hadi Raeisi Shahraki, Aminreza Asadollahifar and Gordon Guyatt in Journal of Medical Education and Curricular Development

Footnotes

Acknowledgments

The authors highly appreciate the sincere collaboration of Neera Bhatnagar and Hannah McIntosh for their professional help and insight in searching different databases. The authors would also like to thank Eric McMullen, Ya Gao, Kyle Tong, Luis Enrique Colunga Lozano, and John Basmaji for their help during the screening process.

Authors’ Contributions

BA: Conceptualization, Data curation, Methodology, Project administration, Validation, Writing – original draft, Writing – review & editing; RA: Data curation, Project administration, Writing – review & editing; VA: Data curation, Project administration, Writing – review & editing; SG: Data curation, Project administration, Writing – review & editing; HRS: Formal analysis, Writing – review & editing; AA: Data curation, Project administration, Writing – review & editing; GG: Conceptualization, Data curation, Methodology, Project administration, Supervision, Validation, Writing – review & editing; All authors read and approved the final version and accept the accountability.

Authors’ Note

Availability of data and material: All relevant data are within the manuscript and its Supplemental Materials.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Supplemental Material

Supplemental material for this article is available online.

References
