Abstract

This article presents a brief overview of the state-of-the-art in large language models (LLMs) like ChatGPT and discusses the difficulties that these technologies create for educators with regard to assessment. Making use of the ‘arms race’ metaphor, this article argues that there are no simple solutions to the ‘AI problem’. Rather, this author shows that educators and universities will need to adopt a complex strategy consisting of solutions at four different levels of vulnerability: Ethical, pedagogic/didactic, technological and policy. Lastly, the article presents general recommendations for addressing vulnerabilities at each of these levels.

Introduction

Educators have enjoyed a few decades of mostly anxiety-free innovations in education-focused technologies. It would be difficult to imagine returning to a world before Canvas, Moodle, Blackboard and Turnitin existed. Digital technology has permeated the profession at both the practical and theoretical levels during the twenty-first century. Not only have we become dependent on technology in the education sector, but few topics have also generated more scholarly production over the last decade than the use of digital tools in the classroom. A 2-year pandemic only deepened our reliance.

For the first time, there are hints that the honeymoon with technology in education is coming to an end. The release of ChatGPT-3.5 in 2022 sent ripples of angst through the education sector. Educators saw in the AI model a real threat to the integrity of student assessment methods. ChatGPT appeared to do everything that our students could do—often at a higher level. In the months that followed, universities and colleges around the world worked frantically to propose temporary solutions to the ‘AI problem’, while administrators and faculty tried to formulate some basic understanding of the technology and its capacities. It would be difficult to name another technology that has blindsided higher education like ChatGPT.

In this article, I offer a brief description of large language models (LLMs) and discuss ChatGPT’s capabilities and limitations from an educator’s perspective. In the process, I examine the complex problem over which many university educators are losing sleep: Student use of AI systems to cheat on assignments. Finally, I propose a multi-level strategy for addressing the problem systematically.

It is important to acknowledge that many educators will find positive uses for AI-based tools in the classroom and beyond. As the title of this article indicates, I have limited my analysis to addressing unsanctioned uses of AI-supported technologies. I fully support the claim that educators should strive to find ways to work with these technologies when possible because the potential benefits for learners and teachers are obvious. The rapid expansion of AI-based tools into the education sector (and every other sector) is likewise inevitable. As always with paradigm-shifting technologies, however, ‘good’ usage and ‘bad’ usage are eventualities that must be discussed and debated during the initial stages of integration.

ChatGPT and LLMs

ChatGPT is a general designation used to refer to multiple generations of AI-chatbot iterations developed by OpenAI between the years 2018 and present. A stable version of the next iteration, ChatGPT-4, was made public in March 2023 (ChatGPT-4 Turbo announced in November 2023). ChatGPT-3.5, the initial launch version of the technology, was released in 2020 but was not made public until November 2022. In 2024, OpenAI made ChatGPT-4 available to the public for free. This section will focus on the capabilities of ChatGPT-3.5 and ChatGPT-4 since these are the iterations to which students have access most often. It is important to note, however, that indirect access to more advanced models has become common through the use of plug-ins and through the integration of that model into various consumer applications. Universities are beginning to make more advanced models available to students as well.

ChatGPT is an example of an LLM that has been trained to interact with questions or prompts in a conversational way. When asked to define LLMs, ChatGPT-3.5 says they ‘are artificial intelligence models that use deep learning techniques to analyze and understand human language. These models are designed to process vast amounts of text data and generate human-like language’. They ‘consist of multiple layers of neural networks that are trained using large data sets of text’. From this training, an LLM learns to ‘recognize patterns in the data’ and to ‘generate new text that is similar to the training data’ (OpenAI, 2023b). In addition, the next generation, GPT-4, is multi-modal, which means that it can encode and decode non-textual data to produce image, sound, video and text responses.

It is important to understand that LLMs are, in essence, prediction-making algorithms. ChatGPT is able to analyze ‘the context and meaning’ of the input, allowing it to generate ‘the most probable response’ to a question or prompt (OpenAI, 2023b). LLMs are not programmed to provide factual information. They predict the most probable language responses to a question or prompt based on language patterns that they identify in the data set on which they have been trained. A consequence of the way that LLMs work is that they have a tendency to ‘hallucinate’ responses at times, meaning that they generate answers that humans classify as fabrications (Athaluri et al., 2023; Azamfirei et al., 2023; Rudolph et al., 2023a). If patterns of thought exist in the data set, an LLM will identify those patterns and generate probable responses (predictive inferences drawn from those patterns). The factualness of a response is not guaranteed since responses are more of a ‘prediction’ than an attempt to describe reality. ChatGPT-3.5’s responses to requests for citations often include completely fabricated article titles, for example, Choi (2023). In short, the model ‘hallucinates’ the titles of articles that could be written by a certain researcher based purely on inferences drawn from the researcher’s actual publication history (a probability response). These hallucinations can be understood as ‘predictions’ about the kinds of articles that an author could write, with the actual publication record used as a pattern for that prediction. OpenAI has worked to reduce the frequency of ‘hallucinations’ in their newest iteration of ChatGPT. As of November 2023, GPT-4 and 4-Turbo led the pack among competing LLM-based AI models with a 97 per cent accuracy score (Hughes et al., 2023). Factual consistency rates have continued to improve in 2024, with OpenAI’s latest iterations, GPT-4o-mini (98.3 per cent), GPT-4o (98.5 per cent) and OpenAI-o1-mini (98.6 per cent) (Hughes et al., 2023).

Notably, LLMs can be trained to exclude certain responses or classes of response but this requires human intervention. The process of training a model to ignore certain patterns in the data set and to promote other patterns is referred to as ‘alignment’. The idea behind alignment is to try to ensure that the model’s responses remain consistent with the values and ethics of human societies. The method that OpenAI employs to train ChatGPT is called reinforced learning from human feedback (RLHF). At this point, human evaluations of the model’s responses still play a central role in the way that ChatGPT’s algorithm is trained. For now, this means that the model does not generate responses that we would categorize as strictly independent from human intervention. However, as the models develop over time, OpenAI has stated that less direct human intervention will be required since more advanced models will be able to train/align themselves to a pre-determined set of values (Altman, 2023; Leike et al., 2022).

Initial Thoughts about the Effects on Education

Students have had access to ChatGPT-3.5 since November 2022; however, the company’s popularity spiked in March 2023 when OpenAI launched ChatGPT-4. Discussions about the abilities and implications of AI models began to permeate both traditional and social media immediately. Since then, ChatGPT has exceeded all expectations, setting a record for the fastest-growing consumer application in history, with 100 million active monthly users within 2 months after launch (Hu, 2023).

Education was among the first sectors to sound an alarm about the use of LLMs. Concerns about widespread cheating and/or plagiarism led to a sense of panic, as many educators began to understand that it very difficult, perhaps even impossible, to detect when students were using AI systems to complete assignments. OpenAI acknowledged the scale of the problem, publishing a set of ‘considerations’ for educators on their website (OpenAI, n.d.). Regarding the issue of plagiarism, the document asserts that it is the responsibility of individual institutions to address the problems created by student usage of their technology, while urging caution about attempts to hold students accountable in the absence of clear guidelines:

We also understand that many students have used these tools for assignments without disclosing their use of AI. Each institution will address these gaps in a way and on a timeline that makes sense for their educators and students. We do however caution taking punitive measures against students for using these technologies if proper expectations are not set ahead of time for what users are or are not allowed (OpenAI, n.d.).

OpenAI recommends the use of AI detectors, including its own, but admits that the technology is not entirely reliable:

These tools will produce both false negatives, where they don’t identify AI-generated content as such, and false positives, where they flag human-written content as AI-generated. Additionally, students may quickly learn how to evade detection by modifying some words or clauses in generated content (Educator Considerations for ChatGPT, n.d.).

It is clear from these admissions that education is in a moment of transition. Traditional modes of assessment will need to be reimagined, as they are now vulnerable to an unprecedented kind of ‘exploit’. It will be in everyone’s interest (students, teachers and administrators), therefore, to try to close the ‘gaps’ as quickly and conscientiously as possible.

Identifying a Complex Set of Problems

To say that LLMs—more specifically, the many applications of that technology within the domain of generative AI—will create complex challenges for the world in the near future is a gross understatement. A growing number of researchers are beginning to describe the diverse ways in which generative AI will likely impact human understanding and influence human institutions, both positively and negatively. The scale of the perceived threats ranges from dire predictions about the extinction of our species [with the emergence of artificial general intelligence (AGI)], to warnings about the potential dissolution of Western democratic institutions, as we find it increasingly difficult to distinguish fact from fiction.

Many of these concerns centre on the pace of AI research and development, which appears to be trending towards a continuation of exponential scaling. Following from the work of Nick Bostrom and others, it is now widely assumed that the development of AI will continue to advance, finally reaching a point when that development becomes self-automated, unlocking the potential for an explosive acceleration well beyond the limits of human intelligence (Bostrom, 2016; Grace et al., 2018). More recently, and perhaps more problematically in the short term, are concerns expressed in a recent presentation given to the Centre for Humane Technology, which discusses the current frenetic rush of companies to introduce consumer versions of AI-supported tools to the market (Harris & Raskin, 2023). The launch of ChatGPT has sparked a massive response across many different domains, including social media platforms, search engines, image production, music production, video production, office productivity platforms, education platforms and language learning applications, just to name a few. To remain competitive, markets feel immense pressure either to integrate LLMs into their existing products or to develop entirely new products with AI systems running at the core. In a 2023 presentation, Tristan Harris and Asa Raskin raise questions about the ethical safety of this push, observing wisely, ‘when you invent a new technology, you uncover a new class of responsibility, and it’s not always obvious what those responsibilities are’ (Harris & Raskin, 2023). 1 The process of discovering new responsibilities requires careful consideration, and careful consideration requires time.

As an important aspect of this consideration, the push to incorporate AI systems quickly and broadly has generated a debate around the future of work and the place of creativity. These are not new concerns, of course. A 2017 survey of 352 researchers in artificial intelligence predicted a 50 per cent chance that AI will replace humans in ‘all tasks’ related to work within 45 years (Grace et al., 2018). The market’s response to the launch of ChatGPT appears to lend credibility to this prediction. Of course, many of these concerns are linked to the potential for an emergence of AGI within the next few years. AGI represents the next step in the ‘evolution’ of AI, bringing it within parameters comparable to human intelligence. Sam Altman, CEO of OpenAI at the time, expressed his own apprehensions about the prospects of AGI:

The first AGI will be just a point along the continuum of intelligence. We think it’s likely that progress will continue from there, possibly sustaining the rate of progress we’ve seen over the past decade for a long period of time. If this is true, the world could become extremely different from how it is today, and the risks could be extraordinary (Altman, 2023).

Since the launch of ChatGPT-4 in early 2023, a few major players in the education sphere have introduced versions of their platforms that now make use of LLM technology. In most cases, this is now direct integration with GPT-4o. A few notable examples from well-established companies are DuolingoMax, GrammarlyGo and Khanmigo. According to Grammarly’s website, the goal of their platform, GrammarlyGo, is to ‘generate high-quality drafts, outlines, replies and revisions when you need them’. The appeal of these claims to students is obvious, and with many similar platforms awaiting imminent launch and even more platforms in development for 2025 release, the impact on student work will likely be enormous. To that point, Microsoft announced the integration of a next-gen version of ChatGPT into its Office productivity suite (the Prometheus Model), which means that students who subscribe to the Copilot service will have access to AI-generated content at the most basic levels of their workflow. Educators will need to have a clear understanding of how these tools are going to impact the ways that students (and the rest of the world) produce individual work.

The Arms Race Metaphor and Developmental Acceleration

ChatGPT is only one of many newly introduced LLM-based platforms. Amazon introduced its AI platform, Bedrock, in April 2023; Anthropic launched Claude 2 in July 2023; Meta released Llama 2 in July 2023; and in March 2023, Elon Musk announced plans to develop his own AI model to compete directly with OpenAI, a company with whom he was initially involved as an investor. Musk’s team (xAI) delivered their model, Grok, in November 2023. Lastly, and perhaps most importantly, Google introduced its own rival models, Bard and PaLM 2, between February and May 2023, respectively. One year later, Google relaunched Bard as Gemini 1.0 Ultra. Within a week of this relaunch, Google announced a next-gen AI model, Gemini 1.5, which appears to outperform ChatGPT-4 on many ‘general reasoning’ tasks. By the time this article is published, even more AI models will have been added to an increasingly crowded space, and most of the abovementioned companies will have introduced updated and thus more powerful models.

As an addendum, I find it important to mention Microsoft’s Bing AI as well, since it represents a new direction in AI implementation and accessibility. Microsoft launched its Bing chatbot in February 2023, although it has since been rebranded as Copilot. Their chatbot, which is freely available to anyone who uses the Bing search engine, makes use of a ‘next-generation OpenAI LLM that is more powerful than ChatGPT and customized specifically for search’ (Mehdi, 2023). A much-discussed limitation of ChatGPT-3.5 is the fact that the data it was trained on was not updated after ‘early 2022’ (OpenAI, 2023a). Whereas ChatGPT-4 was updated to include training data before April 2023, ChatGPT-3.5 is unaware of events that have happened since it ‘went live’. Copilot, on the other hand, maintains a connection to the internet, giving it access to an ever-expanding source of data. 2 Rudolph et al. explain how a persistent connection to the internet leads to superior accuracy because the chatbot: ‘is thus aware of current events and not ignorant of events after September 2021, such as the war in Ukraine. It provides footnotes with links to sources and can provide proper academic references upon request’ (Rudolph et al., 2023b, p. 371). Going forward, we can expect that LLM-based tools will maintain persistent links to current data, making them both more useful and more difficult to either prevent or detect in education use cases. For example, OpenAI announced ChatGPT search in October 2024, making it possible for the current models to ‘get fast, timely answers with links to relevant web sources, which you would have previously needed to go to a search engine for’ (OpenAI, 2024a).

Undoubtedly, if the current rate of development persists, we will continue to see companies add increased functionality to their AI tools, making the task of keeping pace exceedingly difficult for policymakers and educators. In short, we are in the initial stages of an ‘arms race’ that is developing between the main players in the technology sector. Each entity is vying to secure market advantage over the other by offering the greatest degree of AI integration to the consumer as quickly as possible. The consequence is that an ever-growing number of AI platforms are being made available to the public. Following the established pattern for mass-market technologies over the last decade, these platforms are entering the consumer marketplace so rapidly that we do not have adequate time to consider the potential complications that may accompany a widespread use of AI-supported technologies.

On a secondary level, we are seeing a different sort of ‘arms race’ develop within the education sector. Traditionally, approaches to instruction and assessment are relatively stable concepts. Ideas usually have long life cycles, which means that advancements in education theory and praxis typically move at a slow pace. It is not uncommon, for example, to rely on theoretical frameworks that were introduced and popularized by educators in the previous century (e.g., Vygotsky’s sociocultural theory or Maslow’s ‘Hierarchy of Needs’).

Likewise, the life cycles of most technologies are often measured in decades. We expect to use most technologies for multiple years before being asked to master a new version/paradigm. Even if we replace a mobile phone each year, for example, we expect the operating system and UI to be recognizable (stable) enough to provide a continuous user experience. We do not expect to master a new technological paradigm each time we purchase a new version of a device. With AI models, however, not only are we encountering a completely new technological paradigm, but the underlying technology develops at a rate that is unprecedented in history. Not only will we need to understand and adapt to a novel technological paradigm, if we hope to adapt, we will need to track rapid iterative changes to the capabilities of that technology in real time.

To give an example of the rate of development that we are witnessing, consider the history of ChatGPT itself. OpenAI developed the first version of their language model, GPT-1, in 2018. The company released the first version of their conversation-based chatbot, ChatGPT, almost 4 years later, in 2022. Between 2018 and 2022, OpenAI developed multiple iterations of GPT, culminating in the now famous launch of ChatGPT-3.5 and the more recent release of ChatGPT-4 in March 2023. There are a few things to notice in the version history: First, OpenAI is able to release new iterations of GPT at the astounding pace of a new version roughly each year (GPT-1 in 2018, GPT-2 in 2019, GPT-3 in 2020, GPT-3.5 in 2022 and GPT-4 in 2023); second, in terms of scaling, each of these versions represents exponential growth beyond previous iterations (GPT-1 made use of 117 million parameters; GPT-2, 1.5 billion parameters; GPT-3, 175 billion parameters; GPT-4, unknown at present, but rumoured to be between 1 and 170 trillion parameters) (OpenAI, 2023b; VanBuskirk, 2023). This brief timeline demonstrates just how rapidly the upscaling of GPT’s capabilities has occurred. This is a trend that most expect to continue, with a more advanced version of GPT being launched possibly by 2025. Sam Altman has spoken about the fact that ChatGPT-5 is already in development (Dorrier, 2023). If this rate of advancement continues, it is not difficult to imagine AI systems drawing close to the stated goal of achieving AGI sooner rather than later. In a recent interview with The Wall Street Journal, CEO of Google’s DeepMind project, Demis Hassabis, confirmed that the goal of developing AI models that possess ‘human-level cognitive abilities’ is achievable within the next decade (Kruppa, 2023).

Thus, predictions that generative AI models will continue to develop at an accelerated pace, thereby extending their capabilities well beyond the threshold of being able to detect differences between human- and AI-generated responses, are not unreasonable. Even if we manage to decelerate these advancements, the last year has provided enough examples of the capabilities of generative AI to convince many experts that we have passed an important developmental milestone already. At present, detecting AI-generated content is extremely difficult, if not impossible (Gill et al., 2024, p. 21). For example, the AI detection tool developed by OpenAI was so unreliable at launch that it could not be used to detect content generated by OpenAI’s own AI model (ChatGPT). Their detection tool has since been shut down. In the light of these failures, one can argue that generative AI models (LLMs) already function beyond our capacity to detect instances of unsanctioned usage in traditional assessments. Educators must find ways to adapt their teaching and assessment strategies to the new realities created by AI-based technology or risk operating from a retrograde position. Problematically, the pace of AI development shows no signs of slowing down making the task of adapting analogous to aiming at a moving target.

Lastly, we are experiencing a third level of ‘arms race’: A struggle to stay ahead of our own students’ understanding and use of AI-based technology. As AI systems become integrated more and more into consumer technologies, especially social media platforms,

3

students will gain valuable competencies at a rate that may begin to outpace the competencies of their teachers. We need to wait for reliable data to provide clarity about the level of engagement students currently have with platforms like ChatGPT, but if anecdotal evidence has any value in this discussion, a significant portion of the 100 million active monthly users are students. A recent investigation by

Complex Solutions for a Complex Problem

In the preceding section, I provided a summary of the current state-of-the-art in LLM-based AI systems, while describing some of the challenges that have arisen from its development. For many educators, the emergence of generative AI has led to unprecedented levels of concern over the potential for cheating on assessments. In the following sections, I present a series of observations and recommendations that I believe will move us closer to finding possible solutions. As a way of trying to make the challenges easier to imagine, I believe it will be helpful to think about the problem (the use of AI for cheating) as a set of system ‘vulnerabilities’. Plagiarism is a problem as old as written culture itself. The novelty that we are facing is this: LLMs can be used to exploit vulnerabilities in the way that we detect and prosecute acts of plagiarism. In response to this novelty, we need to begin to map the points where the current system is vulnerable. Next, we need to understand how LLMs can be used to ‘exploit’ these vulnerabilities. Lastly, we need to outline some of the limitations associated with using AI models to cheat on assessments. If we can arrive at a better understanding of these three areas, we can begin to think about how to ‘resecure the system’.

In the next section, I will argue that the system is vulnerable at four points. The intention is to demonstrate that proposed solutions will need to address these numerous vulnerabilities at their respective levels. I categorize the problem as ‘complex’, meaning that a simple, single-factor approach will not provide a solution because the problem does not exist at a single level. Complex problems require systematic approaches that address multiple weaknesses at their multiple points of origin. I argue that potential solutions will need to address exploits at four distinct levels of vulnerability: The ethical level, the pedagogical level, the technological level and the political (policy) level.

Layers of Security: Prevention, not Detection

It should be acknowledged from the beginning that the immediate strategy for educators will need to be one of prevention, not detection. Currently, there are no reliable tools available to detect the use of generative AI in producing text. Legacy companies like Turnitin have made limited progress with the detection of AI-generated content (Dave, 2023). However, the technologies that they employ for detection operate probabilistically, with success rates that depend on the percentage of AI-generated content in a text (Chechitelli, 2023). As a result, these systems are quite easy to trick. One need only to paraphrase the text created by an AI model or rearrange the text in minimal ways to make the detection algorithm perform less reliably. Unfortunately, tools that help students to rearrange AI-generated text have already proliferated on the internet.

From an administrative standpoint, the bigger problem is that AI detection tools do not provide educators with forensic evidence, portions of both the original and plagiarized text, to compare in the investigation of cases of cheating. In traditional cases of plagiarism, teachers need only locate the original published text being copied, or claimed as one’s own work, to prove the case. With LLMs, there are no original published texts to discover. Evidence in the traditional sense does not exist. Rather, one must trust a detection algorithm tool to predict (accurately) the probability that a text has been generated by AI. Unlike traditional cases, with LLMs, the only ‘evidence’ available is the prediction of an algorithm. Understandably, university administrators have been squeamish about pursuing investigations of cases of suspected plagiarism using such non-traditional and potentially unreliable evidence. For this reason, AI-based plagiarism cannot be understood within the same paradigm as traditional plagiarism. The nature of the problem has changed. With that change should come the recognition that ‘proof’ in the traditional sense may not be an achievable goal in the investigation and prosecution of plagiarism for some time to come. Thus, our efforts should be directed at prevention, not detection.

I believe our current focus should be on creating a more secure academic environment that makes the unsanctioned use of AI systems a losing strategy for students who wish to cheat. My approach will be to propose four ‘layers of security’ that work together to prevent ‘exploits’ at four levels of vulnerability (Figure 1).

Four Layers of Security that Address Four Levels of Vulnerability.

Ethical Layer

Intellectual integrity provides the ethical foundation for everything we do in academic life. It guides the processes with which we evaluate existing knowledge and through which we both produce and share new understandings of the world. Intellectual integrity provides context for establishing trust between the teacher as an expert in the field and the student as a committed learner and future professional. As such, intellectual integrity forms the first layer of security against dishonesty in academic production.

While there are no reliable ways to quantify intellectual honesty, educators should make every attempt to reiterate the values of academic integrity even as new technologies challenge the way that we think about the parameters of these values. With regard to the use of secondary sources and properly crediting researchers, students tend to have a working definition of plagiarism in mind. However, the sheer novelty of AI models combined with a general lack of understanding about how it works may create conceptual challenges for students. Setting aside for the moment explicit decisions to use AI to cheat, many students may not see using ChatGPT to complete an assignment as comparable to using published journal articles. Students may be confused about the ethics of not crediting a non-human source. They may wonder if quoting a non-human source without providing proper credit really harms anyone.

While the correct judgements about these dilemmas may be obvious to research professionals, they may not be so obvious to non-experts. As such, educators need to communicate clearly and explicitly to students that plagiarism (the use of unaltered and/or uncited information) is an unacceptable practice no matter the source. Perhaps it goes without saying, but when providing examples of plagiarism in our instructions, we must now include examples of AI-sourced information. It should be mentioned, however, that it took the main style manuals a few months to catch up after the launch of ChatGPT. The use of AI models in academic writing, even when properly cited, remains a point of controversy in academic writing.

Not only should we be communicating to students about the expectations and habits of intellectual integrity, we should be taking steps to ensure that they are taking these points into consideration when they submit work. An additional recommendation, therefore, would be to require students to verify that the work they produce is solely theirs each time they submit an assignment or external exam. In practice, this would not be much different in structure from the kinds of affirmations that researchers will likely be required to make now when they submit articles for publication (van Dis et al., 2023). Students can be asked to include a statement of academic integrity at the end of an assignment: For example, ‘I hereby affirm that the content of this assignment is the product of my own work. When other sources of information have been used, I have given proper credit to the appropriate persons, or technologies’.

Statements of academic integrity will not put an end to plagiarism, of course, but they will act as a deterrent for students who may be weighing the ethics of their decision. Together with clear communication about the expectations and habits of intellectual integrity (Harper, 2024), asking students to affirm their commitment to these principles will provide the first layer of security in a multi-level strategy to address vulnerabilities in the current system.

Pedagogic/Didactic Layer

In December 2022, less than a month after the launch of ChatGPT-3.5, Stephen Marche sounded the alarm about the presumed death of the college essay in The Atlantic (Marche, 2022). The supposed demise of the essay format was predicated on the assertion that ChatGPT could produce college- and graduate-level essays with ease. Students around the world cleverly demonstrated the capabilities of the technology by submitting AI-generated essays for university courses. Not only is the technology undetectable, passing as human authors, the essays it generates are good enough to earn decent grades from seasoned assessors (Eke, 2023). To the casual viewer, it appeared that the structure of higher education was beginning to collapse.

Over the course of the last two years, educators have had many opportunities to use ChatGPT for themselves. We have witnessed the astounding, perhaps even revolutionary, capabilities of AI-supported technology—first in the GPT model itself, but then in the many GPT-supported tools and applications that have come to the market in 2023–2024. As the announcement of Microsoft’s initial investment of $10 billion in OpenAI demonstrates, companies are betting on the idea that humanity’s future will be written with the help of artificial intelligence. Still, this brief interaction with LLMs has shown us that AI-supported technologies are far from perfect replacements for human thinking. In short, while the educational paradigm has begun to shift, it has not shifted completely. Educators find themselves suddenly thrust into a transitional moment.

Perhaps the most complex challenge that educators face as we adapt to the capabilities introduced by AI models relates to how we design assessments. Given the narrow focus of this article, a detailed examination of the future of assessment is not possible. However, we can make a few observations about what ChatGPT and other chat-based AI models do well and what they do not do so well. From these observations, we can begin to make recommendations: (a) About the kinds of assessments educators should avoid using and (b) about how we might approach the task of AI-proofing assessments—or, at the very least, how we can make using AI models to cheat more difficult. We should keep in mind, however, that one can only make general recommendations at this point. As I explain above, the capabilities of LLM-supported AI systems are developing continuously and rapidly. Aspects of the recommendations that one makes today will be outdated in a few months (or less). 4

ChatGPT is designed to function as a chatbot. This means that users can carry out extended conversations with the model, adding incrementally to context and meaning in the conversation over time. Conversations take place in a prompt-and-response modality. Most incredibly, ChatGPT-4o retains a working memory of the conversation, making it quite easy to refine both the user’s prompts and the model’s responses as context and meaning accumulate over time. In addition, even older models like ChatGPT-3.5 are quite good at summarizing concepts and providing comparative analyzes (Figure 2). It is this functionality, of course, that creates a dilemma for educators. Users can ask questions about any topic on which the model has been trained (which, at this point, is assumed to be most of humanity’s published knowledge) and receive a response in seconds. ChatGPT-3.5’s answers tend to be short, gravitating towards summary-level explanations; yet they are indistinguishable from human responses in most ways.

Example of ChatGPT-3.5 Responding to a Query with Information, then Performing an Analysis of the Original Information in Relation to a Second, Unrelated Query.

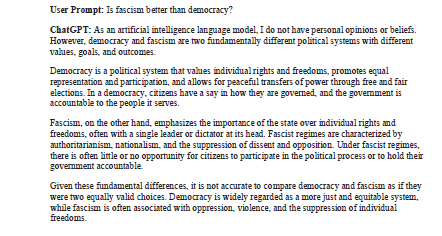

There are several areas in which ChatGPT-3.5 struggles to provide human-like responses; however, these ‘struggles’ originate more in the way that the model is trained than in the computational power of its predictive algorithm. For example, ChatGPT is programmed to avoid forming subjective opinions. If asked to provide an opinion, it will inform the user that this does not fall within the bounds of its training (Figure 3). Because ChatGPT-3.5’s training includes programming that prevents it from forming opinions, it does not ‘think’ with the aim of forming conclusions as humans tend to do. When asked if it thinks about its own thinking, ChatGPT informs the user that LLMs analyze data, but they do not have a subjective experience or conscious awareness of their own processes (Figure 4). They do not form opinions. 5 They do not have beliefs. As such, they are functionally incapable of metacognition—and perhaps incapable of critical thinking, if we characterize it as a metacognitively regulated process.

Is ChatGPT Capable of Metacognition?

Example of ChatGPT-3.5 Explaining that It does not have Opinions or Beliefs. I have not Included the Information that It provided about Fascism, but It defined the Concept and gave Examples.

In practice, this means that when asked to evaluate competing ideas, ChatGPT will not offer a strong opinion in support of one side of the issue. Instead, it will tend to give a summary of both ideas then fall back on its human training (RLHF) to explain why it cannot take a stand on the issue (Figure 5). From an educator’s standpoint, this is an interesting limitation to note, since it exposes an entire class of cognitive tasks that AI models cannot do well: Namely any type of task that requires forming and defending an opinion or belief based on critical reflection. We can categorize this class of tasks broadly as metacognitive. So, while ChatGPT can aggregate and analyze massive amounts of data, make interpretations of the data and provide statistical predictions based on observed patterns, its training does not allow it to form or evaluate subjective opinions. It is difficult to predict how long this will remain a limitation of GPT models. We have already observed many ‘jailbreaking’ techniques designed to bypass this training. More problematically, hackers have been jailbreaking entire versions of LLMs and making them available online to anyone who wishes to use them (Mozes et al., 2023). ChatGPT-4 has shown early signs of a potential for emergent general intelligence, but it still lacks the cognitive autonomy characteristic of human intelligence (Bubeck et al., 2023). However, it should be noted that the latest model, OpenAI-o1 (a preview of which was released in September 2024) was designed to ‘reason through complex tasks’ (OpenAI, 2024b) and problems before responding, bringing the model closer to the goal of ‘system 2’ thinking (Kahneman, 2011).

Example of ChatGPT Finding a Way to Avoid Making an Ethical Evaluation.

As educators begin to think about how to design assessments with ‘AI-proofing’ in mind, the most important factors will be to avoid assigning tasks that AI models do well (like summary and comparison) while emphasizing the kinds of tasks that AI models do poorly (tasks that rely heavily on metacognition and critical reflection). Ideally, educators need to be prepared to ask students to engage more in activities that require them to go through the processes involved in forming/defending opinions based on the conformity of a claim with evidence. This is not a new approach, of course. Modern educators have employed forms of assessment that highlight the use of metacognition, critical reflection and scientific thinking for decades. The novel assertion being made here is that many traditional forms of assessment hide significant vulnerabilities in the age of AI. Going forward, when educators design assessments for their courses, we cannot think solely about how well the task supports learning outcomes. We must consider first how easily certain elements of a given task may be completed by AI-supported technology. We must be familiar enough with the capabilities of the technology to identify vulnerabilities in the design of assessments. In the process, we may find that many traditional tasks—especially those that require students to summarize information and/or compare perspectives, but which do not encourage strong engagement with metacognition and/or critical reflection—are now obsolete.

Perhaps the best way to approach the problem is to concentrate on designing tasks that simply make reliance on AI technology a losing strategy. In most cases, this would mean designing hybrid assessments that encourage students to draw from many different knowledge domains, while simultaneously engaging in multiple modes of cognition. An example design would require students to blend knowledge from multiple sources, including from recent academic publications, course lectures and peer-group discussions, while requiring that they do more than simply summarize key concepts. In addition, it would require students to apply critical and metacognitive research strategies as they form new, individual understandings/opinions about a topic.

Clearly, I am not ‘reinventing the wheel’ with this example. My intention is to suggest that educators need to establish a new baseline. In the ‘arms race’ that we suddenly find ourselves, assessments must begin to account for and eliminate known vulnerabilities—those tasks that generative AI does well—while encouraging the use of uniquely human capacities to expose weaknesses in the capabilities of AI-supported technologies.

By requiring that students incorporate knowledge from unpublished sources like the classroom, we ensure that students cannot rely completely on AI to complete a task. Most LLMs, like ChatGPT, make use of publicly available data. They cannot account for unpublished information. Additionally, requiring that students use recent academic publications (as current as possible) presents a challenge for older AI models that are not connected to the internet, like ChatGPT-3.5. 6 Lastly, by requiring that students apply critical and metacognitive research strategies to form new, individual opinions about a topic, we exploit a weakness in the programming of AI-supported technologies: An inability to engage in metacognitive processes because of training restrictions.

Therefore, it is important to reemphasize the claim that the current best strategy involves prioritizing hybrid assessment designs that require students to make use of multiple sources of knowledge and multiple modes of cognition. Students may still attempt to use AI models to complete parts of a task, but well-designed hybrid tasks will place natural constraints on that usage while exploiting weaknesses in the current abilities of AI systems. Much more can be said about assessment design, of course; yet, it would be risky to make more specific recommendations at this point. We should not expect many strategies to be relevant in a year’s time (or less). This will be an important consideration for the next layer of security.

Technological Layer

In March 2023, the Future of Life Institute published an open letter to the public, calling for a 6-month pause on the ‘training of AI systems more powerful than GPT-4’ (Future of Life Institute, 2023). The letter, which has since expired, was signed by over 30,000 people, 7 including the signatures of thousands of experts in AI research and many noted academics. Signatories include such well-known figures as Elon Musk, Steve Wozniak, Yuval Noah Harai and Max Tegmark, among many others. The intent of the letter was to draw attention to potential dangers involved with developing and applying powerful AI systems so quickly that we do not have time to ‘develop and implement a set of shared safety protocols for advanced AI design and development that are rigorously audited and overseen by independent outside experts’. The letter—and the overwhelming response—highlights serious concerns among experts that the current rate of advancement in AI systems threatens to overwhelm our ability to react both knowledgeably and cautiously.

As such, we can assert that a complex solution to the problems created by AI-supported technologies will include the development of a readiness component, which I characterize as the technological layer of security. With this phrase, I simply mean that educational institutions must find ways to stay current with their understanding of the technological state-of-the-art. If they cannot find ways to do this, they cannot hope to foresee and address ‘vulnerabilities’ in the system adequately.

It should be clear by now that the abilities of AI systems are progressing at such unprecedented rates that it will be impossible for most educators to achieve and maintain an acceptable level of knowledge about the current capacities of AI-supported technology. This is an arms race that we cannot expect educators to win. Therefore, if institutions of higher learning wish to address these vulnerabilities successfully, they will need to create dedicated departments/committees to research and stay up-to-date with the state-of-the-art. These departments would need to act as consultants for faculty members and administrators, relieving both parties of the impossible task of maintaining high levels of competence in the domain.

The proposed department would develop adequate expertise about the current capacities—and imminent advances—of AI systems. In addition, it would develop adequate expertise about AI-supported tools and applications that are being brought to market. With this expertise, the department could generate and maintain a list of sanctioned and unsanctioned AI-supported tools, while providing guidance for faculty about state-of-the-art AI-detection. Lastly, the proposed department would act as a technological and educational hub for faculty and administrators, ensuring that an adequate level of competence is maintained at the institutional level.

If institutions expect to limit the unsanctioned use of AI-supported technologies, they will need to make sure that they have secured a level of expertise required to do so. Achieving this level of expertise would be difficult, if not impossible, for most faculty members and administrators. Not only would it require the acquisition of new knowledge at a very high level, it would mean keeping pace with the rate of advancement in AI system development. Universities and colleges cannot expect their faculty members and administrators to develop and maintain this level of competency on their own. Therefore, innovative institutions will need to develop internal specialists to bridge the gap between expert and non-expert competency levels. Universities that fail to supply the necessary expertise will create a massive knowledge gap in the AI readiness of their faculty and administrators.

Policy Layer

The last layer of security involves the creation of clear and proactive policies about the sanctioned and unsanctioned use of AI-supported technologies. Building on points made in the previous sections, we can establish that AI policies should be informed by expert knowledge in the iterative development and extended capacities of AI-supported technology. Recommendations include the following provisions:

A satisfactory AI policy will be informed by expertise in the state-of-the-art but will remain adaptable to advances in technology. Where this expertise is missing at the decision-making level, it should be cultivated and maintained in the appropriate departments/committees. Decisions and protocols should be reassessed often, since the domain is developed iteratively and at a rapid pace. A satisfactory AI policy will provide faculty with clear definitions and an unambiguous strategy for dealing with cases of unsanctioned use (plagiarism/cheating). These definitions and strategies should be uniform across the institution. As mentioned above, there are, at present, no reliable methods either for (a) detecting AI-produced content or (b) determining how much of the given content has been produced by AI. Therefore, establishing standards for the criteria for a ‘burden of proof’ in suspected cases of unsanctioned use is needed urgently. We cannot rely on older paradigms to investigate suspected cases of AI-assisted plagiarism. A satisfactory AI policy will protect faculty members from the responsibility of participating in a technological ‘arms race’. Educators should not feel pressured to become experts in AI systems to do their jobs. This responsibility should be allocated to a specialized department. If a department cannot be formed, some method for establishing ongoing consultation with expertise should be implemented. Lastly, faculty members should not be obliged to investigate and/or adjudicate cases where the unsanctioned use of AI is suspected. Doing so likewise implies an obligation to participate in an ‘arms race’. Institutions of higher learning should formulate and implement AI policies that remove these responsibilities from its faculty, allowing them to concentrate on what they do best: Teach and research.

Concluding Remarks

If researchers in AI systems are correct, we are living in the initial phase of a major shift in the way that humanity lives. This shift extends well beyond the concerns of education. We must strive to recognize the significance of this moment so we may respond from a place of understanding and caution. In this article, I have tried to give an overview of some of the issues that we are facing as educators, currently living and working through a transition. I have made recommendations about how to address new vulnerabilities in the way that we assess our students. Lastly, I have suggested a strategy for ensuring that we approach the problem systematically. Unfortunately, it is impossible to project how AI systems will develop in the near future. We can be certain, however, that the approaches we adopt will need to be flexible. This is true at every level of the issue. For example, how we classify the sanctioned use of AI will have ethical reverberations for decades to come. If we choose to embrace the use of AI-supported technologies, as we likely will, assessment design will matter more than it ever has. Finally, if we formulate policies from the perspective of old paradigms, ignoring or failing to understand the monumental shift in human development that we are witnessing, we may inadvertently contribute to the weakening of higher education, including the potential loss of necessary skills (Stadler et al., 2024). We find ourselves in a situation where we must think deeply but act quickly.

Footnotes

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship and/or publication of this article.

Funding

The author received no financial support for the research, authorship and/or publication of this article.