Abstract

Preventable healthcare-associated harm causes significant morbidity and mortality in the United States, costing an estimated 400 000 or more patient lives annually. The Institute for Healthcare Improvement provides high-quality educational resources tailored to working healthcare professionals. One such resource is the Certified Professional in Patient Safety (CPPS™) review course, which equips professionals with advanced proficiency in 5 core patient safety domains. The CPPS™ certification is the only interprofessional patient safety science credential recognized worldwide. In 2010, the Lucian Leape Institute at the National Patient Safety Foundation described the critical need for medical students to participate in patient safety solutions as well. However, equivalent patient safety credentialing remains difficult for students in the preclinical and clinical stages of training to obtain. To address this growing dilemma, the Texas College of Osteopathic Medicine (TCOM) piloted a first-of-its-kind CPPS™ course with 10 medical students to test a novel, academic-level approach to patient safety curriculum. Medical students showed large gains in performance on the post-test (83.18% ± 26.12%) compared with the pre-test (46.46% ± 27.18%) (P < .001, η²p = .368), reflecting increased knowledge across all learning domains. On the national certification examination, students had a 90% first-time pass rate, exceeding the current national average of 70% for first-time examinees. In satisfaction surveys, students affirmed the value of the pilot curriculum for their medical training and the importance of similar patient safety education and CPPS™ certification for all medical students, and they expressed confidence in their role as future healthcare change agents. Content analysis of open-response questions revealed 3 key areas of strength and opportunity to guide future iterations of the course.
This pilot generates a future vision of patient safety, equipping students with critical knowledge to systematically improve healthcare quality.

Keywords

Introduction

Healthcare-associated harm results in significant morbidity and mortality worldwide. A meta-analysis of studies that used a trigger tool to review medical records estimated that preventable harm may cost more than 400 000 patient lives each year in the United States.1,2 Mortality is only part of the story: some studies have estimated that the rate of patient harm in the hospital setting has reached 25% to 33% of all care.3-6

The Institute for Healthcare Improvement (IHI) provides safety science education tailored to healthcare professionals. One such resource is the Certified Professional in Patient Safety (CPPS™) review course, which prepares candidates to sit for the CPPS™ certification exam. This certification attests that professionals have reached advanced proficiency in 5 core domains of patient safety: Culture, Leadership, Patient Safety and Risk Solutions, Measuring and Improving Performance, and Systems Thinking and Design/Human Factors. The CPPS™ certification is the only interprofessional patient safety science credential recognized worldwide. It has been earned by healthcare professionals from diverse backgrounds, representing all 50 U.S. states and 20 countries. Currently, certification requires a combination of education, 3 to 5 years of direct clinical experience, and successful completion of the evidence-based CPPS™ exam.

For healthcare professional students in the preclinical and clinical phases of medical training in the United States, patient safety credentialing is difficult to obtain. A recent systematic review of patient safety curricula for undergraduate medical students found the literature decidedly sparse, with relatively few published studies on integrating patient safety education into U.S. medical curricula.7 The Lucian Leape Institute8 at the National Patient Safety Foundation issued the report Unmet Needs: Teaching Physicians to Provide Safe Care, describing the critical need to ensure that undergraduate medical students are appropriately trained to become part of the patient safety solution. To address this growing dilemma, the Texas College of Osteopathic Medicine (TCOM) developed a pilot program to investigate whether qualified medical students could prepare for and achieve CPPS™ certification. The program was a collaboration among IHI’s national safety programs office, TCOM, the University of North Texas Health Science Center (UNTHSC), and SaferCare Texas, which together designed a custom 6-week course using a flipped classroom model and the IHI CPPS™ Review Course modules.

Methods

Participant selection

Applications for 10 scholarships were opened to TCOM’s rising second-year medical students to participate in the Patient Safety Pilot. To qualify for selection into the course, applicants were required to meet the following criteria:

• Active membership in the IHI Open School—Fort Worth Chapter

• Evidence of good academic and disciplinary standing with TCOM and UNTHSC

• Validated experience in a healthcare setting, including time spent in direct clinical exposure, clinical rotations, and residency training.

Pilot structure

The pilot was implemented from June 4 to July 8, 2019, using the IHI Online CPPS™ Review Course materials supplemented by subject matter expert discussions. The course content was divided into 5 in-person learning sessions, each focusing on one of the 5 safety domains tested on the CPPS™ certification exam (ie, Culture, Leadership, Patient Safety & Risk Solutions, Measuring & Improving Performance, Systems Thinking & Design/Human Factors). Instruction during these sessions followed a flipped classroom model, in which students reviewed print, audio, and video material before attending each session. The sessions were intended to facilitate active learning and knowledge application through case-based scenarios. Before the final session, students completed an online CPPS™ Practice Exam offered by the Certification Board for Professionals in Patient Safety (CBPPS™). The rationale for the best answers was discussed during the final session to promote optimal cognitive discernment.

Pre- and post-course assessments

Students completed a 22-item Qualtrics-based assessment before and after the Patient Safety Pilot to evaluate changes in competency across the 5 domains. The questions were formatted similarly to those found in the IHI Online CPPS™ Review Course Comprehension Checks and the online CPPS™ Practice Examination.

Practice examination

During the final week of the course, students completed an online practice examination administered by PSI Testing Services, the official testing partner of CBPPS. Students then brought their results to the final classroom session to discuss the rationale for best answers and promote optimal cognitive discernment.

Course satisfaction survey

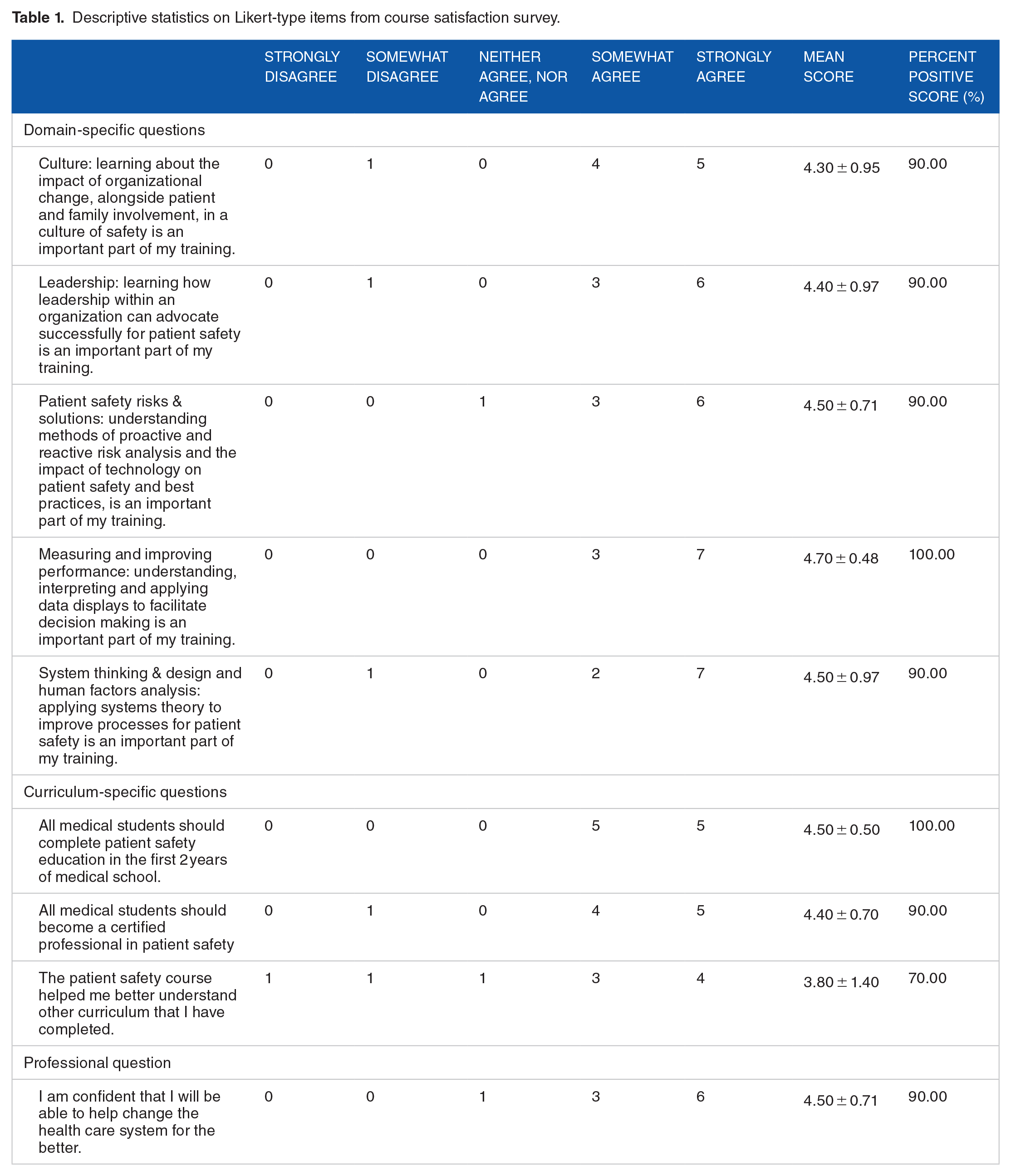

Following the Patient Safety Pilot Program, students were asked to complete an online satisfaction survey. This survey consisted of 9 Likert-type items and 3 open-ended questions. Responses on Likert-type items ranged from 1 = “Strongly Disagree” to 5 = “Strongly Agree”.

Core measures

Before pilot implementation, an evaluation plan was created, taking into account that TCOM would not have access to each student’s results on IHI’s online CPPS™ Review Course comprehension checks. Four criteria were selected to assess course effectiveness and overall success:

Completion of online pre-test assessment on the first day of class

Completion of online post-test assessment on the last day of class

Completion of a course satisfaction survey and qualitative assessment on the last day of class

Completion of the CPPS™ certification examination within 2 weeks of the course ending date, scheduled at the convenience of participants at an off-site PSI Testing Center.

Institutional Review Board approval

All data were collected as part of the patient safety pilot course (June 4-July 8, 2019). Before data analysis, the North Texas Institutional Review Board determined our investigation to be exempt from IRB review under the provisions of 45 CFR 46.104(d), categories (1) and (4)(ii).

Data analysis

For the pre- and post-test assessments, factorial ANOVA was used to compare changes in overall and domain-specific performance. The dependent variable was Assessment Score, measured before and after pilot implementation. The between-subjects independent variables were Time (ie, score measurement before and after the pilot) and Learning Domain (ie, Culture, Leadership, Patient Safety and Risk Solutions, Measuring and Improving Performance, and Systems Thinking and Design/Human Factors). During analysis, the Shapiro-Wilk test of normality was performed for all levels of the independent variables. This test was significant for Post-Test Score in the Patient Safety & Risk Solutions Learning Domain; all other results were non-significant, indicating approximate normality of the data set. Levene’s Test for Homogeneity of Variance and White’s Test for Heteroskedasticity were non-significant, indicating that these assumptions were met. All measures of central tendency and dispersion, statistical tests, and effect size estimates were calculated using IBM SPSS Statistics for Mac (Version 27.0. Armonk, NY: IBM Corp). For statistically significant results (P < .05), post-hoc testing was performed using Bonferroni-corrected simple main effects analysis of estimated marginal means. Although it was not a pre-specified endpoint of this study, total time spent testing was also compared post hoc using an independent t-test. Graphical figures were generated using GraphPad Prism for Mac (Version 9.0. San Diego, CA: GraphPad Software Inc). All bars represent the mean, and error bars represent the standard deviation (SD).
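As an illustration of the effect size estimate used in the ANOVA, partial eta squared can be recovered from an F statistic and its degrees of freedom with the standard identity η²p = (F × df_effect) / (F × df_effect + df_error). The Python sketch below is not the SPSS procedure used in this study; it only applies that identity, and the function name `partial_eta_squared` is hypothetical.

```python
def partial_eta_squared(f_stat: float, df_effect: int, df_error: int) -> float:
    """Convert an F statistic to partial eta squared.

    Uses eta_p^2 = (F * df_effect) / (F * df_effect + df_error),
    which is algebraically equal to SS_effect / (SS_effect + SS_error).
    """
    if f_stat < 0 or df_effect <= 0 or df_error <= 0:
        raise ValueError("F must be non-negative and degrees of freedom positive")
    numerator = f_stat * df_effect
    return numerator / (numerator + df_error)


# Main effect of Time reported in the Results: F(1, 34) = 19.79
print(round(partial_eta_squared(19.79, 1, 34), 3))  # 0.368
# Time x Learning Domain interaction reported in the Results: F(4, 34) = 1.17
print(round(partial_eta_squared(1.17, 4, 34), 3))  # 0.121
```

Applied to the F statistics reported for the main effect of Time and the Time × Learning Domain interaction, the identity reproduces the reported η²p values of .368 and .121.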

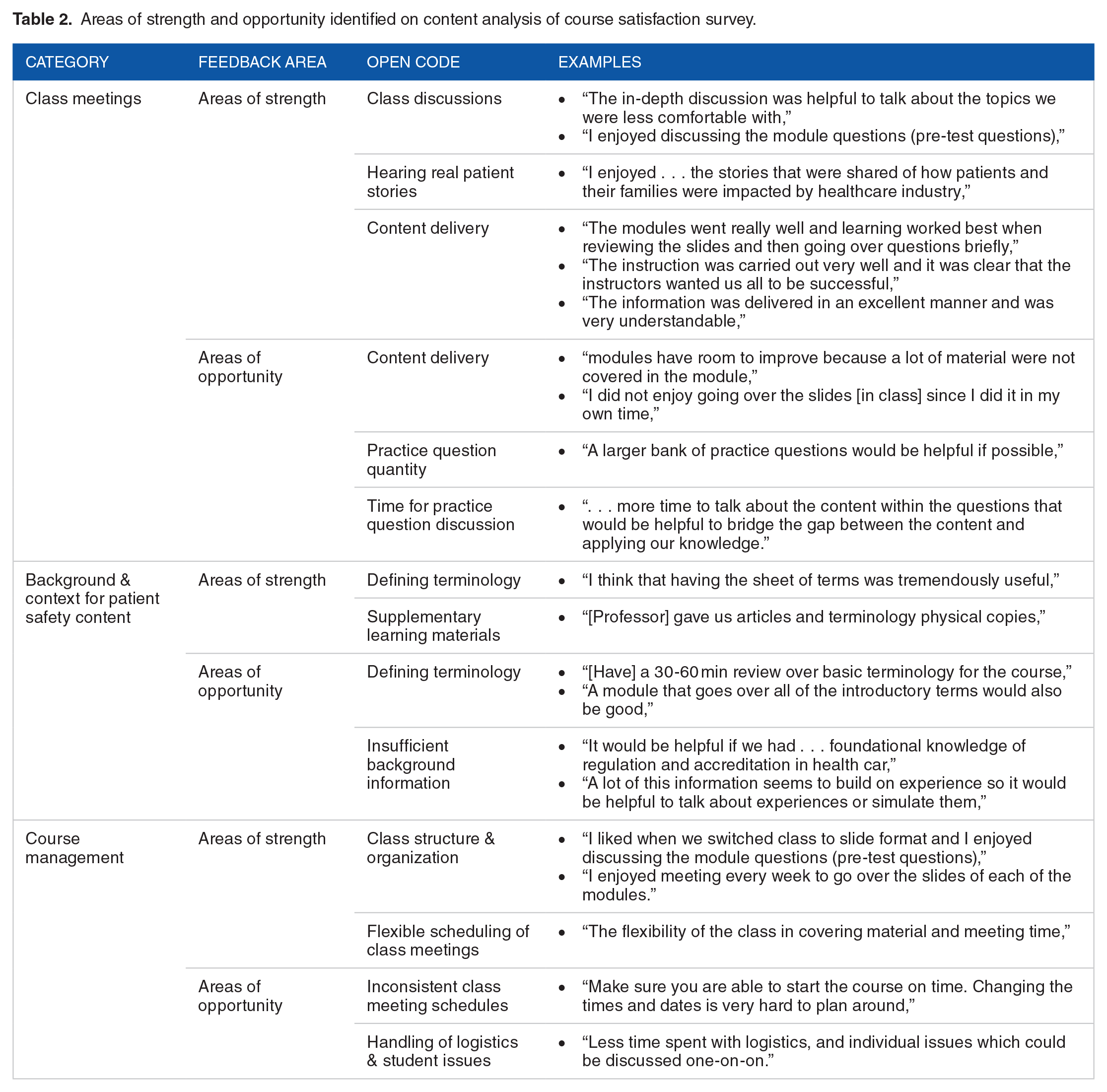

For the course satisfaction survey, the percentage of positive responses (“Agree” or “Strongly Agree”) was calculated for each item, and descriptive statistics were summarized in a frequency table. Open-ended questions were analyzed using conventional content analysis. Following established guidelines,9 our analysis began with 2 researchers independently generating codes, categories, and sub-categories. The researchers then compared their results and collaborated to reach consensus on the final data organization. This process has been shown to minimize the bias inherent to subjective analysis and to improve validity.10 Finally, the researchers coded each student response for the presence (1) or absence (0) of each consensus code. Cohen’s kappa was calculated to confirm statistically significant, substantial-to-perfect agreement between the 2 researchers’ coding of responses.
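The two quantitative steps described above can be sketched in Python as follows, assuming that a positive response is a Likert score of 4 or 5 and that each researcher scores every response 1 or 0 for a given consensus code. The function names and the sample data are hypothetical and purely illustrative; they are not the study’s analysis code or data, and kappa is computed here for a single code, since the source does not state how agreement was aggregated across codes.

```python
from typing import Sequence


def percent_positive(responses: Sequence[int]) -> float:
    """Percentage of 1-5 Likert responses that are 'Agree' (4) or 'Strongly Agree' (5)."""
    if not responses:
        raise ValueError("no responses")
    positive = sum(1 for r in responses if r >= 4)
    return 100 * positive / len(responses)


def cohens_kappa(rater_a: Sequence[int], rater_b: Sequence[int]) -> float:
    """Cohen's kappa for two raters coding presence (1) or absence (0) of one code."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("raters must code the same, non-empty set of responses")
    n = len(rater_a)
    # Observed agreement: share of responses coded identically by both raters
    p_observed = sum(1 for a, b in zip(rater_a, rater_b) if a == b) / n
    # Chance agreement from each rater's marginal rate of coding "present"
    p_a = sum(rater_a) / n
    p_b = sum(rater_b) / n
    p_expected = p_a * p_b + (1 - p_a) * (1 - p_b)
    if p_expected == 1:
        # Both raters used a single identical category throughout; kappa is undefined
        raise ValueError("kappa is undefined when chance agreement equals 1")
    return (p_observed - p_expected) / (1 - p_expected)


# Hypothetical inputs for 10 responses (illustrative only, not study data)
likert_item = [5, 4, 3, 5, 4, 4, 5, 5, 4, 2]
print(percent_positive(likert_item))  # 80.0
coder_1 = [1, 1, 0, 0, 1, 0, 1, 0, 0, 1]
coder_2 = [1, 1, 0, 0, 1, 0, 1, 0, 1, 1]
print(round(cohens_kappa(coder_1, coder_2), 2))  # 0.8
```

Kappa corrects raw percent agreement for the agreement expected by chance from each coder’s marginal rates: in the hypothetical example the coders agree on 90% of responses, but because chance agreement is 50%, kappa is 0.8.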

Results

Medical students significantly improved performance across all learning domains following the Patient Safety Pilot

Factorial ANOVA revealed a significant main effect of Time on Assessment Scores [F(1, 34) = 19.79, P < .0001, η²p = .368]. Overall, medical students showed considerable performance gains on the post-test (M = 83.18%, SD = 26.12%) compared with the pre-test (M = 46.46%, SD = 27.18%) (Figure 1A). The main effect of Learning Domain did not reach statistical significance [F(4, 34) = 1.17, P = .442, η²p = .102]. There was also no significant Time × Learning Domain interaction [F(4, 34) = 1.17, P = .341, η²p = .121] (Figure 1B). Taken together, these data suggest that the improvement in medical student performance reflected increased knowledge across all domains rather than gains in any single domain. Additional analysis revealed that students completed the post-test significantly faster (M = 596.70, SD = 106.38 seconds) than the pre-test (M = 772.00, SD = 117.77 seconds) [t(18) = 3.493, P = .003, d = 1.56].
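The testing-time comparison can be checked from the reported summary statistics alone. The Python sketch below assumes a Student’s (pooled-variance) independent-samples t-test with 10 observations per administration, consistent with the reported 18 degrees of freedom, and Cohen’s d computed with the pooled SD. The function name is hypothetical, and the P value, which additionally requires the t distribution (for example from a statistics package), is omitted.

```python
import math


def pooled_t_and_cohens_d(mean1, sd1, n1, mean2, sd2, n2):
    """Student's independent-samples t (equal variances) and Cohen's d from summary statistics."""
    df = n1 + n2 - 2
    # Pooled variance weights each group's variance by its degrees of freedom
    pooled_var = ((n1 - 1) * sd1 ** 2 + (n2 - 1) * sd2 ** 2) / df
    pooled_sd = math.sqrt(pooled_var)
    diff = mean1 - mean2
    standard_error = pooled_sd * math.sqrt(1 / n1 + 1 / n2)
    t_stat = diff / standard_error
    d = diff / pooled_sd  # standardized mean difference using the pooled SD
    return t_stat, df, d


# Testing time in seconds: pre-test vs post-test, 10 students per administration
t_stat, df, d = pooled_t_and_cohens_d(772.00, 117.77, 10, 596.70, 106.38, 10)
print(f"t({df}) = {t_stat:.3f}, d = {d:.2f}")  # t(18) = 3.493, d = 1.56
```

With the reported means and SDs, this reproduces t(18) = 3.493 and d = 1.56.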

Figure 1. Medical student pre- and post-examination performance. (A) Medical students showed significantly improved performance on the course assessment following the pilot course (P < .001). (B) There was no significant difference in performance improvement across the 5 learning domains targeted by the assessment. Bars represent the mean, and error bars represent the SD.

Medical students exceeded the mean passing rate on the CPPS™ Certification examination

Within 2 weeks of course completion, all medical student participants had taken the certification exam, achieving a first-time pass rate of 90% (9 of 10 students). This performance exceeds the current national average first-time pass rate of 70%.

Medical student feedback demonstrates high course satisfaction, as well as areas for future improvement of the Patient Safety Course

Medical students reported strong satisfaction with the course. Overall, students indicated that all 5 learning domains were important for their training, with percent positive scores ranging from 90% to 100%. They further agreed that all medical students should complete patient safety education (100%) and become Certified Professionals in Patient Safety (90%). They also indicated that this curriculum had prepared them to become healthcare change agents (90%) (Table 1).

Table 1. Descriptive statistics on Likert-type items from the course satisfaction survey.

Content analysis of open-ended questions provided detailed insight into student experiences (Table 2). During class meetings, students appeared to find considerable value in the flipped classroom approach. Discussion of course content and answer rationale, as well as hearing patient stories, helped them identify areas for more in-depth self-study and solidified their understanding of core content. However, students also asked for a larger bank of practice questions, with dedicated discussion time, to strengthen their ability to answer questions through cognitive discernment.

Table 2. Areas of strength and opportunity identified on content analysis of the course satisfaction survey.

Students were concerned about their limited knowledge of key regulatory and accrediting organizations, as well as of safety science. They found supplemental articles and a professor-generated index of key terminology highly valuable to their learning experience. However, most student feedback in this area recommended additional class time to cover these concepts.

The last area of feedback pertained to overall course management. Opinions about the class meeting schedule were mixed, with some students appreciating the flexibility of class times and others reporting that inconsistent class times impeded their learning. Students also commented that handling the logistics of certification exam registration and addressing individual student issues consumed valuable class time.

Discussion

The purpose of this innovative pilot was to investigate whether qualified U.S. undergraduate medical students could prepare for and complete the CPPS™ certification exam in 8 weeks or less. Results revealed that the pilot enhanced participants’ knowledge of the 5 patient safety domains covered in the IHI CPPS™ Online Review Course. Additionally, the first-time pass rate among course participants exceeded the current national average. This intensive approach to the need for more patient safety curriculum in medical school, outlined in the aforementioned Unmet Needs report, appears to have been successful.8 Employing a flipped classroom model, a faculty expert in patient safety, and IHI’s pre-existing materials adequately facilitated knowledge transfer and content assimilation.

To enhance medical students’ knowledge and application of patient safety science, we believe the CPPS™ Review Course should be considered a new standard in the undergraduate medical education curriculum. Student feedback should be taken into account in future iterations of the Patient Safety course. Students found that the flipped classroom model facilitated learning, and they emphasized that face-to-face discussions with patient safety experts were important in shaping their understanding. Systematic reviews of the flipped classroom model in higher education consistently find better academic outcomes than with traditional lecture-based learning.11-13 Making students responsible for learning background information before class shifts the instructors’ role to refining students’ knowledge base and providing expert-level cognitive strategies for problem-solving. This strategy likely helps explain how students became proficient in patient safety content within such a concentrated timeframe.

Interestingly, students also indicated that the pilot’s 5-week timeframe could be condensed, offering up to 2 modules per week. Other suggested improvements included integrating more case studies, patient scenarios, and simulation activities. In addition, students felt they lacked foundational background and context in several areas (ie, regulation, accreditation, and terminology), which steepened their learning curve throughout the course. This gap illustrates the critical need for patient safety education in undergraduate medical training. Of note, this pilot study was limited by its small sample size; therefore, its findings should be generalized with caution until they can be validated in larger groups.

In conclusion, this study demonstrates the first known successful implementation of the CPPS™ curriculum with medical students at the academic level. This pilot takes the first step toward a future vision of patient safety education by equipping medical students with critical knowledge to systematically improve healthcare. We also believe this additional certification will give our graduates a higher level of mastery of the Accreditation Council for Graduate Medical Education (ACGME) Milestones in the domain of Patient Safety.

Footnotes

Acknowledgements

The authors would like to thank SaferCare Texas for providing the scholarships for students’ course materials and certification examination. Certified Professional in Patient Safety (CPPS) is a registered trademark of the Certification Board for Professionals in Patient Safety; all rights reserved. The Institute for Healthcare Improvement (IHI) is an educational partner of the Certification Board for Professionals in Patient Safety; all rights reserved.

Funding:

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The scholarships for students’ course materials and certification examination were paid by SaferCare Texas, a department of the University of North Texas Health Science Center.

Declaration of conflicting interests:

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Author Contributions

All authors listed on this manuscript made substantial contributions to designing experiments, acquiring and analyzing data, and generating the final manuscript, and all approved the final version for publication. All authors agree to be accountable for all aspects of the work, ensuring that questions related to the accuracy or integrity of any part of the work are appropriately investigated and resolved.

Data Availability Statement

De-identified data underlying the results presented in this article can be made available to investigators who provide a methodologically sound proposal and/or evidence of approval for their data use by an independent review committee (learned intermediary). All inquiries should be directed to