Abstract

Objectives:

This study describes the results of NBME (National Board of Medical Examiners) implementation in Balamand Medical School (BMS) from 2015 to 2019, after major curricular changes were introduced as of 2012. BMS students’ performance was compared with the international USMLE step 1 (United States Medical Licensing Examination, herein referred to as step 1) cohorts’ performances. The BMS students’ NBME results were analyzed over the successive academic years to assess the impact of the serial curricular changes that were implemented.

Methods:

This longitudinal study describes the performance of BMS preclinical second-year medicine (Med II) students on all their NBME exams over 4 academic years, from 2015-2016 to 2018-2019. These scores were compared with the step 1 comparison group scores using item difficulty. A t test was computed for each NBME exam to check whether the score differences were significant.

Results:

Results revealed that all BMS cohorts scored lower than the international USMLE step 1 comparison cohorts in all disciplines across the 4 academic years except Psychiatry. However, the BMS results progressively approached the step 1 results, and by year 4 the difference between step 1 scores and BMS students’ NBME scores had narrowed and was no longer significant.

Conclusions:

The results of the study are promising. They show that the serial curricular changes enabled BMS Med II students’ scores to reach the international cohorts’ scores after 4 academic years. Moreover, the absence of a statistically significant difference between cohort 4 scores and step 1 cohort scores is not module dependent and applies to all clinical modules. Further studies should be conducted to assess whether the results obtained for cohort 4 can be maintained.

Introduction

Medical education has evolved over the years from “didactic lectures, with each individual course delivering information on a sub-set of individualized topics related to the specific department where the course was instructed”1(p2) toward more integration between the basic and clinical science courses. The purpose of this shift is to develop medical students’ understanding of the residency requirements and their aptitude in medicine,1,2 on the one hand, and their analytical reasoning2,3 and critical thinking skills,4 on the other. These major curriculum changes are challenging and affect all aspects of the curriculum, from teaching methodology to assessment techniques.3,5-7

Medical schools are adapting their curricula and redefining the fundamental skills and abilities by including clinical reasoning skills and implementing new teaching methodologies that enhance critical thinking in the medical practitioner.4 Medical education is currently changing to integrate clinical skills as early as the first preclinical year (Med I).1,8 Similarly, some curricular changes focus on how didactic instruction can be combined or underpinned with case-based learning, problem-based learning, team-based learning, active learning, and independent learning exercises.1 The value of illustrating teaching points with actual or simulated cases, known as case-based learning, is well recognized.9 Such integration of clinical and basic science courses has provided students with broader knowledge with which they can accurately diagnose and treat patients. Curricular change involves new teaching methodologies as well as appropriate assessment practices that support and measure the new desired student outcomes.10

Background

Changes to the curriculum

The integration of clinical reasoning into the curriculum has improved the quality of medical education and helped students transition from medical school into the workplace.1,8 In line with its pursuit of “excellence in medical education and patient care” and a commitment to a “dynamic curriculum,” Balamand Medical School (BMS) has been progressively revising its curriculum since 2012 to move away from more traditional “rote” learning and memory-based content approaches toward a hybrid curriculum with both didactic and problem- and case-based learning approaches. While such revisions have been studied globally11 and, to a lesser extent, in Lebanon (for example, at the American University of Beirut),12 there is still considerable room for research in the Lebanese and Middle Eastern contexts as well as in the area of measuring assessment practices.

At BMS, sequential changes were implemented in the Med II curriculum as of 2012 as outlined in Figure 1.

Figure 1. Med II curricular changes at BMS as of 2012.

In 2012, the traditional subject-based (didactic) courses were modified or replaced by organ-based modules. The modified curriculum was designed to include organ-based modules, didactic lectures, and problem-based learning. In 2014-2015, a clinical skills module running throughout the year was integrated into Med II to introduce medical students to practical clinical experience early on and stimulate self-learning practices. In 2017-2018, simulation of Nursing Skills was implemented in Med II. In 2018-2019, more simulation courses were added to the Med II curriculum, such as Basic Life Support, Advanced Cardiac Life Support, Pharmacology of Cardiac Emergencies, Airway Management, and Ear and Prostate Examinations (normal and pathologic), along with Ultrasound of Breast Pathologies and First Trimester Normal Pregnancy.

The BMS Med II hybrid curriculum was revised to ensure the optimization of clinical reasoning, evaluation, analysis, and inference among medical students as main competencies to be achieved. The desired outcomes from the curricular changes were that medical students become able to seek information, to integrate their learning with practical cases, and to critically and analytically reason about cases (ie, move away from memorization), as such outcomes serve as precursors to an accurate diagnosis.

Studies show that implementing simulations in medical education helps future practitioners develop communication, nontechnical, and diagnostic skills and speeds up decision-making processes13,14; it also strengthens leadership and teamwork dynamics.13-15

Changes to assessment practices

In 2015, the Med II teaching committee chose to adopt the National Board of Medical Examiners (NBME) examinations because they matched the hybrid nature of the curriculum revised in 2012, which focused on developing clinical analytical reasoning skills. As the curriculum changed, the nature of the exams needed to change as well.3,11,16,17 Assessment procedures had to reflect the shift from memorization to critical thinking and prepare students for lifelong practice.18 It was with this rationale that the NBME was adopted to monitor changes in performance and compare our curricula in light of international standards.19

Curricular changes necessitate using valid assessments both to align assessments with learning outcomes and to assess the extent and effectiveness of the changes. 20 Moreover, and as we discuss below, the NBME, as an international standardized assessment, helped raise standards at BMS and focus the curricular transformations toward the desired outcomes.

Prior to NBME implementation, multiple-choice questions (MCQs) generated by BMS faculty were used to assess Med II students. Although MCQs can cover a large amount of content, there are growing concerns regarding the validity of traditional institutionally generated multiple-choice examinations, as such exams usually rely heavily on memorization and recall of knowledge rather than on higher order thinking skills that require the learner to reach a conclusion, make a prediction, or select a course of action.18,21,22 The MCQs also have several weaknesses related to irrelevant difficulty and test-wiseness.23 Correctly answering a question may not reflect the amount of knowledge about a tested topic and may simply relate to better test-taking strategies.24

The NBME allows assessment of clinical reasoning, an essential lifelong skill that enables the medical practitioner to think and make decisions that guide differential diagnosis and plans of action25 with patient safety implications. Teaching medical students clinical reasoning may avoid lethal mistakes.26,27 Clinical reasoning has become one of the most important competencies in health care practitioners’ education and training, and it is highly recommended that this skill be taught to medical students as early as the first preclinical year.26

The NBME questions focus on important concepts that are encountered in real clinical practice and assess the application of knowledge rather than the recall of isolated facts. An “application of knowledge” item is defined as a question that requires the examinee to make a prediction, reach a conclusion, or select a course of action.18,28 These items require higher order skills compared with recall items, which test only examinees’ knowledge of isolated facts without their application.24 The NBME MCQs are of 2 types: true/false items, which require the student to select all the options that are true, and single-best-answer items, which require the student to select 1 answer; the latter are more widely used.23 Examples of these items are “vignettes” that offer information in a table or graph format to assess the examinee’s ability to reach the right conclusion and “problem-solving clinical vignettes” that assess the ability of the examinee to take the most appropriate next step. The NBME also offers the advantage of providing detailed feedback to students regarding their test performance, promoting active learning strategies and guiding future learning. This allows the faculty and students to pinpoint areas of weakness and address them accordingly.

Aim of the study

Innovations in medical education methodology and evaluation differ based on contexts and cultures, and there is no one-size-fits-all curriculum. Medical schools are eager to embrace change, but those changes come at a price of researching these changes as new practices need to be based on reason, evidence, and best practices.3,10 Furthermore, there may be resistance to curricular changes on different levels,3,8,29 such as faculty lack of time to undergo the training in a busy and hectic environment. 29 Rapid changes in medical education are not easy to implement because “educational inertia—that is, the maintenance of the status quo—is a powerful force in most medical schools. Implementing successful educational programs across settings takes time, hard work, and financial resources.”3(p1487) Furthermore, active educational leadership and rigorous research are needed to successfully implement these innovations.3,6

The aim of this study was to describe the results of NBME implementation in Balamand Medical School from 2015 to 2019. BMS students’ performance was compared to international cohorts’ performances. We also analyzed the BMS students’ results over successive academic years to assess the impact of the serial curricular changes that were implemented.

The NBME as a standard assessment tool was implemented to position our students on an international scale as a way to validate our degrees at an international level. However, the exam then became a way to adjust, improve, and modify the curriculum to better reach the learning outcomes. It was hoped that the exam would serve as an external validation tool that would reflect the clinical reasoning potential of our Med II students and the progress of critical thinking with the newly integrated curriculum.

To validate the changes in the curriculum with reference to the NBME results as both a worldwide standard and an internal evaluation tool of our Med II students’ performance, the following research questions were developed to guide the data analysis of the study:

How does the performance of BMS Med II students compare with an international cohort of Med II students on a similarly constructed NBME exam over a 4-year span?

Which modules reflect a wider gap in performance between BMS Med II students and the international scores? How can these gaps be explained?

What BMS curricular changes seem to impact BMS Med II students’ performance?

Methods

The study was conducted over 4 academic years starting in 2015-2016 when NBME was implemented for the first time at BMS. The University of Balamand Institutional Review Board approved the study. A total of 299 Med II students took the NBME exams at BMS. The students were clustered into 4 cohorts, and each cohort represents the Med II students in 1 academic year as seen in Figure 2. Figure 2 represents the number of students in each cohort (academic year) and the cohort yearly cumulative average for Med I, the year before the students started Med II.

Figure 2. Cohorts’ average across the years.

The NBME exams were customized examinations constructed from the NBME question bank according to the clinical modules attended and were reviewed by the respective module coordinators. These exams were administered at the end of each respective clinical module. No internal assessments for the respective modules other than the NBME were administered for these students during Med II. To examine BMS students’ performance, students’ scores on the different NBME tests were compared with the scores of the USMLE step 1 comparison group who took the same questions, using the test item difficulty. Item difficulty represents the percentage of students who responded correctly to the item. The test item difficulty is the average item difficulty of all items in the test. These data are provided in the detailed report issued by the NBME to BMS at the end of each exam.
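As an illustration of the two definitions above, the following minimal sketch computes item difficulty and test item difficulty from a scored response matrix. The response data, the variable names, and the helper functions `item_difficulties` and `mean_item_difficulty` are hypothetical; the actual figures in this study come from the reports issued by the NBME.

```python
from statistics import mean

# Hypothetical scored responses: one row per student, one column per item
# (1 = correct, 0 = incorrect). Illustrative values only, not study data.
responses = [
    [1, 0, 1, 1],
    [1, 1, 0, 1],
    [0, 0, 1, 1],
    [1, 1, 1, 1],
    [1, 0, 0, 1],
]


def item_difficulties(rows):
    """Percentage of examinees who answered each item correctly."""
    n_students = len(rows)
    n_items = len(rows[0])
    return [100 * sum(row[i] for row in rows) / n_students for i in range(n_items)]


def mean_item_difficulty(rows):
    """Test item difficulty: the average item difficulty over all items."""
    return mean(item_difficulties(rows))


difficulties = item_difficulties(responses)
overall = mean_item_difficulty(responses)
print(difficulties, overall)
```

Under this definition a higher value means an easier item, so comparing the test item difficulty of two groups on the same questions amounts to comparing their average percentage of correct answers.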

Med I yearly cumulative averages of each respective cohort were compared using 1-way 4-level analysis of variance (ANOVA) to check whether the students’ performances were similar before entering Med II. The cohorts’ cumulative average was based on each student’s average on all the tests taken in Med I.
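The 1-way ANOVA described above can be sketched as follows. This is a minimal illustration using only the Python standard library; the four lists of scores are invented for the example and the function name `one_way_anova` is hypothetical. It returns the F statistic and degrees of freedom only; obtaining a P value would additionally require the F distribution, which statistical packages provide.

```python
from statistics import mean


def one_way_anova(groups):
    """Return (F, df_between, df_within) for a list of score lists."""
    all_scores = [x for g in groups for x in g]
    grand_mean = mean(all_scores)
    # Between-groups sum of squares: spread of group means around the grand mean.
    ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups)
    # Within-groups sum of squares: spread of scores around their own group mean.
    ss_within = sum((x - mean(g)) ** 2 for g in groups for x in g)
    df_between = len(groups) - 1
    df_within = len(all_scores) - len(groups)
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within


# Hypothetical Med I averages for 4 cohorts (not the study data).
cohorts = [
    [78, 80, 82],
    [76, 78, 80],
    [77, 79, 81],
    [81, 83, 85],
]
f_stat, df_between, df_within = one_way_anova(cohorts)
print(f_stat, df_between, df_within)
```

With 4 cohorts the between-groups degrees of freedom are always 3, which is what "1-way 4-level" refers to.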

Then, for every academic year, a t test was calculated for each NBME test the students took to check whether the difference in scores between our students and the USMLE step 1 comparison group was significant.
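The text does not state which form of the t test was used. As one possible reading, the sketch below assumes a one-sample t test that compares a cohort's scores on a given NBME test against the comparison group's mean treated as a fixed reference value. The scores, the reference mean, and the function name `one_sample_t` are hypothetical.

```python
from math import sqrt
from statistics import mean, stdev


def one_sample_t(scores, reference_mean):
    """Return (t, df) for a one-sample t test against a fixed reference mean."""
    n = len(scores)
    standard_error = stdev(scores) / sqrt(n)  # stdev uses the n - 1 denominator
    t_stat = (mean(scores) - reference_mean) / standard_error
    return t_stat, n - 1


# Hypothetical module scores for one cohort and a hypothetical comparison mean.
cohort_scores = [70, 74, 66, 78, 72]
comparison_mean = 78
t_stat, df = one_sample_t(cohort_scores, comparison_mean)
print(t_stat, df)
```

A negative t statistic indicates that the cohort scored below the reference mean; whether the difference is significant depends on comparing the statistic with the t distribution for the given degrees of freedom.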

In addition, Med II students’ performance over the 4 academic years was compared with the step 1 cohort results for the same years.

Results

First, we looked at the performance of each cohort before the start of the year. A 1-way 4-level between-subjects ANOVA was conducted to compare the means of the students at the end of Med I for each of the 4 cohorts. The difference between the means was statistically significant, P = .013 (see Table 1). Because the results were statistically significant, we ran Tukey post hoc tests to identify where the differences lay.30

Table 1. ANOVA results of means of the students at the end of Med I for each of the 4 cohorts.

Abbreviations: ANOVA, analysis of variance; SS, sum of squares.

The post hoc tests compare each cohort mean with the other cohort means. The results of the post hoc tests are presented in Table 2. The Tukey post hoc tests indicated that the means of cohorts 1, 2, and 3 are not statistically significantly different from each other. In addition, the means of cohorts 1 and 4 are not statistically significantly different. However, the means of cohorts 2 and 3 are statistically significantly different from the mean of cohort 4. These results suggest that before starting Med II, the performance level of cohorts 1, 2, and 3 was similar. However, cohort 4 outperformed cohorts 2 and 3.

Table 2. Results of the post hoc tests on the means of the 4 cohorts.

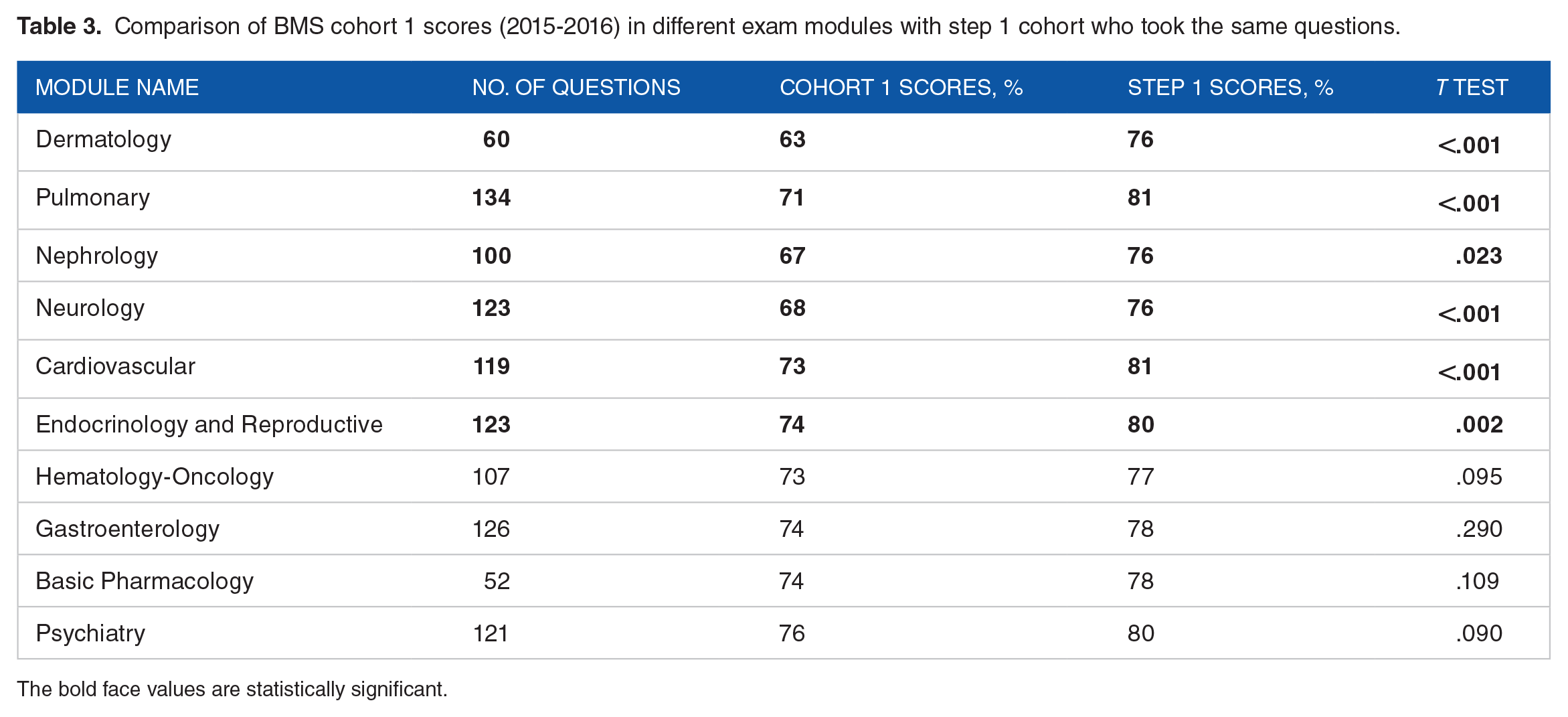

Next, we compared each cohort to the step 1 comparison group using the NBME tests. During 2015-2016, cohort 1 scored lower than the step 1 comparison group in all tests, as depicted in Table 3. This difference was statistically significant (P < .05) in 60% of the modules, namely, Pulmonary, Cardiovascular, Nephrology-Urology, Neurology, Dermatology, and Endocrine and Reproductive.

Table 3. Comparison of BMS cohort 1 scores (2015-2016) in different exam modules with step 1 cohort who took the same questions.

The bold face values are statistically significant.

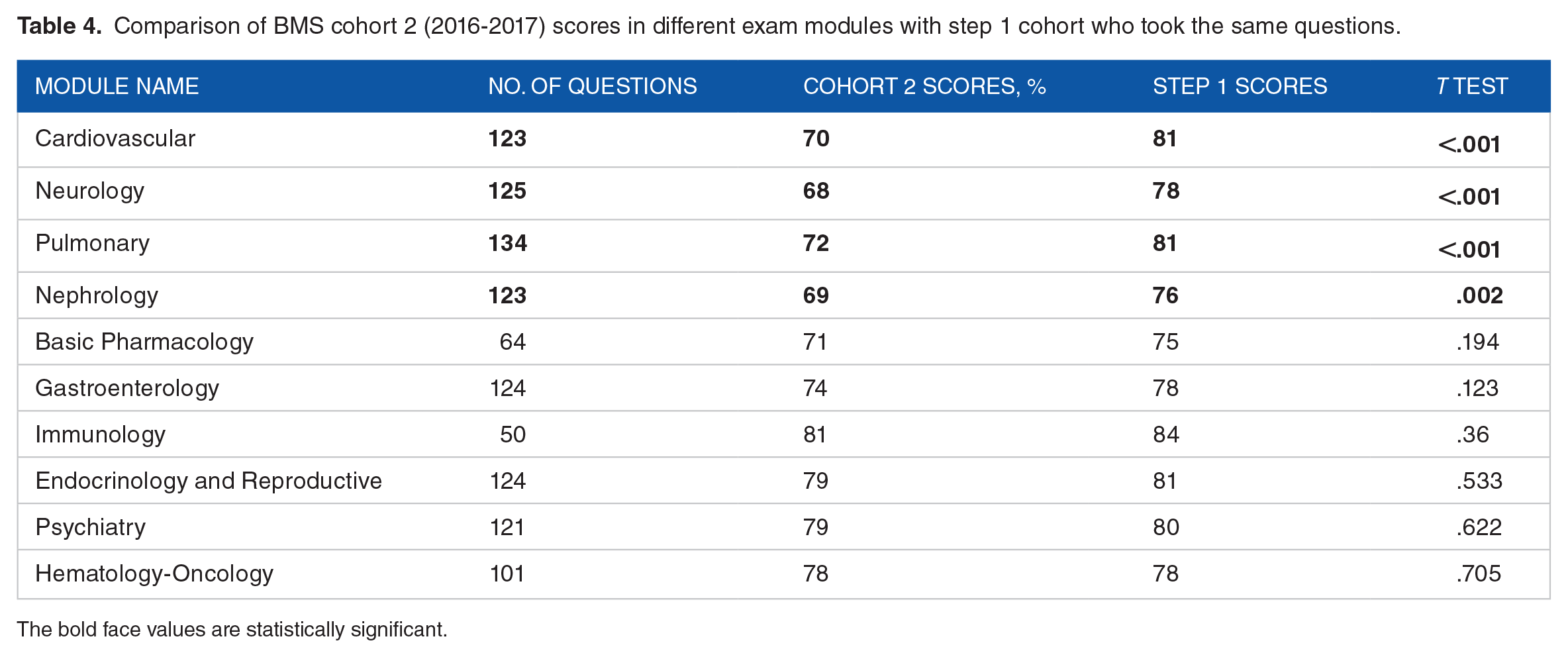

During 2016-2017, cohort 2 scored lower than step 1 cohort in all modules except Hematology-Oncology. This difference was statistically significant only in 40% of the modules: Nephrology-Urology, Pulmonary, Neurology, and Cardiovascular modules. The scores were matching for the 2 groups in Hematology-Oncology, as depicted in Table 4.

Table 4. Comparison of BMS cohort 2 (2016-2017) scores in different exam modules with step 1 cohort who took the same questions.

The bold face values are statistically significant.

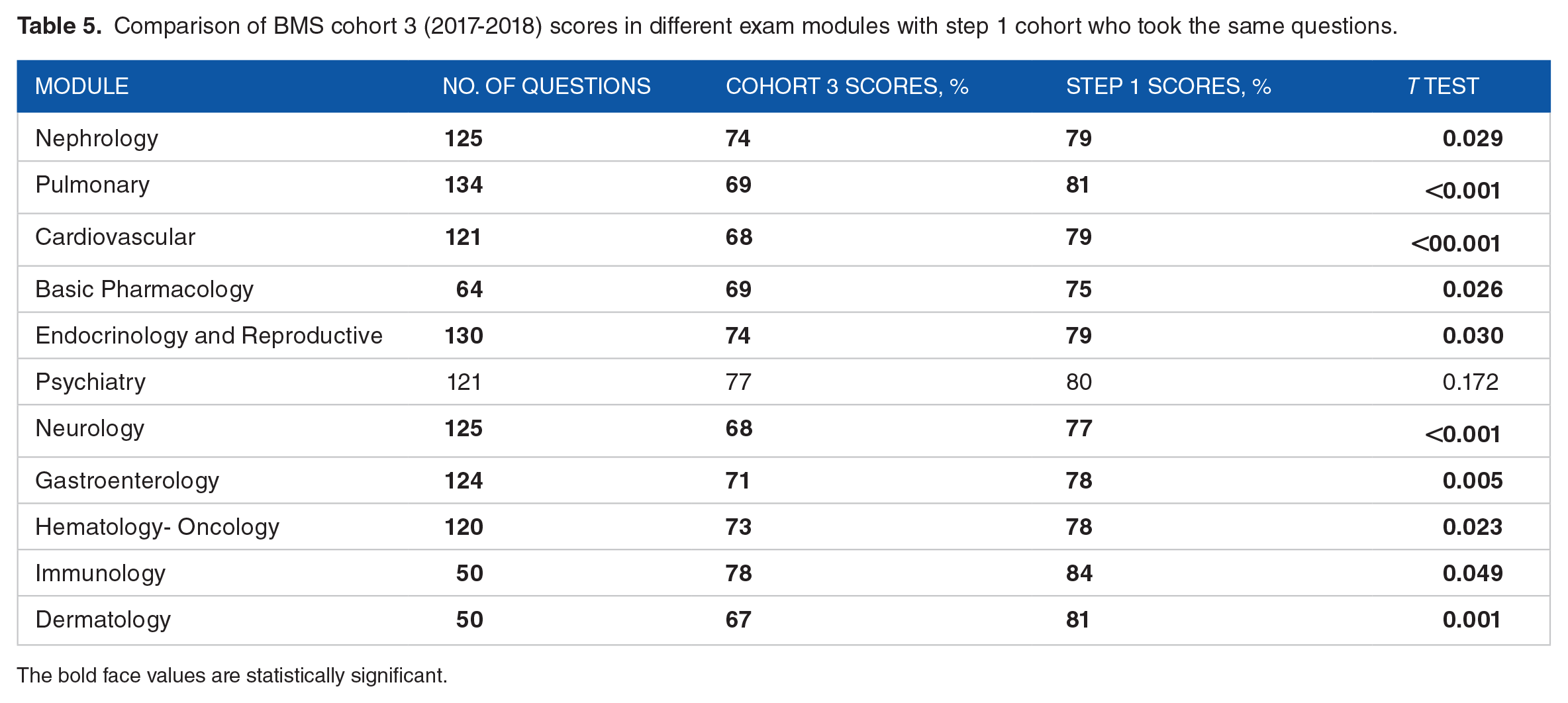

During 2017-2018, cohort 3 scored statistically significantly lower than the step 1 cohort in all modules except Psychiatry, where the score was also lower but the difference was not significant, as shown in Table 5.

Table 5. Comparison of BMS cohort 3 (2017-2018) scores in different exam modules with step 1 cohort who took the same questions.

The bold face values are statistically significant.

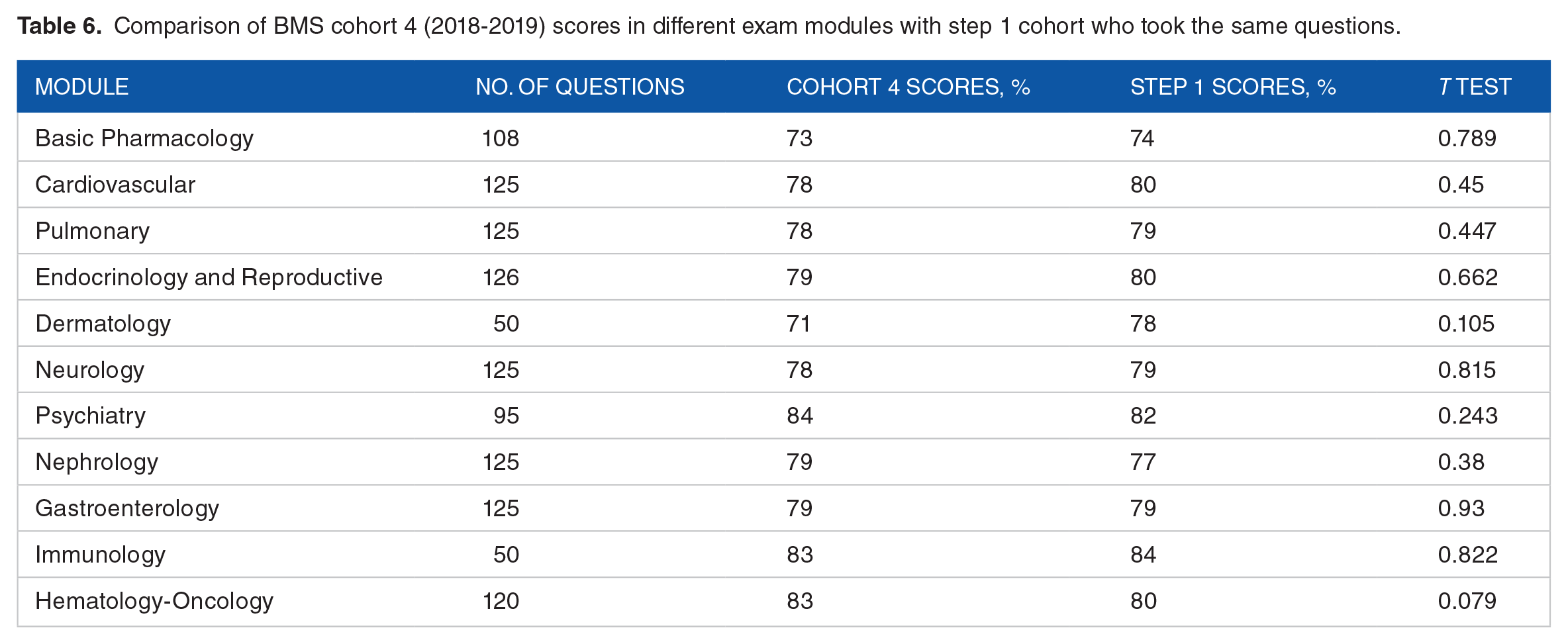

During 2018-2019, cohort 4 scores were lower than the step 1 cohort scores in all modules except Psychiatry, Nephrology, and Gastroenterology; however, the difference was not statistically significant in any of the modules, as shown in Table 6.

Table 6. Comparison of BMS cohort 4 (2018-2019) scores in different exam modules with step 1 cohort who took the same questions.

When comparing BMS students’ overall performance in NBME since its implementation in 2015 until 2019, we notice that it is getting progressively closer to step 1, especially cohort 4, as shown in Graph 1. Cohort 3 values, however, appear to be dropping.

Graph 1. Overall BMS Med II students’ performance in NBME compared with that of step 1 cohorts from 2015 to 2016 (cohort 1), 2016 to 2017 (cohort 2), 2017 to 2018 (cohort 3), and 2018 to 2019 (cohort 4) (see Appendix 1 for Excel sheet to accompany Graph 1).

Discussion

While new curricula and new testing are both time-consuming and costly, the NBME subject exam in medicine has been used in research both to measure the effects of new programs and to compare students’ knowledge-based performances.28 Our results show that all BMS cohorts scored lower than the step 1 comparison cohorts in all disciplines except Psychiatry. The difference was statistically significant for cohorts 1, 2, and 3 but not for cohort 4. The lower performance in the Psychiatry module was statistically significant in cohort 1 only. It is worth noting that performance in Psychiatry was higher than that of the step 1 cohort in 2018-2019, although the difference was not statistically significant.

The results show that it took 4 years after NBME implementation for the difference in NBME scores between BMS and the comparative USMLE cohort to lose statistical significance. This lag period seems logical and reflects the time required for the students and faculty to readjust and adapt to this new assessment tool. It is worth mentioning that cohort 4 was introduced to NBME exams in Med I. This may have positively affected the results of the Med II NBMEs. Moreover, cohort 4 attended more simulation courses than any other cohort, which may have contributed to their enhanced performance compared with the step 1 comparison group in that year.

Medical schools are increasingly using simulation-based education to develop the medical students’ cognitive abilities and interpersonal skills. 14 Accordingly, a growing body of research is examining how the use of simulations impacts both the teaching methodology and the design of assessments in medical education. 31 Integrating simulations into the medical curriculum has been found to improve performance on assessments 31 by enabling medical students to integrate their knowledge with their clinical reasoning skills. This leads to an improvement in the learning curve and better knowledge retention and decision-making, especially in crisis. 14

After 4 years of NBME implementation, BMS average was statistically similar to USMLE step 1 cohorts’ averages, and these results have numerous implications and can be attributed to both academic (curricular and pedagogical) and student factors. Pedagogically, course instructors have modified their teaching methodologies and lectures to encourage more active participation and critical analysis. These factors could have been potential reasons for the improved performance in cohort 4 and are worth studying in more detail. The students themselves over these 4 years became more familiar with NBME exams. Each class may have been able to give better advice about the NBME test-taking skills and best resources to study the material to pass on to their junior cohorts. Examinees who tested in earlier cohorts may memorize important clues about the exams and provide them to subsequent cohorts (even though such conduct is prohibited). This diffusion might have happened at a superficial level when, for example, an examinee later tells a friend something. 31 Moreover, fear of the new NBME may have lessened progressively each year as students realized that this type of assessment was part of the curriculum and “here to stay.”

The performance of cohort 3 on NBME was suboptimal compared with the other 3 cohorts. Although this was not investigated in this study, we believe it is secondary to factors related to the students in this cohort. This may be an indirect indication that the improved performance across time is mainly due to learning and not to students getting used to taking standardized tests.

Previous research in the Lebanese context indicates that changing teaching practices at the university level faces different challenges such as faculty’s concerns that covering the required material will be jeopardized, their beliefs about what represents good and effective teaching in the sciences and medical school, and the limited professional preparation that faculty receive. 32 Moreover, literature has proposed that in addition to the aforementioned challenges, tension may exist between the professional identity of scientists, science professors, and pedagogical reform, and that the challenge of addressing such tensions remains a complicated issue. 33 In this study, adopting the NBME as a change in assessment practices and the other curricular changes such as incorporation of simulations seemed to motivate instructors to change their practices. Therefore, curricular change needs to be gradual, providing sufficient time for faculty to adopt it, 34 and importantly, curricular changes need to be aligned with valid assessments that monitor the desired outcomes. Utilizing assessments like the NBME provides a mechanism for faculty to receive constant feedback on students’ progress toward desired and valuable outcomes. Our study shows that when the faculty is able to note relations between changing practices and students’ outcome, they are in a better position to address the challenges identified earlier. 35 Results of this research and our students’ recent results suggest that actively aligning curriculum changes with assessment practices facilitates curricular reforms by pinpointing the misalignments and pushing changes to reach the desired and previously missed learning outcomes.

One limitation is the limited time span of this longitudinal study. While the difference between BMS Med II cohort 4 scores and the step 1 comparison scores is not significant, results from subsequent academic years are not yet available to show whether this outcome is sustainable. Further research should continue to measure the impact of the curricular changes. Another limitation is that this study does not address the factors that could have contributed to the lower performance observed in cohort 3.

Conclusion

Implementing NBME in BMS was a challenge for both the students and faculty. The results of our study are promising, though, as they demonstrate that the progressive curricular changes enabled BMS Med II students’ scores to catch up with the international cohorts after 4 academic years. Moreover, the absence of statistical difference between cohort 4 scores and step 1 cohorts is not module dependent and applies to all clinical modules.

Further studies should be conducted to assess whether the results obtained for cohort 4 can be maintained in future years. It is worth studying the scores of these students in step 1 and correlating them with their Med II NBME scores.

Do NBME scores indicate curricular success or does NBME instigate curricular changes?

Sustainable long-term change requires assessments that can first support and later help evaluate the effects of the changes for continuous improvement. Our study shows that curricular changes should be supported by changes in assessment practices and that assessment results may take longer to show a clear indication of improvement. Our results should encourage other medical schools undergoing curricular changes to look at long-term goals. Curricular changes need modifications in teaching methodology and in content per module, as well as changes in assessment practices. While this study focuses on assessment as a successful indicator of change, the other changes in the curriculum need equal exploration. For better validity, NBME implementation data from other international and Lebanese medical schools using similar measurements should be investigated.

Footnotes

Appendix

Excel sheet to accompany Graph 1 (formatting purposes only).

| Cohorts’ labels | BMS | Step 1 |
|---|---|---|
| 1 | 70.7% | 78.5% |
| 2 | 74.5% | 79.4% |
| 3 | 71.6% | 79.2% |
| 4 | 78.6% | 79.3% |
| Grand total | 74.0% | 79.1% |
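To make the trend in the table easier to read, the short sketch below computes the gap in percentage points between the Step 1 comparison score and the school's score for each cohort. The per-cohort percentages are transcribed from the table above, with the first score column taken to be the BMS average; the variable names are illustrative.

```python
# Percentages transcribed from the appendix table: cohort -> (BMS, Step 1).
scores = {
    1: (70.7, 78.5),
    2: (74.5, 79.4),
    3: (71.6, 79.2),
    4: (78.6, 79.3),
}

# Gap in percentage points (Step 1 minus BMS), rounded to 1 decimal place.
gaps = {cohort: round(step1 - bms, 1) for cohort, (bms, step1) in scores.items()}

for cohort, gap in gaps.items():
    print(f"Cohort {cohort}: {gap} percentage points below Step 1")
```

The gap narrows from 7.8 points in cohort 1 to 0.7 points in cohort 4, with cohort 3 (7.6 points) interrupting the trend, consistent with the pattern described for Graph 1.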

Acknowledgements

The authors gratefully acknowledge the invaluable contributions of Ms. Rania Najjar, Senior Data Analyst, University of Balamand, for her support in the data analysis.

Author Contributions

All the authors were involved in conception, literature review, drafting and revising of the manuscript. All the authors approved the final manuscript.

Funding:

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests:

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.