Abstract

Background

There is considerable debate about the two most commonly used scoring methods, namely, the formula scoring (popularly referred to as negative marking method in our environment) and number right scoring methods. Although the negative marking scoring system attempts to discourage students from guessing in order to increase test reliability and validity, there is the view that it is an excessive and unfair penalty that also increases anxiety. Feedback from students is part of the education process; thus, this study assessed the perception of medical students about negative marking method for multiple choice question (MCQ) examination formats and also the effect of gender and risk-taking behavior on scores obtained with this assessment method.

Methods

This was a prospective multicenter survey carried out among fifth year medical students in Enugu State University and the University of Nigeria. A structured questionnaire was administered to 175 medical students from the two schools, while a class test was administered to medical students from Enugu State University. Frequencies, percentages, and the chi-square test were used to analyze categorical variables. Analysis of variance was used to analyze continuous variables.

Results

Inquiry into assessment format revealed that most of the respondents preferred MCQs (65.9%). One hundred and thirty students (74.3%) had an unfavorable perception of negative marking. Thirty-nine students (22.3%) agreed that negative marking reduces the tendency to guess and increases the validity of the MCQ examination format in testing knowledge content of a subject compared to 108 (61.3%) who disagreed with this assertion (χ² = 23.0, df = 1, P < 0.001). The median score of the students who were not graded with negative marking was significantly higher than the score of the students graded with negative marking (P = 0.001). There was no statistically significant difference in the risk-taking behavior between male and female students in their MCQ answering patterns with negative marking method (P = 0.618).

Conclusions

In the assessment of students, it is more desirable to adopt fair penalties for discouraging guessing rather than excessive penalties for incorrect answers, which could intimidate students in negative marking schemes. There is no consensus on the penalty for an incorrect answer. Thus, there is a need for continued research into an effective and objective assessment tool that will ensure that the students’ final score in a test truly represents their level of knowledge.

Background

Multiple choice questions (MCQs) are often used in medical education to assess knowledge of a subject or course content.1–3 Apart from the ease of script grading, this form of assessment has the advantage of testing wide areas of knowledge encountered in medical education. Furthermore, MCQs that are well planned and delivered have desirable psychometric properties and are a viable and effective alternative to assess the critical-thinking skills of students.3–5

Despite its popularity, there are constant research efforts to exploit the strengths and mitigate the weaknesses of MCQs. One such weakness is the scoring system. There is considerable debate about the two most commonly used scoring systems. The first is the formula scoring method 6 popularly referred to as negative marking method in our environment. The other is the number right (nonnegative marking) scoring method.7,8

In the number right scoring method, the correct answers are awarded a positive point, while incorrect and omitted answers are given no point. 3 The negative marking scoring system attempts to discourage students from guessing in order to increase test reliability and validity. 9 A correct response results in a positive score and omitted items result in no mark, while marks are lost for incorrect answers.10,11 The number right scoring method was recommended by Frary 12 because he believed that penalizing students may induce psychological factors that could influence their decision to omit questions on which they have partial knowledge. Conversely, other authors suppose that with this scoring method, students can answer correctly through guessing, which introduces a random factor into test scores that lowers reliability and validity.13,14 Guessing the correct answer in the assessment can increase the total mark even when a candidate does not possess the required knowledge, but good clinical practice does not include random guessing when unsure. 15
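The two scoring rules described above can be sketched in a few lines of Python. This is only an illustration, not the scoring procedure of any particular examination: the function names, the `penalty` parameter, and the encoding of responses as `True` (correct), `False` (incorrect), or `None` (omitted) are assumptions introduced here, and the answer sheet is hypothetical.

```python
from fractions import Fraction
from typing import Optional, Sequence


def number_right_score(responses: Sequence[Optional[bool]]) -> int:
    """One point per correct answer; incorrect and omitted items score zero."""
    return sum(1 for r in responses if r is True)


def formula_score(
    responses: Sequence[Optional[bool]],
    penalty: Fraction = Fraction(1),
) -> Fraction:
    """One point per correct answer, minus `penalty` per incorrect answer.

    Omitted items (None) neither gain nor lose marks.
    """
    correct = sum(1 for r in responses if r is True)
    incorrect = sum(1 for r in responses if r is False)
    return correct - penalty * incorrect


# Hypothetical answer sheet: 6 correct, 3 incorrect, 1 omitted.
sheet = [True] * 6 + [False] * 3 + [None]

print(number_right_score(sheet))             # 6
print(formula_score(sheet))                  # 3    (penalty of 1 mark)
print(formula_score(sheet, Fraction(1, 4)))  # 21/4 (penalty of 1/4 mark)
```

Under the number right rule the three wrong answers cost nothing, whereas under negative marking the same sheet loses between 3/4 of a mark and 3 marks depending on the penalty chosen.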

The standard negative mark for an incorrect answer is one that will give an expected score of zero if the candidate ticks all answers in a test randomly, and for this to happen, the penalty for an incorrect answer should be 1/(n - 1), where n stands for the number of choices. 16 However, even among proponents of negative marking, there is confusion as to the amount of negative marks for an incorrect answer. 16 Ahmed and Michail 17 reported that a minus 1 mark penalty is a genuine reflection of inadequate performance rather than bad evaluation and recommended it over a minus 1/4 penalty for an incorrect answer. In the same vein, other authors are of the opinion that an appropriate penalty that would effectively discourage guessing should exceed the standard penalty of 1/(n - 1).7,8,18
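The 1/(n - 1) penalty follows from setting the expected mark for a blind guess to zero: with n options, a random tick is correct with probability 1/n and incorrect with probability (n - 1)/n, so the expected mark per item is 1/n - p(n - 1)/n, which is zero when p = 1/(n - 1). The sketch below checks this arithmetic with exact fractions; the function names are illustrative assumptions, and one mark per correct answer is assumed.

```python
from fractions import Fraction


def standard_penalty(n_options: int) -> Fraction:
    """Penalty per wrong answer that makes blind guessing score zero on average."""
    return Fraction(1, n_options - 1)


def expected_guess_score(n_options: int, penalty: Fraction) -> Fraction:
    """Expected mark per item for a random tick among n_options choices.

    Assumes one mark for a correct answer and `penalty` marks lost otherwise.
    """
    p_correct = Fraction(1, n_options)
    return p_correct - (1 - p_correct) * penalty


for n in (2, 4, 5):
    p = standard_penalty(n)
    print(n, p, expected_guess_score(n, p))
# 2 1 0
# 4 1/3 0
# 5 1/4 0

# A heavier penalty than the standard one makes blind guessing lose marks on
# average, e.g. minus 1 mark on a five-option item:
print(expected_guess_score(5, Fraction(1)))  # -3/5
```

With five options, the standard penalty is 1/4 of a mark, while a penalty of minus 1 mark gives an expected loss of 3/5 of a mark for every blind guess.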

Since the adopted methods for medical licensing examinations vary among countries, there is no consensus as to whether a penalty for wrong answers should be applied in medical examinations. 8 None of these methods can fulfill the requirements of a perfect scoring system for the assessment of knowledge, and available research hardly offers alternative methods. 7 Medical examinations in Nigeria and the West African subregion try to strike a balance by combining the two methods of MCQ marking.

Feedback from students is part of the education process, and there is a dearth of information concerning their perception of negative marking. Duffield and Spencer 19 commented that while students’ views about the fairness of specific assessment tools may sometimes be at variance with published research on assessment, their perceptions are important because they influence the acceptability of an assessment instrument. They also recommended that students’ views on assessment should be sought systematically as part of the process of course evaluation.

It has been suggested that different marking methods can influence students depending on their personality traits 20 and also that negative marking places risk-averse students at a disadvantage.7,21,22 Gender preferences for particular types of assessment have produced considerable debate, but there is little empirical research in the educational literature.23,24 As female students are in general more risk averse, marking methods can introduce an unwanted gender bias. 20 Ng and Chan 25 reported that MCQ tests may also introduce gender bias in test performance dependent on subject test area, instruction/scoring condition, and question difficulty. However, Bond et al 26 found no significant gender difference in performance between different MCQ formats under negative marking conditions. Further research into objective questioning, negative marking, and gender bias has been recommended in order to stimulate informed debate and reduce prejudicial attitudes. 27

The research question asked in this study is whether, in Enugu, South East Nigeria, medical students’ test scores in the MCQ examination format are affected by perception, gender, and risk-taking behavior. Therefore, this study sought to assess the perception of medical students concerning the negative marking scoring method and the effect of gender and risk-taking behavior on scores obtained from multiple choice assessment with the negative marking scoring method. It is hoped that the findings will stimulate more interest and informed debate concerning this assessment tool and enhance the student experience.

Methodology

This was a prospective multicenter survey carried out among fifth year medical students in Enugu State University and the University of Nigeria. The study was carried out over a 2-month period from September to October 2015 at the two study centers. Ethical approval was obtained from the Research and Ethics Committee of Enugu State University before commencement of data collection. The ethical clearance obtained from Enugu State University was accepted by the University of Nigeria. Written informed consent was also obtained from the students before enrollment.

The study took place in two stages. In the first stage, a structured questionnaire was administered to 175 medical students. Thirty-seven out of a class of 42 students were enrolled from Enugu State University giving a response rate of 88.1%, while the remaining 138 were enrolled from a class of 194 in the University of Nigeria giving a response rate of 71.1%. Purposive sampling was used to recruit students who gave consent to participate in the study. Information on examination format preference by students and their experiences with negative marking were ascertained. Questions on students’ opinions and perceptions of negative marking in MCQ examinations were asked, and responses were recorded on a 5-point Likert scale, namely, strongly agree, agree, neutral, disagree, and strongly disagree. These responses were then recategorized into positive (strongly agree and agree), negative (strongly disagree and disagree), and not sure (neutral), as appropriate to the way each question was structured.
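The recategorization of Likert responses can be sketched as a simple mapping. This is an illustrative sketch only: the function name and the `reverse_worded` flag are assumptions introduced here to represent items whose wording runs against the construct (where agreement would count as negative); the study reports only that recoding depended on how each question was structured.

```python
LIKERT_SCALE = (
    "strongly agree",
    "agree",
    "neutral",
    "disagree",
    "strongly disagree",
)


def recategorize(response: str, reverse_worded: bool = False) -> str:
    """Collapse a 5-point Likert response into positive / negative / not sure.

    `reverse_worded` is a hypothetical flag for items where agreement
    indicates a negative view, so the two poles are swapped.
    """
    answer = response.strip().lower()
    if answer not in LIKERT_SCALE:
        raise ValueError(f"Unknown Likert response: {response!r}")
    if answer == "neutral":
        return "not sure"
    agrees = answer in ("strongly agree", "agree")
    if reverse_worded:
        agrees = not agrees
    return "positive" if agrees else "negative"


print(recategorize("Strongly agree"))                  # positive
print(recategorize("disagree"))                        # negative
print(recategorize("neutral"))                         # not sure
print(recategorize("agree", reverse_worded=True))      # negative
```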

In the second stage, a class test was administered to medical students from Enugu State University based on a series of pediatric lectures. Of the 37 students from Enugu State University who initially responded to the questionnaire interview, 28 were present on the day the test was administered. They were informed that number right scoring method would be used to evaluate their answers. Three weeks later the same test was administered to the same set of students without prior notice. For this test, the students were informed that negative marking method would be applied with a penalty of minus 1 mark for every wrong answer.

In order to minimize the effect of learning that would have occurred between the administration of the two tests, the following steps were taken: (1) The results were not discussed after the first test. (2) The questions were projected on a screen for the first test to eliminate later use. (3) The tests were not expected because they were not part of the curriculum, and there was no prior warning before any of the tests.

Data analysis

All the data obtained were recorded and analyzed using the Statistical Package for Social Sciences (SPSS) version 20.0. Frequencies, percentages, and the chi-square test were used to analyze categorical variables. Analysis of variance was used to analyze continuous variables. Results were presented as percentages, and statistical significance was set at a P-value of <0.05.

Results

One hundred and seventy-five medical students in their second clinical year (ie, fifth year in medical school) consented to participate and were enrolled in this study. Sixty-two percent of them were male and the remaining were female students with an overall mean age of 25.55 ± 3.61 years.

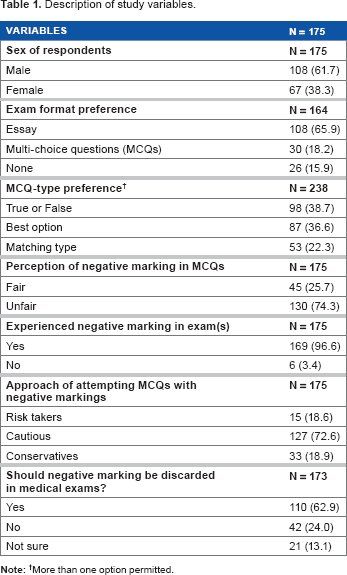

Inquiry into assessment format revealed that 108/164 (65.9%) preferred multiple choice questions (MCQs), 30/164 (18.3%) preferred essay-type questions, while 26/164 (15.9%) had no particular preference (Table 1). Reasons given for MCQs preference included the following: (a) it gives a broader knowledge of a course (23/108; 21.3%), (b) it helps understand a course better because it makes one read “in between lines” (21; 19.4%), (c) it covers a wider area of the course outline during assessment (58; 53.7%), (d) it gives the opportunity to make informed and/or random guesses (5; 4.6%), (e) it eliminates examination marker bias (2/108; 1.9%), and (f) it is more objective and everyone is treated equally during marking (12; 11.1%).

Table 1. Description of study variables.

Note: More than one option permitted.

Eleven (36.7%) of the 30 students who preferred the essay format believed that it is a better way of assessing knowledge because it shows detailed knowledge of a subject content. Eight of these students (26.7%) were of the opinion that MCQs can sometimes be confusing, and seven (23.3%) felt that because students memorize past MCQs, it is not a true test of knowledge.

Of the 175 students enrolled, 169 (96.6%) had taken an MCQ examination in which negative marking was applied (Table 1). The majority (138/169; 81.7%) of these students first experienced negative marking in their third year in medical school (second MBBS class). One hundred and thirty students (74.3%) had a negative perception of negative marking compared to 45 (25.7%) who had a positive perception. Of the 130 students with a negative perception, 80 were male (74.1% of male students) and 50 were female (74.6% of female students), while among the 45 students with a positive perception, 28 were male (25.9%) and 17 were female (25.4%). The proportions of male and female students with negative or positive perceptions of negative marking were not significantly different (P = 0.935).
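The gender comparison above can be checked by hand from the reported counts. The sketch below computes a Pearson chi-square statistic for the 2 × 2 table of gender by perception, using only the Python standard library; it assumes an uncorrected Pearson test (no continuity correction), which is an assumption about how the reported P-value was obtained, and the function name is illustrative. For one degree of freedom, the upper-tail probability of the chi-square distribution equals erfc(√(χ²/2)).

```python
import math


def pearson_chi_square_2x2(table):
    """Pearson chi-square statistic and P-value for a 2 x 2 table (df = 1).

    No continuity correction is applied (an assumption about the analysis).
    """
    (a, b), (c, d) = table
    total = a + b + c + d
    row_totals = (a + b, c + d)
    col_totals = (a + c, b + d)

    chi2 = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            expected = row_totals[i] * col_totals[j] / total
            chi2 += (observed - expected) ** 2 / expected

    # Upper-tail probability of a chi-square variable with 1 degree of freedom.
    p_value = math.erfc(math.sqrt(chi2 / 2))
    return chi2, p_value


# Rows: male, female; columns: negative perception, positive perception.
counts = [[80, 28], [50, 17]]
chi2, p = pearson_chi_square_2x2(counts)
print(f"chi-square = {chi2:.4f}, P = {p:.3f}")  # chi-square = 0.0066, P = 0.935
```

Under these assumptions the result agrees with the reported P = 0.935: the proportion with a negative perception is almost identical in male and female students.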

Thirty-nine students (22.3%) believed that negative marking reduces guess work and increases the validity of the MCQ examination format in testing knowledge content of a subject compared to 108 (61.3%) who disagreed with this assertion (χ² = 23.0, df = 1, P < 0.001). It was also noted that well over half of the students, 155 (88.6%) and 110 (62.9%), respectively, believed that negative marking in MCQs increases anxiety and unfairly penalizes students. The majority of the students (110; 62.9%) agreed that the negative marking system should be discarded in the MCQ examination format, 42 (24.0%) believed it should be retained, while 21 (13.1%) were neutral in their opinion about negative marking in MCQs (Table 1).

When asked to list in order of appropriateness the type of MCQ without negative marking that is fairest in medical school examination, 98 (56.5%), 46 (26.3%), and 30 (17.1%), respectively, approved, disapproved, and were neutral about the “True or False” format. Similarly, 87 (49.9%), 44 (25.1%), and 44 (25.1%), respectively, approved, disapproved, and were neutral about the “Best option” MCQ format, and lastly, the “Matching type” format was approved by 53 (30.3%), while 60 (34.3%) and 62 (35.4%) disapproved and were neutral about it, respectively. Significantly more students preferred the True or False MCQ format to the other two formats of MCQ surveyed in this study (χ² = 10.2, df = 2, P = 0.006).

The pediatrics test was marked with negative marking and number right methods. All 28 students passed the test (score above 50) with a median score of 75 without negative marking, while only six (21.4%) passed the examination when negative marking was applied (median score of 22). The median score of the students without negative marking was significantly higher than their median score with negative marking (P = 0.001).

An assessment of risk-taking behavior with negative marking showed that 15 (8.6%) considered themselves “risk takers” (ie, attempts all questions irrespective of knowledge of the correct answers), the vast majority (127; 72.6%) considered themselves as “cautious” (ie, they will answer questions they are sure of and make an educated guess where possible for others), while 33 (18.9%) considered themselves as being “conservative” (ie, answer only questions they are absolutely sure of).

There were no significant differences in risk-taking behavior between male and female students in their MCQ answering patterns with negative marking method (risk takers [66.7% vs. 33.3%], cautious [63.0% vs. 37.0%], and conservative [54.5% vs. 45.5%], P = 0.618).

The second pediatrics test that was scored with negative marking method (penalty of minus 1 mark for every wrong answer) was analyzed further. The mean comparison of test scores across the various risk-taking behavior groups revealed no significant difference (risk takers [8 ± nil], cautious [26.00 ± 18.73], and conservative [36.67 ± 22.72], F = 1.213, P = 0.314).

The risk-taking behavior of these students also had no significant association with perception or opinion of negative marking or their performance when negative marking method was employed (Tables 2 and 3).

Table 2. Association between risk-taking behavior and perception of negative marking.

Table 3. Association between risk-taking behavior and performance in the second test.

Discussion

This study assessed the impact and perception of negative marking among medical students in two medical schools in Enugu, South East Nigeria.

A significantly greater number of our students had a negative perception of negative marking and felt that it was an excessive and unfair penalty that increases anxiety. Goonewardene 28 also observed a consistent disapproval of negative marking among the same set of students he surveyed when they were in the second year and again when they were in the fourth year.

With negative marking applied, the majority of students in this study (72.6%) self-identified their guessing patterns as “cautious” (ie, they will answer questions they are sure of and make an educated guess if possible for others). On the other hand, 15 (8.6%) consider themselves “risk takers” (ie, they will attempt all questions). However, when negative marking was not applied, all students admitted being risk takers. This phenomenon in which students who should not have been selected get selected was described as “gate crashing” by Karandikar 11 and rewards partial knowledge of topics. 26

This study revealed no significant association between risk-taking behavior and mean test scores or performance. This suggests that this personality trait places no undue advantage or disadvantage on any particular group and further strengthens the role of MCQs as a fair and balanced tool for assessing the critical-thinking skills of students.

Interestingly, most of the students preferred the True or False format to the Best option and the Matching type MCQ formats. This may be related to the ease of making a correct guess in the True or False compared to other MCQ types. In the True or False MCQ format, the probability of making a correct guess is always 50%, while in other MCQ formats, this probability gets much lower depending on the number of options one is to choose from.

A significant number of the students (61.3%) did not agree with the assertion that negative marking reduces guess work. This interesting observation is probably a reflection of the “cautious” approach adopted by most of them. This study found no significant differences between male and female students in their MCQ answering patterns. This is in keeping with other studies that show evidence of gender neutrality in the tendency to take risks.26,29

The degree of aversion to negative marking was so high that most students would like it to be removed from the MCQ examination format, and these views are consistent with reports from other studies.26,28 However, it is more desirable to adopt fair penalties for discouraging guessing in negative marking schemes than excessive penalties for incorrect answers, which could intimidate students.30,31 This view is highlighted in the pediatrics test administered to the students, where the median score without negative marking was significantly higher than the median score with negative marking. Objective assessment of students should therefore strike a balance between the interests of students, who would like to attain success, and the need to test accurate and detailed knowledge of subject content. Though negative marking is less preferred by students, it is the view of the authors that it should not be completely discarded due to its benefits. Rather, a modification with the lesser penalty of one quarter (1/4) as recommended by Hammond et al 16 may be a fairer alternative. In view of this, there is a need for continued research into a more effective and objective assessment tool that will ensure that the students’ final score in a test is a true representation of their knowledge level.

Limitations

The data strongly suggest a high influence of the grading scheme on student performance; however, a potential limitation is how representative the sample was of the population. In addition, despite the precautions taken to minimize bias in the administration of the tests, we are aware that students may have read up on the questions between the two tests, which may have introduced some bias. However, by administering the test first under number right scoring and then under negative marking, we hoped to reduce, though not completely eliminate, this bias.

Conclusions

In the assessment of students, it is more desirable to adopt fair penalties for discouraging guessing rather than excessive penalties for incorrect answers, which could intimidate students in negative marking schemes. There is no consensus on the penalty for an incorrect answer. The authors recommend a fair penalty based on the 1/(n - 1) formula. This may ensure that the students’ final score in a test truly represents their level of knowledge.

Author Contributions

The study was conceived by IKN. The study design, methodology, and questionnaire design were done by IKN, UE, DICO, SNU, and INA. Data collection was done by IKN and SNU. The data analysis and preparation of the results were done by IKN and DICO. There was equal contribution to the discussion by IKN, UE, DICO, SNU, INA, MON, OFA, IBO, JMC, and CJGO. All authors reviewed the final draft of the manuscript. IKN, UE, DICO, and INA are equal authors.

Acknowledgments

The authors wish to express their gratitude to all the medical students who participated in this study. We also wish to thank Ikenna Uche who assisted in the data entry and analysis.