Abstract

Background:

Although the learning environment (LE) is an important component of medical training, few instruments are available to investigate the LE in Latin American and Brazilian medical schools. Therefore, this study aimed to translate, transculturally adapt, and validate the Medical School Learning Environment Scale (MSLES) and the Johns Hopkins Learning Environment Scale (JHLES) for Brazilian Portuguese.

Method:

This study was carried out between June 2016 and October 2017. Both scales were translated and cross-culturally adapted to Brazilian Portuguese, then back-translated and approved by the original authors. A principal components analysis (PCA) was performed for both the MSLES and the JHLES. Test–retest reliability was assessed by comparing the first administration of the MSLES and the JHLES with a second administration 45 days later. Validity was assessed by comparing the MSLES and the JHLES with 2 overall LE perception questions, a sociodemographic questionnaire, and the Depression, Anxiety, and Stress Scale (DASS-21).

Results:

A total of 248 of 334 (74.2%) first- to third-year medical students from a Brazilian public university were included. Principal component analysis generated 4 factors for the MSLES and 7 factors for the JHLES. Both showed good reliability for the total scale (MSLES α = .809; JHLES α = .901), although internal consistency was lower for some subdomains. Concurrent and convergent validity were observed by the strong correlations found between both scale totals (

Conclusions:

Reliability and validity have been demonstrated for both the MSLES and the JHLES. Thus, both represent feasible options for measuring LE in Brazilian medical students.

Introduction

In recent decades, medical education has paid greater attention to medical schools’ learning environment (LE) and to medical students’ perceptions of their LE. 1 In this context, developing valid and reliable instruments to measure and understand the most important aspects of the LE 2 is of major importance, as such instruments allow LE perceptions to be related to many other aspects of the environment and help target more precise interventions that help students avoid or cope with negative environments.

The need for valid instruments to evaluate the LE may be especially important in the Brazilian context. A recent systematic review and meta-analysis of Brazilian medical students 3 found high rates of mental health problems, such as generalized anxiety disorder, depressive symptoms, and burnout, even higher than those of US medical students. 4 In a qualitative study with Brazilian medical students, Tempski et al 5 found that medical schools’ LEs were perceived as contributing to poor student well-being. Furthermore, medical education in Brazil is a growing business compared with its international counterparts and is receiving greater attention from medical education leaders around the world. 6 According to recent data, Brazil has 1 of the largest medical education systems in the world, 6 with private medical schools responsible for more than 50% of student enrollment. 7 Accurate measurement of LE quality is necessary to determine how LE quality varies across this large system and how LE factors could enhance student learning or contribute to poor student well-being.

To our knowledge, only 1 instrument to assess the LE has been translated into Brazilian Portuguese: the Dundee Ready Education Environment Measure (DREEM).8,9 It was developed by Roff et al 10 and is the most published measure of LE perceptions, 2 having been the “gold standard” for measuring the LE for many years. However, the DREEM has drawn criticism for its 50-item length and because some of its items were subject to misinterpretation and had low factor loadings. 11 For example, in the Swedish validation, students failed to understand questions about cheating (a broad question that does not specify in which situation cheating occurs) and about student accommodation (not appropriate for all institutions and students, as some universities do not provide accommodation for their students). 11 Other problems were noted in the Greek validation, in which the items concerning cheating, feedback, and course objectives were misunderstood. 12 These problems have resulted in limited validity evidence for DREEM score interpretation, 2 such that 1 group of authors 2(p1692) concluded that “researchers have been more likely to consider using DREEM because they knew of it, rather than conducting a literature review to find a different tool.”

Thus, scales other than the DREEM are needed to measure LE perceptions in Brazil, in Latin America, and worldwide. Among several available tools, 2 are noteworthy for their wide use, adequate length, and good psychometric properties in previous studies, 2 the Medical School Learning Environment Scale (MSLES) and the Johns Hopkins Learning Environment Scale (JHLES), the latter developed more recently and increasingly used worldwide.13-15 Hence, developing new instruments that evaluate medical schools’ LE, or translating them into Brazilian Portuguese, is timely and important.

Thus, the objective of this study is to translate and adapt 2 LE scales (the JHLES and the MSLES) and to determine whether interpretations of their scores are valid. We chose these 2 instruments because, based on previous studies, they are possibly better options than the DREEM owing to their length and better psychometric properties. Furthermore, having additional scales with different perspectives and variables can help build more solid evidence in this specific field. We also discuss the benefits and limitations of using either the JHLES or the MSLES from several perspectives, such as their psychometric and structural characteristics (presented here and in past studies).

Method

This study was carried out between June 2016 and October 2017. Institutional review board approval was obtained from the Federal University of Juiz de Fora (UFJF) Ethics Committee, and students signed an informed consent form.

Participants and criteria for eligibility

All 334 undergraduate students regularly enrolled in the second (end of first year) through fifth (beginning of third year) semesters of the School of Medicine of the UFJF and present during the classes when scales were administered were invited to participate in this study. Brazilian medical schools have a 6-year curriculum: the first 2 years are preclinical years (mostly classroom activities), followed by 2 years of clinical activity (classroom and hospital activities) and the last 2 years are the clerkship (mostly hospital activities). Students who did not agree to participate or who did not fully complete the questionnaires were excluded from the study.

Procedures

From August to September 2017, 2 authors (A.O.F. and B.N.S.) visited all medical students (n = 334) twice at the end of their classes to distribute the questionnaires. Professors agreed to let a researcher use class time to go over the research process, and the study objectives were explained to all students to obtain their informed consent. Students who voluntarily agreed to participate were given all 5 questionnaires in printed form for the first round of testing and were informed that 45 days later only the JHLES and MSLES would be readministered.

No compensation was provided to participating students. The first round of testing lasted approximately 30 minutes, and the second round took about 15 minutes. To link test and retest data, students were asked to write their national registration number (CPF, in Portuguese) on the sociodemographic questionnaire. Students who filled out the CPF incorrectly in either administration were excluded from the study.

Instruments

The

The

Validity analyses

Validity assessment was divided into the 4 phases described below: (1) translation validity, (2) factor analysis, (3) concurrent validity, and (4) criterion-related validity.

From June to September 2016, we translated and cross-culturally adapted both scales simultaneously. As there is no general consensus on cross-cultural adaptation, the translation and adaptation processes we used were adapted from previous studies and guidelines and are described below.32-34

First, approval to translate both scales was obtained from the original authors. Next, 3 authors (R.F.D., O.S.E., and G.L.), all native Portuguese speakers fluent in English, independently translated both scales from English into Brazilian Portuguese, after which a meeting was held to compare the versions; assess initial conceptual, semantic, and content equivalence; and reach consensus on the translated items for each instrument.

Some slight changes were necessary to make each scale appropriate for Brazilian culture. For example, question 12 of the original JHLES states, “The faculty advisors . . .”; as faculty advising is not a widespread practice in Brazilian universities, we modified this question to read, “Professors and Directors . . .” Other changes were minor, such as in JHLES questions 22 and 23, where “mentor” was translated as “research mentor” (question 22) and “professor” (question 23).

After translation into Brazilian Portuguese, both scales were sent to 2 native English speakers, who performed back-translations independently, resulting in 4 back-translations (2 for the MSLES and 2 for the JHLES). The 2 back-translations of each scale were then sent to that scale’s original authors to create the final back-translated versions. These final English back-translations were then translated into Portuguese by the authors (R.F.D., O.S.E., and G.L.), and both scales were given to a discussion group of 12 Brazilian medical students for pilot testing and response process validity. Minor suggestions on punctuation and scale layout made by the students were incorporated by the Brazilian authors.

To reconcile the data with previous research13,15 and to create a comparison question for concurrent validity purposes, we also carried out the same adaptation process described above for the 2 overall LE perception questions, which resulted in no major changes.

Factor analysis was carried out using SPSS version 21 (IBM) for Microsoft Windows. Principal component analyses (PCAs) for each scale were performed using Varimax rotation with Kaiser normalization to identify the factor structure of the instruments. Eigenvalues greater than 1.0 and Scree plots were used to determine the number of components to retain, and factor loadings of 0.40 or greater were considered satisfactory.
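The retention and rotation steps described above can be illustrated with a minimal Python sketch using NumPy. It is not the authors’ SPSS procedure: it assumes complete numeric item responses in a respondents × items array, extracts components from the item correlation matrix, keeps those with eigenvalues above 1.0 (the Kaiser criterion), applies a standard SVD-based varimax rotation with Kaiser normalization, and checks each item’s highest loading against the 0.40 threshold. The function names (`pca_kaiser`, `varimax`, `assign_items`) and the simulated data are hypothetical, and SPSS may differ in convergence settings and in the sign or order of the rotated components.

```python
import numpy as np


def varimax(loadings, gamma=1.0, max_iter=100, tol=1e-6, kaiser_normalize=True):
    """Orthogonally rotate an (items x components) loading matrix by the varimax criterion."""
    loadings = np.asarray(loadings, dtype=float)
    # Kaiser normalization: scale each item's row to unit length before rotating.
    if kaiser_normalize:
        h = np.sqrt(np.sum(loadings ** 2, axis=1))
    else:
        h = np.ones(loadings.shape[0])
    phi = loadings / h[:, None]
    p, k = phi.shape
    rotation = np.eye(k)
    d = 0.0
    for _ in range(max_iter):
        d_old = d
        lam = phi @ rotation
        target = lam ** 3 - (gamma / p) * lam @ np.diag(np.sum(lam ** 2, axis=0))
        u, s, vt = np.linalg.svd(phi.T @ target)
        rotation = u @ vt
        d = np.sum(s)
        if d_old != 0 and d / d_old < 1 + tol:
            break
    # Undo the normalization so each item's communality is preserved.
    return (phi @ rotation) * h[:, None]


def pca_kaiser(data, min_eigenvalue=1.0):
    """PCA on the item correlation matrix, keeping components with eigenvalue > min_eigenvalue."""
    corr = np.corrcoef(data, rowvar=False)
    eigvals, eigvecs = np.linalg.eigh(corr)  # returned in ascending order
    order = np.argsort(eigvals)[::-1]
    eigvals, eigvecs = eigvals[order], eigvecs[:, order]
    keep = eigvals > min_eigenvalue
    loadings = eigvecs[:, keep] * np.sqrt(eigvals[keep])
    return eigvals, loadings


def assign_items(rotated, threshold=0.40):
    """Index of each item's highest-loading component, and whether that loading meets the threshold."""
    abs_load = np.abs(rotated)
    return abs_load.argmax(axis=1), abs_load.max(axis=1) >= threshold


# Simulated example (not study data): 6 items driven by 2 latent factors, 3 items each.
rng = np.random.default_rng(0)
n = 500
f1, f2 = rng.normal(size=(2, n))
noise = 0.5 * rng.normal(size=(n, 6))
items = np.column_stack([f1, f1, f1, f2, f2, f2]) + noise

eigvals, loadings = pca_kaiser(items)
rotated = varimax(loadings)
factor_of_item, meets_threshold = assign_items(rotated)
print(np.round(eigvals, 2))
print(factor_of_item, meets_threshold)
```

Because varimax is an orthogonal rotation, each item’s communality (the row sum of squared loadings) is unchanged; only the distribution of loadings across components changes. Scree plot inspection is a visual step and is not reproduced here.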

Concurrent validity refers to the degree to which a measure correlates with other measures of the same construct administered at about the same time. In our study, concurrent validity was assessed by correlating the JHLES and the MSLES using Pearson correlation coefficients.

Descriptive statistics were reported for the sociodemographic features, the LE and DASS-21 scales, and the 2 general LE questions. Convergent validity 35 was assessed by calculating Pearson correlation coefficients between the JHLES and MSLES totals and both the 2 overall LE perception questions and the DASS-21 scales. 16

Validity was also assessed through contrasted groups by comparing mean JHLES and MSLES scores across sex, ethnicity, semester of graduation, overall perception of the LE, and school endorsement using independent
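Assuming the 2-group contrasted-group comparisons (for example, by sex) use independent-samples t tests, a minimal standard-library Python sketch of the test statistic and a standardized effect size follows. It swaps in Welch’s variant, which does not assume equal group variances and may differ from the test run in SPSS; the function names and scores are hypothetical. A 2-sided p value could be obtained from the t distribution, for example with `scipy.stats.ttest_ind(a, b, equal_var=False)`; comparisons across more than 2 groups would call for a different test, such as a one-way ANOVA.

```python
import math
from statistics import mean, variance


def welch_t(a, b):
    """Welch's t statistic and Welch-Satterthwaite degrees of freedom."""
    va, vb = variance(a) / len(a), variance(b) / len(b)   # squared standard errors
    t = (mean(a) - mean(b)) / math.sqrt(va + vb)
    df = (va + vb) ** 2 / (va ** 2 / (len(a) - 1) + vb ** 2 / (len(b) - 1))
    return t, df


def cohens_d(a, b):
    """Mean difference divided by the pooled sample standard deviation."""
    na, nb = len(a), len(b)
    pooled_var = ((na - 1) * variance(a) + (nb - 1) * variance(b)) / (na + nb - 2)
    return (mean(a) - mean(b)) / math.sqrt(pooled_var)


# Hypothetical JHLES totals for 2 groups (not study data).
group_a = [92, 88, 101, 85, 97, 90]
group_b = [84, 79, 93, 88, 81, 86]
t, df = welch_t(group_a, group_b)
print(f"t = {t:.2f}, df = {df:.1f}, d = {cohens_d(group_a, group_b):.2f}")
```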

Finally, as the PCA produced slight differences between the original JHLES and the revised Brazilian factor structure, we explored this scale further to verify whether the original version would also be appropriate in the Brazilian context. To do so, we carried out several analyses using both factor structures of the instrument: correlations of the Brazilian and the original American JHLES with sex, ethnicity, semester of graduation, overall perception of the LE, school endorsement, and the DASS. The same approach was not possible for the MSLES, as its PCA yielded different dimensions; therefore, using the American version in this context would not be appropriate.

Reliability

Reliability was assessed in 2 ways. First, we assessed the internal consistency 36 of each instrument using the Cronbach alpha coefficient (95% confidence interval), which typically ranges from 0 to 1; an instrument is considered to have good reliability if Cronbach alpha is greater than 0.7. 37 Second, stability was tested through test–retest reliability over a 45-day period using Pearson correlation and intraclass correlation coefficients (ICCs). Although there is no gold standard interval for test–retest reliability, we chose this period to verify whether stability remained high over a medium-term interval, which would provide stronger support for the instruments’ stability. Data collection took place predominantly in the middle of the semester, a period with few examinations during which the LE is unlikely to change.
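The 2 reliability indices described above can be sketched in Python with NumPy. Cronbach alpha follows its standard definition, α = k/(k − 1) × (1 − sum of item variances / variance of the total score). The text does not state which ICC form was used, so the sketch assumes ICC(2,1) in the Shrout and Fleiss notation (2-way random effects, absolute agreement, single measurement), computed from ANOVA mean squares on a students × occasions matrix of test and retest totals. Function names and the simulated scores are hypothetical, and the 95% confidence interval for alpha is not computed here.

```python
import numpy as np


def cronbach_alpha(items):
    """Cronbach alpha for an (n_respondents x k_items) score matrix."""
    items = np.asarray(items, dtype=float)
    k = items.shape[1]
    sum_item_var = items.var(axis=0, ddof=1).sum()    # sum of item variances
    total_var = items.sum(axis=1).var(ddof=1)         # variance of total scores
    return k / (k - 1) * (1 - sum_item_var / total_var)


def icc_2_1(scores):
    """ICC(2,1): 2-way random effects, absolute agreement, single measurement.

    `scores` is an (n_subjects x k_occasions) matrix, e.g. test and retest totals.
    """
    scores = np.asarray(scores, dtype=float)
    n, k = scores.shape
    grand = scores.mean()
    ss_rows = k * np.sum((scores.mean(axis=1) - grand) ** 2)   # between subjects
    ss_cols = n * np.sum((scores.mean(axis=0) - grand) ** 2)   # between occasions
    ss_error = np.sum((scores - grand) ** 2) - ss_rows - ss_cols
    ms_rows = ss_rows / (n - 1)
    ms_cols = ss_cols / (k - 1)
    ms_error = ss_error / ((n - 1) * (k - 1))
    return (ms_rows - ms_error) / (
        ms_rows + (k - 1) * ms_error + k * (ms_cols - ms_error) / n
    )


# Simulated example (not study data): 100 students, 5 items driven by 1 trait.
rng = np.random.default_rng(1)
trait = rng.normal(size=100)
items = trait[:, None] + 0.8 * rng.normal(size=(100, 5))
test_total = items.sum(axis=1)
retest_total = test_total + rng.normal(scale=2.0, size=100)
print(f"alpha = {cronbach_alpha(items):.3f}")
print(f"ICC(2,1) = {icc_2_1(np.column_stack([test_total, retest_total])):.3f}")
```

Unlike a Pearson correlation, this ICC form penalizes a systematic shift between test and retest, because between-occasion variance enters the denominator.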

All analyses were carried out using SPSS 21 (SPSS Inc.).

Results

From a sample of 334 undergraduate students, 248 students (74.2%) completed the questionnaire and 65 students (19.5%) filled out the retest questionnaires. Table 1 summarizes the main findings concerning sample description and general LE questions.

Sample characteristics and overall learning environment perception questions.

Abbreviations: JHLES, Johns Hopkins Learning Environment Scale; LE, learning environment; MSLES, Medical School Learning Environment Scale.

Significant differences between second versus third (

Medical School Learning Environment Scale

Principal component analysis

The data were appropriate for the PCA procedure, with a Kaiser–Meyer–Olkin measure of 0.818 and a Bartlett test of sphericity of 922.359 (

Principal components analysis of the Medical School Learning Environment Scale (MSLES).

Abbreviation: MSLES, Medical School Learning Environment Scale.
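The sampling adequacy statistics reported above (the Kaiser–Meyer–Olkin measure and Bartlett’s test of sphericity) can be reproduced outside SPSS with a minimal NumPy sketch, assuming a complete respondents × items array. Bartlett’s test uses χ² = −[(n − 1) − (2p + 5)/6] ln|R| with p(p − 1)/2 degrees of freedom, where R is the item correlation matrix. The overall KMO compares squared correlations with squared partial (anti-image) correlations derived from the inverse of R. Function names and simulated data are hypothetical; a p value for the Bartlett statistic could be obtained with `scipy.stats.chi2.sf(chi2, df)`.

```python
import numpy as np


def bartlett_sphericity(data):
    """Bartlett's test of sphericity: approximate chi-square statistic and df."""
    data = np.asarray(data, dtype=float)
    n, p = data.shape
    corr = np.corrcoef(data, rowvar=False)
    _, logdet = np.linalg.slogdet(corr)               # ln|R| (R is positive definite)
    chi2 = -((n - 1) - (2 * p + 5) / 6) * logdet
    return chi2, p * (p - 1) // 2


def kmo(data):
    """Overall Kaiser-Meyer-Olkin measure of sampling adequacy."""
    corr = np.corrcoef(np.asarray(data, dtype=float), rowvar=False)
    inv = np.linalg.inv(corr)
    scale = np.sqrt(np.diag(inv))
    partial = -inv / np.outer(scale, scale)           # anti-image (partial) correlations
    off_diag = ~np.eye(corr.shape[0], dtype=bool)     # ignore the diagonal
    r2 = np.sum(corr[off_diag] ** 2)
    q2 = np.sum(partial[off_diag] ** 2)
    return r2 / (r2 + q2)


# Simulated responses (not study data): 300 respondents, 6 items on 2 latent factors.
rng = np.random.default_rng(2)
f = rng.normal(size=(300, 2))
data = np.repeat(f, 3, axis=1) + 0.7 * rng.normal(size=(300, 6))
chi2, df = bartlett_sphericity(data)
print(f"KMO = {kmo(data):.3f}, Bartlett chi2 = {chi2:.1f}, df = {df}")
```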

Internal consistency

The Cronbach alpha coefficient was 0.809 for the total scale, 0.781 for the Students subscale, 0.688 for Exams, 0.560 for External Problems, and 0.625 for School (Table 2).

Test–retest

The 45-day test–retest comparison resulted in a Pearson correlation coefficient of 0.757 (

Concurrent validity

The MSLES Global Score showed a significant (

Convergent validity

The MSLES Global Score showed a significant (

Johns Hopkins Learning Environment Scale (JHLES)

Principal components analysis

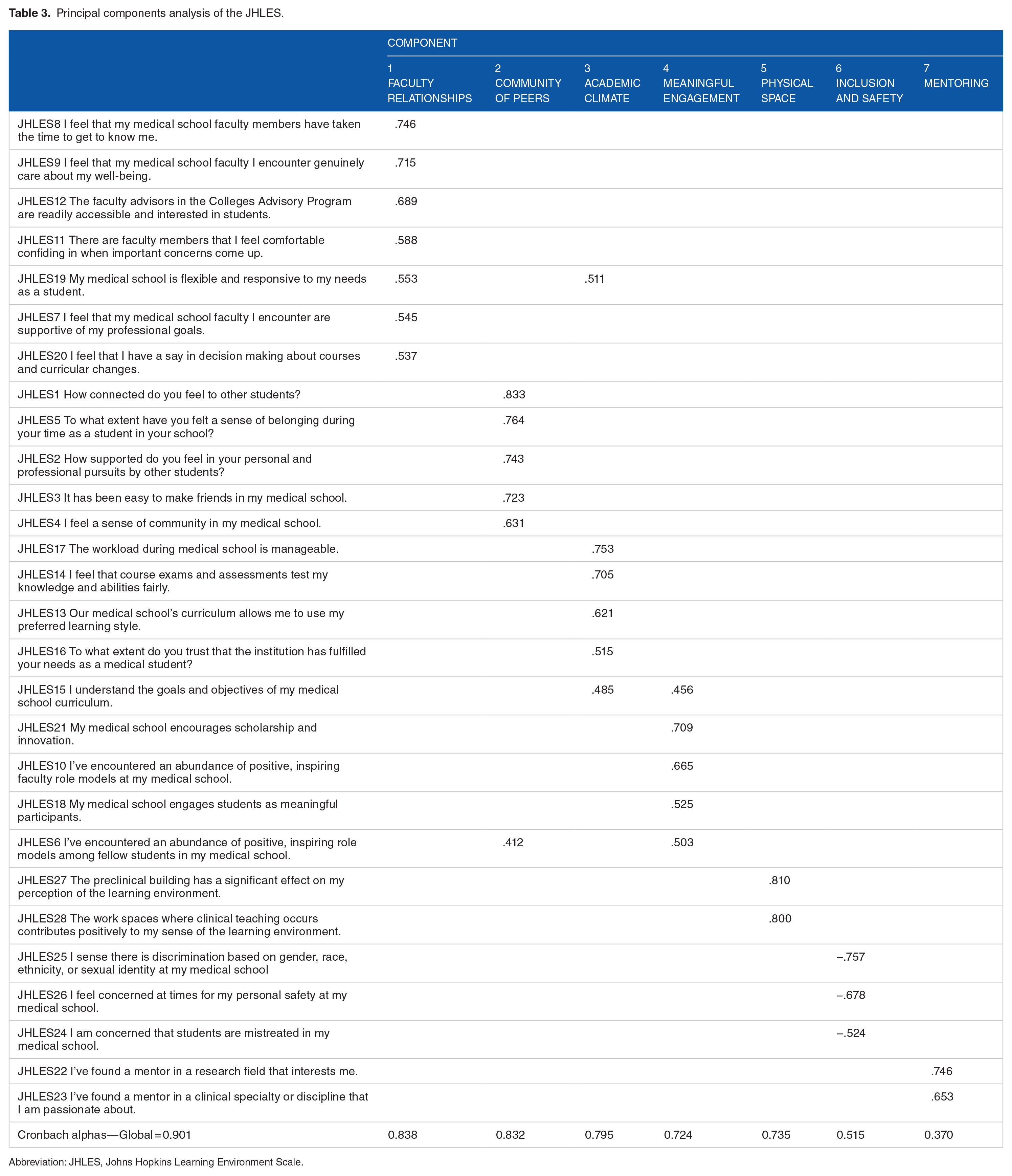

Table 3 summarizes the factor analysis for the JHLES. A Kaiser–Meyer–Olkin measure of 0.887 and a Bartlett test of sphericity of 2694.631 (

Principal components analysis of the JHLES.

Abbreviation: JHLES, Johns Hopkins Learning Environment Scale.

Internal consistency

Using the 0.70 cutoff described above, Cronbach alpha was acceptable for the total scale (0.901) and for most subscales: (1) Faculty Relationships (0.838), (2) Community of Peers (0.832), (3) Academic Climate (0.795), (4) Meaningful Engagement (0.724), and (5) Physical Space (0.735). The exceptions were the subscales (6) Inclusion and Safety (0.515) and (7) Mentoring (0.370) (see Table 3).

Test–retest

The 45-day test–retest comparison resulted in a Pearson correlation coefficient of 0.697 (

Concurrent validity

The MSLES Global Score showed a significant (

Convergent validity

The JHLES also showed convergent validity (see Table 4). A positive and significant (lowest

Correlations between JHLES/MSLES and DASS 21, LE perception, and school recommendation.

Abbreviations: DASS, Depression, Anxiety, and Stress Scale; JHLES, Johns Hopkins Learning Environment Scale; LE, learning environment; MSLES, Medical School Learning Environment Scale.

Johns Hopkins Learning Environment Scale comparison: original versus Brazilian revised factor analyses

The comparisons between the original 13 and the revised Brazilian factor structures of the JHLES are presented in Supplementary Materials 1 to 6. First, as in the original analysis, all 28 items loaded significantly on a factor in the final solution and were included in the Brazilian Portuguese version. However, we found small changes in some of the subdomain components (see Supplementary Material 1). Factors 3 (Academic Climate), 5 (Physical Space), 6 (Inclusion and Safety), and 7 (Mentoring) kept the same structure as in the original version. However, the Community of Peers subscale lost question 6 (“I’ve encountered an abundance of positive, inspiring role models among fellow students in my medical school”). The Meaningful Engagement subscale acquired this question as well as question 10 (“I’ve encountered an abundance of positive, inspiring faculty role models at my medical school”), and the Faculty Relationships subscale gained questions 19 (“My medical school is flexible and responsive to my needs as a student”) and 20 (“I feel that I have a say in decision making about courses and curricular changes”) from Meaningful Engagement. Despite these changes, all subdomains of the Brazilian and original JHLES had similar Cronbach alpha coefficients (Supplementary Material 2). Supplementary Materials 3 to 6 compare the original and revised JHLES in terms of their correlations with each JHLES subdomain, the MSLES, the DASS, school recommendation, overall LE perception, sex, ethnicity, and semester of graduation. In general, differences between the 2 versions were slight and nonsignificant, as the magnitude and strength of associations were similar for the original and revised JHLES.

Discussion

In this study, the MSLES and JHLES were transculturally adapted, and the interpretation of scores from both instruments appeared to have appropriate validity evidence (in content, internal structure, psychometric properties, and relations to other variables), supporting the use of both scales in educational settings. Therefore, we present them here as alternatives to other popular scales such as the DREEM, the only LE instrument translated into Brazilian Portuguese so far.8,9

For both newly translated scales, we found good test–retest reliability and convergent validity. The 2 scales were significantly correlated with each other, as well as with 2 questions about overall LE perception and school endorsement. The JHLES has already been shown to correlate significantly with the DREEM in previous studies,14,25 as well as with both the overall LE perception and school endorsement questions. 13 To our knowledge, no study has compared the MSLES (short version) with any other instrument. Furthermore, the JHLES and MSLES correlated significantly and negatively with the 3 DASS subdomains (depression, anxiety, and stress), as expected given recent evidence of an association between the LE and several mental health indicators.9,22

Although both the MSLES and JHLES showed appropriate psychometric properties, they differ in ways that should be considered when using them to measure students’ LE perceptions. First, the stability of an instrument across cultures is important for reliable comparisons, as students from different countries and backgrounds should interpret the same questions in similar ways. The JHLES has been used in countries and cultures outside the United States14,15,25,26 to make meaningful comparisons of LE perceptions, whereas the MSLES has been used mainly with US student populations.38,39 In the PCA, the JHLES yielded the same domains in the Brazilian and US populations, whereas the MSLES did not, which suggests that the JHLES may be more appropriate for international use. We should highlight that, although the number of domains did not change between versions of the JHLES, some items shifted to different domains, which also affects its internal structure. Nevertheless, the original American JHLES produced results similar to those of the Brazilian JHLES in our analyses, which could support using the 2 versions interchangeably. Second, the length of an instrument must be taken into account, especially when studying medical students, who are often overwhelmed with work and loath to spend time completing research questionnaires. In this regard, the MSLES is the shorter option, with 11 fewer questions than the JHLES.

Third, we should consider what each scale is trying to assess. Some domains of both tools seem to evaluate similar information and the answers did not differ among students. For instance, the domain

Despite some similarities between tools, JHLES seems to be more comprehensive than the MSLES. For instance, the

What do LE ratings tell us about our Brazilian students’ LE perceptions compared with other medical student populations? Our sample’s mean JHLES Global score (90.7) was slightly higher than those reported in India (86.2 and 82.8), 25 suggesting greater satisfaction with the LE, but lower than those reported in Taiwan (91.9), 14 Malaysia (101-116),15,26,40 Israel (100.9), 26 China (101.7), 26 and the United States (111). 13 Curiously, on the 2 overall LE perception questions, Brazilian students rated their school as having an exceptional or good LE more often than students in the same US/Johns Hopkins sample 13 did (Brazil = 80.2%; United States = 77%), and they also recommended their school to a close friend more often than US medical trainees did (Brazil = 92.7%; United States = 81%). This raises the question of why Brazilian medical students rate their LE more favorably when asked globally than when asked specifically about each domain, which is even more puzzling given a previous study indicating poorer mental health among Brazilian than US medical students. 4 Is this difference explained by Brazilian medical students being less critical of their own LE? Or are they simply less exposed than their American counterparts to the concept of LE? If Brazilian students are asked about their LE less often than their US peers, or are less encouraged by faculty to critique it, they may be less familiar with the concept of LE and therefore more prone to social desirability bias. Moreover, the difference in mean age between the 2 studies and the fact that US medical students attend a 4-year college before medical school might also help explain these differences.
A single global rating may therefore not be the best measure for Brazilian students, whereas an LE instrument captures more specific features of the LE and offers a more nuanced and likely more valid assessment. Ilgen et al 41 reported similar findings in the context of simulation-based assessment of health professionals.

Our PCA might also indicate differences in LE concepts across cultures. Unlike in the US study, 2 role-modeling questions (items 6 and 10) grouped with items from the JHLES Meaningful Engagement subscale, which, in turn, lost 2 items (19 and 20) to the Faculty Relationships subscale. These changes might reflect different perceptions that Brazilian students hold about role-modeling and relationships with faculty. One hypothesis is that in Brazil, faculty members are perceived more as part of the institution, whereas at Hopkins they may be perceived more as individuals. If so, meaningful relationships with faculty at Hopkins/in the United States would be student–faculty relationships, whereas in Brazil these same relationships would be student–School of Medicine/Directors relationships. As for role-modeling, it is plausible that for Brazilian students, having a role model is critical to perceiving a sense of meaning in the LE. Further cross-cultural studies are needed to understand these differences.

Finally, we found significant correlations between the LE and depression, anxiety, and stress for both scales. These findings are consistent with previous studies highlighting the importance of the LE for students’ mental health, well-being, and burnout.9,22 Strategies such as pass/fail grading systems, mental health programs, mind–body skills programs, modifications to curriculum structure, multicomponent program reform, wellness programs, and advising/mentoring programs have been investigated with mixed results. 42 Assessing an institution’s LE with tools such as the JHLES and MSLES can have clinical and administrative implications, in that health educators can map their medical school’s problems and act to minimize them by supporting medical students, training medical teachers, and modifying the curriculum as needed. 43

Limitations

First, we included only junior students (first 3 years of medical school), so these findings cannot be generalized to more senior students, such as those in the clerkship years. Further studies with larger samples that include these students are needed. Second, this is a single-institution study, and caution is warranted when generalizing its results to other institutions. Third, both scales and the overall LE perception items were completed at the same time, which can lead to stronger correlations between instrument scores than if they had been completed at different times; however, this approach likely increased the response rate. Fourth, our response rate was 74.2%. Although this rate could be considered good, not all students answered the questionnaire, so the LE data may not generalize to all students at our medical school. Finally, perceptions of the LE and knowledge of specific terms (such as advising) might vary among institutions in the same country, justifying the need for a multi-institution study in Brazilian schools, as has been done in the United States.38,39

Conclusions

In this study, we provided good evidence for the reliability and validity of the Brazilian versions of the JHLES and MSLES, both of which appear to be useful alternatives to the DREEM. The JHLES also appeared to have advantages over the MSLES, but the choice of scale to measure a particular construct should be based on users’ experience as well as on the psychometric properties and particular characteristics of each instrument. Furthermore, the original JHLES might be preferred over the revised version derived from our Brazilian data when findings are to be compared with previous studies from the United States, 13 Taiwan, 14 India, 25 China, Israel, and Malaysia.15,26 Standardizing measurement across populations could allow international benchmarking and cross-cultural research, leading to a better understanding and improved quality of medical school LEs globally.

Supplemental Material

Supplementary_Material_2sep19_xyz257669895099c – Supplemental material for Measuring Students’ Perceptions of the Medical School Learning Environment: Translation, Transcultural Adaptation, and Validation of 2 Instruments to the Brazilian Portuguese Language

Supplemental material, Supplementary_Material_2sep19_xyz257669895099c for Measuring Students’ Perceptions of the Medical School Learning Environment: Translation, Transcultural Adaptation, and Validation of 2 Instruments to the Brazilian Portuguese Language by Rodolfo F Damiano, Aline O Furtado, Betina N da Silva, Oscarina da S Ezequiel, Alessandra LG Lucchetti, Lisabeth F DiLalla, Sean Tackett, Robert B Shochet and Giancarlo Lucchetti in Journal of Medical Education and Curricular Development

Footnotes

Funding:

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The paper received funding from the National Council for Scientific and Technological Development (CNPq) from August 2017 to July 2018, and Giancarlo Lucchetti received a Research Productivity Scholarship at Level 2 (Medicine) from the Brazilian National Council for Scientific and Technological Development (CNPq).

Declaration of conflicting interests:

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Author Contributions

RFD: Substantial contributions to the conception or design of the work, interpretation of data for the work, drafting the work, final approval of the version to be published, and agreement to be accountable for all aspects of the work in ensuring that questions related to the accuracy or integrity of any part of the work are appropriately investigated and resolved.

AOF: Substantial contributions to the conception or design of the work; acquisition of data, revising the work critically for important intellectual content, final approval of the version to be published, and agreement to be accountable for all aspects of the work in ensuring that questions related to the accuracy or integrity of any part of the work are appropriately investigated and resolved.

BNS: Substantial contributions to the conception or design of the work, acquisition of data, revising the work critically for important intellectual content, final approval of the version to be published, and agreement to be accountable for all aspects of the work in ensuring that questions related to the accuracy or integrity of any part of the work are appropriately investigated and resolved.

OSE: Substantial contributions to the conception or design of the work, interpretation of data for the work, revising the work critically for important intellectual content, final approval of the version to be published, and agreement to be accountable for all aspects of the work in ensuring that questions related to the accuracy or integrity of any part of the work are appropriately investigated and resolved.

ALGL: Substantial contributions to the conception or design of the work, interpretation of data for the work, revising the work critically for important intellectual content, final approval of the version to be published, and agreement to be accountable for all aspects of the work in ensuring that questions related to the accuracy or integrity of any part of the work are appropriately investigated and resolved.

LFD: Substantial contributions to the conception or design of the work; interpretation of data for the work, revising the work critically for important intellectual content, final approval of the version to be published, and agreement to be accountable for all aspects of the work in ensuring that questions related to the accuracy or integrity of any part of the work are appropriately investigated and resolved.

ST: Substantial contributions to the conception or design of the work; interpretation of data for the work, revising the work critically for important intellectual content, final approval of the version to be published, and agreement to be accountable for all aspects of the work in ensuring that questions related to the accuracy or integrity of any part of the work are appropriately investigated and resolved.

RBS: Substantial contributions to the conception or design of the work; interpretation of data for the work, revising the work critically for important intellectual content, final approval of the version to be published and agreement to be accountable for all aspects of the work in ensuring that questions related to the accuracy or integrity of any part of the work are appropriately investigated and resolved.

GL: Substantial contributions to the conception or design of the work, analysis and interpretation of data for the work, and drafting the work. GL also gave final approval of the version to be published and agreed to be accountable for all aspects of the work in ensuring that questions related to the accuracy or integrity of any part of the work are appropriately investigated and resolved.

Data Availability

The data used to support the findings of this study are available from the corresponding author on request.

Ethical Approval

The project has been approved by the Ethics Committee of the Federal University of Juiz de Fora.


References
