Abstract

Background:

Simulation-based training has been used in medical training environments to facilitate the learning of surgical and minimally invasive techniques. We hypothesized that integrating a procedural simulation curriculum into a cardiology fellowship program would be educationally beneficial.

Methods:

We conducted an 18-month prospective study of cardiology trainees at Vanderbilt University Medical Center. Two consecutive classes of first-year fellows (n = 17) underwent a teaching protocol facilitated by simulated cases and equipment. We performed knowledge and skills evaluations for 3 procedures (transvenous pacing [TVP] wire, intra-aortic balloon pump [IABP], and pericardiocentesis [PC]). The index class of fellows was reevaluated 18 months postintervention to measure retention. Using nonparametric statistical tests, we compared assessments of the intervention group, at the time of intervention and at 18 months, with those of third-year fellows (n = 7) who did not receive simulator-based training.

Results:

Compared with controls, the intervention cohort had higher scores on the postsimulator written assessment, TVP skills assessment, and IABP skills assessment (P = .04, .007, and .02, respectively). However, there was no statistically significant difference in PC skills assessment scores between the intervention and control groups (P = .08). Skills assessment scores for the index intervention class remained higher than those of controls at 18 months (P = .01, .004, and .002 for TVP, IABP, and PC, respectively). The participation rate was 100% (24/24).

Conclusions:

Procedural simulation training may be an effective tool to enhance the acquisition of knowledge and technical skills for cardiology trainees. Future studies may address methods to improve performance retention over time.

Introduction

Simulation-based training has been used in multiple professions to facilitate the learning of complex tasks and procedures. Simulation can be used to hone an individual’s cognitive and psychomotor skills in a low-risk environment prior to independent performance.1 Examples include flight simulator programs employed by the US National Aeronautics and Space Administration and virtual training for surgical procedures, echocardiographic imaging, and carotid artery stenting.2-8

Procedural simulation has been an area of considerable interest in the field of interventional cardiology.9 With continually evolving techniques and technology, an operator’s ability to learn and maintain procedural skills is essential to providing safe and effective patient care.1,10 For cardiovascular trainees, simulation may be appealing as a teaching tool to augment exposure to less commonly performed procedures and to create a more standardized experience across a training program. This study expands on prior work assessing the effectiveness of simulation among cardiology trainees.11,12

In our cardiology fellowship program, trainees historically reported inconsistent opportunities to master specific procedures. Therefore, we designed a simulation-based teaching curriculum targeting 3 of these procedures (temporary transvenous pacing [TVP] wire, intra-aortic balloon pump [IABP], and pericardiocentesis [PC]). We then employed this educational tool and evaluated its effect on learning and retention of these skills over time. We hypothesized that standardized simulator training with proctored feedback would improve the knowledge base and technical proficiency for these cardiac procedures in a cohort of fellowship trainees.

Methods

This prospective educational study was independently reviewed and approved by an institutional review board at Vanderbilt University Medical Center. The study population included cardiology trainees within a single fellowship program. Subjects were subdivided into categorical groups based on postgraduate year of training. The intervention group consisted of 2 successive classes of first-year fellows (postgraduate year 4), whereas the historical control group consisted of 1 senior-level class of trainees (postgraduate year 6). Performance assessments remained anonymous and had no impact on formal evaluation of the trainees. Participants consented to the study and could opt out of the protocol at any time.

The Vanderbilt Center for Experiential Learning and Assessment (CELA) works in partnership with many of the medical center’s training programs to offer students and housestaff experience in simulation via standardized patient encounters, live technical simulation using state-of-the-art mannequins, and virtual procedural simulation via specialized software programs and equipment. Using the CELA laboratory space, we designed 3 procedural skills stations for TVP, IABP, and PC (Figure 1A to C). With input from expert faculty, we composed a 15-question multiple-choice examination to be administered as a knowledge assessment relevant to each procedure (Supplemental Appendix). In addition, we created digital videos to serve as step-by-step audiovisual teaching instruments during simulator training (Online Supplemental Videos 1-3). Finally, 4 proctors were independently trained to administer the written examination and to conduct simulation assessment and teaching according to a standard protocol. Procedural skills assessment forms are provided in the Supplemental Appendix.

Figure 1. Procedural skills stations for (A) transvenous pacing wire, (B) intra-aortic balloon pump, and (C) pericardiocentesis.

At the beginning of the first year of fellowship training and before any clinical exposure to advanced cardiac procedures (ie, catheterization laboratory or cardiac intensive care unit rotations), first-year fellows in 2 successive years were brought to the CELA center to undergo simulation training. Collectively, these trainees were designated the “intervention group.” The curriculum consisted of the following (Figure 2): (a) orientation with didactic and video instruction covering each procedure; (b) case-based simulation testing at 3 skills stations; (c) immediate proctor feedback, teaching, and hands-on practice; and (d) postinstruction assessment using the 15-question multiple-choice test.

Figure 2. Simulation-based teaching protocol for the intervention group.

For the control arm, simulation testing was conducted in one class of third-year fellows who, by virtue of their senior level of training, had greater exposure to cardiac procedures from experiential learning through prior clinical service rotations. This class of fellows underwent the following protocol: (a) orientation WITHOUT didactic and video instruction, (b) case-based simulation testing at 3 skills stations, (c) hands-on simulator practice WITHOUT feedback or instruction, and (d) written 15-question multiple-choice posttest. As opposed to the intervention group, no instructional videos were shown and no proctor teaching or feedback was provided during the simulator testing. This group of senior fellows was designated as the “historical control group.”

The final phase of the study focused on knowledge and skills retention. The index group of first-year fellows (n = 9) returned to the CELA center 18 months after their initial simulator session (approximately the midpoint of fellowship training). This group underwent skills assessments and a written posttest identical to the protocol used for the control group (ie, no didactic videos, teaching, or feedback). During this 18-month period, the index group received neither interim simulation training with the didactic video instruments nor further proctored skills station exposure, as either could confound the assessment of retention over time.

Given the small number of subjects in this investigation and the nonnormally distributed data, we used nonparametric tests for statistical comparisons. We compared written examination scores between the intervention and control groups using a Wilcoxon rank sum test, and repeated this comparison for each of the 3 procedural skills assessments. A Wilcoxon signed rank test was used to evaluate within-group differences for the index first-year class by comparing written and skills results between the initial simulator training session (0 months) and follow-up (18 months). The 18-month follow-up results for this class were also compared with those of the control group. The 95% confidence intervals (CIs) and P values were calculated for all tests. Analyses were performed using R software, version 3.2.2.
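The two tests named above can be illustrated with a short, self-contained sketch. This is not the authors' analysis code (the study used R 3.2.2); it is a minimal Python illustration that assumes exact permutation p-values obtained by enumerating every possible relabeling, with tied scores given averaged ranks (midranks). The function names `midranks`, `rank_sum_test`, and `signed_rank_test` are hypothetical, and the example inputs are toy values, not study data.

```python
from itertools import combinations, product


def midranks(values):
    """Rank values 1..n, giving tied values the average of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with values[order[i]].
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1  # mean of the 1-based positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def rank_sum_test(x, y):
    """Two-sided Wilcoxon rank sum test for independent groups x and y.

    Returns (W, p): W is the rank sum of x in the pooled sample; p is an exact
    permutation p-value over every way of assigning len(x) pooled ranks to x.
    """
    pooled = list(x) + list(y)
    ranks = midranks(pooled)
    n_x = len(x)
    w_obs = sum(ranks[:n_x])
    expected = n_x * (len(pooled) + 1) / 2  # mean of W under the null
    dev_obs = abs(w_obs - expected)
    extreme = total = 0
    for idx in combinations(range(len(pooled)), n_x):
        w = sum(ranks[i] for i in idx)
        total += 1
        if abs(w - expected) >= dev_obs - 1e-9:  # at least as extreme
            extreme += 1
    return w_obs, extreme / total


def signed_rank_test(before, after):
    """Two-sided Wilcoxon signed rank test for paired scores.

    Zero differences are dropped; p is exact over all 2**n sign assignments.
    """
    diffs = [a - b for b, a in zip(before, after) if a != b]
    ranks = midranks([abs(d) for d in diffs])
    v_obs = sum(r for r, d in zip(ranks, diffs) if d > 0)
    expected = sum(ranks) / 2  # mean of V under the null
    dev_obs = abs(v_obs - expected)
    extreme = total = 0
    for signs in product((False, True), repeat=len(diffs)):
        v = sum(r for r, positive in zip(ranks, signs) if positive)
        total += 1
        if abs(v - expected) >= dev_obs - 1e-9:
            extreme += 1
    return v_obs, extreme / total


# Toy inputs only (not study data).
print(rank_sum_test([1, 2, 3], [4, 5, 6]))           # (6.0, 0.1)
print(signed_rank_test([1, 1, 1, 1], [2, 3, 4, 5]))  # (10.0, 0.125)
```

Full enumeration is practical at this study's scale (choosing 7 of 24 pooled scores gives 346,104 relabelings; 9 paired differences give 512 sign patterns), which is one reason exact methods suit small samples like these.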

Results

Table 1 outlines the characteristics of all participating subjects. A total of 24 cardiovascular medicine fellows participated in this study (participation rate 100%, 24/24), of whom 19 were men (79.2%). The intervention group consisted of 9 and 8 trainees in the initial and subsequent first-year classes, respectively, with a mean age of 31.1 years. The historical control group consisted of 7 third-year fellows with a mean age of 32.7 years. Before the simulator assessment session, fellows in the control group had spent a mean of 5.8 months per fellow on cardiac catheterization laboratory rotations and a mean of 4.6 months per fellow on cardiac intensive care unit rotations. The reported mean numbers of TVP, IABP, and PC procedures performed individually were 8.4, 4.4, and 3.4, respectively.

Table 1. Fellowship trainee characteristics.

Underwent simulation training at the beginning of first-year fellowship training.

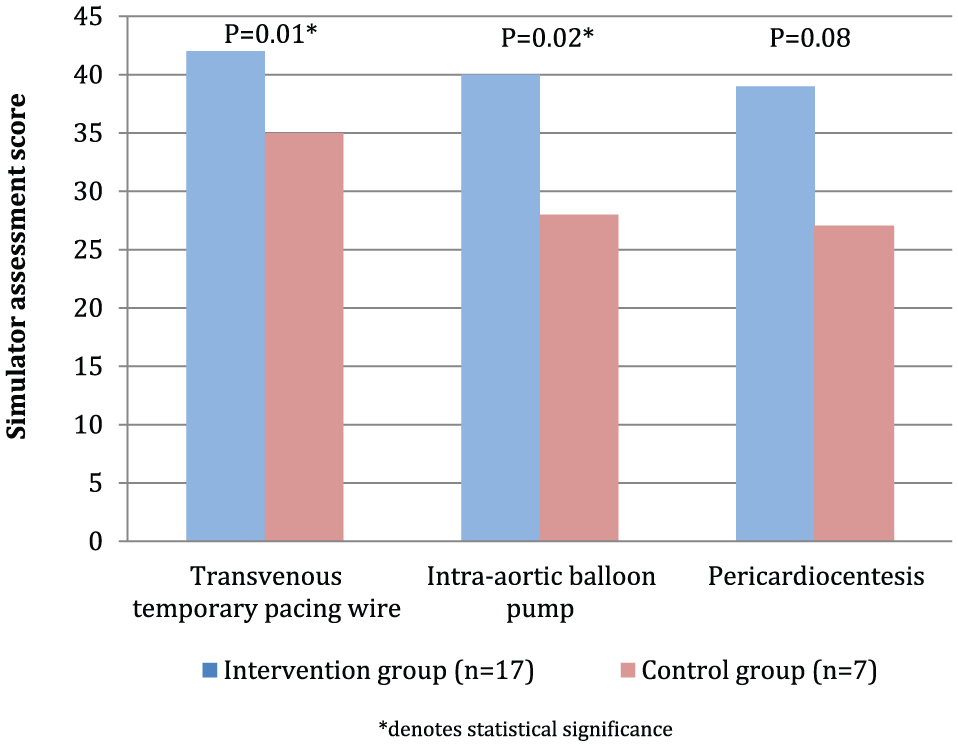

The intervention cohort had significantly higher scores on the written examination than the control group (95% CI, 0+ to 4; P = .038). Figure 3 shows a comparison of skills assessment scores (TVP, IABP, and PC) between the intervention and control groups. Median scores for TVP, IABP, and PC were 42, 40, and 39, respectively, in the intervention group vs 35, 28, and 27, respectively, in the control group. By nonparametric analyses, the intervention group scored 2 to 8 points higher on the TVP skills assessment and 1 to 16 points higher on the IABP skills assessment than controls (P = .007 and .021, respectively). However, PC skills assessment scores did not differ significantly between the intervention and control groups (P = .08).
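The "points higher" ranges in the paragraph above read as confidence intervals for a shift in location between groups. We assume, since the text does not say so, that they are Hodges-Lehmann style intervals of the kind R's `wilcox.test` reports when called with `conf.int = TRUE`. The minimal Python sketch below shows that calculation under this assumption: the point estimate is the median of all pairwise differences, and the interval endpoints are order statistics of those differences chosen from the exact null distribution of the Mann-Whitney U statistic. The function name `hodges_lehmann_shift` is hypothetical, the exact interval assumes no tied scores, and the inputs are toy values.

```python
from itertools import combinations


def hodges_lehmann_shift(x, y, alpha=0.05):
    """Estimate the location shift of x relative to y, with an exact CI.

    Assumes no ties; with tied scores the interval is only approximate.
    Returns (estimate, (lower, upper)).
    """
    m, n = len(x), len(y)
    diffs = sorted(xi - yj for xi in x for yj in y)  # all m*n pairwise differences
    k = len(diffs)
    estimate = (diffs[(k - 1) // 2] + diffs[k // 2]) / 2  # median difference

    # Exact null distribution of U = (rank sum of x) - m(m+1)/2,
    # enumerated over every set of ranks that x could occupy.
    counts = [0] * (m * n + 1)
    for ranks in combinations(range(1, m + n + 1), m):
        counts[sum(ranks) - m * (m + 1) // 2] += 1
    total = sum(counts)

    # Smallest q with P(U <= q) >= alpha/2 (the quantile rule R's qwilcox uses).
    cumulative = 0
    for q, c in enumerate(counts):
        cumulative += c
        if cumulative / total >= alpha / 2:
            break
    q = max(q, 1)  # guard for very small samples

    lower = diffs[q - 1]  # q-th smallest pairwise difference
    upper = diffs[k - q]  # q-th largest pairwise difference
    return estimate, (lower, upper)


# Toy inputs only (not study data).
print(hodges_lehmann_shift([11, 12, 13], [1, 2, 3]))  # (10.0, (8, 12))
```

With only 3 scores per group, the achievable confidence level is coarse, so the interval spans the full range of differences. When scores contain ties, R's `wilcox.test` falls back to a normal approximation and warns that exact intervals cannot be computed, so the study's reported intervals (including the "0+" lower bound) may have been obtained that way rather than by exact enumeration.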

Figure 3. Procedural skills assessment scores.

Figure 4 shows skills assessment scores of the index first-year fellow class (n = 9) within the intervention arm at the time of simulator training and at 18-month follow-up, as well as scores from the control group. This index class had lower scores at 18-month follow-up than at the time of simulator training (0 months) for all 3 procedures (median 18-month vs 0-month scores: TVP, 36 vs 42; IABP, 39 vs 44; PC, 36 vs 40). Compared with 0 months, 18-month scores were 4 to 7 points lower for TVP (P = .008), 3 to 6.5 points lower for IABP (P = .014), and 2 to 7 points lower for PC (P = .014). However, scores at 18 months in this class remained significantly higher than those of the control arm (1 to 4 points higher for TVP [P = .011], 8 to 16 points higher for IABP [P = .004], and 4 to 15 points higher for PC [P = .002]). With respect to knowledge assessments, written examination scores did not differ significantly between the index class at 0 and 18 months (P = .07) or between the index class at 18 months and the control arm (P = .66).

Figure 4. Procedural skills performance of the index fellowship class receiving simulator training (0 months, 18 months) vs controls.

Discussion

The performance of any invasive procedure carries inherent risks of complications. Incorrect execution may adversely affect patient safety, increase hospital length of stay, consume additional health care resources, and propagate incorrect procedural methods to the next generation of physicians.13 A standardized program that incorporates simulation-based instruction may serve to improve delivery of patient care and enhance the quality of a residency or fellowship training program.10-12,14-16 In an era of patient outcomes and quality improvement, the mantra of “see one, do one, teach one” may simply not suffice.

Lenchus and colleagues previously developed a procedural training program that demonstrated significant improvements in competency for 5 bedside procedures performed by internists. In this course, simulation-based training enabled trainees to practice and receive objective performance feedback before applying diagnostic and therapeutic techniques to patients.17 Similarly, we developed a simulator-facilitated cardiac procedural competency curriculum and sought to examine its effect on knowledge and skills acquisition as well as retention.

In a traditional 3-year cardiology fellowship at our institution, fellows-in-training regularly rotate through the cardiac catheterization laboratory and cardiovascular intensive care unit. During these rotations, fellows have hands-on experience with procedures such as coronary angiography and right and left heart catheterization. However, the exposure to other advanced procedures such as IABP placement, TVP wire placement, and PC can be variable, and the method of teaching these techniques has not previously been standardized.

In this simulation study involving cardiology trainees at a single institution, an educational curriculum combining video instruction, simulation-based teaching, and individualized feedback enhanced both knowledge and skill measures for 3 lifesaving cardiac procedures. Not surprisingly, procedural skill scores declined at 18 months compared with the initial session, whereas written examination scores did not change significantly. This decline suggests that repeated simulator training to reinforce technical skills could improve retention over time. Notably, procedural scores at 18 months still compared favorably with those of the control arm, suggesting some degree of skill preservation and further supporting the utility of this curricular instrument.

We acknowledge several limitations to this study. Given the limited size of each fellowship class within a single institution, the study sample is small. The delineation of comparator groups based on year of training presumes that fellows from the same postgraduate class begin their training at an equal level of knowledge and experience, which then improves in a comparable fashion over time. The subject population may thus be prone to heterogeneity, in that prior clinical exposure, procedural comfort level, baseline fund of knowledge, and learning curve can vary from one individual to the next and are difficult to quantify. In follow-up assessments of fellows at the 18-month mark, we also assume that overall education and procedural exposure on clinical rotations were equitable among trainees and did not change significantly over this time interval. However, we note that the fellowship program did not undergo any major programmatic, leadership, or curricular changes during the study period.

Technology-enhanced simulation has proven to be an effective educational tool for health care professionals.18 Given the resources and expenses required for a simulation laboratory and equipment,19 it is unclear how widely applicable this technology and modern approach to postgraduate education may be among cardiology fellowship programs across the country. In a national survey of interventional cardiology fellowships (n = 59, 45% response rate), only 14 programs reported using simulation as a teaching modality.10 Furthermore, a simulated environment is inherently different from “real-life” scenarios and may therefore elicit behavioral responses that differ from those in a true clinical practice setting. Looking forward, understanding how simulation in medical training translates into improved delivery of care at the patient bedside should be a priority for future study in the field.

Finally, procedural simulation represents a unique opportunity to share educational resources and methodology not only among regional institutions but also on a national level through invested clinician educators. For institutions that possess the space, personnel, and funding for simulation-based education, our study may serve as a prototype for the development of similar procedurally focused curricula. In settings without such infrastructure, traditional didactics, audiovisual instruments, written assessments, clinical case scenarios, and mentored feedback remain core teaching principles generalizable to the standard training program.

Conclusions

The design, application, and integration of a simulator-enhanced teaching program into a cardiology fellowship curriculum are feasible. The protocol we employed proved educationally beneficial to cardiovascular medicine trainees in areas of knowledge and skills acquisition for procedures variably encountered in clinical training. Future study in simulation-based training may serve to elucidate the role of simulation in skills retention as well as its potential utility for other advanced cardiac procedures.

Supplemental Material

CELA_supplemental_materials_3_43909ef47ede_4390aad6264c_45118e0f9ff3_45117a5fa11b_xyz768330083774 – Supplemental material for Effects of Advanced Cardiac Procedure Simulator Training on Learning and Performance in Cardiovascular Medicine Fellows

Supplemental material, CELA_supplemental_materials_3_43909ef47ede_4390aad6264c_45118e0f9ff3_45117a5fa11b_xyz768330083774 for Effects of Advanced Cardiac Procedure Simulator Training on Learning and Performance in Cardiovascular Medicine Fellows by Michael N Young, Roshanak Markley, Troy Leo, Samuel Coffin, Mario A Davidson, Joseph Salloum, Lisa A Mendes and Julie B Damp in Journal of Medical Education and Curricular Development

Footnotes

Acknowledgements

The authors thank all participating trainees as well as the Vanderbilt Center for Experiential Learning & Assessment for providing the personnel, time, equipment, and space to conduct this study.

Declaration of conflicting interests:

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding:

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Author Note

Michael N Young is now affiliated with Section of Cardiovascular Medicine, Dartmouth-Hitchcock Medical Center, Lebanon, NH, USA.

Author Contributions

All authors have contributed independently to the contents of the article. Specifically, MNY, RM, LAM, and JBD designed the study. MNY, RM, TL, SC, JS, LAM, and JBD conducted the study; MAD performed the statistical analyses. All authors contributed to the composition, review, and approval of the manuscript.

References
