Abstract

Decision science and implementation science share the common goal of improving individual and population health through choosing and providing effective health innovations at scale. In this article, we summarize a symposium we hosted at the 45th Annual Society for Medical Decision Making North American meeting. The symposium aimed to illustrate how integrating implementation science and decision science can strengthen the real-world impact and practical utility of decision science methods. The symposium was attended by 51 individuals. It included 4 presentations by early-career researchers and a moderated discussion. Presentations covered innovative work at the intersection of implementation science and decision analytic modeling and focused on policy applications because of the presenters’ expertise and strong history of decision analytic modeling to inform policy decisions. The symposium’s moderated discussion indicated a need for developing collaborations between implementation and decision scientists to move this type of work forward. Suggested areas of future research are modeling to identify gaps in data and considering value-of-information methods, exploring how implementation could be incorporated into simulation methods beyond those discussed in the symposium (e.g., distributional and extended cost-effectiveness analyses), and integrating implementation science into other areas of decision science (e.g., preference and prioritization research, shared decision making). We urge decision science researchers to pursue interdisciplinary research integrating decision and implementation science to best inform policy decision making and drive the scale-up of promising policies across contexts.

Highlights

Implementation science concepts could strengthen the external validity and uptake of decision science methods such as decision analytic models.

The symposium discussed and highlighted innovative ways that decision science researchers could integrate implementation science frameworks and outcomes (e.g., cost, reach, fidelity) into decision analytic models to be more responsive to the multifaceted considerations of policy decision making.

Supporting interdisciplinary networking and collaboration between decision scientists and implementation scientists is critical to strengthen the real-world impact and practical utility of decision science methods.

Within decision science, decision analytic models aim to use simulation-based techniques to help inform decisions about medical, public health, and health-related resource allocations and health intervention delivery across contexts and populations. 1 Implementation science is the study of strategies to support the use of effective health innovations in practice. 2 Core to each of these fields is the common goal of improving individual and population health through choosing and providing effective health innovations at scale.

Decision scientists have a strong history of providing crucial information on, for example, the most cost-effective innovations for a given health issue, or understanding what various groups prefer and value. 3 However, we argue that more attention to how innovations are put into practice is needed in decision science methods. Attending to such considerations can ensure decision analytic models reflect real-world processes and contexts, thereby strengthening the external validity and uptake of modeling by decision makers. 4 This is precisely what implementation science can add.

We hosted a symposium at the 45th Annual Society for Medical Decision Making North American meeting focused on the integration of decision science and implementation science. The goal was to illustrate how decision science and implementation science are currently being integrated and spur discussion on ways to move such research forward. Because this symposium was attended by decision scientists at the annual meeting, we primarily aimed to highlight how implementation science concepts could be integrated within decision sciences, with a particular focus on decision analytic modeling. However, we note that there are similar arguments being made in the implementation science literature,5–7 and research at this intersection could transcend traditional disciplinary boundaries.

Symposium presentations were given by 4 early-career researchers involved in novel research at this intersection, with specific attention to policy decision making and applications. We focus on policy because of our presenters’ expertise, the strong history of using models to inform policy decisions, and the overall importance of policy to population health. The symposium was attended by 51 people.

In this article, we summarize each presentation and key points raised by audience members. We aim to illustrate ways that decision scientists could begin integrating more implementation science concepts into their projects and identify key areas of future research for decision scientists interested in implementation science.

What Is Implementation Science?

The first presenter (G.C.) introduced the goals of dissemination and implementation science as well as key concepts, definitions, and frameworks of relevance to decision scientists. We specifically focus on implementation science, the study of how to scale up and improve the delivery of “things” or innovations (pills, procedures, policies, programs, principles, practices) in routine, real-world settings and practices.2,8–11

Three foundational implementation science concepts were introduced. We specifically selected these 3 distinct concepts because of their ability to be integrated within decision analytic models and thus their potential use to modelers: implementation determinants, strategies, and outcomes. Implementation determinants are factors that impede (barriers) or promote (facilitators) the uptake and successful integration of an innovation in real-world practice, 12 implementation strategies are specific ways to enhance the implementation of an innovation, 13 and implementation outcomes are how the field measures and understands the effects of implementation efforts, separate from intervention effects. 14 For each of these key concepts, we provide specific definitions, examples of how these concepts could be integrated into decision analytic models, and key references for further reading in Table 1.15–55

Key Implementation Science Concepts for Decision Analytic Modeling

Within implementation outcomes, we wish to bring readers’ attention to implementation costs (see the last row of Table 1). This is an active area of methodological research within implementation science, particularly with regard to conceptualizing and measuring implementation costs15–22 and describing their wide-ranging applications.23–32 In brief, implementation costs are an important complement to innovation costs because they quantify the costs and resources required to put the innovation into practice through implementation strategies. 15 Similar to innovation costs, implementation costs include both fixed and variable costs that vary over the course of implementation and implementation scale.4,17,20,33 Recent methodological articles have demonstrated how to apply common cost techniques such as micro-costing to understand implementation costs as well as innovation costs.15,34,35 Within a modeling framework, these costs are crucial to understanding the true cost-effectiveness as well as the feasibility and affordability of innovations being considered for scale-up across various settings.15,33,36,37

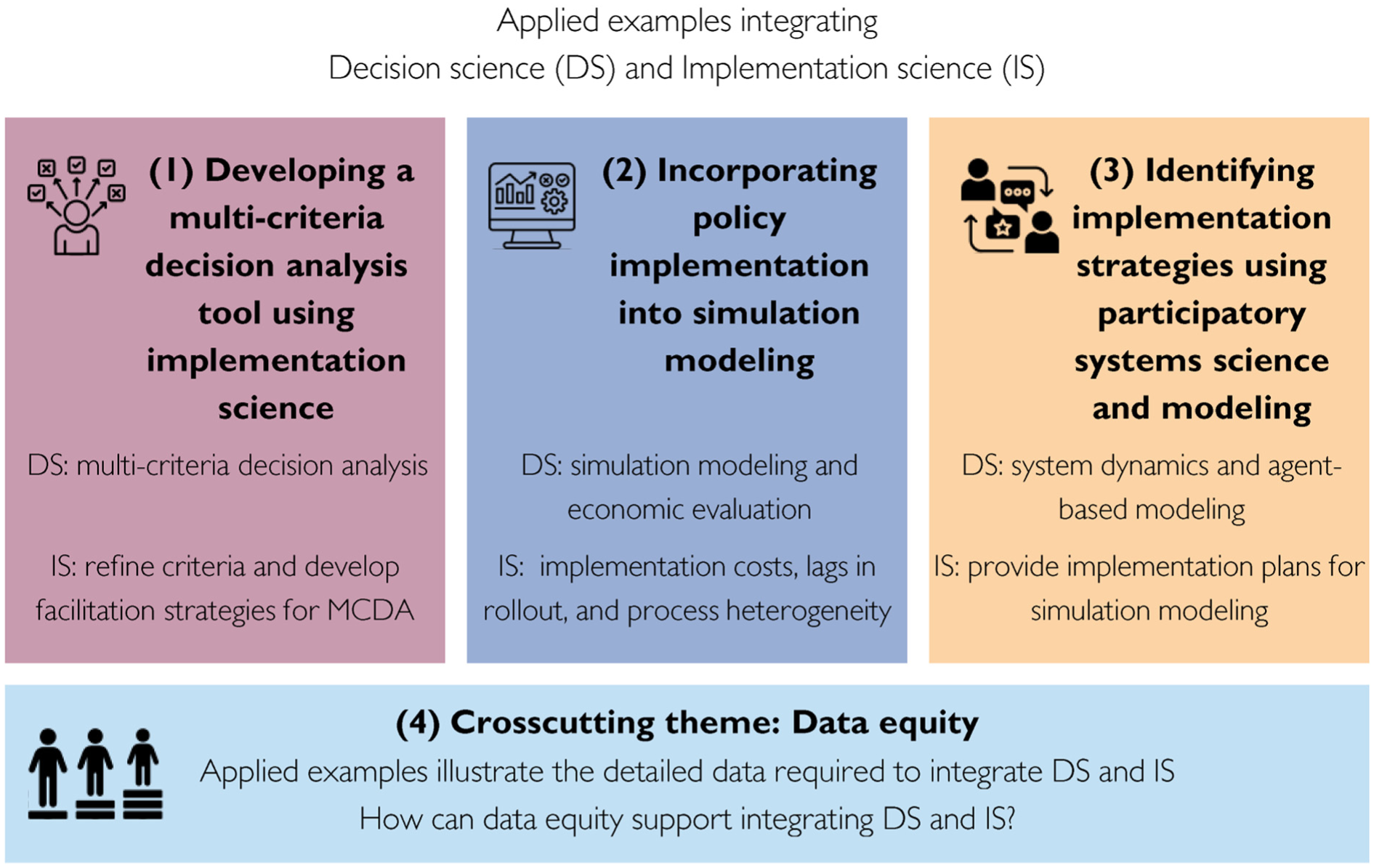

After this introduction, 3 speakers presented how they conduct interdisciplinary research integrating decision and implementation science. Each presentation approaches this integration slightly differently, using different aspects of implementation science and different modeling methods, effectively showcasing the breadth of potential applications. For each presentation, Figure 1 illustrates the specific components of implementation science and decision science included.

Overview of symposium presentations and components of decision science (DS) and implementation science (IS) included.

Three Applied Examples of Integrating Decision Science and Implementation Science

Developing a Multicriteria Decision Analysis Tool Using Implementation Science (Gracelyn Cruden)

To illustrate the integration of implementation science during the development of a decision analytic model to support policy planning, the presenter described the iterative development of a multicriteria decision analysis (MCDA) tool to help state-level child welfare system actors (e.g., leadership, middle managers, workgroups) decide which programs to implement to prevent child maltreatment. MCDA approaches are broad but are generally defined as approaches to analyzing (and comparing) decision options across multiple criteria. 55 To that end, these approaches typically require identifying and defining which criteria matter, the relative importance of those criteria (i.e., weights), and how decision options will be compared (i.e., scoring). 55

MCDA criteria are the key factors that influence a decision or characterize the decision alternatives, such as potential resources required, outcomes affected, and costs. Criteria are typically presented as having acceptable ranges or thresholds. Weights quantify the relative influence of each criterion on the overall decision. Criteria and weights can be combined through a variety of quantitative methods to generate scores for each decision alternative (e.g., interventions) compared in the tool.56,57 Scores are then used to identify which intervention alternatives are the best fit for the decision at hand. The team used implementation science concepts to define the tool’s criteria and develop strategies to support the tool’s use in practice.
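As a rough illustration of how criteria, weights, and scores fit together, the sketch below uses a simple weighted-sum rule. This is only one of the many quantitative methods used in MCDA and is not necessarily the method used in the tool described here. The criterion names, weights, program names, and performance values are all hypothetical.

```python
from typing import Dict, List, Tuple


def normalize_weights(weights: Dict[str, float]) -> Dict[str, float]:
    """Rescale raw importance weights so that they sum to 1."""
    total = sum(weights.values())
    if total <= 0:
        raise ValueError("weights must sum to a positive number")
    return {name: w / total for name, w in weights.items()}


def weighted_sum_score(performance: Dict[str, float],
                       weights: Dict[str, float]) -> float:
    """Score one alternative as the weighted sum of its criterion values."""
    norm = normalize_weights(weights)
    return sum(norm[c] * performance[c] for c in norm)


def rank_alternatives(alternatives: Dict[str, Dict[str, float]],
                      weights: Dict[str, float]) -> List[Tuple[str, float]]:
    """Return (alternative, score) pairs sorted from best to worst."""
    scores = {name: weighted_sum_score(perf, weights)
              for name, perf in alternatives.items()}
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)


# Hypothetical inputs: performance is on a 0-1 scale where higher is better
# (so a "cost" value near 1 means low cost).
weights = {"reach": 3, "cost": 2, "maintenance": 1}
alternatives = {
    "Program A": {"reach": 0.8, "cost": 0.4, "maintenance": 0.6},
    "Program B": {"reach": 0.5, "cost": 0.9, "maintenance": 0.7},
}
ranking = rank_alternatives(alternatives, weights)
```

In practice, the weights would be elicited from decision makers and the performance values would come from evidence, so the ranking should be read alongside sensitivity analyses on both.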

The tool’s criteria integrated implementation science through the RE-AIM evaluation framework.58,59 This framework specifies 5 key components linked to implementation success over time: reach, efficacy, adoption, implementation, and maintenance. 59 After formative qualitative work was conducted with 8 end users, 58 the findings were mapped onto the RE-AIM framework to ensure that no implementation-relevant constructs were missing. This comparison revealed that concepts of implementation maintenance, or sustainment, were missing. A related criterion was added and then validated by end users in a second round of feedback. These criteria were further revised in a recent pilot, in which several criteria were modified for clarity or relevance and several were added, including one related to the potential impact on improving health equity. 60

Implementation science was also used to develop strategies that could support the ultimate utility and feasibility of the MCDA tool in practice. MCDA tools take a sociotechnical approach, meaning that the technical MCDA components are only part of the decision process. How the tool is integrated into decision making—particularly in group decisions that entail additional social aspects compared with individual decision making—is a critical part of an MCDA tool’s utility. Although there is substantial research on the methods for developing and validating the 3 core components of technical MCDA tools, the literature is sparse when it comes to specifying reproducible interventions for the “socio” component. Thus, in an ongoing clinical trial (K01MH128761; NCT06375551), the team is testing 2 modalities (live facilitation, self-guided) of a facilitation approach to incorporate the tool’s results into group decision discussions. The facilitation strategies are informed by systems thinking and systems science methods (group model building) and the decision hygiene approach—a process for minimizing bias and random variance in decision making. 61 In addition to individual- and group-level decision-making outcomes, such as the decision makers’ perceptions of the quality of the decision experience and their commitment to decision making, implementation outcomes will also be assessed, including implementation fidelity—adherence to processes known to be critical for high-quality intervention implementation. 62

“Big P” Policy Implementation Considerations for Modeling (Natalie Riva Smith)

The second presentation discussed how concepts from implementation science can inform more traditional simulation modeling approaches. The discussion focused specifically on “Big P” policy, which refers to formal or written government actions, such as pieces of legislation or regulation. 63 Increasingly, health policy and public policy scholars are stressing the importance of studying how “Big P” policy is implemented, including the implementation process and strategies used, as well as measuring implementation outcomes in addition to traditional service and health outcomes.63–67

Sugary drink tax policies provide a useful illustrative example of the importance of accounting for policy implementation. 23 Work evaluating the implementation of such policies in jurisdictions such as Berkeley and Oakland, California; Seattle, Washington; Philadelphia, Pennsylvania; and Cook County, Illinois, has shown that there are many actions that must happen after a policy has been formally passed to make it possible to achieve a policy’s intended impacts. For example, jurisdictions often need to undertake rulemaking to expand the specificity of legislation (e.g., determine responsibilities of key government partners, establish fine structures), create infrastructure to collect taxes, and educate regulated entities on how to pay taxes. The evidence also points to heterogeneity in implementation across jurisdictions.

Work accounting for implementation in standard policy evaluative work is emerging (both methodological and applied).68–72 These types of studies often seek to understand how implementation mediates or moderates a policy’s health outcomes.68,69 A necessary complement is for simulation modeling of policies to better incorporate implementation considerations (e.g., in cost-effectiveness modeling). 4 This presentation addressed 3 potential theoretical issues that should be accounted for in such models:

Time lags: Implementation processes create a time lag between when the policy is adopted, when costs begin to accrue, and when health effects start to accrue.

Implementation costs: Not accounting for the costs of implementation processes will result in cost estimates that are too small.

Process heterogeneity: Implementation processes will unfold differently in different jurisdictions (e.g., different implementation strategies will be used).

Issues such as these can affect the accuracy of models and policy comparisons (particularly cost-effectiveness comparisons). 4 These issues have relatively straightforward solutions: 1) explicitly modeling time lags, 2) including estimates of implementation costs, and 3) modeling heterogeneity in implementation processes via modifying a model’s structure or conducting scenario analyses. Sensitivity and uncertainty analyses focused on these issues are also critical: for example, examining the impact of different implementation time lags on cost-effectiveness results after a set time. At minimum, modelers should be explicit when models and results do not account for implementation.
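The first two solutions, combined with a scenario analysis over the time lag, can be sketched in a few lines. This is a deliberately simplified illustration and not the model from the cited work. It assumes a "no policy" comparator with zero costs and zero effects, implementation costs that accrue only during the lag, and policy costs and health effects that begin only once the lag ends. All parameter names and values are hypothetical.

```python
from typing import Dict, List, Tuple


def discounted_sum(stream: List[float], rate: float) -> float:
    """Present value of a yearly stream, with year 0 undiscounted."""
    return sum(value / (1.0 + rate) ** year for year, value in enumerate(stream))


def policy_streams(horizon: int, lag_years: int, annual_policy_cost: float,
                   annual_qalys: float,
                   annual_implementation_cost: float) -> Tuple[List[float], List[float]]:
    """Build yearly cost and effect streams with an explicit implementation lag."""
    costs, effects = [], []
    for year in range(horizon):
        if year < lag_years:
            # Implementation phase: costs accrue, no health effects yet.
            costs.append(annual_implementation_cost)
            effects.append(0.0)
        else:
            # Policy in force: ongoing policy costs and health effects.
            costs.append(annual_policy_cost)
            effects.append(annual_qalys)
    return costs, effects


def cost_per_qaly(horizon: int, lag_years: int, annual_policy_cost: float,
                  annual_qalys: float, annual_implementation_cost: float,
                  rate: float = 0.03) -> float:
    """Discounted cost per QALY gained versus the assumed zero-cost comparator."""
    costs, effects = policy_streams(horizon, lag_years, annual_policy_cost,
                                    annual_qalys, annual_implementation_cost)
    total_effect = discounted_sum(effects, rate)
    if total_effect == 0:
        return float("inf")
    return discounted_sum(costs, rate) / total_effect


# Scenario analysis over hypothetical implementation lags (in years).
results: Dict[int, float] = {
    lag: cost_per_qaly(horizon=10, lag_years=lag, annual_policy_cost=100.0,
                       annual_qalys=10.0, annual_implementation_cost=500.0)
    for lag in (0, 1, 3)
}
```

Under these assumptions, a longer lag raises the cost per QALY at a fixed time horizon because implementation costs accumulate while health effects are delayed. This is the kind of sensitivity the text recommends reporting.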

As an applied example, this presentation drew on published work that assessed the potential costs to implement 2 sugary drink policies at the federal level in the United States: a sugary drink tax and warning label. 23 The team used a mixed-methods approach to identify and quantify implementation costs. First, they conducted interviews with individuals who were knowledgeable of federal policy-making procedures and had experience with federal rulemaking, public health law, the sugary drink industry, or public health advocacy. These interviews provided information on the possible way(s) that sugary drink policy implementation would unfold at the federal level. Specific actions that would be required of different groups included litigation to repeal legislation or hold up rulemaking, creating tax compliance or collection infrastructure, redesigning warning labels, and monitoring compliance with policies. This information was then used to drive the quantitative cost analyses. Cost estimates were developed using a variety of data sources including peer-reviewed literature, government documents and budget reports, or tools from nongovernmental organizations. As one example, the costs to relabel sugary drinks were estimated using an existing tool developed by the Research Triangle Institute. 73

The process and findings from this article underscore that policy is not costless to implement and speak to the importance of interdisciplinary, mixed-methods collaboration. Several important methodological questions and next steps were also raised, including what phases of the policy process are included in cost estimates, how to handle policy “packages” (i.e., multiple, related policies being implemented at once), and how to handle the significant uncertainties within cost estimation.

Identifying Implementation Strategies Using Participatory Systems Science and Modeling (Christina Yuan)

The third applied example focused on the application of systems science simulation modeling for implementation strategy decision making. In contrast to the prior 2 presentations, where implementation science considerations informed decision science questions, this presentation illustrated how methods from decision science can help inform implementation science questions.

Ideally, implementation strategies are designed and selected to target implementation determinants (Table 1). However, in practice, there is often a mismatch between determinants and strategies,74,75 and existing implementation strategy selection methods are often inadequate.76–78 This session highlighted the potential for using systems science simulation models to serve as “virtual laboratories” to explore the potential effects of implementation strategies on outcomes prior to implementation. Such models can support decision making not only around which strategies to use in the first place but also how to operationalize and adapt strategies throughout the implementation process.

As one example, the Johns Hopkins ALACRITY Center for Health and Longevity in Mental Illness is planning to use a participatory systems science approach to identify implementation strategies to scale up evidence-based smoking cessation treatment for people with serious mental illness.

The modeling approach involves triangulating the expertise of community partners (including policy makers), an extensive array of data sources, and model simulations. The process will begin by iteratively engaging with a diverse array of community partners to develop and refine a conceptual model that identifies candidate implementation strategies. The variables in the conceptual model will be rooted in the Exploration, Preparation, Implementation, Sustainment (EPIS) Implementation Framework, 79 which is an implementation model that highlights 4 phases of the implementation process and enumerates factors associated with the outer context (system), inner context (organization), factors that bridge the outer and inner context, and the nature of the innovation being implemented. This theory-informed conceptual model will provide the foundation for an integrated system model that will combine an agent-based model that simulates micro-level system behaviors (e.g., providers’ decision-making processes) with a system dynamics model that simulates macro-level system behaviors (e.g., staffing levels). The model will be calibrated using empirical data from prior research on smoking cessation treatment for people with serious mental illness, new primary data collection efforts collecting data across potential implementation contexts (including implementation costs), and a literature review of anticipated effect sizes of candidate implementation strategies.

The overall goal of the project is to create an interactive dashboard that includes “levers” to simulate implementation strategies at various levels of intensity and “switches” to simulate different combinations of strategies (which can be used to turn different strategies on and off, reflecting how different contexts may make decisions). The purpose of the dashboard will be to aid decision making by allowing users to “virtually test” how adjustments in the selection, dosing, and combination of implementation strategies would potentially affect intervention delivery and outcomes.
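To make the "levers" and "switches" idea concrete, the toy sketch below shows only the interface logic. It is not the planned agent-based and system dynamics model. It assumes, purely for illustration, that each enabled strategy independently closes a share of the remaining gap in treatment delivery, in proportion to its intensity. The strategy names and effect sizes are hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Strategy:
    name: str
    max_effect: float        # hypothetical share of the delivery gap closed at full intensity
    enabled: bool = False    # the "switch": is the strategy in use?
    intensity: float = 0.0   # the "lever": dose of the strategy, from 0 to 1


def simulated_delivery_rate(baseline: float, strategies: List[Strategy]) -> float:
    """Delivery rate after applying enabled strategies (independent-effects assumption)."""
    not_delivered = 1.0 - baseline
    for s in strategies:
        if s.enabled:
            level = min(max(s.intensity, 0.0), 1.0)  # clamp the lever to [0, 1]
            not_delivered *= 1.0 - s.max_effect * level
    return 1.0 - not_delivered


# Hypothetical strategies with both switches on and different lever settings.
strategies = [
    Strategy("provider training", max_effect=0.3, enabled=True, intensity=0.5),
    Strategy("clinic reminders", max_effect=0.2, enabled=True, intensity=1.0),
]
rate_on = simulated_delivery_rate(0.2, strategies)

# Turning every switch off returns the baseline delivery rate.
for s in strategies:
    s.enabled = False
rate_off = simulated_delivery_rate(0.2, strategies)
```

A calibrated model would replace the independent-effects rule with simulated provider and system behavior, but users would interact with it in much the same way: toggle strategies, adjust their intensity, and compare the simulated outcomes.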

Cross-Cutting Theme: Data Equity Considerations when Integrating Decision Science and Implementation Science (Tran Thu Doan)

Each of the applied presentations touches on the data requirements of working at this intersection. Accounting for implementation contexts and processes in models, including costs and outcomes and comparing alternatives on a range of factors, requires detailed data. Especially when generating simulation results for specific contexts (often crucial in implementation-focused work), data must be sufficiently detailed to identify key factors relevant to implementing effective strategies for affected groups. Decision scientists will need data on implementation costs, clinical outcomes, and intervention and implementation outcomes, as well as on how implementation processes intersect with usual care, disaggregated by populations of interest.

These data issues necessitate understanding data equity and related concepts, the focus of the final presentation. Data equity is a useful approach for identifying health similarities and differences within a population across contexts.80–83 Data equity refers to the concept and practice of collecting and using data in ways that are fair and representative of populations served.84,85 It is guided by principles of beneficence, justice, and fairness, as outlined in the Belmont Report, 86 for protecting human participants in ethical research and practice. It involves considerations of statistical power, bias, and discrimination in data collection methods, algorithms, and modeling to inform data-driven decision making. Data equity integrated with decision modeling can enable more context-specific modeling and help support precise implementation of programs and policies to promote overall health and quality of life.

A major component of data equity is data disaggregation, which involves the collection and use of data broken down by relevant categories. Thoughtful considerations are required regarding which, how, and when data categories should be disaggregated. For example, disaggregating sexual orientation and gender identity data by Medicaid coverage status could be extremely important for accurately simulating alternative policies or implementation processes. 87 Choices about the relevant data categories to use in each modeling effort should be made in collaboration with key decision partners, supplemented by the literature, and grounded in theory. When integrating decision modeling with implementation efforts, data can capture groups that fund the implementation, perform the implementation, or receive the implemented innovation. In disseminating research findings, products should fully and transparently justify and report on these choices, including what the variables are intended to measure and how the variables were measured.88,89

A second major component of data equity is “equitable data collection,” which refers to the process of gathering information that ensures beneficence, justice, and fairness for all. This aims to prevent discrimination and promote equal representation and opportunities for all populations affected by the health issue under study. Strategies include innovative sampling techniques, respectful engagement with communities, and careful consideration of ethics, consent, and privacy. Data collection should be guided by data sovereignty and governance principles that are co-created in respectful collaboration with community members and other decision partners. Data collection procedures should honor community members’ expressed desires for ownership, control, access, and possession of such data. 90

Data equity and associated concepts are rapidly becoming institutionalized. There has been increasing national demand in recent years to improve the collection of granular data and to advance health for all. In a major update to the US Census data collection methods, the US Office of Management and Budget revised Statistical Policy Directive No. 15 in 2024, updating standards for maintaining, collecting, and presenting federal data on race and ethnicity. 91 Key revisions included combining race and ethnicity into 1 question and establishing “Middle Eastern or North African” as a minimum category. In 2023, the White House released the Federal Evidence Agenda on lesbian, gay, bisexual, transgender, queer or questioning, intersex (LGBTQI+) people to support sexual orientation, gender identity, and variations in sex characteristics data collection. 92 In 2024, the US Food and Drug Administration 93 and the National Academies of Sciences, Engineering, and Medicine 94 supported enhanced data collection of populations from different backgrounds through increasing enrollment in clinical studies or drug evaluations. The 2025 federal restrictions on diversity, equity, and inclusion programs or language introduced new uncertainty around the funding for and prioritization of future stratified analyses. Government agencies, health systems, and payers should be aware of recent policy changes, contextualize their work, and acknowledge challenges of conducting health equity analyses.

Data equity remains a critical tool to guide the integration of implementation and decision sciences because it allows researchers to investigate targeted actions that result in demonstrably improved health outcomes for disaggregated populations.

Moderated Discussion

Two major points emerged during the moderated discussion that followed the presentations.

Is Implementation Science Ready for Decision Science?

One point of discussion was whether implementation science concepts are “ready” to be integrated with decision analytic models and methods. Discussion considered whether there is adequate evidence on implementation strategy effectiveness and core components to parameterize models, whether generalizable quantitative estimates exist for modeling, and the extent to which implementation determinants affect intervention and implementation effectiveness in various contexts. These are important questions and likely have different answers depending on the substantive area or methods used. Relatedly, audience members questioned whether rigorous measures of implementation outcomes exist to generate data appropriate for model parameterization. While the field of implementation science recognizes the need for more measures, rigorous measures do exist and continue to develop (examples cited throughout Table 1). For example, measures of implementation determinants, such as organization climate,95,96 are well-established.

These concerns about data also underscore the importance of collaboration between decision scientists and implementation scientists. For there to be appropriate data to inform models, decision scientists should collaborate with implementation scientists and ensure that implementation scientists know what data are needed for robust modeling efforts and can accordingly incorporate such measurement in implementation trial designs and measurement protocols.

Collaboration Is Crucial

A second major discussion point was the importance of improving collaboration between decision scientists and implementation scientists. Similar to how best practices for cost analyses entail engaging economic collaborators early, implementation science researchers can contribute knowledge of measurement challenges and possibilities and conduct the trials to collect data for simulation.17,33,97,98 Ways to bring implementation science and decision science researchers together are needed. Recent work on ways to improve interdisciplinary and transdisciplinary collaboration between health economists and implementation scientists can help inform paths forward. 98 Possible processes to help establish and maintain collaborations include establishing a shared understanding of the central research goals, concepts, and methods from collaborators’ fields (e.g., decision science or implementation science), clarifying and appreciating the expertise of different team members, and discussing how approaches to questions might differ across disciplines. 98 Funding will be critical to protect researchers’ time for networking and to create collaboration activities.

Moving Forward

This symposium illustrated several ways that current work is integrating the fields of decision science and implementation science. Building on the presentations and discussion, we discuss some specific ideas for moving this type of work forward, with particular emphasis on actions that decision scientists can take.

To address concerns raised in the symposium’s discussion about limited implementation data, we propose that decision analytic modelers consider integrating value-of-information methods within models that include implementation concepts.99–103 Modeling could apply value-of-information methods to understand which parameters are most critical to assess, the relative advantage of increased resource allocation to do so, 102 or how implementation affects value-of-information results. 99
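As one illustration of the first use, the sketch below estimates the expected value of perfect information (EVPI) by Monte Carlo simulation: the average net benefit when the best option can be chosen for each parameter draw, minus the net benefit of the single option that is best on average. It is a minimal example rather than a recommended analysis. The two decision options, the uncertain implementation parameters (reach and implementation cost), their uniform distributions, and the willingness-to-pay value are all hypothetical.

```python
import random
from statistics import mean
from typing import List


def evpi(net_benefit_draws: List[List[float]]) -> float:
    """EVPI from a table of draws: one row per parameter draw, one column per option."""
    n_options = len(net_benefit_draws[0])
    expected_by_option = [mean(row[j] for row in net_benefit_draws)
                          for j in range(n_options)]
    value_current_info = max(expected_by_option)                     # choose once, on average
    value_perfect_info = mean(max(row) for row in net_benefit_draws)  # choose per draw
    return value_perfect_info - value_current_info


def simulate_draws(n_draws: int, seed: int = 0) -> List[List[float]]:
    """Per-person net benefit of [no strategy, implementation strategy] per draw."""
    rng = random.Random(seed)
    willingness_to_pay = 50_000.0   # hypothetical value per QALY
    qaly_gain_if_reached = 0.05     # hypothetical health gain per person reached
    draws = []
    for _ in range(n_draws):
        reach = rng.uniform(0.2, 0.8)                     # uncertain implementation outcome
        implementation_cost = rng.uniform(500.0, 1500.0)  # uncertain cost per person
        nb_strategy = willingness_to_pay * qaly_gain_if_reached * reach - implementation_cost
        draws.append([0.0, nb_strategy])
    return draws


estimated_evpi = evpi(simulate_draws(5_000))
```

A larger EVPI suggests that reducing uncertainty in the implementation parameters could change the decision. Partial value-of-information methods would be needed to attribute that value to individual parameters such as reach or cost.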

We also challenge decision scientists to consider that decision analytic modeling can be an effective learning tool, rather than just a predictive tool (although predictive modeling is certainly useful 104 ).105–108 We envision that modeling to learn, rather than solely modeling to predict, could be particularly helpful to illuminate evidence gaps and define key priorities for implementation research (more broadly than with value-of-information methods). Overall, beginning to model can define evidence gaps, thereby helping to shape funding proposals and also serving the dual purpose of helping to formalize collaborations between decision and implementation scientists.

Another consideration for future work is how implementation science could be integrated with more advanced equity-focused simulation approaches, such as distributional or extended cost-effectiveness analysis.4,109,110 For example, researchers have used distributional cost-effectiveness to examine how alternative engagement strategies within a cancer screening program influence outcomes across population groups. 111 Key strategies included targeted reminders to individuals living in income-deprived areas and a universal reminder to all individuals. 111 Notably, researchers allowed for heterogeneity in how different groups receiving the intervention would likely respond to each strategy. 111 Future work could build on these methodological approaches by incorporating findings from implementation research, such as alternative implementation strategies for engaging different groups or refining the costs of implementing different strategies. The need for stratified group-specific estimates in such approaches also underscores the need for attention to principles and practices of data equity.

While the work presented here focused on policy settings and decision analytic modeling, future work can and should expand this thinking into other areas of decision science, particularly shared decision making and preference research. Within shared decision making, scholars have suggested that incorporating implementation science concepts is a necessity to promote more widespread uptake of shared decision making in clinical practice.112,113 Decision science work focused on preference and prioritization could also provide useful information for both decision analytic modeling and implementation science applications (e.g., which implementation outcomes are the most important to include in models, 114 which approaches are most acceptable to the population implementing an innovation or targeted by implemented interventions 115 ).116,117 Finally, dissemination, the other side of dissemination and implementation science, is also an important area for decision science researchers to consider. Even simple simulation models can involve massive amounts of assumptions, data, and results. Interrogating how to best communicate models to decision makers is extremely important to ensure model transparency and trust by users. 118

Conclusion

Implementation and decision science share a broad goal of improving individual and population health by conducting research to inform the selection and scale-up of health innovations such as policies or programs. To realize this goal, our symposium argued that integrating these fields could increase the practical utility, usability, and uptake of decision analytic modeling approaches. To highlight the necessity and potential of this integration, the symposium included innovative work at the intersection of policy, implementation science, and decision analytic modeling. The symposium’s moderated discussion indicated a need for developing collaborations between implementation and decision scientists to move this type of work forward, and we outline potential areas for future research. Moving forward, we urge decision science researchers to undertake interdisciplinary research integrating implementation and decision science to help inform data-driven policy decision making and drive the scale-up of promising health policies across contexts.

Footnotes

Acknowledgements

The work described in this article was presented at the 45th Annual Meeting of the Society for Medical Decision Making in Philadelphia, Pennsylvania, in October 2023. The authors gratefully acknowledge Dr. Mark Bouthavong, who supported the symposium as a moderator and served as an internal reviewer for this article.

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article. The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Financial support for this work was provided by the National Institutes of Health under grants K99CA277135 (NRS), K01MH128761 (GC), and P50MH115842 (CY). The funding agreement ensured the authors’ independence in designing the study, interpreting the data, writing, and publishing the report. Funding for open access for this article was made possible by K99CA277135.

Ethical Considerations

Not applicable.

Consent to Participate

Not applicable.

Consent for Publication

Not applicable.

Data Availability

There is no code or data associated with this article.