Abstract

Highlights

Patients who viewed quantitative information in a decision aid about colorectal cancer screening were more knowledgeable about nonquantitative information, and were more likely to have adequate knowledge under several approaches to defining adequacy, than patients who viewed only qualitative information. This result supports the inclusion of quantitative information in decision aids.

Researchers assessing patient understanding should consider a variety of ways to define adequate knowledge when assessing decision quality.

Introduction

Challenges and Previous Assessments of “Adequate Knowledge”

Possessing adequate knowledge is an essential part of making an informed medical decision. If patients do not adequately understand basic facts about their options (the risks, benefits, and alternatives), then consent is ethically questionable. 1 While subjective measures of knowledge assess how a person feels about their level of understanding (e.g., Decisional Conflict Scale 2 ), objective measures of knowledge assess the individual’s understanding of specific facts. Objective measures play an essential role in some approaches to assessing the quality of a medical decision, such as the Multi-dimensional Measure of Informed Choice (MMIC) 3 and the Colorectal Cancer Decision Quality Instrument. 4

Researchers have developed tests to assess objective knowledge, usually composed of multiple-choice and true-false questions covering key facts. Sometimes these tests are designed to determine if the participant’s knowledge level is “adequate.”4–6 One central challenge for formulating and evaluating knowledge tests is that an adequate level of knowledge must be achievable without knowing everything that health professionals know. Legal and ethical theories of informed consent have attempted to define what subset of information must be disclosed to a patient or potential research participant. 1 The reasonable person standard, for example, requires disclosure of all information that would be material to the decision for a reasonable person. 1 The standard is importantly vague, though, due to the terms material and reasonable.

There is no simple answer about what information should be disclosed or what questions should be used to test whether a patient or potential research participant has adequate knowledge.7–10 There are 3 key issues that make this determination challenging.

First, the designer of a knowledge test must decide what facts or topics should be covered. As mentioned above, a vast number of facts are relevant to any informed choice, and only a subset can be disclosed during informed consent or evaluated on a knowledge test.

Second, there are many ways to write a question to assess comprehension of a given fact or topic, and how a question is written will affect how many individuals will answer it correctly. For example, patients have a higher chance of answering a true-false question correctly than a multiple-choice question with 4 options or a fill-in-the-blank question about the same fact.

Third, a designer of a knowledge test must decide how many of the questions must be answered correctly for the subject to count as having adequate knowledge. An argument could be made for requiring 100% correct, since the questions were chosen because they are considered essential to an informed choice. On the other hand, many knowledge tests judge patients to have adequate knowledge even if they only get 80% or even fewer correct. Every test in school, after all, has a passing score lower than 100%.
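The interaction between question format and passing score can be made concrete with a small calculation. The sketch below is illustrative only: it assumes a hypothetical 10-item test with an 80% passing score and a respondent who guesses at random on every item, and the function name `prob_pass_by_guessing` is our own. It compares the chance of passing by guessing alone when all items are true-false versus 4-option multiple choice.

```python
from math import comb


def prob_pass_by_guessing(n_questions: int, min_correct: int, p_guess: float) -> float:
    """Binomial probability of at least `min_correct` correct answers by guessing alone."""
    return sum(
        comb(n_questions, k) * p_guess**k * (1 - p_guess) ** (n_questions - k)
        for k in range(min_correct, n_questions + 1)
    )


# Hypothetical 10-item test with an 80% passing score (8 of 10 correct).
true_false = prob_pass_by_guessing(10, 8, 0.5)        # 2 options per item
multiple_choice = prob_pass_by_guessing(10, 8, 0.25)  # 4 options per item
print(f"true-false: {true_false:.4f}, 4-option multiple choice: {multiple_choice:.6f}")
```

Under these assumptions, the same nominal passing score is far easier to reach by chance on a true-false test, which is one reason a cutoff cannot be interpreted without reference to the question format.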

Knowledge Assessment in Colorectal Cancer Screening

This article examines these issues in relation to colorectal cancer (CRC) screening and the design of decision aids. While CRC screening is recommended by the United States Preventive Services Task Force, 11 American Cancer Society, 12 and others13,14 for all people ages 45 to 75 y, people with average risk can choose from a variety of approved tests, most commonly colonoscopy or stool testing (fecal immunochemical test [FIT] or Cologuard).

Colonoscopy provides a direct visual examination of the colon, can remove most polyps found, and is performed just once every 10 y if normal. However, it is also an invasive procedure that uses sedation, must be performed in a hospital setting, and requires the patient to drink a strong laxative to clean out the colon the day before the procedure. Stool tests are noninvasive and can be performed at home, although they are conducted annually, require handling stool, can fail to identify polyps and cancers, and positive stool tests require a follow-up colonoscopy. 11

Multiple studies have examined patient understanding of their options, often before and after these patients have reviewed a decision aid about CRC screening. 15 For instance, a recent review identified 9 studies that measured knowledge before and after a patient viewed a self-administered decision aid (i.e., outside of a clinic visit). 16 A vast number of other papers have measured lay individuals’ knowledge of CRC screening in a wide variety of settings, 17 although a smaller number aimed to identify whether individuals had adequate knowledge. We selected 3 papers that did this as part of an attempt to evaluate the quality of decision making following the MMIC approach 3 : these 3 papers were selected to display the range of possible approaches,4–6 since a more systematic review is beyond the scope of this article.

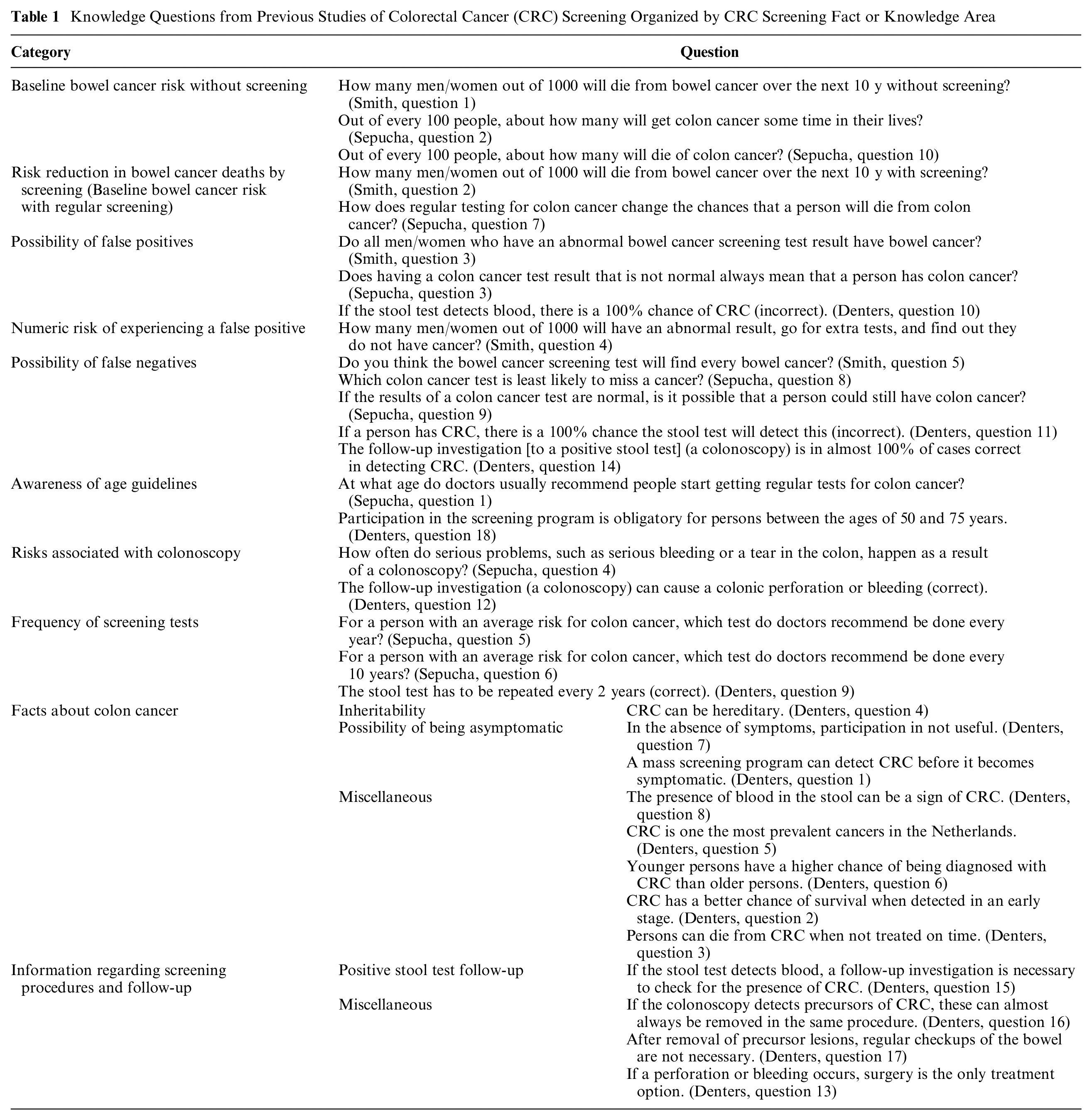

The knowledge tests used by these studies (Table 1) display great variability. While the authors do not explicitly discuss the reasons for choosing which facts to cover, their design of individual questions and the passing scores suggest important value judgments. Such judgments are independent of the standard evaluation of questions on surveys or questionnaires, such as psychometric properties, face validity, ceiling or floor effects, and ability to discriminate between different groups with presumed different levels of knowledge. 4

Table 1. Knowledge Questions from Previous Studies of Colorectal Cancer (CRC) Screening Organized by CRC Screening Fact or Knowledge Area

The knowledge tests we reviewed cover a wide range of topics. The only topics that are covered by all 3 tests are the possibilities of a false positive and false negative, although only 1 asks the specific false-positive rate. 5 Two out of the 3 tests ask the specific (quantitative) risk of CRC with and without screening, with 1 test inquiring about 10-y mortality 5 and another about lifetime incidence and mortality. 4 Two of the 3 knowledge tests ask about the frequency of specific screening tests, the ages at which screening is recommended, and colonoscopy’s potential complications.4,6 One test includes multiple questions about screening procedures and follow-up that are not covered by the other knowledge tests. 6 Questions on different tests about the same topics are framed differently, sometimes as true-false and sometimes as multiple choice.

The reviewed tests also use very different passing scores, with minimal explanation of reasons for this choice. Using a “competency-based approach,” Smith et al. 5 “decided a priori that participants were considered to have ‘adequate knowledge’ if their total score was 6 or more out of 12,” or 50%. Denters et al. grouped their 18 knowledge questions into 2 categories (general knowledge and screening specific items) and relied on an expert panel to decide: “that knowledge can be considered adequate if at least two-thirds of knowledge items had been answered correctly (total knowledge score ≥ 12) under the condition that at least half of the items on general knowledge (general knowledge score ≥ 4) and at least two-thirds of screening specific items (screening specific knowledge score ≥ 8) were answered correctly.” 6 Sepucha et al. 4 defined adequate knowledge as having a “knowledge score at or above the mean knowledge of the decision aid group.”

Clearly, these differences in knowledge tests can have a major impact on whether an individual counts as having adequate knowledge or not: the same person could pass one test and fail another. For studies that compare decision aids or other interventions by appeal to the percentage of individuals achieving adequate knowledge, these differences in knowledge tests might affect a study’s findings. A closer examination of the justification for choosing specific questions and setting a passing score appears essential to improving the normative evaluation of decision support and patient choices.
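The three passing rules described above can be written as simple predicates. This is a minimal sketch based only on the thresholds quoted in the text; the function names are hypothetical, the example scores are invented, and the code does not reproduce the underlying questionnaires, which differ in content and length.

```python
from typing import Sequence


def adequate_fixed_half(total_score: int) -> bool:
    """Rule quoted for Smith et al.: total score of 6 or more out of 12."""
    return total_score >= 6


def adequate_two_part(general_score: int, screening_score: int) -> bool:
    """Rule quoted for Denters et al.: total >= 12, general >= 4, screening specific >= 8."""
    total = general_score + screening_score
    return total >= 12 and general_score >= 4 and screening_score >= 8


def adequate_relative_to_mean(score: float, decision_aid_scores: Sequence[float]) -> bool:
    """Rule quoted for Sepucha et al.: score at or above the decision aid group's mean."""
    reference_mean = sum(decision_aid_scores) / len(decision_aid_scores)
    return score >= reference_mean


# Invented scores for illustration only.
print(adequate_fixed_half(7))                               # 7 of 12 correct
print(adequate_two_part(general_score=4, screening_score=7))  # 11 of 18 correct
print(adequate_relative_to_mean(6.0, [5.0, 7.0, 8.0]))      # group mean is about 6.67
```

In this invented example, a respondent answering roughly 60% of items correctly passes the first rule but fails the other two, which illustrates how the choice of rule can change who counts as having adequate knowledge.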

Rationale for analysis

In this study, we report a secondary analysis of a randomized trial conducted by our team that measured the impact of adding quantitative information to a decision aid regarding CRC screening. 18 The study also measured the impact of adding a nudge to increase the chance that the patient will choose FIT for screening, but that part of the study is not the focus of this secondary analysis. Widely used guidelines recommend that decision aids should include quantitative information about probabilities or frequencies specifying baseline risk, risk reduction, and possible negative consequences, to support informed choice and shared decision making. 19 There is limited evidence, however, that disclosure of quantitative information of this sort improves decision quality.20,21 Previous studies have shown that disclosure of quantitative information increases the accuracy of patient perceptions, measured as correct descriptions of the probabilities that were disclosed, but these studies have not analyzed the impact on other parts of patient knowledge 22 or have not found an effect on nonquantitative knowledge. 5

A previous publication has described the study’s outcomes involving intent to be screened, test preference, decision conflict, and uptake 18 but did not analyze whether presenting quantitative information improved patient knowledge. In this secondary analysis, we examine the impact of disclosing quantitative information on patients’ knowledge of facts other than the specific probabilities that were disclosed (“qualitative knowledge”). We also examine the impact of using different approaches for determining whether patients have “adequate knowledge.”

Furthermore, we propose and apply a novel approach we call “choice-based knowledge assessment.” As mentioned above, few patients achieve perfect scores (100%) on knowledge tests. Researchers respond by setting a “passing score” for being judged to have adequate knowledge, such as 50%, 66%, or 80%, even though there is no theoretical basis for any of these cutoffs. Furthermore, relying on an overall “passing score” does not reflect the observation that some questions may be particularly relevant for a patient’s choice. In the choice-based knowledge assessment, we have identified a subset of the knowledge questions that appear to be particularly relevant for specific choices (not getting screened, getting screened with FIT, or getting screened with a colonoscopy), and patients count as having adequate knowledge only if they answer all of the questions in this subset correctly.

The ethical justification for this approach is that if we allow some patients who get less than 100% correct on a knowledge test to count as having adequate knowledge (as previous researchers do), we should, at the very least, expect patients to understand certain knowledge items that are particularly relevant to the patient’s choice. This approach is supported by the reasonable person standard commonly used in the informed consent literature. 1

In their discussion on the nature of understanding, ethicists Beauchamp and Childress commented that “patients and subjects usually should understand, at a minimum, what an attentive health care professional or researcher believes a reasonable patient or subject needs to understand to authorize an intervention [emphasis added].”1(p132) Importantly, Beauchamp and Childress indicated that the required content for the patient’s understanding follows directly from the specific intervention they are choosing, not the full range of possible interventions. Some knowledge about nonselected interventions may be necessary, of course, but the required type or level of understanding may shift based on which intervention the patient is choosing. Beauchamp and Childress further said that “understanding need not be complete, because a grasp of central facts is generally sufficient. Some facts are irrelevant or trivial; others are vital, perhaps decisive. In some cases, a person’s lack of awareness of even a single risk or missing fact can deprive him or her of adequate understanding.”1(p132)

Which facts are vital and required and which are trivial may partly depend on which intervention the patient is choosing, and this is an idea we explore for CRC screening in this article.

Methods

Study Setting

This project is a secondary analysis of a study that was conducted from August 2011 to February 2013 at 5 primary care sites in the Indiana University Health system. The original study was approved by the Indiana University Institutional Review Board. The methods and primary outcomes of this study have been reported elsewhere. 18

Inclusion and Exclusion Criteria

Participants consisted of male and female adults aged 50 to 75 y who were nonadherent to CRC screening guidelines, defined as having had no colonoscopy in the past 10 y, no sigmoidoscopy in the past 5 y, and no FIT in the past year.11,12 Patients were excluded from the study if they 1) had an elevated risk for CRC, 2) were undergoing workup for possible CRC, 3) did not read English, or 4) had been advised by a health care professional to avoid CRC screening.

Study Procedure

Participants completed a baseline, self-administered survey (T0) in the presence of a research assistant. Participants then viewed a decision aid based on their assignment to 1 of 4 experimental groups: basic information (control), quantitative, nudge, and quantitative + nudge.

All participants saw the same basic information about CRC and CRC screening tests, consisting of a module that included a video produced by the American Cancer Society followed by 4 PowerPoint slides. The basic information (control) group saw only this module.

After viewing the basic information module, the quantitative group viewed 4 slides that presented lifetime CRC incidence and mortality without screening, lifetime CRC mortality with regular screening with colonoscopy and FIT, and the frequency of positive FIT (requiring follow-up colonoscopy) and of serious complications from colonoscopy.

The nudge group also started by viewing the basic information module but then viewed 3 slides with phrases meant to increase uptake of FIT. Finally, the quantitative+nudge group viewed the basic information module followed by the slides from the quantitative arm and then those from the nudge arm.

Immediately after viewing the decision aid, participants completed a postintervention survey (T1). Six months later, participants were contacted to repeat the postintervention survey and assess screening uptake (T2). More information on the study procedure and decision aids used can be found elsewhere. 18

Measures of Knowledge

The T0, T1, and T2 questionnaires include a knowledge test containing 8 true-or-false questions that were constructed through a consensus process among the members of the research team (see Table 2). We refer to these questions as “qualitative knowledge” questions, to distinguish them from 4 additional “quantitative knowledge” questions that were asked regarding average lifetime CRC incidence and mortality and risk reduction provided by regular colonoscopy and FIT. In our assessment of whether patients had adequate knowledge, we focused entirely on answers to the qualitative knowledge questions. We took this approach because one of the goals of the study was to assess the effect of presenting quantitative information in a decision aid on knowledge and decision making, beyond recall of those numbers.

Table 2. Qualitative Knowledge Questions in Our Study

Knowledge was measured in several different ways. We calculated the mean number of questions correct per arm at T0 and T1 to assess the overall effect of the decision aids on knowledge. We also assessed the percentage of patients in each arm, at T0, T1, and T2, who achieved adequate knowledge, defined as achieving a “passing score” on the knowledge test, following previous studies.4–6 For this, we used cutoffs of 5 correct, 6 correct, and 7 correct out of 8.
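The passing-score definitions can be sketched in a few lines of code. The example below is illustrative rather than the analysis code used in the study: the answer key and responses are invented placeholders (not the actual keyed answers to the 8 questions), and the function names are our own.

```python
CUTOFFS = (5, 6, 7)  # passing scores out of 8 examined in this analysis


def score_test(responses, answer_key):
    """Count how many true-false responses match the answer key."""
    return sum(r == k for r, k in zip(responses, answer_key))


def adequacy_by_cutoff(n_correct, cutoffs=CUTOFFS):
    """Map each cutoff to whether the score meets or exceeds it."""
    return {cutoff: n_correct >= cutoff for cutoff in cutoffs}


# Placeholder key and responses for one hypothetical participant.
answer_key = [True, True, False, True, True, False, True, False]
responses = [True, True, False, True, False, False, True, True]

n_correct = score_test(responses, answer_key)
print(n_correct, adequacy_by_cutoff(n_correct))
```

Here the hypothetical participant answers 6 of 8 correctly and so has adequate knowledge under the cutoffs of 5 and 6 but not under the cutoff of 7.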

Choice-Based Knowledge Assessment

We also used an alternative, novel way of determining adequate knowledge that identifies essential questions based on an individual’s planned screening behavior after viewing the decision aid. The patient is considered to have adequate knowledge only if they answer all of the questions in this subset correctly. Table 3 shows the selection of the essential questions for each possible screening intention. For instance, consider question 5, which assesses comprehension that it is possible for a person to have colon cancer even if they do not have symptoms. This fact is essential for a patient who chooses not to be screened: if they think that their lack of symptoms means that there is no chance of having CRC, they may forgo screening based on a false belief that they are completely safe. On the other hand, failing to understand this fact does not seem as important for a decision to be screened with colonoscopy or FIT. Since the patient in that case has chosen to be screened, they are clearly not acting on a false belief that they cannot have colon cancer.

Table 3. Choice-Based Knowledge Assessment: Essential Questions to Determine Knowledge Adequacy, Based on Test Intent (T1)

Similar reasoning applies to question 8, which tests whether the patient knows that they can get colon cancer even if nobody in their family had it. This fact is essential to having adequate knowledge for those who choose not to be screened but not for those who intend to be screened. In contrast, question 2—knowing that there is more than 1 screening test available to check the colon—is essential knowledge for patients no matter what they chose: if they chose colonoscopy, they should know about the availability of FIT, and vice versa. If they chose not to be screened, they should be aware of both the available choices, since one might be more palatable to the patient.
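The logic of the choice-based assessment can be sketched as a lookup from screening intention to a set of essential questions, followed by a subset check. The mapping below is a deliberately partial placeholder: it includes only the assignments described in the text (questions 5 and 8 for no screening, question 2 for every intention), whereas the full sets are those listed in Table 3. The names `ESSENTIAL_QUESTIONS` and `has_adequate_knowledge` are hypothetical.

```python
# Partial, illustrative mapping; the complete essential sets are given in Table 3.
ESSENTIAL_QUESTIONS = {
    "no_screening": {2, 5, 8},
    "fit": {2},
    "colonoscopy": {2},
}


def has_adequate_knowledge(intent, correct_questions):
    """True only if every essential question for the stated intent was answered correctly."""
    return ESSENTIAL_QUESTIONS[intent].issubset(correct_questions)


# Hypothetical participant who answered 6 of 8 questions correctly (missed 5 and 8).
correct = {1, 2, 3, 4, 6, 7}
for intent in ESSENTIAL_QUESTIONS:
    print(intent, has_adequate_knowledge(intent, correct))
```

Under this partial mapping, the same set of answers counts as adequate for a participant who intends to be screened but not for one who intends to forgo screening, even though the overall score of 6 out of 8 is identical.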

Statistical Methods

We combined the 2 groups that viewed only verbal information (basic information group and nudge group) to form the “control group” and the 2 groups that viewed verbal information and quantitative information (quantitative group and quantitative+nudge group) to form the “quantitative group.”

We determined test preference for T1 based on patient responses to questions on the original questionnaire. 18

To determine whether knowledge differed significantly between the 2 groups at T1 after viewing the decision aid (adjusting for T0), we used analysis of covariance regression models: linear models for mean number correct and logistic models for adequate knowledge, defined either by passing score (yes/no) or essential knowledge (yes/no). The significance level was set at P < 0.05. Analyses were conducted in SAS version 9.4 (SAS Institute, Cary, NC).
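For readers who prefer a formal statement, the two model types can be written roughly as follows. This is a sketch that follows the covariates listed in the table footnotes (arm, T0 value, site, age, gender); the symbols are illustrative notation, and the exact coding of each covariate (for example, age as continuous or grouped) is an assumption rather than a detail taken from the analysis code. For the number of questions correct, $Y_i$, for participant $i$:

$$
Y_i^{T1} = \beta_0 + \beta_1\,\mathrm{Arm}_i + \beta_2\,Y_i^{T0} + \gamma_{\mathrm{site}(i)} + \beta_3\,\mathrm{Age}_i + \beta_4\,\mathrm{Gender}_i + \varepsilon_i
$$

For the binary indicator of adequate knowledge under a passing score, $A_i$:

$$
\operatorname{logit}\,\Pr\left(A_i^{T1} = 1\right) = \alpha_0 + \alpha_1\,\mathrm{Arm}_i + \alpha_2\,A_i^{T0} + \delta_{\mathrm{site}(i)} + \alpha_3\,\mathrm{Age}_i + \alpha_4\,\mathrm{Gender}_i
$$

In both cases the coefficient on $\mathrm{Arm}_i$ (quantitative v. control) is the quantity tested at the 0.05 level. The footnote for the choice-based outcome lists the same covariates without the T0 term.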

Results

Data on participant demographics can be found elsewhere. 18 Briefly, most of the participants were White and female, and there were no significant differences for demographic variables or outcomes between groups at baseline (T0). In total, we analyzed 213 participants: 105 in the control arm (C) and 108 in the quantitative arm (Q).

Table 4 reports knowledge as the mean number of questions answered correctly. Knowledge increased in both arms from T0 to T1 after participants viewed the decision aids. Viewing quantitative information resulted in a significantly higher mean number of qualitative knowledge questions answered correctly at T1, adjusting for T0 (2.81 [Q] v. 2.64 [C], P < 0.05).

Table 4. Mean Qualitative Knowledge at T0 and T1 by Arm

C, control arm; Q, quantitative arm.

P value for arm from the model: T1 outcome = arm + T0 outcome + site + age + gender.

The percentage of patients who answered a predetermined number of questions correctly can be found in Table 5. The percentage of patients who answered 5 out of 8, 6 out of 8, or 7 out of 8 correctly increased from T0 to T1 in both arms. In addition, for cutoffs of 6 out of 8 and 7 out of 8, viewing quantitative information resulted in a significantly greater percentage of patients achieving adequate knowledge at T1 (94% [Q] v. 83% [C], P < 0.05, and 86% [Q] v. 71% [C], P < 0.05, respectively), but this was not the case for a cutoff of 5 out of 8 (100% [Q] v. 94% [C], P > 0.05).

Table 5. Adequate Knowledge Defined as ≥5, ≥6, or ≥7 out of 8 by Arm

C, control arm; Q, quantitative arm.

P value for arm from the logistic model: T1 knowledge = arm + T0 knowledge + site + age group + gender.

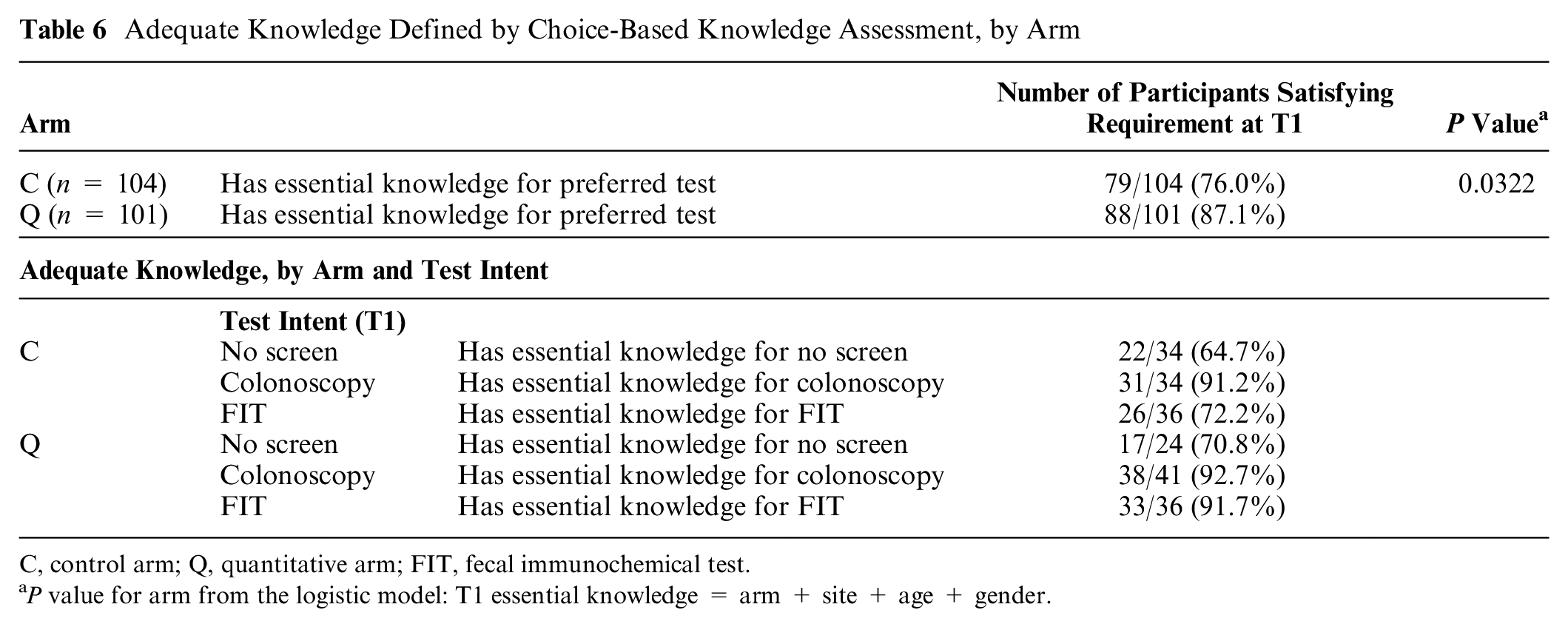

Table 6 displays the percentage of patients having adequate knowledge based on the choice-based knowledge assessment. Using this approach, significantly more participants who viewed quantitative information were found to have adequate knowledge at T1 when compared with those who did not view quantitative information (87% [Q] v. 76% [C], P < 0.05).

Table 6. Adequate Knowledge Defined by Choice-Based Knowledge Assessment, by Arm

C, control arm; Q, quantitative arm; FIT, fecal immunochemical test.

P value for arm from the logistic model: T1 essential knowledge = arm + site + age + gender.

Discussion

Major Findings

In this study, we found that viewing quantitative information about CRC screening led to improved knowledge about facts that did not involve recall of the specific quantitative information that was disclosed. To our knowledge, this is the first study to show a positive effect on “qualitative knowledge” from disclosing quantitative information. This increase in qualitative knowledge also resulted in higher percentages of patients having adequate knowledge to make an informed choice according to multiple, but not all, ways of assessing knowledge, including a novel approach to determining adequate knowledge that we call choice-based knowledge assessment.

Quantitative Information Increases Qualitative Knowledge

Previous research has shown that presenting quantitative information about risk (such as the specific frequency of a medication side effect) may affect patients’ risk impressions and behavior, compared with presenting only qualitative statements of risk.22,23 On knowledge tests, patients who have seen quantitative estimates of their risk also do better at answering questions about their specific risk level than do patients who were not told that specific risk level. 22 But previous studies have not shown an impact on the retention of nonquantitative information. For example, a previous study found that individuals who viewed a decision aid about CRC screening that included quantitative information did better on numeric questions in a knowledge test but not on nonnumeric (“conceptual”) questions than did individuals who viewed a pamphlet that did not include quantitative information. 5

In contrast, participants in the current study who viewed quantitative information in addition to the standard qualitative (nonquantitative) information about CRC screening did better on a knowledge test that assessed only the qualitative information, compared with those who viewed just the qualitative information.

One possible explanation is that the quantitative information reinforced the qualitative information. For instance, one of the knowledge questions is a true/false question that “Regular stool testing can lower your chance of dying from colon cancer” (correct answer: true). While the qualitative module viewed by both groups states that colon cancer screening lowers the chance of dying from colon cancer, the quantitative module includes an icon chart showing that out of 1000 average-risk people who never get a stool test, about 30 will die from colon cancer, and that number goes down to 6 per 1000 for those who get annual stool tests. While the knowledge test did not ask participants to recall the numbers, seeing these numbers and the icon chart may have reinforced the message that tests “lower your chance of dying from colon cancer.”

Similarly, although the qualitative module says that people can get colon cancer even if they do not have a family history, the quantitative module explains, again using an icon chart, that out of 1000 people with average risk who never get screened, 60 will get colon cancer in their lifetime, potentially reinforcing this message. This quantitative information could have led more participants to correctly respond “false” to the true/false question, “You only have to worry about getting colon cancer if someone in your family has had it.” This finding highlights the potential importance of the “gist” implications of quantitative information, which are emphasized by dual process accounts of risk communication.24,25 Smith et al. also cited a dual process account, the fuzzy trace theory, 26 in explaining their knowledge assessment, although in a different way. They include quantitative questions (e.g., “How many men/women out of 1000 will die from bowel cancer over the next 10 years without screening?”) but accept answers that are close to the right number but not exactly correct.

It is possible that simply repeating the qualitative statements of the key facts could have had a similar effect, although simple repetition of information is not a reliable way to improve retention, and spacing between repetitions appears to be essential. 27 In addition, more detailed decision aids have not generally outperformed shorter ones at increasing knowledge.28(202) Any proposed mechanism must remain speculative, however, since the study was not designed to identify mechanisms and did not have the power to detect differences in the frequency correct for individual questions. Future studies of the impact of quantitative information should eliminate the difference in the number of repetitions of information and be properly powered to better investigate the impact on individual questions.

Altering Thresholds for Adequate Knowledge Led to Varying Results

Our findings also highlight the importance of differences in methods for determining whether a patient has adequate knowledge. First, modifying the cutoff for passing the knowledge test changes the percentage of patients who count as having made a decision with adequate understanding, a key aspect in the ethical evaluation of informed choice. For instance, just 79% of patients count as having adequate understanding if we use a cutoff of 7 correct out of 8, while 97% have adequate understanding if we use a cutoff of 5 out of 8. The choice of cutoff also may affect the findings of the study. Using a threshold of 6 or 7 correct out of 8, we found that providing quantitative information increases the proportion of patients who achieve adequate understanding to make an informed choice, but we did not find this when the cutoff was 5 out of 8. Without a clear rationale for using one cutoff or another, the implications of the study cannot be fully appreciated.
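The overall percentages quoted above are consistent with pooling the arm-level results. The following check is a rough sketch: it assumes that the T1 denominators equal the analyzed sample sizes (108 in the quantitative arm, 105 in the control arm) and it starts from the rounded arm-level percentages, so small discrepancies from the exact values are possible. The function name `pooled_percentage` is our own.

```python
N_QUANTITATIVE = 108  # analyzed participants, quantitative arm
N_CONTROL = 105       # analyzed participants, control arm


def pooled_percentage(pct_q, pct_c, n_q=N_QUANTITATIVE, n_c=N_CONTROL):
    """Sample-size-weighted average of two arm-level percentages."""
    return (pct_q * n_q + pct_c * n_c) / (n_q + n_c)


# Rounded arm-level percentages at T1 for two of the cutoffs.
print(round(pooled_percentage(86, 71)))   # cutoff of 7 out of 8
print(round(pooled_percentage(100, 94)))  # cutoff of 5 out of 8
```

Under these assumptions the pooled values round to 79% and 97%, matching the figures in the text.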

Our survey of the literature on CRC screening decision making found significant variation and sparse justification for methods of measuring adequate knowledge, suggesting the need for more extensive analysis and discussion than is currently given in the design and reporting of decision-making research. Others have similarly called for more careful attention to the process of defining and using knowledge tests in assessing quality of informed consent.7,10,16

Choice-Based Knowledge Assessment

The impact of varying thresholds for adequate knowledge led us to create and pilot our choice-based knowledge assessment, in which a patient counts as having adequate understanding only if they correctly answer every question in a subset matched to their intended behavior. Interestingly, as with the more traditional approaches, this analysis found that a significantly higher proportion of patients who viewed quantitative information had adequate knowledge.

One advantage of the choice-based knowledge assessment is that the threshold for determining adequate knowledge (100% correct for the subset selected) makes clear sense, since the questions are selected as being particularly important based on the patient’s intended behavior. Another strength is that it appears reasonable to tailor the knowledge assessment based on the decision the patient has actually made, rather than every possible decision.

We recognize that this approach is in some ways less demanding and in some ways more demanding for determining that a patient has adequate knowledge: it is less demanding since a patient can be counted as having adequate knowledge based on answering fewer questions correctly (i.e., a subset of all the knowledge questions that count as “essential”), whereas it is more demanding since the patient must answer all the essential questions correctly. Of note, using the choice-based knowledge approach to determine the adequacy of knowledge for individual patients does not justify reducing the amount of information provided by the decision aid, since it has to provide the essential information for all of the choices. Furthermore, the knowledge test should cover all the essential information for all options, since the patient’s choice cannot be predicted ahead of time.

The choice-based knowledge approach to assessing adequate knowledge also faces some challenges. First, there is considerable difficulty in deciding which questions (which facts and information) are the “essential” ones for a given decision. Further research assessing the merits and applicability of various questions based on test preference will be needed. Second, it is difficult to determine an individual’s intended behavior in order to guide the choice of which knowledge questions are most important for a patient. 29 Third, it may be unclear whether the essential questions should be determined by the patient’s intention at T1, as we did here, or by the test they had actually received at 6 mo. As in other approaches in which a patient’s intention is assessed, it may be useful to assess the level of commitment of the individual to that choice.

Of note, some commentators have recently questioned whether the ethical evaluation of consent requires evaluation of patient understanding, particularly for research participation.7,30,31 Some of these commentators have considered a return to focusing on what is disclosed to participants, and how clearly it is presented, rather than measuring objective knowledge. 9 This would actually be a return to the focus of traditional rules such as the reasonable person standard (itself the basis for choice-based knowledge assessment), which center on disclosure rather than understanding. 1

Limitations

This study was a secondary analysis of a single randomized trial related to 1 screening initiative (CRC screening). Furthermore, half of the participants also viewed a “nudge” toward FIT in the original study, and it is unknown what impact that could have had on knowledge.

Conclusions

We described considerable variability in how researchers have measured patient knowledge and determined the adequacy of knowledge in studies of CRC screening. Researchers assessing patient understanding should consider various ways to define adequate knowledge when assessing quality of decision making, including considering the choice-based knowledge assessment method introduced here.

In our secondary analysis of a previously concluded study, we found that varying the method for assessing participant knowledge had considerable effects on the percentage of participants who were judged to have adequate knowledge. We also found that patients who viewed quantitative information in addition to verbal information had greater qualitative knowledge and more frequently had adequate knowledge compared with those who viewed verbal information alone, according to most ways of defining adequate knowledge. Quantitative information may have helped participants better understand or retain qualitative or gist concepts.

Footnotes

Acknowledgements

We thank our participants and the co-investigators on the original study for their assistance.

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.