Abstract

By rewarding engagement over accuracy, social media platforms foster the spread of misinformation. Likes and similar engagement reactions are a central form of feedback on most platforms and shape what users share. On the platforms, users quickly learn that sharing interesting, attention-grabbing content garners positive feedback, even when it is inaccurate. With repeated exposure to these social rewards, sharing interesting content—including interesting but inaccurate content—can become a habit. We review evidence for this reward-based learning and propose a simple redesign of platform rewards: adding a Trust button so users can reward accurate, reliable posts. Experimental evidence supports this approach: Users give Trusts to accurate posts more than inaccurate posts. Then, when they receive trust feedback, users increasingly share accurate content (even when less interesting) and reduce sharing of inaccurate but highly interesting posts. Because this intervention changes incentives, it is scalable, preserves user choice, and aligns with people’s stated goal of sharing accurate information. Misinformation interventions that overlook the role of social media incentives are unlikely to produce lasting results.

Despite a variety of attempts to reduce the spread of misinformation, social media remain a conduit for rapid, worldwide distribution of falsehoods. 1 Most interventions proposed to curb misinformation focus on users who post, share, and consume content on social media. Such interventions may remind users to consider accuracy before posting, 2 forewarn them about the flawed arguments typical of false information (a form of psychological inoculation against misinformation sometimes called prebunking), 3 or encourage them to conduct additional searches to verify accuracy. 4 However, the long-term effectiveness of such interventions is not promising. Only a few studies have examined the question of lasting impact, and these have often shown declining influence. 5 Thus, we need more enduring approaches to limiting misinformation.

To meaningfully reduce misinformation propagation across social media, we propose modifying the incentives that drive users to share inaccurate content. Our practical policy solution is simple but powerful: Add an incentive for users to post and share information that is accurate instead of merely interesting or attention grabbing. Our research has shown that this simple structural shift can maintain user engagement while reducing misinformation. 6

Surveys indicate that users value accurate information. In our studies, almost all social media users said that spreading accurate information is important to them, and that it was more important than sharing information that supports their own political views or that attracts attention and is widely read. 7 Most users are therefore not trying to deceive or mislead others. Instead, they are relying on social media to access news, connect with others, form relationships, establish group memberships, and reduce loneliness. 8

Yet, users’ claims regarding their desire to read and share accurate information clearly conflict with what they share on social media. They continue to spread material that others are likely to find interesting (content that is novel, attention grabbing, or likely to evoke emotional responses), 9–11 even when that information is inaccurate. 12

What drives such contradictory behavior? Why would people say they value being truthful but still share falsehoods? The answer lies in social media’s incentive structure. In a survey of users across multiple social media sites (TikTok, X, Instagram, and LinkedIn), 51% agreed that they would reshare potentially inaccurate content if they believed their network would find it engaging or entertaining. 13 Moreover, 77% of these users stated that interesting and novel posts receive attention regardless of their accuracy, and 90% were confident that sharing inaccurate but engaging content would garner likes and positive comments. Thus, it seems that user intention to share truthful information often conflicts with the rewards that social media offers for sharing attention-getting, engaging material.

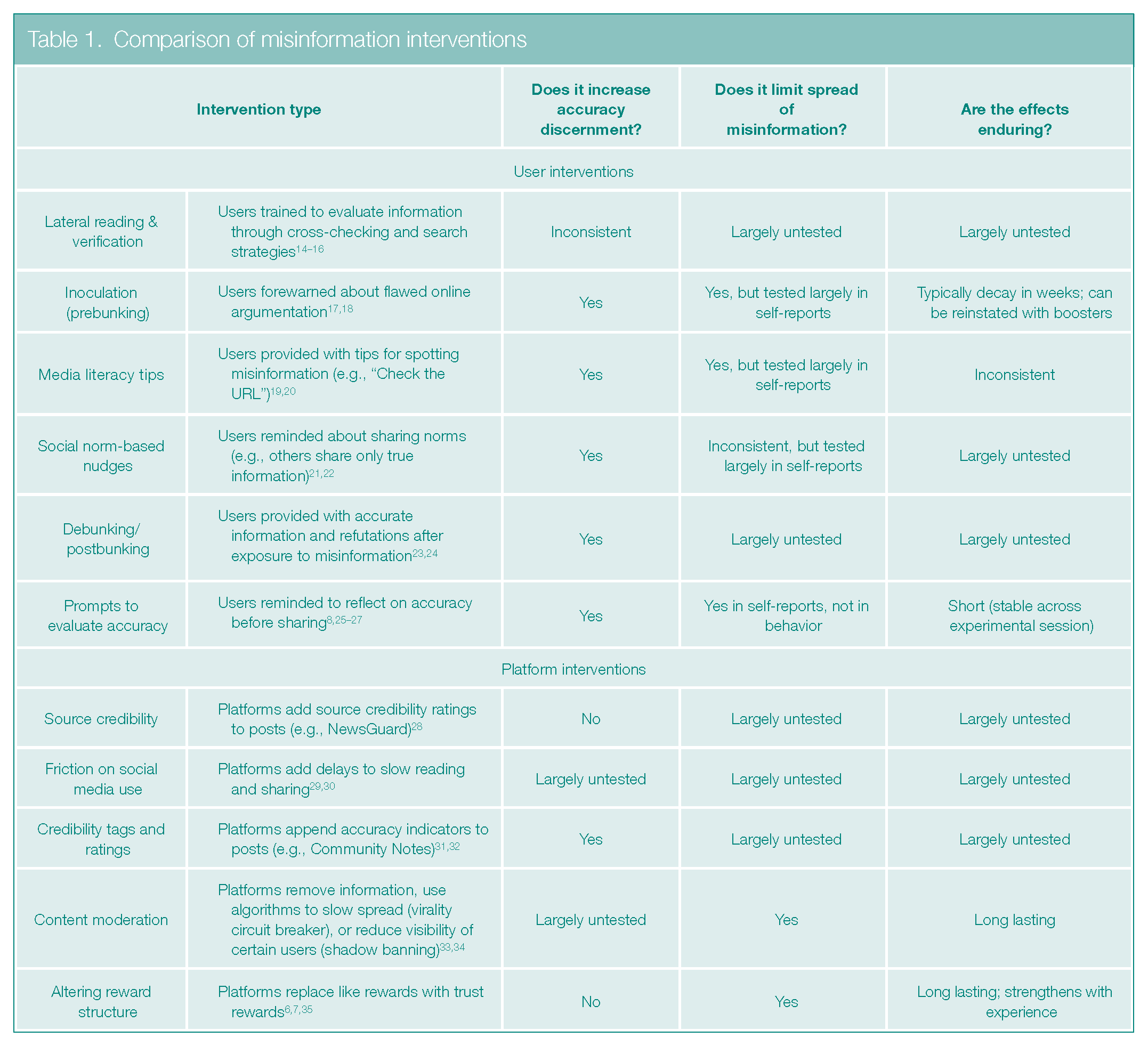

This conflict helps to explain why user-focused interventions often fail, as summarized in Table 1: Even when individuals recognize that interesting information is false, sharing it with others can result in positive feedback. Algorithms further amplify attention-grabbing posts that receive multiple likes, stoking a cycle in which the most engaging content, whether accurate or inaccurate, gains even more visibility. We therefore propose that meaningfully reducing misinformation requires reconfiguring the reward system itself.

Table 1. Comparison of misinformation interventions

Reward Structures on Social Media

Rewards on social media were created to draw users to a site, hold their attention, and keep them coming back. Social media sites, including MySpace and Live Spaces, that failed to maintain large numbers of intensive daily users were mostly unsuccessful. 36 Among currently successful sites such as Facebook, Twitter/X, and Instagram, the greater the number of regular users, the higher the advertising revenues. 36 With many frequent users, sites can attract more advertisers and successfully deliver personalized marketing to users. Having occasional, sporadic users does not provide the same financial benefits.

One way that social media fosters repeated and enthusiastic engagement is through rewards: likes, loves, shares, and so on. This feedback, which signals recognition from the community, serves as a powerful driver of user behavior. 37 Research suggests that receiving likes and comments boosts users’ happiness, self-esteem, and overall satisfaction with social media use. Likes provide instant social validation, reinforcing the perception that a post is important and worthy of attention. 38 When users share content that generates likes, shares, and comments, they experience a physiological reward response, activating the brain’s reward centers37,39 and boosting release of the reward-associated neurotransmitter dopamine. 40 These rewards are so important that teens and young adults often delete posts that do not generate sufficient positive reactions.41,42

The reward systems on these platforms create a cycle of posting or sharing and then receiving feedback. More rewarding feedback leads to greater subsequent use. Specifically, users tend to post more frequently and at shorter intervals after receiving larger numbers of likes on previous posts.43,44

What type of content generates high levels of engagement (likes, comments, and shares) on social media? We use the umbrella term interesting content to describe information that captures attention, provokes emotional responses, or surprises users with unexpected details. Not all of this content is positive. Research shows that high-arousal, negative content, including misinformation, moral outrage, outgroup animosity, and incivility, is particularly likely to spread widely online. 9–11,45,46 The algorithm-driven nature of social media platforms further amplifies this type of information, as algorithms promote posts that are highly engaging so they can reach even broader audiences. This boost results in a paradox of virality in that the content users engage with and share is not the accurate content they claim they want to see. 11

We argue that social media’s current reward structure plays a key role in elevating interesting content. As we explain in the next section, when engaging information gets rewarded, users learn to focus on the interest value of content, often to the detriment of its accuracy and quality.

Learning to Share Misinformation

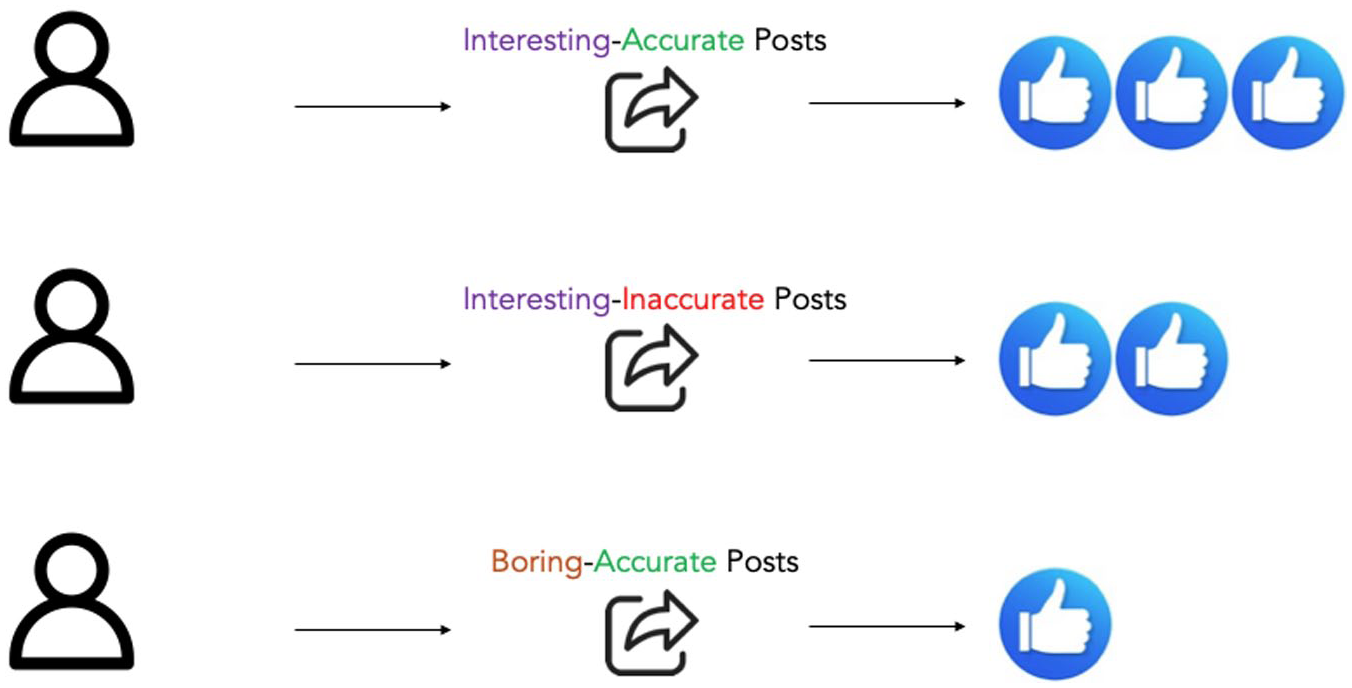

Sharing others’ posts is critical to the spread of misinformation: On Facebook, 38% of views of misinformation and 65% of views of inaccurate photos take place after a mere two shares. 47 Each reshare not only amplifies a specific piece of misinformation but also initiates a positive feedback loop that promotes sharing other misinformation. Because reshares generate rewards, they effectively educate sharers on the types of content that attract others. This reward system encourages people to share misinformation through reinforcement learning, similar to that demonstrated in B. F. Skinner’s studies of pigeons. 48 Just as pigeons learned which behaviors (such as pecking a button) would earn them food rewards, social media users learn which content will garner the social rewards of likes and comments. Although information that is both interesting and accurate will produce these rewards, users quickly discover that interesting but inaccurate content (misinformation) also generates rewards. Posts with two positive features (interesting + accurate) will typically yield more rewards than posts with a single positive feature (interesting + inaccurate), as shown in Figure 1. And all interesting posts typically get more rewards than content that is accurate but boring, such as simple statements of fact. This reward structure teaches users that the entertainment value of information on social media frequently outweighs its accuracy.

Figure 1. Social media reward structure

With repeated experience, existing reward feedback (post/share → get likes/loves/comments → post/share) trains users to continue posting and sharing the type of content that generated positive reactions in the past. In this feedback loop, information accuracy can become an afterthought. 7 This training explains why even well-intentioned users who claim to value accuracy may gradually start sharing more interesting but potentially less accurate content on social media. This link between interest-based rewards and misinformation has been largely overlooked in past research, given that most misinformation research ignores the interest value of content and focuses solely on its accuracy. 49

We have demonstrated that social media users learn from financial rewards as well as social likes.6,7 In our simulations of Facebook, some participants were rewarded for sharing interesting content regardless of its accuracy. 6 Others were rewarded for sharing accurate content regardless of whether it was interesting. Participants who received rewards for sharing interesting content prioritized sharing this type of material because it earned more rewards. At the same time, they also became less discriminating about content accuracy: While these participants increased their sharing of interesting and accurate information, they also shared more interesting misinformation. In contrast, participants rewarded for accuracy learned to prioritize sharing accurate content and did so whether the content was interesting or boring.
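The contrast between the two reward conditions can be illustrated with a toy learning model. The sketch below is not the model or analysis used in the studies cited above; it assumes a simple delta-rule learner, and the payoff values, learning rate, number of rounds, and all names (`reward`, `train`, `CONTENT_TYPES`) are hypothetical choices made for illustration only.

```python
from itertools import product

# Hypothetical content types, encoded as (interesting, accurate) pairs.
CONTENT_TYPES = list(product([True, False], repeat=2))

def reward(scheme, interesting, accurate):
    """Illustrative payoff: 1.0 when the rewarded feature is present, else 0.0."""
    if scheme == "interest":
        return 1.0 if interesting else 0.0
    if scheme == "accuracy":
        return 1.0 if accurate else 0.0
    raise ValueError(f"unknown scheme: {scheme}")

def train(scheme, rounds=20, learning_rate=0.2):
    """Learn a value for sharing each content type with a simple delta rule."""
    values = {ctype: 0.5 for ctype in CONTENT_TYPES}  # start indifferent
    for _ in range(rounds):
        for ctype in CONTENT_TYPES:
            interesting, accurate = ctype
            # Move the learned value a fraction of the way toward the reward.
            error = reward(scheme, interesting, accurate) - values[ctype]
            values[ctype] += learning_rate * error
    return values

interest_values = train("interest")
accuracy_values = train("accuracy")

for ctype in CONTENT_TYPES:
    print(ctype, round(interest_values[ctype], 3), round(accuracy_values[ctype], 3))
```

Under these assumptions, the learner rewarded for interest ends up valuing interesting misinformation as highly as interesting accurate content, whereas the learner rewarded for accuracy values accurate content whether it is interesting or boring.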

Current rewards on social media have negative effects in addition to spreading misinformation, such as fueling moral outrage. Twitter/X users posted more content expressing outrage after they had received positive social feedback for posting such material in the past. 35 Users expressed especially high outrage on days when they received more positive reactions than usual to their past outrage-filled posts. 50 Thus, social rewards shape what users post, and getting more rewards than expected—a positive reward prediction error—is an especially powerful driver of repeated behavior.
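A reward prediction error is the difference between the reward received and the reward expected. The following sketch shows one common way to compute it, assuming that the expectation is a running estimate updated by a fixed learning rate. The like counts, learning rate, and function name are hypothetical and are not taken from the cited studies.

```python
def prediction_errors(rewards, learning_rate=0.3, initial_expectation=0.0):
    """Return the prediction error (received minus expected) for each reward."""
    expectation = initial_expectation
    errors = []
    for received in rewards:
        error = received - expectation
        errors.append(error)
        # The expectation drifts toward what was actually received.
        expectation += learning_rate * error
    return errors

# Hypothetical like counts on five successive posts; the fourth does unusually well.
likes_per_post = [10, 10, 10, 25, 10]
errors = prediction_errors(likes_per_post, initial_expectation=10.0)
print(errors)
```

In this example, the fourth post produces a large positive prediction error because it earns more likes than expected. The fifth post earns the usual number of likes but now produces a negative error, because the expectation has risen.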

Frequency Makes Learning Stick

The more often users receive social rewards for their online behavior, the stronger the learning experience, and the more their behavior becomes shaped by others’ reactions. As users continue to frequently share, post, or give likes, these behaviors eventually become automatic. 36 That is, earlier learning based on engagement rewards not only drives responses but also forges habit associations in users’ memory when repeated often enough. Once these habits form, responses such as sharing are triggered automatically by context cues (e.g., posts expressing emotion) without much consideration of the consequences, including whether a post will spread falsehoods. Thus, habitual sharers display automated, learned responses rather than intended behaviors: They may spread misinformation without actually intending to deceive others, and they may express outrage without actually feeling outraged. 51

Direct evidence that sharing becomes habitual comes from studies showing that, after initial learning, misinformation sharing persists even without continued rewards. 7 In one of our studies, after participants learned to expect rewards for sharing misinformation, we removed this positive feedback. One might expect that these participants, who reported wanting to share accurate information, would then switch to doing just that. Yet, even in the absence of rewards, these participants continued to share more misinformation than others not initially trained to share it. Repeating a response even when outcomes change is a signature of habit formation. In other studies, sharing became more automatic as participants gained experience with the rewards: The more training they received, the faster they shared misinformation. 6

Repeated exposure to rewards on social media leads users to develop habitual behaviors beyond spreading misinformation. For example, users with a longer history of expressing moral outrage on social media continued to share posts with moral outrage even after others did not respond with positive reactions. 35 Infrequent users, in contrast, were more sensitive to rewards and tempered their subsequent posts when others reacted less positively.

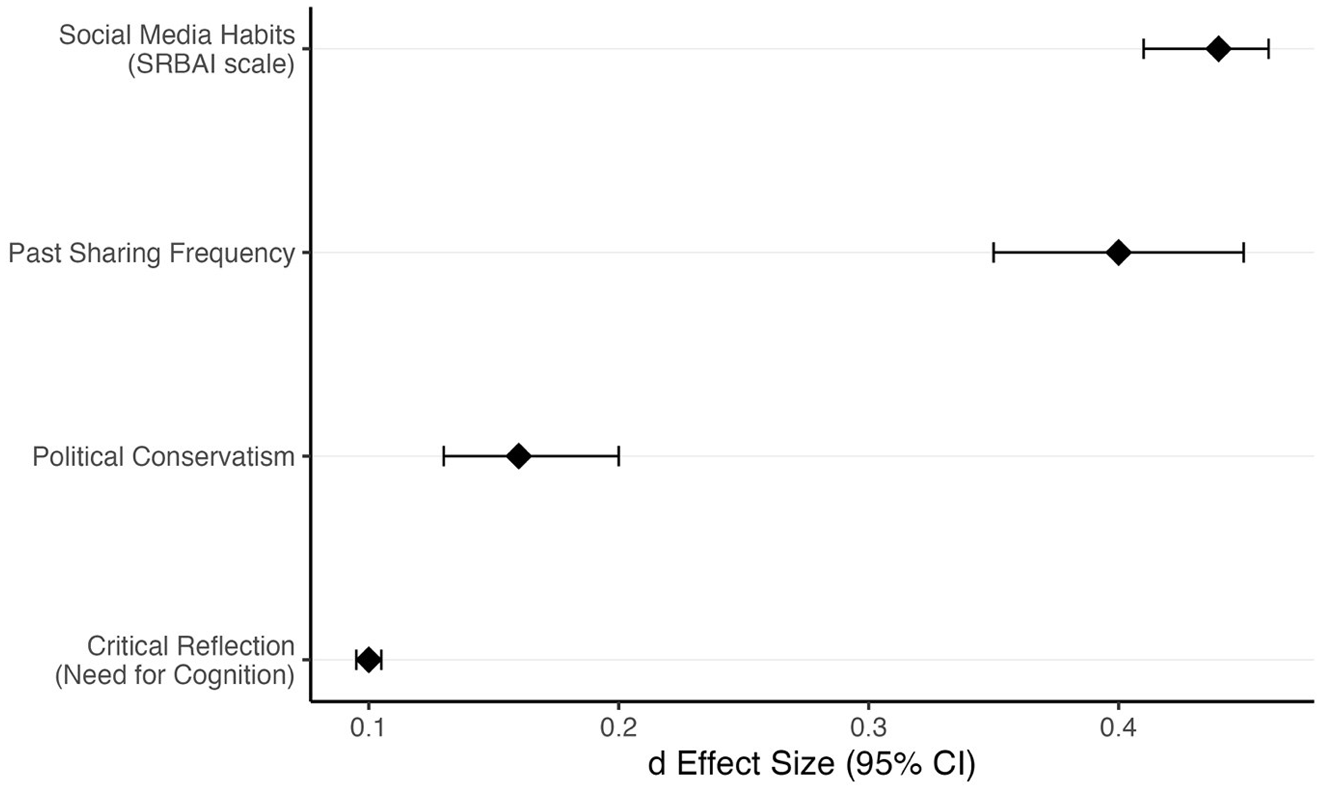

The habits people form from frequent, automatic sharing on social media are an especially strong driver of misinformation spread, even more so than users’ political beliefs or critical reasoning skills. 7 Although past research suggests that conservative (compared with liberal) users and those who are less analytically oriented are particularly prone to misinformation sharing,2,52 we found that these effects were small compared with users’ sharing habits (see Figure 2).

Figure 2. Factors influencing misinformation sharing

These findings could suggest that problematic sharing patterns are limited to perpetually heavy sharers, sometimes called superspreaders, who have developed entrenched habits over time. 55 However, our experimental evidence shows that these reward-driven behaviors can form remarkably quickly with repeated use, even in a single experimental session. Social rewards are powerful motivators in the highly structured social media context, and people rapidly learn to repeat rewarded behaviors. Although individuals use social media in different ways, behaviors that are repeatedly rewarded (posting, sharing, liking, commenting, and even scrolling) soon become habitual.

Understanding habitual sharing of misinformation is crucial because people’s sharing habits can undermine corrective interventions. Many past interventions to curb misinformation have missed this important point. 56 Once formed, habits become “sticky,” which could explain the limited impact of individual-level interventions aimed at highlighting accuracy goals, improving media literacy and critical thinking, or reducing political bias.3,57 These individual-focused interventions tend to have short-lived effects and require repeated prompts or “booster shots” to remain effective, given the conflicting pressures of platform incentives.17,56,58 To the extent that misinformation sharing is habitual, reducing such sharing requires new learning experiences to break the habit.59,60

Addressing Misinformation by Realigning Platform Rewards

By rewarding users for sharing interesting information, social media sites enable the spread of falsehoods and partial truths. But this system is not the only way social media platforms can reward engagement. One alternative would be to add a mechanism for recognizing and rewarding users who post and share accurate information. This approach should not only reward participation but also curb the propagation of misinformation.

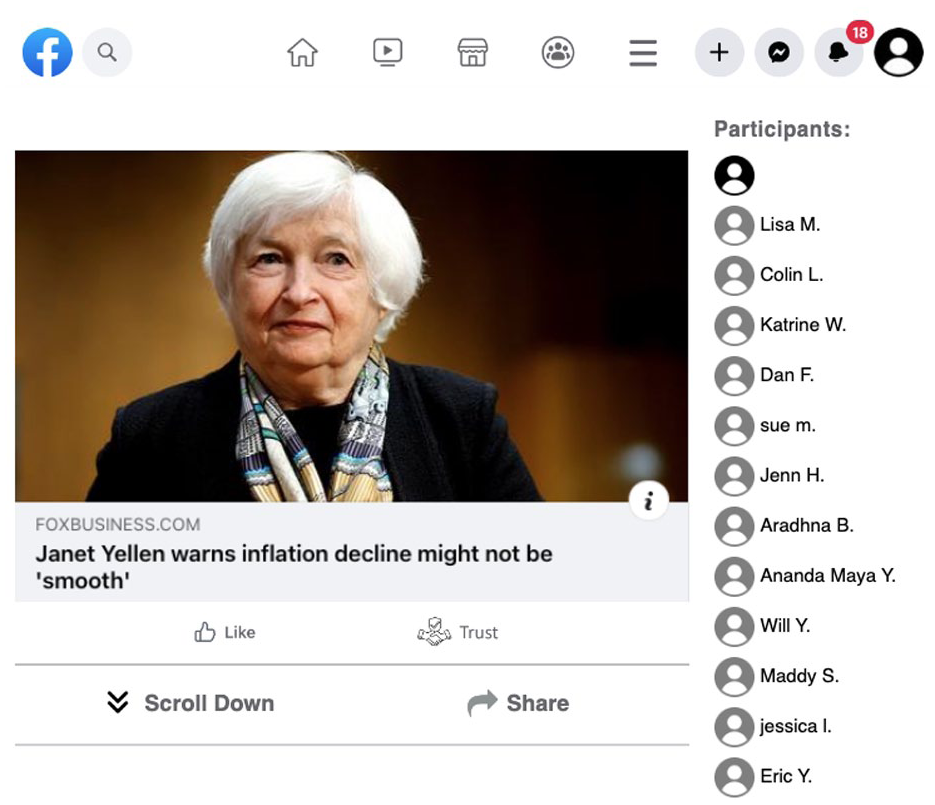

To examine the effects of changing rewards from interest to accuracy, we included a Trust button—similar to the current Like button—in our Facebook simulations. 6 Participants could then give and receive trusts for accurate content the same way they give and receive likes for interesting content.

Why trusts? Trust captures users’ judgment that information is credible, honest, unbiased, and reliably communicated. 61 Users often have a sense of whether content is trustworthy without extensively fact-checking it, drawing on accessible cues such as the source’s reputation, the post’s context, and whether others have endorsed it.62,63

When users evaluate posts on social media, trust reactions from others can serve as both a cognitive shortcut and a social signal. As a cognitive shortcut, trusts appended to a post allow other users to accept information without extensively verifying it, much like trusting a friend’s restaurant recommendation eliminates the need to research and read a number of reviews. Such cognitive shortcuts are crucial given the current information overload online. 64 As a social signal, trust feedback communicates to others that information can be believed. Information that receives high trust ratings via multiple Trust button clicks carries a social stamp of approval, indicating that it is widely perceived as credible.

Trust judgments are often made quickly and intuitively, aligning with the split-second decisions users make to engage with or scroll past content on social media.23,65 This rapid assessment makes trusts uniquely suitable for feedback: They fit the tempo of online behavior while capturing users’ intuitive sense of what is reliable.

Evidence That the Trust Button Works

Does adding a Trust button actually curb misinformation, as some have proposed? 49 Our research shows that it does by incentivizing accurate sharing behavior. 6 We first examined whether participants were more discerning of accuracy when clicking a Trust button as opposed to a Like button (see Figure 3 for an example of a post with its feedback buttons). We found that participants were more likely to give trusts to accurate posts than to inaccurate ones. The Trust button thus served as a marker of a post’s accuracy.

Figure 3. A Trust button allows users to provide feedback on perceived accuracy

Next, we examined whether people’s political views biased their use of the Trust button. Trust buttons won’t be helpful if they are simply another partisan signal, with users trusting content that aligns with their own political views regardless of its accuracy. In our study, however, truth overcame politics: Giving trusts did not substantially depend on the politics of the posts. 6 Although users tended to like content consistent with their own political views, they were less partisan when using the Trust button. Furthermore, they were 3 times more likely to trust accurate posts even when the information was inconsistent with their own politics. Trusts therefore reflected information reliability, not its likability.

Finally, we examined how receiving trust feedback shaped users’ behavior on social media. As we noted earlier, people who received likes increasingly shared interesting posts, even when inaccurate. Participants who received trusts, however, increasingly shared accurate posts—even those that were factual and boring—and they shared fewer inaccurate posts (misinformation), even when the posts were interesting. Thus, trust-based rewards promoted accuracy, even for boring content.

A special advantage of this intervention is that it gets stronger with experience, as people have more opportunities to learn. For example, participants in one experiment chose whether or not to share 16 or 80 posts with others. 6 Recipients of the posts gave trusts when participants shared accurate information. After receiving trust feedback on 80 posts, participants shared more accurate information and less misinformation than those who received feedback on only 16 posts. These findings suggest that trust feedback not only promotes discernment in the short term but can produce stronger effects as people continue to be rewarded for trustworthy content in the long run.

Trust as a Scalable & Timely Solution for Information Quality on Social Media

By providing nuanced, scalable, and socially grounded signals of information quality, adding a Trust button offers a superior alternative to other platform interventions, as shown in Table 1. Credibility tags, fact checks, and other ratings can confuse users, leaving them uncertain about a post’s accuracy. For example, Facebook, Instagram, and Threads recently added a Community Notes feature so users can provide context to posts. However, this feedback does not necessarily help interpret accuracy. 66 Even more troubling, using content moderation to remove false information from a social media site may be seen as a threat to freedom of speech. 67 In contrast, trust ratings explicitly acknowledge the subjective nature of information evaluation while still providing useful measures of how others judge content reliability. This distinction is particularly important for politically charged topics where objective fact-checking can be challenging.

The Trust button leverages the wisdom of crowds by aggregating judgments that effectively identify misinformation at scale. Research shows that crowd ratings strongly correlate with professional fact-checker assessments. 68 In many cases, aggregated crowd judgments more reliably assess accuracy than those of individual fact-checkers or institutional efforts such as Facebook’s Oversight Board. 69 Although the Community Notes feature also relies on collective judgments, the Trust button offers distinct advantages. Community Notes are only posted for consensual judgments. 70 In contrast, the Trust button enables immediate, individual feedback that aggregates into dynamic, scalable signals of reliability. This real-time, self-reinforcing mechanism allows accurate content to gain prominence through social validation, ultimately reshaping what content gets shared and amplified.
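As a rough illustration of how individual Trust clicks could aggregate into a post-level signal, the sketch below computes the share of viewers who trusted each post. It is a minimal example under stated assumptions, not a description of any platform's system or of the Community Notes algorithm: the data, the post identifiers, and the choice to use the number of viewers as the denominator are all hypothetical.

```python
from collections import defaultdict

def trust_rates(reactions):
    """Aggregate (post_id, trusted) pairs, one per viewer, into a trust rate per post."""
    counts = defaultdict(lambda: [0, 0])  # post_id -> [trust clicks, total viewers]
    for post_id, trusted in reactions:
        counts[post_id][0] += int(trusted)
        counts[post_id][1] += 1
    return {post_id: trusts / viewers for post_id, (trusts, viewers) in counts.items()}

# Hypothetical reactions from four viewers per post.
reactions = [
    ("post_a", True), ("post_a", True), ("post_a", True), ("post_a", False),
    ("post_b", False), ("post_b", True), ("post_b", False), ("post_b", False),
]
rates = trust_rates(reactions)
ranked = sorted(rates, key=rates.get, reverse=True)  # most trusted first
print(rates, ranked)
```

Each click updates the rate immediately, so the signal does not have to wait for raters to reach consensus. A real deployment would also need to handle posts with very few viewers, for which a raw proportion is unreliable.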

As another advantage, the Trust button builds on the existing reward architecture of social media platforms—likes, shares, and reactions—without requiring new content verification or labeling infrastructures. The Trust button does not change the structure of social feedback but instead alters its meaning. Whereas likes signal that content is entertaining or emotionally resonant, trusts signal that content is reliable. By introducing this distinction, platforms can leverage the trust reward’s power, allowing accurate but mundane information to receive appropriate recognition while preventing sensational misinformation from gaining credibility through viral spread.

The Trust button also equips users to navigate uncertainty about whether content is true or false. When individuals are unsure, cognitive biases such as judging repeated information as more accurate and thus more shareable often emerge.65,71 By aggregating judgments of trustworthiness from the broader community, the Trust button reduces this uncertainty and offers a real-time accuracy signal, ultimately curbing misinformation spread.

The rise of AI-generated misinformation makes it increasingly important for social media platforms to adopt mechanisms like Trust buttons that signal reliability. Creating interesting but false content with AI, including deepfakes, 72 is now fast and cheap. 73 Unless accuracy is actively incentivized, this shift threatens to overwhelm platforms with misinformation. The Trust button addresses this risk in three ways: First, it reduces the incentives to produce or share misinformation. If more people reward accurate content and avoid inaccurate content, the potential reach and impact of falsehoods diminish considerably. Second, trusts leverage collective human judgment to identify subtle errors in AI-generated falsehoods that individual fact-checkers or algorithms may miss. 74 Third, trusts create reputational incentives for honest contributors to share verified content. By restructuring incentives to value truth alongside interest, platforms can limit the spread of AI-generated falsehoods.

In addition, the Trust button addresses rising public concern about misinformation. Trusts offer policymakers a science-based intervention that technology companies can easily implement to foster a more transparent, safe, and trustworthy online environment. 75 Importantly, this solution helps platforms protect freedom of speech while also addressing users’ growing concerns about information accuracy. According to a recent Pew Research Center poll, 40% of Americans identify inaccuracy as the feature they most dislike about getting their news on social media, an increase of 9% since 2018. 76 Thus, using a Trust button to curb misinformation would align with public demand for healthier information ecosystems. At the same time, this intervention would help social media companies mitigate reputational risks by demonstrating their proactive commitment to information integrity.

Finally, social media managers and company executives may worry that introducing a Trust button will reduce user engagement. However, our findings suggest this intervention sustains engagement and even enhances it. Positive user experiences, especially those grounded in trustworthy content, may be key to long-term platform vitality. As recent analyses suggest, mainstream social media suffers from declining user satisfaction and engagement, likely driven in part by misinformation and polarization. 77 By realigning incentives toward accuracy rather than interest, platforms can cultivate both engagement and information quality, rather than compromising one for the other.

Reddit as a Case Study for Changing Reward Structures

Although Globig et al. first proposed the idea of a Trust button to address misinformation in 2023, 49 major social media platforms have yet to adopt it. This fact raises a fair question: If the Trust button works, and we now understand how it influences sharing, why hasn’t it been implemented?

We can only speculate on the reasons behind this reluctance. Until recently, political will has not been sufficient to create and enforce public policies for reducing misinformation on social media. In fact, politicians and the platforms themselves may actually benefit from social media structures that spread falsehoods and rile up their political base. 78 In addition, social media researchers have largely promoted individual-level solutions (again, see Table 1), which have demonstrated limited long-term success at combatting the current reward structures that promote misinformation on social media. As long as the major scientific outlets continue to favor individual-level approaches, social media companies will make little progress in controlling online misinformation.

As we noted above, the possibility that shifting buttons might decrease user engagement has not emerged in any of our experiments thus far. Granted, we have mainly tested the Trust button in controlled studies rather than large-scale platform rollouts. Additional testing in a variety of contexts is, of course, a crucial step in scaling any intervention.

In the meantime, Reddit offers a compelling example of a platform that has implemented novel rewards. Reddit’s upvote/downvote system allows users to evaluate content based on whether it is helpful, informative, or meaningful—not just entertaining. Accumulated karma points (derived from upvotes and downvotes) shape users’ reputations and access to community participation, a mechanism that could skew incentives toward contribution quality rather than potential virality. 79 While upvotes are not explicitly trust signals, they function as community-based indicators of value and relevance, closer to trust than to pure popularity.

This community-first architecture appears to be paying off. According to the Neely Social Media Index, 77 Reddit was the only major platform to show an increase in active users from 2023 to 2025; on an aggregated basis, Facebook, Instagram, TikTok, YouTube, and Snapchat all plateaued or declined. Over the same period, the percentage of users who reported having a negative experience on Reddit dropped by 39%, and concerns about misinformation on the site were significantly lower than for other platforms. Although Reddit still faces issues of polarization, misinformation appears to be less of a reason for negative experiences on the site.

The potential gains are monetary as well as experiential. In 2024, Reddit generated $1.3 billion in revenue, reached 91 million daily active users, and had a $10 billion pre-IPO valuation, its most financially successful year since 2012. 80 In sum, Reddit’s numbers imply that reward systems centered on content quality rather than entertainment value alone can scale, support user well-being, and create tangible economic value. Its performance offers a powerful, real-world proof of concept for platforms and policymakers considering implementing structural interventions like Trust buttons.

Conclusion

Social media platforms reward engagement over accuracy, a reward structure that helps to explain why even well-intentioned users spread misinformation. Although partisan actors and authoritarian regimes deliberately spread falsehoods,56,65,81 ordinary users with no intent to deceive propagate most misinformation.12,82

We propose a simple shift in reward structure that will curb the flow of user-generated misinformation and improve the quality of information on social media. Instead of the current rewards for interesting, emotional, and sensational information, we propose shifting rewards to recognizing accuracy by including a Trust button. As we showed in the present article, this easily implementable platform redesign allows users to meet their dual goals of sharing accurate information that is recognized for its worth while continuing to gain social support and connect with others online. Successful social media platforms that incorporate trust incentives in the future will be giving users what they want: ready access to information they can trust.

Footnotes

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.