Abstract

What entities deserve moral rights? Although this question has been of long-standing interest to ethics and social theory, it has resurfaced in a particularly pronounced way with artificial intelligence (AI). Using original survey data from a convenience sample and a large-scale random sample of Switzerland, we compare two explanations for the willingness to grant moral rights to AI. The ontological view refers to perceived human-like properties of AI, such as sentience (i.e., what AI is). The social impact view refers to the social-relational meaning of AI in terms of its impact on humans and trust (i.e., what AI does). The findings corroborate both views. Support for the general idea of AI rights and a political referendum granting basic rights to AI are positively correlated with the perceived ontological proximity of AI to humans, expected benefits of AI for society, and trust in AI developers. A right to life and physical integrity rests on perceived ontological properties only. Ontological distance of AI to simple computer programs, expected costs of AI, and trust in the state play a minor or no role. Sociological perspectives on an entity’s social-relational meaning are hence indispensable to explain the social construction of moral rights for AI.

Do you think artificial intelligence (AI) should be granted moral consideration or even moral rights? Perhaps you agree with the many philosophers and legal scholars who argue that current AI does not deserve a moral status similar to that of human beings (Birhane, van Dijk, and Pasquale 2024; Ladak 2024; Miernicki and Ng 2021; Müller 2021). After all, it’s just a tool! Yet there are many examples of societies showing moral consideration toward AI or autonomous technologies. A robot dog named Spot received a Buddhist funeral in Japan (Mays, Cummings, and Katz 2025). The animal rights organization People for the Ethical Treatment of Animals (PETA) condemned the maltreatment of HitchBOT, a human-like robot, in the United States (Darling 2015). The European Parliament, a United Nations commission, and social movements are debating legal personality for AI (de Graaf, Hindriks, and Hindriks 2021; Lima et al. 2020; Tamatea 2008). In recent surveys, around one third of respondents approved of the general idea of robot rights (Mantello et al. 2023; Mays et al. 2025; Spence, Edwards, and Edwards 2018).

Although these examples might seem puzzling, they remind us that the attributions of moral status and moral rights are not fixed. They vary historically and culturally. What is odd or unimaginable to some is natural and taken for granted to others (Spillman 2023). Think of human rights (Pennington 2011), rights for nonhuman animals (Munro 2012), or the legal personhood of corporations and, more recently, of rivers (Barkham 2021). Moral rights are, to a certain extent, socially constructed (Munro 2012; critically Müller 2021). The distribution of rights among various entities, from humans to collective actors to technological artifacts, is a fundamental element of social order.

The standard account in philosophical (for overviews, see Harris and Anthis 2021; Owe, Baum, and Coeckelbergh 2022), legal (Miernicki and Ng 2021), public (Tamatea 2008), and everyday discussions for the explanation of why AI should have rights refers to ontological properties, such as sentience, consciousness, feelings, or interests (Anthis and Paez 2021; DeGrazia and Millum 2021; Ladak 2024; Müller 2021). For example, only if AI has the capacity to feel pain is it a candidate for moral rights. However, people’s willingness to attribute moral rights might not only depend on what AI is, but also on what AI does to others and society. On a second account, which we call the social impact view, granting rights to AI depends on its societal impact instead of some emergent ontological property (Darling 2015; Schwitzgebel and Garza 2015; Owe et al. 2022). If AI has extrinsic value to a society, for example by improving living conditions, people are more willing to acknowledge AI as an object of moral consideration. Conversely, if AI entails uncontrollable risks, the idea of moral rights for AI becomes less convincing to a collective.

People could refer to these arguments when judging the feasibility of AI rights in their everyday practice. Yet empirical research on the attribution of moral rights to AI is still scarce (de Graaf et al. 2021; Lima et al. 2020; Mays et al. 2025; Nakado 2012; Spence et al. 2018). In this contribution, we develop and empirically test the ontological and social impact views as explanations for the willingness to grant moral rights to AI among people of the general population. We show in two studies that the willingness to grant moral rights to AI does not only depend on ontological properties, namely the perceived similarity of AI to human beings or simple computer programs, but also on its social impact, related to expected benefits, costs and risks. Going beyond the standard ontological view and the mainstream of psychological research (for a review, see Bonnefon, Rahwan, and Shariff 2024), the attribution of moral rights to AI is therefore explainable only by a sociological perspective on an entity’s relational meaning: its perceived social impact on others and embeddedness in social structures of trust.

We define AI as a machine or advanced computer system with the ability to learn and to independently perform tasks previously carried out by humans (Arkoudas and Bringsjord 2014). From a normative perspective, rights protect an entity’s moral or legal status on principled grounds (DeGrazia and Millum 2021; Pennington 2011). Once established, they cannot be set aside on a case-by-case basis for purely utilitarian reasons (Schwitzgebel and Garza 2015). Having moral status means that an entity is treated in a certain way for its own sake, usually referring to its well-being or personal integrity (Bostrom and Yudkowsky 2014; Küster, Swiderska, and Gunkel 2021). In the modern nation-state, some rights become formalized in the legal system, backed up by a democratic process (Miernicki and Ng 2021; Pennington 2011). Following these considerations, our empirical analysis focuses on the general idea of AI rights, the right to life and physical integrity, and the support of a political referendum to grant basic rights to AI. Societies negotiate the conditions under which various entities deserve the moral protection of rights. They are in the midst of doing so for AI.

Our analysis relies on original survey data from a convenience sample of mostly Swiss but also German and Austrian respondents (n = 502) and a large-scale stratified random sample of the general population of Switzerland (n = 2,703). A focus on Switzerland is warranted to study AI rights. Switzerland is characterized by a high level of digitalization (with an index value of 93 in a digital competitiveness ranking, similar to the United States at 91 but higher than Germany and Austria at 75 and 73, respectively; Institute for Management Development 2024). Additionally, it represents a highly individualistic country with a strong emphasis on abstract principles, formal norms, and universalistic values (reaching 79 on an individualism scale, similar to Germany at 79 and Austria at 77 and higher than the United States at 60; Hofstede, Hofstede, and Minkov 2010). As a case in point, it is one of the rare countries that had a vote on basic rights for primates. The conditions are thus especially favorable to generate enough variation in the willingness to grant AI rights.

Explaining the support of AI rights has several payoffs. First, (moral) actorhood is a central explanandum in the new sociology of morality, sociology of technology, and social theory in general (Abend, Posselt, and Schenk 2025). Recent sociological approaches have stressed macro-historical perspectives, for example on category change or social movement work, to explain human (Spillman 2023) or animal rights (Munro 2012). We extend such efforts by providing an explicit and testable micro-level theory based on a social actor’s beliefs about ontological properties (ontological view) and expected benefits, costs, and trust (social impact view). Second, AI provides an especially interesting fringe case to study moral rights in the making (Abend et al. 2025). Laypeople and experts situate AI between deterministic technologies and human actors (Glikson and Woolley 2020; Shank et al. 2021). Understanding what tips the scales to one side or the other bears implications for other cases, such as animal or environmental rights (Munro 2012). Finally, studying moral actorhood provides an important desideratum in the emerging sociology of AI, which has most often focused on the acceptance and social consequences of this new technology (Dahlin 2024; Schenk, Müller, and Keiser 2024).

Our findings also matter practically. Be it in philosophy or cultural imaginations, many hold that moral consideration for AI will become an urgent ethical, legal, and political issue, even within the span of a human lifetime (Harris and Anthis 2021; Ladak 2024; Owe et al. 2022). Just like philosophers or legal scholars, sociologists should be prepared. Yet even for critics thinking that AI rights are nothing but a “first-world problem” (cf. Miernicki and Ng 2021), knowledge on people’s ordinary understandings of such rights is indispensable. Public perceptions crucially affect choices on norms, policies, and trajectories for AI development (Caviola 2025).

We begin by outlining the ontological (“What AI Is”) and the social impact (“What AI Does”) views. Taking these philosophical and social scientific debates as a starting point, we develop two explanations for a person’s willingness to grant moral rights to AI, allowing us to derive empirically testable hypotheses. We then present the data, methods, and results from two separate studies. We conclude the article with a general discussion of the major findings and the implications for AI ethics and policy.

When Does AI Warrant Moral Rights?

What AI Is: The Standard View on Ontological Properties

According to the ontological account, moral rights for AI depend on the entity’s ontological properties: what AI is. In philosophical and everyday discussions alike, sentience is the most important of these properties (for an overview, see Harris and Anthis 2021). It denotes the capacity to have pleasant or unpleasant experiences (DeGrazia and Millum 2021). This includes the ability to have feelings and emotions, such as hedonistic well-being or suffering (Bostrom and Yudkowsky 2014). Sentience is tightly connected to consciousness and interests. Any sentient being is also conscious, while sentience is sufficient for having interests (e.g., an interest in avoiding torment). An entity deserves moral consideration because others take its interests into account (Bostrom and Yudkowsky 2014). So, for example, human infants or primates could deserve moral consideration because they can feel pleasure and pain, whereas a stone would not (Ladak 2024). It follows that the willingness to attribute moral rights to AI depends on the perceived capacity of AI to be sentient.

Although inconclusive, empirical research rather supports this argument. In an experiment, Lee et al. (2019) found that participants granted higher moral standing to virtual agents if they were presented with a disposition to feel. According to studies by Nijssen et al. (2019), Mays et al. (2025), and de Graaf et al. (2021), perceptions of affective and emotional capacity in AI correlate with intrinsic valuation and the willingness to grant rights. In contrast, in an experimental study by Küster et al. (2021), participants deemed a robot dog more worthy of protection and compassion than a humanoid robot, although they considered the latter to be more conscious.

Another important property relates to an entity’s fundamental, unique essence: the substrate that an entity is made of. Although philosophers have criticized this argument, stating that substrate only matters in so far as it constitutes a difference in other, ethically relevant attributes, such as sentience (Bostrom and Yudkowsky 2014; Ladak 2024; Schwitzgebel and Garza 2015), previous research suggests that laypeople link moral considerations to essential properties. The most famous one is the difference between organic matter and silicon, or human brains and computer chips, or natural and artificial life. As the argument goes, even if we could build an extremely complex neural network, AI could never be considered alive or having consciousness as humans do. In a study by de Graaf et al. (2021), the belief that certain traits (e.g., intelligence, emotion, morality) are unique to humans was negatively correlated with the willingness to grant robot rights. A second important essential difference between AI and humans in western Christian thought concerns the human soul. According to a discourse analysis of Christian online posts on AI, there is the idea that only entities possessing a soul warrant moral rights, which is allegedly not true for AI (Tamatea 2008).

Discussions have also referred to AI’s appearance. Psychologists and sociologists emphasize anthropomorphic cues to explain moral reactions to AI (de Graaf et al. 2021; Schenk et al. 2024). In philosophy, Danaher (2020) argued that an AI should be granted moral status if its observable behavior is similar to other entities possessing such status. Hence, the more human-like AI becomes, the stronger the inclination to attribute moral rights to it. However, empirical support is mixed. Although participants in one experiment showed higher empathic concern toward more human-like robots (Riek et al. 2009), human-likeness made no difference for the willingness to sacrifice a robot in a bomb-defusal task (Tenhundfeld et al. 2021). These studies focus on situational cues instead of a person’s ontological understanding of AI. Given the inconsistent and overall weak effects of such cues (Bonnefon et al. 2024), it may be that humanizing perceptions rest more on a person’s beliefs on the ontological boundaries between AI and humans than situational affordances (Waytz, Cacioppo, and Epley 2010).

In other words, people may perceive the ontological properties of AI differently depending on their subjective understanding of this new technology, founded in turn on cultural categories and imaginaries of AI (Sartori and Theodorou 2022; Schenk et al. 2024; Schwitzgebel and Garza 2015; Spillman 2023). The willingness to grant moral rights to AI could thus be a function of the extent to which a person upholds or diminishes ontological boundaries between AI, humans, and other technological artifacts in terms of sentience, essence, and appearance (Waytz et al. 2010; for nonhuman animals, see Munro 2012). We can theorize an ontological space with humans on one end and simple computer programs on the other (MacDorman and Entezari 2015). People may then lump AI together with simple computer programs or with human beings. The smaller the perceived ontological distance of AI to humans and the larger the distance to simple computer programs, the more willing a person is to grant moral rights to AI. Two hypotheses for the ontological account follow, along with separate subhypotheses for each of the three types of support for AI rights included in the empirical analysis (see the section “Measurements” under “Empirical Analysis”). The willingness to (a) grant a right to life and physical integrity, (b) support a political referendum for basic rights, and (c) support the general idea of moral rights for AI increases with smaller ontological distance between AI and human beings (hypotheses 1a–1c) and increases with larger ontological distance between AI and simple computer programs (hypotheses 2a–2c).

What AI Does: The Social Impact View on Societal Benefits, Costs, and Trust

According to the social impact view, moral rights for AI depend on its impact on human beings and society. AI yields uncertain future benefits and costs, encompassing economic concerns, such as better living standards or causing unemployment, and social concerns, such as improving society as a whole or the loss of control (Dahlin 2024). As the argument goes, with larger expected benefits for human beings, lower expected costs, and higher levels of trust, people should be more willing to grant moral rights to AI. Hence, moral considerations are not only tied to the entity’s intrinsic properties. Moral status depends on AI’s ability to operate in society and to fulfill certain societal functions—on what AI does to us (Wang and Krumhuber 2018). The social impact view moves beyond a narrow psychological understanding of AI rights, limited to inner mental states and human-like essences (Schwitzgebel and Garza 2015). Benefits, costs, and trust are relational properties, contingent on the perceptions by social actors. AI rights must be explained by a sociological perspective on an entity’s relational meaning.

Although the ontological view is dominant in public and ethical discussions, various scholars have proposed ideas along the line of the social impact view. For example, Schwitzgebel and Garza (2015) postulated that moral consideration should encompass AI’s social properties next to ontological ones. Coeckelbergh (2010) argued that AI’s moral status emerges in a social ecology including humans and artificial agents. Similarly, Gunkel (2023) deconstructed ontological boundaries between things and humans, arguing that AI’s moral situation does not depend on what it is but on how it relates to us. In the legal domain, Darling (2015) contended that anthropomorphization is warranted if it leads to positive consequences for a collective. Notwithstanding the differences between these authors, they share a common assumption that AI rights do not solely depend on the entity’s ontological properties. Rather, they rest on the right sort of relation between AI, human beings, and society.

When social actors perceive technological systems of AI as beneficial for themselves or a collective, they have an interest in the protection of these systems, not because AI has intrinsic moral value, but because it advances their own interests. Consequently, this view does not presuppose any assumptions on the ontological properties of AI, such as sentience (Darling 2015). AI receives indirect moral consideration derived from the moral status of human beings (see Müller 2021). For instance, people might feel strongly about the maltreatment of an intelligent robot dog, not necessarily because they attribute an interest in avoiding suffering to the technological artifact, but because it is a child’s most cherished toy. Rights are understood not as an intrinsic entitlement but as an instrumental mechanism to protect the extrinsic value AI has for others (Miernicki and Ng 2021).

This is not something peculiar to AI. Similar lines of reasoning apply to other entities. People support environmental rights because they want to protect environmental resources valuable to them and future generations (Soyez 2012). Or they consider works of art as objects warranting moral consideration because they represent cultural goods high in symbolic value to a community (Beckert, Rössel, and Schenk 2017). As these examples indicate, derived moral status on the basis of the extrinsic value of an object might turn quickly into proper moral status on the basis of its intrinsic value. When an entity has special importance to people’s lives, they start to value these entities for their own sake, such as the environment, art, or possibly AI (Anthis and Paez 2021; Owe et al. 2022).

We must note an important counterargument. Granting rights to AI also limits the freedom to use it as a tool. Akin to discussions on labor rights (Wright 2010), the right to have free time or not to be terminated (de Graaf et al. 2021) limits the opportunities to extract economic value from AI or to use it in dangerous situations (Tenhundfeld et al. 2021). If this line of reasoning were correct, people perceiving AI as an economically beneficial tool could be opposed to the idea of AI rights. Ultimately, it is an empirical question whether expected benefits and costs promote or undermine AI rights.

Up to this point, there is very little research on this. Consistent with the argument that perceived benefits increase moral consideration for AI, the intention to harm a robot with an electric shock was lower when it was valuable for fulfilling certain social functions in an experiment by Wang and Krumhuber (2018). In another experiment, a functional framing stressing economic value induced larger donations for the repair of a robot than an anthropomorphic framing (Onnasch and Roesler 2019). Previous research also found that respondents with favorable attitudes toward robots were more likely to support a petition for robot rights (Spence et al. 2018). Yet there is also conflicting evidence. Respondents worried about AI being a threat to humans were more likely to support AI rights in a survey by Mays et al. (2025). While the scarce research is hence inconclusive, it rather suggests that perceived benefits promote the willingness to grant moral rights to AI.

Even if AI rights depend on expected benefits and costs, the current and future societal impact of AI is radically uncertain (Sartori and Theodorou 2022). Predictions range widely from utopian to dystopian imaginaries. Given such uncertainty, social agents rely on trust to form a judgment. Trust is commonly defined as the belief that a trustee is capable and committed to producing an outcome in the interest of the truster under conditions of risk (Robbins 2016). When it comes to the functioning of technologies, developers and the state play a vital role. The former have an obligation to develop and implement reliable, beneficial, and ethical technologies. The latter has an obligation to regulate new technologies, guarantee their safety, and enforce laws (Matsuzaki and Lindemann 2016). Consequently, trust in developers and the state might ease AI’s perceived risks. Although trust could be a critical factor in how people evaluate AI’s moral standing, it has been largely ignored in normative and empirical research. In one study, Awad et al. (2018) found that the legitimacy of moral decisions made by AI varies with a country’s quality of formal institutions, hinting at a connection between trust in governmental structures and perceptions of AI’s morality.

To summarize, from the social impact view, the attribution of moral status is seen through the lens of its uncertain societal impact: the relations between AI and people rather than the object’s ontological properties. Hence, the willingness to (a) grant a right to life and physical integrity, (b) support a political referendum for basic rights, and (c) support the general idea of moral rights for AI increases with expected benefits of AI (hypotheses 3a–3c), decreases with expected costs of AI (hypotheses 4a–4c), increases with trust in AI developers (hypotheses 5a–5c), and increases with trust in the state (hypotheses 6a–6c).

Empirical Analysis

Data Collection Study 1

In the first study, we collected data with a standardized online survey from May to August 2023. One of the goals of the first study was to develop scales for key theoretical constructs with a smaller convenience sample. We employed two approaches to survey administration. First, we distributed the survey among students of the University of Lucerne, situated in the German-speaking part of Switzerland. Students are a highly relevant population to study AI rights. They are early adopters of new technologies (Graf-Vlachy, Buhtz, and König 2018) and are politically more engaged (Dahlum and Wig 2021). We sent an e-mail invitation with a link to students from all faculties. Participation was incentivized with a lottery. Four hundred forty students participated in the survey, corresponding to a response rate of 12 percent. Considering the lower response rates of online surveys and the impossibility of sending reminders (a university regulation limits research-related e-mails to students), this is acceptable. Second, we administered the survey on Prolific, a crowdsourcing platform with a demographically diverse pool of respondents. Methodological research shows that data from Prolific exhibit strong measurement quality and population validity, especially when controlling for sociodemographics (Weinberg, Freese, and McElhattan 2014). Only people with German as their first language who were living in Switzerland, Germany, or Austria were eligible to participate. We used a quota sample by gender and student status of 150 participants. The additional data collected on Prolific allowed us to reach the nonstudent population.

Taken together, the respondents represent a nonrandom convenience sample of the Swiss, German, and Austrian populations. At 75 percent, however, people living in Switzerland make up the bulk of the sample. Women are overrepresented at 61 percent (37 percent men and 2 percent other) compared with the population (about 51 percent women). With an average of 30 years of age, 16 years of schooling, and a monthly income of about 4,680 Swiss francs, the respondents are younger, better educated, and economically slightly worse off than the population (about 43 years of age, 12 years of schooling, income of 5,300 Swiss francs). Although the sample is characteristic of the student population, nearly one fifth (17 percent) of the respondents do not have student status, providing enough variability to control for this background factor.

The title of the survey was “New Technologies—New Responsibility?” for the first wave of data collection and “Social Consequences of New Technologies” for the second, avoiding reference to AI. The questionnaire was in German. At the beginning, participants were asked for informed consent. We provided a short definition of the term artificial intelligence (in line with the definition in the article’s introduction). To reduce sequential effects, we randomized the order of the item batteries and the order of items within the batteries. To improve the efficiency of the data collection, we used a missing-by-design approach (Enders 2010). Each respondent received a subset of the entire questionnaire at random. The resulting missing values are estimable by multiple imputation procedures without bias. For the subsequent analysis, we only included respondents who were administered questions on AI rights, resulting in a net sample of 502 cases. We used 40 imputations for the estimation process, corresponding to the highest proportion of missing values. To check the validity of the procedure, we inspected the distributions of the variables in each imputed dataset. No anomalies were detected. According to a post hoc power analysis, we have sufficient power (1 − β = 0.8) to detect fairly small effects of f² = 0.045 at the 5 percent level in the regression models.
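The pooling step that multiple imputation implies can be illustrated with a short example. The article does not state how estimates were combined across the imputed datasets, so the following is only a minimal Python sketch that assumes Rubin's rules, the conventional choice. The function name `pool_rubin` and all numbers are hypothetical and not taken from the study.

```python
from math import sqrt
from statistics import mean, variance


def pool_rubin(estimates, std_errors):
    """Pool one regression coefficient across m imputed datasets.

    Assumption: Rubin's rules are used; the article does not specify this.
    estimates  -- the coefficient estimated in each imputed dataset
    std_errors -- the matching standard errors
    Returns the pooled estimate and its pooled standard error.
    """
    m = len(estimates)
    if m < 2 or m != len(std_errors):
        raise ValueError("need at least two imputations and matching lengths")
    pooled = mean(estimates)                      # pooled point estimate
    within = mean(se ** 2 for se in std_errors)   # average sampling variance
    between = variance(estimates)                 # variance across imputations
    total = within + (1 + 1 / m) * between        # total variance
    return pooled, sqrt(total)


# Hypothetical coefficients from three imputed datasets (illustration only).
estimate, se = pool_rubin([0.10, 0.12, 0.14], [0.05, 0.05, 0.05])
print(f"pooled estimate = {estimate:.3f}, pooled SE = {se:.3f}")
```

The between-imputation term is what makes the pooled standard error larger than the average within-dataset standard error: uncertainty about the missing values is carried into the final estimate.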

Data Collection Study 2

The goal of the second study was to extend the analysis with a large random sample of the general population. We used a stratified random sample from the Sampling Frame of the Federal Statistical Office in Switzerland. The population includes all Swiss residents who are 18 years or older. The title of the survey was “New Technologies—New Responsibility?” We used dual-mode administration with respondents being able to choose between an online (83 percent) or a pen-and-paper version (17 percent). In contrast to previous studies in this research area, relying on combinations of online and convenience samples of special populations, the data collection for study 2 was considerably less prone to systematic sampling bias, covering the general Swiss population.

Survey administration began in October 2023 and was completed in February 2024. The questionnaire was available in German, French, or Italian. We incentivized participation with a small gift and followed up with two reminders after initial contact. Participants gave their informed consent at the beginning of the survey. In total, 2,703 people responded, corresponding to an adjusted response rate of 25 percent, satisfactory for a survey on a rather niche topic. The distributions of gender (48 percent women, 51 percent men, 0.5 percent other), age (mean = 52 years), and language region (German, 57 percent; French, 26 percent; Italian, 17 percent) follow the population closely. Mean net income per month is lower compared with the population (5,046 vs. 6,700 Swiss francs, respectively), and highly educated people are overrepresented (54 percent vs. 45 percent with college-level education or higher). We restricted the analysis to cases with a valid response on the dependent variable, which yields 2,335 valid cases. To handle missing values on the remaining variables, we used multiple imputation (Enders 2010). Nineteen imputations were used for the estimation process, corresponding to the highest proportion of missing values, which is for income. An inspection of the distributions of the variables in each imputed dataset showed no anomalies. A post hoc power analysis shows that we have sufficient power (1 − β = 0.8) to detect very small effects of f² = 0.02 at the 5 percent level in the regression models.
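To give a sense of scale for the two detectable effect sizes, the following Python sketch converts f² into a share of explained variance. It assumes that f² is Cohen's effect size for regression, defined as R² / (1 − R²); the article does not spell out the definition, and the helper names are hypothetical.

```python
def f2_to_r2(f2):
    """Convert Cohen's f^2 into the equivalent share of explained variance."""
    if f2 < 0:
        raise ValueError("f^2 cannot be negative")
    return f2 / (1 + f2)


def r2_to_f2(r2):
    """Inverse conversion: explained variance share back to Cohen's f^2."""
    if not 0 <= r2 < 1:
        raise ValueError("R^2 must lie in [0, 1)")
    return r2 / (1 - r2)


# Detectable effect sizes reported for the two studies.
for label, f2 in (("study 1", 0.045), ("study 2", 0.02)):
    print(f"{label}: f2 = {f2} -> R2 = {f2_to_r2(f2):.3f}")
# prints:
# study 1: f2 = 0.045 -> R2 = 0.043
# study 2: f2 = 0.02 -> R2 = 0.020
```

Under this reading, study 1 can detect effects that account for roughly 4 percent of the variance, and study 2 effects that account for roughly 2 percent.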

Measurements

We use three dependent variables to measure the willingness to grant moral rights to AI, covering various facets of the philosophical, legal, and public discussion (Darling 2015; Miernicki and Ng 2021; Pennington 2011). We opted for three different dependent variables to cover a broad terrain in light of the small amount of empirical research. The first measure deployed in study 1 concerns the right to life and physical integrity for AI. Previous studies found that this type of right receives comparatively more support (Lima et al. 2020) and loads most strongly on an overarching factor representing the willingness to grant moral rights to intelligent robots (de Graaf et al. 2021; Mays et al. 2025). In two questions, we asked participants whether AI should be granted the right to life and whether AI should be granted the right not to be punished or treated cruelly (see Table 1 for item wordings and scales). We combined the responses into an index. The second measure deployed in study 1 takes up the idea that moral rights could become institutionalized in a democratic process. With a question similar to Spence et al. (2018), we asked participants to what extent they would be willing to support a political referendum granting basic rights to AI. In study 2, we used a more direct measure for the willingness to grant moral rights to AI. We asked participants to what extent they are of the opinion that various examples should have moral rights, with AI being one of them. This measure does not refer to a particular right but to the general idea of moral rights for AI.

Table 1. Distribution of the Dependent Measures.

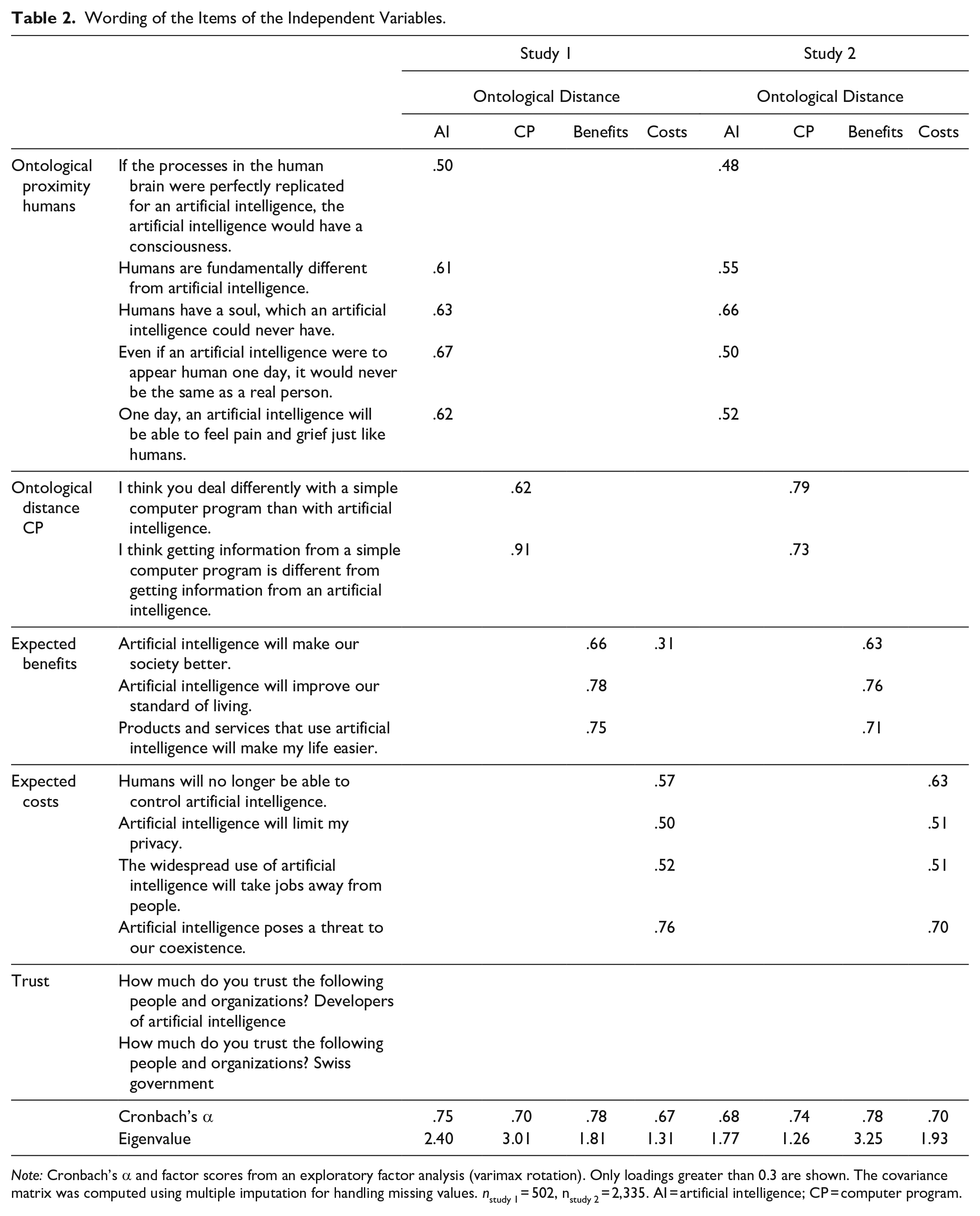

We used the same items to measure our main independent variables in both studies. Turning first to the ontological account, we used two scales to measure perceived ontological distance of AI in relation to humans (five items) and in relation to simple computer programs (two items). These scales were derived from previous research (MacDorman and Entezari 2015) and adapted to our research question (see Table 2 for the wording of all items). They refer to sentience, essence, and appearance, as the following item illustrates: “Even if an artificial intelligence were to appear human one day, it would never be the same as a real person.” For the computer program, we focus on the perception of fundamental technical aspects, as respondents are comparing two technological artifacts. To operationalize the social impact view, we developed measures for the respondents’ perception of expected benefits (three items) and costs (four items) of AI. A sample item for the benefits is “Artificial intelligence will make our society better.” Separating benefits and costs is important because individuals can hold ambiguous attitudes toward artificial agents (Dang and Liu 2021). Finally, we measured trust in developers and the state (i.e., the Swiss government) with one item each. All responses were recorded on five-point scales ranging from “do not agree at all” to “fully agree.”

Table 2. Wording of the Items of the Independent Variables.

Note: Cronbach’s α and factor scores from an exploratory factor analysis (varimax rotation). Only loadings greater than 0.3 are shown. The covariance matrix was computed using multiple imputation for handling missing values. n (study 1) = 502, n (study 2) = 2,335. AI = artificial intelligence; CP = computer program.
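As an illustration of the reliability coefficient reported in the note, Cronbach’s α for a scale of k items can be computed from the item variances and the variance of the summed score. The following Python sketch is not the authors’ code (the analyses were run in R). The function name `cronbach_alpha` and the response values are hypothetical, and the sketch assumes complete cases rather than the multiple imputation used for Table 2.

```python
from statistics import variance


def cronbach_alpha(items):
    """Cronbach's alpha for a list of items.

    `items` holds one list per item, each with one response per respondent.
    Assumes complete cases (no missing values).
    """
    k = len(items)
    if k < 2:
        raise ValueError("a scale needs at least two items")
    n = len(items[0])
    if any(len(col) != n for col in items):
        raise ValueError("all items need the same number of respondents")
    # Summed scale score for each respondent
    totals = [sum(responses) for responses in zip(*items)]
    # alpha = k / (k - 1) * (1 - sum of item variances / variance of total score)
    item_variance_sum = sum(variance(col) for col in items)
    return k / (k - 1) * (1 - item_variance_sum / variance(totals))


# Hypothetical five-point responses of five respondents to a three-item scale
benefit_items = [
    [1, 2, 3, 4, 5],
    [2, 2, 3, 4, 4],
    [1, 3, 3, 5, 5],
]
print(round(cronbach_alpha(benefit_items), 2))
```

Values closer to 1 indicate that the items vary together, which is the sense in which the scales are described as reliable.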

An exploratory factor analysis with the independent variables for ontological properties, benefits, and costs confirms the discriminant validity of these scales in both studies (see Table 2). Across both studies, the reliability of the scales is acceptable to very good, with Cronbach’s α ranging from 0.67 to 0.78. For the analysis, we employ ordinary least squares regressions, as all of our dependent variables are measured on metric or quasi-metric scales with five points or more. Because the distributions of the dependent variables are skewed, we performed a logarithmic transformation prior to the analysis. 3 None of the data have been weighted. All models in study 1 include gender, age, income, years of schooling, household size, country, size of municipality, student status, and a validated scale to measure an individual’s tendency to respond in a socially desirable manner (three items, Cronbach’s α = 0.62; Kemper et al. 2014) as control variables. In study 2, we are able to include additional controls, such as interest in new technologies (four items, Cronbach’s α = 0.87), an index for the experience with AI technologies (such as ChatGPT), political orientation, various sociodemographic variables (income, education, language region, etc.), and the social desirability scale (three items, Cronbach’s α = 0.58). All calculations were performed using R version 4.3.0.
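The estimation strategy described above (a logarithmic transformation of the dependent variable, followed by ordinary least squares with standardized coefficients) can be sketched as follows. This is a minimal Python illustration under stated assumptions, not the authors’ R code: the exact form of the transformation is not given in the text, so a plain natural logarithm of strictly positive scale values is assumed, and all function names and data are hypothetical.

```python
import math
from statistics import mean, stdev


def zscore(values):
    """Center and scale a variable to mean 0 and standard deviation 1."""
    m, s = mean(values), stdev(values)
    return [(v - m) / s for v in values]


def solve(a, b):
    """Solve the linear system a x = b by Gaussian elimination with pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("predictors are perfectly collinear")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        rest = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - rest) / m[i][i]
    return x


def standardized_ols(y, predictors):
    """Standardized OLS coefficients of log(y) on the given predictors.

    Assumption: y is strictly positive (e.g., a scale starting at 1).
    """
    if any(v <= 0 for v in y):
        raise ValueError("log transformation requires positive values")
    y_z = zscore([math.log(v) for v in y])
    x_z = [zscore(col) for col in predictors]
    k = len(x_z)
    # Normal equations X'X b = X'y; standardized variables are centered,
    # so the intercept is zero and can be omitted.
    xtx = [[sum(p * q for p, q in zip(x_z[i], x_z[j])) for j in range(k)]
           for i in range(k)]
    xty = [sum(p * q for p, q in zip(x_z[i], y_z)) for i in range(k)]
    return solve(xtx, xty)


# Hypothetical data: a skewed five-point outcome and two predictor scales
support = [1, 1, 2, 1, 3, 2, 5, 4]
proximity = [1.2, 1.0, 2.4, 1.8, 3.0, 2.2, 4.6, 3.8]
benefits = [2.0, 3.3, 2.7, 1.3, 3.0, 4.0, 4.3, 3.7]
print(standardized_ols(support, [proximity, benefits]))
```

Standardizing all variables puts the coefficients on a common scale, which is what allows predictors measured in different units to be compared within a model.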

Results

Looking first at the distributions of the dependent measures (Table 1), we find considerable support for the right not to be punished or treated cruelly in study 1. Forty-five percent of the participants are at least partly willing to grant this right to AI. In contrast, respondents are clearly less supportive when it comes to the right to life. Just 22 percent of the respondents at least partly agree with this question. The picture is similar for a referendum granting basic rights to AI. About every fourth respondent is at least partly supportive. Agreement seems somewhat lower than in previous studies (Mays et al. 2025; Spence et al. 2018). As students are overrepresented in study 1 and the willingness to attribute moral rights is inversely related to education (see model 9), these figures might underestimate the tendency to grant such rights to AI in the general population. Yet in study 2, with a sample of the Swiss population, we obtain a similar result for the general idea of moral rights. Thirty percent of the respondents at least partly agree that AI should be granted such rights (Mantello et al. 2023). All in all, then, the majority of the respondents in our studies are skeptical of AI rights. Still, there is substantial variation when it comes to the willingness to grant moral rights to AI. In the next step, we assess to what extent the ontological and social impact views are able to explain this variation.

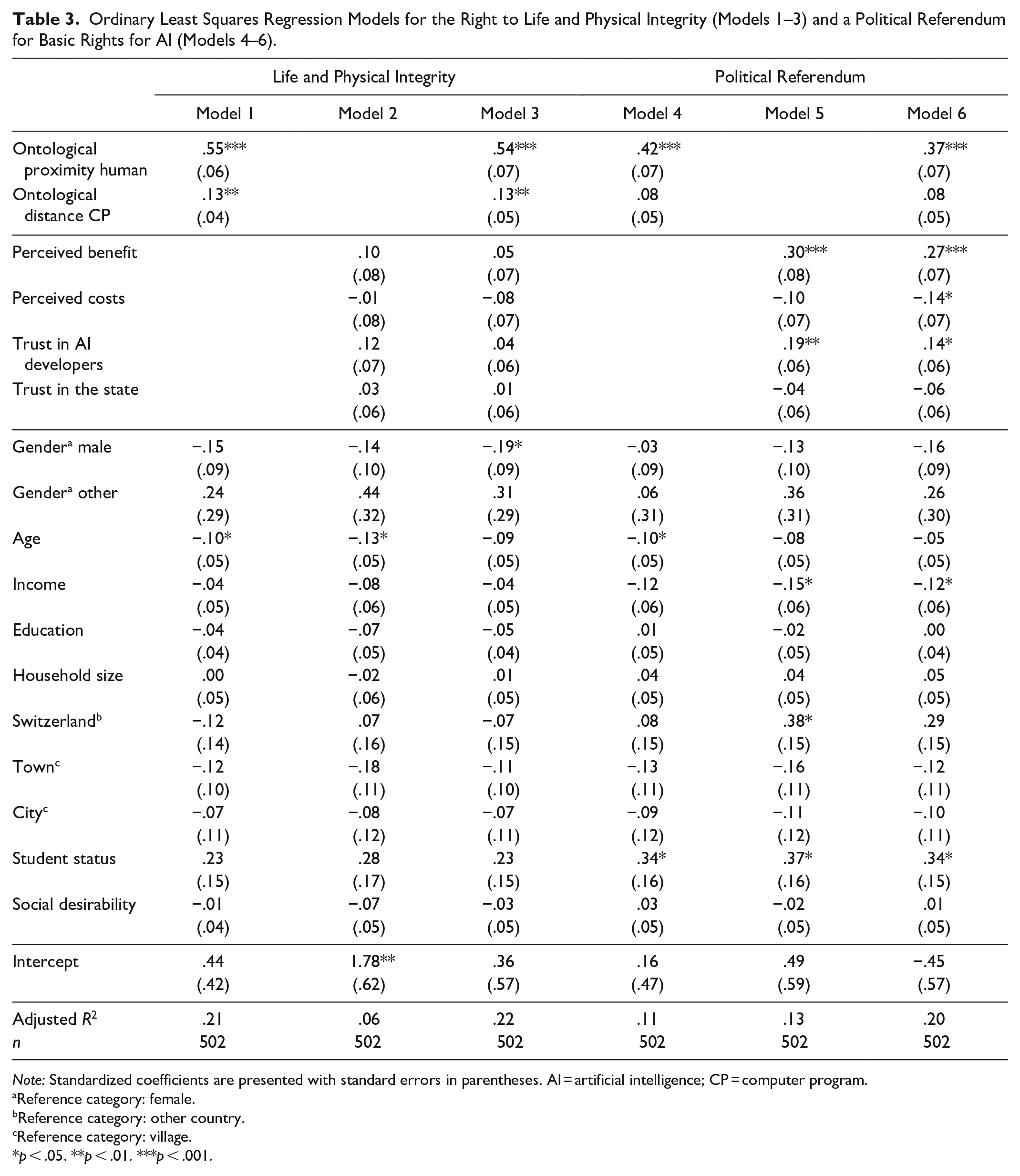

The index for the right to life and physical integrity (i.e., not to be punished or treated cruelly) is the dependent variable for the first three regressions (models 1–3 in Table 3). 4 In model 1, which includes only the predictors of the ontological account besides controls, we find that the ontological proximity of AI to humans and the ontological distance of AI to simple computer programs are both highly significant correlates (de Graaf et al. 2021; Lee et al. 2019; Mays et al. 2025; Nijssen et al. 2019). The more similar to humans and the more dissimilar to simple computer programs respondents perceive AI to be, the stronger the willingness to grant this type of right to AI. The explanatory power of the model is good, with an adjusted R² value of 21 percent. Model 2 regresses the dependent variable on the predictors from the social impact account and controls. Contrary to expectations, none of the independent variables correlate at a statistically significant level with the willingness to grant a right to life and physical integrity. Neither expected benefits and costs nor trust in developers and the state are related to it. Model 3 combines both accounts. The results are unchanged. Hence, in terms of the right to life and physical integrity, the data fully support the ontological view (corroborating hypotheses 1a and 2a) but not the social impact view (rejecting hypotheses 3a, 4a, 5a, and 6a).

Table 3. Ordinary Least Squares Regression Models for the Right to Life and Physical Integrity (Models 1–3) and a Political Referendum for Basic Rights for AI (Models 4–6).

Note: Standardized coefficients are presented with standard errors in parentheses. AI = artificial intelligence; CP = computer program.

Reference category: female.

Reference category: other country.

Reference category: village.

*p < .05. **p < .01. ***p < .001.

Next, we turn to the support for a political referendum granting basic rights to AI (models 4–6 in Table 3). Here, only the perceived ontological proximity of AI to human beings reaches a conventional level of statistical significance in model 4. The effect is in the expected direction. We are hence able to confirm hypothesis 1b but not hypothesis 2b. At 11 percent, the explained variance is lower than for the right to life and physical integrity. In model 5, we also find evidence for the social impact view. The expected benefit of AI for individuals and society is a highly significant covariate (Spence et al. 2018; Wang and Krumhuber 2018). The more respondents believe that AI will improve society and living standards, making life easier, the more they are inclined to support a referendum for AI rights. Trust in AI developers also predicts support. The more people trust developers, the more supportive they are of a referendum for AI rights. Perceived future costs and trust in the state yield no statistically significant effects in model 5. At 13 percent, the explained variance of the model for the social impact account is similar to that of the ontological account.

Model 6 combines both explanations. All previously significant effects are robust. Additionally, the expected costs of AI are significant at the 5 percent level when controlling for the ontological variables. As hypothesized, the higher the expected costs in terms of unemployment, loss of control, privacy issues, and a threat to coexistence, the less people are inclined to politically support AI rights. The substantial increase in explained variance from model 4 or 5 to model 6 indicates that the two explanations are complementary. For example, including expected benefits, costs, and trust in developers nearly doubles the variance explained compared with a purely ontological explanation. This corroborates hypotheses 3b, 4b, and 5b but not hypothesis 6b on state trust. Overall, this is striking evidence for the social impact view in the case of a referendum granting basic rights to AI.
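The model comparisons in this section rest on explained variance, reported as adjusted R², which corrects the raw R² for the number of predictors so that a model is not rewarded merely for containing more variables. A minimal Python sketch of the standard formula follows; the function name `adjusted_r2` and the input values are hypothetical and do not reproduce the figures in Table 3.

```python
def adjusted_r2(r2, n, k):
    """Adjusted R-squared for a model with k predictors and n observations.

    Standard formula: 1 - (1 - R^2) * (n - 1) / (n - k - 1).
    """
    if n - k - 1 <= 0:
        raise ValueError("need more observations than predictors plus one")
    return 1 - (1 - r2) * (n - 1) / (n - k - 1)


# Hypothetical comparison: a smaller model versus a combined model
smaller = adjusted_r2(r2=0.14, n=400, k=12)
combined = adjusted_r2(r2=0.25, n=400, k=16)
print(round(smaller, 3), round(combined, 3), round(combined / smaller, 2))
```

Because the penalty grows with the number of predictors, an increase in adjusted R² after combining both sets of variables indicates that the added predictors explain variance beyond what their sheer number would account for.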

Finally, we turn to our measure for the general idea of moral rights for AI in study 2 (models 7–9 in Table 4). We find again that perceived ontological similarity of AI to humans, perceived ontological distance of AI to simple computer programs, expected benefits, and trust in developers of AI are highly significant covariates, whether each explanation is analyzed separately (models 7 and 8) or both are combined (model 9). This confirms hypotheses 1c, 2c, 3c, and 5c. Expected costs are not significantly correlated with the willingness to grant moral rights to AI. The same applies to trust in the state. The data are inconsistent with hypotheses 4c and 6c. Both views fare equally well in terms of explanatory power. Combining both explanations, we observe a substantial increase in adjusted R² by a factor of 1.5. The social impact view clearly extends the explanatory power of the ontological view, and vice versa. Note also that models 7 to 9 include additional controls, representing important alternative predictors for the willingness to grant AI rights (Harris and Anthis 2021; Spence et al. 2018). However, once we take into account the variables from the ontological and social impact views, neither technology interest, nor experience with AI technologies, nor political orientation is significant (model 9). 5

Table 4. Ordinary Least Squares Regression Models for the General Idea of AI Rights (Models 7–9).

Note: Standardized coefficients are presented with standard errors in parentheses. AI = artificial intelligence; CP = computer program.

Reference category: female.

Reference category: lower education.

Reference category: village.

Reference category: German-speaking region.

Reference category: Christian denomination.

*p < .05. **p < .01. ***p < .001.

Discussing briefly the remaining controls, we find that women, people with lower education and from the Italian-speaking part of Switzerland are more inclined toward the general idea of AI rights (model 9). Women also exhibit a tendency to support a right to life and physical integrity (model 3). Respondents with student status and lower income are more supportive of a political referendum (model 6). Age, urbanization, country of residence, and religious denomination never reach statistical significance. Neither does an individual’s tendency to respond in a socially desirable manner.

Discussion and Conclusion

In two studies with a convenience sample and a stratified random sample of the Swiss population, we found convincing evidence that the willingness to grant moral rights to AI follows not only from its perceived ontological properties but also from its expected impact on society. Moral consideration is grounded in the right sort of relations between AI and human beings. Only by including a sociological perspective on AI’s relational meaning can we fully understand why social actors are willing to grant moral rights to AI. The support of AI rights depends not only on what AI is but also on what AI does.

Although the results were obtained in two separate studies with different populations, we can give an overall description of them. Our analysis shows that the expected benefits of AI and trust in AI developers are strongly correlated with the support of the idea of moral rights for AI and a political referendum to grant basic rights. This confirms the results of previous studies explaining that economic and social value (Onnasch and Roesler 2019; Wang and Krumhuber 2018) or favorable attitudes (Spence et al. 2018) foster moral consideration toward AI (in contrast to Mays et al. 2025). Differing from expectations, perceived costs and trust in the state play a subordinate or no role, respectively. For these two types of support for AI rights, the explanatory power of the social impact account is just as high as for the ontological account. Moral rights for AI are granted on the basis of how it contributes to human interests and societal structures (Darling 2015; Schwitzgebel and Garza 2015; Owe et al. 2022). Figuratively speaking, AI has to earn its rights.

For the standard ontological account, we found that the ontological proximity of AI to humans is a very strong predictor of all types of rights included in both studies, with ontological distance to simple computer programs being relevant for a subset only. Corroborating experimental (Darling 2015; Riek et al. 2009), survey (de Graaf et al. 2021; Lee et al. 2019; Mays et al. 2025; Nijssen et al. 2019), and qualitative research (Tamatea 2008), the willingness to grant moral rights to AI is a function of the extent to which people uphold or diminish ontological boundaries between AI and other entities (in contrast to Tenhundfeld et al. 2021; Küster et al. 2021). From a sociological point of view, these boundaries are culturally formed, varying socially and historically (Spillman 2023). Our analysis shows that the social impact view complements rather than replaces the ontological account. Both tap into different reasons for granting moral rights to AI.

However, the social impact view only holds for the general idea of AI rights and the political referendum, but not for the right to life and physical integrity. Unfortunately, with our data, we are not able to fully explain why this is so. We can exclude certain candidates. It is not due to the political character of the referendum (Spence et al. 2018), as the findings are similar for the general idea of AI rights. One might also argue that some rights are merely a reflection of AI’s external value for humans (cf. Müller 2021). But then we should not find strong effects of ontological proximity in all cases. Finally, the difference might also be due to the particular populations or varying sample sizes of studies 1 and 2. Although the statistical evidence rather speaks against this interpretation, 6 it cannot be fully ruled out. To shed further light on this desideratum, future studies should measure a systematic selection of AI rights for a large sample of the general population to find the exact scope conditions for the social impact explanation.

A second limitation to our study is its cross-sectional design, making causal claims difficult. The support of AI rights might be the cause and not the consequence of certain beliefs. We encourage future research to employ panel designs to disentangle causal effects. As a third limitation, we have focused on the Swiss national context. Future studies should assess the cross-cultural generalizability of the findings. Finally, it was our goal to develop a sociological explanation for the willingness to attribute moral rights to AI among the general population, drawing on philosophical debates. Neither have we discussed all nuances of these philosophical accounts, nor have we exhausted the philosophical arguments (e.g., Birhane et al. 2024; Danaher 2020; Floridi 2013). We encourage future work to delve further into ethical inquiries to improve sociological explanations for the attribution of AI rights.

Although too often neglected, the views of the general population should inform philosophical and political debates (Bonnefon et al. 2024). In our samples, most people are opposed to the idea of AI rights. The burden of proof hence lies with the advocates. Guided by our findings, they could stress the social benefits of AI and foster trust in developers, instead of arguing for machine sentience, which might only be realized in the distant future (DeGrazia and Millum 2021; Ladak 2024). Moreover, ontological beliefs are difficult to change by short-term interventions. Experience in other fields shows, in contrast, that perceived benefits and trust can be strengthened by information and certification schemes (Arnold and Hasse 2015). For critics, warning us not to anthropomorphize technologies might not be enough (Birhane et al. 2024; Gibert and Martin 2022; Sparrow 2021). Rather, they should combat utopian narratives of AI’s future impact and blind trust in its developers.

With recent technological advances, the discussion on AI rights has intensified. Moral rights for AI are a contentious issue. As philosophers and legal scholars are pondering normative implications, we are in need today of sociological investigations into people’s ordinary understandings of AI rights, how they relate to cultural concepts and imaginaries, and how they affect moral judgments and institutions. Yet the issue goes beyond AI. The extension of moral rights is a fundamental problem of any social order. Explaining when and how social groups and entities, from minorities to nonhuman animals to human-like technologies, become an object of moral consideration is crucial to our understanding of moral life in contemporary society.

Supplemental Material

Supplemental material, sj-docx-1-srd-10.1177_23780231251357753 for Earning Their Rights! Ontological Properties, Social Impact, and Moral Rights for Artificial Intelligence by Patrick Schenk and Vanessa A. Müller in Socius

Footnotes

Acknowledgements

We are indebted to Gabriel Abend and Luca Keiser for their invaluable contributions to our research project. We are especially grateful to Lukas Portmann for his support in conducting the survey at the University of Lucerne and Dorothee Müggler for her endless efforts in making our text beautiful. Special thanks to René for our chats on the topic (he’s a real person, not a chatbot, by the way). Additional thanks to Emmanuel Baierlé as well as to the editor of Socius and two anonymous reviewers for their valuable comments. An earlier version of this article was presented at the annual meeting of the Swiss Sociological Association in Muttenz, Switzerland.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This study was funded by the Swiss National Science Foundation (grant 100017_200750/1).

Supplemental Material

Supplemental material for this article is available online.

Notes

Author Biographies

References
