Abstract

Mediatization of science communication facilitates broader access to scientific knowledge. However, in the post-truth era, it makes the task of critical evaluation of science-based information more challenging than ever for laypeople. The authors conducted a factorial survey as a part of a nationally representative Russian survey panel to evaluate the influence of institutional information cues on laypersons’ plausibility judgments. The findings indicate that funding information and institutional affiliation influence how the general public perceives the plausibility of scientific results. The data obtained also show that research results from various scientific disciplines are perceived differently in terms of their plausibility.

The public understanding of science (PUS) depends on the ways research results are communicated to laypeople. Paradoxically, the mediatization of science communication in the digital era (Väliverronen 2021), while facilitating access to multiple science media sources and attracting public attention, makes the task of critically evaluating contradictory science-based knowledge and distinguishing reliable scientific information from post-truth claims more challenging for people than ever (Sinatra and Lombardi 2020; West and Bergstrom 2021). Although the term post-truth remains rather fuzzy and lacks a generally accepted definition, it has distinctive features, among which some authors (e.g., Braun 2019) highlight, among others, the appeal to emotions, the rise of new media, and the attack on critical thinking and science. With the growing epistemological focus on the social construction of alternative facts in the post-truth era (Fischer 2019), issues such as misinformation and the erosion of the distinction between truth and falsehood in political discourse (Collins 2023), as well as in the broader media environment (Iyengar and Massey 2019), have garnered significant scholarly attention worldwide (Sismondo 2017). This trend has renewed interest in public trust in science and highlighted the need for effective ways to communicate scientific research to a general audience (Rousseau 2021). The ease with which not only credible scientific information but also misinformation and invalid knowledge claims can be disseminated has increased exponentially in the era of widespread Internet and mobile technologies, and misinformation sometimes spreads even faster than the truth (Vosoughi, Roy, and Aral 2018).
As a result, it has become increasingly challenging for the general public to assess the credibility of supposedly scientific information and competing knowledge claims, a phenomenon often described as an “information arms race” resulting in declining trust in science, and an increasingly dissociated science communications landscape (Lewandowsky, Ecker, and Cook 2017; McIntyre 2018; Sinatra and Lombardi 2020).

The currently proposed interventions aim to increase the credibility of science by raising the standards of open science, recruiting and training more science writers (Kuehne and Olden 2015; West and Bergstrom 2021), and by developing new approaches to teaching the wider public to critically evaluate the plausibility of competing knowledge claims (National Research Council 2012; Sinatra and Lombardi 2020; Valladares 2021). However, the efficiency of these measures critically depends on deepening our understanding of the cognitive processes underlying ordinary plausibility judgments routinely produced by nonprofessionals.

In line with cognitive science, we define plausibility evaluation as a preliminary judgment of potential truthfulness related to knowledge claims suggested in a text serving as a criterion for its validity (Lombardi et al. 2018) or, in a slightly different formulation, as an assessment of the “acceptability or likelihood of a situation or a sentence describing it” (Matsuki et al. 2011:926). Consequently, if we want to investigate how laypeople come to accept scientific claims as valid using all available prior knowledge and judgments on a large variety of properties, we should explore potential information cues influencing their perceptions of the plausibility of incoming scientific information they encounter.

Such judgments are made both by scientists and by nonscientists, who intuitively or reflectively assess how well certain premises support a conclusion while simultaneously relating the incoming information to the context-relevant actualized world knowledge they already possess and assessing its “goodness of fit” (Connell and Keane 2006; Lombardi, Seyranian, and Sinatra 2014; Richter and Maier 2017; Sinatra and Lombardi 2020; Wertgen and Richter 2023). This makes plausibility dependent both on the perspective of the person making the judgment, which includes their knowledge, dispositions, and beliefs, and on the content of the proposition. This internal and external coherence assessment includes, for example, knowledge about the structure of the argument as well as conceptual and content knowledge (Von der Mühlen et al. 2016). Plausibility is related, but not limited, to argumentation, source trustworthiness evaluation, and reading comprehension, while being associated with other criteria for judging incoming information, such as coherence (Connell and Keane 2006), credibility (Lombardi et al. 2014), and comprehensibility (Richter and Maier 2017). Existing research supports the claim that social information cues (such as funding acknowledgments, disciplinary field, and organizational affiliation) can influence research impact and peer evaluations among professionals (e.g., Bromham, Dinnage, and Xia 2016; Rigby 2013; Zhao 2010). Several studies also highlight the role of contextual information in shaping lay assessments of the credibility of scientific findings and trust in science (e.g., Lupia 2013; Nisbet, Cooper, and Garrett 2015).
However, empirical studies that systematically evaluate, through experimental designs, the main effects and interactions of specific social information cues on a broader audience of science communications remain limited, focusing primarily on comparing public and private research (Critchley 2008; Critchley, Bruce, and Farrugia 2013) and on the institutional sources of the claims (e.g., government, business, or research institutions) (Carlisle et al. 2010; Sanz-Menéndez and Cruz-Castro 2019).

In this study, we leverage a novel empirical dataset from a factorial survey conducted as part of the 2020 wave of a nationally representative panel survey. This approach allows us to quantitatively assess how institutional information cues—such as funding size, institutional affiliation type, and disciplinary research field—shape laypeople’s plausibility judgments of research findings.

Social Information Cues in Judging Plausibility

In many situations, laypeople tend to orient their mostly intuitive plausibility evaluations toward “extrascientific” contextual cues embedded in cognitive heuristics in order to save their limited cognitive resources and time and compensate for what some authors consider as the inevitable ontological differences between scientific and everyday thinking (Bromme and Gierth 2021; Taddicken and Krämer 2021). Particularly, in a situation in which the layperson cannot decide which of the conflicting scientific claims to accept as true on the basis of the available stock of relevant knowledge, they may resort to social information cues associated with reputational signals, source credibility features, etc. For instance, while evaluating the credibility of an online information source, people usually check the Web site’s “about” section or Web address (e.g., preferring .org over .com). When deciding whom to trust in a professional field, such as medicine or food technology, and estimating the epistemic trustworthiness of a science-based information source, individuals consider not only observable markers of expertise but also the integrity and benevolence of their doctors, personal nutritionists, bakers, and so on (Hendriks, Kienhues, and Bromme 2016; Sinatra and Lombardi 2020).

Expectation states theory (EST) could serve as another important resource for the sociological interpretation of such reputational signaling in different social contexts, as it explains how hierarchies of evaluation, influence, and prestige can form quickly on the basis not only of specific task-relevant perceived abilities and characteristics of individual group members but also of information cues indicating diffuse status characteristics that affect expectations for overall performance in a large variety of situations (Berger, Wagner, and Webster 2014; Correll and Ridgeway 2003). Such status-based expectations often reflect deeply ingrained cultural beliefs or easily observable cognitive shortcuts for high status rather than specific information derived from cognitively demanding systematic observations related to genuine differences in efficiency, competence, or abilities. Some biasing effects from such status-based expectational shortcuts were demonstrated in a significant number of studies of such diffuse status characteristics as gender or race (Correll and Ridgeway 2003; Ridgeway 2006). Generally, effects of diffuse status characteristics used as cognitive heuristics can be observed for a wide range of social evaluation and influence formation processes beyond the formation of group status hierarchies, including those related to modern sciences as “reputational work organizations” (Whitley 2000).

In academic contexts, EST can relate directly to how disciplines attain different “status levels” on the basis of diffuse status characteristics, such as funding, affiliation with prestigious institutions, and the type of research they conduct. These diffuse characteristics conveyed through corresponding informational cues—such as details about low versus high funding, existing connections with prestigious research organizations, or indicators of “hard” quantitative versus “soft” qualitative research—often shape expectations regarding competence and performance. These expectations, in turn, influence both formal and informal rankings within the academic ecosystem, as well as expert and public assessments of the plausibility of research results. Thus, EST provides a useful sociological framework for understanding how institutional cues, conceptualized as diffuse status characteristics, influence research performance expectations and, by extension, perceptions of the scientific plausibility of research findings. In line with this theoretical framework, there is some convincing evidence that contextual information about the disciplinary affiliation of the research, grant support received, and the prestige of a respected academic institution may appear among such proxies for status, reputation, and credibility. In the present study we evaluate the effects of institutional information cues such as funding size, type of institutional affiliation, and disciplinary research field on the plausibility judgments of laypeople.

The grant funding size may be indicative of the quality of the research in the eyes of both academic experts and nonacademic experts, with increases in federal or internal institutional funding of academic research outranking funding from private and local government sources (Blume-Kohout, Kumar, and Sood 2009; Connolly 1997). Research funding is also frequently considered as a measure of research quality (Ma, Mondragón, and Latora 2015; Murray et al. 2016; Rosenbloom et al. 2015), which is based on elaborated and careful expert assessment (Geuna and Martin 2003). The relationship between these two variables, however, is not necessarily linear (Bloch, Schneider, and Sinkjær 2016). There is a strain of research demonstrating that scientific publications that mention grant funding attract more attention from peers and are cited more frequently compared with those without such information cues (Rigby 2013; Yan, Wu, and Song 2018; Zhao 2010). However, it is still unclear whether information on grant support size influences laypeople’s plausibility judgments about research findings. Although it is considered one of the most influential factors in expert evaluation, literature on funding effects on nonprofessional perceptions is quite limited and inconclusive. A recent study (Sheremet and Deviatko 2022) revealed no difference in how students perceive scientific findings depending on the research funding mentioned. However, students constitute a special group of nonprofessionals and may differ from the rest of the general public. Thus, although the role of research funding in expert evaluation of scientific research has been established, existing evidence of possible direct effects from information about research funding on lay plausibility evaluations needs further examination on representative samples. 
Hence, we expected that research findings obtained by scientists with larger financial support would be perceived as more plausible than the same findings obtained by scientists with smaller support.

In a similar vein, information indicative of the relative prestige of an institution doing specific research can be considered among potentially relevant cues for the quality of the research. Prestigious universities and research organizations may be considered more credible sources of high-quality research findings in many real-world contexts. The sociology of science abounds with empirical evidence of cumulative advantage due to a good reputation accrued not only for individual scientists but also for the research organizations and universities they represent. Scientists from major universities gain more recognition compared with their colleagues from smaller ones (Crane 1965), and early career researchers with PhDs granted by prestigious universities publish more successfully (Laurance et al. 2013). The same tendency is evident in peer review, where grant applications, manuscripts, and conference abstracts are more likely to be recognized and accepted when affiliated with prestigious institutions (Bakanic, McPhail, and Simon 1987; Gillespie, Chubin, and Kurzon 1985; Peters and Ceci 1982; Ross et al. 2006). Although there is a vast and convincing body of research on the correlation between institutional prestige and expert evaluation of science, the current literature on the impact of such factors on laypersons’ perceptions is rather insufficient. One study (Sheremet and Deviatko 2022) found no such effect for students: the results obtained by scientists from prestigious universities did not differ from others in terms of their perceived plausibility. Thus, although the influence of institutional prestige on expert evaluation of scientific research is well established, we have yet to determine how it shapes lay perceptions of science. Our study addresses this gap by examining whether laypeople use institutional affiliation as a cue when assessing scientific findings.

In the present research, we check whether information about a specific type of research institution will influence the perceived plausibility of research results in the eyes of a wider audience. Members of the Russian general public tend to consider institutes of the Russian Academy of Sciences (RAS) as more trustworthy in comparison with leading universities (ZIRCON 2022). RAS is the oldest research establishment in Russia (established in 1724), consisting of a network of scientific research institutes across the country and keeping its leadership in fundamental research and corresponding prestige despite controversial reforms initiated by the government over the past decade. According to the recent representative all-Russian telephone survey of the population 18 years and older, conducted in 2024, 92 percent of respondents have known or heard something about the RAS activities, and 72 percent have trust in the RAS as an organization (ZIRCON 2024). In the present research, we accept the supposition that institutes of RAS are considered by members of the general public as prestigious high-impact research organizations even in comparison with leading universities, as the latter tend to be perceived as predominantly teaching oriented despite recent considerable reforms aimed at strengthening their research orientation. Hence, we expected that the research findings obtained by scientists from the RAS would be perceived as more plausible compared with the same findings obtained by scientists affiliated with a leading university.

Cultural boundaries between scientific disciplines are also known to be another information cue used not only by experts but also by other audiences (Gieryn 1999). A number of studies (e.g., Barzilai and Weinstock 2015; Estes et al. 2003; Hofer 2000) have demonstrated that knowledge in social sciences and humanities, compared with natural sciences, is usually perceived as less certain and unchanging. Documentary data from judicial decisions about contested expert evidence in U.S. district courts also show that judges systematically favor evidence from natural scientists compared with social scientists (O’Brien, Hawkins, and Loesch 2022). Scientific disciplines can differ in plausibility because of such observable boundary-defining characteristics as the nature of the evidence available, object of research, particular research methods and prevailing research designs, level of theoretical maturity, and levels of reproducibility of research findings (Ioannidis 2005; Krishnan 2009; Whitley 2000). Information cues reflecting these differences can work as status signals and influence the perceived plausibility and credibility of research findings related to specific disciplinary research fields. It is noteworthy in this context that the explicit disciplinary attribution might be a less salient cognitive shortcut when presented in brief communications of research findings, as found in two vignette experiments (Scheitle and Guthrie 2019), thus leading to very different effects on scientific information trustworthiness and plausibility compared with conventional survey questions concerning perceived trustworthiness, etc. that only provide the name of a discipline. As our main emphasis is on contextual social factors as potential cues, in the present study, we examine the perception of research findings in different scientific disciplines to account for possible discipline-specific patterns.

Empirical studies also show that laypeople’s knowledge and contextual information cues cannot be considered the sole influence on ordinary perceptions of science. Particularly, ordinary plausibility judgments can also depend on a generalized trust in science and evaluations of its importance, as well as on a general awareness of science and technology (S&T; Allum et al. 2008; Roberts et al. 2013). Although the positive relationship between education, trust in science, and perceptions of science has been convincingly demonstrated, their moderating effects on relationships between institutional information cues and laypeople’s plausibility evaluations remain underinvestigated. Consequently, we also attempt to consider such effects and account for them.

Method

Participants

The study was conducted in 2020 as a part of the 29th wave of the Russian Longitudinal Monitoring Survey (RLMS). The RLMS is a nationally representative panel survey using face-to-face interviews with a multistage address-based probability sample. The project has been run jointly by the Carolina Population Center at the University of North Carolina at Chapel Hill, Demoscope, and the Institute of Sociology RAS and since 2010 has been coordinated by HSE University.

The RLMS sample includes residents of 160 settlements (cities and towns, townlike settlements, and villages) in 33 regions in the Russian Federation. The sample is designed to allow the analysis of household data, as well as of data on all individuals. It has two parts: (1) a representative all-Russia sample, or original sample addresses (drawn in 1994), and (2) the follow-up addresses, or panel part, consisting of people who were interviewed as part of the original sample in their original locations for at least one round and then moved to a new address. The history of the RLMS, the sample design and its replenishment, loss to follow-up, and other key factors are provided in the data resource profile (Kozyreva, Kosolapov, and Popkin 2016). For the present study, we used a representative all-Russia sample. Informed consent was obtained from all respondents who answered the individual (separated according to age [≥14 and <14 years]), household, and community infrastructure questionnaires.

Our factorial survey was conducted within the representative part of the sample aged from 18 to 66. Data were collected through pen-and-paper personal interviews by professional interviewers. Of the 7,467 participants in the initial sample, we filtered out 2,021 incomplete cases. We also excluded data from one of the regions where respondents were asked to participate in all of the conditions because of a technical error (398 cases), thus not aligning with the procedure we aimed for (see subsequent discussion for details). The final sample consisted of 5,048 participants, of whom 54.3 percent were female, aged 18 to 65 years (M = 42.4 years, SD = 13.26 years), with 33.6 percent holding university degrees, 29.3 percent vocational secondary education diplomas, 28 percent secondary school diplomas, and 9.1 percent lower levels of education.
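The exclusion steps can be checked with simple bookkeeping. The following is a minimal sketch that uses only the counts reported in this section; the variable names are ours and purely illustrative.

```python
# Sample-size bookkeeping using the counts reported in the Participants section.
initial_sample = 7467        # participants in the initial sample
incomplete_cases = 2021      # filtered out as incomplete
protocol_error_cases = 398   # region where respondents received all conditions

final_sample = initial_sample - incomplete_cases - protocol_error_cases
retention_rate = final_sample / initial_sample

print(final_sample)              # 5048
print(f"{retention_rate:.1%}")   # share of the initial sample retained
```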

Design

In addition to the main RLMS questionnaire, we included a set of factorial vignettes representing lay summaries of different scientific studies using a 2 × 2 × 4 full-factorial experimental design with within-subject and between-subjects factors. The present experiment was not preregistered. The between-subjects factors we used were (1) Y, funding (low [300,000 rubles] vs. high [5 million rubles]), and (2) Z, the type of academic institution the scientists were affiliated with (“one of the RAS institutes” vs. “a leading university”). We also included a within-subject factor: (3) X, research field (sociological, criminological, geological, or biomedical research). Table 1 summarizes the conditions and experimental plan we used.

Table 1. Experimental Design and Conditions Used for Vignette Construction.

Note: RAS = Russian Academy of Sciences.

Procedure

On the basis of the combination of the two between-subject factors (funding and institutional affiliation), we created four conditions, or groups of respondents (Table 1). Within each group, each participant read four brief textual vignettes describing sociological, criminological, geological, and biomedical research. Thus, some participants read about research that received a larger research grant, whereas others read about research with less funding. Analogously, participants read about research conducted by one or the other type of academic institution. Participants read summaries of those studies and evaluated the plausibility of the results. Furthermore, participants evaluated the perceived comprehensibility of each study. They also expressed their attitudes toward science by answering a separate set of questions and provided information about their sociodemographic background as part of the RLMS main questionnaire. The average time to complete the relevant questionnaire block was 25 minutes.

To assign participants to different conditions in face-to-face interviews, we created four sets of vignettes, each vignette placed on a separate card (options A, B, C, and D). The interviewers were instructed to give card A to the first respondent they talked to, card B to the second, card C to the third, and card D to the fourth, and then to keep rotating the cards, giving each subsequent respondent the card whose letter follows that of the card received by the previous respondent.

Because of the coronavirus disease 2019 (COVID-19) pandemic, there were some complications with the data acquisition process. The time between visits to different households increased, so the number of cases when all household members could be interviewed at once was reduced. As a result, it was difficult for interviewers to remember and control the required order of cards. Therefore, they would often start interviews in a new household or with a new member of that household using card A. Hence, interviewers gave card A more frequently compared with other cards.
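The consequence of restarting the rotation can be illustrated with a small simulation. This is only a sketch under simplifying assumptions: the household sizes are hypothetical, and the deviation is modeled as if interviewers always restarted at card A in a new household, whereas in the field this happened often but not always.

```python
from collections import Counter
from itertools import cycle

CARDS = ["A", "B", "C", "D"]

def rotate_continuously(n_respondents):
    """Intended protocol: keep rotating A -> B -> C -> D across all respondents."""
    card = cycle(CARDS)
    return [next(card) for _ in range(n_respondents)]

def restart_per_household(household_sizes):
    """Simplified deviation: the rotation restarts at card A in every household."""
    assigned = []
    for size in household_sizes:
        card = cycle(CARDS)
        assigned.extend(next(card) for _ in range(size))
    return assigned

# Hypothetical household sizes, chosen only for illustration (not RLMS data).
household_sizes = [1, 2, 1, 3, 2, 1, 2]

intended = Counter(rotate_continuously(sum(household_sizes)))
observed = Counter(restart_per_household(household_sizes))
print(intended)  # balanced: three respondents per card
print(observed)  # card A is overrepresented, card D is never reached
```

With small households, restarting at card A concentrates assignments on the first cards, which is the imbalance described above.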

Materials

We created a set of four brief research summaries (“stems”) for constructing factorial vignettes: three-sentence-long lay summaries of approximately 50 ± 3 words. Each was based on a real scientific study from the relevant disciplinary field (sociological, criminological, geological, or biomedical research) published in Nature and was written following the recommendations provided by Kuehne and Olden (2015). Our choice of specific research summaries from different disciplines allowed us to address both social and natural sciences.

Thus, each summary was related to social or natural sciences and contained a brief research description: its objectives, methodology, and key results. In light of the results of Scheitle and Guthrie (2019), we did not explicitly attribute each vignette to a relevant disciplinary field. By adding information about funding and the institution, in accordance with varying levels of our experimental factors, we created a set of 16 vignettes.
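The size of the vignette universe follows directly from crossing the factor levels. The sketch below enumerates it; the level labels are taken from the Design section, while the variable names and the tuple layout are our own illustrative choices, not the coding scheme used in the study.

```python
from itertools import product

# Factor levels as described in the Design section.
funding = ["300,000 rubles", "5 million rubles"]                     # between-subjects
affiliation = ["one of the RAS institutes", "a leading university"]  # between-subjects
research_field = ["sociological", "criminological",
                  "geological", "biomedical"]                        # within-subject

# Each combination of the between-subjects factors defines one respondent group.
groups = list(product(funding, affiliation))

# Every group evaluates all four research fields, which yields the full set.
vignettes = [(field, fund, aff) for (fund, aff) in groups for field in research_field]

print(len(groups))     # 4
print(len(vignettes))  # 16
```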

A basic set of lay summaries that we used, without funding and academic affiliation information, is available in Supplemental Material 1.

Here we provide an example of two complete vignettes corresponding to different levels of experimental factors:

X3Y1Z2: Scientists from one of the institutes of the Academy of Sciences found a correlation between people’s experiences of their parents’ deaths as children and future crimes. They studied national statistics on people born between 1983 and 1993. It turned out that people aged 15–30 were more likely to commit violent crimes if they had experienced a parent’s death from external causes (suicide, murder, accident) as a child. The research was conducted under a grant of 300 thousand rubles.

X2Y2Z1: Scientists from one of the leading universities studied the adaptation of animals to a weightless environment. They studied the behavior of young and adult mice placed in a special module aboard the International Space Station. It turned out that soon after launch, only the young mice began to move, in other words, to run after each other and in circles, more actively than mice placed in the same module on Earth. The research was carried out under a grant of 5 million rubles.

Measures

Dependent Variable

After being exposed to each vignette describing the research, participants evaluated the degree of plausibility of the research results on an 11-point scale (from 0 = “completely implausible” to 10 = “completely plausible”). This measurement strategy is in line with the dominant view on plausibility assessment (Bråten, Salmerón, and Strømsø 2016), which uses some version of a “plausibility rating,” enabling respondents to rate how plausible they find a tested statement on an ordinal scale from “completely implausible” to “completely plausible” or a dichotomized scale reflecting whether a statement is plausible or not (Isberner et al. 2013; Von der Mühlen et al. 2016).

Other Measures

Participants also rated each of the research descriptions in terms of its comprehensibility on a 4-point scale (from 1 = “very simple” to 4 = “very complicated”).

Before answering our factorial vignette module, survey participants reflected on their attitudes to the role of S&T news (whether it is important for them to stay up to date, or it is enough to know general information, or whether such news doesn’t make any sense for them). They also rated the level of their awareness of S&T on a 4-point scale (from 1 = “very well informed” to 4 = “not informed at all”). Using 4-point scales, participants also expressed their opinions about different scientific fields in terms of their perceived trustworthiness (from 1 = “very trustworthy” to 4 = “not trustworthy at all”; six items, Cronbach’s α = .91) and the importance of their results (from 1 = “very important” to 4 = “not important at all”; six items, Cronbach’s α = .89). Relying on the OECD (2015) Fields of Research and Development classification, we included the following: natural sciences, engineering and technology, medical and health sciences, agricultural and veterinary sciences, social sciences, and humanities. We also obtained basic sociodemographic data for each participant from the RLMS main questionnaire (age, gender, and education).
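For readers unfamiliar with the reliability coefficient reported above, the following minimal sketch computes Cronbach’s α with the standard formula, α = k/(k − 1) × (1 − Σ item variances / variance of the total score). The ratings are hypothetical toy data, not RLMS responses, and we make no assumption about the software used for the reported values.

```python
from statistics import variance

def cronbach_alpha(responses):
    """Cronbach's alpha for a list of respondents, each a list of item scores."""
    k = len(responses[0])                    # number of items in the scale
    items = list(zip(*responses))            # transpose to per-item columns
    item_variances = sum(variance(col) for col in items)
    total_variance = variance([sum(row) for row in responses])
    return k / (k - 1) * (1 - item_variances / total_variance)

# Hypothetical 4-point ratings of six fields by five respondents (toy data).
toy_ratings = [
    [1, 1, 2, 1, 2, 2],
    [2, 2, 2, 3, 3, 3],
    [3, 3, 4, 3, 4, 4],
    [1, 2, 1, 1, 2, 2],
    [4, 3, 4, 4, 4, 3],
]
print(round(cronbach_alpha(toy_ratings), 2))
```

Values close to 1 indicate that the six items vary together across respondents, which is what justifies treating them as a single scale.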

Results

Our research question explores the effects of information about funding, research field, and institutional affiliation on the perceived plausibility of research results. To evaluate all the main effects and pairwise interaction effects of these experimental factors we used repeated-measures analysis of variance. We also used control variables as covariates (age and education) and between-subject factors (gender).

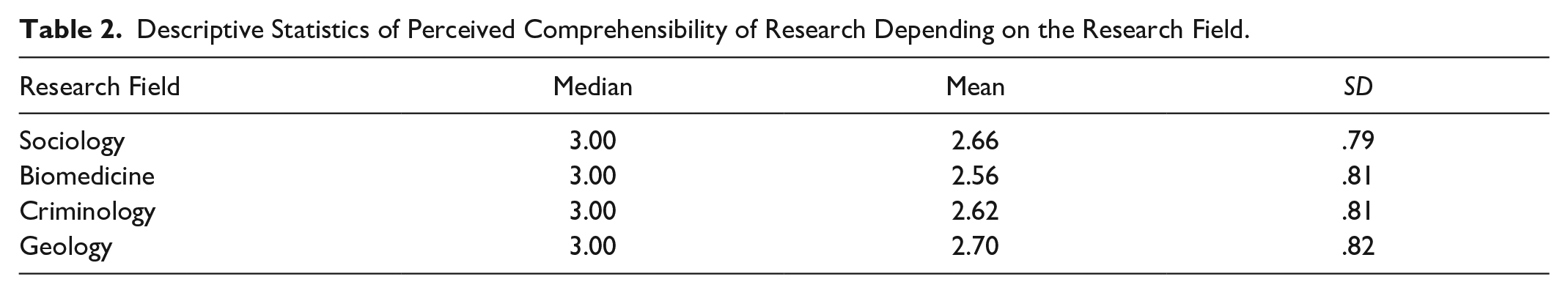

As part of the study, we included a subjective comprehensibility scale in relation to the research descriptions in our vignettes. Descriptive statistics of the subjective comprehensibility for the sample of vignettes are presented in Table 2.

Table 2. Descriptive Statistics of Perceived Comprehensibility of Research Depending on the Research Field.

We used two levels of funding (300,000 vs. 5 million rubles) and two types of academic institution (“RAS” vs. “a leading university”) with sociological, criminological, geological, and biomedical research as levels of a within-subject factor to test the possible effects. As funding and academic institution were included as between-subject factors there were four separate groups of respondents. Table 3 provides the descriptive statistics for all combinations of conditions we used.

Table 3. Descriptive Statistics of Perceived Plausibility of Research Results Depending on the Funding, Institutional Affiliation, and Scientific Field.

Note: RAS = Russian Academy of Sciences.

There was a small but significant main effect of funding ($F_{1,\,5038} = 8.28$, p = .004, $\eta_p^2 = 0.002$ as a measure of effect size), with the results of lower funded research perceived as less plausible than those of highly funded research (estimated marginal mean [EMM] difference = 0.174, p = .004). The data also revealed a small but significant main effect of institutional affiliation ($F_{1,\,5038} = 3.99$, p = .046, $\eta_p^2 = 0.001$), with results from a leading university perceived as less plausible than those from the RAS (EMM difference = 0.12, p = .046) (Figure 1). The analysis did not reveal statistically significant pairwise interaction effects between the factors.

Figure 1. Estimated marginal means and 95 percent confidence intervals for funding levels (left) and institutional affiliation (right).
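As a plausibility check on the effect sizes reported for the two between-subject factors, partial eta squared can be recovered from an F statistic and its degrees of freedom using the standard identity $\eta_p^2 = F \cdot df_{effect} / (F \cdot df_{effect} + df_{error})$. The sketch below applies it to the reported values; the function name is ours, and this is an illustration rather than the original analysis code.

```python
def partial_eta_squared(f_value, df_effect, df_error):
    """Recover partial eta squared from an F statistic and its degrees of freedom."""
    return (f_value * df_effect) / (f_value * df_effect + df_error)

# F statistics and degrees of freedom as reported for the between-subject effects.
funding_effect = partial_eta_squared(8.28, 1, 5038)
affiliation_effect = partial_eta_squared(3.99, 1, 5038)

print(round(funding_effect, 3))      # 0.002
print(round(affiliation_effect, 3))  # 0.001
```

Both values indicate that the cues explain well under 1 percent of the variance in plausibility ratings, which is consistent with describing the effects as small.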

There was a small but significant main effect of the research field ($F_{3,\,5038} = 2.83$, p = .037, $\eta_p^2 = 0.001$), with sociological research results perceived as significantly less plausible than those in criminology (EMM difference = 0.216, p < .001) and geology (EMM difference = 0.28, p < .001). Similarly, biomedical results were perceived as less plausible than criminological (EMM difference = 0.218, p < .001) and geological (EMM difference = 0.282, p < .001) ones (Figure 2).

Figure 2. Estimated marginal means and 95 percent confidence intervals for disciplines.

As for the controls, there was a small but significant main effect of age on the perceived plausibility ($F_{1,\,5038} = 3.95$, p = .047, $\eta_p^2 = 0.001$), the latter decreasing among older people. There was also a significant interaction effect of the research field and educational level ($F_{3,\,5038} = 3.65$, p = .012, $\eta_p^2 = 0.001$). Respondents with higher levels of education generally perceived findings from sociology as slightly more plausible compared with less educated people. No other significant interaction effects were found. There was also no significant main effect of gender in the model.

We also tested a more complex model, adding variables related to widely used basic indicators of PUS research: the perceived importance and trustworthiness of scientific findings as covariates and the subjective awareness of S&T as a factor. There was a small but significant effect of the perceived importance on the perceived plausibility (F(1, 4428) = 27.67, p < .001, partial η² = 0.006), the latter increasing among those perceiving scientific results as important. There was also a small but significant effect of the subjective awareness of S&T (F(1, 4428) = 4.54, p = .004, partial η² = 0.003), with totally uninformed respondents generally perceiving findings as less plausible compared with well-enough informed people (EMM difference = 0.353, p = .003). No significant main effect of trust in science was found. However, there was a significant interaction effect of the research field and trust in science (F(3, 4428) = 4.82, p = .002, partial η² = 0.001), with increases in perceived plausibility of sociological, biomedical, and geological research findings weakly associated with higher trust in science. Moreover, although funding and institutional effects remain significant in this more detailed model, the main effect of the research field does not. However, this does not deny the existence of inequalities in the status of the broad disciplinary fields. According to our respondents’ general assessment of the perceived importance and trustworthiness, the latter were ranked in the following order: medical sciences, natural sciences, technical sciences, agricultural and veterinary sciences, social sciences, and humanities (see descriptive statistics in Table 1 in Supplemental Material 2).

Discussion and Conclusion

In this research we examined the effects of information on research funding, institutional affiliation, and disciplinary field upon lay plausibility perceptions of scientific findings.

Our results indicate, as expected, that funding information and institutional affiliation influence how the general public perceives scientific findings. Specifically, reporting larger funding leads to higher levels of the perceived plausibility of research results among laypeople compared with providing information about lower funding. The results obtained by scientists from leading universities are perceived as somewhat less plausible than those from scientists working at the RAS. This could be because, being the oldest research organization in the country, the RAS is widely known among different sociodemographic groups and has been highly trusted over the years (ZIRCON 2024). These results are in agreement with our expectations, supported by an extensive line of previous research on science productivity, performance, and justification (Bloch et al. 2016; Laurance et al. 2013; Rosenbloom et al. 2015; Ross et al. 2006; Yan et al. 2018). They show that laypeople tend to use funding information and institutional affiliation as cues in assessing the plausibility of scientific findings in a way similar to experts using such information in different aspects of research evaluation (e.g., peer review and citations). These observations fill an existing gap in the literature. At the same time, they differ from earlier findings (Sheremet and Deviatko 2022). However, the previous study used specific university names as informational signals of prestige, while the present research used a less differentiated albeit ecologically more valid dichotomy of RAS research institutes versus leading universities. This subtle methodological difference may put some limits on direct comparisons between the two studies, but it allows a more accurate estimation of the institutional prestige effect on the plausibility assessment of scientific results.

Our data also show that research results from various scientific disciplines are perceived differently in terms of their plausibility, which is generally in line with previous studies (Barzilai and Weinstock 2015; Estes et al. 2003; Hofer 2000; O’Brien et al. 2022; Sheremet and Deviatko 2022). The plausibility perceptions of sociological research results in our findings did not differ from those of biomedical research results, while both were evaluated more critically compared with the results of criminological and geological research. These differences follow our prediction as well as recent research (Lewis et al. 2023) concerning the relatively lower prestige of social sciences compared with natural sciences when one compares paradigmatic cases of sociology and geology. Vignettes describing biomedical and criminological research tend to these two poles, respectively.

The absence of significant differences in the plausibility perceptions of sociological and biomedical research results, and lower scores for the latter compared with criminological research results were rather unexpected, contradicting the thesis about the lower status of results produced by social sciences. The lower scores for the biomedicine vignette may be partly because the study was conducted during the COVID-19 pandemic when skepticism toward mass vaccination began to spread among Russians (Reyniuk 2021) and trust in scientists declined (Gallup 2021; WCIOM 2020). The specificity of the selected vignettes itself may be another factor, namely the absence of the direct disciplinary attribution of the research findings, which is consistent with the findings from vignette experiments of Scheitle and Guthrie (2019), where changing disciplinary attribution did not affect individuals’ perceptions of the scientific-ness and trustworthiness of a study. Also, the particular research case of the biomedical vignette and its methodology may have been less close to the respondents’ life experience and prior beliefs than presented in the criminological one (Wertgen and Richter 2023).

However, the disciplinary field effect was very modest and probably limited. One potential limitation of our study is that, for practical reasons, we used only one study per discipline, so our narrow disciplinary research fields were necessarily conflated with the specific research specimen selected. A particular research specimen may contain more features relevant to the respondent’s life experience and background knowledge, adding to its general plausibility. Although it would be difficult to keep specific research content fixed while changing different disciplines for reasons of ecological validity, one may use significantly more vignettes per research field to cancel out specific extraneous effects after aggregation. At the same time, the pattern of significant differences in plausibility ratings of disciplinary research fields turned out to be in good agreement with relevant findings from the recent research using a completely different set of vignettes (Sheremet and Deviatko 2022), demonstrating some robustness of the effect discovered.

We discovered that ordinary plausibility judgments are shaped similarly to the patterns described in a range of PUS surveys (Allum et al. 2008; Roberts et al. 2013). The perceived importance of scientific findings and subjective awareness of S&T positively influence the perceived plausibility of research results among laypeople. Respondents who are totally uninformed on S&T matters tend to perceive research findings as less plausible compared with more informed respondents. This finding supports the previously proposed conclusion that the critical perception of science can be at least partly attributed to an increasing proportion of the population not informed about S&T (Klinger et al. 2022). Considering these results, research on factors determining plausibility judgments may also contribute some evidence to a more fundamental discussion on the Spinoza-inspired view that understanding something is actually conducive to believing it (Gilbert, Tafarodi, and Malone 1993). Following this line, those who do not understand some research-based descriptions would be less likely to believe in their plausibility.

Using a factorial survey design, we directly examined how institutional information cues in brief summaries of scientific findings influence laypeople’s plausibility assessments. Specifically, we systematically varied three factors that could affect these assessments and found statistically significant effects of funding information and institutional affiliation on perceived plausibility. In contrast, the effect of disciplinary affiliation in our data was less clear and not easily interpretable. This approach paves the way for further systematic research into the influence of these and other informational signals that convey diffuse status characteristics on plausibility assessments.

The results advance knowledge about the factors determining ordinary judgments of scientific findings that can be used to improve science communication. In particular, our findings have some practical implications for further developing efficient strategies to inform the general public about scientific findings. They underscore the importance of deliberately incorporating institutional cues, such as funding information and institutional affiliations, into science communication to enhance the perceived plausibility and credibility of reliably sourced scientific materials (Sinatra and Lombardi 2020). Strengthening these cues may help anchor the scientific status of particular research results or certain disciplines in public discourse as well as counter the growing risk of erosion of the distinction between truth and falsehood in the post-truth era (Collins 2023; Iyengar and Massey 2019). Additionally, the findings highlight the need for further research into the differential effects of disciplinary affiliation cues and the boundary-defining observable characteristics of disciplinary fields. Such research could help address existing status-related biases in public trust toward “underprivileged” disciplines, fostering more favorable evaluations of the plausibility and credibility of their research findings.

Supplemental Material

Supplemental material, sj-docx-1-srd-10.1177_23780231251332431 for Laypeople Use Institutional Information Cues in Judging the Plausibility of Research Findings: Results from a Factorial Survey by Inna F. Deviatko, Elizaveta Sheremet, Valentina Polyakova and Konstantin Fursov in Socius

Footnotes

Acknowledgements

We thank the anonymous reviewers for their helpful comments and suggestions, which strengthened the article. We also thank the Human Capital Multidisciplinary Research Centre of HSE University for the opportunity to include our set of questions in the RLMS questionnaire and to collect data.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Data Availability Statement

Supplemental Material

Supplemental material for this article is available online.

1. The RLMS is a series of annual nationally representative surveys designed to monitor the effects of Russian reforms on the health and economic welfare of households and individuals in the Russian Federation after perestroika. It was piloted in 1992 and 1993. The regular phase of the RLMS-HSE was started in 1994. Detailed information about the RLMS project, the sample design description, and datasets by individuals and households containing answers of respondents on standard questionnaires in English are available at the HSE-RLMS (https://www.hse.ru/en/rlms/) and Carolina Population Center Web sites.

Author Biographies

References
