Abstract

The authors use the timing of a change in Twitter’s rules regarding abusive content to test the effectiveness of organizational policies aimed at stemming online harassment. Institutionalist theories of social control suggest that such interventions can be efficacious if they are perceived as legitimate, whereas theories of psychological reactance suggest that users may instead ratchet up aggressive behavior in response to the sanctioning authority. In a sample of 3.6 million tweets spanning one month before and one month after Twitter’s policy change, the authors find evidence of a modest positive shift in the average sentiment of tweets with slurs targeting women and/or African Americans. The authors further illustrate this trend by tracking the network spread of specific tweets and individual users. Retweeted messages are more negative than those not forwarded. These patterns suggest that organizational “anti-abuse” policies can play a role in stemming hateful speech on social media without inflaming further abuse.

It is commonplace to find abusive language directed toward women and racial or sexual minorities online. These types of messages often reverberate through networks of interacting users (Felmlee, Inara Rodis, and Francisco 2018), with negative tweets reposted more rapidly and frequently than positive or neutral messages (Tsugawa and Ohsaki 2017). In response to public outcry, Twitter recently embarked on widely publicized policy changes to clarify existing standards and facilitate more reporting and removal of abusive content. We examine the effect of one set of major policy rule changes on negative messages through a sentiment analysis of tweets sent before and after the policy shift.

This moment provides a timely opportunity to examine how the content of derogatory messages may be influenced by Twitter’s attempt to exert greater control over its users. Here we build on this opportunity to test several social scientific arguments regarding the influence of organizational efforts to sanction negative behavior. Previous research suggests that such top-down attempts at control can be effective if the sanctioning authority is perceived as legitimate (Baldassarri and Grossman 2011). However, other evidence suggests that targeted bullies and trolls may instead respond with even more aggressive behavior or take covert measures to evade detection (Flores 2017).

Online Harassment on Social Media

Online harassment, defined as unwanted, problematic digital contact (Lenhart et al. 2016), is a routine occurrence in society and arises in various forms under numerous labels, such as cyberbullying, hate speech, and online aggression. According to nearly two thirds of Americans (62 percent), online harassment poses a major social problem (Pew Research Center 2017), with close to half (47 percent) experiencing online harassment personally (Lenhart et al. 2016). These types of problematic online interactions can be harmful, inflicting emotional and psychological damage on their victims (e.g., Juvonen and Gross 2008; Patchin and Hinduja 2012; Şahin 2012; Ybarra et al. 2006). The threat of possible Internet abuse also can lead individuals, especially former victims, to self-censor and suppress their online presence (Lenhart et al. 2016), suggesting that online harassment curtails communication, particularly for those most vulnerable to attack.

Studies of cyberbullying and harassment tend to take an individual-level focus and could benefit from more of a sociological lens, according to scholars (Ey, Taddeo, and Spears 2015). Recent research points to two social, group processes that contribute to cyber and peer aggression, namely, the enforcement of social norms and the enhancement of social status (Faris and Felmlee 2011, 2014; Felmlee and Faris 2016). For organizations to be effective in limiting harassment and abuse on their social media platforms, such findings suggest that these organizations will need to shift norms to encourage greater online civility and/or make harassment less socially rewarding by minimizing its virtual fingerprint. Indeed, removal of offending messages could curtail the ability of hostile users to expand their online followings via likes and content sharing and thereby diminish one type of motivation for disseminating disparaging content.

Several previous studies (e.g., Chatzakou et al. 2017; Felmlee et al. 2018; Felmlee, Inara Rodis, and Zhang 2020; Xu et al. 2012) examined online harassment on Twitter, where racist, sexist, and homophobic tweets remain readily accessible to the general public at any time of day. Prior research finds that it takes an average of 24 seconds to 1.5 minutes to locate the first of thousands of derogatory, aggressive tweets, on the basis of a sample of searches for assorted defamatory slurs and insults (e.g., n**ger, who*e, fa**ot) (Sterner and Felmlee 2017). Additional research on sexist tweets identified more than 419,000 tweets per day that included at least one of four sexist slur words (bi*ch, c**t, s**t, and who*e) during a one-week collection period (Felmlee et al. 2020). Such negative messages often can be retweeted or “liked” by followers, thereby creating networks of cyberbullying that spread far beyond the original perpetrator and target (Felmlee et al. 2018).

Studying tweets over two years, researchers found that people posted more than 9.76 million tweets referencing bullying on Twitter (Bellmore et al. 2015), which averages to more than 13,000 discussions of bullying reverberating online each day. According to additional research, moreover, approximately 10,000 racial slurs occur in tweets on a daily basis (Bartlett et al. 2014). Furthermore, news accounts repeatedly highlight the outcry surrounding damaging messages on Twitter. In one instance, a number of women participated in a Twitter boycott (Women’s Boycott) triggered by the suspension of the Twitter account of an actress, Rose McGowan, who posted allegations of sexual abuse by Hollywood producer Harvey Weinstein.

Following the Women’s Boycott and repeated claims of online harassment and abuse, the CEO of Twitter pledged to introduce new “anti-abuse” rules that would be “more strictly enforced” over the coming weeks (October 14, 2017). The new rules would apply to unwanted sexual advances, nonconsensual nudity, hate symbols, violent groups, and tweets that glorify violence. In addition to updating and clarifying rules, Twitter announced changes to the content reporting and removal processes to be implemented, and these policy adjustments were widely publicized in media outlets and in a “Safety Calendar” posted on Twitter’s blog. These new rules were announced as going into effect on December 18, 2017.

One notable feature of Twitter’s attempts to affect its users’ behavior is that the organization did not outright censor the use of specific slurs or derogatory terms (e.g., bi*ch, n**ger, fa**ot). This likely owes to the perception that there is “gray area” in the use of such words, particularly depending on who specifically is using them. Sometimes members of the targeted group themselves (e.g., women, African Americans, or LGBTQ people) use these terms in a process that the feminist writer Judith Butler (1990) called “resignification.” In other cases, slurs allow an individual to express a positive message while simultaneously using the slur as a means of self-protection or boundary drawing (Pascoe and Diefendorf 2019). Instead, Twitter’s attempts to reduce offensive content rely on a combination of top-down organizational signals that abusive behavior is considered non-normative and unacceptable, as well as bottom-up peer reporting or “flagging” of offensive material. Without outright banning certain offending terms, Twitter thus attempts to regulate how such terms are used on the platform.

Organizational Policies and Individual Responses

In the present study we ask: Did Twitter’s rule changes influence patterns of abusive language on the platform? Previous work in sociology, political science, and economics suggests that such changes are potentially efficacious but that this effectiveness depends on characteristics of the environment into which the rule is introduced. Institutionalist models of social control emphasize the importance of the sanctioning authority’s perceived legitimacy in dictating users’ behavior (e.g., Baldassarri and Grossman 2011; Fehr and Gachter 2002; Henrich et al. 2006). If users perceive Twitter as a legitimate referee or institutional arbiter empowered to set the “rules of the game” (e.g., North 1990), then a top-down rule change can effectively signal a shift in norms of acceptable behavior. In a basic sense, the effect of such legitimate authority is analogous to the authority a state exercises in crime and punishment. Just as the state punishes lawbreaking with incarceration (removal from free society), the legitimate Twitter administrators might punish rule breaking with (in some cases) removal from the platform (Tyler et al. 2018). Although some especially belligerent users may still engage in aggressive behavior, others will comply so as not to risk their continued access to the platform.

However, previous theory and evidence suggest that the dynamics of rule and norm following on Twitter and other social media platforms are not likely to be so straightforward. First, to sanction abusive behavior in the first place, Twitter users must be aware of it. To this end, the organization needs to rely on individual users’ willingness to report abuses (though natural language processing advances may soften this requirement; e.g., Davidson et al. 2017). Thus, the top-down rule change will achieve maximum effect only if supported by a bottom-up movement of Twitter users mobilized to maintain social norms and report aggressive behavior to authorities. The presence or absence of such mobilization may depend both on the value users attach to norms of decent and nonabusive behavior and on the network externalities associated with violations of these norms (DellaPosta, Nee, and Opper 2017). These network externalities depend in turn on the audience that given Twitter users believe themselves to be facing when they tweet (Marwick and boyd 2011). If Twitter “bullies” are embedded in dense networks of fellow “bullies,” they may perceive social rewards in the continued negative targeting of others. However, if a shift in perceived rules and norms on the platform leads perpetrators to have greater fear of exposure and sanction, they might respond by engaging in self-censorship that would decrease the overall flow of negative messaging on the platform.

A recent study by Flores (2017) suggests that increased aggression may be an equally plausible outcome of Twitter’s attempts to crack down on negative messages. Examining Twitter messages before and after Arizona’s passage of an anti–illegal immigration measure, SB 1070, Flores found a mobilization of anti-immigrant messages attributable to the activation of both new and previous Twitter users. Although Flores’s case shows platform users’ mobilizing in response to an external societal signal that supported their already held views, it is not difficult to imagine the reverse—aggressive or abusive “trolls” ratcheting up in “psychological reactance” (Brehm 1966) against the perceived heavy-handedness of Twitter authorities. If other users respond to the rule changes with vocal support, the targeted trolls may then develop an even stronger sense of belonging to an oppositional identity movement (e.g., Bearman and Brückner 2001). Tweeting abusive language will then become a salient symbolic act in and of itself. Bullies may send abusive tweets to signal their social identity and opposition to the perceived oppression of political correctness (DellaPosta, Shi, and Macy 2015; Gusfield 1966; Smaldino et al. 2017).

Reactance dynamics of this kind are widely observed in studies of employee responses to top-down change in organizational contexts. When an organization’s administration institutes policies that threaten the perceived freedom and autonomy of members, these members often respond with deliberative noncompliance and resistance to reassert their freedom to behave as they wish (e.g., Lowry and Moody 2015; Nesterkin 2013). It is plausible to think that users of social media platforms would respond similarly to organizational attempts to control their behavior. Users—and even many of the people who control social media platforms (Massanari 2017)—often see social media sites such as Twitter, Facebook, and Reddit as occupying the role of a neutral platform where all views are equally valid to share and where top-down censorship would violate a right to “free speech.” Policies that restrict a user’s autonomy to post negative content go against such a conception of the platform as impartial and are thus likely to provoke reactance among at least a vocal subset of users.

Study Design

In light of these competing intuitions, the purpose of our research is to examine the degree to which Twitter’s rule changes influenced the content and network spread of aggressive messages, focusing on content related to gender and race. Did the organization’s policies alter the level of hostility in messages? To address this question, we begin by locating tweets that include a gendered slur, bi!ch, during the month prior to and following the effective date of the most widely publicized of Twitter’s recent rule implementations (December 18, 2017). Previous research finds that bi!ch is a particularly common slur used to demean a feminine target on Twitter (Felmlee et al. 2020), and it is one of the four most prevalent curse words on the platform (Wang et al. 2014).

In response to calls for further attention to online bullying of racial minorities (Zych, Ortega-Ruiz, and Del Ray 2015), next we examine tweets during that period that contain a racist slur, n!!ger. We chose that particular offensive term because it is one of the slurs used most frequently to demean individuals on the basis of race (e.g., Chaudhry 2015; Felmlee et al. 2018; Wang et al. 2014). Although bi!ch and n!!ger are both top curse words used in online messages, our choice of these two terms does not imply that they are equivalent. Quite the opposite, we believed that examining these two terms would lend our analysis greater robustness by showing how Twitter’s organizational policy affected patterns of usage in two contextually different cases.

To be clear, we do not measure the overall number of uses of offensive terms before and after the policy shift. Instead, we sample tweets that used specific terms before and after the change in policy to study whether message sentiment differs. In other words, we examine whether the way such terms were used was influenced by Twitter’s attempts to reduce abusive content on the platform. Note that our design lacks a control group (the policy shifts we study applied to everyone on the Twitter platform), which precludes approaches such as difference-in-difference estimation. In this study we also focus on the short time period spanning one month before and after the policy shift. This limited time span helps ensure that any observed changes in Twitter content are less likely to be attributable to intervening factors likely to occur during lengthier time periods. Also, we present analyses that focus on individual users who used derogatory terms to ask whether the policy shift produced a change in tweet content at the individual level (e.g., Flores 2017).

Using this data set, we do the following:

1. Compare the degree of negative sentiment in tweets targeting individuals on the basis of gender and/or race in a multivariate regression analysis before and after the enactment of Twitter policy changes.

2. Investigate and describe incidences of online harassment that target individuals on the basis of gender and/or race, and their network spread, before and after the enactment of Twitter policy changes.

3. Examine the pattern of retweets and likes of tweets that contain a sexist and/or a racist slur during the periods before and after Twitter policy changes; both retweets and likes can contribute to the network spread of a post.

Methods

Data

To test whether Twitter’s policy change influenced aggressive messaging, we selected a targeted approach in which we focus on one type each of a sexist and a racist slur. Data demands prohibited investigation of all types of sexist and racist tweets, so we investigated two of the most frequently used offensive words. We collected data directly from the Twitter application programming interface and scraped tweets that included either a gendered slur, bi!ch, or a racialized slur, n!!ger. We collected these tweets over a two-month period (November 17, 2017, to January 18, 2018), beginning one month before and concluding one month after the date of a publicized change in Twitter’s rules regarding abusive content (December 18, 2017). From this data set we extracted a random sample of close to 4 million tweets. After removing duplicated and empty tweets, the total sample size included 3,615,445 tweets. Although our data are not a random sample of all tweets, we believe that our focus on tweets containing major gendered and racial slurs enables an especially stringent test of the effectiveness of Twitter’s rule changes. In effect, we test whether there was a detectable change in the sentiments captured even among tweets using terms that target women and racial minorities. As discussed in the following section, uses of these terms are sufficiently varied to make such a test meaningful.

Over the course of the two months (for a total of 64 days), we gathered an average of 56,491 tweets per day (1.6 percent of the total sample). The minimum number of tweets collected on a single day occurred on January 4, 2018, with 16,477 tweets (0.5 percent of the total sample), whereas the maximum took place on November 29, 2017, with 101,939 tweets (2.8 percent of the total sample). Tweets including the term bi!ch or n!!ger were not heavily concentrated on any single day but distributed fairly evenly over the two months.
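The daily figures above follow from simple arithmetic on the totals quoted in the text. The short check below is only a sketch that recomputes them; the constant and function names are illustrative and not part of the study.

```python
# Figures quoted in the text; nothing here is new data.
TOTAL_TWEETS = 3_615_445
COLLECTION_DAYS = 64
MIN_DAY = 16_477    # January 4, 2018
MAX_DAY = 101_939   # November 29, 2017


def share_of_sample(count: float, total: int = TOTAL_TWEETS) -> float:
    """Return count as a percentage of the total sample, to one decimal."""
    return round(100 * count / total, 1)


mean_per_day = round(TOTAL_TWEETS / COLLECTION_DAYS)

print(mean_per_day)                   # 56491
print(share_of_sample(mean_per_day))  # 1.6
print(share_of_sample(MIN_DAY))       # 0.5
print(share_of_sample(MAX_DAY))       # 2.8
```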

Sentiment Analysis

We develop a classifier that we apply to the sentiment analysis of the content of the entire tweet message, which includes one of the two key words (Felmlee, Blanford et al. 2020; Felmlee, Inara Rodis et al. 2020; Zhang and Felmlee 2017). The sentiment tool is a variation of the VADER (Valence Aware Dictionary and Sentiment Reasoner) classifier. VADER is “a lexicon and rule-based sentiment analysis tool that is specifically attuned to sentiments expressed in social media. It is fully open-sourced under the [MIT License]” (https://github.com/cjhutto/vaderSentiment). To improve the overall accuracy of the sentiment score, we compared the performance of VADER with that of several commonly used packages. In the end, we used a combination of scores from the top three classifiers, which included VADER and two others, afinn and bing, which are lexicon packages available in R. Moreover, we updated the lexicon to include derogatory terms relevant to incidences of harassment or aggression and translated the score into a scale ranging from −4 (most negative) to 4 (most positive). We selected this scale to replicate the original lexicon scoring of the VADER classifier and to standardize scoring across our final classifier. The key term n!!ger received an individual score that was more negative than that of the term bi!ch, according to our classifier. The sentiment score depended on the content of the entire tweet, however, not only the key word. We compared the sentiment scores gathered from four human coders on a sample of 400 tweets with the scores obtained by our final classifier. Our sentiment classifier performed well, with overall F1 scores of .688 (micro) and .690 (macro). These F1 scores represented an improvement over scores from VADER alone or from other combinations of common classifiers.
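For readers unfamiliar with the two F1 variants reported above, the following sketch shows how micro- and macro-averaged F1 are computed when classifier labels are compared with human-coded labels. It assumes sentiment scores have been binned into categorical labels; the text does not describe that binning, and the labels and toy data below are invented for illustration.

```python
from collections import Counter


def f1_scores(truth, predicted):
    """Return (micro_f1, macro_f1) for two equal-length label sequences."""
    labels = sorted(set(truth) | set(predicted))
    tp, fp, fn = Counter(), Counter(), Counter()
    for t, p in zip(truth, predicted):
        if t == p:
            tp[t] += 1
        else:
            fp[p] += 1  # predicted label p was wrong
            fn[t] += 1  # true label t was missed

    def f1(tp_, fp_, fn_):
        denom = 2 * tp_ + fp_ + fn_
        return 2 * tp_ / denom if denom else 0.0

    # Micro: pool the counts over all classes, then compute one F1.
    micro = f1(sum(tp.values()), sum(fp.values()), sum(fn.values()))
    # Macro: compute F1 per class, then take the unweighted mean.
    macro = sum(f1(tp[c], fp[c], fn[c]) for c in labels) / len(labels)
    return micro, macro


# Invented toy labels (human coders vs. classifier), not the study's data.
human = ["neg", "neg", "neg", "neg", "pos", "pos"]
model = ["neg", "neg", "neg", "neg", "neg", "pos"]
micro, macro = f1_scores(human, model)
print(round(micro, 3), round(macro, 3))  # 0.833 0.778
```

Micro-averaging weights every tweet equally, whereas macro-averaging weights every class equally, so the two diverge when classes are imbalanced, as they would be in a sample dominated by negative tweets.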

Unsurprisingly, most tweets containing our key terms are quite negative in sentiment. At the same time, there exists a range of sentiment in the data set, and not all messages are negative. Tweets that are positive, despite containing slurs, often reflect attempts by the user to “reclaim” negative terms by attaching a more positive meaning that would be recognizable to other in-group members (see Butler 1990). In exploring the online use of the term bi!ch, for example, Felmlee et al. (2018) found instances in which the gendered slur bi!ch is deliberately “resignified,” or “reclaimed,” on Twitter as an act of defiance and self-determination for women of color. Thus, tweets that score as positive in sentiment, even though they contain derogatory terms, often represent alternative or unconventional uses of slurs. Moreover, similar to what Pascoe and Diefendorf (2019) found in their study of tweets containing “no homo,” some tweets in this sample use racist and/or sexist slurs in a self-protective or boundary drawing fashion, where the use of a slur separates the user from other sexist or racist individuals, but the tweet itself is positive. In this project, we find some examples of such messages with “reclaimed” and “resignified” gendered or racial insults, as illustrated subsequently.

Multivariate Approach

To test the significance of Twitter’s policy against abusive messaging, we conduct ordinary least squares (OLS) regression analyses on the Twitter data and test the robustness of our findings using several other statistical approaches. The primary predictor, after policy enacted, is a binary indicator taking a value of 1 if the tweet is published after Twitter’s new policy implementation date (December 18, 2017) or 0 if it is published prior to this date. Tweets published on the date of the policy activation are considered to occur after the policy is enacted and scored as a 1. The dependent variable is the sentiment score of a given tweet (−4 to 4).
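The coding rule for the primary predictor can be sketched as below. The function name is hypothetical, and the sketch assumes each tweet's publication date is already available as a calendar date in a single reference time zone, which the text does not specify.

```python
from datetime import date

# Effective date of the rule change, as stated in the text.
POLICY_DATE = date(2017, 12, 18)


def after_policy_enacted(tweet_date: date) -> int:
    """Return 1 for tweets on or after the policy date, otherwise 0."""
    return 1 if tweet_date >= POLICY_DATE else 0


print(after_policy_enacted(date(2017, 12, 17)))  # 0
print(after_policy_enacted(date(2017, 12, 18)))  # 1
```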

In addition to the main predictor, after policy enacted, further models also include several controls. To consider whether the length of a tweet makes a difference in the overall sentiment of the message, we include two controls. First, text character length is a continuous measure recording the number of characters in the tweet. Second, expected tweet length is a binary measure noting whether the total sum of characters in a tweet is between the minimum number of characters necessary to include the keyword (5 characters for tweets scraped with the term bi!ch and 6 characters for tweets scraped for the term n!!ger) and the total number allowed by Twitter. Tweets with messages containing between 5 or 6 and 280 characters were marked as 1.
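A minimal sketch of the two length controls follows. The dictionary keys and function name are illustrative, and using Python's `len` as the character count is an assumption, since Twitter's own counting rules may differ.

```python
# Minimum lengths come from the text: 5 characters for the gendered keyword,
# 6 for the racialized keyword. The key names are placeholders.
KEYWORD_MIN_CHARS = {"gendered_keyword": 5, "racialized_keyword": 6}
MAX_TWEET_CHARS = 280  # upper bound stated in the text


def length_controls(text: str, keyword: str) -> dict:
    """Build the continuous and binary length controls for one tweet."""
    n_chars = len(text)  # assumption: one code point counts as one character
    lower = KEYWORD_MIN_CHARS[keyword]
    return {
        "text_character_length": n_chars,
        "expected_tweet_length": int(lower <= n_chars <= MAX_TWEET_CHARS),
    }


print(length_controls("x" * 140, "gendered_keyword"))
```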

To control for the influence of the time of day on tweet sentiment, we incorporate a variable, hours since midnight, for the hour of the day that the tweet was published. Golder and Macy (2011) found that tweet sentiment tends to vary over the course of the day. As previous research suggests a peak in tweet activity during midday (Cornell 2013), we also include a squared version of the time variable, (hours since midnight)². Furthermore, because we collected the data over five major American holidays, we also add individual binary controls for whether tweets were published on a holiday (Thanksgiving, Christmas, New Year’s Eve, New Year’s Day, or Martin Luther King Jr. Day). In the models presented here, we include five variables, one for each holiday; tweets are marked 1 if the tweet is published on the respective holiday.
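The time-of-day and holiday controls could be constructed along the following lines. This is a sketch: the function and key names are hypothetical, fractional hours are an assumption, and the holiday dates are the calendar dates of those holidays within the collection window.

```python
from datetime import date, datetime

# Calendar dates of the five holidays in the November 2017 to January 2018 window.
HOLIDAYS = {
    "thanksgiving": date(2017, 11, 23),
    "christmas": date(2017, 12, 25),
    "new_years_eve": date(2017, 12, 31),
    "new_years_day": date(2018, 1, 1),
    "mlk_day": date(2018, 1, 15),
}


def time_controls(published: datetime) -> dict:
    """Return hours since midnight, its square, and five holiday dummies."""
    hours = published.hour + published.minute / 60 + published.second / 3600
    row = {
        "hours_since_midnight": hours,
        "hours_since_midnight_sq": hours ** 2,
    }
    for name, day in HOLIDAYS.items():
        row[name] = int(published.date() == day)
    return row


print(time_controls(datetime(2017, 12, 25, 13, 30)))
```

Including both the linear and squared terms lets the fitted relationship between time of day and sentiment bend rather than rise or fall at a constant rate.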

Finally, because of our interest in the possible network spread of deleterious tweets, we also include two variables: logged retweets, tracking the number of retweets, and logged likes, tracking the number of likes. We use a natural log transformation of the predictors because of the highly skewed distribution of both retweets and likes (i.e., the vast majority of tweets have no retweets or likes).
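Because most tweets have zero retweets and zero likes, a plain natural log is undefined for the bulk of the sample. The sketch below assumes the common workaround of adding 1 before taking the log; the text does not state how zeros were handled, so this choice is an assumption rather than the documented procedure.

```python
import math


def logged(count: int) -> float:
    """Natural log of (count + 1); the +1 offset is an assumption."""
    return math.log1p(count)


# Zero counts map to zero, and large counts are strongly compressed.
for n in (0, 1, 10, 1000):
    print(n, round(logged(n), 3))
```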

Descriptive Results

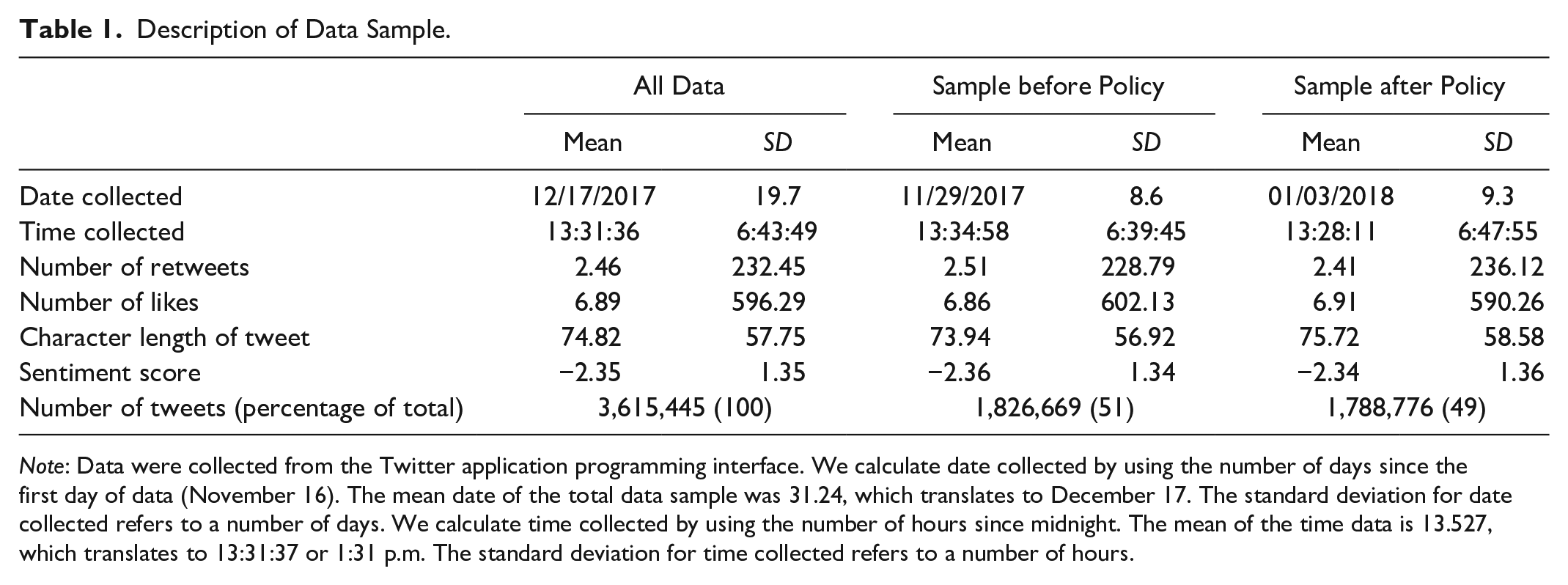

Our final sample is composed of just over 3.6 million tweets. The average tweet in the sample was published around 1:31 p.m., or just after midday (see Table 1). This matches previous research suggesting that tweets are most abundant around 1 p.m., after lunch or during afternoon work breaks (Cornell 2013). Within the sample, the average number of retweets was 2.46, and the average number of likes was 6.89. However, the modal numbers of retweets and likes were both zero. Thus, the vast majority of tweets were not further spread (retweeted) or approved (liked) by other users. Given that our sample consists of tweets containing racialized or gendered slurs, the average sentiment score of a tweet in this sample is negative, with a mean of −2.35. Notably, tweets on or around holidays (Thanksgiving, Christmas, New Year’s Eve, New Year’s Day, and Martin Luther King Jr. Day) had slightly less negative content on average. Tweets on those five days had an average score of −2.24.

Table 1. Description of Data Sample.

Note: Data were collected from the Twitter application programming interface. We calculate date collected by using the number of days since the first day of data (November 16). The mean date of the total data sample was 31.24, which translates to December 17. The standard deviation for date collected refers to a number of days. We calculate time collected by using the number of hours since midnight. The mean of the time data is 13.527, which translates to 13:31:37 or 1:31 p.m. The standard deviation for time collected refers to a number of hours.
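The two conversions described in the table note can be reproduced with standard date arithmetic. The function names below are illustrative; the inputs are the means quoted in the note.

```python
from datetime import date, datetime, timedelta

# Day 0 of the date variable, as stated in the table note.
FIRST_DAY = datetime(2017, 11, 16)


def days_to_date(days_since_first: float) -> date:
    """Convert days since the first day of data into a calendar date."""
    return (FIRST_DAY + timedelta(days=days_since_first)).date()


def hours_to_clock(hours_since_midnight: float) -> str:
    """Convert fractional hours since midnight into HH:MM:SS."""
    total_seconds = int(hours_since_midnight * 3600)
    hours, remainder = divmod(total_seconds, 3600)
    minutes, seconds = divmod(remainder, 60)
    return f"{hours:02d}:{minutes:02d}:{seconds:02d}"


print(days_to_date(31.24))     # 2017-12-17
print(hours_to_clock(13.527))  # 13:31:37
```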

Illustrations of Tweets

Next, we present illustrations of tweets that represent a range of sentiment scores in Table 2. For example, the first four rows of the table contain four tweets that receive the most extreme negative sentiment score of −4. One of these expresses hope that someone will kill “this dumb stupid bi!ch,” while another severely insults a woman’s looks: “The n!!ger girl is ugly as sh!t.” Other tweets are more neutral in sentiment, with scores close to 0, one of which contains contrasting emotions (e.g., laughing/crying emojis); the positive and negative emojis offset each other and result in an overall sentiment score that is relatively neutral. Finally, there are examples of tweets that are exceptions to the rule that slurs are always negative and in which the content of messages with the term bi!ch or n!!ger appears to be positive. One example of such an upbeat tweet is in the third to last set of tweets in Table 2, in which the original message uses the term n!!ger while greeting another user “Happy New Year”; this tweet’s score is 2.00. In another instance, a person is described as an “amazing bi!ch,” and the tweet receives a highly positive score of 3.63. Both tweets illustrate “resignification,” whereby people use and reclaim negative terms for use in more positive contexts.

Table 2. Examples of Twitter Messages and Their Scores.

Aggressive Tweets and Gender

To study online harassment and gender, we chose a popular, negative keyword, bi!ch (e.g., Felmlee et al. 2018) to scrape from the Twitter database. As noted earlier, this term is gendered in that the use of the term bi!ch often attacks feminine characteristics. Our final sample contains 3,540,669 tweets that mention this keyword.

In our first analysis, we highlight social networks of Twitter “conversations” that emanate from an original tweet that uses the term bi!ch in an insulting manner to target a particular woman. In one example (Figure 1, left visual), we examine the following tweet: “Bi!ch stop lying [Crying/Laughing Emoji].” This brief tweet accuses an anonymous female target of lying and labels her a “bi!ch.” Moreover, even without identifying the target of this attack, 10 others retweet or like the original aggressive post. In the visual figure, two network edges, or lines, represent users who both retweet and like the original post, and the other eight represent users who like the original tweet.

Figure 1. Network visualizations of Twitter conversations based on sexist language.

In the second example (Figure 1, right visual), we illustrate a highly negative Twitter conversation, based on the tweet “well you are a terrible fuc!!ng person so unfollow me you ugly a!s stale a!s moldy a!s little bi!ch.” In this message, the originator of the message uses multiple insulting terms to attack two individuals, culminating in calling each of them a “terrible” person and using curse words and affronts to amplify the abuse. The perpetrator of this post also asks the target to “unfollow” them on Twitter. Both the nastiness of the language in the tweet and the request to be “unfollowed” are noteworthy. The latter is relevant, because unfollowing someone effectively cuts off the person not only from the bully but also from the network of other followers. Another significant aspect of this case is the relatively large network of conversation that emanates from the original post. In total there are 215 individual users who participate in this network. Interestingly, 114 of these users (53 percent) are marked private, meaning that a casual observer can see that they retweeted or liked messages in the conversation but cannot view the message content or further details about these users. Both gender examples occurred before the policy change on abusive content by Twitter.
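The conversation networks described in this section can be represented as simple edge lists. The sketch below encodes the first example as a star network around the original tweet and recomputes the private-account share from the second example. User identifiers are hypothetical placeholders; only the counts come from the text.

```python
# Hypothetical node labels; only the counts are taken from the text.
ORIGINAL = "original_tweet"

# Edge list for the first example: (user, target tweet, actions taken).
edges = [(f"user_{i}", ORIGINAL, {"retweet", "like"}) for i in range(2)]
edges += [(f"user_{i}", ORIGINAL, {"like"}) for i in range(2, 10)]

both = sum(1 for _, _, actions in edges if actions == {"retweet", "like"})
like_only = sum(1 for _, _, actions in edges if actions == {"like"})

# Second example: percentage of the 215 participating accounts marked private.
private_share = round(100 * 114 / 215)

print(len(edges), both, like_only)  # 10 2 8
print(private_share)                # 53
```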

Aggressive Tweets Targeting Race

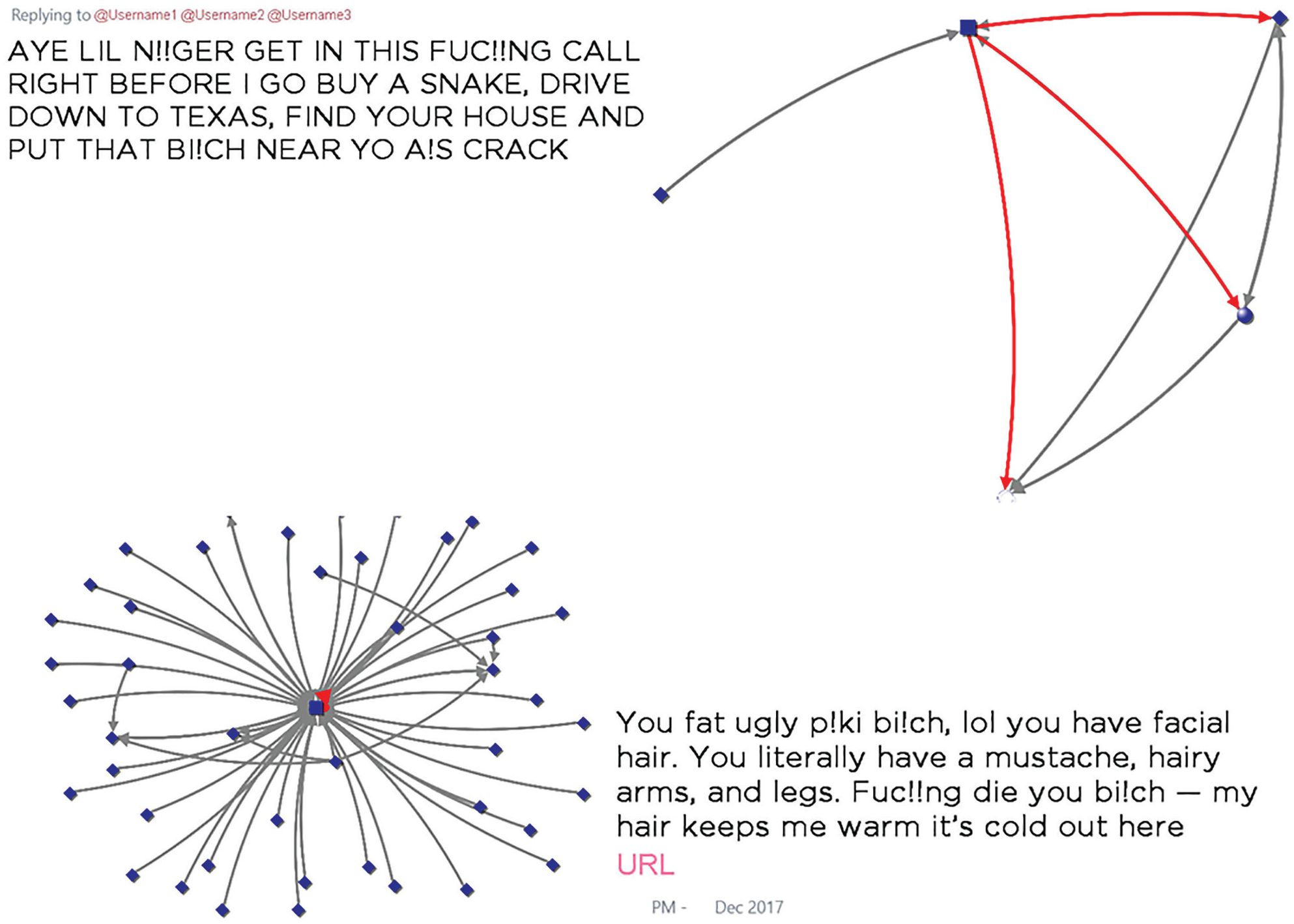

Next, we analyze messages targeting race by collecting tweets that include the term n!!ger in the tweet (74,776 tweets or 2.1 percent of the total sample). In the first example (Figure 2, top visual), we consider the following tweet: “AYE LIL N!!GER GET IN THIS FUC!!NG CALL NOW BEFORE I GO BUY A SNAKE, DRIVE DOWN TO TEXAS, FIND YOUR HOUSE AND PUT THAT BI!CH NEAR YO A!S CRACK.” In this instance, the person sending the message uses an offensive, racial slur (n!!ger) as well as curse words to make the point. The social network surrounding this message consists of five people who continue to abuse one another in subsequent tweets. Furthermore, in this relatively small conversation network, it appears that the Twitter users know one another, and therefore they are using insulting language not to affront strangers but to emphasize a point aggressively with acquaintances. Not all offensive messages target strangers.

Figure 2. Network visualizations of Twitter conversations based on racist and sexist language.

In the second example (Figure 2, bottom visual), we illustrate a message that contains the racist slur p!ki, a term used to attack individuals from Pakistan, Asia, or from other non–Western European backgrounds. The selected tweet we illustrate is “You fat ugly p!ki bi!ch, lol you have facial hair. You literally have a mustache, hairy arms, and legs. Fuc!!ng die you bi!ch . . . .” In the tweet, the original user is responding to an attack offsite and seems to be venting resentment. As can be seen in the figure, a total of 45 individuals like, retweet, or reply to the original user’s post, and all of them act in support of the message, despite its vitriolic content. Here, again, we see the extensive involvement of the social network in responses to online aggression. In these two instances of racial targeting, the former occurs before the policy change. However, the latter was published after the change, demonstrating that negative messages still can occur following the shift in organizational rules.

Role of Policy in Aggressive Tweets: Multivariate Analyses

Next, in a series of multivariate regression analyses, we formally address the question as to whether a shift in policy significantly affects the degree of sentiment negativity in our full sample of tweets. To do so, we compare sentiment scores before and after Twitter enacted new policies intended to stem abuse on their platform.

As can be seen in Table 3, rule changes on the part of Twitter are related significantly to message sentiment. Given the size of our sample, it is not surprising that we report coefficients that are highly statistically significant; more important, however, is that the coefficient for the policy shift is positive in sign. This is notable because it cuts against the expectation that reactance dynamics might motivate users to tweet even more negatively when they perceive Twitter as trying to control their behavior. For example, in the first of our three models (the “null model”), where the only predictor is after policy enacted, the main predictor variable is positive (β = .001, p < .001); this means that tweets after the date of the Twitter changes intended to reduce abuse are slightly more positive in sentiment than those published prior to that date.

Table 3. Three Models Predicting Tweet Score on the Basis of Characteristics of Tweet.

Note: AIC = Akaike information criterion; BIC = Bayesian information criterion. p < .001.

Next, in the “partial” model (Table 3, column 2), we include controls for the length of the text, the expected tweet length, the time of the tweet (i.e., hours since midnight and hours since midnight squared), and whether the date represents a holiday. As noted, coefficients for all of these control variables attained significance in the model and in expected directions. Longer tweets were more negative, and time since midnight had a positive but curvilinear effect on sentiment. Tweets on holidays tended to be more positive than those not on holidays. In separate analyses, we also estimate models with combined variables to consider the impact of all holidays together or in various combinations. The inclusion of assorted combined holiday variables does not change the results presented here (see Supplementary Table 1). After including additional controls, the coefficient for the effect of policy enacted remains statistically significant and positive in this more expanded model (β = .012, p < .001).
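As a rough illustration of how the predictors in the partial model might be assembled for a single tweet, the Python sketch below builds one row of a design matrix. The function name `design_row`, the field names, and the example dates are hypothetical and chosen only for illustration. The "expected tweet length" control is left out because its construction is not defined in this section.

```python
from datetime import date, datetime


def design_row(text, posted_at, policy_date, holidays):
    """Build illustrative predictors for one tweet (all names are hypothetical)."""
    # Hours since midnight, as a decimal (e.g., 06:30 -> 6.5).
    hours = posted_at.hour + posted_at.minute / 60.0
    return {
        "after_policy": 1 if posted_at >= policy_date else 0,
        "length": len(text),
        "hours": hours,
        # The squared term lets the time-of-day effect be curvilinear.
        "hours_sq": hours ** 2,
        "holiday": 1 if posted_at.date() in holidays else 0,
    }


# Placeholder dates, not the actual policy date or holidays in the study.
example = design_row(
    "an example tweet",
    datetime(2000, 6, 16, 6, 30),
    policy_date=datetime(2000, 6, 15),
    holidays={date(2000, 6, 16)},
)
```

Rows of this kind could then be passed to any OLS routine. Including both `hours` and `hours_sq` is what allows a model to recover a positive but curvilinear time-of-day pattern.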

Retweets and Likes

An important element of messages on Twitter is that people can like and retweet others’ tweets, thus helping spread Twitter content through the social media network. In the third regression model, the “full model” (Table 3, column 3), we therefore also investigate the role of retweets and likes in message sentiment by adding two variables to our previous “partial” model. Findings for the previous control variables continue to be similar to those in the partial model, with only small shifts in coefficient size. However, in the full model we see that both retweets and likes significantly influence message sentiment, but in opposite directions. The variable “logged retweets” relates negatively to tweet sentiment (β = –.068, p < .001), whereas “logged likes” has a positive relationship (β = .061, p < .001). Tweets that spawn retweets, in other words, are likely to be more negative than those without retweets. Those that gather likes, on the other hand, tend to be more positive. The effect of the policy change remains positive and virtually unchanged net of both variables (β = .011, p < .001).
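Because many tweets receive no retweets or likes at all, a logged count is commonly computed with an offset so that zero remains defined. The short Python sketch below uses log(1 + count). This offset is an assumption made for illustration, since the text does not state how zero counts were handled, and the function name `logged_count` is hypothetical.

```python
import math


def logged_count(count):
    """Return log(1 + count); the +1 offset is an assumed way to handle zeros."""
    if count < 0:
        raise ValueError("count must be non-negative")
    return math.log1p(count)
```

Under this transformation a tweet with no retweets maps to 0, and each additional retweet adds less to the logged value than the one before it.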

Gender and Race

We also perform further analyses to test whether gendered or racialized tweets exhibit divergent patterns with regard to the findings presented above. We begin by splitting the sample into two: one containing tweets scraped using the term bi!ch and one with tweets scraped using the term n!!ger. We then run the same analyses described above to test if there were any differences by sample. Although the overall findings remain the same—tweets are more positive following the policy change—there are two slight differences. First, tweets published on Martin Luther King Jr. Day containing the term n!!ger are significantly more likely to be positive than on other days, although this holiday effect does not occur for the overall sample or for tweets with the term bi!ch. Second, the retweet pattern detailed for the whole sample reverses for the smaller sample of tweets including the term n!!ger. Retweeted messages containing n!!ger were significantly more likely to be positive, rather than negative, in sentiment. According to additional investigation, this trend appeared to be due to an idiosyncratic, well-publicized event involving the N-word during the data collection period.

Following Individuals’ Tweets over Time

In supplementary analyses (see Supplementary Table 2), we also examine the degree to which individual user behavior is influenced by the policy change. We analyze this first by conducting fixed-effects regression models to control for individual variation. We use a sample of users who tweeted at least once both before and after the alteration in rules, thus allowing us to test a difference in behavior over this period. In this sample, there were 312,869 unique individuals who used the B-word at least once both before and after the change (20.15 percent of all individual users in the total data set of users who use the B-word) and a relatively small group of 4,142 unique users who used the N-word in this same pattern (9.49 percent of all tweets scraped containing the N-word). In the final models, individuals’ tweets after the policy remain significantly more positive than tweets before the policy (although in complex models with multiple interactions the positive effect is not always significant).
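The logic of the fixed-effects comparison can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not the models reported in Supplementary Table 2: it uses only the binary policy indicator, with no controls or interactions, and the function names and tuple layout are hypothetical.

```python
from collections import defaultdict


def users_in_both_periods(tweets):
    """Return users with at least one tweet before (0) and after (1) the policy.

    `tweets` is a list of (user_id, after_policy, score) tuples.
    """
    periods = defaultdict(set)
    for user, after, _ in tweets:
        periods[user].add(after)
    return {user for user, seen in periods.items() if seen == {0, 1}}


def within_user_effect(tweets):
    """Within (fixed-effects) estimate of the policy coefficient.

    Scores and the policy indicator are demeaned within each user, so stable
    differences between users cannot drive the estimate.
    """
    keep = users_in_both_periods(tweets)
    if not keep:
        raise ValueError("no user tweeted in both periods")
    by_user = defaultdict(list)
    for user, after, score in tweets:
        if user in keep:
            by_user[user].append((after, score))
    num = den = 0.0
    for rows in by_user.values():
        mean_x = sum(a for a, _ in rows) / len(rows)
        mean_y = sum(s for _, s in rows) / len(rows)
        for a, s in rows:
            num += (a - mean_x) * (s - mean_y)
            den += (a - mean_x) ** 2
    return num / den
```

Users who tweeted in only one period contribute no within-user variation in the policy indicator, which is why the sketch restricts the sample before estimating.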

Additionally, we conduct supplementary OLS regression models (see Supplementary Table 3) to control for individual variation. In this case, we include a binary flag for whether a user tweeted both before and after the policy and a count variable for the number of tweets a user had over the whole period. In these models, the coefficient for the policy change continues to be significant and positive. The total number of tweets a user sent also is associated with slightly more positive sentiment.

Network Sentiment Change over Time

To further illustrate the influence of Twitter’s policy change on the overall sentiment of tweets, we visualize a subsample of tweets before and after the change (see Figure 3). We take a sample of tweets (those including both the term n!!ger and another user’s account in their original tweet) to specifically highlight how the anti-abuse rules may influence the practice of directly messaging other users. Within the visualization, we lock users in place (i.e., dots in Figure 3) before and after the policy to note how users tweeted using the racialized slur during these two periods. To emphasize the change in overall sentiment, we color the tweets as red, gray, or blue lines depending on their sentiment scores. Negative tweets, which received scores between −4 and −1, are depicted in red. Neutral tweets, which received scores between −1 and 1, are depicted in gray. Positive tweets, which received scores between 1 and 4, are depicted in blue.
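The color coding described above can be expressed as a small Python function. Because the stated ranges share their endpoints, the sketch assumes that scores of exactly −1 and 1 are treated as neutral. That boundary rule and the function name `sentiment_color` are assumptions made for illustration.

```python
def sentiment_color(score):
    """Map a sentiment score in [-4, 4] to a line color for the network plot."""
    if not -4 <= score <= 4:
        raise ValueError("score must lie between -4 and 4")
    if score < -1:
        return "red"   # negative tweet
    if score > 1:
        return "blue"  # positive tweet
    return "gray"      # neutral tweet (assumed to include exactly -1 and 1)
```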

Figure 3. Change in Twitter conversations including race-based language.

Note that Figure 3 contains two large hubs (users who receive a large number of direct mentions from other users), one on either side of the network. These hubs illustrate that many users directly mentioned many of the same Twitter accounts in their posts using n!!ger. In addition, we see a reduced concentration of negative tweets (red lines) in the center of Figure 3, which is apparent when comparing the right visual (tweets after the policy) with the left (tweets before the policy). The reduction in negative tweets subsequent to the change suggests that Twitter’s anti-abuse policy has some visible positive effects. Given that this finding represents only a small fraction of the overall data, it is notable that the implementation of new rules can influence the behavior of even a limited portion of tweets.

An additional important finding visible in Figure 3 is that despite an overall positive influence of the anti-abuse policy, potentially damaging messages still spread in clusters. These results reinforce the finding from the regression analyses of the overall sample, in which tweets with more retweets are likely to be more negative. In other words, tweets containing slurs that spread through retweet threads are more negative than tweets that are not retweeted.

Other Robustness Tests

In addition to the models presented here using OLS regression on the total sample, we perform further analyses to test the robustness of these results. On the basis of the previous findings, we first explore the role of tweets published on holidays. In supplementary analyses, we drop all tweets published on holidays, and the overall findings remain the same, with the positive policy effects slightly larger in size (see Supplementary Table 4). We also try to control for bots and find that even when accounting for the presence of demarcated bots in the data set (e.g., accounts containing versions of the term bot), the positive finding remains relatively unaltered (see Supplementary Table 3). Moreover, we compare our results from this modeling approach with several others, including ordered logistic regression and partial proportional odds logistic regression, using both the Akaike information criterion and mean squared error of fitted values. Both the significance and the direction of the effects in the findings detailed above remain the same when using these other modeling strategies, but the overall fit is not as good as that for the analyses presented herein (see Supplementary Tables 5 and 6).
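A name-based bot flag of the kind mentioned above might look like the following Python sketch. The matching rule (a case-insensitive search for the substring "bot") and the function name are assumptions, since the text says only that demarcated bots were identified by versions of the term in the account.

```python
def looks_like_bot(screen_name):
    """Flag accounts whose name contains 'bot' (assumed, case-insensitive rule)."""
    return "bot" in screen_name.lower()


# Hypothetical account names used only to illustrate the rule.
accounts = ["WeatherBot_42", "jane_doe", "abbott_fan"]
flagged = [name for name in accounts if looks_like_bot(name)]
```

A rule this simple also flags names such as "abbott_fan", so it is better suited to creating a control variable or a sensitivity check than to identifying automated accounts definitively.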

As a final robustness check to ensure that the policy effect was caused by the policy intervention rather than a spurious variable, we performed a series of analyses in which we randomized the date of each tweet (see Supplementary Table 7). For each model, we estimated the new effect of the policy change on the shuffled data and repeated this test 1,000 times. We then compared the replicated models with our empirical model and found no instances in which a shuffled data set reproduced the observed policy effect. From these analyses, we find that it is exceedingly unlikely that the policy effect we observed was a product of chance, as none of the permuted data sets produced a coefficient as large as the one in the original, unshuffled data.
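The shuffling procedure can be illustrated with a simplified permutation test in Python. As an assumption made for brevity, the sketch uses the null model only, where the OLS coefficient on a binary policy indicator equals the difference between the mean score after and the mean score before the policy. Shuffling the indicator stands in for randomizing each tweet's date. The function names and the fixed seed are hypothetical.

```python
import random


def mean(values):
    return sum(values) / len(values)


def policy_effect(scores, after_flags):
    """Mean score after the policy minus mean score before it."""
    after = [s for s, a in zip(scores, after_flags) if a]
    before = [s for s, a in zip(scores, after_flags) if not a]
    return mean(after) - mean(before)


def permutation_test(scores, after_flags, n_shuffles=1000, seed=0):
    """Count shuffles whose effect is at least as large as the observed one."""
    rng = random.Random(seed)
    observed = policy_effect(scores, after_flags)
    shuffled = list(after_flags)
    as_large = 0
    for _ in range(n_shuffles):
        # Shuffling keeps the number of "before" and "after" tweets fixed
        # but breaks any link between timing and sentiment.
        rng.shuffle(shuffled)
        if policy_effect(scores, shuffled) >= observed:
            as_large += 1
    return observed, as_large
```

If very few or none of the shuffled data sets produce an effect as large as the observed one, the observed effect is unlikely to be a product of chance alone, which is the reasoning applied in the check described above.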

Discussion

In sum, using a sample of tweets with a common gendered slur and/or a racist insult, we see that a change in Twitter policy positively influences the sentiment score of a sample of more than 3.6 million tweets. The modest positive effect remains even after we incorporate a range of control variables in our models to measure the influence of tweet length, time of the post, holidays, retweets, and likes. Our finding is robust to an analysis in which we investigate the effect of days close in time to the policy change; to a model that compares sentiment in tweets posted by the same set of individuals prior to, and subsequent to, the shift; and to a comparison of 1,000 models in which we randomly shuffled the date of each tweet.

Our case studies, furthermore, reveal the way in which retweets and likes of aggressive messages can lead an initial nasty post to spread beyond the original target to involve others in a virtual online social network. The potential for harm arising from a single tweet, in other words, is magnified because of the networking environment inherent in social media. Note, too, that messages that are retweeted tend to be more negative than those that do not get sent forward in a retweet. This result means that malicious tweets are more likely to spread beyond the initial post and in this manner may have particularly damaging effects.

No research project is without limitations. In this instance, a study on the influence of an organizational rule change on negative messages using Twitter data must be attentive to issues relating to the sampling of data, the temporal window before and after the rule change, selection of key terms, measurement of sentiment, handling data from bots, treatment of special days (holidays), and alternative analytical strategies. Throughout our work we discuss many of these challenges and report on specific robustness checks that might influence our results. Nevertheless, our study addresses only a limited portion of what represents a wider, societal problem. Additional research is necessary to broaden the scope to examine, for example, other manifestations of electronic harassment, alternative policies, and possible long-term effects.

Our main objective was to identify whether Twitter’s policy change had an effect; however, our data provide less leverage on the question of how this effect occurred. One possibility is that the rule change precipitated a shift in norms that led users to avoid negative or aggressive tweeting. However, it is also possible that the policy change led to more negative tweets’ simply being removed from the platform by fiat, thereby making the average sentiment more positive. Yet another possibility is that users found ways to effectively “code” their tweets targeting women and African Americans in ways that our sentiment classifier might not pick up. Future work with different data will be needed to more fully pursue these questions of causal mechanism.

In conclusion, this research applies a novel approach to studying the dynamics of a version of online aggression. Twitter’s rule changes presented a timely chance to investigate whether organizational “anti-abuse” policies can stem hateful speech on social media. The opportunity presented by this policy change enabled us to test several social scientific arguments regarding the influence of organizational efforts to sanction adverse behavior, and our results have implications for these theories. For example, given that negative sentiment decreases, albeit slightly, following the new rules, this suggests that organizational interventions may be able to play a role in curbing undesirable actions on the part of platform users. Perhaps of most note, we do not uncover significant increases in negativity that could reflect defiant reactions to corporate rules seen as restricting online rights. Yet we should emphasize that instances of offensive messages remain after rule changes, as shown in our case studies. Although not entirely immune to corporate policy, online aggression remains a sticky societal problem and one lacking simple solutions.

On a broad level, our findings call further attention to the serious social problem inherent in online harassment and aggression within social media. Our study represents one of the first large-scale investigations to track problematic Twitter messages containing slurs that target individuals on the basis of gender or race. Our research confirms, in an independent investigation, that certain organizational anti-abuse practices need not provoke users of sexist and/or racist terminology into reacting with heightened hostility. Such policies, instead, may begin to limit the negativity of these all too common, distressing online interactions.

Supplemental Material

Supplemental material, Cyber_Aggression_and_Policy-Supplemental_Tables-07_09_2020 for Can Social Media Anti-abuse Policies Work? A Quasi-experimental Study of Online Sexist and Racist Slurs by Diane Felmlee, Daniel DellaPosta, Paulina d. C. Inara Rodis and Stephen A. Matthews in Socius

Footnotes

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Support for this research was provided by the National Science Foundation under grant 1818497. We also acknowledge administrative assistance from the Population Research Institute at Penn State University, which is supported by an infrastructure grant from the Eunice Kennedy Shriver National Institute of Child Health and Human Development (P2CHD041025).
