Abstract

The primary tension in public discourse about the U.S. government’s response to the coronavirus pandemic has been President Trump’s disagreement with scientists. The authors analyze a national survey of 1,593 Americans to examine which social groups trust scientists’ ability to understand the novel coronavirus (COVID-19) and which agree that COVID-19 scientists share their values. Republicans and independents are less trusting than Democrats on both measures, as are African Americans. The authors find conservative Protestants and Catholics to be skeptical of scientists’ knowledge but not their values. Working-class men and those who live outside cities believe in scientists’ knowledge but do not think scientists share their values. There is little evidence for a direct effect of President Trump’s criticism of scientists. The authors discuss the pragmatic implications for scientists trying to remain influential in COVID-19 policy.

In spring 2020, much of life came to a standstill as various levels of lockdown swept across the globe to limit the spread of the novel coronavirus (COVID-19). Recommendations for restrictive measures originated from medical research scientists such as infectious disease specialists and epidemiologists, who regularly advised governments and the public about what to do to curb the pandemic. Recommendations covered physical distancing restrictions, which sorts of establishments would need to close, when they might reopen, who should stay at home, the possibility of a vaccine, and much else that affected people’s lives and livelihoods.

In the United States, restrictive measures developed in tandem with major tensions, most notably at the national level, between scientists’ recommendations and those of largely Republican politicians who wanted policy that lessened physical restrictions with a goal toward limiting the economic repercussions of the pandemic (Mascaro 2020). Republican politicians and those representing business interests made their argument by either discounting the fact claims of scientists or by implicitly emphasizing values different from those of scientists, particularly focusing on the importance of retaining jobs. The Republican side was led by President Trump, who regularly contradicted the views of his scientific advisers (Friedman and Plumer 2020). The most famous of these scientists was Anthony Fauci, a member of the federal Coronavirus Task Force, who regularly appeared in the media.

Trump could be reflecting or causing public opinion. In this article, we review existing social science research that predicts which groups would and would not trust scientists during the COVID-19 pandemic. Importantly, we distinguish between two types of trust: trust that scientists’ knowledge claims are generally correct and trust that scientists’ values reflect the public’s values. On the basis of prior research, there are four general groups that would be expected not to trust pandemic scientists: those identifying with the Republican party, members of demographic groups such as men with lower levels of education who are attracted to populist arguments about knowledge and values, African Americans who have a history of maltreatment by medical research scientists, and members of particular religious groups who have a history of value conflict with scientists.

To address our questions, we use a national survey of 1,593 adult residents of the United States administered during the pandemic. We find that response to COVID-19 scientists is highly politicized. However, it is not Republicans who are distinct from independents and Democrats in their skepticism of scientists, but rather it is Democrats who are distinct from independents and Republicans in their support. We also find that the working-class nonurban men who are most responsive to President Trump are not different from others in their view of scientists’ knowledge. However, this group is much less likely than others to think scientists share their values. As expected, African Americans are less likely than whites to exhibit either type of trust in COVID-19 scientists. Contrary to the religion-and-science literature, we find that conservative Protestants and Catholics do not trust the knowledge of scientists but are not different from the nonreligious in their belief that scientists share their values. We end by discussing the implications of these findings for how scientists should communicate with the public.

Trust as Both Knowledge and Values

The religion-and-science literature, as well as the literature on populism that motivates some of our analyses, both make a distinction between knowledge and values (Evans 2018; Norris and Inglehart 2019). Therefore, we evaluate two aspects of trusting scientists: trusting that they understand the facts about COVID-19 and trusting that they share the public’s values when making recommendations or decisions.

Scientists involved with responding to the coronavirus pandemic make knowledge claims about how viruses spread, what actions result in mitigation, which treatments may work, what percentage of the infected may need certain treatments, and so on. It is not surprising that scientists make such knowledge claims; that is, after all, their main social role. It is perhaps less obvious, however, that scientists also make implicit value claims in the pandemic. They advocate for saving human lives, even at the cost of economic damage, although this value usually goes unstated. To those who agree with this value statement, it may not seem that different from a scientific statement. But to those who do not share in this value judgment, such as people who prefer to reduce measures that harm the economy, it is very much independent of fact. Scientists in general are perceived by many groups in the United States as forwarding a range of values beyond the facts at hand (Evans 2018, chapter 7).

This knowledge/value distinction has practical importance for society’s response to the pandemic. For those who value scientific expertise, a rejection of scientists’ ability to generate legitimate knowledge claims is very problematic, as no one other than scientists can provide the knowledge needed to combat a pandemic. Scientists could also be excluded from influencing decisions because people do not like their values. However, scientists are not experts in values, and there are others who can take on that role, such as policy makers. Although science is the only profession that has the legitimacy to define the facts, others (such as governors) can use scientists’ facts to define the values that follow.

Expectations from the Literature

There has been one small study of people’s views of scientific expertise on COVID-19 (McFadden et al. 2020). That survey, conducted before the virus had spread extensively in the United States, found that respondents preferred that the directors of federal scientific agencies lead the COVID-19 response over politicians, but the investigators did not try to determine why that was the case or break down this preference by the characteristics of the respondents. Given a lack of research in this specific domain, we turn to prior literatures that would predict what groups would have less trust in scientists researching the pandemic.

Party Identification and the Politicization of Science

In the past decade, there has been extensive research on how various indicators of support of science have become increasingly structured by political identity. In general, studies have found that political conservatives and/or those who identify with the Republican party are more skeptical of science (Gauchat 2012, 2015). For example, Gauchat (2015) found that the political ideology difference is explained by the scientific sophistication and intellectual engagement of respondents. Another study showed that the majority of the overall effect of political ideology on skepticism about the moral authority of science is mediated through beliefs about Christian nationalism (Baker, Perry and Whitehead forthcoming).

Besides the association of certain types of people with the Republican Party, and their subsequent views of science, the Trump era and the COVID-19 issue may involve a different mechanism. Although political polarization has been under way for decades, Trump has further accelerated this phenomenon. For every controversy of the Trump presidency, those who identify as Republicans overwhelmingly see whatever Trump has done in a favorable light, and Democrats see the opposite. For example, Trump wished to “open up” the economy, and Republicans took up this charge (Rucker et al. 2020). Political communication is polarized, so Republicans generally see the perspective of other Republicans, like President Trump (Baum and Groeling 2008).

This suggests a relatively simple direct communication mechanism that explains Republican distrust of scientists who study COVID-19, which is that Trump has signaled that he does not believe the knowledge claims of scientists, and Republicans and the associated media sphere are then following these views (Friedman and Plumer 2020). Because he explicitly bases his policy on his skepticism of the science, Trump does not explicitly say that he puts a different value on saving lives than do the scientists, so the direct communication mechanism would be operative only for knowledge. Of course, following the logic of political polarization, Democrats would then hear those disagreeing with Trump, who are medical research scientists such as Anthony Fauci, and believe what those scientists are saying.

Many of the existing studies of politicization focus on political ideology (Gauchat 2011, 2012; Noy and O’Brien 2016; O’Brien and Noy 2015). Those who examine party identification typically use a continuous measure of party identification from strong Democrats on one end, with independents in the middle, to strong Republicans on the other end (McCright and Dunlap 2011; O’Brien and Noy 2015). In studies that separate out Democrats, independents, and Republicans rather than placing them on a spectrum, there is a common finding that is rarely dwelled upon by the authors, which is that Republicans and independents have similar-sized effects, and it is Democrats who are quite different (Evans and Feng 2013:381; Jelen and Lockett 2014:5). Relatedly, Gauchat (2012:176) found that ideological moderates and conservatives were both less trusting than liberals.

If the Trump direct communication mechanism is occurring, the effect size for independents would not be between that of Democrats and Republicans, because a close adherent of Trump’s views would identify as a Republican and not an independent. A direct Trump effect would result in Republicans’ being less supportive than independents. If both are distinct from Democrats, this suggests a Fauci communication mechanism, in which Democrats are unusually trusting of scientists.

An unpublished COVID-19 survey study (Lazer et al. 2020) supports this mechanism. It included the survey question “How much do you trust the following people and organizations to do the right thing to best handle the current coronavirus (COVID-19) outbreak?” In bivariate analysis, it found that Republicans and independents had similar levels of trust in the Centers for Disease Control and Prevention and “scientists and researchers,” and Democrats had much more trust (pp. 152, 163). The authors did not examine whether the lack of trust was in facts or values.

Therefore, if we find that Republicans are less trusting than both independents and Democrats, we can assume a Trump direct communication mechanism. If Republicans and independents are the same, and it is Democrats who are different, we can assume either that Democrats are following scientists such as Fauci or that the mechanisms identified in the existing literature on party identification are all that exist for COVID-19.

Social Class and Gender

Social class and gender are important for analysis of trust in scientists with respect to COVID-19 because of the politicization of the COVID-19 response and Trump’s populist appeal to a particular sociodemographic group. Populists claim “that they speak for the ‘silent majority’ of ‘ordinary, decent people,’ whose interests and opinions are (they claim) regularly overridden by arrogant elites, corrupt politicians and strident minorities” (Canovan 1999:5). Populists can be from the political right or left, and populism is not a set of policy positions but rather is opposition to whatever the elites in a particular society hold dear. In the contemporary United States, the elites include scientists, and there is an emerging literature on populism and science (Mede and Schäfer 2020).

Populism challenges both the facts and values of scientists. In the words of one theorist of populism, “populism challenges not only established power-holders but also elite values” (Canovan 1999:3). “The claim is not just that members of the establishment are arrogant in their judgments, mistaken in their decisions, and blundering in their actions, but rather that they are morally wrong in their core values,” wrote two other analysts (Norris and Inglehart 2019:4).

The existing literature on populism would suggest a number of groups predisposed to populist opposition to scientists during the U.S. COVID-19 crisis. Populism appeals to those who feel disenfranchised and looked down upon by elites, and in the contemporary American context, this tends to be white, working-class, nonmetropolitan people, particularly men (Norris and Inglehart 2019, chapter 10). This is also a description of Trump’s “base,” which would be following his words closely.

Studies of science and class and gender conducted before Trump began explicit populist appeals have a limited set of measures and/or show weak and/or inconsistent effects. Most studies show that those with more education support science more than those with less education, although this is usually interpreted as a result of familiarity with science, not class position (Bak 2001; Evans 2011; Gauchat 2012). Moreover, education is not always a significant predictor (Baker et al. forthcoming; Evans 2013; Evans and Feng 2013).

Family income is not a strong predictor of science views, and is not significant in many survey studies (Evans 2013; Evans and Feng 2013; Gauchat 2015, 2017). In others, the effect is positive or negative depending on which aspect of science is under investigation (Baker et al. forthcoming). However, with 30,000 cases, Gauchat (2012) did find that those with lower incomes show lower levels of confidence in those who lead institutional science.

Gauchat (2012) found that men are more confident in science, but other studies demonstrated no gender effect (Baker et al. forthcoming; Gauchat 2015; Johnson, Scheitle, and Ecklund 2015). Yet other studies show women more confident or supportive of science (Evans 2013; Evans and Feng 2013). The influence of gender depends on which aspect of science is under investigation and the covariates in the model.

Race and Ethnicity

Although it is whites in the United States who are populists, and thus are less trusting of scientists, on issues of medical research African Americans may be even less trusting of scientists than whites. In one summary, “there is ample evidence that patient mistrust toward the American medical system is to some extent associated with communal and individual experiences with racism” (Sullivan 2020:18). African Americans have been used for experiments of medical science against their will dating back to the antebellum period, with the infamous mid-twentieth-century Tuskegee experiment being only a recent example (Gamble 1997). Moreover, racial minorities in general may not trust medical science and medical care, as it consistently limits their access (Williams and Mohammed 2009). Therefore, we would expect African Americans to have less trust than whites. Cross-tabulations from the study by Lazer et al. (2020:161) suggest that African Americans are slightly less trusting of scientists and researchers than are whites.

Religion

In the past 15 years, a very large literature on the relationship between religion and science has emerged (Ecklund and Scheitle 2018; Evans and Evans 2008; Noy and O’Brien 2016; O’Brien and Noy 2015). The traditional academic view had been that religion, or at least Western Christianity, is in conflict with science over ways of knowing the natural world. The general claim was that the religious look to religious texts for claims about nature, and scientists would use their senses and reason to determine facts about the world. The current sociological conclusion is that although this generalization may or may not have been true before the mid-twentieth century, it is not so today (Evans 2018).

Of the largest Christian traditions in the United States, there is no modern history of knowledge conflict between Catholicism or mainline Protestantism and science. Because Catholicism and mainline Protestantism have mechanisms for synthesizing science and theology, any contemporary knowledge conflict with science would only be with white evangelicalism or fundamentalism (Evans 2018) (groups we henceforth label white conservative Protestants; Woodberry and Smith 1998). This conflict is over only a few narrow fact claims for which there are alternative claims in conservative Protestant biblical exegesis, mostly concerning human origins. However, although conservative Protestantism has the intellectual capacity to oppose a particular scientific claim, there are not alternative conservative Protestant claims about virology and epidemiology, so we would not expect this group to be less trusting of scientists’ knowledge.

There has been much more extensive conflict between religion and science over values. Religious communities do not see science as value free but rather as advocating for certain values. Although for Catholics there are a few isolated value conflicts over issues such as embryonic stem cell research and human genetic modification (Evans 2010), this would not be expected to apply to the science of pandemics. On the other hand, conservative Protestants have a more than 100-year history of rejecting the values they see as being taught by scientists through claims about human evolution, such as their observation that Darwinism was used to promote eugenics. Conservative Protestants extrapolate from these conflicts to see value conflict with scientists in any arena of science (Evans 2018:77–84, 128, 140). If a conservative Protestant response to more symbolic issues such as human evolution also applies to viral disease, we would then expect conservative Protestants to be more likely to think that the values of scientists will be inconsistent with their own in decisions and recommendations about COVID-19. On the other hand, if respondents are not simply reacting to the term scientist but perceive that the values expressed by the scientists concern saving lives, this would be perceived as consistent with the teachings in the Christian tradition.

The polarized Trump era has potentially upended these traditional explanations from the literature. Like the expectations about political party identification, whereby certain people are following (or opposing) the messages of Trump, members of certain religious traditions may be directly following Trump’s views of scientists’ ability to generate knowledge, overpowering the more subtle influence of their traditions identified in the literature.

The most prominent group of Trump supporters is white conservative Protestants, and there is a large literature dedicated to explaining their support (Gorski 2017; Margolis 2020; Marti 2019). Less remarked upon, but equally transgressive to historical patterns, is that white Catholics also disproportionately voted for Trump, as indicated by his larger margin among Catholic voters compared with previous Republican presidential nominees (Martinez and Smith 2016; Rozell 2018:286). Therefore, although generalized theories of religious reaction to scientific knowledge claims would not predict conservative Protestant or Catholic opposition to COVID-19 scientists, the fact that the president whom they earnestly support is the one contradicting scientists’ claims may result in their not believing in these scientists’ abilities.

Methods

Data Collection

We surveyed a national sample of U.S. adults about their experiences with COVID-19 from May 4 to 8, 2020. We contracted with the online survey firm Cint to reach respondents who could access our survey on the Qualtrics platform using either a computer or a mobile device. Cint is an opt-in poll, and research in the past decade has shown “few or no significant differences between traditional modes [of survey administration] and opt-in online survey approaches” (Ansolabehere and Schaffner 2018:89). We set quotas for age, gender, education, and region to reflect U.S. census figures. Cint’s respondent pool includes more than 15 million people gathered through a double opt-in procedure. Potential respondents are initially contacted through telephone, face-to-face interactions, e-mail, social media, and banner ads. After potential participants fill out a form, they receive an e-mail that requires logging into their Cint accounts to become part of the panel. Cint then e-mails potential respondents about studies and compensates them with a small remuneration for their participation. Cint-generated samples have been used for other social science research (e.g., Hunsaker, Hargittai, and Piper 2020).

A few minutes into the survey, we asked an elaborate attention-verification question that screened out people who did not answer it correctly (this was a slight modification of the color question by Berinsky et al. 2016:22). We have valid data on 1,593 adult respondents. At the start of data collection, there were 1,172,921 confirmed cases of COVID-19 in the United States and 62,593 deaths, and an average of 1,936 deaths on each of those days (Wikipedia 2020). The New York metropolitan area was, at the time of the survey, the epicenter of the U.S. outbreak. There were cases in all 50 states (New York Times 2020). The public discourse while the survey was in the field concerned states’ “reopening” their economies in contradiction to warnings from scientists (Roy 2020).

Measures: Dependent Variables

To measure if a respondent trusts the fact claims of scientists, the survey asked, “On a scale of 1-5 where 1 means ‘Very well’ and 5 means ‘Not at all,’ how well do the following groups understand the spread of Coronavirus (COVID-19)?” Of the six groups, one was “Scientists who study pandemics.” For all such variables in this article, higher values are associated with more of the quality in question, so in this case, a respondent received a 1 if he or she thought scientists understood “not at all” and a 5 if he or she thought they understood “very well.” To measure value conflict with scientists, our survey asked, “If scientists who study pandemics have to decide life and death in the Coronavirus pandemic, the values they use will be consistent with mine.” Respondents were given the choice of five responses ranging from “strongly disagree” (1) to “strongly agree” (5).
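The reverse coding of the knowledge item described above can be illustrated with a minimal sketch. It is not the authors' code; the function name `reverse_code` and the list of raw answers are hypothetical and chosen only for illustration.

```python
def reverse_code(raw, low=1, high=5):
    """Flip a response on a low..high scale so that higher means more trust.

    On the survey item, 1 meant "Very well" and 5 meant "Not at all";
    after recoding, 5 means "very well" and 1 means "not at all".
    """
    if not low <= raw <= high:
        raise ValueError(f"response {raw} is outside the {low}-{high} scale")
    return low + high - raw


# Hypothetical raw answers as they would appear on the questionnaire.
raw_answers = [1, 2, 3, 4, 5]
recoded = [reverse_code(r) for r in raw_answers]
```

The values item needs no such flip, because its response options already run from "strongly disagree" (1) to "strongly agree" (5).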

Measures: Independent Variables

Party Identification

To measure party identification, we used the American National Election Studies (2020) question “Generally speaking, do you usually think of yourself as a Republican, a Democrat, an Independent, or what?” We created dummy variables for each of these three categories, and Democrat was the reference group in the models.
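A minimal sketch of dummy coding with a reference group, as described above, follows. The function name and dictionary keys are hypothetical. The text does not say how "or what" answers were handled, so the sketch simply rejects them, which is an assumption.

```python
PARTY_CATEGORIES = ("Democrat", "Independent", "Republican")
REFERENCE_GROUP = "Democrat"  # omitted from the dummies, as in the models


def party_dummies(party):
    """Return 0/1 indicators for every category except the reference group."""
    if party not in PARTY_CATEGORIES:
        # Assumption: other answers are not modeled in this sketch.
        raise ValueError(f"unhandled party answer: {party!r}")
    return {
        category.lower(): int(party == category)
        for category in PARTY_CATEGORIES
        if category != REFERENCE_GROUP
    }
```

A Democrat receives zeros on both indicators, so each coefficient in a model is read as a contrast with Democrats. The same pattern applies to the other sets of dummy variables in this section, each with its own reference group.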

Social Class

Given the mixed findings in the existing literature on the effect of social class on views of science, we fit our operationalization to our particular analytic focus on the possible impact of populism. For contemporary American populism, gender and class are intertwined, as it is not men or the working class per se that are thought to be populist, but working-class men (Norris and Inglehart 2019, chapter 10). Therefore, we created dummy variables for lower income men (base in the models), lower income women, higher income men, and higher income women. For gender, we asked, “Are you male, female or other?” (Two respondents who selected other were excluded from further analyses.) We also asked for total household income before taxes, with 13 choices ranging from less than $10,000 to $250,000 or more. Recoding each response to the midpoint of the category, the bottom 52 percent of the sample (cut at $45,000 or less) were placed into the respective lower income dummies. Although many analysts would include race in the variables above, we needed to keep the race variables distinct to analyze the effect of being African American.
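The combined gender-by-income grouping can be sketched as follows. This is an illustration under stated assumptions, not the authors' procedure: the exact boundaries of the 13 income brackets are not reported, so the bracket in the usage lines is hypothetical, as are the function names and group labels.

```python
LOWER_INCOME_CUTOFF = 45_000  # "cut at $45,000 or less", per the text


def bracket_midpoint(lower, upper):
    """Midpoint of an income bracket (bracket bounds are hypothetical here)."""
    return (lower + upper) / 2


def class_gender_group(gender, income_midpoint):
    """Assign one of the four gender-by-income groups; None if excluded."""
    if gender not in ("male", "female"):
        return None  # respondents selecting "other" were excluded
    level = "lower" if income_midpoint <= LOWER_INCOME_CUTOFF else "higher"
    noun = "men" if gender == "male" else "women"
    return f"{level} income {noun}"


# Hypothetical bracket of $40,000 to $49,999 for a male respondent.
example = class_gender_group("male", bracket_midpoint(40_000, 49_999))
```

In the models, "lower income men" would then serve as the omitted base category.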

Education is a critical social class variable that has largely been interpreted as a proxy for exposure to science. Having only a high school education or less could also be a proxy for working-class position. We asked about educational attainment using six categories and created three dummy variables: high school education or less, some college, and college degree or more, with high school or less as the base in the models. Finally, populist rejection of expertise, as well as the Trump base, is thought to be centered in rural and small-town areas. A question asked, “How would you describe the type of community you live in?” with the choices being “a big city,” “the suburbs or outskirts of a big city,” “a town or a small city,” and “a rural area.” We created dummy variables for each of these, with “big city” as the reference group.

Race and Ethnicity

We asked respondents separately about their ethnicity and race, as is done on the U.S. census forms. First, we asked, “Are you of Hispanic or Latino descent?” with “yes” and “no” answer options. Second, we asked, “Please check one or more categories below to indicate what race or races you consider yourself to be,” with the following answer options: “White,” “Black/African American,” “Asian,” “American Indian or Alaska Native,” “Native Hawaiian or Pacific Islander,” and “Other, please specify.” When possible, we recategorized those who chose “other” on the basis of the information they provided (e.g., 27 of the 45 indicated Hispanic or Latinx origin). We recoded race and ethnicity into mutually exclusive categories so that if a person indicated two races, he or she received the value of the one associated with the lower social status. We thus have dummy variables for white, African American, Hispanic, Asian American, and Native American (a summary of the two Native questions). We use white as the reference group.

Religion

In the United States, religiosity (in contrast to identity or belief) is often measured by the amount of religious service attendance. For our study we had to replace this traditional measurement given that during our data collection, the vast majority of religious services were shut down. Instead, we asked a different question commonly used (Johnson et al. 2015) to measure strength of religiosity: “To what extent do you consider yourself a religious person?” Possible answers were “not at all religious” (1), “slightly religious” (2), “moderately religious” (3), and “very religious” (4).

We also asked a set of religious identity questions. The first was “What religion do you consider yourself to be? If more than one, click the one that best describes you.” Closely following Ecklund and Scheitle (2018:158), choices were “Protestant,” “Catholic,” “Just a Christian,” “Jewish,” “Mormon,” “Muslim,” “Eastern Orthodox,” “Buddhist,” “Hindu,” “Not religious,” “Agnostic,” “Atheist,” and “Something else” (with a write-in box). As expected, there were far too few respondents who selected “Jewish,” “Mormon,” “Muslim,” “Eastern Orthodox,” “Buddhist,” and “Hindu” for separate analysis, so we combined these into an uninterpreted “other religion” dummy variable. This is included in models to produce the proper comparison. All of the 8 percent of respondents who selected “something else” were also assigned this variable, except for those we describe below who were recoded into other dummy variables. Four respondents who did not answer this first question were excluded from analysis.

Those who selected “Catholic” were coded as Catholics. Those who selected “Not religious,” “Agnostic,” or “Atheist” were assigned a nonreligious dummy variable, as were the eight respondents who expressed nonreligion (e.g., “None”) when providing supplemental description for the “Something else” category. Following the self-reported identification measurement strategy used in the sociology of religion (Dougherty, Johnson, and Polson 2007), those who selected “Protestant” or “Just a Christian” were asked an additional Protestant identity question in which the choices were “fundamentalist,” “conservative Protestant,” “evangelical,” “mainline Protestant,” “liberal Protestant,” and “none of the above.” The first three were assigned a conservative Protestant dummy variable, and the mainline and liberal Protestants were assigned to the liberal Protestant identity dummy variable.

It is a growing challenge for surveys in American religion that Americans are increasingly “connected to congregations, but less so to denominations or more generic religious identity labels” (Dougherty et al. 2007:483). That is, many respondents whom academics would classify as belonging to a particular Protestant tradition either do not know that they are Protestants and do not recognize that label in a survey, do not recognize or reject any of the identity labels used in the Protestant community, do not know if their church is a member of a particular denomination, and/or reject any identity beyond “Christian” (Lehman and Sherkat 2018). In this sample, 37.4 percent of those asked the specific Protestant identity question selected “none of the above.” A good portion of these respondents who selected “none of the above” do not recognize or use the term Protestant. This is demonstrated by the fact that 49 percent of those asked the specific Protestant identity question were asked because they selected “Just a Christian” on the first question. But 76.8 percent of those who ultimately rejected a specific Protestant identity selected “Just a Christian” on the first question. Many of these specific-identity-rejecting Protestants are nonetheless quite involved with religion and are, by academic standards, essentially a different type of Protestant.

We therefore created a dummy for these identity-rejecting yet involved Protestants and also included the 71 of 127 respondents who, in writing in an “other” religion, wrote something that was clearly Protestant (e.g., “Adventist”). It is difficult to describe this no-specific-identity group, and further investigation is in its beginning stages (Woodberry et al. 2012:69). In theological beliefs and perspectives on social issues, these nonidentifying Protestants are between conservative and liberal Protestants (Lehman and Sherkat 2018:783).

A Protestantism that rejects the theological traditions and doctrines associated with these labels is a type of populist Protestantism that does not need theories and doctrines, just personal experience. For example, “seeker” conservative Protestant churches that eschew labels and would just call themselves “Christian” deemphasize doctrine (Miller 1997:129). Consistent with this being a populist form of religion, analysis (not shown) reveals that 34 percent of those we classify as conservative Protestant have undergraduate degrees, while only 15 percent of the non-specific-identity group do. Therefore, this is a particularly antiestablishment type of Protestantism, and given that mainline Protestantism is defined by its connection to the establishment (Wuthnow and Evans 2002), it is a type of conservative Protestantism.

The general expectation from the sociology of religion would be that religion will influence respondents’ views only if they participate in or are knowledgeable about their religion. We therefore took all of those assigned to the above Christian dummy variables who also claimed that they were “not at all religious” and assigned them to a “low-participation Christian” dummy variable. In sum, we have dummy variables for Catholics, conservative Protestants, liberal Protestants, non-specific-identity Protestants, low-participation Christians, members of other religions, and the nonreligious (the reference group in the models).
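The coding rule described above can be sketched in a few lines. This is only an illustration, not the authors' code: the category names, the function names, and the assumption that Catholics count among the "Christian dummy variables" are ours; the only response label taken from the text is "not at all religious."

```python
# Hypothetical category labels; "nonreligious" is the reference group,
# so it gets no dummy of its own.
CHRISTIAN_GROUPS = {
    "catholic",
    "conservative_protestant",
    "liberal_protestant",
    "non_specific_protestant",
}
DUMMY_NAMES = [
    "catholic",
    "conservative_protestant",
    "liberal_protestant",
    "non_specific_protestant",
    "low_participation_christian",
    "other_religion",
]


def religion_category(tradition, religiosity):
    """Reassign Christians who say they are 'not at all religious'."""
    if tradition in CHRISTIAN_GROUPS and religiosity == "not at all religious":
        return "low_participation_christian"
    return tradition


def religion_dummies(category):
    """Return 0/1 indicators; the nonreligious reference group is all zeros."""
    return {name: int(category == name) for name in DUMMY_NAMES}


example = religion_dummies(religion_category("catholic", "not at all religious"))
```

Under this sketch each respondent falls into exactly one category, so at most one dummy equals 1, and a nonreligious respondent has all dummies equal to 0.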

Possible Confounding Variables

Finally, we control for two potentially confounding variables. The first is age: we asked respondents, “In what year were you born?” and subtracted the answer from the year of data collection, 2020. The second is the extent to which the respondent is paying attention to the pandemic. All of the substantive effects presume that respondents are aware of scientists’ claims in the pandemic, but this awareness may be structured by having the time or motivation to be attentive to the news of the pandemic. For example, working-class respondents’ views of scientists may arise because their class position does not allow them the time to follow what scientists are saying, not because of substantive views of scientists that flow from their class experience. To measure attentiveness, we used a question that asked, “How closely, if at all, have you been following news about the outbreak of the Coronavirus also known as COVID-19?” Respondents were given four options that ranged from “not at all closely” (coded as 1) to “very closely” (coded as 4).

The Sample

The mean and median age of the sample is 48 years (range = 18–93 years), and just over half of respondents are women (54 percent). Just under half (47 percent) have no more than a high school degree, a fifth (19 percent) have completed some college, and the remaining third (34 percent) have at least a college degree. Fewer than two thirds of participants (63 percent) are white, 16 percent identify as Hispanic, 12 percent as African American, 6 percent as Asian or Asian American, and less than 2 percent as Native American or Hawaiian/Pacific Islander. The average household income is just under $55,000. More than a third of respondents (37 percent) report living in the suburbs, 23 percent in a small city or town, 22 percent in a big city, and 17 percent in a rural area.

In terms of religiosity, a fifth are Catholic, 12 percent are other, and 21 percent are nonreligious. Eleven and a half percent identify as some type of Christian but say they are “not at all religious,” a group we call “low-participation Christians.” Just over 17 percent are Protestants who think of themselves as more strongly religious but who do not adhere to established Protestant identities (the non-specific-identity Protestants). Seventeen percent are more strongly religious with a conservative Protestant identity, and 7 percent are more strongly religious with a liberal Protestant identity. These figures are consistent with other recent surveys. 1

Republicans and Democrats are represented at about equal levels (34 percent and 35 percent, respectively), the remaining claiming that they are either independent or have no preference. Just over half of respondents (52 percent) claimed to be following news about the outbreak of the coronavirus very closely, 37 percent somewhat closely, 9 percent not too closely, and 2 percent not at all.

Results

Table 1 gives the descriptive statistics for the variables in the analysis. Most important, the dependent variable measuring whether scientists who study pandemics understand the spread of COVID-19 is skewed toward agreeing, with just under half (45.9 percent) selecting 5 (“very well”), 20.4 percent selecting 4, 15.3 percent selecting 3, 10.2 percent selecting 2, and just 8.2 percent selecting 1 (“not well at all”). This represents fairly strong agreement that scientists understand pandemics. The public is much more divided on whether scientists share their values, however, with just over a fifth (21.5 percent) selecting 5 (“strongly agree” that the values of researchers are consistent with theirs), 32.5 percent selecting 4, 37.3 percent selecting 3, 6.0 percent selecting 2, and 2.8 percent selecting 1 (“strongly disagree”).

Table 1. Sample Characteristics.

We use ordered logistic regression models because the dependent variables are ordered categorical measures. The first column of results in Table 2 shows ordered logistic coefficients for a model predicting the “understand” variable. Both Republicans and independents are less likely than Democrats to think scientists understand the spread of COVID-19. This is not consistent with a theory of direct influence by President Trump on Republicans but rather is consistent with the mechanisms in the existing literature, whereby it is Democrats who are unusual in their faith in scientists.

Table 2. Ordered Logistic Regression on Belief in Scientists’ Understanding of the Pandemic and Whether They Share Respondent’s Values.

Note: Values in parentheses are standard errors.

*p < .05. **p < .01. ***p < .001.

African Americans are less likely than white people to think scientists understand the spread of coronavirus. This probably reflects African Americans’ skepticism about medical researchers due to past research injustices. Catholics and conservative Protestants are less likely than the nonreligious to think scientists understand COVID-19. This is not consistent with the existing literature but is consistent with a direct Trump influence mechanism. None of the sociodemographic class variables are significant. Older people are more likely to think scientists understand COVID-19. 2

We rely on the models in Table 2 for statistical significance, but it is difficult to interpret effect size for ordered logistic regression models. To understand the magnitude of these effects, in Table 3 we show selected predicted probabilities. Predicted probabilities are descriptions of hypothetical individuals in the data who vary by an important interpretive variable (Long and Freese 2014:355).
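To make the link between coefficients and predicted probabilities concrete, here is a minimal sketch of how an ordered logistic model turns a linear predictor into probabilities for five response categories. It uses a common parameterization in which the cumulative probability of responding at or below category k is the logistic function of the kth cutpoint minus the linear predictor. The cutpoints and the coefficient below are hypothetical values chosen for illustration; they are not the estimates in Table 2, and the function names are ours.

```python
import math


def logistic(z):
    """Standard logistic function."""
    return 1.0 / (1.0 + math.exp(-z))


def ordered_logit_probs(xb, cutpoints):
    """Return P(y = 1), ..., P(y = K) given a linear predictor and K-1 cutpoints.

    Cumulative probabilities are P(y <= k) = logistic(cutpoint_k - xb);
    category probabilities are differences of adjacent cumulative values.
    """
    cumulative = [logistic(tau - xb) for tau in cutpoints] + [1.0]
    probs = []
    previous = 0.0
    for value in cumulative:
        probs.append(value - previous)
        previous = value
    return probs


# Hypothetical cutpoints and coefficient (NOT taken from the article's tables).
cutpoints = [-2.5, -1.5, -0.7, 0.2]
hypothetical_coefficient = -0.5  # a group effect that lowers the latent score

baseline = ordered_logit_probs(0.0, cutpoints)
shifted = ordered_logit_probs(hypothetical_coefficient, cutpoints)

# Express each distribution as people per 100 sorted by response category.
baseline_per_100 = [round(100 * p) for p in baseline]
shifted_per_100 = [round(100 * p) for p in shifted]
```

A negative coefficient moves probability mass toward the lowest response category and away from the highest, which is the pattern of comparison that selected predicted probabilities are meant to display.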

Table 3. Predicted Probabilities for Believing That Scientists Understand Coronavirus Disease 2019.

The first and second lines in Table 3 represent an African American and a white respondent, respectively. 3 An African American has a probability of .11 of selecting the response that scientists understand not at all, while a white respondent has a probability of .07. On the other end of the continuum, the probability of an African American’s selecting the response that scientists understand very well is .35, whereas the probability for a white respondent is .48. A visual metaphor may help. If we imagine these probabilities as 100 people from each group sorting themselves into five rooms by response category, the fifth African American room would have 35 people in it, and the fifth white room would have 48.

The next sections of Table 3 show comparisons of a Republican versus a Democrat and a Catholic versus a nonreligious respondent. Those substantive differences are less powerful than the race difference. The final section combines identities to show a hypothetical Catholic Republican versus a nonreligious Democrat. At the response categories that reflect the least trust in scientists’ knowledge, the probability that a Catholic Republican will select the response that scientists understand “not at all” is double the probability of a nonreligious Democrat making that choice. At the other end of the scale, the probability that a Catholic Republican will select the response that scientists understand “very well” is .41, and the probability that a nonreligious Democrat will make that selection is .60.

Returning to Table 2, the second results column reports the coefficients for the shared values model, and as expected, African Americans are much less likely to think that scientists share their values. Older people also think that scientists do not share their values. Similar to the previous model, Republicans and independents are much less likely than Democrats to think that scientists share their values. By and large, the religious and nonreligious do not differ in the extent to which they believe scientists share their values. The exception is the difficult-to-characterize group of non-specific-identity Protestants, who are less likely than the nonreligious to think that scientists share their values.

Most notable is that the social class groups thought to be amenable to populism also think that scientists do not share their values. Specifically, compared with low-income men, high-income men are more likely to think scientists share their values. Neither class of women differs from the lower income men. Those living in rural areas, small towns, and suburbs are less likely to think they have shared values with scientists than those who live in cities. The only class variable that is not significant is education, for which the undergraduate degree variable (with high school as the reference group) falls just short of statistical significance. 4

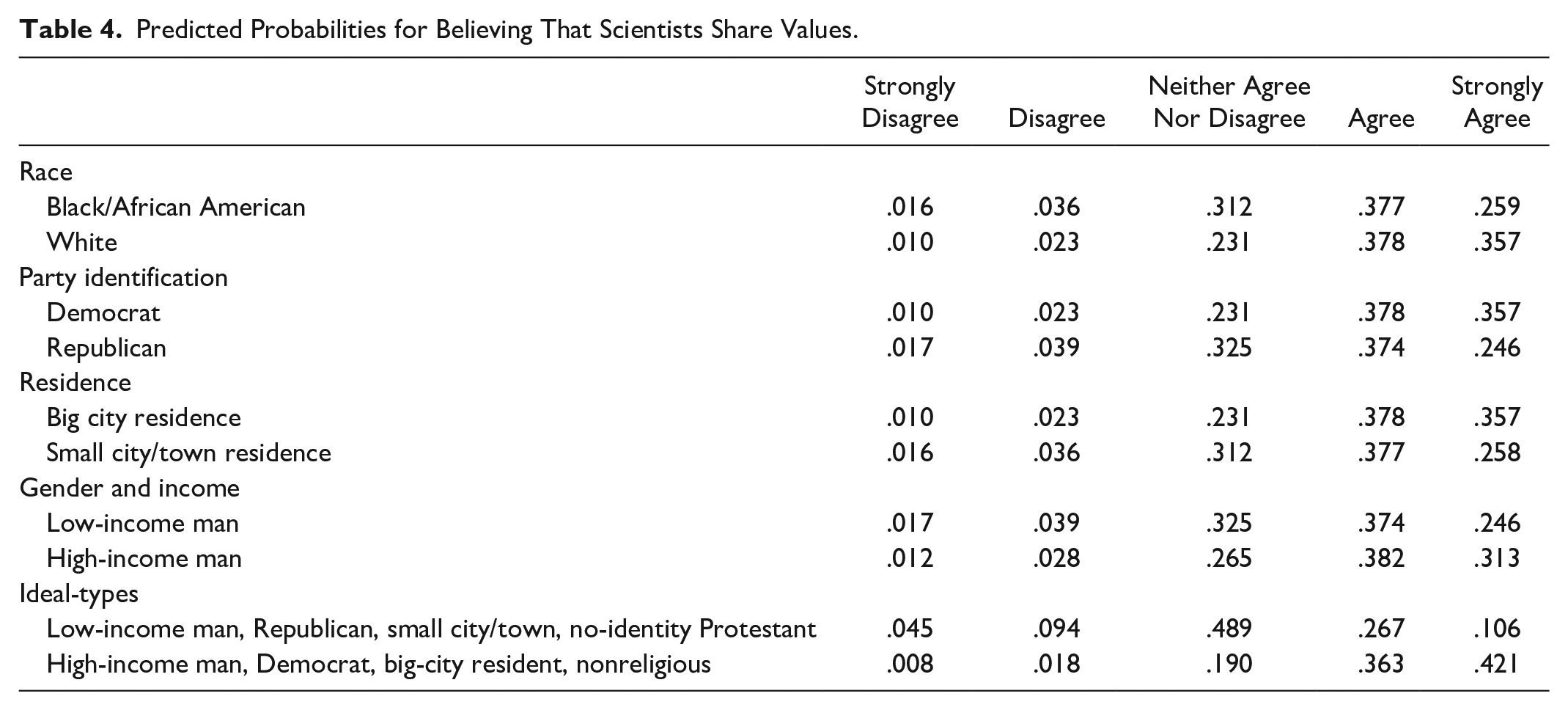

Table 4 shows the differences in selected predicted probabilities for the values model. As individual characteristics, these effects are not large, but comparison of common combined identities produces larger differences. For example, a lower income male, Republican, strong no-specific-identity Christian who resides in a small town has a predicted probability of .11 of strongly agreeing that scientists share their values, whereas a higher income male, nonreligious Democrat who lives in a city has a predicted probability of .42.

Table 4. Predicted Probabilities for Believing That Scientists Share Values.

Discussion

As of this writing, the COVID-19 pandemic continues. Even if it is ultimately controlled, there will be similar challenges in the future. Beyond a contribution to academic debates about the public’s view of expertise (Eyal 2019), understanding which members of the U.S. polity trust and do not trust scientists researching the novel coronavirus is critically important for those who design a public response to pandemics.

There are a number of limitations of this analysis. First, given the spread of the virus to different locations in the United States, which suggests different levels of public attention and threat, as well as the changes in how various public figures talk about COVID-19, it is possible that the same survey conducted two months later might have resulted in different findings. Second, we look for uniqueness in the COVID-19 case by testing if known relationships between social groups and science are operative, but we do not control for attitudes toward science in general to see if the COVID-19 case stands out. Future work should ask about both the unique situation at hand and science more generally.

Our research makes several distinct contributions. We split our analysis of trust between knowledge and values. As expected, the historical lack of trust between African Americans and medical research scientists continues into the present crisis. Scientists should be aware that the legacy of past research injustices is likely to mean that African Americans will tend to think both that scientists’ facts about COVID-19 are wrong and that the values scientists are implicitly promoting are not in line with theirs.

The religion-and-science literature predicted that there would be no effect of religion on belief in science’s abilities to generate true knowledge, but our model shows that Catholics and conservative Protestants are less likely than the nonreligious to think scientists understand COVID-19. A challenge in this literature is that because of the varied ways of measuring and categorizing religion in a survey, as well as the use of different reference groups, research is very difficult to compare. That said, we speculate that because there is no religious counterclaim regarding infectious disease in either tradition, these findings represent a direct Trump communication effect: these are the two religious groups in the United States who have, up until now, been Trump’s greatest supporters.

Trump is not explicitly criticizing the values of scientists, and we therefore find no religion influence on values, with one exception. Although the existing literature would expect white conservative Protestants to oppose the values of scientists, this literature has largely examined issues of human origins. On the particular issue of COVID-19, scientists’ implicit value of saving lives would be consistent with at least the theological views taught in these traditions.

The one religious group who think scientists do not share their values is the difficult-to-describe non-specific-identity Protestants. This growing group in American religion has not been extensively studied, but a religious group based on rejecting religious traditions and institutions may well be prone to rejecting other institutions, such as science. We can tentatively think of this as a particularly populist version of conservative Protestantism. Future researchers will need to determine the underlying mechanisms behind these mixed religion effects.

The existing literature on political identity and science shows that conservatives and moderates (Gauchat 2012) or Republicans and independents (Evans and Feng 2013; Jelen and Lockett 2014) are collectively different from liberals and Democrats. We see the same in this study. This means that there is not a direct Trump communication effect, because that effect would not affect independents. Rather, there is either a direct Fauci communication effect, whereby Democrats are particularly attuned to follow scientists, or whatever it is in the existing literature that leads to Republicans’ and independents’ being much more skeptical of science than Democrats also applies to a life-or-death scientific issue such as COVID-19.

Finally, we focused on the effects of social class and gender because nonmetropolitan, working-class men have been the target of Trump’s populist appeal. They, along with conservative Christians, have been Trump’s “base.” That there are no class effects in the understanding-COVID-19 model suggests that there is no direct Trump communication mechanism to his base, because Trump has been explicit only about rejecting scientific claims. The populism literature does predict that those attracted to populism would reject all elite knowledge claims, but the fact that we do not see rejection in the case of COVID-19 suggests that this populist rejection does not extend to issues of life and death. This may be because populism is more of a protest statement than an actual theory of knowledge: populism may be a type of symbolic status politics. It is consistent with this interpretation that we find effects on the more symbolic statement of thinking that scientists do not share one’s values.

Conclusion

Why do some groups not trust Fauci? Overall, we see very limited evidence of a direct Trump communication effect when it comes to believing that scientists understand the pandemic. The mechanisms we see in the attitudes-toward-science literature are also in effect for COVID-19, with the exception of the effects of class not applying to a life-or-death scientific issue and a religious clash over values not applying to COVID-19.

We offer some concrete suggestions for those who want scientists to be more influential in the public sphere during the COVID-19 crisis. The descriptive results of the two dependent variables in this study suggest that the public is more likely to think that scientists understand COVID-19 than to think that they agree with the values used by scientists in the COVID-19 pandemic. Particularly for non-Democrat, working-class men in nonurban settings, who make up Trump’s base, indications that scientists are inevitably expressing their values may lead to less support for scientists’ involvement in COVID-19 policy.

This results in a pragmatic recommendation. Ideally, Fauci and other scientists would stick to description and not prescription, leaving to nonscientists the advocacy for the value of saving lives and the like. Many governors have advocated for these values while relying upon facts generated by scientists. This role of developing facts on which policy is based would be the one for scientists most supported by the public. Unfortunately, for those who believe in the value of prioritizing lives, scientists such as Fauci are the only prominent federal-level advocates of that value, which puts them in a bind. Although there may not be an easy solution at the federal level, it is important to be aware of the social determinants of support for scientists in the coronavirus pandemic. Most Americans do believe scientists’ claims about COVID-19; how that gets translated into value-laden policy recommendations is more complicated.

Footnotes

Acknowledgements

We are grateful to the members of the Internet Use & Society Division of the Communication and Media Research Department at the University of Zurich for their work on the survey upon which these analyses are based, especially Hao Nguyen and Jaelle Fuchs. We also thank Niels Mede and the anonymous reviewers for their helpful comments on a previous version of this article.

1

Of course, these numbers all differ slightly because of the myriad strategies used to measure and differentiate religion, particularly for Protestants. But, for example, a 2014 survey on religion and science identified conservative Protestants at 25 percent, other Protestants at 20 percent, Catholics at 24 percent, “other religion” at 15 percent, and nonreligious at 16 percent (![]() :6). Before our religiosity screen, our sample includes both types of Protestants combined (40 percent), Catholics (23 percent), “other religion” (12 percent), and nonreligious (21 percent).


2

One assumption in an ordered logistic model (OLM) is the parallel regression or proportional odds assumption (Long and Freese 2014:326). That is, the effect of a variable on the difference between the first category of the dependent variable and the rest of the categories should be the same as the effect on the difference between the first and second combined and the remaining categories, and so on. If that is not true, then the regular OLM coefficient is too much of a generalization and potentially misleading. Most OLMs actually violate this assumption (Long and Freese 2014:331). We diagnose the proportional odds assumption using the gologit2 program (Williams 2006) (results not shown). A nonparallel effect is typically one that increases or decreases in strength as the algorithm continues up the dependent variable scale. Occasionally, the nonparallelism is caused by the effect’s wavering in strength as it progresses up the scale. For the model reported in the first results column of Table 2, the rural residence variable is nonparallel and fluctuates in size and direction but is never significant. Given that all of these comparisons are nonsignificant, this has no substantive impact on our interpretation. The age variable has the same magnitude for the comparisons until the last, for which it is approximately half the size of the earlier ones. But all are significant, so this represents a level of detail far above the generalization we need for our purposes. For conservative Protestants, the general OLM coefficient hides a pattern that, although interesting, does not affect our substantive conclusion. Between believing that scientists understand “not at all” and the higher confidence responses, conservative Protestants are more likely than the nonreligious to have confidence in scientists (but this only reaches a p value of .06). The next comparison is near zero and is not significant, but the final two comparisons are significant and in the opposite direction of the first. Therefore, conservative Protestants are most different from the nonreligious in the statements of strong confidence in scientists.


3

When producing predicted probabilities, the variables not under analysis need to be set either at their mean values or at substantive values. Age and the extent to which the respondent is following the COVID-19 news are legitimately continuous variables, and therefore these are set at their means. The other variables are categorical, so it does not make sense to take the average of something like a religion dummy variable, as an individual cannot be 20 percent Catholic. Therefore, a hypothetical individual must be specified. When not in the substantive comparison, living area is set at city, gender and income at lower income male, party identification at Democrat, race at white, education at the middle category of some college, and religion at Catholic.

4

In the parallel regression diagnostics, the “following the news” variable fluctuates in effect size, with each comparison being statistically significant. The Native American effect fluctuates as well, with no comparison reaching significance. The “other religion” dummy fluctuates, but this is not an interpretable variable, being in the model only to achieve the proper comparison with the other religion variables. The independent political affiliation variable fluctuates in the size of its effect. The male upper income variable is not significant in the difference between the first and second category or in the final comparison. It is very powerful in the middle of the range. This adds some subtle detail to this relationship, but our interpretation of the regular OLM coefficient is a good generalization for the purposes of this article.