Abstract

We run a randomized experiment to examine gender discrimination in book purchasing with 2,544 subjects on Amazon’s Mechanical Turk. We manipulate author gender and book genre in a factorial design to study consumer preferences for male versus female versus androgynous authorship. Despite previous findings in the literature showing gender discrimination in book publishing and in evaluations of work, respondents expressed no gender preference across a variety of measures, including quality, interest, and the amount they were willing to pay to purchase the book. This nonfinding, if it holds up to additional research, suggests that book consumers may not express the same discriminatory tendencies observed among indie and traditional publishers.

Keywords

This paper reports on a randomized experiment to examine gender discrimination among consumers using the case of books. A recent study observed a substantial and significant gender-based price gap for books (Weinberg and Kapelner 2018) but could not resolve the question of whether the observed discriminatory behavior by publishers, and to a lesser extent, authors, reflected and reinforced consumer biases and preferences or helped to engineer them (Baumann 2001; Maguire and Zukin 2004; Radway 2009). This study expands that work to investigate the extent of consumers’ gender discrimination in the book market.

Firms, like traditional publishers, play a diminished role in the growing gig economy, the nonstandard or alternative work economy also referred to as the freelancer economy, platform economy, on-demand economy, crowdfunding economy, and sharing economy, among other names (Kalleberg and Dunn 2016; van Doorn 2017). Whether replacing standard work arrangements or providing the opportunity to supplement income, the gig economy has the potential for widespread economic impact, as nearly a quarter of Americans earned money from a digital commerce platform in 2015 (Smith 2016). In the gig economy, workers come directly into contact with the market without the legal protections that regulate and temper discriminatory tendencies of employing organizations.

Consequently, as the gig economy grows, so, too, does the impact of external markets. Low barriers to entering the gig economy and pressures toward price competition provide consumers with opportunities to choose among a growing field of similar options. These trends suggest the possibility that worker characteristics may play an increasingly focal role in transactions (e.g., which workers receive gigs, achieve crowdfunding, or make sales). As a result, discrimination in the gig economy may be more pronounced and contribute more markedly to wage inequality than discrimination in standard employment arrangements.

The literature on gender discrimination focuses primarily on employer behavior (Fernandez-Mateo 2009; Williams, Muller, and Kilanski 2012), in large part because the activities of employers are both observable and subject to policy intervention and regulation. However, in the gig economy, employment relations between “workers” and “clients” are mediated by platforms or placement agencies that are not technically employers and have no legal obligation to ensure equality. For example, Fernandez-Mateo (2009) found that female contractors receive both lower rates for their contracts and a lower volume of work.

Rates for and volume of gigs reflect expectations regarding the value of the good or service offered and may also encompass explicit evaluations by others in the form of reviews. In the literature on wage inequality, numerous studies have identified a gender gap in earnings related to biased evaluations of male as compared to female workers (Petersen and Saporta 2004). In cultural industries, work by female artists and scientists receives fewer reviews or accolades and lower prestige compared to work by men in the same genre or field (e.g., Lincoln et al. 2012; Schmutz and Faupel 2010; van den Brink and Benschop 2012). Moreover, while information about accomplishments may mitigate gender-biased evaluation, experiments using identical resumes have found disadvantages for female candidates in judgments of their suitability for academic and scientific jobs (Foschi and Valenzuela 2012; Moss-Racusin et al. 2012; Steinpreis, Anders, and Ritzke 1999). In a recent experiment, Tak, Correll, and Soule (2019) examined ratings of craft beer and cupcakes when producers were thought to be either male or female and found evidence of asymmetric negative bias, such that products made by women are disadvantaged in “male-product” markets (beer) but products by men are not disadvantaged in “female-product” markets (cupcakes).

Several studies and experiments, beginning with the touchstone by Goldberg (1968), have examined unconscious gender bias in evaluation of manuscripts. Many of these studies draw on expectation states theory, positing the assignment of higher prestige or expectations of higher degrees of task competence based on a socially valued attribute or status characteristic (for a review, see Foschi and Valenzuela 2015). Related to writing, this line of work examines the double standard that may be applied by readers and reviewers based on an author’s reported gender and in many cases in relation to whether a topic or field is more strongly associated with male or female authorship. Many studies have found evidence of gender bias in evaluation of manuscripts (e.g., Goldberg 1968; Haswell and Haswell 1996; Knobloch-Westerwick, Glynn, and Huge 2013; Lloyd 1990; Paludi and Bauer 1983), but some have found none (Borsuk et al. 2009; Tregenza 2002).

Following the tradition of these smaller laboratory experiments on unconscious bias in evaluation, we designed and conducted a randomized experiment. Our experiment varies the gender of the author for identical books in male- and female-type genres while overcoming issues related to external validity (for reviews, see Kasof 1993; Top 1991).

Method

Using the covers and descriptions of two titles in different fiction genres by a single author, we randomized both book assignment and gender of the author name—male, female, or androgynous initials—and asked respondents to evaluate the book presented on a host of dimensions. We included two fiction genres, thriller and erotica, both of which have been widely reported to have high levels of sales, particularly in the latter case with the runaway success of the Fifty Shades of Grey series and the growing demand for similar titles, making them appropriate for an examination of consumer preferences. We picked these two genres because of the 2002-to-2012 data derived from R. R. Bowker’s Books in Print (Bowker 2017), a comprehensive bibliographic catalog used by retailers and libraries. The two genres exhibit strong gender sorting. In traditional publishing, 52 percent of thriller titles are by authors with unambiguously male names and 20 percent are by authors with unambiguously female names, while erotica titles are nearly a mirror image: 49 percent of titles are by female authors, and 16 percent are by male authors (Weinberg and Kapelner 2018). The cover artwork and descriptive blurbs were from existing works by author D. B. Shuster and were designed to be strongly representative of their genres.

We posted the experiment on Amazon’s Mechanical Turk (MTurk). MTurk is the largest online, task-based labor market, employing more than half a million largely anonymous laborers worldwide (Paolacci and Chandler 2014). MTurk allows individuals and companies to outsource small tasks termed “human intelligence tasks” (HITs). If the task is designed carefully, the experimenter can pose as an employer à la the “experimenter-as-employer” design of Gneezy and List (2006) and Chandler and Kapelner (2013), as done herein. This study received institutional review board exemption.

MTurk experiments have been used to test a variety of respondent biases in sociology, making the platform a good choice for examining potential gender bias, as well. For example, Hunzaker (2014) collected a sample (N = 140) to test reproduction of stereotype bias via story transmission, and Harkness (2016) used a sample (N = 225) to test gender and racial discrimination in lending decisions.

We created our experimental task to inconspicuously appear much like any other one-off MTurk market research survey task. This study’s HIT title was “Answer Some Questions for a Book Publisher”; the description was “We would like to get your opinions about a recently released fiction book based on the way we’re promoting it. We will ask you to examine the book’s cover and description and then answer questions about your opinions about the book”; and searchable keywords were survey, questionnaire, poll, and opinion. Surveys with these types of titles, descriptions, and keywords are among the most commonly performed HITs.

We recruited a total of 2,544 unique participants in five weeks from June 22 to July 28, 2015. 1

Demographic Measures

Respondents were first assessed on a host of demographic measures. We used dummy variables (yes = 1, no = 0) for whether the subject was male, African American, or Hispanic. We also examined age with dummy variables for categories younger than 20 (comparison group), 20 to 29, 30 to 39, 40 to 49, 50 to 59, and 60 or older. Similarly, income categories were less than $20,000, $20,000 to $39,999, $40,000 to $59,999, $60,000 to $79,999, $80,000 to $99,999, $100,000 to $149,999, $150,000 to $199,999, and $200,000 or more, with additional categories of “I don’t know” and “I’d rather not say” (comparison group). Respondents also reported on the number of e-books and print books read in the past six months, a continuous variable. The demographic questions were presented in random order to reduce the effects of order bias, a form of survey satisficing (Krosnick 1999). For the text of the demographic questions, see the supplemental material, available online.

Experimental Manipulations

After demographic characteristics were assessed, we randomized the subjects to explore the extent of any causal effect of author gender on book interest. We used a between-subjects completely randomized design (no blocking) with two manipulations. This is also called the “Bernoulli Design” by Imbens and Rubin (2010, Chapter 4.3).

The first manipulation was gender, randomized across three levels: male, female, and androgynous initials. In order to avoid the scenario where the experimental effects we find are due to age, race, class, or other attributes attached to specific names rather than to gender (Kasof 1993), we selected and randomized the name from the Social Security Administration’s list of the most popular male names (Alexander, Benjamin, Chase, Daniel, Ethan, Gabriel, Ian, Jack, Liam, and Michael) and most popular female names (Alice, Anna, Charlotte, Ella, Grace, Isabella, Natalie, Olivia, Rachel, and Samantha) for babies born between 2010 and 2015. Androgynous initials were created at random without using letters that would correspond to rare names (A. K., A. S., B. T., C. P., D. D., D. J., E. L., N. C., P. D., and W. L.). For simplicity, we kept the last name of the author, Shuster, constant for every participant. 2 The author’s name appeared in every survey measure (see next section). This gender manipulation reflects real-world conditions of what consumers encounter when they browse online for books. Thus, our results here would most likely reflect what can be measured in the market.

We then created a second manipulation, book genre, with two levels: erotica, a female-type genre, and thriller, a male-type genre. For the erotica level, we used a short story called “Pleasing Professor” authored by Shuster. For the thriller level, we used “Kings of Brighton Beach: Gangsters with Guns,” which is the first episode in a serial also by Shuster. These manipulations include a book cover and two-paragraph description.

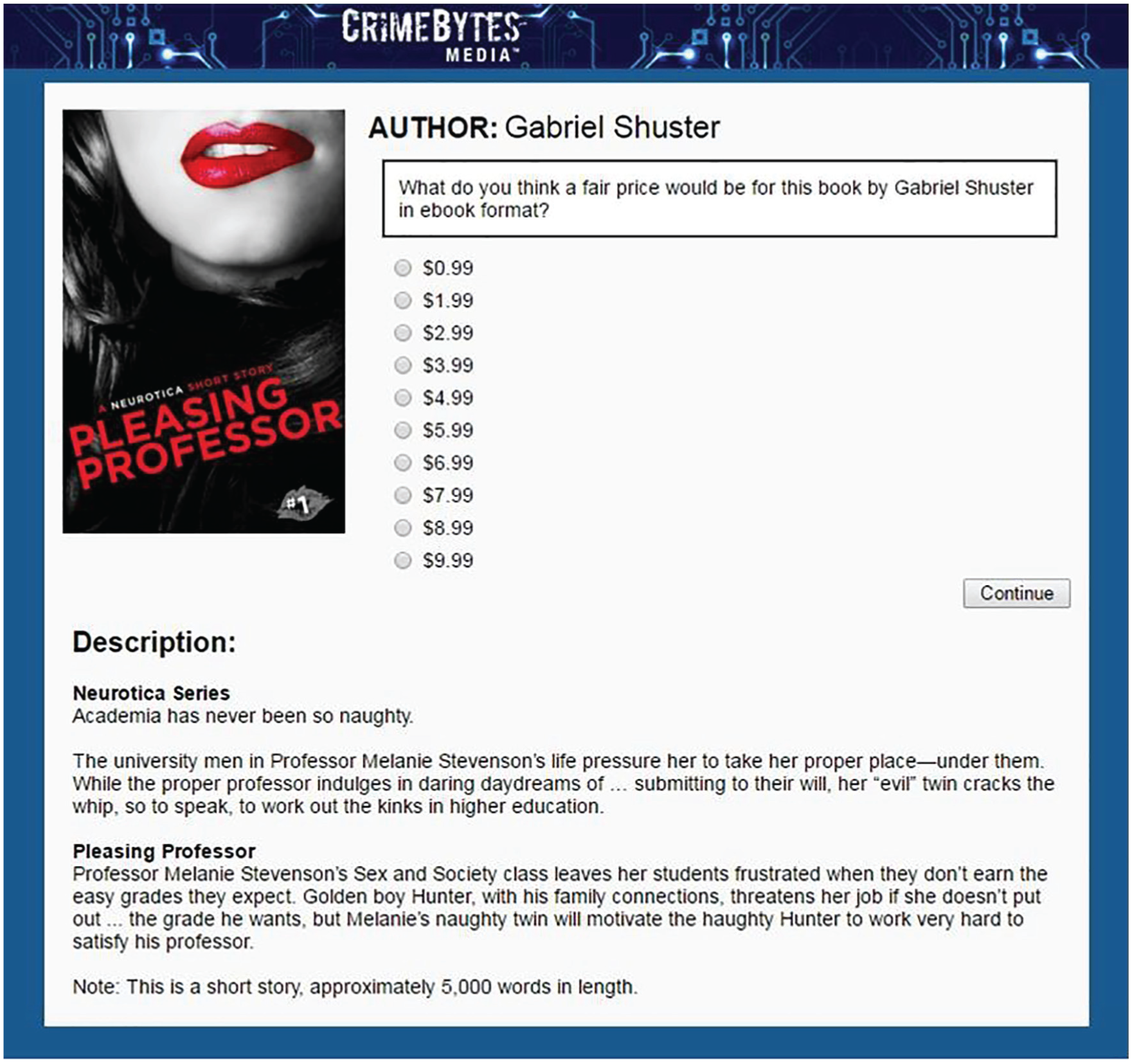

The two manipulations created six conditions: (1) male author/erotica genre (M/E), (2) female author/erotica genre (F/E), (3) androgynous-initials author/erotica genre (A/E), (4) male author/thriller genre (M/T), (5) female author/thriller genre (F/T), and (6) androgynous-initials author/thriller genre (A/T). The M/E condition is shown in Figure 1.

Figure 1. Randomized experiment screenshot of human intelligence task on Amazon’s Mechanical Turk.
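As an illustration of the assignment procedure described above, the following is a minimal sketch and not the code used in the study. It assumes that gender level, first name within gender, and genre are each drawn independently and with equal probability; the function name `assign_condition` and the dictionary layout are hypothetical. The name lists are those reported in the text.

```python
import random

# Name pools as reported in the text; the surname is held constant.
MALE_NAMES = ["Alexander", "Benjamin", "Chase", "Daniel", "Ethan",
              "Gabriel", "Ian", "Jack", "Liam", "Michael"]
FEMALE_NAMES = ["Alice", "Anna", "Charlotte", "Ella", "Grace",
                "Isabella", "Natalie", "Olivia", "Rachel", "Samantha"]
INITIALS = ["A. K.", "A. S.", "B. T.", "C. P.", "D. D.",
            "D. J.", "E. L.", "N. C.", "P. D.", "W. L."]

NAME_POOLS = {"M": MALE_NAMES, "F": FEMALE_NAMES, "A": INITIALS}
GENRES = {"E": "erotica", "T": "thriller"}
LAST_NAME = "Shuster"


def assign_condition(rng):
    """Draw one subject's condition (hypothetical helper).

    Assumption: each factor is drawn independently with equal
    probability, with no blocking on subject covariates.
    """
    gender = rng.choice(sorted(NAME_POOLS))   # "A", "F", or "M"
    genre = rng.choice(sorted(GENRES))        # "E" or "T"
    first = rng.choice(NAME_POOLS[gender])    # name or initials within level
    return {
        "condition": f"{gender}/{genre}",
        "author": f"{first} {LAST_NAME}",
        "genre": GENRES[genre],
    }


# Usage: assign a handful of simulated subjects with a fixed seed.
rng = random.Random(2015)
sample = [assign_condition(rng) for _ in range(5)]
```

Because every draw is independent of subject characteristics, cell sizes will differ slightly by chance, which is why a balance check across the six cells is still informative.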

Survey Measures

We asked respondents to evaluate the experimentally manipulated book presented in the HIT based on several dimensions related to consumers’ evaluations and their purchase decisions. For each question, the author’s name (i.e., the gender manipulation) was prominently featured in the question wording to make this characteristic of the author as salient as possible. Using 4-point Likert scales ranging from not at all likely to very likely, respondents rated how interested they would be in learning more about the book based on the cover (cover interest), how interested they would be in reading a sample of the book based on the description (description interest), and how they would rate the quality of the writing based on the description (writing quality). Respondents were also asked what they considered a fair price for the book in e-book format, with responses ranging in $1 increments from $0.99 to $9.99 (fair price). Respondents were also asked what they would pay for this book in hardcover, with responses of $0, $0.49, $0.99, and then increasing in $1 increments to $29.99 (willingness to pay). Based on the cover and descriptions, respondents selected from a list of adjectives that they thought best described the book: kinky, erotic, sexy, romantic, action-packed, violent, gritty, realistic, poignant, exciting, mysterious, and/or emotional. Asking respondents to describe the book in this way served as a check on whether our genre manipulation was effective. Figure 1 illustrates the fair price evaluation.

These response measure elicitations were presented in random order to reduce the effects of order bias. For further details about the response questions, including their text, see the supplemental material, available online.

Analysis Methodology

We used ordinary least squares regression to assess the effect of the manipulation on respondents’ ratings and perceptions and present models both with and without the demographic control variables.
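To make the model specification concrete, here is a minimal sketch of an ordinary least squares fit on treatment dummies, written in plain Python. It is illustrative only: the choice of male author and erotica as reference levels is an assumption, the helper names `ols` and `design_row` are hypothetical, and the ratings are made-up toy values rather than study data. Standard errors and the demographic controls are omitted.

```python
def ols(X, y):
    """Solve the normal equations (X'X) b = X'y by Gauss-Jordan elimination."""
    k = len(X[0])
    # Augmented matrix [X'X | X'y].
    A = [[sum(row[i] * row[j] for row in X) for j in range(k)]
         + [sum(row[i] * yi for row, yi in zip(X, y))]
         for i in range(k)]
    for col in range(k):
        # Partial pivoting: move the largest remaining entry onto the diagonal.
        pivot = max(range(col, k), key=lambda r: abs(A[r][col]))
        A[col], A[pivot] = A[pivot], A[col]
        for r in range(k):
            if r != col:
                factor = A[r][col] / A[col][col]
                A[r] = [a - factor * b for a, b in zip(A[r], A[col])]
    return [A[i][k] / A[i][i] for i in range(k)]


def design_row(gender, genre):
    """Intercept plus dummies (assumed reference levels: male author, erotica)."""
    return [1.0,
            1.0 if gender == "F" else 0.0,   # female author name
            1.0 if gender == "A" else 0.0,   # androgynous initials
            1.0 if genre == "T" else 0.0]    # thriller genre


# Toy, made-up ratings: one respondent per cell, thriller rated 1 point higher,
# no difference by author gender.
cells = [(g, t) for g in "MFA" for t in "ET"]
X = [design_row(g, t) for g, t in cells]
y = [2.0 + (1.0 if t == "T" else 0.0) for _, t in cells]
coefs = ols(X, y)  # order: intercept, female, androgynous, thriller
```

In this toy setup the gender coefficients come out at zero and the genre coefficient recovers the built-in one-point difference, which mirrors the structure of the comparison, not its empirical result.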

Results

Experimental Balance

To ensure our randomization was effective, we tabulated sample sizes and demographic information among the six randomized groups (Table 1). No imbalances of statistical significance were detected across manipulations for any of our demographic measures, indicating a successful randomization. As each of these manipulations was similar to one another, differential attrition among experimental treatments was not observed in our experiment. 3

Table 1. Main Baseline Covariate Averages among the Six Experimental Manipulations.

Note: M = male author; F = female author; A = androgynous author; E = erotica genre; T = thriller genre.

Manipulation Checks

To ensure our genre manipulation was effective, we fit separate logistic models explaining each adjective by genre; the results are found in Tables 2 and 3. In short, the genre manipulation worked. Participants determined the erotica book was kinky, erotic, sexy, and romantic (the top four adjectives selected) and that the thriller book was action-packed, violent, gritty, and realistic. Moreover, there is little overlap in the adjectives respondents used to describe the books from the two genres.

Table 2. Postmanipulation Check for the Erotica Genre.

Note: Individual logistic regressions of responses for the 21 descriptive adjectives. Estimates are for the additive effect of the relevant manipulation on the log odds of a participant listing the adjective as best describing the book. Only significant variables are displayed. The four left out are intelligent, poignant, exciting, and mysterious (i.e., those can be attributed to both genres simultaneously). Effects are ordered from largest to smallest.

Table 3. Postmanipulation Checks for the Thriller Genre Manipulation.

Note: See Table 2 note for a description.
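The estimates in the manipulation checks are effects on the log odds of selecting an adjective. When a logistic regression contains only an intercept and a single binary regressor (assumed here to be the genre indicator alone), the fitted coefficient equals the log odds ratio of the corresponding 2 × 2 table. The sketch below computes that quantity; the function name and the counts in the usage line are hypothetical and are not taken from the study.

```python
import math


def log_odds_ratio(selected_a, n_a, selected_b, n_b):
    """Log odds ratio of selecting an adjective in group A versus group B.

    Equals the slope of a logistic regression of the selection indicator
    on a single group dummy (A = 1, B = 0). Requires that each group has
    at least one selector and one non-selector.
    """
    odds_a = selected_a / (n_a - selected_a)
    odds_b = selected_b / (n_b - selected_b)
    return math.log(odds_a / odds_b)


# Hypothetical counts: 80 of 100 pick the adjective for one book, 20 of 100 for the other.
example = log_odds_ratio(80, 100, 20, 100)
```

A positive value indicates the adjective is chosen more often for the first group's book; zero indicates no association with genre.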

Experimental Results

Did respondents find greater interest and value in books by male authors as compared to female authors? Across all eight survey measures, the regression models both without demographic controls and with controls (Table 4) show no significant difference related to author gender. We also find no statistically significant heterogeneous effects of author gender by genre (results unshown). This nonfinding is striking not only for its robustness across all of the outcomes but also given our high statistical power with N ≈ 2,500 subjects. With regard to willingness to pay, for example, the statistical test had 80 percent power to detect a one-sided difference as small as approximately $0.30.
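For readers who wish to reproduce this kind of power statement, the following is a rough sketch of a minimum detectable difference calculation for a one-sided, two-group comparison of means under a normal approximation. The significance level of .05, the equal-variance assumption, the group sizes, and the value of `sigma` are all assumptions for illustration; the function name is hypothetical, and the study's actual calculation may have differed.

```python
from statistics import NormalDist


def minimum_detectable_difference(sigma, n1, n2, alpha=0.05, power=0.80):
    """Smallest true mean difference detectable with the given power.

    Normal approximation, one-sided test, common standard deviation sigma.
    """
    z = NormalDist()
    z_alpha = z.inv_cdf(1 - alpha)   # one-sided critical value
    z_power = z.inv_cdf(power)       # quantile matching the target power
    standard_error = sigma * (1 / n1 + 1 / n2) ** 0.5
    return (z_alpha + z_power) * standard_error


# Usage with placeholder inputs: sigma is hypothetical, and 848 per arm
# assumes 2,544 subjects split evenly over three gender levels.
mdd = minimum_detectable_difference(sigma=1.0, n1=848, n2=848)
```

The result scales linearly in `sigma`, so the detectable dollar difference depends directly on the spread of the willingness-to-pay responses.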

Table 4. Ordinary Least Squares Results for All Eight Response Variables Regressed on the Experimental Manipulation with and without Demographic Controls (N = 2,544).

Note: Results are displayed as estimate (standard error).

*p < .10. **p < .05. ***p < .01.

However, we did find highly significant differences in the regression models related to the genre manipulation for all survey measures. The genre manipulation had been initially included as a means of examining whether consumers evaluated male and female authors differently based on whether they were writing in male-dominated or female-dominated genres. With no gender effect in the models, this original question is a nonstarter. Instead, we find that across all regressions, respondents consistently place a higher value on the thriller book (the male-dominated genre) compared to the erotica book (the female-dominated genre) for all ratings save interest based on the cover. Compared to the erotica book, respondents deemed the thriller book to have a more interesting description (estimate of 0.251, p < .001) and higher-quality writing (estimate of 0.430, p < .001) and expressed greater interest in purchasing it (estimate of 0.219, p < .001). Moreover, respondents reported a fair price for the thriller e-book $1.21 (p < .001) higher than for the erotica e-book and were willing to pay $3.75 (p < .001) more for the hardcover thriller book than for the hardcover erotica book.

We conducted several checks on these findings, and the results were extremely robust. They are unchanged when including or excluding different demographic covariates or when using different methods of estimation. Across all of these models, the coefficient for female author name was never statistically significant, and the genre difference always remained significant, with the thriller genre scoring uniformly higher on every response metric. Further, no significant effects were found for any of the 30 individual names in the gender manipulation even when interacted with race or age of experimental subject.

Discussion

We began this paper with the premise that gender discrimination might go unchecked in the gig economy and proposed the case of books as an experimental test of consumers’ unconscious gender bias. Counter to expectations based on the observed discriminatory behavior of firms and individual authors, which price books by male authors higher (Weinberg and Kapelner 2018), our findings suggest that in the case of fiction, consumers’ assessments of books may be blind to author gender.

In their review of the literature, Petersen and Saporta (2004) describe three types of discrimination typically seen in the workplace: allocative discrimination (the differential assignment of men and women to particular types of jobs or occupations), valuative discrimination (greater value placed on jobs or occupations done by men compared to women), and within-job discrimination (the differential recognition and rewards for men and women doing the same job). Our randomized experiment was designed to detect a within-genre difference in value placed on books by male or female authors, corresponding to within-job discrimination, and a between-genre premium (or penalty) for books by male or female authors that might reflect allocative discrimination or the preference for one gender over another in certain occupations. Our negative finding in this study suggests that the gender-based differential in price that publishing houses and indie authors have placed on books is not reflective of what the market will bear or of consumer preferences for fiction books by male authors compared to female authors. The findings also suggest that the gender-based sorting of authors into fiction genres may reflect supply rather than consumer demand.

At the same time, the results from this study, though not designed to look specifically at valuative discrimination, coincide with findings across occupations and in publishing in particular that show that male-dominated fields are valued more highly than female-dominated ones. The differences we observed may reflect the differential valuation between male- and female-dominated genres, corresponding to the valuative discrimination by indie and traditional publishers for thriller and erotica genres (a difference of $10.21 for traditional publishers and $1.90 for indie authors when setting prices; Weinberg and Kapelner 2018). However, the observed differences in this study may be an artifact of differences in the books presented rather than differences in the genres themselves. For example, the results may reflect the difference in value respondents placed on a “serial” compared to a “short story” or readers’ greater interest in male compared to female protagonists (Bortolussi, Dixon, and Sopcák 2010).

Do the results herein generalize to the population of Americans at large? This is an open problem discussed in every area of science concerned with human subjects. Berinsky, Huber, and Lenz (2012) did a comprehensive survey of American MTurk laborers, and broadly speaking, they match with probability samples (the gold standard for surveying) except they are younger, slightly more educated, more politically liberal, and less religious. Since our hypothesized treatment effect is unlikely to be heterogeneous due to these demographic imbalances, we are not concerned that our results cannot be generalized to the American population.

Do our results measure actual consumer behavior? While we captured what respondents say they thought or what they would do (i.e., stated preferences), what they would actually do may be somewhat at variance.

There is much to be explored using this turnkey experimental methodology. This study tested two genres and was modest in scope. We suggest a future plan of research that delves more fully into gender-based differences between genres. It should be noted that both of the genres considered herein are fiction genres. While we differentiated erotica and thriller genres as female- and male-type product markets, respectively, fiction itself is a female-type product market, with women almost twice as likely to read fiction as men (Tepper 2000) and with prices for fiction lower on average than those for nonfiction (Weinberg and Kapelner 2018). Based on the finding of asymmetric negative bias reported by Tak et al. (2019), our nonfinding of bias related to author gender may not be surprising in this female-type product market, and we may yet observe gender-based differences for authors in other types of genres. Future research should include nonfiction, where perceived expertise (e.g., among scientific textbooks) may play a larger role in consumers’ interest and regard and may also be more gender sensitive.

Nonetheless, with these caveats and limitations in mind, we believe this experiment offers valuable insights for gender and stratification scholars about gender inequality in a changing workplace. Across studies in the traditional labor market, within-job discrimination has a small impact on wage inequality relative to the impact of labor market segregation and the related sorting of men and women into higher- and lower-paying fields and occupations. Examining the gig economy, this study gives us little reason to expect a high degree of income inequality based on whether producers are identified as male, female, or androgynous. At the same time, our findings suggest the possibility for larger inequalities in the gig economy related to the gendering of product markets. While more research is needed, this study suggests that in the gig economy, as in the traditional economy, gender-based discrimination and inequality have the potential to derive far more substantially from the gendered nature of types of work and of product markets than from the gender of workers and producers.

Footnotes

Acknowledgements

We thank Tom Marano, Maria Teran, and Kristine Rosales for their invaluable assistance in data management; Rikki Katz for help with software engineering; Marie Le Pichon for the artwork used in the experiment; and Shige Song, Holly Reed, Francesc Ortega, and Amy Hsin and the Analytical Social Science Group at University of Illinois at Urbana-Champaign for helpful feedback on an earlier version of this paper.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This project was funded in part by research enhancement funds from Queens College, City University of New York. The funders had no role in study design, data collection and analysis, decision to publish, or preparation of the manuscript.

1

Human intelligence tasks (HITs) were completed continuously throughout this time period, day or night, with 1,000 HITs released every hour, which expired on the hour (in order to keep our HIT relatively fresh among the worker listings). Upon completion, participants were immediately paid $0.14 unconditionally, and the total recruiting cost was $356.11. Participants were restricted to have American bank accounts in order to be paid. Historically, such a restriction equates to about 99 percent of participants being located in America as estimated by IP address lookup in Kapelner and Chandler (2010). All 50 states were represented in our sample. In order for a subject’s participation to be valid, the entire task had to be completed within 30 minutes. This time limit was rarely an issue: the average time to complete the survey was 2.9 ± 6.3 minutes, with 99 percent completing it under 15 minutes (for a total of roughly 120 person-hours of surveys taken, i.e., 2,544 participants at an average of 2.9 minutes each).

2

We suppose that the effect of this last name on our response variables (if any) would create a level effect observed among all manipulations but not a heterogeneous treatment effect, which would limit this experiment’s external validity.