Abstract

Background

The rapid integration of artificial intelligence (AI) into healthcare has raised concerns among healthcare professionals about the potential displacement of human medical professionals by AI technologies. However, the apprehensions and perspectives of healthcare workers regarding their potential replacement by AI remain largely unexplored.

Objective

This qualitative research aimed to investigate healthcare workers’ concerns about artificial intelligence replacing medical professionals.

Methods

A descriptive and exploratory research design was employed, drawing upon the Technology Acceptance Model (TAM), Technology Threat Avoidance Theory, and Sociotechnical Systems Theory as theoretical frameworks. Participants were purposively sampled from various healthcare settings, representing a diverse range of roles and backgrounds. Data were collected through individual interviews and focus group discussions, followed by thematic analysis.

Results

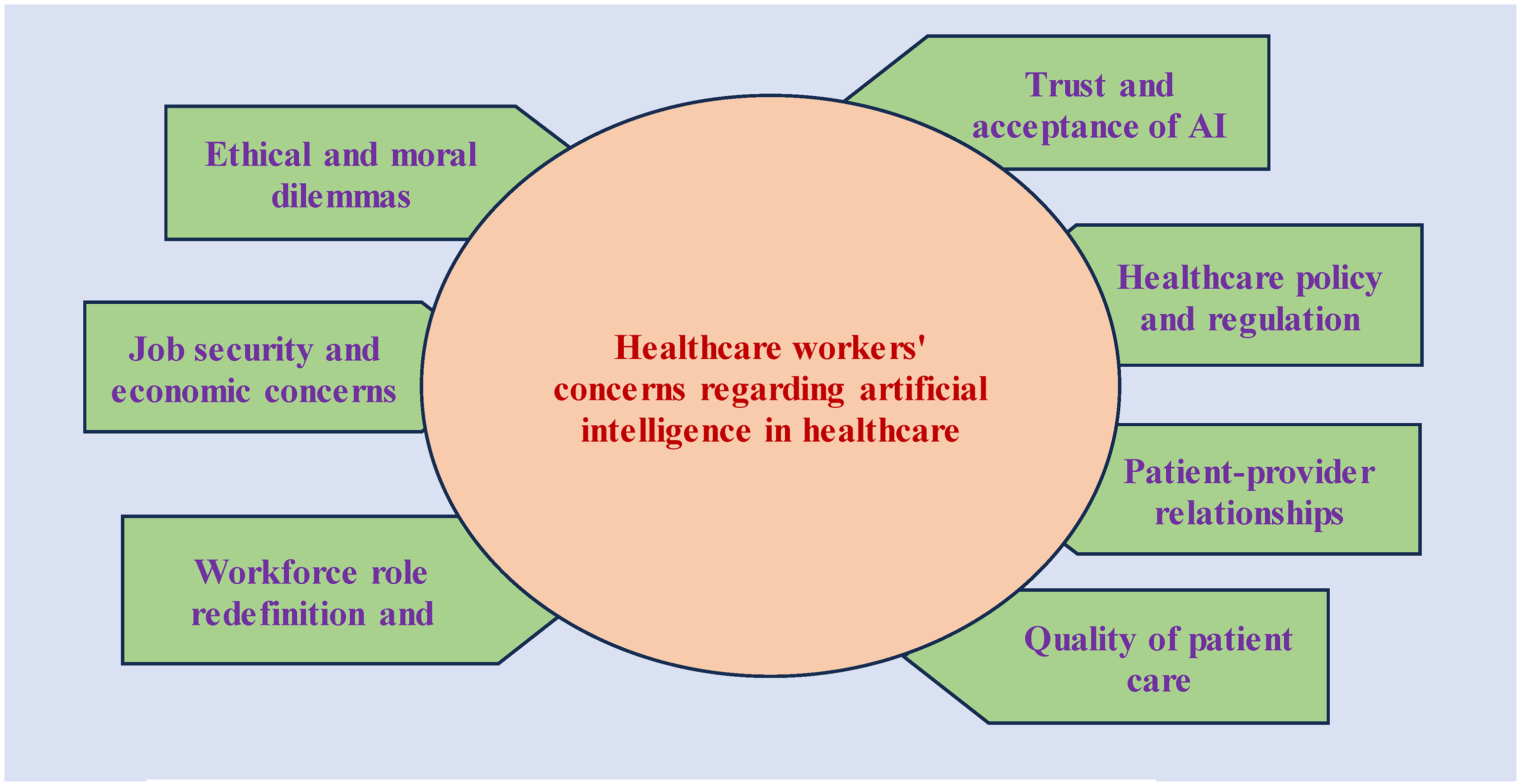

The analysis revealed seven key themes reflecting healthcare workers’ concerns: job security and economic concerns; trust and acceptance of AI; ethical and moral dilemmas; quality of patient care; workforce role redefinition and training; patient–provider relationships; and healthcare policy and regulation.

Conclusions

This research underscores the multifaceted concerns of healthcare workers regarding the increasing role of AI in healthcare. Addressing job security, fostering trust, addressing ethical dilemmas, and redefining workforce roles are crucial factors to consider in the successful integration of AI into healthcare. Healthcare policy and regulation must be developed to guide this transformation while maintaining the quality of patient care and preserving patient–provider relationships. The study findings offer insights for policymakers and healthcare institutions to navigate the evolving landscape of AI in healthcare while addressing the concerns of healthcare professionals.

Introduction

The realm of healthcare is undergoing a remarkable transformation driven by the rapid advancement of artificial intelligence (AI). In recent years, AI has emerged as a powerful force, promising to reshape the landscape of healthcare delivery in ways that were once considered science fiction (Sun et al., 2022). With its ability to analyze massive datasets, recognize complex patterns, and make precise recommendations, AI has the potential to revolutionize medical diagnosis, treatment, and patient care (Dicuonzo et al., 2023). These AI-driven technologies offer the healthcare industry an opportunity to enhance efficiency, accuracy, and patient outcomes (Albahri et al., 2023; Kumar et al., 2023a). A growing number of healthcare institutions are integrating AI technologies into their systems. According to recent studies, the global AI adoption rate in healthcare has surged, with a substantial increase in the implementation of AI-driven solutions for tasks such as image analysis, predictive analytics, and personalized medicine (Alowais et al., 2023; Kumar et al., 2023b; Sebastian et al., 2023). This widespread adoption reflects the increasing recognition of AI's potential to address challenges in healthcare and improve overall service delivery (Younis et al., 2024).

Healthcare professionals, including doctors, nurses, radiologists, and various other medical staff, have historically played a pivotal role in the healthcare ecosystem. Their expertise, compassion, and dedication have been fundamental in providing medical care, ensuring patient well-being, and fostering trust between patients and healthcare providers (Appleton et al., 2023). These professionals have invested years in training and practice, cultivating specialized skills and knowledge that enable them to offer medical services ranging from diagnosis to treatment, rehabilitation, and emotional support (Frenk et al., 2022). Their role extends beyond the mere application of medical knowledge; it involves building relationships with patients, understanding their unique circumstances, and making empathetic decisions that transcend the purely clinical aspects of healthcare (Eijkelboom et al., 2023).

However, the introduction of AI technologies, capable of making rapid and precise decisions based on data analysis, has led to apprehensions about the possible displacement of human healthcare professionals (Amann et al., 2023; Mousavi Baigi et al., 2023). The potential for AI to replace or significantly alter the roles traditionally held by medical practitioners raises numerous concerns within the healthcare community (Aquino et al., 2023; O’Connor et al., 2023). As AI systems grow in sophistication, the question of whether these technologies can replicate, or even surpass, the capabilities of healthcare professionals has become a central point of discussion (Apell & Eriksson, 2023). The growing influence of AI in healthcare has prompted a need to explore the multifaceted concerns and doubts experienced by healthcare workers as they navigate this technological transformation (Ali et al., 2023).

Review of Literature

AI in healthcare is not just a trend but a transformative force that promises to enhance multiple facets of healthcare delivery (Mahdi et al., 2023). AI-driven applications, such as machine learning algorithms, natural language processing, and computer vision, have demonstrated the potential to streamline healthcare operations, optimize treatment plans, and improve patient outcomes (Chen et al., 2023; Naik et al., 2022). These technologies can process vast quantities of medical data and identify subtle patterns that can lead to more precise and efficient patient care (Khullar et al., 2022; Rony et al., 2024a). Furthermore, AI can reduce the burden on healthcare professionals, allowing them to focus on more complex aspects of medical practice, research, and patient interaction (Tursunbayeva & Renkema, 2023). The potential benefits of AI are substantial, making it a subject of great interest in healthcare (Shaik et al., 2023).

At the same time, the emergence of AI in the healthcare sector has generated a multitude of worries and doubts within the healthcare workforce. These concerns stem from various dimensions, including job security (Chew & Achananuparp, 2022), ethical considerations (Karimian et al., 2022), and the impact on the patient–provider relationship (Nash et al., 2023). Healthcare workers fear the possibility of losing their jobs or the need to adapt to new roles as AI systems take over routine tasks (Jarota, 2023). The fear of unemployment, coupled with the anxiety of acquiring new skills, can be profoundly distressing for those who have dedicated their lives to the medical profession (Shinde et al., 2024). In addition, healthcare professionals are concerned about the accountability and responsibility associated with AI errors, as incorrect diagnoses or treatment recommendations can have severe consequences for patients (Alowais et al., 2023).

The concerns and doubts of healthcare professionals about the integration of AI into their roles are varied and deeply ingrained (Mohanty & Mishra, 2022). Comprehending these concerns is important because the insights of healthcare workers are crucial for shaping the responsible and ethical integration of AI in healthcare and for ensuring a harmonious transition toward an AI-augmented healthcare system. By exploring their perspectives, this research aims to contribute to the development of strategies to address job displacement fears, ethical considerations, and the preservation of the patient–provider relationship. Therefore, the study aimed to investigate healthcare workers’ concerns about artificial intelligence replacing medical professionals.

Methodology

Study Design

In this study, a descriptive and exploratory research design was adopted to investigate healthcare workers’ apprehensions and viewpoints concerning the potential substitution of human medical professionals with artificial intelligence. Using qualitative methods, we sought to gather context-specific insights and gain a comprehensive understanding of healthcare professionals’ emotions, attitudes, and experiences within a healthcare system undergoing transformation due to AI integration.

Theoretical Framework

This research drew upon several theoretical perspectives to guide our exploration. First, the Technology Acceptance Model (TAM) served as a valuable framework to investigate healthcare workers’ readiness and willingness to embrace AI in their practice (Alhashmi et al., 2020a; Holden & Karsh, 2010). This framework enabled us to delve into the dynamics of acceptance and adoption, shedding light on the factors influencing healthcare professionals’ receptiveness to AI technologies (Alhashmi et al., 2020b). In the context of healthcare worker concerns about AI, TAM helps us understand the psychological and behavioral aspects of their readiness to accept and use AI tools, focusing on factors such as perceived ease of use and perceived usefulness (Guner & Acarturk, 2020).

In addition to TAM, we integrated psychological theories, particularly the Technology Threat Avoidance Theory (Samhan, 2017). This theoretical strand facilitated our understanding of the intricate emotional and cognitive responses exhibited by healthcare workers when confronted with the prospect of AI potentially supplanting their traditional roles (Cao et al., 2021). By applying the Technology Threat Avoidance Theory, we gained insights into how healthcare workers perceive AI as a potential threat to their roles and how they employ coping mechanisms to manage the psychological impact of this perceived threat. It helped us dissect their reactions, fears, and coping mechanisms in the face of technological change (Bae, 2017).

To provide a broader context and comprehend the interplay between AI systems and healthcare professionals within the healthcare landscape, we adopted the Sociotechnical Systems Theory (Fayoumi & Williams, 2021). This perspective allowed us to examine not only the technology itself but also the social and organizational aspects influencing AI's integration into healthcare settings (Schoenherr, 2022). Sociotechnical Systems Theory assists in understanding the broader organizational and social implications of AI adoption in healthcare, addressing concerns related to workflow changes, teamwork, and overall organizational dynamics (Kompella, 2020). By drawing from these diverse theoretical foundations, our research sought to comprehensively explore the concerns and viewpoints of healthcare workers amid the evolving landscape of AI in healthcare.

Study Settings

The research was conducted in a diverse array of healthcare settings, encompassing hospitals, clinics, and other healthcare facilities such as diagnostic centers and nursing homes within Dhaka city, Bangladesh. The selected participants are considered representative of the broader healthcare worker population in Bangladesh, as healthcare settings in Dhaka city are more sustainable than those in other parts of the country. In pursuit of a comprehensive understanding, purposive sampling was employed to select participants. This approach aimed to ensure diversity in terms of age, gender, highest education, years of experience, employment type and roles within the healthcare system (Table 1). To promote inclusivity and reach a wide spectrum of participants, we harnessed professional networks, including nursing associations, collaborated with healthcare organizations, and tapped into social media platforms such as nurses’ Facebook and Telegram groups. Throughout the recruitment process, we rigorously upheld ethical principles to ensure a fair and ethical engagement with all participants.

Table 1. Demographics Outline.

FGR: Focus group respondent, BSN: Bachelor of Science in Nursing, BSc: Bachelor of Science, MBBS: Bachelor of Medicine, Bachelor of Surgery, MSc: Master of Science, MSN: Master's in Nursing, MPH: Master's in Public Health, MBA: Master of Business Administration, MD: Doctor of Medicine, D.Pharm: Diploma in Pharmacy, B.Pharm: Bachelor of Pharmacy, FCPS: Fellowship of the College of Physicians and Surgeons.

Sample and Recruitment

Our sample consisted of a diverse group of healthcare workers representing various roles and backgrounds within the healthcare system, including nurses, physicians, radiologists, medical technicians, pharmacists, and hospital administrators. Participant selection was based on the following criteria: (i) a role within the healthcare system; (ii) a minimum of one year of experience in the healthcare profession; and (iii) a minimum of 6 months of experience or 15 days of training in an AI-implemented healthcare setting, or attendance at a minimum of three conferences related to AI in healthcare. Each participant was provided with comprehensive information about the study's objectives, procedures, potential risks, and benefits. Prior to participation, written informed consent was obtained from every participant.
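The selection criteria can be read as a simple screening rule. The sketch below is illustrative only and is not the screening instrument used in the study: the `Candidate` fields, the `ELIGIBLE_ROLES` set, and the function name are hypothetical, and criterion (iii) is interpreted here as satisfied by any one of its three alternatives, which is an assumption about how the criterion was applied.

```python
from dataclasses import dataclass

# Roles named in the sample description; treating this as a closed set is an assumption.
ELIGIBLE_ROLES = {
    "nurse", "physician", "radiologist",
    "medical technician", "pharmacist", "hospital administrator",
}


@dataclass
class Candidate:
    """Hypothetical screening record for one prospective participant."""
    role: str
    years_in_profession: float
    months_in_ai_setting: float = 0.0
    ai_training_days: int = 0
    ai_conferences_attended: int = 0


def is_eligible(c: Candidate) -> bool:
    """Apply criteria (i)-(iii); (iii) is read as 'any one of three' (assumption)."""
    has_role = c.role.lower() in ELIGIBLE_ROLES           # criterion (i)
    has_experience = c.years_in_profession >= 1           # criterion (ii)
    has_ai_exposure = (                                    # criterion (iii)
        c.months_in_ai_setting >= 6
        or c.ai_training_days >= 15
        or c.ai_conferences_attended >= 3
    )
    return has_role and has_experience and has_ai_exposure
```

For example, `is_eligible(Candidate("Nurse", 15, months_in_ai_setting=8))` evaluates to `True`, whereas a pharmacist with two years of experience but no AI-related exposure would be screened out.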

Data Collection

The data collection process spanned from May to June 2023. Data were collected in two phases. In Phase I, we conducted individual online semi-structured interviews through the Google Meet platform, each lasting 50–60 min. These interviews served as the cornerstone of our data collection process, giving participants a platform to openly articulate their concerns, opinions, and experiences related to the integration of AI in healthcare. All interviews were audio-recorded with participants’ explicit consent and subsequently transcribed for analysis. Phase II involved focus group discussions (FGDs) to augment our data collection. These discussions facilitated a collective discourse among participants, encouraging them to exchange viewpoints and explore diverse perspectives. We conducted a total of four FGDs using the same questions that were asked in the semi-structured interviews (Table 2), with each session lasting an average of 1 h and 40 min. Each took place in a distinct healthcare setting within the study area: a district hospital, a divisional hospital, a diagnostic center, and a nursing home. This methodology allowed us to glean multifaceted insights into the concerns and viewpoints of healthcare professionals regarding the potential replacement of medical professionals by AI.

Table 2. Semi-Structured Interview/Focus Group Discussion Outlines.

Data Analysis

Data analysis in this study was conducted through a systematic approach, employing thematic analysis (Figure 1). Since the interviews were conducted in Bangla, the transcripts were translated into English with the assistance of a bilingual expert. The research team immersed itself in the data through repeated readings, a process chosen for its ability to reveal nuanced meanings and contextual insights. Following this, initial codes were developed to identify essential concepts and recurring patterns, which were then structured into themes and sub-themes. This analytical process entailed a continuous cycle of review, refinement, and interpretation, which aimed to ensure that no significant insights were overlooked and that emerging themes accurately reflected the richness of the data. The themes were then contextualized within the chosen theoretical framework to deepen the understanding of the study's context and phenomena. To support a rigorous and methodical examination of the data, qualitative data analysis relied on NVivo software.
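As a rough illustration of how coded excerpts roll up into sub-themes and themes, the sketch below groups respondent identifiers under the first theme reported in the Results. It is not the NVivo workflow used in the study; the function name and data layout are hypothetical, and the respondent-to-sub-theme pairs are simply those attached to the quotations reported under Theme 1.

```python
from collections import defaultdict

# (respondent ID, sub-theme) pairs, taken from the quotations reported under Theme 1.
CODED_EXCERPTS = [
    ("R10", "Anxiety About Job Displacement"),
    ("FGR23", "Anxiety About Job Displacement"),
    ("FGR31", "Anxiety About Job Displacement"),
    ("R4", "Anxiety About Job Displacement"),
    ("FGR16", "Economic Stability and Livelihood"),
    ("FGR27", "Economic Stability and Livelihood"),
    ("FGR20", "Economic Stability and Livelihood"),
    ("FGR29", "Economic Stability and Livelihood"),
    ("FGR30", "Technological Unemployment"),
    ("FGR28", "Technological Unemployment"),
    ("FGR14", "Technological Unemployment"),
]

# Sub-theme to main-theme mapping for Theme 1 only.
SUBTHEME_TO_THEME = {
    "Anxiety About Job Displacement": "Job Security and Economic Concerns",
    "Economic Stability and Livelihood": "Job Security and Economic Concerns",
    "Technological Unemployment": "Job Security and Economic Concerns",
}


def build_theme_tree(excerpts, subtheme_to_theme):
    """Group respondent IDs as theme -> sub-theme -> [respondents]."""
    tree = defaultdict(lambda: defaultdict(list))
    for respondent, subtheme in excerpts:
        theme = subtheme_to_theme[subtheme]
        tree[theme][subtheme].append(respondent)
    # Convert nested defaultdicts to plain dicts for readable output.
    return {theme: dict(subs) for theme, subs in tree.items()}


theme_tree = build_theme_tree(CODED_EXCERPTS, SUBTHEME_TO_THEME)
```

A structure of this kind makes it easy to check which respondents support each sub-theme during the iterative review of themes.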

Figure 1. Concerns about the replacement of medical professionals by artificial intelligence.

Ethical Considerations

Ethical considerations were of paramount importance throughout this research. All participants were provided with comprehensive information about the research's objectives and potential risks, and their written informed consent was obtained before data collection commenced. To protect the privacy of the participants, their identities were kept confidential, and data were de-identified during the analysis process. During the de-identification process, personally identifiable information such as names, addresses, and contact details was removed, and unique identifiers or codes were assigned to each participant, ensuring anonymity. This de-identification step was crucial in safeguarding the participants’ sensitive information. Data security measures were put in place to protect the confidentiality and integrity of participant information. These measures included encryption protocols for data storage, restricted access to authorized personnel only, and regular security audits to identify and address potential vulnerabilities. The entire research team underwent training on data security protocols to minimize the risk of unauthorized access or data breaches. Participants were explicitly informed of their autonomy and right to withdraw from the study at any point without facing any adverse consequences. This research underwent ethical review by the ethical review board of Mahbubur Rahman Memorial Hospital & Nursing Institution to ensure compliance with established ethical standards and regulations (approval number: HRM/MRMH/335/16/01/2023).
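The de-identification step described above (removing direct identifiers and assigning participant codes) can be sketched as follows. This is a minimal illustration under stated assumptions, not the procedure the research team used: the field names in `PII_FIELDS`, the record layout, and the `deidentify` function are hypothetical, and the `R`/`FGR` prefixes merely mirror the respondent labels that appear in the Results.

```python
# Hypothetical names for fields holding direct identifiers.
PII_FIELDS = ("name", "address", "phone", "email")


def deidentify(records, prefix="R"):
    """Strip direct identifiers and assign sequential participant codes.

    Returns (cleaned_records, linkage_key). The linkage key maps each code
    back to the removed identifiers and would need to be stored separately
    under restricted access.
    """
    cleaned, linkage_key = [], {}
    for index, record in enumerate(records, start=1):
        code = f"{prefix}{index}"
        # Keep the removed identifiers only in the separate linkage key.
        linkage_key[code] = {f: record[f] for f in PII_FIELDS if f in record}
        # Copy every non-identifying field and attach the participant code.
        safe = {k: v for k, v in record.items() if k not in PII_FIELDS}
        safe["participant_id"] = code
        cleaned.append(safe)
    return cleaned, linkage_key
```

Calling `deidentify(interview_records)` would yield codes `R1`, `R2`, and so on, while `deidentify(focus_group_records, prefix="FGR")` would yield `FGR1`, `FGR2`, and so on. Removing structured fields does not remove identifying details mentioned inside free-text transcripts, which would still require manual review.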

Rigor and Reflexivity

To bolster the rigor and validity of our research, we implemented various strategies. Triangulation was employed by utilizing multiple data sources, including interviews and focus group discussions, to enhance the credibility and trustworthiness of our findings. Member checking was embraced, a process where participants were actively involved in reviewing and validating the research findings. They were provided with summaries of the results and interpretations, allowing them to offer insights, corrections, or additional perspectives, thereby contributing to the accuracy and validity of the study. This iterative feedback loop with participants added an extra layer of scrutiny to ensure that the findings resonated with their experiences and perspectives (Motulsky, 2021). Reflexivity was a constant practice as our research team engaged in continuous self-reflection, recognizing and addressing their own biases and perspectives to minimize researcher bias. We also sought external input through peer debriefing, as colleagues and peers not directly involved in the research reviewed the study design, data analysis, and interpretation, providing an additional layer of objectivity and validation to our study.

Results

Theme 1: Job Security and Economic Concerns

Sub-Themes

Anxiety About Job Displacement

In this qualitative investigation, healthcare workers expressed concerns about AI replacing medical professionals, highlighting anxiety about job displacement. Experienced individuals feared job obsolescence, devaluation of their expertise, and job security uncertainty due to AI advancements. This raised significant worries about the future of their healthcare careers, contrasting their dedication to patient care with the looming threat of technology replacing human roles, leaving them sleepless and uncertain about what lies ahead (Table 3).

I’ve been a nurse for 15 years, and the thought of AI taking over some of our tasks terrifies me. Will I still have a job in the future? It's a constant worry that keeps me up at night. (R10)

We’ve heard stories about AI systems diagnosing patients with incredible accuracy. It's great for medicine, but what about our jobs? I fear I’ll become obsolete. (FGR23)

Job displacement due to AI is a real concern. As a healthcare worker, I wonder if my years of training and expertise will be devalued by machines. (FGR31)

I think the uncertainty about job security is affecting our morale. We’re dedicated to patient care, and AI's rise poses a significant threat to our profession. (R4)

Table 3. Thematic Analysis Including Main Themes, Sub-Themes, and Codes.

Economic Stability and Livelihood

This study uncovered deep-seated concerns among healthcare workers regarding the integration of AI into their profession. Participants, voicing genuine apprehension, highlighted the inseparable connection between their livelihoods and the stability of their roles in the face of AI implementation. Their worries extended to their significant investments in medical education, with fears of potential disruptions to career paths and financial well-being. The findings underscored the urgent need for assurances and financial support to navigate the potential impact of AI on their professional lives.

My livelihood is tied to my job in healthcare. If AI takes over, what will happen to my family's financial stability? It's a genuine concern. (FGR16)

We’ve invested so much time and money in our medical education. AI could disrupt our entire career path and financial well-being. (FGR27)

I’ve got loans to pay off, and a family to support. AI replacing us would have a significant impact on our ability to make ends meet. (FGR20)

If AI takes over, will there be financial support to transition to new roles or retrain for different careers? We need assurances about our financial future. (FGR29)

Technological Unemployment

Healthcare workers highlighted concerns about technological unemployment, that is, the displacement of human workers by technological advancements such as AI. They feared becoming technologically unemployed and emphasized the need to address this challenge proactively.

As technology advances, we face the risk of becoming technologically unemployed. It's a challenge we need to address proactively. (FGR30)

The fear of technological unemployment is widespread in our profession. It's time to have a comprehensive plan for the future. (FGR28)

We need to acknowledge the potential for technological unemployment and prepare for a future where AI plays a larger role in healthcare. (FGR14)

Theme 2: Trust and Acceptance of AI

Sub-Themes

Belief in AI's Capabilities

Healthcare workers marveled at AI's capabilities, finding its diagnostic accuracy and data analysis incredible. They embraced it as a valuable tool, emphasizing that it enhances rather than replaces their practice (Table 3).

I’m amazed at what AI can do. Its diagnostic accuracy and data analysis capabilities are incredible. We should embrace it as a valuable tool. (FGR21)

I’ve seen firsthand how AI can assist in decision-making. It's a game-changer, and we should trust in its abilities. (FGR33)

AI offers us powerful tools for diagnosis and treatment. We need to embrace its capabilities and integrate them into our practice. (FGR14)

Belief in AI's capabilities can transform our perspective. It's not about replacing us; it's about enhancing what we do. (FGR27)

Acceptance of AI as a Complementary Tool

In our study, participants stressed the importance of viewing AI as a valuable partner, not a replacement for medical professionals. They emphasized the potential for AI to enhance their skills and improve patient care, highlighting the need to welcome it as a valuable companion on the frontlines of healthcare.

We need to shift our perspective and see AI as a valuable partner. Together, we can achieve better healthcare outcomes. (FGR19)

I see AI as a partner, not a replacement. It can complement our skills and improve patient care if we embrace it. (R4)

AI can work alongside us, enhancing our capabilities and improving healthcare. We should welcome it as a valuable companion. (R13)

Distrust in AI's Decision-Making

In this research, participants expressed concerns about the ability to trust AI in making crucial clinical decisions, emphasizing the need for transparency and accountability in AI algorithms to address these legitimate worries within the healthcare profession.

Distrust in AI's decision-making is a real issue. We need transparency and accountability in how algorithms make clinical decisions. (FGR32)

I’m concerned about AI's decision-making. Can we trust it to make the right choices for our patients? It's a legitimate worry. (R5)

Theme 3: Ethical and Moral Dilemmas

Sub-Themes

Moral Concerns About Replacing Medical Professionals

Healthcare workers in the study voiced moral concerns about AI replacing medical professionals, expressing apprehensions about eroding the human touch and empathy (Table 4).

Moral concerns weigh heavily on me. The idea of AI taking over patient care raises questions about the human touch, empathy, and the doctor–patient relationship. (FGR32)

Our moral obligation as healthcare providers is to put patients’ well-being first. Replacing medical professionals with AI challenges that duty, and it's a moral dilemma. (FGR15)

As a healthcare worker, I’m deeply torn about AI replacing human professionals. On one hand, it can improve efficiency, but on the other, there's a moral duty to provide human care and compassion. (FGR18)

Table 4. Thematic Analysis Including Main Themes, Sub-Themes, and Codes.

Ethical Decision-Making in AI Implementation

In this research, participants emphasized the ethical complexities of integrating AI in healthcare and called for a robust framework. They stressed the need for AI to operate ethically, respecting patient autonomy, rights, and values. The consensus was that a systematic approach to decision-making is crucial for safeguarding patients and maintaining trust in the healthcare profession amid advancing technological interventions.

Replacing human judgment with AI has ethical implications that demand our attention. We need to ensure that AI operates ethically and respects patient autonomy. (FGR29)

Making ethical choices in AI integration requires a strong framework. We must guarantee that AI serves patients while respecting their rights and values. (R7)

I believe the ethical dimensions of AI in healthcare are intricate. We need a systematic approach to decision-making that safeguards patients and maintains trust in our profession. (FGR26)

Balancing Efficiency and Human Values

In the study, healthcare workers grappled with reconciling efficiency and human values as AI takes on tasks performed by medical professionals. Participants emphasized harnessing AI's efficiency while safeguarding compassion and patient-focused care.

Balancing efficiency and human values is a constant struggle. We should harness AI's potential for efficiency without compromising our core values. (FGR17)

We should leverage AI to streamline tasks and improve efficiency, but it must align with our commitment to compassionate and patient-focused care. (FGR31)

Theme 4: Quality of Patient Care

Sub-Themes

Impact of AI on Patient Outcomes

Healthcare workers cautiously embraced AI's impact on patient outcomes. They recognized its potential for better results but emphasized safeguarding the human element of care. Patient perceptions varied (Table 4).

I’m cautiously optimistic about AI's impact. It can augment our decision-making, leading to better patient outcomes. But we need to ensure it doesn’t compromise the human element of care. (R9)

Patients are curious about AI, and some are worried. But when they see the tangible improvements in their health due to AI-driven care, they’re often relieved and grateful. (FGR14)

Concerns About Diagnostic Accuracy

Healthcare workers expressed reservations about AI's diagnostic accuracy, citing concerns that false results could cause stress or missed diagnoses. These findings point to the need to address such concerns for optimal patient care.

I appreciate AI's speed, but I’m concerned about its accuracy. False positives and negatives could lead to unnecessary stress or missed diagnoses for our patients. (FGR20)

We’ve seen AI's diagnostic potential but concerns about its limitations persist. We need to ensure it doesn’t lead to incorrect treatment decisions for patients. (R6)

Strategies to Enhance Patient Care with AI

Healthcare professionals in this study discussed strategies for integrating AI to enhance patient care. Participants emphasized embracing AI for automatable tasks while preserving the human touch in healthcare.

In our hospital, the strategy is to embrace AI for tasks that can be automated, so we can spend more quality time with patients, focusing on their unique needs and concerns. (FGR24)

We’ve been developing strategies to make AI integration seamless. It's about leveraging technology to improve patient care, not replace the human touch. (FGR16)

Theme 5: Workforce Role Redefinition and Training

Sub-Themes

Redefining Healthcare Roles in the AI Era

Healthcare workers reflected on AI's impact on their roles, describing a transition from traditional caregivers to data interpreters. They emphasized collaboration with AI and adapting to new responsibilities (Table 5).

We’re at a crossroads in healthcare. AI is reshaping our roles. We’re no longer just caregivers; we’re becoming data interpreters and technology experts, which is a big shift. (R13)

The traditional roles of doctors and nurses are expanding. We now have to collaborate with AI tools and adapt to new responsibilities in patient care. (FGR22)

Healthcare roles are no longer static. AI is forcing us to be more versatile and tech-savvy, challenging us to broaden our skillset and adapt to new responsibilities. (FGR26)

Table 5. Thematic Analysis Including Main Themes, Sub-Themes, and Codes.

Training Needs for AI Integration

In the study, healthcare workers emphasized the importance of structured training for successful AI integration. Participants recognized that seamlessly incorporating AI into daily routines was vital. They underscored the need to equip professionals with the knowledge and skills required to use AI tools effectively.

The healthcare workforce needs structured training to adapt to AI. Understanding how to integrate AI into our daily routines will be crucial for our success. (R11)

Training is essential to harness the power of AI. We must equip healthcare professionals with the knowledge and skills required to use these tools effectively. (FGR25)

Preparing the Workforce for AI Changes

In our qualitative study, healthcare professionals emphasized a proactive approach to preparing the workforce for AI-driven changes. Participants stressed embracing change, staying ahead, and fostering innovation for optimal patient care. Preparedness for AI's impact remains crucial in healthcare.

We must prepare the workforce by encouraging a proactive approach to AI. We can’t resist change; we need to embrace it and make it work for us and our patients. (R2)

Healthcare workers should be proactive in preparing for AI changes. It's about staying ahead of the curve, embracing innovation, and ensuring that we continue to provide the best care possible. (FGR28)

Adapting to Changing Skill Requirements

Healthcare professionals emphasized adapting to evolving skill requirements in an AI-driven healthcare environment. They recognized the need for expanded skillsets, including data interpretation and AI interaction, stressing the importance of embracing technology and developing data literacy for comprehensive patient care. Adapting to these changes remains essential in the healthcare field.

Our skillset is expanding to include data interpretation and AI interaction. It's essential to adapt and keep our knowledge up to date for the benefit of our patients. (R1)

Adapting to new skill requirements involves embracing technology and data literacy. We need to be well-rounded professionals in an AI-driven healthcare world. (R4)

Theme 6: Patient–Provider Relationships

Sub-Themes

Enhancing the Patient–Provider Bond with AI

Participants emphasized the evolving nature of patient–provider interactions with AI. They highlighted the importance of ensuring technology enhances care without overshadowing the human element. While acknowledging AI's potential to streamline processes, concerns were raised about potential depersonalization. However, some participants viewed AI as a supportive tool, enriching the patient–provider bond and allowing more dedicated time for addressing both emotional and medical needs (Table 5).

“Patient–provider interactions have evolved with AI. We need to ensure that technology enhances our ability to care for patients and doesn’t overshadow the human element.” (R8)

“The introduction of AI has altered the dynamics of patient–provider interactions. While it can streamline processes, we must ensure it doesn’t depersonalize our relationship with patients.” (R12)

“The patient–provider bond can be enriched with AI as a supportive tool. It allows us to allocate more time to our patients, addressing their emotional and medical needs.” (R10)

Communication Challenges with AI

Healthcare professionals in this study identified communication challenges in patient–AI interactions. Concerns were voiced about potential misinterpretations causing anxiety. Participants emphasized the importance of effective, empathetic AI–patient communication to avoid distress or ambiguity.

“There are communication gaps when patients interact with AI. Misinterpretations and misunderstandings can lead to unnecessary anxiety and confusion.” (R1)

“Our challenge is ensuring that AI communicates effectively with patients, understanding their needs and providing accurate information without creating confusion or anxiety.” (R6)

Theme 7: Healthcare Policy and Regulation

Sub-Themes

Regulatory Frameworks for AI in Healthcare

In this study, healthcare workers emphasized the need for robust regulatory frameworks for AI in healthcare, stressing clear guidelines for patient safety and quality standards. They expressed concerns about the absence of proper regulations and recognized their duty to advocate for policies safeguarding patient rights and ensuring ethical AI use in healthcare (Table 5).

“Establishing strong regulatory frameworks for AI in healthcare is vital. We need clear guidelines to ensure patient safety and quality standards in an AI-driven healthcare landscape.” (R2)

“The absence of proper regulations is concerning. It's our duty to advocate for policies that safeguard patient rights and ethical AI use within healthcare.” (R11)

Legal Implications of AI in Healthcare

Healthcare workers emphasized the need to address AI's legal implications and advocated for protective policies. They highlighted increasing legal complexity and the need for awareness and compliance. Participants also called for clarity on who bears responsibility when an AI system makes an error.

“As healthcare professionals, we can’t ignore the legal aspects of AI. It's essential to understand the implications and advocate for policies that protect both patients and practitioners.” (FGR15)

“Legal implications in healthcare become more intricate with AI. As healthcare workers, we need to be aware of the potential legal risks associated with AI use and ensure we’re in compliance.” (FGR27)

“AI raises significant legal questions. Who's responsible if an AI system makes a wrong diagnosis or treatment recommendation? We need clarity on these issues.” (

Discussion

This qualitative investigation of healthcare workers’ concerns about the potential replacement of medical professionals by artificial intelligence (AI) provides valuable insights into the multifaceted challenges and opportunities associated with AI in healthcare.

The concerns raised by healthcare workers about job security and economic stability align with previous research (Jain et al., 2021; Richardson et al., 2021). The pervasive fear of technological unemployment across various industries, including healthcare, is well-documented (Chen et al., 2022). This study reinforces worries about job obsolescence and the depreciation of human expertise due to AI and automation (Gruetzemacher et al., 2020). However, it is crucial to recognize the multifaceted impact of AI on job roles. While some tasks may become automated, certain studies suggest that AI introduces new opportunities for healthcare professionals. For example, AI can empower healthcare professionals to take on roles in AI development, where they contribute to the creation and improvement of AI algorithms (Meskó & Görög, 2020). Additionally, oversight roles involve ensuring the ethical and efficient use of AI in healthcare settings. Maintenance roles, too, become critical, encompassing tasks such as troubleshooting, updates, and ensuring the optimal functioning of AI systems (Naik et al., 2022). This research emphasized that the fear of technological unemployment should be considered within a broader context. AI's potential extends beyond displacement, offering avenues for professionals to engage in novel tasks that contribute to the development, management, and supervision of AI systems in healthcare (Chavda et al., 2023; Rajpurkar et al., 2022). Recognizing this transformative potential is essential for understanding the evolving landscape of healthcare delivery amid AI integration.

This study revealed healthcare workers’ belief in AI's capabilities, aligning with the study by Formosa et al. (2022) that emphasizes the considerable potential of AI to enhance decision-making processes and elevate the overall quality of patient care. However, the expressed distrust in AI's decision-making underscores the need for transparency and accountability in AI algorithms (Siala & Wang, 2022). A lack of transparency can erode trust in AI systems, as seen in other industries such as finance and autonomous vehicles (Cunneen, 2023). Therefore, this study's findings underline the necessity of ensuring that AI in healthcare is developed and deployed in an ethical and transparent manner to garner trust and acceptance among healthcare professionals. Additionally, the theme of accepting AI as a complementary tool aligns with the idea that AI should be viewed as an aid rather than a replacement, reflecting the potential for AI to enhance healthcare practices rather than replace them (Sharma et al., 2023).

The moral concerns raised by healthcare workers regarding AI replacing medical professionals echo ethical considerations that have been central to the discussion on AI in healthcare. The erosion of human touch, empathy, and the doctor–patient relationship in patient care are valid concerns that underline the ethical quandary faced by healthcare providers (Wadmann et al., 2023). Existing research also highlights the importance of preserving human values and compassion in healthcare, even as AI becomes more integrated into the field (Morrow et al., 2023; Sauerbrei et al., 2023). The need to balance efficiency with human values, as emphasized in this study, is a crucial point of debate. Healthcare professionals must strive to strike a balance that optimizes healthcare delivery while preserving the essential human qualities that patients value (Hannawa et al., 2022).

The cautious optimism expressed by healthcare workers regarding AI's impact on patient outcomes aligns with the potential benefits that AI can offer in terms of improved decision-making and healthcare delivery. While healthcare workers express optimism about AI's positive impact, concerns about diagnostic accuracy persist, reflecting the nuanced perspectives within the healthcare community (Lau, 2024). These concerns resonate with existing research that emphasizes the importance of AI's accuracy and safety in patient care (Lång et al., 2023). Ensuring that AI's diagnostic capabilities align with the highest standards is crucial to prevent unnecessary stress or missed diagnoses for patients (El Zoghbi et al., 2023). The strategies to enhance patient care through AI integration reflect a growing consensus that AI can streamline routine tasks, allowing healthcare professionals to focus on personalized, patient-centered care (Osama et al., 2023; Zarella et al., 2023).

The evolving nature of healthcare roles in the AI era, highlighted in this study, is consistent with the changing landscape of work across industries due to automation and AI (Mannuru et al., 2023). The need for structured training for AI integration underscores the importance of preparing the healthcare workforce for these changes (Czaja & Ceruso, 2022; Rony et al., 2024b). Research has suggested that a lack of training can hinder AI adoption in healthcare, and healthcare professionals should be equipped with the necessary knowledge and skills to effectively utilize AI tools (Choudhury & Asan, 2022). The idea of a proactive approach in preparing the workforce for AI-driven changes aligns with the broader notion that embracing innovation and staying ahead of technological advancements is essential for the future of healthcare (Stasevych & Zvarych, 2023).

Moreover, this study revealed that the evolving impact of AI on patient–provider interactions is an essential aspect of the discussion surrounding AI in healthcare. The concerns about communication challenges with AI reflect a potential source of friction between patients and AI-driven healthcare systems (Redrup Hill et al., 2023). Effective AI–patient communication is vital to ensure patients’ needs are accurately understood and met. The potential of AI to enhance the patient–provider bond is consistent with the idea that AI can be a supportive tool that allows healthcare professionals to allocate more time to patients and provide more personalized care (Javaid et al., 2022).

Furthermore, this research emphasized the need for robust regulatory frameworks and clear guidelines for AI in healthcare, echoing calls for ethical and legal standards in AI integration. Existing research has also emphasized the importance of regulatory oversight to ensure patient safety and ethical use of AI (Meskó & Topol, 2023). The complexity of legal implications, especially concerning liability in cases of AI errors, underscores the need for clarity and legal frameworks that address these challenges (Silva et al., 2022). Additionally, Redrup Hill et al. (2023) highlight the need for interdisciplinary collaboration in addressing liability challenges. They argue that legal frameworks should be developed with input from experts in both AI and healthcare, ensuring a nuanced understanding of the potential risks and consequences associated with AI errors. This interdisciplinary perspective promotes a more holistic and effective approach to liability that considers the unique intricacies of both fields.

Implications of This Study

This study delves into healthcare professionals’ perspectives, revealing concerns that should inform policy decisions in AI integration. It emphasizes the importance of a balanced approach to address worries about job security and the human touch in patient care. AI should complement, not replace, healthcare professionals. Transparency, accountability, and ethical considerations in AI systems are crucial, addressing expressed distrust among healthcare workers. Clear guidelines and regulations are needed for responsible AI use. Building trust is key for successful AI adoption. The study's insights extend to workforce training, emphasizing the need for healthcare professionals to acquire skills to collaborate effectively with evolving AI systems. Training programs and educational initiatives are vital to prepare healthcare workers for the changing healthcare landscape shaped by AI.

Strengths and Limitations

The study demonstrates strengths in its comprehensive exploration of healthcare workers’ concerns regarding AI integration in healthcare. Utilizing a qualitative approach, it captures diverse perspectives through individual interviews and focus group discussions. The inclusion of established theoretical frameworks like the Technology Acceptance Model enhances the study's theoretical foundation. Moreover, purposive sampling from various healthcare settings supports a broad understanding of the issue. The identification of seven key themes through thematic analysis provides structured insights into the multifaceted concerns surrounding AI adoption in healthcare, offering valuable implications for policymakers. However, the study's limitations encompass methodological and contextual factors. While the descriptive design sheds light on healthcare workers’ AI concerns in Dhaka, Bangladesh, it is crucial to acknowledge the context-specific nature of the findings. The unique characteristics of the healthcare system in Dhaka may not fully represent healthcare workers’ experiences in other regions. Excluding healthcare professionals with less than one year of experience might overlook pertinent perspectives. Additionally, participants’ responses could be influenced by their specific experiences or backgrounds, which could affect the credibility of the research. It is important to note that individual biases, such as personal beliefs, attitudes, or prior exposure to AI technologies, may shape participants’ responses and perceptions. Despite efforts to mitigate bias, some subjectivity may persist, emphasizing the need for careful consideration of the study's limitations.

Recommendations for Future Research

Several recommendations for future research emerge from healthcare workers’ concerns about potential AI-driven replacement. Research should focus on comprehensively assessing AI's impact on patient outcomes, extending beyond diagnostic and treatment accuracy to broader implications for well-being and satisfaction. The study also underscores the urgent need for ethical guidelines and robust regulatory frameworks that balance innovation with ethical and legal considerations in AI decision-making. Future research should target strategies to equip the healthcare workforce with the knowledge necessary for seamless collaboration with AI, addressing specific training needs. Exploring enhanced collaboration between healthcare professionals and AI, including the development of tools for effective communication and teamwork, is paramount. Research initiatives should also explore innovative methods to strengthen AI's role in patient–provider interactions by streamlining tasks and improving communication. Understanding the factors that influence trust in AI is vital, as is developing strategies to foster that trust, which is a cornerstone of successful AI integration. Equally important is an in-depth exploration of AI's cost-effectiveness, including its financial implications. Lastly, research should probe the long-term societal and economic repercussions of AI potentially replacing specific medical roles, addressing job displacement and forecasting shifts in the healthcare labor market, which are instrumental for optimizing the efficiency and quality of the healthcare sector.

Conclusions

This study has revealed a landscape rich with multifaceted challenges and opportunities. Job security and economic concerns underscore the need for a thoughtful approach to AI integration, one that seeks a balance between redefining workforce roles and preserving the human element in patient care. Trust and acceptance of AI are central to the successful adoption of this technology, emphasizing the importance of transparent, ethical, and regulated AI systems. The study further highlights the potential of AI to enhance the quality of patient care by streamlining roles, necessitating robust training for healthcare professionals. Patient–provider relationships and the intricacies of healthcare policy and regulation also play pivotal roles in guiding the responsible implementation of AI in healthcare. This investigation underscores that while concerns exist, AI has the potential to be a transformative force that, when harnessed with care and ethical considerations, can enhance the healthcare sector for the benefit of both professionals and patients.

Footnotes

Acknowledgments

We express our gratitude to all the participants of the study who patiently answered all the questions and shared their valuable responses.

Authors’ Contribution

Conceptualization by Moustaq Karim Khan Rony, Mst. Rina Parvin. Data curation by Moustaq Karim Khan Rony, Md. Wahiduzzaman, Shuvashish Das Bala. Formal analysis by Moustaq Karim Khan Rony, Mst. Rina Parvin, Ibne Kayesh. Investigation by Moustaq Karim Khan Rony, Mitun Debnath, Shuvashish Das Bala. Methodology by Moustaq Karim Khan Rony, Md. Wahiduzzaman. Project administration by Moustaq Karim Khan Rony, Mst. Rina Parvin, Mitun Debnath. Resources by Moustaq Karim Khan Rony, Shuvashish Das Bala. Software by Moustaq Karim Khan Rony. Supervision by Moustaq Karim Khan Rony, Ibne Kayesh. Validation by Moustaq Karim Khan Rony, Shuvashish Das Bala. Visualization by Moustaq Karim Khan Rony, Md. Wahiduzzaman. Writing–original draft by Moustaq Karim Khan Rony, Md. Wahiduzzaman, Mitun Debnath. Writing–review and editing by Moustaq Karim Khan Rony, Mst. Rina Parvin, Ibne Kayesh.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.