Abstract

Keywords

Key Points

NLP analysis of large numbers of verbatim patient comments about care experience can identify specific sentiments and themes to target for improvement. The worst-rated experiences are related to communication and relationships. Moderately rated experiences are associated with logistical and process issues.

Introduction

Background

Online review platforms allow patients to describe their experience of medical care. 1 Patients may use online review platforms to learn about clinicians. 2 These platforms provide information about a clinician's training and experience, individual and average numerical ratings, and verbatim patient comments about their experience. The most negative patient experience comments tend to address aspects of interpersonal communication, trust, and relationship. For instance, a retrospective study of patient reviews on two online platforms found that patients were less satisfied when they perceived the clinician as “uncaring” or “disrespectful.” 1 Another study of 1-star online patient reviews found that “bedside manner” was an important factor associated with the lowest patient experience ratings. 3 Indeed, the stories that other patients tell about clinicians may matter more to patients than quantitative or objective information such as training, experience, expertise, awards, or quality and safety metrics. For instance, one study of clinician selection using a simulated rating website containing both subjective and objective information about potential clinicians found that patients trusted subjective ratings and comments more than objective information regarding clinician experience and training. 4

Rationale

Studies to date have often used qualitative research methods to identify themes in verbatim patient comments about their experience, a process that is time-consuming and requires expertise. Natural language processing (NLP) can efficiently analyze large numbers of text comments to identify the themes and categories embedded in them. 5 For instance, one study used NLP to analyze messages sent through an electronic medical record patient portal, compared the results with categories identified manually by medical students and clinicians using a qualitative approach, and found that NLP identified similar communication theme categories. 6 NLP has been used to identify themes in large sets of comments such as Twitter posts, 7 but to our knowledge its use to identify themes in patient experience comments is less explored. In one study, sentiment analysis of patient reviews obtained from a popular physician review website found that words such as “rude” and “wait” were associated with worse experience and “bedside manner” with better experience. 8 In another study of reviews of family physicians and internists gathered from the same platform, sentiment analysis found that topics such as “caring” were associated with higher experience ratings. 9 These preliminary studies demonstrate the potential for NLP to help individual clinicians and entire care units efficiently process large amounts of data about the experience of patients under their care, without the need for specialized training or expertise, in order to identify areas for personal and organizational improvement.

Questions

To further develop the analysis of themes in verbatim patient comments about their experience with a specific clinician or care unit, we used NLP techniques to analyze a set of physician reviews collected through an online consumer rating program from patients who were less than completely satisfied (ratings of 1 to 4 out of 5). We addressed the following questions: (1) Which sentiments are associated with specific patient ratings below the top rating? (2) Which topics are associated with specific numerical ratings?

Method

Data Collection

Institutional review board approval was not needed because all protected health information (PHI) was removed from the data. Ratings of experience from 1 (worst) to 5 (best), along with verbatim text comments describing the experience, were obtained from a commercial enterprise that works with specialty care practices to help measure and manage patient experience. Of 2188 ratings of 4 or lower, 1117 had associated comments.
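The sample selection can be expressed as a simple filter. The Python sketch below assumes each record is a (rating, comment) pair; the record layout and the function name `select_rated_comments` are hypothetical, and the toy records are invented for illustration. Grouping by rating also prepares the separate per-rating comment sets used later for topic modeling.

```python
from collections import defaultdict

def select_rated_comments(records, max_rating=4):
    """Keep ratings 1..max_rating that have a non-empty comment, grouped by rating.

    `records` is assumed (hypothetically) to be an iterable of (rating, comment)
    pairs; a real vendor export may be structured differently.
    """
    by_rating = defaultdict(list)
    for rating, comment in records:
        # drop top ratings and ratings without a usable comment
        if 1 <= rating <= max_rating and comment and comment.strip():
            by_rating[rating].append(comment.strip())
    return dict(by_rating)

# Hypothetical toy records, not study data.
records = [
    (5, "Great visit"),
    (4, "Long wait in the lobby"),
    (4, ""),
    (1, "Doctor did not listen"),
    (3, None),
]
groups = select_rated_comments(records)
print({k: len(v) for k, v in groups.items()})  # {4: 1, 1: 1}
```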

Analysis

We analyzed the 1117 patient ratings of experience between 1 and 4 (on a scale from 1 [worst] to 5 [best]) that had an associated verbatim comment responding to a prompt asking the patient to suggest opportunities for improvement. First, we performed sentiment analysis and regression on the entire dataset to identify linguistic factors associated with the numerical rating. Then, we built separate topic models for each rating value from 1 to 4 to identify the themes associated with it.

Sentiment Analysis

To identify words representing sentiments associated with numerical patient ratings below the top score, we analyzed the verbatim patient comments at each rating level with an NLP program (Linguistic Inquiry and Word Count; LIWC-22). 10 LIWC-22 calculates the proportion of words in a text that correspond to each of 110 distinct linguistic dimensions and psychological processes. The software also produces eight summary variables that represent subgroups of these dimensions, such as words representing positive or negative emotions. We assessed the correlation between the numerical experience rating from 1 to 4 (the dependent variable) and each of the 111 LIWC-22 variables (the independent variables). Variables with a variance inflation factor > 10 or tolerance < 0.1 were considered collinear and were excluded. Variables whose correlations had P < .05 were entered into a multivariable linear regression model 11 (StataCorp. 2021. Stata Statistical Software: Release 17. College Station, TX: StataCorp LLC).
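The screening sequence above (exclude collinear variables, keep variables correlated with the rating at P < .05, then fit a multivariable linear model) can be sketched in Python. This is an illustration under stated assumptions, not the authors' Stata workflow: it assumes the LIWC-22 output has been exported as a numeric matrix (one row per comment, one column per variable) with ratings in a separate vector. The function names, the iterative drop-the-largest-VIF strategy, and the Fisher z approximation used for correlation P values are all assumptions, and the data are synthetic.

```python
import math
import numpy as np

def vif(X):
    """Variance inflation factor per column: VIF_j = 1 / (1 - R^2_j), where
    R^2_j comes from regressing column j on the other columns plus an intercept."""
    n, k = X.shape
    out = np.empty(k)
    for j in range(k):
        target = X[:, j]
        others = np.column_stack([np.ones(n), np.delete(X, j, axis=1)])
        beta, *_ = np.linalg.lstsq(others, target, rcond=None)
        resid = target - others @ beta
        ss_tot = np.sum((target - target.mean()) ** 2)
        if ss_tot == 0:
            out[j] = np.inf  # constant column: treat as fully collinear
            continue
        r2 = 1.0 - (resid @ resid) / ss_tot
        out[j] = np.inf if r2 >= 1.0 else 1.0 / (1.0 - r2)
    return out

def drop_collinear(X, threshold=10.0):
    """Repeatedly drop the column with the largest VIF until every VIF <= threshold.
    Tolerance = 1 / VIF, so VIF > 10 is the same rule as tolerance < 0.1."""
    cols = list(range(X.shape[1]))
    while len(cols) > 1:
        v = vif(X[:, cols])
        worst = int(np.argmax(v))
        if v[worst] <= threshold:
            break
        cols.pop(worst)
    return cols

def correlation_screen(X, y, alpha=0.05):
    """Indices of columns whose correlation with y has a two-sided p < alpha.
    The p value uses the Fisher z approximation, not an exact t test."""
    n = len(y)
    keep = []
    for j in range(X.shape[1]):
        if np.std(X[:, j]) == 0:
            continue  # correlation is undefined for a constant column
        r = float(np.corrcoef(X[:, j], y)[0, 1])
        r = max(min(r, 0.999999), -0.999999)  # keep atanh finite
        z = math.atanh(r) * math.sqrt(n - 3)
        if math.erfc(abs(z) / math.sqrt(2)) < alpha:
            keep.append(j)
    return keep

def ols(X, y):
    """Least-squares fit with an intercept; returns [intercept, b_1, ..., b_k]."""
    design = np.column_stack([np.ones(len(y)), X])
    beta, *_ = np.linalg.lstsq(design, y, rcond=None)
    return beta

# Synthetic data (not study data): column 1 nearly duplicates column 0,
# column 2 is unrelated noise, and y depends only on column 0.
rng = np.random.default_rng(0)
n = 500
tone = rng.normal(size=n)
X = np.column_stack([tone, tone + rng.normal(scale=0.01, size=n), rng.normal(size=n)])
y = 2.5 + 0.8 * tone + rng.normal(scale=0.5, size=n)

cols = drop_collinear(X)  # removes one of the two near-duplicate columns
kept = [cols[i] for i in correlation_screen(X[:, cols], y)]
beta = ols(X[:, kept], y)
```

Because tolerance is the reciprocal of the variance inflation factor, the two exclusion criteria stated in the text select exactly the same variables; reporting both is a convention rather than two separate filters.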

Topic Modeling

To identify and categorize word clusters (ie, topics) within the comments for each rating from 1 to 4 separately, we applied the Meaning Extraction Method (MEM),12,13 an NLP technique often referred to as “topic modeling.” MEM identifies the core messages, or “themes,” in language data by finding words that often appear together in text. For example, the co-occurrence of words like “teacher,” “classroom,” and “student” in a sentence may indicate the topic of “education.” Identifying common word groupings reveals the predominant topics in each set of rating-specific comments (Appendix 1).
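MEM, as usually described, builds a binary matrix recording which common words appear in each comment and then applies principal component analysis to it; words that load highly on the same component are read as one theme. The Python sketch below illustrates that idea under stated assumptions: the tokenizer, the stopword list, the minimum document-frequency threshold, the use of varimax rotation, and the fixed number of components are illustrative choices, not the settings reported in Appendix 3, and the comments are invented.

```python
import re
import numpy as np

# Hypothetical stopword list; a real analysis would use a fuller one.
STOPWORDS = {"a", "an", "and", "the", "in", "to", "was", "did", "not", "my", "i", "of"}

def binary_matrix(comments, min_doc_frac=0.05):
    """Comment-by-word matrix of 0/1 word presence for sufficiently common words."""
    docs = [set(re.findall(r"[a-z']+", c.lower())) - STOPWORDS for c in comments]
    n = len(docs)
    vocab = []
    for w in sorted({w for d in docs for w in d}):
        df = sum(w in d for d in docs)
        # keep words used in enough comments, but not in every one (zero variance)
        if df / n >= min_doc_frac and df < n:
            vocab.append(w)
    M = np.array([[1.0 if w in d else 0.0 for w in vocab] for d in docs])
    return M, vocab

def varimax(L, max_iter=100, tol=1e-6):
    """Varimax rotation of a loading matrix (words x components)."""
    p, k = L.shape
    R = np.eye(k)
    d = 0.0
    for _ in range(max_iter):
        LR = L @ R
        u, s, vt = np.linalg.svd(L.T @ (LR ** 3 - LR @ np.diag(np.sum(LR ** 2, axis=0)) / p))
        R = u @ vt
        if np.sum(s) < d * (1 + tol):
            break
        d = np.sum(s)
    return L @ R

def mem_topics(comments, n_components=2, top_n=3, min_doc_frac=0.05):
    """PCA on the word-by-word correlation matrix, varimax-rotated; returns the
    top_n words (by absolute loading) for each component."""
    M, vocab = binary_matrix(comments, min_doc_frac)
    C = np.corrcoef(M, rowvar=False)
    eigvals, eigvecs = np.linalg.eigh(C)  # eigenvalues in ascending order
    order = np.argsort(eigvals)[::-1][:n_components]
    loadings = eigvecs[:, order] * np.sqrt(eigvals[order])
    rotated = varimax(loadings)
    return [[vocab[i] for i in np.argsort(-np.abs(rotated[:, c]))[:top_n]]
            for c in range(n_components)]

# Invented example comments, not study data.
comments = [
    "long wait in the lobby",
    "the wait was long",
    "waited a long time in the lobby",
    "doctor did not listen to my concerns",
    "the doctor did not listen",
    "felt the doctor ignored my concerns",
]
M, vocab = binary_matrix(comments, min_doc_frac=0.3)
topics = mem_topics(comments, n_components=2, top_n=3, min_doc_frac=0.3)
print(vocab)
print(topics)
```

The code returns only word lists; topic labels such as “Wait” are assigned by researchers who read the high-loading words for each component.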

Results

Which Sentiments are Associated with Patient Ratings of Less Than Top-Rated Experience?

Thirty-three of the 111 candidate LIWC-22 variables correlated with ratings of experience between 1 and 4. In multivariable regression, higher ratings of patient experience were associated with Positive tone, Positive emotions, Negative emotions, Allure, and Future focus (Appendix 2, Table 1). Lower experience ratings were associated with higher values of Word count, Numbers, Negations, Negative tone, Social behavior, Ethnicity, and Motion.

What Topics are Associated with Specific Numerical Ratings?

1-Star Ratings

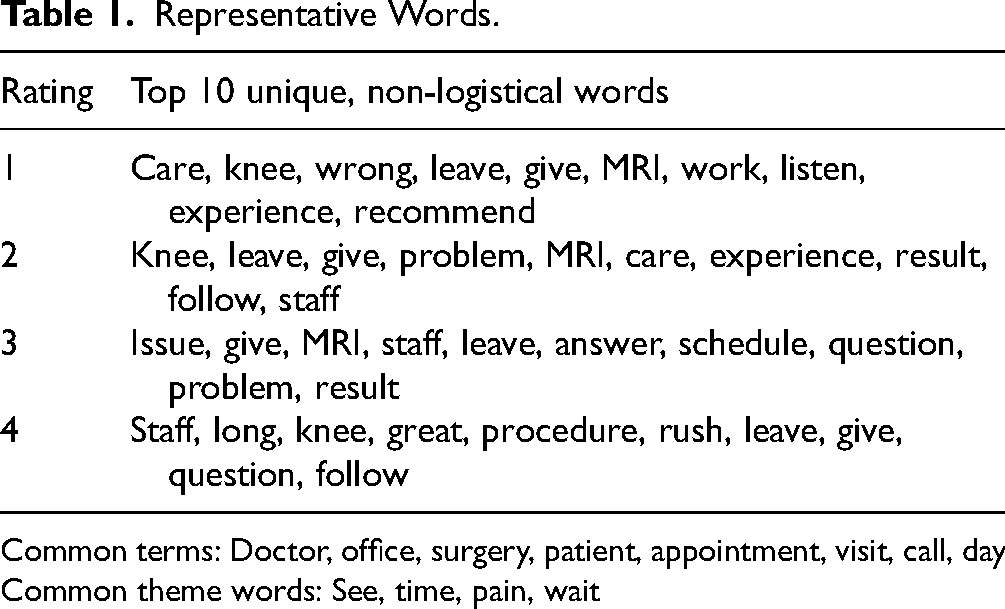

There were 234 one-star ratings, the lowest possible rating, in the sample. Preprocessing yielded 57 unique words, with “doctor,” “see,” and “time” being the most commonly used (Appendix 2, Table 2). Principal component analysis (Appendix 3) yielded a three-component solution in which the most prominent topics were “Listening, Concern, and Collaboration,” “Responsiveness,” and “Wait” (Appendix 2, Table 3; Table 1).

Representative Words.

Common terms: Doctor, office, surgery, patient, appointment, visit, call, day

Common theme words: See, time, pain, wait

2-Star Ratings

There were 210 two-star ratings in the sample. Preprocessing yielded 48 unique words, with “doctor,” “surgery,” and “patient” being the most commonly used (Appendix 2, Table 4), and principal component analysis (Appendix 3) yielded a four-component solution. The most prominent topics were “Responsiveness,” “Logistics,” “Comfort,” and “Listening, Concern, and Collaboration” (Appendix 2, Table 5; Table 1).

3-Star Ratings

There were 281 three-star ratings in the sample. Preprocessing yielded 37 unique words, with “doctor,” “time,” and “see” being the most common (Appendix 2, Table 6), and principal component analysis (Appendix 3) yielded a three-component solution in which “Wait,” “Listening, Concern, and Collaboration,” and “Logistics” were the most prominent topics (Appendix 2, Table 7; Table 1).

4-Star Ratings

There were 388 four-star ratings in the sample, the rating closest to complete satisfaction. Preprocessing yielded 26 unique words, of which “doctor,” “time,” and “surgery” were the most commonly used (Appendix 2, Table 8), and principal component analysis (Appendix 3) yielded a three-component solution: “Logistics,” “Wait,” and “Pain Alleviation” (Appendix 2, Table 9; Table 1).

Discussion

Qualitative analysis of patient statements regarding experience can identify specific areas of improvement for individual clinicians and larger care units, but qualitative research requires resources and expertise that are not always readily available. There is precedent for using NLP to analyze large numbers of patient statements about their experience of care. We measured sentiments and topics in the text comments of patients who were less than fully satisfied with musculoskeletal specialty care (ratings below 5 out of 5). Consistent with prior research, we found that the themes “Listening, Concern, and Collaboration” and “Responsiveness” were associated with the worst ratings, “Logistics” and “Pain Alleviation” were associated with less than completely positive ratings (4 out of 5 stars), and “Wait” appeared with both. The ability of NLP to identify themes for improvement efficiently and effectively gives clinicians and care teams another tool for growth and improvement (Table 2).

Representative Themes.

Limitations

There are several limitations to our study. First, our dataset comes from a limited number of practices. An analysis using data from more practices might generate different results, although our findings are relatively consistent with studies done to date and mostly demonstrate the potential usefulness of NLP. Second, NLP may not reliably identify indirect language such as sarcasm or slang. An NLP tool specifically designed to analyze patient comments about experience could be more accurate.

Which Sentiments are Associated with Patient Ratings of Less Than Perfect Experience?

The finding that sentiment analysis discerned differences between levels of ratings of less than perfect experience demonstrates the utility of NLP in measuring and improving patient experience. These findings support a prior study demonstrating that sentiments associated with negative and positive experience could be used to generate a numerical score without the ceiling effects typical of current quantitative experience measures. 14 Measures of perceived clinician empathy, communication effectiveness, and willingness to recommend often have score distributions over-represented at the top of the scale, a sign that information about variation is lost. While it might be tempting to assume that these ratings represent perfect patient experience, it is more likely that people have suggestions for improvement but are influenced by factors such as social desirability bias to give top scores. 15 Quantitative analysis of patient descriptions of their experience may provide a more comprehensive measure of experience that is less prone to ceiling effects, which could improve the ability to track improvement after strategic interventions.
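A ceiling effect of this kind can be quantified as the share of responses at the highest possible score. The Python sketch below is a minimal illustration with invented ratings; the 15% cut-off is a frequently used convention in questionnaire measurement research, applied here as an assumption rather than a threshold taken from this study.

```python
def ceiling_share(scores, max_score):
    """Fraction of responses at the maximum possible score."""
    if not scores:
        raise ValueError("scores must be non-empty")
    return sum(1 for s in scores if s == max_score) / len(scores)

# Hypothetical ratings on a 1-5 scale, not study data.
ratings = [5, 5, 5, 4, 5, 3, 5, 5, 2, 5]
share = ceiling_share(ratings, max_score=5)
print(share)         # 0.7
print(share > 0.15)  # True: above the assumed 15% convention
```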

What Topics are Associated with Specific Numerical Ratings?

The observation that the lowest ratings are most closely associated with relationship issues such as listening, concern, and collaboration is consistent with prior studies.16,17 For instance, previous studies of 1-star reviews found that most of the lowest ratings were related to relationship issues (bedside manner, effective communication, perceived empathy, trust) and lengthy wait times.16,17 The observation that middle numerical ratings are associated with logistical issues and wait times is in line with a previous study of online reviews from physician profiles on three patient review websites, which found that higher average ratings were associated with punctuality and good logistics.18 The observation that wait times appear in both 1-star and 4-star ratings is consistent with a study of patient reviews from a popular online doctor rating website that found a correspondence between longer wait times and lower patient satisfaction across all metrics. 19 Another study of thousands of online patient reviews associated “timeliness” with higher experience ratings. 20

Conclusion

The ability of NLP to identify themes in large numbers of patient comments has now been tested in several venues, including portal messages, 21 group chat sites, 7 social media posts, 22 and patient surveys regarding experience, 6 and is confirmed in the current study. What once might have been a daunting endeavor (training or hiring someone to conduct semi-structured interviews and code the resulting themes, ie, qualitative research) now has a streamlined alternative in NLP. Testing the utility of large language models, which can perform many NLP tasks such as identifying relevant themes, might be the next step. Given the consistent finding that difficulties with relationship factors such as trust, perceived empathy, and communication effectiveness lie at the heart of experiences that patients rate poorly, individual clinicians and care units can prioritize ensuring that patients feel heard and understood.

Supplemental Material

sj-docx-1-jpx-10.1177_23743735251323677 - Supplemental material for Natural Language Processing of Sentiments Identified in Patient Comments Associated with Less Than Top-Rated Care

Supplemental material, sj-docx-1-jpx-10.1177_23743735251323677 for Natural Language Processing of Sentiments Identified in Patient Comments Associated with Less Than Top-Rated Care by Ali Azarpey, Jacob Thomas, David Ring and Orrin Franko in Journal of Patient Experience

Supplemental Material

sj-docx-2-jpx-10.1177_23743735251323677 - Supplemental material for Natural Language Processing of Sentiments Identified in Patient Comments Associated with Less Than Top-Rated Care

Supplemental material, sj-docx-2-jpx-10.1177_23743735251323677 for Natural Language Processing of Sentiments Identified in Patient Comments Associated with Less Than Top-Rated Care by Ali Azarpey, Jacob Thomas, David Ring and Orrin Franko in Journal of Patient Experience

Supplemental Material

sj-xlsx-3-jpx-10.1177_23743735251323677 - Supplemental material for Natural Language Processing of Sentiments Identified in Patient Comments Associated with Less Than Top-Rated Care

Supplemental material, sj-xlsx-3-jpx-10.1177_23743735251323677 for Natural Language Processing of Sentiments Identified in Patient Comments Associated with Less Than Top-Rated Care by Ali Azarpey, Jacob Thomas, David Ring and Orrin Franko in Journal of Patient Experience

Footnotes

Declaration of Conflicting Interests

The author(s) declared the following potential conflicts of interest with respect to the research, authorship, and/or publication of this article: The authors declare that the company from which the data were acquired is owned by OF.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Statement of Informed Consent

Informed consent for patient information to be published in this article was not obtained because all PHI was removed from the data.

Supplemental Material

Supplemental material for this article is available online.

References
