Abstract

Objective:

To assess whether communication training for house staff via role-playing exercises (1) is well received and (2) improves patient experience scores in house staff clinics.

Methods:

We conducted a pre–post study in which the house staff for 3 adult hospital departments participated in communication training led by trained faculty in small groups. Sessions centered on a published 5-step strategy for opening patient-centered interviews using department-specific role-playing exercises. House staff completed posttraining questionnaires. For 1 month prior to and 1 month following the training, patients in the house staff clinics completed surveys with Clinician and Group Consumer Assessment of Healthcare Providers and Systems (CG-CAHPS) questions regarding physician communication, immediately following clinic visits. Preintervention and postintervention results for top-box scores were compared.

Results:

Forty-four of a possible 45 house staff (97.8%) participated, with 31 (70.5%) indicating that the role-playing exercise increased their perception of the 5-step strategy. No differences in patient responses to CG-CAHPS questions were seen when comparing 63 preintervention surveys to 77 postintervention surveys.

Conclusion:

Demonstrating an improvement in standard patient experience surveys in resident clinics may require ongoing communication coaching and investigation of the “hidden curriculum” of training.

Introduction

Effective and empathetic communication with patients is widely accepted as a core competency of graduate medical education (1). However, most US residency training programs outside primary care do not include formal communication training in their curricula (2). Furthermore, the methods and tools used to assess the effectiveness of formal communication training for medical house staff that an institution may choose to implement are heterogeneous at best, with little consensus among educators (3,4). Of note, many published studies focus on trainee-reported outcomes, as opposed to outcomes that are reported by patients themselves (4,5).

Formal patient experience surveys, most notably those from the Consumer Assessment of Healthcare Providers and Systems (CAHPS) program, have become ubiquitous in the United States, in large part because of a nationwide trend toward linking survey administration and patient scores with insurance reimbursement across a variety of health settings (6). Despite the increasing importance of these patient experience surveys and emerging evidence that implementing communication training for attending physicians may improve patient experience scores (7), few studies have examined the impact that formal communication training for house staff may have on responses to standard experience surveys completed by patients who have received care directly from teaching services and clinics. We thus conducted a study whose primary aim was to examine the direct impact of a communication training program designed specifically for house staff on the patient experience in resident clinics. The program was based on a previously published, widely accepted 5-step strategy for opening patient-centered interviews (8), and patient experience was formally assessed by questions pertinent to physician communication from the Clinician and Group CAHPS (CG-CAHPS) Adult Visit survey.

Methods

Overview

We conducted a prospective preintervention and postintervention study in which each member of the house staff within the departments of neurology, neurosurgery, and urology at a single US academic medical center participated in an intensive, small-group educational session led by a trained faculty preceptor that reviewed a previously published 5-step strategy for opening patient-centered medical interviews. All resident training sessions occurred between February and May 2014. House staff completed posttraining questionnaires. For the preintervention and postintervention comparison, patient experience surveys based on physician communication questions from the CG-CAHPS Adult Visit 2.0 survey were collected from consecutive adult patients seen in the resident-staffed clinics of these 3 hospital departments, both 1 month prior to the initiation of the training (January 2014) and 1 month after all house staff had completed the training (June 2014).

Ethics Statement

This work was carried out in accordance with the Code of Ethics of the World Medical Association (Declaration of Helsinki). As the project assessed normal educational practices and did not involve any data recorded with patient identifiers, it received an exemption from formal review from our institution’s Human Investigation Committee. A script with the components of informed consent was read to all survey participants in clinic, and their agreement to participate in the survey was accepted as consent for study participation. All privacy rights were observed.

Participants

House staff participants

All house staff in the departments of neurology, neurosurgery, and urology were required by their training program directors to participate in the communication training, regardless of level of training, without notable exclusions.

Patient participants

During the preintervention and postintervention months described above, all outpatients over the age of 18 seen in house staff clinics in the departments of neurology, neurosurgery, and urology were directly approached by a research coordinator to take the patient experience survey immediately following their visits, while still in the clinic building. Only patients unable to read English and thus unable to take a written survey were excluded.

Intervention

House staff each participated in 1 intensive communication training session, with each session composed of 3 residents led by 1 faculty preceptor. Neurology and neurosurgery residents and preceptors were mixed in their groups, while the urology residents and preceptors participated in separate urology-only groups.

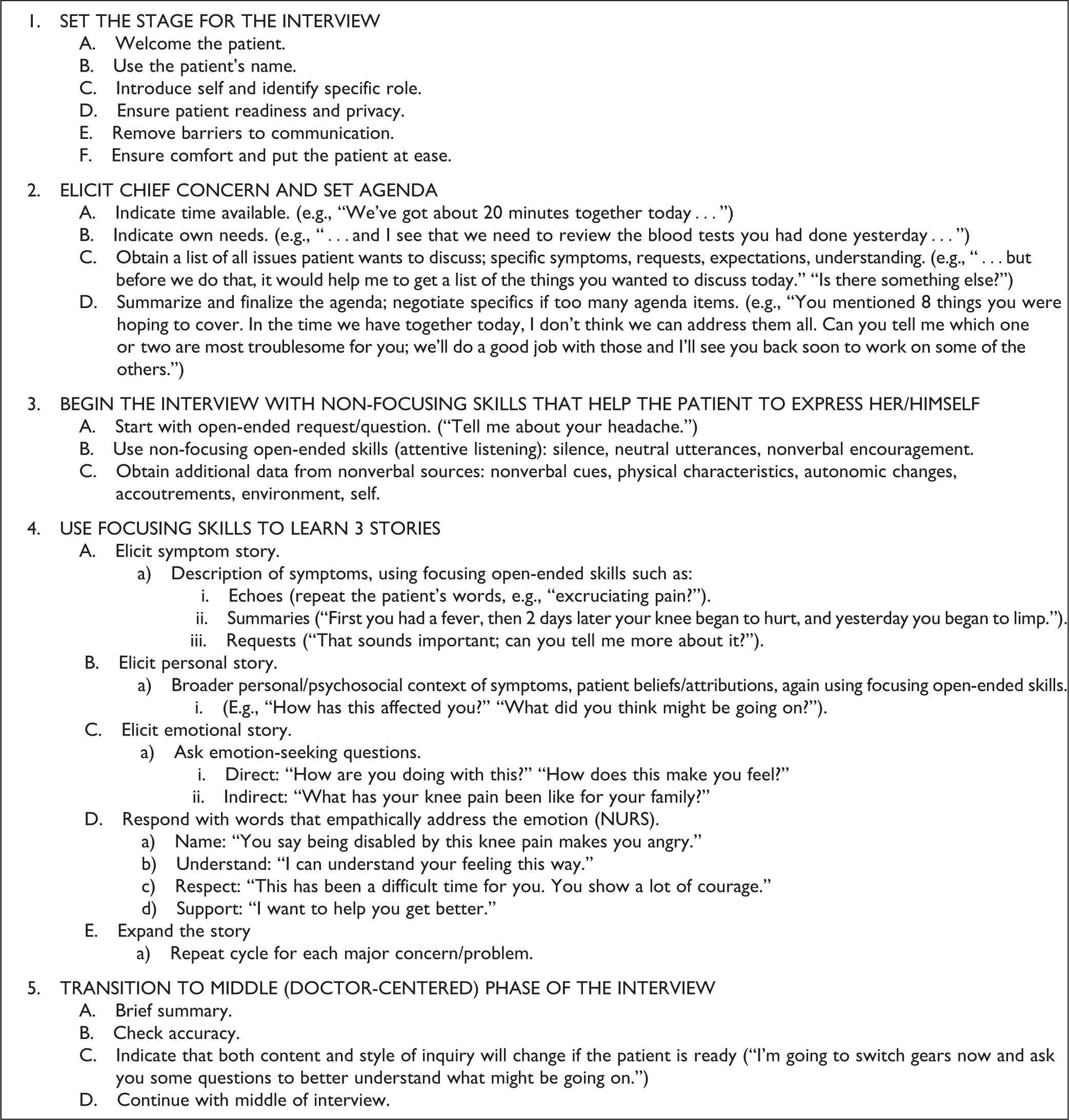

The training sessions focused on teaching a detailed 5-step strategy for opening medical interviews in a fashion that is patient centered (8). The strategy has been promoted by the American Academy on Communication in Healthcare (AACH) and is outlined in Figure 1.

Figure 1. Details of the 5-step method for opening patient interviews that was taught during the study’s training sessions. Adapted from Smith (8).

Each session followed a similar outline. One week prior to their scheduled session, participating residents were e-mailed a link to a 15-minute AACH video explaining and demonstrating the 5-step communication strategy (9). The scheduled small-group sessions themselves were each 2 hours and consisted of (1) a didactic lecture from the faculty preceptor on the importance of communication and details of the 5-step method for opening interviews, (2) a viewing of the aforementioned AACH video, with opportunity for group feedback, and (3) 90 minutes of structured role-playing exercises, where each participant in the small group practiced the 5-step strategy with constructive feedback from his or her peers and preceptor.

Role-playing is a widely accepted effective method for communication skills training in clinical medicine (4,10). For our role-playing exercises, we developed case scenarios specific to our participating hospital departments, with each scenario providing instructions for 1 resident who was assuming the role of the “doctor” in the role play and another resident playing the role of the “patient.” Doctors were instructed to open medical interviews with the 5-step method, whereas patients were given specific instructions on information that could be disclosed during open-ended questions from the doctors. For any given scenario, the resident who was neither the doctor nor the patient was given a checklist with key components of the 5-step strategy to assess, as a guide to give feedback along with the preceptor after each role-play scenario was completed. Several scenarios were created for each department, so that after each scenario, the participating residents in a small group could rotate roles for a new scenario, and each participant would ideally have a chance to experience each role (ie, doctor, patient, and evaluator). Figure 2 shows excerpts from instructions given to a doctor and a patient for an example clinical neuroscience scenario.

Figure 2. A sample case from the role-playing exercises in which house staff participated during the study. For each case, each of the 3 residents in a small group had a specific role to play: (1) the doctor, (2) the patient, or (3) the observer, that is, the person responsible for feedback to the resident playing the doctor, after the scenario. After each scenario, the residents rotated their roles and repeated the exercise with a new case, until all residents in each small group had participated in all 3 roles.

At the end of the session, house staff were given laminated cards that summarized the 5-step process for opening medical interviews. Each participant also filled out a brief “Commitment to Change” card on which they wrote up to 3 aspects of the 5-step method on which they planned to focus, moving forward in their clinical practice.

Of note, all faculty preceptors from the 3 hospital departments participating in this study underwent an initial 2-hour training session themselves, with essentially the same format as outlined above, led by leadership from the institution’s Office of Graduate Medical Education and Patient Experience Council. A selection of the small-group training sessions with house staff was also observed by leadership from the institution’s Teaching and Learning Center to ensure uniformity between sessions and to provide direct feedback to participating preceptors.

Data and Outcomes Collected

House staff data

Immediately after completing the training session, before leaving the room, each resident was given a brief written survey that asked him or her to confirm that he or she had participated in all 3 roles during the interactive exercise and to provide feedback on the session’s content and organization. The face and content validity of the survey’s questions were initially assessed during an iterative review process by a group of 5 multidisciplinary members of this manuscript’s authorship team before the final survey’s use in this study.

Patient data

All patients recruited from resident clinics during both preintervention and postintervention months completed a questionnaire containing 8 multiple-choice items immediately following their clinic visit. The questions were directly adapted from the physician communication section of the CG-CAHPS Visit Survey 2.0, which was developed and extensively validated by the US Agency for Healthcare Research and Quality (11). Seven of the questions were on a Likert-type scale, with 1 additional question asking the respondent to rate his or her provider with a number from 0 to 10 (with 10 being “best provider possible”). The survey specified that its questions were in reference to the resident physician who was responsible for the clinic visit, as opposed to faculty preceptors or other clinic staff. In addition, we collected self-reported patient demographic information, including age, sex, education level, and race.

Statistical Analysis

Patient demographics and all survey responses were characterized via standard descriptive analyses. For the CG-CAHPS items with Likert-type scale responses, we dichotomized outcomes into those respondents who reported the highest rating on the response scale (ie, “top-box”) versus those who did not, an approach identical to that which the US Centers for Medicare and Medicaid Services has taken with public reporting of CAHPS program data (12). The χ2 test was used to compare preintervention and postintervention patient data, and preintervention and postintervention dichotomized responses for individual CG-CAHPS survey items were compared using the Fisher exact test. For the patient survey question with a 0-to-10 response scale, we used the Wilcoxon rank sum test to compare the preintervention and postintervention groups. All analyses were performed using Statistical Analysis System 9.3 (SAS Institute Inc, Cary, North Carolina, 2011). Responses to the patient survey items were compared to available 2013 normative data to estimate national percentiles (13).
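As an illustration of the top-box approach described above, the following minimal Python sketch dichotomizes a set of item responses and compares two groups with a 2-sided Fisher exact test. It is not the SAS code used in the study. The function names, the response labels, and the example responses are hypothetical and chosen only to make the arithmetic easy to follow.

```python
from math import comb


def top_box_counts(responses, top):
    """Return (top-box count, non-top-box count) for one survey item."""
    n_top = sum(1 for r in responses if r == top)
    return n_top, len(responses) - n_top


def fisher_exact_two_sided(a, b, c, d):
    """Two-sided Fisher exact p-value for the 2x2 table [[a, b], [c, d]]."""
    row1, row2, col1 = a + b, c + d, a + c
    total = comb(row1 + row2, col1)

    def prob(x):
        # Hypergeometric probability of x top-box responses in the first group
        return comb(row1, x) * comb(row2, col1 - x) / total

    p_obs = prob(a)
    lo, hi = max(0, col1 - row2), min(row1, col1)
    # Sum the probabilities of all tables no more likely than the observed one
    p = sum(q for q in map(prob, range(lo, hi + 1)) if q <= p_obs * (1 + 1e-7))
    return min(1.0, p)


# Hypothetical responses (labels are illustrative assumptions, not study data)
TOP = "Yes, definitely"
pre = [TOP, TOP, TOP, "Yes, somewhat"]
post = [TOP, "Yes, somewhat", "No", "No"]

pre_top, pre_other = top_box_counts(pre, TOP)
post_top, post_other = top_box_counts(post, TOP)
p_value = fisher_exact_two_sided(pre_top, pre_other, post_top, post_other)
print(f"pre {pre_top}/{len(pre)}, post {post_top}/{len(post)}, P = {p_value:.3f}")
```

With real data, each CG-CAHPS item would be dichotomized separately and tested on its own 2 × 2 table of group by top-box status.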

A minimum sample size of 55 clinic patients in each of the preintervention and postintervention groups was calculated with an initial expectation that on average 50% of preintervention patients would record top-box responses for CG-CAHPS items and that postintervention top-box item scores would rise by 25 percentage points. With these assumptions, a sample size of 55 patients in each group would have 80% power to detect such a rise for any survey item at a significance level of .05.
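The sample size reasoning above can be sketched in a few lines of Python. The manuscript does not state which formula was used, so this sketch assumes a 2-sided significance level of .05 and the unpooled normal approximation for comparing 2 independent proportions. Under those assumptions, the stated inputs (50% rising to 75%, 80% power) give 55 patients per group. The function name `n_per_group` is hypothetical.

```python
from math import ceil
from statistics import NormalDist


def n_per_group(p1, p2, alpha=0.05, power=0.80):
    """Patients needed per group to detect a change from p1 to p2.

    Assumes a 2-sided test and the unpooled normal approximation;
    both are assumptions, not details reported in the study.
    """
    z = NormalDist()
    z_alpha = z.inv_cdf(1 - alpha / 2)  # critical value for the 2-sided test
    z_beta = z.inv_cdf(power)           # quantile matching the desired power
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p1 - p2) ** 2)


# Design assumptions from the text: 50% top-box at baseline, 75% afterward
print(n_per_group(0.50, 0.75))  # 55
```

Other common approaches, such as a pooled-variance formula or a continuity correction, would yield a somewhat larger requirement for the same inputs.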

Results

House Staff Participation

A total of 44 (97.8%) of 45 eligible residents (24 of 24 neurology residents, 12 of 13 neurosurgery residents, and 8 of 8 urology residents) participated in a small-group communication training session during the study period, with 1 neurosurgery resident unable to make a session due to the timing of the sessions and his clinical responsibilities.

House Staff Posttraining Survey Results

Table 1 summarizes the results of the survey that each house staff member filled out immediately after completing his or her training session. The majority (n = 31, 70.5%) of the 44 residents indicated on the survey that their perception of the 5-step strategy for opening patient-centered interviews increased following their participation in the training program.

Table 1. House Staff Posttraining Survey Results.a

a N = 44.

Patient Recruitment

During the preintervention period, 63 (51.6%) of 122 eligible house staff clinic patients approached for participation took the survey. During the postintervention period, 77 (49.0%) of 157 eligible patients participated. No difference in participation rate was detected between the preintervention and postintervention groups (P = .71).

Patient Characteristics

The self-reported demographic information for participating patients is summarized in Table 2, for both the preintervention and postintervention groups. No differences in the distribution of sex, age, level of education, or race were detected between the groups.

Table 2. Patient Demographic Data.

Patient Survey Results

Table 3 shows the percentages of respondents in the preintervention and postintervention groups who gave top-box responses for each of the CG-CAHPS items in the patient survey. As a reference, the national percentiles that those top-box percentages represent in the 2013 National CAHPS database are also provided in Table 3. No significant differences were found between the preintervention and postintervention groups with regard to survey responses. For the item on the survey in which patients were asked to rate their provider from 0 to 10, with 10 being the best response possible, 22% of preintervention respondents answered “9,” while 57% answered “10.” In the postintervention group, 18% answered “9” and 55% answered “10.” No difference was detected between the groups along the entire response scale (P = .96).

Table 3. Patient Top-Box Responses to CG-CAHPS Items on Doctor Communication.

Abbreviation: CG-CAHPS, Clinician and Group Consumer Assessment of Healthcare Providers and Systems.

a Normative data from 2013 CAHPS Clinician and Group Survey Database (13) representing comparisons with 1234 US practice sites (428 154 surveys).

Discussion

In this single US center pre–post study conducted across 3 hospital departments, we were unable to demonstrate that a house staff communication training initiative, focused on teaching a widely accepted 5-step strategy for opening patient-centered interviews, improved house staff clinic patient experience scores, as measured by relevant questions from the CG-CAHPS survey. Inherent differences in the preintervention and postintervention patient cohorts did not appear to play a role in this finding, as the 2 groups were well matched with regard to demographics. Top-box responses from our patients who took the patient experience survey preintervention were higher than what had been projected during study design, with nearly all percentages higher than 90%. Nevertheless, comparison of the scores with normative data from the national US CG-CAHPS database argued against an insurmountable ceiling effect in our data and suggested that there was room for improvement in our house staff clinic patient experience performance, in which a future effective training initiative could potentially play a role. Of note, most residents participating in the training (70.5%) did indicate on a simple posttraining questionnaire that the role-playing educational activity that we had designed increased their perception of the highlighted patient-centered communication strategy.

We selected CG-CAHPS questions related to doctor communication as our outcome instrument for this study because the CG-CAHPS survey will likely soon become a required outcome measure for most US outpatient practices receiving government reimbursements (14). Evidence has recently emerged showing that communication training for attending physicians can improve CAHPS scores (7), but studies examining the impact of house staff communication training initiatives have employed a wide assortment of patient-reported outcomes and assessment instruments (15–20), with few attempting to use standardized US patient experience surveys as a means for assessing “success” of initiatives (21). Whether the CG-CAHPS questions are indeed the appropriate outcome measure for assessing the impact of house staff’s use of a step-by-step protocol for opening medical interviews is debatable (4,22). However, given the now ubiquitous nature of the CAHPS surveys in the United States, understanding how communication training may or may not impact CAHPS scores in house staff clinics and other teaching services will likely be important moving forward (2), with other measures for assessing the value of training initiatives and giving direct feedback to trainees on communication skills being collected in parallel (15–18,20,23).

Our study has several important limitations. First, as with many studies of medical education initiatives, it was conducted at a single center with a pre–post study design; it did not include a control group. Although we note that our preintervention and postintervention groups were matched with regard to basic patient demographics, it is possible that other changes between the preintervention and postintervention periods could have impacted the results. However, we note that the residents staffing our clinics in the study were the same group both before and after the educational initiative, without any turnover in the participating house staff during the study period.

Second, our training initiative largely consisted of a single 2-hour role-playing session for each resident, supplemented by some take-home materials. Despite (1) the structured nature of each session, (2) the adoption of a widely accepted communication strategy, (3) our formal training of preceptors, and (4) our role-playing activities that were designed to be department specific, a 2-hour time period for a postgraduate educational activity may be relatively brief. We do note that (1) we had an excellent participation rate among the residents in our 3 participating departments and (2) all residents signed a “Commitment to Change” form at the end of their session, with an explicit promise to carry their skills learned during the session forward in daily practice. Nevertheless, residents were not directly observed during the postintervention period to confirm they were using the 5-step strategy in actual patient encounters nor was long-term follow-up survey data regarding the utility of the strategy obtained from our participating residents.

Third, our 1-month data collection periods both before and after all residents had participated in the training session turned out to be short, especially since our initial power calculation was based on much lower projected preintervention top-box CG-CAHPS scores. Detecting a rise from our baseline CG-CAHPS scores would likely have required either an intervention with a large effect size or a much larger sample of patients. We nevertheless propose that studies with larger samples of patients are worthwhile in the future, given that large increases in a hospital’s CAHPS percentile score can occur with relatively subtle improvements in patients’ top-box response percentages.
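To give a rough sense of scale for this limitation, the sketch below compares the sample size implied by the original design assumptions with the sample size for a purely hypothetical scenario in which a 90% top-box baseline rises to 95%. The 90% figure only approximates the observed preintervention scores, and the 5-point rise is an illustrative assumption, not a study result. The formula (unpooled normal approximation, 2-sided significance level of .05, 80% power) and the function name `n_per_group` are likewise assumptions.

```python
from math import ceil
from statistics import NormalDist


def n_per_group(p1, p2, alpha=0.05, power=0.80):
    """Patients per group to detect p1 -> p2 (unpooled normal approximation)."""
    z = NormalDist()
    z_total = z.inv_cdf(1 - alpha / 2) + z.inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil(z_total ** 2 * variance / (p1 - p2) ** 2)


design = n_per_group(0.50, 0.75)         # original planning assumptions
high_baseline = n_per_group(0.90, 0.95)  # hypothetical rise from a 90% baseline

print(design)         # 55
print(high_baseline)  # 432
```

Under these assumptions, a modest improvement from a high baseline calls for several hundred patients per group, far more than the 63 and 77 surveys collected here.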

Conclusion

Our study shows that communication training for house staff focused on using role-playing exercises to practice patient-centered strategies for opening medical interviews can increase residents’ perception of such strategies but that demonstrating skills transfer and improvement in patient-centered outcomes after implementing such communication training for residents remains challenging (19). High percentages of top-box responses from patients on survey items from the US CG-CAHPS may translate to a wide variation in percentile scores for those items, a fact that may point to room for practice improvement that may not be initially obvious. However, future studies of communication training initiatives geared toward residents and their impact on patient experience surveys may need to take into account the possibility of a ceiling effect among the raw survey scores when determining the sample size necessary to power their analyses adequately.

Practice and Research Implications

We recommend that future communication training programs geared specifically toward improving patient-centered outcomes via house staff communication training include a longitudinal series of educational sessions for each participant, as opposed to a single role-playing activity. Direct observation of residents in real-life clinical encounters, after participation in formal training, with immediate feedback from trained coaches may help ensure that the strategies learned during training remain in place moving forward (3,24). Future formal studies using the CG-CAHPS as an outcome tool should ideally include more participants to detect improvements of subtle effect size, which may nevertheless be meaningful.

Finally, many of the communication habits and techniques that house staff learn may come from the “hidden curriculum” of residency (the habits that residents observe in their attendings and peers) and from the possible emphasis during training on biomedical science over interpersonal communication (25). As emerging studies have shown the possible effectiveness of communication training for attendings in improving patient experience scores (7), an effort to train an institution’s physician leaders at the same time as or prior to the implementation of an educational initiative for trainees may be a fruitful future strategy.

Footnotes

Authors’ Note

All authors contributed to study conception and manuscript revisions and approved the final version of the submitted article. In addition, O.A.O. and M.H. collected patient data and performed statistical analysis. P.D.C. oversaw statistical analysis. A.B.H., K.R.B., and M.S.H.L. prepared education materials for the study intervention and served as preceptors for the resident training sessions. D.B.D., E.G.M., and J.J.M. served as preceptors for the resident training sessions. B.K. and J.W.H. coordinated data collection from patients. A.H.F., J.P.H., and M.C.B. trained preceptors and supervised the study. O.A.O. and D.Y.H. drafted the manuscript. In addition, D.Y.H. prepared education materials for the study intervention, served as a preceptor, coordinated data collection, and supervised the study.

Acknowledgments

The authors would like to thank Rosemarie Fisher and Jack Contessa for their help with training faculty preceptors and Simon P. Kim for his role in study conception.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research and/or authorship of this article: This research was funded by the Department of Neurology and the Department of Urology at the Yale School of Medicine. Members of either department aside from the listed authors were not involved in the study design; in the collection, analysis, and interpretation of data; in the writing of the report; or in the decision to submit the article for publication. The authors did not otherwise receive any specific grant from funding agencies in the public, commercial, or not-for-profit sectors. Of note, P.D.C. receives research funding from the AHRQ and the NIMH.