Abstract

Few medical specialties engage in ongoing, organized data collection to assess how graduate medical education in their disciplines aligns with practice. Pathology educators, the American Board of Pathology, and major pathology organizations undertook an evidence-based, empirical assessment of what all pathologists need to learn in categorical residency. Two challenges were known when we commenced, and we encountered 2 others during the project; all were ultimately satisfactorily addressed. Initial challenges were (1) ensuring broad representation of the new-in-practice pathologist experience and (2) adjusting for the effect on this experience of subspecialty fellowship(s) occurring between residency and practice. Additional challenges were (3) needing to assess and quantify degree and extent of subspecialization in different practice settings and (4) measuring changing practice responsibilities with increasing time in practice. We instituted annual surveys of pathologists who are relatively new (<10 years) in practice and a survey of physician employers of new pathologists. The purpose of these surveys was to inform (1) the American Board of Pathology certification process, which needs to assess the most critical knowledge, judgment, and skills required by newly practicing pathologists, and (2) pathology graduate medical education training requirements, which need to be both efficient and effective in graduating competent practitioners. This article presents a survey methodology to evaluate alignment of graduate medical education training with the skills needed for new-in-practice physicians, illustrates an easily interpreted graphical format for assessing survey data, and provides high-level results showing consistency of findings between similar populations of respondents, and between new-in-practice physicians and physician-employers.

Introduction

In today’s environment of rapidly advancing biomedical science and continually evolving health-care organization, clinical disciplines must adapt to emerging modes of practice and new methods of care. Yet incorporation of change into established practices is slow, sometimes approximating the rate of turnover of the practitioners themselves. In particular, practice habits acquired in the course of graduate medical education (GME) may last a practice lifetime, making GME a prime educational locus for any adaptation to change. 1,2

Such slow change highlights the importance of ongoing alignment of GME with the actual skills that physicians need to practice. GME, however, takes place at the interface between the established, didactic environment characteristic of undergraduate medical education and the evolving, experiential environment in which practice-based learning and improvement occurs. As a result, GME tends to reflect current practice within the training department rather than focus on future practice needs of the trainees. Justifying and enabling educational change in these circumstances requires explicit and specific information on the actual effectiveness of GME in preparing trainees for practice. 3 In many disciplines, this information is not available because the experiences of recent trainees are not routinely assessed to see how effectively and efficiently the training they received prepared them for the demands they subsequently encountered in practice.

Educational and organizational leaders in one discipline (pathology) perceived an urgent and increasing need to develop an evidence-based assessment of how well current training in their discipline was meeting the needs of their trainees recently in practice. 4-6 Pathology is faced with recent changes in both training and practice, as well as a longer-term decrease in numbers of pathologists being trained. 7 Combined, these called for systematic consideration of the effectiveness and efficiency of GME in our discipline, toward which a necessary first step was an evidence-based understanding of the alignment of the content of our training with that of our practice. This article sets forth how this was accomplished for pathology, and illustrates generally how such processes may be developed to contribute to discipline-specific, evidence-based paradigms more broadly in GME.

Specifically, this article describes the development of a system for the methodical, ongoing assessment of effectiveness and efficiency of training in a clinical discipline (pathology). Although there are particular features specific to residency training in each discipline, the general concepts needing to be addressed are similar: first, a practical categorization of areas of clinical activity, encompassing in common both training and practice; next, an individual determination by each recently trained practitioner of the importance to his or her practice of each of those areas, and of the usefulness of his or her training in that area in preparing him or her for practice; and finally, a demographic characterization of that individual’s practice and other training (fellowships), to contextualize the practice importance and preparation information. This article describes our methodology and explains the role of each of the foregoing elements.

To understand the relationship of practice requirements to GME experience for each individual trainee’s transition into practice, we collected information on multiple parameters of both the training and the practice experience of each individual respondent. We assessed by comprehensive survey, on an individual basis (1) the characteristics of the practice responsibilities into which that recent trainee had entered, together with (2) his or her residency preparation for practice, and (3) his or her other training and demographic characteristics. Each individual’s transition from training to practice was then analyzed, by practice area, for alignment of training with practice. Data collected for each individual included the importance of practice and preparation in training for each practice area, fellowship(s) taken, practice setting and size, and number of years in practice. These and other parameters were assessed against different combinations of training and practice circumstances. As a check on the validity of these new-in-practice physicians’ perceptions of the importance of these areas of practice, and their preparation for practice, we also similarly surveyed physician employers/supervisors of new-in-practice physicians in the discipline.

In this article, we focus on our methodological approach and an illustrative overview of results. Forthcoming companion articles will provide details of the pathology-specific results and their possible significance as a guide for change in content and/or organization of pathology GME.

Methods

Practice Area Categorization

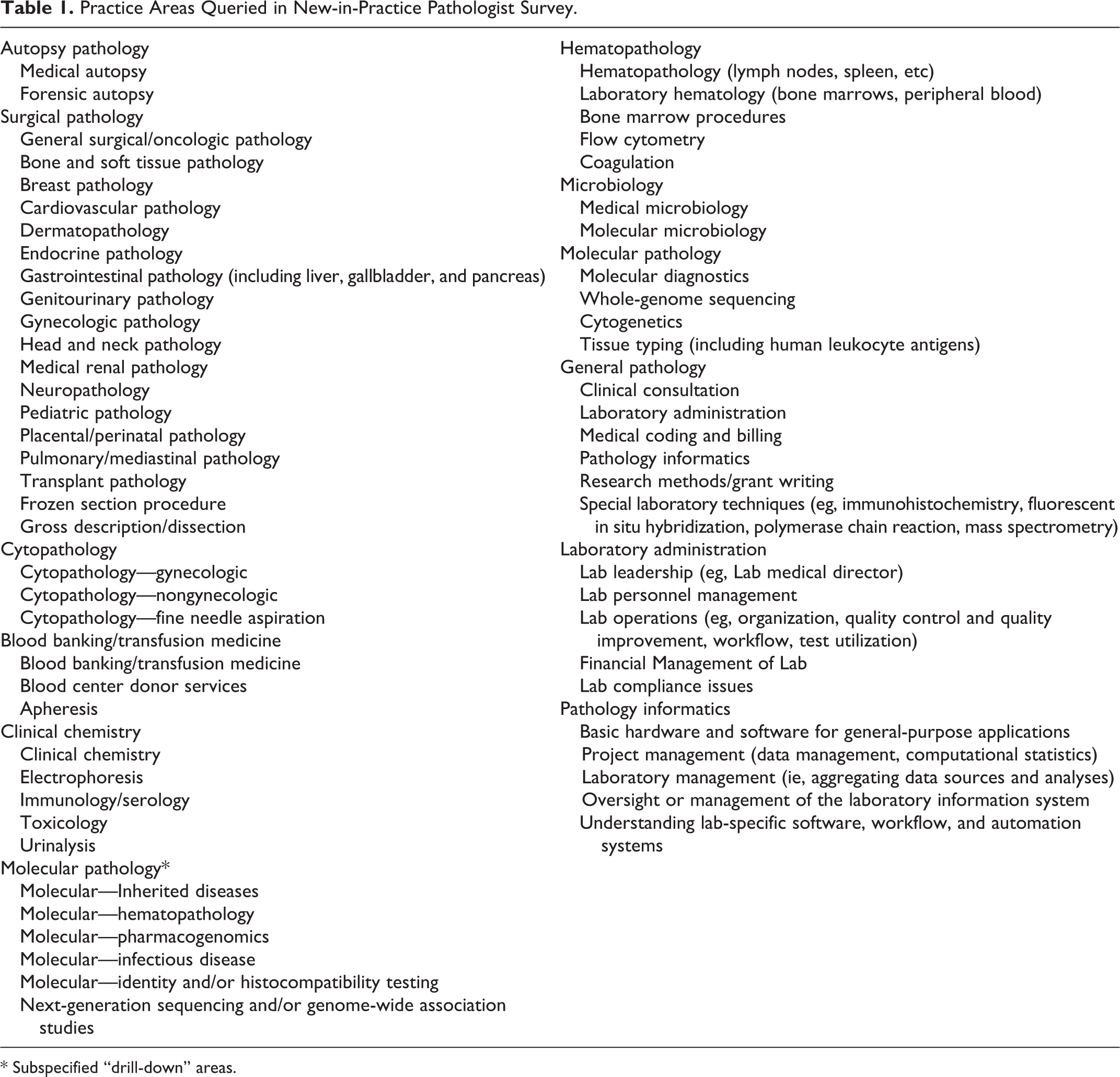

We had to develop a categorization of practice areas that could apply to both training programs and practice circumstances, so that we could simultaneously assess each area for (1) that area’s empirical importance in the actual practices of our recent trainees and (2) the utility of the preparation for practice in that area these trainees had experienced in residency. This categorization was developed by consulting source material from the 2 primary agencies that set the existing specifications for training in pathology—the Accreditation Council for Graduate Medical Education (ACGME) 8 and the American Board of Pathology (ABPath). 9 The ACGME sets the requirements for accreditation of pathology training programs, and the ABPath sets the requirements for certification of individual pathology practitioners. Although both entities address structural and content aspects of training, the ACGME requirements are mainly programmatic and structural, whereas those of the ABPath are mainly individual and content focused. Starting from the training content areas in these requirements, a survey design taskforce comprised of experienced pathology practitioners, new-in-practice pathologists, pathology trainees, and pathology residency program directors developed a list of practice areas covering the spectrum of pathology practice activities that would recognizably be applicable both to pathology training and to practice. The practice area list we developed is shown in Table 1.

Table 1. Practice Areas Queried in New-in-Practice Pathologist Survey.

* Subspecified “drill-down” areas.

Survey Development

With these areas in hand, we developed 2 surveys—one for new-in-practice physicians (in practice for 10 years or less) and one for physician-employers/supervisors who had in the past 5 years hired new physicians for their first job. (We collected information from pathologists 10 or fewer years in practice because this was the time when they were comprehensively covered by the ABPath Maintenance of Certification [MOC] program, within which we could sort responses by length of time in practice. We chose 5 years for the employer survey based on our perception that employers would not likely be able to recall and report on the initial readiness for practice of pathologists who had joined their practice more than 5 years ago.) The surveys were designed to answer 3 questions: (1) directly, how well does residency training align with the most critical knowledge and skills required for actual practice; (2) indirectly, to what extent does the certification examination (of the ABPath, which sets an implicit standard for pathology GME program content) assess the most critical knowledge and skills required for practice; and (3) by way of validation, do the physician-employers/supervisors agree with the new-in-practice physicians’ self-assessments?

Since October 2014, the ABPath has administered the new-in-practice physician surveys in conjunction with the biennial MOC reporting required of its recent diplomates, in which approximately half of all diplomates certified since 2006 participate each year. The ABPath MOC reporting cycle runs each year from October 1 through the following January 31. To date, these MOC-associated survey data have been collected over 4 MOC cycles: the MOC surveys in the 2014 to 2015 and 2016 to 2017 cycles were given to ABPath diplomates from even-numbered years, and the MOC surveys in the 2015 to 2016 and 2017 to 2018 cycles were given to diplomates from odd-numbered years (see Supplemental Table 1 for a description of the survey parameters).

The physician-employer survey was fielded by the College of American Pathologists (CAP) in 2015. This survey was designed as validation for the MOC diplomate surveys rather than as a free-standing assessment tool. In contrast to the MOC surveys (fielded to all board-certified new-in-practice physicians in pathology as described above), we lacked a reliable general mechanism to identify all pathologist employers of new-in-practice pathologists. No entity had comprehensive data on pathology practices, practice leaders, or employers of pathologists. Also, although diplomates may be predisposed to respond to the survey because of its association with the MOC process, respondents to the physician-employer survey have no incentive to participate beyond a general interest in contributing to the potential improvement in GME, so we did not anticipate a comparably robust response to the survey.

As a proxy for identifying employers who supervise new-in-practice physicians in pathology, we sent an online survey to all CAP fellows (members) who had been in practice for at least 5 years. (Although pathologists in practice for at least 5 years may not have supervisory responsibilities for new-in-practice pathologists, previous research by the CAP has shown that surveys sent to this population provide relatively high response rates on information about new pathologists.) Respondents who neither hired nor supervised a new-in-practice pathologist within the last 5 years were screened out of the survey. The remaining self-identified pathologist-employers/supervisors were asked practice-area questions about the most recent new-in-practice pathologist they hired/supervised. These questions, analogous to those asked of the new-in-practice pathologists, were: (1) How important is the new pathologist’s knowledge/skill in each practice area to his or her performance of his or her job, and (2) To what extent was this new pathologist prepared for his or her responsibilities in that practice area? Respondents were also given the opportunity to answer the same questions about the next to most recent new-in-practice pathologist they had hired/supervised.

Survey methodology

In both the MOC (new-in-practice) and the employer (employer/supervisor) surveys, our methodology in large part parallels the methodology of the American Board of Pediatrics, which regularly assesses the relative importance of various training areas to practice and the frequency with which this knowledge is called for in practice. 10

The assessment process we developed for pathology differs in that we survey physician-employers/supervisors as well as new-in-practice physicians; we explicitly assess both the positive and negative usefulness for practice (utility) of residency training received in each practice area; and we assess both practice importance and training utility by individual, by practice area, and in conjunction with information on fellowship training.

Survey of New-in-Practice Pathologists (ABPath Diplomates)

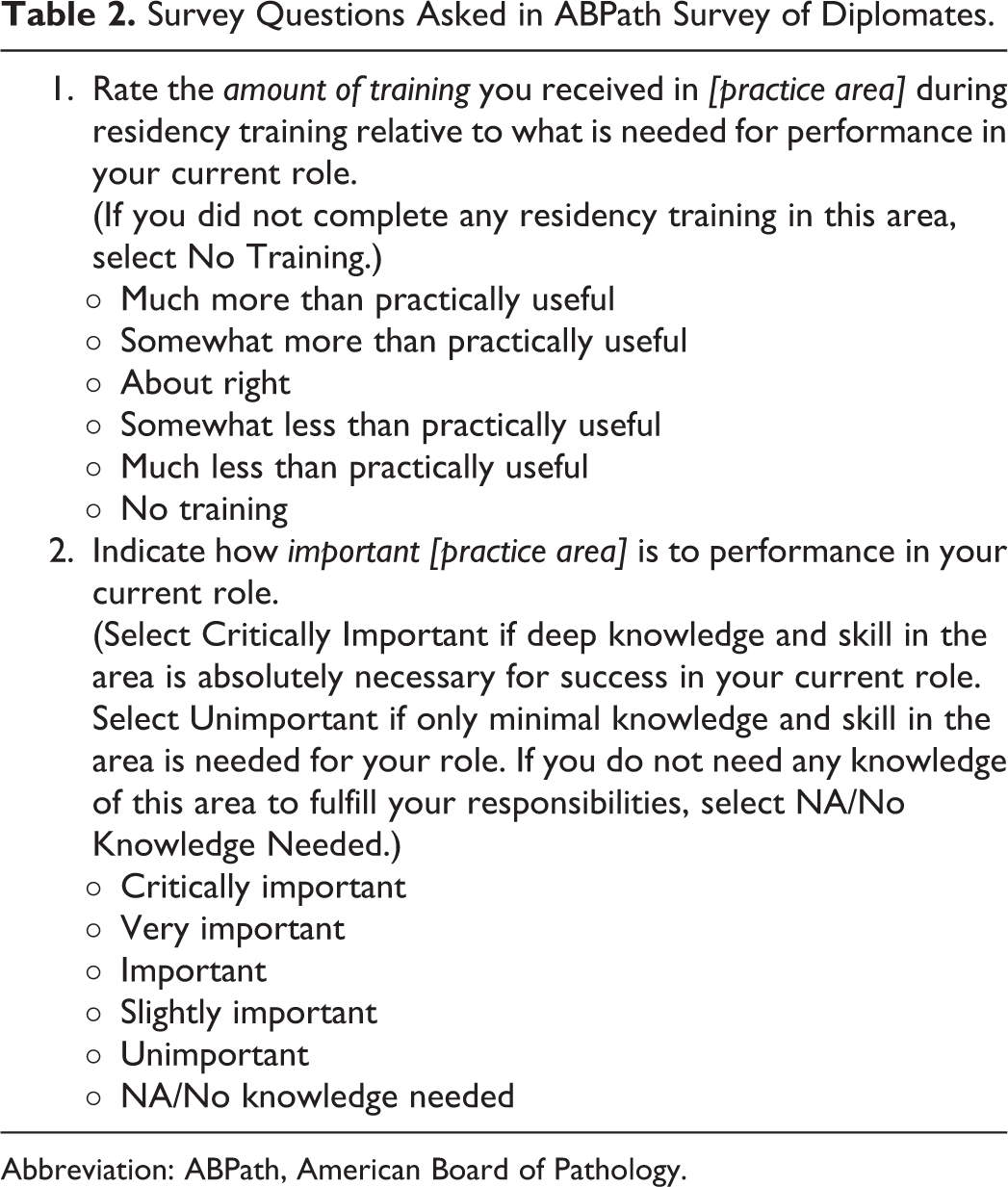

We asked new-in-practice pathologists to evaluate each pathology practice area on 2 scales: (1) the importance of that practice area to the performance of their current role and (2) the usefulness (utility) of their training in that practice area relative to the performance of their current role (Table 2). To avoid potential bias in how respondents ranked the 65 practice areas assessed, these areas were presented in random order to each survey recipient (48 distinct practice areas were assessed in the initial 2 surveys, 8 of which were replaced by 17 “drill-down” areas in the third and fourth surveys, for a total of 65 practice areas surveyed).

Table 2. Survey Questions Asked in ABPath Survey of Diplomates.

Abbreviation: ABPath, American Board of Pathology.

Possible responses for practice area importance ranged from “critically important” (ie, deep knowledge and skill in the area is absolutely essential for the respondent’s success in practice) through “unimportant” (ie, knowledge and skill in the area is only minimally needed) to “NA/No knowledge needed.” For practice area utility of training received, response options ranged from “Much Less than Practically Useful” through “About Right” to “Much More than Practically Useful”; respondents could alternatively select “NA/No Training” (received).

To be included in this survey process, MOC participants were asked 3 screening questions: (1) Completed the last year of pathology residency within the prior 10 years? (2) Practiced Anatomic or Clinical Pathology (or both) during the past 2 years? (3) Received primary certification in Pathology?

Only if all 3 questions were answered affirmatively was the survey continued.

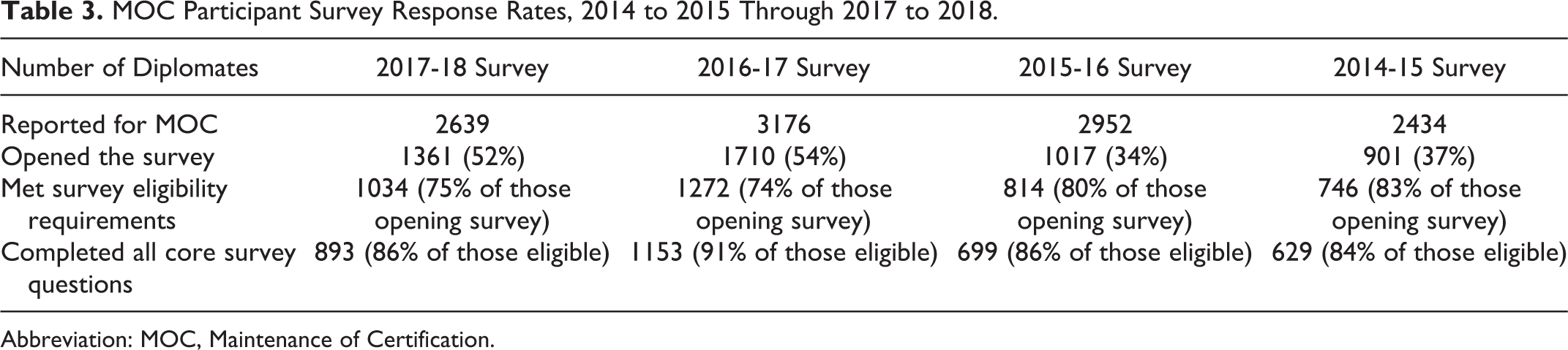

Survey participant response rates are shown in Table 3. All pathologists who reported for MOC were asked to complete the survey. In the first 2 years in which the survey was fielded, slightly over one-third opened the survey link, of whom at least 80% met the survey eligibility requirements. Of those eligible, about 85% completed the core survey questions concerning importance and utility of training received by practice area, resulting in 629 respondents in 2014 to 2015 and 699 respondents in 2015 to 2016. Participation rose substantially in the most recent years of the survey. Overall, over 50% of MOC participants opened the survey in both 2016 to 2017 and 2017 to 2018, and about 75% met the eligibility requirements. In total, 1153 pathologists completed the core survey questions in 2016 to 2017, and 893 completed them in 2017 to 2018. The lower number of respondents in 2017 to 2018 is partially attributable to there being over 500 fewer MOC participants than in the previous year.

Table 3. MOC Participant Survey Response Rates, 2014 to 2015 Through 2017 to 2018.

Abbreviation: MOC, Maintenance of Certification.

The surveys queried the 65 practice areas listed in Table 1, which were grouped into categories that could be related to ACGME program requirements and ABPath examination specifications. To isolate the impact of intervening fellowship training from that of residency training, we excluded from the analysis responses for areas in which the respondent had done a directly-related subspecialty fellowship (Supplemental Table 2 lists subspecialty fellowship(s) directly related to each practice area). The impact of fellowship training on preparation for the various areas of practice is a matter for separate analysis, because it relates more directly to the overall structure of training for pathology practice (residency plus fellowship) than to the content of residency training per se. The complementary analysis (training and performance in practice areas preponderantly reported as important only by those with directly-related subspecialty fellowship training) is ongoing and will be reported separately.
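The exclusion step described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the area names and the area-to-fellowship mapping below are hypothetical placeholders (the actual mapping is the one in Supplemental Table 2), and the function name `split_by_fellowship` is ours, not part of the survey tooling.

```python
from typing import Dict, Set, Tuple

# Hypothetical placeholder mapping from practice area to directly related
# subspecialty fellowships. The real mapping is in Supplemental Table 2.
RELATED_FELLOWSHIPS: Dict[str, Set[str]] = {
    "Hematopathology": {"Hematopathology"},
    "Cytopathology": {"Cytopathology"},
    "Autopsy": {"Forensic Pathology"},
}


def split_by_fellowship(
    responses: Dict[str, int], fellowships: Set[str]
) -> Tuple[Dict[str, int], Dict[str, int]]:
    """Split one respondent's per-area ratings into two groups:
    areas with residency training only, and areas in which the
    respondent also completed a directly related fellowship."""
    residency_only: Dict[str, int] = {}
    fellowship_related: Dict[str, int] = {}
    for area, rating in responses.items():
        related = RELATED_FELLOWSHIPS.get(area, set())
        if related & fellowships:
            # Excluded from the residency-training analysis.
            fellowship_related[area] = rating
        else:
            residency_only[area] = rating
    return residency_only, fellowship_related
```

Only the first returned group would feed the residency-training analysis; the second corresponds to the complementary analysis that is reported separately.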

In addition to subspecialty fellowship training received, participants also answered demographic questions on residency size (number of residents), current practice role, primary practice setting, practice size (number of pathologists), primary areas of practice responsibility, number of autopsies personally performed per year, and number of non-fellowship employed positions held since completing training.

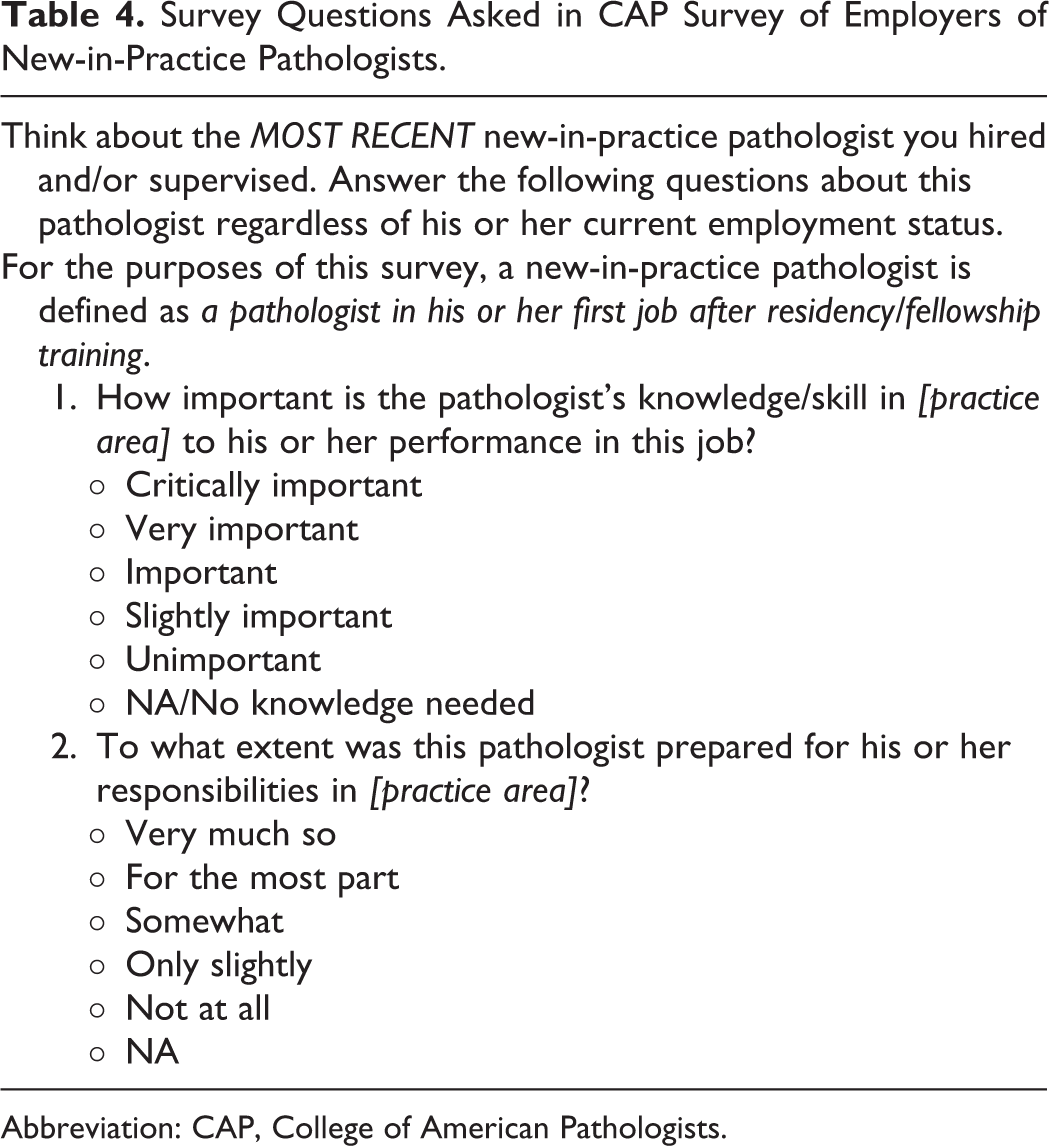

Survey of Pathologist-Employers

Employers of new-in-practice pathologists were necessarily asked slightly different although parallel questions (Table 4). To assess importance of practice areas, we asked the employer to rate (1) the importance of the new pathologist’s knowledge and skill in each practice area to the performance of his or her job and (2) the extent to which the new pathologist was prepared for his or her responsibilities in that practice area. (Note that, in contrast to the new-in-practice physician survey, we did not ask employers to assess whether their new-in-practice pathologist-employee had the “right” amount of training in each practice area: Instead, we simply asked employers to assess the adequacy of their pathologist-employee’s preparation for his or her responsibilities; although employers could certainly tell if their employee’s training had been inadequate, if it was adequate, they could not distinguish their employee’s training having been “about right” from having been more than practically useful).

Table 4. Survey Questions Asked in CAP Survey of Employers of New-in-Practice Pathologists.

Abbreviation: CAP, College of American Pathologists.

To shorten the employer/supervisor survey as much as possible, we restricted questions to practice areas within which the recently hired pathologist had job responsibilities. For example, we asked about specific clinical chemistry practice areas (electrophoresis, immunology/serology, toxicology, and urinalysis) only if the employer first indicated their pathologist-employee had responsibilities in clinical chemistry. In addition to these practice-area questions, employer/supervisor respondents were asked to provide an overall rating of satisfaction with the new-in-practice pathologist; identify what changes, if any, had been made in the new-in-practice pathologist’s job responsibilities since being hired; and state whether the new-in-practice pathologist was still working in their practice and, if not, give reasons for their departure.

Possible responses for Importance to Practice ranged from “critically important” (ie, deep knowledge and skill in the area is absolutely essential for the employee’s success in their practice) through “unimportant” (ie, knowledge and skill in the area is only minimally needed by the employee) to “NA/No knowledge needed.” For Preparation for Practice, response options ranged from Not At All (prepared) through Very Much So; respondents could alternatively select NA (to the employee’s practice).

To reduce employer/supervisor bias toward reporting on the most “memorable” new hire (whether because a new hire was particularly able or particularly unable), we asked respondents to complete the survey only after answering 2 screening questions to determine their appropriateness for the survey: (1) the number of years since the practice most recently hired a new-in-practice pathologist and, (2) if within the previous 5 years, whether the respondent was responsible for hiring/supervising at least 1 new-in-practice pathologist hired within those previous 5 years.

Respondents whose practice had not hired a new-in-practice pathologist in the last 5 years, or who had not been responsible for hiring/supervising at least 1 new-in-practice pathologist in that time, were screened out from taking the survey.

Analytical Methods

Each of the MOC surveys and the employer survey was conducted with specific aims in mind. The first 2 MOC surveys presented a unique opportunity to compare 2 functionally identical but individually distinct populations of new-in-practice pathologists; this constituted our principal consistency check, and our interest was to ascertain to what extent these populations (the first being those who received initial board certification in odd-numbered years, and the second being those who received initial certification in even-numbered years) would report their practice experience in relation to their training similarly, both overall and by practice characteristics subgroups. The employer survey, whose target group was the population that supervised new-in-practice pathologists, was designed to validate and/or challenge the perspective of those new-in-practice pathologists. Due to the very high degree of consistency that emerged between the first 2 MOC survey cycles, we were able to introduce small but important changes in our subsequent MOC surveys, to “drill down” into aspects of training or practice areas which appeared to show important findings, about which more detailed and specific reporting would help us make educational sense.

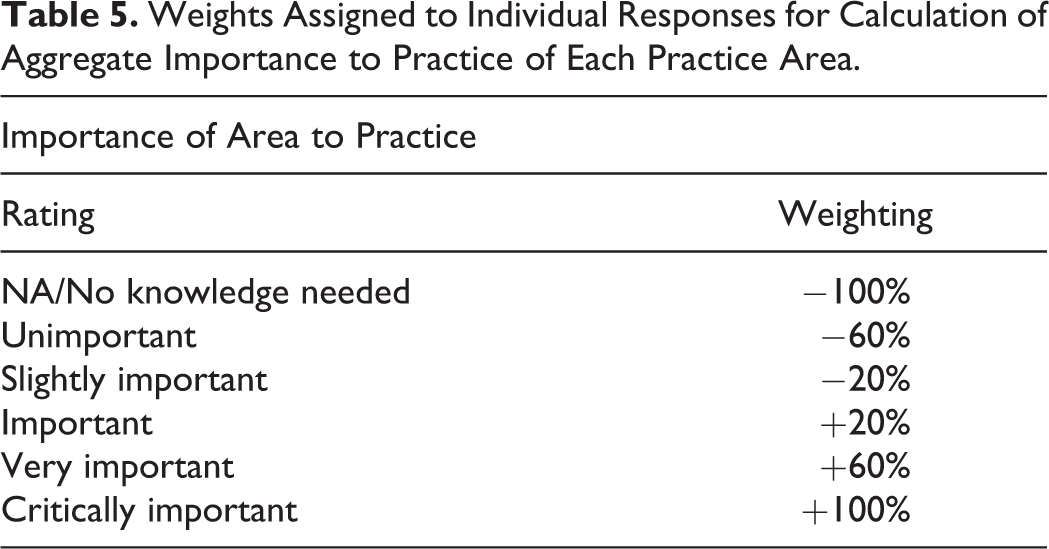

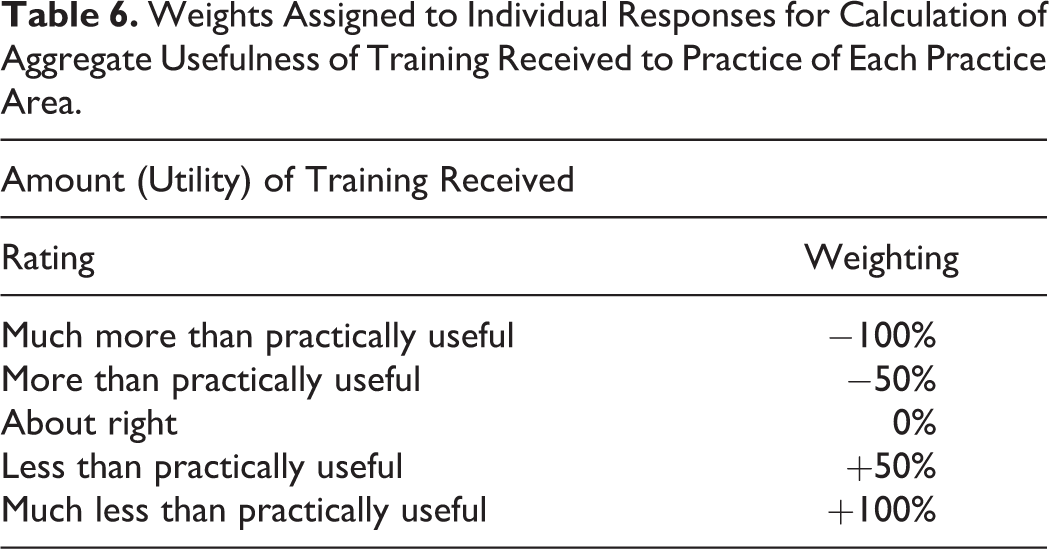

In order to compare the responses from different practice areas for both the MOC surveys of new-in-practice pathologists and the employer survey, we developed a weighted average of the responses based on (1) importance of skill/knowledge in that area to practice and (2) utility of training received in that area to practice. For each practice area, the responses on each scale were weighted to distribute over a potential range from −100% to +100% (Tables 5 and 6), noting that practice importance was rated by respondents on a 6-point scale (Table 5), whereas training utility was rated on a 5-point scale (Table 6).

Table 5. Weights Assigned to Individual Responses for Calculation of Aggregate Importance to Practice of Each Practice Area.

Table 6. Weights Assigned to Individual Responses for Calculation of Aggregate Usefulness of Training Received to Practice of Each Practice Area.

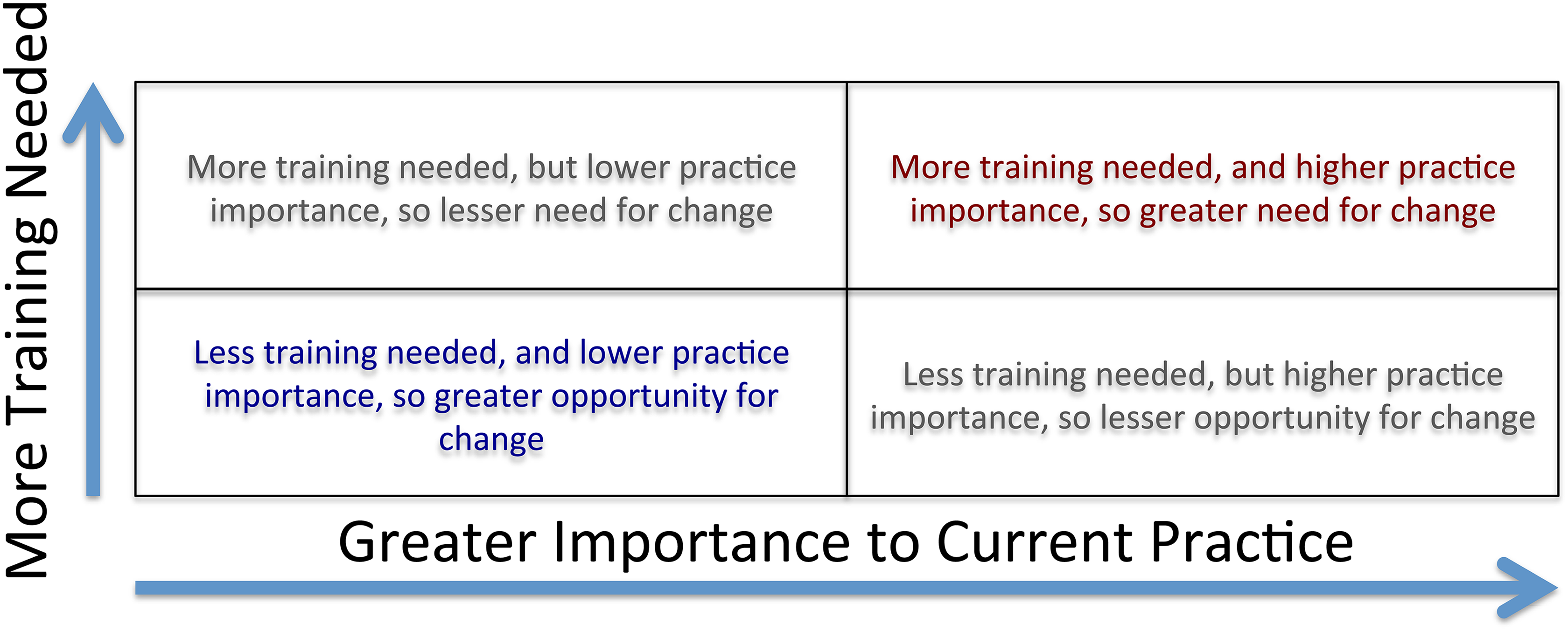

Averaged over all respondents in each analysis, each practice area thus had a weighted average of between −100% and +100% in its importance to practice, and a weighted average of between −100% and +100% in its usefulness of training to practice. This allows us to display the weighted ratings of these 2 parameters on a 2-dimensional graph, showing reported need for less to more training on the vertical axis, and reported practice importance from less to more on the horizontal axis. Doing so places practice areas into quadrants as shown schematically in Figure 1.
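As a rough illustration of the weighted averaging described above, the following Python sketch scores one practice area on the training-utility scale. Several things here are assumptions rather than the published method: the weights are taken to be evenly spaced (the weights actually used are in Tables 5 and 6), the labels of the two intermediate response options are invented, the sign convention (positive meaning more training needed) is our reading of the vertical axis, and responses without a weight, such as “NA/No Training,” are simply left out of the average.

```python
from typing import Dict, Iterable, Optional

# Assumed, evenly spaced weights (in percent) for the 5-point utility scale.
# The actual weights are in Table 6; the two intermediate labels and the
# sign convention (positive = more training needed) are illustrative.
UTILITY_WEIGHTS: Dict[str, float] = {
    "Much Less than Practically Useful": 100.0,
    "Less than Practically Useful": 50.0,
    "About Right": 0.0,
    "More than Practically Useful": -50.0,
    "Much More than Practically Useful": -100.0,
}


def weighted_average(
    responses: Iterable[str], weights: Dict[str, float]
) -> Optional[float]:
    """Mean weight over all responses that have a weight.

    Responses absent from `weights` are skipped (an assumption about how
    NA answers are handled). Returns None if nothing can be scored.
    """
    scored = [weights[r] for r in responses if r in weights]
    if not scored:
        return None
    return sum(scored) / len(scored)


# Hypothetical responses from three pathologists for one practice area.
area_score = weighted_average(
    ["About Right", "Much Less than Practically Useful", "NA/No Training"],
    UTILITY_WEIGHTS,
)
```

Running the same function with a 6-point importance weight table would give the horizontal coordinate, so each practice area ends up as one point on the 2-dimensional graph.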

Figure 1. Schematic assessing weighted ratings of training and importance: This figure shows quadrants that verbally describe how to interpret weighted ratings of training and practice importance on a 2-dimensional graph. It shows reported need for less to more training on the vertical axis and reported practice importance from less to more on the horizontal axis.

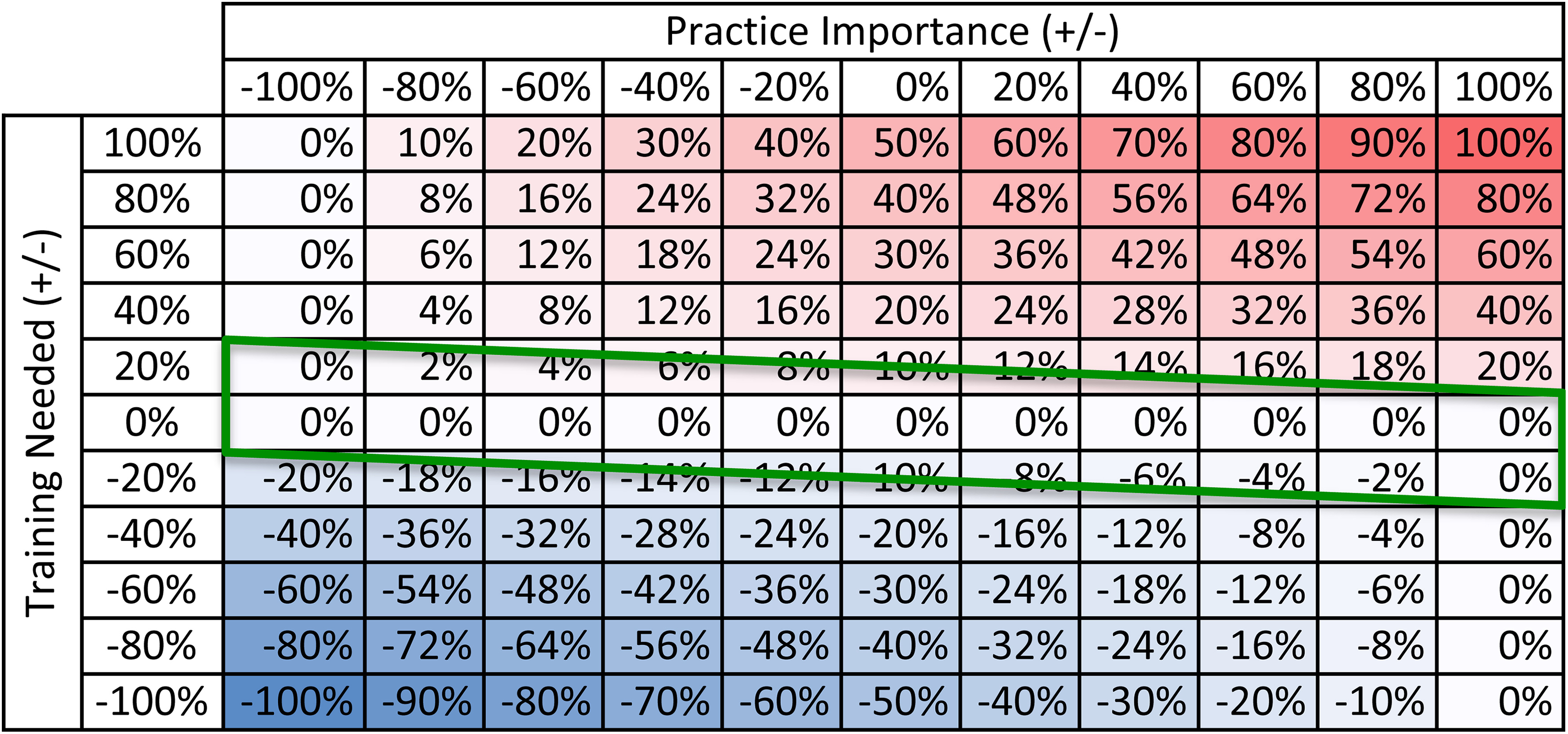

Graphical data such as that schematically illustrated by Figure 1 can also be represented numerically to show the indicated need/opportunity for change. The formula combining importance for practice and need for training to yield need for change is: Change Needed Index = Training Needed Index × [1 + SIGN(Training Needed Index) × Practice Importance Index]/2. Figure 2 shows the calculated indication for change as a function of low to high practice importance and of more to less need for training developed from the survey responses. In Figure 2, a practice area of high importance and in need of much more training is in the “red zone,” denoting need for increased training. By contrast, a practice area of low importance in need of much less training is in the “blue zone,” denoting the opportunity for decreased training. Practice areas in which less training is needed, but which are important in practice, provide a smaller opportunity for negative change (“lighter blue”).
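The Change Needed Index formula can be written directly as a small Python function. This is a minimal sketch that assumes both indices are expressed as fractions between −1 and +1 (that is, −100% to +100%); the function names are ours.

```python
def sign(x: float) -> int:
    """Return -1, 0, or 1 according to the sign of x (like a spreadsheet SIGN)."""
    return (x > 0) - (x < 0)


def change_needed_index(training_needed: float, practice_importance: float) -> float:
    """Change Needed Index =
    Training Needed Index * [1 + SIGN(Training Needed Index) * Practice Importance Index] / 2.

    Both inputs are assumed to lie in [-1, 1].
    """
    return training_needed * (1 + sign(training_needed) * practice_importance) / 2


# Corner cases of the importance/training plane:
most_increase = change_needed_index(1.0, 1.0)     # important and undertrained
most_decrease = change_needed_index(-1.0, -1.0)   # unimportant and overtrained
no_decrease = change_needed_index(-1.0, 1.0)      # overtrained but highly important
```

With these inputs the index reaches its extremes when training need and practice importance point the same way, and shrinks toward zero when an area is overtaught but important or undertaught but unimportant, which matches the zones described for Figure 2.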

Figure 2. Alignment of indication for change with reported experience in practice: This figure shows the calculated indication for change as a function of low to high practice importance and of more to less need for training developed from the survey responses. In this figure, a practice area of high importance and in need of much more training is in the “red zone,” denoting need for increased training. A practice area of low importance in need of much less training is in the “blue zone,” denoting the opportunity for decreased training. Practice areas in which less training is needed, but which are important in practice, provide a smaller opportunity for negative change (“lighter blue”). The green parallelogram in this figure corresponds to a broad range in practice importance considered “about right” in terms of residency training.

Reassuringly, the range of reported practice importance and training needed ratings on MOC surveys clustered in the midportion of the potential vertical range (the green parallelogram in Figure 2), corresponding to a broad range in reported practice importance with a tighter clustering of most practice areas around “about right” in terms of residency training for most new-in-practice pathologists. The parallelogram shape reflects greater allowance for overtraining, and lower tolerance for undertraining, in categorical residency of practice areas that are of greater importance in practice, and the corresponding converse for practice areas that are of lesser importance in practice.

Comparisons were made among the MOC surveys and between the MOC surveys and the employer survey. For the MOC surveys, the distributions by practice area of both practice importance and training utility were compared among surveys for all respondents as well as for subsets (by practice setting, practice size, and length of time from training) of respondents. The individual MOC surveys and the aggregate of the MOC surveys were also compared to the employer survey for both practice importance and training utility, although structural differences intrinsic to the survey types (MOC vs employer, described below) limited exact matching. Also compared were the results from the MOC surveys by individual year for “No Training Received” versus “No Training Needed” as well as several subsidiary analyses within the “drill-down” areas surveyed in more granular and specific detail in the 2016 to 2017 MOC survey.

Initial data review was by direct visualization to enable us to see similarities and/or disparities among the abovementioned comparison population survey findings. The basic format for visualization was to display the putatively parallel results as distributions of reported rankings of practice importance and/or training utility in Excel (Microsoft Excel for Mac 2011 version 14.7.7) line charts to emphasize any deviations from parallelism. Visible similarities (and dissimilarities) were then quantified by calculating the Pearson correlation coefficient r using the Microsoft Excel CORREL function and, from this, 2-tailed Student t distribution P values were calculated using the Microsoft Excel TDIST (Student t-distribution probability) function.
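For readers working outside a spreadsheet, the correlation step can be sketched in plain Python. The function below computes the same quantity as CORREL; the conversion to a t statistic, t = r × sqrt((n − 2)/(1 − r²)) with n − 2 degrees of freedom, is the standard route from r to a Student t P value and is our assumption about the intermediate step, which the text does not spell out. The data values are hypothetical.

```python
import math
from typing import Sequence


def pearson_r(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation coefficient of two equal-length rating series."""
    n = len(xs)
    if n != len(ys) or n < 3:
        raise ValueError("need two series of equal length with at least 3 points")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for a constant series")
    return sxy / math.sqrt(sxx * syy)


def t_statistic(r: float, n: int) -> float:
    """t statistic for testing r against zero, with n - 2 degrees of freedom."""
    if abs(r) >= 1:
        raise ValueError("t is unbounded when |r| = 1")
    return r * math.sqrt((n - 2) / (1 - r * r))


# Hypothetical weighted ratings of four practice areas in two survey cycles.
cycle_a = [1.0, 2.0, 3.0, 4.0]
cycle_b = [1.0, 3.0, 2.0, 4.0]
r = pearson_r(cycle_a, cycle_b)
t = t_statistic(r, len(cycle_a))
```

The 2-tailed P value would then be read from a Student t distribution with n − 2 degrees of freedom, for example by passing the absolute value of t to a spreadsheet function such as TDIST.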

Results

The general results are presented to illustrate the efficacy and limitations of the above-described methods; detailed presentation of the pathology-specific results and their significance as a possible guide for change in content and/or structure of pathology training will be provided in separate publications.

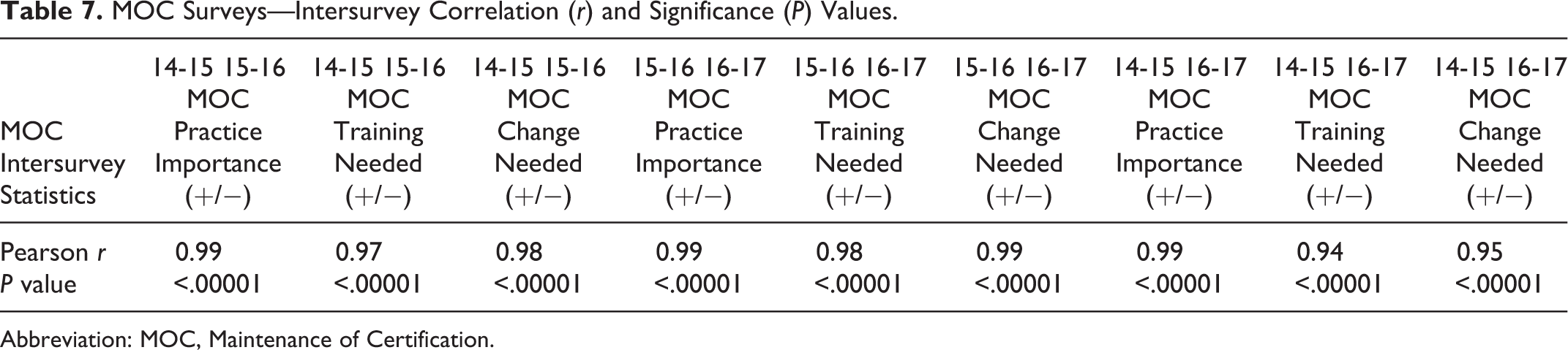

Intersurvey consistency

The MOC survey responses on practice area importance and usefulness of training showed a striking degree of consistency, providing a credible basis on which to assess the alignment of residency training with practice. Table 7 shows the Pearson r statistics and P values for overall survey findings.

Table 7. MOC Surveys: Intersurvey Correlation (r) and Significance (P) Values.

Abbreviation: MOC, Maintenance of Certification.

Evaluations of training needs

Specifically to answer the questions originally posed, these data enabled us to sort by importance to new-in-practice physicians the practice areas that comprise our discipline and simultaneously how these physicians’ training in residency had prepared them for practice in each area. We combined these ratings to assess the indications and opportunities for change in residency training implicit in the data.

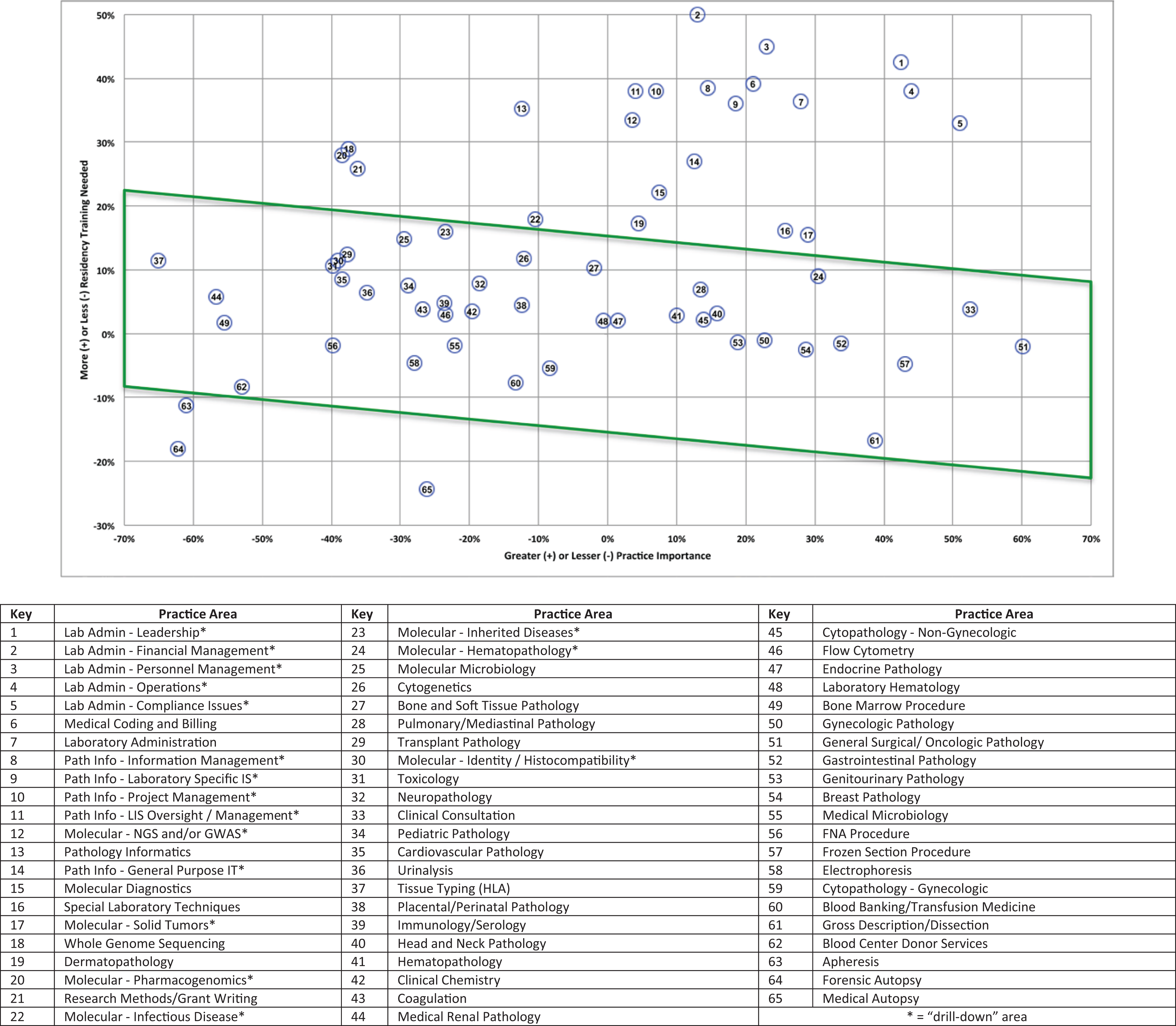

Graphically displayed, the green parallelogram in Figure 3 shows MOC survey respondents reported their training in most practice areas to have been “about right,” taking into account as described above both practice area importance and need for “more” or “less” training to align with their job requirements. The complementary practice areas in which training was reported as not having been substantially “about right” can also be seen in Figure 3: Practice areas above the parallelogram were reported to be undertaught and important in practice; those below the parallelogram were reported to be overtaught and less important in practice.

Figure 3. Combined Maintenance of Certification (MOC) surveys showing net residency training need versus relative practice importance: This figure provides a graphical representation of the average rating of each practice area. The practice areas are represented as numbers, and the key below the graph shows the practice area associated with each number. The green parallelogram in this figure shows MOC survey respondents reported their training in most practice areas to have been “about right,” taking into account as described above both practice area importance and need for “more” or “less” training to align with their job requirements. The complementary practice areas in which training was reported as not having been substantially “about right” can also be seen in this figure: practice areas above the parallelogram were reported to be undertaught and important in practice; those below the parallelogram were reported to be overtaught and less important in practice.

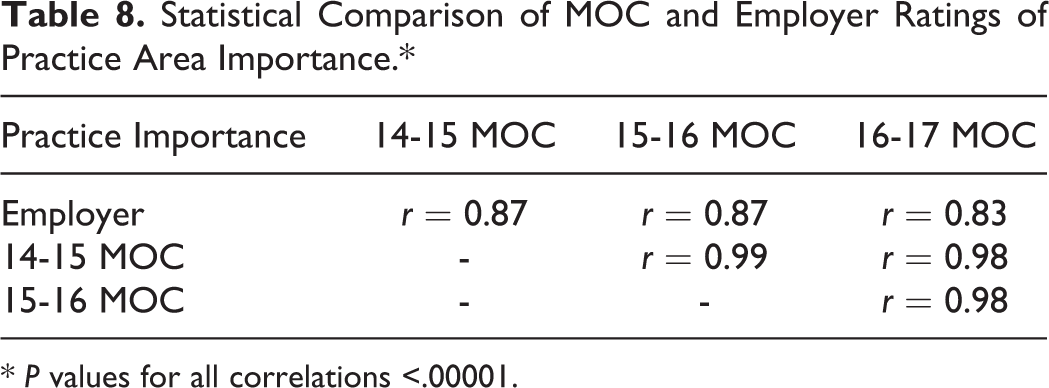

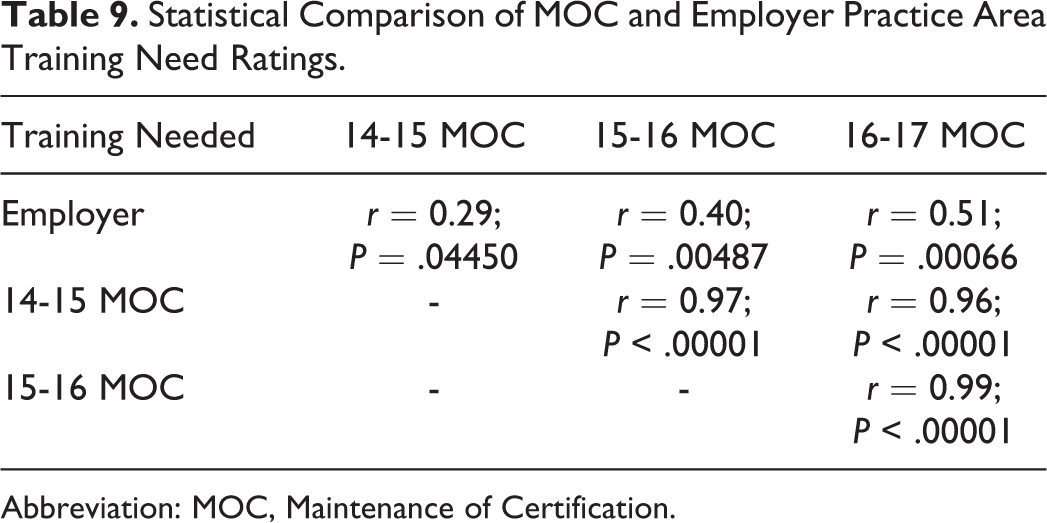

Finally, we “reality tested” these assessments, which were based on the perceptions of the new-in-practice physicians, by comparing them to analogous assessments from the separate survey sample of physician employers/supervisors of new-in-practice physicians. Table 8 shows a high correlation between employers and new-in-practice pathologists in ratings of the importance of each practice area to current responsibilities. By contrast, Table 9 shows the significant though distinctly lower correlation of employers’ and new pathologists’ rankings of needs for more training in each practice area. This lower level of correlation is not surprising, because it involves comparing the employers’ “one-tailed” (ie, ranging from inadequate to adequate) perception of the new-in-practice pathologists’ training with the new-in-practice pathologists’ own “2-tailed” (ranging from inadequate through adequate to excessive) perceptions. This issue will be discussed in more detail in a pathology-specific companion article.

Table 8. Statistical Comparison of MOC and Employer Ratings of Practice Area Importance.*

* P values for all correlations <.00001.

Table 9. Statistical Comparison of MOC and Employer Practice Area Training Need Ratings.

Abbreviation: MOC, Maintenance of Certification.
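The comparison of employer and new-in-practice ratings described above can be illustrated with a minimal sketch. It assumes a Spearman rank correlation over per-practice-area mean ratings; the article does not state which correlation statistic was used, so this choice, the function names, and the ratings below are illustrative assumptions rather than the study's method or data.

```python
from math import sqrt


def average_ranks(values):
    """Rank values from 1..n; tied values share the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    start = 0
    while start < len(order):
        end = start
        # Extend the tie group while the next sorted value is equal.
        while end + 1 < len(order) and values[order[end + 1]] == values[order[start]]:
            end += 1
        mean_rank = (start + end) / 2 + 1  # 1-based average rank of the group
        for k in range(start, end + 1):
            ranks[order[k]] = mean_rank
        start = end + 1
    return ranks


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sd_x = sqrt(sum((a - mean_x) ** 2 for a in x))
    sd_y = sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (sd_x * sd_y)


def spearman(x, y):
    """Spearman rank correlation: Pearson correlation of the ranks."""
    return pearson(average_ranks(x), average_ranks(y))


# Hypothetical mean importance ratings for five practice areas
# (illustrative values only, not survey results).
moc_importance = [3.8, 2.1, 3.2, 1.5, 2.9]
employer_importance = [3.6, 2.6, 3.3, 1.2, 2.5]

rho = spearman(moc_importance, employer_importance)
print(round(rho, 3))
```

A rank-based statistic is used here only because the text speaks of comparing "rankings"; a Pearson correlation on the raw mean ratings would be an equally plausible reading.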

Discussion

For all the attention that is now placed on evidence-based medical practice, there is surprisingly little emphasis on evidence-based medical education. We contend that rapidly changing biomedical technologies and evolving health-care delivery systems make continuing evidence-based assessment of physician training equally essential. To that end, we developed a framework to acquire regular feedback from new-in-practice pathologists on the practice areas in which they perceive they needed more (or less) training in residency and on the importance of those areas in actual practice. These assessments were supplemented with assessments by physician-supervisors of new-in-practice pathologists to provide validation and perspective. This article describes our general approach. Our next articles, still in development, will present detailed pathology-specific results from the annual surveys of new-in-practice pathologists and the survey of pathologist-supervisors, as well as our recommendations for how these results can be used to assess the curricular requirements for pathology residency.

A major challenge we encountered and addressed was the need to adjust for the now nearly ubiquitous phenomenon of fellowship training intervening between residency and practice. In a 2017 survey of pathology residents, 96% reported that they planned to complete at least one fellowship after residency, and 46% planned to complete at least 2 fellowships. 11 Acknowledging and addressing the potentially confounding effect of fellowship training on preparation for initial practice was both essential and complex. Categorical specialty training in residency necessarily includes core training in all essential specialty practice areas, while fellowship training in a subspecialty area involves advanced training in a subset of the specialty’s practice areas. To assess how residency training per se prepares graduates for practice, each respondent’s survey responses needed to be segregated, by practice area, into (1) practice areas in which that respondent was residency-only trained and (2) practice areas in which that respondent was both residency and fellowship trained. Each response type was separately and distinctly important to analyze.

The residency-only trained practice area responses directly reflect, area by area, how effectively and efficiently categorical residency training is currently preparing pathologists for initial practice. With this being our primary focus, we therefore segregated our data to include in this analysis only those practice area responses not directly related to fellowship training received by the respondent (Supplemental Table 2 shows practice areas directly related to fellowships).
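The segregation step described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the fellowship-to-practice-area mapping stands in for Supplemental Table 2, and the fellowship names, practice area names, record layout, and ratings are all hypothetical.

```python
from collections import defaultdict

# Hypothetical stand-in for Supplemental Table 2: practice areas treated
# as directly related to each fellowship (names are illustrative only).
FELLOWSHIP_AREAS = {
    "hematopathology": {"bone marrow interpretation", "flow cytometry"},
    "cytopathology": {"fine needle aspiration"},
}


def split_by_training(respondents):
    """Partition each respondent's ratings, practice area by practice area.

    Returns two dicts mapping practice area -> list of ratings:
    one for areas in which the respondent was residency-only trained,
    one for areas directly related to a fellowship the respondent completed.
    """
    residency_only = defaultdict(list)
    residency_plus_fellowship = defaultdict(list)
    for person in respondents:
        # Union of practice areas covered by this respondent's fellowships.
        covered = set()
        for fellowship in person["fellowships"]:
            covered |= FELLOWSHIP_AREAS.get(fellowship, set())
        for area, rating in person["ratings"].items():
            bucket = residency_plus_fellowship if area in covered else residency_only
            bucket[area].append(rating)
    return dict(residency_only), dict(residency_plus_fellowship)


# Anonymous, hypothetical responses: ratings stay linked to each
# respondent's training history but carry no identifying information.
respondents = [
    {"fellowships": ["hematopathology"],
     "ratings": {"flow cytometry": 4, "fine needle aspiration": 2}},
    {"fellowships": [],
     "ratings": {"flow cytometry": 1, "fine needle aspiration": 3}},
]

res_only, res_plus_fellow = split_by_training(respondents)
print(res_only)
print(res_plus_fellow)
```

Keeping each rating attached to its respondent's fellowship history until the moment of partitioning is what allows the same response set to feed both analyses: the residency-only subset for assessing residency training, and the comparison of the two subsets for assessing subspecialized practice.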

However, although the residency-plus-fellowship-trained practice area responses do not directly relate to the effectiveness and efficiency of residency training per se as preparation for entry into practice, they do provide important information: on a Practice Area by Practice Area basis, comparison of fellowship-plus-residency trained responses to residency-only trained responses shows how practice responsibilities of those with specific fellowship training differ from those without that same fellowship training. These differences show both the extent to which (1) specific fellowships are followed by substantially subspecialized practice and (2) specific practice areas have become effectively restricted to fellowship-trained practitioners. Assessment of the relationship between fellowship training and practice is ongoing and will be reported subsequently.

Two capabilities depended on maintaining a relational data structure throughout: measuring the relationship between practice importance in particular areas and fellowship training, and combining practice importance with utility of training to indicate the need for change in training. This structure allowed us to parse the anonymous responses on an individual basis, both by practice area and by all potentially related demographic information on training (residency and fellowship) and practice characteristics.

In particular, while our MOC survey data were anonymous as to individual respondent, each individual’s demographic and practice characteristics, as well as their quantitative rating responses to practice area questions and their comments, remained linked. This was essential not only to excluding potentially confounding effects of intervening fellowship training addressed above, but also to enabling us to identify areas in which high practice importance ratings were essentially restricted to fellowship-trained individuals and, for all respondents, to analyzing and quantifying post-training subspecialty practice in non-fellowship-trained areas. We could therefore meaningfully characterize the relationship between practice importance and utility of training by practice type and demographic subgroups within each survey, and also develop a novel quantitative measure for the highly variable extent and degree of subspecialization of practice across different practice settings. Quantification of subspecialization was needed because, in large practices, narrow subspecialization results in low reporting of practice importance in the non-practiced subspecialties. This is distinct from the distribution of reported practice importance by area among less subspecialized, typically smaller practices.

Our data collection and analysis were generally reassuring in that they showed that, in most areas, residency preparation for practice in pathology was “about right,” based both on our recent trainees’ reported employment experience and on the report of their employers. Also, those areas in which training was reported as excessive or inadequate largely coincided, at least directionally, with our expectations based on anecdotal discussion at national meetings of educationally interested pathology organizations. It was in the quantitative comparisons among the under- and overtaught areas, and in analyses at the level of respondent subsets by practice setting, practice size, and years in practice, that new and potentially important findings emerged.

In examining these subset analyses, it became apparent that in addition to the relatively small number of practice areas generally over- and undertaught in residency, more flexibility was needed in our approach to GME in pathology. We have both excessive residency training in practice areas predominantly performed by fellowship-trained individuals and inadequate preparation for practice in other areas in which, for many practitioner subsets, residency training must suffice. Although residency content area adjustments are certainly possible and desirable, our current rigid GME formulation of 3 or 4 years of general residency plus 1 or 2 years of subspecialty fellowship(s) is not well suited to the broad range of actual practice. Additionally, some areas of practice consistently became important only 5 or more years after entry into practice, raising a question of whether either residency or fellowship is an optimal setting for training in those areas. This process has for the first time provided quantitative, evidence-based information on the content of categorical training in residency, which has heretofore been largely a matter of eminence-based opinion.

Although the detailed findings of our process are necessarily specific to pathology, our general approach to designing and conducting these surveys, and subsequent analysis of the results, is not in any way particular to pathology. Also, while the perceived need to assess the content and structure of our training at this time was triggered by demographic circumstances and scientific advances particular to pathology, the concept of developing and maintaining an evidentiary basis for education in any clinical discipline ought to be of general interest in an era of advancing science, growing population, and (particularly for education) constrained resources.

The importance of this work, beyond the discipline-specific qualitative and quantitative findings to be presented in companion articles, lies in the general approach, methodologies developed, and potential to generate evidence to guide the ongoing direction of GME.

Supplemental Material

SupplementaryTables for Evidence-Based Alignment of Pathology Residency With Practice: Methodology and General Consideration of Results by W. Stephen Black-Schaffer, David J. Gross, James M. Crawford, Stanley J. Robboy, Kristen Johnson, Michael B. Cohen, Melissa Austin, Joseph Sanfrancesco, Donald S. Karcher, Suzanne Z. Powell, and Rebecca L. Johnson in Academic Pathology

Footnotes

Acknowledgments

The authors wish to acknowledge the contributions of several people who were involved in the development of this manuscript, including: Barbara S. Ducatman, MD; William G. Finn, MD; Karen L. Kaul, MD, PhD; Peter J. Kragel, MD; Steven H. Kroft, MD; David N. Lewin, MD; William E. Schreiber, MD; and Thomas M. Wheeler, MD.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Supplemental Material

Supplemental material for this article is available online.

References
