Abstract

Optometrists play a vital role in the prevention and management of many eye diseases. The expansion of optometrists’ prescribing and overall medical privileges has placed a burden on the optometric curriculum, limiting hours in professional topics courses such as practice management and public health. While the overall objectives may differ, the pedagogical challenges would be similar in public health training programs. That is, reduced hours and limited contact with students during the current COVID-19 (coronavirus disease 2019) pandemic both place pedagogical demands on the optometric and public health educator alike to meet learning objectives and course outcomes using atypical methods. As the current evidence about problem- or scenario-based versus lecture-based outcomes is equivocal, we randomly assigned half the students in an epidemiology/public health course to a case-based learning (CBL) group using three instructors naïve to problem-based teaching. The other half of the students attended lectures covering the same topics. Performance gains—the differences between the pretest and posttest scores—were compared between the two learning groups. The mean performance gain for the CBL group (M = 25.5%) was slightly higher than for the lecture-based learning group (M = 23.6%), but the difference was not statistically significant, t(56) = 0.71, p = .48. Inferences are discussed in the context of the study’s design and limitations. Overall, we believe our results can be extended to public health and health professions programs needing creative methods to reach health promotion learning objectives with limited student contact.

Optometrists play an important role in preventing occupational, recreational, environmental, and age-related eye diseases (Bressler et al., 2003; McCracken & Smith, 2017). These topics are covered extensively in core optometric courses such as optics, pediatrics, strabismus and amblyopia diagnosis/management, and diagnosis/management of ocular disease (Accreditation Council on Optometric Education, 2016; Association of Schools and Colleges of Optometry, 2011). What further elevates optometrists’ responsibility is the impact their clinical recommendations can have on a spectrum of health promotion opportunities ranging from avoiding developmental conditions in children (Press, 2008) to limiting vision-related falls in the elderly (Elliott, 2012). There is a recognized public health educators advocacy group that maintains standardization of public health curriculum across all North American optometry schools and colleges (Association of Schools and Colleges of Optometry, 2019). However, it has long been argued that public health education is the responsibility of all optometric educators across all didactic, lab, and clinical settings (Marshall, 1993). While teaching health promotion in a public health curriculum would have wider challenges such as incorporating social relations, mental health, and marketing aspects (Berkman, 1995; Soames Job, 1988), all public health educators are now faced with the coronavirus disease 2019 (COVID-19) pandemic (Ashour et al., 2020) that places pedagogical demands to deliver meaningful content in atypical ways (Shih et al., 2020).

Problem-based learning (PBL) is a pedagogical strategy that uses real-world questions to develop both knowledge and problem-solving skills in students (Chung, 2019). PBL achieves outcomes through active and collaborative learning activities and has been employed in undergraduate medical education for more than 50 years (Barrows & Tamblyn, 1976). It is increasingly used in health professional education (Spiers et al., 2014) and involves students more independently solving uncultivated problems rather than participating in instructor-led discussions or lectures (Barrows, 2000). Student roles—as well as their instructors’—are quite different from those in traditional lecture-based classrooms (Barrows, 2000; Hmelo-Silver, 2004). Students in PBL environments engage in more self-directed learning, allowing them to apply their current knowledge to a problem that immediately helps them identify information gaps. Many controlled educational studies have shown that PBL students achieve higher scores on both knowledge and practical examinations than students in traditional lecture-based learning (LBL) settings (Hwang & Kim, 2006; Meo, 2013), while others have shown only similar positive trends—favoring PBL over LBL (Antepohl & Herzig, 1999; Choi et al., 2014).

The advantages of PBL could extend into optometry education with the profession’s ever-increasing need for multidisciplinary clinical problem solving (Lovie-Kitchin, 1997; Yolton et al., 2000). There is evidence that the broad scope of public health and the need for health promotion professionals to be lifelong learners could also benefit from scenario-based teaching approaches (Gottwald & Goodman-Brown, 2012). There are, however, no direct comparisons of performance in LBL versus PBL settings in a public health course for optometry students. Furthermore, PBL places a high demand on teaching resources, requiring one teacher per small group, although no preparation is required of students prior to class meetings (Barrows, 2000). An alternative scenario-based approach is team-based learning (TBL), which uses only one instructor for the whole class. However, TBL requires student preparation before each session, group readiness assessments, peer evaluation, and self-evaluation (Hrynchak & Batty, 2012). TBL would certainly require fewer teaching resources, but it may be too much to ask of students in the context of this current classroom intervention.

We therefore proposed a modified case-based learning (CBL) approach, using three instructors inexperienced with TBL or PBL, for half of the students enrolled in a required epidemiology/public health course and an LBL approach for the other half of the class. The purpose of this study was to compare pretest-to-posttest performance gains between the two learning groups.

Method

Participants

A total of 60 third-year optometry students enrolled in the course were required to take a pretest on Day 1 of the semester as part of the regular coursework. All students were made aware that this was a low-stakes, formative assessment. One student was not available to take the pretest and was excluded from participating in the study. Another student with a Master of Healthcare Administration degree had previous advanced training in some of the covered topics and was selected as a tutor for the CBL group. That student was also excluded from participating. There were 58 total participants (57% female), who were randomly assigned to either the case-based (n = 29; 68% female) or lecture-based (n = 29; 43% female) group. At the conclusion of the semester, all students were required to take a high-stakes final exam (i.e., posttest), which constituted 33% of their final grade in the course. Participation in the study was voluntary, and the protocol was approved by the University of the Incarnate Word’s Institutional Review Board (Protocol #15-10-008). Informed consent was obtained from all subjects.

Activities

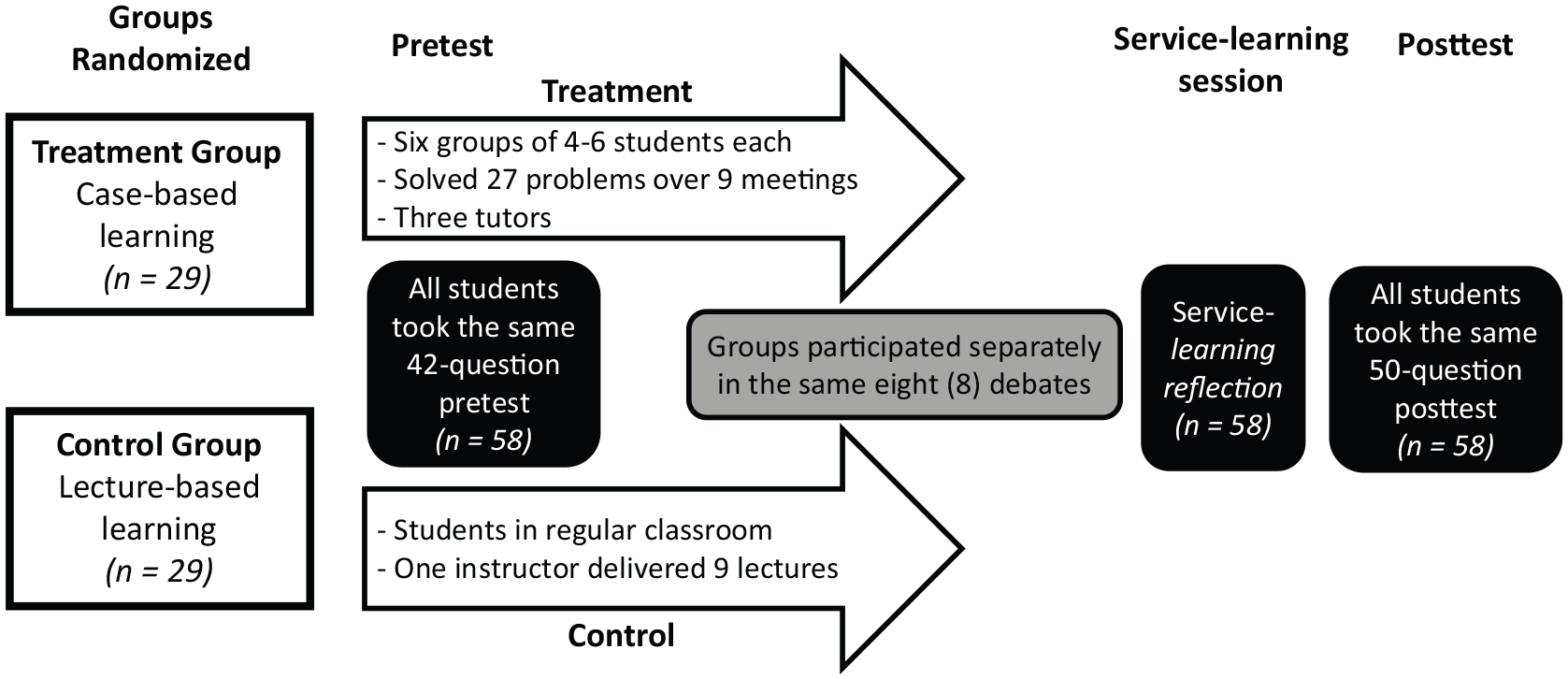

Both learning groups met 15 times over the semester, and the flow of activity is outlined in Figure 1. Both groups covered the same topics and in the same order over the next 9 weeks of the semester and participated—separately by learning group—in eight debates starting in Week 7. The groups only met together during Week 1 (pretest), Week 14 (reflections on our university’s community volunteer/service-learning requirement), and Week 15 (final exam/posttest). A detailed list of activities is shown in Table 1.

Figure 1. The flow of activity for both learning groups. The white boxes represent different activities completed separately. The gray box represents the same activity completed separately. The black boxes represent the same activities completed together.

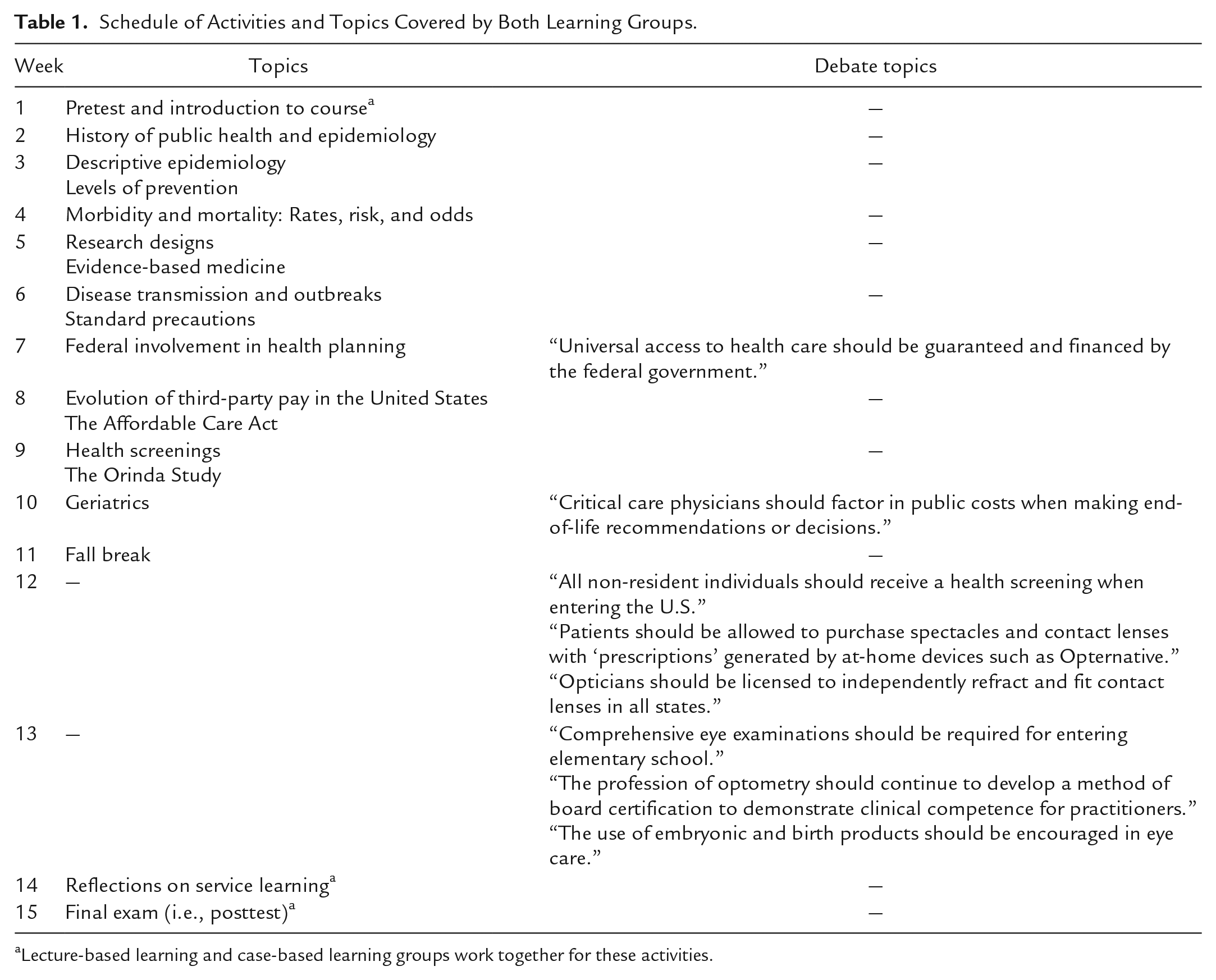

Table 1. Schedule of Activities and Topics Covered by Both Learning Groups.

Lecture-based learning and case-based learning groups work together for these activities.

Lecture-Based Learning

The LBL group met from 9:00 to 10:50 a.m. on Monday mornings in the regular classroom. The lectures were delivered by the study’s principal investigator (PI) who also served as the regularly assigned course instructor. The schedule was followed exactly as shown in Table 1. There were no formative assignments or assessments given to this group, but the instructor solicited questions and typical class discussion. Analytical considerations such as (a) calculating incidence rates in epidemiology, (b) calculating odds ratios for evidence-based medicine, or (c) assessing screening validity (sensitivity and specificity) were approached as a class via instructor demonstration or student volunteers.
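For readers unfamiliar with the analytical exercises named above, the following is a minimal Python sketch of the three calculations. It is an illustration only, not course material from the study: the function names and every count in the example are hypothetical.

```python
def incidence_rate(new_cases, person_time):
    """New cases per unit of person-time at risk."""
    return new_cases / person_time


def odds_ratio(exposed_cases, exposed_noncases,
               unexposed_cases, unexposed_noncases):
    """Cross-product odds ratio from a 2 x 2 exposure-by-outcome table."""
    return (exposed_cases * unexposed_noncases) / (
        exposed_noncases * unexposed_cases)


def sensitivity(true_pos, false_neg):
    """Proportion of people with the condition whom the screening flags."""
    return true_pos / (true_pos + false_neg)


def specificity(true_neg, false_pos):
    """Proportion of people without the condition whom the screening clears."""
    return true_neg / (true_neg + false_pos)


# Hypothetical counts, chosen only to make the arithmetic easy to follow.
rate = incidence_rate(new_cases=12, person_time=4000)  # per person-year
odds = odds_ratio(30, 70, 10, 90)
sens = sensitivity(true_pos=45, false_neg=5)
spec = specificity(true_neg=160, false_pos=40)

print(f"Incidence rate: {rate * 1000:.1f} per 1,000 person-years")
print(f"Odds ratio: {odds:.2f}")
print(f"Sensitivity: {sens:.2f}, specificity: {spec:.2f}")
```

With these made-up counts, the incidence rate is 3 per 1,000 person-years, the odds ratio is about 3.86, and the screening has a sensitivity of .90 and a specificity of .80.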

Case-Based Learning

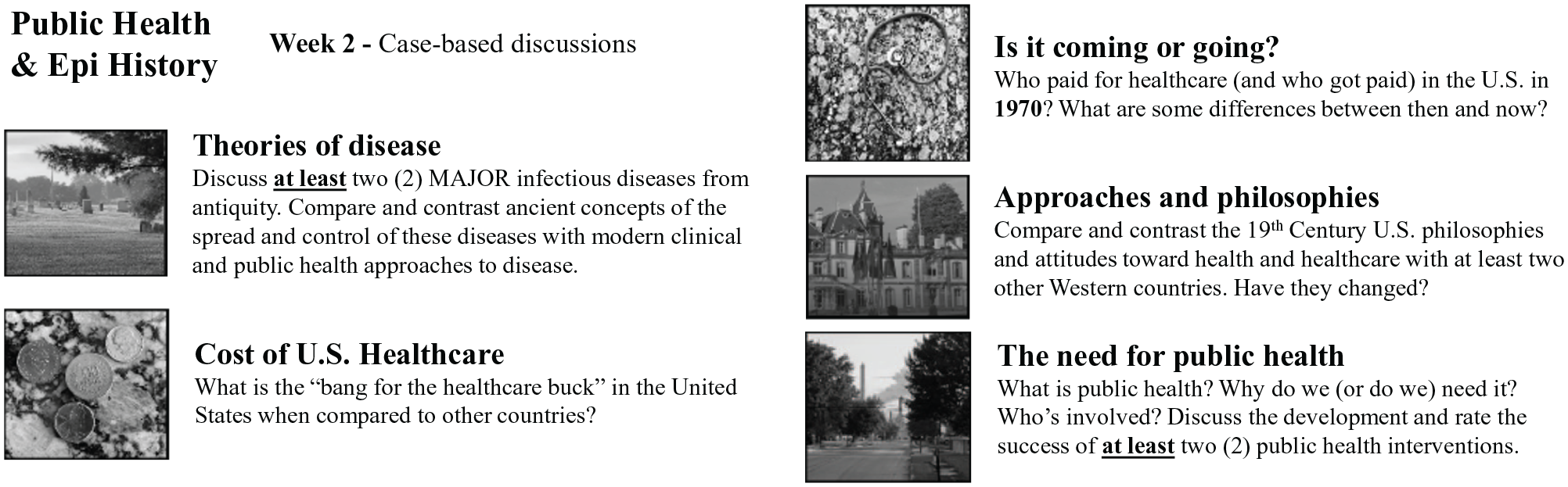

The CBL group met from 9:00 to 10:50 a.m. on Wednesday mornings in a larger special events room set up with 10-person round tables. Students were divided weekly into six naturally occurring (i.e., student selected) groups of 4 to 6 students. They achieved the learning outcomes by discussing and answering a set of 27 total instructor-assigned case-based discussion questions (see Figure 2 for a sample from Week 2). Three tutors (the PI of this study [also the lecturer for the LBL group], an additional faculty member with a master’s degree in public health, and a student teaching assistant with a Master of Healthcare Administration degree) were available to the groups for clarification and minimal guidance in answering these questions. Other than exposure to PBL and TBL literature during protocol preparation, all three tutors were naïve to PBL or TBL approaches.

Figure 2. Sample case-based learning exercises for Week 2.

Students were given 75 minutes to complete the exercises. The instructors then led a 25-minute session where groups shared their solutions with the rest of the class and were asked to reflect on any lessons learned.

Reflections on Service Learning

All students in our program are required to complete 16 hours of volunteer service, assigned during the fall semester of their first year. They have 2 years to complete the service learning requirement, and the course that is the subject of this study serves as its capstone. Ideally, students would choose to serve in efforts such as health/vision screenings or local, regional, or international health care missions. Many students did so, and—during the last week of the semester—all third-year students met to share their reflections on their service learning efforts.

Assessments

Assessments (i.e., pre- and posttests) were performed with both groups together in the regular classroom using Examplify, a computer-based testing application (ExamSoft Worldwide Inc., Dallas, Texas). Both assessments contained multiple-choice questions sampled from all course learning objectives. Some questions covered multiple objectives, which included (a) world and U.S. history of public health; (b) evolution of the U.S. health care delivery system over the last century; (c) federal involvement in health care; (d) third-party pay systems; (e) levels of care; (f) prevention levels: health promotion, screenings, and clinical care; (g) impact of prevention; (h) descriptive epidemiology; (i) issues of geriatric medicine; and (j) infectious diseases and precautions. The pretest consisted of 42 total questions, and the posttest consisted of 50 questions. Students were given 60 minutes to complete each test.

Data Analysis

As this was a pretest versus posttest experimental design, the primary dependent variable was posttest improvement when compared with the pretest. We first tested randomization of group assignment by comparing pretest scores between the two learning groups via an independent t test. We then compared all test scores via two-factor analysis of variance (ANOVA) with test (pre vs. post) as the within-subjects factor and learning group (LBL vs. CBL) as the between-subjects factor. Last, post hoc comparisons of posttest scores and improvements in test scores between learning groups were made via independent t tests. We used SPSS Version 25 (IBM Statistics, Chicago, Illinois) for all statistical analyses and Excel to produce all graphs.
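Because the pretest and posttest had different numbers of questions (42 and 50), the gain has to be computed on percent-correct scores rather than raw counts. The sketch below shows one way this could be done; it is not the SPSS procedure used in the study, and the student records and function names are hypothetical.

```python
from statistics import mean

PRETEST_ITEMS = 42   # number of pretest questions reported in the text
POSTTEST_ITEMS = 50  # number of posttest questions reported in the text


def percent_correct(n_correct, n_items):
    """Convert a raw count of correct answers to a percent-correct score."""
    return 100.0 * n_correct / n_items


def gain(pre_correct, post_correct):
    """Performance gain: posttest percent minus pretest percent."""
    return (percent_correct(post_correct, POSTTEST_ITEMS)
            - percent_correct(pre_correct, PRETEST_ITEMS))


def mean_gain_by_group(records):
    """Average the per-student gains within each learning group."""
    gains = {}
    for group, pre_correct, post_correct in records:
        gains.setdefault(group, []).append(gain(pre_correct, post_correct))
    return {group: mean(values) for group, values in gains.items()}


# Hypothetical records: (learning group, pretest correct, posttest correct).
records = [
    ("CBL", 20, 36), ("CBL", 18, 35),
    ("LBL", 21, 37), ("LBL", 22, 36),
]

for group, value in mean_gain_by_group(records).items():
    print(f"{group}: mean gain = {value:.1f} percentage points")
```

For example, a student with 21 of 42 correct on the pretest (50%) and 36 of 50 correct on the posttest (72%) would have a gain of 22 percentage points.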

Results

Test of Randomization (Pretest Scores)

The pretest scores (percent correct) across all participants ranged from 30.9 to 66.6 (M = 48.0, SD = 8.4). While the pretest scores for the LBL group (M = 49.5, SD = 8.1) were higher than those for the CBL group (M = 46.5, SD = 8.6), the mean scores were not statistically different; t(56) = −1.39, p = .17. While 57% of the participants were female, the CBL group was 68% female, and the LBL group was only 43% female, a significantly different gender distribution between the learning groups; χ²(1, n = 58) = 4.46, p = .035.

Analysis Across All Test Scores (ANOVA)

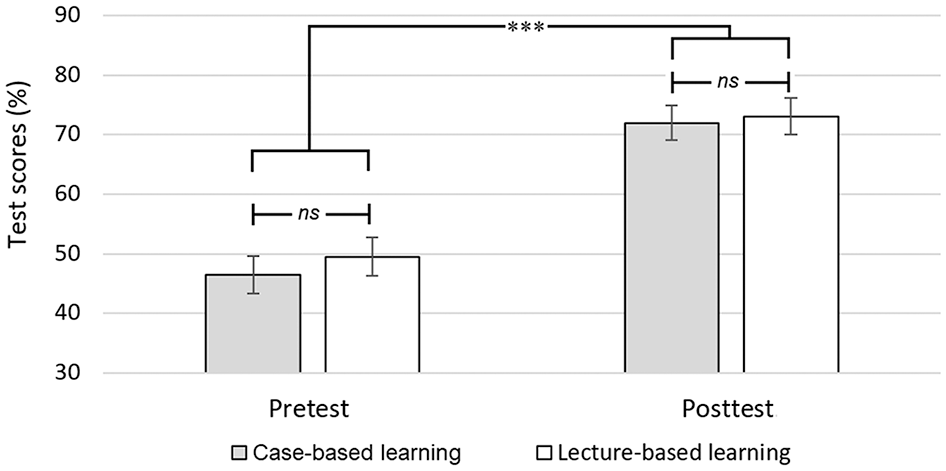

While overall test scores (i.e., pooled average of pre- and posttests) were slightly higher for the LBL group (M = 61.3, SD = 3.6) than for the CBL group (M = 59.2, SD = 4.1), the difference was not statistically significant; F(1, 112) = 1.73, p = .19 (see Figure 3). As expected, posttest scores improved over pretest scores when the LBL and CBL groups were pooled; F(1, 112) = 244.0, p < .001. However, there was no significant interaction between test (pre vs. post) and learning group; F(1, 112) = 0.38, p = .54.

Figure 3. Pretest and posttest scores for both learning groups.

Posttest Scores

The posttest scores (percent correct) across all participants ranged from 48 to 90 (M = 72.6, SD = 8.5). The posttest scores for the CBL group (M = 72.0, SD = 8.8) were essentially equivalent to those for the LBL group (M = 73.1, SD = 8.4); t(56) = −0.49, p = .63. All test scores are shown in Figure 3.

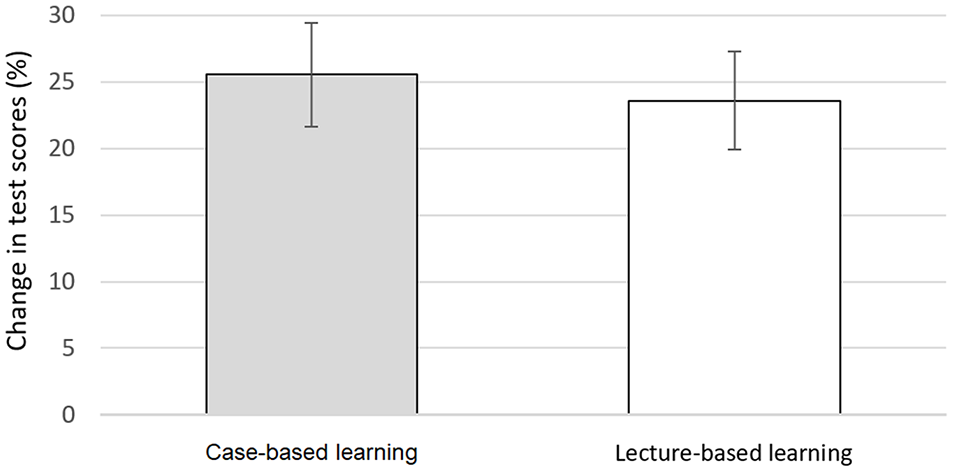

Pretest–Posttest Comparisons Between Learning Groups

Percent gains in test scores (i.e., posttest − pretest scores) across all participants ranged from 2.9 to 54.3 (M = 24.6, SD = 10.4). The mean increase for the CBL group (M = 25.5, SD = 10.6) was slightly higher than that for the LBL group (M = 23.6, SD = 10.2), but the difference was not statistically significant; t(56) = 0.71, p = .48. Percent gains in test scores for both learning groups are shown in Figure 4.
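As a rough check on the reported comparison, a pooled-variance independent t statistic can be recomputed from the published summary statistics alone. The sketch below assumes equal variances and uses the group sizes (n = 29 each) given in the Participants section; the function name is hypothetical, and the inputs are rounded values, so the result can differ slightly from the statistic computed on the raw data.

```python
import math


def pooled_t(mean1, sd1, n1, mean2, sd2, n2):
    """Independent-samples t statistic with a pooled variance estimate."""
    df = n1 + n2 - 2
    pooled_var = ((n1 - 1) * sd1 ** 2 + (n2 - 1) * sd2 ** 2) / df
    standard_error = math.sqrt(pooled_var * (1 / n1 + 1 / n2))
    return (mean1 - mean2) / standard_error, df


# Reported gains: CBL (M = 25.5, SD = 10.6), LBL (M = 23.6, SD = 10.2).
t_gain, df_gain = pooled_t(25.5, 10.6, 29, 23.6, 10.2, 29)
print(f"t({df_gain}) = {t_gain:.2f}")
```

From the rounded summary values this gives a t statistic of roughly 0.70 on 56 degrees of freedom, in line with the reported t(56) = 0.71. Obtaining the p value additionally requires the t distribution's cumulative distribution function, which a statistics package would supply.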

Figure 4. Percent gain in test scores (from pretest to posttest) for both learning groups.

Discussion

We formally compared performance, measured by percentage gain from pre- to posttest, and found no statistically significant difference between participants in the CBL and LBL groups. These results are particularly interesting in the current environment (Gaber et al., 2020), where experienced lecturers are being asked to provide distance-learning, often asynchronous, content and may be left wondering if the learning objectives are being met. We believe these results suggest that carefully planned case-based assignments can, with limited faculty guidance, produce gains in learning comparable to those of LBL over the same period.

Strengths

There were several aspects of our study that strengthen the inference that LBL and CBL produced the same pre- to posttest performance gains. The first of these was our randomized design. We randomly assigned students to groups and randomly decided which group received the treatment (i.e., CBL) and which was the control (i.e., LBL). This reduced selection bias, increasing the internal validity of our results (see Isaac & Michael, 1981). Formal testing of randomization also revealed no statistically significant differences between the learning groups’ pretest scores.

Participants were all exposed to the same material each week and at the same time of day. That is, the material that was covered in lecture on Monday morning was the subject of the case-based problems in the Wednesday group of that same week. While there is a paucity of evidence concerning the role of weekly order presentation on longer term recall or problem solving, we believe our strict scheduling also strengthened our inference that performance gains were the same between the learning groups.

Another strength of our investigation was the high participation rate. All 60 (100%) of enrolled students consented to participation, and 58 of 60 (96.7%) were included as participants. This far exceeds reported mean participation rates in school-based research requiring formal consent (65.5%; see Blom-Hoffman et al., 2009) and further limited selection bias and other threats to external validity (Frye et al., 2003).

Our inferences are also strengthened by our testing method. Both groups took the same pre- and posttests using a secure, computerized platform. Computerized testing provided unbiased, experimenter-blind grading of the multiple-choice assessments. Since computerized testing was new to our school at the time of the study, it could be argued that some of the posttest performance gain was due to students being less accustomed to the computer-based testing process at the time of the pretest. However, a separate investigation at our school using a crossover design showed little difference between computerized and paper-based testing results during our transition to electronic testing (Foutch et al., 2019).

Last, while the pretest was constructed by the instructor for the LBL group, it covered only the same topics as the posttest, which was a collaboration between the three instructors for the CBL group. We thus had posttest questions from two instructors who were experimentally masked from the control (LBL) conditions.

Limitations and Future Considerations

Our design did have limitations. First, while our study used an interventional pretest–posttest design, it suffered from a relatively small number (n = 58) of subjects and was a snapshot from a single cohort. Our inferences could certainly have been strengthened using a crossover trial, where both test (pretest vs. posttest) and learning group could be considered as within-subjects factors. However, the course used for this intervention is only offered during the fall of the third year, so a multiple-year study was not feasible. We could have split the course material into two halves, given a midterm examination as the first posttest, and then switched the students into the other learning group. This design would certainly have strengthened our analysis via a true repeated-measures design. However, it is easily argued that the group receiving the CBL treatment in the second half would have been at an advantage over the first-half CBL group (i.e., they would have been receiving different treatments). Besides, one of our primary goals was to examine whether instructors inexperienced in delivering PBL or CBL experiences could produce the same performance gains as an experienced lecturer.

Pretest and posttest scores were weakly positively correlated, although the correlation fell just short of statistical significance (r = .252, p = .056). This could have the positive connotation that the tests fundamentally measured the same thing (Bland & Altman, 1986). It could, however, also mean that the tests were too similar and that taking the pretest could have directly affected posttest scores. We could have had half of the treatment (i.e., CBL) group and half of the control (i.e., LBL) group not take the pretest at all (as considered by Dimitrov & Rumrill, 2003) and considered “pretest completion” as a between-subjects factor. However, that would have further reduced the statistical strength of our current comparisons and potentially created another confound. Either way, our current design did not provide a mechanism to discern the effects of pretest completion, which may or may not affect our overall inference that learning group assignment is not a factor in performance gains.
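The significance of a Pearson correlation can be checked by converting r to a t statistic with n − 2 degrees of freedom. The sketch below is an illustration under that standard conversion, not the SPSS output from the study; the helper names are hypothetical, and the p value is approximated by numerically integrating the t density with Simpson's rule rather than calling a statistics library.

```python
import math


def t_from_r(r, n):
    """t statistic for testing a Pearson correlation against zero."""
    return r * math.sqrt(n - 2) / math.sqrt(1 - r ** 2)


def t_pdf(x, df):
    """Probability density of Student's t distribution."""
    log_const = (math.lgamma((df + 1) / 2) - math.lgamma(df / 2)
                 - 0.5 * math.log(df * math.pi))
    return math.exp(log_const - (df + 1) / 2 * math.log1p(x * x / df))


def two_tailed_p(t, df, steps=2000):
    """Approximate two-tailed p via Simpson's rule on [0, |t|]."""
    upper = abs(t)
    h = upper / steps  # steps must be even for Simpson's rule
    total = t_pdf(0.0, df) + t_pdf(upper, df)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * t_pdf(i * h, df)
    central_area = 2 * total * h / 3  # area between -|t| and +|t|
    return 1 - central_area


t_corr = t_from_r(0.252, 58)        # reported r with n = 58 participants
p_corr = two_tailed_p(t_corr, 56)
print(f"t(56) = {t_corr:.2f}, p = {p_corr:.3f}")
```

With r = .252 and n = 58, the t statistic is about 1.95 on 56 degrees of freedom, which corresponds to a two-tailed p value slightly above .05, consistent with the reported p = .056.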

The distribution of males and females between the two learning groups was different from that expected from the overall proportion (57% female). There is at least one report that female students have higher “responsibility” subscores on self-regulatory processes during PBL (Demirören et al., 2016). It is possible then that the higher proportion of females elevated the performance gain in the CBL group. While there are other reports of impacts on learning, the most important factor appears to be adequate heterogeneity in PBL settings (reviewed by Khan & Sobani, 2012). We perhaps should have noted the difference and rerandomized the groups. That should be considered in future studies.

Last, opportunities to reflect on what students have learned are an integral part of TBL, but not necessarily LBL (Hrynchak & Batty, 2012). As the formal reflection shared by both groups in Week 14 of the current study could have produced a “team-based” opportunity for the LBL group to learn (Tsingos-Lucas et al., 2016), this activity could be an experimental confound. However, from a curriculum perspective, the reflection was simply too valuable an experience to omit from the course or to withhold from any proportion of the students. A better approach from an investigational perspective may have been to separate the class by learning groups.

Conclusions

We found no significant difference in performance gains between LBL and CBL groups sampled from students in a third-year optometry public health and epidemiology course. These results can be extended to the current challenges where health professions and public health programs alike need creative, remote methods of instruction that maximize student achievement of health promotion learning objectives.

Footnotes

Acknowledgements

We want to thank the University of the Incarnate Word’s Rosenberg School of Optometry Class of 2017 for participating in this study.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.