Abstract

With the Internet widely used as a means of providing health education and promotion to the public, consumers are increasingly going online to gather pertinent health information. However, disparities exist in consumers’ ability to find, evaluate, and apply online health information (collectively referred to as eHealth literacy). Identifying these disparities may elucidate which segments of the population would benefit from targeted eHealth literacy interventions and how online health promotion materials might be adapted. This study uses data from the 2020 CALSPEAKS survey to identify disparities in eHealth literacy among older adults aged 65+ residing in California, USA (

Introduction

With the rise of the Internet as a means of providing health education and promotion materials to the public, more and more consumers are turning to the Internet to gather pertinent health information in the interest of improving their health and care. Yet, while the number of online health information tools and access to them continue to increase, disparities exist in consumers’ ability to find, evaluate, and apply online health information (Werts & Hutton-Rogers, 2013). These abilities, collectively referred to as eHealth literacy, can support health decision-making (e.g., Luo et al., 2018), improve care (e.g., Brown & Dickson, 2010), and improve health and well-being outcomes (e.g., Neter & Brainin, 2019).

Given the potential health benefits associated with elevated eHealth literacy, it is important to identify segments of the population at risk for low eHealth literacy. Doing so will allow interventionists to develop tailored training targeting the needs of specific groups and reveal ways in which online health promotion materials can be adapted to improve understanding among these groups. Previous research has shown that older adults may report lower health literacy rates (Chesser et al., 2016), be less confident in their ability to learn new technologies (Berkowsky et al., 2018), and be less likely to be comfortable with Internet technologies (Anderson & Perrin, 2017). Taken together, these findings suggest that older adults may be at risk for low eHealth literacy (Xesfingi & Vozikis, 2016) and could especially benefit from tailored training interventions and tailored health websites. To this end, this study uses data from the 2020 CALSPEAKS survey to examine eHealth literacy among older adults living in California for the purpose of identifying disparities based on demographic and technology use-related characteristics. Results of this study may elucidate which segments of the older adult population training interventions should be designed for and marketed to, and how websites with health information should be retooled.

Methods

Data for this study come from the online-administered 2020 CALSPEAKS survey, an ongoing project managed by Sacramento State University that routinely assesses California public opinion on social, economic, political, and environmental issues through the use of a representative state-wide panel survey. The project is noteworthy as it is the first California-focused panel that uses probability-based sampling methods for recruitment. More specifically, a random sample is generated using the US Postal Service Delivery Sequence File of California residential addresses, stratified by region and population density, as a sampling frame. Use of this method assures that nearly all eligible California residents have a non-zero chance of being selected for inclusion in the panel. Participation in the CALSPEAKS panel is restricted to non-institutionalized California residents who are at least 18 years of age. In addition to detailed demographic information and public opinion data on several topics (e.g., opinions on elected officials), the 2020 survey included items measuring general technology use and eHealth literacy. The total 2020 sample included 851 respondents. Analyses for this study are restricted to respondents aged 65+ who had valid responses for the predictors and outcome of interest; this produced an analytic sample of 237 respondents.

Measures

The outcome measure of this study is eHealth literacy, self-assessed in the 2020 CALSPEAKS survey through an amended version of the eHEALS questionnaire. The original eHEALS questionnaire was developed by Norman and Skinner (2006) and consists of eight items designed to measure knowledge, skill, comfort, and perceived skills at finding, evaluating, and applying online health information to address health issues. eHEALS has been previously tested for reliability and validity (e.g., Norman & Skinner, 2006; Sudbury-Riley et al., 2017). The eHEALS version used in the CALSPEAKS survey and assessed here is an amended version (Sudbury-Riley et al., 2017) that includes more nuanced item wording to account for changes to the digital landscape since the development of the original eHEALS. These amended items include: 1) “I know what health resources and information are available on the Internet.” 2) “I know where to find helpful health resources and information on the Internet.” 3) “I know how to find helpful health resources and information on the Internet.” 4) “I know how to use the Internet to answer my questions about health.” 5) “I know how to use the health information I find on the Internet to help me.” 6) “I have the skills I need to evaluate the health resources and information I find on the Internet.” 7) “I can tell high-quality health resources and information from low-quality health resources and information on the Internet.” 8) “I feel confident in using information from the Internet to make health decisions.”

Respondents rate how much they agree or disagree with each eHEALS item on a five-point Likert scale. A total eHealth literacy score is calculated by summing the scores across the eight items (range: 0–32, which implies that each item is scored from 0 to 4), with higher scores indicating higher eHealth literacy.
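The scoring rule above can be illustrated with a minimal sketch. The response labels and the 0 to 4 point values are assumptions made for illustration (the text states only a five-point scale and a 0–32 total), and the function name `eheals_total` is hypothetical rather than part of any CALSPEAKS codebook.

```python
# Assumed response labels and point values: the source gives only a five-point
# scale and a 0-32 total, which implies each of the eight items contributes 0-4.
LIKERT_SCORES = {
    "strongly disagree": 0,
    "disagree": 1,
    "neither agree nor disagree": 2,
    "agree": 3,
    "strongly agree": 4,
}


def eheals_total(responses):
    """Sum eight Likert responses into a single 0-32 eHEALS total score."""
    if len(responses) != 8:
        raise ValueError("eHEALS total requires exactly eight item responses")
    return sum(LIKERT_SCORES[answer.lower()] for answer in responses)


# Illustrative respondent: five "agree", two "strongly agree", one "disagree".
example = ["agree"] * 5 + ["strongly agree"] * 2 + ["disagree"]
total = eheals_total(example)  # 5*3 + 2*4 + 1*1 = 24
```

A respondent who strongly disagrees with every item scores 0, and one who strongly agrees with every item scores 32, matching the stated range.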

Predictor measures included in the analytic models to discern potential disparities include demographic characteristics and technology use-related characteristics. Demographic predictors include age (continuous), sex (0 = male, 1 = female), race (0 = White, 1 = non-White), whether the respondent identified as Hispanic, LatinX, or Spanish (0 = no, 1 = yes), marital status (0 = not currently married or coupled, 1 = married or coupled), education (0 = less than high school, 1 = high school, 2 = some college, 3 = Associate’s degree, 4 = Bachelor’s degree, 5 = postgraduate degree), employment status (0 = not employed, 1 = employed), and self-rated health (0 = poor, 1 = fair, 2 = good, 3 = very good, 4 = excellent). A score for household income (0 = <US$15,000, 1 = US$15,000–US$20,000, 2 = US$20,000–US$25,000, 3 = US$25,000–US$30,000, 4 = US$30,000–US$40,000, 5 = US$40,000–US$50,000, 6 = US$50,000–US$75,000, 7 = US$75,000–US$100,000, 8 = US$100,000–US$150,000, 9 = US$150,000–US$200,000, 10 = US$200,000+), imputed for missing values using other demographic measures, is also included in the analyses; imputed values are used due to the high number of respondents in the analytic sample with missing data (i.e., respondents refused to disclose their household income).

Technology use-related predictors were added to the analytic model to help discern if digital experience and literacy were driving forces in determining overall eHealth literacy. These measures included frequency of Internet use (0 = less than once per month, 1 = once per month, 2 = several times per month, 3 = once per week, 4 = several times per week, 5 = once per day, 6 = several times per day), number of devices used to access the Internet (continuous), and the breadth of Internet activities performed on a regular basis (continuous). The latter measure was assessed by asking respondents to indicate what activities, out of a possible 12, they regularly performed every time or almost every time they were online (e.g., email, using a search engine, using social media, browsing news, and weather-related websites); breadth of activities was coded by summing the number of activities, with higher scores indicating more activities performed.
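The breadth-of-activities coding described above amounts to counting how many of the 12 listed activities a respondent reports. The sketch below assumes hypothetical activity labels: only a few activities are named in the text, so the remaining entries are placeholders, and `breadth_of_activities` is an illustrative name.

```python
# Hypothetical catalogue: the survey offers 12 activities, but the text names
# only a few examples, so the remaining entries are placeholders.
NAMED = ["email", "search engine", "social media", "news", "weather"]
PLACEHOLDERS = [f"activity_{i}" for i in range(6, 13)]
ACTIVITIES = NAMED + PLACEHOLDERS  # 12 entries in total


def breadth_of_activities(selected, catalogue=ACTIVITIES):
    """Count the distinct catalogue activities a respondent regularly performs."""
    chosen = set(selected)
    unknown = chosen - set(catalogue)
    if unknown:
        raise ValueError(f"unrecognized activities: {sorted(unknown)}")
    return len(chosen)


# Illustrative respondent who regularly does three of the 12 activities.
breadth = breadth_of_activities(["email", "search engine", "social media"])
```

Under this coding the score runs from 0 (no activities performed regularly) to 12 (all activities), with higher values indicating a broader range of Internet use.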

Analytic Procedure

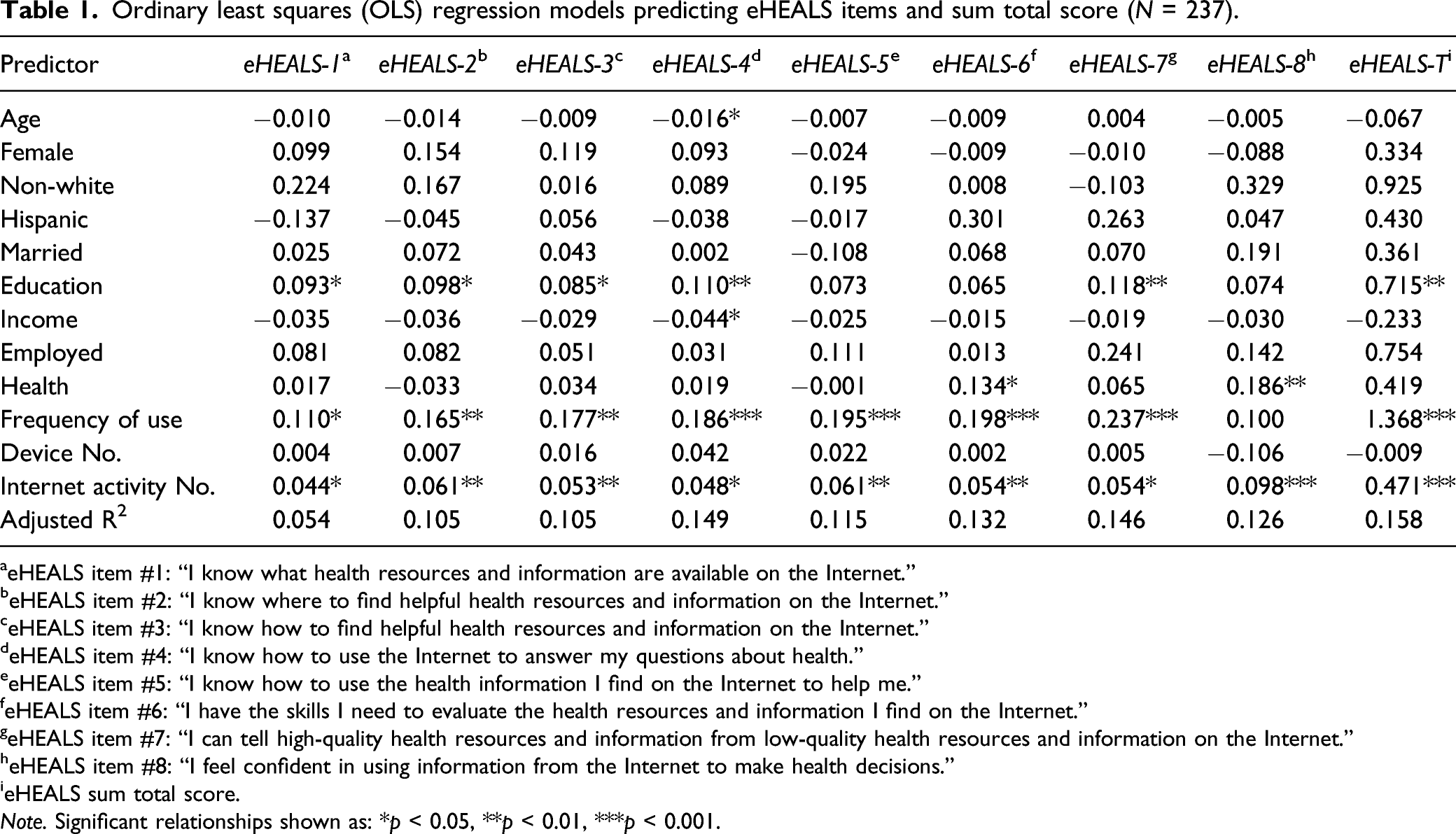

Ordinary least squares (OLS) regression models were run for each of the eight eHEALS items and for the sum total eHEALS score to identify significant predictors of eHealth literacy and elucidate potential disparities based on demographic and technology use-related characteristics (
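As a rough illustration of the OLS modeling step, the sketch below estimates regression coefficients by solving the normal equations in plain Python. It is not the software or code used in the study (which the text does not specify), the data are fabricated toy values constructed so that the true coefficients are known, and it omits the standard errors and significance tests that the reported analyses rely on.

```python
def ols_coefficients(X, y):
    """Return [intercept, b1, b2, ...] solving the normal equations (X'X) b = X'y.

    X is a list of predictor rows without an intercept column; one is added here.
    """
    rows = [[1.0] + [float(v) for v in row] for row in X]
    k = len(rows[0])
    # Augmented matrix [X'X | X'y].
    A = [
        [sum(r[i] * r[j] for r in rows) for j in range(k)]
        + [sum(r[i] * yi for r, yi in zip(rows, y))]
        for i in range(k)
    ]
    # Gauss-Jordan elimination with partial pivoting.
    for col in range(k):
        pivot = max(range(col, k), key=lambda idx: abs(A[idx][col]))
        if abs(A[pivot][col]) < 1e-12:
            raise ValueError("predictors are collinear; X'X is singular")
        A[col], A[pivot] = A[pivot], A[col]
        for idx in range(k):
            if idx != col:
                factor = A[idx][col] / A[col][col]
                A[idx] = [a - factor * b for a, b in zip(A[idx], A[col])]
    return [A[i][k] / A[i][i] for i in range(k)]


# Toy data (not CALSPEAKS data): columns are education and Internet-use
# frequency; the outcome is built as 10 + 2*education + 1.5*frequency.
X = [(0, 1), (1, 3), (2, 2), (3, 6), (4, 4), (5, 5)]
y = [10 + 2 * edu + 1.5 * freq for edu, freq in X]
coefs = ols_coefficients(X, y)
```

In the study's design, a model of this form would be fit nine times, once for each of the eight eHEALS items and once for the sum total score, with the full set of demographic and technology use-related predictors.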

Results

Ordinary least squares (OLS) regression models predicting eHEALS items and sum total score (

a. eHEALS item #1: “I know what health resources and information are available on the Internet.”

b. eHEALS item #2: “I know where to find helpful health resources and information on the Internet.”

c. eHEALS item #3: “I know how to find helpful health resources and information on the Internet.”

d. eHEALS item #4: “I know how to use the Internet to answer my questions about health.”

e. eHEALS item #5: “I know how to use the health information I find on the Internet to help me.”

f. eHEALS item #6: “I have the skills I need to evaluate the health resources and information I find on the Internet.”

g. eHEALS item #7: “I can tell high-quality health resources and information from low-quality health resources and information on the Internet.”

h. eHEALS item #8: “I feel confident in using information from the Internet to make health decisions.”

i. eHEALS sum total score.

Table 1 shows the results of the OLS regressions. Most demographic characteristics showed little or no significant association with the outcome measures, with the exception of education. Education showed significant positive associations with five of the eight individual eHEALS items and with the total eHEALS score, such that those with higher education tended to score higher on eHealth literacy. Of the technology use-related predictors, both frequency of Internet use and breadth of Internet activities regularly performed showed strong and consistent associations with the outcomes. Frequency of Internet use showed significant associations with seven of the eight individual eHEALS items and with the total eHEALS score, such that those who used the Internet more often tended to score higher on eHealth literacy. Breadth of Internet activities performed regularly showed significant positive associations with all outcomes, such that those who reported more activities performed generally scored higher on eHealth literacy.

Discussion

Elevated eHealth literacy can positively contribute to health decision-making (e.g., Luo et al., 2018), care (e.g., Brown & Dickson, 2010), and overall health (e.g., Neter & Brainin, 2019), yet disparities exist wherein segments of the population report difficulty in finding, evaluating, and successfully using online health information (Werts & Hutton-Rogers, 2013). Using data from the 2020 CALSPEAKS survey, this study finds that among older California residents, disparities in eHealth literacy exist primarily based on education level and on digital experience and skill. Older respondents of the CALSPEAKS survey who reported lower levels of education, lower frequencies of Internet use, and a lower breadth of Internet activities regularly performed scored significantly lower on individual eHEALS items and on the total eHEALS score. Findings suggest that tailoring health websites to those with low literacy and low digital literacy can facilitate proficiency and comfort with finding and using online health information among older California residents. Findings also suggest that eHealth training interventions should be tailored to and target those with low literacy and low digital literacy.

Previous studies provide support for other potential predictors of eHealth literacy including age and income (e.g., Choi & DiNitto, 2013; Guo et al., 2021; Tennant et al., 2015). However, the present study found no evidence that any demographic characteristics, beyond education, served as robust and consistent predictors of eHealth literacy specifically among older adults. These results somewhat mirror a study conducted by Arcury et al. (2020) which examined eHealth literacy among older Internet users recruited through urban and rural clinics that primarily served low-income communities and patients from minority communities. Unlike the present study, the Arcury et al. study did not find any significant demographic predictors of eHealth literacy, including education. A lack of consistent findings across studies with different populations of interest and different analytic samples speaks to the importance of this work; while disparities in eHealth literacy may not exist

A central limitation of this study is that the analytic sample is not representative of all older adults; thus, results cannot be generalized to the overall older adult population. Survey weights are available to better approximate the California population, but their initial inclusion in the analyses substantially changed the regression results, so the weights were not used for this study. Relatedly, as CALSPEAKS is administered online, the sample is likely more technologically proficient than the general population; it is thus possible that additional disparities not identified in this study exist among less technologically proficient Californians. Data are cross-sectional, and thus results can only demonstrate association (not causality). The study used a self-assessed measure of eHealth literacy, and future studies should examine other measures which test for this construct. Future studies should also examine other potential predictors (given the low

Conclusion

Disparities in eHealth literacy may prevent certain segments of the population from successfully finding and using pertinent online health information. This study finds that education and measures of digital experience and skill (e.g., frequency of Internet use, breadth of Internet activities performed regularly) show strong and consistent associations with eHealth literacy among older Californians. Efforts to promote eHealth literacy in this group should include tailoring health promotion materials to those with low education levels (e.g., scale down website reading levels) and those with low digital literacy (e.g., make health websites more user-friendly). In addition, interventionists seeking to increase eHealth literacy in this group should target older adults with low education and low digital literacy and tailor training materials to their specific needs (e.g., develop “how-to” manuals at lower reading levels).

Footnotes

Acknowledgments

The project described was made possible thanks to the CALSPEAKS CSU Fellowship program administered through the California State University Social Science Research and Instructional Council (CSU SSRIC). The content is solely the responsibility of the author and does not necessarily represent the official views of the CSU SSRIC.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.