Abstract

This paper details the development of the Transformative Justice Scale (TJS), a measure of teachers’ perceived capacity to enact transformative justice pedagogy. The measure is grounded in transformative justice (TJ) scholarship and comprises two subscales. The TJS factor structure was identified via exploratory and confirmatory factor analyses with distinct samples of racially diverse middle and high school teachers. Because it relies on teacher self-reports, the TJS is time and resource efficient. The TJS contributes to the literature by assessing transformative or social justice–oriented pedagogy, which existing measures do not cover well. The TJS could also support teacher professional development and coaching as a pre- to post-evaluative tool, as a prompt for continuous reflective practice, or as a source of structured feedback. Future work should explore these potential applications and further develop teachers’ capacity to enact transformative and equity-oriented approaches to practice.

Introduction

Teacher education programs have begun to cultivate pedagogies rooted in social justice, and must continue to do so, in order to address persistent racial injustice in education and society at large (Austin et al., in press; Franco et al., 2024). However, as programs have come to emphasize these tenets, their ability to measure justice-oriented pedagogies (and therefore to assess how well they are cultivating them) has lagged behind. Measurement has remained focused on general practice-based components that typically do not address equity-informed elements of teaching. Without such measures, it is hard to know whether, to what extent, and which teachers are enacting justice-oriented pedagogies, and thus how to successfully cultivate culturally sustaining and/or justice-oriented teachers.

Informed by a critical quantitative perspective (Diemer et al., 2025, in press), this paper aims to synthesize a critical theory (i.e., transformative justice [TJ], defined below) with the development of, and provision of validity evidence for, a measure called the Transformative Justice Scale (TJS). This measure was developed from the perspective of teachers, inviting them to self-assess their enactment of four key tenets, or stances, of TJ pedagogy, each of which is reviewed below. The TJS is important for three reasons: (a) it responds to social justice currents in teacher education (recently reviewed by Franco et al., 2024); (b) it complements more technical assessments of teacher practice, which focus on key elements of practice (e.g., the Classroom Assessment Scoring System [CLASS] focuses on domains such as emotional support and classroom organization; Pianta & Hamre, 2009) yet do not focus on social justice or culturally sustaining elements of pedagogy to the same degree; and (c) it contributes to emerging literature that supports the implementation of equity-informed teacher practices (e.g., the DoubleCheck intervention; Debnam et al., 2024). The measure aims to contribute to these more socially just currents in the teacher education literature (e.g., culturally relevant pedagogy, Ladson-Billings, 2006; culturally sustaining pedagogy, Paris, 2012) by providing an assessment tool that complements theoretical advances, with the goal of understanding how teachers enact justice-oriented pedagogies. Specifically, the TJS aims to measure how teachers’ understanding of the historical and structural roots of racism is connected to their classroom practices.

Defining Restorative and Transformative Justice

Restorative justice (RJ) is a paradigm shift that seeks to move from punitive responses to harm and wrongdoing toward accountability through consensus building and cultivating relationships. Elsewhere, Winn defined RJ in the context of education as the “art and science of making things right” (Winn, 2020, p. 57). RJ student practitioners describe RJ as shareholders having “equal ground,” including when students and teachers engage in problem solving together. Other RJ student practitioners view RJ as an opportunity to “talk it out” or even “a jigsaw puzzle that you just have to figure out” (pp. 63 & 65). However, RJ theorists and practitioners acknowledge the complexities of providing a succinct definition. In Listening to a movement: Essays on new growth and new challenges in restorative justice, Stauffer and Shah (2021, p. 2) assert that RJ “is an alternative paradigm to build community, address violence, and repair harm that is rooted in community solutions and relationships.” Acknowledging this definition as having evolved and being their biggest hope for the possibilities of RJ, it is important to note that defining RJ in education settings is tenuous. In his groundbreaking book, Changing lenses: A new focus on crime and justice, RJ theorist Howard Zehr (1990) encouraged a reorientation to the way justice is viewed. The notion of justice in the context of the United States is inextricably linked to punishment, and Zehr contended that “the retributive paradigm of justice is one particular way of organizing reality” and that it can influence “how we define problems and what we recognize as solutions” (Zehr, 1990, p. 87). In the 25th anniversary edition of Changing lenses (with a new subtitle: Restorative Justice for Our Times), Zehr named racial justice as part of the work of RJ (Zehr, 2015).
As schools sought to address the racial disparities in school discipline policies and practices, some educators and school leaders employed RJ as a strategy to decrease the number of suspensions, expulsions, and other harmful punitive responses in schools (Haft, 2000; Karp & Breslin, 2001). To be sure, scholars asserted that a punitive approach to harm found in the U.S. justice system “runs directly counter to a fundamental purpose of public education—the purpose of preparing children to live in a democratic society” (Haft, 2000, p. 797).

In these ways, RJ approaches are far better aligned with the democratic goals of public education and recent calls to reconsider the role of civic education (Winn, 2023). RJ provides opportunities for educators and youth to view themselves as civic actors in their teaching and learning communities. RJ replaces detention, suspension, and other forms of isolation with dialogic “circle processes” wherein all stakeholders are invited to engage in dialogue, open-ended questions, and consensus building (Karp & Breslin, 2001). This process also models for students what it means to create a community response to injustice and to participate in democratic engagement. However, one tension is that even where RJ was imported into schools and, in some cases, reduced the number of suspensions and expulsions, racial disparities persisted. Therefore, scholarship has called for mindset work in RJ with educators to make the paradigm shift toward more relational responses in schools with a focus on TJ in education (Winn & Winn, 2021).

TJ addresses several shortcomings of RJ, namely (a) inadequate attention to racialized dynamics, (b) application to the narrower realm of school discipline rather than all of schooling, and (c) failure to consider the multi- and intergenerational scope and magnitude of the struggle for justice. That is, RJ approaches often have not been adequately attentive to the racialized and gendered dynamics in communities (Winn & Winn, 2021). As early as 2015, RJ theorists and practitioners began discussing the limitations of the notion of restorative by asking, “Who gets to be deemed worthy of restoration?” and “What if the community of practice was not a welcoming space for all—what exactly is being restored?” “Restored to what?” Thus, our use of TJ privileges the need for critical and historical analyses of race, racism, and racist ideologies through pedagogic stances that educators can take. Although there has been conceptual mapping of these stances (history matters, race matters, justice matters, language matters, and, more recently, a fifth stance, futures matter) as well as research practice partnerships for extending these in classrooms across domains, there is a need for more empirical studies of teachers engaging the work (Souto-Manning & Stillman, 2020). Scholars have found that teacher coaching in the area of school discipline can decrease racial disparities in school discipline (Gregory et al., 2016a). TJ extends RJ into the fullness and complexities of learning and living in schools and society. RJ is the widely used term to signal a paradigm shift from punitive approaches to discipline toward approaches that center relationships and consensus in repairing harm. TJ further emphasizes the radical reimagination of schools as communities that respond to the holistic needs of young people and their families, their histories, and their aspirations (Winn, 2020; Winn & Winn, 2021).
Additionally, TJ centers the ongoing multi- and intergenerational struggle for racial equity and justice in education (Souto-Manning & Stillman, 2020). Given these distinctions, this paper centers the TJ in education framework. This serves as the core critical theory that is integrated with quantitative methods, consistent with the critical quantitative perspective that animates this work (Diemer et al., 2025).

TJ: Four Pedagogic Stances

Recent developments in TJ scholarship posit four core pedagogic stances: history matters, race matters, justice matters, and language matters (Winn, 2020; Winn & Winn, 2021). History matters invites communities of practice to consider the role of history in the pursuit of equity and justice in education. Equity work is not new. Although discourses have changed, communities throughout the world have long sought opportunities and access for their children. The race matters pedagogic stance calls for communities of practice to name racism and actively resist how racist ideas and anti-Blackness disrupt opportunities for communities to thrive. This stance calls attention to the fact that issues around racism and anti-Blackness do not belong to one group of people but will need a collective response to counter and build more equitable and just futures for everyone. Justice matters is an opportunity to learn from social movements that excavated educational opportunities from grassroots movements to global movements. What can nondominant communities and Indigenous communities teach education about how to define and enact justice? Language matters acknowledges the power of words and their relationship to power. How is language coded for race in teaching and learning communities? How do certain labels impact teaching and learning communities? Finally, a futures matter pedagogic stance was conceptualized after the initial four stances. Although this study does not engage with the futures matter stance, it is important to note that ultimately the work of RJ and TJ is about imagining and planning for preferred futures. Educators are key to this process (Souto-Manning & Stillman, 2020).

Methodologic Rationale for Using Teacher Self-Reports

Interview-based or observational assessments (e.g., CLASS, Equity Quantified in Participation [EQUIP], and the Protocol for Language Arts Teaching Observation [PLATO]) of classroom practice yield critical information about teachers’ thinking and practice as well as patterns of classroom participation and discourse (Grossman et al., 2013; Pianta & Hamre, 2009; Pianta et al., 2010; Reinholz & Shah, 2018). These standardized protocols have advanced our understanding of the component parts of teaching practice and have introduced common conceptual frameworks and language for describing them. The measure detailed in this paper builds on these frameworks in three key ways.

First, despite these advantages, these measures are time and labor intensive, in that one interviewer evaluates one teacher or one evaluator assesses one classroom. The TJS is designed as a teacher self-report measure, which provides an efficiency gain in that teachers can self-assess their enactment of TJ pedagogy without requiring a trained evaluator. However, self-reports have limitations (e.g., social desirability bias) that are addressed in the “Limitations” section of this paper.

Second, the CLASS and PLATO assessments, for example, do not robustly assess more culturally relevant, sustaining, or transformational elements of teacher practice. These are vital domains of teacher practice, particularly given the increasing racial/ethnic diversity of the K–12 student population, and thus measures that do assess these domains would provide a more comprehensive assessment of key domains of teacher practice.

Third, the EQUIP observational protocol is designed to assess equity in mathematics classrooms, specifically in terms of equity of classroom discourse and participation (Reinholz & Shah, 2018). Although designed for equity, EQUIP is time and resource intensive because it requires a live observer or an evaluator of a video recording, who then uses an EQUIP codebook to classify interactional sequences along seven dimensions as well as the demographics of students. These data are then analyzed with the R statistical package. EQUIP has the advantage of being calibrated specifically to equity and interaction patterns. However, it is designed specifically for mathematics classrooms, as opposed to other domains, and is a labor-intensive observational tool. The TJS is designed to complement these existing tools.

Study Hypotheses

We hypothesize that the measure will comprise four subscales that reflect the four pedagogic stances noted earlier: (a) history matters, (b) race matters, (c) justice matters, and (d) language matters. We examine how well this hypothesized factor structure is represented in the data via factor analytic approaches with distinct samples (detailed below). The goal of this effort is to provide new quantitative evidence in support of TJ theory, which may elaborate, modify, or help further establish the TJ framework.

Method

Participants

Data from this study come from two independent samples of teachers recruited via Cint, a survey research firm that maintains a large national online research panel. Online data-collection services have a robust body of evidence in support of their feasibility, quality, and accuracy (Jiang et al., 2023; Peer et al., 2022). Using quotas, teachers of color were purposively sampled in a greater representation than their overall proportion in the U.S. population and in the teaching workforce. This sampling strategy was employed in order to better understand the enactment of TJ principles among racially and ethnically diverse teachers, who represent the future of the U.S. population and the teaching workforce. Further, the assumption among the study team was that a more diverse sample would be more likely to access the mindsets and practices necessary to enact TJ principles. Thus, this more diverse sample was purposive and aligned with our subjective understanding of TJ.

Procedures

Cint directed panel participants to an online Qualtrics survey. The first sample of 279 participants completed the 54-item survey in an average of 10.30 minutes (median 7.23 minutes). The second sample of 289 participants completed the survey in an average of 12.97 minutes (median 5.45 minutes). At the start of the survey, respondents were prompted with, “Please think of the class most closely connected to civics, social studies, or language arts that you teach. Please keep those courses in mind when answering the questions that come next. If you don’t teach those content areas, then please think of the class you teach with the oldest group of students you have.” Civics, social studies, and language arts teachers were selected because these content areas provide more opportunities for teachers to address issues of racism, inequality, and justice and thus were assumed to provide more variation on items assessing TJ practices (Winn & Winn, 2021). The study protocol was approved by the university institutional review board.

Measures

Drawing on TJ theory (Winn, 2020; Winn & Winn, 2021), the first and fifth authors coordinated with a team of researchers to develop, vet, and discuss items designed to measure the four stances of TJ: race matters, history matters, language matters, and justice matters. That is, substantive theory and prior TJ scholarship (e.g., Winn, 2020; Winn & Winn, 2021) served as the source material for items that the project team developed. Consistent with a critical quantitative perspective, the TJ theoretical framework was considered along with a quantitative approach as the foundation of this research (Diemer et al., 2025). The project team generated items specific to each of the four stances while recognizing that the boundaries between stances were, in some cases, subtle (see Table 3 for the TJ stance each item was written to measure).

Reading-level checks were carried out to ensure that items were comprehensible, using the Flesch–Kincaid estimate available in Microsoft Word (7.6, between a seventh and eighth grade reading level). The item writing process was informed by best practices in writing items (DeVellis & Thorpe, 2021). After an initial set of items was developed, the items were shared, vetted, and discussed with the remaining authors of this paper. The authorship team provided suggestions, feedback, and revisions that were discussed and incorporated into the item pool, totaling 54 items. Following these processes, this set of items was administered to two independent samples of teachers using the Cint online data-collection service. Evidence for the feasibility and accuracy of data collected via online data purveyors is thoroughly detailed in recent work (e.g., Jiang et al., 2023; Peer et al., 2022).

Item-level missingness in the survey ranged from 0% to 9.69%. Analyses accounted for this missingness via full information maximum likelihood methods in the factor analyses.

Analytic Approach

All data cleaning and analysis were conducted in R, version 3.5.1 (R Foundation for Statistical Computing, Vienna, Austria), using the psych, psychTools, lavaan, and lavaanPlot packages. Each item used a Likert-type response scale ranging from 1 (“strongly disagree”) to 6 (“strongly agree”), and each was treated as continuous for estimation purposes.

Analyses proceeded in two sequential phases, with two distinct samples. Analyses began with an exploratory factor analysis (EFA) to generate hypotheses about dimensionality, or how items related to unobserved latent variables (N = 279). Next, confirmatory factor analysis (CFA) was used to cross-validate hypotheses about factor structure derived from the EFA with a new sample (N = 289). The sample sizes at each phase were designed to provide the necessary power for EFA (DeVellis & Thorpe, 2021) and CFA (Furr, 2021) analyses. These data, input files, and output files are available via an openICPSR repository at https://doi.org/10.3886/E238610V1.

Results

Exploratory Factor Analysis

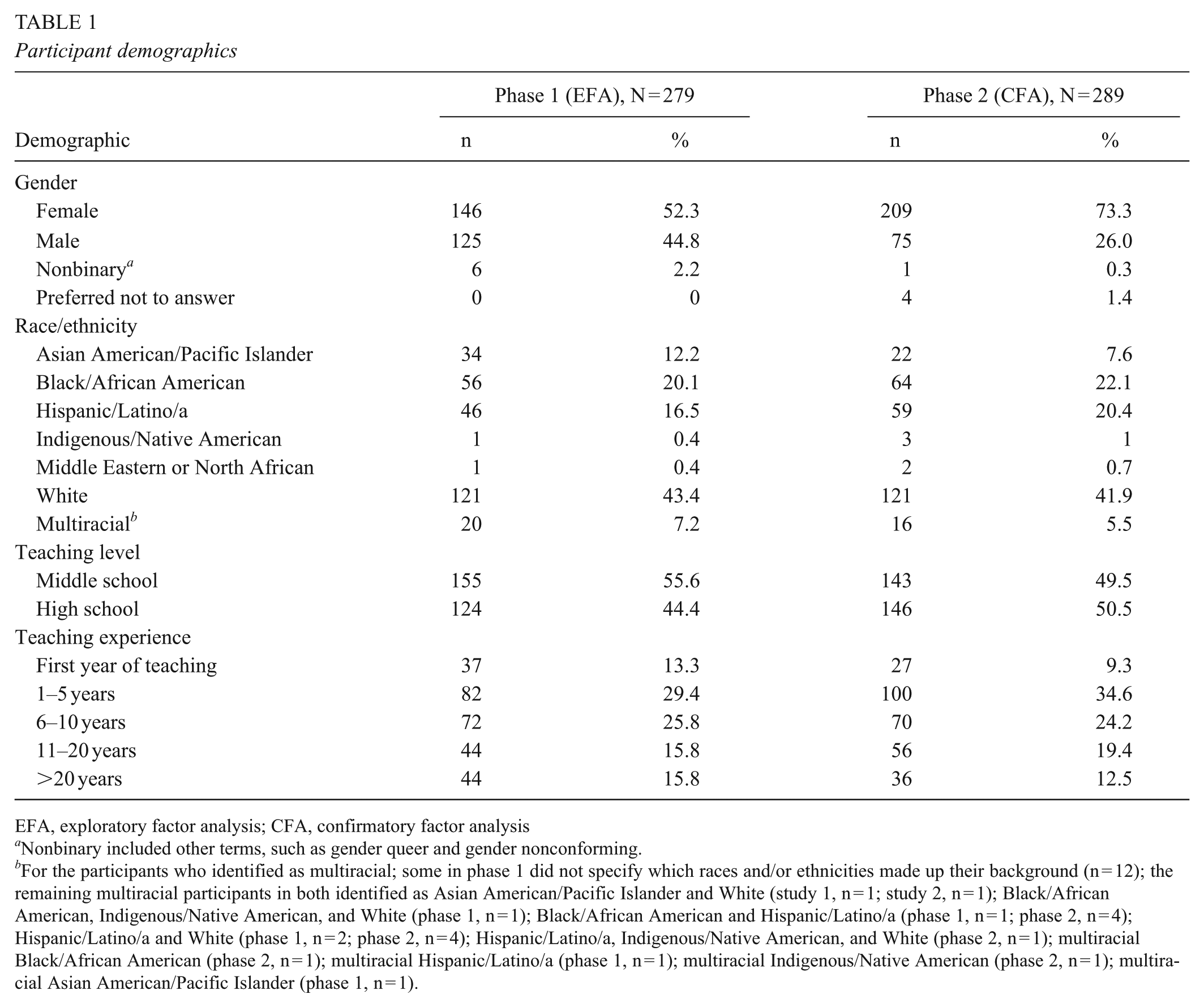

A total of 279 participants comprised the sample in the first EFA phase. Participant demographics for EFA analyses are detailed in Table 1. Fifty-four items were administered and considered in the EFA. As suggested by the factor analytic literature (e.g., DeVellis & Thorpe, 2021; Furr, 2021), a broad range of criteria were used to guide the number of factors to retain (i.e., how many latent variables explained meaningful amounts of variance in these 54 observed items). These criteria included scree plots, Kaiser’s criterion, the Very Simple Structure (VSS) function in R’s psych package, parallel analysis, item loadings, model fit estimates, and substantive plausibility. These criteria suggested that four- and eight-factor models were the two most compelling models to be considered. For example, the scree plot and VSS criterion suggested four-factor models, whereas the Kaiser criterion and parallel analysis suggested eight-factor models.

Table 1. Participant demographics

Note. EFA = exploratory factor analysis; CFA = confirmatory factor analysis. Nonbinary included other terms, such as gender queer and gender nonconforming. Among participants who identified as multiracial, some in phase 1 did not specify which races and/or ethnicities made up their background (n = 12); the remaining multiracial participants in both phases identified as Asian American/Pacific Islander and White (phase 1, n = 1; phase 2, n = 1); Black/African American, Indigenous/Native American, and White (phase 1, n = 1); Black/African American and Hispanic/Latino/a (phase 1, n = 1; phase 2, n = 4); Hispanic/Latino/a and White (phase 1, n = 2; phase 2, n = 4); Hispanic/Latino/a, Indigenous/Native American, and White (phase 2, n = 1); multiracial Black/African American (phase 2, n = 1); multiracial Hispanic/Latino/a (phase 1, n = 1); multiracial Indigenous/Native American (phase 2, n = 1); multiracial Asian American/Pacific Islander (phase 1, n = 1).

These two competing factor solutions were compared using maximum-likelihood extraction and an oblique rotation (i.e., promax) because the resulting factors were assumed to be correlated. A priori criteria for item loadings were used: a minimum loading of .40 and no loadings on different factors within .20 in magnitude of each other. This more liberal cross-loading criterion (cf. .15) was used because of the need to streamline the number of items in the item pool (Worthington & Whittaker, 2006). To that end, items near either the minimum loading threshold (e.g., .41) or the cross-loading threshold (e.g., .19) were removed from further consideration. One item, “I help my students understand that we can fix racism by helping individual people,” had a negative loading onto the first factor (this was a reverse-coded item). Because the other items all positively loaded onto the first factor, this item was removed from the item pool.
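The a priori retention rule can be sketched in a few lines of Python. This is an illustrative sketch only, not the authors' analysis code (the reported analyses were run in R). It assumes that the cross-loading criterion means the gap between an item's largest and second-largest absolute loadings must be at least .20; the function name `retain_item` and the item labels and loadings are hypothetical.

```python
def retain_item(loadings, min_loading=0.40, min_gap=0.20):
    """Return True if an item meets both a priori loading criteria.

    loadings: the item's loadings on each factor (one row of a pattern matrix).
    Assumption: the cross-loading rule is read as a minimum gap between the
    largest and second-largest absolute loadings.
    """
    magnitudes = sorted((abs(x) for x in loadings), reverse=True)
    primary = magnitudes[0]
    secondary = magnitudes[1] if len(magnitudes) > 1 else 0.0
    return primary >= min_loading and (primary - secondary) >= min_gap


# Hypothetical loadings on four factors, for illustration only.
pattern = {
    "item_a": [0.72, 0.10, 0.05, -0.03],  # strong primary loading, clean
    "item_b": [0.55, 0.45, 0.02, 0.01],   # cross-loads on two factors
    "item_c": [0.35, 0.08, 0.04, 0.02],   # primary loading below .40
}
retained = [name for name, row in pattern.items() if retain_item(row)]
```

Under these assumptions only `item_a` is retained. Borderline items, which the text describes as also being removed, would need a separate judgment step that this sketch does not attempt.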

An initial EFA solution was developed that generally met these criteria, and we proceeded to cross-validate that EFA solution with a new sample using a CFA approach. However, the initial CFA (not detailed here for clarity but can be obtained by contacting the first author) failed to fully replicate the factor structure obtained with the EFA, presumably because the modest degree of item cross-loadings cumulatively dragged down model fit. (This discrepancy between EFA and CFA model fit estimates may occur because the EFA allows items to cross-load, whereas the CFA restricts all item cross-loadings to be zero.) Thus, we iteratively returned to the EFA model and respecified a 44-item pool to be examined with EFA techniques. Ten items were removed because of conceptual overlap (e.g., four items discussing slavery and its present-day impacts with similar wording and content were reduced to two) or because items focused more on general beliefs than on specific teaching practices (e.g., “I recognize that racism plays a role in many situations”).

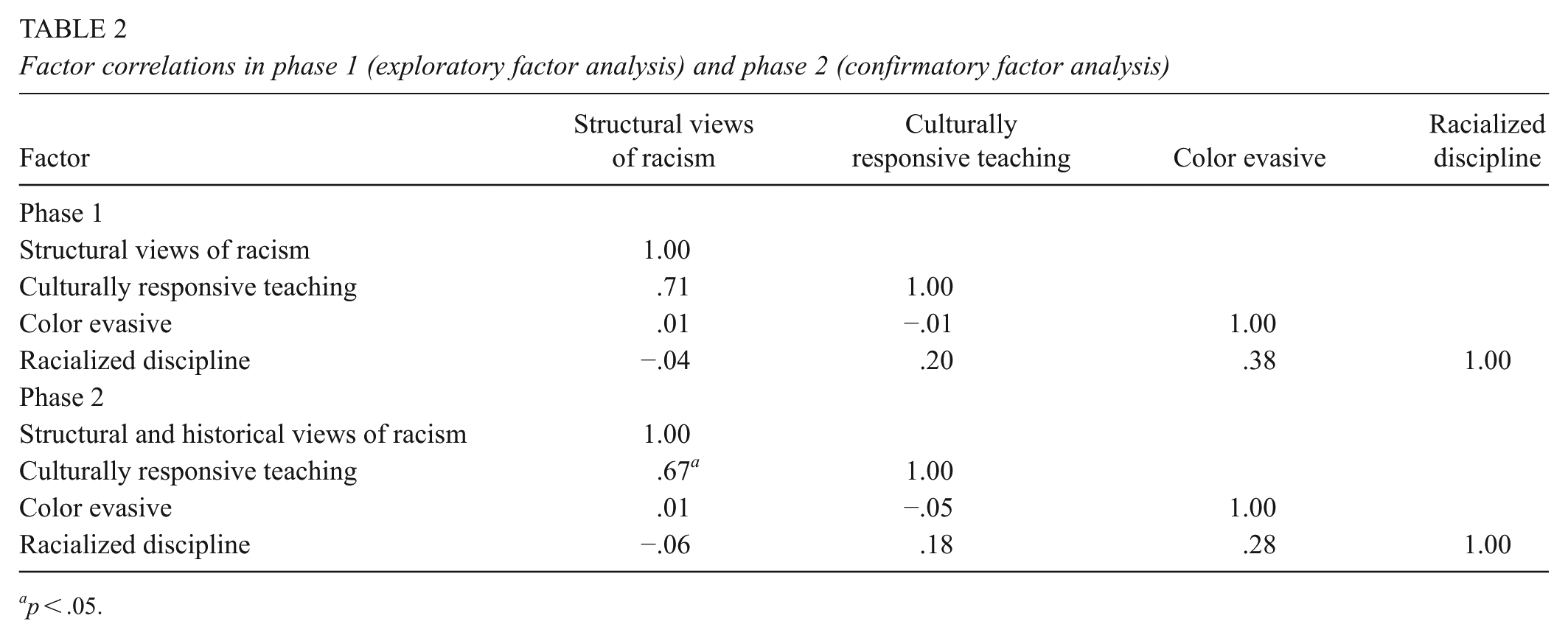

The EFA of the 44 items, using maximum-likelihood estimation (Revelle, 2022), suggested that a four-factor model yielded the most interpretable and best-supported factor solution. The correlations among factors are reported in Table 2. Notably, the first factor had a nearly zero correlation with the last two factors, and the second factor had a near-zero correlation with the third factor. If all the factor correlations had been at or near zero, an orthogonal rotation would have been preferred. Yet, because several factors were relatively strongly correlated (e.g., r = .68 between the first and second factors), the oblique rotation better captures the relationships among these factors (Furr, 2021).

Table 2. Factor correlations in phase 1 (exploratory factor analysis) and phase 2 (confirmatory factor analysis)

p < .05.

Model fit indices for the four-factor model were discrepant (root mean square error of approximation [RMSEA] = .08; 90% CI, .07–.08; Tucker–Lewis index [TLI] = .80; root mean square of residual [RMSR] = .05). The RMSR (.05) was right at the suggested .05 cutoff, suggesting good model fit, and the four-factor model accounted for a meaningful proportion (54%) of the variance in the observed items. In contrast, the RMSEA was above the suggested .06 cutoff, and the TLI was below the suggested .95 cutoff. Presumably, the TLI value was low because of the limited degree of correlation among some factors, which affects this fit index (Kline, 2023). Relatedly, the third and fourth factors (and by extension, their items) were quite distinct from the first factor, with which they had near-zero correlations.
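To make the discrepancy among fit indices concrete, the short Python sketch below compares the reported EFA values with the cutoffs named in the text. It is a hedged illustration: the cutoff values come from the paragraph above, but treating a value exactly at the cutoff as meeting it is an assumption, and the names `CUTOFFS` and `check_fit` are hypothetical.

```python
# Cutoffs as cited in the text. "max" means the index should be at or below
# the cutoff, "min" means at or above it (the handling of ties is an assumption).
CUTOFFS = {
    "RMSEA": ("max", 0.06),
    "RMSR": ("max", 0.05),
    "TLI": ("min", 0.95),
}


def check_fit(indices):
    """Map each reported fit index to True if it meets its cutoff."""
    results = {}
    for name, value in indices.items():
        direction, cutoff = CUTOFFS[name]
        results[name] = value <= cutoff if direction == "max" else value >= cutoff
    return results


# Values reported for the four-factor EFA model.
efa_fit = check_fit({"RMSEA": 0.08, "TLI": 0.80, "RMSR": 0.05})
```

Here only the RMSR meets its cutoff, which mirrors the mixed picture described above. Cutoffs of this kind are rules of thumb and are best read alongside loadings and substantive plausibility.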

Of the 44 items, 34 met a priori criteria for loading (both magnitude and degree of cross-loading) onto four identified factors in an interpretable factor solution; the other 10 items were removed from further consideration. These EFA item loadings are detailed in Table 3. Internal consistency for each of the four factors ranged from .80 to .94, as estimated by Cronbach’s α. Mean interitem correlations are also included in Table 3 and support the internal consistency of each TJS subscale. These reliability estimates are quite strong, considering that α is biased against (or biased downward for) shorter measures (McNeish, 2018)—factors three and four are comprised of four items and three items, respectively. The model fit indices and factor loadings obtained serve as validity evidence in support of this EFA structure (DeVellis & Thorpe, 2021; Furr, 2021; Messick, 1995). However, to further probe this four-factor structure, the model is cross-validated via CFA in the next phase of analyses, as detailed below.
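For readers less familiar with these reliability statistics, the following Python sketch shows how Cronbach’s α and the mean interitem correlation can be computed from raw item responses. It is a minimal illustration using the standard formulas, not the authors' code (the reported estimates came from R); the function names and the response data are hypothetical.

```python
from itertools import combinations
from statistics import mean, variance


def cronbach_alpha(items):
    """Cronbach's alpha for k items.

    items: list of k lists, each holding one item's responses from the same
    respondents in the same order (no missing values).
    """
    k = len(items)
    totals = [sum(responses) for responses in zip(*items)]
    item_variance_sum = sum(variance(column) for column in items)
    return (k / (k - 1)) * (1 - item_variance_sum / variance(totals))


def pearson(x, y):
    """Pearson correlation between two equal-length response lists."""
    mx, my = mean(x), mean(y)
    cross = sum((a - mx) * (b - my) for a, b in zip(x, y))
    ssx = sum((a - mx) ** 2 for a in x)
    ssy = sum((b - my) ** 2 for b in y)
    return cross / (ssx * ssy) ** 0.5


def mean_interitem_correlation(items):
    """Average Pearson correlation over all distinct item pairs."""
    return mean(pearson(a, b) for a, b in combinations(items, 2))


# Hypothetical responses from six teachers to a three-item subscale (1 to 6).
subscale = [
    [1, 2, 3, 4, 5, 6],
    [2, 2, 3, 5, 5, 6],
    [1, 3, 3, 4, 6, 6],
]
alpha = cronbach_alpha(subscale)
mean_iic = mean_interitem_correlation(subscale)
```

Because α rises with the number of items, reporting the mean interitem correlation alongside it, as Table 3 does, helps when comparing subscales of different lengths.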

Table 3. Exploratory factor analysis (N = 279)

Note. p < .05.

Confirmatory Factor Analysis

In the next phase, 34 items that loaded onto four factors in the EFA were retained and further empirically scrutinized via CFA. A new sample of 289 participants was administered the 34 items that survived the preceding EFA. These data were used to cross-validate the initial EFA model with a new sample in the CFA. Participant demographics for the CFA are detailed in Table 1.

Notably, CFA constrains all cross-loadings to zero: an item can load onto only one of the four specified factors and is assumed to have no relationship to the other factors in the CFA (Worthington & Whittaker, 2006). For the CFA, the a priori criterion of item loadings greater than .40 was used. Maximum likelihood with robust standard errors (MLR) estimation was used for these continuous items, which were slightly skewed and kurtotic. To identify the model, latent variances were constrained to one and the first item loading on each factor was freely estimated. The overarching objective of the CFA was to cross-validate the factor structure obtained via the EFA with an independent sample.

The first specified CFA was an adequate fit to the data (RMSEA = .07; 90% CI, .06–.08; comparative fit index [CFI] = .91; TLI = .91; standardized root mean square residual [SRMR] = .068) and confirmed the four factors identified in the preceding EFA phase. Model modification indices suggested that error covariances between some pairs of items should be estimated (Furr, 2021). Of the suggested pairs, we freely estimated error covariances between four pairs of items (i.e., #3 and #5, #4 and #6, #7 and #11, and #15 and #17; item numbers correspond to final TJS item numbers, see Table 4). These shared error covariances mitigate potential negative impacts of self-report data in the factor solution obtained (Furr, 2021). Model fit indices indicated that hypothesized relationships between observed variables and their corresponding latent constructs were a good fit to the data in the respecified CFA (RMSEA = .05; 90% CI, .04–.06; CFI = .95; TLI = .94; SRMR = .065). The correlations among factors are reported in Table 2. Unexpectedly, the first two factors were strongly correlated with each other (r = .67 to r = .71) but quite distinct from the last two factors, with which most correlations were nearly zero. Thus, researchers should compute each subscale score, or factor score, individually instead of computing an overall sum score for the TJS.
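The recommendation to score subscales separately can be illustrated with a brief Python sketch. Everything specific here is hypothetical: the item identifiers, the item-to-subscale key, and the choice to average the answered items rather than sum them are assumptions made for illustration, and users should consult the published scoring guidance for the actual key.

```python
from statistics import mean

# Hypothetical item-to-subscale key, for illustration only.
SUBSCALE_KEY = {
    "subscale_1": ["q1", "q2", "q3"],
    "subscale_2": ["q4", "q5"],
}


def score_subscales(responses, key=SUBSCALE_KEY):
    """Return one score per subscale; no overall total is computed.

    responses: dict mapping item id to a 1-6 rating, or None if unanswered.
    Assumption: each subscale score is the mean of its answered items.
    """
    scores = {}
    for subscale, items in key.items():
        answered = [responses[i] for i in items if responses.get(i) is not None]
        scores[subscale] = mean(answered) if answered else None
    return scores


# One hypothetical teacher, with one unanswered item.
teacher = {"q1": 5, "q2": 6, "q3": 4, "q4": 2, "q5": None}
scores = score_subscales(teacher)
```

Keeping the scores in separate fields, with no total, reflects the finding that the factors are not all strongly related to one another.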

Table 4. Confirmatory factor analysis (N = 289)

Note. TJS = Transformative Justice Scale; IIC = interitem correlation. Scale items correspond to the final 28-item TJS; factors are ordered by the structure implied by the exploratory factor analysis. The first item loading was freely estimated and the factor variance was fixed to one.

p < .001

Factor loadings for each of the 30 items onto their respective factors are displayed in Table 4. Internal consistency for the four factors ranged from .83 to .95, as estimated by Cronbach α. Mean interitem correlations are also included in Table 4 and support the internal consistency of each TJS subscale. These reliability estimates are quite strong, considering that α is biased against (or biased downward for) shorter measures (McNeish, 2018)—factors 3 and 4 are comprised of four and three items, respectively. Relatedly, the model fit indices and factor loadings obtained serve as validity evidence in support of this CFA model (Furr, 2021; Messick, 1995). Here the initial EFA factor structure was cross-validated with a new sample and a new analytic approach—CFA—that is a more rigorous test of factor structure in that each cross-loading is fixed to zero (in contrast to EFA analyses, where each item may freely cross-load onto the other factors). Thus, the set of analyses carried out provide validity evidence in support of the proposed TJS measure (DeVellis & Thorpe, 2021; Furr, 2021; Messick, 1995).

Substantively, these factors appear to measure quite distinct domains such that the last two factors appear to measure opposition to TJ as opposed to its enactment. Our initial working hypothesis was that the items comprising the last two factors likely would measure the opposite of TJ enactment. However, the set of analyses reported here indicate that these two factors have nearly zero or modestly positive associations with the first two factors, contrary to our working hypotheses. We consider this further below.

Final Scale Version: Empirical, Substantive, and Consequential Perspectives

This research aimed to develop a measure of social justice–informed, culturally sustaining, and transformative pedagogy. Specifically, the TJS assesses how teachers understand the structural and historical bases of racism and how that understanding relates to their classroom practice. From a substantive perspective (e.g., Winn, 2023), the first two subscales—“Structural and historical views of racism” and “Cultural responsiveness and promotion of healing”—are advances toward that aim. The last two subscales—“Color-evasive views of race” and “Racialized disciplinary practices”—measure content quite distinct from the first two subscales. From the perspective of divergent validity, one would expect these last two subscales to have negative or inverse correlations with the first two factors (Furr, 2021). However, and unexpectedly, the last two factors had an essentially zero or modestly positive (yet nonsignificant) correlation with the first two factors.

From a critical quantitative perspective, the consequences of a measure are particularly important, which is a consequential validity consideration (Diemer et al., in press). In this case, the last two subscales measure a color-evasive view of race and a racist perspective on school discipline, respectively. Given the empirical relationships observed, the substantive content of these last two subscales, and concerns about consequential validity (Messick, 1995, p. 745) for the use and promulgation of these last two subscales, we can endorse use of only the first two subscales. For example, a user might apply the third and fourth subscales in an attempt to measure the enactment of TJ pedagogy, which would be inaccurate and likely cause harm to students.

Thus, detailed information about the final version of this two-subscale TJS measure, including item descriptions and usage guidelines, is available in the User’s Guide in the online Supplementary Material. This two-factor version of the TJS aligns with the substantive perspective guiding this work, is supported by these empirical criteria, and includes considerations about the use and consequence of measures.

Discussion

This paper aimed to develop a scale assessing teachers’ perceived capacity to enact TJ pedagogy, the TJS, animated by a critical quantitative perspective (Diemer et al., 2025). The TJS measures how teachers understand the structural and historical bases of racism and how that understanding relates to their classroom practice. Exploratory and confirmatory factor analyses with distinct samples, which hewed to best practices in instrument validation (DeVellis & Thorpe, 2021; Furr, 2021), provide construct validity evidence for the TJS. By design, this sample was more racially and ethnically diverse than the teaching workforce and contained relatively similar numbers of middle and high school teachers. This set of procedures provided validity evidence in support of using the TJS with racially diverse teachers who work across middle and high school settings and who have varying levels of experience (Furr, 2021).

These analyses supported the literature’s assertion that TJ is multidimensional. Analyses led to the identification of four initial factors: structural and historical views of racism, cultural responsiveness and promotion of healing, color-evasive views of race, and racialized disciplinary practices. As reviewed in the preceding section, from a substantive and consequential perspective, the last two subscales cannot be supported. Thus, we are endorsing a final version of the TJS that is comprised of only the first two factors. Although the TJS two-factor structure is somewhat divergent from the original conceptualization of TJ pillars (Souto-Manning & Stillman, 2020; Winn, 2020; Winn & Winn, 2021), the content of these items supports and aligns with key tenets of TJ.

The TJS contributes to the literature by developing a self-report survey instrument that is more time and cost efficient than observational measures of teacher practice (e.g., CLASS and PLATO) and of mathematics classroom discourse (e.g., EQUIP). Further, the TJS contributes an assessment of transformative, culturally sustaining, or social justice–oriented pedagogy, domains that are not well covered in existing observational measures. As equity-oriented scholars, we designed the TJS with the desire for transformative change in how teachers think about their relationships with young learners and build capacity for creating a culture in their classroom where students want to participate in meaningful ways. Jurow and Shea (2015) asserted that design research is “value oriented” and rooted in “consequentiality” (p. 287). In sum, we hope that the TJS becomes a tool that teachers can use to hold themselves accountable by committing to stances that are rooted in supporting their students. The TJS also seeks to become a part of efforts to scale for equity through potential research–practice partnerships in education by supporting school systems that want to build capacity for restorative justice work in schools (Cohen-Vogel et al., 2022).

Practice/Policy Implications

The pedagogic stances framework was developed as a guide to help educators navigate paradigm shifts through the stances of history matters, race matters, justice matters, and language matters (Souto-Manning & Stillman, 2020; Winn, 2020). However, as teachers engage in this deep reflective work, it is crucial to measure their growth. Studies have highlighted the need for evaluative tools that not only assess the effectiveness of RJ in reducing conflict but also capture the internal, equity-focused shifts occurring in educators themselves (Franco et al., 2024; Gregory et al., 2016b). The TJS helps to address this need, providing educators with a way to track their progress and the impact of their work on personal and systemic levels. That is, the TJS could be used as a reflective tool, in that teachers can self-assess their enactment of TJ via the TJS and identify areas of strength and growth. We did not collect evidence regarding the longitudinal sensitivity of the measure, but one direction for practice would be to use the TJS as a pre/post measure among preservice (or other) teachers.

Further, the TJS may support teacher coaching initiatives. Research suggests that coaching can play a crucial role in reducing racial disparities in education by promoting more equitable classroom practices (Martin-Kerr et al., 2022). Similarly, the DoubleCheck intervention has demonstrated impacts on developing teachers’ capacity for equity-oriented practices (Debnam et al., 2024). Incorporating the TJS into coaching could provide educators with structured feedback, encouraging them to align their practices with equity goals. The TJS can serve as an aspirational guide, helping teachers to scaffold their learning, increase accountability, and clarify their goals on their journey toward a more just and inclusive classroom environment.

Bridging the gap between teachers’ self-perceptions and their actual practices is critical to advancing transformative justice in education. Scholars such as Chang and Cochran-Smith (2022) have noted that reflective self-assessment tools can challenge educators to confront implicit biases, encouraging them to align their behaviors with their stated beliefs. The TJS thus can function as both a mirror and a map, fostering critical reflection and providing actionable pathways for educators to deepen their commitment to equity and justice in their practice.

Limitations and Future Directions

Teacher self-reports regarding their own practice may be prone to social desirability and other forms of bias (DeVellis & Thorpe, 2021), particularly in the enactment of practice with minoritized students. This measure may tap into some mixture of teachers’ enactment of practice and their beliefs about their practice, which we cannot completely disentangle via a self-report measure. Related work documents some inconsistencies between teachers’ self-assessment of their practices and external observers’ assessments of their practice (Philip, Kothari, & Castro, 2024). CFA mitigates concerns with teacher self-reports (e.g., socially desirable responses) to some extent in that systematic residual variance shared between items can be estimated (Furr, 2021). Specific to these analyses, covarying the residual terms between sets of items (e.g., #3 and #5, #4 and #6, #7 and #11, and #15 and #17) attenuates the adverse impact of self-report among these pairs of items, although not across the entire set of items. However, future work should further validate the TJS by examining its concordance with observational or other methods of assessing teacher enactment of TJ. Relatedly, triangulation could draw on students’ perspectives on how their teacher enacts TJ or on an observational measure wherein a trained observer rates teacher enactments of TJ.
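The logic of covarying residual terms can be written out with a generic confirmatory factor model. The notation below is standard textbook notation rather than notation taken from this paper, and it assumes each item loads on a single factor; only the four item pairs come from the text above.

```latex
% Requires amsmath. Generic CFA notation (an assumption, not the paper's own).
\begin{align}
x_i &= \lambda_i \, \xi_{j(i)} + \delta_i
  && \text{item } i \text{ loads on its factor } \xi_{j(i)} \\
\Sigma &= \Lambda \Phi \Lambda^{\top} + \Theta_{\delta}
  && \text{model-implied item covariance matrix} \\
[\Theta_{\delta}]_{ik} &=
  \begin{cases}
    \text{freely estimated}, & (i,k) \in \{(3,5),\,(4,6),\,(7,11),\,(15,17)\} \\
    0, & \text{all other } i \neq k
  \end{cases}
\end{align}
```

Under this sketch, variance that a pair of items shares for reasons other than the factor (for example, a common response tendency) is absorbed by the off-diagonal element of \Theta_{\delta} for that pair instead of being forced into the loadings \Lambda or the factor covariances \Phi. Pairs whose residual covariance is fixed to zero receive no such adjustment.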

Other measures were not administered in the data-collection protocol, and thus relations between the TJS and related measures (i.e., convergent/divergent validity) could not be estimated at this time. For example, scores on the TJS would be expected to correlate with scores on the Awareness and Concern about Bias (ACB) and Dispositions toward Discipline Practices (DDP) scales (Austin et al., in press), which would provide one important form of convergent validity evidence. Further, because our data collections were cross-sectional, test–retest reliability or longitudinal measurement invariance could not be examined and should be examined in future research. Relatedly, measurement invariance of the TJS across racial/ethnic and/or gender identities could not be examined in this study because of insufficient sample sizes of these identity categories yet would be an important direction for future research.
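A future convergent validity check of the kind described above could be sketched as follows. This is a minimal illustration and not the authors’ analysis: the data are simulated, the helper names (scale_score, pearson_r, fisher_ci) and the item counts are hypothetical, and scoring each scale as the mean of its items is an assumption rather than a documented TJS scoring rule.

```python
import math
import random


def scale_score(responses):
    """Score one respondent on one scale as the mean of the item responses (assumed rule)."""
    return sum(responses) / len(responses)


def pearson_r(x, y):
    """Pearson correlation between two equal-length lists of scale scores."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def fisher_ci(r, n, z_crit=1.96):
    """Approximate 95% confidence interval for r via the Fisher z transformation."""
    z = math.atanh(r)
    se = 1.0 / math.sqrt(n - 3)
    return math.tanh(z - z_crit * se), math.tanh(z + z_crit * se)


if __name__ == "__main__":
    # Simulated responses for 200 hypothetical teachers; NOT real TJS or ACB data.
    random.seed(0)
    tjs_scores, acb_scores = [], []
    for _ in range(200):
        trait = random.gauss(0, 1)  # shared latent disposition (simulation device)
        tjs_items = [3 + 0.6 * trait + random.gauss(0, 0.8) for _ in range(8)]
        acb_items = [3 + 0.5 * trait + random.gauss(0, 0.8) for _ in range(6)]
        tjs_scores.append(scale_score(tjs_items))
        acb_scores.append(scale_score(acb_items))

    r = pearson_r(tjs_scores, acb_scores)
    low, high = fisher_ci(r, len(tjs_scores))
    print(f"r = {r:.2f}, 95% CI [{low:.2f}, {high:.2f}]")
```

A positive correlation whose interval excludes zero would be consistent with convergent validity. The same functions could be applied to scores from two administrations of the TJS as a rough test–retest check, although latent-variable approaches would handle measurement error more directly.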

Summary and Conclusion

This paper details the development of a scale assessing teachers’ perceived capacity to enact transformative justice pedagogy, the Transformative Justice Scale (TJS), rooted in TJ theory and scholarship and comprised of two subscales. The TJS measure is time and resource efficient in that it uses teacher self-reports. The TJS contributes to the literature by providing an assessment of transformative, culturally sustaining, or social justice–oriented pedagogy, domains that are not as well covered in existing measures. Specifically, the TJS measures how teachers understand the historical and structural roots of racism and how that understanding relates to classroom practice. This measure also would support teacher professional development and coaching initiatives in that the TJS could be used as a pre- to post-evaluative tool, as a means of fostering continuously reflective practice, or as a way to provide structured feedback to educators regarding areas of strength and areas of growth. Future work should explore these potential applications as well as further develop teachers’ capacity to enact transformative and equity-oriented approaches to practice.

Supplemental Material

Supplemental material, sj-docx-1-ero-10.1177_23328584251394657 for Development and Initial Validation of the Transformative Justice Scale: Assessing Teachers’ Capacity for Transformative Practices in Education by Matthew A. Diemer, Maisha T. Winn, Lawrence T. Winn, Wendy de los Reyes, Gabrielle Kubi and Thomas M. Philip in AERA Open

Supplemental material, sj-docx-2-ero-10.1177_23328584251394657 for Development and Initial Validation of the Transformative Justice Scale: Assessing Teachers’ Capacity for Transformative Practices in Education by Matthew A. Diemer, Maisha T. Winn, Lawrence T. Winn, Wendy de los Reyes, Gabrielle Kubi and Thomas M. Philip in AERA Open

Footnotes

Acknowledgements

Thank you to Stacey Cabrera, John-Solomon Miller, and Matthew Truwit for their contributions to and comments on earlier versions of this manuscript.

Declaration of Conflicting Interests

The authors declare no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This paper was supported by a grant from the Spencer Foundation (#202000238) awarded to the first, second, third, and sixth authors.

Authors

MATTHEW A. DIEMER is chair of the Combined Program in Education and Psychology (CPEP) and professor of educational studies and developmental psychology (by courtesy) at the University of Michigan. His research interests include critical quantitative methodology and critical consciousness.

MAISHA T. WINN is the Excellence in Learning Professor at Stanford University and faculty director of the Stanford Accelerator for Learning’s Equity in Learning Initiative. Her scholarship examines how nondominant youth and communities have developed literate trajectories across a range of historical and contemporary settings within and outside formal schooling.

LAWRENCE T. WINN is an associate professor of teaching in education, Chancellor’s Leadership Professor, and executive director of the Transformative Justice in Education Center. His research examines race, justice, critical consciousness, foresight, and how Black youth acquire social capital in out-of-school learning spaces.

WENDY DE LOS REYES is an assistant professor of psychological science at Claremont McKenna College. Her research interests include (a) how youth resist structural inequities through supportive mentoring relationships, (b) how adults partner in youth-led social change, and (c) how institutions expand youth voice to address social inequities.

GABRIELLE KUBI is a recent graduate of the Combined Program in Education and Psychology (CPEP) at the University of Michigan. Her research interests include the development of intersectional awareness among Black girls and women along with how educational spaces can be reimagined to support young Black people’s sociopolitical and identity development.

THOMAS M. PHILIP is a professor at the Berkeley School of Education, where he also serves as the faculty director of the Berkeley Teacher Education Program. He studies how ideology shapes learning and how learning is a site of ideological contestation and becoming.
