Abstract

This concurrent mixed-methods study descriptively explores teacher residency programs (TRPs) across the nation. We examine program and participant survey data from the National Center for Teacher Residencies (NCTR) to identify important TRP structures for resident support. Latent class analysis of program-level data reveals three types of TRPs (locally funded low tuition, multifunded multifaceted, and federally funded post-residency support), while regression models indicate significant relationships between individual program structures and participant (residents, graduates, mentors, and principals) perceptions. Qualitative analyses of multiple open response items across participants detail four salient TRP structures: providing extended clinical experience, localizing individual support, offering programmatic training, and teaching practical professional knowledge. Findings inform policymakers on TRP investment, practitioners about program design, and researchers about continued large-scale evidence.


Introduction

Teacher residency programs (TRPs)—designed to enhance the recruitment, preparation, and retention of teachers for high-needs schools (Silva et al., 2014)—have proliferated throughout the United States (Worley & Zerbino, 2023). These programs place diverse teachers in underserved districts and offer a suite of support for educator success (Azar et al., 2020; Gist, 2019). Preliminary evidence suggests TRPs are positively associated with increased student achievement scores and teacher retention (Papay et al., 2012), alongside decreased long-term district costs (Worley & Zerbino, 2023). These distinct benefits could curb the increased postpandemic teacher turnover (Goldhaber & Theobald, 2023) and persistent new teacher attrition rates (Papay et al., 2017). Correspondingly, the U.S. Department of Education has invested nearly $350 million in TRPs since 2014. 1

Given such significant financial investment, interest in and empirical evidence on the effect of TRPs are growing. A great deal of existing research describes the need, theory, and purpose of TRPs (Gist et al., 2021; Solomon, 2009), while other studies have examined various perspectives and experiences within residencies (Chu, 2019, 2021; Kwok et al., 2023; Mitani et al., 2022). However, there is less evidence comparing TRPs with one another, as many prior studies focus on single programs or particular states and districts. These prior works inform a burgeoning groundwork outlining central structures 2 of TRPs, but given the variation across programs (Wasburn-Moses, 2017), less is known about peripheral structures that define this heterogeneity.

Our concurrent mixed-methods study leverages data from 39 TRPs in the National Center for Teacher Residencies’ (NCTR) network for 2021–2022. We explore program and participant surveys to identify important TRP structures through latent class analysis and qualitative coding of open response items, respectively. We also examine the relationship between program structures and participant experiences to understand the potential effects of TRP design. This study contributes foundational evidence about TRPs and illuminates vital program structures that inform policymakers and program administration. The research questions guiding our work are:

What characterizes different types of teacher residency programs?

To what extent are programmatic structures associated with participant perceptions?

How do participants describe salient structures of their teacher residency programs?

Literature Review

Central Structures and Effects of Teacher Residency Programs

Teacher preparation programs and policymakers have pursued assorted approaches to diversify the teacher pipeline and to mitigate teacher attrition. One approach has been through TRPs, which offer prospective teachers increased support for enhanced training and often are intentionally designed to recruit and prepare teachers of color (Azar et al., 2020). Amidst a range of implementations of TRPs, the Office of Elementary and Secondary Education provides a federal definition: a TRP is a “school-based teacher preparation program in which a prospective teacher:

For not less than one academic year, teaches alongside an effective teacher, as determined by the state or local educational agency, who is the teacher of record for the classroom;

Receives concurrent instruction during the year, through courses that may be taught by local educational agency personnel or by faculty of the teacher preparation program, and in the teaching of the content area in which the teacher will become certified or licensed; and

Acquires effective teaching skills, as demonstrated through completion of a residency program, or other measure determined by the State, which may include a teacher performance assessment.” 3

TRPs seek to require more and longer training to sustain beginning teachers. This framework starkly contrasts with the expansion of alternative certification pathways, which reduce training and barriers to the profession. That is, whereas alternative pathways try to fill large numbers of vacancies by placing many individuals as teachers of record as quickly as possible, often through an emergency credential (Grossman & Loeb, 2008), TRPs take the opposite approach of enhancing training for fewer individuals so that they stay longer. Notably, TRPs typically encompass, but can differ from, Grow Your Own (GYO) programs, which are often grassroots programs that focus on the academic and professional development of teachers of color within a local community (Edwards & Kraft, 2025; Gist, 2019). While there is significant benefit in hiring homegrown teachers (Redding, 2022), TRPs are a broader category that can consist of additional measures to support teacher recruitment, preparation, and/or retention that some, but not all, GYO programs might employ. For this manuscript, we include GYOs as a subcategory of TRPs and hope that both types of programs can continually learn from one another.

Evidence suggests the advantages of essential structures of TRPs. Most prominently, studies have identified the benefits of prolonged clinical training, where longer clinical teaching affords preservice teachers (PSTs) the opportunity to “experiment with specific and concrete strategies under realistic conditions” (Pankowski & Walker, 2016, p. 4) and to gain increased exposure to real-world curriculum and pedagogical development (Klein et al., 2013). Extra time also provides increased opportunities to connect practice to theory through aligning coursework (Dennis, 2016; Guha et al., 2016), allowing residents to directly apply concepts and skills in further depth than in teacher education programs, ultimately enriching residents’ learning development (Gatti, 2019). Examining administrators, Berry et al. (2008) find a stated increase in recruitment of residents of color into hard-to-staff schools, resident retention, and benefits to mentors compared to other first-year teachers. These findings are echoed in other studies (Roegman et al., 2017) and extended through additional outcomes of reduced deficit thinking (Garza & Harter, 2016), increased sense of preparedness and commitment (Chu & Wang, 2024), and ultimately student achievement (Papay et al., 2012).

Peripheral Structures of Teacher Residency Programs

However, studies demonstrate variation beyond essential TRP program structures, leading to divergent learning opportunities (Garza et al., 2013). For instance, Wasburn-Moses (2017) examines 37 TRPs across 15 states and DC from publicly available materials and finds that certain structures substantially differed by program. In particular, the author suggests that post-residency induction and the alignment between course and fieldwork vary or may be especially difficult for certain programs to implement.

Other sources of structural variation may exist across TRPs, including the strength of district partnerships or the extent to which TRPs and districts work in tandem to ease PSTs into teaching (Kennedy & Hendrickson, 2019) and provide on-site professional development focused on emergent and contextually bound training (Hammerness & Craig, 2016; Miller & Strachan, 2020). Another source is program funding and resident financial support. For example, TRPs can offer residents different monetary incentives, often in exchange for commitment to future employment in the sponsoring district (Guha et al., 2017). Lastly, TRPs may adopt different mentor selection and matching processes with variation in the quality of mentoring and instructional coaching provided to residents (Garza et al., 2013; Guha et al., 2017).

Our study aims to further explore potential variation in structures among TRPs to build on existing literature. This work is comparable to Truwit et al. (2024), who examine TRPs across Tennessee to find distinctive features across programs and over time (e.g., year-long clinical placement) and ones that were more peripheral in nature (e.g., resident placement). We hope to extend this work through a broader national sample of TRPs. Chu and Wang (2024) show that the evidence base for TRPs is predominantly qualitative and focused on current residents and call for more research on graduates and other TRP participants, greater use of mixed methodologies and multiple data sources, and incorporating outcomes that can speak to resident development, among other things. Furthermore, the few large-scale, quantitative studies of TRPs that exist provide mixed findings. For example, Silva et al. (2014) examine 30 programs receiving federal Teacher Quality Partnership grants, finding they slightly broaden and increase entry into the profession for individuals who have worked a full-time job prior to teaching, but that other characteristics of TRP teachers, such as race/ethnicity and retention rates, are similar to non-TRP teachers. Our work seeks to expand and address these issues.

Data and Methods

We leverage restricted data from surveys administered by the National Center for Teacher Residencies (NCTR) in 2021–2022. Founded in 2007, NCTR is the “only organization dedicated to developing, supporting, and accelerating the impact of teacher residencies” and is committed to building and developing “teacher residencies as a lever to prepare, support, and retain effective educators who represent and value the communities they serve.” NCTR provides programming and consulting to a network of TRPs across the country. The NCTR Network in 2021–2022 included 46 members (TRPs) in 26 states that collectively enrolled over 2,000 teacher residents nationwide, illustrating their overall scope and impact.

The data come from two NCTR-administered surveys: 1) program surveys of its 2021–22 members and 2) participant surveys of principals, teacher residents, mentor teachers, and graduates. Program surveys provide administration-level information about characteristics of that residency; 39 of 46 programs completed their survey for an 85% response rate. The study utilizes closed-ended items from the participant surveys, which are distributed by or on behalf of programs and include demographic information and Likert-scale measures of participants’ satisfaction with their program and ratings of resident and graduate preparedness. These surveys are voluntary for both programs and their participants, leading to variation in representation; NCTR estimates that, on average, 70% of residents and mentors, 40% of principals, and 30% of graduates across programs completed surveys. However, an exact response rate cannot be identified because programs do not report the total number of qualified individuals by role.

Participant surveys also contain open-response items utilized for this study. These include, for residents and graduates, “How or in what ways has your program supported or prepared you well for being a teacher?” Other similar questions are:

What can your program do to better prepare residents to be teachers? (Residents)

Please share any additional thoughts you’d like to share about your program. (Residents)

What can your program do to improve the clinical experience for residents (i.e., the experience of working in a classroom for a year with a mentor teacher)? (Residents)

What suggestions do you have for how the residency program can improve? (Principal)

What can the program do to better prepare residents to be teachers? (Mentors)

Though there is some discrepancy across these items, they collectively offer valuable insights into TRP structures. Overall, these data represent the broadest and most comprehensive evidence to date on TRP characteristics and participant experiences, enabling us to provide an overview of types of TRPs and their defining characteristics, how programs like these are associated with participant experiences, and how participants describe the most supportive aspects of their TRPs.

Data Analysis

Given the large amount and disparate types of data, we take a concurrent mixed-methods approach (Tashakkori & Teddlie, 2021). This approach allows for combining quantitative analysis of program surveys and quantitative and qualitative analysis of participant surveys. We explain each in the following sections.

Quantitative Analysis

We first explore the extent to which there may be unifying elements across residency programs. We use latent class analysis to examine what typology may exist among residency programs based on observable characteristics. In other words, we classify residency programs into mutually exclusive groups that are similar on some unobserved construct based on their observable patterns and characteristics (Denson & Ing, 2014). 4 Then, we theorize potential factors that may inform how participants view the successes and challenges of residency programs. We employ exploratory factor analysis to identify underlying factors for each participant type (i.e., residents, graduates, mentors, principals). 5

After characterizing TRPs and the stakeholders within, we investigate the extent to which the type of residency program and the specific programmatic characteristics are associated with these participant factors. Our linear regression model is:

$$Y_{ip} = \beta\,\mathrm{ProgramChar}_{p} + \gamma_{p} + e_{ip}$$

$Y_{ip}$ represents one of the factors for participant $i$ (a resident, graduate, mentor, or principal) in residency program $p$. $\mathrm{ProgramChar}_{p}$ is a vector of observable programmatic features of each residency program, with $\beta$ the corresponding coefficients; $\gamma_{p}$ is a residency program–type fixed effect that is included to account for unobserved differences among residency programs; $e_{ip}$ is the error term. We use heteroskedasticity-robust standard errors in all analyses.
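A minimal sketch of how a model of this form could be estimated, assuming a single program feature and one fixed effect per program type. The function name, the toy data, and the choice of the HC0 variant of the robust variance are illustrative assumptions; the source does not state which robust estimator or software was used.

```python
from collections import defaultdict
from math import sqrt


def fe_slope_with_robust_se(rows):
    """Estimate one slope with group fixed effects and an HC0 standard error.

    rows: list of (y, x, group) tuples. Hypothetical layout: y is a
    participant factor score, x a program feature, group a program type.
    """
    # Accumulate per-group sums so each group mean can be removed
    # (the "within" transformation that absorbs the fixed effects).
    sums = defaultdict(lambda: [0.0, 0.0, 0])
    for y, x, g in rows:
        s = sums[g]
        s[0] += y
        s[1] += x
        s[2] += 1

    y_dm, x_dm = [], []
    for y, x, g in rows:
        sum_y, sum_x, n = sums[g]
        y_dm.append(y - sum_y / n)
        x_dm.append(x - sum_x / n)

    # OLS slope on the demeaned data.
    sxx = sum(x * x for x in x_dm)
    slope = sum(x * y for x, y in zip(x_dm, y_dm)) / sxx

    # HC0 sandwich variance: sum(x^2 * e^2) / (sum(x^2))^2,
    # with no small-sample correction.
    resid = [y - slope * x for x, y in zip(x_dm, y_dm)]
    var = sum((x * e) ** 2 for x, e in zip(x_dm, resid)) / sxx ** 2
    return slope, sqrt(var)


# Made-up data: two program types ("A", "B") and a binary feature x.
rows = [
    (0.0, 0, "A"), (0.6, 1, "A"), (0.2, 0, "A"), (0.4, 1, "A"),
    (1.0, 0, "B"), (1.5, 1, "B"), (1.7, 1, "B"), (1.2, 0, "B"),
]
slope, robust_se = fe_slope_with_robust_se(rows)
```

In practice, a statistics package would handle the full feature vector and a degrees-of-freedom correction; the sketch only shows how the fixed effect and the robust variance enter the estimate.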

Qualitative Analysis

To cohesively analyze the open response survey items, we create a standard unit of analysis centered on defined programmatic support (Frey, 2022). The content of interest identifies what a program does to help residents develop and how it does so. This includes the provision of personnel or specific knowledge to help these teachers grow in pedagogy or the profession, and it centers on what a residency offers or, conversely, what a residency should provide more of or provide altogether. The standard unit of analysis excludes information about the effect of the structure. For instance, participants may go into detail about how they changed their pedagogy; rather than coding that change, we focus on what or who within the program the participant named, to understand where the benefits derive. We include descriptions of these structures but avoid information about the aftereffects. Relatedly, we exclude information about the resident or the individual themselves. Our attention is on learning about residencies, so personal information about the individual participant does not contribute to answering our research questions.

Our unit of analysis spans survey items, despite some conceptual differences. The first set of items asks directly about support, so the unit translates directly: we pull out the supports listed throughout responses. The second set of items is slightly tangential, inquiring about recommendations for improvement. In these responses, participants often address structures that were deficient or problematic and thus require improvement. While there can be a difference in tone (what was helpful versus what needs improvement), we judge that the two sets overlap conceptually in identifying a residency structure. 6 Alongside descriptions of beneficial programmatic characteristics, there are examples of participants stating the lack or poor quality of certain structures. For instance, when residents were not supported by mentors or had bad placements, we still code the structure (i.e., mentor teacher, placement). We also identify data showing that the absence of a structure kept residents from growing (or developing enough) during their program. This is salient in the second set of survey items, particularly among principals, 7 who wanted more from residency programs.

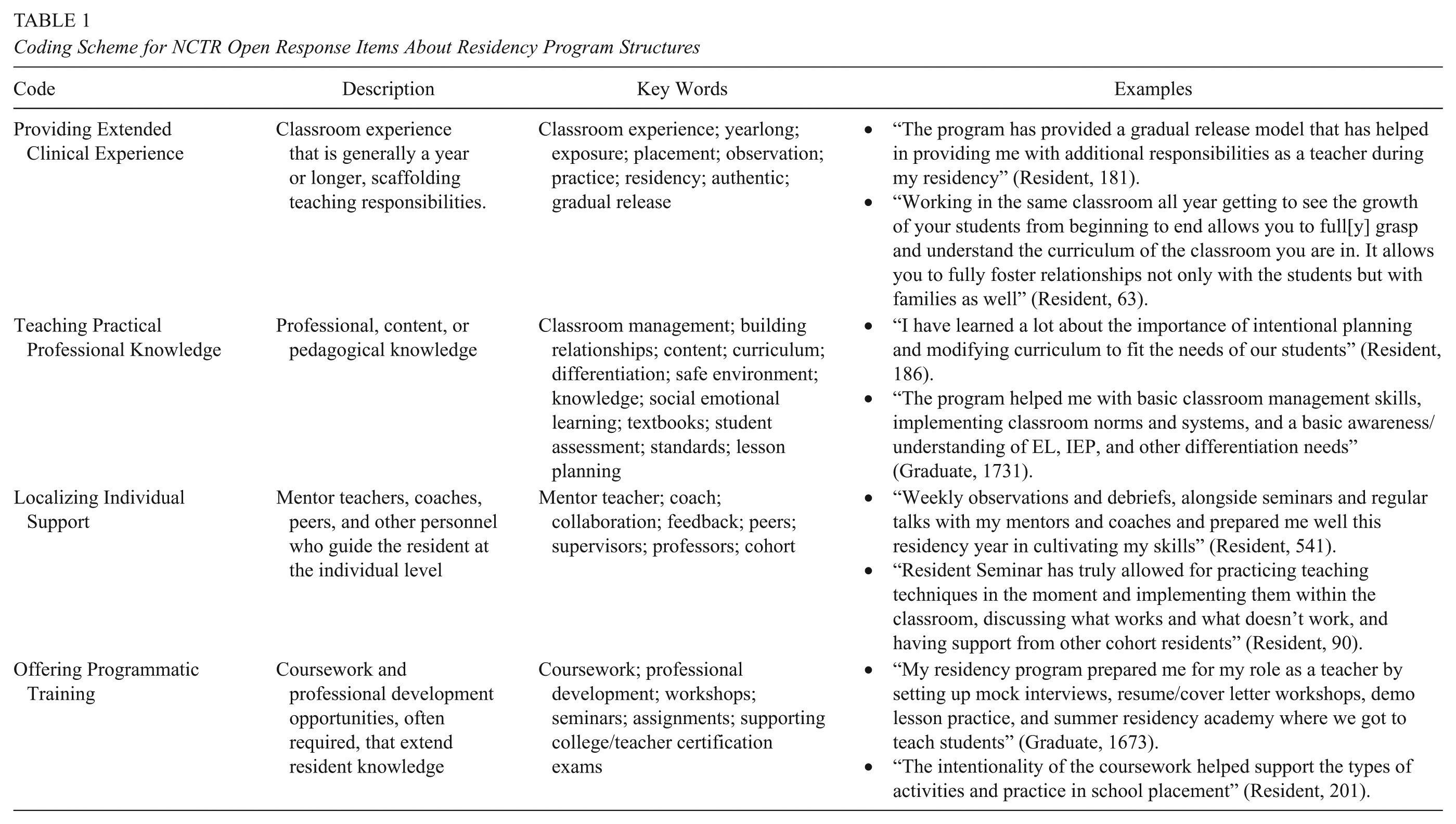

After establishing our unit of analysis, we open-code a random subset of responses from each survey item. We pull out units (residency structures) and then conduct a process of axial coding (Miles et al., 2020) to group together similar structures. From this, we establish an initial coding scheme. Altogether, we design the scheme from 100 responses to each of the first two questions and 50 responses to each of the remaining five items, for a total of 450 responses. 8 We use constant comparative analysis (Glaser & Strauss, 2017) by reexamining the original data to ensure our interpretations of codes are reliable. From this initial scheme, we analyze all responses and update the scheme as necessary, adjust descriptions, and identify exemplars. Upon coding all data, we finalize our coding scheme, shown in Table 1.

Table 1. Coding Scheme for NCTR Open Response Items About Residency Program Structures

We test the validity of our analyses in several ways. First, we conduct interrater reliability tests at two different points: establishing our unit of analysis and applying our initial coding scheme. At each point, we assess 10 randomly selected data pieces and require 80% or greater similarity; otherwise, we discuss and test again until we meet the mark. We also seek disconfirmatory evidence in the form of self-reported negative structures and experiences. While we focus on structures regardless of sentiment, this verifies that the same TRP codes hold throughout analysis.
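The 80% threshold can be illustrated with a short sketch, assuming that "similarity" means simple percent agreement between two coders. That reading, the function name, and the example codes below are assumptions made for illustration; the source does not name the agreement statistic.

```python
def percent_agreement(coder_a, coder_b):
    """Share of items to which two coders assigned the same code."""
    if len(coder_a) != len(coder_b) or not coder_a:
        raise ValueError("Both coders must rate the same non-empty set of items.")
    matches = sum(a == b for a, b in zip(coder_a, coder_b))
    return matches / len(coder_a)


# Hypothetical codes for 10 randomly selected data pieces.
coder_a = ["mentor", "coursework", "placement", "mentor", "coach",
           "peers", "coursework", "mentor", "placement", "coach"]
coder_b = ["mentor", "coursework", "placement", "coach", "coach",
           "peers", "coursework", "mentor", "mentor", "coach"]

agreement = percent_agreement(coder_a, coder_b)  # 8 of 10 codes match
meets_threshold = agreement >= 0.80              # if False, discuss and retest
```

Percent agreement does not adjust for chance agreement; a chance-corrected statistic such as Cohen's kappa would be a stricter alternative.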

Results

Quantitative Analysis

RQ1: What characterizes different types of teacher residency programs?

TRP latent classes

Latent class analysis and corresponding fit indices statistically categorize program variables into separate latent classes (Appendix A). Overall, the results indicate three latent classes for the residency programs, as the p-value for the fourth class is not significant and the decreases in AIC, BIC, and log likelihood are much smaller relative to the change from two to three latent classes. We use these fit indices to guide our decision, given the exploratory nature of our analysis and its relatively small sample size, alongside our professional judgment to suggest three distinct classes. Conceptually, we label classes as locally funded low tuition; multifunded multifaceted; and federally funded post-residency support programs. Importantly, these labels provide generalizations in helping to define programs and are not meant to be universally distinct in description. We highlight salient differences by class, as shown in Figure 1.

Figure 1. Program characteristics by residency type.
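To make the fit-index comparison behind the three-class decision concrete, the sketch below computes AIC and BIC for two-, three-, and four-class solutions using the standard definitions. The log-likelihood values and the number of indicators are made up for illustration and do not reproduce Appendix A; only the sample size of 39 programs comes from the text. The parameter count assumes binary indicators.

```python
from math import log

N_PROGRAMS = 39   # programs with completed surveys (from the text)
N_ITEMS = 10      # hypothetical number of binary program indicators
LOG_LIKS = {2: -420.0, 3: -380.0, 4: -366.0}  # hypothetical log-likelihoods


def lca_param_count(n_classes, n_binary_items):
    """Free parameters in a latent class model with binary indicators:
    (C - 1) class proportions plus C * J item-response probabilities."""
    return (n_classes - 1) + n_classes * n_binary_items


def aic(log_lik, n_params):
    return 2 * n_params - 2 * log_lik


def bic(log_lik, n_params, n_obs):
    return n_params * log(n_obs) - 2 * log_lik


fit = {}
for n_classes, ll in LOG_LIKS.items():
    k = lca_param_count(n_classes, N_ITEMS)
    fit[n_classes] = {"k": k, "aic": aic(ll, k), "bic": bic(ll, k, N_PROGRAMS)}

# Compare the improvement from adding a third class with that from a fourth.
aic_drop_2_to_3 = fit[2]["aic"] - fit[3]["aic"]
aic_drop_3_to_4 = fit[3]["aic"] - fit[4]["aic"]
```

With these invented values, the AIC improvement from two to three classes is far larger than from three to four, which is the kind of pattern the text describes as supporting a three-class solution.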

Locally funded low tuition TRPs often have the greatest number of accepted applications and the highest numbers of applicants of color and residents. The average resident stipend, tuition paid, and non-stipend spending are lowest among the three types; relatedly, most of these programs receive some support from local and state sources but no support from the federal government. Moreover, only 20% of programs provide post-residency in-person coaching, and 30% provide post-residency professional development. These TRPs likely represent a cost-efficient design for addressing larger numbers of vacancies.

In comparison, multifunded multifaceted TRPs receive fewer applications and enroll fewer residents. At the same time, the average resident stipend, tuition paid, and non-stipend spending are higher than in locally funded low tuition programs, with an average of $21,000 for resident stipend and $15,000 for tuition paid. These are financed through multiple funding sources: almost all receive local and state funding, and most receive federal funding. The multifaceted component refers to how 85% of these programs provide licensure and how higher percentages have a GYO focus, have a pre-K focus, and/or incorporate special education. About 45% and 70% of programs offer post-residency in-person coaching and professional development, respectively. These multifunded multifaceted TRPs represent over 50% of the sampled programs, indicating the most common structural design of TRPs.

Lastly, federally funded post-residency support TRPs have, on average, a similar resident stipend of $21,000, but residents pay only $7,000 in tuition. These programs all receive federal money, and the vast majority also receive local and state funding. These funds likely account for the stark differences from the other two types of programs: 89% of these programs provide licensure, and all provide both post-residency in-person coaching and post-residency professional development.

In sum, these descriptive results suggest important variation in structural characteristics among residency programs, with the description of the key characteristics in Appendix B and the full set of descriptive variables of each type shown in Appendix C. As observed in Appendix C, there are clear differences in enrollment (e.g., number of applications), financial (average amount of resident stipend), and program characteristics (e.g., length of residency or post-residency supports) among the three different types of residency programs.

Factor analysis of participants’ responses

Throughout the four separate participant surveys (residents, graduates, mentors, principals), we seek to reduce items into coherent constructs about participant TRP experiences. We employ factor analysis, in which scree plots and eigenvalues (Appendix D) strongly suggest the following constructs:

Resident factors
  a. Support, feedback, and coursework received in the TRP
  b. Self-reflections of pedagogical self-efficacy
  c. Perceptions of hosting school community
  d. Evaluation of mentor teacher

Graduate factors
  a. Pedagogical self-evaluation
  b. Reflections of TRP preparation

Mentor factors
  a. Informal evaluation of resident effectiveness
  b. Perceptions of TRP programmatic support

Principal factors
  a. Informal evaluation of TRP graduate effectiveness
  b. Informal evaluation of resident effectiveness
  c. Perceptions of TRP effectiveness
  d. Perceptions of TRP programmatic support

Factor loadings, Cronbach’s alphas, and factor score indeterminacy results (Appendix E) suggest the validity of these factors (Beauducel, 2011; Tavakol & Dennick, 2011), where higher factor scores indicate stronger agreement, that is, more positive perceptions on that factor. These factors also have high face validity, as the questions under each factor are generally about the same or highly related topic. For instance, the residents’ pedagogical self-efficacy factor includes questions such as whether the residents felt they were prepared to teach the subject matter or to use student data. Altogether, there is substantial statistical and conceptual evidence for these factors.
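As an illustration of one of the reliability statistics named above, the following sketch computes Cronbach's alpha from item-level scores using the standard formula with sample variances. The function name and the Likert responses are hypothetical and are not drawn from the NCTR data.

```python
from statistics import variance


def cronbach_alpha(items):
    """Cronbach's alpha for a list of item-score lists.

    items: one list per survey item, each holding the scores of the same
    respondents in the same order.
    """
    k = len(items)
    if k < 2:
        raise ValueError("At least two items are required.")
    n = len(items[0])
    if any(len(col) != n for col in items):
        raise ValueError("Every item needs a score from every respondent.")

    # Total score per respondent across all items.
    totals = [sum(scores) for scores in zip(*items)]
    item_variance_sum = sum(variance(col) for col in items)
    return (k / (k - 1)) * (1 - item_variance_sum / variance(totals))


# Hypothetical 5-point Likert responses: 3 items, 5 respondents.
item_1 = [4, 5, 3, 4, 2]
item_2 = [4, 4, 3, 5, 2]
item_3 = [5, 5, 2, 4, 3]
alpha = cronbach_alpha([item_1, item_2, item_3])
```

Values closer to 1 indicate that the items under a factor vary together, which is the sense in which alpha supports treating them as a single construct.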

RQ2: To what extent are programmatic structures associated with participant perceptions?

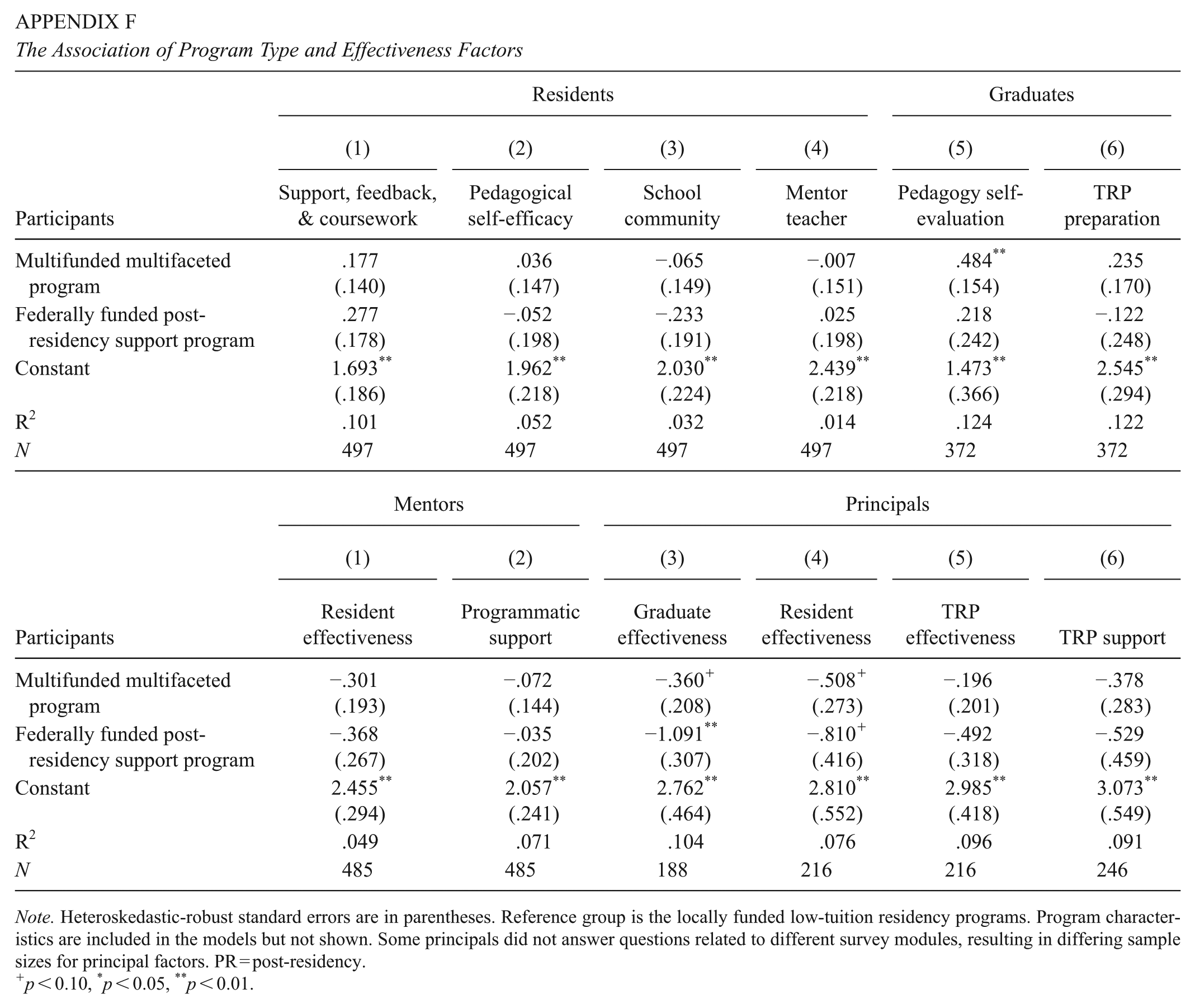

Upon establishing constructs of participant experiences and types of TRPs, we probe whether there is a relationship between the two. In Appendix F, we examine whether participant experiences are a function of TRP class while controlling for program characteristics and surprisingly find few significant relationships. 9 While program types by themselves may not explain participants’ perceptions, the specific programmatic features may, which we examine next.

In Table 2, the observable programmatic features explain residents’ perceptions of the TRP’s support, feedback, and coursework, but little to none of the other factors (Models 1–4). For instance, in Model 1, residents from TRPs that receive federal funding (.656 SD), focus on pre-K (.439 SD), provide licensure/teacher certification (.763 SD), and offer post-residency virtual coaching (.333 SD) are more positive about their programs’ ability to provide support, feedback, and coursework than their peers. Conversely, programs that offer post-residency professional development or coursework, or that are part of a GYO program, are rated more negatively than those without these features. These programmatic features are mostly not significantly associated with the residents’ pedagogical self-efficacy, school community, and mentor teacher factors.

Table 2. The Association of Program Characteristics and Preparation Factors for Residents and Graduates

Note. Heteroskedasticity-robust standard errors are in parentheses. All models employ program fixed effects.

p < 0.10, *p < 0.05, **p < 0.01.

This changes, however, for graduates of residency programs (Models 5 and 6). Similar to residents, graduates rate their pedagogy and TRP preparation higher in programs with post-residency virtual coaching and other post-residency support than in those without these features. Graduates of GYO programs also view their pedagogy and TRP preparation about one standard deviation less positively, and graduates of programs offering post-residency professional development or coursework view them more than half a standard deviation less positively, than graduates of programs without these features.

This theme of post-residency programming is echoed for mentors and, to a lesser extent, principals, as shown in Table 3. Mentors for GYO programs, on average, rate programs with required post-residency coursework worse than those without (Models 1 and 2). Mentors evaluate GYO programs worse than non-GYO programs and report worse program support as the number of residents increases, but better program support for residency programs receiving federal support (Model 2). Interestingly, none of the observable programmatic features of residency programs explain how mentors view residents’ effectiveness. Principals (Models 3–6) tend to view programs receiving federal funding more positively than those without, potentially speaking to the question of available resources and how they are used. Principals also view programs with local and state funding much more positively than those without. Little else is significant across principal factors.

Table 3. The Association of Program Characteristics and Preparation Factors for Mentors and Principals

Note. Heteroskedasticity-robust standard errors are in parentheses. All models employ program fixed effects.

p < 0.10, *p < 0.05, **p < 0.01.

Qualitative Analysis

RQ3: How do participants describe salient structures of their teacher residency programs?

We simultaneously explore TRP structures through participant voices. We describe each of the four structures through illustrative quotes across participants.

Providing extended clinical experience

Participants express the benefits of having classroom experience of a year or longer to help them learn, develop, and scaffold teaching responsibilities. Residents consistently appreciate “residency in which [they] teach in actual schools for the year” before independently entering the field (G1553). 10 Not only does this give them “a great way to experience teaching in a low-risk setting and gain more confidence in front of the classroom” (G1557), but it also provides residents with a full year “to see how expectations are set, how students develop over the course of a year academically, and how relationships are built over time” (R33). These lengthy periods in the classroom allow for “the total immersion in a classroom from the first day to the last day” (R220).

With extensive periods of time in the classroom, residents could slowly transition into teaching responsibilities. Multiple residents explain the benefits of “having a year-long student teaching experience with a gradual release model” (G1817), which means a “release of responsibilities, coupled with the multitude of opportunities to try new techniques related to management, instruction, or assessment/grading” (R548). Rather than thrusting novices into professional responsibilities too quickly, “having the space to learn both pedagogy and practice has helped our resident tremendously and has afforded the opportunity for a gradual release into the classroom” (A778). Then, through these frequent and realistic opportunities to “practice and reflect with over the course of the school year” (R7), residents can confidently implement acquired knowledge and experiences into their teaching. As best illustrated by one resident:

The hands-on experience of being inside the classroom almost every day and leading content blocks has left me feeling very prepared to lead my own classroom. I’ve had plenty of “at bats” throughout the year in addition to the takeover days/weeks; these have allowed me to become more comfortable leading solo and implement more teaching strategies as this comfort grew. (R272)

Localizing individual support

Various personnel guide resident development, including mentor teachers, coaches, and peers. The most consistently stated individual is the mentor teacher, who directs the resident in professional learning, such as the “process in preparing, handling meetings with parents, contacting parents, grading, etc.” (R44), and can “develop great transparency with the residents so they can express difficulties or challenges within the classroom” (M1441). Mentor teachers also provide candid feedback to “improve [resident] teaching practices,” feedback that “allowed me to grow and adopt effective [t]eaching strategies” (R577), creating “opportunities for us to engage in rich conversations related to instructional practices while providing feedback when needed in order to implement them in the classroom” (R3). Ultimately, these mentors enable residents to “walk away feeling completely confident in my abilities to be the best, most effective teacher that I can be” (R288).

Other individuals supported residents, often at the initiative of the TRP. Residents state that their program “has created a network of educators that can lean on each other for support to move forward” (R485) by building a community, offering “many opportunities to collaborate with fellow peers” or making sure someone is “available to assist us in any way possible when needed” (R275). There are program coaches who “made a really big impact on why and how I would be able to help in the classroom” (R1886) and who are “always available and willing to help navigate any questions or situations” (R612). These coaches benefit as well, as “they are afforded a unique opportunity to continue their growth as reflective practitioners [sic] while positively promoting the profession” (A829). Altogether, “professionals within my program have, on many occasions, given me feedback that was encouraging and supportive of my continued professional development” (R417), ensuring a network of individuals to help the resident succeed.

Offering programmatic training

Educators state the benefits of required coursework and professional development opportunities. This includes “professional learning days and pipeline workshops [that] have provided numerous opportunities for growth as an educator” (R79). Programs offer “seminars to educate further on how to provide an equitable education to students and to promote change, among various other pedagogical skills” (R65). Residents highlight their opportunities to participate in a “break out group where [they] practice how to teach a lesson and write a lesson plan” (G1827), providing authentic practice. Certain programs even had mentor seminars that “enhanced my teaching practice and mentoring skills” (M933) and where mentors “learned new strategies and hear[d] from other mentors what works for them” (M1035).

Beyond professional development, “the coursework and placement experiences have aligned throughout the year to reinforce knowledge and skills learned” (R100). Residents express that the “assignments and projects from the courses have allowed me to really reflect and analyze the way I plan and teach students” (R166), through “engaging instruction, assessment, lesson differentiation, and how to emphasize a safe learning environment” (R593). One graduate states that they “still utilize many of the STEM challenges, ice breakers, and workshop models to prep lessons for my class” (G1800), indicating the longevity of this learning.

Teaching practical professional knowledge

Participants describe types of knowledge gained throughout residency, such as content, pedagogical, and general professional knowledge. 11 Most prominent is lesson planning. TRPs have “prepared me to be an educator by giving me the responsibility of creating and teaching lesson plans” (R29), largely because they “have gained a lot of experience working in teams to create and modify lessons for all students based on their learning needs” (R562). Residents feel that “the combination of learning how to work well with the curriculum and focusing [on] how to effectively deliver it” (R450) makes them well prepared for their full-time responsibility.

There is also a range of other pedagogical topics stated across participants. Residents were “able to see the interaction and content knowledge moves that I did in the classroom” (M1499), “developed relationships with staff/students” (A878), alongside gaining skills to “establish norms and procedures that better prepared [them] for classroom management” (G1788) and how “to create a positive, nurturing, rigorous, and loving classroom environment . . . by providing me with tools and examples to implement these expectations and scaffolds in [their] own classroom” (R298). Instructionally, there is mention of “implementing high quality instructional material, and professionalism among students and peers” (R215) in conjunction with “developing professionalism, effective communication, facilitating assessments, analyzing data, planning for instruction, and writing lesson plans” (G1870).

There are also related personal skills taught through TRPs. Residents learn the importance of “self-awareness as well as how to advocate for my mental health needs” (R518), with one noting that “the program has taught me the importance of support and asking for help when I need to. They also have helped me see the importance of reflection and looking at the growth that I’ve made over the last year” (R328). This introspection is reiterated in other ways, such as “the importance of self-reflection to address my positionality and biases and how they could impact my students” (R209) and the need to be “incessantly reflective and evaluate my own biases as well as to challenge the biases of others who will serve students that are marginalized and disenfranchised” (R486).

Discussion

Our concurrent mixed-methods study provides a snapshot of the variation across TRP structures and their associations with participant experiences. We analyze program-level surveys to categorize TRPs and participant-level surveys to understand structures among TRPs, and we identify relationships between program characteristics and participant experiences. Our results suggest several important findings.

Essential TRP structures are salient across participants. Surveys of residents, graduates, mentors, and administrators collectively reveal the importance of TRPs providing extended clinical experience, localizing individual support, offering programmatic training, and teaching practical professional knowledge. These results illuminate necessary perspectives across TRP roles (Chu & Wang, 2024) and confirm evidence of relevant coursework and rigorous yearlong placement as critical to resident success (Chu, 2021; Mourlam et al., 2019). Increased clinical teaching (Pankowski & Walker, 2016), training (Miller & Strachan, 2020), professional knowledge (Hammerness & Craig, 2016), and mentorship (Guha et al., 2017) are all also mentioned, extending these descriptions across multiple perspectives throughout TRPs. This highlights how particular TRP structures are central to participant experiences.

Meaningful variation exists across TRPs. Statistically, programs group together based on funding, focus, and type of support, with three types of TRPs emerging: 1) locally funded low tuition; 2) multifunded multifaceted; and 3) federally funded post-residency support programs. These results suggest an important categorization of programs and the potential for more apt comparisons among them. However, few to no associations exist between the program types identified here and participant experiences, possibly reflecting the relatively high NCTR standards for accepting TRPs into their network (i.e., only programs exhibiting central components of a residency). While program funding, foci, and post-residency support are important for differentiating programs, they are only loosely related to participant experiences. Post-residency support, in particular, had some significance throughout models with mentors and graduates, pointing to a potential area that could noticeably differentiate TRPs. Because this structure is less discussed throughout the literature (Truwit et al., 2024, do not even include data in this area), our finding offers initial evidence for the importance of post-residency support, which both researchers and practitioners should consider investing effort into.

Variation in participant experiences could instead be explained by more specific TRP characteristics. One example is the positive association between residents’ and graduates’ feelings of support and post-residency virtual coaching. This reiterates the importance of coaching (Hobson et al., 2009), particularly in the virtual format (e.g., to accommodate educator schedules, normalization since the pandemic), though it could be explained by the desire to reduce professional responsibilities after residency. Additionally, how the TRP received funding is significantly associated with most mentor and principal factors. This could be related to whether these participants receive compensation for their work or to seeing that their residents and graduates receive adequate financial and professional support, which echoes our qualitative findings and prior works (e.g., Yun & DeMoss, 2020). This could also be due to the observed resident time spent on program responsibilities rather than experiences or efforts invested in the classroom. Finally, TRPs that were part of a GYO are negatively associated with resident and graduate experiences. We have little explanation beyond hypothesizing that GYOs offer less overall support for residents on the assumption that residents are already familiar with the local context, or potentially that the result reflects the wide variation in how programs define themselves as a GYO (Edwards & Kraft, 2025). More research is needed to differentiate these types of programs and to identify the outcomes of matriculating through each type.

Limitations

There are several limitations bounding our findings. First, data come from NCTR Network member programs and are not representative of all residency programs. There is a financial cost to participate and receive NCTR support. More importantly, NCTR intentionally works only with TRPs that align with their organizational values and exhibit essential components of an effective TRP that also reduces barriers discouraging aspiring educators from entering or remaining in teaching. While this ensures the authenticity and quality of the programs, it potentially restricts the variation across programs in the data, which may not represent the wider universe of TRPs. Federal data or other national databases are needed to better understand the broader range of TRPs.

Second, program and participant survey data are self-reported. While our mixed methods approach of multiple data types and sources helps ensure the validity of the findings, we cannot rule out potential biases. Access to administrative records and more precise data collection across programs (e.g., triangulating results with interviews and observations) could strengthen findings.

Third, some quantitative analyses rely on small, potentially unrepresentative samples. Participant surveys had less than full response rates, which vary by program, so the data may not be representative of all program participants. Sample sizes for principal factors, specifically, were substantially smaller than for other groups, which decreased the power to detect significant relationships between programmatic features and principals’ perceptions. Given similarities in factors across participants, we feel confident in these results, but additional data are needed.

Implications

We believe our findings have important implications for research, practice, and policy. Policymakers should ensure that all TRPs embody a greater number of essential program characteristics. Particularly when state and federal funds are involved, TRPs need to center on extended clinical training, quality mentorship, and aligned coursework. Beyond this, TRPs could be further specified through an expectation of training that is context-specific and that builds practical, professional knowledge.

Practitioners also need to consider localized structures in building or adjusting TRPs. Programs should consider how funds are best utilized and to what extent they would want to offer various post-residency supports and lean into certain focal areas (e.g., pre-K, SPED). Much of this should derive from thoughtful collaboration between districts, teacher preparation programs, and TRPs to design a residency program according to contextual and historical needs and available funding sources. Afterwards, though, participants’ experiences should be weighed relative to what the program offers.

For researchers, there remains a need to analyze TRPs from a large-scale perspective. With continued evidence examining the effect of TRPs beyond singular programs and geographies, the impacts of financial investment can be enhanced. Broader examinations of important student and teacher outcomes, along with the resources and capability to analyze and connect differing data and measurement systems, are vital. This work needs to be prioritized within and across TRPs, with a focus on linking student, resident, and program-level data to extend the value of TRPs.

Appendix

The Association of Program Type and Effectiveness Factors

| | Residents | Residents | Residents | Residents | Graduates | Graduates |
|---|---|---|---|---|---|---|
| | (1) | (2) | (3) | (4) | (5) | (6) |
| Participants | Support, feedback, & coursework | Pedagogical self-efficacy | School community | Mentor teacher | Pedagogy self-evaluation | TRP preparation |
| Multifunded multifaceted program | .177 (.140) | .036 (.147) | −.065 (.149) | −.007 (.151) | .484** (.154) | .235 (.170) |
| Federally funded post-residency support program | .277 (.178) | −.052 (.198) | −.233 (.191) | .025 (.198) | .218 (.242) | −.122 (.248) |
| Constant | 1.693** (.186) | 1.962** (.218) | 2.030** (.224) | 2.439** (.218) | 1.473** (.366) | 2.545** (.294) |
| R² | .101 | .052 | .032 | .014 | .124 | .122 |
| N | 497 | 497 | 497 | 497 | 372 | 372 |

| | Mentors | Mentors | Principals | Principals | Principals | Principals |
|---|---|---|---|---|---|---|
| | (1) | (2) | (3) | (4) | (5) | (6) |
| Participants | Resident effectiveness | Programmatic support | Graduate effectiveness | Resident effectiveness | TRP effectiveness | TRP support |
| Multifunded multifaceted program | −.301 (.193) | −.072 (.144) | −.360+ (.208) | −.508+ (.273) | −.196 (.201) | −.378 (.283) |
| Federally funded post-residency support program | −.368 (.267) | −.035 (.202) | −1.091** (.307) | −.810+ (.416) | −.492 (.318) | −.529 (.459) |
| Constant | 2.455** (.294) | 2.057** (.241) | 2.762** (.464) | 2.810** (.552) | 2.985** (.418) | 3.073** (.549) |
| R² | .049 | .071 | .104 | .076 | .096 | .091 |
| N | 485 | 485 | 188 | 216 | 216 | 246 |

Note. Heteroskedastic-robust standard errors are in parentheses. Reference group is the locally funded low-tuition residency programs. Program characteristics are included in the models but not shown. Some principals did not answer questions related to different survey modules, resulting in differing sample sizes for principal factors. PR = post-residency.

+p < 0.10, *p < 0.05, **p < 0.01.

Acknowledgements

We thank Kevin Levay, Shriya Bellur, and Abigail Spencer for their assistance in this manuscript.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

Notes

Authors

ANDREW KWOK is an associate professor in the Department of Teaching, Learning, and Culture at Texas A&M University. His research focuses on supporting beginning teachers with a particular focus on classroom management, teacher preparation, teacher pipelines, and teacher induction.

TUAN D. NGUYEN is an associate professor in the Department of Educational Leadership and Policy Analysis at the University of Missouri. He applies rigorous quantitative methods (quasi-experimental designs and meta-analysis) to examine 1) the teacher labor markets, particularly looking at the factors that drive teacher attrition and retention, and 2) the effects and implications of teacher policies and education policies intended for social equity and school improvement.