Abstract

Despite evidence that teacher professional development (PD) interventions in mathematics and science can increase student achievement, our understanding of the mechanisms by which this occurs, particularly how these interventions affect teachers themselves and the extent to which teacher-level changes predict student learning, remains limited. The current meta-analysis synthesizes 46 experimental studies of PK–12 mathematics and science PD interventions to investigate how these interventions affect teacher-level outcomes, including knowledge and classroom instruction, and how these impacts relate to intervention effects on student achievement. Compared with controls, treatment group teachers in these studies demonstrated stronger performance on teacher-level outcomes (pooled average impact estimate: +0.52 SD). Programs with larger impacts on teacher-level outcomes also tended to have significantly larger mean impacts on student achievement. We discuss implications for future research and practice.


Strengthening children’s understanding and skills in mathematics and science is a major focus of many nations’ education systems (e.g., National Academies of Sciences, Engineering, and Medicine [NASEM], 2020; OECD, 2007). Given concerns about students’ preparation for a labor market that increasingly values higher-level quantitative and communications skills (Deming, 2017) and the urgent need in many countries to make mathematics and science learning opportunities more rigorous and efficacious (Chmielewski & Reardon, 2016), researchers and policymakers have made significant investments in improving instructional quality in mathematics and science classrooms. A core policy investment by which many nations seek to improve the quality of PK–12 mathematics and science teaching is the provision of teacher professional development (PD) (Desimone, 2009).

In the United States, the country where most of the experimental research on this topic has been conducted, policy logic models posited that improvements in student learning would operate through changes in teachers and teaching (see, e.g., National Research Council [NRC], 2007; Smith & O’Day, 1990; Wilson, 2008). U.S. policymakers in particular targeted two areas: perceived constraints in teachers’ knowledge of the content to be taught and perceived weaknesses in classroom instruction. Pertaining to teachers’ knowledge, for example, the National Academies’ (Institute of Medicine, 2010) report

Perceived constraints in teachers’ content knowledge and classroom instruction led scholars and policymakers to design policy logic models theorizing that teachers’ participation in PD would influence teachers’ knowledge, skills, and instructional practice. Ultimately, these changes were expected to catalyze improved student-learning outcomes (Ball & Cohen, 1996; Carlson et al., 2019; Philipp, 2007; for similar logic models in a broader range of countries, see, e.g., Bethell, 2016; Kärkkäinen & Vincent-Lancrin, 2013).

In line with these logic models, in the past two decades, researchers have developed a broad range of teacher PD interventions that target facets of teachers’ knowledge, classroom instruction, or both. Beginning in the early 2000s, the growing emphasis of the Institute of Education Sciences and the National Science Foundation on funding randomized experiments produced a wealth of new studies of teacher PD using designs supporting causal inference. Research syntheses indicate that studies of PD interventions tend to show positive average impacts on students’ mathematics and science learning, although with considerable heterogeneity in study outcomes (e.g., Blank & De las Alas, 2009; Lynch et al., 2019; Scher & O’Reilly, 2009; Slavin et al., 2009).

Yet, despite the growing evidence base documenting the potential for PD interventions to bolster student achievement in mathematics and science, the teacher-level knowledge and practice mechanisms by which these investments operate remain poorly understood. One limitation is that prior scholarship has been unable to empirically test different elements of policymakers’ logic model, namely that PD would generate growth in teacher knowledge and improvements in teachers’ instruction and that changes in one or both would predict improvements in student achievement. A second limitation is that few studies have tried to explain heterogeneity in programs’ impacts on teachers, that is, to test whether specific program foci or characteristics predict stronger teacher impacts.

To address these gaps in the literature, we conducted a comprehensive meta-analysis of contemporary experimental studies of teacher PD to empirically test several important, yet understudied, components of the core policy logic model for PD in mathematics and science outlined previously. In prior work (Lynch et al., 2019), we synthesized STEM classroom interventions’ impacts on student-level achievement outcomes. In the current study, we directly build on and extend this prior work by synthesizing two decades of evidence on the impacts of mathematics and science PD on teacher-level outcomes, including knowledge and classroom instruction. We also investigated whether key program content and contextual factors predicted improved teacher outcomes. We then linked interventions’ impacts on teacher-level outcomes to impacts on measures of student learning, thereby evaluating the extent to which key elements of a core logic model supporting policy investments in instruction are borne out in the contemporary research base. This study addresses the following research questions:

[RQ1]. What is the causal impact of mathematics and science PD interventions on in-service teachers’ outcomes, including knowledge and classroom instruction?

[RQ2]. Do specific features and foci of mathematics and science PD interventions predict stronger impacts on in-service teachers’ outcomes, including knowledge and classroom instruction?

[RQ3]. Are PD program-induced changes in in-service teachers’ outcomes, including knowledge and classroom instruction, linked to improvements in student achievement?

To address these questions, we used exclusively randomized experiments, a “gold standard” research design that supports causal inference and mitigates many threats to internal validity.

Literature Review

Policymakers and researchers in many countries have prioritized improving mathematics and science student outcomes for the past several decades (American Association for the Advancement of Science [AAAS], 1990; National Governors Association [NGA], 2007; NRC, 2007; OECD, 2007). Many of these efforts called for more mathematics and science literacy among high school and college graduates, with dual attention to meeting employers’ needs and fueling innovation in the technology sector. These concerns about workforce readiness coincided with the widespread dissemination of updated guidance on how students learn content (Bransford et al., 2000), with the result that most mathematics- and science-related policy efforts indicated a preference for conceptual understanding and application of knowledge over rote memorization and basic skills.

Government agencies and researchers advocating for educational reforms often argued that improving student outcomes in mathematics and science would require a coordinated strategy aimed at changing classroom instruction. Advocates of systemic and standards-based reforms (e.g., Smith & O’Day, 1990) recommended a structure widely adopted by policymakers and funders (Knapp, 1997): standards, assessments, and accountability would create policy guidance and incentives for instructional improvement. Within this structure, PD interventions would increase teachers’ and schools’ capacity to offer improved instruction.

What Do We Know About the Effects of Teacher Professional Development in Mathematics and Science?

An influential stream of scholarship on teacher PD in the 2000s developed a framework that posited that effective teacher PD should contain several common elements: content focus, active learning, coherence, sufficient duration, and collective participation (Desimone, 2009; Garet et al., 2001). This framework was generative to the field; however, several federally commissioned large-scale studies that aimed to test PD containing these elements had disappointing results (e.g., Garet et al., 2008, 2011, 2016). This raised questions about potential refinements to the field’s conception of effective practices for teacher PD and influenced new scholarly efforts to conduct and synthesize a broad range of PD evaluations. We review results from these efforts in this article. We organize this review by first summarizing the evidence for impacts on students—looking at the degree to which PD interventions of the types studied in the literature meet their ultimate goal of improving student achievement. We then review evidence on the mechanisms by which PD achieves its results—looking at the impacts on teachers’ knowledge and classroom instruction.

Impacts on Students

Several reviews have examined the impacts of mathematics and science teacher PD on student achievement. In prior work (Lynch et al., 2019), we conducted a related meta-analysis of the student-level impacts of teacher professional development and curriculum improvement interventions in PK–12 mathematics and science, finding a pooled mean effect size on student achievement of 0.21

Although not a meta-analysis, Kennedy (2016) reviewed the literature using graphical comparisons of studies and concluded that programs that focused on a number of different instructional issues, including improving student behavior, bolstering student participation, making students’ thinking and problem-solving visible, and presenting the content of the curriculum, all appeared similarly likely to improve student achievement. Programs that fostered teachers’ intellectual involvement with the material and featured voluntary, rather than mandatory, enrollment of teachers also tended to be more effective. Other authors have reviewed subsets of these literatures (in elementary mathematics, Pellegrini et al., 2021; in secondary science, Cheung et al., 2017; in elementary science, Slavin et al., 2014) or pooled math and science programs with those focusing on a range of other content areas (e.g., literacy skills, economics; Sims et al., 2023), generally finding positive student achievement effects.

Evidence on the Mechanisms by Which Teacher Professional Development Achieves Its Effects: Impacts on Teachers’ Knowledge and Classroom Instruction

Our first research question examines the impacts of PD interventions on proximal teacher outcomes, including teachers’ knowledge and classroom instruction. As elaborated in the logic model discussed later, understanding impacts on teachers’ outcomes, including knowledge and classroom instruction, is important for unpacking the mechanisms by which teacher PD achieves its results. A handful of prior reviews have examined facets of teacher-level outcomes of PD interventions. We briefly review the methods and results from these reviews before describing the contributions of the present study to the evidence base.

We identified five prior meta-analyses that examined impacts of different types of teacher PD for a sample that included mathematics and/or science teachers on instructional practice outcomes (Egert et al., 2018 [specific to early childhood]; Garrett et al., 2019; Kowalski et al., 2020; Kraft et al., 2018 [specific to coaching]; Scher & O’Reilly, 2009) and one that included an analysis of impacts of teacher training activities on teachers’ knowledge (Kowalski et al., 2020). The earliest of these, Scher and O’Reilly (2009), reviewed the literature published through 2004 on elementary and secondary in-service teacher professional development in mathematics and science. The authors reported pooled mean effects of teacher PD interventions on teacher attitudes of 0.45

Additionally, Kraft et al. (2018) conducted a meta-analysis of teacher coaching programs, which they operationalized as “in-service PD programs that incorporate coaching as a key feature of the model” (p. 553), conducted with PK–12 teachers teaching in a range of content areas (e.g., reading, math, science). Combining experimental and rigorous quasi-experimental studies, Kraft et al. found a pooled mean impact of 0.49

Specific to science, Kowalski et al. (2020) conducted the one prior meta-analysis we could identify that examined outcomes on teacher knowledge. The authors meta-analyzed a set of quasi-experimental and experimental studies of science interventions for preservice and/or in-service teachers. Included studies aimed to improve knowledge, practices, and/or attitudes; were conducted in either schools or research labs; and, for quasi-experiments, were not required to demonstrate the comparability of treatment and comparison groups at baseline. The authors found a pooled mean effect size of 0.49

Programmatic Features and Contexts of PD Programs in Mathematics and Science That May Moderate Effects on Teacher-Level Outcomes

Our second research question examines how the features and contexts of PD interventions may moderate PD programs’ impacts on teachers. PD interventions vary on key characteristics that may explain variation in their impacts on teacher outcomes, including knowledge and classroom instruction. First, understanding the extent to which

Also, PD interventions may or may not include the

PD interventions also vary in their programmatic foci. PD programs may or may not include an

Teacher PD programs in mathematics and science also may or may not incorporate a focus on

A third programmatic focus is PD programs’ inclusion of

Lastly, elements of the

At the setting level, schools in different geographic locales may experience different contexts and resource environments that affect the comprehensiveness with which instructional initiatives are carried through (e.g., Wilson, 2013). Meanwhile, examining the effectiveness of instructional interventions by sociodemographic characteristics of participating students is important given the critical need to improve mathematics and science learning opportunities for all students, including students in high-poverty settings, students from communities of color, and students learning English as a new language (e.g., Oakes, 1990). We are not aware of prior meta-analyses examining these variables in the context of the influence of PD on teacher outcomes.

Can We Identify Links Between PD Impacts on Teachers’ Outcomes and Gains in Student Achievement?

As described previously, we hypothesized that PD interventions will affect teachers’ outcomes, including knowledge and classroom instruction, and that some interventions will be more effective than others. A logical next question, and the third aim of our paper, is this: To the extent that programs are able to improve teachers’ outcomes, including knowledge and/or classroom instruction, how might this matter for impacts on student achievement? Different scholars and policy interventions have placed different degrees of emphasis on the importance of improving teachers’ content knowledge versus improving their practices, raising questions about whether improvements in knowledge or in practices may be more strongly related to improvements in ultimate student achievement. We briefly summarize these different perspectives to motivate our third analysis, in which, using an approach similar to that of others (Egert et al., 2018; Kraft et al., 2018), we use regression to test the relationships in the logic model between PD effects on teachers and PD effects on students.
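To make the idea of regressing student impacts on teacher impacts concrete, the following is a minimal sketch, not the authors' model. It assumes one teacher-level and one student-level effect size per program and fits a fixed-effect, inverse-variance weighted regression; the function name `weighted_meta_regression` and all numeric values are hypothetical and purely illustrative. A full analysis would also need to handle multiple effect sizes per study and between-study heterogeneity.

```python
import numpy as np

def weighted_meta_regression(teacher_es, student_es, student_var):
    """Regress student effect sizes on teacher effect sizes.

    Fits student_es = b0 + b1 * teacher_es by weighted least squares,
    weighting each program by the inverse of its student-level sampling
    variance (a fixed-effect simplification).
    """
    x = np.asarray(teacher_es, dtype=float)
    y = np.asarray(student_es, dtype=float)
    w = 1.0 / np.asarray(student_var, dtype=float)

    # Design matrix with an intercept column and the teacher effect size
    X = np.column_stack([np.ones_like(x), x])

    # Weighted normal equations: (X'WX) beta = X'Wy
    XtWX = X.T @ (w[:, None] * X)
    XtWy = X.T @ (w * y)
    beta = np.linalg.solve(XtWX, XtWy)

    # With inverse-variance weights, Var(beta) = (X'WX)^-1
    se = np.sqrt(np.diag(np.linalg.inv(XtWX)))
    return beta, se

# Hypothetical program-level values (illustrative only, not study data)
teacher_es = [0.10, 0.30, 0.50, 0.80, 1.00]
student_es = [0.02, 0.10, 0.12, 0.25, 0.27]
student_var = [0.010, 0.020, 0.015, 0.030, 0.020]

beta, se = weighted_meta_regression(teacher_es, student_es, student_var)
print(f"intercept = {beta[0]:.3f} (SE {se[0]:.3f})")
print(f"slope     = {beta[1]:.3f} (SE {se[1]:.3f})")
```

In this sketch, a positive slope would indicate that programs with larger impacts on teachers also tend to show larger impacts on students, which is the relationship the third research question examines.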

Theorizing the Importance of Improving Teachers’ Knowledge to Strengthen Student Outcomes

A substantial history of education scholarship and policy has been concerned with teachers’ content knowledge and theorized that it has a major influence on students’ opportunities to learn science and mathematics (e.g., Conference Board of the Mathematical Sciences, 2012; Fennema & Franke, 1992; NASEM, 2007; National Research Council, 1996; No Child Left Behind Act, 2001; Wu, 2011). For instance, beginning in the 1980s and 1990s, a stream of research employed observations to draw attention to how constraints in teachers’ subject matter knowledge in mathematics and science could lead to confusing or incorrect delivery of curricular content to students (Cohen, 1990; Heaton, 1992; Putnam et al., 1992; Stein et al., 1990). Subsequent work developed a range of quantitative measures of facets of teachers’ knowledge and worked to link teachers’ scores on these measures with indicators of instructional quality and students’ achievement gains (e.g., B. A. Jacob et al., 2018; Hill et al., 2005; Rockoff et al., 2011). This line of research supported the argument that improving content knowledge was likely a critical component to improving classroom instructional quality and, ultimately, student outcomes.

Aligned with these views and this scholarship, improving teachers’ content knowledge in mathematics and science has been a major focus of federal education policy and funding investments in the United States. The National Academies’ influential

Theorizing the Importance of Improving Classroom Instruction to Strengthen Student Outcomes

Alongside policies and interventions weighted toward improving teachers’ knowledge, other scholars have placed more relative emphasis on the importance of improving teachers’ classroom instruction as central to strengthening student achievement in science and mathematics. Early research in the “process-product” literature tradition (e.g., Brophy & Good, 1986) aimed to categorize facets of teachers’ instructional behaviors that were associated with better student achievement. Observational research has also drawn attention to weaknesses in classroom instruction (e.g., Hill et al., 2018). For example, in a nationally representative observational study of science classrooms, Banilower et al. (2006) found that lecture formats dominated instructional activities, with limited time allocated to hands-on activities or small group work.

Scholarship on topics such as questioning (Godbold, 1973), discussion routines (Pehmer et al., 2015), talk moves (Michaels & O’Connor, 2015), and coaching programs focused on discussing teachers’ instruction using classroom observation rubrics (Kraft & Hill, 2018; Wayne et al., 2023) also places an implicit emphasis on improving teachers’ practices as a central lever for improving student achievement. Influential popular books have also disseminated the message that improvements in teachers’ practices are of major importance to bolstering student achievement (Lemov, 2021). Meanwhile, many PD programs and policy investments with a focus on instructional practices also have a dual focus on improving aspects of teachers’ content knowledge, reflecting the view that changes in one area could spark or reinforce changes in another (e.g., Ball & Cohen, 1999; Clarke & Hollingsworth, 2002), although the relative emphasis placed on teachers’ knowledge versus practices varies.

Given these different emphases, it remains unclear but important to know how PD-induced improvements in teachers’ outcomes, including content knowledge and classroom instructional practices, may be related to improvements in student learning. Only two meta-analyses that we can locate linked teacher to student outcomes. Using a subset of studies that collected information on both classroom instruction and student achievement, Egert et al. (2018) and Kraft et al. (2018) found some evidence of positive correlations between impacts on instruction and improvements in student achievement in their meta-analyses, specific to the early childhood and teacher coaching contexts, respectively—though in Kraft et al. (2018) this correlation was not statistically significant. Neither study examined relationships between impacts on teacher knowledge and impacts on student learning, nor did they examine these relationships specifically in the context of in-service teacher PD in mathematics and science. We examine these questions in our analysis.

The Present Study

The present study aims to estimate the causal effects of PK–12 mathematics and science teacher PD interventions of the kinds studied in the experimental literature on teacher outcomes, including knowledge and classroom instruction, and to understand how these impacts correlate with student outcomes using the most rigorous evidence available. Prior reviews, while certainly informative, have typically examined only measures of instruction, and not specifically in mathematics and science (e.g., Egert et al., 2018, in early childhood; Garrett et al., 2019, across a range of content areas; Kraft et al., 2018, specific to coaching studies across a variety of subjects). These meta-analyses largely included studies on literacy and social-emotional learning, leaving questions about mathematics- and science-specific programs’ impacts on teacher outcomes unanswered. One prior meta-analysis has synthesized the impacts of educator training programs on science educators’ knowledge and practices (Kowalski et al., 2020); while quite useful, this science-specific review did not specifically examine in-service teachers, nor did it require that included quasi-experiments meet methodological criteria such as demonstrating group baseline equivalence (What Works Clearinghouse [WWC], 2022).

Thus, more can be learned from the literature by specifically examining the impacts of PD programs on in-service mathematics and science teachers using rigorous contemporary evidence from randomized controlled trials (RCTs). We further probe potential explanations for observed variations in PD programs’ impacts on teachers by empirically testing a set of important programmatic and contextual features of PD studies (described later).

Second, as prior reviewers have pointed out (e.g., Garrett et al., 2019; Kowalski et al., 2020), no existing reviews have empirically tested the links in the logic chain between interventions’ proximal impacts on teachers and their ultimate influences on student learning. Doing so is important: the core purpose of PD investments is student learning, and understanding the mechanisms that produce that learning is crucial. Therefore, the current review takes the critical step of investigating how changes in teachers’ outcomes catalyzed by experimentally evaluated interventions predict improvements in student achievement. This analysis approximates as closely as possible an analytic test of the system of relationships between PD; teacher outcomes, including knowledge and instruction; and student achievement.

Logic Model for the Effects of PD Interventions

Adapting the model proposed by Garrett et al. (2019), Figure 1 illustrates hypothesized pathways by which PD interventions that aim to influence teachers’ knowledge and/or instruction may lead to changes in student learning. This model structures our analysis. The left panel of Figure 1 shows the full set of potential mechanisms by which PD interventions are hypothesized to lead to changes in student learning. The right panel of Figure 1 illustrates the subset of mechanisms that we examine in the current analyses.

Figure 1. Hypothesized logic model for links between professional development interventions, teacher outcomes, and student outcomes.

In the full model, illustrated in the left panel of Figure 1, teachers’ participation in a PD may lead to changes in their knowledge (Path

Interventions may also affect student outcomes independent of teacher-level mediators, such as through the provision of new materials and student supports (Path

Our analyses permit us to test several elements of this logic model, illustrated in the right panel of Figure 1. In our first analysis [RQ1], we directly tested the impact of mathematics and science PD interventions on teacher outcomes, specifically knowledge (Path

Given the importance of understanding factors that may contribute to variation in interventions’ impacts on teacher outcomes, we also examine Paths

In our third set of analyses, we examined the links between impacts on teacher outcomes, including knowledge (Path

We describe our meta-analytic search procedures and analyses in the next section.

Methods

Defining Mathematics and Science PD Interventions

For the current meta-analysis, we defined mathematics and science PD interventions to include programs that aimed to improve student learning in mathematics and science via teacher PD for in-service teachers. This definition excludes interventions that do not focus on improving classroom instruction, such as out-of-school time tutoring, summer programs, or homework interventions, and those that do not involve in-service classroom teachers, such as interventions in which researchers provided instruction directly to students. Included programs ranged from those providing PD only (e.g., Dash et al., 2012; Jayanthi et al., 2017; Piasta et al., 2015) to those that included a relatively brief PD that focused on a set of new curriculum materials intended for implementation (e.g., Miller et al., 2007; Resendez & Azin, 2008).

We included only studies that used randomized experimental designs. We restricted our sample in this way to ensure that teachers in the treatment and control groups were similar, in expectation, on all observed and unobserved characteristics, meaning that differences in teacher knowledge and instruction between the two groups can be attributed to the impact of the intervention. We excluded studies that formed treatment and comparison groups in other ways (for example, by comparing teachers who elected to participate in a PD program against teachers who did not) because it would be impossible to disentangle the impact of the program from other factors, such as differences in motivation between the groups.

The current meta-analysis pooled mathematics and science studies for conceptual and technical reasons. First, many nations’ education systems have a dual focus on improving mathematics and science education (NASEM, 2020; NRC, 2013), given the need for improved instruction in these areas and their joint importance in preparing students for the contemporary labor market. Additionally, the observation that teachers are less likely to have specialized training in these subjects as compared with ELA/reading and other content areas (e.g., NASEM, 2007, 2020, 2022) has led many scholars and policymakers to hypothesize unique kinds of PD needs for teachers in mathematics and science. These considerations have led to the funding of numerous initiatives aimed at improving instruction in both mathematics and science (e.g., the U.S. Title II Math-Science Partnerships [Hill et al., 2019] and the National Science Foundation’s Systemic Initiatives program [Hamilton et al., 2003]) and motivated earlier meta-analyses that considered instructional interventions in both mathematics and science (Blank & De las Alas, 2009; Scher & O’Reilly, 2009). Prior research has argued that, notwithstanding their obvious disciplinary distinctiveness, mathematics and science share elements of overlapping subject matter cultures and intersecting visions for student learning;² in turn, these historically have informed joint education policy and federal grant funding initiatives pertaining to instruction in both content areas (Knapp, 1997). Second, mathematics and science PD interventions tend to share similar approaches to improvement, for example, pairing PD with curriculum materials or providing information on student learning as a means of changing teachers’ practice. Including both mathematics and science studies increases our statistical power to test the efficacy of these approaches. Third, our dual focus on mathematics and science allowed us to restrict the review to include only studies using experimental designs that permit causal inference while maintaining sufficient statistical power to conduct a quantitative meta-analysis.

Search and Screening Procedures

The procedures employed are similar to those we used in a prior meta-analysis focused on student-level impacts of STEM classroom interventions (Lynch et al., 2019). We applied the following search procedures to capture relevant published and unpublished experimental studies of mathematics and science PD interventions. To be included in the meta-analysis, studies had to meet the following criteria relating to study design, intervention, sample, and outcomes: (1) include students in grades PK–12; (2) focus on classroom-level instructional improvement in mathematics and/or science through professional development, which may or may not be coupled with the introduction of novel curriculum materials; (3) employ a randomized experimental design comparing a treatment group to a business-as-usual control group; (4) be published in 2001 or later, coinciding with the passage of the No Child Left Behind Act, which contributed to catalyzing an increased focus on effectiveness research in education; (5) be written in English; and (6) report sufficient data to calculate one or more effect sizes for both teacher and student outcomes. Examples of excluded studies included those focused on postsecondary education (e.g., Rust, 2011; Sullins et al., 2010) or on subject areas other than mathematics or science (e.g., Connor et al., 2013; Farver et al., 2009) and studies that did not use random assignment. We focused specifically on studies that include both teacher and student outcomes because they are directly aligned with the intended impacts of PD interventions in our theoretical model. The publication year span that we employed for study inclusion is similar to that used by the What Works Clearinghouse, which generally declines to review studies that are more than two decades old due to significant shifts in the educational landscape over time (WWC, n.d.).

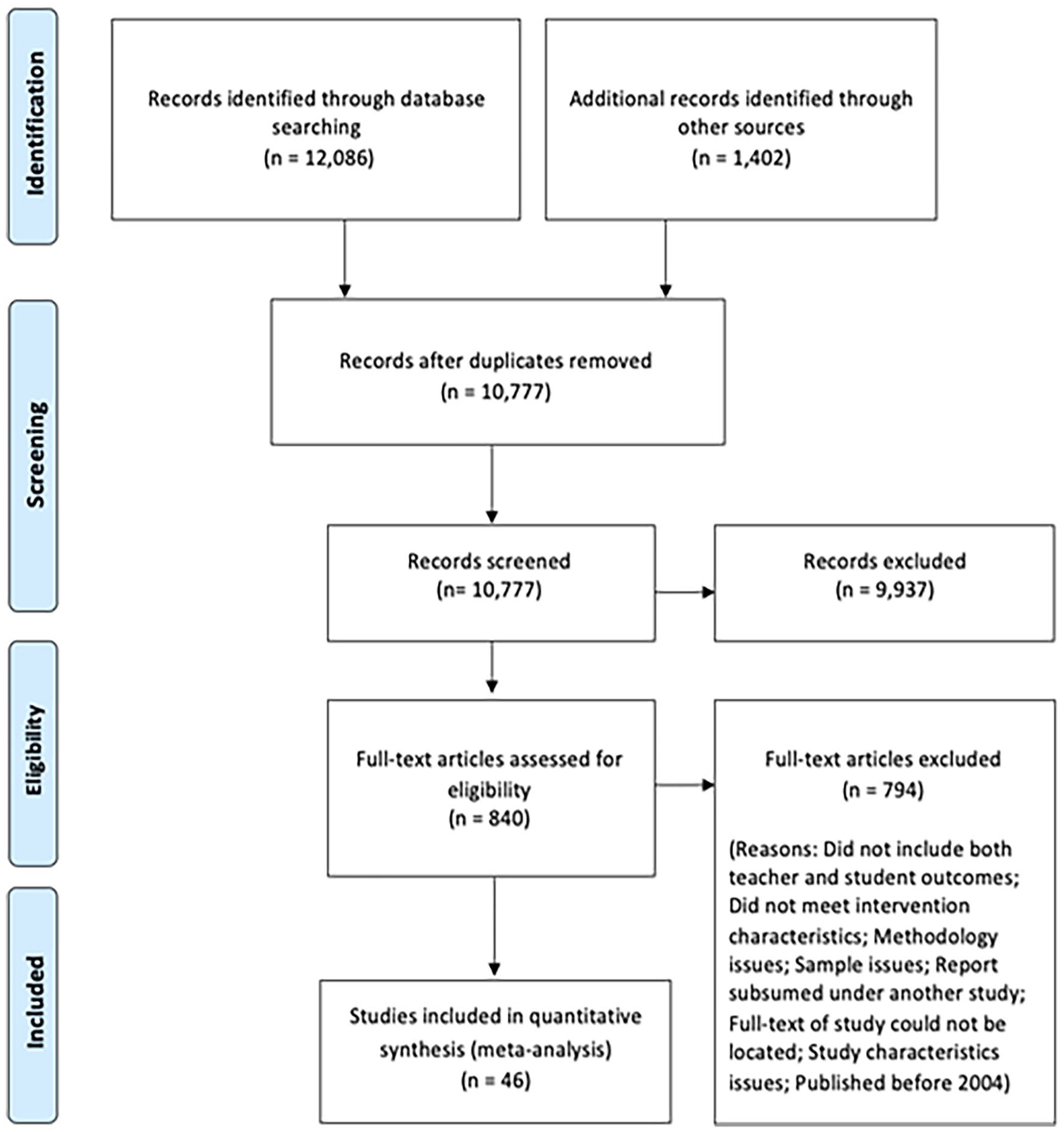

We searched several channels. First, we examined the reference lists of previous research syntheses for studies that examined the topics of teacher PD and curriculum improvement in mathematics and science (e.g., Blank & De las Alas, 2009; Cheung et al., 2017; Garrett et al., 2019; Gersten et al., 2014; Kennedy, 2016; Kowalski et al., 2020; Scher & O’Reilly, 2009; Slavin & Lake, 2008; Slavin et al., 2009, 2014; Taylor et al., 2018; Yoon et al., 2007). We next performed electronic library database searches using Academic Search Premier, Ed Abstracts, EconLit, ERIC, PsycINFO, and ProQuest Dissertations & Theses for the period 2004 to 2024. As in Lynch et al. (2019), these searches were performed using subject-related search terms adapted from Yoon et al. (2007) and methodology-related search terms adapted from Kim and Quinn (2012).³ We also examined relevant websites, including those of American Institutes for Research, Empirical Education, Inter-American Development Bank, Mathematica, MDRC, Regional Education Labs, WWC, and the World Bank, as well as Society for Research on Educational Effectiveness (SREE) conference abstracts. Finally, we searched the NSF Community for Advancing Discovery Research in Education and IES websites and developed a list of all potentially relevant mathematics and science grant awards from the years 2002 to 2020. We searched electronic databases and the Web to identify relevant studies produced based on these grants and contacted study PIs to request reports if we could not identify publications with impact results from their grants. For the current review, we included the results of an initial round of searches we conducted for Lynch et al. (2019) and then extended the initial database of studies via an additional search for reports published through 2024. Via these search streams, we located 12,086 records from database searches and 1,402 records from other sources. Following the removal of duplicates, this yielded 10,777 studies.

Second, raters screened the titles and abstracts of these studies for whether or not they were conducted at the PK–12 level, reported information on student outcomes, and related to mathematics and/or science content and/or instructional strategies. During this phase, raters identified 840 studies as meeting the preliminary relevance criteria. These studies then underwent full-text screening, in which raters evaluated each study’s full text to determine whether it met the full criteria described previously, including requiring a randomized experimental research design, reporting impacts on both teacher and student outcomes, and being published within the review period. See Figure 2 for a PRISMA diagram.

PRISMA study screening flowchart.

Analytic Sample

The final meta-analytic sample includes 46 studies contributing 200 effect sizes for teacher outcomes and 126 effect sizes for student achievement outcomes. These include separate effect sizes for each study-reported assessment, treatment contrast, and teacher sample. Because we examine interventions’ impacts on all teacher outcomes as well as on teacher outcomes disaggregated by type, we also categorized each teacher outcome as either a teacher knowledge or classroom instruction outcome. During initial coding, we first classified teacher knowledge measures as either content knowledge or pedagogical content knowledge (PCK). Following others (Kowalski et al., 2020), we consolidated these measures for the analysis: PCK was measured in too few studies to permit separate analysis, so pooling it with the other knowledge measures was preferable to discarding the data altogether. We further argue that while our approach of categorizing outcomes as knowledge and practice may not be the only possible approach, it is a reasonable one based on the way these variables are reported in empirical studies in our dataset. For all analyses, we first considered all teacher outcomes simultaneously and then considered each group of outcomes separately.

Study Coding

We developed codes for meta-analytic moderator analyses based on a review of the existing literature on teacher PD, including prior research syntheses (e.g., Kennedy, 2016; Kraft et al., 2018; Scher & O’Reilly, 2009). The codes examined in the meta-analysis are described later. To complete study coding, after establishing interrater reliability at the start of the coding process (i.e., 80% agreement), study authors and trained research assistants coded full-text studies in pairs. Each researcher in the pair first coded the study independently, then pairs met to resolve all coding disagreements through discussion (for more details about code development, see Lynch et al., 2019).

We grouped potential moderators into three categories: (1)

Second, we coded each study for evidence that the PD intervention included an explicit

Lastly, we examined how the impacts of PD interventions varied based on the

Effect Size Calculation

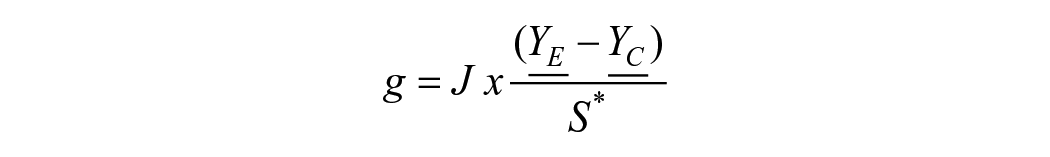

We computed standardized mean difference effect sizes for impacts on teacher outcomes and student achievement outcomes using Hedges’s

Where

As in Lynch et al. (2019), we computed effect sizes based on author-reported effect sizes, raw means and standard deviations, and other author-reported results using the Comprehensive Meta Analysis software package for most instances. Where possible, we calculated effect sizes that were adjusted for covariates (e.g., pretest scores). The following decision rules were applied to compute effect sizes: For cases in which a study presented a standardized mean difference effect size (e.g., Cohen’s

Impacts of PD Interventions on Teacher Outcomes

We estimated meta-regression models to examine the impacts of PD interventions on teacher-level outcomes, both pooled and separately for knowledge and classroom instruction outcomes. Many of the included studies yielded multiple effect sizes for impacts on teacher-level outcomes. We expect dependencies among effect sizes within our data, which violate the independence assumption underlying traditional meta-analytic methods. For example, some studies examined multiple outcome measures for one underlying construct or multiple related constructs for the same sample. We expect the sampling errors of these effect sizes to be correlated within the same study (i.e., correlated effects). In other cases, we expect the sampling errors of multiple effect sizes within the same study to be independent (for example, because the study reports effect sizes for multiple participant samples or based on evaluations of multiple treatments), although the effect sizes remain nested within a common study. These types of dependencies are referred to as hierarchical effects (Tanner-Smith & Tipton, 2014).

As our sample of effect sizes is likely to include both types of dependencies, we use a correlated and hierarchical effects (CHE) model with robust variance estimation (RVE) that combines both dependence structures. The CHE approach allows for both between-study heterogeneity and within-study heterogeneity in true effect sizes and for correlations between effect sizes from the same study (J. E. Pustejovsky & Tipton, 2022). This approach has been adopted in recent meta-analyses (e.g., Atit et al., 2022; Vembye et al., 2024; Waheed, 2023; Wijnia et al., 2024) and allows us to include multiple effect sizes from the same study and avoid the information loss that would arise from excluding effect sizes or calculating mean effect sizes within each study. The use of CHE with RVE guards against model misspecification, given the complexities inherent in datasets with dependencies among effect sizes (Tipton & Pustejovsky, 2015). We used the

We first fit an unconditional CHE model to estimate the overall impact on all teacher outcomes, pooling teacher knowledge and classroom instruction outcomes. We then fit separate unconditional CHE models to estimate overall impacts on teacher knowledge and on classroom instruction outcomes. An advantage of the CHE model is that it estimates the between-studies variance component, which we report as a measure of between-study heterogeneity in effect sizes (Tanner-Smith et al., 2016).
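To make the correlated-effects part of this working model concrete, the following minimal sketch builds the sampling covariance block for the effect sizes of a single study. It assumes one constant correlation `rho` between the sampling errors of all effect sizes in that study; the function name, the example standard errors, and the value 0.8 are illustrative assumptions rather than values reported for this analysis.

```python
def sampling_cov_block(std_errors, rho):
    """Working sampling covariance matrix for the effect sizes of ONE study.

    Assumes a single constant correlation `rho` between the sampling errors
    of every pair of effect sizes in the study (an illustrative assumption).
    """
    k = len(std_errors)
    return [
        [
            # Diagonal: sampling variance; off-diagonal: rho * se_i * se_j.
            std_errors[i] ** 2 if i == j else rho * std_errors[i] * std_errors[j]
            for j in range(k)
        ]
        for i in range(k)
    ]


# Hypothetical study reporting three teacher-outcome effect sizes.
example_block = sampling_cov_block([0.20, 0.25, 0.30], rho=0.8)
```

In a full CHE model, blocks like this one would be stacked across studies and combined with between-study and within-study variance components, with robust variance estimation applied on top; that estimation step is not shown here.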

To examine moderators of program impact, we first examined whether

We then fit conditional CHE models to examine whether

Finally, we fit conditional CHE models to examine whether impacts differed based on

Conditional models also featured additional study characteristics as covariates: whether effect sizes were adjusted for covariates and whether interventions focused on mathematics or science. There was within-study variability in some moderators and covariates (e.g., contact hours varied across multiple treatment-control contrasts); in these cases, we followed the recommended approach of including the study-level mean (Tanner-Smith & Tipton, 2014).
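The study-level averaging of moderators that vary within a study can be sketched as follows. The record layout (`study`, `contact_hours`) and the example values are hypothetical and serve only to illustrate the step.

```python
from collections import defaultdict


def study_level_means(rows, key):
    """Average a moderator across all rows that belong to the same study."""
    totals = defaultdict(float)
    counts = defaultdict(int)
    for row in rows:
        totals[row["study"]] += row[key]
        counts[row["study"]] += 1
    return {study: totals[study] / counts[study] for study in totals}


# Hypothetical data: study A has two treatment-control contrasts with
# different PD contact hours; study B has a single contrast.
contrasts = [
    {"study": "A", "contact_hours": 40.0},
    {"study": "A", "contact_hours": 80.0},
    {"study": "B", "contact_hours": 30.0},
]
mean_hours = study_level_means(contrasts, "contact_hours")
```

Each effect size from study A would then carry the study mean (60 hours in this made-up example) as its moderator value.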

Linking Impacts on Teacher and Student Outcomes

To examine the associations between intervention impacts on teacher-level outcomes and intervention impacts on student-level outcomes, we adapted the approaches used in recent meta-analyses (e.g., Egert et al., 2018; Kraft et al., 2018). First, we calculated mean effect sizes for impacts on teacher outcomes and student achievement for each treatment-control contrast in our sample. To examine whether impacts on teacher knowledge and classroom instruction are separately associated with impacts on student achievement, we calculated three mean effect sizes for impacts on teacher outcomes: (1) mean effect sizes for impacts on all teacher outcomes; (2) mean effect sizes for teacher knowledge; and (3) mean effect sizes for classroom instruction.
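The aggregation step described above can be sketched as follows. This is a simplified illustration that uses unweighted means; the field names (`contrast`, `type`, `es`) and the example values are assumptions, not the coding scheme of the analysis.

```python
from statistics import mean


def contrast_means(effects):
    """Mean teacher-outcome effect sizes per treatment-control contrast.

    Returns, for each contrast, the mean over all teacher outcomes, over
    knowledge outcomes only, and over classroom instruction outcomes only
    (None when a contrast has no effect size of that type).
    """
    grouped = {}
    for effect in effects:
        grouped.setdefault(effect["contrast"], []).append(effect)

    result = {}
    for contrast, rows in grouped.items():
        knowledge = [r["es"] for r in rows if r["type"] == "knowledge"]
        instruction = [r["es"] for r in rows if r["type"] == "instruction"]
        result[contrast] = {
            "all": mean([r["es"] for r in rows]),
            "knowledge": mean(knowledge) if knowledge else None,
            "instruction": mean(instruction) if instruction else None,
        }
    return result


# Hypothetical effect sizes for two contrasts.
example_effects = [
    {"contrast": "A1", "type": "knowledge", "es": 0.4},
    {"contrast": "A1", "type": "knowledge", "es": 0.6},
    {"contrast": "A1", "type": "instruction", "es": 0.2},
    {"contrast": "B1", "type": "instruction", "es": 0.3},
]
example_means = contrast_means(example_effects)
```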

We examined the links between intervention impacts on teacher outcomes and intervention impacts on student outcomes rather than the correlations between teacher and student outcomes for two reasons. First, correlations between teacher scores on knowledge and/or classroom instruction measures and student achievement outcomes were not reported by most studies, and estimates derived from prior literature may not be applicable to the current context of contemporary RCTs of PD interventions in STEM. Second, by looking at the correlation between intervention-induced changes in teacher and student outcomes (i.e., correlations between the intervention impact estimates for teacher and student outcomes, respectively), we get as close as possible, given the limitations of the data, to understanding how exogenous changes in teacher knowledge and classroom instruction affect student achievement outcomes. Implicit in our approach is the assumption that intervention impacts on student outcomes occur only through changes in teacher-level outcomes, which may not hold if interventions have effects on other, unobserved factors that shape student achievement. Nevertheless, this approach is preferable to a meta-analysis of correlations between teacher and student outcomes, as these correlations would likely be confounded by a number of teacher, student, and contextual factors.

Some studies (

After calculating treatment-level mean effect sizes for teacher and student outcomes, we then estimated a series of regression models predicting mean impacts on student outcomes as a function of mean impacts on teacher outcomes. We estimated three separate models, including each of the treatment-level mean effect sizes for impacts on teacher outcomes described previously as predictors. These models were weighted by the average of the inverse effect size variances for teacher outcomes and featured study characteristics as covariates, including whether effect sizes were adjusted for covariates and whether interventions focused on mathematics or science. 4
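A stripped-down version of this weighted regression, with a single predictor and without the study-level covariates, might look like the sketch below. The function names and example values are hypothetical; the weight follows the description above (the average of the inverse effect size variances for teacher outcomes within a contrast).

```python
def contrast_weight(teacher_variances):
    """Average of the inverse effect size variances for one contrast."""
    return sum(1.0 / v for v in teacher_variances) / len(teacher_variances)


def weighted_slope_intercept(x, y, w):
    """Weighted least squares fit of y = intercept + slope * x."""
    total = sum(w)
    x_bar = sum(wi * xi for wi, xi in zip(w, x)) / total
    y_bar = sum(wi * yi for wi, yi in zip(w, y)) / total
    sxy = sum(wi * (xi - x_bar) * (yi - y_bar) for wi, xi, yi in zip(w, x, y))
    sxx = sum(wi * (xi - x_bar) ** 2 for wi, xi in zip(w, x))
    slope = sxy / sxx
    return slope, y_bar - slope * x_bar


# Hypothetical contrast-level mean impacts on teacher outcomes (x) and on
# student achievement (y), with illustrative weights.
teacher_impacts = [0.2, 0.5, 0.8]
student_impacts = [0.1 + 0.3 * value for value in teacher_impacts]
weights = [10.0, 20.0, 5.0]
slope, intercept = weighted_slope_intercept(teacher_impacts, student_impacts, weights)
```

The slope is read as the expected difference in the mean student achievement effect size per 1 SD difference in the mean teacher-outcome effect size. Standard errors and covariate adjustment are omitted from this sketch.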

As in other studies that have examined the link between intervention impacts on teacher-level and student-level outcomes (e.g., Egert et al., 2018; Kraft et al., 2018), the data we compiled from primary studies allow us to examine whether interventions that supported teacher knowledge and classroom instruction also tended to have larger impacts on student achievement. However, this analysis does not allow us to determine whether this association is causal in nature (that is, whether improvements in teacher knowledge and instruction caused an improvement in student achievement) or occurred because such interventions impacted other, unobserved mediators that supported student achievement and are correlated with knowledge or practice.

Results

Study Characteristics

In Table 1, we present descriptive information about the PD interventions and studies included in the meta-analytic sample. The sample included 46 studies with 57 treatment-control contrasts, yielding a total of 200 teacher outcomes effect sizes. Among these studies, 25 (54 percent) featured both PD and new curriculum materials; 21 studies (46 percent) featured PD only. A majority focused on mathematics (28 studies; 61 percent), and roughly one-third focused on science (16 studies; 35 percent); two studies focused on both mathematics and science (4 percent). Studies included a mix of grade levels, ranging from preschool through high school. Interventions included an average of 57 PD contact hours; in a majority of studies, intervention activities took place over two or more semesters (32 studies; 74 percent). All studies were randomized controlled trials.

Sample Sizes and Study Characteristics

Studies with at least one treatment arm that provided new curriculum materials and professional development were included in “Professional development + new curriculum materials.”

Studies may have included multiple grade levels.

If professional development hours varied across treatment arms, we calculated the study average.

Out of 43 studies with nonmissing information on this variable. If professional development timespan varied across treatment arms, we used the maximum.

Out of 29 studies with nonmissing information on this variable.

Out of 37 studies with nonmissing information on this variable. Studies conducted in Head Start programs were included in the “Majority of students are low income or free or reduced-price lunch-eligible” category.

Out of 24 studies with nonmissing information on this variable.

Includes the average percent of students who are not white. Out of 32 studies with nonmissing information on this variable.

Table 1 also shows the foci of included interventions. A majority of studies included professional development that focused on at least one aspect of teacher knowledge, including improving teacher content knowledge or pedagogical content knowledge or knowledge of how students learn (32 studies; 70 percent). The majority of studies also focused on at least one aspect of classroom instruction. Nearly all focused on content-specific instructional strategies (45 studies; 98 percent); therefore, we were unable to examine this feature as a moderator. A smaller proportion of studies included a focus on content-specific formative assessment (8 studies; 17 percent) and on generic instructional strategies (7 studies; 15 percent).

Table 1 also describes the contexts in which studies were conducted. On average, 62 percent of students were identified as free- or reduced-price-lunch eligible or low-income, 16 percent were emergent bilingual students/students learning English as a new language, and 60 percent were students of color, among studies that reported this information. We did not screen studies based on the country in which they were conducted. However, 45 of the 46 studies that met the review’s inclusion criteria were conducted in the United States; one study was conducted in the Philippines (San Antonio et al., 2011).

The studies in our sample reported on a mix of teacher knowledge and classroom instruction outcomes. As shown in Table 1, 55 effect sizes (28 percent) captured impacts on some aspect of teacher knowledge in science or mathematics. The other 145 effect sizes (73 percent) captured impacts on classroom instruction. These included impacts on both observational and self-report measures of classroom instruction. Most effect sizes were based on intervenor-developed measures (150 effect sizes; 75 percent), although some were based on standardized (41 effect sizes; 21 percent) or other (9 effect sizes; 5 percent) measures.

Overall Average Impacts on Teacher Outcomes

Table 2 presents the results of estimating unconditional CHE models examining study impacts on teacher outcomes. Pooling across studies, the average weighted impact on all teacher outcomes was 0.52

Results of Estimating CHE Models Examining the Impacts of PD Interventions on Pooled Teacher Outcomes, Teacher Knowledge, and Classroom Instruction

The prediction interval was calculated as:

When we considered teacher knowledge and classroom instruction separately, we found studies yielded positive, similarly sized mean impacts on each group of outcomes. We found an average weighted impact on teacher knowledge of 0.52

Our primary analysis also included evaluations of both mathematics and science interventions. As an exploratory analysis, we also examined overall impacts on teacher outcomes for mathematics and science studies separately and found impacts were generally similar for studies in both groups (Table S1; online only). Among studies focused on interventions in mathematics, we found a mean pooled effect size of 0.51

Intervention Characteristics and Contextual Factors That Moderate Program Impacts on Teacher Outcomes

Next, we turn to programmatic and contextual factors that moderated impacts on teacher outcomes. We first examined

Results of Estimating CHE Models Examining the Impacts of PD Interventions on Pooled Teacher Outcomes, Teacher Knowledge, and Classroom Instruction, Including Intervention Type and Dosage as Moderators

PD contact hours measured as PD contact hours/10.

Second, we examined the association between

Results of Estimating CHE Models Examining the Impacts of PD Interventions on Pooled Teacher Outcomes, Teacher Knowledge, and Classroom Instruction, Including Professional Development Foci as Moderators

Study covariates include whether intervention focused on math or math/science (vs. science only) and whether effect sizes were adjusted for covariates. Study-level mean values of all covariates were included in the model.

Finally, we examined whether study contexts moderated intervention impacts on teacher outcomes. We did not observe significant relationships between intervention impacts and any of the measured contextual features, including teacher sample size, demographic and socioeconomic characteristics of the student sample, whether studies were implemented in urban as compared to rural or suburban districts, or grade level (see Tables S2–S4; online only).

Linking Impacts on Teacher and Student Outcomes

Table 5 presents the results of estimating weighted regressions that tested the associations between intervention impacts on overall teacher-level outcomes, on teacher knowledge and classroom instruction outcomes, and improvements in student achievement. When we pooled all teacher-level outcomes (model 1), we observed a positive, statistically significant association between treatment-level mean impacts on teacher outcomes and treatment-level mean impacts on student achievement outcomes. Specifically, we found that a 1

Results of Estimating Weighted Regression Models Examining the Associations Between Treatment Impacts on Teacher Outcomes and Student Outcomes

We next fit separate models to consider relationships between interventions’ impacts on teacher knowledge (model 2) and classroom instruction (model 3), respectively, and impacts on student achievement. As shown in model 3, a 1

Publication Bias

In all systematic reviews, there exists the possibility of publication bias among available studies. We took three approaches to examine this issue in the present sample of studies. Following conventional practices in meta-analysis, we first inspected funnel plots (showing effect sizes against their standard errors) to explore whether there is visual evidence of publication bias. The rationale for this approach is that smaller studies (with larger standard errors) have less precision, hence are less likely to yield statistically significant results, and therefore are more likely to be affected by publication bias. Asymmetrical patterns in the funnel plots indicate potential publication bias. We examined three funnel plots, including plots for all teacher outcomes, teacher knowledge only, and classroom instruction only. We observed asymmetry in all three funnel plots, providing visual evidence of possible publication bias (see Figures S1 to S3; online only). This pattern appears somewhat more pronounced for teacher knowledge relative to classroom instruction.

Then, we conducted statistical tests for publication bias using Egger’s regression test, which is widely used in meta-analysis to test statistically for asymmetry in the funnel plot. In its traditional form, the test regresses the standard normal deviate (the effect size divided by its standard error) on precision (the inverse of the standard error) and tests the null hypothesis of a zero intercept (i.e., no funnel plot asymmetry). A rejection of the null hypothesis indicates evidence of publication bias (e.g., Egger et al., 1997). Given the structure of our data, which includes multiple effect sizes per study, we performed two versions of Egger’s test. First, we aggregated effect sizes and effect size standard errors to the study level by calculating the average effect size and average effect size standard error across all effect sizes in each study. We then regressed the standard normal deviate on the inverse of the effect size standard error. Second, we used a modified approach that regresses the effect sizes on their standard errors, weighted by precision (the inverse of the effect size variance), which yields results equivalent to the traditional Egger’s test (Rothstein et al., 2005) but allows us to retain multiple effect sizes per study. Specifically, we added the effect size standard error as a moderator to the unconditional CHE model. If the standard errors of the effect sizes predict the magnitude of the effect sizes, this similarly indicates evidence of publication bias. We conducted both tests for all teacher outcomes, teacher knowledge only, and classroom instruction only. Results are consistent with the presence of publication bias and suggest that this may be more pronounced among studies that examined teacher knowledge (see Tables S5 and S6, online only).
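The study-level version of Egger’s test can be illustrated with a short sketch: divide each effect size by its standard error, regress that ratio on the inverse of the standard error by ordinary least squares, and inspect the intercept. The function name and the two toy datasets are hypothetical, and the significance test on the intercept is omitted for brevity.

```python
def egger_intercept(effect_sizes, std_errors):
    """OLS regression of (effect size / SE) on (1 / SE).

    Returns (intercept, slope). An intercept far from zero signals funnel
    plot asymmetry; the slope estimates the underlying effect size.
    """
    ratio = [es / se for es, se in zip(effect_sizes, std_errors)]
    precision = [1.0 / se for se in std_errors]
    n = len(ratio)
    x_bar = sum(precision) / n
    y_bar = sum(ratio) / n
    sxx = sum((x - x_bar) ** 2 for x in precision)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(precision, ratio))
    slope = sxy / sxx
    return y_bar - slope * x_bar, slope


std_errors = [0.1, 0.2, 0.3, 0.4]

# Toy case 1: the same effect size regardless of precision (no asymmetry).
symmetric = egger_intercept([0.3, 0.3, 0.3, 0.3], std_errors)

# Toy case 2: effect sizes grow in step with their standard errors, the
# pattern expected when small studies with null results go unpublished.
asymmetric = egger_intercept([0.2, 0.4, 0.6, 0.8], std_errors)
```

In the first toy case the intercept is zero up to rounding error; in the second it is clearly nonzero, which is the kind of pattern the test is designed to flag.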

Finally, we compared mean impacts on knowledge and instructional outcomes for peer-reviewed versus non-peer-reviewed studies (see Table S7, online only). For this test, we estimated conditional CHE models that included an indicator for whether the study was peer-reviewed (including IES-approved publications and NCEE reports) as a moderator. We did not observe a statistically significant difference in mean impacts between peer-reviewed and non-peer-reviewed studies. However, the direction of results suggests that peer-reviewed studies had more positive impacts, which is consistent with the results described above and with the presence of publication bias in our sample.

Results of the three approaches described previously are not entirely consistent but suggest that there may be publication bias in our sample. Findings are consistent with a recent meta-analysis examining the impact of professional development on instructional practice, which also found evidence of publication bias (Garrett et al., 2019). This points to the importance of searching the gray literature when capturing research in this domain and, as a field, encouraging the publication of null findings.

Sensitivity Checks

Overall Average Impacts on Teacher Outcomes

We conducted several sensitivity checks to test the robustness of our findings to different sample and model specifications. First, we examined whether study quality issues could bias the results. As we include only RCTs, the main threat is attrition. Teachers frequently exit studies due to moving between schools, grade levels, and other reasons, particularly for multiyear studies that comprise a substantial proportion of our sample. A high degree of attrition and/or differential attrition between treatment and control groups could lead to bias in estimates of intervention impacts, even in the context of an RCT. We coded for attrition in our sample in two ways: overall attrition at the cluster

Additionally, effect sizes for impacts on classroom instruction represented a combination of self-report and observational measures of instructional practice. To determine whether this mix of self-report and observational outcomes could influence our estimate of the overall average impact on classroom instruction, we replicated our unconditional CHE model after restricting the sample first to effect sizes based on self-report measures of instructional practice and then to effect sizes based on observational measures of classroom instruction. Results indicate that the overall average impacts were somewhat larger for observational than for self-reported measures of instructional practice (see Table S9, online only).

Effect sizes also represent a mix of standardized, intervenor-developed, and other types of outcome measures. Therefore, we also replicated our unconditional model after restricting the sample to effect sizes based on standardized or intervenor-developed outcome measures and after restricting the sample to effect sizes based on intervenor-developed outcome measures. We could not examine impacts on standardized or other outcome measures separately due to the small number of effect sizes in each category. Results indicate that excluding these different groups of outcome measures did not substantially affect the magnitude of estimated impacts on classroom instruction or teacher knowledge (see Table S10, online only).

Linking Impacts on Teacher and Student Outcomes

We also examined the sensitivity of our findings regarding the links between impacts on teacher and student outcomes to model specification. We confirmed that we received similar results when we examined unweighted associations between intervention impacts on teacher and student outcomes (see Table S11, online only) and when we examined weighted associations between impacts on teacher and student outcomes that used mean effect sizes aggregated to the study level rather than to the treatment-contrast level (see Table S12, online only).

Discussion

In this study, we meta-analyzed experimental research on the impacts of mathematics and science teacher professional development programs on teachers’ outcomes, including knowledge and classroom instruction; examined the extent to which programmatic and contextual moderators explained variation in programs’ impacts on teacher-level outcomes; and probed the extent to which improvements to teacher outcomes ultimately predicted improvements in students’ mathematics and science achievement. This analysis represents a fuller test of recent policy initiatives’ logic model than has occurred to date.

To summarize the primary findings, in-service mathematics and science teacher PD interventions of the types examined in the experimental literature base had mean positive impacts on teacher outcomes, both overall and disaggregated by measures of knowledge and classroom instruction. Moderator analyses suggested that PD programs with a focus on improving teachers’ knowledge, and those that included a focus on content-specific formative assessment, had stronger mean impacts on pooled teacher-level outcomes and on classroom instruction outcomes relative to programs that lacked these foci. Improvements in teacher-level outcomes, in turn, were associated with improvements in student achievement. We discuss the findings in more detail in the next section.

Overall Impacts on Teacher Outcomes

As estimated from the available random assignment research, mathematics and science PD interventions that measured both teacher and student outcomes had mean positive impacts on teacher outcomes of 0.52

This included positive mean effects on measures of teacher knowledge, a key component of recent mathematics and science education policy initiatives’ logic model (e.g., NRC, 2007, 2013). The average weighted impact on teacher knowledge was 0.52

Interventions also had positive mean effects on measures of classroom instruction, with a pooled mean weighted impact estimate of 0.49

Associations of Program Features and Foci With Teacher Outcomes

Programs have a finite time to spend with teachers, meaning that evidence regarding the specific program features and foci associated with impacts on teacher outcomes can provide insights into potentially valuable facets of program design. We thus sought to quantitatively test potential mechanisms that may explain heterogeneity in programs’ efficacy. Unlike prior reviews, we were able to examine potential moderators of PD programs’ impacts on teacher outcomes, including knowledge and classroom instruction, specifically for in-service mathematics and science teachers. Caution is necessary in interpreting the moderator tests because of the power constraints inherent in the small sample sizes available for moderator analyses. Additionally, like all meta-analytic moderator analyses, the moderator analyses presented in the current study are inherently correlational, not causal, in nature (e.g., Borenstein et al., 2021). As such, it is always possible that other variables may explain observed patterns of findings. Thus, meta-analytic moderator analyses serve to identify potentially noteworthy trends across studies that may warrant follow-up.

With respect to impacts on classroom instruction, moderator analyses suggested that interventions that included a focus on strengthening teacher knowledge tended to have stronger impacts on pooled teacher-level outcomes and specifically on classroom instruction outcomes, on average, as compared to interventions that lacked this focus. These differences were marginally significant (

The moderator analyses also indicated that interventions’ impacts on teacher-level outcomes, and specifically on classroom instruction outcomes, tended to be larger among programs that included a focus on content-specific formative assessment, as compared with programs that lacked this feature. While not causal, it is plausible that the inclusion of an explicit focus on instructional adjustments based on evidence of students’ learning needs, which is characteristic of formative assessment, could have benefited teachers’ instructional decision-making and demonstrated classroom practices (Palm et al., 2017). Again, given that the number of interventions that included this focus was small, this finding is suggestive rather than definitive and warrants future follow-up.

Turning to teacher knowledge, we found that effect size magnitudes were not significantly predicted by the key intervention features that we analyzed, including intervention type, duration of the PD, or PD programmatic focus. Rather, it appears to be the case that a variety of kinds of PD programs can effectively increase facets of teachers’ knowledge, including those that operate through less direct channels than an explicit knowledge development focus. Once more primary experimental studies on this topic are conducted, attempting to parse at a finer grain size how a PD focus on deepening specific facets of teachers’ knowledge may foster changes in classrooms and student learning would be a useful next step for future syntheses.

Meanwhile, the variables that we examined relating to intervention type and dosage were not significantly related to effect size magnitudes for any of the teacher-level outcomes we examined. The absence of a statistically significant association between PD duration and teacher outcomes, which persisted after we probed the data for potential nonlinear relationships, echoes the findings of Kennedy (2016) and Lynch et al. (2019), who did not find a clear link between longer-duration PD experiences and student learning, as well as Garrett et al. (2019), who did not find a significant relationship between classroom observation indicators and the duration of teacher PD in studies pooled across different content areas. Consistent with conclusions drawn in Kennedy (2016) and Lynch et al. (2019), PD content and quality appeared to matter more than contact hours between teachers and PD leaders. It is also possible that, as Garrett et al. (2019) suggested, shorter-duration PD programs tend to focus on more discrete teacher skills, which are easier to improve than more complex pedagogies but can nonetheless be valuable for incrementally strengthening teachers’ instructional expertise (e.g., Cai et al., 2017). We also did not observe a significant relationship between PD programs’ inclusion of a focus on new curriculum materials and teacher outcomes. This is perhaps surprising, given that curriculum materials are generally hypothesized to be educative for teachers (e.g., Davis et al., 2017), and Lynch et al. (2019) found a significant relationship between teacher PD’s inclusion of a focus on curriculum materials and student achievement outcomes. The lack of significant associations could be due to power constraints in the moderator analysis. Speculatively, it is also conceivable that students may be learning more in interventions that include new curriculum materials directly as a result of their own interactions with the new curricular content in ways not captured by teacher outcome measures, or that curriculum-focused interventions are bolstering facets of teachers’ instruction not typically captured in knowledge and practice assessments, like amount of classroom time dedicated to the content.

We note that although the current review quantitatively modeled a set of meta-analytic moderators derived from the literature, we could not test everything. Like most meta-analyses, we originally coded for and hoped to analyze additional moderator variables beyond those we were able to include in the analysis (e.g., whether the PD focused on integrating technology into the classroom; contextual factors such as the share of students receiving special educational services); however, not all variables could be analyzed because the included studies contained too little variation on these variables (i.e., coded feature present in fewer than 10% or more than 90% of studies or codes collinear with other codes). The research base also points toward the value of a range of further intervention features, such as an explicit PD focus on building on students’ home and community funds of knowledge (e.g., McWayne et al., 2020). Meta-analytic testing of these additional features would be a generative step for future research once a larger pool of primary studies has been conducted.
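The variation screen described above can be illustrated with a minimal sketch. It assumes each moderator is coded as a binary indicator (1 = feature present, 0 = absent) for each study; the function names, the moderator names, and the 20-study data set are hypothetical and are not drawn from our coding files. The sketch covers only the prevalence thresholds (fewer than 10% or more than 90% of studies) and does not address the separate check for codes collinear with other codes.

```python
from typing import Dict, List


def has_enough_variation(codes: List[int], low: float = 0.10, high: float = 0.90) -> bool:
    """Return True when the share of studies coded 1 lies between low and high.

    A moderator is screened out when the feature is present in fewer than
    `low` or more than `high` of the studies (hypothetical helper).
    """
    if not codes:
        return False
    share_present = sum(codes) / len(codes)
    return low <= share_present <= high


def screen_moderators(coded: Dict[str, List[int]]) -> List[str]:
    """Keep only the moderator names whose codes pass the variation screen."""
    return [name for name, codes in coded.items() if has_enough_variation(codes)]


# Hypothetical codes for 20 studies, for illustration only.
example_codes = {
    "technology_focus": [1] + [0] * 19,          # present in 5% of studies
    "curriculum_materials": [1] * 8 + [0] * 12,  # present in 40% of studies
    "content_focus": [1] * 19 + [0],             # present in 95% of studies
}

retained = screen_moderators(example_codes)
print(retained)
```

In this hypothetical example, only the moderator present in 40% of studies would be retained for analysis; the other two fall outside the 10% to 90% window.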

We also found that program impacts did not differ significantly based on the contextual moderators we examined, including intervention scale, setting type, and characteristics of the student sample. Most of the PD studies identified were conducted in relatively high-poverty settings, as evidenced by the fact that roughly three-quarters of the studies with nonmissing data on this variable were conducted in settings in which a majority of students were low income or eligible for a free or reduced-price school lunch. The observation that PD interventions did not appear to be less effective in high-poverty settings is encouraging, given that high-poverty schools facing resource constraints are often those that PD interventions are particularly designed to support (e.g., Ladson-Billings, 2005). We note that although the studies in our sample included students in grade levels ranging from prekindergarten to secondary school, we did not find evidence that intervention impacts on teacher outcomes differed by grade level. This is consistent with findings from prior meta-analyses examining the impacts of mathematics and science instructional interventions on student achievement, which generally yield similar patterns of positive impacts for students in elementary and secondary grades (e.g., Lynch et al., 2019; Slavin et al., 2009).

Links to Student Achievement

Bearing in mind that our meta-analytic data and design do not support causal inferences about the impacts of teachers’ outcomes on student achievement, the data nonetheless allowed us to estimate the magnitude of relationships between interventions’ impacts on proximal teacher outcomes and distal student learning. Pooling across outcome types, we found that PD interventions that had stronger impacts on teacher-level outcomes tended to have stronger impacts on student learning outcomes. Disaggregating by teacher outcome category, we found supportive evidence consistent with the notion that PD interventions that had stronger mean causal impacts on instruction tended to have significantly stronger impacts on students’ mathematics and science achievement. On average, a 1

The particular pattern of findings we observed regarding the relationships between student impacts, impacts on instruction, and impacts on teacher knowledge may have occurred for one of several reasons. While both teacher knowledge improvements and classroom instruction improvements were associated with improvements in student achievement in the disaggregated models, the association was larger in magnitude for classroom instruction improvements. We note that, perhaps interestingly, some of the included primary studies aimed at improving content knowledge posited that strengthening knowledge alone was not sufficient to improve student outcomes (e.g., Garet et al., 2010). For example, Garet et al. (2010, 2016) conducted two large-scale federally funded RCTs examining the impacts of PD interventions whose major aim was to improve teachers’ mathematics content knowledge. Despite improving teacher content knowledge and some aspects of instructional quality, these studies returned null or negative results on student achievement (see also, e.g., Dash et al., 2012). However, the current analysis is not causal. The observed differences between how knowledge impacts and classroom instruction impacts relate to student achievement impacts could reflect other features of the programs that measured knowledge impacts, features that made those programs weaker and hence less effective.

As a practical matter, we note that many contemporary mathematics and science PD programs reject an “either/or” approach to improving knowledge and classroom instruction and instead emphasize both of these levers, providing teachers opportunities to deepen their learning of the content to be taught while also attending to pedagogical practices that teachers will use to portray the content. For instance, when teachers learn to use new curriculum materials, they may also be learning the specific subject-matter knowledge embedded in those materials (Remillard & Kim, 2017). However, how, to what degree, and under what conditions teacher PD programs should focus teachers’ attention on subject matter knowledge compared with centering instructional practices remains an important unsettled question in mathematics and science education research, which warrants further follow-up. Nevertheless, the observed pattern of findings provides support for the notion that among the kinds of PD interventions that have been rigorously evaluated using RCTs, there are positive links between improvements in teacher outcomes and improvements in student outcomes, as hypothesized in the PD logic model.

Strengths, Limitations, and Future Research Directions

Our findings move the field forward by providing empirical information about whether facets of the logic model implied in recent policy investments into PD interventions in mathematics and science are supported by the research available in the experimental evidence base. First, we show that PD interventions to improve mathematics and science instruction of the types evaluated in the contemporary experimental literature have, on average, been successful in improving teacher outcomes. Second, in contrast to earlier reviews that examined only classroom instruction (e.g., Egert et al., 2018; Kraft et al., 2018), we document that PD interventions of the types examined in RCTs also tend to improve the knowledge of in-service mathematics and science teachers. Third, in contrast to prior reviews that considered impacts on student outcomes (e.g., Lynch et al., 2019) or teacher outcomes (e.g., Garrett et al., 2019) alone, we provide empirical evidence that connects the dots between how instructional interventions influence teachers and how they influence students.

The current findings and evidence gaps identified in the literature point toward useful pathways for future research. First, as is usually true in meta-analyses, which rely on study report information, we faced the challenge of missing data. Empirical studies often reported impacts on teachers’ knowledge or classroom instruction, but not both. We urge future research studies to report both types of outcomes to enable future synthesists to empirically test hypothesized logic models of instructional interventions with larger data pools. Furthermore, access to the underlying individual-level data from the primary studies that permits testing of more of the additional paths represented in the Figure 1 logic model would be useful to the field, and we urge future primary study authors to make these data available. Additionally, information was often missing from primary studies on teachers’ personal beliefs, attitudes, and values, precluding us from analyzing how these factors related to interventions’ impacts despite their theoretical importance for shaping teachers’ implementation of innovations (Pajares, 1992). We urge primary study authors to collect and report information on these factors as they are an important topic for future research syntheses.

Inconsistent reporting on study methodological details also suggested areas for future improvement. We urge future primary study authors to consult the most recent version of the WWC handbook and report information on baseline equivalence of groups as needed, following its guidance. We further urge more detailed reporting on study attrition levels, in line with the reporting recommendations described in the WWC handbook, as this would permit future analysts to conduct finer-grained analyses of differences in study findings by attrition information. Another form of missing data pertained to the kinds of interventions that were studied. The kinds of PD interventions examined in randomized experimental studies are likely to be more time-intensive and resource-intensive than the practices that typically occur in schools. As such, the current observed positive findings do not imply that schools’ or districts’ typical PD investments are paying off; rather, they indicate that PD interventions of the types examined in randomized studies and that met our relevance criteria were effective at bolstering teacher-level outcomes, on average. We concur with arguments for more rigorous studies of interventions that are similar to common practices in school districts, as such studies would shed more light on the outcomes of investments of the kind districts typically make into teacher PD (e.g., Hill, 2004). Additionally, nearly all studies were conducted in the United States. Given the degree of variability in instructional systems and settings across country contexts, further experimental research on the efficacy of instructional interventions in non-U.S. contexts would deepen the field’s understanding of how the efficacy of interventions may vary across settings.

Finally, other constructs of theoretical interest, such as the level of school leadership support allocated to the intervention, the degree of alignment between each intervention and the existing curriculum in study schools, and the financial and material supports expended (e.g., Penuel et al., 2010; Scher & O’Reilly, 2009; Wilson, 2013), could not be modeled due to minimal information reported in the primary studies. Reporting consistent data on students’ baseline knowledge and attitudes toward mathematics and science could also aid future research in understanding potential variations in interventions’ impacts—for example, by identifying programmatic features that may be especially beneficial in classrooms serving students who began the intervention with less exposure to or knowledge of the content area (e.g., McCabe et al., 2020; Warne et al., 2019). Building a set of standardized reporting recommendations for primary experimental studies on professional development interventions that cover these substantive issues would be a productive step for the field in order to enrich the ability of future syntheses to analyze how contextual factors relate to the efficacy of educational interventions (e.g., Lynch, 2024; for a recent effort in this vein, see Hill et al., 2025).

In sum, our findings indicate that mathematics and science teacher PD interventions of the types evaluated in the experimental literature show promise for strengthening outcomes for teachers, and these improvements are in turn linked to stronger student achievement. Given the projected expansions in the PD market in the United States over the next five years (Technavio, 2022), incrementally advancing the field’s understanding of how and under what conditions PD interventions in mathematics and science yield stronger teacher and student impacts can help districts and schools improve their odds of seeing meaningful changes in students’ ultimate learning and attainment.

Supplemental Material

Supplemental material, sj-docx-1-ero-10.1177_23328584251335302 for A Meta-Analysis of the Experimental Evidence Linking Mathematics and Science Professional Development Interventions to Teacher Knowledge, Classroom Instruction, and Student Achievement by Kathleen Lynch, Kathryn Gonzalez, Heather Hill and Ramsey Merritt in AERA Open

Footnotes

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This material is based upon work supported by the National Science Foundation under Grant #1348669. Any opinions, findings, and conclusions or recommendations expressed in this material are those of the author(s) and do not necessarily reflect the views of the National Science Foundation.

Note: This article was accepted under the editorship of Kara Finnigan.

Notes

Authors

KATHLEEN LYNCH is assistant professor at the Neag School of Education, University of Connecticut. Her research focuses on how education policies can strengthen opportunities and achievement for children, particularly in STEM.

KATHRYN GONZALEZ is a researcher at Mathematica. Her research uses quantitative methods to understand the effectiveness of early childhood programs and policies, including their impacts on children, families, and the early childhood workforce.

HEATHER HILL is the Hazen-Nicoli Professor of Teaching and Teacher Leadership at the Harvard Graduate School of Education. Her research focuses on teacher learning and professional development, the quality of mathematics instruction, the effectiveness of different approaches to teacher education, and machine learning tools that automate the measurement of instruction and feedback to teachers.

RAMSEY MERRITT is a doctoral student at the Harvard Graduate School of Education. His work is focused on improving instructional leadership, as well as bridging the gap between scholars and educators.

References
