Abstract

Early literacy assessment has become commonplace in the preschool years, and phonological awareness is one component of emergent literacy targeted by such practices. This within-subjects experimental study examines the role of word familiarity in 93 dual language preschoolers’ performance on phoneme-level awareness tasks with three-phoneme words. A researcher-designed digital tool created individualized test items (foils and target responses) for each child. Half of the items presented familiar target words, and half presented unfamiliar target words. Results suggest that unknown/unfamiliar target words yield lower phonological awareness scores.

Introduction

Learning to read is one of the essential goals of early education across the world. In order to read, children need emerging and developing skills in several essential areas in the language of instruction: vocabulary, print awareness, alphabet/letter knowledge, and phonological awareness (PA). Assessment is commonly used in early learning contexts to establish baseline and incremental estimates of children’s skills in each of these areas. Teachers then use these results to plan instruction that grows children’s literacy skills across the academic year.

This study emerged as part of a series of investigations (Cassano & Steiner, 2016; Sckaal et al., 2023) examining early literacy teaching and assessment practices in Head Start contexts, where many dual language learning preschoolers were enrolled. Work in these contexts revealed an anecdotal trend: Many dual language learners (DLLs) were less often able than their monolingual peers to identify (i.e., name) the picture cards used for PA assessment and instruction in the language of instruction. On the surface, this trend was not surprising (DLLs are, after all, learning vocabulary in two languages). Yet teachers drew conclusions about many DLLs’ PA skills, and planned interventions accordingly, based on an assessment that used picture cards depicting words unfamiliar to many children. We wondered: To what extent was a child’s word familiarity entangled with their PA performance? Might an assessment that controls for word familiarity yield results different from one that does not? The aim of this study was to explore these questions.

Dual Language Learners: Early Learning Contexts in the United States

National estimates indicate there is a growing population of preschool-aged DLLs, that is, young children who have a home language other than English and are learning two or more languages simultaneously or learning a second language (L2) while continuing to develop their first (L1) (McFarland et al., 2018). As a result, increasing numbers of children are beginning preschool and kindergarten with comparatively less English language exposure than their monolingual peers. DLLs, particularly those who are first exposed to English upon preschool entry, are faced with the complex task of acquiring essential literacy and language skills while simultaneously learning the language of instruction.

Early childhood education programs must be prepared to provide DLLs with the individualized supports necessary to develop the strong language and literacy foundations essential for academic achievement (Espinosa, 2015). This study examines one possibility for individualizing assessment in the foundational area of PA.

Phonological Awareness and Vocabulary in Early Literacy Acquisition

Research has identified both PA and vocabulary as essential precursors to reading in the early and later phases of reading development (National Institute of Child Health and Human Development [NICHD] Early Child Care Research Network [ECCRN], 2005; Song et al., 2015; Storch & Whitehurst, 2002). Children with low levels of PA and vocabulary are likely to experience reading difficulties that persist throughout their school years (Juel, 1988; Paratore et al., 2011; Spira et al., 2005); thus, early detection of PA and vocabulary difficulties is essential for long-term reading development.

Vocabulary is defined as the words children understand when listening or use when speaking (Mancilla-Martinez & McClain, 2018). Vocabulary is closely related to reading vocabulary (i.e., the words children recognize and use when reading or writing) and to reading comprehension (Ouellette, 2006). The frequency and complexity of children’s exposure to language(s) (e.g., Hoff, 2006), as well as extended discourse opportunities (Dickinson & Tabors, 2001), are associated with language acquisition.

PA is defined as the conscious ability to detect sounds (e.g., syllables, onset-rimes, and phonemes) in spoken words. Although the developmental origins of PA are underspecified, a well-documented sequence of PA acquisition accounts for both the size of the linguistic unit (e.g., syllable, onset-rime, phoneme) (Liberman et al., 1974) and the complexity of the operations required by PA tasks, such as synthesis (e.g., blending) or analysis (e.g., segmenting, deleting, or manipulating) of linguistic units in a spoken word (Stahl & Murray, 1994; Yopp, 1988). PA and vocabulary are also positively related in early childhood (Dickinson et al., 2010). Moreover, vocabulary knowledge may contribute to individual differences in PA (Lonigan, 2007; Metsala, 1999) and in performance on PA assessments (Barton, 1976; Metsala, 1999).

Phonological Awareness and Word Reading Accuracy

Defined broadly, PA refers to the ability to perceive and manipulate the sounds of spoken words. Evidence has unequivocally established a strong, positive relationship between PA measured in preschool and kindergarten and word reading accuracy (i.e., decoding) in first grade, when decoding is the primary reading challenge (Juel, 1988; NICHD ECCRN, 2005; Storch & Whitehurst, 2002). Although PA does not involve print directly, it is linked with decoding because, in alphabetic writing systems, graphemes (i.e., alphabet letters) represent phonemes (i.e., sounds) in words. Thus, PA, in conjunction with alphabet knowledge, enables children to understand that graphemes map onto (i.e., are translated into) phonemes, and that the phonemes obtained when phonologically recoding print must be blended to form spoken words in the child’s vocabulary (Wharton-McDonald, 2018).

Extant research and research syntheses converge on five major findings (August et al., 2014; National Early Literacy Panel [U.S.] & National Center for Family Literacy [U.S.], 2008; NICHD et al., 2000; NICHD ECCRN, 2005; Snow et al., 1998). First, phoneme-level instruction increases phoneme-level skill (e.g., National Early Literacy Panel [U.S.] & National Center for Family Literacy [U.S.], 2008); second, when phoneme-level instruction is provided, current and subsequent decoding and encoding skills are positively affected (e.g., Ehri et al., 2001; Lundberg et al., 1988); third, PA is reciprocally related to reading acquisition (e.g., Hogan, 2005); fourth, PA is essential but not sufficient for word reading acquisition (e.g., Castles & Coltheart, 2004); and, lastly, children learning English also benefit from direct instruction in PA (e.g., August & Shanahan, 2006; Dickinson et al., 2004).

Given the predictive power of PA (Hulme et al., 2012), early identification of difficulties in PA is essential to ensure that preschoolers who may be at risk for reading difficulties receive appropriate instruction or intervention. Children who lack PA skill in the early grades have a high probability of experiencing reading difficulties throughout their elementary years (Juel, 1988). Spira et al. (2005) found that first graders who had difficulty learning to read had a 75% chance of continuing to struggle throughout their elementary years. The improvers, that is, those who recovered from reading failure (i.e., below the 25th percentile in reading), had higher levels of PA in preschool than the nonimprovers.

Assessing Phonological Awareness

A growing number of studies have demonstrated the significance of PA as a foundation for reading. The What Works Clearinghouse (Institute for Education Sciences, n.d.) and the National Center on Intensive Intervention (n.d.) both include promising evidence that attending to PA instruction and PA skill monitoring in preschool is positively related to reading achievement. The use of PA assessments is practically ubiquitous in early childhood settings, and this ubiquity has led to an increasing number of PA instruments on the market, many of which use digital technologies to streamline data processing and tracking. Table 1 contains a brief overview of six PA assessment tools available for use with 3- to 5-year-olds (Invernizzi et al., 2004, 2007; Lonigan et al., 2007; Robinson & Salter, 2007; Torgesen & Bryant, 2004; Yopp, 1995). All are administered to children one-on-one. Most use picture cards or arrays of images as stimuli for evaluating children’s awareness of a range of linguistic units (i.e., word, syllable, onset-rime, phoneme) across various task operations (i.e., isolating, segmenting, blending, deleting, manipulating). Psychometric properties reported for many of these tools, such as predictive validity, concurrent validity, and internal consistency (reliability), provide support for their use in early literacy.

Table 1. Overview of Selected Assessments of Phonological Awareness for Early Childhood

Cronbach’s alpha exceeds .80.

Cronbach’s alpha exceeds .90.

PA assessments such as those in Table 1 can be used to plan classroom instruction and to help identify children who may be at risk for reading difficulties; however, several issues warrant consideration. First, PA is often assessed comprehensively, that is, with sets of tasks spanning the full developmental continuum of PA (syllable, onset-rime, and phoneme; e.g., PAT-2, TOPA+2), rather than with a focus on the operations and linguistic units that research has identified as essential for reading, namely segmenting and blending phonemes (International Literacy Association, 2019). Second, assessment tasks vary considerably in their supports and demands. Specifically, Cassano and colleagues (Cassano & Schickedanz, 2015; Cassano & Steiner, 2016) examined several commonly used measures of PA and found that, although the tasks share some similarities, they differ in many ways, including the linguistic units of analysis (e.g., word, syllable, onset-rime, or phoneme) and positions (e.g., initial, medial, final), the task operations (e.g., detection, segmentation, blending), the response modes (e.g., verbal, pointing, clapping), the task formats (e.g., picture prompts, multiple choice, timed), and the words used (lower vs. higher age of acquisition).

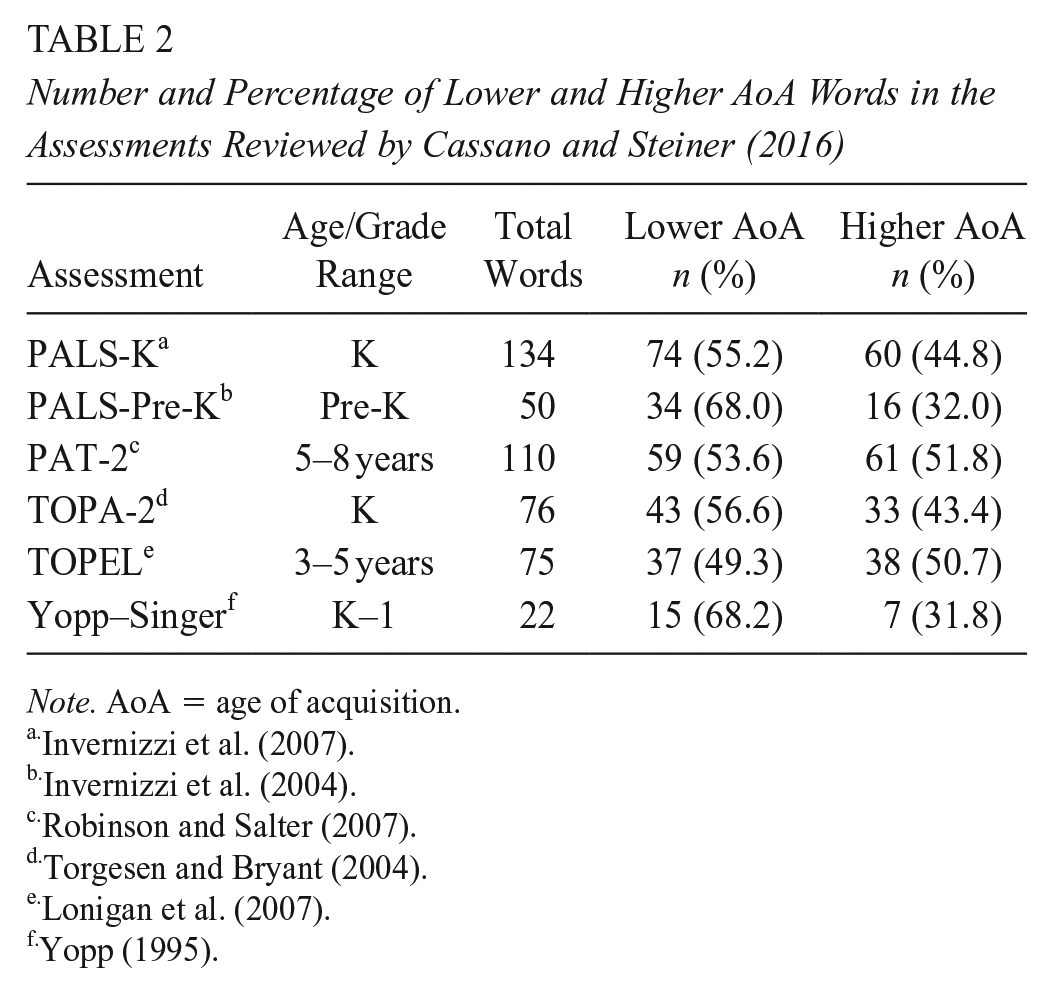

With regard to the words used on the measures, Cassano and Steiner (2016) examined the age of acquisition of the words/images used on several assessments; their findings are summarized in Table 2. Age of acquisition (AoA) refers to the age at which a word is typically added to the lexicon. Although individual differences are always evident, words typically learned earlier in development (e.g., up to age 10) are assigned lower AoA ratings, whereas words typically learned later (e.g., after age 10) are assigned higher AoA ratings. Despite the relationship between performance on PA assessments and children’s vocabulary knowledge, the words used on the assessments vary in AoA.

Table 2. Number and Percentage of Lower- and Higher-AoA Words in the Assessments Reviewed by Cassano and Steiner (2016)

Note. AoA = age of acquisition.

In a series of experiments, Metsala (1999) found that 4- and 5-year-olds performed better on PA tasks (e.g., blending) when low-AoA words were used and when children had higher levels of vocabulary. Notably, AoA ratings were assigned to the words, but children’s actual familiarity with these words was not examined. Moreover, only monolingual English speakers participated in the study.

It is unclear whether commonly used PA assessments rely on AoA for word selection, since information about how words were selected is not always provided. When higher-AoA words are used, it is difficult to determine whether poor performance results from low PA skill or from limited vocabulary knowledge (Barton, 1976). For example, although it is reasonable to assume that a child who cannot identify a picture of a cub, or who identifies it as a bear, will have difficulty when asked to “point to the picture that starts with /k/,” we lack a research base that allows for more than assumptions.

Methods

A researcher-designed, web-based PA assessment was developed to examine (a) the predictive value of AoA ratings for multilingual preschoolers’ word knowledge and (b) the variation in PA outcomes as a function of dual language preschoolers’ knowledge of the words used in the PA tasks. A within-subjects experimental design was used.

Overview of the Assessment and Administration

The PA measure was designed to be administered individually in an environment conducive to early childhood assessment. Participants were assessed by the principal investigator (PI) or a trained research assistant on a laptop computer in a quiet corner of the classroom. The assessor and the participant sat side by side with the screen between them. Due to the COVID-19 pandemic, both the assessor and the participants wore face masks, as required by policy.

The assessment began when the assessor entered the name, date of birth, and home language of the participant and clicked the start button. In addition to participant information, the assessment included two main components: a vocabulary screen and a PA measure. The vocabulary screen required participants to label a series of images presented individually as described in more detail below. This component helped determine if the participants had expressive-level knowledge of the words/images presented. The PA component was designed to examine participants’ phoneme-level awareness through three commonly used phoneme-level tasks: isolation, blending, and segmentation.

The Assessment Interface

A tool bar at the bottom of the screen enabled the assessor to record the participant’s responses and navigate the assessment. (The tool bar and user interface were refined through pilot testing prior to the start of data collection to clarify the function and purpose of each button in the assessment protocol, detailed below; note the variations in the appearance of the bottom bar across Figures 1 to 3.) The final interface used in the present study included two buttons for recording whether a response was accurate or inaccurate, as well as skip, back, and next buttons. The skip button allowed the assessor to skip a specific probe (e.g., a target word or PA item) without recording a response as accurate or inaccurate. The back button allowed the assessor to return to a previous screen as needed, and the next button allowed the assessor to advance to the next task when a task needed to be discontinued for any reason (e.g., if a participant asked to stop, refused to respond, or answered more than half of the items incorrectly).

Figure 1. Word familiarity screen in the researcher-designed tool.

Figure 2. Sample initial phoneme isolation task.

Figure 3. Sample phoneme blending task.

Criteria for Word Selection

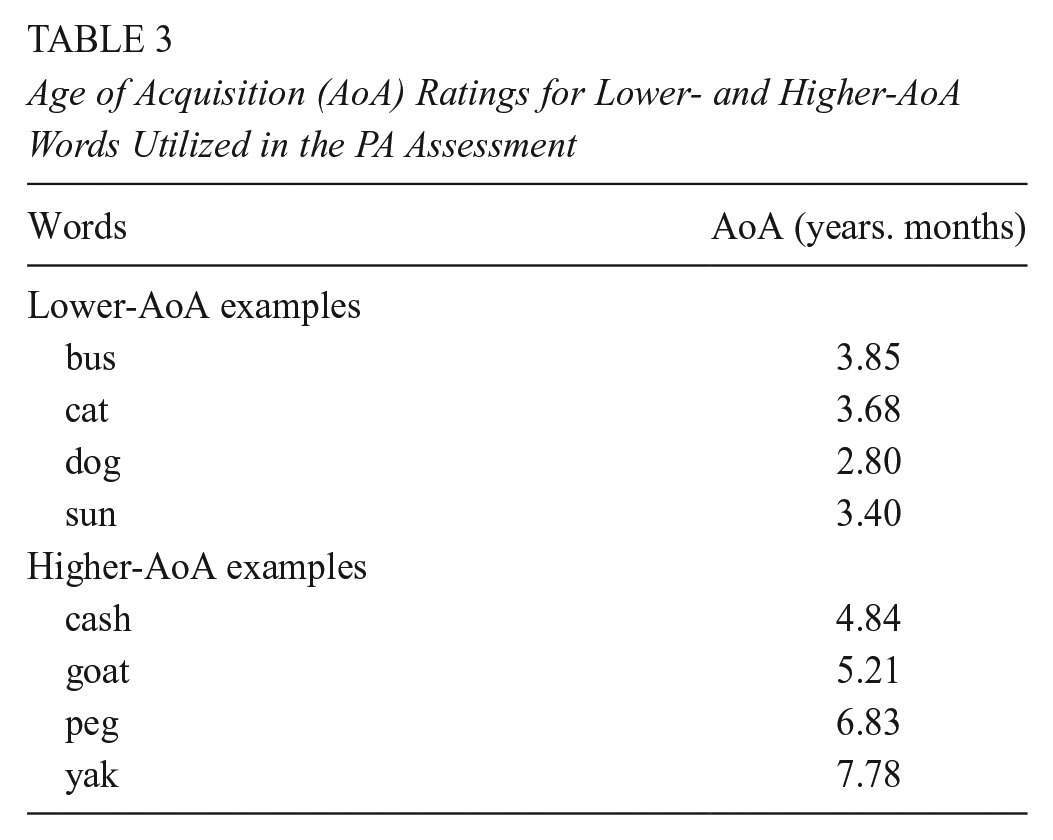

Three criteria were used to select the words for the vocabulary screen and the PA tasks: part of speech, number of phonemes, and anticipated familiarity (i.e., known/unknown). First, all words were nouns, which are typically acquired earlier in development (Gentner, 1982) and are easier to represent in static images. Second, target words contained three phonemes. These included consonant-vowel-consonant (CVC) words such as dog, consonant-vowel-vowel-consonant (CVVC) words such as sail, and words with a vowel-consonant-e (VCe) pattern such as nose. Third, AoA ratings (Kuperman et al., 2012) were used to sort words into lower- and higher-AoA groups. Lower-AoA words included bus, cat, dog, and sun, and higher-AoA words included cash, goat, peg, and yak. Simple yet colorful line images were selected for each word from Creative Commons or public-domain clip art.
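The three selection criteria can be expressed as a simple filter. The sketch below is illustrative only. The `Candidate` record, its hand-coded `phonemes` field, and the `select_words` function are hypothetical, and the `aoa_cutoff` parameter is an assumption, since the text does not report the value used to separate lower- from higher-AoA words. The AoA values in the demo are made-up placeholders, not ratings from Kuperman et al. (2012).

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    word: str
    part_of_speech: str   # e.g., "noun"
    phonemes: tuple       # hand-coded segmentation, e.g., ("d", "o", "g")
    aoa: float            # AoA rating taken from a norms database


def select_words(candidates, aoa_cutoff):
    """Keep three-phoneme nouns and split them into lower- and higher-AoA word lists.

    aoa_cutoff is hypothetical: the study does not report the value it used.
    """
    eligible = [c for c in candidates
                if c.part_of_speech == "noun" and len(c.phonemes) == 3]
    lower = sorted((c for c in eligible if c.aoa < aoa_cutoff), key=lambda c: c.aoa)
    higher = sorted((c for c in eligible if c.aoa >= aoa_cutoff), key=lambda c: c.aoa)
    return [c.word for c in lower], [c.word for c in higher]


# Demo with made-up placeholder AoA values (NOT Kuperman et al., 2012 ratings)
demo = [
    Candidate("dog", "noun", ("d", "o", "g"), 2.5),
    Candidate("sail", "noun", ("s", "ay", "l"), 4.0),
    Candidate("yak", "noun", ("y", "a", "k"), 8.0),
    Candidate("frog", "noun", ("f", "r", "o", "g"), 3.0),  # four phonemes: excluded
    Candidate("run", "verb", ("r", "u", "n"), 3.0),        # not a noun: excluded
]
lower, higher = select_words(demo, aoa_cutoff=5.0)
```

Counting phonemes from a hand-coded segmentation rather than from spelling matters here, because letter count does not track phoneme count (sail and nose have four letters but three phonemes).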

The resulting vocabulary familiarity screen included 113 words, with AoA ratings ranging from 2.58 to 13.41 (M = 5.38, SD = 2.01). Table 3 presents the AoA ratings for a sample of the words included. Because the purpose of the assessment was to determine the participants’ PA in English, word knowledge was not assessed in any of the participants’ first languages.

Table 3. Age of Acquisition (AoA) Ratings for Lower- and Higher-AoA Words Used in the PA Assessment

Word Familiarity Screening

As noted previously, the first component of the PA assessment was a word familiarity screen, designed to create two word banks for use in the subsequent PA tasks. Each word bank was designed to include up to 15 words per participant: one containing words familiar to the participant (i.e., known words) and a second containing words unfamiliar to the participant (i.e., unknown words).

Figure 1 presents a screenshot of an early item in the word familiarity screen. Here, the pictured item was stored in the database as bed. If the participant said “bed” in response to the probe, “What is this a picture of?” the assessor scored the response as correct, and the word was labeled as familiar and included in the PA task items as a known/familiar target word. If the participant gave a related response other than the target (e.g., “sleep,” “nighttime,” or “pillow”), labeled the image in another language (e.g., cama), or made associated sounds or gestures (e.g., snoring or laying their head down), the image was skipped to indicate that the participant was familiar with the image/item but had not produced the target word bed.

Incorrect or “I don’t know” responses were recorded as unknown, and the image was added to the unknown/unfamiliar word bank for use in the subsequent phoneme-level awareness tasks. When a response could not be understood by the assessor (e.g., it was unintelligible), the item was skipped and not recorded as accurate or inaccurate.
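The coding rules for the familiarity screen can be sketched as a mapping from the assessor's judgment to one of three codes, followed by filling the two word banks. The `Response` categories mirror the examples in the text, but their names, the function names, and the choice to fill each bank in presentation order until it holds 15 words are assumptions for illustration, not the tool's actual implementation. Recoding decisions such as accepting “dog” for the tub image would be made before a response is classified as the target.

```python
from enum import Enum


class Response(Enum):
    """Assessor's judgment of a child's answer to "What is this a picture of?" (hypothetical categories)."""
    TARGET = "target word"                 # e.g., "bed" for the bed image
    RELATED = "related word"               # e.g., "sleep", "pillow"
    OTHER_LANGUAGE = "other language"      # e.g., "cama"
    SOUND_OR_GESTURE = "sound or gesture"  # e.g., snoring
    INCORRECT = "incorrect"
    DONT_KNOW = "don't know"
    UNINTELLIGIBLE = "unintelligible"


def code_response(response):
    """Map a response to 'known', 'unknown', or 'skipped' following the rules in the text."""
    if response is Response.TARGET:
        return "known"
    if response in (Response.INCORRECT, Response.DONT_KNOW):
        return "unknown"
    # Related words, other-language labels, sounds/gestures, and unintelligible answers
    return "skipped"


def build_word_banks(screen, bank_size=15):
    """screen: (word, Response) pairs in presentation order; returns (known, unknown) banks."""
    known, unknown = [], []
    for word, response in screen:
        code = code_response(response)
        if code == "known" and len(known) < bank_size:
            known.append(word)
        elif code == "unknown" and len(unknown) < bank_size:
            unknown.append(word)
    return known, unknown
```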

Assessments of Phonological Awareness

The next three components of the research tool comprised the PA tasks: initial sound isolation, phoneme blending, and phoneme segmenting. Each task had 10 items, 5 with an unknown/unfamiliar target word and 5 with a known/familiar target word, and the order of known/familiar and unknown/unfamiliar items was randomized. Figure 2 displays the first PA task, isolating initial phonemes. In this example, the target was “bed,” and three foils (sun, goat, fish) were presented. The assessor said, “Which of these begins with /b/?” then selected the picture the participant identified and indicated whether the response was correct or incorrect.
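A minimal sketch of how one task's 10 items might be assembled from the two word banks follows. The function name, the `conflicts` hook for rejecting foils that would also be correct answers (for example, a foil sharing the target's initial phoneme in the isolation task), and the random placement of the target among the four pictures are assumptions; the text specifies only the 5/5 split of known and unknown targets, three foils per item, and a randomized order of presentation.

```python
import random


def build_task_items(known, unknown, foil_pool, rng, n_per_type=5, n_foils=3,
                     conflicts=lambda target, foil: target == foil):
    """Build one task: n_per_type known and n_per_type unknown targets, each with n_foils foils.

    conflicts(target, foil) is a hypothetical hook; by default it only stops a
    word from appearing as a foil for itself.
    """
    if len(known) < n_per_type or len(unknown) < n_per_type:
        raise ValueError("each word bank needs at least n_per_type words")
    targets = ([(w, "known") for w in known[:n_per_type]]
               + [(w, "unknown") for w in unknown[:n_per_type]])
    rng.shuffle(targets)  # randomize the order of known and unknown items
    items = []
    for target, familiarity in targets:
        options = [f for f in foil_pool if not conflicts(target, f)]
        choices = rng.sample(options, n_foils) + [target]
        rng.shuffle(choices)  # assumption: the target's screen position varies
        items.append({"target": target, "familiarity": familiarity, "choices": choices})
    return items


items = build_task_items(
    known=["bed", "cat", "dog", "sun", "cup"],
    unknown=["yak", "peg", "cash", "goat", "cod"],
    foil_pool=["fish", "fan", "map", "hat", "sun", "goat"],
    rng=random.Random(0),
)
```

One reading of the design, again an assumption, is that each 15-word bank supplies five different targets to each of the three tasks, for example by passing `known[0:5]`, `known[5:10]`, and `known[10:15]` in turn.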

Figure 3 displays the second PA task, blending phonemes, for a different child. In this example, the screen shows the target word, pig, and three foils (cash, fish, and fan). The assessor said, “Which of these is /p/ + /i/ + /g/?” If the participant pointed to or said “pig,” the assessor scored the response as correct; any other response was recorded as incorrect, documenting the participant’s choice.

The final PA task engaged the participant in phoneme segmentation. The participant saw a single known/familiar or unknown/unfamiliar image, and the assessor asked, “What are the sounds in ___?” For example, if the participant produced all three phonemes in ball correctly with a pause between them (/b/ + /aw/ + /l/), the item was scored as correct. (The second phoneme in ball is written in the International Phonetic Alphabet as /ɔ/; we wrote it as /aw/ to align with common pronunciation.) Any response that was not three isolated phonemes (e.g., an onset-rime break) was scored as incorrect.
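The all-or-nothing scoring rule for segmentation can be sketched as an exact match between the sounds the child produced and the target's three phonemes. The function name and the transcription format are hypothetical. Whether the child paused between sounds is the assessor's judgment, so the sketch assumes the assessor has already recorded the response as a list of separated sound units.

```python
def score_segmentation(response_units, target_phonemes):
    """Return True only if the child produced exactly the target's three phonemes as separate units.

    response_units: the separate sounds the assessor heard, in order (e.g., ["b", "aw", "l"]).
    """
    units = [u.strip().lower() for u in response_units]
    target = [p.strip().lower() for p in target_phonemes]
    return len(target) == 3 and units == target


score_segmentation(["b", "aw", "l"], ["b", "aw", "l"])  # True: three isolated phonemes
score_segmentation(["b", "all"], ["b", "aw", "l"])      # False: onset-rime break
score_segmentation(["k", "k", "k"], ["k", "a", "t"])    # False: repeated initial sound
```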

Participants

The participants included 108 DLLs from eight classrooms in two inner-city Head Start preschools in the Northeast United States. Participants were identified as DLLs by the center director based on caregivers’ responses on the home language survey completed as part of Head Start’s enrollment process. The directors provided information on the participants’ home language and date of birth.

Analyses were conducted only with participants whose data sets were complete, resulting in an analyzed sample of 93 DLLs. Participants’ ages ranged from 39 to 67 months (M = 56.40, SD = 7.76). The languages spoken by the participants are presented in Table 4.

Table 4. Participants’ Home Language

Results

Assessors recorded results for each component of the research tool for all participating children. These data were combined with the AoA data for the individual words utilized in the word familiarity screen and were then analyzed to determine the extent to which AoA data predict multilingual preschoolers’ vocabulary knowledge and PA. Total unknown/unfamiliar items scored as correct and incorrect and total known/familiar items scored as correct and incorrect were calculated for each of the three PA tasks.
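The tallying step can be sketched as a count over item-level records for one child. The record layout and the `tally` function are hypothetical. The sketch assumes, consistent with the skip button described earlier, that skipped items count as neither correct nor incorrect, so a child's correct and incorrect totals for a task need not add up to five per familiarity condition.

```python
from collections import Counter


def tally(item_results):
    """Count correct and incorrect items per (task, familiarity); skipped items are not counted.

    item_results: iterable of (task, familiarity, score) tuples, where score is
    "correct", "incorrect", or "skipped" (a hypothetical record layout).
    """
    counts = Counter()
    for task, familiarity, score in item_results:
        if score in ("correct", "incorrect"):
            counts[(task, familiarity, score)] += 1
    return counts


one_child = [
    ("isolation", "known", "correct"),
    ("isolation", "known", "skipped"),
    ("isolation", "unknown", "incorrect"),
    ("blending", "known", "correct"),
    ("isolation", "known", "correct"),
]
totals = tally(one_child)
```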

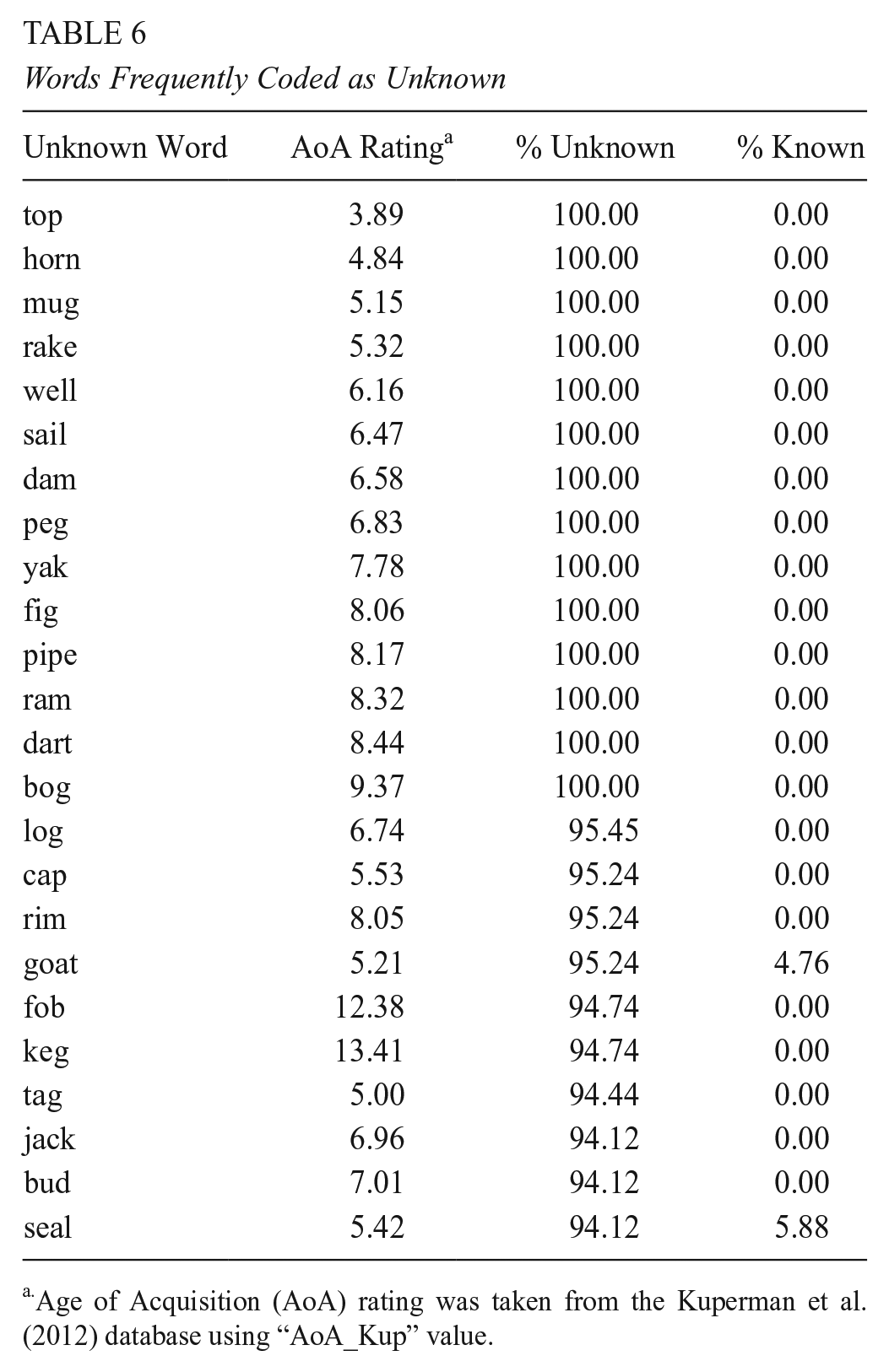

Word Familiarity Results

Table 5 lists the 24 words most frequently coded as known/familiar, along with the percentage of participants for whom each word was viewed and coded as known/familiar or unknown/unfamiliar; Table 6 displays similar data for the 24 words most frequently coded as unknown/unfamiliar. Despite the use of low-AoA words, creating complete known/familiar word banks (i.e., 15 words each) was more challenging than expected. Specifically, although 85 of 93 participants (91.4%) achieved a complete set of 15 unknown words, only 49 of 93 participants (52.7%) had a complete set of known/familiar words. When a full set of known/familiar words was not achieved, known/familiar words/images were repeated as needed during the phoneme-level tasks to maintain the balance of known/familiar and unknown/unfamiliar items.
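The repetition rule for incomplete known/familiar banks can be sketched as cycling through the words a child did know until the bank is full. The text does not say in what order words were repeated, so the cyclic order and the `pad_bank` name are assumptions.

```python
from itertools import cycle, islice


def pad_bank(words, size=15):
    """Return exactly `size` words, repeating the child's known words in order if there are too few."""
    if not words:
        raise ValueError("cannot pad an empty word bank")
    return list(islice(cycle(words), size))


pad_bank(["cat", "sun", "ball"], size=5)  # ['cat', 'sun', 'ball', 'cat', 'sun']
```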

Table 5. Words Frequently Coded as Known/Familiar

Note. AoA ratings were taken from the Kuperman et al. (2012) database (“AoA_Kup” value).

Table 6. Words Frequently Coded as Unknown/Unfamiliar

Note. AoA ratings were taken from the Kuperman et al. (2012) database (“AoA_Kup” value).

Recoded Words

In two instances, words predicted to be unknown/unfamiliar (tub and van) were recoded in accordance with children’s responses. The tub image, selected because of its high AoA, showed a dog (a puppy) in a tin washtub, and throughout the assessment participants consistently labeled it “dog.” Similarly, participants consistently labeled the image of a van as “car.” No child identified these illustrations as tub or van in our trials. As a result, assessors accepted “dog” and “car,” respectively, and these items were recoded as known words throughout the assessment.

Skipped Words

In some cases, the percentages in Tables 5 and 6 do not sum to 100% because the word was skipped by the assessor. For example, the image of sock (Table 5) was skipped 40% of the time it was shown, owing to related responses in English (e.g., foot, feet) or Spanish (e.g., calcetines [socks], pies [feet]).

Words coded as unknown/unfamiliar also tended to be unknown to a large percentage of participants; in other words, these rare, typically higher-AoA words were unknown/unfamiliar to most of the participating preschoolers. The mean AoA was 3.42 (SD = 0.43) for the known/familiar words in Table 5 and 6.79 (SD = 2.26) for the unknown/unfamiliar words in Table 6.

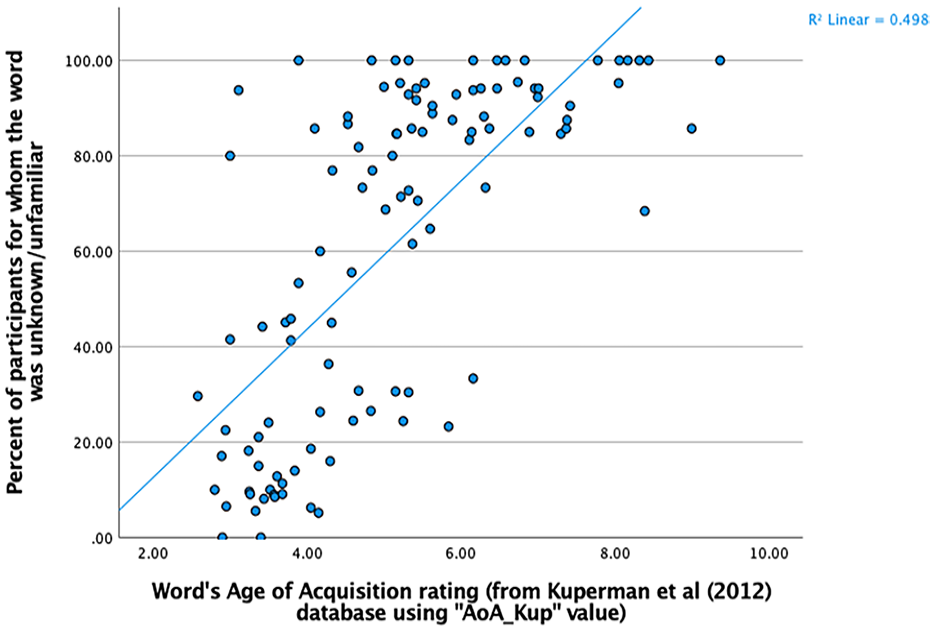

Scatterplots and lines of best fit were used to assess the extent to which AoA predicted the proportion of participants for whom a word was coded as known or unknown. After several outlier words with AoA ratings above 10.0 were excluded, AoA accounted for 46.0% of the variance in the proportion of participants for whom a word was known and 49.8% of the variance in the proportion for whom it was unknown (see Figures 4 and 5, respectively). As AoA increased, the proportion of instances in which the word was coded as known decreased.

Figure 4. Scatterplot of the percentage of participants for whom each word was known/familiar by the word’s age of acquisition (AoA) rating, as specified by the Kuperman et al. (2012) database (“AoA_Kup” value).

Figure 5. Scatterplot of the percentage of participants for whom each word was unknown/unfamiliar by the word’s age of acquisition (AoA) rating, as specified by the Kuperman et al. (2012) database (“AoA_Kup” value).
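The line-of-best-fit analysis can be sketched as an ordinary least-squares fit of the proportion of participants who knew each word on that word's AoA rating, with R² giving the share of variance explained (the quantity reported above as 46.0% and 49.8%). The function names are hypothetical, the cutoff keeps words with AoA at or below 10.0 to mirror the exclusion of outliers above 10.0, and the demo values are toy data, not the study's data.

```python
def fit_line(xs, ys):
    """Ordinary least-squares fit y = intercept + slope * x; returns (slope, intercept, r_squared)."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    ss_total = sum((y - mean_y) ** 2 for y in ys)
    ss_resid = sum((y - (intercept + slope * x)) ** 2 for x, y in zip(xs, ys))
    return slope, intercept, 1 - ss_resid / ss_total


def aoa_fit(words, max_aoa=10.0):
    """words: (aoa, proportion_known) pairs, one per word; words above max_aoa are dropped as outliers."""
    kept = [(aoa, p) for aoa, p in words if aoa <= max_aoa]
    xs, ys = zip(*kept)
    return fit_line(xs, ys)


# Toy data only (not the study's data): the proportion known falls as AoA rises
toy = [(3.0, 0.9), (4.0, 0.7), (5.0, 0.55), (6.0, 0.3), (12.0, 0.0)]
slope, intercept, r2 = aoa_fit(toy)
```

For a single predictor, this R² equals the squared Pearson correlation between AoA and the proportion of participants who knew the word.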

Initial Phoneme Isolation Task Results

A paired-samples t-test compared the number of initial phoneme isolation items children answered correctly when the target word was familiar/known (M = 1.66, SD = 1.54) versus unfamiliar/unknown (M = 0.97, SD = 0.99). Results indicated a significant difference in mean initial phoneme isolation items correct as a function of the child’s familiarity with the words, t(92) = 3.994, p < .001, two-tailed.

An additional paired-samples t-test compared the number of initial phoneme isolation items scored as incorrect when the target word was familiar/known (M = 2.28, SD = 1.68) versus unfamiliar/unknown (M = 2.71, SD = 1.36). Results indicated a significant difference in mean initial phoneme isolation items incorrect as a function of the child’s familiarity with the words, t(92) = −2.24, p = .027, two-tailed.
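The paired-samples t statistic is the mean of each child's known-minus-unknown difference divided by the standard error of those differences, with n − 1 degrees of freedom (hence t(92) for 93 children). The sketch below computes only the statistic and degrees of freedom; the function name and the toy scores are illustrative, not the study's data. `scipy.stats.ttest_rel` computes the same statistic together with a two-sided p value.

```python
import math
from statistics import mean, stdev


def paired_t(known_scores, unknown_scores):
    """Paired-samples t statistic and degrees of freedom for per-child known vs. unknown scores."""
    if len(known_scores) != len(unknown_scores):
        raise ValueError("scores must be paired: one known and one unknown score per child")
    diffs = [k - u for k, u in zip(known_scores, unknown_scores)]
    n = len(diffs)
    standard_error = stdev(diffs) / math.sqrt(n)  # sample SD of differences / sqrt(n)
    return mean(diffs) / standard_error, n - 1


# Toy scores for four children (not the study's data)
t, df = paired_t([3, 2, 4, 1], [1, 1, 2, 0])  # t = 3 * sqrt(3), about 5.196; df = 3
```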

Phoneme Blending Task Results

A paired-samples t-test compared the number of phoneme blending items children answered correctly when the target word was familiar/known (M = 2.29, SD = 1.73) versus unfamiliar/unknown (M = 1.41, SD = 1.15). Results indicated a significant difference in mean phoneme blending items correct as a function of the child’s familiarity with the words, t(92) = 5.16, p < .001, two-tailed.

An additional paired-samples t-test compared the number of phoneme blending items scored as incorrect when the target word was familiar/known (M = 1.59, SD = 1.39) versus unfamiliar/unknown (M = 2.79, SD = 1.46). Results indicated a significant difference in mean phoneme blending items incorrect as a function of the child’s word familiarity, t(92) = −7.59, p < .001, two-tailed.

Phoneme Segmenting Task Results

Most children’s scores on the phoneme segmenting task were at the floor and therefore not normally distributed, so the data were not well suited to tests of statistical significance. No additional analyses were conducted.

Discussion

This study examined the relations between word familiarity and performance on phoneme-level tasks in preschoolers who attended Head Start. The research was conducted with DLLs using an innovative assessment that used a vocabulary screen to identify familiar/known and unfamiliar/unknown words and then used these words to populate three phoneme-level tasks (i.e., initial phoneme isolation, blending, and segmentation). Unlike previous research that examined the relationship between performance on PA tasks and AoA as a global measure of the familiarity of the words used in PA tasks (e.g., Metsala, 1999), this is the first known study to examine individual participants’ familiarity with the words used in the assessment. Because participants’ familiarity with the words/images varied, each participant was presented with an individualized assessment, and children’s performance on the PA tasks was examined in relation to their familiarity with the words/images presented.

The results were noteworthy. First, consistent with previous studies on AoA (Kuperman et al., 2012), as the AoA of a word increased, the likelihood that it was a known word for preschool-aged participants decreased. Put simply, the preschool-aged participants were less likely to be familiar with words typically acquired later in English language development (i.e., higher AoA) and more likely to be familiar with words typically acquired earlier (i.e., lower AoA).

Second, children’s performance on the initial phoneme isolation task differed significantly as a function of their familiarity with the words: Children performed better when the word was familiar and less well when it was unfamiliar. A similar result was observed on the phoneme blending task, where children’s familiarity with the words/images on the assessment was positively related to their performance. Here again, participants were considerably more likely to identify the target word when it was familiar/known than when it was unfamiliar/unknown.

The relationship between AoA and participants’ familiarity with the words on the assessment was also noteworthy. Specifically, AoA was used to identify words that were expected to be known to participants; however, as is often the case with literacy research conducted in previous decades, the AoA database was compiled primarily from monolingual speakers, and relying on it yielded a larger-than-expected number of words that were unknown to participating children. For example, in Kuperman et al.’s (2012) examination of AoA for 30,000 English words, only 2.5% of respondents (n = 43) reported speaking a language other than English.

Limitations

Several limitations should be noted. First, modeling or feedback may have yielded more precise information on children’s performance, particularly on the phoneme segmentation task, but teaching/feedback items were not included in the assessment. At times, participants offered responses that were inconsistent with the task requirements, resulting in the item being scored as incorrect or skipped. For example, during the phoneme segmentation task, when asked, “What sounds do you hear in cat?” children responded with the meowing sound a cat makes. Teaching items providing a model (e.g., “Meow is the sound a cat makes when it communicates. That word, meow, has the sounds /m/ - /ee/ - /aʊ/. What sounds do you hear in the word cat?”) or feedback (e.g., “You’re saying the word meow. Can you say the word cat? Now tell me the sounds you hear in cat.”) may have helped participants better understand the assessment and may have increased the number of items coded as correct in each task (isolation, blending, and segmenting).

Second, a more fine-grained scoring protocol may have offered more insight into the participants’ performance on the phoneme-segmentation task. At times, participants provided the initial sound once (e.g., /k/) or repeatedly (e.g., /k/, /k/, /k/) when presented with an image of a cat. A more fine-grained scoring protocol that allowed for partial credit would have provided an opportunity to capture a child’s emerging phoneme segmentation ability. Specifically, the scoring protocol should be consistent with the sequence of acquisition in PA. That is, initial phoneme/onset and rime may be scored as a precursor to phoneme-level awareness.

Third, concurrent validity was not established. Established measures of PA that focus on the tasks required for reading (International Literacy Association, 2019) are readily available. These measures have long histories of widespread use, particularly in Head Start preschools and Title I-funded schools. Establishing concurrent validity with such measures would provide an empirical bridge to the historical data sets collected in many early learning contexts. Without evidence of concurrent validity, this assessment and its results may not resonate with school administrators or policymakers.

Finally, this study did not account for participants’ languages other than English. The study was designed to assess PA in English because English was the language of instruction, so the AoA values for the words used were based on English. We do not know the extent to which a set of words scaled for acquisition in other languages would have produced different results. Nor do we know whether accepting and using correct responses in a child’s other language on the vocabulary screening would have done so. English words and their equivalents in other languages often differ in number of phonemes (e.g., cat, with three phonemes, vs. gato, with four). In addition, the same object is not always referred to by the same word in different regions where the same language is spoken. Accounting for such lexical and phonological variation could be valuable as a moderator variable, but creating an assessment that accounts for these differences is a complex endeavor.

Conclusion

Due to the limits of instructional time, PA instruction must be efficient, effective, and equitable (Rohde et al., 2021). To achieve this goal, early childhood teachers must have valid assessments that provide timely and accurate information on PA.

The results of this study have implications for word selection on assessments of PA, particularly for multilingual students, who are less likely to be familiar with the words targeted in school-based assessments. There are also implications for using AoA as a global measure of word familiarity in assessments with DLLs. Some assessments measure PA inaccurately or inequitably (i.e., measure the same skills in vastly different ways for different children). Such assessments could result in (a) an inaccurate picture of instructional needs or (b) overidentification or underidentification of children at risk for reading difficulties. Moreover, the number of DLLs in preschool is growing. Early childhood teachers therefore need an assessment that disentangles PA from vocabulary and ensures children are familiar with its words and images. A more formal analysis is required. Initial examinations suggest, however, that the words most likely to be familiar to participants were “school words,” that is, words children encounter in the Head Start setting. These include words associated with family-style meals (e.g., apple, milk) and with classroom routines such as weather, story reading, or outside play (e.g., sun, book, ball). They also include animals frequently found in stories (e.g., cat, dog, fish).

This research suggests that familiarity with the words on an assessment is important to consider when assessing young children, particularly young DLLs. The results strongly indicated that using unfamiliar words/images on PA tasks increased the likelihood that children provided an inaccurate response. Thus, when word familiarity is not vetted before PA assessment, early childhood professionals may be left to wonder about the source of poor performance. It could reflect a child’s lack of PA skill or a lack of familiarity with the words/images on the assessment.

Given the importance of PA acquisition in preschool and kindergarten, subsequent research should examine the implications for PA instruction. It should also examine the role of word familiarity in the assessment and instruction of other foundational skills essential for literacy and language acquisition.

An assessment becomes a barrier if it fails to determine whether a child even knows the names of the images it uses. Given the growing number of DLLs in preschool classrooms, the relationship between word familiarity and PA performance is important to explore. At present, PA instruction and intervention based on assessment results may have limited utility because a preschool-aged DLL’s word knowledge and PA knowledge are intertwined. This research is a first step in clarifying this relationship.


Acknowledgements

Special thanks to the participating teachers and children, to Randall Sckaal for help designing the research instrument, and to Caitlin Ciamataro for her assistance in data collection.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Funding for this work was provided by Salem State University’s Deflice Grant and Salem State University’s Creative Research and Creative Activities (CRCA) Grant.

Authors

KATHLEEN A. PACIGA is an associate professor of education in the Department of Humanities, History, and Social Sciences at Columbia College Chicago;

CHRISTINA M. CASSANO is a professor of education in the Childhood Education & Care Department at Salem State University;