Abstract

The past two decades have seen the rise of the residency model, another nontraditional pathway to certification that offers an intensive, practice-based, context-specific preparation designed to address shortages in hard-to-staff districts. Although advocates call the model innovative, research examining residencies at scale gives reason for skepticism about whether they truly offer a distinctive preparation. Surveying all programs across Tennessee about their adoption of features that characterize the model, we find that residencies offer a unique pathway to teaching in some expected ways, though not all. Specifically, residencies reported longer clinical placements, more financial support for residents, and higher rates of mentor training, though they mostly did not report unique practices of candidate recruitment, district partnership, mentor selection, or curricular design. Moreover, we see that the distinctiveness of the model is in decline, with evidence of its dilution among newer residencies and the spread of its features among traditional programs.

Advocates argue that the residency model offers a unique and superior pathway to certification (Berry et al., 2008; Guha et al., 2016; National Center for Teacher Residencies, n.d.-a; Silva et al., 2014), while others are skeptical that the residency model is truly as “innovative” as claimed (e.g., Reagan et al., 2021). We believe this is an empirical question. To answer it, we report the results of a novel survey administered to all program leaders in Tennessee to explore how distinct residencies truly are from traditional programs, comparing average differences in the presence of the program features that typically characterize the residency model as well as how these differences have changed over time.

We find that residencies consistently report offering preparatory experiences that are distinct from those at traditional programs in terms of clinical placement length, financial incentives for residents, and, to a lesser extent, support for mentors; however, other features, like an integrated curriculum, a highly involved partner district, and high-quality candidates in high-needs areas, characterize both residencies and nonresidencies at similar rates. Moreover, while these observed distinctions are clearest for the subset of programs considered by external organizations as truly embodying the residency model, they are significantly reduced when expanding to all programs whose leaders claimed to have fully adopted the model. Finally, we find evidence that the distinctiveness of the model may be declining over time, due to the rise of self-identified “partial” residencies, which mostly appear to offer a traditional pathway to teaching, as well as the increased adoption by nonresidencies of many features associated with the model.

The Teacher Residency Model

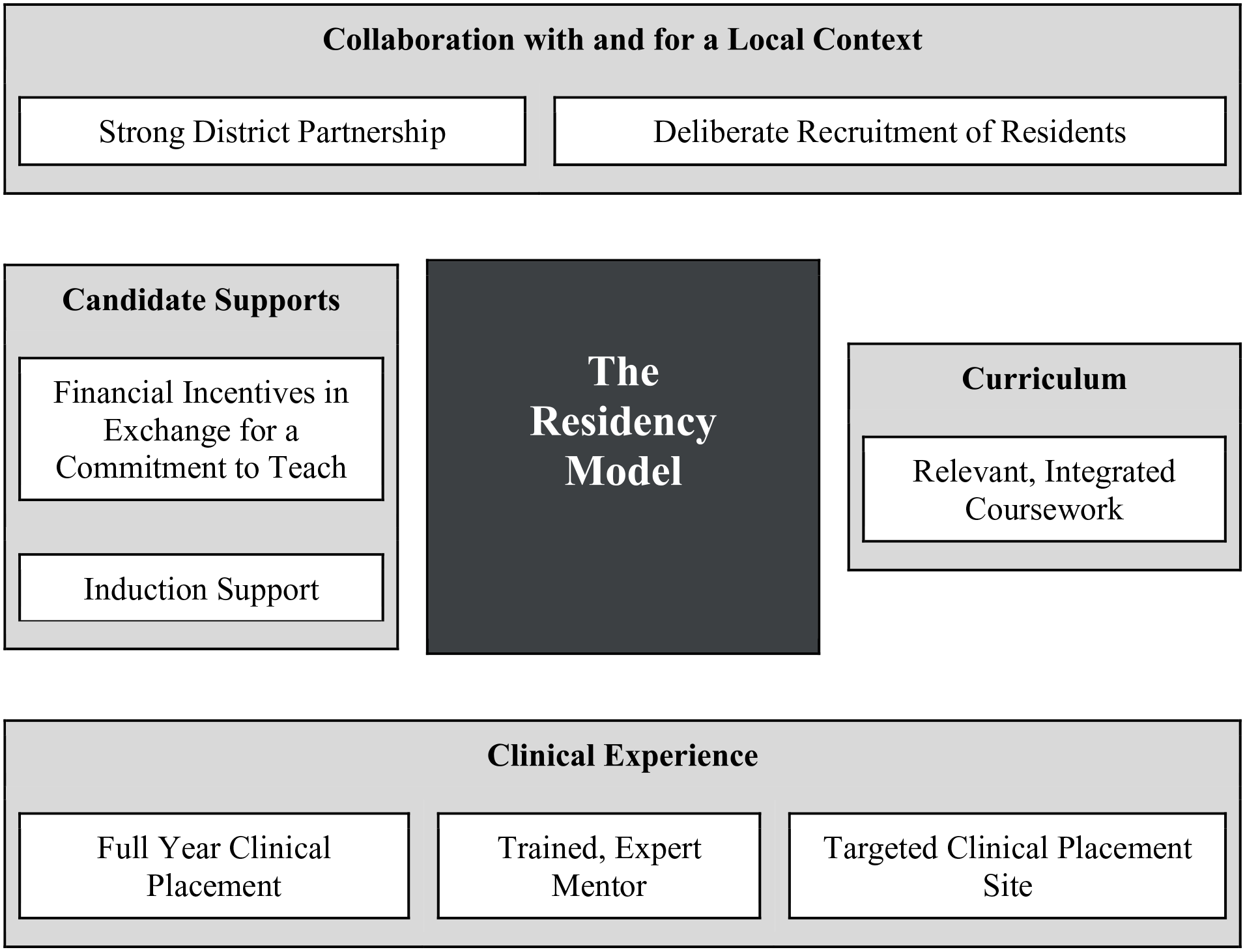

Inspired by the training that prospective doctors receive in medical school, the teacher residency model is based on the premise “that those learning to teach need authentic learning experiences with expert mentorship in the context in which they will eventually be teaching” (Guha et al., 2016, p. 3). As they have grown in popularity, residencies have become more varied, with program leaders adapting the model to fit the needs of their specific local contexts. However, efforts to identify commonalities across programs have established eight key features of high-quality residencies, shown below in Figure 1 (for the remainder of this section, we draw heavily on Berry et al., 2008; Guha et al., 2016; National Center for Teacher Residencies, n.d.-b). These features were collectively designed to improve the traditional preparation experience by providing a more diverse pool of aspiring teachers with a more intensive, supported, and context-specific training in school settings more intentionally organized for learning in order to directly solve the staffing needs of districts. Although conceptualizing the model purely in terms of its components will inevitably fall short of encapsulating the gestalt of the teacher residency, we believe that these features capture much—though not all—of its uniqueness and are relevant to those in teacher education programs making decisions about how to best structure and organize their paths to the profession.

Figure 1. The residency model and its features.

Collaboration With and for a Local Context

Residencies begin by establishing a strong partnership with at least one local school district. Together, program and district leaders work to design a teacher preparation experience tailored directly to fit the local educational context by collaborating across programmatic areas. For example, district officials might be involved in curricular and clinical placement design, including the selection of mentors, to help ensure that preparation is highly aligned to the expectations and realities of teaching; they also might help shape the admissions and recruitment processes to target the prospective teachers that would most directly benefit their workforce.

Relatedly, residencies, more so than traditional programs, engage in the deliberate recruitment of cohorts of highly qualified prospective teachers who will both fulfill the specific staffing needs of and diversify the local teacher workforce. To do so, residencies typically set strict admissions standards while also prioritizing the enrollment of prospective teachers of color and career changers with experience in other fields. Furthermore, they collaborate closely with districts to target prospective teachers for more difficult-to-staff positions, typically in subject areas like math and science or with student populations like English language learners and students with disabilities. Studies of individual programs suggest that exemplar residencies succeed at this practice, recruiting more racially diverse cohorts seeking more endorsements in higher-needs areas (for a list of many of these studies, see Chu & Wang, 2022).

Clinical Experience

Perhaps its hallmark feature, the residency model emphasizes extensive clinical training in a deliberately chosen site under the supervision of a highly effective mentor teacher. Residencies typically require year-long clinical placements, resulting in over 900 hours of preservice clinical preparation, compared to roughly 500 for most traditional programs (Guha et al., 2016).

Residencies determine suitable sites for these clinical placements based on criteria at the mentor and school levels. First, the residency model prioritizes the recruitment of experienced, effective classroom teachers with demonstrated coaching skills and the willingness to host a candidate for a year. Moreover, to cultivate this pool of trained, expert mentors, many residencies offer mentors financial incentives and/or extensive professional development around coaching and supporting their residents.

Beyond the individual mentor, residencies also aim to place residents in targeted clinical placement sites called “teaching schools” characterized by supportive environments, significant feedback, and high levels of collaboration across staff. These schools are located in residencies’ partner districts, helping to ensure a high degree of alignment between the preparation that residents receive and their future experiences as teachers. Finally, residents are often grouped in cohorts at the same schools to provide increased support, foster deeper relationships, and strengthen learning connections across classrooms.

Curriculum

Residents’ clinical experiences are supplemented with highly relevant, integrated coursework. Partner district officials and teachers may play a substantial role in designing and even (co)teaching residency courses, while program leaders may spend a significant amount of time at clinical placement sites providing feedback on residents’ instruction. This degree of integration has led many researchers to characterize the residency model as a “third space” that blends theory and practice while blurring the boundaries between practitioner and academic knowledge (Klein et al., 2013; Solomon, 2009; Zeichner, 2010).

Resident Supports

Finally, to attract high-quality, diverse teachers into partner district classrooms, residencies typically offer significant financial and professional support both before and after program completion. Residents usually receive some sort of financial incentive in exchange for a commitment to teach in the partner district for a period of time (often 3 to 5 years) after program completion, including a living stipend, loan forgiveness, tuition remittance, or even salary and benefits. Residencies may also provide scaffolded induction into the workforce, such as continued mentoring or access to coursework during residents’ early in-service years.

Literature Review

Each of the eight features in Figure 1 aims to make a unique contribution to the overall goal of the residency model—namely, solving the staffing issues of local school districts by improving the recruitment, performance, and retention of high-quality, diverse teachers in high-needs subject areas. Collectively, they illustrate the residency model as something of a hybrid (Zeichner, 2010), blending components common to both traditional and alternative pathways (Solomon, 2009) into a “third space” that seeks to bridge district and university (Klein et al., 2013; Zeichner, 2010). However, conceptualizing the residency model as a hybrid also invites the question of exactly how distinct the residency model is. Many nonresidencies would likely lay claim to various combinations of these very same features, while perhaps only the most exemplary residencies actually implement all eight with fidelity.

In a study of program websites, Reagan et al. (2021) investigated how residencies presented themselves online and claimed legitimacy. The authors explored the websites of 20 programs randomly sampled from those that were identified as members of the National Center for Teacher Residencies (NCTR), funded through the U.S. Department of Education Teacher Quality Partnership, or included in Guha et al. (2016). Their content analysis revealed that many residency websites claimed “legitimacy by innovation” (Reagan et al., 2021, p. 8), explicitly framing their programs as distinct from and superior to traditional teacher preparation pathways. The authors, however, question this claim, given that many of the features residency program websites highlight have become increasingly common among nonresidencies in recent years. Consequently, they end by calling for “research that could unpack programmatic structures across residency programs and could shed light on whether and how residency structures and components are different or innovative, both from each other, and from other models of teacher preparation” (Reagan et al., 2021, p. 13).

In the same way that prior research has suggested that alternative pathways may not be quite as alternative as advertised (Boyd et al., 2008; Walsh & Jacobs, 2007), there is a need for research that clarifies the extent to which residencies are truly distinctive. Given that traditional programs also continue to evolve, often by adopting the same features as residencies, the degree to which these two groups actually differ in terms of the kinds of preparatory experiences they provide is not immediately clear. For example, in roughly the same window since the residency model was established, traditional pathways have increasingly adopted features like a longer, more intensive clinical experience (Grossman, 2010; Zeichner & Bier, 2012) and early career induction and mentoring (Bastian & Marks, 2017; Ronfeldt & McQueen, 2017). Even if these are universal features of residencies—which is also unclear—it is no longer obvious how unique these features are.

While many studies of individual programs highlight the features that characterize the residency pathway (e.g., Papay et al., 2012; Perlstein et al., 2014; Solomon, 2009), we are aware of only three studies that have begun to empirically assess the extent to which the programmatic features of residencies and nonresidencies differ in a broad and practical sense. First, Matsko et al. (2021) surveyed nearly 800 preservice and mentor teachers to disentangle how traditional, alternative, and residency pathways in Chicago differed in terms of the characteristics of their cohorts, the design and features of their programs, and the perceptions and intentions of their graduates. Second, Terziev and Forde (2021) combined interviews and focus groups with administrative records to compare the features of four state grant-receiving residencies in Pennsylvania to those of the nonresidency pathways within the same institutions. Finally, Silva et al. (2014) compared the characteristics and experiences of just under 400 graduates of 12 residencies across the country receiving funding from the federal Teacher Quality Partnership Grants Program with those of other novice teachers who had graduated from nonresidency programs and were hired into the same districts. We summarize what each study reveals about differences in each of the eight features below.

Collaboration With and for a Local Context

All residencies studied by both Silva et al. (2014) and Terziev and Forde (2021) were required by their respective departments of education as a part of their grant funding to establish a partnership with at least one high-need district. However, neither of these studies nor Matsko et al. (2021) examined how the existence or strength of a district partnership differed between residencies and nonresidencies.

With regard to deliberate recruitment, both Silva et al. (2014) and Matsko et al. (2021) observed that residents were indeed more likely to teach in the high-needs subject areas of math and science. However, unlike most studies of individual programs, they found that residency cohorts did not differ from those in nonresidency pathways in terms of composition by race, gender, or age. Similarly, most of the residents in Pennsylvania programs were White; though the authors made no direct comparison to the racial composition of nonresidents, programs acknowledged that attracting residents of color was a substantial challenge (Terziev & Forde, 2021). Finally, residents in Chicago had lower average undergraduate grade point averages (GPAs) (Matsko et al., 2021), whereas Silva et al. (2014) found no difference in prospective teachers’ GPAs, although residents tended to have graduated from more competitive undergraduate programs and were more likely to have advanced degrees. Pennsylvania programs varied greatly in the rigor and nature of their application process, with no clear differences between residencies and nonresidencies (Terziev & Forde, 2021).

Clinical Experience

Matsko et al. (2021) found that Chicago’s residencies were far more likely than traditional or alternative programs to offer clinical placements of over 15 weeks. In Pennsylvania, extended placements were also a requirement for grant funding, with newly implemented residencies lengthening the one-semester clinical placements of traditional pathways at the same institutions to two semesters (Terziev & Forde, 2021). Similarly, residents in Silva et al. (2014) reported an average clinical placement length of 33 weeks, more than double that of nonresidents.

In Chicago, mentors working with residencies were significantly more likely to receive professional development and compensation and to have earned a master’s degree (Matsko et al., 2021). However, mentors working with residencies were less likely to have 10 or more years of teaching experience, and residents reported that they provided less frequent mentoring and observations of teaching. Terziev and Forde (2021) noted that it was a universal requirement for mentors at all Pennsylvania residencies to attend training, though the frequency of this practice among traditional pathways was unclear; in contrast with Chicago, mentors and field supervisors typically offered more oversight and observation, though residents still shared a desire for more effective and integrated mentoring. Silva et al. (2014) did not examine whether mentors received training or compensation or what their average characteristics were, nor did they compare the quality or frequency of mentoring at residencies and nonresidencies.

Finally, Matsko et al. (2021) found no differences in the clinical placement sites of Chicago residents and nonresidents in terms of perceived collaboration among faculty, warmth of climate, and inclusivity, though residents did report less supportive school administrators. Neither Terziev and Forde (2021) nor Silva et al. (2014) contrasted the clinical placement criteria of residency and nonresidency pathways.

Curriculum

Silva et al. (2014) found that, as 1st-year teachers, residents were more likely than other novice teachers from nonresidency programs to report that their program had covered relevant curricula. In Chicago, Matsko et al. (2021) found that residents were less likely to complete coursework prior to student teaching, suggesting that coursework ran concurrently with clinical work, which might indicate an integration of curriculum and clinical placement; however, residents actually perceived their programs to have lower levels of coherence than nonresidents. Similarly, despite the Pennsylvania Department of Education’s requirement that coursework be closely integrated with the clinical experience, residents consistently shared a desire for greater curricular alignment, discussing the challenge of balancing teaching with class assignments that were often perceived as busy work (Terziev & Forde, 2021).

Resident Supports

All Pennsylvania programs were required to provide residents with some sort of financial support; most simply modified existing pathways by offering residents scholarship assistance and/or modest stipends, although one program did provide a salary and benefits in exchange for a 3-year teaching commitment (Terziev & Forde, 2021). Few programs, however, offered a summer orientation, and there was no evidence of continued induction support for hired teachers. Graduates of the residencies studied by Silva et al. (2014) reported receiving mostly similar levels of induction—particularly from mentor/master teachers—to other novice teachers in their districts overall, though the authors found some evidence of increased support from other program-affiliated staff, particularly in residents’ second year on the job. Matsko et al. (2021) did not include any questions on their survey about financial incentives or induction.

Together, these studies offer limited evidence of a somewhat distinct teacher preparation pathway under the residency model, largely driven by differences in recruitment and clinical experiences. Across settings, residencies offer longer clinical placements, provide more training and compensation to mentors, and more directly target prospective teachers in high-needs subject areas like math and science. In other areas, though, residencies seem no different from traditional or alternative pathways, with field placement sites that are no more intentionally assigned, mentoring that is no more effective, and prospective teachers that are no more racially diverse. The comparative evidence around other features is either mixed (e.g., candidate quality, curricular integration) or absent altogether (e.g., district partnerships, financial incentives).

However, these studies are also hampered by several limitations that prevent a fully comprehensive comparison of the features of residencies and nonresidencies. First, two of the three studies examine only a small number of programs. Terziev and Forde (2021) focused on four residencies in Pennsylvania, all in their first 2 years of implementation, and only compared them to the traditional pathways at the same institutions. Matsko et al. (2021) focused on the single labor market of Chicago, acknowledging that their results were heavily driven by two residencies. As a result, it is difficult to know if the differences identified in these studies truly stem from the residency model or instead simply from idiosyncratic variation among programs. Though more comprehensive, Silva et al. (2014) only made comparisons based on graduate characteristics, failing to contrast many of the programmatic features of residencies and nonresidencies. Second, all three studies relied solely on external stakeholders like funders or state departments of education to classify programs as residencies, a restriction that might either exclude or misclassify other programs that have adopted (features of) the residency model. Third, all three studies focus only on a static picture of 1 or 2 years, failing to investigate how residencies may have either converged with or diverged from traditional pathways in terms of the features that characterize them over time. In particular, the absence of any longitudinal analyses obscures whether residency programs may have served as a disruption in the field of teacher education, introducing new features and influencing traditional programs to adopt them over time. Finally, especially in the case of Matsko et al. (2021) and Silva et al. (2014), contrasts between residencies and nonresidencies stemmed from perceived differences in features collected via surveys of preservice and novice teachers (and their mentors), who may offer a less accurate and stable recollection of program features than either administrative records or program leaders themselves.

Our study addresses each of these limitations. We examine a statewide sample of between 5 and 34 residencies across multiple classification schemes along all eight features of the residency model, based on a combination of administrative data and program leader survey responses. Additionally, we use a longitudinal approach to consider how programs’ adoption of the residency model—as well as its features—has changed over time.

Research Questions

Our study was motivated by the following two descriptive research questions:

To what extent do the features thought to characterize the residency model actually distinguish the pathways of residencies from those of nonresidencies?

How have these distinctions changed over time?

Methods

Data

Our study combines responses to a novel survey administered to all programs in the state with an administrative dataset from Tennessee’s longitudinal data system containing information on the characteristics of all program completers.

Survey Data

We began by designing a survey to explore the statewide prevalence of the programmatic features that typically characterize the residency model. Specifically, our survey asked the degree to which each program in Tennessee identified as a teacher residency (e.g., fully, partially, not at all), whether and to what extent it had implemented many features, and, for both, the year in which it had begun doing so.¹

To construct our survey, we reviewed existing literature on the residency model (Berry et al., 2008; Guha et al., 2016) and drew on the criteria of the NCTR, both as listed on their website (NCTR, n.d.-b) and as described via personal communication with NCTR leadership. We also consulted with teacher education policy officials at the Tennessee Department of Education (TDOE) as well as program leaders from the Tennessee Association of Colleges for Teacher Education (TACTE), conducting an expert validity check of our survey that led to revisions based on their piloting and feedback. One such revision was our operationalization of a “program” based on student population as one of three teacher preparation pathways common to most providers: undergraduate, graduate, and alternative (for job-embedded candidates already serving as the teacher of record). Though this conceptualization may mask some additional variation within a “program” (for example, by subject area or grade), program leaders felt it was the level that best distinguished among the various pathways to teaching housed in each institution while keeping each pathway internally homogeneous.

We administered the survey in early 2020 via direct outreach to all program leaders (typically, deans, chairs, and/or program directors) of institutions that prepare teachers across the state. Respondents were asked to complete the survey once for each program in their institution and were encouraged to involve as many different program leaders within an institution as necessary. Our effort was supported both over email and in person at a TACTE conference by members of the TDOE and by program leaders with whom we had previously developed close relationships. We offered a $20 gift card as an incentive for completing the survey. Outdated contact information for a small number of programs resulted in targeted follow-up administrations over the next 6 months. Of all programs, 69.5% responded to our survey, covering 83.2% of all teachers prepared in the state during our sample window.

Administrative Data

We then linked survey responses to an administrative dataset prepared by the state containing comprehensive information about all completers of preservice teacher preparation programs since the 2009–2010 academic year. These data include demographic information such as gender and race, admissions criteria like high school GPA and SAT scores, and details of clinical placement experiences, including identifiers for linking to clinical mentors, indications of clinical placement type, and endorsement areas.²

Sample

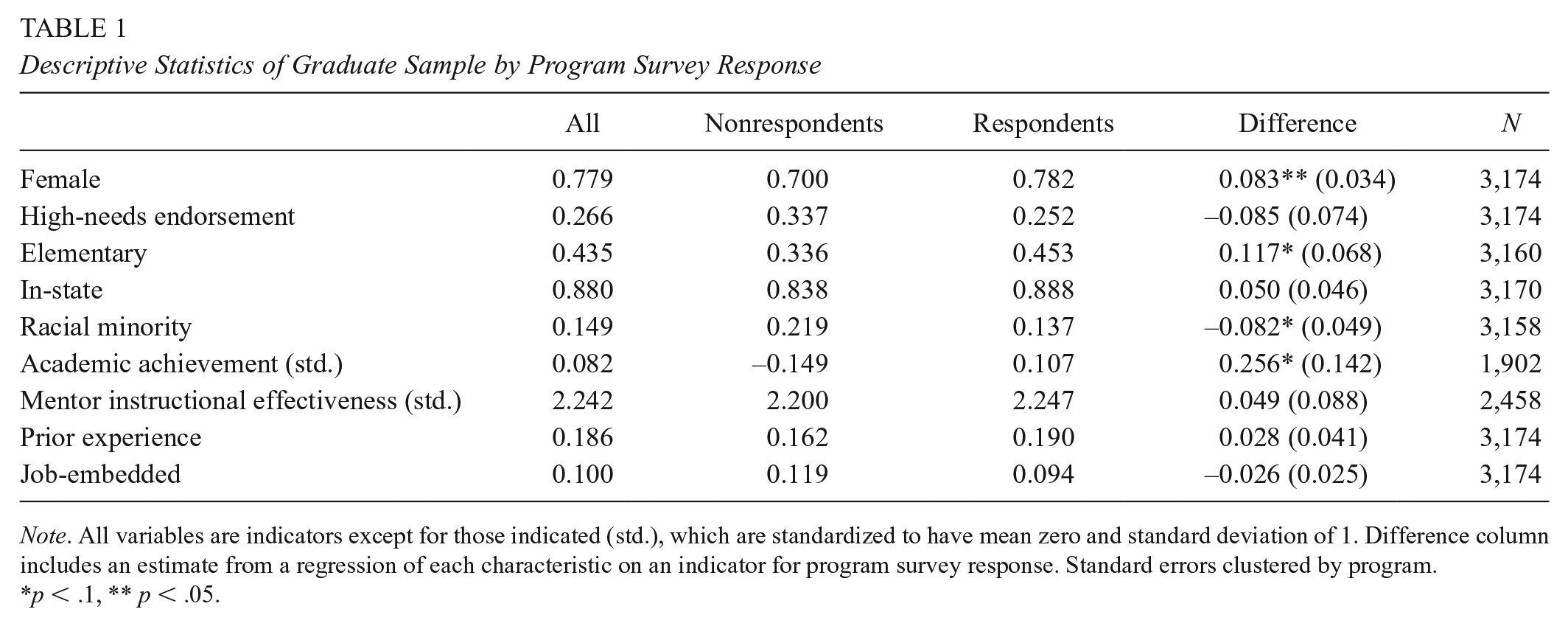

For our first research question focused on the current distinctiveness of residencies, our final analytic dataset includes 72 different programs within 36 institutions during the 2018–2019 academic year. To assess the external validity of our sample, we compare in Table 1 the 2,679 unique program completers from these responding programs to the 495 completers from the 26 nonresponding programs within 12 institutions, finding some marginally significant differences. Nonresponding programs had, on average, more completers of color, fewer women, fewer completers seeking elementary certification, and completers with lower levels of prior academic achievement. These distinctions encourage some modest caution in generalizing our findings from the survey subsample to all completers and programs across Tennessee; however, the breadth of our nearly statewide sample still offers a substantial improvement in external validity over previous work focused on a small number of programs.

Table 1. Descriptive Statistics of Graduate Sample by Program Survey Response

Note. All variables are indicators except for those indicated (std.), which are standardized to have mean zero and standard deviation of 1. Difference column includes an estimate from a regression of each characteristic on an indicator for program survey response. Standard errors clustered by program.

* p < .1, ** p < .05.
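The "(std.)" rescaling described in the table note can be sketched as a simple z-score. This is a minimal illustration, not the authors' code: the function name `standardize` is hypothetical, and the use of the population standard deviation (rather than the sample standard deviation) and of the full completer sample as the reference group are our assumptions, since the note does not specify either.

```python
from statistics import mean, pstdev


def standardize(values):
    """Hypothetical helper: rescale values to mean 0 and SD 1.

    Assumes the population SD (pstdev); the source does not say
    which SD or which reference sample was used.
    """
    mu = mean(values)
    sd = pstdev(values)
    if sd == 0:
        # A constant variable cannot be rescaled to SD 1.
        raise ValueError("cannot standardize a constant variable")
    return [(v - mu) / sd for v in values]


# Usage: made-up scores; the middle value equals the mean, so it maps to 0.
z = standardize([2.0, 4.0, 6.0])
```

Under this rescaling, a difference of 0.2 in a standardized row of Table 1 would be read as a gap of two tenths of a standard deviation between groups.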

Our second research question, which explores changes in the distinctiveness of residencies over time, extends this dataset back to 2014–2015, covering five academic years and producing a sample of 353 observations for 83 programs within 39 institutions, representing 13,988 program completers.³

Residency Classification Approaches

Our first challenge was determining whether, when, and to what extent a program should be considered a residency during our observation window. To address this, we developed two distinct approaches to classification, which we describe below.

External Classification

In our first approach, which mimics prior work, a program is classified as a residency only if deemed so by one of two external stakeholders: the NCTR or the TDOE. Through substantial personal communications, and supported by existing organizational documentation, we worked with these two expert stakeholders to determine which programs across the state should be designated as residencies, a classification that remained constant for each program across all years in our sample. This approach is our strictest, identifying only five programs in our survey sample, perhaps indicating the low prevalence with which the residency model has been adopted statewide. However, it is also possible that this approach inappropriately excludes programs that have adopted the residency model despite not receiving any official designation. If so, it would underestimate any distinctions between residencies and nonresidencies, given that these highly aligned programs would be classified as the latter. Another potential concern is that these five programs may differ from the rest of the state in ways that have little to do with the residency model; if so, we might overestimate any programmatic distinctions and attribute them to the residency model itself rather than to unobserved idiosyncrasies.

Program Self-Classification

Therefore, our second classification approach relies on program leaders themselves to identify whether their programs are residencies. Specifically, a program is classified as a residency if its leader selected either “fully” or “partially” in response to the initial survey question, “To what degree do you consider your teacher preparation program to be a ‘residency program’?” The classification begins with the year provided in the follow-up question, “Please select the school year in which you began to consider your program a ‘[full/partial] residency,’” and includes all subsequent years. While this approach may address some of the shortcomings detailed above, it is also possible that programs may claim to be partial or full residencies despite not implementing the model in full or with fidelity. As such, we present results using both approaches to determine whether any observed differences between residencies and nonresidencies are robust to classification scheme.
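The two classification rules can be expressed as small functions over a program-year. This is a sketch under stated assumptions, not the authors' code: the record type `ProgramSurvey`, its field names, and the convention of labeling each school year by its spring year (e.g., 2016 for 2015–2016) are all hypothetical. We also assume that a "fully" or "partially" response with no reported start year is treated as not a residency; the source does not say how such cases were handled.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ProgramSurvey:
    """Hypothetical survey record; field names are illustrative only."""
    program_id: str
    self_degree: str             # "fully", "partially", or "not at all"
    start_year: Optional[int]    # spring year of the reported start, e.g. 2016 for 2015-2016
    externally_designated: bool  # designated a residency by the NCTR or the TDOE


def is_residency_external(p: ProgramSurvey, year: int) -> bool:
    # External classification is constant across all sample years;
    # `year` is accepted only so both rules share one signature.
    return p.externally_designated


def is_residency_self(p: ProgramSurvey, year: int) -> bool:
    # Self-classification: "fully" or "partially", from the reported
    # start year onward (assumption: a missing start year means "no").
    if p.self_degree not in ("fully", "partially"):
        return False
    return p.start_year is not None and year >= p.start_year


# Usage: a self-identified partial residency since 2015-2016 with no external designation.
prog = ProgramSurvey("A", "partially", 2016, False)
flags = [is_residency_self(prog, y) for y in range(2015, 2020)]
```

Keeping both rules behind the same `(program, year)` signature makes it easy to rerun every comparison under each scheme, which is the robustness check the paragraph above describes.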

Analytic Strategy

For our first research question, we separately regress each feature on an indicator for whether a given program was classified as a residency, in effect comparing the prevalence of each feature between residencies and nonresidencies in 2018–2019 under both classification approaches described above. We cluster standard errors at the institution level, given that the decision to adopt a particular feature at one program (e.g., undergraduate) may be related to the presence and timing of adoption of that feature at another program (e.g., graduate) within the same institution.⁴
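One such per-feature regression can be sketched with the standard library alone. The code fits ordinary least squares of a feature on a constant and a residency indicator. The intercept is then the nonresidency mean and the slope is the residency-minus-nonresidency difference. It also computes a cluster-robust (sandwich) standard error for the slope, with one cluster per institution. The paper does not name its software or its finite-sample adjustment. The optional factor G/(G-1) × (N-1)/(N-K) used here is a common convention and is our assumption, as is the function name `ols_binary_clustered`.

```python
from collections import defaultdict
from math import sqrt


def ols_binary_clustered(y, d, clusters, small_sample=True):
    """Hypothetical helper: regress y on [1, d]; return (b0, b1, se_b1)
    with cluster-robust (sandwich) standard errors."""
    n = len(y)
    # Build X'X and X'y for the design matrix X = [1, d].
    s_d = sum(d)
    s_dd = sum(di * di for di in d)
    s_y = sum(y)
    s_dy = sum(di * yi for di, yi in zip(d, y))
    det = n * s_dd - s_d * s_d
    if det == 0:
        raise ValueError("indicator has no variation")
    inv = [[s_dd / det, -s_d / det], [-s_d / det, n / det]]  # (X'X)^-1
    b0 = inv[0][0] * s_y + inv[0][1] * s_dy
    b1 = inv[1][0] * s_y + inv[1][1] * s_dy
    # Sum the score x_i * u_i within each cluster (institution).
    scores = defaultdict(lambda: [0.0, 0.0])
    for yi, di, g in zip(y, d, clusters):
        u = yi - b0 - b1 * di
        scores[g][0] += u
        scores[g][1] += di * u
    # "Meat" of the sandwich: sum over clusters of s_g s_g'.
    meat = [[0.0, 0.0], [0.0, 0.0]]
    for s in scores.values():
        for r in range(2):
            for c in range(2):
                meat[r][c] += s[r] * s[c]
    # Sandwich: (X'X)^-1 * meat * (X'X)^-1.
    tmp = [[sum(inv[r][k] * meat[k][c] for k in range(2)) for c in range(2)] for r in range(2)]
    v = [[sum(tmp[r][k] * inv[k][c] for k in range(2)) for c in range(2)] for r in range(2)]
    v11 = v[1][1]
    if small_sample:
        g_count = len(scores)  # requires at least two clusters
        v11 *= (g_count / (g_count - 1)) * ((n - 1) / (n - 2))
    return b0, b1, sqrt(v11)


# Usage with made-up data: one feature indicator per program, grouped by institution.
feature = [0, 1, 0, 1, 1, 1]
residency = [0, 0, 0, 1, 1, 1]
institution = ["A", "A", "B", "B", "C", "C"]
b0, b1, se = ols_binary_clustered(feature, residency, institution)
```

Because the regressor is binary, `b0` reproduces the nonresidency share and `b0 + b1` the residency share. This mirrors how the constant and coefficient columns of a comparison table relate to the two group means.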

For our second research question, we graphically represent the annual proportion of both residencies and nonresidencies reporting the adoption of each feature over the past 5 years. Although our administrative dataset begins in 2009–2010, and our survey asks about the timing of adoption of a given feature back to the same year, we choose the 2014–2015 academic year as the starting point for these analyses because proportions in earlier years are so sensitive to year-to-year changes that they are uninformative and even misleading. For example, prior to 2014–2015, only two programs were identified as residencies by external stakeholders, and before 2012–2013, no programs self-identified as partial residencies; adoption of a feature by just one program (or the entry into the denominator of a newly established program without that feature) unduly influences our estimates. However, for the interested reader, we reproduce all figures related to our second research question across the full decade in Appendix A.
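The annual proportions, and their sensitivity to small denominators, can be sketched as follows. The function `annual_share`, the dictionary keys, and the toy panel are all hypothetical. Years are labeled by their spring year (e.g., 2015 for 2014–2015) as an assumption. The toy panel mimics the situation described above: with only two residencies in a year, one program's adoption moves the residency share by 50 percentage points.

```python
from collections import defaultdict


def annual_share(panel, group_flag, feature):
    """Hypothetical helper: share of programs reporting `feature` in each
    year, separately for residencies (True) and nonresidencies (False).

    `panel` is a list of program-year dicts with a "year" key plus the
    `group_flag` and `feature` keys (illustrative names only).
    """
    counts = defaultdict(lambda: [0, 0])  # (year, is_residency) -> [n_with_feature, n_programs]
    for row in panel:
        key = (row["year"], bool(row[group_flag]))
        counts[key][0] += int(bool(row[feature]))
        counts[key][1] += 1
    return {key: k / n for key, (k, n) in sorted(counts.items())}


# Toy panel: two residencies per year, so one adoption shifts their share from 0.5 to 1.0.
panel = [
    {"year": 2014, "res": True, "long_placement": True},
    {"year": 2014, "res": True, "long_placement": False},
    {"year": 2014, "res": False, "long_placement": False},
    {"year": 2015, "res": True, "long_placement": True},
    {"year": 2015, "res": True, "long_placement": True},
    {"year": 2015, "res": False, "long_placement": True},
    {"year": 2015, "res": False, "long_placement": False},
]
shares = annual_share(panel, "res", "long_placement")
```

Adding a newly established program without the feature works the same way in reverse. It enlarges the denominator and pulls the share down even though no existing program changed its practices.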

Results

Below, we compare the prevalence of each model feature among residencies to that among nonresidencies in 2018–2019 to identify whether and how residencies actually offer a unique pathway into teaching. We begin by classifying programs based on the external determinations of the NCTR and the TDOE before turning to programs’ own self-identification via survey response. Then, we turn to exploring how the observed contrast in features between residencies and nonresidencies has changed over time.

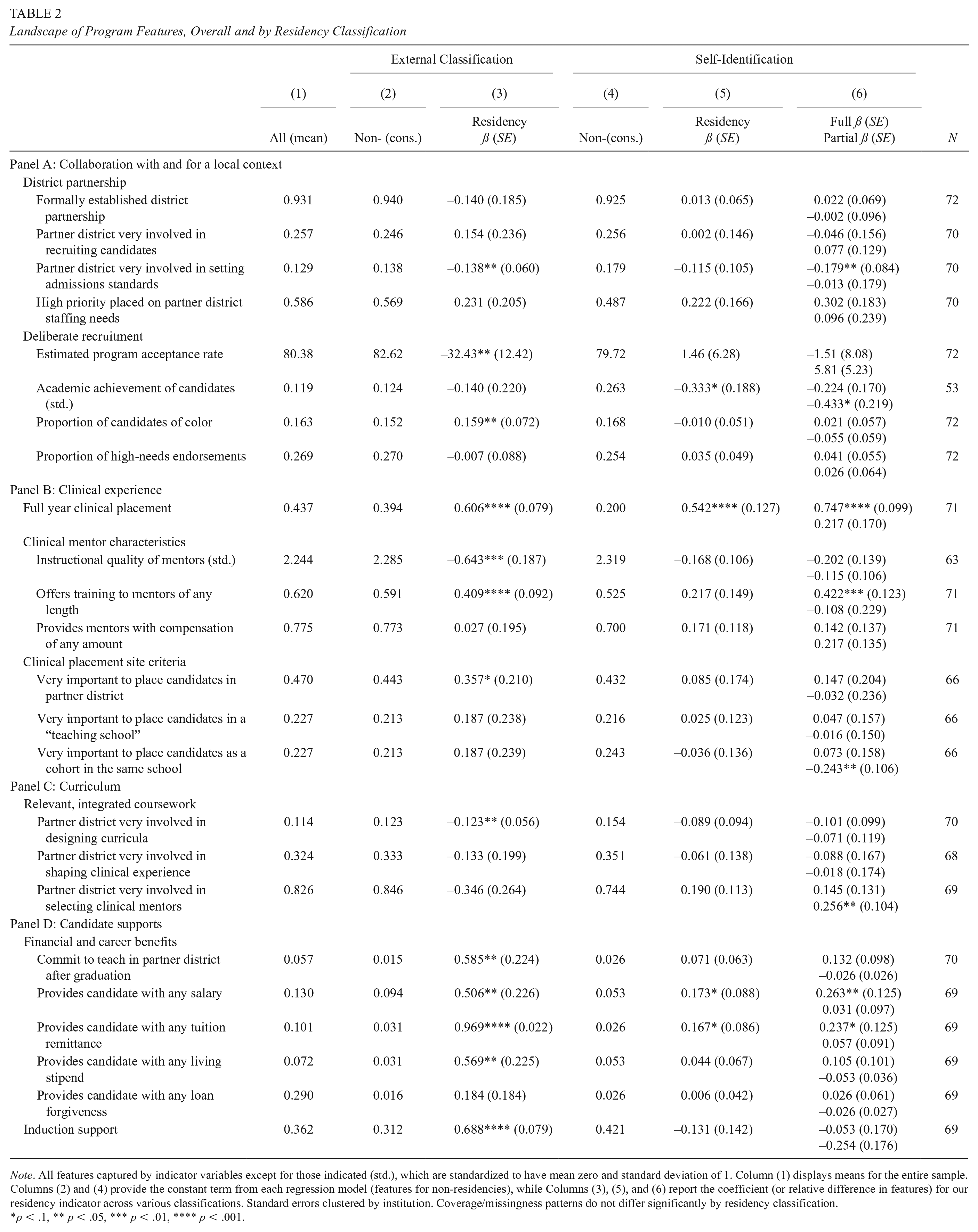

External Classification

Our first approach only classified five programs as residencies (about 7% of our sample). Although these programs were residencies for all graduating cohorts, some were not established until partway through the past decade; as a result, they make up an increasing share of all programs in the state over time, as seen in Figure 2. Overall, programs deemed residencies by either the NCTR or the TDOE reported significantly different constellations of features than traditional programs across the rest of the state, though not for all programmatic areas.

Figure 2. Adoption of the residency model over time.

Looking more closely, the first panel of Table 2 (columns 2 and 3 only) illustrates that externally classified residencies did not seem to have unique relationships with their partner districts—largely because similarly strong district relationships existed at nonresidencies—though they did attract somewhat different cohorts of prospective teachers. For example, residencies were no more or less likely to have a partner district, they placed similar priority on the staffing needs of partner districts, and their partner districts were no more or less likely to have high levels of involvement in recruitment; in fact, these partner districts may even have had somewhat lower levels of involvement in setting admissions standards for residencies. Relatedly, residencies and nonresidencies enrolled cohorts with comparable proportions of candidates seeking endorsements in high-needs subject areas. As for other cohort characteristics, residencies reported substantially lower estimated acceptance rates (by 32.4 percentage points, p = .013)—though with no difference in levels of prior academic achievement 5 —as well as twice the level of racial diversity (31.1% candidates of color compared to 15.2%, p = .003).

Table 2. Landscape of Program Features, Overall and by Residency Classification

Note. All features are captured by indicator variables except those marked (std.), which are standardized to have a mean of zero and a standard deviation of 1. Column (1) displays means for the entire sample. Columns (2) and (4) provide the constant term from each regression model (i.e., the prevalence of features among nonresidencies), while Columns (3), (5), and (6) report the coefficient on our residency indicator (i.e., the difference in features relative to nonresidencies) under the various classifications. Standard errors are clustered by institution. Coverage/missingness patterns do not differ significantly by residency classification.

* p < .1, ** p < .05, *** p < .01, **** p < .001.

As shown in panel B, externally classified residencies also offered distinct clinical experiences. All had yearlong clinical placements, compared to just 39.4% of other programs (p < .001). Additionally, all required mentor training, compared to just 59.1% of other programs (p < .001); however, residencies were no more likely to compensate these mentors. Moreover, they tended to recruit mentors with lower average levels of instructional quality (by 0.64 standard deviations, p = .002). Finally, externally classified residencies may have been slightly more selective about how they determined clinical placements, as they were marginally more likely to place a high priority on ensuring that these sites were in partner districts (35.7 percentage points, p = .098).

Panel C reveals little difference between residencies and nonresidencies in the degree of integration between coursework and clinical placements, and the differences that do exist may, in fact, favor nonresidencies. Traditional programs were slightly more likely (12.3 percentage points, p = .034) to report high levels of partner district involvement in curricular design, while reported levels of district involvement in shaping clinical experiences and recruiting mentors were statistically comparable (though similarly trending in favor of nonresidencies).

Perhaps the most significant distinctions between residencies and nonresidencies lie in the final panel, regarding how candidates were compensated and supported during and after their programs. Externally classified residencies were overwhelmingly more likely to offer candidates salary (50.6 percentage points, p = .032), tuition remittance (96.9 percentage points, p < .001), and a living stipend (56.9 percentage points, p = .017) than nonresidencies, perhaps linked to a similarly higher likelihood (58.5 percentage points, p = .013) of requiring candidates to commit a certain number of years to teaching in partner districts after program completion. Finally, residencies were far more likely to offer induction supports to graduates (68.8 percentage points, p < .001).

Program Self-Classification

Self-classification broadened our definition of a residency to include 32 programs (44.4%) that considered themselves either full (N = 13; 18.1%) or partial (N = 19; 26.4%) residencies. Some of these programs (N = 6) began identifying as residencies before the earliest year in our data, while most (N = 26) adopted the model during our observation window. Program leaders reported adopting the residency model at a mostly steady pace throughout the decade; however, self-identified partial residencies, which did not exist at all until 2013, saw particularly high adoption in the last year of our sample (see Figure 2). Overall, under this classification approach, self-identified residencies offered at least a somewhat distinct pathway to teaching along some of the same dimensions as externally classified residencies; however, these distinctions are consistently much smaller, and they appear to be driven almost entirely by self-identified full residencies, with partial residencies offering mostly the same programmatic experience as traditional programs.

For example, in panel A of Table 2 (columns 4 through 6), self-identified residencies and nonresidencies again displayed similar frequencies and strengths of district partnerships (though, as before, full residencies reported lower likelihoods of having high partner district involvement in setting admissions standards). In looking at cohort composition, though, we see a less favorable pattern than that found under the prior classification approach. Self-identified residencies reported similar acceptance rates to nonresidencies and actually enrolled candidates with marginally lower levels of past academic performance (0.33 standard deviations, p = .087). Furthermore, cohorts of self-identified residencies were no more racially diverse than those of nonresidencies and had similar proportions of candidates seeking high-needs endorsements.

The second panel reveals again that residencies reported offering consistently unique clinical experiences. As with externally classified residencies, self-identified residencies were much more likely than nonresidencies to have yearlong clinical placements (54.2 percentage points, p < .001), though this feature was no longer universal (74.2%); moreover, the difference in the prevalence of longer clinical placements was significant only for full residencies. Similarly, self-identified full (but not partial) residencies were again more likely to report training mentors (by 42.2 percentage points, p = .002). All programs tended to recruit equally instructionally effective mentors and were similarly likely to compensate them. Unlike under our external classification, self-identified residencies and nonresidencies prioritized various clinical placement site criteria at mostly similar levels.

Panel C shows a pattern mostly consistent with the external classification with regard to self-identified residencies’ level of curricular alignment: residencies and nonresidencies reported similar levels of partner district involvement in designing program curricula, shaping clinical experiences, and selecting mentors.

Lastly, as under the previous classification approach, self-identified residencies continued to report somewhat higher likelihoods of financially supporting candidates during their programs; however, these differences are substantially reduced compared to the external classification and are only significant for salary and tuition remittance. Additionally, they are driven entirely by full residencies, with partial residencies offering on average the same incentives as traditional programs. Unlike in the prior classification approach, we find no difference between residencies and nonresidencies in their probabilities of requiring a commitment to teach in the partner district or offering induction after program completion.

Change in Contrast Over Time

To this point, we have distinguished residencies and nonresidencies only during the last year of our sample. However, programs’ self-identifications, as well as their features, can fluctuate from year to year. While the preceding analyses help uncover the current degree of average differences between residencies and nonresidencies, they reveal little about how these distinctions have changed over time.

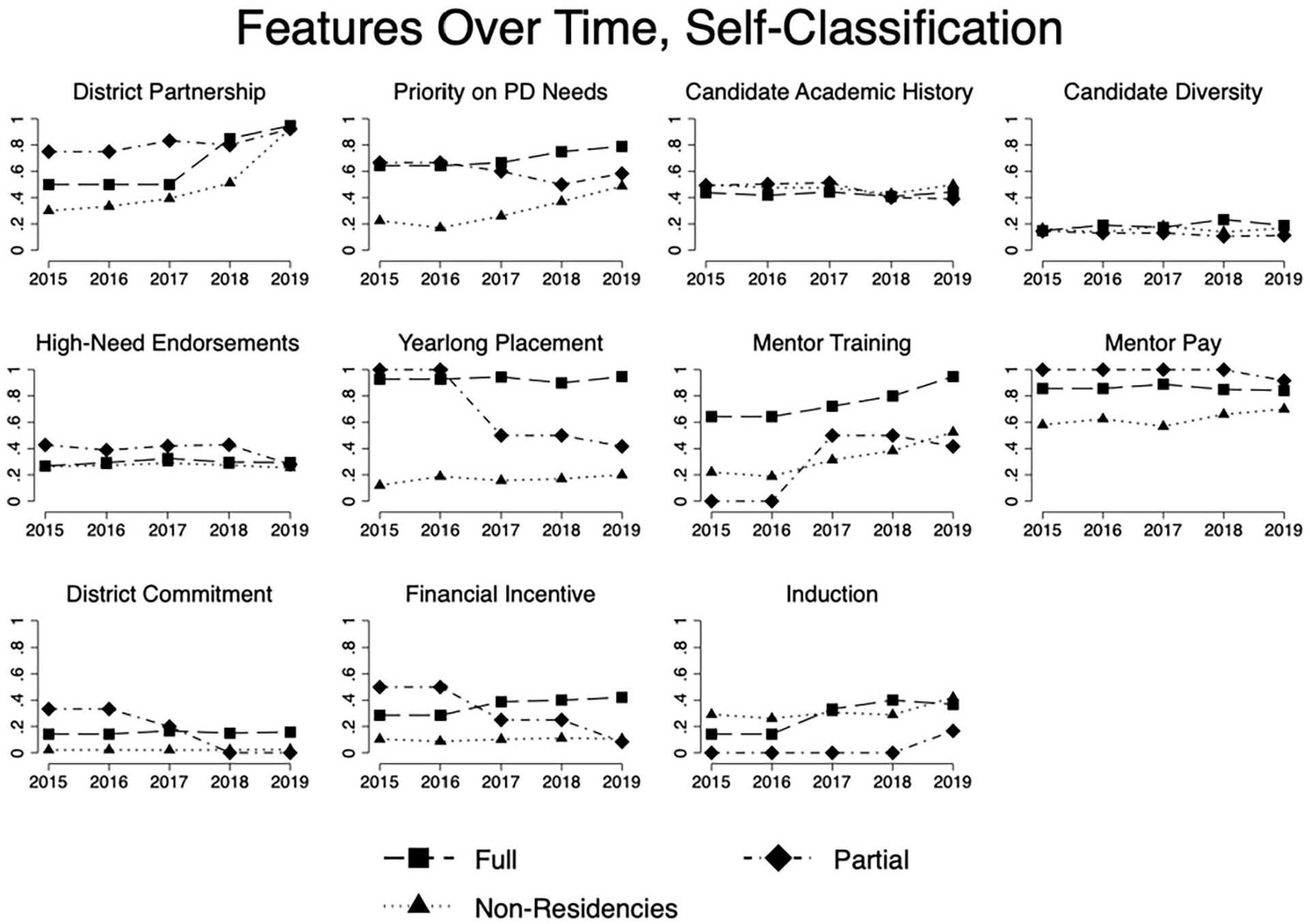

As shown in Figure 2, a growing number of programs identified as residencies over the past decade, particularly in the last few years. Moreover, an increasing proportion of all programs adopted many features of the residency model, forming district partnerships, prioritizing their staffing needs, and offering mentor training, as well as, to a lesser extent, providing yearlong clinical placements, induction programs, and financial incentives (see Figure 3). Consequently, to understand how these two concurrent trends affected the distinctiveness of the residency model, we produce a series of graphs that capture the proportion of both residencies and nonresidencies (under each classification approach) that reported having adopted a given feature in each year since 2015. (For the much noisier and less stable graphs dating all the way back to 2010, see Appendix A.)

Figure 3. Adoption of residency model features over time.

Overall, and across both classification approaches, we find suggestive evidence that the distinctiveness of the residency model is declining over time. We begin with our external classification approach in Figure 4, where there is some evidence that externally classified residencies became slightly less distinct from nonresidencies over recent years, largely due to diminishing contrasts in mentor training and pay, clinical placement length, financial incentives, and postcompletion commitments. For most of these features, this declining distinctiveness stemmed from nonresidencies adopting certain features (i.e., “catching up”)—see, for example, the increasing prevalence of mentor training, yearlong clinical placements, and financial incentives among nonresidencies since 2015. Additionally, there was even a diminishing contrast for features like the existence and strength of district partnerships, which, though increasingly adopted among residency programs, grew in prevalence among nonresidency programs at a faster rate. To a lesser extent, we also see some evidence of new residencies failing to adopt all the model’s features (i.e., “watering down”), as shown by the establishment of residencies in 2017 that did not pay mentors or require a commitment to teach in the partner district after graduation.

Figure 4. Adoption of residency model features over time by external classification.

Turning to self-identified residencies in Figure 5, we see an even more salient story of diminishing contrasts since 2015, when residencies were more clearly distinct from traditional programs in terms of district partnerships, clinical placement length, mentor training and pay, and postcompletion commitments. This decline stems equally from a “watering down” of the residency model, as shown by the decreasing prevalence of yearlong clinical placements and district commitments among self-identified residencies since 2016, and from nonresidencies “catching up,” given their increasing adoption of mentor training, mentor pay, and district partnerships over the same time frame, usually outpacing similarly rising rates of implementation among residencies.

Figure 5. Adoption of residency model features over time by survey self-classification.

Digging deeper, we find that most of the “watering down” stems from partial residencies (see Figure 6), which, starting in 2017, placed a lower priority on partner district needs, did not always require yearlong clinical placements, and offered fewer financial incentives. Given that earlier-adopting partial residencies sometimes had higher rates of these features than earlier-adopting full residencies, it is even more clear that these later-adopting partial residencies were not implementing the full model with fidelity. Additionally, once we separate out partial residencies, we see that prevalence rates of most features remain relatively consistent over time for full residencies.

Figure 6. Adoption of residency model features over time by tiered survey self-classification.

Despite this decline, a few features consistently and clearly distinguished externally classified and self-identified full (though not partial) residencies from nonresidencies. The predominant feature once again is a longer clinical placement of at least 7 months, which, across all 5 years, was between three and four times more common in residencies than in nonresidencies. The fact that it remained a distinguishing feature for self-identified residencies—avoiding becoming “watered down” and without nonresidencies “catching up”—suggests that program leaders began to identify their programs as residencies around the same time that they extended the duration of clinical placements. Similarly, the provision of candidates with financial incentives remained a consistent distinguishing feature, universal to externally classified residencies over all 5 years and between two and three times more prevalent among self-identified full residencies than traditional programs.

Discussion

Despite the increasing popularity of the residency model as a purportedly innovative pathway to teaching, the extent to which residencies actually offer preparatory experiences distinct from traditional programs has gone largely unexamined (Reagan et al., 2021). Therefore, our analysis of which features of teacher preparation were adopted over the past decade by nearly all programs in the state offers critically needed clarification as to whether residents experience a unique pathway to teaching, a crucial first step for determining whether the promising outcomes found in prior literature (summarized in Chu & Wang, 2022; Guha et al., 2016) can truly be attributed to the residency model.

Corroborating the Distinctiveness of the Residency Model

Across classification approaches, we find that a few of the more salient features of the model significantly distinguish residencies from nonresidencies. First and foremost, a full-year clinical placement appears to be the hallmark characteristic of residencies. While this is perhaps unsurprising, given that the residency model is founded on the bedrock of intensive clinical preparation, the high prevalence of adoption among residencies, the consistent magnitude of the distinction from nonresidencies, and the persistence of the contrast over time strongly suggest that both external stakeholders and program leaders view a yearlong clinical placement as the defining feature of the residency model. This is consistent with prior research finding longer clinical placements to strongly distinguish residencies from other pathways (Matsko et al., 2021; Terziev & Forde, 2021).

Second, residencies were significantly more likely to offer financial incentives and support to prospective teachers, most often in the form of tuition remittance or salary and benefits. This feature differentiated both externally classified and self-identified full residencies from nonresidencies; however, while every single program in the former group adopted at least one financial incentive, it was far less prevalent among the latter group, perhaps suggesting a somewhat divergent understanding between external stakeholders and program leaders of what is necessary to be considered a residency. Offering financial incentives was also a relatively persistent distinction over the past decade and aligned with limited prior research, at least for distinguishing residencies receiving funding from a state department of education from traditional pathways (Terziev & Forde, 2021).

Finally, across classification approaches, residencies were consistently more likely to offer training to clinical mentors. Residencies clearly placed a priority on investing time and resources into the crucial mentor-resident relationship, again consonant with prior literature (Matsko et al., 2021; Terziev & Forde, 2021); that said, they did not recruit more (and perhaps even recruited less) instructionally effective or experienced teachers; nor did they commit more seriously to compensating them. Additionally, training for clinical mentors became somewhat less effective at distinguishing residencies from nonresidencies over time due to its rising prevalence among traditional programs toward the end of the decade.

There were other differences between residencies and nonresidencies that were either smaller or less robust to classification approach. For example, externally classified residencies appeared to have somewhat distinct recruitment practices, with greater selectivity and increased enrollment of candidates of color. Notably, the greater racial and ethnic diversity of residency cohorts stands somewhat in contrast to findings from prior analyses comparing multiple externally classified residencies to multiple nonresidencies (Matsko et al., 2021; Silva et al., 2014; Terziev & Forde, 2021), instead aligning with the positive findings of prior studies of individual residencies (Chu & Wang, 2022; Guha et al., 2016). Additionally, externally identified residencies were more deliberate in placing residents in partner districts, more likely to require a commitment to teach in a partner district after graduation, and more likely to offer induction support to graduates; however, these distinctions were smaller in magnitude, shrank over time, and were less evident under our self-classification approach, suggesting that these features may not always be as unique to residencies in practice as they are purported to be.

Challenging the Distinctiveness of the Residency Model

Across the remaining features, residencies and nonresidencies did not differ as expected. For example, the degree of partner district involvement usually did not differ between residencies and nonresidencies (e.g., in recruiting candidates) and, in some cases, even favored nonresidencies (e.g., in setting program admission standards). This is a novel finding, with no prior research comparing the strength of district partnerships between residencies and nonresidencies. However, as we discuss in further detail below, the lack of a distinctly higher level of partner district involvement for residencies may be a result of the unique context of our study, with the TDOE introducing a statewide initiative requiring the establishment of district partnerships for all teacher preparation programs in the state during the past decade (see Figure 3). It is thus important that future studies examine whether this feature distinguishes residencies from nonresidencies in other policy contexts.

Additionally, reports of similar levels of partner district participation in curricular and clinical practice design among both groups of programs suggest that the degree of integration between coursework and clinical practice is not uniquely high among programs adopting the residency model. This is reinforced by the findings of both Matsko et al. (2021), who noted lower levels of perceived program coherence among residencies, and Terziev and Forde (2021), whose interview subjects reported some dissatisfaction with curricular load and alignment. Notably, the level of partner district involvement in shaping curricula and clinical practices was low across all programs, perhaps indicating that achieving a high level of integration and alignment is a universal challenge in teacher education.

Finally, in terms of recruitment, we find that teacher residencies do not appear to be more successful than traditional programs at attracting highly qualified candidates to address staffing shortages in certain subject areas. Regardless of classification approach, residents were no more likely to pursue a high-need endorsement than candidates at nonresidencies, which stands in clear contrast with previous work (Matsko et al., 2021; Silva et al., 2014). Moreover, residencies did not appear to enroll more highly qualified candidates, and their cohorts may actually have had lower average levels of past academic achievement than candidates at nonresidencies, aligned with the results of Matsko et al. (2021).

Changes in the Distinctiveness of Residencies Over Time

Our longitudinal analyses reveal two factors that likely contributed to the limited distinctiveness of the residency model. First, the rise of partial residencies, particularly in recent years, may have resulted in a dilution of the model, as these programs consistently offered a similar path to teaching as traditional programs across almost all features. The fact that self-identified partial residencies are closer in nature to nonresidencies, while self-identified full residencies are closer to externally assigned residencies, may lend greater credence to a more conservative classification of what is required to truly be a residency, and the expansion of partial adopters offers some additional evidence in support of the claim by Reagan et al. (2021) that many residencies may claim “legitimacy by innovation” without actually offering a different pathway to teaching.

While more research is needed to understand why these partial residencies have proliferated over time, institutional theory—particularly around the concept of structural isomorphism as applied by Reagan et al. (2021) in their consideration of the legitimacy of teacher residencies—offers insight into a few potential explanations. For example, one possibility worth exploring is that the increasing popularity of teacher residencies across the state and country could have led programs to increasingly embrace some of the prestige associated with the model without necessarily aligning their programmatic experiences in full. Programs classifying themselves as residencies may have implemented the features over which they have the most direct control (e.g., longer placements) while struggling to adopt those that require funding (e.g., mentor compensation, candidate incentives) or transformation earlier in the teacher pipeline (e.g., diverse and high-quality candidate recruitment), especially among pathways to teaching situated in larger, more traditional institutions of higher education.

Ad hoc conversations with program administrators and state policymakers reflecting on this time period in Tennessee gave credence to this explanation of mimetic isomorphism, in which programs felt pressure to look like their most effective peers. These individuals described a political climate around teacher preparation programs statewide that prioritized innovation and competition, due to a similar federal emphasis present in Race to the Top, a redesign of the state’s public evaluation of teacher preparation programs focused on measures of candidate employment and instructional effectiveness, and the continued expansion of alternative certification pathways like Teach for America. Whether real or only perceived, the high-profile success of the first full teacher residencies on the state report cards 6 and their effectiveness competing with alternative routes to recruit candidates may have led some traditional programs to adopt some of the language around the residency model in order to illustrate their own efforts at innovation.

Within this context of innovation, six of the largest preparers of teachers in the state, governed at the time by a single higher education system, transitioned to a residency model in the earlier part of the decade as part of a larger redesign initiative aimed at developing a more problem-, practice-, and performance-based teacher preparation experience (Goodin et al., 2018; Nivens, 2013; Scott & Teale, 2010). This overhaul, which possibly contributed to the initially greater distinctiveness of the state’s teacher residencies, could have prompted similar mimetic isomorphism among smaller programs following suit; however, given the significant recognition it received from accreditation bodies and teacher education groups, this initiative also likely resulted in a process of normative isomorphism, wherein the organizations that set standards exert influence over institutions through their expectations—here, perhaps tacitly encouraging programs to adopt the label of a partial residency even in the absence of implementing many of the model’s features.

A second reason for the declining distinctiveness of the model involves the increasing prevalence among nonresidencies of many of those features thought to distinguish residencies. Again, more research is needed to better understand why this has occurred. One possibility is that some of the featural innovations that the residency model initially emphasized more heavily than traditional programs could have altered the teacher education landscape as a whole, eventually finding their way into nonresidencies as their potential merits or political cachet became clear to all programs. In such a case, even if residencies are no longer as distinctive as they may have once been, such evidence may imply that the model truly was innovative, possibly introducing disruptions that quickly spread across the state.

As above, institutional theory combined with insights gleaned from our ad hoc conversations with state policymakers and practitioners provide some support for this initial conjecture. The same processes of normative and mimetic isomorphism that may have encouraged some traditional programs to adopt the label of partial residency without all of the model’s features could have pressured other programs to implement certain residency features while continuing to conceptualize themselves as traditional (i.e., nonresidencies). For example, policymakers mentioned that, around the middle of the decade, the Educator Preparation Report Card described above began to track and more heavily emphasize the racial diversity of each program’s cohort as well as the proportion pursuing an endorsement in a high-needs area; as a result, each program—regardless of whether they identified as a full, partial, or nonresidency—felt similar pressure to recruit more candidates of color and prepare more teachers in more difficult-to-staff subject areas, thereby perhaps diminishing some of the distinctiveness of residencies around recruiting diverse candidates in subject areas that meet district staffing needs.

In addition, our conversations also identified specific features that may have proliferated throughout the state due to the more formal pressures of coercive isomorphism. As mentioned previously, the state began to require that all programs establish at least one primary district partnership in order to provide a more context-specific preparation that more directly addresses local staffing needs, while another regulation set more rigorous criteria for teachers’ eligibility to serve as clinical mentors across the state. These formal mandates may have eliminated some of the earlier distinctions between residencies and nonresidencies by raising the bar for all programs with regard to the presence of certain features associated with the residency model.

We note that these follow-up conversations were ad hoc in nature and were not intended to capture the experiences of all policymakers and practitioners in the state comprehensively or even representatively. Much of the context they provide only allows us to conjecture about the possible reasons why residencies and nonresidencies differ in the ways and at the times that we document. However, we believe they offer useful insight into the climate around the state in ways that not only inform our results but also complement the existing theoretical contributions of prior literature and encourage future work to examine more rigorously the pressures and mechanisms that contribute to these institutional changes in the teacher education landscape.

Limitations

This descriptive study has a few other important limitations. First, though we find our operationalization of the residency model theoretically helpful and useful for analysis, we caution against conceptualizing the residency model purely as the sum of its parts. Breaking down the model into its components inherently results in the loss of some of the nuances that distinguish residencies from traditional programs in the gestalt (e.g., a positioning of teaching as a profession with complexities intricately tied to an idiosyncratic context; a conception of teacher learning that, as a result, is deeply embedded in place). In addition, although we consider them one at a time, each feature likely not only makes an independent contribution to the residency model but also may interact with other features in complex and critical ways. For example, a yearlong clinical placement may appear to distinguish residencies from nonresidencies, but it is also possible that it only really makes a difference for residents when combined with another feature (e.g., high-quality, experienced mentors). Moreover, it is possible that teacher residencies could (partially) achieve their intended goal of recruiting, preparing, and retaining teachers in and for hard-to-staff classrooms even without demonstrating that they differ along these particular dimensions (e.g., by distinguishing themselves from traditional programs in other ways). However, since prior literature identified these features as the distinguishing elements of the model, we view this study as a promising initial effort in clarifying whether and how teacher residencies provide a distinct form of teacher preparation.

Second, our operationalization of most features (with the exception of those stemming from demographic and administrative data on residents and mentors) relies on program leaders’ self-reports. We do not observe whether these features are actually in place at each of these programs and are forced to make assumptions about the level or absence of these features in years prior to their reported implementation. While survey respondents were assured that their individual responses would remain confidential, program leaders may have felt some pressure to report the presence or strength of certain features in ways that were flattering to their program or, given the possible isomorphic processes we have detailed, closer to those in the residency model. We believe this is a critical and valuable area of potential future qualitative research that can more closely examine and richly describe the preparation that prospective teachers receive at both residency and nonresidency programs.

Finally, while our survey response rate was quite high, our analytic sample is not fully representative of all programs in the state. In particular, our study somewhat disproportionately excludes pathways containing more candidates of color, men, and secondary teachers, cohort characteristics that are also closely tied to the residency model; as a result, we suggest some caution in generalizing our findings to all (residency) programs and pathways to teaching.

Policy Implications and Future Research

Part of the growing popularity of the residency model has been driven by studies showcasing residents’ increased diversity, heightened rates of employment in high-needs schools and subject areas, and better retention rates (Chu & Wang, 2022; Guha et al., 2016). However, this body of research largely focuses on single residency programs and fails to inquire into whether or how the preparatory experiences of their residents actually differ from those of the graduates of traditional programs to whom their workforce outcomes are compared. This kind of design is inherently limited in the extent to which it can attribute observed differences in graduate outcomes to the residency model itself as opposed to other differences in candidate recruitment, faculty quality, geographic labor market, and so on. In fact, one possible explanation of these prior findings is that residencies did not offer a unique form of preparation at all and that differences in outcomes stemmed entirely from other program idiosyncrasies. Our study, though, suggests that residencies, at least under certain classification approaches, do indeed provide a distinct pathway into teaching and, more importantly, pinpoints the features where distinctions are most evident and that may therefore be most responsible for these previous findings. As a result, we view our study as a crucial first step toward understanding what truly drives the improved outcomes of graduates of residencies. By clarifying the extent to which the features of residencies differ from nonresidencies, we lay the foundation for a broader and more rigorous analysis of the model’s effects on graduate workforce outcomes.

Furthermore, drawing on the descriptive results of this article, we hope to begin disentangling the unique and combinatorial roles of each component in explaining the impacts of the residency model. All eight of the model’s features play important roles in fulfilling the goals of teacher residencies: providing a context-specific preparation for the teaching profession that leverages the strongest mentors and best clinical placement sites to produce high-quality and diverse teachers who address the real needs of school districts. However, the features do not all play the same role, particularly with respect to teacher learning; while some shape how candidates develop into teachers, others likely influence who becomes a candidate in the first place. For example, requiring a full-year clinical placement, which greatly increases the frequency and variety of candidates’ opportunities to practice and cultivate instructional skills with mentoring, may be more influential for, and more closely tied to, teacher learning than offering candidates financial support during this time, which may instead be aimed at bringing a more diverse and promising pool of candidates in the door.

Notably, both the features that distinguish teacher residencies and those they share with traditional programs span the recruitment- and development-focused components of the model. Moreover, this dichotomy is overly simplistic, because many features work toward both ends simultaneously; for example, bringing in more racially diverse and highly qualified candidates changes cohort composition, but that change will inevitably also shape how these candidates learn together, both in their courses and when grouped in their clinical placements. And while an extensive body of prior literature has linked many of these individual features, whether in residencies or traditional pathways, with greater feelings of preparedness and higher levels of instructional effectiveness among aspiring and early-career educators (Ronfeldt, 2021), further comparative, causal research is needed to understand which of these features play the most influential role in shaping teacher learning. We therefore urge other researchers to pursue similar lines of inquiry that can further unpack whether the residency model is truly fulfilling the promise it has shown thus far and to better understand which of its features should be adopted more widely and how.


Appendix A

Acknowledgements

We are grateful to Gina Lucchesi, Angelina Little, Kevin Schaaf, Julie Baker, Jack Powers, Amy Owen, Michael Deurlein, Randall Lahann, and Anissa Listak for their contributions to this work, as well as participants at the Association for Public Policy Analysis and Management (APPAM) and American Educational Research Association (AERA) annual conferences for their helpful comments. This project would not have been possible without the partnership, support, and data provided by the Tennessee Department of Education and partner educator preparation providers. Any remaining errors should be attributed to the authors.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: Matthew Truwit and Emanuele Bardelli received pre-doctoral support from the Institute of Education Sciences (IES), U.S. Department of Education (PR/Awards R305B150012 and R305B200011).


Authors

MATTHEW TRUWIT is a graduate student pursuing his doctorate in quantitative research methods in education and a master’s degree in statistics at the University of Michigan;

MATTHEW RONFELDT is an associate professor of educational studies at the University of Michigan School of Education, 610 E. University Ave., Ann Arbor, MI 48109;

EMANUELE BARDELLI is executive director of information and evaluation at Santa Rosa City Schools, 211 Ridgway Ave, Santa Rosa, CA 95401;