Abstract

We study whether visits to a college campus during eighth grade affect students’ interest in and preparation for college. Two cohorts of eighth graders were randomized within schools to a control condition, in which they received a college informational packet, or a treatment condition, in which they received the same information and visited a flagship university three times during an academic year. We estimate the effect of the visits on students’ college knowledge, postsecondary intentions, college preparatory behaviors, academic engagement, and ninth-grade course enrollment. Treated students exhibit higher levels of college knowledge, efficacy, and grit, as well as a higher likelihood of conversing with school personnel about college. Additionally, treated students are more likely to enroll in advanced science/social science courses. We find mixed evidence that the visits increased students’ diligence on school-related tasks, and we find that the visits reduced students’ desire to attend technical school.

Both access to and success in higher education are important policy concerns, given the positive economic returns to postsecondary education and its potential to promote social mobility (Chetty et al., 2017; Oreopoulos & Petronijevic, 2013). In addition, the importance of a postsecondary degree is likely to increase over time, as demand for workers with formal postsecondary training continues to outpace supply (H. Johnson et al., 2015; National Center for Education Statistics, 2018). For example, seven of the 10 occupations with the highest projected employment growth between 2018 and 2028 require a postsecondary credential (U.S. Bureau of Labor Statistics, 2020).

Unfortunately, many prospective students face nontrivial barriers to postsecondary access and success. First-generation college students,¹ for example, are 19 percentage points less likely to enroll in college than students with at least one parent who graduated from college (Cataldi et al., 2018). Researchers and policymakers typically focus on three specific barriers to college: credit constraints, addressed by providing financial aid; information failures, addressed by providing information about college options and the matriculation process; and gaps in students’ academic preparation, addressed via counseling, tutoring, and remediation (Page & Scott-Clayton, 2016). While such interventions can affect college access, inequalities in postsecondary enrollment, persistence, and completion rates across socioeconomic strata and racial/ethnic groups persist and may be growing (Avery et al., 2020; Bailey & Dynarski, 2011; Baker et al., 2018; Posselt et al., 2012). These persistent opportunity gaps suggest the existence of additional barriers beyond those associated with finances, information, and preparation.

Evidence suggests that other, less tangible barriers may help explain existing gaps in postsecondary access and attainment. Students’ sense of belonging, for example, can play a major role in their choice of whether and where to enroll in college (Allen et al., 2004; Bourdieu & Passeron, 1977; Comeaux et al., 2020; McDonough, 1997). College campuses, however, have struggled to create welcoming environments for historically underrepresented groups (Bettencourt, 2019; Brown McNair et al., 2016; Lewis, 2020; Museus et al., 2012; Vaccaro & Newman, 2016). One strategy for creating a welcoming environment and sense of belonging is early outreach to students from historically underrepresented groups. This article examines an intervention designed to provide students with an early opportunity to acquire experiential knowledge about college.

Using a multisite-randomized field experiment (Raudenbush & Bloom, 2015), we estimate the impact of visits to a college campus in eighth grade on students’ interest in and preparation for college, as a potential strategy for addressing experiential barriers to college. Participating students were assigned to one of two conditions. Those assigned to the treatment condition received an informational packet on postsecondary options and three field trips to the University of Arkansas (the state’s flagship 4-year public institution)² that introduced students to various aspects of college life. Control group students received only the informational packet. Our study is particularly relevant for low-income and first-generation college students, as the majority of students in our sample report having no parent or guardian with a bachelor’s degree. This is also reflected more generally in our research setting: median family income in Arkansas is $15,000 below the national average (Guzman, 2019), over half the students in participating schools receive free or reduced-price lunch, and college-going rates in participating districts range from 31% to 56%.³

We hypothesize that the experience of visiting a college campus multiple times, interacting with students and faculty, and participating in college-readiness programming over multiple visits will have a greater impact on student interest in, preparation for, and socioemotional skills associated with college success than will the receipt of an informational packet. Our results indicate that students assigned to participate in the field trips report higher levels of knowledge about college, college efficacy, and self-reported grit, have more conversations about college with school personnel, and are less likely to express an intention to attend technical school. We find mixed evidence suggesting the trips increased students’ effort on the survey we distributed in school, a proxy for students’ diligence in completing school or college-related tasks. Finally, treatment group students appear more likely to enroll in advanced courses in ninth grade. These results suggest the important role that experience can play in developing socioemotional skills associated with college outcomes as well as students’ actual preparation for college.

This research offers four contributions to the higher education access literature. First, our study focuses on a relatively understudied barrier to college access: limited direct interaction with the college experience. While most interventions addressing gaps in access and success in higher education have focused on financial, informational, and preparational barriers, this study explores how exposure to various aspects of college life via field trips can help students feel confident in preparing for, applying to, and succeeding at an institution of higher education (Cabrera & La Nasa, 2000; Di Maggio, 1982).

Second, this study benefits the literature by exploring the effectiveness of a relatively low-cost and readily scalable intervention designed to offer students tangible experiences with college campus life. The intervention studied here makes use of preexisting resources on campus: Students met with campus advisors from the University’s Center for Multicultural and Diversity Education, met with representatives of academic departments, and were introduced to dorm life and campus dining halls through Residential Life. Given that campus representatives were generally happy to participate and that many schools routinely organize field trips for students, other schools and universities could likely organize similar opportunities for students.

Third, this intervention differs from other programs designed to address college access gaps by targeting students in eighth grade. By limiting their focus to students nearing high school graduation, many college access initiatives intervene too late for students who have already been dissuaded from postsecondary education by their prior experiences and feelings of alienation from formal education (Anders & Micklewright, 2015). Indeed, postsecondary aspirations are often solidified by the time students reach their freshman and sophomore years of high school (Hossler et al., 1989). While not determinative of postsecondary outcomes, early aspirations and preparation for college are important steps toward eventual college enrollment (Holzman et al., 2020; Klasik, 2012).

Finally, we study this unique intervention using a highly rigorous research design: a multisite-randomized controlled trial (Raudenbush & Bloom, 2015). While some programs provide students with exposure to college campuses, such as the U.S. Department of Education’s Talent Search program (which provides an array of support services to students in high school; U.S. Department of Education, n.d.), to our knowledge this is the first study to rigorously demonstrate the ability of early college experiences to affect student interest in and preparation for college. Taken together, this study offers a strong contribution to the field through its rigorous examination of a unique intervention designed to address an understudied barrier to college access: limited tangible experience with college.

The remainder of this article proceeds as follows. First, we discuss commonly theorized barriers to college access and existing evidence about the effectiveness of interventions aiming to address those barriers. Next, we describe our field trip intervention and outline our analytical strategy. We then present our primary findings and conclude with a discussion of their implications.

Prior Literature: Barriers to College Access and Potential Interventions

While this study focuses on an intervention designed to address an experiential barrier to college access, reviewing research on interventions that target a variety of barriers helps situate our article within the existing literature on college access. We categorize barriers to college access as either tangible or intangible. Tangible barriers refer to practical or logistical hurdles students must surmount in order to enroll in college, such as securing funding or completing forms. Intangible barriers are deeper, less concrete barriers that may powerfully shape students’ trajectories, such as lack of representation and inequitable social structures. In the following sections, we describe interventions focused on the tangible barriers of financial constraints, informational constraints, and academic preparation. We then turn to the evidence regarding less tangible barriers to college access.

Tangible Barriers to College Access

Tangible barriers to college entry identified in the literature fall into three categories: a lack of financial resources, a lack of information about college costs and benefits as well as the college application and matriculation processes, and a lack of preparation for college (Page & Scott-Clayton, 2016).

While financial aid programs with various designs can increase college enrollment (e.g., Bartik et al., 2017; Daugherty & Gonzalez, 2016; Goldrick-Rab et al., 2016; Page et al., 2019; Swanson & Ritter, 2020), several factors limit the ability of financial aid programs to improve college access and completion. First, the complicated paperwork required to apply for aid is a significant hurdle for all students and can be particularly challenging for first-generation college students (Bettinger et al., 2009; Holzman et al., 2020; Klasik, 2012). Furthermore, financial aid is typically awarded late in a student’s journey to college, often after they have secured admission to a particular institution. This timing creates uncertainty about one’s ability to pay for college that may deter students from applying to universities with a high sticker cost or from accepting an offer of admission (Kelchen & Goldrick-Rab, 2015). Additionally, financial aid programs can induce undermatching, whereby students who would have been successful in 4-year universities enroll in 2-year colleges because of the available aid (Carruthers & Fox, 2016).

Information failures related to the college application and matriculation process can also disrupt a student’s postsecondary plans (Avery & Kane, 2014; Castleman & Page, 2014; Hoxby & Avery, 2012). Several studies have demonstrated that providing prospective students with information about the college application and matriculation processes can increase rates of applying to and enrolling in college (Barr & Turner, 2017; Hoxby & Turner, 2013; Page & Gehlbach, 2017). However, evidence also indicates that informational interventions may be limited in their ability to affect postsecondary decisions (Bettinger et al., 2009), in part because they often lack meaningful personal interactions (Carrell & Sacerdote, 2017). Indeed, Sanders (2018) found that an intervention pairing the provision of information with personal interactions between current university students and high school students increased enrollment at selective institutions.

Students may also struggle to matriculate at a postsecondary institution because of inadequate academic preparation (Avery & Kane, 2014; G. Gonzalez et al., 2011). This problem may be particularly acute for would-be first-generation college students; for example, Cataldi et al. (2018) find that would-be first-generation students are less likely to take advanced math, Advanced Placement (AP), and International Baccalaureate (IB) courses in high school than continuing-generation students, even though these courses may be particularly useful for college applications.

While researchers consistently find that comprehensive interventions addressing overlapping barriers to college success increase postsecondary enrollment and persistence (e.g., Carrell & Sacerdote, 2017; Carruthers & Fox, 2016; Castleman & Goodman, 2018; Castleman & Page, 2015; Oreopoulos et al., 2014; Oreopoulos & Petronijevic, 2016), such interventions are often expensive and difficult to scale up, and they may fail to maximize benefits by focusing on upper-level high school students. Indeed, while many college access programs offer support to students during the later years of high school, many students fall off a college track in middle school (Wimberly & Noeth, 2005). Furthermore, the focus on “promising” (i.e., already academically successful) students could limit the magnitude of an intervention’s impact (Seftor et al., 2009).

Intangible Barriers to College Access

Students also have to contend with structural inequalities that may make it more difficult to access postsecondary education. Markus and Nurius (1986) argue that individuals’ conceptions of what they could achieve often motivate and drive behavior, but that the so-called “possible selves” individuals envision are shaped by their environment, experiences, and broader social forces. In the context of postsecondary access, this means that students will be more likely to aspire to and prepare for college if they can envision themselves as college students, but that vision may be less salient for students from historically underrepresented populations. This lack of representation could contribute to gaps in postsecondary aspirations that emerge and widen between would-be first- and continuing-generation college students in middle and high school (Anders & Micklewright, 2015). Thus, an intervention aimed at increasing the salience of college life and the experience of college students could broaden the pool of students interested in attending college and shape students’ long-term educational decisions.

In addition to the systemic inequalities that may make the idea of being a college student less salient for historically underrepresented students, first-generation and low-income college students’ knowledge and experiences (e.g., funds of knowledge; N. Gonzalez et al., 1995; Moll et al., 1992; Vélez-Ibáñez, 1988) may be misaligned with the cultural capital (e.g., cultural knowledge and social assets; Bourdieu, 1977) inherent in universities’ complex formal and informal systems students must navigate when applying to and attending college (Collier & Morgan, 2008; Hamilton et al., 2018; Jack, 2019; Lareau, 1989; Perna, 2000; Swidler, 1986). For example, first-generation college students may be less familiar with college application essays, scheduling informational interviews with alumni from a target university, or the availability of institutional financial aid. Cultural mobility theory (Di Maggio, 1982) argues that students can acquire cultural capital from outside the family, suggesting that a school-based intervention could provide students with experiences that build navigational capital (Yosso, 2006) to help students feel confident preparing for, applying to, and successfully attending an institution of higher education.

There is also reason to believe that intervening when students are in late middle school or early high school could change students’ postsecondary outcomes. First, students begin making decisions that affect their postsecondary outcomes relatively early in their educational careers (Cabrera & La Nasa, 2000; Hossler et al., 1989; Klasik, 2012; Wimberly & Noeth, 2005). Second, socioemotional skills, such as grit and conscientiousness, are malleable at this age (Hoechsler et al., 2018) and are predictive of educational attainment (Almlund et al., 2011; Caviglia-Harris & Maier, 2020; Hitt et al., 2016) and career choices (Bandura et al., 2001). Third, and intuitively, the earlier one intervenes, the more likely it is that the student can adjust their trajectory to successfully apply to college. However, a college-focused intervention that occurs too early could fail to resonate with the student, or the student could forget what they learned when they reach high school and start making college-relevant decisions. Thus, we argue that intervening when a student is in eighth grade could be particularly effective in altering students’ postsecondary trajectories: They are close enough to high school for the information to resonate, but far enough away from postsecondary matriculation that all options are still open.

In this article, we test whether an intervention offered in eighth grade and aimed at increasing the salience of college life and enhancing students’ existing forms of capital (by familiarizing students with a college campus) can affect students’ college knowledge and intentions, academic engagement, conversations about college with school personnel, and ninth-grade course load.⁴ We also examine whether the intervention affects students’ socioemotional skills previously associated with college success, such as diligence on school-related tasks, grit, self-efficacy, and self-management. This work addresses gaps in the literature by testing whether a college-focused intervention offered relatively early in students’ schooling can affect their college-going attitudes and decisions.

Materials and Methods

Participants

Recruitment and consent for this study took place in two stages. First, school administrators signed a memorandum of understanding with the research team consenting to participate in the study and allowing the researchers to recruit students to participate. Second, students and parents signed an informed consent form agreeing to participate in the study. These procedures were approved by the institutional review board at the University of Arkansas.

Participating Schools

Schools were initially contacted during the 2016–2017 school year. To ensure that historically underrepresented students were well represented in our sample, we initially reached out to schools within a 2-hour drive of the university whose student bodies comprised at least 50% students of color or at least 60% students receiving free or reduced-price lunch. Sixteen unique schools participated in this study over the 2-year period, with 15 participating in the 2017–2018 school year and 12 participating in the 2018–2019 school year. Drive time between each school and the university ranged from 10 to 90 minutes. Over half of the schools served rural communities (some with populations of fewer than 1,000 residents). No schools served communities with more than 90,000 residents.

There is noticeable heterogeneity among participating schools, as highlighted in data available through the Arkansas Department of Education and the Office for Education Policy at the University of Arkansas. Schools varied in size, with the total number of eighth-grade students within each school ranging from around 50 to 500 students. The share of students receiving free or reduced-price lunch within each school ranged from 51% to 88%, while the share of students of color ranged from 7% to 88% in the year prior to the beginning of the project. College-going rates of high school graduates in participating districts range from 31% to 56%.

Participating Students

At the beginning of each school year (2017–2018 and 2018–2019), all eighth-grade students in participating schools received consent forms and information about our project. In total, 1,478 students across all school sites and years agreed to participate (885 students in Cohort 1 and 593 students in Cohort 2). This translates to schoolwide take-up rates ranging from 12% to 70% for Cohort 1 and from 20% to 63% for Cohort 2. Roughly half of participating students in each school were assigned to treatment.

Table 1 summarizes the characteristics of our analytic sample. About 59% of participating students identified as female. In terms of race/ethnicity, 62% of students identified as White, 21% as Latino/a/x, 3% as Black, and 14% as another race/ethnicity. Among students participating in this study, 64% reported that neither parent held a 4-year degree, and 46% reported that neither parent had a credential beyond high school. About 42% of the students in our sample reported never having visited a college campus prior to this intervention.

Table 1. Within-School Baseline Balance

Note. Min = minimum; Max = maximum; SES = socioeconomic status.

ᵃMean and standard deviation calculated across schools. ᵇEach baseline variable regressed on treatment status and school-by-cohort indicators to test for baseline balance. Standard errors clustered by lottery (i.e., school-by-cohort).

*p < .1. **p < .05. ***p < .01.

Randomization Procedure and Intervention

We used a multisite-randomized research design to identify the causal impact of campus visits on student interest in and preparation for college (Raudenbush & Bloom, 2015). Participating students were randomly assigned to either the treatment (campus visits and information) or control (information only) group within their school.⁵ Students in the treatment condition were invited to visit the University of Arkansas, the state’s flagship public university, three times throughout their eighth-grade year. These visits introduced students to various facets of the college campus experience with the goal of making them feel more comfortable on campus and with the idea of being a college student. Additionally, students in both the treatment and control groups received a college information packet, containing information about college options and career pathways, at the beginning of the spring semester of their eighth-grade year.
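The mechanics of the within-school lottery are not detailed here, so the following is only a minimal sketch of blocked randomization under stated assumptions: each hypothetical block is one school-by-cohort lottery, students within a block are shuffled with a seeded generator, and the first half (rounded down) of each shuffled block is assigned to treatment. The function name `assign_within_blocks` and the seed value are illustrative and are not the authors’ procedure.

```python
import random
from collections import defaultdict


def assign_within_blocks(students, seed=2017):
    """Randomly assign roughly half of each block to treatment (1) or control (0).

    `students` is a list of (student_id, block_id) pairs; a block stands in for a
    hypothetical school-by-cohort lottery. Returns {student_id: 0 or 1}.
    """
    rng = random.Random(seed)  # seeded so the assignment can be reproduced
    blocks = defaultdict(list)
    for student_id, block_id in students:
        blocks[block_id].append(student_id)

    assignment = {}
    for block_id in sorted(blocks):
        ids = sorted(blocks[block_id])  # fix the order before shuffling
        rng.shuffle(ids)
        n_treat = len(ids) // 2  # odd-sized blocks place the extra student in control
        for position, student_id in enumerate(ids):
            assignment[student_id] = 1 if position < n_treat else 0
    return assignment


# Usage with a hypothetical seven-student block.
roster = [(f"s{i}", "school_A-2017") for i in range(7)]
assignment = assign_within_blocks(roster)
print(sum(assignment.values()))  # 3 of 7 assigned to treatment
```

Because assignment happens separately within each block, every school contributes both treatment and control students. This is what allows treated students to be compared with control students from the same school.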

We therefore identify the impact of visiting and experiencing a college campus plus receiving written information, relative to receiving written information about college alone. We hypothesize that the concrete experience of visiting a college campus will leave a more profound and lasting impression on students than will having easy access to written information about postsecondary options. Below, we briefly describe the three visits experienced only by students in the treatment group, then describe the informational packets received by students in both the treatment and control groups.

Visit 1 (Late September–Early October)

The first campus visit included a college information session and campus tour. Students first met with student ambassadors from the college admissions office for a tour that highlighted campus traditions, history, and unique buildings. Students then participated in a workshop developed by staff at the University’s College Access Initiative that discussed what college is, how to succeed in college, and how to prepare for college. The workshop covered material that would set students up for success when applying to colleges, including study tips, information about the importance of enrolling in challenging classes and participating in extracurricular activities, and resources available to them as high school students. The workshop discussed 2- and 4-year postsecondary opportunities. The students also heard from current undergraduate students about their experiences and had the opportunity to ask questions about college life. The visit concluded with lunch in an on-campus dining hall.

Visit 2 (Late October–Early November or Mid-February)

The second visit focused on introducing students to various departments and degree paths. Students participated in interactive, content-specific activities with two or three academic departments. Participating departments represented a variety of degree areas, including engineering, English, theater, architecture, physics, nursing, economics, and astronomy. Campus offices, including the Volunteer Action Center, University Recreation, and University Housing, also offered activities.⁶ The visit again concluded with lunch in an on-campus dining hall.

Visit 3 (Late March–Mid-April)

The final visit aimed to foster a sense of campus spirit. Schools chose either to attend an official baseball game or to compete in an on-campus scavenger hunt organized by the research team. Food was again provided.

Information Packet

All participating students, regardless of treatment status, received an information packet at the beginning of the spring semester of their eighth-grade year. The packet included publicly available information on all postsecondary institutions in the state, including their websites, physical locations, and contact information; a checklist of things to do each year in high school to prepare for college; and information about educational requirements and expected salaries for various occupations.⁷ Finally, the packet included a personalized cover letter describing its contents. Packets were compiled by the research team and distributed by school personnel.

Assessments and Measures

Student Characteristics

We surveyed students prior to randomization at the beginning of the fall semester (late August–early September) to collect student characteristics and baseline measures of the outcome constructs. The fall survey asked about students’ demographics, whether they participated in a federal TRIO program (college access and student support programs funded by the U.S. Department of Education), their prior exposure to college campuses, and their current grades. We also included a measure of socioeconomic status based on the Programme for International Student Assessment (PISA) index of economic, social, and cultural status (Organisation for Economic Co-operation and Development, 2012), as well as baseline measures of all outcomes. The surveys took students between 20 and 40 minutes to complete. We obtained baseline surveys for 88% of students opting into the study.⁸ Survey responses for gender and race/ethnicity were supplemented with administrative data collected directly from schools.

Table 1 presents summary statistics and tests of balance for our sample based on our fall (baseline) survey.⁹ While randomization helps guard against nonrandom sorting of students to treatment, rogue randomizations could still threaten the validity of our results (Gerber & Green, 2012). To test for within-school balance, we regress each baseline variable on an indicator for treatment status, a vector of school dummies, and cohort indicators. As shown in Table 1, there are few statistically significant differences in baseline characteristics between students in the treatment and control conditions. In particular, students in the treatment group are slightly more likely to be White and less likely to be Latino/a/x, are more likely to have had conversations about college with their parents, and express slightly higher confidence in their ability to succeed in college. In general, Table 1 indicates that our randomization was successful at creating comparable groups across treatment conditions.
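The balance test can be illustrated with a short sketch. It computes the treatment coefficient from regressing one baseline variable on treatment status plus lottery (school-by-cohort) fixed effects, following the specification in the Table 1 note. The sketch relies on the Frisch–Waugh–Lovell result that this coefficient equals the slope from a regression on within-lottery-demeaned data. The helper `within_lottery_balance` is hypothetical. It returns only the point estimate, does not compute the lottery-clustered standard errors reported in Table 1, and assumes complete data.

```python
from collections import defaultdict


def within_lottery_balance(x, treat, lottery):
    """Coefficient on treatment from regressing x on treat plus lottery dummies.

    By the Frisch-Waugh-Lovell theorem this equals the slope from regressing
    within-lottery-demeaned x on within-lottery-demeaned treatment.
    """
    # Per-lottery running sums: [sum of x, sum of treat, count].
    sums = defaultdict(lambda: [0.0, 0.0, 0])
    for xi, ti, g in zip(x, treat, lottery):
        s = sums[g]
        s[0] += xi
        s[1] += ti
        s[2] += 1

    num = den = 0.0
    for xi, ti, g in zip(x, treat, lottery):
        sx, st, n = sums[g]
        t_dev = ti - st / n  # treatment minus its lottery mean
        num += t_dev * (xi - sx / n)
        den += t_dev ** 2
    if den == 0:
        raise ValueError("treatment does not vary within any lottery")
    return num / den


# Hypothetical data: lotteries differ in baseline level, and the covariate is
# exactly 2 units higher for treated students within each lottery.
treat = [1, 0, 1, 0, 1, 1, 0]
lottery = ["A", "A", "A", "A", "B", "B", "B"]
x = [12, 10, 12, 10, 52, 52, 50]
print(round(within_lottery_balance(x, treat, lottery), 6))  # 2.0
```

On the same toy data, a raw treated-minus-control difference would be about 8.67. Lottery B has both a higher baseline level and a larger treated share, and comparing students only within their own lottery removes that between-lottery difference.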

Survey-Based Outcome Variables

We again surveyed participating students at the end of the spring semester (mid-April–early May), after all the campus visits and after students received the information packets, to collect our outcome measures.¹⁰ In total, we have outcome surveys for 76% of participating students.¹¹ The spring survey measures students’ college knowledge, socioemotional skills, and postsecondary intentions. All examined outcomes have been established as important steps in the college enrollment process or as predictors of student success (e.g., Akos et al., 2020; Caviglia-Harris & Maier, 2020; Klasik, 2012). Full baseline and outcome survey instruments are available on request.

College knowledge

Our first outcome of interest is students’ knowledge of college-related information. This is a measure we developed based on student responses to a series of questions about college to test the hypothesis that the experience of visiting a college campus would help students retain more information than simply receiving written information in school.

The college knowledge instrument was developed as follows. In the baseline survey for the first cohort, students were assigned one of two sets of college knowledge questions. Each set consisted of a series of true or false and multiple-choice questions covering topics such as the type of high school courses for which students can earn college credit and the differences between community colleges and 4-year universities.

We evaluated the questions using item response theory, examining the extent to which they discriminated among different levels of knowledge about college and were appropriately difficult for students in our sample. Based on these analyses, we determined that six items on our baseline survey used for Cohort 1 did not work well in our sample. We then developed new questions to replace those that did not work in the fall and administered a single set of questions to all students in the spring. We again used item response theory to test the extent to which the spring questions worked in our sample and found that it was appropriate to include only seven of the 11 questions when calculating our final construct.¹² Six of these questions were true/false or multiple-choice, and one was an open-ended response question. Topics covered in these questions included the difference between community colleges and 4-year universities, the average cost of attendance for an in-state student at the state’s flagship university, and which factors universities typically consider when making admissions decisions. These seven items were used for both the baseline and postintervention surveys for Cohort 2.
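The specific item response model fitted to the college knowledge items is not stated here. As one common choice, the two-parameter logistic (2PL) model describes each item by a discrimination parameter `a` and a difficulty parameter `b`. The sketch below computes the probability of a correct answer and the item’s Fisher information at a given knowledge level `theta`. An item with low discrimination carries little information at any ability level, which is the kind of pattern that could lead to an item being dropped. The function names and parameter values are illustrative assumptions.

```python
import math


def p_correct(theta: float, a: float, b: float) -> float:
    """2PL item response function: probability of a correct answer at ability theta.

    a is the item's discrimination (slope); b is its difficulty (location).
    """
    return 1.0 / (1.0 + math.exp(-a * (theta - b)))


def item_information(theta: float, a: float, b: float) -> float:
    """Fisher information a 2PL item provides at ability theta: a^2 * P * (1 - P)."""
    p = p_correct(theta, a, b)
    return a * a * p * (1.0 - p)


# Made-up parameters: a sharply discriminating item versus a weak one, both
# evaluated at their own difficulty, where information peaks at a^2 / 4.
print(item_information(0.0, a=1.5, b=0.0))  # 0.5625
print(item_information(0.0, a=0.3, b=0.0))  # about 0.0225
```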

Socioemotional skills

The second set of outcome variables measures student socioemotional skills, also referred to as character skills, psychosocial skills, and noncognitive skills across different fields of study (Duckworth & Yeager, 2015). We examine these measures because prior research has found them to be correlated with educational attainment (e.g., Heckman et al., 2006). We include two behavioral proxy measures of student diligence based on the effort students put into the survey: careless answering (Hitt, 2015) and item nonresponse (Borghans & Schils, 2012; Hitt et al., 2016; Zamarro et al., 2018). Recent literature has found that these survey effort measures are good proxies for conscientiousness and are significant predictors of important academic and life outcomes (Hitt, 2015; Huang et al., 2012; J. Johnson, 2005; Marcus & Schütz, 2005; Meade & Craig, 2012; Zamarro et al., 2018).
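Of the two survey-effort measures, item nonresponse is the simpler to illustrate. It is commonly computed as the share of questions a student leaves unanswered, and higher rates are read as lower diligence. The sketch below assumes that skipped items are coded as `None` and that every listed item applied to the student. The function name is hypothetical. Careless answering is more involved and is not sketched here.

```python
from typing import Optional, Sequence


def item_nonresponse_rate(responses: Sequence[Optional[int]]) -> float:
    """Share of applicable survey items a student skipped (None = skipped)."""
    if len(responses) == 0:
        raise ValueError("student has no applicable items")
    skipped = sum(1 for r in responses if r is None)
    return skipped / len(responses)


# Hypothetical student who left 2 of 8 items blank.
print(item_nonresponse_rate([3, None, 4, 2, None, 5, 1, 4]))  # 0.25
```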

Additionally, we include self-reports on college efficacy (Gibbons & Borders, 2010), Duckworth’s eight-item grit scale (Duckworth et al., 2007), and measures of self-management (Panorama Education, 2018). Finally, we developed two measures of academic engagement for this study. The first index of academic engagement focuses on students’ emotional engagement, while the second focuses on students’ behavioral engagement (Fredricks et al., 2004).

We calculate Cronbach’s alpha for each construct to check its reliability within our specific sample. Table 2 presents a summary of our socioemotional constructs, including a sample item and Cronbach’s alpha. The constructs exhibit moderate reliability within our sample, with the college efficacy and self-management scales exhibiting the strongest reliability.
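For reference, Cronbach's alpha for a k-item scale is k/(k − 1) × (1 − Σ item variances / variance of the total score). A minimal sketch, assuming complete responses (listwise deletion) and sample variances throughout; the function name is illustrative.

```python
from statistics import variance
from typing import Sequence


def cronbach_alpha(scores: Sequence[Sequence[float]]) -> float:
    """Cronbach's alpha for a k-item scale.

    scores[r][j] is respondent r's answer to item j; only complete rows are
    assumed (listwise deletion).
    alpha = k / (k - 1) * (1 - sum(item variances) / variance(total score))
    """
    k = len(scores[0])
    if k < 2 or len(scores) < 2:
        raise ValueError("need at least two items and two respondents")
    item_columns = list(zip(*scores))  # one tuple of answers per item
    sum_item_var = sum(variance(col) for col in item_columns)
    total_var = variance([sum(row) for row in scores])
    return k / (k - 1) * (1 - sum_item_var / total_var)


if __name__ == "__main__":
    # Toy data (not study data): three respondents, two items.
    print(cronbach_alpha([[1, 1], [2, 3], [3, 2]]))
```

Recomputing alpha with one item's column removed is the usual way to check whether dropping an item, as was done for one construct in the reliability table, raises internal consistency.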

Reliability of Scales (Spring Survey)

Our survey included four items, but we excluded one item (“What are your current grades?”) to increase the construct’s internal reliability.

Postsecondary intentions and actions

We next look at two initial measures of college-going behaviors: students’ conversations about college with school personnel and with parents. Our first scale measures the conversations students report having with school personnel and combines students’ responses across eight items. We asked students whether they had discussed the following with school staff: admissions requirements for 2-year and 4-year colleges; how to decide which college to attend; their likelihood of being accepted to different types of schools; what ACT/SAT scores they would need to get into college; opportunities to go out of state; readiness for college-level coursework; study skills required for postsecondary education; and how to pay for college. Students responded on a scale of 0 (No), 1 (Yes, once), or 2 (Yes, multiple times). This scale exhibited high reliability in our sample (

Our second measure comes from students’ responses to a single item asking whether they had ever talked to their parents about college. Students responded on a scale from 0 (Never) through 1 (Once or twice) and 2 (A few times) to 3 (All the time).
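The text says the staff-conversation scale combines eight items but does not say how. Because the results are reported on the 0-to-2 item scale, a simple average is one plausible reading and is assumed in this sketch; the function and constant names are hypothetical. The parent item is a single question and can be used directly on its 0-to-3 scale.

```python
from statistics import mean
from typing import Sequence

N_STAFF_ITEMS = 8  # the eight topics listed in the text


def staff_conversation_index(responses: Sequence[int]) -> float:
    """Average of the eight school-staff items, each coded
    0 = No, 1 = Yes, once, 2 = Yes, multiple times.

    Averaging (an assumption; summing is another option) keeps the index on
    the same 0-to-2 scale as the individual items.
    """
    if len(responses) != N_STAFF_ITEMS:
        raise ValueError(f"expected {N_STAFF_ITEMS} responses")
    if any(r not in (0, 1, 2) for r in responses):
        raise ValueError("responses must be 0, 1, or 2")
    return float(mean(responses))
```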

We also studied the effect of our intervention on students’ postsecondary intentions. In the survey, we asked students the following question: “If I had to decide right now, after I graduate high school, I plan to . . .” Students were given six options and prompted to choose one: attend a 2-year or community college, attend a technical/vocational school, attend a 4-year college, enter the military full-time, find a job, or other. We treat each option as a dichotomous outcome to determine whether the campus visits affected students’ likelihood of intending to follow that path. While students’ self-reported intentions do not fully capture actual enrollment decisions, early educational aspirations predict later educational attainment (Bui, 2005; Choy, 2001; Christian et al., 2020; Klasik, 2012). Intentions can therefore serve as a useful short-term measure of the impact of campus visits on college-related outcomes.
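Treating each response option as its own dichotomous outcome amounts to one-hot coding a single-choice answer. A minimal sketch; the short option labels are hypothetical stand-ins for the survey wording.

```python
from typing import Dict

# Short labels for the six response options (labels are hypothetical).
OPTIONS = (
    "two_year_or_community_college",
    "technical_vocational_school",
    "four_year_college",
    "military",
    "job",
    "other",
)


def intention_indicators(choice: str) -> Dict[str, int]:
    """Turn a single-choice answer into six 0/1 outcome variables."""
    if choice not in OPTIONS:
        raise ValueError(f"unknown option: {choice!r}")
    return {option: int(option == choice) for option in OPTIONS}
```

The group mean of each indicator is the share of students intending that path, so a treatment-control difference in an indicator is a difference in percentage points, which is how the technical-school result is reported.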

Transcript-Based Outcomes

In addition to analyzing the impact of the visits relative to information alone on self-reported measures of academic engagement, college knowledge, motivation, and postsecondary intentions, we analyze district administrative records to determine whether the program affected students’ ninth-grade course-taking decisions. While the school determines most of the courses students take in ninth grade, students can choose whether to take pre-AP or honors courses instead of regularly paced courses; taking and passing these types of courses predicts college credits attempted, earning a double major, and graduating from college (e.g., Dougherty et al., 2006; Ewing et al., 2018; Lapan & Poynton, 2020; Murphy & Dodd, 2009).

We collected transcript information from participating schools to determine whether treated students were more likely than control students to enroll in pre-AP or honors courses in their core subjects (math, English, and science/social studies). We use administrative data collected from 14 of the 15 participating schools in Cohort 1 and all 12 participating schools in Cohort 2. Of the 780 study participants in Cohort 1 whose schools provided administrative data, we have transcript information for 708 students (91%). We observe little differential attrition by treatment status: 92% of treated students and 89% of control students in Cohort 1 are observed in the administrative data. Of the 593 participants in Cohort 2, we have transcript information for 573 (97%). Differential attrition is similarly low in Cohort 2: 95% of treated students and 98% of control students have transcript information.
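The coverage and differential attrition figures above are simple ratios and differences. The sketch below recomputes them from the reported counts and percentages; the function names are illustrative.

```python
def observed_pct(n_observed: int, n_total: int) -> float:
    """Percentage of study participants with transcript data."""
    return 100.0 * n_observed / n_total


def differential_attrition_pp(pct_observed_treat: float, pct_observed_control: float) -> float:
    """Treatment-minus-control gap in observation rates, in percentage points."""
    return pct_observed_treat - pct_observed_control


if __name__ == "__main__":
    print(round(observed_pct(708, 780)), round(observed_pct(573, 593)))
    print(differential_attrition_pp(92, 89), differential_attrition_pp(95, 98))
```

The gap is 3 percentage points in each cohort, but in opposite directions: treated students are slightly more likely to be observed in Cohort 1 and slightly less likely in Cohort 2.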

Ninth-grade course-taking

We code a course as “advanced” if the course name provided by the district includes “advanced,” “honors,” “pre-AP,” or “AP.” In every school, some participating students enrolled in advanced English courses in ninth grade. However, in four schools, no students participating in the study enrolled in advanced math courses, and in four schools, no participating students enrolled in advanced science or social studies courses. 13 These schools are excluded from the analyses of the visits’ effects on students’ likelihood of enrolling in advanced math and advanced science/social studies courses, respectively. Overall, 21.3% of participating students across all schools enrolled in an advanced math course in the first semester of ninth grade, 33.9% enrolled in an advanced English course, and 24.4% enrolled in an advanced science or social studies course.
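The coding rule above is a text match on course names. A literal substring test for “AP” would also flag names such as “Applied Math,” so this sketch matches whole words, case-insensitively. It is an assumed implementation, not the authors’ code, and the course names in the example are hypothetical.

```python
import re

# Whole-word, case-insensitive match on the four terms named in the text.
# "pre-AP" is listed for readability; "AP" alone would also match it.
ADVANCED_TERMS = re.compile(r"\b(?:advanced|honors|pre-AP|AP)\b", re.IGNORECASE)


def is_advanced(course_name: str) -> bool:
    """True if the district course name contains an advanced-course marker."""
    return ADVANCED_TERMS.search(course_name) is not None


if __name__ == "__main__":
    for name in ["Pre-AP English I", "Honors Biology", "Applied Math", "Algebra I"]:
        print(f"{name!r}: {is_advanced(name)}")
```

District naming conventions vary (for example, abbreviations such as “Hon.”), so any real implementation would need to be checked against each district’s course catalog.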

Empirical Strategy

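We estimate the intent-to-treat effects of campus visits relative to only receiving an information packet using ordinary least squares regression. Specifically, we estimate the following linear model:

$$Y_{ij} = \beta\,\mathrm{Treat}_{ij} + \alpha_j + \varepsilon_{ij} \qquad (1)$$

where $Y_{ij}$ is the outcome for student $i$ in lottery $j$ (school-by-cohort), $\mathrm{Treat}_{ij}$ indicates assignment to the campus visits, $\alpha_j$ is a lottery fixed effect, and $\varepsilon_{ij}$ is an error term; standard errors are clustered at the lottery level.

Our coefficient of interest, $\beta$, captures the intent-to-treat effect of being assigned to the campus visits relative to receiving only the information packet.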

Our outcome measures in this article are derived from student responses on the spring survey as well as administrative records. The survey-based outcome measures are summarized in Table 3. Note that our outcome variables are measured on different scales: careless answering is a standardized measure, item nonresponse and college knowledge are percentages (share of skipped items or share of correct responses, respectively), self-reported socioemotional skills are on scales of 1 to 4 or 1 to 5, postsecondary intentions are dichotomous variables, and conversations with school personnel and parents are on 0 to 2 and 0 to 3 scales, respectively. For ease of interpretation, we also present standardized effect sizes below.
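A minimal sketch of the estimation described in this section: OLS of an outcome on a treatment-assignment indicator and lottery (school-by-cohort) fixed effects, with standard errors clustered by lottery, and the coefficient converted to a standardized effect size. The article does not say which standard deviation it standardizes by; dividing by the control-group SD is an assumption here, as is the CR1 small-sample correction. The data in the usage example are simulated, not study data.

```python
import numpy as np


def itt_with_lottery_fe(y, treat, lottery):
    """Estimate an ITT effect by OLS with lottery (school-by-cohort) fixed effects.

    Returns (beta, se, effect_size): the treatment coefficient, its
    cluster-robust standard error (clustered on lottery), and beta divided by
    the control-group standard deviation of y (assumed standardization).
    """
    y = np.asarray(y, dtype=float)
    treat = np.asarray(treat, dtype=float)
    groups, g_idx = np.unique(np.asarray(lottery), return_inverse=True)
    n, n_groups = y.size, groups.size

    # Design matrix: treatment dummy plus one dummy per lottery. The lottery
    # dummies play the role of the intercept, so none is added separately.
    fe = np.zeros((n, n_groups))
    fe[np.arange(n), g_idx] = 1.0
    X = np.column_stack([treat, fe])
    k = X.shape[1]

    XtX_inv = np.linalg.inv(X.T @ X)
    beta = XtX_inv @ (X.T @ y)
    resid = y - X @ beta

    # Sandwich "meat" built from per-cluster score sums.
    meat = np.zeros((k, k))
    for g in range(n_groups):
        in_g = g_idx == g
        score = X[in_g].T @ resid[in_g]
        meat += np.outer(score, score)

    # CR1 small-sample correction (one common choice among several).
    correction = n_groups / (n_groups - 1) * (n - 1) / (n - k)
    vcov = correction * XtX_inv @ meat @ XtX_inv

    b = float(beta[0])
    se = float(np.sqrt(vcov[0, 0]))
    sd_control = float(np.std(y[treat == 0], ddof=1))
    return b, se, b / sd_control


if __name__ == "__main__":
    # Simulated data: 27 hypothetical lotteries of 50 students, true effect 0.3.
    rng = np.random.default_rng(0)
    lottery = np.repeat(np.arange(27), 50)
    treat = rng.integers(0, 2, size=lottery.size)
    y = 0.3 * treat + rng.normal(0, 0.5, 27)[lottery] + rng.normal(0, 1, lottery.size)
    b, se, es = itt_with_lottery_fe(y, treat, lottery)
    print(f"beta = {b:.3f}, cluster SE = {se:.3f}, effect size = {es:.2f} SD")
```

Building the fixed effects as explicit dummies keeps the sketch transparent; with many lotteries, demeaning within lottery gives the same treatment coefficient more cheaply.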

Summary Statistics of Outcome Variables From the Spring Survey

Note. 885 students participated in Cohort 1 (445 treated, 440 control); 593 in Cohort 2 (295 treated, 297 control). Min = minimum; Max = maximum.

Results

Survey-Based Outcomes

We first examine the student survey administered in the spring, about 3 months after students received the information packets and about 1 month after the final campus visit. Table 4 presents results from our preferred model, which includes an indicator for treatment assignment and school-by-cohort fixed effects. Column 3 presents our primary estimates of the intent-to-treat effect of the campus visits intervention. 14 Overall, the results presented in Table 4 provide evidence of null or small to medium positive effects of the campus visits treatment on student outcomes (Kraft, 2020).

Impact of Campus Visits on Survey-Based Outcomes, Preferred Model Specification

Note. Cohort-by-school fixed effects included in all models. Standard errors clustered at the lottery (i.e., school-by-cohort) level. Std = standardized.

Students completely missing a spring survey are included in this analysis.

*p < .1. **p < .05. ***p < .01.

We find that being assigned to the visits led to a statistically significant (p < .05) 3.0 percentage point increase in the share of correct responses on the college knowledge items relative to the information-only control group (an effect size of 0.14 SDs). Although this increase is small in terms of items (about 0.2 of an additional correct response out of seven), it is consistent with the idea that hearing information about college in an interactive workshop helps students retain more information than receiving it in writing.

Our results additionally suggest that campus visits increased students’ socioemotional skills. Specifically, participating in the visits led to a 0.086-point increase (effect size = 0.15 SDs; p < .10) in students’ college self-efficacy and a 0.063-point increase (effect size = 0.12 SDs; p < .10) in self-reported grit. This suggests that visiting campus and interacting with current students, faculty, and university staff improved the extent to which students felt they could succeed in college and their self-assessed commitment to following through on their goals. By contrast, we find no impact of the visits on students’ self-reported self-management or academic engagement.

The effect of the visits on proxy measures of diligence through item nonresponse and careless answering is ambiguous. Being assigned to attend the campus visits led to a statistically significant 9.0 percentage point reduction (effect size = 0.21 SDs; p < .01) in item nonresponse on the spring survey. However, we also see a statistically significant increase in careless answering (effect size = 0.14 SDs; p < .10). These two survey behaviors are alternative strategies students can use to indicate disengagement. It is possible that students in the treatment group felt they “owed” the research team survey responses because they became familiar with the researchers on the visits, but put forth lower effort on the survey by answering carelessly. More research is needed to further study the validity of these proxy measures of diligence in the context of field experiments.

Being assigned to the visits led to a 2.7 percentage point decrease in the likelihood that a student reported planning to attend a technical school after high school (p < .05). However, there is no corresponding significant increase in the likelihood of intending to find a job immediately, enter the military, attend a community college, or attend a 4-year university. Furthermore, fewer than 4% of students in the control group indicated they intended to attend a technical school, so the number of students potentially affected is small. Future postsecondary enrollment data will allow us to determine whether this result reflects a shift in postsecondary outcomes.

Finally, we find that students assigned to the campus visits reported more conversations with school staff about college requirements, college choices, likelihood of college admittance, ACT scores required for their preferred college, out-of-state college options, college readiness, how to study for college, and how to pay for college. When these items are combined into an index, we find a statistically significant increase in college-related conversations of 0.089 points on the 0-to-2 scale (effect size = 0.16 SDs; p < .01). Our analysis of student course-taking behaviors, presented below, provides evidence on whether the visits affect students’ intermediate, in addition to short-term, outcomes.

Transcript-Based Outcomes

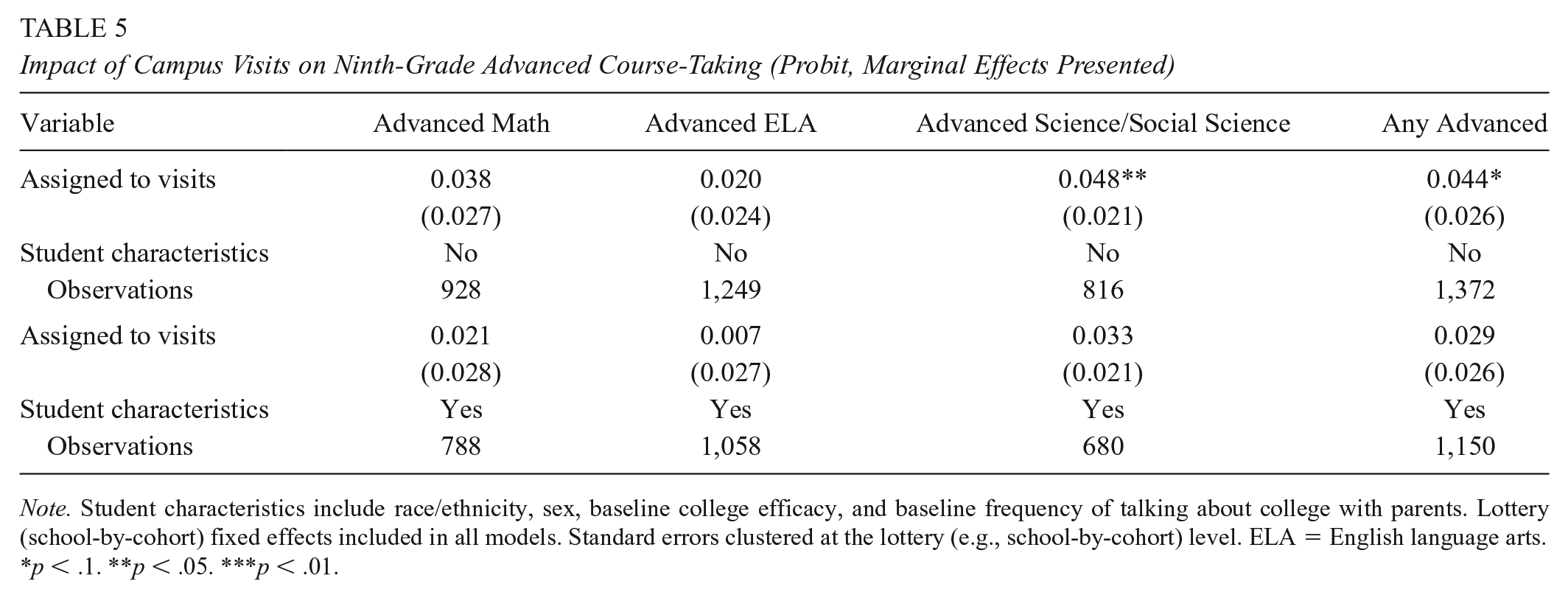

We also estimate the intent-to-treat impact of the visits on students’ ninth-grade course enrollment decisions using the model outlined in Equation (1). The results are presented in Table 5. We find that students assigned to the campus visits are 4.4 percentage points (p < .05) more likely to enroll in any advanced coursework and 4.8 percentage points (p < .10) more likely to enroll in advanced science/social studies courses. 15 We find no significant effects on the likelihood that students take advanced English or advanced math courses. Point estimates are similar, though slightly smaller and not statistically significant, when we control for students’ background characteristics.

Impact of Campus Visits on Ninth-Grade Advanced Course-Taking (Probit, Marginal Effects Presented)

Note. Student characteristics include race/ethnicity, sex, baseline college efficacy, and baseline frequency of talking about college with parents. Lottery (school-by-cohort) fixed effects included in all models. Standard errors clustered at the lottery (i.e., school-by-cohort) level. ELA = English language arts.

*p < .1. **p < .05. ***p < .01.

Limitations

Our study offers some of the first quantitatively rigorous evidence on the importance of early college experiences for influencing students’ postsecondary trajectories. Our use of random assignment minimizes the potential for nonrandom sorting of students into treatment conditions, which supports strong internal validity. However, our design cannot protect against treatment–control group crossover caused by treatment students talking about their experiences with control students or by control students deciding to visit campus on their own. While acknowledging this limitation, we note that a multisite randomized research design was necessary to generate sufficient statistical power to detect medium-sized effects in our analysis (Raudenbush & Bloom, 2015). Given our research design and sample size, our analysis has 80% power to detect effect sizes of 0.17 SD or greater.
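For intuition about the 0.17 figure, a textbook approximation for an individually randomized two-arm trial gives the minimum detectable effect size, in SD units, as (z₁₋α/₂ + z_power) × √(1 / (P(1 − P)N)), where P is the share assigned to treatment. This sketch ignores blocking by lottery, clustering, covariates, and survey nonresponse, so it is not the authors’ power calculation and is not expected to reproduce 0.17 exactly.

```python
from statistics import NormalDist


def mdes_individual(n: int, p_treat: float = 0.5, alpha: float = 0.05,
                    power: float = 0.80) -> float:
    """Approximate minimum detectable effect size (SD units) for a two-sided
    test in a simple individually randomized design with no covariates."""
    z = NormalDist()
    multiplier = z.inv_cdf(1 - alpha / 2) + z.inv_cdf(power)
    return multiplier * (1.0 / (p_treat * (1 - p_treat) * n)) ** 0.5


if __name__ == "__main__":
    # 885 + 593 participants across both cohorts (from the Table 3 note).
    print(f"{mdes_individual(885 + 593):.3f}")
```

With the 1,478 participants counted across both cohorts, this simplified formula gives roughly 0.15 SD; the article’s somewhat larger 0.17 presumably reflects design features the formula omits.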

Furthermore, we do not believe control group crossover strongly affects our results for two reasons. First, our baseline and spring surveys provide empirical evidence suggesting that control group crossover represents, at most, 5% of our sample. While 42% of control group students indicated that they had not visited a college campus in their baseline survey, this dropped to 37% in the spring. If assignment to control induced students to travel on their own to colleges, we would expect a much larger drop in the percentage of students who had not visited a college campus by the spring survey. Moreover, only 4% of treatment-group students indicated that they had not visited a college campus on their spring survey, suggesting a distinct treatment–control group contrast for our analysis.

Second, the unique programming experienced by the treatment group could play an important role in developing an understanding of what campus life is like. Treatment students interacted with college students, faculty, and administrators, and used university facilities. Individual visits to a college campus likely cannot achieve this depth of experience. Thus, we argue that even the 5% of control group students who visited college campuses on their own are unlikely to have experienced the treatment in full.

Another limitation of our work is that we test multiple outcomes and therefore may see false positives in our models. In addition to our having preregistered the research design, our results provide some confidence that our findings are not merely statistical noise: we observe seven statistically significant findings, compared with the roughly two false positives expected at a 10% significance level. Additionally, we implement the step-down method outlined in Heckman et al. (2010) and Romano and Wolf (2005) to control the family-wise error rate when analyzing survey-based outcomes. 16 Using this method, we still find significant effects on college knowledge (adjusted p-value is .096), item nonresponse (adjusted p-value is .008), frequency of conversations about college with school personnel (adjusted p-value is .004), and intentions to attend technical school (adjusted p-value is .072). These results provide further evidence that we are finding true programmatic impacts rather than statistical noise.
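The benchmark of two expected false positives at α = .10 implies roughly 20 tests (0.10 × 20 = 2); the exact count of 20 is an assumption here. If those tests were independent and every null hypothesis were true, the number of significant results would follow a Binomial(20, 0.10) distribution, so the chance of seeing seven or more can be computed directly.

```python
from math import comb


def prob_at_least(k: int, m: int, alpha: float) -> float:
    """P(X >= k) for X ~ Binomial(m, alpha): the chance that at least k of m
    independent tests reject at level alpha when every null is true."""
    return sum(comb(m, j) * alpha**j * (1 - alpha) ** (m - j) for j in range(k, m + 1))


if __name__ == "__main__":
    m_tests = 20  # assumed: implied by "expected two" at alpha = .10
    print(f"expected false positives: {m_tests * 0.10:.1f}")
    print(f"P(at least 7 significant): {prob_at_least(7, m_tests, 0.10):.4f}")
```

Under these assumptions the probability is about 0.002. Because the outcomes are correlated, the independence assumption does not hold, which is one reason a step-down procedure such as Romano and Wolf’s is the more defensible check.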

Another limitation of our study is the context of our intervention. The campus visits we examine took place at a 4-year, predominantly White, flagship state university. While the information students received was not exclusive to the University of Arkansas, prior research (e.g., Engberg & Gilbert, 2014) suggests that students’ preferences for 2- or 4-year institutions may be influenced by the type of campus they are exposed to during high school. Additionally, the name recognition and appeal of a flagship university with a statewide, and even national, reputation may leave a stronger impression on students than a smaller, less well-known institution would. Future research could explore whether the effects of campus visits differ across institution types.

A final concern lies with our analysis of immediate socioemotional outcomes, some of which have been criticized for their psychometric and theoretical properties (e.g., Credé et al., 2017; Denby, 2016). Indeed, some of the socioemotional constructs exhibit somewhat weak reliability in our sample; for example, the Cronbach’s alpha of grit in our sample was around .6 for both cohorts, and one of our measures of academic engagement had a reliability of .53 for our second cohort. Self-reported scales have also been criticized because of the potential for social desirability bias, whereby students respond as they think they should rather than in ways that accurately reflect their self-assessments. Similarly, our behavioral measures, particularly item nonresponse, could be influenced by students in the treatment group feeling obliged to respond to the survey because it was administered by the same individuals they saw organizing the campus visits (i.e., the research team). Additional work is needed to understand how to measure socioemotional outcomes, whether through self-reports or behavioral measures, in various contexts and samples.

Additionally, we find conflicting results among grit, item nonresponse, and careless answering, all of which are conceptually linked to students’ conscientiousness and diligence. These mixed results suggest that further work is needed to understand what exactly these constructs measure and how they relate to one another. Although no single construct is beyond critique, by examining several socioemotional outcomes we likely capture important dimensions of students’ noncognitive skills, which predict students’ postsecondary outcomes (Fosnacht et al., 2018).

Finally, some critiques have focused on the limitations of socioemotional outcomes for addressing systemic barriers to postsecondary education (Stitzlein, 2018). Our interest is in examining whether a school-based intervention can develop these skills as a means of increasing students’ chances of success in college, as part of broader, more systemic, efforts to increase college access. Thus, despite the limitations of the measures and incompleteness of the constructs for addressing inequities, understanding how early college experiences relate to students’ socioemotional outcomes can be useful for informing the conversation about how to expand postsecondary opportunities.

Discussion and Conclusion

Postsecondary access is a concern for policymakers, researchers, and individual students across the country. While past work has focused on determining if financial aid, information, and assistance navigating bureaucratic processes can address access gaps between historically advantaged and disadvantaged students, little work has examined the role that a lack of experience with college plays in students’ postsecondary planning. We provide some of the first rigorous evidence that an intervention designed to introduce prospective students to the experience of college through field trips to a college campus can improve students’ knowledge about college (effect size of 0.14), self-efficacy related to attending and succeeding in college (effect size of 0.15), grit (effect size of 0.12), and academic diligence (proxied by item nonresponse; effect size of 0.21) above the effect of providing written information about college. We also find that campus visits may make students more likely to engage in conversations about college options and preparation with school personnel (effect size of 0.16). Our estimates represent small- to medium-sized effects within the literature on education interventions (Kraft, 2020).

Furthermore, we find suggestive evidence that students assigned to the campus visits are more likely to seek out more rigorous courses, particularly in content areas that are less rigidly tracked. Specifically, while we find no impact of the visits on students’ likelihood of taking advanced math courses, into which students may be placed as early as sixth grade, we find that treated students were almost 5 percentage points more likely to take advanced science or social science courses. Moving forward, students may have more flexibility in choosing to begin taking advanced courses in multiple subjects in high school. We will continue to examine students’ course-taking behavior throughout high school, which will allow us to better explore heterogeneous effects by content area.

Our work suggests that early outreach to middle and high schools could be an effective investment for university admissions offices. Counselors working in middle and high schools could reach out to nearby institutions to establish partnerships and opportunities for students to visit. There are, however, concerns about the scalability of this intervention. Universities may be hesitant to invest in programs that may increase college access without increasing their own enrollment. Our intervention was not aimed at recruiting students to the University of Arkansas but rather used the University of Arkansas as a place where students could become more familiar with the collegiate experience. In addition, school districts may be concerned about the loss of instructional time when students are out of class to attend a campus visit. Despite these challenges, this study and other research highlight the importance of bridging these divides to provide students with early, meaningful experiences that improve college access.

As one of the first experimental evaluations of an experience-based intervention aimed at improving students’ college-going outcomes, this study makes an important contribution to the literature on the intangible barriers that students face when making postsecondary decisions. To close opportunity gaps in postsecondary enrollment and degree completion, researchers should find scalable interventions that can be implemented across a variety of contexts. In this study, we explore the ability of a relatively low-cost intervention—three field trips to a local public university—to affect student attitudes and behaviors toward college. Our findings regarding the short-run impacts of this intervention suggest that this field-trip-based intervention could meaningfully affect student college decisions and preparation. This approach could be adopted by school districts interested in promoting college access for their students and could find support among universities interested in increasing their socioeconomic diversity or student population overall.

Authors

ELISE SWANSON is a postdoctoral research associate at the University of Southern California. Her research focuses on college access, postsecondary student success, and equitable educational outcomes.

KATHERINE KOPOTIC is the manager of external reporting at Saint Louis University. Her research focuses on the effects of financial aid and scholarship policies on student outcomes.

GEMA ZAMARRO is a full professor and endowed chair in Teacher Quality in the Department of Education Reform at the University of Arkansas. Her research examines teacher quality and turnover, socioemotional skills, and dual language programs.

JONATHAN N. MILLS is a senior research associate in the Department of Education Reform at the University of Arkansas. His research focuses on college access and retention as well as school choice policies.

JAY P. GREENE is Department Head and Distinguished Professor in the Department of Education Reform at the University of Arkansas. His work examines the impact of field trips, the effect of schools on noncognitive skills and civic values, and school choice.

GARY W. RITTER is dean of the School of Education and a professor of education policy at Saint Louis University. His current research focuses on postsecondary access for low-income students, student discipline policy, teacher quality, and the implementation and evaluation of programs aimed at improving educational outcomes for low-income students.