Abstract

Parent engagement has been a cornerstone of Head Start since its inception in 1965. Prior studies have found evidence for small to moderate impacts of Head Start on parenting behaviors but have not considered the possibility that individual Head Start programs might vary meaningfully in their effectiveness at improving parenting outcomes. The present study uses the Head Start Impact Study to examine the average effect of random assignment to and participation in Head Start on parenting outcomes as well as variation in that effect across Head Start programs. Findings reveal that Head Start is effective on average at promoting parents’ daily reading and overall literacy and math activities with children but that effects vary significantly for parents’ literacy and math activities, with some programs much more and some much less effective than their local alternatives. Findings also demonstrate that Head Start has consistent near-zero impacts across centers on parents’ disciplinary interactions with children.

Children’s early experiences with their caregivers are one of the most important inputs to their skill development (Crosnoe et al., 2010; Price, 2010; Villena-Roldán & Ríos-Aguilar, 2012; Waldfogel & Washbrook, 2011). Public programs—including home visiting and other parenting interventions—can be effective at changing parents’ behaviors (see Ryan & Padilla, 2018, for a review), but they often struggle to recruit and retain low-income parents (Heath et al., 2018), who often have irregular employment and child care schedules (Chaudry et al., 2012; Enchautegui, 2013; Mytton et al., 2014; Prinz & Sanders, 2007). It is thus judicious to consider additional ways to reach low-income families. The present study considers Head Start—a federally funded school readiness program for low-income children and families—as an important albeit often overlooked avenue for promoting positive parenting behaviors.

As one of the largest sources of federal support specifically dedicated to supporting the school readiness of children from low-income families, Head Start is well positioned to promote positive parenting practices in this population. Based on a belief that boosting children’s skills requires attention to multiple aspects of children’s development, Head Start adheres to a “whole child” model and provides not only preschool education but also medical, dental, mental health, and nutrition services, as well as supports to engage parents in their children’s learning and development (Puma et al., 2010). One way Head Start aims to help parents foster their children’s development is by enhancing parents’ interactions with children (Puma et al., 2010; Zigler & Valentine, 1979). Indeed, the newly updated Head Start performance standards specifically emphasize efforts to involve parents in their children’s early learning through its Parent, Family, and Community Engagement (PFCE) Framework (U.S. Department of Health and Human Services, Administration for Children and Families, 2018a). Given the program’s explicit emphasis on involving parents in their children’s early learning, Head Start could be an ideal program through which to support parenting.

Prior studies examining whether Head Start is effective at enhancing parents’ interactions with their children have found evidence for small to moderate impacts on the frequency with which parents engage in certain reading and math activities with children and their likelihood of spanking children (Ansari et al., 2016; Gelber & Isen, 2013; Puma et al., 2010). Not all Head Start centers are the same, however. Individual Head Start centers vary considerably with regard to the curricula they use, the parenting programming they offer, their classroom quality, and the ways in which they choose to engage families (Aikens et al., 2017; Moiduddin et al., 2012; U.S. Department of Health and Human Services, Administration for Children and Families, 2016, 2018a). For example, all Head Start centers are required to offer two home visits to families per year, but they can provide more if they choose, producing variability in the number of home visits that are offered across centers (U.S. Department of Health and Human Services, Administration for Children and Families, 2016). Moreover, the PFCE framework makes clear that individual grantees have freedom in deciding what family engagement at individual centers looks like, given family- and community-specific needs (U.S. Department of Health and Human Services, Administration for Children and Families, 2018a). Prior studies of Head Start’s impact on parenting have not considered the likely possibility that individual Head Start programs vary in their effectiveness at improving parenting outcomes. Prior research has thus not been able to fully elucidate the conditions under which Head Start is most effective at reaching its goal of engaging parents in their children’s development.

The present study takes advantage of the multisite design of the Head Start Impact Study (HSIS), a large randomized controlled trial (RCT) of Head Start centers, to examine the impact of random assignment to and participation in Head Start on parenting outcomes in two domains—cognitive stimulation and physical discipline. First, I examine Head Start’s average effects on parents’ cognitively stimulating and disciplinary interactions with their children. Though largely a replication of prior work (Gelber & Isen, 2013; Puma et al., 2010), I focus on parenting measures that are both predictive of children’s outcomes and theoretically modifiable by Head Start—parents’ daily reading, other literacy activities, math activities, and physical discipline—rather than examining every available variable related to parenting in the HSIS, as in prior studies. The key innovation of this study, however, is the examination not only of average effects of Head Start but also the variation in effects on parents across sites. Solely focusing on the average effect of Head Start could mask meaningful variation in effects across programs that could help to elucidate why some programs are more effective than others (Bloom et al., 2017; Bloom & Weiland, 2015; Weiss et al., 2014; Weiss et al., 2017). It is only by understanding both the average effects and variation in those effects that we can form a clear picture of the extent to which Head Start is effective at its goal of improving parents’ early interactions with their children.

Head Start as an Intervention for Parents

Since its inception in 1965 as part of the War on Poverty, Head Start has served more than 36 million children and families, making it one of the largest sources of federal support for low-income families with young children in the United States (U.S. Department of Health and Human Services, Administration for Children and Families, 2018b). The program is free for all children whose family income is below the poverty line and, when there are slots available, for an additional 35% of children whose families’ income is below 130% of the poverty line (Improving Head Start for School Readiness Act of 2007). Ten percent of Head Start slots are also reserved for children with disabilities, regardless of family income (U.S. Department of Health and Human Services, Administration for Children and Families, 2016). The main goal of Head Start is to improve the school readiness of children from low-income families by supporting the development of the whole child (Office of Head Start, Administration for Children and Families, 2019; U.S. Department of Health and Human Services, Administration for Children and Families, n.d.). To achieve this goal, Head Start provides a number of services, including parent and family supports that address families’ self-sufficiency goals, promote positive parent-child relationships, and actively encourage parents’ involvement in their children’s learning (Office of Head Start, Administration for Children and Families, 2019; Puma et al., 2010; Zigler & Styfco, 2004). Most attention to Head Start has centered on the extent to which the program supports children through early childhood education (ECE) services. Far less attention has been paid to the extent to which Head Start supports parents and families as a whole, despite the well-documented contributions of parents to children’s development and Head Start’s historical commitment to involving parents in their children’s learning.

For more information on which parenting behaviors we might expect Head Start to impact and why we might expect them to be affected, as well as a review of prior research examining Head Start’s impact on parenting behavior, see online Supplemental Appendix A.

Variation in Head Start Program Effects on Parenting Behaviors

Prior research suggests that Head Start participation can enhance parenting behavior on average, in terms of both cognitive stimulation and physical discipline (online Supplemental Appendix A). However, not all Head Start centers are the same. Under federal regulations, Head Start centers must all meet certain standards and requirements, but individual centers also have a large degree of freedom in their operation. Individual centers can differ in, for example, their program curricula, the parenting programming they offer, their teachers’ qualifications, or even the overall climate and attitude toward parents (Aikens et al., 2017; Bloom & Weiland, 2015; U.S. Department of Health and Human Services, Administration for Children and Families, 2016). It is thus quite possible that centers vary meaningfully in their effectiveness. It is important to note, however, that the opposite could also be true—while Head Start allows individual grantees certain choices, it ultimately operates as a single, uniform program with the same overarching goals and design. It is therefore also possible that Head Start centers do not vary meaningfully in their effectiveness and that the way we have historically thought about the program—as a monolith—has been accurate. Indeed, as detailed below, large multisite RCTs do not always find treatment variation across sites (Weiss et al., 2017). Estimating whether Head Start programs do indeed vary significantly (i.e., above and beyond random estimation error) in their effectiveness is the first step toward understanding what determines program effectiveness—if some centers are shown to be significantly more effective than others, we can begin to identify practices and features of particularly effective centers and, ideally, implement these practices on a broader scale in order to improve services and outcomes for more children and families.

In recognition of the need to study treatment effect variation, new and innovative methodologies have been developed to precisely quantify treatment effect variation using multisite RCTs (Bloom et al., 2017; Raudenbush & Bloom, 2015; Weiss et al., 2017). Recent studies have used these methodologies to quantify variation in Head Start’s effects on children’s outcomes (Bloom & Weiland, 2015; Morris et al., 2018; Walters, 2015). They have found that the originally reported small impacts on children’s academic and behavioral skills from the HSIS were masking large variation in effects across sites and that programs ranged in their effectiveness at promoting children’s school readiness compared to their local alternatives (Bloom & Weiland, 2015; Morris et al., 2018; Walters, 2015). While these studies highlight the potential for Head Start centers’ effectiveness to vary, they focused solely on children’s outcomes. We have a very limited understanding of the conditions under which Head Start centers are best able to promote parenting behaviors that are known to affect children’s school readiness outcomes.

Prior studies have demonstrated that on average, Head Start has positive but relatively small effects on the frequency with which parents engage in math and literacy activities and on parents’ physical discipline (Ansari et al., 2016; Gelber & Isen, 2013; Puma et al., 2010). Significant average effects do not necessarily signal that treatment effects vary across programs, however, just as null average effects do not mean that there is no variation in impacts. Indeed, in a study that estimated both average effects and effect variation using data from 16 large multisite RCTs of various education and workforce development interventions, Weiss et al. (2017) found that treatment impacts from these 16 studies fell into four categories. First, it is possible to observe consistent near-zero impacts across all sites, wherein the average treatment effect is zero or close to zero and treatment effects do not vary, indicating that the program is consistently ineffective. Second, there could be a near-zero average impact with substantial cross-site variation. In this scenario, average null effects might lead to the conclusion that the program is ineffective, yet the wide variation in effects demonstrates that this is not true for all sites. Third, treatment effects could be consistently positive (or negative), meaning that there is an average effect and that there is little variation in that effect. Fourth, there could be a significant positive impact on average with substantial cross-site variation, where most sites produce positive effects with widely varying magnitudes. Given the prior findings of small positive average effects of Head Start on parenting outcomes, the underlying pattern could be either that Head Start consistently makes small changes in parenting behaviors (i.e., no variation in impacts) or that some sites make large changes while others make small or no changes (i.e., significant variation in impacts).

Studying Treatment Effect Variation in the HSIS

The design of the HSIS makes it the ideal data set with which to answer questions about variation in Head Start treatment effects. Specifically, randomization of children and parents to either treatment or control occurred at the center level in the HSIS, meaning that parents applied to a specific Head Start center and then, if randomly assigned to the treatment group, were offered services in that center. This means that the HSIS can be considered a composite of over 300 RCTs, each of which was conducted at a single site. This unique design allows for the estimation of both an average effect and a standard deviation of that effect that quantifies how much the individual effects vary across centers. Very few other RCTs—even federally funded ones—have the power to identify potential effect heterogeneity across program sites. By examining and describing the full range of Head Start effects on parenting behaviors, the present study adds to our knowledge of Head Start’s ability to improve parenting practices, a question fundamental to the program’s “whole child” model and PFCE framework.

Method

Data and Sample

The HSIS includes a nationally representative sample of 383 randomly selected Head Start centers and 4,667 newly entering, eligible 3- and 4-year-old children. The study began in 2002 and continued through 2006, when children finished first grade. To maximize statistical power and to allow comparison with prior studies examining treatment effect variation in the HSIS (Bloom & Weiland, 2015; McCoy et al., 2016), the current study combined the 3- and 4-year-old cohorts and examined short-term parenting outcomes after 1 year of Head Start. Children whose parents applied for Head Start services were randomly assigned to either a treatment group that had access to Head Start or a control group that did not have access to the Head Start center where they applied (but could enroll in other programs).

I created separate analytic samples for each parenting outcome. I restricted each sample to those who had valid data for the parenting outcome under investigation. Following others (Bloom et al., 2017; McCoy et al., 2016), I addressed missing predictor variable data (e.g., missing data on covariates and baseline parenting variables) through single replication of a multiple imputation model. I imputed using the ICE command in Stata 15.0, which is based on a regression switching protocol using chained equations (Royston, 2005). For each analytic sample, I dropped centers if after restricting to those with nonmissing outcome data they had either no treatment group members or no control group members and thus could not provide experimental estimates of Head Start effects (Bloom & Weiland, 2015; McCoy et al., 2016; across samples, n of children ranged from 3,497 to 3,602 within 316 to 318 centers). I also dropped centers if they had zero compliance with random assignment—meaning that the proportion of their control group members who enrolled in Head Start was equal to the proportion of their treatment group members who enrolled—because these centers similarly could not provide information about Head Start effects. This process resulted in sample sizes ranging from 3,416 to 3,525 children within 296 to 297 Head Start centers.
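The two center-level exclusion rules described above can be sketched as follows. This is a minimal illustration rather than the study's actual Stata code: the record layout and the key names (`center`, `assigned`, `enrolled`) are hypothetical, and the records are assumed to be already restricted to children with nonmissing outcome data.

```python
from collections import defaultdict


def usable_centers(records):
    """Return the set of center IDs that can provide experimental estimates.

    Each record is a dict with hypothetical keys:
      'center'   : center identifier
      'assigned' : 1 if randomly assigned to Head Start, 0 if control
      'enrolled' : 1 if the child actually enrolled in Head Start, else 0
    """
    # Collect enrollment indicators separately for each arm within each center.
    by_center = defaultdict(lambda: {0: [], 1: []})
    for r in records:
        by_center[r["center"]][r["assigned"]].append(r["enrolled"])

    keep = set()
    for center, arms in by_center.items():
        control, treatment = arms[0], arms[1]
        # Rule 1: a center needs at least one treatment and one control member.
        if not control or not treatment:
            continue
        # Rule 2: drop centers with zero compliance, i.e., equal enrollment
        # proportions in both arms. Cross-multiplying avoids float comparison.
        if sum(treatment) * len(control) == sum(control) * len(treatment):
            continue
        keep.add(center)
    return keep


if __name__ == "__main__":
    demo = [
        {"center": "A", "assigned": 1, "enrolled": 1},
        {"center": "A", "assigned": 0, "enrolled": 0},
        {"center": "B", "assigned": 1, "enrolled": 1},  # no control members
        {"center": "C", "assigned": 1, "enrolled": 1},  # zero compliance
        {"center": "C", "assigned": 0, "enrolled": 1},
    ]
    print(sorted(usable_centers(demo)))
```

In the demo data, only center A survives both rules: center B has no control group members, and center C has the same enrollment proportion in both arms.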

Measures

Parents answered questions about their interactions with children at the beginning (Fall 2002; Time 1) and end (Spring 2003; Time 2) of the Head Start year in two domains: cognitive stimulation and physical discipline. I examined three cognitive stimulation outcomes: whether parents read to children daily as well as the frequency with which they engaged in multiple literacy- and math-related activities with children, and two physical discipline outcomes: whether parents spanked their children in the past week and the number of times they did so. For more detailed information about each of these measures, as well as the covariates included in all analyses, see online Supplemental Appendix B.

Analytic Strategy

To quantify variation in Head Start’s effects on parenting outcomes, I employ recently developed approaches designed for use with multisite RCTs (Bloom et al., 2017; Bloom & Weiland, 2015; Raudenbush & Bloom, 2015; Raudenbush et al., 2012). I separately estimate the causal effect of random assignment to Head Start and the causal effect of enrollment in Head Start.

In all models, parenting behaviors at the end of the Head Start year (Time 2) are predicted by Head Start treatment status and all covariates. Each parenting measure from the beginning of the Head Start year (Time 1) is also included as a covariate, which controls for any potential unmeasured, time-invariant differences in treatment and control group parents present at the beginning of the study (Cain, 1975; Chase-Lansdale et al., 2003; National Institute of Child Health and Human Development Early Child Care Research Network & Duncan, 2003; Votruba-Drzal & Chase-Lansdale, 2004). Regression coefficients are thus interpreted as the effect of random assignment or enrollment on changes in the parenting measure being predicted (Kessler & Greenberg, 1981).

Due to significant noncompliance to random assignment in the HSIS (14%–20% depending on the age cohort and random assignment condition), it was necessary to separately estimate the effect of Head Start random assignment—which compares treatment and control group members regardless of their actual participation in the program—and the effect of Head Start participation—which adjusts for this noncompliance. Both of these estimates are useful. The effect of random assignment is arguably more policy relevant in that it reflects real-world scenarios in which parents are offered a slot in Head Start but do not always take it, while the effect of program participation may be more relevant to program implementation efforts in that it characterizes the effect for families who actually participated in the program (Puma et al., 2010).

Following others (e.g., Bloom & Weiland, 2015; Morris et al., 2018; Puma et al., 2010), I consider p values less than .10 as statistically significant because this matches the threshold used in the original HSIS final impact report and allows for broader discussion of treatment effect heterogeneity. Results that are significant only at the p < .10 level should be treated with some caution, however.

Effect of Random Assignment

To estimate the effect of random assignment to Head Start—the “intent to treat” (ITT) effect—as well as the cross-site variance in the effect of random assignment, I estimate a two-level random coefficients model that nests parents (Level 1) in the Head Start centers at which they initially sought care (Level 2). First, this two-level random coefficients model specifies a fixed intercept and a random coefficient for each site (called an FIRC approach; Sabol et al., 2020). Specifying a fixed intercept eliminates biases resulting from systematic and nonsystematic differences across Head Start centers (Bloom et al., 2017; Bloom & Weiland, 2015; McCoy et al., 2016), and specifying a random coefficient allows treatment effects to vary across Head Start sites. The model also specifies separate individual-level residual outcome variances for the treatment group and the control group, which accounts for “T/C heteroskedasticity”—the possibility that the individual-level outcome variance for treatment members differs from that of the control group—which could bias the estimate of cross-site variance (Bloom et al., 2017; Bloom & Weiland, 2015). For more details on this approach, see online Supplemental Appendix C.
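For readers who prefer notation, a FIRC model of the kind described above is commonly written along the following lines. This is a sketch based on the general multisite literature cited here, not the exact specification from Supplemental Appendix C; all symbols are assumptions introduced for illustration. $Y_{ij}$ is the Time 2 parenting outcome for parent $i$ in center $j$, $T_{ij}$ indicates random assignment to Head Start, $X_{kij}$ are the covariates (including the Time 1 parenting measure), $\alpha_j$ is a center-specific fixed intercept, and $B_j$ is the center-specific treatment effect.

```latex
\begin{align}
Y_{ij} &= \alpha_j + B_j T_{ij} + \sum_{k} \gamma_k X_{kij} + e_{ij},
  \qquad
  e_{ij} \sim
  \begin{cases}
    N\!\left(0,\ \sigma^2_{T}\right) & \text{if } T_{ij} = 1 \\
    N\!\left(0,\ \sigma^2_{C}\right) & \text{if } T_{ij} = 0
  \end{cases} \\
B_j &= \beta + b_j, \qquad b_j \sim N\!\left(0,\ \tau^2\right)
\end{align}
```

Under this notation, $\beta$ is the average ITT effect, $\tau$ is the cross-site standard deviation of effects, and the separate residual variances $\sigma^2_T$ and $\sigma^2_C$ correspond to the adjustment for T/C heteroskedasticity.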

Effect of Enrollment

In the case of the HSIS, where there are both “no shows” and “crossovers,” the effect of Head Start enrollment represents a “local average treatment effect” (LATE), meaning that the average treatment effect is specific to compliers (Angrist et al., 1996; Raudenbush et al., 2012). To estimate the LATE, I use an extension of a typical instrumental variables approach (Angrist et al., 1996; Heckman & Robb, 1985; Raudenbush et al., 2012) proposed by Raudenbush et al. (2012; “Option C”) and then expanded upon by Bloom and Weiland (2015). This approach allows for estimation of cross-site variation in enrollment effects, accounts for the nested structure of the data, and accounts for random assignment noncompliance. For more details on this approach, see online Supplemental Appendix C.
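The intuition behind the LATE can be illustrated with the textbook single-site Wald (instrumental variables) estimator, which divides the ITT effect on the outcome by the treatment-control difference in enrollment rates. The sketch below is a deliberate simplification: it is not the multisite "Option C" estimator used in this study, and the function name and input values are hypothetical.

```python
def wald_late(itt_outcome, enroll_rate_treatment, enroll_rate_control):
    """Textbook Wald estimator of the local average treatment effect.

    itt_outcome           : ITT effect of random assignment on the outcome
    enroll_rate_treatment : share of the treatment group that enrolled
    enroll_rate_control   : share of the control group that enrolled (crossovers)
    """
    # The compliance difference is the ITT effect of assignment on enrollment.
    compliance = enroll_rate_treatment - enroll_rate_control
    if compliance == 0:
        # With zero compliance, assignment carries no information about enrollment.
        raise ValueError("zero compliance: LATE is not identified")
    return itt_outcome / compliance


if __name__ == "__main__":
    # Hypothetical inputs chosen only for illustration.
    print(round(wald_late(0.15, 0.85, 0.15), 3))
```

Because the compliance difference is less than 1 whenever there are no-shows or crossovers, the LATE is larger in magnitude than the corresponding ITT effect, which is the "dilution" pattern that typically appears when comparing the two sets of estimates.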

Results

Descriptive Results

At baseline, treatment and control group members were balanced on almost all covariates (Table 1). The two exceptions were that parents in the treatment group were slightly less likely to have less than a high school education and that the time between baseline and spring parent interviews was slightly longer for control group compared to treatment group parents.

Table 1. Descriptive Statistics and Baseline Balance of the Analytic Sample

Note. Sample includes children in complete randomized blocks with nonzero compliance. This sample is from a single replicate of a multiple imputation model that imputed missing baseline parenting variables and covariates. The N for the descriptive statistics reported here reflects the largest possible of these samples, from the cognitive stimulation variable.

***p < .001. **p < .01. *p < .05. †p < .10.

Treatment group parents exhibited slightly more positive parenting behaviors at baseline—they were more cognitively stimulating, read to children more often, read for longer, were less likely to spank, and spanked their children less frequently. These differences were small but nonetheless signal a need to compare changes in parenting across the two groups as a result of Head Start and to control for demographic characteristics of families that might help account for preexisting differences between the groups.

Treatment Impact Average Effects and Variation in Effects

I standardized effect sizes for literacy activities and math activities by dividing the estimated Head Start effect on each outcome in its original units by the control group standard deviation for that outcome (Bloom & Weiland, 2015; Weiss et al., 2017). This approach is useful because these scale scores do not have a natural meaning and are thus difficult to interpret (Weiss et al., 2017). For example, if the average literacy activities score for the control group at the end of the Head Start year was 3.5, and the treatment effect on the original 1- to 6-point scale was 0.5, this would mean that on average, control group parents read to their children between two or three times per month (scale score of 3) and one or two times per week (scale score of 4), while treatment group parents on average read to children one or two times per week (scale score of 4), a difference of about two to five times per month. This interpretation becomes complicated and tedious, particularly when effects are smaller than 0.5. Putting effect sizes for literacy and math activities into standard deviation units also allows effects to be more easily compared to those from previous studies. An average effect size for literacy outcomes of 0.2 in standard deviation units would signify that on average, Head Start parents increased their literacy outcomes more than control group parents by a magnitude that equals 0.2 standard deviations of the literacy activities measure in its original 1- to 6-point scale units.
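The conversion between original scale units and standard deviation units is simple arithmetic, sketched below. The function names are hypothetical; the example values are the literacy figures reported elsewhere in this article (a control group standard deviation of 1.16 and an ITT effect of 0.157 standard deviation units).

```python
def standardized_effect(effect_original_units, control_sd):
    """Convert an effect in original scale units to control-group SD units."""
    if control_sd <= 0:
        raise ValueError("control group standard deviation must be positive")
    return effect_original_units / control_sd


def original_units(effect_sd_units, control_sd):
    """Convert an effect in control-group SD units back to original scale units."""
    return effect_sd_units * control_sd


if __name__ == "__main__":
    literacy_sd = 1.16   # control group SD for literacy activities
    itt_effect = 0.157   # ITT effect on literacy activities, in SD units
    print(round(original_units(itt_effect, literacy_sd), 2))
```

Run as written, the example converts the literacy effect of 0.157 standard deviation units to about 0.18 points on the original 1- to 6-point scale.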

For consistency, coefficients for spanking frequency are also reported in standard deviation units in tables, but the Results section also notes the interpretation in terms of the item’s natural units (spanking frequency) where significant. Coefficients for binary outcome variables (child is read to daily, child spanked in past week) represent the difference between the treatment and control group in how much parents’ probability of doing that behavior changed as a result of Head Start.

Results from all models examining the average effect of Head Start on parenting outcomes were very similar to results from prior studies examining this effect in the HSIS using similar parenting variables (Gelber & Isen, 2013; Puma et al., 2010). Online Supplemental Appendix D shows the results from the present study for average effects compared to those from prior studies.

ITT Results

Cognitive stimulation

Results for the average effect of random assignment to Head Start on each parenting outcome, as well as the standard deviation for those treatment effects, are displayed in the top panel of Table 2. Random assignment to Head Start was effective on average at promoting parents’ cognitively stimulating interactions with their children. Specifically, parents randomly assigned to Head Start increased their probability of reading to children daily by 4.4 percentage points more on average than did parents in the control group. Head Start parents also increased their literacy activities with children by 0.157 standard deviation units (equivalent to 0.18 points on the original 1- to 6-point scale) and their math activities by 0.179 standard deviation units (equivalent to 0.21 points on the original 1- to 6-point scale) more on average than did control group parents.

Table 2. Average Effects of Head Start Random Assignment and Enrollment on Parenting Outcomes and Standard Deviations of Effects

Note. Models were fit using children with available outcome data in nonzero compliance and complete randomized blocks and using fixed intercepts for centers with a random treatment coefficient. Covariates include those used in the original Head Start Impact Study final report, including child gender, child race/ethnicity, home language, mother’s age, mother’s education, mother’s marital status, whether mother was a teen mother at the time of child’s birth, mother immigrant status, whether child lives with both biological parents, and number of weeks between fall and spring parent interviews. Models also include a binary indicator of the child’s age cohort. The appropriate parenting measure from baseline is also included as a covariate for each outcome. Effect sizes for all continuous outcomes (literacy activities, math activities, spanking frequency) were calculated by dividing the estimated Head Start effect on each outcome in its original units by the control group standard deviation for that outcome. Coefficients for binary outcome variables (child is read to daily, child spanked in past week) represent the difference between the treatment and control group in how much parents’ probability of doing that behavior changed as a result of Head Start. Standard errors are in parentheses.

Assuming normality, 90% of cases would fall in this range.

***p < .001. **p < .01. *p < .05. †p < .10.

For two of these outcomes, parents’ literacy and math activities, effects also varied significantly across centers, indicating that some programs were significantly less and some were significantly more effective than their local alternatives. For literacy activities, the significant standard deviation of 0.221 around the average effect of 0.157 indicates that if effect sizes were normally distributed across centers, then 90% of centers would have an effect size for literacy outcomes between −0.21 and 0.52. This is a wide range and demonstrates the inadequacy of solely considering the average effect. Likewise, for math activities the significant standard deviation of 0.201 around the average effect of 0.179 indicates that if effect sizes were normally distributed across centers, then 90% of centers would have an effect size for math activities between −0.15 and 0.51—again, a wide range of effects. Effects for the probability of reading daily did not vary significantly across centers.
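The 90% ranges quoted above follow from the normality assumption: the central 90% of a normal distribution lies within about 1.645 standard deviations of its mean. A minimal sketch of that calculation, using only the average effects and cross-site standard deviations reported in the text (the function name is hypothetical):

```python
from statistics import NormalDist


def central_range(mean_effect, sd_effect, coverage=0.90):
    """Range containing the central `coverage` share of a normal distribution."""
    # For 90% coverage, z is the 95th percentile of the standard normal (~1.645).
    z = NormalDist().inv_cdf(0.5 + coverage / 2)
    return mean_effect - z * sd_effect, mean_effect + z * sd_effect


if __name__ == "__main__":
    for label, mean, sd in [("literacy", 0.157, 0.221), ("math", 0.179, 0.201)]:
        low, high = central_range(mean, sd)
        print(f"{label}: {low:.2f} to {high:.2f}")
```

Rounded to two decimals, this reproduces the ranges given for literacy activities (−0.21 to 0.52) and math activities (−0.15 to 0.51).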

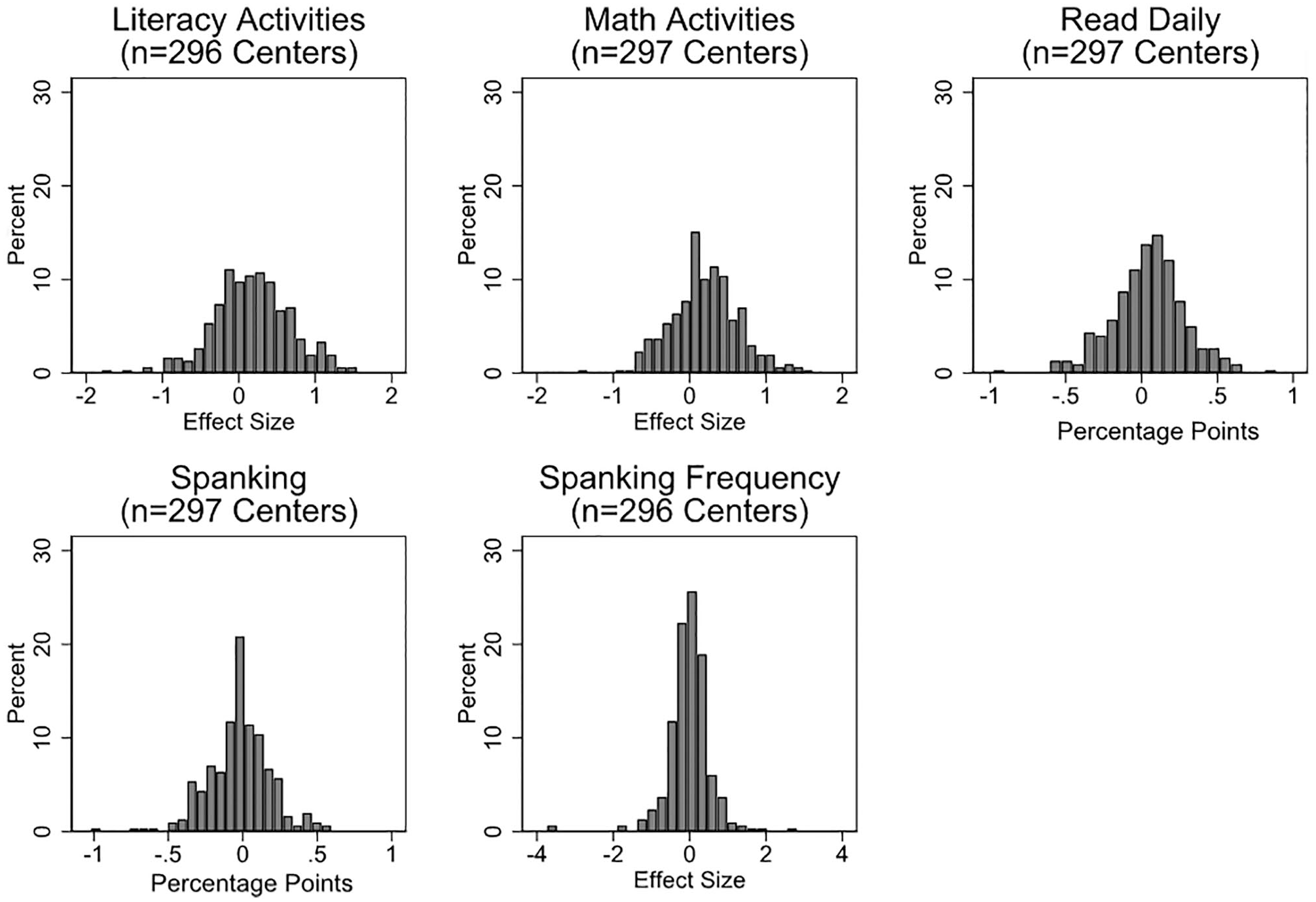

Figure 1 displays histograms of adjusted empirical Bayes estimates for all examined outcomes from the FIRC models in order to visualize the variation in effects (Bloom & Weiland, 2015). For literacy activities, for example, the figure shows that many programs had an effect size around 0.16 (the average) but that many programs’ effect estimates also varied from the average, from negative to positive. The distribution for this effect is wide and relatively low. By contrast, a higher percentage of programs’ effects on daily reading, which did not vary significantly, were around the average (0.04, or 4 percentage points). The distribution for this effect is more tightly concentrated around the average.

Figure 1. Histograms of adjusted empirical Bayes estimates of random treatment effects from FIRC models for each outcome. Effect sizes for continuous outcomes (literacy activities [SD = 1.16], math activities [SD = 1.45], and spanking frequency [SD = 1.46]) are in standard deviation units. For example, an average effect size for literacy (or math) outcomes of 0.2 in standard deviation units would signify that on average, Head Start parents increased their literacy (or math) outcomes more than control group parents by a magnitude that equals 0.2 standard deviations of the literacy (or math) activities measure in its original 1- to 6-point scale units. The original unit for spanking frequency was the number of times the parent spanked the child in the past week. Effect sizes for dichotomous outcomes (read daily and spanking) are in percentage point units and represent the difference in treatment and control group parents’ likelihood of reading daily and spanking children after 1 year of Head Start. FIRC = fixed intercept and random coefficient.
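As background on the estimates plotted in the histograms, the sketch below shows conventional (unadjusted) empirical Bayes shrinkage, in which each center's noisy effect estimate is pulled toward the grand mean in proportion to its unreliability. This is an illustration under stated assumptions, not the procedure used to produce Figure 1: the "adjusted" estimates involve an additional rescaling step that is not shown here, and the function name and site-level inputs are hypothetical.

```python
def eb_shrink(site_estimates, site_ses, grand_mean, tau):
    """Unadjusted empirical Bayes shrinkage of site-level effect estimates.

    site_estimates : unshrunken effect estimate for each site
    site_ses       : standard error of each site's estimate
    grand_mean     : estimated average effect across sites
    tau            : estimated cross-site standard deviation of true effects
    """
    shrunk = []
    for est, se in zip(site_estimates, site_ses):
        # Reliability: share of the estimate's variance that reflects true
        # cross-site variation rather than estimation error.
        reliability = tau ** 2 / (tau ** 2 + se ** 2)
        shrunk.append(grand_mean + reliability * (est - grand_mean))
    return shrunk


if __name__ == "__main__":
    # Grand mean and tau are the ITT literacy values reported in the text;
    # the two site estimates and standard errors are hypothetical.
    print(eb_shrink([0.60, -0.30], [0.221, 0.50], grand_mean=0.157, tau=0.221))
```

Imprecisely estimated centers are shrunk more strongly toward the average, which is why a histogram of shrunken estimates is narrower than a histogram of raw center-level estimates would be.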

Figures displaying treatment impact estimates and their standard errors for each outcome for a random sample of centers are included in online Supplemental Appendix E.

Physical discipline

Random assignment to Head Start was not effective on average at lowering the likelihood of parents spanking their children or the frequency with which parents reported spanking their children (Table 2). Although neither of these average effects was significant, the effect did vary, though modestly, for the frequency with which parents spanked children (Figure 1). Specifically, the significant standard deviation of 0.209 around the average effect of −0.016 (equivalent to an average reduction in spanking frequency for Head Start parents of 0.02 spanks per week) indicates that if effect sizes were normally distributed across centers, then 90% of centers would have effect sizes between −0.03 and 0.03 (−0.5 and 0.5 spanks per week) for spanking frequency. This is not a very wide range, and all effect sizes are very close to zero. Together, results suggest that Head Start random assignment effects for physical discipline are small and vary minimally.

LATE Results

Cognitive stimulation

Results for the effect of Head Start enrollment were similar to those for the effect of random assignment with regard to the pattern of significant findings, with significant average effects for reading daily, literacy, and math activities, and significant standard deviations around those average effects for literacy and math activities. The magnitude of these effects differed somewhat, however, with larger average effects across all outcomes in the LATE models compared to ITT models (as is common when comparing effects of random assignment versus participation because of “dilution” in ITT effects as a result of noncompliance with random assignment) but smaller standard deviations around those effects in the LATE models compared to ITT models (likely due to increased precision as a result of eliminating uncertainty introduced by noncompliers in LATE estimates). Specifically, the average effect of enrollment for literacy activities was 0.199 (equivalent to 0.23 points on the original 1- to 6-point scale) with a significant standard deviation of 0.174, indicating that if normally distributed, 90% of centers’ effect sizes would be between −0.08 and 0.48. The average effect for math activities was 0.236 (equivalent to 0.27 points on the original 1- to 6-point scale) with a significant standard deviation of 0.176, indicating that if normally distributed, 90% of centers’ effect sizes would be between −0.05 and 0.53.
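The 90% ranges and the original-scale equivalents quoted above follow from simple arithmetic on the reported averages and standard deviations. The sketch below is illustrative only: it assumes, as the text does, that center-level effects are normally distributed, and the function names are hypothetical rather than part of the study's analysis code. Because the inputs are the rounded values printed above, the results may differ from the published ranges in the second decimal place.

```python
from statistics import NormalDist


def central_range(mean_effect, sd_effect, coverage=0.90):
    """Central `coverage` share of a normal distribution of center effects."""
    # For 90% coverage this is the 95th percentile of the standard normal (about 1.645).
    z = NormalDist().inv_cdf(0.5 + coverage / 2)
    return mean_effect - z * sd_effect, mean_effect + z * sd_effect


def to_original_units(effect_size, outcome_sd):
    """Convert an effect size in SD units back to the outcome's original scale."""
    return effect_size * outcome_sd


# LATE estimates reported in the text (effect-size units).
literacy_low, literacy_high = central_range(0.199, 0.174)
math_low, math_high = central_range(0.236, 0.176)

# Literacy activities have SD = 1.16 on the original 1- to 6-point scale.
literacy_points = to_original_units(0.199, 1.16)

print(f"literacy 90% range: {literacy_low:.3f} to {literacy_high:.3f}")
print(f"math 90% range:     {math_low:.3f} to {math_high:.3f}")
print(f"literacy effect in scale points: {literacy_points:.2f}")
```

The same two helper functions can be applied to any of the continuous outcomes, provided the standard deviation of the original measure is known.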

Physical discipline

Whereas random assignment to Head Start did not significantly affect parents’ likelihood of spanking on average, the analogous coefficient on enrollment in Head Start in the LATE model was larger in magnitude and was statistically significant (p = .053), showing that enrollment in Head Start reduced the likelihood of parents spanking their children somewhat, by 3.7 percentage points. Enrollment did not affect parents’ average frequency of spanking, however, and neither of these effects varied significantly in LATE models. Results suggest that Head Start enrollment was slightly effective at reducing spanking likelihood on average across programs.
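The reason enrollment (LATE) effects tend to exceed random assignment (ITT) effects can be illustrated with the standard ratio adjustment for noncompliance, in which the ITT effect is divided by the difference in enrollment rates between the treatment and control groups. The sketch below is not the estimation procedure used in this study; every number in it is hypothetical and chosen only to show the direction and rough size of the rescaling.

```python
def rescale_itt_to_late(itt_effect, enroll_rate_treatment, enroll_rate_control):
    """Ratio (Wald-type) adjustment: ITT effect divided by the enrollment-rate gap."""
    compliance_gap = enroll_rate_treatment - enroll_rate_control
    if compliance_gap <= 0:
        raise ValueError("treatment-group enrollment must exceed control-group enrollment")
    return itt_effect / compliance_gap


# Hypothetical values, not taken from the Head Start Impact Study.
hypothetical_itt = 0.10               # ITT effect in effect-size units
hypothetical_treatment_enroll = 0.80  # share of the treatment group that enrolled
hypothetical_control_enroll = 0.10    # share of the control group that enrolled anyway

hypothetical_late = rescale_itt_to_late(
    hypothetical_itt, hypothetical_treatment_enroll, hypothetical_control_enroll
)
print(f"ITT = {hypothetical_itt:.3f}, implied LATE = {hypothetical_late:.3f}")
```

Because the enrollment-rate gap is below 1 whenever some families do not comply with their assignment, the rescaled effect is always larger in magnitude than the ITT effect, which is the "dilution" pattern described in the LATE results.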

Discussion

One of the hallmarks of Head Start is its focus on empowering parents to be active participants in their children’s learning and development. This study assessed whether Head Start programs are effective at achieving that goal by examining the average effect of Head Start on parents’ cognitively stimulating and disciplinary interactions with their children, as well as the extent to which Head Start programs vary in their effectiveness at improving these interactions. I found that both random assignment to and participation in Head Start were effective on average at improving parents’ cognitively stimulating interactions with children, including their likelihood of reading to children daily and their overall literacy and math activities. For both random assignment and participation, Head Start effects on parents’ literacy and math activities also varied significantly. Head Start’s effects on parents’ physical discipline behavior were much weaker. Enrollment, but not random assignment, had a significant effect on decreasing parents’ likelihood of spanking, and this effect was very small in magnitude. Neither random assignment nor enrollment affected parents’ average frequency of spanking. Effects on parents’ likelihood of spanking did not vary significantly across Head Start programs, though the effect of random assignment on spanking frequency varied slightly across programs.

Results from the present study indicating that Head Start is moderately effective at promoting parents’ cognitively stimulating interactions with children are consistent with prior reports (Gelber & Isen, 2013; Puma et al., 2010). This study extends this past work by demonstrating that two of the three examined effects varied significantly across Head Start sites, meaning that some programs were significantly more effective than others at improving parents’ literacy and math activities with children. Moreover, the wide ranges of program effects for both of these outcomes—from negative to positive—demonstrate that while most Head Start programs are more effective than their local alternatives (i.e., the control condition), some programs are less effective than their local alternatives at improving the frequency with which parents engage in literacy and math activities with their children. This means that parents at these centers likely would have improved more had they not been randomly assigned to or attended Head Start. These findings reveal that while Head Start is effective on average at its goal of improving parents’ literacy and math activities with their children, individual Head Start programs vary considerably in their ability to do so. This is an important finding—Head Start has historically been treated in both the academic and policy arenas as a single, uniform program that is operated in many places. The present study provides a deeper understanding of the potential for Head Start to improve families’ outcomes by demonstrating that while Head Start centers operate under a uniform funding structure and set of standards, they should not all be considered uniform in their effectiveness at improving either parent (or child, as previously demonstrated) outcomes.
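To give a rough sense of what "some programs are less effective than their local alternatives" could mean in practice, the sketch below computes the share of centers whose effect would fall below zero if center effects were exactly normally distributed around the reported averages. This is a back-of-the-envelope illustration and not a result reported in the study; it uses the LATE averages and standard deviations from the Results (0.199 and 0.174 for literacy, 0.236 and 0.176 for math), and the function name is hypothetical.

```python
from statistics import NormalDist


def share_below_zero(mean_effect, sd_effect):
    """Share of a normal distribution of center effects that lies below zero."""
    return NormalDist(mu=mean_effect, sigma=sd_effect).cdf(0.0)


# LATE averages and standard deviations as reported in the Results section.
literacy_share = share_below_zero(0.199, 0.174)
math_share = share_below_zero(0.236, 0.176)

print(f"literacy: about {literacy_share:.0%} of centers below zero under normality")
print(f"math:     about {math_share:.0%} of centers below zero under normality")
```

Under this assumption, a clear majority of centers would outperform their local alternatives on both outcomes, while a nontrivial minority would not, which is consistent with the pattern described above.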

Notably, Head Start’s impact on the likelihood of parents reading to children daily was consistently positive across programs, suggesting that Head Start is universally good at relaying to parents the importance of daily reading with young children. Perhaps it is easier or more straightforward for providers to convey the importance of daily reading with children than messages about multiple literacy and math activities. Given the known positive links between regular parent-child reading and children’s language and literacy skills (Frijters et al., 2000; Price & Kalil, 2018; Sénéchal & LeFevre, 2002), these are promising findings that suggest that one way that Head Start may affect children’s early literacy skills (Puma et al., 2010) is through its impact on parenting.

Daily reading is not the only important cognitively stimulating input to child development, however. Children who are exposed to rich and complex language input and who practice literacy and math concepts and skills regularly tend to develop better language, literacy, and math skills and have higher academic achievement (Crosnoe et al., 2010; Melhuish et al., 2008; Price, 2010; Song et al., 2014; Tamis-LeMonda et al., 2014; Villena-Roldán & Ríos-Aguilar, 2012). Promoting parents’ engagement in math activities with children is particularly important because parents are often less confident in their abilities to support early math compared to reading concepts (Levine et al., 2010). It is thus promising that many centers are quite effective at promoting these types of learning-enriching activities between parents and children. Results demonstrate that Head Start nonetheless still has room to grow in these domains such that all Head Start children are supported by their parents in their early learning and development.

Head Start’s impacts on parents’ physical discipline behaviors were minimal. Only enrollment, and not random assignment, yielded an average treatment effect for parents’ likelihood of spanking their children. At first glance, this finding seems to contradict prior studies finding that Head Start reduces parents’ use of physical punishment (Puma et al., 2010). However, in the HSIS final impact report, effects of random assignment on spanking were found only for the 3-year-old cohort—these parents were about 7 percentage points less likely to spank their children than control group parents (Puma et al., 2010). No effects on parents’ spanking were found in the 4-year-old cohort. Given that the 3-year-old and the 4-year-old cohorts were combined in the present study, it is perhaps not surprising that the already modest average effects on spanking were smaller than in the original impact report and not statistically significant. Results from the present study also indicate that Head Start effects on physical discipline varied only minimally across programs—the only disciplinary treatment effect to vary significantly was the effect of random assignment on parents’ frequency of spanking, and the magnitude of this standard deviation was very small. Thus, Head Start was shown to be only minimally effective at reducing physical punishment on average, and while these average effects on spanking may be stronger for 3-year-olds compared to 4-year-olds (Puma et al., 2010), full-sample treatment effects do not vary across centers. Rather, across centers, Head Start treatment effects on parents’ spanking use are consistently very small or null, signaling that most Head Start sites are not effective at changing the overall population of Head Start parents’ physical discipline behaviors.

When results from both parenting domains are taken together, they demonstrate that Head Start is effective on average at promoting parents’ cognitive stimulation but that these effects still vary widely across centers, while most Head Start centers had little to no success at reducing parents’ use of spanking as a disciplinary strategy. That Head Start is better at promoting parents’ enriching activities with children than at changing their disciplinary behaviors is perhaps not surprising given that Head Start’s main mission is to prepare children for school. It therefore makes sense that Head Start would prioritize the domain of parenting more closely connected to promoting children’s early academic skills. Furthermore, discipline as a focus of intervention is often a more sensitive subject than cognitive stimulation for a few reasons. First, the widely held belief in the United States that decisions regarding how to discipline a child should be made privately by parents might lead Head Start centers to refrain from providing suggestions for nonphysical discipline, or might make parents less receptive to these suggestions. Indeed, in a recent report describing interactions between Head Start providers and parents using data from the most recent wave of the Head Start Family and Child Experiences Survey (2014), neither teachers nor family service staff reported talking to parents about discipline, and the majority of both groups reported that they sometimes find it hard to support the way parents choose to discipline their children (Aikens et al., 2017). Second, widespread messages directed at parents about the importance of reading and doing other enriching activities with children are more likely to have reached parents across income levels, while there is still a greater reluctance among parents, particularly among lower income families, to accept the message that spanking is ineffective and detrimental to children’s development (Gershoff et al., 2018). 
Indeed, despite the documented reduction over time in the overall incidence of spanking for families of all income levels, gaps in spanking use between low- and high-income parents remain such that parents in the 10th income percentile are significantly more likely to spank their children than parents in the 90th income percentile (Ryan et al., 2016). Specifically, in 2011, 30% of parents in the 10th income percentile endorsed spanking as a disciplinary strategy compared to 12% of parents in the 90th income percentile. Given the link between spanking and negative outcomes like aggression and antisocial behavior for children (Gershoff & Grogan-Kaylor, 2016) as well as the potential for spanking to escalate into more serious abuse (Brown et al., 1998; Sedlak et al., 2010; Zolotor et al., 2008), these levels of spanking use, particularly among lower-income families, are problematic. The American Academy of Pediatrics has released new guidance for pediatricians and other health care providers on discouraging parents from using physical punishment and educating parents about positive and effective alternative disciplinary strategies (Sege et al., 2018). Head Start should consider following these recommendations and begin encouraging providers to educate parents about the harmful effects and ineffectiveness of spanking and to more actively dissuade parents from engaging in spanking as a disciplinary strategy.

While this study makes important contributions to the literature, it is not without its limitations. First, although parenting items and scales like the one used in the current study are commonly used to measure parenting behavior in the home, the study might have been strengthened if observational ratings of parenting quality dimensions such as parental scaffolding, sensitivity, and negative regard were available. Second, while random assignment is the gold standard in evaluation research and allows for stronger causal inferences, the comparison of randomly assigned groups means it is not entirely clear to whom Head Start attendees are being compared because families in the control condition could choose a range of alternative arrangements. Thus, the control condition to which Head Start attendees are compared is made up of children in a number of different arrangements, making it an imprecise comparison. This means that it is not possible to produce causal estimates of Head Start compared to specific ECE arrangement types, though prior work demonstrates that estimates may vary depending on which alternative care arrangement Head Start is being compared to (Feller et al., 2016; Morris et al., 2018; Zhai et al., 2013). For instance, Feller et al. (2016) used the HSIS and found that it was children who would have received care in a home-based setting had they been assigned to the control condition who benefitted most from Head Start (Feller et al., 2016; Morris et al., 2018). By contrast, effects were minimal for children who would have received care in a center-based setting had they been assigned to the control condition. 
The counterfactual care setting is arguably less important for parenting compared to child outcomes, however, given that the contrast between what Head Start parents and parents in other center-based arrangements receive is likely larger than the contrast between what children in those arrangement types receive since Head Start places a larger emphasis on parent engagement than do other care arrangements. Nonetheless, if the difference between services that Head Start parents receive and services that parents at other arrangements receive is larger for some centers than it is for others, this could affect variation in Head Start’s treatment effects on parenting. Future research should therefore consider the possibility that children’s counterfactual care condition might also help to explain variation in Head Start treatment effects on parenting, as it does for effects on child outcomes.

Third, while this article makes an important contribution to the literature on Head Start’s ability to influence parenting behaviors and to the treatment effect heterogeneity literature, it was unfortunately beyond the scope of the present study to examine predictors of Head Start treatment effect variation on parenting behavior. A natural next question in this line of inquiry is this: What factors predict which centers are more versus less effective? There may be certain characteristics of centers—such as the types of parenting programming that they offer—or of families served at centers—such as the degree to which families face economic risk—that might predict which centers are particularly effective and which are particularly ineffective. Exploring predictors of Head Start treatment effect variation on parenting behavior is a key direction for future work.

Finally, while the HSIS is the ideal data set with which to answer questions about Head Start treatment effect heterogeneity, the data are somewhat old—children in the study attended Head Start in the 2002–2003 school year. This is relevant for two reasons. First, with the wide expansion of public preK programs, the Head Start treatment contrast has changed since 2002. More specifically, the control group in 2002 may have had access to a very different set of options than children today and thus findings may or may not be generalizable to today. Head Start still leads the ECE landscape in terms of its attention to parents, though, so the contrast between outcomes for parents whose children attend Head Start and those who do not today versus in 2002 may not be as different as the contrast in outcomes for children during the same time frame. Second, parenting itself has changed since the time the HSIS was carried out, with parents of all income levels doing significantly more literacy and math activities with children in 2012 than in 1998 (Kalil et al., 2016). The effect of Head Start on parenting behaviors may thus be different today than it was in 2002. Head Start may be less effective at improving parenting if parents served by the program are already doing all they can and do not have as much room to grow, but it may also be more effective at changing parenting if low-income Head Start parents today are more motivated to be involved in their children’s learning.

Conclusions

Despite the limitations, this study demonstrates that the previously reported small effects of Head Start on parents’ cognitively stimulating interactions with children masked significant variation in the extent to which individual programs modify parents’ engagement in literacy and math activities with their children, with some programs much more and some much less effective than their local alternatives. This variation is an important finding worthy of further consideration. Political support and funding for public programs are often determined by the answer to the question “Does the program work?” The variation demonstrated in the present study contributes to the growing body of evidence (Morris et al., 2018; Weiss et al., 2017) that shows that once a program is taken to scale and implemented in many different locations, one cannot expect to get a uniform answer to that question. Head Start specifically has a central federal funding source but is ultimately operated at the local level, meaning that Head Start funding is distributed from the federal government directly to locally operated grantees. As a result, individual Head Start programs have freedom with regard to the programming they offer, the curricula they use, and the ways in which they choose to engage families, which results in variation in these features across programs (Aikens et al., 2017; U.S. Department of Health and Human Services, Administration for Children and Families, 2016, 2018a). Head Start centers also vary inherently in that they operate in many different locations and thus serve different populations of families. The present study establishes that there is meaningful variation in centers’ effectiveness at promoting positive parenting outcomes, a finding that has been previously established for Head Start’s effects on children’s outcomes (Bloom & Weiland, 2015; Walters, 2015; Weiss et al., 2017). 
Thus, the answer to the question “Does Head Start work at improving parents’ interactions with their children?” is this: sometimes yes, and sometimes no. As noted above, future research should identify which Head Start program features, and which Head Start parent populations, are associated with the largest improvements in positive parenting behaviors.

Currently, the most common public policy approach to promoting positive parenting behaviors is home visiting (Ryan & Padilla, 2018). Federal funding of home visiting operates through the Maternal, Infant, and Early Childhood Home Visiting (MIECHV) program, which provides funding to states to operate evidence-based home visiting programs (Michalopoulos et al., 2019). The most recent Mother and Infant Home Visiting Program Evaluation (MIHOPE) of four of the most widely used MIECHV programs (Early Head Start—Home-based option, Healthy Families America, Nurse-Family Partnership, and Parents as Teachers) found either no or very small effects of programs on parenting outcomes such as support for learning and literacy, overall supportiveness, and parents’ disciplinary practices (Michalopoulos et al., 2019). Results from the present study thus lend support for the idea that Head Start may be among the most promising avenues for supporting positive parenting practices among low-income families, particularly at the most effective Head Start centers. For example, the MIHOPE evaluation found that home visiting positively impacted parents’ support of literacy and learning, with an average effect size of 0.08 (Michalopoulos et al., 2019). By contrast, I found an average effect of Head Start on parents’ literacy activities of 0.16, with some programs’ effect sizes as large as 0.52. These positive and relatively large effects, combined with both the high rates of ECE use among low-income families with young children and the much larger public investment in Head Start compared to home visiting programs, position Head Start at the forefront of promising public approaches to improving parents’ interactions with their young children. In 2019 the federal government appropriated $10 billion for Head Start (U.S. Department of Health and Human Services, Administration for Children and Families, 2019) compared to $351 million for MIECHV home visiting programs (Health Resources & Services Administration, 2019).

In addition to finding very small average effects of home visiting on parenting outcomes, the MIHOPE evaluation also found that impacts on parenting outcomes did not vary across the four evidence-based programs included in the evaluation (though it is possible that there was variation within programs; this was not included in the evaluation). This lack of variation across programs—particularly for a measure similar to the literacy activities measure included in the present study—is noteworthy given that these were four unique programs operating from distinct (though similar) program models. By contrast, the present study demonstrates variation within a program with a single (though personalizable) program model. The contrast of these two findings further supports the notion that Head Start should by no means be thought of as a uniform program with uniform outcomes. Rather, individual Head Start centers have the potential to be more different from one another than four entirely different programs, all with the same goals of improving parents’ interactions with their children.

As discussed, Head Start is currently prioritizing parent engagement through the PFCE framework, which aims to increase programs’ engagement with parents in order to more fully involve them in their children’s learning and development. However, to the extent that some Head Start programs are quite effective at promoting parenting practices known to enhance children’s school readiness, and others are not effective and even less effective than local alternatives, Head Start decision makers should prioritize understanding what makes some centers more effective than others. We cannot assume that all centers—despite operating from the same funding source and program model—will be equally effective in supporting parents in their children’s development. Rather, individual Head Start centers may need different supports in making this goal a reality. The present study took the first important step by establishing that there is meaningful variation in effects on parents across sites. Future studies should examine which program features, and which parent populations, make some programs more effective than others.

Supplemental Material

Supplemental material, sj-docx-1-ero-10.1177_2332858420969691 for Beyond Just the Average Effect: Variation in Head Start Treatment Effects on Parenting Behavior by Christina M. Padilla in AERA Open

Author

CHRISTINA M. PADILLA completed her doctoral studies at Georgetown University and is currently a research scientist at Child Trends. She is interested in the independent and joint influences of parenting and early childhood education on children’s early development.
