Abstract

Open textbooks have been developed in response to rising commercial textbook costs and copyright constraints. Numerous studies have been conducted to examine open textbooks with varied findings. The purpose of this study is to meta-analyze the findings of studies of postsecondary students comparing learning performance and course withdrawal rates between open and commercial textbooks. Based on a systematic search of research findings, there were no differences in learning efficacy between open textbooks and commercial textbooks (k = 22, g = 0.01, p = .87, N = 100,012). However, the withdrawal rate for postsecondary courses with open textbooks was significantly lower than that for commercial textbooks (k = 11, OR (odds ratio) = 0.71, p = .005, N = 78,593). No significant moderators were identified. Limitations and future directions for research are discussed, including the need for more work in K–12 education and outside of North America and for studies that better examine student characteristics.

Literature Review

The development of open textbooks is part of a broader movement in open educational resources (OER). OER is an umbrella term for a variety of learning materials, including textbooks, videos, online modules, music, and brief readings (Butcher, 2015). According to the William and Flora Hewlett Foundation (2019), which has supported OER for decades, OER are “teaching, learning, and research resources that reside in the public domain or have been released under an intellectual property license that permits their free use and re-purposing by others” (para. 7).

Textbooks were the focus of this manuscript for three reasons. One is that textbooks are commonly used learning resources throughout postsecondary education (Illowsky, Hilton, Whiting, & Ackerman, 2016). Another reason is that open textbooks are a type of OER that has a notable body of research on its efficacy (Weller, de los Arcos, Farrow, Pitt, & McAndrew, 2015). Finally, by focusing the analyses on a single type of OER (in this case, textbooks), the comparisons between OER and commercial resources are clearer.

A survey of postsecondary faculty found that many faculty express frustrations with the high costs of commercial materials, and a majority of faculty agreed that the high cost of commercial materials was a problem (Seaman & Seaman, 2018). However, the same survey indicated that one of the major concerns that faculty have about adopting open textbooks is whether their quality is comparable with commercial textbooks (Seaman & Seaman, 2018). Concerns about quality have been noted as a barrier to open textbook adoption in other surveys of faculty as well (Belikov & Bodily, 2016; Jhangiani, Pitt, Hendricks, Key, & Lalonde, 2016). These concerns are not unwarranted. Indeed, in two studies comparing performance on researcher-developed, objective learning measures, students enrolled in courses with commercial textbooks outperformed students enrolled in courses with open textbooks (Gurung, 2017). However, there have been numerous studies on open textbooks indicating no meaningful differences in learning compared with commercial textbooks (e.g., Clinton, 2018; Engler & Shedlosky-Shoemaker, 2019; Jhangiani, Dastur, Le Grand, & Penner, 2018; Medley-Rath, 2018) that need to be considered alongside Gurung’s (2017) findings. For this reason, a meta-analysis in which the overall efficacy of open textbooks across studies was summarized would be informative for instructors, administrators, and policymakers.

There are reasons to expect that students in courses with open textbooks would outperform those in courses with commercial textbooks. According to the

Examinations of studies on open textbook efficacy have been the topic of two systematic reviews (Hilton, 2016, 2018) as well as nonsystematic, narrative reviews (Clinton, 2019a; Wiley, Bliss, & McEwen, 2014). In these reviews, the overall conclusions based on qualitative analyses were that OER, including open textbooks, were as effective for student learning as commercial materials. These reviews provide thoughtful, in-depth thematic analyses of the research on the topic. However, there are no published meta-analyses on this topic, to our knowledge, and conducting meta-analyses would build on these reviews by combining the effects of multiple studies to get overall quantitative effect sizes.

Given that random assignment is generally not feasible for comparing textbooks, nearly all of the research has involved quasi-experiments with varying levels of quality in terms of controlling for confounders, such as comparing courses taught by different instructors. These methodological weaknesses are a significant limitation in research on the efficacy of open textbooks (see Griggs & Jackson, 2017; Gurung, 2017, for discussions). A meta-analysis could examine some of the potential effects of these confounders by including moderator analyses based on study quality variables. The use of the same instructor in courses with open textbooks compared with commercial textbooks is important given the variability in grading practices and pedagogical quality across individual instructors (de Vlieger, Jacob, & Stange, 2017). Another confounder to consider is whether student prior achievement or knowledge varied when comparing courses. This is important to consider given that prior academic performance is a clear predictor of future performance on learning measures (Cassidy, 2015). Furthermore, in one study, a course with an open textbook had higher average grades than the same course with the same instructor using a commercial textbook, but the students in the course with the open textbook had stronger academic backgrounds based on high school grade point averages (Clinton, 2018). In addition, many studies used different instruments to measure learning (e.g., different exams and quizzes that contributed to final grades). The use of different instruments is a clear confounder when comparing learning efficacy from open and commercial textbooks as different instruments would likely yield different scores due to measurement error.

The availability of an open textbook could potentially influence postsecondary students’ decisions on whether to complete a course or withdraw from it. One reason postsecondary students state for withdrawing from a course is that they cannot afford the textbook (Michalski, 2014). Indeed, approximately 20% of postsecondary students report that the expense of a textbook motivated their decision to withdraw from a course (Florida Virtual Campus, 2016). These course withdrawals lead to losses of time and tuition for students and increase the amount of time it takes to complete a degree, making the overall cost of a postsecondary education higher (Boldt, Kassis, & Smith, 2017; Nicholls & Gaede, 2014). Students have indicated that having an open textbook for the course was a reason they completed that course (Hardin et al., 2019). It is possible that postsecondary students who are behind in a course may be more likely to complete the course if there is an open-access textbook they can use to access the material rather than spend hundreds of dollars for a commercial textbook. This could potentially lead to courses with open textbooks having lower withdrawal rates than courses with commercial textbooks. However, it should be noted that postsecondary students withdraw from courses for a multitude of reasons that are unrelated to finances, such as stressors in their personal lives or dislike of the instructor (Hall, Smith, Boeckman, Ramachandran, & Jasin, 2003; Michalski, 2014).

Research Questions

Three research questions guided this meta-analytic research:

Method

A search and analysis plan for this meta-analysis was preregistered prior to beginning this project (Clinton, 2019b).

Inclusion Criteria

In order to be included in this meta-analysis, the report needed to meet certain criteria. First, the report needed to have findings comparing student learning performance and/or withdrawal rates between open and commercial textbooks. It needed to report sufficient information to be used in the meta-analysis (e.g., means and standard deviations,

Search Procedure

A systematic search for studies comparing learning measures and/or withdrawal rates between students using open and commercial textbooks was conducted in multiple steps. First, scholarly dissemination was searched for in the following databases: Scopus, DOAJ (Directory of Open Access Journals), ProQuest, PSYCinfo, and ERIC using the search terms

A list of these relevant reports found at this stage was compiled and sent to the Community College Consortium for Open Educational Resources–Advisory listserv, the authors of these reports were emailed, and a tweet was posted on this manuscript’s first author’s Twitter page with the hashtags #oer and #opensource requesting any additional reports or unpublished data (this yielded one additional report). The research reports posted on “The Review Project” of the Open Education Group’s website (https://openedgroup.org/) were also examined, which yielded one more report. After these relevant reports were identified, a backward search of the reference lists of these reports was conducted (no new reports were identified in this way). Then, a forward search of the citations of the relevant reports in Google Scholar was conducted, which identified one more report. The titles and abstracts of all presentations at Open Education 2018 were examined, which led to the identification of one more report. The systematic reviews of OER conducted by Hilton (2016, 2018) were examined, but no additional relevant studies were found. This led to a total of 22 reports with 23 independent studies (Gurung, 2017, reported two independent studies). This process ceased in October 2018. Twenty-two of these studies were analyzed in the learning efficacy meta-analysis (Table 1). Eleven of these studies were analyzed in the course withdrawal meta-analysis (Table 2).

Descriptions of Studies for Learning Performance Meta-Analysis

Descriptions of Studies for Withdrawal Rate Meta-Analysis

Coding of Studies

To provide descriptive information, assess study quality, and obtain information for moderator analyses, the studies were coded using the criteria found in Appendix B. Items pertaining to study quality were based on the Study Design and Implementation Assessment Device (Valentine & Cooper, 2008). The Study Design and Implementation Assessment Device provides researchers with questions to assess study quality regarding construct, internal, external, and statistical conclusion validity. The studies were coded and double-coded by the first author. In addition, research assistants coded 25% of the reviews (interrater reliability was good, κ = 0.78; see Follmer, 2018, for a similar approach). The first author resolved disagreements.
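As an illustration of the interrater reliability statistic reported above, the following is a minimal Python sketch of Cohen's κ for two coders. It is not the authors' code (their analyses were run in R), and the function name `cohens_kappa` and the example codes are hypothetical.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters' categorical codes on the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must code the same non-empty set of items")
    n = len(rater_a)
    # Observed agreement: share of items given the same code by both raters.
    p_observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement: product of the raters' marginal proportions, summed over codes.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    p_chance = sum(counts_a[code] * counts_b[code] for code in counts_a) / (n * n)
    if p_chance == 1.0:
        raise ValueError("kappa is undefined when chance agreement equals 1")
    return (p_observed - p_chance) / (1 - p_chance)


# Hypothetical codes for one coding item (e.g., "same instructor?") across ten studies.
first_author = ["yes", "yes", "no", "no", "yes", "no", "yes", "yes", "no", "no"]
assistant = ["yes", "yes", "no", "yes", "yes", "no", "yes", "no", "no", "no"]
kappa = cohens_kappa(first_author, assistant)
print(round(kappa, 2))
```

κ corrects raw percent agreement for the agreement expected by chance, which is why it is usually lower than the simple proportion of matching codes.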

Statistical Procedures

To summarize the findings across learning measures, Hedges’s

Flow diagram of the systematic review process.

For the learning measure, if course grades and learning measures included in the overall course grades were reported (e.g., exam scores), then only course grades were used in the meta-analysis. This was to avoid redundant effect sizes because course grades are inclusive of performance on learning measures within a course (note that Medley-Rath, 2018, had a posttest measure that was not included in the final grade). One exception is Allen and colleagues (2015), because the information to calculate effect sizes based on a standardized learning measure was available, but such information was not available for course grades. However, there were studies in which multiple measures were reported that had separate scores (e.g., Hardin et al., 2019; Jhangiani et al., 2018). These measures were not independent because they came from the same samples. To account for these multiple measures that were from the same study (but were separate measures), robust variance estimation (RVE) was used. RVE is a method of incorporating correlations of dependent effect sizes within studies. This approach is more accurate for estimating effect sizes than the traditional approach of aggregating multiple effect sizes within a study (Tanner-Smith, Tipton, & Polanin, 2016). Also, a small sample correction was used (Tipton, 2015; see Tipton & Pustejovsky, 2015). The package “robumeta” was used (Fisher & Tipton, 2014). There were 22 studies with 26 measures (and subsequently 26 effect sizes) assessing learning efficacy.
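To make the effect size metric concrete, the sketch below computes Hedges's g and its sampling variance for a single measure from group means, standard deviations, and sample sizes, using the standard small-sample correction. It is an illustrative Python sketch rather than the authors' R code, it does not implement RVE, and the function name `hedges_g` and the example values are hypothetical. The sign convention (open minus commercial) is chosen so that a positive g favors the open textbook.

```python
import math


def hedges_g(mean_open, sd_open, n_open, mean_comm, sd_comm, n_comm):
    """Return (g, variance of g) for the open-minus-commercial mean difference."""
    df = n_open + n_comm - 2
    if df <= 0:
        raise ValueError("need at least three participants in total")
    # Pooled standard deviation across the two groups.
    pooled_sd = math.sqrt(
        ((n_open - 1) * sd_open ** 2 + (n_comm - 1) * sd_comm ** 2) / df
    )
    d = (mean_open - mean_comm) / pooled_sd  # Cohen's d
    correction = 1 - 3 / (4 * df - 1)        # small-sample correction factor J
    var_d = (n_open + n_comm) / (n_open * n_comm) + d ** 2 / (2 * (n_open + n_comm))
    return correction * d, correction ** 2 * var_d


# Hypothetical course grades (percent) for two sections of 50 students each.
g, var_g = hedges_g(80.0, 10.0, 50, 78.0, 10.0, 50)
print(round(g, 3), round(var_g, 4))
```

The variance returned alongside g is what a meta-analytic model would use to weight each effect size.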

For the withdrawal information, the odds ratio was used to compare the odds of withdrawal between courses that use open textbooks and courses that use commercial textbooks (see Freeman et al., 2014, for a similar approach). The odds ratio was chosen because findings regarding withdrawal rates, unlike learning measures, were always reported as dichotomous variables. Because there was only one measure of withdrawal rate (and subsequently only one effect size) per study, RVE was not used. The odds ratio indicates the relative odds of withdrawal rate associated with the type of textbook used. An odds ratio less than 1 indicates that the use of open textbooks in courses was associated with a lower withdrawal rate compared with commercial textbooks. The withdrawal rate was reported in five studies and provided by authors on request for an additional six studies (i.e., the withdrawal rate was not stated in the dissemination, but six authors whose reports were included in the learning efficacy meta-analysis were able to locate and share the relevant numbers regarding course withdrawals).
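The interpretation of the odds ratio can be illustrated with a small Python sketch for one study's 2 × 2 table of withdrawals. This is not the authors' code: the function name `withdrawal_odds_ratio`, the Wald confidence interval, and the counts are assumptions made for illustration. The open-textbook odds are placed in the numerator so that a value below 1 means lower odds of withdrawal with an open textbook.

```python
import math


def withdrawal_odds_ratio(open_withdrew, open_completed, comm_withdrew, comm_completed):
    """Odds ratio (open vs. commercial) with a 95% Wald interval on the log scale."""
    cells = (open_withdrew, open_completed, comm_withdrew, comm_completed)
    if any(count <= 0 for count in cells):
        raise ValueError("all four cells must be positive in this simple sketch")
    odds_open = open_withdrew / open_completed
    odds_comm = comm_withdrew / comm_completed
    odds_ratio = odds_open / odds_comm
    # Standard error of the log odds ratio from the four cell counts.
    se_log_or = math.sqrt(sum(1 / count for count in cells))
    log_or = math.log(odds_ratio)
    lower = math.exp(log_or - 1.96 * se_log_or)
    upper = math.exp(log_or + 1.96 * se_log_or)
    return odds_ratio, lower, upper


# Hypothetical counts: 30 of 300 students withdrew with an open textbook,
# 40 of 300 withdrew with a commercial textbook.
odds_ratio, ci_lower, ci_upper = withdrawal_odds_ratio(30, 270, 40, 260)
print(round(odds_ratio, 2), round(ci_lower, 2), round(ci_upper, 2))
```

Working on the log scale keeps the interval symmetric around the log odds ratio, which is also the scale on which odds ratios are typically pooled across studies.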

The

Results

Learning Efficacy

See Appendix C for R code used in analyses and for data sets. A data dictionary for the data sets is also provided in Appendix C.

Outliers in the effect sizes were examined using a violin plot prior to synthesizing effect sizes in RVE (see Tanner-Smith et al., 2016). To construct the violin plot, the package vioplot was used (Adler & Kelly, 2018). A violin plot is a combination of a box plot, which displays a measure of central tendency (either mean or median) and interquartile range, and a kernel density plot, which has a smooth curve to indicate the probability density of a variable in a manner similar to a histogram (Hintze & Nelson, 1998). This allows for a visual display of the distribution of the data as well as the summary statistics. The violin plot, shown in Figure 2, has a white circle to indicate the mean and the thin black line to represent the interquartile range. Weights were not used, but the data displayed in the violin plot, Hedges’s

Violin plot for learning performance studies.

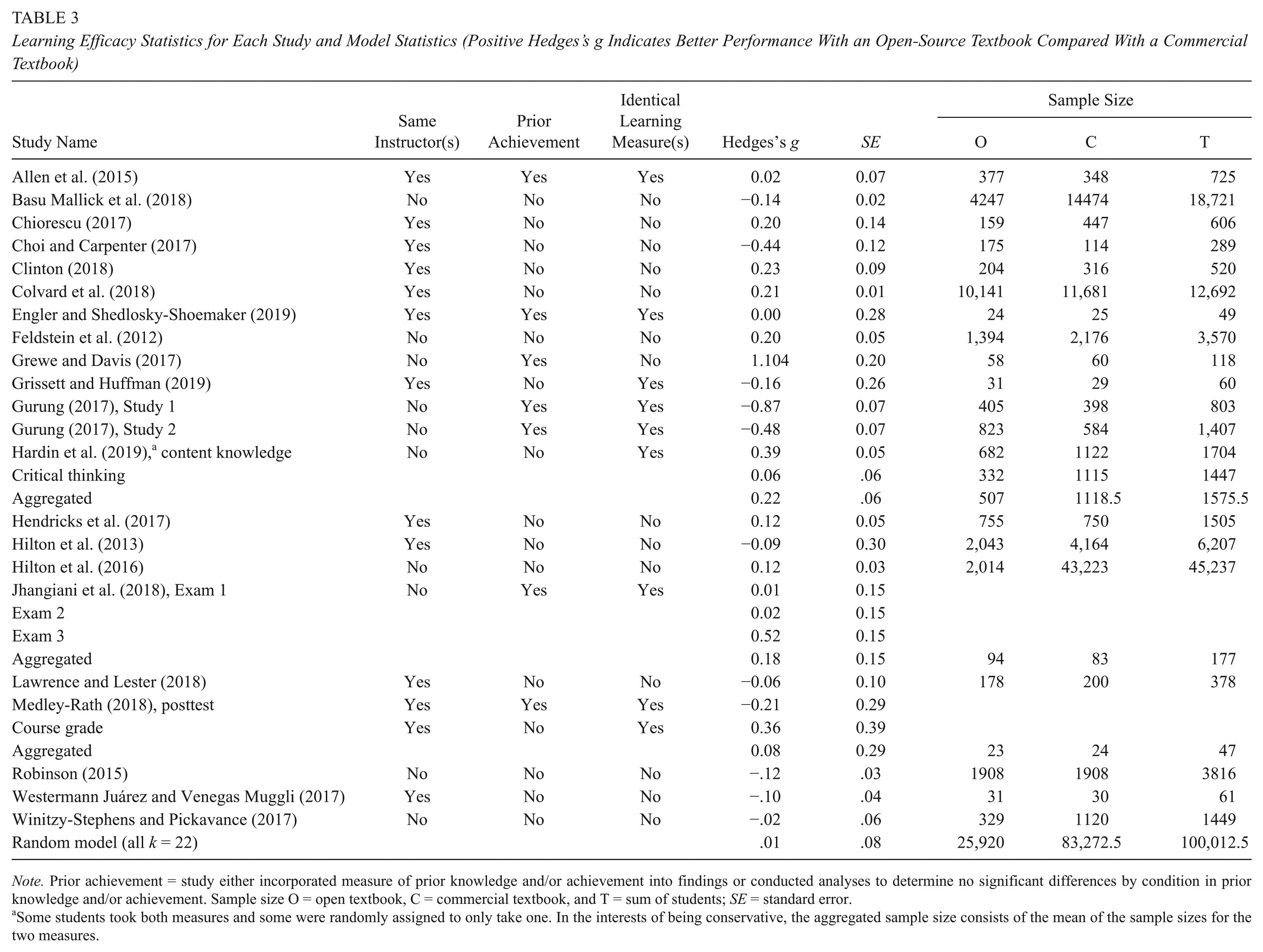

The learning performance overall was first examined, and the

Learning Efficacy Statistics for Each Study and Model Statistics (Positive Hedges’s g Indicates Better Performance With an Open-Source Textbook Compared With a Commercial Textbook)

Some students took both measures and some were randomly assigned to only take one. In the interests of being conservative, the aggregated sample size consists of the mean of the sample sizes for the two measures.

To further examine the null effect found, two approaches were used. The first was a power analysis (Harrer & Ebert, 2018). Based on a small effect size of Cohen’s

The second examination was an equivalence test using the package “toster” in R (Lakens, Scheel, & Isager, 2018). The equivalence test was significant,

Publication bias, which is the increased likelihood that statistically significant findings were reported, was assessed in two manners using aggregated effect sizes because there currently is not a validated measure of publication bias with RVE (Friese, Frankenbach, Job, & Loschelder, 2017). The first was the graphical technique of the funnel plot. A funnel plot shows studies graphed according to their size along the

Funnel plot for learning performance studies.

Moderator Analyses

To test for potential moderators, a meta-regression model with each of the moderators as coefficients was estimated (see Tipton & Pustejovsky, 2015). Following Borenstein, Hedges, Higgins, and Rothstein (2009), a minimum of six effect sizes per potential moderator category was required to conduct moderator analyses (see Elleman, 2017, for a similar approach). Therefore, potential moderators such as the same course being compared and whether the learning measure was part of a course grade or only for a research study (e.g., for the studies in Gurung, 2017) were not examined.

The moderators examined were whether the instructor was the same for open and commercial conditions, whether prior knowledge or prior student achievement was considered or controlled in the analyses, and whether the learning measure was identical (e.g., the same exam was used) for open and commercial conditions. Each of these potential moderators pertains to methodological quality. Note that Clinton (2018) was coded as “does not consider prior knowledge or prior academic performance” because the measure of student prior knowledge differed significantly between conditions and could not be covaried out of the effect size (data were only available at the course level; it was not possible to get student-level data). As can be noted in Table 4, none of the moderators were significant. However, there were fewer than 4 degrees of freedom for the results with prior knowledge or prior student achievement as well as for whether the learning measure was identical. With fewer than 4 degrees of freedom, the results cannot be trusted, and therefore, it was inconclusive whether these two moderators have effects. In addition, it is not good practice to conduct a power analysis for moderators in a meta-regression (Pigott, 2012); therefore, it is uncertain whether the findings for instructor indicated that there is truly no difference or if there was simply a lack of power to detect a difference.

Meta-Regression Results for Learning Efficacy

Withdrawal Rate

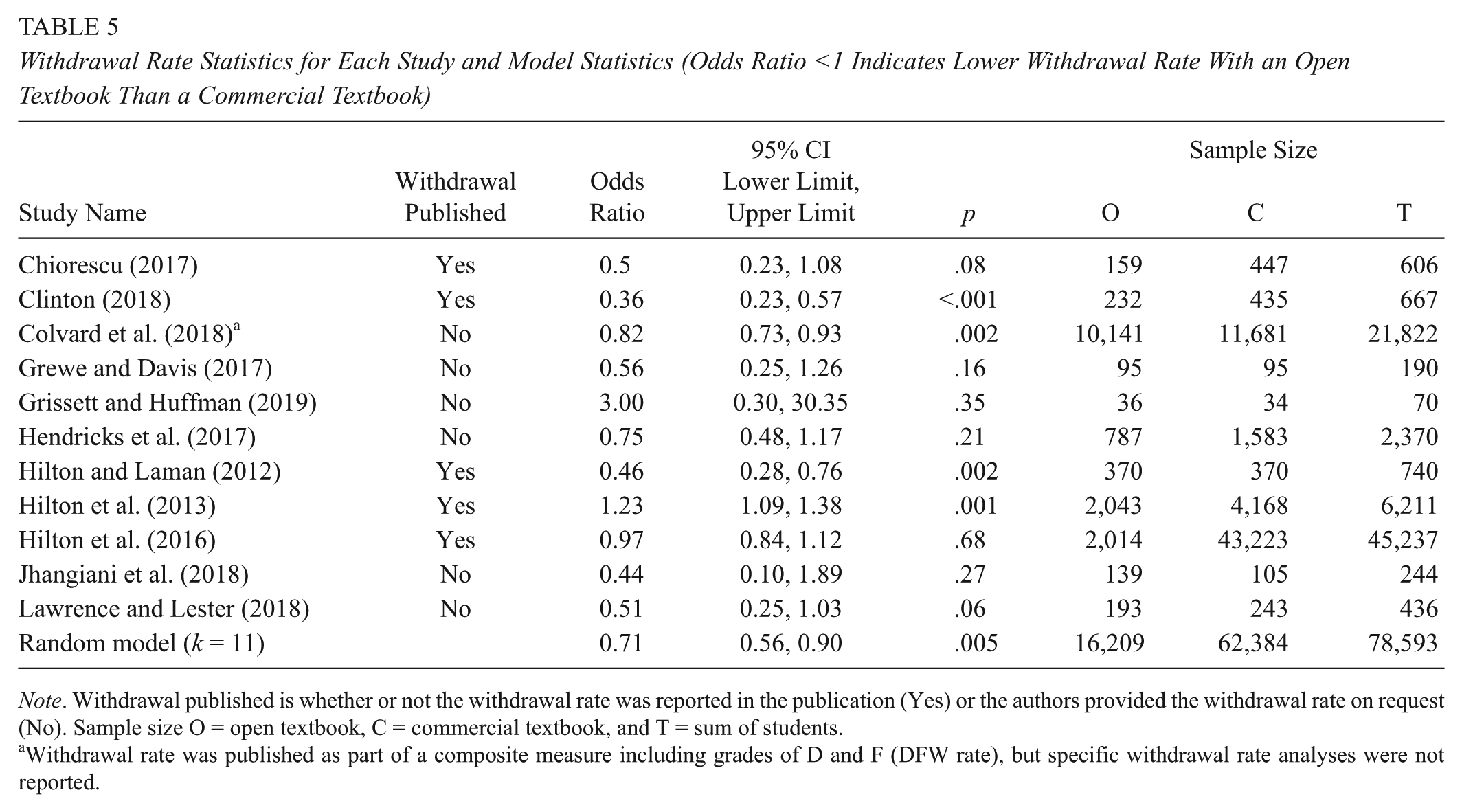

For the withdrawal rate, the heterogeneity of effect sizes was substantial, with an

Withdrawal Rate Statistics for Each Study and Model Statistics (Odds Ratio <1 Indicates Lower Withdrawal Rate With an Open Textbook Than a Commercial Textbook)

Withdrawal rate was published as part of a composite measure including grades of D and F (DFW rate), but specific withdrawal rate analyses were not reported.

Violin plot for withdrawal rate studies.

For withdrawal rate, over half of the studies did not report this information (it was obtained from the authors). Therefore, examining publication bias in a manner similar to what was done with learning efficacy (i.e., funnel plot and Egger’s test of the intercept) would be inappropriate given that more than half of the findings were not published.

Discussion

The purposes of these meta-analyses were to summarize the efficacy of open textbooks compared with commercial textbooks on learning performance and withdrawal rate. Based on the findings of 22 independent studies, there appeared to be no effect on learning performance in courses with open textbooks compared with courses with commercial textbooks. This effect did not appear to vary between studies based on having the same instructors for the courses with open and commercial textbooks. However, it should be noted that the absence of a significant effect from a moderator may be due to a lack of power rather than a true null effect (Hempel et al., 2013). The moderator analyses for incorporating prior student performance into the analyses or using identical instruments for measuring learning for open and commercial textbook conditions were inconclusive. The withdrawal rate from courses with open textbooks, based on 11 independent studies, was reliably lower than that for courses with commercial textbooks.

The null results for learning performance from open compared with commercial textbooks support the notion that open textbooks save students money without a detrimental effect on learning. This quantitative summary of the findings converges with conclusions based on qualitative systematic reviews comparing learning in courses with open and commercial textbooks (Hilton, 2016, 2018). Furthermore, this meta-analysis builds on these previous reviews by comparing effect sizes based on study quality through moderator analyses. These moderator analyses indicated that controlling for the confounder of whether or not the instructor was the same did not significantly vary the results. Therefore, although many studies on open textbooks have been justifiably critiqued for methodological quality (see Griggs & Jackson, 2017; Gurung, 2017, for critiques), this particular confounder did not appear to be skewing the findings on open textbook efficacy. However, this could be due to lack of power, and there was not sufficient power to trust the results for the other potential moderators examined (identical learning measure and whether or not student prior achievement was controlled).

Based on the access hypothesis, students in courses with open textbooks should be academically outperforming their peers in courses with commercial textbooks. This is because every student would have access to open textbooks, but some students would not be able to afford access, or at least reliable access, to a commercial textbook. However, the findings from this meta-analysis do not provide support for this hypothesis. One reason could be that having free access to a textbook may only have a benefit for learning for a small number of students. For example, Colvard and colleagues (2018) found that students eligible for federal assistance grants based on low household income gained greater learning benefits from open textbooks relative to their peers with higher household incomes. It could be that if the information on student socioeconomic status (SES) were available, SES would be a significant moderator in which students with lower SES backgrounds would have benefits not seen with students of higher SES who can more easily afford and access commercial textbooks. Alternatively, it is possible that the open and commercial textbooks compared were of similar quality and perhaps access was not necessarily an issue, so the learning findings were similar. Another possible reason, based on conjecture, is that postsecondary instructors may be aware that not all of their students have the commercial textbook and adjust their pedagogy accordingly. For example, instructors may be less likely to require reading or assignments requiring the use of the textbook if they are concerned about the financial impact on some of their students. Finally, it could also be possible that the type of textbook simply has little influence on student learning. For example, one experiment comparing commercial textbooks from different publishers found no differences between types in learning from reading them (Durwin & Sherman, 2008). This possibility is supported by findings from studies on open textbook efficacy (not eligible for this meta-analysis) in which all students had access to the commercial textbooks, but there were either no differences or a small benefit in learning performance with open textbooks (Clinton, Legerski, & Rhodes, 2019; Robinson, Fischer, Wiley, & Hilton, 2014).

Although there was no benefit of open textbooks on the performance of students who completed courses, there was a significant reduction in the likelihood of students withdrawing from the course. The most common reason students state for course withdrawal is that they anticipate failing the course or receiving a low grade (Wheland, Butler, Qammar, Katz, & Harris, 2012). It is possible that having access to a textbook may help a student who is behind to cover missed material and not withdraw from the class. In this way, students who are struggling in a course may be less likely to withdraw if they can access an open textbook for free as opposed to paying hundreds of dollars for a commercial textbook to succeed in the course. However, there should be caution with interpreting withdrawal findings because some studies compared courses with different instructors (e.g., Hilton et al., 2016; Wiley, Williams, DeMarte, & Hilton, 2016), and there were not enough studies in each cell to conduct moderator analyses.

Although not identified as an outlier in analyses, there was one study in which the withdrawal rate was significantly higher in a mathematics course with an open textbook than with a commercial textbook (Hilton et al., 2013). In the discussion of this finding, Hilton and colleagues (2013) describe how institutional policy changes for placement in mathematics courses coincided with the adoption of an open textbook for that course. This led to students with different levels of preparedness in mathematics in the compared courses, which creates a clear confounder for comparing withdrawal rates.

Limitations and Future Directions

There are a number of limitations in these meta-analyses as well as in research on open textbooks more generally that should be acknowledged. One limitation of this learning efficacy meta-analysis was the focus on postsecondary students. As with postsecondary education, the adoption of open textbooks in K–12 education can yield significant cost savings, even with the time involved with modifying curricula and pedagogy (Wiley, Hilton, Ellington, & Hall, 2012). Furthermore, one study found that K–12 teachers rated open textbooks as better quality than commercial textbooks (Kimmons, 2015) and another found that K–12 teachers saw open textbooks as helpful when personalizing student learning (de los Arcos, Farrow, Pitt, Weller, & McAndrew, 2016). However, there is only one study that examined K–12 student learning that we know of that would have otherwise met the criteria to be included in this meta-analysis (Robinson et al., 2014). The increased focus on open textbooks in postsecondary education may be because the costs of commercial materials are more obvious to postsecondary students, who typically have direct expenses for course materials, whereas the costs for public K–12 students are indirectly supported through taxpayers. That said, with more OER being developed for and used in K–12 schools (Pitt, 2015), more inquiry into their efficacy is needed (see Blomgren & McPherson, 2018, for a review of K–12 OER research on a variety of issues).

The geographic scope of this meta-analysis was not representative of OER use. The reports in this meta-analysis were based on studies set in the United States and Canada, with one exception of a study based in Chile (Westermann Juárez & Venegas Muggli, 2017). The advocacy and use of OER, including open textbooks, is international (Bliss & Smith, 2017). Furthermore, teaching and learning practices with textbook use vary considerably by geographic region, partly due to textbook availability (Milligan, Tikly, Williams, Vianney, & Uworwabayeho, 2017). It is unclear if open textbooks would ameliorate difficulties with textbook availability in regions in which access to the internet and electronic devices may be limited (Butcher, 2015). For these reasons, more inquiry into open textbook efficacy is needed outside the United States and Canada.

One limitation in the methodology of this meta-analysis is the use of a single screener for abstract screening. Logically, more than one screener would certainly reduce the likelihood a relevant citation was missed. Therefore, it is possible a relevant report was missed during abstract screening due to the use of a single screener. That said, an assessment of Abstrackr, the abstract screening software used in this meta-analysis, used only one screener (Gates, Johnson, & Hartling, 2018). Moreover, other possibly relevant reports were searched for in alternative methods (e.g., backward and forward citations searches) that may have compensated for the use of a single screener.

There were no significant moderators identified in the meta-regression analyses. However, there was substantial variability in the findings that would indicate the possible existence of significant moderators. It is possible that as more research is conducted in OER, there would be sufficient power to find that the proposed moderators related to study methodological quality explained some of this variability. In addition, future research could consider possible moderators, such as student background and how the textbook is used by the instructor and students, to determine sources of variability in findings.

Another reason for the variability could be that the studies themselves were quite different. Although all studies compared students enrolled in courses with open textbooks with those enrolled in courses with commercial textbooks, there was a broad range of content areas and sample sizes. Moreover, grading criteria vary considerably among instructors and institutions. Regarding course withdrawals, each institution in this study had an initial drop period without a withdrawal on the transcript at the beginning of the term and a deadline at some point in the term to receive a W and not an F. However, the specific deadlines and policies varied.

The noted differences in the course withdrawal rates for open and commercial textbooks may in turn have led to differences in the characteristics of the students who completed the courses. Although it is possible that students opted not to withdraw because they had access to an open textbook that allowed them to perceive a better chance of success in the course, in general, students who withdraw tend to have weaker academic backgrounds than those who persist in courses (McKinney, Novak, Hagedorn, & Luna-Torres, 2019). It is possible that this could lead to student differences in the courses that cannot be accounted for in the data available for these meta-analyses.

A potential issue is the medium (paper or screen) from which students read their course textbook. In Gurung’s (2017) second study, the performance of students in courses using open textbooks was lower than that of students in courses using commercial textbooks. However, the difference was much smaller when examining only students who accessed their textbooks exclusively electronically (thus reading from screens). Because open textbooks are free to access electronically but involve costs for hard copies, students may be more likely to read their open textbooks electronically than commercial textbooks (which involve costs in any medium; Clinton, 2018). This issue of reading medium used may be worth considering given that meta-analyses have been published indicating a small benefit in learning performance when reading from paper compared with reading from screens (Clinton, 2019c; Kong et al., 2018).

Conclusion

Open-source textbooks have been developed primarily in response to the rising costs of commercial materials. Concerns over quality and effects on learning have prompted numerous studies in this area. Based on the meta-analytic findings here, there are no meaningful differences in learning efficacy between students using open textbooks and students using commercial textbooks. However, students in courses with open textbooks appear to be less likely to withdraw. There are several limitations in research on open textbooks that indicate future research should consider K–12 students, the needs of students outside of the United States and Canada, and the potential moderating factors of student characteristics.

Appendix A

Appendix B

Appendix C

Acknowledgements

Michael Burd and Jennifer Duffy are thanked for their assistance on this project.

Authors

VIRGINIA CLINTON, PhD, is an assistant professor of Educational Foundations and Research at the University of North Dakota. Her research interests are open educational resources and text comprehension.

SHAFIQ KHAN is a doctoral student in Educational Foundations and Research at the University of North Dakota. His research interests are open educational resources and instructional design.