Abstract

This article explores faculty perspectives at three colleges of education regarding strategies of knowledge mobilization for scholarship in education (KMSE), with consideration for the opportunities and challenges that accompany individual and organizational capacities for change. Faculty surveys (n = 66) and follow-up interviews (n = 22) suggest two important trends: First, KMSE presents both a complementary agenda and a competing demand; second, barriers and uncertainties characterize the relevance of knowledge mobilization for faculty careers in colleges of education. This study empirically illuminates the persistence of long-standing challenges regarding the relevance, accessibility, and usability of research in colleges of education housed in research-intensive universities. While KMSE holds promise for expanding the reach and impact of educational research, scholarly tensions underlying these trends suggest that individual and organizational efforts will suffice only with modifications to university procedures for identifying what counts as recognizable, assessable, and rewardable scholarly products and activities for faculty careers.


These tensions resonate with broader challenges associated with research utilization. The National Research Council (Prewitt, Schwandt, & Straf, 2012) report Using Science as Evidence in Public Policy similarly highlights that how and why research is useful and used remain unclear. The relationships between science and policy do not reflect simple or uniformly rational decision-making models. Encouraging research utilization may therefore require more interactive and social models that recognize and engage with context, alternative perspectives and types of knowledge, and institutional as well as individual behavior (Nutley, Walter, & Davies, 2007). In light of such approaches, the National Research Council’s report (Prewitt et al., 2012) underscores that understanding remains limited in terms of how institutional arrangements facilitate the use of science in policy (and, by extension, practice).

Perhaps in response to the demands for accountability and echoing the calls to develop interactive and social approaches to research utilization, faculty and COEs increasingly seek to enhance the accessibility and usability of educational research (Fischman & Tefera, 2014; Oakes, Welner, & Renée, 2015). We broadly define these approaches and attendant processes as knowledge mobilization for scholarship in education (KMSE). KMSE includes iterative, purposeful, multidirectional interactions among researchers and groups (policy makers, practitioners, third-party agencies, community members) aimed at better understanding and improving educational organizations and systems. There is no easy and effective system of fostering dialogue and exchanges between researchers and students, families, teachers, schools, foundations, policy makers, media, and the general public while capturing scholarly production and relevance in a field as diverse as education. However, as COEs discuss and develop organization-level approaches to KMSE, the characterization of faculty engagement with and perceptions of these interactive multiway strategies and practices informs efforts to understand institutional arrangements that seek to facilitate the use of educational research in policy and practice.

In this article, we present findings from a 2-year project examining faculty and administrator perceptions of emerging KMSE models within three COEs at public research-intensive universities in North America. Using survey and interview methods, we characterize faculty engagement with KMSE-related practices (e.g., open access publishing) and faculty perceptions of college-level approaches to KMSE. Our findings present inherent tensions that participants describe in relation to KMSE, particularly against the backdrop of the critical perspectives and calls for accountability to the public discussed earlier. By focusing on tensions, we aim to understand the challenges that COEs can face when advancing KMSE approaches and practices, rather than to simply commend or criticize the efforts of participating faculty and colleges. Before describing our methods and findings, we briefly discuss some of the dynamics surrounding cultural and structural changes to the education research landscape in the last century and detail literature related to KMSE.

Structural Accretion in 21st-Century COEs

It is important to recognize our positionality as active and curious members of the education research field. Our motivation for conducting the overarching 2-year research study was twofold: first, to understand and improve the accessibility and usability of educational research while avoiding the use of simplistic models to assess the relevance of educational research; second, to propose alternatives that could ameliorate what we label “educational research distress” that is accumulating among faculty in COEs and academia in general. The historical development of universities—specifically, the American model of research universities (Crow & Dabars, 2015)—is marked by structural change associated with processes of expansion (e.g., numbers of institutions, students, faculty, and social groups admitted) and by the increasing pursuit of many more goals than traditionally conceived (Fischman, Igo, & Rhoten, 2010). The combination of expanding structures and increasing goals reflects Smelser’s (2012) concept of “structural accretion.” The development of universities can be characterized by the accumulation of ever more functions without either forgoing old ones or creating separate new institutional structures to support these functions. Such structural accretion generates disruptive demands on well-established traditions and university operations, challenging each institution to reengineer itself to survive (Calhoun, 2006). Relatedly, many other dynamics converge to generate higher levels of distress in the field of educational research due to the increasing and not merely shifting types of demands.

To contextualize faculty perspectives related to organization-level approaches to KMSE in their colleges, it is worth mentioning some of the most noticeably distressing trends. Such trends include

Shifting enrollments in COEs—especially those with large teacher education programs (see U.S. Department of Education, 2013, 2015)

Intensified competition with nontraditional actors in higher education—for example, large online for-profit universities and alternative teacher certification programs such as Teach for America (Mihaly, McCaffrey, Sass, & Lockwood, 2013; Scott, Trujillo, & Rivera, 2016) 1

Changes in the standards for assessing educational research (Feuer, Towne, & Shavelson, 2002; Lagemann, 1989)

Augmented use of standardized requirements for educational research accountability (Darling-Hammond, 2015)

Broad adoption of bibliometric analyses, not only as a key component for ranking universities, colleges, and programs but also for administering and regulating the careers of researchers (Post, 2012; Wilsdon, 2016), alongside ensuing debates about how best to assess the range of scholarship produced by COEs playing a pivotal role in the evolution of a research-focused landscape (Labaree, 2006; Oancea, 2013). 2

Collectively, these transformations have accelerated processes of organizational restructuring in COEs while shifting agendas to expand research-intensive models at many more COEs than was the case a generation ago. These structural changes highlight a need to alter the way that we as educational researchers do research. However, a parallel trend further complicating the structural challenges highlighted earlier is the well-documented and growing public indifference to expert judgment in education research (Makel & Plucker, 2014; Miller, 2013). 3 In part to combat the structural changes and seeming loss of public faith in education research, a central goal of knowledge mobilization (Campbell, Pollock, Briscoe, Carr-Harris, & Tuters, 2017) is to increase the use of research evidence in policy making and inform practice through iterative social processes involving interaction of two or more groups or contexts (e.g., researchers, policy makers, practitioners, third-party agencies, community members). As mentioned, we use the term KMSE throughout to highlight specific knowledge mobilization aims that seek to increase the impact and usability of educational research by a range of education stakeholders. The aforementioned themes informing our analysis of KMSE in COEs reflect a desire to better understand the shifting landscape as we turn to the details of this research study.

Analytic Methods and Data Analysis

To consider tenured/tenure-track faculty and administrator perspectives on the KMSE agendas at the three participating COEs, we conducted purposeful sampling of three public research-intensive universities in the United States and Canada as part of this interpretive qualitative study. Focusing on public universities was an important component of our sampling criteria, given that these institutions are funded and supported by the public and thus have a greater commitment to ensure that their research is of value to their local communities. Based on information available at university websites in October 2013, we identified COEs that were implementing publicly visible KMSE agendas and selected three whose agendas ranged along a continuum from developing (COE1) to emerging (COE2) and established (COE3). 4 See Appendix A for the profiles of the participating COEs and the features that allowed us to categorize them as such. While all three COEs participating in this study maintain strong reputations and explicit commitments to rigorous and impactful research, their respective KMSE agendas were operating over varying lengths of time and with different relative emphases and orientations. Looking at tenured/tenure-track faculty perspectives across COEs with different KMSE agendas can inform similar efforts at other COEs and in relation to the changing landscape presented in the literature review. Given that our goal was to understand faculty perspectives at these three COEs in depth, purposefully choosing these COEs allowed us to examine perspectives across a range of KMSE initiatives, including how some of the tensions and trade-offs reflect common challenges and opportunities in alignment with our interpretivist qualitative orientation (Creswell, 2013). We also acknowledge that a small sample precludes generalizations, as all three participating COEs remain embedded in their unique cultural and institutional conditions.

We focused on two guiding research questions in the present study: (a) How do faculty report their engagement with KMSE-related practices at three research-intensive COEs? (b) How do faculty and administrators describe the opportunities and challenges associated with KMSE at their respective COEs?

To address these questions, we first invited all tenured/tenure-track faculty at each COE to complete a survey. We did not include non–tenure track faculty in our study, because in most COEs at research-intensive universities, only tenured/tenure-track faculty are required to engage in traditional research and scholarly activities, which is a key aspect of our exploration of KMSE. Sixty-six faculty responded to the survey, for an overall response rate of 33% (with college-specific response rates ranging from 20% to 50%). These relatively low response rates are typical for an online survey (Sheehan, 2001; Vaus, 2013). Despite following best practices for web surveys (Fan & Yan, 2010), we recognize that respondents represent a minority of faculty at each college and that nonresponse bias is therefore possible if faculty uninterested in or opposed to KMSE declined to respond. We therefore underscore that while the participants represent a nontrivial number of tenured/tenure-track faculty at each college, our findings do not necessarily represent all faculty at each college.

Respondents included 5 college-level administrators, 8 assistant professors, 27 associate professors, and 26 full professors (the smaller number of pretenure assistant professors participating in the study reflects the decision by COE3 to exclude its assistant professors from participating in the study). For the purposes of this article, we enlist a subset of survey items that situate the interview data in wider relation to the full sample (see also Zuiker et al., 2017, for complete survey analysis). These items consider how participating faculty perceived the relative value of (a) scholarly actions and products at their institution, (b) institutional faculty evaluation processes, and (c) engagement with practitioners and local education agencies.

After administering the survey, we conducted interviews with faculty engaged in knowledge mobilization. The process of selecting interviewees involved multiple steps. First, we generated a list of prospective faculty by reviewing various college materials for the KMSE indicators featured in our survey and by soliciting recommendations from the associate dean of research at each college. Next, we selected a subset of prospective faculty that balanced diversity and rank. Through this process, 22 faculty (out of 30 invited) participated in semistructured interviews that we conducted (7, 10, and 5 faculty from each COE, respectively), audio recorded, and transcribed verbatim. 5 Interviews aimed to explore faculty perceptions of institutional tenure and promotion structures, college-level strategies to incorporate KMSE practices into tenure structures, and interviewees’ practices regarding conducting and disseminating research and partnerships therein (see Appendix B for the interview protocol). Interviews lasted from 45 to 90 minutes.

To analyze the interviews, we engaged in a multilevel collaborative coding process (Saldaña, 2015, pp. 34–35) informed by a social constructivist version of constant comparative analysis (e.g., Charmaz, 2006). 6 We began preliminary analysis with a subset of interviews in common to generate a set of initial, tentative open codes (i.e., descriptive tags for swaths of data based on theoretical and empirical sensitivities). These open codes described our initial engagement with the interview data according to areas of analytic interest (e.g., impact, research dissemination, perceived tensions, and institutional practices). This process allowed us to maximize analytic sensitivity across a variety of researcher perspectives and ward off validity threats from prematurely arriving at a set of codes to apply to the data corpus. Next, we further developed interrelations across open codes to arrive at a set of axial codes (i.e., conceptual categories for more formally categorizing data), allowing us to then look across interviews according to categories related to faculty’s strategies and beliefs surrounding their professional practice and experiences at each institution (Creswell & Poth, 2016, p. 86).

From an axial coding process involving 10 of the 22 tenured/tenure-eligible faculty interviews, we then arrived at 14 final codes (see Appendix C for the codes, subcodes, and their definitions) that we applied to the corpus of transcribed interviews. After three further rounds of collaborative coding, we wrote descriptive narratives for each code, which cut across all interviewees by institution, as well as for each interviewee, thus resulting in a holistic analysis of each case and a cross-sectional analysis for each code across individuals (Mason, 2002; Saldaña, 2015). After completing these various iterative analytic processes, we interpretively arrived at two overarching themes, by which we organize the findings: (a) KMSE as complementary agenda and competing demand and (b) barriers and uncertainties regarding KMSE.

Findings

With differences in emphasis and orientation in their approaches to KMSE (see Appendix A), faculty described the three participating COEs as engaged in a variety of actions aimed at making educational research more accessible, at increasing the “impact” of and “engagement” with scholarship, and ultimately at reducing the “theory to practice” gap. 7 While these organization-level efforts are the foundations of KMSE models at various stages across the colleges, we consider them in light of faculty engagement and perceptions. Our survey and interview data underscore that KMSE holds value to these COEs and faculty, but attendant opportunities and challenges must be understood against a wider backdrop of competing demands. We describe these challenges by reporting faculty engagement with KMSE-related practices and examining faculty and administrators’ perspectives. We outline these tensions in terms of complementary agendas and competing demands characterized in survey responses. Then, we enlist interview transcripts to develop finer-grained detail that illuminates the survey results in terms of the barriers and uncertainties that made KMSE a difficult endeavor for participating faculty and administrators at these COEs.

KMSE as Both Complementary and Competing Demand

Surveyed faculty and administrators at all three COEs represented two common perspectives on educational scholarship. First, participants affirmed the importance of peer-reviewed publications in prestigious journals for their academic careers, which interviewees sometimes labeled as being “top tier” or as publishing in journals with high impact factors (all but two survey respondents indicated their importance). Second, participants strongly agreed that they should try as much as possible to generate research-based knowledge that is usable by practitioners, policy makers, and the public in general (almost all survey respondents agreed). Some interviewees subsequently highlighted the relevance of having “face-to-face interactions with local educators,” for “researchers to be more open-minded,” and to use forms of “engaged” and “collaborative” research models. While overall survey response rates preclude claims to a consensus view among all faculty at these COEs, this high level of agreement establishes that study participants valued complementary agendas for educational scholarship—(a) producing academic knowledge to be used by other researchers (e.g., traditional scholarly publications) while (b) mobilizing research among practitioners, media, and policy makers (i.e., KMSE). 8

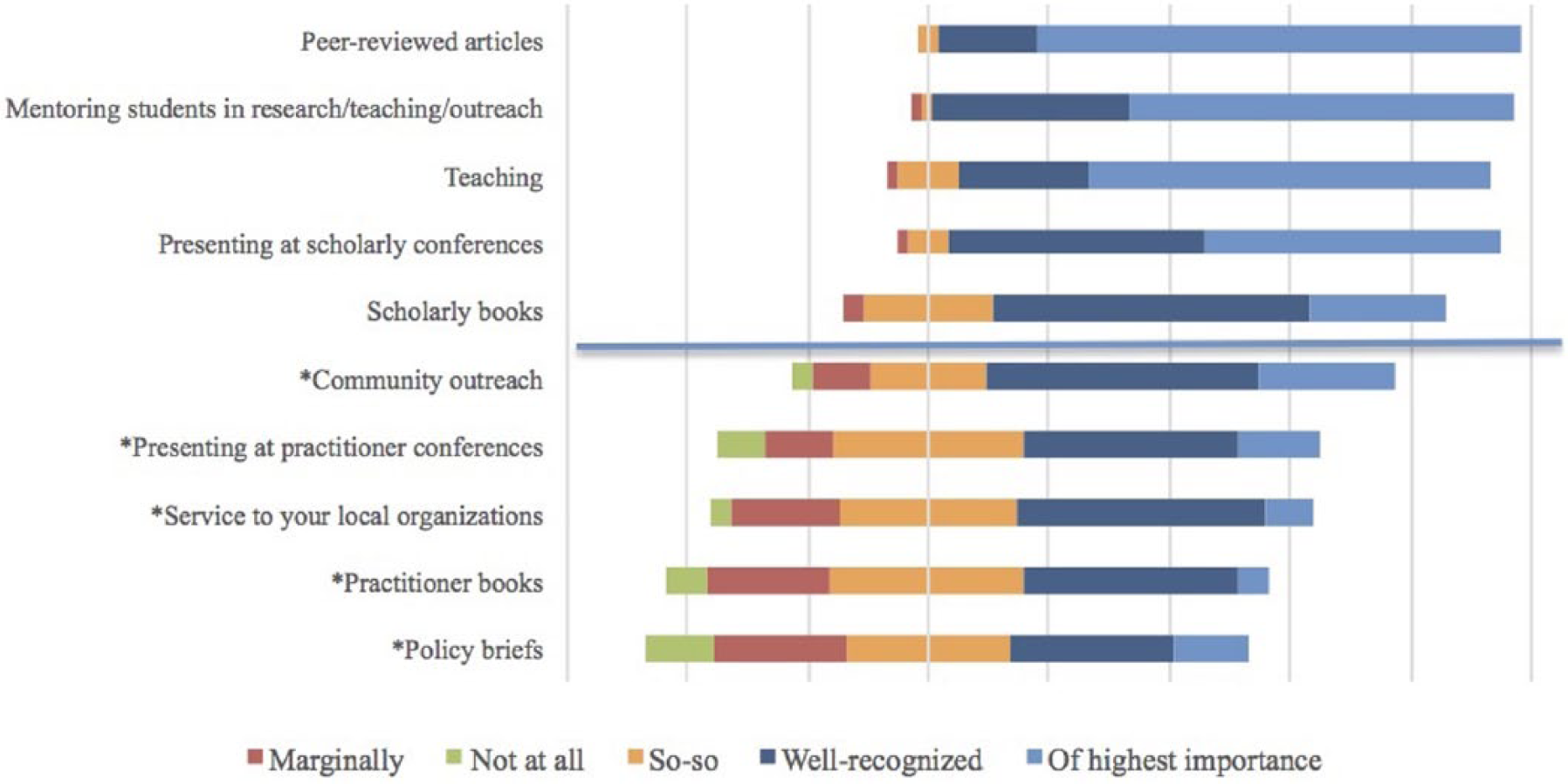

To better understand the relative complementarity or competition between production- and mobilization-oriented forms of scholarship, the survey also concentrated on scholarly practices that faculty engaged in from day to day and semester to semester. To this end, participants rated 34 scholarly products and activities in relation to how they valued them and how their COEs did. Figure 1 presents the five products and activities with the greatest and least personal value to faculty respondents. 9

Figure 1. Faculty valuations of scholarly products and activities: Most and least valued. *Product/activity more often associated with knowledge mobilization for scholarship in education.

Similarly, Figure 2 presents the five products and activities with the greatest and least institutional value based on faculty’s perception.

Figure 2. Faculty perceptions of colleges of education’s valuations of products and activities: Most and least valued. *Product/activity more often associated with knowledge mobilization for scholarship in education efforts.

These results underscore that faculty valued the production of peer-reviewed articles as their highest priority, matching their perception of their COEs. It is also important to highlight the lack of a substantial difference between (a) the products and activities with the greatest and least personal value to faculty and (b) their perception of what their COEs value. Meanwhile, participants perceived products and activities associated with KMSE (e.g., practitioner books, op-eds, media reports, and policy briefs) as a lower priority and believed that their COEs did as well. These results reflect prior studies of knowledge mobilization (e.g., Fry et al., 2009; Kyvik, 2013). These survey results also suggest that respondents perceived producing high-quality scholarship and KMSE as competing agendas of greater and lesser priority, respectively.

As one strategy for understanding faculty participants’ perspectives on these three COEs’ approaches to KMSE, the 34 scholarly products and activities that respondents rated included KMSE-related practices, such as community outreach, service to local organizations, practitioner books, policy briefs, practitioner conferences, media interviews, blogs, podcasts, and massive open online courses. With only minor variation between their ranked value among respondents and their perceived value to their COEs, KMSE-related practices appear only among the five lowest ranked in Figures 1 and 2 and, among all 34 products and activities, remain in the lower half overall (see Appendix D for full participant ratings of scholarly products and activities). These results parallel those reported by Cooper, Rodway Macri, and Read (2011), who surveyed educational researchers from wide-ranging colleges.

While respondents almost unanimously affirmed complementary agendas for their scholarship, they perceived that their COEs’ KMSE agendas had not reprioritized the products that faculty seek to generate and the activities in which faculty seek to engage. In other words, participating faculty perceived their COEs’ approaches to KMSE as additional, competing demands despite institutional affirmations of the value of KMSE scholarship as integrated, complementary agendas. This institutional tension may also be compounded by divided views on college-level assessments of faculty production. Answering a Likert-scale item concerning how well their scholarly production was assessed, roughly as many survey respondents reported that their institution assessed their production either well or very well as those reporting that the assessment was not well done or was poorly done. Therefore, seemingly well-aligned valuations of scholarly products and activities may not be reflected in COE assessments of these products and activities.

These survey results underscore that for COEs that are developing KMSE models, institutional and faculty strategies for fostering, valuing, and assessing complementary agendas may be as complex on the inside as they are on the outside, in alignment with our review of the literature. These results also begin to situate KMSE in relation to various barriers and uncertainties that many participants later raised in follow-up interviews. The next section therefore enlists these interviews to delve deeper into complementary and competing aspects of organization-level approaches to KMSE across these three COEs.

Barriers and Uncertainties Regarding KMSE

In relation to general tensions between complementary agendas and competing demands, our interviews with a subset of survey respondents lend greater nuance and depth to survey results. In general, the tensions described during interviews suggested various barriers and uncertainties associated with institutional approaches to KMSE at these three COEs. Our collaborative analytic process led us to identify two attendant subthemes related to these barriers and uncertainties—first, recognizing the impact of multiple forms of scholarship in education while underscoring the emphasis on publishing sole-authored articles in peer-reviewed journals; second, ambiguous structures for supporting and evaluating faculty members’ KMSE engagement, despite organization-level approaches to KMSE at these COEs.

Recognizing impact of multiple forms of scholarship

As discussed so far, the prevalence of debates and uncertainties about metrics related to scholarly publications is crucial to understanding potential barriers to KMSE. Considering whether and how COEs assess faculty’s scholarly contributions beyond publishing in peer-reviewed scholarly journals is therefore critical for understanding organizational approaches to KMSE. 10 An assistant professor from COE1 characterized this uncertainty in terms of how his university and external reviewers might evaluate his tenure case.

I’m going up for my review right now, and the piece I have out that is the most cited is a book chapter. But it won’t be in [those] that I put in for people to look at [for tenure review] because they won’t value it as much, even though it’s had more impact. It has had impact at the practitioner level, but also researchers are citing that particular piece. But it’s not what the overall university tends to look at. . . . It’s really important to me that my research, especially because I come out of a tradition of being a classroom teacher, that it have an impact in classrooms, in real classrooms.

This example highlights tensions between faculty’s and institutions’ prioritization of publication types based on perceived degree of impact (scholarly vs. uptake by practitioners and citations). It thus highlights the uncertainty that many faculty reported regarding what counts as impact, and it begins to lend insight to the competing demands that study participants perceived. Interviewees at all three COEs similarly expressed uncertainty about criteria for differentiating the quality or prestige of various journals as well as various nonjournal forms of publishing (e.g., book chapters, blogs, encyclopedia entries). Moreover, regardless of how the COEs approach KMSE, faculty must still reconcile the broad norms at their universities and the general expectations of external reviewers.

Organization-level approaches to KMSE also prove challenging because each faculty member within a college interprets KMSE in relation to one’s particular circumstances. For example, two interviewees at COE1 whose scholarship involved school collaborations perceived their college to regard collaboration differently. An associate professor suggested that COE1 recognized and respected school collaborations as a form of impact.

I think there’s a bias in the university towards more traditional kinds of forms of academic quality. But I think within [COE1], people who do collaborative work with teachers, with community organizations, with young people, I think that is regarded as a form of impact and it is respected.

Meanwhile, an assistant professor, also at COE1, expressed a different view about how the college perceived school collaborations.

At [COE1], I’m not sure if there’s a premium on collaborating with schools, and I don’t know if you get the same credit for the school collaboration. Though, that’s something that is the most important to me and something I value.

These contrasting perspectives by these two faculty members at COE1 may be related to tenure and promotion processes. There were many instances across interviews in which tenure was invoked as a fault line that enabled tenured faculty to engage with KMSE-related practices and to perceive that their college regarded it as a respected form of impact; yet, at the same time, tenure precluded similar engagement and perceptions among pretenure faculty. Indeed, two tenure-track faculty at COE1 outlined a related tension regarding KMSE—risking the college’s “reputational capital” with peer institutions whenever scholarly productivity departed from conventional standards of scholarship.

Nevertheless, vagueness about whether and when KMSE approaches to scholarship that include school collaborations count as impactful might be one reason why KMSE remains both a subordinated agenda and a competing demand.

The uncertainty among the assistant professors and the indeterminacy suggested by the contrasting views on school collaboration resonate with the broader perspective shared by other COE1 faculty. For example, KMSE agendas in COEs may ultimately entail not only expanding what counts as impact but also reenvisioning impact, inside and outside the college (e.g., outside of sole-author publications in peer-reviewed journals with high journal impact factors [JIFs]). A full professor from COE1 characterized a tension between traditional notions of scholarly impact and ambitions for broader impact.

One of the things that has to change, in addition to the kinds of impact [used in] valuing scholars who are collaborators and contributors, is not having everyone have to have this obsession with uniqueness. What’s your unique contribution? Well, if everybody makes a unique contribution, we’ll have very little impact on large-scale problems of educational practice or the things that we really care about, promoting equity and excellence throughout the whole system.

Sole-author publications in peer-reviewed scholarly journals with high JIFs were commonly cited as the forms of scholarship with the highest and clearest impact across surveys and interviews. However, such comments were situated amid clearly articulated tensions surrounding how long-standing and pervasive institutional foci and reward structures can preclude collaborative work, scholarship in nontraditional venues, and a host of other KMSE activities.

By extension, the combination of simple incentives and metrics implemented among COEs over the last two decades may be producing more publishable educational research and generating easily quantifiable comparisons. However, most participating faculty perceived an increasing reliance on metrics and incentive structures as interfering with individual and organizational KMSE agendas as well as perhaps more impactful, usable, relevant, and comprehensive research programs. Resonating with the faculty perspective from COE1, a full professor from COE3 drew on extensive personal experience with KMSE to sharpen the point.

It’s actually really difficult to measure impact. [Our research project team] have been doing it through tangible things like number of outputs, activities, publications, Google Analytics around social media. But for actual impact on change and knowledge, skills, and practice, it comes more through interviews and case studies. We’re having to go more to a qualitative route to get to the impact. It’s difficult because impact is diffuse and over time, so it’s challenging.

This reflection leads into the notion of ambiguity regarding how KMSE is supported and assessed—the second subtheme that we identified from analysis of interviews.

Ambiguous support and evaluation of faculty KMSE engagement and impact

Understanding whether and how COEs support and assess faculty’s scholarly contributions is critical for developing organizational approaches to KMSE. While we found that administrators and faculty at all three COEs cited the importance of engaging in work with broader impact, the same individuals did not perceive existing institutional incentive structures and evaluation practices as necessarily supporting faculty engagement in KMSE. Pointedly, this proved true even at COE3, where formal KMSE recognition is in place. Participants expressed uncertainty about evaluating KMSE-oriented scholarship in tenure and promotion structures, as illustrated by an associate professor at COE1.

I think we kind of send a mixed message about outreach work or work where you’re designing something that a school has taken up. But it only really received real recognition I think if you’re . . . simultaneously publishing about it, or somehow it’s integrated into the traditional kinds of scholarly outputs.

This comment highlights the processes of structural accretion discussed earlier, wherein new structures and demands are put into place without attenuating prior ones. Many faculty across COEs similarly commented on KMSE-type work being rhetorically valued but only receiving “real recognition” if linked with more traditional scholarly activities and products. Such ambiguity and perceived disconnection between what is valued and “what counts” as captured by institutional procedures generate a sense of risk associated with KMSE, especially for pretenure faculty. An associate professor at COE3 underscored such risks associated with typically time-intensive KMSE activities.

I think junior faculty who are interested in doing [KMSE] work have to find mentors who are not preoccupied with their own careers and . . . can just focus on supporting the junior faculty. . . . Although I recognize that at the moment the leadership of [COE3] values the [KMSE] work that I do, I don’t think that that is necessarily an institutional expression of an institutional recognition. I think it’s an expression of the values of those people in leadership positions at the moment, and that could change very quickly. So, to the extent junior faculty still have to play that game, I wouldn’t recommend any junior faculty who arrives here to just do [KMSE]. It would be suicide.

Highlighting perceptions of KMSE as potentially risky for pretenure faculty, this interviewee’s comments suggest that, even at COE3 (where formal promotion and tenure structures recognize KMSE), such activities are in addition to “the game” that must be played to earn tenure (for example, publishing sole-authored articles in high-JIF journals). As retrospective commentaries on their own career advancement, both excerpts from tenured faculty suggest that untenured faculty should treat KMSE as a lower priority. Despite institutional rhetoric (and structures at COE3) in support of KMSE agendas, the unclear assessment of such work for tenure and promotion makes it risky.

Although our interpretation of these data suggests some degree of faculty ambivalence about the value of KMSE at these COEs, organizational tensions surround the evaluation of KMSE and what counts as evidence of actually doing it. The following two comments from a full professor and an associate professor at COE3 highlight complementary challenges—(a) KMSE is harder to assess and reward vis-à-vis the traditional forms of dissemination, and (b) despite greater levels of institutional acceptance, KMSE models have not achieved a reputation that enables systematic support and evaluation. To the first point,

I don’t think there’s a particular reward for [KMSE] products. For the scholarly high-impact [products] there might be release time—course release—but not necessarily. I would say some of my colleagues would argue for course release . . . if they are extremely effective [at KMSE] and getting their work out there.

On the issue of the reputation of KMSE,

I think [COE3] values what we do about [KMSE]. But it’s not widespread . . . and I don’t think that the majority of the faculty who do research here would either engage in the kind of [KMSE] work that we are doing or necessarily count it as impactful or as significant. And in fact, I think that there would be very many people who would be quite dismissive, because [COE3] has the virtue of being really diverse . . . and that’s the beauty of COE3. But it also means that . . . there’s a kind of institutional support, but I don’t know that necessarily there’s a kind of shared understanding that somehow these things that we are doing are impactful.

These two interviewees highlighted how institutional support of KMSE proves challenging even when KMSE is formally recognized. In other words, KMSE activities and products are more difficult to measure and still remain in the reputational shadow of traditional scholarly activities and products. Even with the formal support and recognition of KMSE at COE3, successful faculty often navigate KMSE work as a complement to, rather than an addition to, traditional scholarly activities.

While interviewees across COEs indicated that their institutions valued and respected the work that they performed within schools and the community, their comments echo findings in the literature that the field of educational research as a whole has not yet reached consensus on how to recognize such impact and engagement either systematically or comparatively (Ellison & Eatman, 2008; Feuer, 2016). In further support of this point, an associate dean from COE1 reflected on changes in how her college assessed the impact of scholarship, noting how difficult it is to recognize and reward KMSE.

When I first came . . . the focus really was much more on the publication in top-tier journals, on the research and scholarship, and that is definitely still the focus. But I do think there’s been an increased focus on what, here, they call “outreach” instead of “service.” So, for example, in our yearly reviews, when I first came, we didn’t have to write anything about what we did in outreach. We could, but it wasn’t really a big component. And now it’s a required component of our review, and it’s largely symbolic. I still don’t think it has much impact in terms of the incentive and reward system. But, especially if it’s combined with scholarship, then you’re paid attention to more.

The following quote from an associate dean at COE2 similarly illustrates how his college was at a particularly crucial period for establishing institutional practices to recognize KMSE.

I think [COE2] is moving very fast to . . . increase the size of the group of people who are very productive scholars without necessarily losing attention to producing research that can benefit practitioners. . . . We cannot afford to stay only with indicators that look at the impact factor of journals and number of publications and the prestige of the journals in which we do that. I think those are useful indicators, but I think they narrow the conversation and the assessment of what counts as impact.

The first administrator comment highlights the “largely symbolic” nature of KMSE recognition. Echoing others quoted here, this administrator sees KMSE as “counting” only when combined with more traditional scholarship. Similarly, the second administrator comment cites the aspirational need for new ways of measuring faculty impact given recent changes in what counts and is valued for education researchers (e.g., benefitting practitioners, community outreach). Although these administrators referred to their own institutions, their testimony illustrates that the challenges that we have identified (changes in the professoriate, tensions between doing applied scholarship and publishing, the use of publications as proxies for a college’s research stature, and the limitations of the current system of assessing the relevance of journals) are also relevant for many COEs, especially those at research-intensive universities.

A last prominent point within this subtheme concerns a tension between (a) the importance that participants and institutional rhetoric attach to relationships with nonacademic educational actors and stakeholders and (b) the extent to which such relationships are institutionally supported and recognized. A full professor at COE1 characterized this tension.

One of the most valuable things to many practitioners that I interview, and district leaders, are regular interactions with researchers. That’s not a knowledge product, but rather I think part of a long-term partnership that people have, arrangements where they might do some joint work. But beyond that joint work together, practitioners look to those researchers as kind of confidantes, as people they can go to for advice about who are outside their system . . . and I don’t think there’s a place that typical universities have for valuing that kind of relationship and the time that is required to cultivate and maintain those kinds of relationships.

Despite comments like this about the importance of cultivating relationships and collaborations with various stakeholder groups, many participants noted that building and maintaining such relationships remained invisible within their COEs’ systems of support and assessment. The same tension emerges as faculty enlist social media to advance KMSE. For example, blogs, podcasts, and online social networks are seen as increasingly central to mobilizing scholarship (Veletsianos, 2013; Veletsianos & Kimmons, 2012). But as an administrator and full professor at COE2 described, how institutional systems of assessment for tenure and promotion acknowledge such efforts remains unclear.

We have faculty that are really active [educational] bloggers, and that’ll be a test case. . . . We will be looking at alternative ways of looking at impact, and we have to. Once it goes up for external review [when] someone’s going up from assistant or associate, and our external reviewers . . . underscore it as another alternative metric that they looked at to consider this person’s national reputation, then we’ve got to look at that and look at our standards for that.

In this type of “test case,” faculty bear the risk if a KMSE agenda is rendered invisible, and they hold the potential to influence college standards if recognized. However, the fulcrum of this risk-reward depends on external reviewers rather than the college itself, resonating with preceding themes in our findings. Faculty described wrestling with competing demands, with KMSE remaining a lower priority in spite of college-level approaches when faculty perceive that recognition and incentives remain tied to external reviewers, a topic that we consider next.

Discussion

Participants in this study identified competing demands that simultaneously value and limit engagement with KMSE in relation to other scholarly goals and processes. They cite ambiguous and sometimes simplistic incentive structures at COEs as internal barriers that reflect wider uncertainties regarding the relevance of KMSE activities for academic careers. Our findings thus reflect Boyer’s (1990) familiar, enduring tensions between scholarship oriented toward developing theory and influencing practice, as well as tensions among traditional silo-like divisions based on disciplinary orientations and specialties in education research. The COEs in this study may be among a growing number that aspire to advance organization-level KMSE agendas, but their efforts remain constrained by the limited recognition currently paid to the activities and processes required to mobilize research in education.

Against this backdrop, the findings underscore that the KMSE agendas at all three COEs do not necessarily resolve professional tensions concerning the growing interplay between knowledge production and mobilization. Each COE considers KMSE-related metrics, albeit monitoring them as secondary indicators that may inform traditional, indirect metrics of scholarly impact (e.g., JIFs). Even at COE3, where some form of legitimation exists regarding KMSE-related metrics, participating faculty felt that this legitimation was not systematically integrated into or recognized in everyday practices.

The complementary or minimal levels of recognition and incentives for KMSE-related processes (e.g., codesign, partnerships) and various secondary or tertiary research products (e.g., blogging, commentaries, professional development, social media) may signal to many participants that KMSE is still peripheral, if not inconsequential. Its relative value, regardless of how useful KMSE may be, remains ill-defined and largely perceived to be outside tenure and promotion policies. As a result, participating faculty at all three COEs widely perceived that their COEs continue to prioritize and reward sole-author publications in peer-reviewed journals and external research grants as primary, if not also exclusive, products of academic careers. These findings empirically illuminate the persistence of long-standing challenges to improving the relevance, accessibility, and usability of research in education in spite of explicit organization-level efforts to foster and sustain scholarly mobilization agendas (Nutley et al., 2007; Prewitt et al., 2012). Publishing sole-authored articles in outlets with high JIFs is one effective strategy for resolving a scholar’s unique contributions, and it has been used as an efficient model for incentivizing faculty (Fischman & Tefera, 2014). However, this simple structure of incentives might also compromise complex research agendas that require group efforts and collaboration (Fanelli, 2010). For any one person, sole authorship may yield clear and distinguishable impact, but for whole organizations or fields, it may ultimately fail (Heffernan, 2015). That is, if researchers remain too focused on producing original sole-authored articles, the opportunities for collaboration around research and impact on complex educational issues may be missed (Adams, 2013).

These challenges reflect processes of structural accretion mounting in many research-intensive universities and exacerbated by the amalgamation of three elusive goals in COEs: (a) increasing scholarly productivity, innovation, and research impact; (b) expanding prestige in national and global rankings; and (c) demonstrating higher levels of accountability to increasingly impatient educational stakeholders (Gutiérrez & Penuel, 2014). Simultaneously pursuing these goals gives rise to tensions between (a) strategies aimed at incentivizing faculty to secure more grant funding, obtain more publications in journals with high JIFs, and increase citations and (b) debates about what could constitute impactful, accessible, and usable scholarship. Each of the three participating COEs uniquely navigates the complementary agendas and competing demands, most pointedly in the administration of tenure and promotion. To this end, COE3 maintains an explicit policy for recognizing scholarship consistent with KMSE; however, the faculty interviewed did not believe that the policy influenced tenure and promotion. COE1 uses a comprehensive annual report system with several hundred items related to scholarship, including some consistent with KMSE. Interviewed faculty there likewise perceived the KMSE-related items as being peripheral, while recognizing that COE1 explicitly encourages forms of engaged research and is exploring ways of improving related reward structures, particularly when combined with peer-reviewed publications. Meanwhile, COE2 developed a formal KMSE initiative, but it had not yet informed college policy or practices. Rather, it remained an opportunistic effort to encourage the use of KMSE tools and strategies and to explore expanded notions of what counts as educational impact.

In sum, the structures for academic accountability in place at each of the three COEs participating in this study not only measure differences in research performance but also construct academic cultures of desirable scholarly performance. Despite these three COEs’ unique pathways with regard to promoting KMSE and related practices, participating faculty consistently noted their limited and conservative effect with uncanny commonalities across these COEs.

Conclusions

We return to the literature on knowledge mobilization in educational research and the assertion that no simple and straightforward system exists to measure the quality of educational scholarship—specifically, how well the research produced by faculty at COEs generates more comprehensive theoretical models; develops models for better learning opportunities; and is used to inform curriculum and policies, inspire other scholars (educators and students), and address perennial conceptual and practical problems (Anderson, De La Cruz, & López, 2017; Berliner, 2002; Feuer et al., 2002). Despite the relatively low survey response rates, the possibility of nonresponse bias, and the exclusion of tenure-track faculty from one COE’s sample, our study highlights that amid the interest in KMSE across COEs, many participating faculty and administrators still prioritize sole-authored articles published in high-JIF outlets as a proxy for research quality, which may provide the illusion of scholarly influence but does not convincingly address the conflicting demands that affect the field of educational research.

Based on our analysis, the results of several decades of prioritizing sole-author peer-reviewed articles published in high-JIF journals are quite evident in two forms: First, there is a noticeable increase in the number of articles published in those journals; second, the systematic use of narrow incentives has consolidated collective faculty habits of following reductive procedures, rather than encouraging the exploration of new areas and deepening the connections between educational researchers and other relevant potential research users. COEs will greatly benefit from remembering Goodhart’s law: “When a measure becomes a target, it ceases to be a good measure.”

While KMSE appears to hold promise for expanding the reach and impact of COEs at research-intensive universities, current implementation strategies will not suffice unless there are clear and sustained modifications in the institutional procedures for recognizing, assessing, and rewarding “what counts” as relevant for their faculty. As the demands facing educational scholars continue to intensify, it is important to examine how COEs and their researchers adapt and to what extent they engage with communities, practitioners, and policy makers (Anderson et al., 2017). Indeed, the organizational tensions between (a) attempting to incorporate KMSE strategies into the existing structures of tenure and promotion and (b) continuing to use inadequate incentives and metrics for improving educational research constrain faculty. This situation is especially limiting for faculty in the early stages of their careers as well as those seeking promotion. Participating faculty often expressed these tensions amid a sense of fear or skepticism that implied risk avoidance strategies toward collaborative and engaged forms of educational research, including neglecting to engage in KMSE practices, owing to the perception that these practices were (a) time-consuming efforts not directed at the production of impactful research and (b) seen as not being rigorous or even as ideologically motivated.

We propose that comprehensive strategies of KMSE be multidimensional, interactive, and inclusive processes that are coordinated and supported at the college and university level to increase the accessibility and usability of educational research. A KMSE strategy should involve deliberate and systematic coordination of products, events, and networks to help individuals and teams reach a range of education stakeholders, including scholars, professionals, practitioners, policy makers, and community members. Understanding and enabling diverse pathways to and from educational research is key for developing meaningful educational impact and enduring educational partnerships (Baker, 2015; Cooper, 2015b; Ladson-Billings & Tate, 2006; Penuel, Bell, Bevan, Buffington, & Falk, 2016; Penuel, Coburn, & Gallagher, 2013; Penuel, Fishman, Haugan Cheng, & Sabelli, 2011). This includes not only COEs prioritizing KMSE efforts but other organizations as well, including school districts and departments of education clearly articulating more deliberate engagement with researchers and scholarship (Cooper, 2015b).

We are not naïve: we understand that in highly polarized and politicized contexts, the biggest challenge to developing more usable educational research is not to produce more or better data; the field is already doing that. Rather, the challenge is to overcome the lack of trust among potential allies and to confront those who benefit from a system where there is literally no difference between (a) publishing research that systematically concludes with the statement “more research is needed” and (b) producing knowledge that may eventually bring value to a scholarly field, help educators to improve practices, or provide rigorous evidence to policy makers.

The scenario is complex, yet we remain cautiously optimistic because the colleges and faculty considered in this study demonstrate awareness that 21st-century educational research cannot simply produce the most rigorous, innovative, and usable scholarship but must do so in concert with multiple forms of KMSE. This general awareness across all three COEs is promising evidence that there are many colleagues working toward producing knowledge with the ultimate goal of contributing to the public good.

Appendix A: General Profiles of Participating COEs

Appendix B: Semistructured Faculty Interview Protocol

Appendix C: Axial Codes and Their Subcodes

1. Impact (see subcodes).

a. Indicators—what counts as impact is up to individual interpretation, value is negotiable, when how to measure impact is called into question, not codified (e.g., presentation attendance, award, readership, take-up, reach, influence, prestige, mentions of “top tier”). (When in doubt, use when ways to measure impact are oriented to “at stake” or ambiguous.)

b. Measures—what counts as impact is seen as objective; value is not negotiable, institutionalized, established, operationalized, codified in writing (e.g., impact factor, citation count, journal citation ranking, research funding, Altmetrics, impact story, PlumX, institutional guidelines for how to report, article [in cases where article output is codified as evidence that outreach had impact]). (When in doubt, use when ways to measure impact are referred to as taken for granted, transparent, or the “way things are.”)

c. Scale—when an interviewee identifies the scale of a type of impact; local, national, and/or global; can be relational (i.e., local vs. national) or solitary (state legislature); often coincident with other codes.

d. Form—how different forms of output are valued, personally or institutionally (e.g., research, teaching, service, collaborative coproduction of research, consulting, entrepreneurship, partnership, mentoring, public/community outreach, policy / legal brokering / translating / advising, conference presentation, among others).

e. Users of research—mention of intended and/or actualized consumers of products, events, and/or networks (e.g., practitioners, researchers, press, community, courts, policy makers).

f. Momentum—commentary on momentum/arc/evolution of what counts as impact or how it is measured—includes mentions of traditional (because its mention means that it is not just seen as neutral) difference/expansion/shift (scholarly/practical divide) (i.e., references to or contrasts to “traditional” or “scholarly” impact); also use for instances of nonimpact (e.g., negative impact, flat curves).

g. Tools—social/technological means for disseminating research (with potential for increasing impact) in relation to indicators or measures (e.g., networking, actions, products).

h. Accessibility—explicit reference to access, constructed/framed to be understood beyond a discipline.

2. Institutional values—noncodified institutional priorities, rhetoric/messaging, expectations, culture.

3. Institutional structures—incentive structures, how college measures impact, annual review, merit pay, formal supports.

4. Personal values/commitments—choices/decisions (e.g., how to represent work, what work to do), ideologies, obligations, personal definition of impact (which might be simultaneously coded under the relevant subcode of Impact); includes research decisions and methodologies, as they relate to views on knowledge mobilization; personal orientations to knowledge mobilization.

5. Tensions—used when an interviewee raises a tension explicitly, including when an opposing view is acknowledged (e.g., dichotomies, contradiction, difficult choices, challenges).

6. Tenure/promotion—differences pre-/posttenure, anything related to tenure and promotion.

7. Public perception of educational research—interviewee commentary on what/how the public perceives educational research.

Authors’ Note

Authorship order is random and denotes equal contributions on behalf of all authors.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This research was conducted with support from the Spencer Foundation, Organizational Learning Small Grant 10003022.

Authors

GUSTAVO E. FISCHMAN is a professor of educational policy and the director of edXchange, a knowledge mobilization initiative, at the Mary Lou Fulton Teachers College, Arizona State University, PO Box 872211, Tempe, AZ 85287-2211, USA.

KATE T. ANDERSON is an assistant professor in the Mary Lou Fulton Teachers College, Arizona State University, PO Box 872211, Tempe, AZ 85287-2211, USA.

ADAI A. TEFERA is an assistant professor of foundations of education at the School of Education, Virginia Commonwealth University, PO Box 842020, Richmond, VA, USA.

STEVEN J. ZUIKER is an assistant professor of learning sciences in the Mary Lou Fulton Teachers College, Arizona State University, PO Box 872211, Tempe, AZ 85287-2211, USA.