Abstract

Playing action video games has previously been linked to improvements in attentional control (the ability to choose what to attend to and what to ignore), which relies on a frontoparietal network of the brain. Here we asked whether action video game training would affect a range of mathematical abilities that rely on similar brain regions. Twenty-four adults completed extensive cognitive testing before training, after 25 hr, and after 40 hr of video game training. Half of the participants trained on an action video game, and the other half trained on a nonaction video game. In this study, action video game training yielded no significant improvements in foundational number-processing skills or attentional control, but it did yield some improvements on standardized assessments of complex mathematics. Thus, although action video game play is clearly not an intervention of choice for improving mathematical skills, the present study suggests that, as a recreational activity, it may weakly support complex mathematical skills.

At the neural level, mathematical processes seem to consistently rely on a frontoparietal network (Ansari, 2008; Nieder & Dehaene, 2009). The intraparietal sulcus (IPS) is a fissure running the length of the parietal lobe (which is located at the top of the brain just behind primary motor and sensory cortices) roughly in an anterior-posterior (front-to-back) manner. The IPS has been shown by various researchers to be central to a wide range of numerical and mathematical processes, such as when people compare which of two numbers is the larger or solve novel arithmetic problems (see Arsalidou & Taylor, 2011, for a meta-analysis). Furthermore, greater prefrontal cortex activation during math tasks seems to be associated with greater task difficulty (Zhou et al., 2007), possibly reflecting greater reliance on working memory and attentional processes (Arsalidou & Taylor, 2011).

The existence of a frontoparietal network supporting mathematical processes raises the possibility that training this network could improve mathematical abilities. In the present work, we took this reasoning one step further and asked: Can a training regimen that targets the frontoparietal network in a completely non-numerical context (i.e., action video games) transfer to enhanced mathematical abilities? Over the past 15 years, a burgeoning literature has documented the impact of first- and third-person shooter games and other so-called action video games on various top-down attentional skills, such as attentional tracking of objects (Green & Bavelier, 2003; Trick & Pylyshyn, 1993), visual search (Hubert-Wallander, Green, & Bavelier, 2011; West, Stevens, Pun, & Pratt, 2008), or attention deployed over time (Mishra, Zinni, Bavelier, & Hillyard, 2011). The emerging view is that individuals trained to play action video games show enhanced attentional control, which makes it easier for them to focus on the task at hand and to ignore sources of distraction or noise (for reviews, see Green & Bavelier, 2015; Spence & Feng, 2010). Enhanced attentional control has also been associated with improved inference in the service of decision making (Bavelier, Green, Pouget, & Schrater, 2012; Green, Pouget, & Bavelier, 2010). Briefly, action video game play may enable gamers to accumulate the information relevant to the task at hand more efficiently and thus to make better-informed decisions. On this view, mathematical processes, especially foundational ones that rely on approximate evaluations, would be expected to benefit from action video game training. In support of this view, a few studies point to performance advantages in action video game players on tasks related to approximate number processing, such as subitizing (Green & Bavelier, 2006) or approximate number comparison (Halberda et al., 2013).
Importantly, at the neural level, the frontal and parietal brain areas involved in attentional processes appear to mediate the altered attentional control observed in action video game players. In particular, functional magnetic resonance imaging studies point to differential recruitment in action video game players of the middle frontal gyrus, a major controller of attention, and of the temporoparietal junction, an area hypothesized to regulate the interaction between bottom-up and top-down attention (Bavelier, Achtman, Mani, & Föcker, 2012; Föcker, Cole, Beer, & Bavelier, 2015, in press; Gong et al., 2016; Krishnan, Kang, Sperling, & Srinivasan, 2013; Wu et al., 2012).

Given that action video game play enhances efficient information integration and attentional control through a frontoparietal network similar to the one previously found to mediate mathematical processing, the present study sought to improve a range of adults’ mathematical abilities by training them on a commercially available action video game. Adults with limited exposure to mathematics through concurrent education or work and with little previous exposure to video games were randomly assigned to complete either 40 hr of an action video game or 40 hr of a nonaction video game (control game). All participants completed standardized assessments of math, language, and fluid reasoning abilities as well as experimental tasks assessing foundational number processing and attentional control abilities before training, after 25 hr, and after 40 hr of training. Mathematical skills were the main focus of this study; our working hypotheses predicted both improved attentional control and improved foundational mathematical processing after action, as compared to control, video game play. We were also interested in possible transfer to standardized mathematical tests, which are more representative of academic performance. Standardized tests of verbal and fluid reasoning skills served as control measures because, based on the existing literature, these skills were not expected to change after action video game training (Bediou et al., in press).

Method

Subject Recruitment and Demographics

The participants reported in this study are part of a larger pool. All participants were recruited either from the Rochester, New York, community or from the University of Rochester student body. Community members were recruited through flyers posted around the city of Rochester, specifically in local community organizations (community colleges, local libraries), as well as through newspaper advertisements (City Newspaper) and Internet postings (Craigslist). University students were recruited through flyers posted around the University of Rochester campuses as well as through word of mouth. The average level of education for all participants was “some college,” as the majority of the participants had completed at least 1 year of college toward either a bachelor’s degree or an associate’s degree.

In this report, we focus on a subsample of 24 participants who agreed to continue training for 40 hr; the rest of the sample stopped after 25 hr of training. The “action” group had a mean age of 24 years (range: 19–35), and the “control” group had a mean age of 23 years (range: 18–31). Each training group consisted of 42% community members and 17% males. Participants were compensated at a rate of $8 per hour plus a $100 bonus for completing the entire study. This study was approved by the Institutional Review Board of the University of Rochester.

Screening and Group Assignment

Study participants first underwent an initial eligibility screening either over the phone or by e-mail. This first screening included questions about age, number of hours of video game play per week and examples of games played, basic work and education history, and use of math in daily life. Four exclusion criteria were used: (a) being younger than 18 or older than 35 years, (b) reporting regularly playing either action video games or games of the Sims series, (c) having attained a bachelor’s degree or equivalent, and (d) using math on a daily basis for either work or school. During the first screening, participants were also informed of the basic study structure and of their responsibilities if they were accepted and chose to participate, such as the extended time commitment involved and the need to have their own transportation to come to the lab for training.

One hundred and twenty-five participants who passed the first screening agreed to come in for the in-lab interview. During the in-lab interview, participants were screened again to double-check that they met our inclusion/exclusion criteria, and they completed a more detailed video game use questionnaire, which asked about their use of various video game genres over the past year as well as their entire video game play history (Green et al., 2017). Only participants who, at every point in time, played less than 1 hr per week of action video games (i.e., first- or third-person shooter games [such as Halo, Call of Duty, Gears of War, GTA, Half-Life, Unreal, etc.] or action/action sports games [such as God of War, Mario Kart, Burnout, Madden, FIFA, etc.]) and less than 3 hr per week of video games of all genres combined qualified for the study. Importantly, none of the participants were habitual players (3+ hr per week) of the assigned training video games. Of the 125 people initially screened as eligible, 66 qualified to participate in our study and went on to pretesting.
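The two screening stages amount to a small set of eligibility rules. The sketch below encodes them as predicates purely as an illustration: the `Applicant` fields and the function names are hypothetical, and summarizing play time as each applicant's peak weekly hours "at any point in time" is an assumption about how the questionnaire answers would be recorded.

```python
from dataclasses import dataclass


@dataclass
class Applicant:
    # Hypothetical summary of one applicant's screening answers.
    age: int
    plays_action_or_sims_regularly: bool
    has_bachelors_or_equivalent: bool
    uses_math_daily: bool
    max_action_hours_per_week: float  # assumed: peak weekly action-game play, any period
    max_total_hours_per_week: float   # assumed: peak weekly play across all genres


def passes_first_screening(a: Applicant) -> bool:
    """Exclusion criteria (a)-(d) from the phone/e-mail screening."""
    return (
        18 <= a.age <= 35
        and not a.plays_action_or_sims_regularly
        and not a.has_bachelors_or_equivalent
        and not a.uses_math_daily
    )


def passes_in_lab_interview(a: Applicant) -> bool:
    """Video game history thresholds: < 1 hr/week action, < 3 hr/week all genres."""
    return a.max_action_hours_per_week < 1 and a.max_total_hours_per_week < 3


def eligible(a: Applicant) -> bool:
    return passes_first_screening(a) and passes_in_lab_interview(a)
```

Keeping the two stages as separate predicates mirrors the two-step procedure, in which an applicant could pass the first screening and still be excluded after the detailed questionnaire.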

At the time of pretesting, participants were pseudorandomly assigned to either the action video game training (action) group or the control group. Fifty-eight participants agreed to complete the pretesting stage of the experiment; however, 11 of them dropped out early during training, mainly citing time constraints. Seven of these participants had been assigned to the action group and four to the control group.

Participants were initially recruited for a 25-hr training study, with the possibility of longer training if willing. A total of 47 participants (24 in the action group and 23 in the control group) completed these 25 hr. After completing between 20 and 22 hr of their initial 25 hr, participants were invited to a 15-hr training extension. Thirteen participants in each training group agreed to extend their training to a total of 40 hr. However, one participant in the action group was excluded for systematically failing to follow task instructions, and one participant in the control group was excluded as an outlier (see below), resulting in a final sample of 24 participants. As can be seen in Supplementary Table S1, participants who extended their training to 40 hr did not differ at pretest on any of the experimental measures described below from participants who stopped training after 25 hr.
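The participant counts above can be cross-checked with simple arithmetic. The sketch below uses only numbers reported in this section. The per-group counts at pretest (31 action, 27 control) are derived rather than reported, on the assumption that every pretested participant either dropped out early or completed the first 25 hr.

```python
# Counts as reported in the Method section.
N_IN_LAB_INTERVIEW = 125
N_QUALIFIED = 66
N_PRETESTED = 58

dropped_early = {"action": 7, "control": 4}
completed_25_hr = {"action": 24, "control": 23}
extended_to_40_hr = {"action": 13, "control": 13}
excluded_after_40_hr = {"action": 1, "control": 1}  # instructions not followed; outlier

# Assumption: each pretested participant either dropped out early or completed 25 hr.
pretested_by_group = {g: completed_25_hr[g] + dropped_early[g] for g in completed_25_hr}
final_by_group = {g: extended_to_40_hr[g] - excluded_after_40_hr[g] for g in extended_to_40_hr}

assert sum(pretested_by_group.values()) == N_PRETESTED

print("Not qualified at interview:", N_IN_LAB_INTERVIEW - N_QUALIFIED)
print("Qualified but not pretested:", N_QUALIFIED - N_PRETESTED)
print("Pretested per group (derived):", pretested_by_group)
print("Final 40-hr sample:", final_by_group, "total", sum(final_by_group.values()))
```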

Experimental Design: Stimuli

The study consisted of a pretest, 25 hr of in-lab training on the assigned video game distributed over 6 weeks, a first posttest (labeled post-25) always scheduled at least 24 hr and at most 48 hr after 25 hr of training were completed, followed by another 15 hr on the assigned video game distributed over 4 weeks, and a second posttest (labeled post-40) always scheduled at least 24 hr and at most 48 hr after 40 hr of training were completed. The average delay between the completion of training and posttest was 36 hr for both post-25 and post-40. All testing and training were completed in the lab.

Foundational Number-Processing Tasks

We administered a total of eight foundational number-processing tasks that tap into number comparison (Approximate Number System Acuity task; Halberda, Mazzocco, & Feigenson, 2008), numerical estimation (Approximate Estimation task, Numberline Estimation task; Siegler & Opfer, 2003), approximate arithmetic (Approximate Symbolic and Nonsymbolic Addition and Subtraction tasks; Barth et al., 2006), and ordering (Symbolic and Nonsymbolic Number Ordering tasks; Lyons & Beilock, 2011). These skills are present early in development and are foundational to the acquisition of formal math skills, such as arithmetic and complex math (De Smedt, Noel, Gilmore, & Ansari, 2013; Lyons, Price, Vaessen, Blomert, & Ansari, 2014). However, because there is considerable debate about the validity of some of these foundational number-processing tasks and their underlying constructs (see, for example, Barth & Paladino, 2011, for a discussion about issues regarding numberline estimation tasks; for discussions about issues regarding tasks tapping into the approximate number system, see Gebuis, Cohen Kadosh, & Gevers, 2016; Leibovich, Katzin, Harel, & Henik, 2016), we decided to collapse all of the measures derived from these eight tasks into one composite score of foundational number processing. A full description of all foundational number-processing tasks and the respective dependent measures can be found in the Supplemental Materials.

Basic Arithmetic

This task was designed to assess participants’ ability to solve addition and subtraction problems. This pen-and-paper test, modeled after Ekstrom (1976), consisted of 60 two-operand and 60 three-operand addition and subtraction problems. Participants were asked to solve as many problems as possible, as quickly and accurately as possible. Participants were given 2 min for each set of 60 problems, and the total number of correctly solved problems was used as the dependent measure.

Mathematical Abilities

We administered the mathematical subtests from two standardized assessments: the Wide Range Achievement Test (WRAT-4; Wilkinson & Robertson, 2006) and the Basic Achievement Skills Inventory (BASI; Bardos, 2004). As described below, the language subtests of these standardized assessments were also administered.

Attentional Control

We administered three tasks that tap into different aspects of attentional control: the Useful Field of View task (Ball & Owsley, 1993), the Multiple Object Tracking task (Pylyshyn & Storm, 1988), and the Flicker Change Detection task (Pailian & Halberda, 2015). A full description of all attentional control tasks and the respective dependent measures can be found in the Supplemental Materials.

Standardized Language and Fluid Reasoning

We administered two standardized assessments to test participants’ language skills: the WRAT-4 (Wilkinson & Robertson, 2006) and the BASI (Bardos, 2004). In addition to these standardized language tests that assessed reading, spelling, and vocabulary, we administered the Controlled Oral Word Association Test (COWAT; Ruff, Light, Parker, & Levin, 1996) to assess participants’ verbal fluency and the Rapid Naming subtest of the Comprehensive Test of Phonological Processing (CTOPP; Wagner, Torgesen, & Rashotte, 1999) to assess their rapid naming skills. Finally, we administered three standardized assessments to test participants’ fluid reasoning skills: the Wechsler Abbreviated Scale of Intelligence (WASI; Wechsler, 1999), the Raven Progressive Matrices (Raven, 1990), and the Bochumer Matrizentest (BOMAT; Hossiep, Hasella, & Turck, 2001). A full description of all standardized assessments and the respective dependent measures can be found in the Supplemental Materials.

Flow Scale

To assess participants’ engagement during game play, we administered the flow scale (Csikszentmihalyi, 1990), which contains 36 questions rated on a 1-to-5 scale. Participants rated their gaming experience at several time points during training, filling in the flow scale questionnaire about every 7 hr of game play. Each questionnaire was scored by summing the ratings of all 36 questions, yielding overall flow scores that could range from 36 to 180. Assessments were then grouped into those completed during the first 25 hr of training and those completed during the remaining period from 25 to 40 hr of training.
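A minimal scoring sketch, assuming each questionnaire is stored as the number of hours played when it was filled in plus its 36 item ratings. The function names are hypothetical, and placing a questionnaire completed at exactly 25 hr in the first period is an assumption that the text does not settle.

```python
from statistics import mean

N_ITEMS = 36


def flow_total(ratings):
    """Sum of 36 item ratings on a 1-5 scale (possible range 36-180)."""
    if len(ratings) != N_ITEMS:
        raise ValueError(f"expected {N_ITEMS} ratings, got {len(ratings)}")
    if any(r not in (1, 2, 3, 4, 5) for r in ratings):
        raise ValueError("ratings must be integers from 1 to 5")
    return sum(ratings)


def flow_by_period(questionnaires):
    """Average flow totals within the 0-25 hr and 25-40 hr periods.

    `questionnaires` is a list of (hours_played, ratings) pairs.
    Assumption: a questionnaire completed at exactly 25 hr counts toward 0-25 hr.
    """
    periods = {"0-25 hr": [], "25-40 hr": []}
    for hours, ratings in questionnaires:
        key = "0-25 hr" if hours <= 25 else "25-40 hr"
        periods[key].append(flow_total(ratings))
    # A period without any questionnaire is reported as None (missing data).
    return {k: (mean(v) if v else None) for k, v in periods.items()}
```

Returning `None` for an empty period keeps participants who skipped a survey distinguishable from those with low flow, which matters given the incomplete survey completion described later.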

Experimental Design: Procedures

Testing Procedures

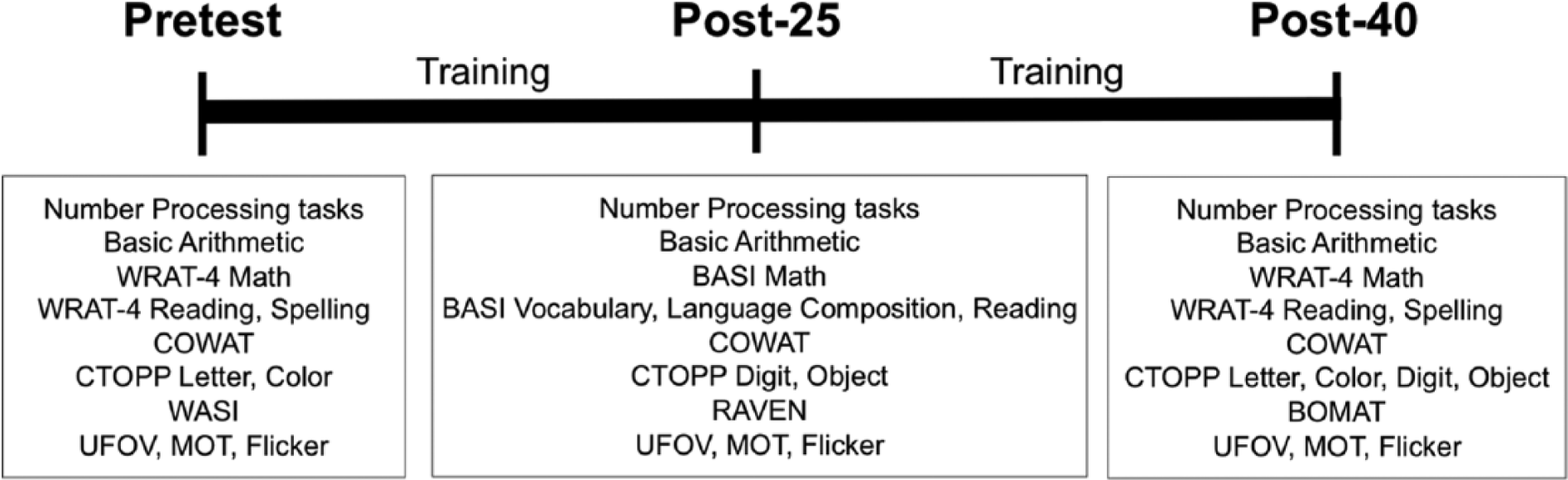

Testing at pretest, post-25, and post-40 was administered over 2 consecutive days. Eight tasks were administered each day, always in the fixed order listed below, for a total of about 2.5 hr of testing per participant per day (see Figure 1). We maintained a fixed task order because, with the large number of tasks and the small number of participants, counterbalancing would have been incomplete; a fixed order also ensured that both training groups completed the tasks in an identical order.

On the 1st day, participants completed the Computerized Numberline Estimation task, then the Useful Field of View task, followed by the COWAT, the Numberline Estimation task, the Symbolic Number Ordering task, and the Nonsymbolic Number Ordering task. They then took a mandatory 15-min break. Next, participants completed the WASI at pretest, the Raven Progressive Matrices at post-25, and the BOMAT at post-40. The last task on the 1st day of testing was the Flicker Change Detection task.

On the 2nd day, participants completed the Approximate Symbolic Addition and Subtraction task, then the Approximate Nonsymbolic Addition and Subtraction task, followed by the Exact Symbolic Addition and Subtraction task, the CTOPP, and the Multiple Object Tracking task. They then took another mandatory 15-min break. Next, participants completed the WRAT-4 at pretest and post-40 and the BASI at post-25. Finally, participants completed the Approximate Number System Acuity task and the Approximate Estimation task. The standardized assessments had to vary across sessions because none of them provided three different forms. Upon completion of their 40-hr posttest, participants filled out an exit questionnaire, which asked about their impressions of the study and how they thought their assigned video game might have affected their performance on the skills tested.

Figure 1. Schematic of study procedures detailing which cognitive assessments were completed at pretest, after 25 hr (post-25), and after 40 hr (post-40) of training. See main text and Supplemental Materials for detailed descriptions of each assessment.
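For readers reproducing the protocol, the fixed testing order can be written as a session schedule. This is an illustrative data structure, not the authors' materials: the task labels follow the text, and each per-session slot encodes which standardized test was given at pretest, post-25, and post-40.

```python
# Fixed task order per testing day. Slots that vary by session are {session: test}.
DAY_1 = [
    "Computerized Numberline Estimation",
    "Useful Field of View",
    "COWAT",
    "Numberline Estimation",
    "Symbolic Number Ordering",
    "Nonsymbolic Number Ordering",
    # 15-min break
    {"pretest": "WASI", "post-25": "Raven Progressive Matrices", "post-40": "BOMAT"},
    "Flicker Change Detection",
]

DAY_2 = [
    "Approximate Symbolic Addition and Subtraction",
    "Approximate Nonsymbolic Addition and Subtraction",
    "Exact Symbolic Addition and Subtraction",
    "CTOPP",
    "Multiple Object Tracking",
    # 15-min break
    {"pretest": "WRAT-4", "post-25": "BASI", "post-40": "WRAT-4"},
    "Approximate Number System Acuity",
    "Approximate Estimation",
]


def tasks_for(session):
    """Return the 16 tasks administered at one session, in order."""
    return [t[session] if isinstance(t, dict) else t for t in DAY_1 + DAY_2]


print(tasks_for("post-25"))
```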

Training Procedures

Participants were assigned to their video game training group by alternating between action and control training for each of our two groups of participants (community vs. college). Participants assigned to the action group were trained on the first-person shooter video game Unreal Tournament 2004 (PC version published by Atari, Inc., in 2004); those assigned to the control group were trained on the simulation game The Sims 2 (PC version published by Electronic Arts in 2004). Participants were told that the purpose of the study was to provide information about how visual and cognitive skills can be altered by experience (such as training). They knew that there were two different video games, which were each introduced as an experimental manipulation of interest. A general reference to cognition was made given that the title of the study on the consent form was “Video Games as a Tool to Train Cognitive Skills,” but no references to math or any specific cognitive skills (e.g., attention, inhibition, working memory) were made.
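Alternating assignment within the community and college subgroups can be sketched as follows. The function is hypothetical; it assumes participants are processed in enrollment order and that each subgroup starts with the action condition, which the text does not specify.

```python
from itertools import cycle


def alternate_within_strata(participants, groups=("action", "control")):
    """Assign participants by alternating between groups within each stratum.

    `participants` is an iterable of (participant_id, stratum) pairs in
    enrollment order. Assumption: every stratum starts with groups[0].
    """
    next_group = {}   # one independent alternation per stratum
    assignment = {}
    for pid, stratum in participants:
        if stratum not in next_group:
            next_group[stratum] = cycle(groups)
        assignment[pid] = next(next_group[stratum])
    return assignment


enrolled = [("p1", "community"), ("p2", "college"), ("p3", "community"),
            ("p4", "college"), ("p5", "community")]
print(alternate_within_strata(enrolled))
```

Alternating separately within each subgroup keeps the community/college mix balanced across training groups, consistent with both groups containing the same proportion of community members.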

Each participant came into the lab for 1 hr a day, three to five times a week, to train on his or her assigned game. Under exceptional circumstances, a 2-hr training session was permitted when a participant had too many conflicting constraints that week. For the action group, game scores were recorded three times during the hour of training as the training was broken down into three 20-min blocks. For the control group, game scores were recorded at the end of the 1-hr training session.

After every 7 hr of training, participants filled out the flow questionnaire (Csikszentmihalyi, 1990) to evaluate their involvement and immersion in their training video game. This allowed us to assess participants’ engagement with the training games at different times during training. We were especially interested in determining whether the two training games were equally engaging. The specific questionnaire we used included 36 questions covering four domains (enjoyment, attention, reward, and confidence). Participants answered each question by selecting one of five answers that ranged from 1 = strongly disagree to 5 = strongly agree. The sum of all ratings was used as the dependent measure.

Action group training

The action group played Unreal Tournament 2004 in the “Deathmatch” mode. Deathmatch is a single-player mode in which the participant must defeat as many enemies as possible while “dying” as few times as possible. At the start of the game, each player has 100 health points, and “death” occurs when the player’s health reaches 0 points. Game scores are determined by how many “kills” and how many “deaths” each player has at the end of the 20-min game session. Progress is marked by increasing the difficulty level of the game as soon as the player gets twice as many kills as deaths in a given 20-min session. There are seven difficulty levels in the game, from “novice” to “godlike,” which determine the skill of the computer-generated opponents that the participant must try to defeat. The number of computer-generated opponents was set to 16 throughout training.

A first familiarization phase introduced players to the overall goals of the game (i.e., to try to get as many kills as possible), to the game controls (i.e., how to move their character on the screen and to aim and shoot at the enemies), and to the map as well as everything that can be found on it (health packs, ammunition, guns, and power-ups). This was done while there were no enemies on the map. Then participants played a version of the game that contained only three enemies on the map. After 20 min of this introductory game play, participants were switched to the first and easiest level of the game using 16 computer-generated opponents. They were encouraged to play on their own as the researcher stood by to help for the remainder of the hour.

During the first 3 days of training, the players went through all seven levels of difficulty of the game; afterward, they started again on the easiest level. From then on, participants advanced to the next level only when they obtained twice as many kills as deaths. At the end of the study, the participants again played through all seven levels regardless of game scores. Thus, apart from these assessments of game play skill at the start and end of training, none of the training involved forced play at levels beyond a participant’s skill level. The reported flow measures were not collected after such forced game play.
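The performance-contingent advancement rule can be expressed as a small state update. This sketch covers only the contingent phase of training (not the forced passes through all seven levels at the start and end) and numbers the levels 1 ("novice") to 7 ("godlike"). It makes two assumptions the text leaves open: "twice as many kills as deaths" is read as kills ≥ 2 × deaths, and a session with zero kills never advances the player. Players already at the highest level stay there.

```python
N_LEVELS = 7  # 1 = "novice", ..., 7 = "godlike"


def next_level(level, kills, deaths):
    """Difficulty level for the next 20-min session.

    Assumption: advancing requires kills >= 2 * deaths and at least one kill.
    The level is capped at the hardest setting.
    """
    if kills > 0 and kills >= 2 * deaths:
        return min(level + 1, N_LEVELS)
    return level


def level_trajectory(session_scores, start_level=1):
    """Starting level followed by the level after each (kills, deaths) session."""
    levels = [start_level]
    for kills, deaths in session_scores:
        levels.append(next_level(levels[-1], kills, deaths))
    return levels


print(level_trajectory([(10, 5), (3, 4), (8, 2)]))  # [1, 2, 2, 3]
```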

Control group training

The control group played The Sims 2, in which a player creates a character, or Sim, and controls the life this Sim leads. The goal of the game is to keep the Sim happy and healthy by filling its “needs” and “wants” while avoiding its “fears.” The game records the money that the Sim accumulates (which is obtained by getting a job in the game) and the number of Sims in the participant’s household (participants always start with one Sim but are free to add more as they go along). In the game, each Sim has eight basic needs: hunger, comfort, bladder, energy, fun, social, hygiene, and environment. The Sim’s wants can be filled by completing the tasks indicated on the dashboard. Fulfilling wants gives the Sim aspiration points, whereas choosing to elicit the Sim’s fears takes those points away. The more aspiration points the Sim has, the more special items it can purchase. These items include a “money tree,” which gives the Sim money, and an “elixir of life,” which extends the Sim’s lifespan by keeping it young.

During the initial training for the control group, a researcher helped the participants create a Sim that they were to control for the length of the study as well as purchase and furnish a home for the Sim that would fill all the basic needs. Each participant was encouraged to gain enough aspiration points in order to purchase the elixir of life so the Sim’s life could be prolonged for the entirety of the study.

The Sims challenges players by requiring them to invest time and resources in building their environment. As players become more committed to their game play, the game requires decisions in an increasing number of potentially interacting domains, and the stakes for loss become higher and higher. Specifically, we asked participants to fulfill as many “wants” as they could to keep their Sim happy. When only the Sim’s needs (e.g., sleep, food, and fun) are taken care of, its happiness level is green; when its wants are also taken care of, the happiness level rises to gold or platinum. As the game progresses, the wants become increasingly complicated. For example, if the Sim wants to make friends, at the start of the game this want might consist of “make 1 new friend,” whereas later in the game it might be “make 10 new friends,” which requires more effort.

A first familiarization phase was used, during which the researcher explained the goals of the game, the controls for the Sims, and the needs, wants, and fears. Due to the complexity of this game, the familiarization session took about 1 hr.

Note that for both action and control training, a researcher was present for the first several sessions as the participant played the game in case there were questions or problems. Later on, participants played more independently, but there were always researchers available in case issues arose.

Data Analysis Methods

The pretest, post-25, and post-40 data from our 16 cognitive tasks were reduced to six main performance scores: foundational number processing, attentional control, basic arithmetic, and standardized measures of mathematics, language, and fluid reasoning. For all performance scores, higher scores indicate better performance. In addition, we measured engagement during game play using the flow scale, separately for the first 25 hr and the last 15 hr of game play.

Foundational Number Processing

To obtain a composite score for foundational number processing at pretest, post-25, and post-40, we first calculated the mean and standard deviation of each dependent measure for each task across all participants at pretest. We then calculated each participant’s z score for each dependent measure at each time point by subtracting the pretest group mean from his or her individual score and dividing by the pretest standard deviation. Next, for tasks with both accuracy and response time (RT) data, we calculated a composite score by subtracting the RT-related z score from the accuracy-related z score (so that higher accuracy and faster responses both increase the score) and then re-z-scored these composite scores. Finally, for each participant, we averaged the z scores from all foundational number-processing tasks to obtain an overall composite score.
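The z-scoring and compositing steps can be sketched in NumPy as follows. The function names are hypothetical. Using the sample standard deviation (`ddof=1`) and re-standardizing the accuracy-minus-RT composites against their own pretest distribution are assumptions, because the text does not say which reference distribution the re-z-scoring used.

```python
import numpy as np


def pretest_z(scores, pre):
    """Standardize `scores` (any time point) against the pretest mean and SD.

    Assumption: sample SD (ddof=1) across participants at pretest.
    """
    pre = np.asarray(pre, dtype=float)
    return (np.asarray(scores, dtype=float) - pre.mean()) / pre.std(ddof=1)


def task_score(acc, rt, acc_pre, rt_pre):
    """Accuracy-minus-RT composite for one task, re-z-scored.

    Assumption: the re-z-scoring uses the pretest composite distribution.
    """
    comp = pretest_z(acc, acc_pre) - pretest_z(rt, rt_pre)
    comp_pre = pretest_z(acc_pre, acc_pre) - pretest_z(rt_pre, rt_pre)
    return pretest_z(comp, comp_pre)


def overall_composite(task_scores):
    """Average per-task z scores for each participant (one array per task)."""
    return np.mean(np.vstack(task_scores), axis=0)
```

Because every time point is standardized against pretest statistics, post-25 and post-40 values express change in pretest standard-deviation units rather than being re-centered at each session.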

Basic Arithmetic

For the exact timed arithmetic test, we first calculated the mean accuracy and its standard deviation across all participants at pretest. We then calculated each participant’s z score at pretest, post-25, and post-40 by subtracting the group mean at pretest from the individual’s scores at each testing point and dividing it by the standard deviation at pretest. These computed z scores were used as the basic arithmetic score.

Mathematical Abilities

For the WRAT-4 that was administered at pretest and post-40, we calculated z scores at both time points based on the means and standard deviations across all participants at pretest. We examined the BASI Math Computation and Math Application subtests at post-25 separately because they tap into conceptually distinct aspects of math. The Math Computation subtest required participants to solve whole-number, fraction, and decimal arithmetic problems and was similar to the WRAT-4 math test. In contrast, the BASI Math Application subtest required participants to solve word problems and read and interpret data.

Attentional Control

Similar to the foundational number-processing composite, we obtained a composite score for attentional control abilities by calculating the means and standard deviations for each of the three attentional control tasks across all participants at pretest. We then calculated each participant’s z score by subtracting the group mean at pretest from his or her individual score and dividing it by the standard deviation at pretest. A composite score was computed by subtracting the two RT-based z scores (Useful Field of View and Flicker scores) from the accuracy-based z score (Multiple Object Tracking).
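Written out, the attentional control composite for participant i at time t is given below, where each z term standardizes the participant's raw score x on task k at time t against the pretest mean and standard deviation across all participants. The two RT-based terms enter with a negative sign so that faster performance raises the composite. Whether this composite was re-standardized afterward, as for the number-processing tasks, is not stated.

```latex
z^{\mathrm{att}}_{i,t} = z^{\mathrm{MOT}}_{i,t} - z^{\mathrm{UFOV}}_{i,t} - z^{\mathrm{Flicker}}_{i,t},
\qquad
z^{k}_{i,t} = \frac{x^{k}_{i,t} - \bar{x}^{k}_{\mathrm{pre}}}{s^{k}_{\mathrm{pre}}}
```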

Standardized Language and Fluid Reasoning

For the WRAT-4 that was administered at pretest and post-40, we calculated z scores for each WRAT-4 subtest at both time points based on the means and standard deviations across all participants at pretest. For those tests administered only once (WASI, BASI, Raven, BOMAT), we calculated z scores for each subtest based on the means and standard deviations across all participants for that testing session. Similar to the foundational number-processing and attentional control composite scores, we obtained a z score composite for language skills. Because language skills were assessed with a variety of tests that differed between pretest, post-25, and post-40 assessments, composite scores were based on slightly different sets of scores. At pretest, the language score consisted of the WRAT-4 spelling and reading subtests, the WASI verbal score, the CTOPP, and the COWAT. At post-25, the language score consisted of the BASI Vocabulary, Language Mechanics, and Reading Comprehension subtests; the CTOPP; and the COWAT. At post-40, the language score consisted of the WRAT-4 spelling and reading alternate subtests, the CTOPP, and the COWAT. For fluid reasoning, we did not create a composite score because there was only one assessment at each time point.

Measure of Game Flow

Due to experimenter error, not all participants regularly completed the survey. Here, we report participants’ flow scores averaged over two time periods: surveys completed within the first 25 hr of game play and those completed thereafter. Out of the 23 participants who filled out the flow questionnaire, 17 completed questionnaires during both time periods (0–25 hr and 25–40 hr).

Examining the distributions of each of our z scores, we found one participant whose foundational number-processing z score exceeded 3 times the interquartile range of our data set. All data from this participant were eliminated, and all z scores were recalculated based on means and standard deviations computed without this participant’s data, leaving the total of 24 participants introduced above. Note that the pattern of results for all other scores does not change depending on whether data from this participant are included.
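One common reading of this criterion is Tukey's "far out" fences, which flag values lying more than 3 interquartile ranges below the first quartile or above the third quartile. The sketch below implements that reading; it is an assumption about the exact rule used, and `extreme_outliers` is a hypothetical helper.

```python
import numpy as np


def extreme_outliers(values, k=3.0):
    """Indices of values outside Tukey's fences [Q1 - k*IQR, Q3 + k*IQR]."""
    x = np.asarray(values, dtype=float)
    q1, q3 = np.percentile(x, [25, 75])
    iqr = q3 - q1
    mask = (x < q1 - k * iqr) | (x > q3 + k * iqr)
    return np.flatnonzero(mask)


composite_z = [-0.5, -0.2, 0.0, 0.1, 0.3, 0.4, 6.0]
print(extreme_outliers(composite_z))  # [6]
```

After removing a flagged participant, the pretest means and standard deviations, and therefore all z scores, would be recomputed as described above.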

Results

We checked all of our scores for violations of normality using Kolmogorov-Smirnov tests and found none. Thus, for all scores except the game flow score, we first compared pretest scores between the two training groups using t tests to determine whether there were any significant differences prior to training. None of these comparisons was statistically significant (all ps > .1; see Table 1 for effect sizes). Next, we analyzed the impact of training over time by running, for each score, a 2 × 3 mixed-design ANOVA with training group (action, control) as a between-subjects factor and time (pretest, post-25, post-40) as a within-subjects factor. For the game flow score, we used a 2 (training group) × 2 (time period) mixed-design ANOVA. Because of a few missing data points, the analyses presented below have slightly unequal degrees of freedom. Note that all participants performed the pretest tasks, and thus z scores were computed using the entire set of participants. Participants with missing data were removed from the relevant analyses only after that computation.
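For readers who want to see what a 2 × 3 mixed-design ANOVA computes, the sketch below implements the standard sums-of-squares partition for one between-subjects factor (training group) and one within-subjects factor (time), using NumPy and SciPy. It is an illustration, not the authors' analysis code: it assumes complete cases, applies no sphericity correction, and uses a weighted-means partition, which for unequal group sizes can differ slightly from the Type III sums of squares reported by some statistics packages. The group effect is tested against subjects-within-groups variance, and the time and interaction effects against the time × subjects-within-groups residual, so 23 participants in two groups yield F(1, 21) and F(2, 42).

```python
import numpy as np
from scipy import stats


def mixed_anova(y, group):
    """Two-way mixed ANOVA (one between factor, one within factor).

    y     : (n_subjects, n_times) array of complete cases
    group : length-n_subjects array of group labels
    Returns {effect: (F, df_effect, df_error, p, partial_eta_sq)}.
    """
    y = np.asarray(y, dtype=float)
    group = np.asarray(group)
    n, b = y.shape
    labels = np.unique(group)
    a = len(labels)
    grand = y.mean()

    ss_total = ((y - grand) ** 2).sum()
    ss_subjects = b * ((y.mean(axis=1) - grand) ** 2).sum()
    ss_time = n * ((y.mean(axis=0) - grand) ** 2).sum()
    ss_group = 0.0
    ss_cells = 0.0
    for g in labels:
        yg = y[group == g]
        ng = yg.shape[0]
        ss_group += b * ng * (yg.mean() - grand) ** 2
        ss_cells += ng * ((yg.mean(axis=0) - grand) ** 2).sum()
    ss_inter = ss_cells - ss_group - ss_time
    ss_subj_err = ss_subjects - ss_group  # subjects within groups
    ss_within_err = ss_total - ss_subjects - ss_time - ss_inter  # time x subjects

    df_group, df_subj_err = a - 1, n - a
    df_time, df_inter = b - 1, (a - 1) * (b - 1)
    df_within_err = (b - 1) * (n - a)

    def effect(ss, df, ss_err, df_err):
        f = (ss / df) / (ss_err / df_err)
        return f, df, df_err, stats.f.sf(f, df, df_err), ss / (ss + ss_err)

    return {
        "group": effect(ss_group, df_group, ss_subj_err, df_subj_err),
        "time": effect(ss_time, df_time, ss_within_err, df_within_err),
        "group x time": effect(ss_inter, df_inter, ss_within_err, df_within_err),
    }
```

With only two time points, the interaction F equals the squared independent-samples t statistic on the gain scores, which makes a convenient sanity check.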

Table 1. Means and Standard Errors for the Main Measures of Interest at Each Testing Point, Separated by Action and Control Group

Note. Effect sizes (Cohen’s d) are reported for the comparison between the action and control groups at each testing point and for the comparison of each testing point with pretest, separately for the action and control groups. WRAT-4 = Wide Range Achievement Test, Fourth Edition; BASI = Basic Achievement Skills Inventory.
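Table 1 reports Cohen’s d, but the note does not state which standardizer was used. A common choice for between-group comparisons is the pooled standard deviation; the sketch below assumes that convention and uses hypothetical scores. The within-group comparisons of each testing point against pretest involve repeated measures, for which several d variants exist, so they are not shown here.

```python
import math
import statistics


def cohens_d(group_a, group_b):
    """Cohen's d for two independent groups, standardized by the pooled SD."""
    n_a, n_b = len(group_a), len(group_b)
    pooled_var = (
        (n_a - 1) * statistics.variance(group_a) + (n_b - 1) * statistics.variance(group_b)
    ) / (n_a + n_b - 2)
    return (statistics.mean(group_a) - statistics.mean(group_b)) / math.sqrt(pooled_var)


# Hypothetical pretest z scores for the two training groups.
action_pretest = [0.4, 0.1, 0.6, 0.3]
control_pretest = [0.0, -0.2, 0.3, 0.1]

d = cohens_d(action_pretest, control_pretest)  # positive: action group scored higher
```

Swapping the argument order flips the sign of d, so the convention (action minus control) should be stated alongside any reported value.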

Foundational Number Processing

The 2 × 3 ANOVA revealed no significant main effects of training, F(1, 21) = 0.34, p = .56, ηp² = .02, or time, F(2, 42) = 1.69, p = .20, ηp² = .07, and no significant interaction between training and time, F(2, 42) = 1.74, p = .19, ηp² = .08.
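Partial eta squared can be recovered from any reported F and its degrees of freedom through a standard identity, which lets readers check the effect sizes reported in the Results. The sketch below is a generic illustration of that identity, not the authors' analysis code; the listed F values are taken from this section.

```python
def partial_eta_squared(f_value, df_effect, df_error):
    """Partial eta squared recovered from an F statistic and its degrees of freedom.

    Since F = (SS_effect / df_effect) / (SS_error / df_error) and
    eta_p^2 = SS_effect / (SS_effect + SS_error), it follows that
    eta_p^2 = F * df_effect / (F * df_effect + df_error).
    """
    scaled = f_value * df_effect
    return scaled / (scaled + df_error)


# F statistics reported in the Results: (label, F, df_effect, df_error).
reported = [
    ("foundational number processing: time", 1.69, 2, 42),
    ("foundational number processing: training x time", 1.74, 2, 42),
    ("basic arithmetic: time", 12.35, 2, 42),
    ("BASI: training x math assessment", 6.59, 1, 21),
]

for label, f, df1, df2 in reported:
    print(f"{label}: partial eta squared = {partial_eta_squared(f, df1, df2):.2f}")
```

The recovered values round to those reported in the text (.07, .08, .37, and .24), which offers a quick check on the transcription of the statistics. In a mixed design, the error degrees of freedom differ between the between-subjects effect and the within-subjects effects, so each F must be paired with its own df.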

Basic Arithmetic

The 2 × 3 ANOVA revealed only a significant main effect of time, F(2, 42) = 12.35, p < .0001, ηp² = .37, indicating improved performance over time across both training groups and likely indexing a test-retest effect. The main effect of training, F(1, 21) = 1.79, p > .19, ηp² = .08, and the interaction between training and time, F(2, 42) = 0.88, p > .26, ηp² = .04, were not significant.

Standardized Mathematical Abilities

Because the WRAT-4 was administered only at pretest and post-40, we used a 2 × 2 ANOVA to examine training-related effects on this standardized math measure. We found a marginal interaction between training and time, F(1, 22) = 3.09, p = .09, ηp² = .12, but no significant main effects of training, F(1, 22) = 2.93, p > .10, ηp² = .12, or time, F(1, 22) = 0.17, p > .68, ηp² < .01. As illustrated in Figure 2A, the action group tended to improve more between pretest and post-40 than the control group.

The BASI was administered only at post-25, and comparing the BASI scores of the two training groups at that testing point revealed significant training-related differences. To assess which aspect of mathematics was most affected by training, we considered the Math Application (i.e., solving word problems, reading and interpreting data) and Math Computation (i.e., whole number, fraction, and decimal arithmetic) scores on the BASI separately (see Figure 2B). A 2 × 2 mixed-design ANOVA with training group and math assessment (Math Application vs. Math Computation) as factors revealed a significant interaction between training and math assessment, F(1, 21) = 6.59, p = .02, ηp² = .24, and no significant main effects of training, F(1, 21) = 1.38, p > .25, ηp² = .06, or math assessment, F(1, 21) = 0.01, p > .91, ηp² < .01. Follow-up comparisons indicated that this interaction was driven by the absence of training-related differences in Math Application, F(1, 21) = 0.05, p > .83, ηp² < .01, alongside significantly higher performance in the action group than in the control group on Math Computation, F(1, 21) = 5.92, p = .024, ηp² = .22.

Figure 2. Performance on standardized math assessments for action and control groups.
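With only two within-subject levels, the training × math assessment interaction reported above is equivalent to comparing the two groups on each participant's difference score (Math Application minus Math Computation): the interaction F equals the square of a pooled-variance independent-samples t. The sketch below illustrates this general property of 2 × 2 mixed designs on hypothetical scores; it is not the authors' analysis code, and the follow-up comparisons within each subtest are separate one-way tests.

```python
import math
import statistics


def difference_scores(pairs):
    """Within-subject difference (level 1 minus level 2) for each participant."""
    return [first - second for first, second in pairs]


def mixed_2x2_interaction_f(group1, group2):
    """Interaction F for a 2 (between) x 2 (within) mixed-design ANOVA.

    Each participant is a (level 1, level 2) score pair. Both sums of squares below
    are twice their ANOVA counterparts; the factor of 2 cancels in the F ratio.
    """
    d1, d2 = difference_scores(group1), difference_scores(group2)
    n1, n2 = len(d1), len(d2)
    m1, m2 = statistics.mean(d1), statistics.mean(d2)
    grand = statistics.mean(d1 + d2)
    ss_interaction = n1 * (m1 - grand) ** 2 + n2 * (m2 - grand) ** 2  # df = 1
    ss_error = sum((d - m1) ** 2 for d in d1) + sum((d - m2) ** 2 for d in d2)
    df_error = n1 + n2 - 2
    return ss_interaction / (ss_error / df_error), (1, df_error)


def pooled_t(sample1, sample2):
    """Independent-samples t statistic with pooled variance."""
    n1, n2 = len(sample1), len(sample2)
    pooled_var = (
        (n1 - 1) * statistics.variance(sample1) + (n2 - 1) * statistics.variance(sample2)
    ) / (n1 + n2 - 2)
    diff = statistics.mean(sample1) - statistics.mean(sample2)
    return diff / math.sqrt(pooled_var * (1 / n1 + 1 / n2))


# Hypothetical (Math Application, Math Computation) scores per participant.
action = [(1.0, 1.5), (0.5, 1.2), (0.8, 1.0), (1.1, 1.9)]
control = [(0.9, 0.7), (1.2, 1.1), (0.6, 0.8), (1.0, 0.6)]

f_value, dfs = mixed_2x2_interaction_f(action, control)
t_value = pooled_t(difference_scores(action), difference_scores(control))
# f_value equals t_value ** 2 up to floating-point error, with dfs == (1, 6).
```

Reading the interaction as a group difference in difference scores makes clear why it can be significant even when neither main effect is.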

Attentional Control

At pretest, the two groups were unfortunately not equated numerically: although the difference was not significant, the control group performed worse than the action group (Table 1). On the basis of the existing literature, we expected an interaction between training and time. However, the 2 × 3 ANOVA revealed only a significant main effect of time, F(2, 44) = 5.53, p < .01, ηp² = .20, reflecting improved performance over time in both training groups, consistent with a test-retest effect. The main effect of training, F(1, 22) = 0.72, p > .40, ηp² = .03, and the interaction between training and time, F(2, 44) = 0.17, p > .84, ηp² < .01, were not significant.

Standardized Language and Fluid Reasoning

Means and standard errors of these control measures, as well as effect sizes for comparisons similar to those listed in Table 1, are given in Supplementary Table S2.

Standardized Language

The 2 × 3 ANOVA revealed no significant main effects of training, F(1, 21) = 0.39, p > .53, ηp² = .02, or time, F(2, 42) = 0.22, p > .80, ηp² = .01, and no significant interaction between training and time, F(2, 42) = 0.03, p = .97, ηp² < .01, on participants’ verbal composite scores.

Fluid Reasoning

The 2 × 3 ANOVA revealed no significant main effects of training, F(1, 21) = 0.21, p > .65, ηp² = .01, or time, F(2, 42) = 0.03, p > .97, ηp² < .01, and no significant interaction between training and time, F(2, 42) = 0.77, p > .46, ηp² = .04, on participants’ fluid reasoning scores.

Measure of Game Flow

A 2 (training group) × 2 (time period: flow before vs. after 25 hr of game play) mixed-design ANOVA with total score on the flow scale as the dependent measure yielded only a main effect of training, F(1, 15) = 5.26, p < .05; all other ps > .16. Overall, participants assigned to the control group rated their video game experience as producing greater game flow than did those assigned to the action group (142.77 vs. 123.34). This result suggests that the trend toward greater benefits in the action group did not arise because the action video game was more engaging than the nonaction control game.

Correlations Between Gains in Attentional Control, Foundational Number Processing, and Standardized Mathematical Abilities

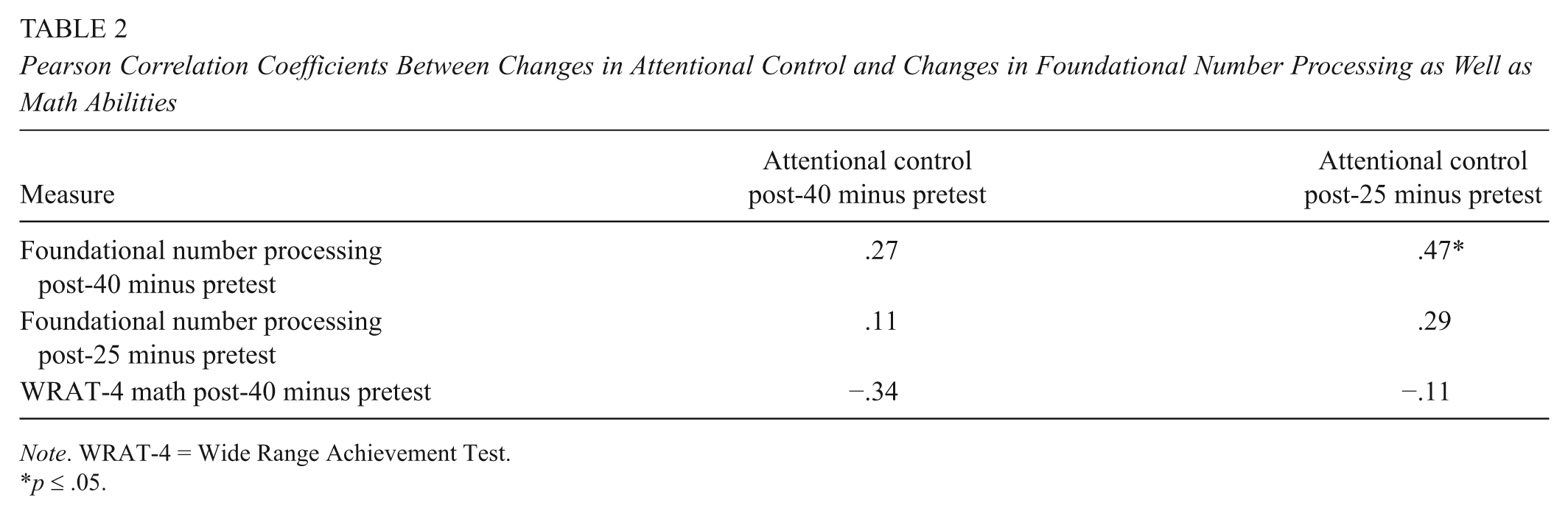

Because we hypothesized that attentional control may be linked to foundational number processing and possibly to standardized mathematical abilities, we examined the correlations of changes in attentional control with changes in foundational number processing and with changes in standardized math abilities. To increase power, we collapsed across both training groups. To limit the influence of outliers, we calculated Cook’s distance and excluded any data points with a Cook’s distance > 1 from these analyses. As can be seen in Table 2 and Figure 3, the only significant correlation was a positive one between changes in attentional control from pretest to post-25 and changes in foundational number processing from pretest to post-40. For the standardized mathematical abilities, we could examine only change scores from pretest to post-40. As can be seen in Table 2, none of the changes in attentional control were correlated with changes in WRAT-4 math scores between pretest and post-40.

Table 2. Pearson Correlation Coefficients Between Changes in Attentional Control and Changes in Foundational Number Processing as Well as Math Abilities

Note. WRAT-4 = Wide Range Achievement Test, Fourth Edition.

*p ≤ .05.

Figure 3. Scatterplot depicting the association between changes in attentional control from pretest to post-25 and changes in foundational number processing from pretest to post-40.
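The Cook’s distance screen described in the correlation analysis can be sketched as follows. The paper does not state which regression model the distances came from; this sketch assumes a simple least-squares regression of the number-processing change score on the attentional-control change score, applies the D > 1 cutoff from the text, and uses hypothetical change scores.

```python
import math
import statistics


def cooks_distance(x, y):
    """Cook's distance for each point in an ordinary least-squares fit of y on x."""
    n = len(x)
    mx, my = statistics.mean(x), statistics.mean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    slope = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y)) / sxx
    intercept = my - slope * mx
    residuals = [yi - (intercept + slope * xi) for xi, yi in zip(x, y)]
    p = 2  # estimated parameters: intercept and slope
    mse = sum(r ** 2 for r in residuals) / (n - p)
    distances = []
    for xi, ri in zip(x, residuals):
        leverage = 1 / n + (xi - mx) ** 2 / sxx
        distances.append(ri ** 2 / (p * mse) * leverage / (1 - leverage) ** 2)
    return distances


def pearson_r(x, y):
    """Pearson correlation coefficient."""
    mx, my = statistics.mean(x), statistics.mean(y)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    sxx = sum((xi - mx) ** 2 for xi in x)
    syy = sum((yi - my) ** 2 for yi in y)
    return sxy / math.sqrt(sxx * syy)


# Hypothetical change scores (later test minus earlier test), one pair per participant.
attention_change = [0.2, -0.1, 0.4, 0.0, 0.3, -0.2, 0.1, 2.5]
number_change = [0.1, -0.2, 0.3, 0.1, 0.2, -0.1, 0.0, -1.5]

distances = cooks_distance(attention_change, number_change)
keep = [i for i, dist in enumerate(distances) if dist <= 1]  # exclusion rule: D > 1
r_all = pearson_r(attention_change, number_change)
r_kept = pearson_r([attention_change[i] for i in keep], [number_change[i] for i in keep])
```

In this made-up example a single high-leverage point reverses the sign of the correlation, which is the kind of distortion the D > 1 rule is meant to catch.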

Discussion

We were inspired by the surprising observation that action video game play and mathematical activities may rely on the same frontoparietal network of brain areas (Arsalidou & Taylor, 2011; Bavelier, Achtman, et al., 2012). Here, we assessed the effect of action video game training on adults’ mathematical abilities. Given the previously documented benefit of action video game play for extracting more information from the environment and thus making more informed decisions (Green et al., 2010; Green & Bavelier, 2015), we predicted that our action video game intervention would have its greatest impact on foundational number-processing skills, because these tasks share more surface features with action video game play. Furthermore, action video game play and foundational number tasks may rely on similar cognitive abilities (e.g., spatial attention), and both may require efficiently decoding noisy evidence to reach a decision (e.g., an approximate estimate). We also tested for possible further transfer to more formal aspects of mathematics.

The tasks that we used to assess foundational number processing required participants to make simple number comparisons, to estimate dot quantities, to perform approximate arithmetic, and to order numbers both with respect to each other and on a spatial continuum. All of these skills are present in early childhood and provide a foundation for learning complex math in school (Chen & Li, 2014; Fazio, Bailey, Thompson, & Siegler, 2014; Lyons et al., 2014; Schneider et al., 2016). Contrary to our hypothesis, we did not find any signs of training-related improvements in these foundational number-processing tasks. Repeated training of foundational number skills through computerized or traditional games has been shown to transfer to improved math skills in both children and adults (Kucian et al., 2011; Link, Moeller, Huber, Fischer, & Nuerk, 2013; Park, Bermudez, Roberts, & Brannon, 2016; Park & Brannon, 2013, 2014; Ramani & Siegler, 2008; Siegler & Ramani, 2008). However, even when training targets foundational number-processing skills in adults, the improvements in foundational number processing itself are minimal at best (DeWind & Brannon, 2012; Park & Brannon, 2014). Thus, foundational number-processing skills may be difficult to improve in adulthood, especially when the training does not target these skills directly (as is the case for action video game training).

The failure to find an effect on foundational number processing is difficult to interpret given that we also failed to reproduce the known effect of action video game play on attentional control. Unlike previous studies using action video game training, we did not find any significant training-related improvements in attentional control. In accord with previous studies, action video game trainees showed improved attentional scores with training; however, unlike in previous studies, the control trainees also improved. The source of this discrepancy is unclear, as the tests used here are similar, if not identical, to those used previously. One potentially relevant difference is that participants in the control group started with worse attentional control at pretest than those in the action group, so regression to the mean in that group may have looked like improvement. This is not the first study to document a failure of action video game play to change attentional control, although of the 35 effect sizes recorded for intervention studies targeting attentional control, 24 (69%) show a positive effect (Bediou et al., in press).

Because one of the main premises of our study was that foundational number processing may be linked to attentional control, we tested this assumption directly by correlating changes in attentional control over time with changes in foundational number processing. We found a significant correlation between changes in attentional control from pretest to post-25 and changes in foundational number processing from pretest to post-40. This finding indicates that changes in attentional control and in foundational number processing are related as hypothesized, in particular the initial change in attentional control observed after 25 hr of training and the longer-term change in foundational number processing. This pattern is consistent with the view that an intervention that increases attentional control more in one group than in another should also produce an intervention effect on foundational number processing.

In our attempts to isolate which aspects of mathematical thinking are most affected by video game training, we found small improvements in some formal math abilities after action video game play but not after nonaction video game play. After 25 hr of training, participants who had been trained on the action video game performed better on a standardized test requiring complex mathematical computations (BASI Math Computation) than those who had been trained on the nonaction video game. In contrast, no significant differences were observed on a standardized test requiring the application of complex mathematics to solve everyday problems (BASI Math Application). These findings suggest that action video game training may strengthen the execution of mathematical operations rather than the identification of the correct operations and reasoning about them. After 40 hr of training, participants who had been trained on the action video game still showed higher scores on another standardized assessment of complex math skills (WRAT-4), but the difference between the two training groups was not statistically significant. Thus, although our results are weak, especially given the lack of correction for multiple comparisons, and although we certainly would not suggest that action video game training be used to train math abilities, these results suggest that repeated action video game play does not harm players’ math abilities relative to repeated nonaction video game play.

As expected, our two control domains, verbal abilities and fluid reasoning, did not show any significant training-related improvements, indicating that the changes in math scores reported above do not simply reflect a general tendency for participants trained on action video games to improve more on our standardized tests than those trained on nonaction video games.

Limitations and Future Directions

Despite our best efforts, there are a number of limitations to our study. First, the sample reported here is fairly small and consists of only those participants who agreed to continue training for 40 hr. Although there were no significant differences between these participants and those who completed only 25 hr of training on any of the pretest measures (see Supplementary Table S1), these participants may have been particularly motivated to continue the training or may have other characteristics that distinguish them from the larger sample or the general population, limiting the generalizability of our findings. However, it is noteworthy that participants in the control group similarly elected to continue for 40 hr and consistently reported greater flow than the action video game training group but did not show greater improvements than their action-trained peers on any of our measures.

Second, this sample consisted of a carefully selected group of adults with limited exposure to math. On the one hand, it is possible that adults with greater experience with math either through education or work may not benefit from action video game training in the same way as described here. On the other hand, it is possible that children or adolescents who are still learning math and whose brains are more plastic may show greater benefits.

Third, we administered all tasks in a fixed order. Because we used the same order for both training groups, this helps ensure that differences between groups cannot be explained by differences in exposure to previous tasks or in fatigue. However, performance on individual tasks and the relations among tasks may depend on this particular order, and follow-up work would be needed to disentangle these effects.

Finally, we compared only two active groups. A third, passive control group that completed only the pretest, post-25, and post-40 assessments would be needed to provide an independent measure of test-retest effects. Indeed, one possible worry is that playing video games may have hurt mathematical abilities, leading to smaller test-retest improvements in our two video game–trained groups than expected. We note, however, that the standardized math assessment used at post-25 (the BASI Math Computation and Math Application subtests) was administered only once, so its results cannot reflect test-retest effects. Because the two groups had comparable formal math abilities on the WRAT-4 at pretest and the action group showed enhanced BASI Math Computation scores at post-25, these results argue against a negative impact of action video game play. In accordance with this view, the WRAT-4 publisher reports an average alternate-form delayed test-retest difference on the math subtest of 1.1 points (average first score = 100.7, SD = 15.0; average second score = 101.8, SD = 15.2). We found an average math score difference between pretest and post-40 of 1.78 points across all of our participants. Importantly, participants in the action video game training group scored an average of 108.6 (SD = 18.06) at pretest and 116.5 (SD = 15.58) at post-40, suggesting that they performed slightly better than would be expected from test-retest effects alone. In contrast, the control group scored an average of 101.9 (SD = 14.68) at pretest and 98.2 (SD = 11.13) at post-40, average scores that are well within the range expected based on the published norms. Thus, mathematical abilities as measured by the WRAT-4 do not appear to have been hindered by playing video games, especially the action video game.
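The comparison with the publisher's retest norms is simple arithmetic on the reported means, and the sketch below spells it out. The numbers come from the text; the function name is illustrative, and the calculation ignores sampling error, so it is a descriptive check rather than a statistical test.

```python
# Values reported in the text: WRAT-4 math standard scores.
PUBLISHER_PRE, PUBLISHER_POST = 100.7, 101.8  # alternate-form delayed retest norms

group_means = {
    "action": (108.6, 116.5),  # (pretest, post-40)
    "control": (101.9, 98.2),
}


def compare_to_retest(pre, post, expected_gain):
    """Return the observed gain and how far it departs from the expected retest gain."""
    gain = post - pre
    return gain, gain - expected_gain


expected = PUBLISHER_POST - PUBLISHER_PRE  # about 1.1 points
for name, (pre, post) in group_means.items():
    gain, excess = compare_to_retest(pre, post, expected)
    print(f"{name}: gain {gain:+.1f}, beyond expected retest gain {excess:+.1f}")
```

This prints a gain of +7.9 points (+6.8 beyond the expected retest gain) for the action group and -3.7 points (-4.8) for the control group. Because every group standard deviation reported above exceeds 11 points, differences of this size at the group-mean level should not be over-interpreted without the inferential tests reported in the Results.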

Overall, this study suggests that a training regimen targeting attentional control in a completely non-numerical context (i.e., action video games) yields some, albeit limited, transfer to mathematical abilities. First, improvements in attentional control correlated with improvements in foundational number processing, providing an interesting pointer for future studies. Such effects may, however, be easier to evaluate in children than in adults. Second, among the formal mathematical abilities, mathematical computation improved more after action video game play, although this effect remains extremely modest. For now, this study suggests that, despite widespread skepticism about exposure to action video games, such games are not detrimental to mathematical abilities.


Acknowledgements

This research was supported by National Science Foundation (NSF) Award No. 1227168 to DB and JH, NSF Award No. 1109366 to DB, and National Institute of Child Health and Human Development Grant R01 HD057258 to JH. PCL is supported by the Luxembourg National Research Fund (FNR-ATTRACT/DIGILEARN).

Authors

MELISSA E. LIBERTUS is an assistant professor at the University of Pittsburgh. Her research focuses on mathematical cognition and its development.

ALLISON LIU is a graduate student at the University of Pittsburgh. Her research focuses on mathematical cognition.

OLGA PIKUL is a research assistant at the University of Rochester. Her research focuses on perception.

THEODORE JACQUES is a graduate student at the University of California–Riverside. His research focuses on the malleability of cognitive skills.

PEDRO CARDOSO-LEITE is an ATTRACT fellow (assistant professor) at the University of Luxembourg. His research focuses on the impact of interactive digital technologies on cognition.

JUSTIN HALBERDA is an associate professor at Johns Hopkins University. His research focuses on mathematical cognition and perception.

DAPHNE BAVELIER is a professor at the University of Geneva. Her research focuses on the impact of video game play on cognition and perception.

References

Supplementary Material

