Abstract

This article extends existing research on school effectiveness by identifying the combination of programs, practices, processes, and policies that explain why some high schools in a large urban district are effective at serving low-income students, minority students, and English language learners. Drawing on mixed methods data from high schools selected on the basis of value-added indicators, we conducted a comparative case study to understand what differentiated schools that “beat the odds” from those that struggled to improve student achievement. We found that the higher-value-added schools enacted practices that integrated academic press and support in ways that fostered student efficacy and engagement. These findings contribute to the larger literature on school effectiveness by highlighting the importance of the student culture of learning and noncognitive student characteristics, and they identify student ownership and responsibility as a critical area for research on school effectiveness and improvement.


A broad consensus is emerging about the components of effective schooling, including learning-centered leadership, rigorous curriculum and instruction, professional culture among teachers, personalized learning for students, systemic performance accountability, and connections to external communities (Bryk, Sebring, Allensworth, Luppescu, & Easton, 2010; Goldring, Porter, Murphy, Elliott, & Cravens, 2009). Most research on school effectiveness, however, has focused on elementary schools, and its findings are difficult to generalize to secondary schools, which are larger and more organizationally complex (Firestone & Herriott, 1982; McLaughlin & Talbert, 2001). Much of the research on urban high school reform has clustered around organizational or structural features, such as size, use of time, and how students and teachers are organized within that time (Bloom & Unterman, 2014; Lee & Burkam, 2003; Rice, Croninger, & Roelke, 2002). Yet substantially improving educational outcomes requires recognizing that the components of effective schools are multifaceted and integrated (Creemers & Kyriakides, 2006; Preston, Goldring, Guthrie, & Ramsey, 2016).

This article reports the findings from an intensive case study of a large urban district, which focused on identifying the combination of programs, practices, processes, and policies that explain why some high schools are effective at serving low-income students, minority students, and English language learners. This research was aimed at answering the following research question: Within a single district’s organizational and resource environment, what distinguishes (1) high schools that “beat the odds” for students from traditionally lower-performing groups from (2) schools that struggle to improve the achievement and graduation rates of these student populations? This type of research within a single district is particularly important now, with growing calls for researchers to understand not only “what works” but how the social and organizational context shapes school effectiveness (Means & Penuel, 2005). This article answers this call by, first, using value-added models to identify high schools that are producing higher and lower outcomes than their students’ demographics and prior performance would predict and, second, using rigorous and detailed mixed methods evidence to understand how the higher-value-added (HVA) schools enacted components identified by prior research on effective schools.

Essential Components of Effective Schools

This study was guided by a framework integrating “essential components of effective schools,” which is based on the literature on high school effectiveness (Bryk et al., 2010; Dolejs, 2006; Goldring et al., 2009). This framework suggests that schools succeed not because they adopt piecemeal practices that address these components but because they organize a cohesive system of aligned practices (Preston et al., 2016).

Learning-centered leadership focuses on the degree to which formal and informal school leaders establish a common vision in their school, focused on learning and high expectations (Murphy, Goldring, Cravens, Elliott, & Porter, 2007; Spillane, Halverson, & Diamond, 2001). Prior research suggests that student learning increases when leaders articulate an explicit school vision, generate high expectations and goals for all students, and monitor their schools’ performance (Leithwood & Riehl, 2005; Murphy et al., 2007). Principals’ effects on student learning are also likely mediated by their efforts to obtain resources, improve working conditions, and hire high-quality personnel (Horng, Klasik, & Loeb, 2010; Louis, Leithwood, Wahlstrom, & Anderson, 2010). One aspect of leadership is developing connections to external communities, which refers to the ways in which schools establish meaningful links to parents and build relationships with local social services (Sanders & Lewis, 2004).

Rigorous and aligned curriculum refers to the provision of content in core academic subjects, focusing on the topics that students cover as part of the curriculum, as well as the cognitive skills they are expected to demonstrate in each course (Gamoran, Porter, Smithson, & White, 1997). Closely integrated with curriculum is quality instruction, which refers to the teaching strategies used to implement the curriculum and help students reach curricular standards (McLaughlin & Talbert, 2001; Wenglinsky, 2002). Effective practices identified in this literature include collaborative group work, inquiry-based learning (Staples, 2007), formative assessment (Brown, 2008), and classroom climate and structures that allow students to try and fail without negative consequences (Alper, Fendel, Fraser, & Resek, 1997).

Personalized learning connections are defined as connections between students and adults that provide students with individualized attention targeted at their unique circumstances and learning needs (Lee & Smith, 1999) and that encompass the development of students’ sense of belonging at school (Walker & Greene, 2009). Students’ connections to school can fall on a continuum from strong and robust (leading to connectedness) to weak and nonexistent (leading to alienation; Hallinan, 2008; Nasir, Jones, & McLaughlin, 2011).

A strong culture of learning and professional behavior captures the extent to which teachers take responsibility for student learning and the degree to which they collaborate in their own learning efforts through activities such as schoolwide or department-level professional development (Lee & Smith, 1996; Little, 1982), including the development of professional learning communities and other communities of practice that define norms of engagement, commitment, and heightened professionalism for learning (Newmann, King, & Youngs, 2000).

A focus on systemic performance accountability and use of data refers to having outcomes take precedence over processes in the evaluation of academic performance and to using data to inform classroom decisions (Elmore, Abelmann, & Fuhrman, 1996; Kerr, Marsh, Ikemoto, Darilek, & Barney, 2006). Although research on data-based decision making in high schools is scant, a consistent finding is that where data use is effective, the power to make data-based decisions is diffuse, collaborative, and pervasively integrated into practice (Ingram, Seashore Louis, & Schroeder, 2004; Spillane, 2012).

This framework emphasizes that it is not the implementation of a reform to improve any single component that leads to school effectiveness but rather the integration and alignment of school processes and structures across these eight components. Indeed, emerging research on effective schools suggests two important elements that cut across these components: how schools develop integrated practices of academic press and support and the implications of these components for the student experience in high schools.

Research on academic press suggests that when schools create environments that push students to achieve, student achievement increases (Bryk et al., 2010; Lee & Smith, 1999; Murphy, Weil, Hallinger, & Mitman, 1982; Shouse, 1996). Academic press draws on the components of leadership, curriculum, instruction, culture of learning, and shared accountability, as they emphasize the need for cognitively challenging curricula and a shared focus on high expectations for all (Boaler & Staples, 2008; Marks & Louis, 1999). As schools press students to excel, they also need to provide the resources students require to succeed, that is, to meet the demands created by academic press. Academic support can take many forms, including elements of curriculum, organization of time, use of personnel to provide personalized support, and the use of authentic and formative assessment (Carbonaro & Gamoran, 2002; Lee & Burkam, 2003; Legault, Green-Demers, & Pelletier, 2006). Classroom instruction can also provide critical academic support through collaborative, engaging activities that are a source of empowerment for students and that focus on higher-order thinking skills (Brown, 2008; Kelly & Turner, 2009; Staples, 2007). Furthermore, there is evidence that teachers can effectively teach students strategies for engaging cognitively and behaviorally (Anderman, Andrzejewski, & Allen, 2011).

Recent research also points to the importance of students’ noncognitive characteristics, such as their engagement and self-beliefs (Duckworth, Peterson, Matthews, & Kelly, 2007; Farrington et al., 2012). Students who have strong, positive mind-sets and a high degree of self-efficacy tend to exhibit more positive academic behaviors, choose more difficult tasks, expend greater effort, exhibit more self-regulatory strategies, and have higher achievement across academic areas (Farrington et al., 2012; Pajares & Urdan, 2006; Zimmerman, 2000). Students with high academic self-efficacy also demonstrate higher engagement (Fredricks, Blumenfeld, & Paris, 2004; Yazzie-Mintz & McCormick, 2012). Furthermore, effective high schools attend to these noncognitive aspects of student development to establish systematic supports that personalize student learning experiences (Rutledge, Cohen-Vogel, Osborne-Lampkin, & Roberts, 2015).

Despite substantial research on these components of effective schools, little is known about the ways in which educators develop, integrate, and sustain them. Thus, the goal of our research was to examine what combination or enactment of these components distinguishes HVA high schools from lower-value-added (LVA) high schools in the same district context, paying particular attention to the types of programs, practices, and processes that support better-than-expected outcomes for students from traditionally lower-performing groups. Given the challenges of improving schools at scale, research that seeks to understand how practices are enacted is crucial (Means & Penuel, 2005).

Methods and Data

District and School Selection

This study took place in a large district that met two criteria: (1) It was in a state with a sufficiently long history of high school assessments to allow calculation of current and 3-year average school-level value-added measures, and (2) it had enough HVA and LVA high schools that differences in school-level measures would likely be statistically significant. In 2011, the district served more than 80,000 students, of whom 59% were Hispanic, 23% were African American, and 76% were economically disadvantaged. The district is in Texas, which has a long history of test-based accountability, beginning with the Texas Assessment of Basic Skills in 1980 and the first school accountability ratings in 1993. The district had responded to the accountability system by developing detailed curriculum frameworks, benchmark assessments, and recommended activities that it expected teachers to implement across the district.

Once the district was identified, four high schools were selected on the basis of school-level value-added measures for an in-depth comparative case study, with the goal of selecting two schools in the upper end of the distribution of school-level value-added scores and two schools in the lower end. Value-added measures were used for this purpose because they are designed to measure overall school effectiveness while controlling for factors associated with student achievement, including prior student achievement and student characteristics that are associated with growth in student achievement but are not under the control of schools (Meyer & Dokumaci, 2014). Specifically, we estimated value added via a fixed effects model with controls for pretest measurement error. We used an empirical Bayes approach to compute shrinkage estimates of value added so that schools with fewer students would not be overrepresented among the highest- and lowest-value-added schools due to error variance. While value-added measures are controversial when used for high-stakes decisions, our school-level analysis produced estimates of each school’s contribution to student achievement (Grissom, Kalogrides, & Loeb, 2014) that are more reliable than teacher-level value-added measures (Harris, 2011). We further used 3-year estimates to increase reliability. Additional information about the value-added estimates can be found in Appendix A of the online supplement. Finally, the team visiting the schools for the case studies did not know before the first visit whether a school had been selected as an HVA or LVA school. The ongoing analytic process made it impractical to maintain this blindness to value-added status; thus, school teams knew whether a school was an HVA or LVA school during the second and third visits.
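
To make the shrinkage logic concrete, the Python sketch below illustrates empirical Bayes shrinkage in its simplest form. It is not the model used in this study (that model is described in Appendix A); it assumes that raw school effects and their sampling variances are already available, estimates the true between-school variance by the method of moments, and pulls each raw estimate toward the unweighted mean of all schools in proportion to its unreliability. The function name `eb_shrink` and all data values are hypothetical.

```python
from statistics import fmean, pvariance


def eb_shrink(estimates, sampling_vars):
    """Return empirical Bayes (shrunken) versions of raw school effects.

    estimates     -- raw, unshrunken school value-added estimates
    sampling_vars -- squared standard errors of those estimates
    Simplified, hypothetical sketch: shrinks toward the unweighted grand mean.
    """
    grand_mean = fmean(estimates)
    # Method-of-moments estimate of the true between-school variance (tau^2):
    # observed spread minus average noise, floored at zero.
    tau2 = max(pvariance(estimates) - fmean(sampling_vars), 0.0)
    shrunk = []
    for est, var in zip(estimates, sampling_vars):
        # Reliability: share of this school's variance that is signal, not noise.
        total = tau2 + var
        reliability = tau2 / total if total > 0 else 0.0
        shrunk.append(grand_mean + reliability * (est - grand_mean))
    return shrunk


# Hypothetical data: schools 0 and 1 share a raw estimate, but school 1 is
# smaller, so its estimate is noisier (larger sampling variance).
raw = [0.3, 0.3, -0.2, -0.1, 0.0]
noise = [0.01, 0.09, 0.01, 0.01, 0.01]
for school, value in enumerate(eb_shrink(raw, noise)):
    print(f"school {school}: raw={raw[school]:+.2f} shrunk={value:+.3f}")
```

Both hypothetical schools with a raw estimate of 0.3 are pulled toward the mean, but the noisier (smaller) school is pulled further, which is why shrinkage keeps small schools from landing at the extremes of the ranking through error variance alone.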

Table 1 provides data on the demographic characteristics and average value-added rankings for each case study school. To protect the identity of the schools and participants, we report ranges and use pseudonyms. We refer to the schools as either LVA or HVA on the basis of where they fell in the distribution of value-added scores: Mountainside and Valley tended to have lower value-added scores than other district high schools, and Lakeside and Riverview tended to have higher scores.

Table 1. Demographic Characteristics and Performance Indicators of Case Study High Schools

Note. The state accountability rating and graduation rate were the most recent data available at the time of school selection. Demographics represent the composition of the schools at the time of our visits (2011–2012). Data come from the Texas Education Agency (http://ritter.tea.state.tx.us/perfreport/snapshot/2011/state.html). The value-added ranks are derived from 3 years of school-level value-added estimates in math, science, and reading; the most recent year was 2010–2011. LVA = lower value added; HVA = higher value added.

Data Collection

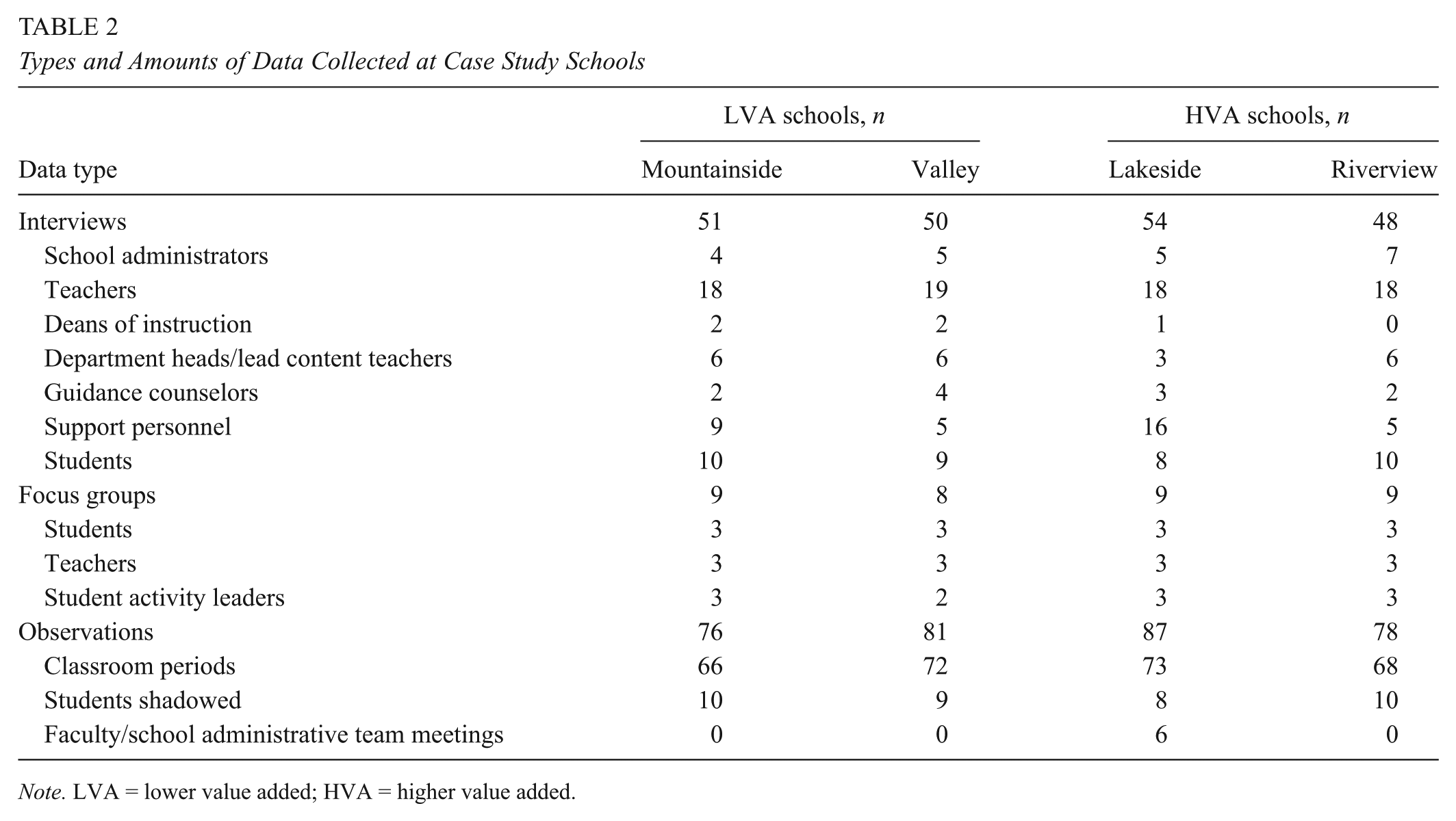

Data collection and analyses were guided by our core research questions: What characteristics distinguish HVA from LVA schools? How did these differences develop, and how are they orchestrated and supported? Fieldwork data were collected in the four high schools over three weeklong visits during the 2011–2012 school year. Data collection methods included focus groups, interviews, and classroom observations. Data collection focused primarily on 9th- and 10th-grade students and teachers in English, mathematics, and science because those grades and subjects produced most of the assessment data used to calculate the value-added scores, although we balanced this focus with data from key staff and a cross section of each school to gain a comprehensive understanding of the schools. Table 2 shows the amount of data collected by school.

Table 2. Types and Amounts of Data Collected at Case Study Schools

Note. LVA = lower value added; HVA = higher value added.

All administrators, counselors, and deans of instruction were interviewed. Six teachers in each of the mathematics, English language arts, and science departments were interviewed and observed teaching in each school. These teachers were chosen because they taught classes designed for 9th- and 10th-grade students. We interviewed all lead content teachers (part-time, school-based coaches) in these three subjects. Other personnel were sampled according to their specific roles in the school, such as special education and limited English proficiency coordinators, or through snowball sampling to interview personnel that other participants identified as serving key roles in the school.

We conducted three types of focus groups. First, teachers in grades and subjects that were not sampled for individual interviews were invited to participate in focus groups. These groups were designed to get a wider representation of teachers, including those in core content, electives, special education, and career/technical education. Each focus group had between 2 and 11 teachers, with the modal focus group having 8 teachers. The teachers had between 1 and 29 years of experience. Second, we conducted focus groups with students who had been selected on the basis of grade level and course-taking patterns. We focused on students in Grades 10 to 12 because they were more familiar with their schools, although some 9th graders did participate. Student focus groups were organized so that there was one for students taking primarily “advanced” courses, one for students in “general” courses, and one for students in “remedial” classes. Students were selected on the basis of schedule availability, with the goal of forming a cross section in each focus group that broadly represented the demographics of students in that course-taking pattern. Each focus group had between 4 and 15 students. Finally, because our initial data analysis highlighted the importance of extracurricular activities in engaging students, we conducted focus groups with personnel who supervise these activities. Each of these focus groups had between 3 and 7 activity leaders, who represented a variety of activities, such as sports, yearbook, student clubs, JROTC, and academic competition teams.

We observed and videotaped a total of 274 class periods of English language arts, mathematics, and science, taught by the same teachers who participated in the interviews. Four class periods per teacher were video recorded over multiple time points and coded by trained observers. We used an observational tool called the Classroom Assessment Scoring System–Secondary (Pianta, Hamre, & Mintz, 2011) to assess the quality of teacher-student interactions in the classroom. We observed and coded the following domains and dimensions: emotional support (positive climate, negative climate, teacher sensitivity, and regard for adolescent perspectives), classroom organization (behavior management, productivity, and instructional learning formats), instructional support (content understanding, analysis and problem solving, quality of feedback, and instructional dialogue), and student engagement.

Regular class period observations were divided into two or three segments of about 25 min, depending on the length of the class. Due to teacher absences and attrition from the study, between 1 and 12 segments were coded for each of the 72 teachers, and the 274 classroom videos yielded a total of 603 observation segments for Classroom Assessment Scoring System–Secondary coding. To assess interrater reliability, 20% of the videos were randomly selected for double rating. Across the 127 segments that were double rated, exact agreement between raters was 43%, and agreement within 1 point was 90%. The data presented here focus on the student engagement domain. Complete results from the observations are in Appendix B of the online supplement.
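
The exact and 1-point agreement figures reported above can be computed as in the following Python sketch, which counts a pair of ratings as an exact match when the two scores are identical and as adjacent agreement when they differ by at most 1 point on the instrument's 7-point scale. The function name and sample scores are hypothetical, and we assume for illustration that each pair is one dimension score on one double-coded segment.

```python
def agreement_rates(rater_a, rater_b):
    """Return (exact, within_one) agreement proportions for paired ratings.

    rater_a, rater_b -- equal-length lists of integer scores given by two
    observers to the same segments/dimensions, in the same order.
    """
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("need two non-empty score lists of equal length")
    pairs = list(zip(rater_a, rater_b))
    exact = sum(a == b for a, b in pairs) / len(pairs)
    within_one = sum(abs(a - b) <= 1 for a, b in pairs) / len(pairs)
    return exact, within_one


# Hypothetical scores from two observers on five double-coded ratings.
exact, adjacent = agreement_rates([4, 5, 3, 6, 2], [4, 6, 3, 4, 2])
print(f"exact agreement: {exact:.0%}, within 1 point: {adjacent:.0%}")
```

Because every exact match also counts as agreement within 1 point, the second figure is always at least as high as the first; neither statistic corrects for chance agreement.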

In addition to the interview and observation data, we collaborated with the district to administer surveys to teachers and students. Due to space considerations, this article focuses on evidence from the fieldwork. Additional details on the survey and a discussion of how those data support and complicate the findings described here are found in Appendix C of the online supplement (Smith, Cannata, & Taylor Haynes, 2016).

A major limitation of this study is its reliance on a single year of data collection. While we used multiple years of value-added measures to make the school selection process robust to yearly shifts in outcomes, all data used to understand differences between HVA and LVA schools come from a single school year. Because part of our analysis involved understanding the processes by which schools enacted practices that allowed them to become high performing, the analysis would be more robust if multiple years of fieldwork had been used to create a longitudinal case study of how the social and organizational contexts of these schools evolved over time.

Data Coding and Analysis

The case study analyses were divided among four school-focused teams that systematically coded the transcribed data using NVivo software. Each school team was responsible for coding and analyzing all data collected about its school and writing a comprehensive case report. We used the analytic technique of explanation building (Yin, 2009) to understand how and why each essential component developed (or did not develop) in the schools. The a priori coding scheme was based on the essential components described earlier and cross-cutting themes that were identified in our fieldwork notes (i.e., goals, trust, locus of control, structures that support or inhibit goals, rigor and academic press, student culture of learning, and student responsibility). Using this coding scheme, we engaged in directed content analysis (Hsieh & Shannon, 2005). This was combined with an emergent coding scheme specific to each school, which was grounded in the data (Corbin & Strauss, 2008). The emergent coding scheme included themes around academic press and student culture of learning. School teams met weekly to check for consistency in applying codes and discuss emerging themes. We held cross-case comparison meetings every other week. These meetings had two goals: to ensure that definitions were being applied consistently and reliably across schools in the coding process and to flag emerging findings to begin making comparisons across schools.

Once all data were coded, school-level teams developed a narrative of each essential component. Coders strove to provide a thorough, well-supported set of claims about the drivers and/or inhibitors of essential components, as well as the practices through which these were enacted. With the school-level case reports as our base, we held an intensive set of cross-case meetings to look systematically across the cases to build cross-case explanations. This process was used to note the presence or absence of differences between HVA and LVA schools. Each team member read all four case reports in their entirety to ensure that the team as a whole thoroughly understood each case. Next, multiple people were assigned to conduct cross-school analyses of particular components, cross-cutting themes, key findings, and the surveys and administrative data. The purpose was to identify the differences between the HVA and LVA schools and explain what contributed to these differences in the context of this district.

Results

Although our framework of essential components guided the analysis, no single essential component emerged as the characteristic distinguishing the HVA from the LVA schools. Instead, our analyses identified a thread that tied several components together: learning-centered leadership, rigorous curriculum, personalized learning connections, and culture of learning. This thread, which was the primary distinguishing characteristic between the HVA and LVA schools, consisted of systemic practices that integrated academic press and academic support to increase student engagement. Although the specific practices varied between the two HVA schools, both made concerted efforts to develop an environment of academic press (the encouragement of students to succeed) and support (resources to foster academic success). In the sections that follow, we describe how the HVA schools leveraged academic press and support for students while building a culture of learning, and we provide evidence of how these were lacking in the LVA schools.

Maintaining Academic Press

Our data suggest that both HVA schools had stronger and more systemic practices in place to press students to achieve. One HVA school, Lakeside, had adopted “student responsibility” as its core mission. One participant described how this focus emerged 4 years earlier:

A group of us got together and said, you know, [our school] is really at a place kind of in the middle of the district. . . . We decided a lot of it was because of adults. That we had created systems and trained students to either consciously or unconsciously in these systems to be very dependent upon us for their learning and that as long as that was the program, that there was a ceiling to that.

Other stakeholders agreed that the school had taken on this challenge. This focus is evident in the school’s adoption of “Effort Required” as its motto, which appears on posters around the school and on T-shirts worn by faculty and students. One teacher described how the motto was enacted:

It has to be with the responsibility that we’re putting back on the students. This year’s motto is effort required so we have an intense way of keeping up with that in several check systems to make sure that the students are putting forth the effort necessary to succeed in class. . . . And if they’re not asking questions, they need to be able to explain the material when asked. So at any point in time, I can stop the class and have a student explain the entire lesson that we just went over. And if they refuse, you say, “Well, that’s [the Lakeside Code].” You need to be able to do this or be asking questions.

Participants at the other HVA school, Riverview, described two interrelated goals: promoting success in advanced academic courses and reducing the achievement gap. Their key lever was to provide greater learning opportunities to a broader array of students by systemically encouraging more students to enroll in Advanced Placement (AP) or honors-level courses. An administrator described their focus on getting more students enrolled in AP:

We have a lot of honors programs and, and that’s been strong. . . . Any student can be a part of the AP program, and that’s one of the things that we really pushed is that there was at one point where it was kind of low in our minorities being in the AP program, but now I think we really have improved in that.

Another administrator described changing teacher assignments so that teachers taught both advanced and on-level courses, rather than just one or the other, to equalize learning opportunities across courses:

My focus is on the instruction for children, one that in on-level [classes] you have to teach the on-level kids and there is a same expectation of teaching in a pre-AP, AP classroom as there is an on-level. . . . About 7 years ago there was a real divide: you either taught AP, or you taught on-level.

When participants in the LVA schools were asked about school goals, there was agreement that student achievement was the main goal. Yet, in contrast to the HVA schools, where participants also described a systemic lever that their school was using to reach that goal, participants in the LVA schools showed little agreement about the school’s focus related to learning. In Mountainside, for example, teacher interviews and focus groups included mentions of “creating a safe haven for the students,” fostering relationships so that “[you can] reach kids before you teach kids,” and increasing “literacy efforts across subjects.” Indeed, several participants noted a distinct lack of shared goals. For example, when a teacher was asked to describe the principal’s goals, she replied:

I don’t know what his goals are. . . . I mean other than what anybody else, you know, as far as their goals would be to make sure that students are performing statistically, you know, when you get data back from your standardized test, but what his goals are, I’m kind of unsure of that.

Participants at Valley described multiple goals in interviews and focus groups, from applying disciplinary standards consistently to developing positive relationships between teachers and students and “getting students to think about going to college.” The principal said that her main goal was increasing rigor; yet she admitted to using “backdoor” means for improving academic rigor, and no teacher mentioned this as a goal.

The importance of mechanisms to maintain academic press was apparent in how participants across HVA and LVA schools spoke about pressures from the district. We learned from all four schools that, districtwide, there was pressure to avoid failing students and to increase graduation rates. The district had a number of structures that allowed students to make up courses that they failed and to make up absences, and it held teachers accountable when large numbers of students failed their classes. In the LVA schools, teachers described these structures as a barrier to holding students accountable. For example, a Mountainside teacher said, “If your student doesn’t succeed, then you’re a bad teacher and you have to make that student succeed. And this is fostered in an environment of coddling, babying, no accountability on the student.” An administrator in Valley described how hard it was to hold students accountable given the current district policies, saying that the school needs to eliminate things like third, fourth and fifth, sixth chances. At some point consequences mean nothing. . . . [The student] failed this class: “I know I’m going to get all these different options to retake it. I missed this many days of school, but I know there’s a way around it.” The kids know all the loopholes, so they’re more apt to not take things seriously the first time.

Participants in the HVA schools also described these pressures, but the schools had found ways to help teachers resolve the tension between supporting students and holding them accountable. Teachers in a Lakeside focus group indicated that the focus on student ownership had shifted, to an extent, accountability from the teacher to the student: “That was a big slap in the face to like 40 of my kids, the first 6 weeks I don’t think they expected when I told them that they were gonna fail, that they were gonna fail.”

Systemic Processes to Provide Academic Support

Another characteristic that distinguished the HVA from the LVA schools is that academic press was integrated with systemic processes to provide academic support. At one HVA school (Lakeside), the structures for integrating academic press and support, which allowed faculty to feel that it was acceptable to fail students, were enacted through systematic interventions known as the Lakeside Code, Learning Time, and the Intervention Committee. The Lakeside Code for student conduct especially illustrates how press and support were integrated, as the code focuses on academic and instructional behaviors rather than discipline or social behaviors. Students were required to demonstrate various behaviors through the code, including (1) attending school and being on time, (2) coming to class prepared and taking advantage of tutoring opportunities during Learning Time, (3) finding out required assignments after missing school, (4) being able either to explain what the teacher had emphasized or to ask a question about what was not clear, and (5) attending Learning Time when they do not understand. Lakeside teachers and students described these behaviors as the heart of the student and teacher accountability mechanisms. For example, one teacher in a focus group compared Lakeside with a school in the district where she had previously taught:

Here I think I have more choice on what I’m doing and I can hold them, like [for] the books not coming to class, I can give them tardies for not having a book; I couldn’t do that at my last school. You know there wasn’t a lot of support in making the [students] responsible.

Students agreed that not following the Lakeside Code had consequences: “They make sure you suffer the consequences. They make sure that you follow the code of conduct.”

The Lakeside Code was also used as a tool to guide the provision of academic support. In addition to setting expectations for students, it outlined expectations for teachers that were necessary for supporting student success. For example, while Element 4 requires students to be able to explain what is happening in a class period or to ask a question, it requires teachers to “ask students to explain (rather than telling them answers) by randomly questioning them to explain, paraphrase, offer examples, follow up, agree or disagree and state why, or have a question” (Lakeside Code handout). Another academic support structure was Learning Time, an extended lunch period during which students could attend tutoring or ask teachers questions within the regular school day. One Lakeside teacher described how she encourages students to come to Learning Time when they need extra help: “I go around the room and see where they are. If they’re keeping up with the rest of the class or if they need my help. And if they need more help, then, I tell them, ‘You need to come in at [Learning Time] and we can work on this together.’”

Evidence on the role of Learning Time in maintaining academic press while supporting students also comes from students themselves. One student in a focus group described the expectation that students attend Learning Time: “It’s in the school’s code of conduct that you have to go to it. You’re failing a class, you have to go.”

Although the strong student culture of learning at the other HVA school, Riverview, was influenced by parental press for high academic standards, the school had also implemented concerted strategies to broaden access to more rigorous coursework. Its efforts to broaden access by actively promoting students’ success in AP courses helped to maintain academic press, and the school integrated academic support by creating instructional support mechanisms to help students succeed in these courses. One teacher in a focus group illustrated this philosophy when she described the faculty’s commitment to students who are not honors students: “[We] try to take those kids and we make them into honors students.” We found evidence of proactive strategies by teachers, administrators, and support staff at Riverview to identify more on-level students and encourage them to enroll in honors-level courses, including faculty encouraging students to enroll in an AP class and faculty talking with counselors or administrators to identify students who could succeed in AP courses. For example, a counselor described how she advises ninth graders who are choosing courses: “We try to get them to take a high level, and you know, if in their seventh- and eighth-grade years they’re doing Bs, we try to encourage them to take honors classes. . . . We try to give them the opportunity, let’s go ahead and try the AP class, or try the honors class, and if it’s not something for you then we can back it down. And just to see if you can do it, because I’d rather you try it than . . . not.”

Students confirmed this push toward more advanced courses. One student said that her counselor told her she “[needs] to start taking more AP classes and getting some of my college credits while I’m still in high school.”

In contrast, the two LVA schools did not demonstrate a systemic focus on academic support. As noted, administrators at Valley reported that they were working to improve instructional rigor: “We need to progress on our level of rigor. . . . When you’re in a school like this that’s a pressure cooker, you know, it’s you have to balance pushing and laying off when they need to lay off.” Teachers, however, described a lack of focus on rigor. One teacher noted in an interview that rigor was lacking even in AP courses: “We have a very weak AP program. . . . I don’t know why the College Board doesn’t come because I mean this is years of this and here’s what I hear, ‘Well, you know, the students [don’t perform].’ . . . The students have been here for all of these years in honors, what are you not doing?”

At Mountainside, the breakdown of basic school operations undermined the effectiveness of the practices the school was trying to put into place. When asked about the challenges the school faced, one administrator described “a lack of efficient systems. . . . Last year we would have some kids going to the wrong class for a whole six weeks before anybody found out about it . . . so just a lack of systems and lack of communication.”

Differences in academic support between the HVA and LVA schools were also evident in classroom instruction. Interviews and focus groups with teachers provided evidence on how school personnel defined high-quality instruction and on their efforts to attain instructional rigor, while classroom observations provided quantitative evidence on the level of instructional support. Teachers in HVA schools mentioned using questioning strategies or problem-solving activities to develop higher-order thinking skills, although most indicated that mastering these strategies was an ongoing struggle. For example, a teacher at Riverview described how the school has “a real push to hands on learning and that is a difficult push for some people to make, but really to just continue focusing on it, focusing on it, focusing on it.” A teacher in a Lakeside focus group described how the school defined quality instruction: “Students speaking and teaching, which is complete opposite from my last school. At our last meeting we were told . . . not more looking and note taking, it’s them reading and talking about it and interacting with the content and no longer you in front.”

Although problem-solving activities and student-centered instruction do not always lead to increased rigor, they reflect teachers’ efforts to engage students.

Teachers in the LVA schools, in contrast, attributed their students’ academic struggles to students’ lack of background knowledge, class size, and student behavior rather than to the quality of instruction, even though class sizes were similar across all case study schools and Mountainside students were not necessarily more disadvantaged than students in the other case study schools. At Mountainside, for instance, teachers questioned the feasibility and appropriateness of teaching critical thinking to students with poor educational foundations. One teacher said in a focus group: “Listen, okay, they don’t know how to read, right? I think most of us would feel that we’re operating like we’re already in a hole so they’re coming in without some of the skills. . . . These kids also lack a broad base of knowledge, like not just the knowledge the skills, like the science skills or the writing skills, but just like a knowledge of basic like culture, politics, history, what’s going on in the world that you can, should be able to draw.”

At Valley, teachers reported difficulty individualizing instruction and directing it toward mid- to lower-level students. For example, when asked what he does when students are struggling, a teacher at Valley said in an interview: “That’s a really hard thing to one, to answer it, and to also understand. We do have students that are struggling, and I don’t call them out in class. I ask them, you know, one on one. Most of the students that are struggling have a difficult time speaking in class . . . and so you don’t know how to help them.”

Thus, we found evidence in both LVA schools that teachers were not taking responsibility for student learning.

Building a Student Culture of Learning and Engagement

A third feature that distinguished the HVA schools from the LVA schools was that the HVA schools fostered student engagement and a culture of learning among students; teachers and administrators there were focused on building a positive culture of learning. Although faculty and staff in the HVA schools recognized that some students naturally took more ownership of their learning than others, they described how school actions could build student ownership. For example, a teacher in a Lakeside focus group said: “I truly believe that if we all are on the same page and stick to our guns and we make them own it, we will adore the seniors in 4 years because these freshman will have had had 3 full years of owning it.”

When asked what contributed to the difference in how students behaved, several teachers in the focus group replied, “We expect things of them. . . . We start school with we expect you to do well, we expect you to be the best, we expect you to do things and when they don’t, we’re all over them.” An honors geometry teacher at Riverview described how students rise to the expectations that teachers set for them: “Now I’ve taught at-level geometry as well and they are not used to doing homework and it is very difficult to get it from them, but I have seen in some cases where once you expect specific students to do certain things, they will usually rise to the occasion and do it.”

In contrast, one LVA school, Valley, focused on developing relationships between students and teachers but did not leverage these relationships to improve learning. When asked whether there were any schoolwide programs to help students engage in their learning, an administrator said: “I think that all falls pretty much on the teachers and how they can make it interesting and engage them. . . . Nowadays you got to be able to juggle balls and play Xbox to get their attention so, you know, you just got to make it fun and get them engaged that way.”

Despite Valley’s concerted effort to build student-teacher relationships, this culture-building activity stopped short of affecting instruction.

Faculty and staff at the other LVA school, Mountainside, described a poor student culture of learning that they saw as outside their control. In a focus group, when asked about consequences for students who do not meet academic standards, several teachers responded by describing a myriad of practices that worked to lower expectations for students, with the result, as one teacher put it, that “we give these kids a lot of chances and, from my perspective, it seems like we’ve been doing this long enough that the kids understand this is part of the culture, that they have a lot of chances to do stuff.”

Teachers attributed the weak culture of learning among students to the school context, with one teacher saying in an interview, “the challenges are the same as any other inner-city school. . . . The students have low motivation.”

The observational data on classroom instruction further support the finding that student engagement differed between HVA and LVA schools (see Table 3). Both HVA schools had significantly higher levels of observed student engagement than the other case study schools, and one LVA school, Mountainside, had significantly lower student engagement. Although the differences are small, they are consistent with a pattern of greater student engagement in the HVA schools than in the LVA schools. Valley’s engagement scores fell between those of Mountainside and the two HVA schools, which also fits this pattern, given that some of Valley’s value-added measures were closer to the district average.

Table 3. Student Engagement Observation Measures by School

Note. Statistical significance was calculated on the basis of mean comparison tests between each case study school’s mean rating and the mean from the other schools combined. See Appendix A in the online supplement for predicted estimates of student engagement, adjusted for differences in grade, subject, visit (fall, winter, or spring), and whether the course was an honors/Advanced Placement course or a regular or “remedial” course.

Based on the Classroom Assessment Scoring System–Secondary.

*p < .05. **p < .01.

Student Ownership and Responsibility: Weaving Through the Essential Components of Effective High Schools

We began this investigation by overlaying a framework of essential components of effective high schools on the practices of two HVA and two LVA schools in a large urban district. The contribution of this analysis lies in the specific details of how the two HVA schools maintained a student culture of learning and engagement throughout their schools. What distinguished the HVA schools were the actions adults took to cultivate a culture of learning and build integrated systems of academic press and support. Leadership in the HVA schools differed from that in the LVA schools in its systematic focus on academic press and support to build student engagement, which is consistent with prior research on academic press that stresses the importance of establishing shared high expectations for students (Boaler & Staples, 2008; Lee & Smith, 1999; Shouse, 1996). Although all teachers described efforts to implement quality instruction, teachers in HVA schools were more likely to describe specific instructional practices that the school promoted to build active engagement among students. And although many aspects of curriculum and accountability practices were common across the district, the HVA schools managed to enact these practices in ways that maintained high academic press and accountability for students. In this way, our findings reflect research on academic press and support that highlights the need to establish structures that push students to meet high expectations (Kelly & Turner, 2009; Lee & Burkam, 2003; Legault et al., 2006).

Furthermore, this study contributes to the larger school effectiveness literature by emphasizing the importance of the student culture of learning and noncognitive student characteristics. It does so by identifying student ownership and responsibility as a critical area for school improvement, within the context of a district under strong accountability pressure for test scores and with ambitious goals for instructional improvement. Increasing student ownership and responsibility means creating a set of norms and schoolwide practices that foster a culture of learning and engagement among students. Encouraging such a focus involves building students’ confidence and designing programs and practices that support students in taking responsibility for their academic success. We emphasize two activities that are important for this: (1) changing students’ beliefs and mind-sets to increase self-efficacy and (2) engaging students in challenging academic work (Farrington et al., 2012; Fredricks et al., 2004). Both elements are visible in our case study, as teachers and administrators in both HVA schools developed integrated systems of academic press and support to generate greater academic engagement and a culture of learning among students.

Although student ownership and responsibility are individual characteristics, noncognitive skills can be developed through systematic interventions (Durlak, Weissberg, Dymnicki, Taylor, & Schellinger, 2011). The case study methods used in this study do not provide causal evidence that integrated strategies of academic press and academic support increase student ownership and responsibility, but these findings are consistent with this prior research. Teachers and other adults scaffolded the learning of academic and social behaviors that guided students in assuming responsibility for their academic success. Although this focus on student responsibility was seen only at Lakeside, both of our HVA case study schools provided this scaffolding through integrated strategies of academic press and academic support.

By identifying student ownership and responsibility as a critical focus of research on school effectiveness, this study points to the importance of interdisciplinary research that brings together psychological research on the factors that build student efficacy, engagement, and self-regulation skills with sociological research on schools as organizations and on enacting organizational change. While some models of school effectiveness include a focus on student motivation and participation in school (Bryk et al., 2010), the context of state standards and accountability has led to a greater emphasis on core instructional activities, such as aligning curriculum and administrator support of the instructional core (Rutledge, 2010). Indeed, the role of principals as instructional leaders is common to models of effective school leader practices, while the aspects of the student learning environment that these models address tend to center on safety and, again, on curriculum and instruction (Hitt & Tucker, 2016). Yet students enter high school with varying levels of efficacy and capacity to engage. Thus, a school’s current student population influences its culture and achievement (Bidwell, 2006). The culture of achievement is also shaped by norms and structures established by teachers and administrators. A focus on student ownership and responsibility requires leveraging organizational mechanisms for change in high schools, including structures of support and accountability coupled with culture-building practices, to address explicitly the psychological and sociological factors that contribute to student achievement. For example, one recent study suggests that organizational structures established by the school can influence students’ use of self-regulation strategies, which in turn influence achievement (Adams, Forsyth, Dollarhide, Miskell, & Ware, 2015).

This study also contributes to research on school improvement by illustrating that there is more than one path to implementing student ownership and responsibility for learning. The two HVA schools had different contexts and school histories. Lakeside had experienced several years of improvement followed by stagnation, while Riverview had a history of exemplary achievement among its most advanced students even as other student subgroups struggled. The specific mechanisms of academic press and support that these schools enacted thus reflected their distinct histories, even though they operated within the same district resource and programmatic context. School improvement efforts often search for “what works,” with the goal of spreading a proven program to more schools. Yet there is increasing recognition that no single improvement approach will work for all students and that researchers and practitioners need to understand what works, for whom, and under what conditions (Cohen-Vogel et al., 2015; Mazzeo, Fleischman, Heppen, & Jahangir, 2016). Because the improvement process is nonlinear, educators need to recognize the strengths and limitations of their own contexts to plan and adjust improvement efforts effectively (Thompson, Henry, & Preston, 2016). In this case study, we provide two illustrations of how schools followed paths suited to their contexts to develop academic press and support systems that build a student culture of learning. Future improvement efforts should help schools develop a deep understanding of the underlying ideas of academic press and support while creating processes to achieve goals specific to their contexts (Cohen-Vogel, Cannata, Rutledge, & Socol, 2016).

Acknowledgements

This research was conducted with funding from the Institute of Education Sciences (R305C10023). The opinions expressed in this report are those of the authors and do not necessarily represent the views of the sponsor.

Authors

MARISA ANN CANNATA is a research assistant professor at Vanderbilt University’s Peabody College of Education and Human Development and Director of the National Center on Scaling Up Effective Schools. Her research focuses on teacher hiring, evaluation, and career decisions; district capacity to scale effective practices; and charter schools.

THOMAS M. SMITH is dean and professor in the Graduate School of Education at the University of California, Riverside, and executive director of the National Center on Scaling Up Effective Schools. His research interests include understanding how policy can support instructional improvement at scale, teacher commitment and turnover, and improvement research applied to education.

KATHERINE TAYLOR HAYNES is director of the Westminster School for Young Children and former assistant director of the National Center on Scaling Up Effective Schools.

References
