Abstract

Interactive communication in virtual reality can be used in experimental paradigms to increase the ecological validity of hearing device evaluations. This requires the virtual environment to elicit natural communication behavior in listeners. This study evaluates the effect of virtual animated characters’ head movements on participants’ communication behavior and experience. Triadic conversations were conducted between a study participant and two confederates. To facilitate the manipulation of head movements, the conversation was conducted in telepresence using a system that transmitted audio, head movement data and video with low delay. The confederates were represented by virtual animated characters (avatars) with different levels of animation: Static heads, automated head movement animations based on speech level onsets, and animated head movements based on the transmitted head movements of the confederates. A condition was also included in which the live videos of the confederates’ heads were embedded in the visual scene. Sixteen young adults (19–32 years) with self-reported normal hearing participated in the study, i.e., 16 triads were measured. The results show significant effects of animation level on the participants’ speech and head movement behavior as recorded by physical sensors, as well as on the subjective experience. The largest effects were found for the range of head orientation while speaking, the head orientation while listening, and the perceived realism of avatars. We therefore conclude that the representation of conversation partners affects communication behavior, which may be considered when natural speech and movement behavior is desired.

Introduction

Face-to-face conversations in noisy environments occur frequently in everyday life (Wolters et al., 2016). They are an important part of social interaction, and frequent misunderstandings negatively impact self-confidence, social participation and overall well-being in people across the lifespan (Hogan et al., 2015; Hoppe et al., 2014; Punch & Hyde, 2011). For hearing-impaired listeners, verbal conversations in groups are typically challenging, especially when background noise is present. Hearing devices aim to increase speech intelligibility and reduce listening effort, but about 25% of users continue to report low satisfaction with their device in difficult listening situations, according to large-scale surveys (Carr & Kihm, 2022; Kim et al., 2022). Especially for patients with higher degrees of hearing loss, current hearing devices provide insufficient support (Hoppe et al., 2014; Punch & Hyde, 2011).

Improving device performance is not simple, as it requires identifying and attenuating irrelevant signals in diverse and dynamic sound scenarios. This task may be facilitated by recent developments that use the user's gaze and head movement behavior to distinguish between relevant and irrelevant sources (Best et al., 2017; Favre-Félix et al., 2018; Grimm et al., 2018). Such behavior-based signal processing strategies require systematic tests that elicit natural behavior. For example, interactive conversation was found to elicit different head movement behavior from the listener than isolated listening (Hadley et al., 2019; Hartwig et al., 2021). It has been suggested that widely used evaluation methods and measures, such as speech reception thresholds, are poor predictors of device performance in real life when used to evaluate more complex algorithms (Keidser, 2016; Naylor, 2016). This may be partly due to the fact that the head movement behavior of listeners in these traditional methods does not reflect real life behavior. One way of overcoming the limitations of traditional evaluation methods is to base evaluations of behavior-controlled hearing devices on interactive conversations (Grimm et al., 2023). In real-world environments, undisturbed audio signals cannot be accessed directly, and experiments are difficult to reproduce due to varying environmental conditions. Conversation-based paradigms for evaluating hearing devices can be performed in more controlled conditions using virtual audio-visual environments in the laboratory, thereby increasing precision and reproducibility.

In face-to-face conversations, non-verbal cues play a significant role. For instance, speakers’ head and eyebrow movement has been demonstrated to enhance speech understanding by aligning with prosodic cues (Graf et al., 2002). Munhall et al. (2004) found that natural head movements facilitate syllable identification. In addition, head orientation may support turn-taking, for example by visually indicating the intended addressee of a question. Furthermore, head movement and eye contact were found to facilitate communication and establish a sense of comfort (Aburumman et al., 2022; Rogers et al., 2022).

Given the critical role of non-verbal cues in real conversations, their simulation in virtual environments becomes essential for maintaining ecological validity in experimental paradigms. When moving from face-to-face conversations to virtual conversation scenarios, the influence of non-verbal behavior can still be present even when the interlocutors are represented by animated avatars. Hendrikse et al. (2018) showed that the listener's head movement behavior depends on the level of lip movement and head orientation animation of virtual animated characters when following a conversation. It was also found that manipulating the head movements of an interlocutor in an active conversation changes the movements of the receiver (Boker et al., 2009). Therefore, to achieve high ecological validity in virtual experiments, it is crucial to accurately represent interlocutors’ non-verbal cues—such as head orientation, gaze, and nodding—within the virtual environment.

Our overarching aim is to provide paradigms for the evaluation of hearing devices during interactive verbal conversation, based on physical measures of communication behavior, such as head movement, gaze or speech features. This work specifically aims to contribute to current research by investigating the effects of head movements of avatars on the listener's behavior and experience in order to assess their relevance in interactive scenarios. The research question of this study is:

Does the Realism of Head Movements of Avatars Affect Behavior and Experienced Involvement in Virtual Environment Scenes?

Our approach involved observing participants in real triadic conversations. Triadic conversations are the minimum configuration in which turn-taking results in horizontal head movement. They are also a common scenario in everyday life (Peperkoorn et al., 2021). To systematically assess the effect of head movements on conversational behavior, the conversation was conducted via telepresence between a study participant and two confederates. The confederates were represented by avatars with varying levels of animation. These avatars were displayed on a projection screen using virtual animated characters. Two levels of background noise were used to control the level of difficulty. The measurement environment was designed to resemble a typical pub conversation, both visually and acoustically. To investigate the effects on a reference group, the participants were young people with reported normal hearing.

We expected that animating head movements in a way to provide realistic non-verbal cues would facilitate the conversation by supporting speech understanding and turn predictability, which may be reflected in an altered speech behavior as well as movement behavior during conversation. We also anticipated that these effects would be more pronounced in the presence of high background noise levels, based on the assumption that normal-hearing participants tend to rely more on auditory cues than visual cues in quiet environments, but more on visual cues in noisy environments where verbal interaction is limited and visual communication becomes more important. To quantify the effects, we analyzed measures which were shown in the literature to be affected either by noise or by non-verbal cues. The speech behavior can be divided into voice-related features and timing of speech. One candidate is vocal effort, which was shown to be related to the success of conversations (Beechey et al., 2018). Changes in vocal effort can be measured in terms of the Lombard effect (Brumm & Zollinger, 2011). In face-to-face conversations, an increase in speech level of 3–4 dB per 10 dB increase in noise level is typically observed (Hadley et al., 2019, 2021). Related to the timing of speech, it was found that utterance duration was shorter in high background noise during face-to-face conversation, which may reflect the strategy to share less or simplified information in adverse conditions (Hadley et al., 2019, 2021). In contrast, utterance duration was found to increase in noise in a dyadic puzzle task (Beechey et al., 2018). A facilitated ability to predict the time points of speaker turns is expected to lead to shorter transfers of the speaker floor, referred to as floor transfer offsets (FTOs) (Hadley et al., 2021; Heldner & Edlund, 2010; Levinson & Torreira, 2015).
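As a rough illustration of the Lombard slope quoted above (3–4 dB of speech level per 10 dB of noise level), the following sketch computes the change in speech level per decibel of noise increase. The function name and the example levels are hypothetical and are not data from this study.

```python
def lombard_slope(speech_quiet_db, speech_noise_db, noise_quiet_db, noise_noise_db):
    """Return the speech level change in dB per dB of noise level change."""
    noise_delta = noise_noise_db - noise_quiet_db
    if noise_delta == 0:
        raise ValueError("noise levels must differ")
    return (speech_noise_db - speech_quiet_db) / noise_delta


# Hypothetical levels: the noise rises by 10 dB, the speech level by 3.5 dB.
slope = lombard_slope(60.0, 63.5, 50.0, 60.0)
print(f"{slope * 10:.1f} dB per 10 dB noise increase")
```

A slope between 0.3 and 0.4 would correspond to the range reported for face-to-face conversations.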

Related to head movement behavior, we expected reduced search behavior in head movements with increasing level of head movement animation (Hadley & Culling, 2022). This may be expressed by a smaller angular distance between the participant's head orientation and the currently active avatar. Additionally, the participant's head orientation range was expected to increase with increasing level of animation. The head orientation range represents the range between the two most prominent orientation angles, which were expected due to the two conversation partners. Besides head orientation, listeners tend to lean in closer to the interlocutors in noisy conditions (Hadley et al., 2019). Similarly, a reduced level of head movement animation could also lead to more leaning-in behavior.

With an increased level of head movement animation and an improved turn predictability, an increased perceived conversation success (Nicoras et al., 2023) may be present. Perceived conversation success is a multifaceted concept, subjectively rated by each conversation partner. It reflects both sensory (e.g., hearing ability) and psychosocial (e.g., connection and comfort) dimensions of interaction. Unlike objective methods of measuring conversation success (e.g., based on communication outcome, see Örnolfsson et al., 2023), it can be assessed using a questionnaire.

A virtual environment can be evaluated by the sense of presence experienced by the user. The iGroup Presence Questionnaire (IPQ) (Schubert et al., 2001) comprises the factors of spatial presence, involvement, and the realism of the virtual environment. This questionnaire was also used in a previous study (Hendrikse et al., 2019) to evaluate non-interactive virtual scenes of everyday life, using the same virtual animated characters as in the present study. Here, we expected an increased sense of presence in the virtual environment with an increased level of head movement animation.

Methods

Design and Task

Free interactive triadic conversations were conducted in virtual reality using telepresence technology. The conversation scenario consisted of three interlocutors, the study participant and two confederates, seated at equal distances around a virtual table in a virtual pub (cf. Figure 1). The confederates were presented with the same virtual acoustic environment, so they experienced noise levels similar to those experienced by the participant in every condition. For the participants, the two confederates were visually represented on a large cylindrical screen by avatars consisting of virtual animated characters with different levels of head movement animation, or by live videos. These live videos were video overlays of the upper torso, inserted into the visual simulation of the scene. They showed the heads and faces of the interlocutors, similar to a video call, while maintaining the virtual surrounding. This setup facilitated the manipulation of the confederates’ head movements. All three interlocutors were seated in different rooms, which allowed access to separate speech and noise audio signals. For the confederates, the participant and the other confederate were visually represented over live video on a computer screen at the correct angular position. The participants as well as the confederates were asked to behave naturally.

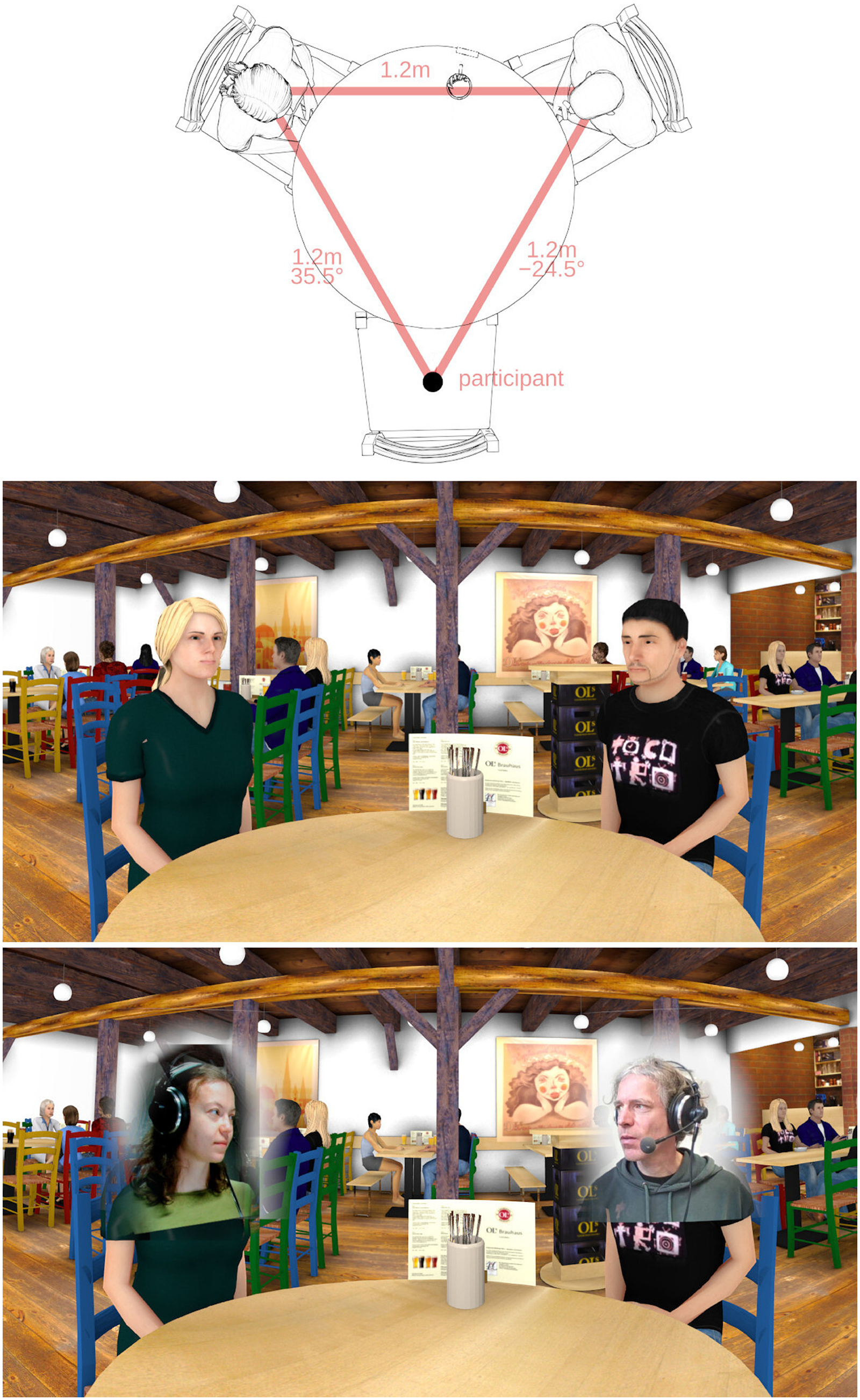

Top panel: top view of the virtual conversation setup. The participant is virtually sitting on the empty chair. Center panel: view of the virtual environment from the participant's perspective. Bottom panel: view of the virtual environment with real-time video textures in front of the avatars’ faces, which was used in the condition “video.”

Only one participant was invited to each session. The two confederates took part in several sessions. One confederate controlled the ongoing experiment and was referred to as the experimenter. One avatar always represented the experimenter and the other avatar represented a confederate, one of two different people, each taking part in about half of the measurements. The participants were informed before the experiment that the other two interlocutors were the experimenter and a confederate.

To achieve active participation, participants were instructed to maintain a free conversation about casual topics. Picture cards, as suggested by Smeds et al. (2021) and also used by Hartwig et al. (2021), were used to spark the conversation topics, which could freely change, or span multiple conditions. The picture cards were given only to the confederates to keep the focus of participants on the avatars. For a detailed evaluation of avatar head movement effects, multiple levels of head movement realism were implemented together with two contrasting levels of background noise, resulting in a 2 × 4 factorial design, see Table 1. In the visually static condition (“stat”), the avatar's head and gaze were fixed over the center of the table. In the automatic head movement condition (“auto”), the avatar reactively oriented the head and eye gaze toward the defined target angle, cued by speech onset detection (Hendrikse et al., 2018). To include a more complex behavior in one condition, the confederate's head movements were recorded by a motion sensor, transmitted to the visual simulation, and used to control the avatar's head orientation in real-time (“trans”). In the condition which was considered to be closest to a face-to-face conversation, a live video image of the confederate's head and shoulders was transmitted to the position of the avatar in the virtual scene (“video”). In the last two conditions, the movements of the confederates matched the spatial setup in the virtual visual simulation presented to the participants, due to the spatial arrangement of the computer screens and the camera.

Selected Independent Variables and Levels of Avatar's Head Movement (“Animation”) and Background Noise (“Noise”) in a 2 × 4 Factorial Design.

Each audiovisual condition lasted at least 5 min and was manually switched to the next condition by the experimenter at an appropriate time to avoid abrupt interruptions of the conversation. The order of conditions was pseudo-randomized in advance. After each condition, participants completed a questionnaire in which they rated their experience during the last conversation.
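The 2 × 4 design and a reproducible condition order can be sketched as follows. The condition labels are taken from the text; the randomization scheme itself is not described here, so the plain seeded shuffle (without any counterbalancing across participants) and the function name are assumptions made for illustration only.

```python
import itertools
import random

ANIMATION_LEVELS = ["stat", "auto", "trans", "video"]  # labels from the text
NOISE_LEVELS = ["quiet", "noise"]                      # labels from the text


def condition_order(seed):
    """Return all 2 x 4 conditions in a reproducible shuffled order."""
    conditions = list(itertools.product(NOISE_LEVELS, ANIMATION_LEVELS))
    rng = random.Random(seed)  # a fixed seed makes the order reproducible
    rng.shuffle(conditions)
    return conditions


order = condition_order(seed=1)  # the seed value is arbitrary
for noise, animation in order:
    print(noise, animation)
```

Fixing the order in advance, as in this sketch, allows the experimenter to switch conditions manually while still following a predetermined sequence.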

Study Participants

Sixteen participants (nine female, seven male) were recruited via a university-wide online advertisement. The study was approved by the Commission for Research Impact Assessment and Ethics of the Carl von Ossietzky University of Oldenburg (approval number EK/2021/068), and participants were reimbursed for their time. Their age ranged from 19 to 32 years (mean 23.8 years). They reported having no hearing impairment, and language skills at or close to a native speaker level in German, the language of conversation. Familiarity with virtual reality was not required or assessed. Vision was corrected to normal if necessary. One participant was acquainted with both the experimenter and the confederate before the experiment, one participant with one of them, and 14 participants with neither. The confederates also met the inclusion criteria of the study participants.

Measurement Setup

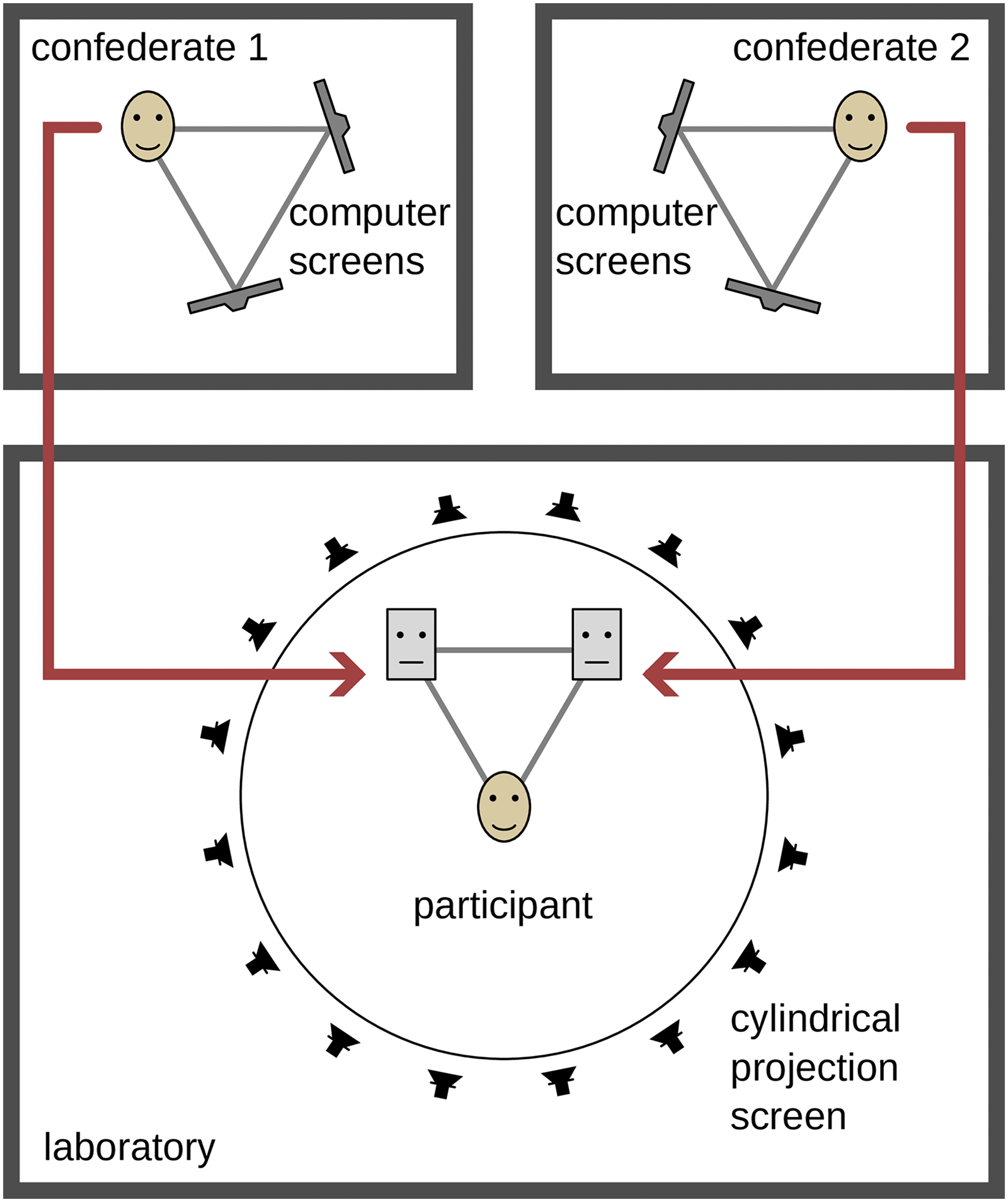

The measurement setup was distributed over three rooms: one for the participants with an audiovisual reproduction using loudspeakers and a cylindrical video projection, and one for each confederate, with binaural headphone reproduction and video reproduction on computer screens (cf. Figure 2).

Overview of the setup. The study participant was seated in the laboratory, surrounded by loudspeakers behind a cylindrical screen. The confederates were placed in separate rooms. For the participant, they were represented as avatars. The audio signals were transmitted in low-delay real time. The spatial representation was rendered using a low-delay real-time virtual acoustics engine. Depending on the measurement condition, the head movements or a live video image was transmitted from the confederates to the avatars.

The two confederates were seated in separate rooms. Each confederate wore a headset (AKG HSC 271) equipped with a head tracking sensor, see section

The laboratory where the participant was seated was acoustically treated with absorbers on the ceiling and walls, a carpet on the floor and a heavy stage backdrop around the spherical loudspeaker array. The resulting reverberation time of the laboratory was below 0.2 s for all frequencies above 500 Hz and below 0.4 s for 125 and 250 Hz. The Direct-to-Reverberant Ratio increased from −3.7 dB at 125 Hz to 8.9 dB at 4 kHz, measured from the frontal reproduction loudspeaker at the listening position. One of the confederates’ rooms was another acoustic laboratory and the other was an office.

The audio delay from the confederate microphone to the position of the participants was 49.8 ms, at a block size of 256 samples via TCP. This delay is larger than the typical delays reported by Grimm (2024) because of the relatively large block size and the TCP transmission. The delay between the remote head movement and a movement of the projected image of the avatar was approximately 180 ms. The streamed video pictures had a delay from confederate camera to the lab projection screen of 511.9 ms. The audio delay was not adjusted to the visual delay and stayed consistent over all measurement conditions.
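Because the audio delay was not matched to the visual delays, the visual streams lag behind the audio. The following sketch works out these offsets from the reported values. The sampling rate is not stated in this section, so the 48 kHz used for the block duration is an assumption.

```python
AUDIO_DELAY_MS = 49.8            # from the text
HEAD_MOTION_DELAY_MS = 180.0     # from the text (approximate)
VIDEO_DELAY_MS = 511.9           # from the text
BLOCK_SIZE = 256                 # samples, from the text
ASSUMED_SAMPLE_RATE_HZ = 48000   # assumption, not stated in this section

# Duration of one audio block under the assumed sampling rate.
block_ms = 1000.0 * BLOCK_SIZE / ASSUMED_SAMPLE_RATE_HZ

# Lag of each visual stream relative to the audio signal.
head_lag_ms = HEAD_MOTION_DELAY_MS - AUDIO_DELAY_MS
video_lag_ms = VIDEO_DELAY_MS - AUDIO_DELAY_MS

print(f"block duration: {block_ms:.2f} ms")
print(f"avatar head movement lags audio by {head_lag_ms:.1f} ms")
print(f"live video lags audio by {video_lag_ms:.1f} ms")
```

Under these numbers, the live video trails the audio by roughly 460 ms, whereas the transmitted head movements trail it by roughly 130 ms.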

Data from the rating questionnaire was collected via a tablet and sent to the data logger. Short term RMS speech levels were measured in each audio block and sent to the data logger. Data collection was centralized on the audio rendering PC in the laboratory where the participant was seated using the data logging module in TASCAR. This allowed all data sources to be synchronized.
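A block-wise short-term RMS level, as logged here once per audio block, can be sketched as below. This is a minimal illustration and not the TASCAR implementation: the function name, the silence floor, and the test signal are hypothetical, and the result is in dB relative to full scale, so a calibration offset would be needed to obtain dB SPL.

```python
import math


def block_rms_levels_db(samples, block_size=256, floor_db=-120.0):
    """Return one RMS level in dB re full scale per complete block."""
    levels = []
    for start in range(0, len(samples) - block_size + 1, block_size):
        block = samples[start:start + block_size]
        mean_square = sum(x * x for x in block) / block_size
        if mean_square > 0.0:
            levels.append(10.0 * math.log10(mean_square))
        else:
            levels.append(floor_db)  # silent block: avoid the log of zero
    return levels


# Hypothetical test signal: one block at half scale, one silent block.
signal = [0.5] * 256 + [0.0] * 256
levels = block_rms_levels_db(signal)
print(levels)
```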

Virtual Environment

The virtual space represented a pub with other guests at several tables in the background (cf. central panel of Figure 1). The dimensions, acoustic characteristics and audiovisual model are based on an existing room, the “Ols Brauwerkstatt” in Oldenburg, Germany (Grimm et al., 2021). The participants were located at a round table together with two other interlocutors at approximately equal distances (

In the acoustic model, the confederates were virtually represented by omnidirectional point sources. Noise sounds were first-order ambisonics field recordings of a babble noise in the local university cafeteria during lunchtime and a refrigerator sound (Grimm, Kothe, et al., 2019). The noise sounds were implemented as diffuse sound fields, i.e., with uniform sound levels within a defined volume. Background music (Dokapi, 2015) was played through two simulated loudspeakers in the room, using a physical model based loudspeaker cabinet simulation of a loudspeaker with a resonance frequency of 80 Hz, see the documentation of the module “spksim” in Grimm (2022) for details. To achieve a higher level of immersion, early reflections and late reverberation of the voices of the confederates and the participants were simulated. The measured broadband reverberation time (T30) at the listening position in the laboratory for a source at the interlocutor's position was 1.27 s (early decay time: 0.17 s), and the Direct-to-Reverberant Ratio was 7.1 dB. In the “quiet” conditions, the refrigerator sound ensured a steady noise floor level at 37.3 dB SPL (A) (48.2 dB SPL (C)) to mask the varying ventilation noise of the projectors. In “noise,” the sounds fridge, babble and music resulted in an

In the visual simulation of the virtual environment, the avatars were blinking at random times and moved slightly back and forth to indicate breathing. Their eye gaze was either directed horizontally over the center of the table (condition “stat”) or toward the other avatar's or participant's head (conditions “auto” and “trans”). When the avatar's head crossed the central angle between the two interlocutors, its gaze shifted from one to the other. This gaze behavior was intended to simulate typical human behavior without conveying additional information. While the confederates were speaking, the avatar's lips moved in real time via a speech-based algorithm (Llorach et al., 2016). The virtual characters had no facial expression, gestures or body movement. For the “video” condition, a real-time video of the confederate's shoulders and head was streamed to the Blender scene and shown on a flat texture in front of the avatar's face, see lower panel of Figure 1. The virtual characters in the background were only visible during noisy conditions.

Measures and Analyses

The communication behavior of participants was evaluated regarding speech and head movement. The subjective experience of participants was assessed via a rating questionnaire. For each selected measure, cf. Table 2, one data point per participant and condition was calculated. Variance within conditions was not analyzed, except for the head orientation measure, where range was used as a separate measure.

Overview of the Dependent Variables and Corresponding Measures.

Questionnaire Items That Were Asked After Each Condition.

The average

Scheme of possible combinations of speech segments in a triadic conversation. Each row represents one speaker, the dark gray bars are speech activity over time. Gaps and overlaps are marked by light blue bars. Only the times of speech gaps and overlaps were defined as turn takes.
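The gap and overlap scheme can be expressed as a floor transfer offset (FTO): the start time of the next speaker's utterance minus the end time of the previous speaker's utterance, so that gaps are positive and overlaps negative. The sketch below is one possible reading of that scheme. The utterance tuples, the rule for skipping utterances that end before the current speaker stops, and the function name are assumptions, not the procedure used in this study.

```python
def floor_transfer_offsets(utterances):
    """Return FTOs in seconds for (speaker, start, end) utterances.

    Positive values are gaps, negative values are overlaps.
    """
    ftos = []
    floor = None  # utterance that currently holds the floor
    for utt in sorted(utterances, key=lambda u: u[1]):
        speaker, start, end = utt
        if floor is None:
            floor = utt
            continue
        if end <= floor[2]:
            # Ends before the floor holder stops (e.g. a backchannel):
            # not counted as a floor transfer in this sketch.
            continue
        if speaker != floor[0]:
            ftos.append(start - floor[2])
        floor = utt
    return ftos


# Hypothetical speech segments of a triadic conversation (seconds).
utterances = [
    ("participant", 0.0, 2.0),
    ("experimenter", 2.3, 4.0),
    ("confederate", 3.6, 5.0),
    ("participant", 4.2, 4.5),   # lies within the confederate's utterance
    ("participant", 5.5, 6.0),
]
ftos = floor_transfer_offsets(utterances)
gaps = [f for f in ftos if f > 0]
overlaps = [f for f in ftos if f < 0]
print(ftos)
```

Here the example yields two gaps and one overlap; the short participant utterance inside the confederate's turn does not produce a floor transfer.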

The

Despite their similar definitions, both measures—angular distance and head orientation range while listening—were retained for the analysis. This allowed for better comparisons with prior literature and captured the differences between speaking and listening time intervals.

The

In addition, a factor analysis was conducted to examine the effects of animation level and background noise on the latent constructs “sense of presence” and “perceived conversation success.” For each construct, a one-factor model was tested using the respective items: Q1–Q5 and Q7 for sense of presence, and Q6, Q8–Q10 for perceived conversation success. Values were pooled across all participants. Factor loadings were estimated using the regression method for factor score prediction, with no rotation applied to maintain interpretability of the single underlying factor.

For the experience rating data, a Kruskal–Wallis rank sum test was performed, for the factors “animation level” and “noise level.” In case of a significant effect, a Dunn's test with Bonferroni correction of the

Results

The study data consists of 16 sessions, with one missing session for head movement data due to a technical error.

Speech Behavior

The effect of “noise” on participants’ speech level was significant, see Table 4, with a mean increase of 10

Summary of Main Effects of Communication Behavior as Reported by the ANOVA With

When

The median utterance durations during conversation are shown in Figure 4. Pooled across all conditions, the median duration was 1

Median utterance duration during conversation.

The median durations of speech gaps and overlaps at turn takes are shown in Figure 5, upper panels. For comparison with the literature, histograms of FTOs are presented in the two lower panels. The median duration of all detected speech gaps was 0.51 s, and speech overlaps had a median duration of 1.11 s. ANOVA results showed that speech gaps and overlaps were significantly affected by “noise,” but not by “animation,” see Table 4. Speech gap duration increased in noise by a mean of 0.074 s. A possible interaction effect was not significant (

Upper panels: median duration of speech overlaps (left) and gaps (right) at turn takes between the three interlocutors, relative to individual median across all conditions. The speech overlap duration is noted in negative values, i.e., more negative numbers denote a larger overlap. Lower panels: Floor transfer offset (FTO) histogram in 250 ms bins for the quiet (q) and noise (n) conditions, together with a probability density estimate to visualize the shift in median values with animation level and noise. The median FTO and number of floor transfers N is indicated for each condition.

Head Movement Behavior

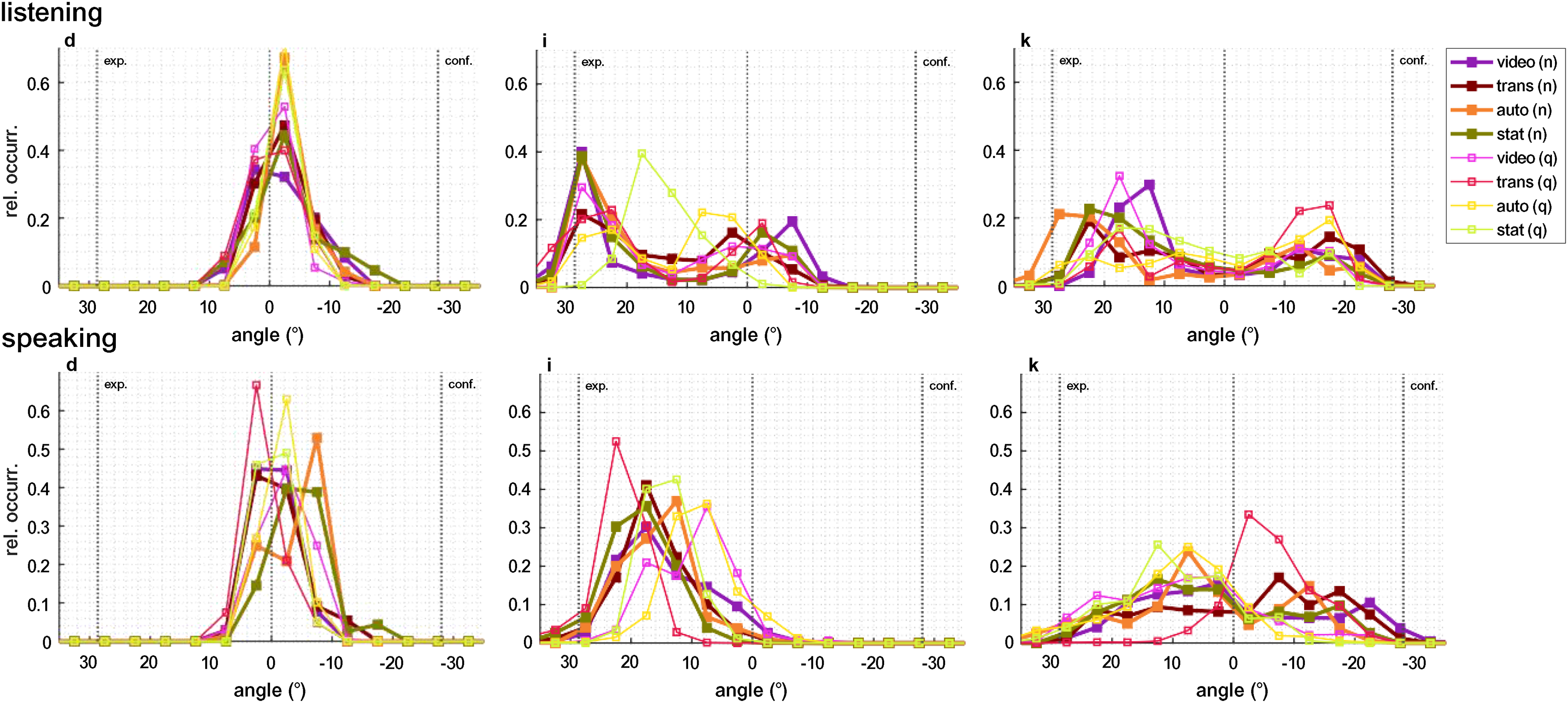

Participants consistently did not orient their heads completely toward the avatars’ faces, but showed some amount of head angle undershoot. Figure 6 shows histograms of head yaw angle in the different conditions for three participants with typical, yet different, head movement patterns. During the listening phases, some participants exhibited a clear bimodal distribution of yaw angles. Other participants barely moved their heads. In Figure 11 (appendix), individual histograms of head movement angles of every recorded session are displayed. Visually different patterns were found between listening and speaking, most pronounced in individuals (f) to (o).

The relative occurrence of the head yaw angle for three typical participants is shown during listening (top panel) and speaking (bottom panel) in all conditions. The vertical lines indicate the positions of the avatars representing the experimenter and the confederate, as well as the center between them. Occurrence values sum to 1 and the angle is divided into 5° increments. During listening, a bimodal distribution can be seen for participants (i) and (k), but not for (d), who did not move their head. Participant (i) oriented the head closer to the experimenter than to the confederate, which may have been caused by a torso orientation that was more toward the experimenter. This may be because, at the beginning of the experiment, the experimenter dominated the conversation by providing general instructions. While speaking, most participants did not exhibit a bimodal distribution.
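A relative-occurrence histogram of this kind, with 5° bins and values that sum to 1, can be sketched as follows. The angle limits, the function name, and the example yaw angles are hypothetical.

```python
import math


def yaw_histogram(yaw_deg, bin_width=5.0, lo=-90.0, hi=90.0):
    """Return (bin_left_edges, relative_occurrence) for yaw angles in degrees."""
    n_bins = int(round((hi - lo) / bin_width))
    counts = [0] * n_bins
    for angle in yaw_deg:
        if lo <= angle < hi:
            index = int(math.floor((angle - lo) / bin_width))
            counts[min(index, n_bins - 1)] += 1  # guard against rounding at the edge
    total = sum(counts)
    edges = [lo + i * bin_width for i in range(n_bins)]
    occurrence = [c / total for c in counts] if total else [0.0] * n_bins
    return edges, occurrence


# Hypothetical yaw samples clustering around two interlocutor directions.
yaw = [-22.0, -21.0, -3.0, 18.0, 19.0, 21.0, 22.0, 23.0]
edges, occurrence = yaw_histogram(yaw)
```

A bimodal listening pattern would appear as two separated groups of non-zero bins, one near each avatar position.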

The overall median angular distance was not symmetric, participants were more closely oriented toward the avatar range IQR = 1

Participants’ angular distance to the actively speaking avatars’ faces in each condition, referenced to the individual median over all conditions. Lower values represent a more accurate orientation; values below zero indicate an orientation closer to the avatars’ faces than the individual baseline. Undershoots and overshoots cannot be distinguished; however, most participants did not show overshoots (see Figure 11). One outlier is outside the displayed

Summary of Effects of the Experience Rating as Reported by the Kruskal–Wallis Rank Sum Test, With

The range of head yaw angles used differed between individuals (cf. Figure 11); individual median values over all conditions during listening or speaking ranged from 11

Head orientation range during “listening” (left) and “speaking” (right), referenced to the individual median over all conditions. A higher value represents a wider angle range. Two data sets had a “speaking” period below 15 s in at least one condition and had to be excluded.
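Referencing each condition to the individual median over all conditions, as done for several measures shown in the figures, removes between-participant offsets before conditions are compared. A minimal sketch, with hypothetical condition keys and values for a single participant:

```python
import statistics


def reference_to_individual_median(values_by_condition):
    """Subtract a participant's median over all conditions from each value."""
    baseline = statistics.median(values_by_condition.values())
    return {cond: value - baseline for cond, value in values_by_condition.items()}


# Hypothetical head orientation ranges (degrees) of one participant.
ranges_deg = {
    "quiet/stat": 20.0, "quiet/auto": 24.0, "quiet/trans": 26.0, "quiet/video": 30.0,
    "noise/stat": 22.0, "noise/auto": 25.0, "noise/trans": 27.0, "noise/video": 31.0,
}
referenced = reference_to_individual_median(ranges_deg)
print(referenced)
```

After referencing, the median of each participant's values is zero, so positive values indicate a wider range than that participant's own baseline.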

The head translation toward the screen which displayed the two avatars was affected by noise, see Table 4. Participants positioned their heads 3

Experience Rating

Participants rated their presence in the virtual space (Q1, see Table 3) and their co-presence with their conversation partners (Q2) with a median value of +2

Rating values of questionnaire items regarding presence, involvement and realism of the virtual scene. The English labels are parts of the translated original questions in German (cf. Table 3).

For all four questions regarding perceived conversation success (see Figure 10), the effect of noise was significant, as shown by the Kruskal–Wallis rank sum test. Participants indicated that they were less spoken to in a helpful way (Q6) and less able to listen easily (Q8). Participants also rated that in noise, they were less able to share information as desired (Q9) and had to make a higher effort to be understood (Q10). Based on the Kruskal–Wallis rank sum test, no effect of animation level on the perceived conversation success could be found.
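For a factor with two levels, such as noise, the Kruskal–Wallis statistic H and its chi-square approximation with one degree of freedom can be computed with the standard library alone. The sketch below is illustrative and is not the analysis code of this study: the ratings are hypothetical, and the four-level animation factor would require a chi-square distribution with three degrees of freedom, which this sketch does not cover.

```python
import math


def average_ranks(values):
    """Return 1-based ranks, with tied values sharing their mean rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2.0 + 1.0
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def kruskal_wallis_two_groups(a, b):
    """Return (H, p) for two groups; p uses the chi-square limit with 1 df."""
    pooled = list(a) + list(b)
    n = len(pooled)
    ranks = average_ranks(pooled)
    sum_a = sum(ranks[:len(a)])
    sum_b = sum(ranks[len(a):])
    h = 12.0 / (n * (n + 1)) * (sum_a ** 2 / len(a) + sum_b ** 2 / len(b)) - 3.0 * (n + 1)
    # Tie correction: divide H by 1 - sum(t^3 - t) / (n^3 - n) over tie groups.
    tie_counts = {}
    for v in pooled:
        tie_counts[v] = tie_counts.get(v, 0) + 1
    correction = 1.0 - sum(t ** 3 - t for t in tie_counts.values()) / (n ** 3 - n)
    if correction == 0.0:
        raise ValueError("all values are identical")
    h = max(h / correction, 0.0)
    p = math.erfc(math.sqrt(h / 2.0))  # chi-square survival function for 1 df
    return h, p


# Hypothetical ratings of one questionnaire item in quiet and in noise.
quiet = [2, 3, 1, 2, 3, 2]
noise = [0, -1, 1, 0, -2, 1]
h_stat, p_value = kruskal_wallis_two_groups(quiet, noise)
print(f"H = {h_stat:.2f}, p = {p_value:.4f}")
```

The p-value relies on the large-sample chi-square approximation, which is only approximate for small groups such as the ones in this example.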

Upper panels: Rating values of questionnaire items regarding perceived conversation success. The English labels are parts of the translation of the original questions in German (cf. Table 3). Note that Q10 has a reversed direction. Bottom panel: latent factor related to “conversation success.”

A factor analysis of the questionnaire data was performed, by splitting the questions into the two constructs “sense of presence” and “perceived conversation success” (see Table 2). The resulting loadings and specific variances of each question are shown in Table 6. Only a question which is not well explained by the common factors shows a significant effect of animation level (Q5; see Table 5). The loading of Q10 is negative, which can be explained by the fact that the scale was reversed for that question (from low effort to high effort), while the other items were ordered from poor perception to good perception. The Kruskal–Wallis rank sum test revealed no significant effect of noise or animation level on the common factor related to “sense of presence.” A significant effect of noise was found for the common factor related to “perceived conversation success” (

Discussion

In this study, we investigated the influence of avatars’ head movements on selected measures of speech behavior and self-motion, and collected feedback via an experience rating questionnaire. We expected an influence of the level of animation on communication behavior in background noise conditions, reflecting strategies to compensate for the degraded acoustic information, and to a lesser extent also in quiet conditions. Furthermore, we expected an improved perceived conversation success with increasing level of animation, because head movement cues provide non-verbal information which can be utilized to predict turn takes (Hadley & Culling, 2022; Templeton et al., 2022).

The increased level of background noise did not affect the utterance duration of the participants, in contrast to Hadley et al. (2021), who reported shorter conversational utterances in noise. The observed significant increase in the participants’ median utterance duration with higher levels of animation can be caused either by overall longer utterances or by fewer short utterances during conversation, such as fewer backchannels, i.e., affirmative utterances (Borch Petersen et al., 2023), during listening. In line with the argumentation of Hadley et al. (2021), longer utterances could indicate that, with higher levels of avatar animation, participants shared more complex, i.e., detailed, verbal information. It may be the case that different behavioral patterns were present: for example, long utterances in quiet could contain more complex information, whereas long utterances in noise could be used to keep the conversation going. However, this is speculative, as the motivation for longer utterances could not be determined with our data.

Utterance duration values recorded here differed from those in the literature on free face-to-face conversations (median over all conditions here 1.08 s, in contrast to values around 4 s in Hadley et al. (2021)). In speech corpora, utterances have a mean duration of about 2 s, independent of language (Gonzalez-Dominguez et al., 2015). Although the participants’ task and the analysis approach are considered to be very similar to those of Hadley et al. (2021), differences in the detection and removal of backchannel utterances may remain, leading to a lower median value of utterance duration. It can be assumed that the speech activity detection algorithm had a considerable influence on this measure.

Although a decrease in median FTO values was present, neither speech gaps nor overlaps between speaker turn takes were significantly affected by head movement animation. It has to be considered that this measure is based on the interaction of all three interlocutors, of which only one person was exposed to the visual conditions. When all three interlocutors are immersed in the visual environment, this effect may be larger. Contrary to expectations, gaps tended to be shorter with higher animation levels in quiet, but less so in noise (see Figure 5). As improved turn predictability is expected to affect speech gaps (Hadley et al., 2021; Heldner & Edlund, 2010; Levinson & Torreira, 2015), it seems that the non-verbal information did not facilitate the conversation in acoustically adverse conditions. Although head movements have been found to accompany speech prosody and support, for example, syllable identification (Munhall et al., 2004), Saryazdi and Chambers (2022) suggested that in noise, a lack of benefit from real-time visual information may be related to occupied cognitive processing in adverse acoustic conditions when the audio-visual setting is complex, which was the case in this study.

The effect of noise on speech gaps and overlaps was significant: in the noisy conditions, overlaps were shorter and less frequent, and gaps were more pronounced, i.e., longer and more frequent. This indicates that the interlocutors took more time for their responses in higher background noise, which has also been found by Sørensen et al. (2020). Longer speech gaps are associated with increased cognitive load and processing time due to hindered access to verbal information, and possibly indicate a strategy to facilitate communication in noise (Sørensen et al., 2020).
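To make the gap and overlap measures concrete, the following is a minimal sketch of how floor transfer offsets (FTOs) could be derived from speech-activity intervals. It is not the analysis pipeline of this study: the function name, the tuple layout and the rule for skipping utterances that lie entirely within another talker's utterance are assumptions made for illustration. An FTO is taken here as the start of the next talker's utterance minus the end of the previous talker's utterance, so positive values are gaps and negative values are overlaps.

```python
from statistics import median
from typing import List, Tuple

# Hypothetical layout: (talker label, start time in s, end time in s)
Utterance = Tuple[str, float, float]


def floor_transfer_offsets(utterances: List[Utterance]) -> List[float]:
    """Return one FTO in seconds per speaker change (gap > 0, overlap < 0)."""
    ordered = sorted(utterances, key=lambda u: u[1])
    ftos: List[float] = []
    if not ordered:
        return ftos
    prev = ordered[0]
    for cur in ordered[1:]:
        talker, start, end = cur
        if end <= prev[2]:
            # Assumption of this sketch: an utterance that ends before the
            # current floor holder stops is not counted as a floor transfer.
            continue
        if talker != prev[0]:
            ftos.append(start - prev[2])
        prev = cur
    return ftos


# Toy triad with talkers A, B and C (made-up times, not study data).
example = [("A", 0.0, 2.0), ("B", 2.5, 4.0), ("C", 3.6, 5.0), ("A", 3.8, 4.5)]
ftos = floor_transfer_offsets(example)
gaps = [f for f in ftos if f > 0]
overlaps = [-f for f in ftos if f < 0]
print("median gap (s):", median(gaps), "median overlap (s):", median(overlaps))
```

In such a scheme, every choice that shifts the detected utterance boundaries also shifts the resulting gap and overlap durations.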

The magnitudes of gap and overlap duration in this study differ from the values reported in the literature. The median duration of speech gaps at turns in conversations found here is 506 ms, which is approximately 150–300 ms longer than the values typically reported (Heldner & Edlund, 2010; Levinson & Torreira, 2015), and the median duration of overlaps is 1108 ms, which is approximately 650–850 ms longer than the median values reported (Hadley et al., 2019; Heldner & Edlund, 2010; Levinson & Torreira, 2015). The literature typically reports on dyadic conversations. However, triads were found to show even shorter turn transition times than dyads, which can be related to an increased competition for the conversation floor (Holler et al., 2021). The differences in the duration of speech gaps and overlaps can be assumed to be due to the level-based detection of speech activity, which is highly dependent on the time constants in level meters—shorter time constants result in more gaps—and the level thresholds—higher thresholds result in more and longer gaps—used to detect the onset of speech. The possible exclusion of gaps and overlaps above a certain duration, e.g., as in dyads in Borch Petersen et al. (2023), was not considered here. As it is difficult to control for all the parameters involved (background noise level, speech microphone, frequency and spatial characteristics of microphones, and the annotation framework), absolute FTO times cannot be compared across studies. Nevertheless, relative differences between conditions can still be meaningful.
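The dependence on time constants and thresholds can be illustrated with a toy level-based detector. This is a sketch under stated assumptions, not the detector used in this study: the one-pole smoothing of the squared signal, the function names and all parameter values are hypothetical. The test signal consists of two constant-amplitude bursts separated by a 50 ms pause.

```python
import numpy as np


def speech_activity(signal, fs, tau_s, threshold_db):
    """Per-sample activity: smoothed level (dB re full scale) above a threshold."""
    alpha = np.exp(-1.0 / (tau_s * fs))  # one-pole smoothing coefficient
    power = np.asarray(signal, dtype=float) ** 2
    smoothed = np.empty_like(power)
    state = 0.0
    for i, p in enumerate(power):
        state = alpha * state + (1.0 - alpha) * p
        smoothed[i] = state
    level_db = 10.0 * np.log10(np.maximum(smoothed, 1e-12))
    return level_db > threshold_db


def count_gaps(active):
    """Count inactive runs that are enclosed between two active runs."""
    edges = np.diff(active.astype(int))
    offsets = np.flatnonzero(edges == -1)  # active -> inactive
    onsets = np.flatnonzero(edges == 1)    # inactive -> active
    return int(sum(1 for o in offsets if np.any(onsets > o)))


fs = 1000  # Hz, hypothetical sampling rate of the toy signal
toy = np.concatenate([np.zeros(100), np.ones(300), np.zeros(50),
                      np.ones(300), np.zeros(300)])

slow = speech_activity(toy, fs, tau_s=0.2, threshold_db=-20.0)
fast = speech_activity(toy, fs, tau_s=0.005, threshold_db=-20.0)
strict = speech_activity(toy, fs, tau_s=0.005, threshold_db=-3.0)

print("gaps with long time constant:", count_gaps(slow))
print("gaps with short time constant:", count_gaps(fast))
print("active samples at -20 dB vs -3 dB:", int(fast.sum()), int(strict.sum()))
```

With the long time constant the short pause is bridged, whereas the short time constant exposes it as a gap. Raising the threshold reduces the detected activity and thereby lengthens the gap.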

An increased transmission delay (measured: Δ

In the video condition, participants oriented their heads 2

The effect of background noise level on orientation toward avatars was comparable to the effect of video vs. static, with a mean head angle 1

The qualitative analysis of head orientation ranges (5th–95th percentile of yaw angles) revealed individual differences, ranging from 11

The head orientation range increased with the animation level during speaking phases, suggesting a stronger multimodal connection than just acoustic communication. During listening, however, this effect was only evident as a trend. Furthermore, the increase in head orientation range in noisy conditions may indicate a greater reliance on non-verbal cues in adverse acoustic environments.
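As an illustration of the range measure, the sketch below computes the 5th to 95th percentile range of yaw angles, optionally restricted to speaking or listening phases. The function name, the boolean phase mask and the synthetic data are assumptions for illustration, and angle wrap-around at ±180° is ignored.

```python
import numpy as np


def orientation_range(yaw_deg, mask=None, lower=5.0, upper=95.0):
    """Range between two percentiles of the yaw angle, in degrees."""
    yaw = np.asarray(yaw_deg, dtype=float)
    if mask is not None:
        # Keep only the samples of one phase, e.g. while speaking.
        yaw = yaw[np.asarray(mask, dtype=bool)]
    low, high = np.percentile(yaw, [lower, upper])
    return float(high - low)


# Synthetic yaw trace (not study data): a sweep from -50 to 50 degrees.
yaw = np.linspace(-50.0, 50.0, 101)
speaking = yaw >= 0.0  # hypothetical phase mask

print("overall range (deg):", orientation_range(yaw))
print("range while speaking (deg):", orientation_range(yaw, speaking))
```

Using percentiles instead of the minimum and maximum makes the measure less sensitive to brief, extreme head turns.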

Participants decreased their distance to the screen in noise by 3.0 cm. This magnitude is consistent with the literature for seated triadic and dyadic face-to-face conversations (Hadley et al., 2019, 2021), both of which report a decrease in interpersonal distance of about 3 cm with increasing noise level. This indicates that participants also used this universal strategy in the virtual environment, consciously or not, to optimize their acoustic situation, or to indicate difficulties. Due to real-time rendering, which included the subject's head position, leaning-in behavior changed the signal-to-noise ratio (SNR) not only due to proximity to the loudspeakers (which would have a smaller effect than in face-to-face conversations due to the greater distance between physical and virtual sources), but also due to changes in acoustic reproduction position. However, these effects are small in terms of SNR; therefore, we assume that it is more of a social gesture to indicate difficulty.
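The order of magnitude of such a proximity effect can be estimated with the inverse-distance law. This is a rough sketch only: it assumes a free-field point source and a noise level that does not change with head position, and the source distance of 1.5 m is a hypothetical value, not one taken from the setup of this study. The rendering-related changes mentioned above are not modelled.

```python
import math


def level_gain_db(distance_m: float, lean_in_m: float) -> float:
    """Level increase of a free-field point source when moving closer to it."""
    return 20.0 * math.log10(distance_m / (distance_m - lean_in_m))


# Hypothetical source distance of 1.5 m and the observed lean-in of 3.0 cm.
gain = level_gain_db(distance_m=1.5, lean_in_m=0.03)
print(f"estimated level gain: {gain:.2f} dB")
```

Under these assumptions, the gain stays well below 1 dB, which is in line with the interpretation of leaning in as a social gesture rather than an effective acoustic strategy.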

For all selected measures, we found most significant differences between the conditions “static” and “video,” rarely between “static” and “transmitted” or “automated” and “video,” and never between “automated” and “transmitted.” This suggests that other facial features, such as eye blinks or displays of emotion, may be more important than realistic head movements. Still, the ANOVA indicated that the effect sizes

We had expected a larger effect size of noise compared to animation. This was found for all selected measures of head movement behavior and all but one measure of speech behavior. The effect sizes

In this study, only the participant was surrounded by the virtual visual environment, which may have reduced the effect of visual conditions on interlocutor interaction, measured here by speech gaps and overlaps at speaker turn takes. Another asymmetry was the confederates’ prior knowledge of the study goals and the experimenter's awareness of the current condition, which may have had an influence on the confederates’ movement, speech levels and between-speaker speech gaps and overlaps.

As noted above, the magnitudes of the selected speech measures were highly dependent on the level threshold for the automatically detected speech activity. Studies using different speech analysis approaches may yield different speech measure values based on the analysis method alone.

The factor analysis revealed that the linear models cannot explain the effect of the animation level, as demonstrated by the large specific variance in the questions that show an individual effect of the animation. This suggests that the questionnaire used in this study was unsuitable to gain insight into the effects of head movement behavior.

One general limitation is the use of virtual reality and animated characters. Participants may have different levels of familiarity with virtual reality. This should be less of a problem when using a projection screen, as in this study. However, it may still affect participants’ willingness to immerse themselves in the virtual environment. Furthermore, animated characters do not display emotions, which could impact the conversations, as indicated by the largest differences being found in the video condition. Conversely, the use of virtual reality and telepresence can be viewed as a positive feature because it enables the explicit modification of salient conversation features.

Conclusions

In this study, we found that the transmission of head movements to avatars affects the behavior of participants in interactive, triadic conversations in virtual reality. In particular, we found small changes in participants’ head movements, in the duration of utterances, and in the experienced realism of avatars. The effect of transmitted head movements was never significantly different from automated head movements. However, as a general trend, most measures in the transmitted head movement condition fell between those in the video condition and the automated head movement condition. The largest effects were achieved when the video transmission was used to represent the remote interlocutors compared to static avatars.

Therefore, it can be concluded that in the context of interactive communication in virtual reality, the representation of conversation partners will affect the communication behavior of the interlocutor. The participants’ head movement differs between speaking and listening, which is relevant for the evaluation of behavior-based signal processing strategies. Nevertheless, the transmission of head movements alone does not provide sufficient non-verbal communication behavior and further research is needed into the effect of facial expressions, gestures and posture of the interlocutor on communication behavior.

Footnotes

Acknowledgments

We thank D. Rothenaicher for her assistance with the data collection.

Ethics Approval and Informed Consent Statements

The study was approved by the Commission for Research Impact Assessment and Ethics of the Carl von Ossietzky University of Oldenburg (approval number EK/2021/068). All participants provided written informed consent before participating in the study.

Funding

The authors disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: This work was supported by the Deutsche Forschungsgemeinschaft (DFG, German Research Foundation) [Project-ID 352015383—SFB 1330 project B1].

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Data Availability Statement

The behavioral and questionnaire rating data, analysis scripts, and supplemental figures have been made available at Kothe et al. (2015). The virtual pub environment is published under doi:10.5281/ZENODO.5886987 (Grimm et al., 2021).

Appendix

Loadings of Factor Analysis of the Two Constructs “Sense of Presence” and “Perceived Conversation Success.”

| Item | Loading | Spec. var. |
|---|---|---|
| Sense of presence | | |
| Q3 | 0.50 | 0.75 |
| Q4 | 0.65 | 0.57 |
| Q5 | 0.44 | 0.81 |
| Q7 | 0.37 | 0.86 |
| Perceived conversation success | | |
| Q6 | 0.67 | 0.55 |