Abstract

Audiological datasets contain valuable knowledge about hearing loss in patients, which can be uncovered using data-driven techniques. Our previous approach summarized patient information from one audiological dataset into distinct Auditory Profiles (APs). To obtain a better estimate of the audiological patient population, however, patient patterns must be analyzed across multiple, separated datasets, and finally, be integrated into a combined set of APs. This study aimed at extending the existing profile generation pipeline with an AP merging step, enabling the combination of APs from different datasets based on their similarity across audiological measures. The 13 previously generated APs (NA = 595) were merged with 31 newly generated APs from a second dataset (NB = 1,272) using a similarity score derived from the overlapping densities of common features across the two datasets. To ensure clinical applicability, random forest models were created for various scenarios, encompassing different combinations of audiological measures. A new set with 13 combined APs is proposed, providing separable profiles, which still capture detailed patient information from various test outcome combinations. The classification performance across these profiles is satisfactory. The best performance was achieved using a combination of loudness scaling, audiogram, and speech test information, while single measures performed worst. The enhanced profile generation pipeline demonstrates the feasibility of combining APs across datasets, which should generalize to all datasets and could lead to an interpretable global profile set in the future. The classification models maintain clinical applicability.

Introduction

Audiological datasets contain valuable knowledge about patients with hearing loss that can be exploited to learn about patterns in the data, for instance, for identifying patient groups that exhibit similar combinations of audiological test outcomes and may therefore benefit from a similar treatment. Data-driven techniques allow assessing these feature combinations without prior knowledge about audiological findings or diagnostic information. Performing such analyses on large-scale datasets has received increasing attention across medical fields as well as by policy-makers, due to its potential for precision medicine, the identification of risk factors for diseases, and health care quality control (European Commission. Directorate General for Health and Food Safety et al., 2016; Sinkala et al., 2020; Stöver et al., 2023, to name a few). In the domain of audiology, available data for large-scale analyses are currently spread across institutions. While research institutes often have smaller datasets available and may share them openly, larger datasets are available in clinics, but these are restricted in terms of access. Further, clinical or research institutions generally obtain data from patient groups in line with the respective institution's purpose or purpose of the respective study which may lead to considerably different patient populations and audiological measures across datasets. For example, a cochlear implant clinic will have a larger proportion of severe hearing deficits in its datasets than an ambulant audiological center, which will be frequented more often by hearing aid candidates. Even more variability across datasets comes into play if online remote testing with smartphones is included, allowing for data collection outside labs and clinics with a population exhibiting a mild-to-moderate hearing loss. Hence, a comprehensive data analysis describing the complete audiological patient population requires the combination of available, but distributed data across institutions.

Varying approaches exist to describe the audiological patient population. These approaches range from epidemiological studies regarding the prevalence of hearing loss in combination with different demographic factors (do Carmo et al., 2008; Roth et al., 2011), to developing optimal test batteries to characterize hearing deficits (Sanchez-Lopez et al., 2021b; van Esch et al., 2013), and advanced analyses into audiological groupings (Bisgaard et al., 2010; Saak et al., 2022; Sanchez-Lopez et al., 2020). Bisgaard et al. (2010) grouped audiograms into 10 standard audiogram patterns. However, as no additional measures are considered, a detailed description based on multiple domains of hearing, including, for example, suprathreshold auditory processing deficits like Plomp’s (1986) “D-component,” is not possible. Sanchez-Lopez et al. (2020) applied principal component analysis and archetypal analyses to multiple audiological measures to characterize patients into four distinct auditory profiles (APs). By defining the APs based on the hypothesis of two underlying distortion types they limit the number of profiles to four. Saak et al. (2022) proposed a profile generation pipeline which resulted in a first set of 13 APs. Again, APs were generated based on multiple audiological measures (e.g., speech testing, loudness scaling, audiogram). In contrast to Sanchez-Lopez et al. (2020), the number of APs is not chosen based on a hypothesis, but dependent on the patient population of the underlying dataset, hence, completely data-driven. Auditory Profiles are described in terms of data distributions across measures, which have the potential to integrate additional profiles into a combined set of APs. If sufficient datasets are accounted for that cover a large variety of the audiological patient population, such a combined set of APs could ultimately lead to a robust, comprehensive AP set that can provide a better estimate of the audiological patient population than single datasets.

To create such a comprehensive AP set, it is necessary to compare and integrate multiple datasets from various sources, leveraging the growing amount of available data, including various subtypes of hearing deficits as well as different information collected in the respective dataset. Combining multiple datasets from diverse institutions and geographical locations enables APs to more accurately reflect the audiological patient population. This is because multiple datasets can provide a more unbiased estimate than a single dataset, unless patients were acquired via stratified sampling. Hence, a suitable tool must be capable of integrating APs from different datasets including different tests and features, while ensuring interpretability, flexibility, and applicability to clinical practice, as well as complying with data privacy and protection regulations.

First, the process to combine profiles generated across datasets, as well as the resulting profiles need to remain interpretable to ensure their clinical relevance. Finding such interpretable subgroups or subtypes of diseases via big data analyses has proven valuable and transformative for medical research and precision medicine (Grant et al., 2020; Parimbelli et al., 2018). For example, molecular profiling has allowed us to identify two pancreatic cancer subtypes with subtype-specific biomarkers. The two subtypes have different clinical outcomes and may be more responsive to different subtype-specific treatments (Sinkala et al., 2020). In a similar way, APs could serve to identify subgroup-specific causes and treatment recommendations, for instance, by highlighting patient groups that exhibit a similar audiogram but may benefit from different fitting rationales for the hearing aids or by prompting subgroup-specific research. Sanchez-Lopez et al. (2021a), for example, have shown that listeners from their profiles preferred different hearing aid settings, supporting the value of profile-based rehabilitation.

Second, the process to combine APs should remain flexible to support various use cases. Research may require finer distinctions across audiological measures, while clinical practice may benefit from coarser profiles. More detailed profiles could represent subtypes of coarser profiles and could, thus, inform on potential subgroups of individual profiles. Likewise, varying audiological measures may be of interest for different future use cases. Here it should be feasible to combine profiles based on the largest available subset that includes a given measure. Hence, providing a method that allows for profiles to vary in terms of audiological measures and to be more detailed or coarser based on the individual use case helps in the applicability of the profiles in practice.

Third, for APs to be useful in clinical practice, the identified subgroup-specific causes and treatment recommendations need to be accessible to practitioners, such as clinicians, hearing care professionals, and researchers. This requires classification models tailored to different subsets of audiological measures (features), depending on clinical or research needs. For example, hearing care professionals in Germany, thus far, mainly use the audiogram and the Freiburger speech test in quiet in their hearing aid provision process, as these are required for a hearing aid indication and hearing aid approval for the reimbursement of the health care insurance (Gemeinsamer Bundesausschuss, 2021), even though research indicates that loudness scaling can be beneficial in hearing aid fittings (Oetting et al., 2018). Smartphone apps, in contrast, can easily perform loudness scaling (Xu et al., 2024), but measuring bone conduction thresholds without special equipment is not as straightforward, even though conductive hearing loss can be estimated with the antiphasic digits-in-noise test (De Sousa et al., 2022).

Finally, the process to combine and compare profiles needs to be able to also handle sensitive data, such that clinical datasets can be used that underlie data restrictions (European Parliament & European Council, 2016). For this purpose, profiles generated from privacy-sensitive datasets need to be fully anonymized, enabling them to be combined with other profiles, while ensuring the underlying raw data remains protected under privacy restrictions. Federated learning (Konečný et al., 2017; McMahan et al., 2017; Pfitzner et al., 2021) offers a promising solution for training machine learning models remotely while adhering to privacy constraints. This technique involves training models locally and iteratively updating a global model without sharing raw data. However, when integrating additional datasets for APs, multiple rounds of updates may not be feasible. In such cases, a one-shot federated learning framework (McMahan et al., 2017; Pfitzner et al., 2021) could serve as a solution for data privacy restrictions. That means, local APs could be generated at a specific institution, and the AP information could then be shared to update the already existing global APs. In other words, insights and knowledge could be extracted from different datasets via the APs, without the need to combine the respective datasets.

The present study proposes a flexible and interpretable approach for combining APs, generated with the analysis pipeline of Saak et al. (2022), that can be used in clinical practice. That means, we aim to investigate how APs can be merged from different datasets to allow for knowledge integration via APs (while maintaining information from all tests) and take the next steps toward an audiological patient population-based estimate of APs. To achieve this, we (1) aim to compare APs generated on two different datasets with respect to their profile similarity. To ensure the interpretability of the merging approach, we assess both the overall and feature-specific profile similarity across all merging iterations. We hypothesize that some APs will be similar across datasets, while the inclusion of further datasets will also result in new AP patterns, as a broader range of audiological patients is covered. Further, we (2) aim to analyze the feature importance of the distinct APs, such that APs can be easily identified by their specific patterns across audiological measures. Finally, we (3) aim to make the APs applicable by providing classification models for various settings, including research, hearing aid fitting, and potential smartphone applications. The current study, thus, aims to answer the following research questions:

Method

Datasets

We used two separate datasets (dataset A and B) in our analysis to generate two separate AP sets (profile set A and B). Both datasets were provided by the Hörzentrum Oldenburg gGmbH (Germany) and contain information with respect to a broad range of measures. They include measures contained in both datasets, which we refer to as common measures, as well as different additional measures. Common measures for both datasets are age, the Goettingen Sentence Test (GOESA; Kollmeier & Wesselkamp, 1997), the audiogram for air conduction and bone conduction, and the Adaptive Categorical Loudness Scaling (ACALOS; Brand & Hohmann, 2002). Both datasets use narrowband noise for ACALOS for left and right ears measured with headphones. For dataset A, results for the center frequencies 1.5 and 4 kHz are available and for dataset B, for 1 and 4 kHz. For audiogram and ACALOS measures, we only used the worse ear to reduce the feature space, but account for asymmetric hearing loss by introducing an asymmetry score derived from the audiogram (ASYM). In the following, we will refer to audiological tests as measures, and to features both as specific subresults of the measures and as summarizing features.

For example, a common feature between the two datasets of the measure GOESA is the binaural speech recognition threshold (SRT) for the collocated S0N0 condition, measured adaptively with varying speech levels and fixed test-specific noise level of 65 dB SPL (specific subresult). For the audiogram, the common features we used are summarizing features, derived from specific subresults. These include the pure tone average (PTA, calculated from the hearing threshold at 0.5, 1, 2, and 4 kHz) for air (AC PTA) and bone (BC PTA) conduction, an asymmetry score between left and right ears (difference between left and right PTAs, ASYM), the air–bone gap (ABG), the Bisgaard class (Bisgaard et al., 2010) to characterize the shape of the audiogram, and the PTA of the uncomfortable level (UCL PTA). For ACALOS we used summarizing features that can characterize both the lower and upper parts of the loudness curve (L15, L35, i.e., level corresponding to the categorical loudness of 15 categorical units (CU) and 35 CU, respectively), and the difference between L15 and L35 (as a feature representing the dynamic range), for both available frequencies (i.e., 1_diff and 4_diff). More information on common features between the two datasets and feature combinations used for analysis is given in Table 1. A description of ranges across common and additional features of the two datasets can be found in the Supplemental material.

Different Feature Sets Used in the Analysis (see the section “Datasets” for Feature Descriptions).

Note. “ALL” corresponds to all features common to both datasets that were also used for the profile generation; “APP” to measures potentially measurable via a smartphone; “HA” to measures generally available for hearing care professionals. The remaining feature groups describe feature combinations and the importance of single features.

AG SRT = audiogram-related speech recognition threshold; AG ACALOS = audiogram-related Adaptive Categorical Loudness Scaling; ASYM = asymmetry score derived from the audiogram; AC PTA = air conduction pure tone average; BC PTA = bone conduction pure tone average; ABG = air–bone gap; tUCL PTA = uncomfortable level pure tone average.

Original Dataset A

The original dataset A refers to the dataset and the profile set used in Saak et al. (2022). The dataset was collected for research purposes (Gieseler et al., 2017) and consists of 595 listeners (mean age = 67.6, SD = 11.9, female = 44%) with normal to impaired hearing. Next to the common measures and features, additional information is contained. In the speech test domain, the SRT for the digit triplet test (Zokoll et al., 2012) and the slope for the GOESA S0N0 condition are available. Further, information regarding the socioeconomic status and two cognitive measures are included, namely the Demtect (Kalbe et al., 2004) and the Vocabulary tests (Schmidt & Metzler, 1992). All these measures were used for the generation of the profiles. Detailed information about this dataset can be found in Gieseler et al. (2017) and Saak et al. (2022).

Dataset B

The new dataset B was collected for diagnostic purposes and consists of 1,401 listeners. The main measures overlap with dataset A (common measures). However, no cognitive measures are available for dataset B. Further, additional GOESA conditions are available, namely the S0N90 binaural and monaural conditions, where noise is presented from one side. Due to the diagnostic purpose of the dataset, information on the tympanogram (type A, As, Ad, B, C, D, tympanic membrane perforation, not measurable), Valsalva and otoscopy (not impaired, moderately impaired, impaired) is also available for both ears. All these measures were used for the generation of the profiles. Only patients with data for at least two common measures (from the audiogram, speech test, and loudness scaling) were included to ensure sufficient information for the clustering. This resulted in 1,272 patients (mean age = 63.74, SD = 13.22, female = 42.26%) with normal to impaired hearing. In addition, different outcome parameters are available, such as International Statistical Classification of Diseases and related Health Problems (ICD) codes, and information on a potential hearing aid supply and the respective aided performance. Outcome parameters were not used for the generation of the profiles.

Framework for Generating and Combining APs from Multiple Datasets

To enable the integration of audiological data from different databases, potentially underlying privacy restrictions, we extended the previously developed profiling pipeline by Saak et al. (2022) by a framework that enables the combination of individual local profiles into a global profile set. This framework facilitates federated profile generation, allowing individual profiles to be generated locally at the data ownership site. These profiles can be derived from diverse audiological measures and are then stored in a central profile data pool. From this pool, profiles can be flexibly combined into a global profile set or into custom profile sets that include only profiles with a specific subset of measurements. The framework is illustrated in Figure 1.

Visualization of the Framework for Generating and Combining Auditory Profiles. Gray Italic Text Shows the Potential Addition of Further Datasets.

Profile Generation

For dataset A, APs were already available and obtained from Saak et al. (2022). They contain 13 distinct APs and will be referred to as profile set A. Each profile consists of distributions across different features from audiological measures (audiogram, ACALOS, GOESA, etc.). These ranges provide an estimate of plausible values for individuals that belong to a certain profile. To generate the profiles, both the common and additional features from dataset A were used.

For dataset B, APs (profile set B) were generated using the profile generation pipeline from Saak et al. (2022). Features used for profile generation include the common and additional features from dataset B. No outcome measures, such as the ICD codes, were included to cluster patients into the APs. The available categorical features from the tympanogram, otoscopy, and Valsalva were transformed to continuous features using multiple correspondence analysis (Lê et al., 2008). Multiple correspondence analysis is a dimension reduction method, similar to principal component analyses. It quantifies the relationship between categorical variables in the form of principal components, and in that way transforms the categorical features into continuous components. We used the first three resulting components instead of the original three categorical variables as features for the subsequent data analyses. With this approach we retained some information regarding the three categorical variables for the generation of profiles. The resulting components, however, only represent the relationship across the categorical variables and some information will be missing. Hence, in the future, a different approach to tackle the difficulty of handling mixed data may become preferable.

Model-based clustering was used to generate the profiles. Model-based clustering assumes that an underlying model generated the data, and the clustering aims at recovering the model (Banerjee & Shan, 2017). The model is a combination of data clusters that describe the patterns in the data. These clusters serve as the generated APs. The profile generation pipeline (Saak et al., 2022) using model-based clustering consists of two steps.

In the robust learning step two parameters are estimated, namely the number of profiles present in the dataset and the respective covariance parameterization. To achieve this, bootstrapping without replacement was used to generate 1,000 datasets, each using 90% of the data. Next, missing data in each bootstrapped dataset were imputed. Here, we made a small adjustment to the original pipeline, which is expected to not impact the clustering results, as internal simulation analyses have confirmed for both datasets. We replaced multivariate imputation by chained equations (van Buuren & Groothuis-Oudshoorn, 2011) by imputation based on factorial analysis for mixed data (FAMD; Audigier et al., 2016). Factorial analysis for mixed data is a competitive imputation technique based on principal components that reduces the computational complexity of the profile generation pipeline. This means that instead of multiple imputed datasets only a single imputed dataset is generated for each bootstrapped dataset, which simplifies the following computations for cluster analyses. Next, features were scaled using the min–max scaling, which transforms values to range from 0 to 1. We then applied model-based clustering to each dataset using different parameter combinations (number of profiles, covariance parameterization). The possible number of profiles was set to 1 to 40 profiles to cover a broad potential range of profiles. The covariance parameterizations determine the shape, volume, and orientation of the profiles (see, Fraley & Raftery, 2003, for all possible covariance parameterizations). The model describing the underlying data best was then selected using the Bayesian information criterion (Schwarz, 1978). Finally, the most frequently occurring number of profiles and covariance matrix across all bootstrapped datasets was selected as the model parameter solution fitting the data best.

The profile generation step uses the original complete dataset and, again, imputes missing data with FAMD. Model-based clustering is then applied to the scaled features with the learned model parameter solution to result in the APs of profile set B. To allow for later merging with anonymized data distributions from sensitive datasets, all profile data were transformed to count data with 100 equidistant steps. More details regarding the generation of profiles can be found in Saak et al. (2022).

Profile Merging

To compare and combine profiles generated across multiple datasets, they need to share common features that allow us to investigate how similar different profiles are. We, therefore, selected the common features of the two datasets across the domains of speech intelligibility, audiogram, loudness scaling, and anamnesis information, namely the age of the individual. The rows of Table 1 display all features used to combine the two datasets.

Overlapping Density Index

A measure of similarity is required that allows for an estimation of similarity between two profiles. For this purpose, we use the overlapping index (Pastore & Calcagnì, 2019). The overlapping index is a distribution-free index that calculates the overlapping area of two probability density functions. To obtain the similarity between two respective profiles we calculate the overlapping index for each feature of the two profiles and use the mean as the final similarity score for the respective profiles. Sample size imbalances are accounted for by using normalized density distributions.

Merging Procedure

Starting from all profiles generated on datasets A and B (44 profiles, combination of profiles estimated separately for datasets A and B, respectively), profiles were merged iteratively, and the procedure is depicted in Figure 2. The similarity score was calculated for all profile combinations, and the two profiles with the highest similarity score were merged. The SRT was used six times when calculating the similarity score to balance the effect of the speech test to the number of available features from the audiogram and ACALOS. Then, for the new set of profiles containing the merged profile, the similarity score was calculated for all profile combinations, and the two profiles with the highest similarity score were merged. This procedure continued until only two profiles remained. Hence, we pregenerated all potential profile solutions and then derived a stopping criterion to result in the final profile set, as described in the section “Selection of Merged Profile Set.”

Merging Procedure. Profiles are Merged Iteratively Until a Stopping Criterion is Fulfilled. The Two Profiles with the Highest Overlap are Merged at Each Iteration Step.

Selection of Merged Profile Set

After each merge a potential profile set is generated. That means the merging pipeline results in N–1 potential profile sets, ranging from all N available profiles from the two datasets to the last two remaining profiles. Depending on the intended use case in practice, a plausible profile set needs to be selected. For instance, if a coarse classification into mild, moderate, and severe hearing loss is desired, merging could continue until only two to three profiles remain, instead of stopping the merging iteration earlier. This does not, however, provide a detailed differentiation across features, which can be achieved with a larger profile set, potentially necessary for research purposes or detailed patient characterization. In contrast, a profile set containing a larger number of profiles may contain redundant information for clinical classification but may aid in investigating specific differences between patient groups. For that reason, we aimed at proposing a basic set of APs that remains detailed enough, while reducing the number of profiles and combining similar profiles contained in both datasets. More specifically, we aimed at reducing redundancy across profiles for a proposed general set of profiles, such that the differences between the profiles are maximized. To achieve this, we used a combination of two steps to manually select a cutoff based on the overlapping density (see Figure 4A in “Results” section), corresponding to a number of obtained profiles at a certain number of merging iterations.

In the first step, we selected the cutoff based on two parameters, namely the slope of the maximum overlapping density, and the variance of the median overlapping density. The first parameter describes the slope over merging iterations for the two profiles with the highest overlap in each merging iteration. A steeper slope and lower overlap, thus, indicates when profiles are merged that are less similar than in previous steps. We selected the parameter such that the slope decrease is relatively larger after the cutoff, as compared to prior to the cutoff. The second parameter describes the variance over iterations for the median overlap of all profiles. If the variance changes too much over iterations, it indicates that merges took place between two profiles that differ strongly from each other. Hence, we selected the cutoff such that the variance remains relatively stable prior to the cutoff, contrasting the variance after the cutoff.

In the second step, we compared the overlapping index across features before and after the previous cutoff. For an optimal cutoff, we expect a higher overlapping index prior to the cutoff, and a lower overlapping index after the cutoff. In that way, we can determine whether features were overall similar for the two profiles to be merged, or whether they differ substantially from each other, in which case a merge may not be advisable. Hence, it also allows us to investigate which features are most important for the merging procedure and the profiles, and likewise, which features distinguish two profiles the most. We aimed for a cutoff that shows high overlap of features prior to it, and substantial difference between profiles after the cutoff.

Feature Importance of the Merges

For an interpretable merging procedure and interpretable APs, it is highly relevant to investigate which audiological measures drive the profile merges (high overlap), and which audiological measures hinder the profile merges (low overlap). That is because features that drive the profile merges are less relevant, whereas features that hinder the profile merges are more relevant and able to discriminate better between profiles. We, therefore, investigated which features had the least overlap across profiles, that is, were most discriminative between profiles. For that purpose, we defined different sets of iterations, for instance, iterations before the defined cutoff and after the defined cutoff. Iterations before the defined cutoff indicate which features were merged and which information may have been lost. Iterations after the defined cutoff indicate which features are most important for discriminating between profiles. For example, if we start at the last profile set with two remaining profiles, we can investigate which measures are least similar across the two profiles and would therefore lead to a split into three profiles. Hence, these features would support the split and can be considered as relevant features.

We further investigated which features were most relevant across different ranges of iterations (merging areas, see P44-26, P25-14, and P13-2 in Figure 4B). For this, we calculated the 1 − mean overlap for each feature within a merging area and compared this to the average mean overlap for all features for the respective merging area. Hence, we investigated whether a feature was more or less important than the average of all features within the merging area.

Classification Models

We built classification models for the global APs for two reasons. First, we aimed at enabling the classification of new patients into the profiles, such that the profiles can be used in practice. Second, the feature importance of the classification models allows us to draw conclusions with respect to which features are most relevant for classification into the respective profiles.

Feature Sets and Labels

To simulate different use cases, enable applicability of the profiles in practice, and extract insights into feature importance, different feature sets (subsets of the common features used for merging) were used to build the classification models. The feature sets belong to three general categories, denoted use cases, combined, and single in the following. The feature combinations “ALL,” “APP,” and “HA” belong to the category use case, as they represent specific use cases in practice. “ALL” contains all common features, enabling a comprehensive feature importance interpretation across all features. “APP” defines features that could potentially be measured via a smartphone, facilitating the estimation of APs in a mobile context. “HA” defines features generally available for hearing care professionals, making it possible to use APs in a hearing aid fitting context. The combined feature group explores the performance of only using two of the three main features, while the single feature group investigates performance with each measure separately. In that way, the classification models could be used also for datasets only containing one or two of the three measures. Specifically, “AG” includes audiogram-related features, “SRT” includes speech test-related features, and “ACALOS” includes ACALOS-related features. Consequently, “AG SRT,” “AG ACALOS,” and “SRT ACALOS” represent the combination of these respective tests. All sets are displayed in Table 1. While these feature groups allow for broader applicability of the classification models, they also allow for feature importance interpretations, as the performance across feature sets can be compared. Profile numbers of the final combined profile set were used as labels for classification.

Classification Model

In Saak et al. (2022), a random forest (RF; Breiman, 2001) classification model was built to classify new patients into the profiles, which resulted in adequate performance (precision and sensitivity >0.75 for the majority of the profiles). Random forest models work well with smaller sample sizes and provide an inherent feature importance index. We, therefore, also selected RF for the current analyses. Random forest uses an ensemble of trees, where the results of independent trees are aggregated, and the most frequently predicted label is used as the final prediction. Within each tree, only a subset of the features is used to split the predictor space into smaller regions, which effectively decorrelates the trees.

To build the RF model we used the one-versus-all (OVA) design, as ensemble methods have shown good performance for multiclass problems (Galar et al., 2011), OVA provided good performance in Saak et al. (2022), and it eases the interpretation with respect to feature importance. The OVA design splits a multiclass model into k binary models, where k is equal to the number of profiles. That means each model predicts whether an instance belongs to a specific profile or not. The feature importance therefore always highlights features that are most important for distinguishing the profile of interest from all remaining profiles. Data imbalance naturally exists with OVA design. To counter the imbalance, we upsampled labels using Gaussian noise to the average amount of labels available for each profile, which means all profiles have at least the average amount of patients in each profile, while profiles with patient numbers above the average retained their larger sample size. The rationale behind this was that we wanted to keep a balance between upsampled and original data. The remaining imbalance was addressed by using weights for the labels in the training. In that way, mistakes for the label of interest are more costly in terms of prediction errors.

Train, Validation, and Test Set

The complete dataset (global AP set) was split into a training (80%) and a test set (20%). The training set (containing 80% of the data) was then further split into a training (80%) and validation set (20%). For the training set, we used 10 times repeated 10-fold cross-validation to get a better estimate of the prediction error. The validation set was then used to evaluate the performances of the models on cases that were not used in the model training. After the model was specified with the training and validation set, the final model performance was evaluated with the test set.

Classification Performance Evaluation

Each classification model aims at reducing the prediction error, which is quantified by a specified evaluation metric. Evaluation metrics have different properties, making them useful for different prediction problems. For instance, accuracy is an evaluation metric that is easily interpretable but does not perform well for imbalanced classification performances. We chose Cohen's kappa (Cohen, 1960) as the evaluation metric for two reasons. First, Cohen's kappa takes imbalances into account, by comparing the accuracy to the baseline accuracy that could be achieved by chance. Second, it proved to be the best evaluation metric among three others (balanced accuracy, area under the precision recall curve, F1-score) for the classification model for profile set A in Saak et al. (2022). Hence, in the model training process, we used Cohen's kappa to tune the number of considered features at each split.

To evaluate the general performance of the trained classification models for each feature set, we used two distinct but complementary metrics, namely sensitivity and precision. Sensitivity describes the proportion of correctly classified cases for the class of interest, whereas precision describes the proportion of misclassifications for the class of interest. Both sensitivity and precision were compared across feature sets and further, for the overall profile classification and single profiles.

Finally, we build a dummy prediction model, which does not include any features and predicts profile labels based on stratified sampling, that is, it reflects the relative frequency of patients contained in different profiles. That way, we could estimate the benefit of our prediction models as compared to the baseline dummy model which represents classification performance by chance.

Feature Importance of the Classification Model

To estimate the feature importance of the RF models we used the inherent feature importance metric, namely the Gini importance or rather the mean Gini decrease (Breiman, 2001). The mean Gini decrease is used in the training process to estimate how well a feature can split the labels across nodes in the ensemble of trees. A good split results in pure nodes where no misclassification occurs, whereas a bad split does not aid in separating the labels for the classification and leads to “impure” nodes. The mean Gini decrease estimates how much a feature decreases the impurity on average across all nodes in the ensemble of trees, where a feature can be used multiple times in the same tree.

Overall, feature importance across all profiles and feature importance specific for each profile was calculated and compared via a feature importance plot. We transformed the mean Gini decrease to percentages (with the total mean Gini decrease of a profile equaling 100%) to show the contribution of each feature for each profile separately.

Results

Generation of Profiles

For dataset A, the optimal profile number of 13 was obtained from Saak et al. (2022). For dataset B, the optimal profile number was determined according to the profile generation pipeline described in the present paper. Figure 3 shows that 31 and 32 were most frequently selected across the bootstrapped datasets, and there is only a marginal difference between these two profile sets in terms of frequencies. In contrast, estimated profile numbers higher or lower than 31/32 were selected less frequently for the bootstrapped datasets. Since profile number 31 was slightly more frequently estimated than profile number 32, we selected 31 profiles as optimal for dataset B. Just as in Saak et al. (2022), the best covariance parameterization for dataset B was “VEI.” “VEI” refers to variable volume, equal shape, and coordinate axis orientation. It therefore allows clusters to be of different sizes but restricts them in terms of shape and axis alignment. Although the number of profiles may seem high, it can be partially attributed to the chosen clustering method. Fewer profiles could have sufficed in explaining the data, if larger datasets were available that would allow for more complex clustering structures (fewer covariance matrix restrictions). Conversely, by increasing the number of clusters, less complex clustering structures than the one used here could still capture the same underlying data structure (Bouveyron et al., 2019).

Distribution of Optimal Profile Numbers Across the Bootstrapped Datasets.

Merging Profiles

The profiles of the two datasets were merged using the mean overlapping density. This requires the selection of criteria to stop the merging process and result in the final profile set. Figure 4 displays the two criteria that we used to select the proposed combined profile set.

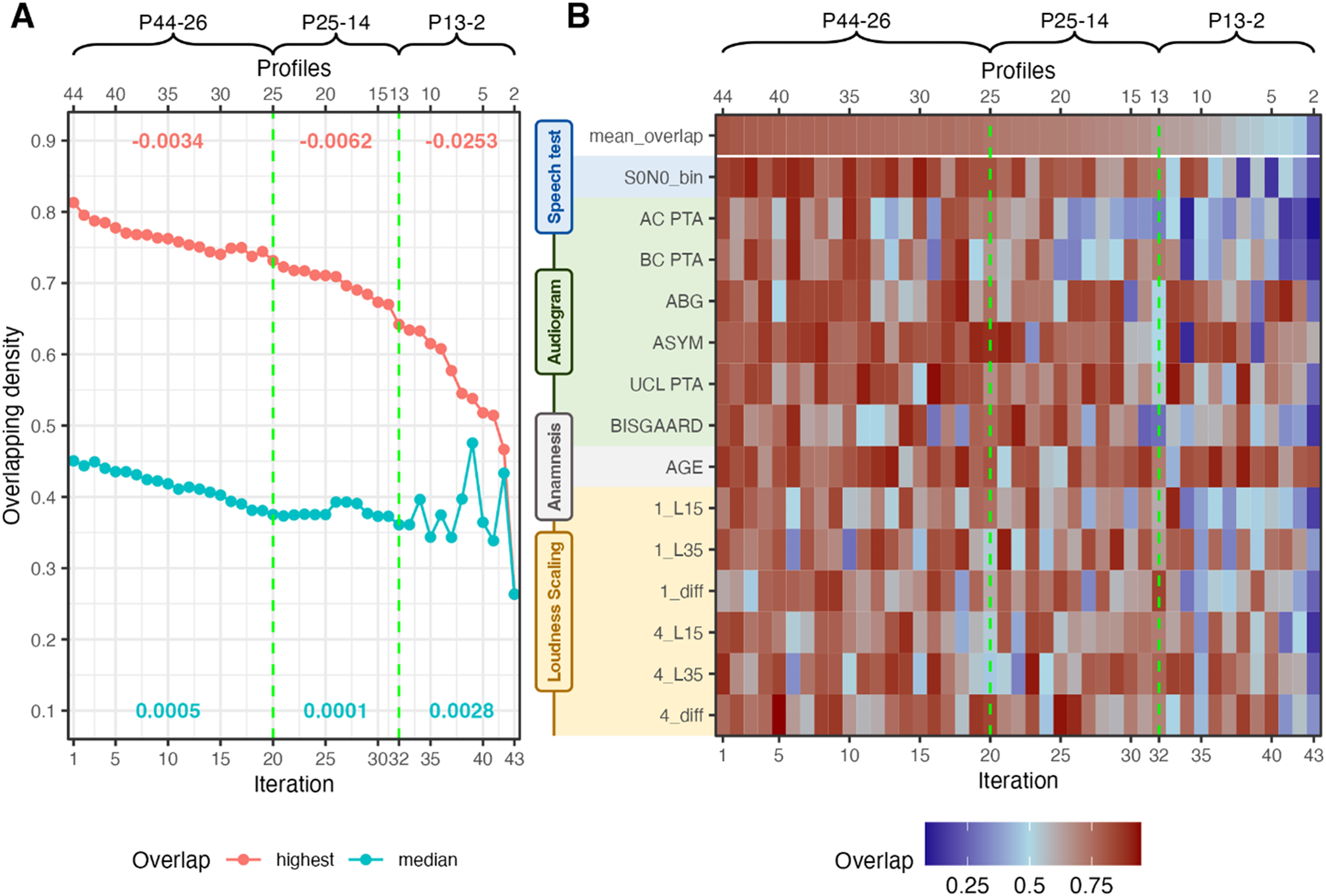

Merging Iterations. Vertical Green Lines Indicate Cutoff Candidates. (A) Median and Highest Overlapping Density Across the Merge Iterations. Red Numbers Indicate the Slope of Highest Overlapping Density for the Respective Merging Area; Turquois Numbers Indicate the Variance of Median Overlapping Density of the Respective Merging Area. (B) Overlapping Densities Across Features for the Two Profiles to be Merged at the Given Iteration. P44-26, P25-14, and P13-2 Indicate Different Iteration Sets by the Number of Profiles in Each Iteration.

Figure 4A displays the highest overlap (i.e., profiles to be merged) next to the median overlap across all profiles for each merging iteration. Two cutoffs are depicted that show two potential profile sets. The first cutoff at 20 iterations (denoted P25, corresponding to a profile set of 25 profiles) is characterized by a steepening decrease of the highest overlap, next to a reduction in variance. The second cutoff at 32 iterations (P13) precedes an even steeper slope decrease and high variations in variance. The high variations in variance indicate that merges occurred where profile ranges became much broader such that the similarity between profiles could increase again. Since this is undesirable, a cutoff should occur prior to high variations in the variance.

Figure 4B displays the overlapping densities of the two profiles to be merged at each merging iteration. The mean overlap corresponds to the highest overlap from Figure 4A. The two cutoffs are indicated with the dashed green lines. We can observe a general decrease in overlap with increasing merging iteration, that corresponds to the results from Figure 4A but provide us with insights into which features drove the decrease in overlap. We can observe that especially after the cutoff at 32 iterations the mean overlap decreases substantially across multiple features, which indicates that profiles would be merged that can be distinguished. Hence, merging the profiles beyond 32 iterations would result in a substantial loss of information. This widespread decrease in overlap is not as pronounced for the cutoff at iteration 20. Hence, for the following analyses, the profile set at 32 iterations was selected, which leads to a proposed combined profile set with 13 APs. Further merging beyond iteration 32 may be possible if the goal is, for instance, to retain only the last three profiles. However, this would lead to overly coarse profiles. Therefore, we recommend maintaining a minimum of 13 profiles. The corresponding analyses for the profile set at 20 iterations can be found in Supplemental Material.

Feature Importance of the Merges

To ensure interpretability of the merging iterations, we estimated which features were most important for profile distinctions. Figure 5 displays the important (turquoise) and nonimportant (red) features of the merging pipeline within different merging areas (P44-26, P25-14, P13-2). P44-26 corresponds to the merging area until iteration 20; P25-14 to the merging area between iteration 20 and iteration 32; and P13-2 visualizes feature importance across the remainder of the iterations.

Feature Importance for Different Merging Areas. P44-26, P25-14, and P13-2 Correspond to the Indicated Areas in Figure 4. Mean Feature Overlap was Calculated for Each Feature in Each Merging Area and then Subtracted from 1 to Indicate Higher Importance with Higher Scores. Subsequently, Scores are Divided by the Mean Overlap of all Features of the Merging Set. Scores, thus, Indicate the Higher or Lower Importance than the Average. Turquoise Bars with Values Above 1 Indicate Higher Importance; Red Bard with Values Below 1 Indicate Lower Importance.

Feature importance or profile separability for the 13 profiles at iteration 32 can be analyzed by looking at merging area P13-2, as the iterations after P13 highlight which features would lead to a split between profiles (going from right to left iterations in Figure 4B). For the 13 profiles, the feature importance is mostly based on AC PTA and BC PTA, the Bisgaard class, the SRT, and L15 and the difference of L35 − L15 of the ACALOS for 1 kHz. To compare the profile set with 25 profiles (Appendix Figure A3) to the profile set with 13 profiles we can evaluate merging area P25-14. This merging area highlights the features that differentiated the profiles from P13 to the profiles of P25. Compared to the previous profile set, we observe a greater emphasis on parameters from loudness scaling and a reduced importance of SRTs. A similar trend is evident in the merging area P44-26, where SRTs again play a lower role, while parameters from ACALOS gain significance. This indicates that earlier merges prioritize loudness scaling features (ACALOS information is lost), whereas later merges (fewer profiles) rely more on audiogram-based features, the SRT and L15 from the ACALOS measures. Vice versa, this also implies that if we would start from only two profiles and would split until iteration 1, in later split iterations (profile sets with a higher number of profiles) more detail with respect to loudness scaling is added to the profiles.

Proposed Profile Set

Figure 6A visualizes the proposed profile set with 13 APs. Both the profile ranges for each feature (A) and a proposal for single profile visualization are depicted (B). We can observe distinct patterns across APs. Profile 13 corresponds to a normal hearing (NH) profile and profile 1 has the highest SRTs. In contrast, profile 6 shows the highest impairment in the PTA. Profile 8 contains the largest number of patients, corresponding to a mild high-frequency hearing loss, and profile 5 shows a similar pattern to profile 8, but with a higher degree of impairment. Overall, the profiles cover a large range of hearing deficits in terms of test measurement ranges. We can see a distinctiveness of the profiles based on the SRT, audiogram-related, and loudness scaling-related features. The age was not found important in the two datasets.

Thirteen Proposed APs Across the Speech, Anamnesis, Audiogram, and Loudness Scaling Domain. (A) Profile Ranges are Depicted for All Features and are Ordered with Respect to the Increasing Median SRT. (B) View of Selected Singular APs. The Remainder of the Singular APs Can be Found in the Supplemental Material. Data is Referenced to the NH Profile (Value 0). All Bar Values Represent the Median Deviation from the NH Profile, Whereas the Numbers Indicate the True Median Value. AP = Auditory Profiles; NH = Normal Hearing; SRT = Speech Recognition Threshold.

In the SRT range of −5 to 0 dB SNR differences between profiles are mainly driven by audiogram and loudness scaling-based features. For instance, profiles 10 and 11 show similar SRTs, but differ regarding loudness perception and the AC PTA. Further, for profile 10 an asymmetry is present which could partly explain the higher AC PTAs. As expected, the presence of a higher asymmetry and a higher ABG compensate for higher AC PTAs in terms of higher SRTs.

We can see a clear inverse trend of the dynamic ranges for ACALOS 1 and 4 kHz to the SRT. That means, generally a higher SRT is accompanied by a reduced dynamic range, which is in line with existing research (Gieseler et al., 2017; Moore & Glasberg, 1993; Van Esch & Dreschler, 2015; Villchur, 1974). This trend fluctuates, when an ABG and asymmetry is also present in the profile.

The single profile visualization (Figure 6B) aids in visualizing the pattern for a single profile. Not all profiles are displayed, but the remainder can be found in Supplemental Material. The polar plots depict the normalized median difference of each profile to the NH profile (green circle). We can clearly see the impact that the presence of an ABG or asymmetry has on the relation between SRT and AC PTA (profile 1 vs. profile 3), that is, a smaller SRT results from a higher AC PTA in profile 3 due to asymmetry and/or ABG that both mitigate the general SRT deterioration with increasing AC PTA.

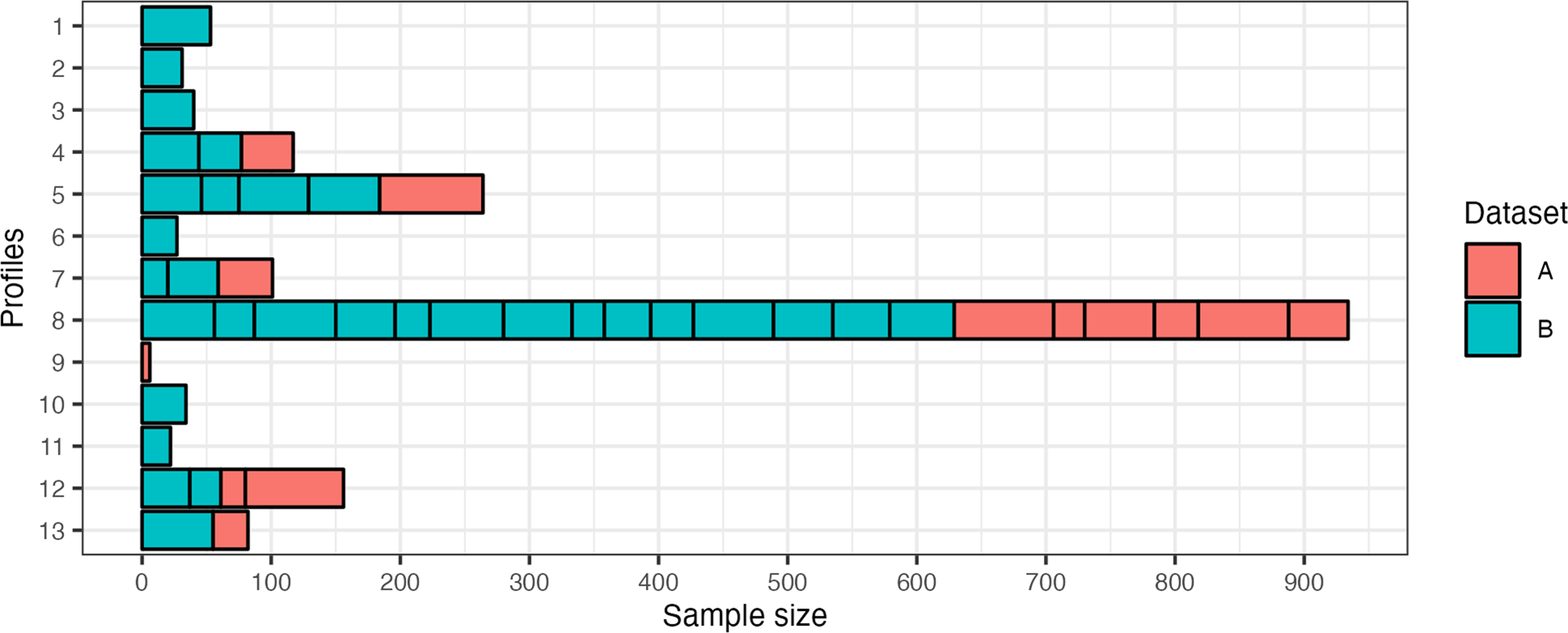

Figure 7 depicts the distribution of profiles from the two profile sets (A and B) in the final 13 profiles. The 13 profiles from profile set A have been merged with the 31 profiles from profile set B. Hence, the previous profiles from Saak et al. (2022) are also included within the new profile set. However, since dataset B contains patients with a larger variety and more severe deficits, the previous profiles are merged in favor of retaining a broader profile distribution with the new profile set. The number of patients per profile corresponds to the relative frequency of patients contained in the datasets. This is because model-based clustering, used for profile generation, does not impose any constraints on cluster size with the variable volume parameterization (“VEI”).

Distribution of Profiles that were Merged to Result in the Selected 13 Auditory Profiles. A Corresponds to Dataset A and Presents the Previous 13 Profiles Detailed in (1). B Refers to the New Dataset B and the 31 Profiles that were Defined as Optimal by the Profile Generation Pipeline. The x-axis Depicts the Sample Size for Each Subprofile, As Well As the Proposed 13 Profiles.

Classification Models

Classification performance across profiles for different feature sets is shown in Figure 8. The general classification performance is adequate for the “APP” and “ALL” feature sets (Figure 8A), with “APP” performing best among all feature sets. All feature sets performed better on average than the dummy model but for different profiles different benefits are achieved (Figure 8B). The higher performance of the dummy model for AP 8 can be explained by the larger sample size of this profile, which increases the chances of belonging to this specific AP. The “single” feature groups performed worst among the feature groups. This can, however, be expected, as less information is available to discriminate between APs with “single” sets. For the “single” feature group “AG” (audiogram), and for the “combined” feature group “AG ACALOS” achieved the best performance. The feature groups “SRT ACALOS” and “AG SRT” performed comparable on average and differences can be observed when comparing performances across profiles. For profiles with fewer deficits (higher profile number) “SRT ACALOS” could generally discriminate better than “AG SRT,” and vice versa for profiles with higher deficits (lower profile number). The feature sets “APP” and “ALL” both perform better than “HA” from the use case feature group, which does not contain ACALOS information. The results demonstrate the importance of using audiological tests beyond the audiogram to adequately classify patients into APs. All three measures contribute to better discriminability into the distinct APs, and the benefit of including ACALOS information for better discriminability is shown.

Classification Performance Across Profiles for Different Feature Sets. Feature Groups are Categorized into “Use Case,” “Combined,” and “Single.” Profile 9 is not Displayed, as the Sample Size is Too Small for Adequate Training. (A) The Mean Test Performance Across Profiles and (B) The Test Performance for Each Profile. Dummy Indicates the Performance of the Dummy Model that Predicts Profile Labels based on Stratified Sampling.

To investigate the feature importance of the classification models, we selected the best-performing feature set, namely the “APP” feature set of the “use case” feature group (Figure 9). Hence, a reduced feature set performed best in classifying patients into the profiles. The AC PTA was determined to be overall most important for classifying profiles. More specifically, this means that it is most important for distinguishing between profiles in the presence of the remaining features. This is seconded by the SRT. We can, generally, observe that for profiles with higher SRTs (lower profile number) the SRT becomes more important. For instance, for distinguishing profiles 1 and 2 from the remaining profiles, the SRT is more important than the AC PTA. We can further observe the most important features by comparing them to the mean importance of all features (Figure 9, dashed line in the top panel). The most important features are the SRT, AC PTA, asymmetry, and L15 for 1 kHz, and the importance of these features varies for different profiles. Profiles with lower SRTs (higher profile numbers) show a slight trend for higher importance of ACALOS features for differentiating the profiles from all the remaining profiles.

Feature Importance for “APP” Feature Set. Feature Importance Refers to Percentage Distribution of the Mean Gini Decrease for Each Profile Separately. Profile Specific Importance is Shown by the Colors. Overall Importance (Transformed to Percentage) Across All Profiles is Depicted by the Gray Bars. The Dashed Line Indicates the Mean Percentage Across Features.

Discussion

With the present study, we aimed at extending the existing profiling approach such that multiple datasets can be integrated to result in a global AP set that can, with future integration of further datasets, lead to an audiological patient population-based estimate of APs. Our results show that APs generated across datasets can be plausibly merged using the mean density overlap of two profile distributions. The exact number of profiles is flexible and can be chosen regarding the required detail of the profiles. We further trained classification models that allow for adequate classification of new patients into the APs using different feature combinations. Finally, we determined the importance of different features for both merging of and classification into the APs.

Auditory Profile Generation for Dataset B

The optimal number of profiles generated for dataset B is 31. In comparison to the 13 APs from dataset A, this number can be considered rather high. However, one must consider the differences between the two datasets. First, dataset A is a research dataset where participants were recruited via a participant database of the Hörzentrum. In contrast, dataset B is a clinical dataset where participants approached the Hörzentrum themselves for the diagnostic support of an ear, nose, and throat physician. As a result, dataset B contains a larger sample covering a broader range of hearing loss including patient patterns with more severe hearing loss, as evident from the higher SRTs in profiles 1–3, which were not merged with profiles from dataset A. Second, the two datasets vary in terms of additionally included audiological measures. For dataset A, the most prominent additional features to the common features are cognitive measures. Dataset B, in contrast, contains additional features such as the S0N90 condition (noise from one side), both monaurally and binaurally, and information from the tympanogram, Valsalva, and otoscopy. As a result, these additional features, in combination with the larger variation in patient patterns can explain why dataset B resulted in a higher optimal profile number (see “Role of Common and Additional Features for Profile Generation and Merging” section).

Proposed Profile Set

Our proposed iterative merging procedure, using the highest mean density overlap between two APs, respectively, enables the integration of different datasets via APs (RQ1.1). The separately generated profiles from the two datasets can, thus, be combined to describe both datasets together. The newly proposed and merged/combined profile set covers a wide range of hearing deficits and extends the range of deficits to the APs of profile set A, which are described in detail in Saak et al. (2022). Both sensorineural and conductive/mixed hearing losses (APs 3, 6, and 7) are covered within the profiles. Integrating the two datasets (A and B) has extended the range of hearing deficits contained in the profiles as compared to profile set A. This is evident from the new profiles with higher SRTs (Figure 7, APs 1–3) covering patients with more severe hearing deficits. The APs now compactly describe varying hearing deficit patterns.

Auditory Profiles 8, 5, and 12 have the largest sample sizes. Auditory Profiles 8 and 5 both represent common high-frequency hearing loss, but differ in severity. More specifically, we observe a more pronounced reduction in the dynamic range at higher frequencies compared to lower frequencies (1_kHz diff vs. 4_kHz diff), which can aid in better speech intelligibility, as compared to a uniformly reduced dynamic range observed in AP 1. This trend is stronger for AP 8, which is in line with the generally more severe hearing loss patterns of AP 5. Since these types of hearing loss are common in audiology, this might explain the rather large proportion of patients that belong to these profiles. In other words, it can be expected that a frequent hearing loss pattern will contain more patients than a less frequent hearing loss pattern. However, the current analyses pipeline does not check whether the repeated merges could have led to profiles that have become too coarse. Hence, it would be important in the future to implement such a mechanism (for more details, see the next subsection).

Auditory Profile 12 also contains a rather large share of patients with mild hearing loss. Here it is interesting to note that both UCL PTA and 1_L35 have lower scores than the NH reference profile 13. This could be an indicator for the presence of recruitment. Auditory Profiles 1 and 3, generally, vary with respect to the impairments across included measures. Auditory Profile 1 shows worse SRTs, but better ACALOS and audiogram scores than AP 3, likely due to AP 3's larger ABG. The general prevalence of asymmetries and ABGs in the APs highlight the importance to include these patient types in future research.

Merging Procedure

The newly proposed profile set contains information from the two profile sets (A and B). The previously generated 13 APs (profile set A) are also represented in dataset B (RQ1.2). They are both merged with profiles from dataset B, and with profiles from dataset A. That means, profile set A is now represented by fewer and coarser profiles, while additional profiles with more severe hearing deficits were added to the new proposed profile set. This behavior can be explained by the continuous merges leading to a coarser profile separation, more severe cases being present in dataset B, and that profiles from profile set A were more similar to themselves than profiles from profile set B.

The number of profiles after merging is flexible and depends on the intended use. Generally, a cutoff in the later merging steps yields fewer, broader profiles, which could be suitable for screening purposes (e.g., estimating mild, moderate, or severe hearing loss). Conversely, a cutoff in the earlier merging steps retains more, finer profiles, and allows us to investigate smaller differences between profiles (RQ1.3). Hearing care professionals may need such a detailed separation of patients, to potentially incorporate information from the profiles into the fitting procedures, while researchers may need an even more detailed separation of profiles to investigate relations and effects of certain audiological features. Here, a cutoff could be selected prior to the loss of information for the feature of interest. An example for a larger profile set is shown in the Appendix (Figures A3 and A4).

The similarity score develops plausibly across merging iterations and features can be identified that drive the AP merges. In that way, the merging procedure allows us to plausibly combine, compare, and characterize the content of two datasets containing common features. With the current cutoff procedure, we aimed at first demonstrating the general feasibility and plausibility of the profile merges, which was confirmed with the feature importance analyses of the merges. While a flexible cutoff suits different applications, an automated and unbiased cutoff will be needed to define a global profile set in the future. One possible approach would be to compare within-profile similarity via bootstrapping to the between-profile similarity. Within-profile similarity refers to how similar patients are to each other within a profile, whereas between-profile similarity describes the similarity of patients between two separate profiles. One can assume that the within-profile similarity will be higher than the between-profile similarity, as patients within a profile are likely to be more similar to each other than to patients in other profiles. Therefore, the midpoint between the calculated within-profile and between-profile similarity could be used to define the merging cutoff in the future.

With the future integration of additional datasets, there is a risk of profiles becoming too coarse due to the repeated merges. To mitigate this, a monitoring mechanism is needed to detect when a profile has become too coarse. One option here would be to identify potential subprofiles within a profile that may have become too coarse via clustering models. Another option would be to check whether the within-profile similarity deviates from the expected range. If the similarity deviates, the profiles could be split into two related subprofiles.

Classification Model and Its Applications

Using the “APP” feature set, which includes audiogram, ACALOS, age, and speech test data, we achieve an adequate classification into the 13 combined APs. The most relevant features for this are the SRT, AC PTA, ASYM, and L15 1 kHz of the ACALOS (RQ2). As no bone conduction measures are included in this feature set, the current set of APs could be measured via smartphones, as various audiogram, loudness scaling, and speech test apps already exist (Chen et al., 2021; Kollmeier et al., 2015; Saak et al., 2024; Van Zyl et al., 2018; Xu et al., 2024). As speech tests differ, it would be necessary to consider appropriate ways how to achieve comparability between the available speech tests in different datasets before merging APs generated on the respective dataset.

Hearing care professionals currently measure the audiogram for hearing aid fitting. Depending on the respective regulations and tests available in each country, a speech test in quiet or noise is also used for hearing aid indication (Hoppe & Hesse, 2017). However, the manufacturer first-fit of a hearing aid is solely based on the audiogram. Speech or loudness measurements are not considered, which appears to be insufficient to cover all aspects involved for compensating a hearing loss (Kollmeier & Kiessling, 2018). This is also reflected in our findings: classification improves with the “APP” features set (including loudness scaling) compared to the “AG SRT” and “HA” feature sets, while single measures alone perform poorly. This shows that multiple measures are necessary to fully characterize hearing deficits in practice.

Overall Feature Importance and Interpretability

The feature importance of the merging procedure and the classification models are generally comparable and appear audiologically plausible (RQ3). Both steps use the same initial feature set, but from the classification models only the best performing model (“APP”) was used for feature importance analyses. This resulted in the AC PTA, SRT, and the L15 1 kHz of the ACALOS to be among the most important features. These cover a combination of threshold information, speech intelligibility, and loudness perception for soft sounds at 1 kHz.

Especially in the later merging iterations (Figures 4B and 5—merging area P13-2), the speech intelligibility gains importance, demonstrating the relevance of speech information for our combined profile set. The merging process is interpretable, as we can observe which features drive merges and for which information is lost. For instance, loudness scaling information is more prominent in earlier merging stages, which becomes evident from the higher feature importance for loudness scaling in merging areas P44-26 and P25-14 (see Figure 5). Hence, an earlier cutoff (after 20 iterations) may better preserve this feature, yielding 25 profiles (see Figure 4 and Appendix Figures A2 and A3). These profiles show clearer differences in loudness scaling and reveal patterns like recruitment (e.g., profiles 13, 20, and 23). This comes at the cost of lower SRT separability, suggesting that more profiles overlap in terms of SRT ranges but differ with respect to other features. Comparing the 13- and 25-profile sets across use cases could clarify their effectiveness in providing meaningful separation between patients in these situations.

Our feature importance results align with prior findings emphasizing the need to characterize hearing deficits beyond the audiogram (Musiek et al., 2017). While the audiogram is often seen as the gold standard for characterizing hearing loss, it cannot characterize every aspect of existing deficits (Gieseler et al., 2017; Musiek et al., 2017; Sanchez-Lopez et al., 2021b; Van Esch & Dreschler, 2015). Our results show that combining audiogram, speech intelligibility, and loudness scaling information offers a more comprehensive view. Notably, models including ACALOS (“HA” vs. “ALL” and “APP”) outperform those without it, even when audiogram UCLs are used. This confirms prior findings that loudness scaling provides additional information beyond the audiogram (Kollmeier & Kiessling, 2018; Launer et al., 1996; Oetting et al., 2016; Van Beurden et al., 2021). Interestingly, the “AG SRT” and “SRT ACALOS” classification models perform comparable overall, but profiles with better speech intelligibility (lower SRTs) are better predicted by using SRT in combination with loudness information, while profiles with worse intelligibility (higher SRTs) are better predicted by the combination of audiogram information with the SRT. This trend can also be observed in Figure 9, where there is a slight trend for higher importance of ACALOS in profiles with lower SRTs as compared to profiles with higher SRTs. One potential explanation could be that L15 from the ACALOS is related to the threshold of the audiogram, as it measures the perception of soft sounds. In that way, it may capture both threshold- and loudness-related information. Notably, the dynamic range at 4 kHz (L35 − L15) is more important for profiles with lower SRTs than for profiles with higher SRTs. This is plausible, as individuals with near-NH typically have a broader dynamic range, which decreases more rapidly with increasing hearing loss for higher frequencies. Regardless, the combination of SRT and ACALOS with audiogram-related information remains necessary for adequate classification performance. Hence, it appears crucial to include threshold and suprathreshold information in characterizing hearing deficits.

Role of Common and Additional Features for Profile Generation and Merging

The available features vary between datasets and consequently also between profile generation and merging steps. In the profile generation steps, common features (see Table 1) and additional features are available (see descriptions of the datasets). In the merging step, profiles can only be merged based on common features. Although only common features are shown here, additional features, such as S0N90 (noise from one side) are also available in the profiles. While not all patients in a profile have data on these features, they enable us to estimate conditional probabilities of feature ranges given a profile, thereby preserving information about the additional features in a descriptive manner. Merged profiles, therefore, contain integrated information on the common features used in the merging process. Note that both datasets employed included information on the individual audiogram, speech recognition in noise and loudness scaling which has some impact on the resulting finding, that all three information areas are relevant for classifying the individual patient. This would not have shown up if clinical datasets would have been employed with much less suprathreshold audiological information, which is both a strength and a potential pitfall of the current study.

Additional features were included in profile generation but not in merging, as they are not contained in both datasets. This approach ensures that the initial patient grouping is based on the most informed choice, using all available information, including additional features. This is beneficial for two reasons. First, additional features can impact the initial grouping of the patients by allowing for finer distinctions, as compared to profile generation based solely on the common features. For example, using only the AC PTA and the SRT would create coarser groupings and miss certain subgroups that are revealed when including ACALOS features.

Second, while merges are limited to common features, it may be of interest to derive profile sets that contain distinct feature sets. For example, if dataset X includes additional information on spectrotemporal modulation thresholds, a specific profile set could be generated by merging the profiles from dataset X only with profiles from datasets that also contain this information. These combined profiles could serve as subprofile sets. Hence, by always generating the profiles on the largest available feature set, rather than just common measures, the resulting profile sets are more flexible and can be merged with other datasets that share different features.

For those reasons, we have always generated the profiles on the largest available feature set. It follows that a certain number of common features should be available to adequately merge profiles generated from different datasets and to classify patients into a given profile. Given the provided feature importance analysis, we advocate for using a combination of threshold-, loudness- and speech test information, as these consistently prove important for merging and classification. However, depending on the use case and data availability, profile generation and merging may also be used exploratively to understand a given dataset better.

Combining the two datasets using only their common features prior to clustering, rather than generating profiles using the largest available feature set for each dataset separately and merging them afterward, yields similar overall hearing loss patterns, but some differences arise from excluding additional features. These differences may reflect both the influence of additional features and the merging procedure itself. Future studies should investigate the specific impact of these features on profile generation to better understand their relevance for patient characterization and to separate their effects from those of the merging procedure.

Another property of the additional features is that they could be used to infer probabilities within a given profile where data for the additional profiles is available. Here, it could be of interest to investigate whether certain feature ranges occur more frequently in certain profiles—especially if profiles were merged with at least one further profile containing the additional profile.

Toward an Estimate of the Audiological Patient Population via Combined APs

The combined profile set is the next step toward a better estimate of an audiological patient population-based AP set. Here, the audiological patient population primarily focuses on patients who have actively engaged with their own hearing loss by either visiting research institutes, clinics, or hearing care professionals. In the future, additional datasets need to be integrated to cover further hearing deficits, as well as information from different audiological measures. Measures not captured in the global profile set could be captured in subprofile sets that combine multiple profile sets sharing common measures to provide more detailed estimates for these specific subprofiles.

Stratified sampling and continued data integration would help refine profile definitions and improve the classification models. Currently, one large profile (AP 8) describes most of the patients due to the obviously high relative frequency of this profile (corresponding to a moderate high-frequency hearing loss, potentially age-related hearing loss) in the two employed datasets. Including additional and more diverse, representative datasets would help in better defining the remaining profiles and eventually enable prevalence estimates. In addition, the current datasets mainly cover hearing aid candidates, whereas cochlear implant candidates are rarely included. An audiological patient population-based set should, however, include all degrees of hearing deficits and be as complete as possible.

To reach an audiological patient population-based estimate of APs, several further steps should be taken. First, profiles from different datasets need to be merged until convergence, which means that no new profiles emerge with added data, similar to convergence in optimization (Hastie et al., 2009). A fixed, calculated cutoff is essential to determine when this point is reached, and future work should focus on defining it. Second, the precise impact of additional features on the generation of profiles should be disentangled from the merging procedure, such that we can pinpoint the impact these features have and evaluate their value for a good characterization of the audiological patient population. Finally, a method is needed to detect and split overly coarse profiles, ensuring the resulting set maintains meaningful distinctions. Additionally, the feasibility of profile-based first fits for hearing aids could be investigated, similar to Sanchez-Lopez et al. (2021a). Particularly, these first fits could already include information on both thresholds and loudness perception included in our profiles, as also shown by Kramer et al. (2020) and Oetting et al. (2018).

Integration Within a Federated Learning Framework

To include many additional datasets and work toward a global AP set, it will be important to conduct the AP merging step in a privacy-preserving way, without sharing individual data. This can be achieved by integrating the current analyses in a federated learning approach. Federated learning enables model training without needing to share data directly (Konečný et al., 2017; McMahan et al., 2017; Pfitzner et al., 2021) and can be implemented in two ways: (1) training machine learning models locally and sharing model updates with a central computation site which are used to retrain the models in multiple communication rounds, or (2) employing a one-shot federated learning approach, where only distilled information from locally trained models is shared with a global model in a single communication round (Duan et al., 2024; Guha et al., 2019). Additionally, it is important to verify privacy preservation by investigating that no individual information can be inferred from the shared information, for example by reverse engineering (Lee et al., 2018; Pfitzner et al., 2021)

In the context of our auditory profiling approach, the profile generation step would be performed locally on different databases, while the merging step would integrate all profiles on a central server acting as profile data pool. We intentionally designed our merging approach to comply with data privacy by only using anonymized information from the generated profiles, making it ready for federated learning. As overlapping density is calculated separately for each feature and then averaged, patient data can be fully anonymized by shuffling records per feature without affecting feature distributions. Consequently, our profiling approach can be used within a one-shot federated learning framework to integrate sensitive datasets.

Further evaluation is needed to verify that profiles merged via federated learning match those generated from combined datasets, ensuring the method's validity. This requires the same features in the profile generation and merging steps. In the present study, we generated profiles on all respective (common and additional) features of the datasets to maintain complete information from both datasets, hence, a systematic investigation of the influence of the additional features remains to be done in the future.

Conclusions

The current study demonstrates the feasibility of integrating datasets using the proposed framework for profile generation and merging, enabling a combined characterization of the content of audiological datasets. Combining the two datasets yields a new combined set of APs, which consists of 13 APs. Profiles can generally be well characterized based on the three dimensions: audiogram-, speech test-, and loudness scaling features.

We further enable the classification of patients into the APs using RF classification models. The best performing classification models include a combination of these three measures (audiogram, speech test, loudness scaling), excluding the bone-conduction audiogram. While classification models tailored for hearing care professionals, which use only the audiogram and a speech test, are also available, their performance can be improved if loudness scaling is included.

Audiogram-, speech test-, and loudness scaling-based measures provide complementary information that aid in characterizing patients and are consistently determined as important for both merging and classification. Even though this finding is based on the composition of the two underlying datasets that both include these measures, we nevertheless advocate for the inclusion of these measures for detailed patient characterization.

Toward an audiological patient population-based set of APs, it is necessary to incorporate additional datasets using the proposed method. These datasets should include a wider range of audiological subpopulations, such as cochlear implant users, thereby extending the APs contained. To make the merging procedure more robust, further research is needed to optimize the cutoff for the profiles and integrate a mechanism that evaluates the coarseness of the profiles due to repeated merges. Our merging approach provides privacy-preserving properties toward a federated learning approach, which has to be further developed and investigated in future studies. This will facilitate the integration of datasets that are subject to privacy restrictions.

Supplemental Material

sj-docx-1-tia-10.1177_23312165251349617 - Supplemental material for Integrating Audiological Datasets via Federated Merging of Auditory Profiles

Supplemental material, sj-docx-1-tia-10.1177_23312165251349617 for Integrating Audiological Datasets via Federated Merging of Auditory Profiles by Samira Saak, Dirk Oetting, Birger Kollmeier and Mareike Buhl in Trends in Hearing

Footnotes

Ethical Considerations

Our institution does not require ethical approval when already existing datasets are analyzed.

Funding