Abstract

People regularly communicate in complex environments, requiring them to flexibly shift their attention across multiple sources of sensory information. Increasing recruitment of the executive functions that support successful speech comprehension in these multitasking settings is thought to contribute to the sense of effort that listeners often experience. One common research method employed to quantify listening effort is the dual-task paradigm in which individuals recognize speech and concurrently perform a secondary (often visual) task. Effort is operationalized as performance decrements on the secondary task as speech processing demands increase. However, recent reviews have noted critical inconsistencies in the results of dual-task experiments, likely in part due to how and when the two tasks place demands on a common set of mental resources and how flexibly individuals can allocate their attention to them. We propose that in order to move forward to address this gap, we need to first look backward: better integrating theoretical models of resource capacity and allocation as well as of task-switching that have been historically developed in domains outside of hearing research (viz., cognitive psychology and neuroscience). With this context in mind, we describe how dual-task experiments could be designed and interpreted such that they provide better and more robust insights into the mechanisms that contribute to effortful listening.

Introduction

Communication often takes place in highly dynamic conditions that require individuals to flexibly shift their attention to varying sources of sensory input, which can require considerable mental effort. However, speech tests given in the audiology clinic were generally designed to isolate challenges associated with auditory perceptual processing from those related to higher cognitive demands. As a result, standard audiological assessments are limited in the extent to which they characterize the range of listening challenges that individuals experience in complex environments (Brungart et al., 2014). In the research lab, too, calls to enhance ecological validity have led researchers to consider the impact of listening in complex environments, such as while multitasking (Keidser et al., 2020).

There has been increasing recognition of the importance of quantifying the listening effort that patients report in their daily lives and that healthy adults report under complex real-world demands, such as in background noise (Rudner & Lunner, 2014) or while driving (Kubose et al., 2006). Over 25 million American adults are estimated to experience hearing difficulties in the absence of audiometric hearing loss (Edwards, 2020). The ability to quantify the mental effort associated with listening as a complement to standard audiometry may enhance patient care by providing a means to validate listener complaints and improve counseling and auditory rehabilitation strategies (Kuchinsky & DeRoy Milvae, 2024). Thus, there is a need for an easy-to-collect, valid, and reliable measure of listening effort experienced in complex situations. However, the extent to which multitasking interacts with different listening tasks and conditions has not been systematically interrogated in the context of existing theoretical models of attentional resource allocation.

Many studies have concluded that the consequences of listening in complex situations, such as when multitasking, manifest not just in poorer speech recognition scores, but also in greater listening effort, even when intelligibility is high or matched across individuals (Winn & Teece, 2021). Listeners may have to work harder to maintain performance by engaging additional sensory or top-down, cognitive resources (Peelle, 2018). Individuals with impaired hearing and/or cognitive function may be at a particular disadvantage when communicating in complex environments: exhibiting even greater deficits and/or allocating their mental resources to tasks with different priorities. Older adults have been observed to compensate for greater difficulties with multitasking at the expense of communication in order to preserve driving performance (Becic et al., 2007). This trade-off in task performance has been used as an index of individual differences in the capacity of various central functions. For example, the “stops walking when talking” test measures changes in gait while performing an attention-demanding task, such as holding a conversation, and has been used as a predictor of falls in older adults (Lundin-Olsson et al., 1997).

Quantifying the impact of multitasking as a function of listening demands forms the basis of perhaps the most common behavioral measure of listening effort: dual-task cost (Anderson Gosselin & Gagné, 2010). This measure follows from capacity-limited models of attention (Kahneman, 1973; Pichora-Fuller et al., 2016), which state that a single set of mental resources must be divided across concurrent tasks. As a primary task, such as listening, requires more mental resources, fewer remain available for performing a secondary task. Poorer secondary task performance (e.g., longer reaction times) is then taken to indicate that greater effort has been allocated to listening. Research in hearing sciences has generally employed the dual-task paradigm to provide a cost-based measure of listening effort. However, we argue that there are substantial limitations on the inferences that can be drawn from dual-task studies of listening effort and that these may be mitigated in part by greater contextualization within long-standing theoretical models of multitasking.

A number of results stemming from dual-task studies of listening have been weak or inconsistent (Ohlenforst, Zekveld, Jansma et al., 2017). Reviews of the literature have noted that variability in the nature and timing of the listening and secondary tasks has likely contributed to these inconsistencies and thus limits our ability to generalize results (Gagné et al., 2017). We contend that this seemingly methodological challenge is exacerbated by theoretical imprecision in what the dual-task paradigm can and should tell us about the engagement of the mental systems that underlie effortful listening. By grounding the dual-task design in the larger psychological literature, we aim for dual-task design choices, rather than being a source of stress, to be seen as an opportunity to ask more precise questions about the nature of the mental architecture that supports speech understanding in complex conditions.

Therefore, the goal of this review is twofold. First, we want to facilitate better integration of theoretical models of resource capacity and allocation as well as models of task-switching that have been developed in adjacent domains (namely cognitive psychology and neuroscience). Second, we want to cultivate a more holistic view of how the results of dual-task paradigms should be contextualized and interpreted. We believe that understanding effortful listening through multiple lenses may help guide our field to design better experiments. This review is organized as follows. We will first contextualize how terms such as “listening effort” and “dual-task measures” are used in the literature. Next, we will provide working definitions of multitasking, task-switching, and dual-tasking so that we can borrow vocabulary and concepts from other fields to tease apart the more ecologically valid, but often more complex, experimental paradigms for measuring listening performance. We will also provide some historical perspectives on the different models of attention allocation and how these models have shaped our interpretations. In doing so, we provide several direct quotes from historical works to demonstrate that they had, decades ago, plainly and directly stated the importance of integrating across such models, a crucial point that has been largely absent from more recent listening effort research. Finally, we will conclude by offering a few considerations for moving our field forward by looking backward to the literature: allowing for a closer connection between theory and methods, with the goal of potentially resolving many critical inconsistencies in the literature.

A Brief Overview of Listening Effort in the Literature: Terminology and Measures

Listening Effort

For individuals who experience hearing difficulties, even in the absence of hearing loss (Beck et al., 2018; Edwards, 2020), the perception of speech is often notably insufficient for its understanding (Gordon-Salant, 2014). In difficult listening situations or with declines in hearing function, the engagement of higher-level cognitive functions may be required to help fill in missing information and resolve perceptual or linguistic ambiguities. However, this top-down support comes at a cost in terms of energy that must be devoted to listening and thus cannot be dedicated to other simultaneous functions. Colloquially, this cost is described as listening effort, which is thought to give rise to listening-related fatigue (McGarrigle et al., 2014).

The experience of mental effort is near universal and thus can seem to be intuitively understood. However, generating a formal definition and objective, validated measures of effort has not been without contention. A commonly cited definition stems from the Framework for Understanding Effortful Listening (FUEL), which is directly based on Kahneman's capacity-limited model of attention (Kahneman, 1973). FUEL describes listening effort as “the deliberate allocation of mental resources to overcome obstacles in goal pursuit when carrying out a [listening] task” (Pichora-Fuller et al., 2016, p. 10S). Each portion of this definition requires unpacking: What is deliberate allocation? What are mental resources? Although FUEL only uses the term “effort,” there is good reason to distinguish the subjective perception of listening effort (which may be influenced by perceived intelligibility; Moore & Picou, 2018) from the engagement of mental resources that yield changes in behavior or physiology (Herrmann & Johnsrude, 2020). As an in-depth discussion is beyond the current scope, we use the term “effort” throughout to align with FUEL and point readers to models that do distinguish these ideas (Herrmann & Johnsrude, 2020) and to reviews of related issues (e.g., Kuchinsky & DeRoy Milvae, 2024). Below, we argue that understanding the historical context of the development of models of effort is important for validating objective measures, interpreting the results of studies that employ them, and ultimately understanding the underlying mechanisms and how best to target them through interventions.

Generally, studies of listening effort have provided evidence that there is a nonlinear relationship between task demands and effort: Effort tends to be maximal at moderate levels of task difficulty, decreases or asymptotes as the task becomes easier, and decreases as the task becomes near impossible (as individuals “give up”). This nonlinearity means that it is particularly important for individuals to be assessed at similar levels of difficulty: ideally at moderate to high levels of intelligibility (Ohlenforst, Zekveld, Lunner et al., 2017).

When speech is at least moderately intelligible, studies have generally observed that greater effort is associated with listening to a more degraded acoustic signal, such as with poorer signal-to-noise ratios (for a review, see Zekveld et al., 2018) or for individuals with hearing loss (for a review, see Ohlenforst, Zekveld, Jansma et al., 2017). Importantly, differences in effort have been observed even in the absence of, or when accounting for, differences in performance (Kuchinsky et al., 2013; Winn & Teece, 2021; Zekveld et al., 2010). A recent systematic review (Ohlenforst, Zekveld, Jansma et al., 2017) suggests that some methods for assessing effort (e.g., subjective, behavioral) have yielded less consistent patterns of results across studies than other methods (e.g., physiological).

Commonly Used Measures

While there is little debate that mental effort can impact the listening experience, there is currently no universally agreed-upon method for quantifying listening effort. A number of measures of effort have been proposed and used in experiments that manipulate task demands as well as examine the impacts of group and individual differences (McGarrigle et al., 2014). These include self-report surveys, behavioral measures (e.g., accuracy, reaction time), physiological measures (e.g., pupillometry, heart rate variability), and neurophysiological measures (e.g., functional magnetic resonance imaging [fMRI], electroencephalography [EEG]). High within-test reliability has been observed for all but a few measures (Alhanbali et al., 2019), with some evidence that pupillometry is even more reliable and sensitive to changes in listening effort than subjective, behavioral, or skin conductance measures (Giuliani et al., 2021). Furthermore, neural measures may provide even greater temporal and spatial insights into the mechanisms that underlie listening effort (for reviews, see Kuchinsky & Vaden, 2020; Peelle, 2018).

While subjective self-report measures have a high degree of face validity, without additional training, raters have been shown to rely on their perceived intelligibility as a heuristic for responding to effort questions (Moore & Picou, 2018). Neural and physiological methods come with notable practical considerations (in terms of finances, required expertise, and participant exclusion criteria), limiting their collection in clinical settings and even many laboratories. Thus, there are good reasons to seek out an objective, behavioral measure of listening effort. Notably, the Model of Listening Engagement (MoLE; Herrmann & Johnsrude, 2020) goes a step further, suggesting important dissociations between the subjective experience of listening effort and the allocation of mental resources, termed listening engagement. Perhaps the most commonly described purported objective measure of the allocation of cognitive resources has been collected via the dual-task paradigm, namely, dual-task cost (Gagné et al., 2017).

The premise of dual-task cost measures of listening effort, as they are most often used in the literature, is sketched out in Figure 1. The basic assumption is that there is a limited set of shared mental resources that can be drawn upon to complete any given task. It is also assumed that these resources can be allocated to more than one task and that this allocation and division of resources can occur within a relatively short period of time. In this framework, then, the more shared resources are required to complete a prioritized listening task (i.e., primary task, such as speech recognition in noise), the fewer shared resources are available for any other concurrent task (i.e., secondary task, such as visual monitoring for a target). Dual-task cost is calculated as the decrement in performance on a secondary task when performed in conjunction with a primary task (right side of figure) relative to when the secondary task is performed alone (left side of figure). The relative costs of primary-task listening demands (e.g., different signal-to-noise ratios, SNRs) are often compared directly (i.e., secondary task reaction times [RTs] for a harder minus an easier SNR condition), given that the same single-task RT would be baseline-subtracted from both.
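The calculation described above can be illustrated with a minimal sketch. The function name and all RT values below are hypothetical and were invented purely for illustration; they are not data from any study. The sketch assumes that cost is expressed as a simple difference in mean secondary-task RT, which is one common choice among several.

```python
from statistics import mean


def dual_task_cost(single_task_rts, dual_task_rts):
    """Mean secondary-task RT under dual-task conditions minus the
    mean RT when the secondary task is performed alone (in ms)."""
    return mean(dual_task_rts) - mean(single_task_rts)


# Hypothetical secondary-task RTs in ms (illustrative values only).
single = [400.0, 420.0, 410.0, 430.0]      # secondary task alone
dual_easy = [450.0, 470.0, 460.0, 480.0]   # with listening at an easier SNR
dual_hard = [520.0, 540.0, 530.0, 550.0]   # with listening at a harder SNR

cost_easy = dual_task_cost(single, dual_easy)
cost_hard = dual_task_cost(single, dual_hard)

# Because the same single-task baseline is subtracted from both conditions,
# the difference in costs equals the direct difference in dual-task RTs.
effect_via_costs = cost_hard - cost_easy
effect_direct = mean(dual_hard) - mean(dual_easy)

print(cost_easy, cost_hard, effect_via_costs, effect_direct)
```

The last two quantities are identical by construction, which is why harder and easier listening conditions can be compared directly without reference to the single-task baseline.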

Figure 1. Schematic of the dual-task cost measure of listening effort. Dashed box indicates tasks are performed simultaneously. Emojis have been carefully selected to make two points here and in subsequent figures. First, emojis capture emotion states that are often hard to verbally define. Second, we want to highlight that our field has yet to propose accurate operational definitions to fully capture the consequences of effortful listening.

Beyond being an easy-to-collect indicator of effort, dual-task paradigms may afford additional potential insights into the study of listening effort that are not commonly recognized. In particular, the design readily allows for the manipulation of multiple demands in a way that may be more ecologically valid than auditory tests alone—one can manipulate the acoustic challenge of listening, the linguistic properties of the auditory stimuli, as well as the timing and degree of multitasking cognitive demands (Kuchinsky et al., 2023). The ability to vary the nature and timing of these demands has the potential to reveal how and when these systems interact, thus leading to the effortful listening that arises in complex, real-world environments (Keidser et al., 2020). However, as will be described, there are also notable limitations of the dual-task paradigm, which have been described in other reviews (Gagné et al., 2017; McGarrigle et al., 2014).

The remainder of this paper addresses the underrecognized strengths and noted limitations of dual-task cost measures of listening effort in an attempt to move the literature forward. We describe two historical contexts that have utilized the dual-task paradigm for ostensibly different purposes: task-switching and models of attention and effort. By bridging these literatures, we aim to inform the design and interpretation of future studies of listening effort.

Revisiting Concepts: Multitasking, Dual-Task and Dual-Task Interference

Dual-Task and Multitasking

In the previous section, we referenced the term dual-task and described the operational definition that has seemingly been tacitly adopted in the current literature. A challenge for the definition of dual-task (or, in more general terms, multitask) is that the term “task” itself is not easily defined. The task that is of most interest in the context of listening effort is, of course, a listening task. Yet, to further complicate these definitions, the term “listening” itself implies several auditory, linguistic, and cognitive subprocesses (perceiving, understanding, responding; Mattys et al., 2012), each of which may itself involve multiple component processes.

Other potential dual-task tasks typically involve monitoring and making discrete responses to a stimulus (e.g., press a key when a target tone is heard) or performing a continuous, complex mental operation (e.g., multiplication). Figure 2a illustrates these three different types of tasks as an example. When designing each task, there are many factors to consider. For example, in a listening task (T1), how complex the auditory scene should be (i.e., how many targets and maskers are present, which target-to-masker ratios are tested) and what type of auditory stimuli should be used (speech sounds ranging from nonsense words to meaningful sentences, or nonspeech sounds) are often dictated by the questions that the researcher is trying to answer.

Figure 2. Dual-task studies of effort involve a primary listening task (T1) and one or more secondary tasks (T2, T3, etc.). Multiple considerations are needed for (a) designing each component task and (b) its expected interaction with the primary task. Tasks performed simultaneously are outlined with a dashed-line box. (c) Inspired by Figure 1 from Koch et al. (2018). Variations on when and how primary and secondary tasks are combined and compared to baseline conditions (left and right of each subpanel, respectively) have historically been used to investigate different aspects of attention allocation and effort. Manipulation differences include whether tasks are performed sequentially (no outline) or simultaneously (dashed outline), the composition of the baseline conditions (whether they include one or more tasks), and the stimulus-onset asynchrony (SOA) between tasks.

For other tasks (T2 or T3), the difficulty of the tasks can be modulated by other domain-specific factors (e.g., the timing of the visual stimuli in T2 or the type of mathematical operation used in T3 in Figure 2a). Generally, a task refers to a behavioral or cognitive goal that must be accomplished, either as instructed by another person or by the individual themselves. Note that the definition of “task” itself poses a challenge when defining dual-task studies, as the task of performing mathematical operations (T3) can subsume other “tasks,” such as monitoring (T2). Broadly speaking, however, multitasking is thought to occur when the cognitive processes involved in performing two (or more) tasks overlap in time. Additional design considerations are required in terms of how the tasks are expected to interact with each other in the nature and timing of the resources they require. In this review, we define dual-task as a specific condition whereby the participant is asked to perform two tasks simultaneously (Figure 2b).

Dual-Task Interference

People often engage in multitasking in their everyday lives. Consider composing an email intermittently while listening to the keynote speaker at a conference. Many cognitive processes occur concurrently in time and across different tasks and are thus simultaneously mentally represented. When one is tending to more than one task, other factors need to be considered, since monitoring or responding to multiple tasks comes with interruptions and resumptions of one task and switches of attention to another task.

Koch et al. (2018) outlined four specific costs associated with interference when one is attending to two tasks. Even when the two tasks are not attended to simultaneously (Figure 2c top row), the fact that the participant is required to hold two sets of task rules in mind introduces a “mixing cost.” When the participant needs to switch from one task rule to another, an additional “switch cost” is introduced. For example, if you are tasked to add 7 to the number displayed on the screen in one block and to subtract 7 in another block, this is easier than if you are tasked to switch between adding and subtracting within the same block. This difference in task performance is termed the “mixing cost” (Figure 2c top left). Furthermore, if the rule changes from one trial to the next (e.g., an add-7 trial followed by a subtract-7 trial) rather than repeating (e.g., a run of add-7 trials), the “switch cost” refers to the cost of performing trials on which the rule switches compared to trials on which the rule repeats (Figure 2c top right).
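To make the distinction between these two costs concrete, the following is a minimal sketch of how they might be computed from trial-level RTs. It assumes one common convention, in which mixing cost compares rule-repetition trials from mixed blocks against trials from single-rule (pure) blocks, and switch cost compares switch trials against repetition trials within mixed blocks. The function names, rule labels, and RT values are hypothetical.

```python
from statistics import mean


def label_trials(rules):
    """Label each trial in a mixed block as 'first', 'switch', or 'repeat'
    depending on whether its rule differs from the preceding trial's rule."""
    labels = ["first"]
    for previous, current in zip(rules, rules[1:]):
        labels.append("switch" if current != previous else "repeat")
    return labels


def mixing_and_switch_costs(pure_rts, mixed_rules, mixed_rts):
    """Return (mixing cost, switch cost) in ms under the assumed convention."""
    labels = label_trials(mixed_rules)
    repeat_rts = [rt for lab, rt in zip(labels, mixed_rts) if lab == "repeat"]
    switch_rts = [rt for lab, rt in zip(labels, mixed_rts) if lab == "switch"]
    mixing_cost = mean(repeat_rts) - mean(pure_rts)
    switch_cost = mean(switch_rts) - mean(repeat_rts)
    return mixing_cost, switch_cost


# Hypothetical RTs in ms (illustrative values only).
pure_rts = [600.0, 620.0, 610.0, 630.0]  # blocks with a single rule
mixed_rules = ["add", "add", "sub", "sub", "add", "add", "sub"]
mixed_rts = [700.0, 680.0, 800.0, 690.0, 820.0, 700.0, 810.0]

mixing_cost, switch_cost = mixing_and_switch_costs(pure_rts, mixed_rules, mixed_rts)
print(mixing_cost, switch_cost)
```

The first trial of the mixed block is excluded from both averages because it has no preceding trial against which to classify it.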

If two tasks are performed simultaneously, which is most likely the case in a listening task context since auditory tasks inevitably unfold in time while a background task is also being performed, a dual-task cost needs to be considered (Figure 2c bottom left). However, it is important to note that the degree to which these two tasks overlap temporally (Figure 2c bottom right) could influence the cost due to the psychological refractory period (PRP). Telford (1931) observed that the greater the temporal overlap between tasks, the more slowly people respond to the second task (Pashler, 2000).

Age-related factors and practice effects can also alter these dual-task interference costs. For example, there are close links between age-related cognitive changes and changes in motor control. Krampe et al. (2011) found that a concurrent memory search task produced clear dual-task costs in the distances walked during the allotted time, but these costs were larger in older than in younger adults. Walking is complex, but other less complex tasks (postural control tasks) were found to produce similar age-related performance declines. Boisgontier et al. (2013) suggested that postural tasks become less automatic with age, so that such tasks require increased allocation of attentional resources, which in turn can produce increased dual-task costs. Switch costs can also be sizably reduced with little practice but cannot be eliminated, unlike for mixing costs (Strobach et al., 2012; for a review of training effects, see Karbach & Strobach, 2022). Therefore, practice is an important factor to consider in dual-task paradigms. Experimenters also need to consider these age-related factors in dual-task paradigms when testing listening effort across different age groups.

Revisiting Concepts: Attentional Resource Allocation Models

A fundamental limitation of cognition is that we do not process all of the information that exists in a complex environment with equivalent fidelity. Selective attention refers to our ability to direct mental resources toward processing of task-relevant information and away from irrelevant information (for a review, see Driver, 2001). With the rise of information theory and a surge of research in cognitive psychology in the middle of the 20th century, models were developed that aimed to reveal which stages of processing are limited and modulated by attention (e.g., perception, response generation, memory), the nature of these limitations (structural, capacity, both, neither), and ultimately their impact on task performance and effort. Here we provide a broad summary of some of the major models of attention, how they employed multitasking paradigms to probe these models, and ultimately how they bear on dual-task cost measures of listening effort. For more detailed historical perspectives on models of selective attention, see also Neumann (1996).

Structural Bottlenecks

To account for the effects of selective attention, structural bottleneck models largely focus on understanding where in the cognitive processing chain there are structural limitations on the flow of information. Broadbent's (1958) Filter Theory of Attention placed a single-channel processing bottleneck just prior to perceptual analysis, such that only one stimulus can be perceived at a time while the other is held in an echoic or image queue. Thus, there is no limitation on what enters the system—processing constraints are driven by the structure of the mental architecture, which determines the amount of information that can pass through an attentional filter at a given time. Information that is not processed decays. In a cocktail party scenario in which a target speech stream occurs in the presence of one or more nontarget streams, this filter theory would predict that the unattended speaker is not perceived unless the listener switches attention to the previously unattended information before it decays.

Other filter-based structural models specify the location and control of the perceptual bottleneck differently. For example, Treisman's Attenuation Model (Treisman, 1964) also specified a bottleneck early on in perception, but unattended stimuli are attenuated rather than filtered out completely. Late selection models, such as that of Deutsch and Deutsch (1963), posited that the bottleneck exists just prior to response selection. In this case, all cocktail party signals would be heard, but only one can be responded to at once. Alternative structural theories placed greater emphasis on the selection of relevant information rather than the filtering out of irrelevant information (“analysis by synthesis”; Neisser, 1967).

One of the multitasking paradigms that were used to develop and test structural bottleneck models is the aforementioned psychological refractory period (PRP) paradigm (Figure 2c bottom right). The PRP paradigm is based on structural accounts’ assumption that processing takes place in a series of discrete steps and that parallel processing is impossible for certain mental processes. Generally, in PRP tasks, there are two tasks (T1, T2), each of which involves stimulus presentation (S1, S2) and requires a speeded response (R1, R2, respectively). For example, these may include auditory tone identification and visual letter identification. Stimuli are presented with varying stimulus-onset asynchronies (SOAs). The premise of this task is that, to the extent that the two stimuli cannot be processed in parallel, shorter SOAs will lead to larger slowdowns in the S2-to-R2 response time (PRP effect), but generally will not affect S1-to-R1 response times. A PRP effect indicates that some aspects of S1 processing (e.g., S1-based selection of R1 processing, such as response selection, activation, and retrieval) had to be completed before S2 processing could be initiated, indicative of a structural bottleneck for that particular process.
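The logic of the PRP effect can be illustrated with a minimal sketch of a central-bottleneck account. The sketch assumes that each task consists of three serial stages (perceptual, central, and motor) and that only the central stage cannot run for both tasks at once; this three-stage decomposition, the function name, and all stage durations are simplifying assumptions chosen for illustration rather than estimates from any study.

```python
def prp_response_times(soa, t1_stages=(100, 150, 80), t2_stages=(100, 150, 80)):
    """Predicted (RT1, RT2) in ms, each measured from its own stimulus onset.

    Each stage tuple holds (perceptual, central, motor) durations. S1 is
    presented at time 0 and S2 at time `soa`. The central stage of T2
    cannot start until the central stage of T1 has finished.
    """
    perceptual_1, central_1, motor_1 = t1_stages
    perceptual_2, central_2, motor_2 = t2_stages

    rt1 = perceptual_1 + central_1 + motor_1

    bottleneck_free_at = perceptual_1 + central_1   # T1 central stage ends
    t2_ready_at = soa + perceptual_2                # T2 perception ends
    t2_central_start = max(t2_ready_at, bottleneck_free_at)

    rt2 = t2_central_start + central_2 + motor_2 - soa
    return rt1, rt2


for soa in (0, 50, 150, 300, 600):
    rt1, rt2 = prp_response_times(soa)
    print(f"SOA={soa:>3} ms  RT1={rt1} ms  RT2={rt2} ms")
```

Under these assumptions, RT1 is constant across SOAs, whereas RT2 lengthens as the SOA shortens and returns to its single-task value once the SOA is long enough for the central stage of T1 to finish before T2 needs it.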

Using the PRP paradigm, research has generally identified response selection as the structural bottleneck (Pashler, 1984, 1994). Notably, however, bottleneck effects have also been observed using hybrid-PRP paradigms (Koch et al., 2018) in which a primary memory encoding task is observed to interfere with a secondary RT task and vice versa (Jolicœur & Dell’Acqua, 1998). As evidence that the structural bottleneck is determined by central (not sensory or motoric) limitations, the PRP effect has also been demonstrated when stimuli are presented in different sensory modalities and responses require different motor systems (e.g., button press, verbal response, or eye movements; Pashler, 1990).

Although the PRP paradigm differs in some key aspects from the dual-task paradigms used to measure listening effort, one takeaway from this literature is the importance of specifying which processes subsumed under the general term “listening” contribute to observed processing costs. In particular, an overlap in response selection processes (Pashler, 1984, 1994) or between memory encoding and response selection processes (Koch & Prinz, 2002; Koch & Jolicœur, 2007) may underlie a significant portion of the interference effects that contribute to dual-task cost metrics.

Capacity-Limited Models

In contrast to structural bottleneck models, capacity models specify that processing limitations do not depend solely on the channel via which information is transmitted and processed, but also on the size of the one or more pools of mental resources allocated to a task. Though beyond the scope of the current paper, the nature of these resources has long been, and continues to be, up for debate (e.g., Kurzban et al., 2013; Navon, 1984).

In arguably the most influential single-capacity model, Kahneman built upon structural models by positing that there are two components necessary for task completion: “an information input specific to that structure, and a nonspecific input, which may be variously labeled ‘effort,’ ‘capacity,’ or ‘attention’” (Kahneman, 1973, p. 9). His use of neural/physiological labels itself represented a major departure from the information-theory perspectives that had largely inspired the models of attention at the time. Compared to structural models, capacity-limited models were proposed to allow for greater flexibility in how attention can be directed at a given moment. As will be further discussed, it is important to highlight that structural and capacity-limited models are not mutually exclusive. Kahneman very clearly stated that both are necessary for describing the allocation of attention: a capacity-limited set of mental resources that can be flexibly allocated to process stimuli within an inherently structurally limited mental architecture.

Single-Capacity Models

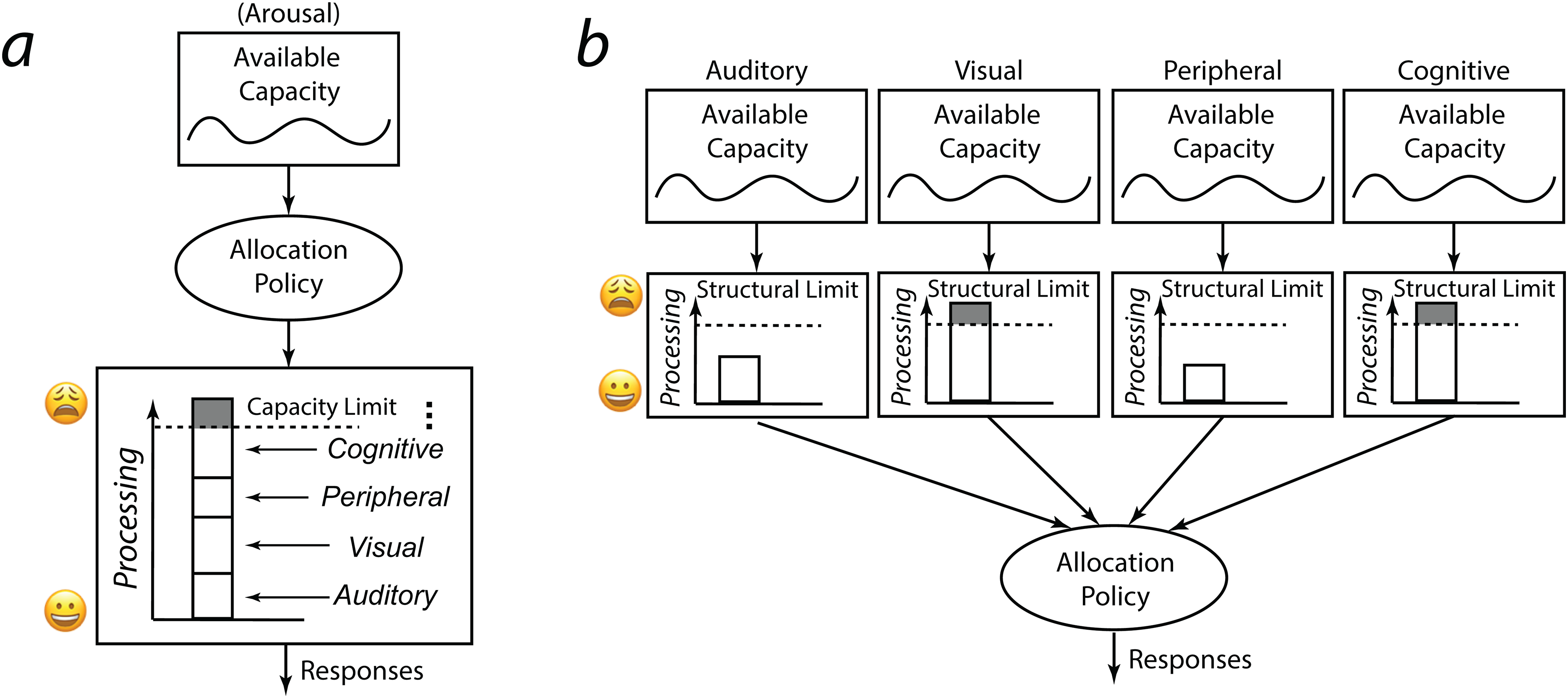

In studies of listening effort, the most commonly cited theoretical model, FUEL (Pichora-Fuller et al., 2016), is rooted in the capacity-limited model of attention developed by Kahneman (1973). In his seminal book, Kahneman (1973) described a general pool of attentional resources that is limited in the total amount that can be deployed at a given moment. Figure 3a depicts the central portion of the model in which effort and attention are driven by the evaluation of task demands. An allocation policy selects and directs resources from the generic attentional pool to tasks requiring mental resources (i.e., not early sensory processing). Any such auditory, visual, peripheral, and cognitive demands draw upon the total available resource capacity. Factors that can impact the allocation of resources include involuntary attention to novel stimuli, changes in demands that emerge over time, and changes in arousal state or motivation. For example, more capacity may be available when arousal is at a moderate level than when it is very low. While low arousal may impede people from representing the task set and hinder performance monitoring, overly high arousal disrupts allocation.

For illustrative purposes, two classes of models of attention and effort are presented here. (a) Single-capacity model. This diagram is inspired by Kahneman's Capacity Model of Attention (Kahneman, 1973) and recapitulated in the FUEL model (Pichora-Fuller et al., 2016). The available capacity in this model can be modulated by many factors (here, showing arousal). There can be many factors that would influence the allocation policy (not shown in this figure), but the possible processing involved leading to a response can potentially be driven by different sensory domains (e.g., auditory and/or visual) as well as different levels (e.g., peripheral and/or cognitive). How these individual components are combined and potentially exceed the capacity limit is often not well described. (b) Multiple capacity model. This diagram is built on the single-capacity model in (a) but further expands on how the limits can be delimited structurally for each domain/level of processing.

The main differences between structural bottleneck and single-capacity-limited models lie in the extent to which task interference is driven by mechanism-specific demands (structural bottlenecks) or by relative task difficulty (capacity-limited). While structural models describe the sequence of operations that can be performed on a set of concurrently appearing stimuli, capacity models describe the relationships among components of the system. Capacity models highlight that there is more flexibility in how attention can be allocated at a given time than would be permitted under a filter-based theory alone. For example, while structural models posit that response selection always happens in a serial manner, capacity-based models indicate that processes can occur either serially or in parallel, primarily depending on the overall task difficulty, which can be impacted by one's arousal state and other contextual factors.

Multiple Capacity Models

A critical component of Kahneman's single-capacity model of attention is that “interference is nonspecific, and it depends only on the demands of both tasks” (Kahneman, 1973, p. 11). However, there has long been evidence that capacities are more specific than Kahneman postulated, in much the same way that Baddeley and colleagues revealed that the construct of working memory is made up of multiple subsystems which differentially contribute to attention switching (for a review, see Repovš & Baddeley, 2006). Around the time his book was published, studies had already demonstrated greater task interference not only with greater task difficulty, but specifically when two tasks are more similar in their nature (e.g., Brooks, 1968). For example, less task interference had been observed for a primary auditory shadowing task when the second task involved monitoring for visual pictures compared to attending to another auditory speech stimulus (Allport et al., 1972). Early explanations of these types of results alluded to multiple capacities being necessary, such as: “a number of special purposes computers (processors and stores) operating in parallel and, at least in some cases, capable of accepting only one message or ‘chunk’ of information for processing at one time” (Allport et al., 1972, p. 233). The notion of specialized processors (see Figure 3b) was also taken up by others around the same time (Sanders, 1979).

Indeed in his book, Kahneman (1973) recognized: “For an effort theory, the occurrence of interactions between tasks is a complication, because the attractive notion that effort demands of concurrent tasks are additive must be abandoned whenever such interactions occur. It is obviously impossible to predict the amount of interference between two tasks solely on the basis of their separate demands for effort. Overlap, similarity, and mutual compatibility must also be considered. However, it appears equally impossible to account for the phenomena of interference without reference to the role of task difficulty. Thus, it is useful to retain the term of structural interference for situations of strong interaction between similar tasks, and to apply the label of capacity interference to situations where difficulty is the main determinant of results” (p. 199).

Thus, Kahneman seems to have suggested that observations of increased interference between more similar tasks are indicative of “structural interference” in which two tasks utilize the same perceptual or response processes, which are outside the domain of the attentional systems that drive changes in effort, namely “capacity interference.” Kahneman defined structural interference as occurring when two tasks that are equally difficult when performed alone (Tasks A and B) yield unequal difficulty when each is combined with a third task (Task C). For example, finding that A + C > B + C indicates that there has been some interaction between Task A and Task C that is not purely additive. However, there has been a substantial amount of subsequent research showing that such interference effects are common. For example, Wickens et al. (1983) observed greater interference effects between a primary visuomotor tracking task and a secondary task that included visual stimuli and/or manual responses compared to one that included auditory stimuli and/or verbal responses.

In contrast to models like Kahneman's in which the class of central mechanism was not specific, content-dependent models stated that the content of the central systems required by tasks can, in fact, impact interference effects, either in terms of shared properties of the stimuli or the response (e.g., Navon & Miller, 1987; Pashler, 1990) or the linkages between the two (Hazeltine et al., 2006). Perhaps the most well-known example of content-dependent models posits multiple resources (Allport et al., 1972; Navon & Gopher, 1979; Wickens, 1980, 1984).

As sketched in Figure 3b, multiple resource theory posits that the degree of dual-task interference depends on specific central resources. In Wickens’ multiple resource framework (1980, 1984), interference is impacted by the nature of the tasks along three main dimensions: central codes of processing (e.g., spatial vs. verbal), stimulus input modality (e.g., auditory vs. visual), and response output modality (e.g., manual vs. verbal). A fourth, visual channel dimension (focal vs. ambient) was later added within visual resources (Horrey et al., 2006). In computational models (for an overview see Wickens, 2021), total interference between two concurrent tasks is thus predicted by the sum of the resources demanded by each task (ordinal scale for each task: 0 [automated], 1 [easy], to 2 [difficult]) and the degree of required overlap among those resources (binary for each possible dimension: 0 or 1).
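The two components described above (summed task demands plus overlap across dimensions) can be made concrete with a minimal sketch. It follows only the verbal description in this section: the `Task` class, the dimension labels, and the unweighted sum are illustrative assumptions, and published computational versions may weight or combine the components differently.

```python
from dataclasses import dataclass

# The three main dimensions named in the text. The attribute names and the
# string labels assigned to them are hypothetical, chosen for illustration.
DIMENSIONS = ("code", "input_modality", "response_modality")


@dataclass(frozen=True)
class Task:
    name: str
    demand: int             # 0 = automated, 1 = easy, 2 = difficult
    code: str               # e.g., "verbal" or "spatial"
    input_modality: str     # e.g., "auditory" or "visual"
    response_modality: str  # e.g., "verbal" or "manual"

    def __post_init__(self):
        if self.demand not in (0, 1, 2):
            raise ValueError("demand must be 0, 1, or 2")


def predicted_interference(t1: Task, t2: Task) -> int:
    """Summed demand plus one point per dimension the two tasks share."""
    demand_component = t1.demand + t2.demand
    overlap_component = sum(getattr(t1, d) == getattr(t2, d) for d in DIMENSIONS)
    return demand_component + overlap_component


# Hypothetical task descriptions, not taken from any cited study.
listening = Task("word recognition", 2, "verbal", "auditory", "verbal")
visual_detection = Task("visual detection", 1, "spatial", "visual", "manual")
semantic_judgment = Task("semantic judgment", 1, "verbal", "auditory", "manual")

print(predicted_interference(listening, visual_detection))   # 3
print(predicted_interference(listening, semantic_judgment))  # 5
```

In this toy example, the two secondary tasks are equally demanding, yet the one that shares a processing code and an input modality with the listening task is predicted to interfere more.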

However, some have noted that such models are more akin to a redescription of the data than an explanation of why two tasks are likely to generate interference (Navon, 1984; Neumann, 1996). It can be challenging to know which resources a task is likely to engage a priori (Allport, 1980). Furthermore, the small set of dimensions specified in multiple resource theories is unlikely to account for the types of interference observed (Neumann, 1987). Though beyond the scope of the current paper, some theories have posited that specifying limited capacities may not be necessary to explain the properties of attention at all (Sanders, 1983).

While the specifics of these computational models are still being debated, there is much to be learned from the development of multiple capacity theories. Specifically, in contrast to the single-capacity model that has formed much of the basis of current research on listening effort, multiple capacity models emphasize that there is more than one type of resource capacity, and these resources may interact, affecting how effort is ultimately allocated.

Considerations When Using a Dual-Task Paradigm to Measure Listening Effort

Extensive research in the field of hearing sciences has examined the relative advantages and disadvantages of myriad purported metrics of listening effort (McGarrigle et al., 2014). In particular, the dual-task cost is a behavioral measure of effort that avoids potential subjective biases that can color self-report ratings (Moore & Picou, 2018), while being easier to implement and less costly than physiological or neural measures (for a review of listening effort measures’ application to audiology see Kuchinsky & DeRoy Milvae, 2024). Of course, the dual-task paradigm also affords researchers the opportunity to manipulate multiple types of task demands to examine their additive or interactive impact on listening.

A number of theoretical and methodological concerns currently preclude the widespread, standardized use of dual-task measures of effort in the laboratory and in clinical settings. Indeed, reviews of the listening effort literature have observed weak or inconsistent effects across dual-task studies, likely due in part to variations in design (Gagné et al., 2017). For example, one such review addressed two seemingly straightforward questions: whether hearing loss leads to greater listening effort and whether hearing aids reduce it (Ohlenforst, Zekveld, Jansma et al., 2017). For the first question, half of the reviewed findings indicated greater effort and slightly less than half showed no difference. For the second question, 14 studies observed reduced effort, six found no difference, and two indicated an increase in effort. Dual-task paradigms, in particular, were observed to yield “low quality of evidence” due in large part to a lack of consistency across study designs, while greater consistency was observed with pupillometry or EEG measures of effort.

Inconsistencies across dual-task studies were also noted in a review of the listening effort literature that compared cochlear implant (CI) users to normal-hearing or hearing-impaired non-CI user controls and compared unilateral, bilateral, and bimodal CI user groups to one another (Philips et al., 2023). Dual-task experiments yielded no differences between CI users and controls or across CI groups. This review also observed that pupillometry and EEG studies were more sensitive to differences in effort.

Together, these reviews suggest that dual-task studies may be particularly susceptible to variability driven by design choices. The next section describes some of these design limitations and proposes both theoretical and high-level methodological considerations for future research that may improve the standardization and interpretability of dual-task measures of listening effort. Although it is not yet possible to write a recipe book for designing the ideal dual-task study for a given question, we argue that closer connection to the existing literature can provide a framework upon which researchers can more solidly justify their study choices.

Consideration 1 (Theoretical): Start With a Well-Specified Model of Attention and Effort

The observation that subjective report, behavioral, physiological, and/or neural measures of effort do not commonly covary with one another has led, in part, to the conclusion that listening effort is a multidimensional construct (Alhanbali et al., 2019; Strand et al., 2018). Based on these findings alone, however, it is difficult to tease apart whether listening effort is a singular construct into which individual measures tap differentially well (in line with single-capacity models, Figure 3a) or whether listening effort is composed of multiple distinguishable mechanisms (in line with multiple capacity models, Figure 3b). One might propose moving away from the broad term “listening effort” which implies the former and toward a collection of terms like “perceptual effort,” “lexical access effort,” “response selection effort”, etc. as indicated by the latter. For example, if one's research question is about the mental resources required by perceptual processes, or about interactions between perceptual and response selection effort, the hypotheses (and study design, see Consideration 2 below) should be based on the specific supporting theoretical models. Strand et al. (2020) highlight the potential consequences that a lack of construct validity has on the consistency of study results and thus our understanding of listening effort. Aligned with many of our considerations that follow, they recommend increased transparency and rigor in the way that listening effort is defined and measured.

We recognize the challenge of this consideration as there is more work to be done to understand the mental and neural underpinnings of listening effort (Peelle, 2018). For example, the degree of granularity of the potential subcomponents of listening effort must be the subject of additional research. Given that the number and nature of mental functions are not thoroughly delineated (Poldrack & Yarkoni, 2016), some models of listening effort have acknowledged that multiple pools of resources contribute to effortful listening but have remained “agnostic” with respect to the nature of those resources (Herrmann & Johnsrude, 2020). Though beyond the scope of the current paper, we urge interested researchers to continue to connect to the theories and models of attention and effort that have been investigated outside the domain of listening. For example, there has been extensive research into the questions of what mental resources are and how to model effort in the fields of social psychology, neuroscience, and neuroeconomics (Kurzban et al., 2013; Shenhav et al., 2017; Westbrook & Braver, 2015). Similarly, weak correlations among measures of other cognitive functions, such as attention control and working memory capacity, have supported the idea that multiple measures are necessary to extract a latent index of complex cognitive constructs (for a review, see Unsworth et al., 2021).

As we detail in each of the subsequent sections, we contend that explicit consideration of the model of effort and nature of the mental resources that drive a study's hypotheses, method, and interpretation is critical for moving the field forward. The previous sections aimed to highlight that much work has already been done to inform our understanding of the relationship between theoretical models and measures of effort. Of particular note is that studies of listening effort have generally emphasized the role of capacity-limited resources in driving effort over structural limitations. However, capacity and structural limitations are not mutually exclusive, despite how discussions are often framed in the current literature. Kahneman (1973) stated as much: “Studies of selective and divided attention indicate that the deployment of attention is more flexible than is expected under the assumption of a structural bottleneck, but it is more constrained than is expected under the assumption of free allocation of capacity. A comprehensive treatment of attention must therefore incorporate considerations of both structure and capacity” (p. 11). Despite the complementary perspectives of these models, we suggest that dual-task listening effort studies have broadly focused on capacity, and specifically on a single generic capacity, while ignoring or being agnostic to the architecture of the attention systems that jointly determine effort allocation.

As illustrated in Figure 3c, Koch et al.'s (2018) review highlights that capacity-limited and structural models of dual-task performance can be better integrated with appreciation of cognitive flexibility. One perspective on integrating capacity limitations into structural bottleneck accounts is to recognize that the central processing stage is structurally limited, but that it allows for flexibility in how those resources are allocated to the myriad task processes. Indeed, research on structural bottleneck accounts has acknowledged that “evidence for a bottleneck in some particular pair of tasks is not evidence against the entire capacity-sharing perspective, because that perspective grants that sequential processing is a possible strategy” (Pashler, 1984, p. 359). In this view, central capacity and bottleneck accounts can yield the same predictions (Navon & Miller, 2002; Tombu & Jolicœur, 2003), with structural bottlenecks merely being a special, more restrictive case of capacity-sharing in which stimuli are processed serially. That is, a strict structural bottleneck account might posit that 100% of a central capacity be allocated to T1 and 0% to T2 at a given time (or vice versa), whereas a broader capacity-sharing account instantiates parallel processing by allowing for the allocation of any percentage split across tasks. As we detail in the sections to follow, it is important for the design of dual-task studies of listening effort to understand the conditions under which processing is likely to occur in a more parallel versus serial fashion.
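The idea that a strict bottleneck is a special case of capacity sharing can be illustrated with a small simulation. The sketch below is a toy model and not the implementation used in any of the cited papers. It assumes that each task has a precentral, a central, and a postcentral stage, that only the central stage draws on the shared capacity, and that all durations (in ms) are arbitrary. The function names and the parameter `share_t1` (the proportion of central capacity given to T1 while both central stages are active) are hypothetical.

```python
def central_finish_times(start1, work1, start2, work2, share_t1):
    """Finish times of two central stages that share one unit-rate capacity.

    While both stages are active, T1 progresses at rate share_t1 and T2 at
    rate 1 - share_t1. A stage that is active alone gets the full capacity.
    """
    if not 0.0 <= share_t1 <= 1.0:
        raise ValueError("share_t1 must lie between 0 and 1")
    start = [start1, start2]
    remaining = [work1, work2]
    finish = [None, None]
    t = min(start)
    while None in finish:
        active = [i for i in (0, 1) if finish[i] is None and start[i] <= t]
        if not active:  # idle gap: jump to the onset of the waiting stage
            t = min(start[i] for i in (0, 1) if finish[i] is None)
            continue
        if len(active) == 2:
            rates = [share_t1, 1.0 - share_t1]
        else:
            rates = [0.0, 0.0]
            rates[active[0]] = 1.0
        # Next event: an active stage finishes or a waiting stage begins.
        events = [t + remaining[i] / rates[i] for i in active if rates[i] > 0]
        events += [start[i] for i in (0, 1)
                   if finish[i] is None and start[i] > t]
        t_next = min(events)
        for i in active:
            remaining[i] -= rates[i] * (t_next - t)
            if remaining[i] <= 1e-9:
                finish[i] = t_next
        t = t_next
    return finish[0], finish[1]


def dual_task_rts(soa, share_t1, pre=(100, 100), central=(200, 200),
                  post=(100, 100)):
    """Response times for T1 and T2, each measured from its own onset."""
    end1, end2 = central_finish_times(pre[0], central[0],
                                      soa + pre[1], central[1], share_t1)
    return end1 + post[0], end2 + post[1] - soa


for share in (1.0, 0.5):  # 1.0 = strict bottleneck, 0.5 = equal sharing
    print(share, dual_task_rts(soa=50, share_t1=share))
```

With these illustrative durations and a 50 ms SOA, setting `share_t1` to 1.0 reproduces serial processing (T1 responds after 400 ms, T2 after 550 ms), whereas an equal split slows T1 to 550 ms and leaves the T2 response time unchanged. At a long SOA the central stages no longer overlap and both settings give the same response times, which is one reason the two accounts can be difficult to distinguish from secondary-task performance alone.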

In the following sections, we aim to be explicit about how greater consideration of models of attention can help guide the way in which studies of listening effort are designed and interpreted. Though such connection to theoretical models would benefit all studies of listening effort, we assert that it is particularly critical for studies that employ the dual-task paradigm. Specifically, as noted, dual-task studies have been observed to yield inconsistent patterns of effects across studies, seemingly due in large part to variations in task designs (Gagné et al., 2017; Ohlenforst, Zekveld, Jansma et al., 2017; Philips et al., 2023).

Consideration 2 (Methodological): Task Design Should be Guided by One's Model of Attention and Effort

Any researcher designing a dual-task study is likely to have wrestled with the sheer number of decisions that must be made, each of which potentially impacts the likelihood of obtaining a dual-task cost estimate (Gagné et al., 2017). While the selection of the primary task is often fairly clear as it drives the theoretical question, of greater challenge is the selection of the secondary task (particularly its nature and timing relative to the primary task) as well as the structure of the entire task (the nature of the baseline conditions and the manner in which those are interleaved with dual-task conditions).

Secondary Task(s)

Inherent in the design of a dual-task or multitasking paradigm is a commitment to deciding when and which mental processes compete for shared resources. In studies of listening effort, the primary task (T1, Figure 2a) will involve listening and responding to an auditory stimulus. If one has adopted a single-resource model of attention (Kahneman, 1973; Pichora-Fuller et al., 2016), then the secondary task could be any task, so long as it consistently requires mental resources across the experiment, is sensitive to changes in primary task difficulty (Fisk et al., 1986), and does not physically interfere with the primary task (e.g., button response to both tasks). Single-capacity models do not distinguish between the specific perceptual and cognitive subprocesses engaged by the task. Thus, to assess the effort associated with speech recognition in a task eliciting verbal responses, dual-task cost measures could be obtained just as well with equally difficult secondary tasks that involve visual stimulus monitoring and detection (T2, Figure 2a) with button-press responses (Downs, 1982) as with one that involves performing mathematical operations (T3, Figure 2a). However, if the nature of the resources across tasks matters, as posited by multiple capacity models (Hazeltine et al., 2006; Wickens, 2008), then secondary tasks that exhibit greater overlap with the primary task in the stages and codes of processing should generate more interference and thus potentially more robust measures of effort.

A few studies have examined the impact of secondary task design on measures of listening effort with differing outcomes. Picou and Ricketts (2014) asked participants to recognize words in quiet or noise and with or without audiovisual cues. The secondary task was a simple visual detection task, a complex visual categorization task, or a semantic judgment task (i.e., whether the just-heard word was a noun). The semantic task yielded the largest effects of noise on effort (but not audiovisual presentation), which was thought to arise from its engagement of a higher level of processing and thus the most dual-task interference. Wu et al. (2016) did not find an effect of secondary task complexity (visual reaction time task vs. incongruent-items Stroop task) on measures of effort from a sentence-in-noise recognition task. Kuchinsky et al. (2023) observed larger dual-task cost estimates of effort with a simpler visual detection (vs. categorization) secondary task, particularly for words-in-noise stimuli that were more linguistically challenging (i.e., less common in English). Across these three studies then, larger, zero, and smaller changes in speech-in-noise effort were observed with manipulations of the nature of the secondary task. All studies recognized that variations in design choices across tasks have the potential to impact the patterns of results across dual-task experiments (Gagné et al., 2017). Minimally, these findings suggest that not considering the nature and timing of the secondary task in relation to the primary task can lead to inconsistent results across studies, which is a critical problem for replicability of results as well as for the development of a standardized measure of effort.

On the one hand, the number of parameters that require decisions when designing a dual-task study can seem overwhelming. On the other hand, these options afford an underutilized (in the domain of listening effort) advantage of the dual-task paradigm that systematic manipulations of the nature and timing of the tasks can provide mechanistic insight into the systems that drive listening effort. As reviewed, such manipulations were generally the purpose of employing a dual-task paradigm as tests of structural and capacity models of attention. We contend that the findings from these studies should inform task selection and timing decisions of studies that aim to calculate dual-task cost measures of effort.

Understanding structural models of attention can help to identify the critical subprocesses that may underlie measures of effort in a dual-task cost paradigm. For example, Trumbo and Noble (1970) had participants perform a primary task in which they learned a list of nonsense consonant-vowel-consonant syllables. They examined the impact of secondary tasks that involved attending to sequences of lights but were designed to differentially interfere with one or more of four different stages of processing: (1) stimulus preprocessing (or representation formation), (2) stimulus classification, (3) response selection, and (4) response execution. In addition to (a) a no-task control condition, secondary tasks were designed to interfere with (b) the initial two stages (implicit sequence learning task with no response), (c) the latter two stages (free response task), (d) all stages except response selection (shadowing task), and (e) all stages (anticipatory sequence learning task with a response). Only tasks that required response selection (c and e) were disruptive, irrespective of task difficulty (i.e., free response was more disruptive despite being less complex than the visual sequence learning task). In the context of listening studies, these findings suggest that dual-task cost might be driven by factors that impact response selection processes rather than by purely auditory perceptual processes. Neural and physiological methods may lend themselves more easily to teasing apart the contribution of these component processes to effort (e.g., Fleming et al., 2024; Kuchinsky et al., 2013).

Brown (1968) noted the importance of considering structural interference between the primary and secondary tasks, in addition to task difficulty, when measuring cognitive load. Tasks that impose high motor load and those that impose high perceptual or conceptual load likely have different effects on secondary tasks (Brown et al., 1967). Because Brown observed that dual-task cost measures are a function of both capacity and structural interference, he asserted that cost is best measured via a battery of secondary tasks rather than by a single task.

The inclusion of multiple within-subject controls has the added advantage of potentially yielding results that may be even more sensitive to differences at the level of the individual (Brungart et al., 2022), which has the potential for greatest clinical impact. Ideally, the choice of secondary tasks should most align with the research question. For example, if the goal is to measure listening effort within a population where deficits are expected, such as older adults or other clinical populations (e.g., individuals at elevated fall risk), then at least one of the secondary tasks should reflect multitasking challenges that are likely to arise in their daily lives (e.g., walking while talking paradigm; Beauchet et al., 2009; Kemper et al., 2003). Whenever possible, piloting and/or basing task selection on published designs collected within the population of interest may be critical for selecting a sufficient set of secondary tasks. This may be particularly important if timing or other methodological details are sparse in the literature or must be adjusted in the current design.

Block and Trial Structures

As described in the section on dual-task interference, when needing to attend to two or more tasks, there can be costs beyond the dual-task cost (Koch et al., 2018). Careful consideration of the potential for these additional costs to contribute to dual-task effort calculations is important for the design of listening effort studies and ultimately the interpretability of study results. In particular (see Figure 2c), to the extent that T2 overlaps in time with T1, PRP effects should be considered. Switch and mixing costs may arise with changes in task goals within versus across blocks (mixing costs) or within versus across trials (switch costs).

While practice with the tasks can help minimize switch and mixing costs, it cannot fully eliminate them (e.g., Karbach & Strobach, 2022; Strobach et al., 2012), and these costs can thus influence the calculation of dual-task cost measures of effort. Furthermore, factors such as aging can alter the likelihood of observing additional costs (Krampe et al., 2011). When task-set switches are anticipated, it is important to try to estimate their contribution with the selection of additional baseline conditions, such as by (for switch costs) including blocks that contain no switches or (for mixing costs) counterbalancing the order of switch and nonswitch blocks. Importantly, if task prioritization instructions are to split attention across tasks (rather than prioritizing the primary task), estimating these “hidden” costs becomes much more challenging.
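To illustrate how additional baseline conditions allow these costs to be separated, the following minimal sketch computes switch and mixing costs from trial-level response times. It uses the contrasts that are conventional in the task-switching literature (switch versus repeat trials within mixed blocks, and repeat trials in mixed blocks versus trials in single-task blocks). The field names (`block`, `trial_type`, `rt`) and the example values are hypothetical.

```python
from statistics import mean


def mean_rt(trials, block, trial_type=None):
    """Mean response time for one block type and, optionally, one trial type."""
    rts = [t["rt"] for t in trials
           if t["block"] == block
           and (trial_type is None or t["trial_type"] == trial_type)]
    if not rts:
        raise ValueError(f"no trials for block={block!r}, trial_type={trial_type!r}")
    return mean(rts)


def switch_and_mixing_costs(trials):
    """Separate the two costs using single-task and mixed-block baselines."""
    single = mean_rt(trials, "single")           # single-task block baseline
    repeat = mean_rt(trials, "mixed", "repeat")  # task repeats in mixed blocks
    switch = mean_rt(trials, "mixed", "switch")  # task switches in mixed blocks
    return {"mixing_cost": repeat - single, "switch_cost": switch - repeat}


# Made-up response times (ms) for illustration only.
example_trials = [
    {"block": "single", "trial_type": "repeat", "rt": 500},
    {"block": "single", "trial_type": "repeat", "rt": 520},
    {"block": "mixed", "trial_type": "repeat", "rt": 560},
    {"block": "mixed", "trial_type": "repeat", "rt": 580},
    {"block": "mixed", "trial_type": "switch", "rt": 640},
    {"block": "mixed", "trial_type": "switch", "rt": 660},
]
print(switch_and_mixing_costs(example_trials))
```

Without the single-task blocks, the mixing cost in this example (60 ms) could not be distinguished from other sources of slowing in the mixed blocks.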

Consideration 2 Summary

In this section, we highlighted how previous models of attention and task-switching can inform dual-task studies of listening effort, both in terms of the selection of secondary tasks and the design of the overall block and trial structure. The overarching takeaway is that more careful consideration of control conditions is necessary to interpret dual-task cost calculations. Based on previous research, recommendations include collecting multiple within-subject control conditions that allow researchers to tease apart dual-task versus mixing and switch costs in conjunction with primary-task prioritization instructions. Additionally, more reliable results are expected when using more than one secondary task, particularly tasks that are more relevant to the mechanisms thought to underlie listening effort and to the real-world multitasking experiences of the population of study.

Consideration 3 (Methodological): Anticipate and Check Dual-Task Assumptions

The dual-task paradigm entails a number of task design assumptions that can be challenging to meet but are important for the interpretability of study results (Fisk et al., 1986).

Assumption 1. Secondary Tasks Require Mental Resources

The first assumption, as stated by Fisk et al. (1986), is that the secondary task requires mental resources (i.e., is not automatically processed) and does so consistently across the experiment (i.e., the load does not change throughout). Tasks that have so-termed consistent mappings between stimuli and responses may become automatically processed, and thus not require effortful resources (Schneider & Shiffrin, 1977; Shiffrin & Schneider, 1977). Automaticity could develop with practice, for example, as individuals indicate the position of an object on a screen (say, a square in the upper left corner) with the same button response on every appearance (say, the upper leftmost button option in a grid). To maximize interference, Fisk et al. advise that a secondary task should involve searching for novel targets, present stimuli near perceptual threshold, or require variable stimulus–response mappings.

Assumption 2. Participants Do Not Shift Their Attention Away From the Primary Task

The second assumption is that participants do not shift their attention prioritization away from the primary task in the presence of a secondary task (i.e., consistent primary task performance). However, there is ample evidence that performance on T1 is not in fact immune to T2 processing. In a review, Strobach et al. (2014) found that more than half of the analyzed studies showed poorer T1 performance or longer T1 response times for short T1-to-T2 SOAs. Longer response times and less accurate T1 performance have also been observed when T1 is performed as part of a dual-task versus a single-task condition (Jiang, 2004; Sigman & Dehaene, 2006) and with greater uncertainty about the secondary tasks (for a review see Schubert, 2008), reflecting a global cost associated with representing two distinct task-sets across the experiment (Koch et al., 2018). Recent listening effort studies have indeed observed that even with explicit instructions, primary and secondary task prioritization strategies can vary substantially in different listening conditions (Ceuleers et al., 2024) and across individuals (Kestens et al., 2024).

Figure 4 further illustrates these potential concerns. The white and orange bars in the leftmost panels of Figure 4a restate the canonical calculation for dual-task cost measures of effort shown in Figure 1: decrements in secondary task performance when performing T1 and T2 together relative to performing T2 alone. Assumption 2 states that there should be no change in performance on the primary task when moving from single to dual-tasking conditions (T1 + T2 example in Figure 4a). However, the simple linear subtraction calculation of dual-task cost is complicated when this assumption is not met: dual-task cost is now a function of both primary and secondary task difficulty (T1 + T3 example in Figure 4a). How much should one thus penalize the standard dual-task cost calculation for this shift in attention? One strategy might be to only calculate cost based on trials for which the primary task response was correct, which has been shown to yield similar results to an all-trials analysis (Huang et al., 2023), but may be more appropriate for this concern. However, correct primary task responses alone do not indicate that primary-task attention prioritization was maintained in dual-tasking conditions (e.g., if participants got the answer right by chance or if there were subtle shifts of attention that did not manifest as performance changes).
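The subtraction logic described above, together with a simple check on Assumption 2, can be sketched as follows. This is an illustration under stated assumptions and not a recommended analysis pipeline: performance is scored as proportion correct, the `tolerance` for an acceptable change in primary task performance is an arbitrary placeholder, and the function and argument names are hypothetical.

```python
def accuracy(outcomes):
    """Proportion correct from an iterable of True/False trial outcomes."""
    outcomes = list(outcomes)
    if not outcomes:
        raise ValueError("no trials to score")
    return sum(outcomes) / len(outcomes)


def dual_task_summary(t1_alone, t2_alone, dual_trials,
                      primary_correct_only=False, tolerance=0.05):
    """Subtraction-based costs for the secondary (T2) and primary (T1) tasks.

    dual_trials is a list of (t1_correct, t2_correct) pairs from the
    dual-task condition. tolerance is an arbitrary, illustrative threshold.
    """
    t1_dual = accuracy(t1 for t1, _ in dual_trials)
    # Optionally score T2 only on trials with a correct primary response.
    kept_t2 = [t2 for t1, t2 in dual_trials if t1 or not primary_correct_only]
    secondary_cost = accuracy(t2_alone) - accuracy(kept_t2)
    primary_cost = accuracy(t1_alone) - t1_dual
    return {
        "secondary_cost": secondary_cost,  # canonical dual-task cost
        "primary_cost": primary_cost,      # should be near 0 under Assumption 2
        "assumption_2_flag": abs(primary_cost) > tolerance,
    }


# Made-up outcomes for illustration only.
t1_alone = [True] * 9 + [False]
t2_alone = [True] * 10
dual = [(True, True)] * 6 + [(True, False)] * 2 + [(False, False)] * 2

print(dual_task_summary(t1_alone, t2_alone, dual))
print(dual_task_summary(t1_alone, t2_alone, dual, primary_correct_only=True))
```

In this made-up example, primary task accuracy drops from 0.9 to 0.8 under dual-task conditions, so the flag is raised, and the secondary cost shrinks from about 0.40 to about 0.25 when only trials with correct primary responses are scored. As noted above, restricting the analysis in this way does not by itself establish that primary-task prioritization was maintained.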

(a) T1 and T2 indicate typical dual-task cost measures of effort calculations (as in Figure 1). However, violations of Assumption 2 may be expected to manifest differently depending on one's theory of attention allocation. (b) Dual-task cost calculations under single-capacity model assumptions. (c) Dual-task cost calculations under multicapacity model assumptions.

The interpretation of shifts in attention prioritization differs depending on whether one subscribes to a single- versus multiple-capacity theory of attention. In both (Figure 4b and c), dual-task costs arise when the total processing resources required (y-axis) exceed those available (i.e., their capacity limit, horizontal dashed line). Within a single-capacity framework (Figure 4b), all tasks (T1, T2, T3, etc.) draw upon a single generic pool of resources. Thus, in the T1 + T2 example, the dual-task cost can be attributed entirely to the lack of resources devoted to processing T2 alone. However, in the T1 + T3 example, the performance of T1 in this dual-task setting dropped along with T3 (refer back to Figure 4a, T1 + T3). On the face of it, a drop in T1 performance suggests that T1 has become a more challenging task in the presence of T3 and thus requires more processing resources (Figure 4b). Alternatively, the drop in performance may be driven by a reallocation of limited resources to T3 and away from T1. Regardless, it is unclear how the single-capacity model can fully capture the complexity of having a performance drop in both T1 and T3, in violation of Assumption 2.
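The single-capacity logic can be made concrete with a toy calculation. The sketch below is an assumption-laden illustration, not a model taken from the literature reviewed here: resource demands and capacity are in arbitrary units, and the hypothetical `t1_priority` parameter simply states what share of any resource shortfall is absorbed by the secondary task.

```python
def single_pool_shortfall(demand_t1, demand_t2, capacity, t1_priority=1.0):
    """Split the resource shortfall between two tasks sharing one pool.

    All quantities are in arbitrary, hypothetical units. With
    t1_priority = 1.0 the primary task is fully protected and the
    secondary task absorbs the whole shortfall (Assumption 2 holds).
    Lower values shift part of the shortfall onto the primary task.
    Returns (shortfall_t1, shortfall_t2).
    """
    # A cost arises only when total demand exceeds the capacity limit.
    overflow = max(0.0, demand_t1 + demand_t2 - capacity)
    shortfall_t1 = overflow * (1.0 - t1_priority)
    shortfall_t2 = overflow * t1_priority
    return shortfall_t1, shortfall_t2

# Primary task fully prioritized: only the secondary task suffers.
protected = single_pool_shortfall(60, 60, 100, t1_priority=1.0)

# Attention partly reallocated: both tasks suffer.
shared = single_pool_shortfall(60, 60, 100, t1_priority=0.5)

# Total demand within capacity: no cost on either task.
no_cost = single_pool_shortfall(40, 40, 100)
```

In this toy version, the same T1 shortfall could be produced either by raising `demand_t1` or by lowering `t1_priority`, which mirrors the ambiguity described above: a drop in primary-task performance does not by itself indicate which of the two occurred.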

Assumption 3. Increased Primary Task Difficulty Yields Poorer Secondary Task Performance

Fisk et al.'s (1986) third assumption of the dual-task paradigm is that greater primary task difficulty results in poorer secondary task performance. However, it can be difficult to know ahead of time whether a given task design will meet this requirement, and the answer may depend on one's theory of what mental resources are and how they interact. Violations of assumptions may partially explain why dual-task studies of listening effort have been shown to yield small (Picou et al., 2013) or inconsistent effects across studies (Gagné et al., 2017; Ohlenforst, Zekveld, Jansma et al., 2017). For example, Miller et al. (2009) found that the distribution of SOAs in a task can impact the serial versus parallel processing of stimuli: more serial processing occurs in tasks that include more commonly occurring short SOAs and more parallel processing with longer SOAs. This line of work highlights that the specific parameters of the task can influence the mode of processing to maximize efficiency (for a review see Fischer & Plessow, 2015).

Another pattern of results that may require a more flexible, capacity-sharing version of structural models is the crosstalk effect (see Koch et al., 2018 for a deeper discussion). Crosstalk refers to the transfer of information across processing channels, which, under strict bottleneck accounts, should be impossible for response selection processes at a minimum. While one could suggest reconciling strict bottleneck accounts with crosstalk effects by identifying smaller subunits of response selection that require serial processing, a more parsimonious (and falsifiable) perspective is that there is again a continuum from highly “shielded” processing streams, and thus more serial processing, to less shielded and thus more parallel processing. Notably, in the more flexible state, the ability to shift to alternative tasks would also be facilitated, thus suggesting a trade-off between shielding and shifting processing that requires the engagement of adaptive control mechanisms (Goschke, 2013). Similar to the SOA studies mentioned above, participants have been observed to shift between more serial and more parallel processing depending on the task context, such as the proportion of incongruent trials (Fischer et al., 2014).

In dual-task studies, participants are generally instructed to respond to T1 prior to T2 and thus, under strict bottleneck accounts, it is assumed they prepare their responses in that order. However, if given the option, participants do exhibit flexible patterns of scheduling, such as ordering processing in terms of relative task difficulty (Ruiz Fernández et al., 2011). Task context factors do appear to play a role in the extent to which participants are willing and able to follow task prioritization instructions (Levy & Pashler, 2008). For example, greater reliance on task ordering preferences over explicit instruction appears to occur with less experience with dual-task trials (Jansen et al., 2016). Additionally, counter to explicit instructions, participants have been observed to exhibit preferences for prioritizing secondary tasks (map reading) that are more relevant to the primary task (driving), even if that informative secondary task is more mentally demanding than a less informative, less demanding task (Cnossen et al., 2004). Together, these findings suggest that understanding attention allocation in dual-task cost studies requires an understanding of both the structure and function of its underlying processes.

Consideration 3 Summary

We contend that consideration of the assumptions of dual-task cost measures is critical at the study design and interpretation stages. When designing a dual-task study, previous research and pilot testing can provide important insights into the design factors that may impact the extent to which assumptions are likely to be met. As described above, these factors can include the tasks’ susceptibility to becoming automated (i.e., no longer resource demanding) with practice, the distribution of SOAs, the relevance of the secondary to the primary task, and the relative difficulty of the two tasks, which can drive variation in switch costs. Given the variability along all of these dimensions (Gagné et al., 2017), pilot testing may be necessary to supplement existing research, particularly if testing is to occur in a previously untested population (e.g., different age groups than reported in the literature).

When writing up dual-task results, we recommend making explicit what the dual-task cost measure assumptions are and how the reader will know that they have been met in the current study. This may include discussions of piloting data and/or statistical tests assessing changes in secondary task performance across trials within the study as well as primary task performance in the presence or absence of the secondary task. However, it is also important to be aware that these manipulation checks may not be fully sensitive to small violations, such as subtle shifts in attention that do not rise to the level of negatively impacting performance.
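One possible form for such a manipulation check is sketched below. It is offered only as an illustration: the choice of an exact paired sign-flip (permutation) test is our assumption rather than a method drawn from the studies cited here, and the function name and per-participant scores are hypothetical.

```python
from itertools import product
from statistics import mean

def paired_sign_flip_p(single, dual):
    """Exact two-sided sign-flip permutation test on paired differences.

    single, dual: per-participant primary-task scores in the single-task
    and dual-task conditions (same participants, same order). Enumerates
    all 2**n sign patterns, so it is only practical for small samples.
    """
    diffs = [s - d for s, d in zip(single, dual)]
    observed = abs(mean(diffs))
    patterns = list(product((1, -1), repeat=len(diffs)))
    # Count sign patterns whose mean difference is at least as extreme
    # as the observed one (small tolerance guards against rounding).
    extreme = sum(
        1 for signs in patterns
        if abs(mean([sg * d for sg, d in zip(signs, diffs)])) >= observed - 1e-12
    )
    return extreme / len(patterns)

# Hypothetical percent-correct scores on the primary task for six participants.
t1_single = [90, 85, 95, 80, 90, 88]
t1_dual = [84, 80, 93, 71, 86, 85]

p_value = paired_sign_flip_p(t1_single, t1_dual)
```

A small p-value here would suggest that primary-task performance changed between single- and dual-task conditions. A large one should be read cautiously because, as noted above, such checks may not be sensitive to small violations.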

If dual-task assumptions have not been met, authors should detail why this is a problem for interpretation or seek out alternative analyses that may account for trade-offs in primary versus secondary task attention allocation. There is some recent indication that analyzing all trials, even when participants err on the primary task, provides more robust assessments of listening effort than correct-only analyses due to the increase in number of trials that can be included in analyses (Huang et al., 2023). Multivariate analyses may be able to account for the relative shift in performance across trials. However, this approach may be less well suited to providing information at the level of the individual participant, which may be particularly crucial for developing clinical measures of effort.

Consideration 4: Promote the Potential for Replicability

Varied design choices (Gagné et al., 2017) are thought to have driven many of the inconsistencies observed across dual-task studies of listening effort. As mentioned previously, it has been observed that, across studies, hearing loss is approximately equally likely to result in greater listening effort or in no difference in listening effort (Ohlenforst, Zekveld, Jansma et al., 2017). Without the ability to explain why and how those methodological choices or participant variability impacted the results, however, each subsequent dual-task cost study of effort merely serves to slightly tip the scales in favor of one conclusion or the other.

A final recommendation for promoting consensus across dual-task studies of effort is to ensure reporting supports the replicability of study results. Enabling others to replicate or reproduce study results requires that authors be explicit in the details of the dual-task methodology. This involves going beyond general descriptions of the primary and secondary tasks to include the exact wording of the instructions (including task prioritization), the nature of the visual, auditory, and/or other stimuli (sharing stimuli whenever possible), and their relative timing (sharing experiment presentation code or executables whenever possible). Even better would be sharing experimental paradigms and stimuli on public repositories (e.g., the Open Science Framework, osf.io) to facilitate other researchers’ ability to repeat or extend the study in a new group of participants. Strand and Brown (2023) note that there are many critical details, such as distinct features of specific talkers, which cannot be conveyed in text but which may greatly impact study replicability. For an in-depth discussion and practical considerations for improving reproducibility in cognitive hearing science research, see Heinrich and Knight (2020).

Moving Forward by Looking Backward

Given all of the new dual-task findings, methods, and analytical tools at our disposal, a reasonable researcher might ask: Why look backwards and why now? Our recommended considerations represent big asks, and thus it is especially important to clarify why we think that moving forward as a field hinges on our ability to look backwards.

More than 40 years after some of the pioneering dual-task studies of speech recognition effort (Downs, 1982), we recognize there are still major gaps in our understanding of what listening effort is and, likely as a result, how best to measure it, particularly with the dual-task paradigm. In their review of age-related changes in listening effort, Gagné et al. (2017) noted that inconsistencies in the results across 29 studies were likely due in large part to methodological differences across them. Several of their recommendations suggest that researchers may benefit from peering further back into the literature as we consider what future work would benefit the field. Regarding the ability of more recent models of effort to explain inconsistencies related to secondary task selection, they said: “… to our knowledge, neither the FUEL framework nor any other model of cognitive resources (cf. Wingfield, 2016) provides information that would theoretically motivate the choice of a specific type of secondary task” (Gagné et al., 2017, p. 22). In relation to inconsistencies resulting from variation in the temporal ordering of tasks, they said: “…both sequential and concurrent dual-task paradigms can be used to measure listening effort. At the present time, it is not known if these two approaches measure the same dimensions of listening effort” (Gagné et al., 2017, p. 22). In this vein, our goal here was to expand upon those conclusions by pointing dual-task researchers to the existing theoretical work that could help them as they make these and other methodological decisions.

Our intention, importantly, is not to discount past studies nor any model of effort. Each has provided critical insights into the factors that are likely to impact our understanding of listening effort. Furthermore, we note that we have not reviewed the full spectrum of relevant models of attention (e.g., functional view of attention, Neumann, 1987), cognitive models of language processing (Ease of Language Understanding, ELU, Rönnberg et al., 2013), nor models of listening engagement (MoLE, Herrmann & Johnsrude, 2020). Nor have we reviewed the full range of tasks that have been used to probe such models, many of which include sequential multitasking or other features similar to dual-task paradigms, such as hybrid-PRP (e.g., Jolicœur & Dell’Acqua, 1998), auditory attention switching (e.g., McCloy et al., 2017, 2018), bilingual language switching (for a review: Declerck, Koch & Philipp, 2015), or attentional blink (AB) paradigms (for a review: Dux & Marois, 2009). For example, AB studies observing declines in target detection following another target with short SOAs have been used to probe listening challenge (Gallun et al., 2012) and specifically listening effort (Akça et al., 2023).

Rather than provide a comprehensive review of relevant models and methods for understanding the allocation of attention to speech, our goal has been to remind the field of the many nuances in historical models that have served as the basis for research on listening effort (most notably, Kahneman, 1973). Likewise, see Wingfield (2016) for his revisiting of the history of models of working memory to better understand its purported role in cognitive models of language processing (i.e., ELU; Rönnberg et al., 2013). Such historical perspectives allow us to recognize not only that the details in these models matter theoretically, but also that they provide concrete considerations for us to move forward in studies of listening effort.

An example of such nuances can be seen in FUEL's decision to change Kahneman's term “‘interfere’ to ‘influence’ because current thinking is that fatigue and (dis)pleasure can influence the evaluation of performance without being the results of performance” (Pichora-Fuller et al., 2016, Figure 1 caption, p. 16S). The point is well taken because, as highlighted in pupillometry studies that have partialled out the effects of performance (for a review see Zekveld et al., 2018), there are many factors that can alter how effort is allocated without resulting in a measurable impact on performance. This subtle change, however, could potentially obscure the large “dual-task interference” literature on which Kahneman's model was based. Re-reading Kahneman (1973) allows us to better appreciate FUEL's incredibly important contributions to the field's conceptualization of listening effort while rediscovering the foundation on which FUEL was built.

Though this paper has focused on a limited set of models of attention, we hope it sparks dual-task researchers to continue to look backwards to other approaches to studying attention. In particular, the signal detection theoretic approach to modeling attention is rarely discussed in the listening effort literature but is directly applicable to the dual-task experiments discussed in this note. Sperling (1984) was not the first to suggest that attention as an explanatory concept should be replaced by the concept of the allocation of processing resources. Reminiscent of the challenges with defining and measuring listening effort, the argument is that doing so replaces an ill-defined theoretical concept with a specific operationalization of performance which can then be analyzed mathematically.