Abstract

While listening, we commonly participate in simultaneous activities. For instance, at receptions people often stand while engaging in conversation. It is known that listening and postural control are associated with each other. Previous studies focused on the interplay of listening and postural control when the speech identification task had rather high cognitive control demands. This study aimed to determine whether listening and postural control interact when the speech identification task requires minimal cognitive control, i.e., when words are presented without background noise or a large memory load. This study included 22 young adults, 27 middle-aged adults, and 21 older adults. Participants performed a speech identification task (auditory single task), a postural control task (posture single task) and combined postural control and speech identification tasks (dual task) to assess the effects of multitasking. The difficulty levels of the listening and postural control tasks were manipulated by altering the level of the words (25 or 30 dB SPL) and the mobility of the platform (stable or moving). The sound level was increased for adults with a hearing impairment. In the dual task, listening performance decreased, especially for middle-aged and older adults, while postural control improved. These results suggest that even when cognitive control demands for listening are minimal, interaction with postural control occurs. Correlational analysis revealed that hearing loss was a better predictor than age of speech identification and postural control.

Introduction

During listening, we typically engage in concurrent activities. For example, at a reception people often stand while listening and talking. Previous research showed that listening and postural control interact when performed simultaneously (dual task). Adults had worse postural control when listening in more adverse situations (Carr et al., 2020; Helfer, Freyman, van Emmerik, et al., 2020) and worse performance on a speech identification task when the postural control task became more difficult (Helfer, Freyman, van Emmerik, et al., 2020). The present study aimed to determine whether interaction between listening and postural control also occurs when the cognitive demands of listening are minimized and whether any pattern of interaction differs between age groups.

When listening, sounds are detected and identified. Identification of words involves retrieval from the mental lexicon in long-term memory. According to the Ease of Language Understanding model (Rönnberg, 2003; Rönnberg et al., 2008, 2019), these processes are mostly automatic. The model is based on the idea that cognitive control uses contextual information in cases of initial mismatches between the perceived word and long-term memory representations. Auditory information is temporarily stored in working memory, where it can be manipulated through different cognitive control processes such as the selection of relevant information (selective attention) while suppressing distracting information (inhibition), flexibly switching between multiple task goals (task switching), and monitoring current actions and the consequences of these actions (monitoring) (Gazzaniga et al., 2014). Cognitive control processes are assumed not to be specific to the auditory modality. In the case of auditory stimuli, cognitive control functions could comprise the selection of desired sounds, inhibiting unwanted sounds (for a review, see Dryden et al., 2017), switching between information streams, and updating the constantly incoming auditory stream of information in working memory. It is assumed that the more complex the auditory signal is (e.g., background noise, different perceived sound locations…), the more attentional resources are needed to successfully perform the speech identification task (Akeroyd, 2008; Dryden et al., 2017; Heinrich et al., 2015; Herrmann & Johnsrude, 2020; Meister, 2017).

Age-related declines in listening result from both peripheral and central changes in the auditory system. Auditory detection thresholds, particularly high-frequency thresholds, tend to increase with age. In addition, auditory processing is altered by reduced discrimination of the spectral and temporal properties of sounds (Füllgrabe et al., 2015; Humes et al., 2012, 2013; Martin & Jerger, 2005; Rigters et al., 2019). A review by Shende and Mudar (2023) found consistent evidence that adults with age-related hearing loss (ARHL) show long-term changes in switching abilities (the ability to switch between task goals or mental sets of information). The same review states that results regarding inhibitory control and working memory updating are inconclusive. According to some theories (Li & Lindenberger, 2002; Reuter-Lorenz & Park, 2014), adults may compensate for a degraded speech input (either from hearing loss or noise) by using information processing capacity that they might otherwise deploy for other tasks. Also, compared to young adults, older adults expend more listening effort (Gosselin & Gagné, 2011), which encompasses the attention and cognitive resources required to understand speech.

While listening, we often move around or maintain our upright stance. Postural control reflects our ability to maintain balance and orient our body in space (Horak, 2006). Postural control depends on the multisensory integration of visual, proprioceptive, and vestibular inputs (Peterka, 2002). When standing in a stable, upright stance, we mainly rely on sensory input from the proprioceptive system (Henry & Baudry, 2019). In situations of conflicting sensory information, the reliable sensory modality needs to be monitored, and less weight should be assigned to potentially unreliable inputs (Magnard et al., 2017; Redfern et al., 2004). For example, if the ground is unstable, the proprioceptive system becomes less reliable and must be partially ignored. Age-related declines in postural stability can occur due to degradations in sensory systems and the neuro-muscular system (Hunter et al., 2016; Shaffer & Harrison, 2007). In addition, older adults show a diminished ability to select relevant information to integrate multisensory cues to maintain balance (Teasdale & Simoneau, 2001). Some researchers have suggested that postural control in older adults becomes less automatic and more dependent on higher-level cognitive processing (Boisgontier et al., 2011; Heuninckx et al., 2005).

Postural control is typically measured using center-of-pressure (CoP) measures (Figure 1(a) and (b)), obtained using a force platform, reflecting the body's effort to keep the center of mass of the body within the base of support to maintain stability. CoP measures provide information about the amount of postural sway within a certain time frame. For example, path length captures the trajectory of the body's CoP during a specific period of time (red lines in Figure 1(b)). These summary statistics do not capture the contribution of different control mechanisms (Van Humbeeck et al., 2023). Stabilogram diffusion analysis (SDA) yields more insights into the component processes of postural control. SDA is a mathematical modeling technique used to estimate the balance control mechanisms that are active at different time scales (Collins & De Luca, 1993) by characterizing postural control using four parameters: critical time interval, critical displacement, and short-term and long-term coefficients. Figure 1(c) shows an SDA plot with the four parameters. The short- and long-term coefficients, respectively, reflect the amount of displacement over shorter (∼0–1 s) and longer time intervals (∼>1 s). Short-term processes reflect continuous movements in a certain direction. They are linked to biomechanical factors like the passive stiffness of muscles, ligaments, and joints, co-contraction of muscles, reflex responses and pre-planned strategies to anticipate movements (Van Humbeeck et al., 2023). It is assumed that long-term processes reflect feedback-based movement, where movements away from the center of stability are slowed down and reversed. Sensory feedback is used to make error corrections to maintain balance. Critical time reflects the delay until long-term processes kick in, while the critical displacement reflects the amount of displacement from the center of stability that is allowed before corrective movements by long-term processes occur.

(A) Schematic illustration of how the center of pressure is measured on a force plate. (B) The center of pressure trajectory measured when feet are shoulder width apart. Path length is shown in red. The blue dot reflects the center of pressure. The anteroposterior (AP) and mediolateral (ML) directions are shown in the figure. (C) Graphical example of SDA. Red dotted lines represent the slope of the short- and long-term postural control processes. The blue point represents the critical time point and the critical displacement at the time of transition between short- and long-term postural control processes (adapted from Van Humbeeck et al., 2023).
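To make the four SDA parameters concrete, the following is a minimal sketch in Python of how they could be derived from a one-dimensional CoP trace (AP or ML). It is an illustration, not the pipeline used in this study: the function names, the maximum time interval, and the two fitting windows are assumptions chosen for the example. The sketch computes the mean squared displacement for each time interval, fits one line to a short-interval region and one to a long-interval region, and takes the intersection of the two lines as the critical point. The diffusion coefficients follow the one-dimensional convention in which mean squared displacement equals 2DΔt.

```python
import numpy as np

def stabilogram_diffusion(cop, fs=100.0, max_lag_s=10.0):
    """Mean squared CoP displacement for each time interval (1-D trace)."""
    cop = np.asarray(cop, dtype=float)
    max_lag = int(round(max_lag_s * fs))
    if max_lag >= cop.size:
        raise ValueError("trace is too short for the requested maximum interval")
    lags = np.arange(1, max_lag + 1)
    # Average the squared displacement over all sample pairs separated by `lag`.
    msd = np.array([np.mean((cop[lag:] - cop[:-lag]) ** 2) for lag in lags])
    return lags / fs, msd

def sda_parameters(dt, msd, short_window=(0.0, 0.5), long_window=(2.0, 10.0)):
    """Fit short- and long-term lines and locate their intersection.

    The fitting windows (in seconds) are assumptions for this sketch.
    """
    short = (dt > short_window[0]) & (dt <= short_window[1])
    long_ = (dt >= long_window[0]) & (dt <= long_window[1])
    slope_s, icpt_s = np.polyfit(dt[short], msd[short], 1)
    slope_l, icpt_l = np.polyfit(dt[long_], msd[long_], 1)
    # Intersection of the two fitted lines gives the critical point.
    critical_time = (icpt_l - icpt_s) / (slope_s - slope_l)
    critical_displacement = slope_s * critical_time + icpt_s
    return {
        "short_term_coefficient": slope_s / 2.0,  # D from <dx^2> = 2 * D * dt
        "long_term_coefficient": slope_l / 2.0,
        "critical_time": critical_time,
        "critical_displacement": critical_displacement,
    }
```

In use, `stabilogram_diffusion` would be applied separately to the AP and ML traces of a trial, and its output passed to `sda_parameters`. Published SDA implementations differ in how the fitting regions are selected, so the windows above should be treated as placeholders.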

Different theoretical models have been proposed to explain why simultaneous listening and standing interact. According to the general resource model, individuals possess limited cognitive resources that must be distributed across different tasks simultaneously (Crossley & Hiscock, 1992; Kahneman, 1973; Navon & Gopher, 1979). The limited capacity of resources can lead to competition between tasks for resources. The more resources two tasks share, the more competition occurs (Wickens, 2002). Other researchers proposed that attentional resources must be allocated properly to perform two tasks simultaneously (Koechlin & Summerfield, 2007; Wickens, 2021). In contrast, bottleneck theories assume a specific processing limitation, implying that concurrent tasks must be processed sequentially (Pashler et al., 1994). According to these models, the bottleneck occurs either at a central processing stage or at the peripheral level, meaning that the brain struggles to handle multiple inputs or outputs at a peripheral level simultaneously or tasks cannot be processed in parallel at a central level. More recent resource allocation models (Dux et al., 2009; Koechlin & Summerfield, 2007; Monsell, 2003) are also based on the assumption that cognitive control is crucial for effectively performing two or more concurrent tasks. The amount of cognitive control that is needed is adaptively altered based on the demands of a task. Wickens (2021) theorizes that in a multitasking context the decision to focus either on one or both tasks is based on the salience of the tasks, the inherent interest of the tasks, the priority of the tasks and the difficulty or resource demands of the tasks. In short, the model of Wickens is based on the idea that the decision as to which task will be focused on is based on which task (or both tasks) is more attractive/necessary to be switched to or continued with.

Previous research indicated that postural control and listening (and responding) interact when performed simultaneously, leading to worse postural control (Carr et al., 2020; Helfer, Freyman, van Emmerik, et al., 2020) or worse performance on the speech identification task (Helfer, Freyman, van Emmerik, et al., 2020). These studies all used difficult listening situations where cognitive processing is presumably necessary to perform the task, for example, inhibition of a competing talker (Helfer et al., 2010; Napoli et al., 2022) or background noise (Helfer, van Emmerik et al., 2020), and (working) memory (Bruce et al., 2019; Carr et al., 2020; Polskaia & Lajoie, 2016). Dual-task research has also been used to assess listening effort (for a review, see Gagné et al., 2017), where more postural sway indicates that listeners need more cognitive resources to perform the other task (Helfer, Freyman, van Emmerik et al., 2020; Helfer, van Emmerik et al., 2020).

The first goal of this study was to assess whether interaction between listening and postural control occurs when cognitive control demands in the speech-identification task are minimized. To this end, participants performed a speech-identification task and a postural control task separately (single task) and concurrently (dual task). For the speech identification task, short words were presented in silence (no inhibition) and repeated immediately (little memory component) at each of two sound levels. We hypothesized that at the lower level, more cognitive resources would be necessary. The postural control task required participants to stand as still as possible on a platform. The difficulty of the postural control task was manipulated using a stable or moving platform. When the platform was moving, proprioceptive information became less reliable. We theorized that adults must re-weight the use of this unreliable information while integrating information from the remaining reliable sensory modalities. This manipulation assesses whether a more difficult postural control task shifts attention from the speech-identification task to the postural control task. We expected more sway in the posture task when performed concurrently with the speech identification task. However, if adults find the speech identification task too difficult, they may pay more attention to the posture task. If so, the dual-task costs will be smaller for postural control and higher for the speech-identification task, especially in the more difficult listening condition, and for older adults.

SDA was used to distinguish component processes of postural control between anteroposterior (front-back; AP) and mediolateral (left-right; ML) directions, since older adults show more sway (worse postural control) in the ML direction than young adults (Winter et al., 1996). From a general resource perspective, we hypothesized that older adults have a smaller pool of cognitive resources available, resulting in larger dual-task decrements (in either listening or postural control). In addition, previous research has shown that a concurrent postural control task can be used to measure listening effort. We hypothesized that expending more listening effort, which is needed when the speech identification task becomes more difficult, increases postural sway.

This study's second goal was to determine the relationship among age, high-frequency hearing loss, listening performance, and postural control in single- and dual-task conditions. Previous research has shown that postural control and listening interact, and adults with a hearing impairment show worse postural control (for a review see Foster et al., 2022). Given this, we expected a correlation between hearing abilities and postural control. By controlling for age and hearing loss, we aimed to gain insight into the associations between age, hearing loss and performance on the speech identification and postural control tasks.

Methods

Participants

This study included 22 young adults (18–30 years old), 27 middle-aged adults (46–64 years old), and 21 older adults (65–84 years old). Participants had hearing thresholds that were evenly spread around the 50th percentile of the ISO 7029 (Figure 2) (ISO, 2017). Older adults had worse hearing thresholds than young adults due to age-related hearing loss. Hearing thresholds were quantified by a pure-tone average (PTA, the average of thresholds at 0.5, 1 and 2 kHz) and a high pure-tone average (hPTA, the average of thresholds at 1, 2 and 4 kHz). Hearing thresholds were measured using a personal computer, Sennheiser HDA 200 headphones and a Focusrite Scarlett 2i2 or RME Fireface UC soundcard. Pure tones were calibrated in dB SPL with the Brüel and Kjær sound level meter type 2260 and artificial ear type 4153. Sound levels were converted to dB HL based on the ISO (389:2004) norms.
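The two summary measures defined above are simple means over fixed audiometric frequencies. A minimal Python sketch, using a hypothetical single-ear audiogram (the threshold values and the function name are invented for illustration, and the text does not state how the two ears were combined), could look as follows.

```python
# Frequencies (Hz) entering each average, as defined in the text.
PTA_FREQUENCIES_HZ = (500, 1000, 2000)
HPTA_FREQUENCIES_HZ = (1000, 2000, 4000)

def pure_tone_average(thresholds_db_hl, frequencies_hz):
    """Mean hearing threshold (dB HL) over the given frequencies."""
    values = [thresholds_db_hl[f] for f in frequencies_hz]
    return sum(values) / len(values)

# Hypothetical audiogram for one ear: frequency (Hz) -> threshold (dB HL).
audiogram = {500: 10.0, 1000: 15.0, 2000: 20.0, 4000: 40.0}

pta = pure_tone_average(audiogram, PTA_FREQUENCIES_HZ)    # 15.0 dB HL
hpta = pure_tone_average(audiogram, HPTA_FREQUENCIES_HZ)  # 25.0 dB HL
```

The example shows why hPTA is the more sensitive index for age-related hearing loss: the elevated 4 kHz threshold raises hPTA but leaves PTA unchanged.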

Mean hearing thresholds for each group. Error bars depict the standard deviation across participants. Blue crosses and red circles represent left and right ear hearing thresholds.

Participants were screened to rule out otologic, vestibular, motor, or neurological problems. All participants were native Dutch speakers, and no participants wore hearing aids. Additionally, they were screened for indications of cognitive disorder using the CODEX (Ziso & Larner, 2019). Two participants did not pass cognitive screening and were excluded from further analyses. Demographics of all participants included in the study are given in Table 1. All participants signed an informed consent form before participation and received compensation of four course credits (for students) or eight euros per hour.

Demographics of Participants.

Procedure

Participants performed a speech identification task and a postural control task separately (single task) and in combination (dual task). We first describe the single-task procedures for each task. Afterwards we describe dual-task procedures and the general procedure.

In the auditory task, participants were asked to repeat monosyllabic Lilliput words while seated. Lilliput words are semantically meaningful Dutch consonant-vowel-consonant (CVC) words known by most children aged 4 years or more (van Wieringen & Wouters, 2022). Each Lilliput word has the same speech reception threshold (SRT) for normal-hearing young adults. The SRT is defined as the level at which 50% of the words are correctly identified. Words were presented in silence at 25 dB SPL and 30 dB SPL, corresponding to 56% correct and 72% correct, respectively, on average for normal-hearing adults. Each trial consisted of five words. Participants were asked to repeat all phonemes of each word (e.g., they say /t/ if they only heard /t/ of hit) immediately after the presentation of each word. Each phoneme was scored separately, resulting in a maximum score of 3 for each word and 15 for each trial. Percentage correct scores were calculated for each trial. Between the five words in each trial, a random interval of silence (4000–6000 ms) was inserted to prevent habituation to a repetitive pace of stimulus presentation. Participants were asked to respond as soon as each word was presented. Trials within each condition were phonetically balanced. The words were presented binaurally via circumaural Peltor H7A headphones with a DD65 Radio-Ear transducer via the APEX software (Francart et al., 2008). Adults with elevated hearing thresholds (>20 dB HL at 500, 1000, 2000 or 4000 Hz) were provided with linear frequency-specific amplification (NAL-RP; Byrne et al., 2001) based on their pure-tone audiogram. Previous research has shown that fast compression and working memory performance are related (Souza et al., 2015). Given this, we opted for linear amplification in order not to influence working memory demands (Souza et al., 2015).
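The phoneme scoring rule described above can be illustrated with a short Python sketch. The function name and the example responses are hypothetical; only the scoring rule (three phonemes per word, five words per trial, percentage correct per trial) comes from the text.

```python
def score_trial(phonemes_correct_per_word, phonemes_per_word=3):
    """Percentage of phonemes repeated correctly in one trial.

    `phonemes_correct_per_word` holds one count per presented word,
    each between 0 and `phonemes_per_word`.
    """
    if any(not 0 <= c <= phonemes_per_word for c in phonemes_correct_per_word):
        raise ValueError("each word score must lie between 0 and phonemes_per_word")
    max_score = phonemes_per_word * len(phonemes_correct_per_word)
    return 100.0 * sum(phonemes_correct_per_word) / max_score

# Hypothetical trial of five CVC words: 12 of 15 phonemes correct.
trial_scores = [3, 2, 3, 1, 3]
percent_correct = score_trial(trial_scores)  # 80.0
```

Scoring at the phoneme level gives partial credit for words that were only partly identified, which yields a finer-grained measure than whole-word scoring at these low presentation levels.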

For the posture task, participants stood on a 46 by 46 cm platform, part of the NeuroCom Balance Master (NeuroCom International, Inc., Clackamas, OR, USA). The platform measures CoP movements with a sampling frequency of 100 Hz. Data were collected with the feet approximately shoulder width apart. Participants removed their shoes before testing commenced. They were asked to maintain a stable posture while centering their gaze on a fixation cross. There were two conditions: a stable condition where the platform remained fixed throughout the trial and a sway-referenced condition where the platform rotated in the sagittal plane based on the participant's movement (i.e., the platform moved forward when the CoP of the participant shifted forward). The movements of the platform are illustrated in Figure 3. Participants were asked to look at the screen and repeat the word “boom” (which means tree in Dutch) each time an image of a tree appeared on the monitor. This was done to control for the effects of talking (and motor planning) in the dual task. The tree was presented five times in each trial, equaling the number of times a response was required in the dual task. Outcome measures of the postural control task include path length (the length of the CoP trajectory) and SDA parameters (short-term processes, long-term processes, critical time interval and critical displacement) in AP and ML directions.

Illustrations of the movements of the platform. On the left, the platform is stable. The right shows the moving platform. The figures below illustrate the effect of platform movements on muscle proprioception.
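Path length, the first posture outcome measure named above, is the summed distance between successive CoP samples. The following Python sketch shows one way to compute it from AP and ML coordinate series; the function name is hypothetical, and any filtering or preprocessing applied by the force platform software is not represented.

```python
import numpy as np

def path_length(ap, ml):
    """Total CoP trajectory length, in the units of the input coordinates."""
    ap = np.asarray(ap, dtype=float)
    ml = np.asarray(ml, dtype=float)
    if ap.shape != ml.shape:
        raise ValueError("AP and ML series must have the same length")
    # Euclidean distance between each pair of consecutive samples.
    steps = np.hypot(np.diff(ap), np.diff(ml))
    return float(steps.sum())
```

Because the measure accumulates over samples, it depends on trial duration and sampling frequency (100 Hz here), so values are only comparable between trials recorded with the same settings.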

During the dual task, participants performed the speech-identification task while standing on the platform. A fixation cross was visible on the screen. There were four dual-task conditions: 30 dB SPL on a stable platform (easy-easy), 30 dB SPL on a moving platform (easy-difficult), 25 dB SPL on a stable platform (difficult-easy), 25 dB SPL on a moving platform (difficult-difficult).

At the beginning of the experiment, participants were familiarized with the auditory and postural tasks and received feedback. Feedback in the speech identification task included percentage correct. For the postural control task, CoP trajectories were shown on the monitor. Conditions were counterbalanced across participants. Single- and dual-task manipulations were implemented as an ABBA sequence in which all participants first performed half of the single-task blocks (A), followed by the dual-task blocks with a break halfway through (B-break-B), and then the second half of the single-task blocks (A). This design was used to balance training, transfer, and fatigue effects. All participants performed 12 single-task trials and 12 dual-task trials. We opted not to emphasize one of the tasks (listening or postural control) since adults in real-life multitasking situations must determine how to prioritize various tasks (Fraser & Bherer, 2013). Not instructing participants to focus on one task allowed us to investigate whether participants spontaneously prioritize one task over another.

Statistical Analysis

Statistical analyses were performed using R (R Core Team, 2017). Percent correct scores for the speech identification task were transformed into Rationalized Arcsine Units (RAU) (Studebaker, 1985). Postural control measures were log-transformed to obtain normality. These served as input for linear mixed models (LMM). Different LMMs analyzed six dependent variables: RAU scores for the speech identification task, path length for postural control and the four SDA parameters. Fixed effects included age group, task context (single vs. dual task) and difficulty level. Planned contrasts (Schad et al., 2020) were defined to compare young adults with middle-aged adults and to compare middle-aged adults with older adults. Task context effects were a priori defined as the difference between single task and dual task and the difference within the dual task. The former compares single-task performance with the mean performance of all dual-task conditions, while the latter compares dual-task performance when the concurrent task was easy with performance when it was difficult. We also investigated nested age factors (continuous variables centered within each age group) to gain insights into age effects within an age group. Since no systematic effects of nested age were found, these nested age effects are not mentioned in the results section but are presented in Appendix A. A random factor of subject was added to each LMM to account for intrasubject variability. LMMs were followed by post-hoc t-tests with Bonferroni correction where needed. Three-way interaction effects were removed from the model whenever they were not significant. Correlation analysis with Pearson's r (with Bonferroni correction where required) was conducted to identify associations between age, hearing loss (measured by hPTA), and task performance.
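For readers unfamiliar with the RAU transform, the sketch below gives the commonly cited form of Studebaker's (1985) rationalized arcsine transform in Python. The analyses in this study were run in R, and the text does not specify implementation details, so this is an illustration of the formula rather than the study's code; the function name is hypothetical.

```python
import math

def rau(correct, total):
    """Rationalized arcsine units for `correct` items out of `total`."""
    if not 0 <= correct <= total:
        raise ValueError("correct must lie between 0 and total")
    # Arcsine transform (in radians) that stabilizes variance near 0% and 100%.
    theta = (math.asin(math.sqrt(correct / (total + 1)))
             + math.asin(math.sqrt((correct + 1) / (total + 1))))
    # Linear rescaling so that mid-range values resemble percent correct.
    return (146.0 / math.pi) * theta - 23.0
```

With 15 phonemes per trial, `rau(correct, 15)` maps a raw phoneme count to RAU. Scores near the floor or ceiling are stretched relative to percent correct, so the transformed values can fall below 0 or above 100.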

Results

Listening

Figure 4 shows the results of the speech-identification task. Middle-aged adults had worse performance than young adults (β = −18.6, SE = 3.5, t = 5.30, p < .001), and older adults showed worse performance than middle-aged adults (β = −13.7, SE = 3.7, t = 3.71, p < .001). As expected, the speech-identification task was more difficult at the lower level (β = 28.6, SE = 0.80, t = 35.71, p < .001), especially for older adults (β = 4.4, SE = 1.06, t = 4.16, p < .001). The difference between young and middle-aged adults was larger at 25 dB SPL (ΔM = −28.4, t(68) = 6.9, p < .001) than at 30 dB SPL (ΔM = −22.4, t(68) = 5.91, p < .001).

Results of the speech-identification task in RAU for the single task (ST), dual task (DT) stable and dual task sway. Error bars depict 2 × the standard error.

Participants performed significantly better on the speech-identification task when seated (single task) than when standing (dual task) (β = −2.9, SE = 0.76, t = 3.86, p < .001). Multitasking effects differed between young and middle-aged adults (β = 5.2, SE = 1.76, t = 2.93, p < .001). The difference between young and middle-aged adults was larger for the dual-task condition (ΔM = 20.4, t(49) = 5.89, p < .001) than for the single-task condition (ΔM = 15.9, t(49) = 5.29, p < .001).

We had expected that the more difficult concurrent postural control task would lead to larger performance decrements in the auditory domain. This was not the case, as dual-task listening performance was similar for the stable and moving platforms (β = −1.17, SE = 1.08, t = 1.08, p = .28). There was an interaction between the difficulty of the speech-identification task and the difficulty of the concurrent postural control task (β = 6.27, SE = 2.12, t = 2.96, p < .001): unexpectedly, the RAU difference between the 25 dB SPL and 30 dB SPL conditions was larger for the stable platform (ΔM = −30.9, t(138) = 7.21, p < .001) than for the moving platform (ΔM = −26.2, t(137) = 6.38, p < .001).

Postural Control

Figures 5 and 6 show the results of the postural control task for path length and SDA. As expected, path length was significantly larger when the platform was moving than when it was stable (β = 0.2, SE = 0.00, t = 50.91, p < .001). Middle-aged adults had a shorter path length (better postural control) than older adults (β = 0.1, SE = 0.04, t = 2.94, p < .001). A significant interaction between age group and platform mobility indicated that platform mobility influenced path length more for older age groups (young vs. middle-aged: β = 0.05, SE = 0.01, t = 5.34, p < .001; middle-aged vs. older: β = 0.02, SE = 0.01, t = 2.00, p = .05). Post-hoc tests showed that the difference between the stable and the moving platform was larger for middle-aged (ΔM = −0.24, t(52) = 9.15, p < .001) than for young adults (ΔM = −0.18, t(46) = 6.38, p < .001). Analysis of postural control component processes showed that in the AP direction, middle-aged adults showed better short-term postural control processes than older adults (β = 0.14, SE = 0.07, t = 2.08, p = .04). In the ML direction, middle-aged adults showed better short-term processes (β = 0.19, SE = 0.08, t = 2.21, p = .03), a shorter critical time point (β = 0.08, SE = 0.04, t = 2.36, p = .02), a smaller critical displacement (β = 0.28, SE = 0.10, t = 2.83, p = .01) and more error corrections (β = 0.15, SE = 0.07, t = 2.25, p = .03) than older adults.

Performance on postural control, measured as path length (mm), in the single task (ST) and in the dual task (DT) at 30 dB SPL and at 25 dB SPL. Error bars depict 2 × the standard error.

Averaged stabilogram diffusion plots for AP (left) and ML (right) CoP sway with fit lines based on parameter estimates for short- and long-term diffusion coefficients. The crossing of lines denotes the critical delay. Error envelope = ±1 SE.

Participants showed less sway (shorter path length) in the dual task than in the single task (β = −0.02, SE = 0.00, t = 4.93, p < .001). Component processes of postural control showed better short-term processes (AP: β = −0.07, SE = 0.02, t = 4.92, p < .001; ML: β = −0.05, SE = 0.02, t = 3.35, p < .001) and a later critical time point in both directions (AP: β = −0.08, SE = 0.01, t = 7.43, p < .001; ML: β = −0.03, SE = 0.01, t = 4.13, p < .001) when performing the dual task.

When performing the dual task, the difficulty of the speech-identification task influenced path length significantly (β = −0.02, SE = 0.00, t = 4.93, p < .001), with shorter path length at 25 dB SPL. Analysis of component processes showed that short-term processes were better (β = −0.05, SE = 0.02, t = 2.40, p = .02), and the critical displacement smaller (β = −0.05, SE = 0.02, t = 2.25, p = .02) in the AP direction when the concurrent speech identification task was more difficult. Middle-aged adults showed worse short-term processes in the AP direction than young adults in the easy listening condition (β = −0.12, SE = 0.05, t = 2.52).

Individual Variability and Dual Task Performance

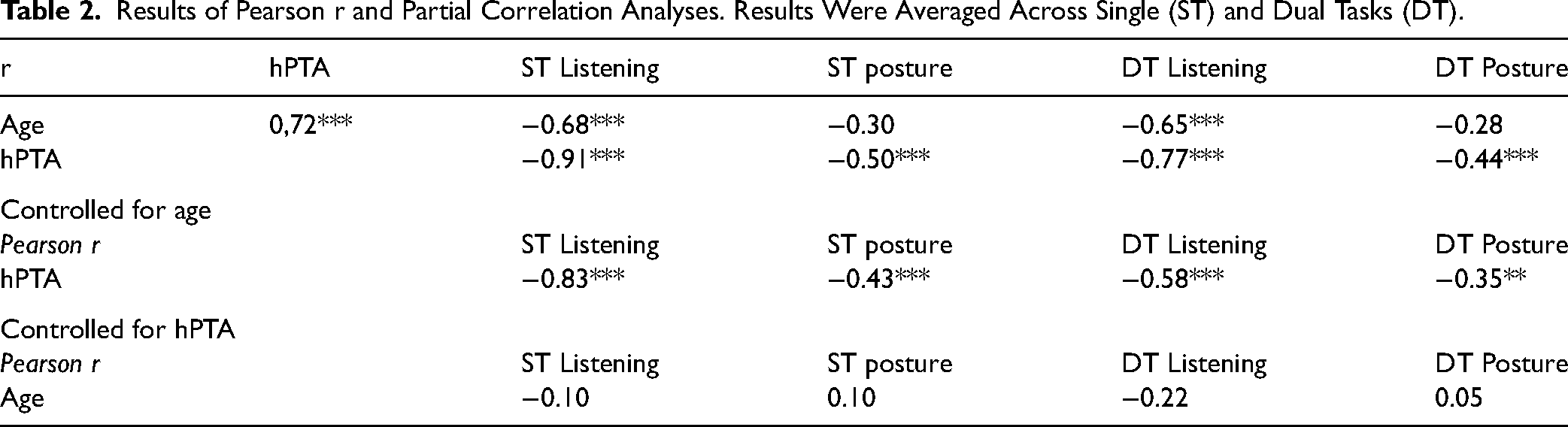

Correlation analysis was conducted to identify associations between age, hearing loss (measured by hPTA), and performance for single-task and dual-task conditions. Single-task and dual-task performance was calculated by averaging across different difficulty levels. Results of these analyses are shown in Table 2 and Figure 7. Age and hPTA were strongly correlated. Age was significantly correlated with listening scores (in single and dual task), and hPTA was correlated with both listening scores and postural control. When controlling for age, hPTA remained correlated with all measures, whereas when controlling for hPTA, age was not significantly associated with listening scores.

Scatter plots of scores for ST listening, ST posture, DT listening and DT posture against (A) age and (B) hearing loss, measured by hPTA (ST = single task, DT = dual task).

Results of Pearson r and Partial Correlation Analyses. Results Were Averaged Across Single (ST) and Dual Tasks (DT).
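The partial correlations reported above ask whether hearing loss still relates to performance once age is held constant, and vice versa. A minimal Python sketch of a first-order partial correlation, computed from the three pairwise Pearson coefficients, is given below. It illustrates the general technique and is not the analysis code used in this study; the function names are hypothetical.

```python
import math
import numpy as np

def pearson_r(x, y):
    """Pearson correlation between two equally long samples."""
    return float(np.corrcoef(x, y)[0, 1])

def partial_r(x, y, z):
    """Correlation between x and y after removing the linear effect of z."""
    r_xy = pearson_r(x, y)
    r_xz = pearson_r(x, z)
    r_yz = pearson_r(y, z)
    return (r_xy - r_xz * r_yz) / math.sqrt((1.0 - r_xz ** 2) * (1.0 - r_yz ** 2))
```

For example, `partial_r(hpta, listening, age)` would give the association between hPTA and listening scores controlling for age, where the three arguments are per-participant arrays. The result equals the correlation between the residuals of the first two variables after each has been regressed on the third.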

Discussion

The experimental manipulations of the difficulties for both the postural control task and the listening task were effective, resulting in poorer performance in the more difficult listening (25 dB SPL) and the more difficult postural control task (moving platform). Older adults performed worse on the listening task than middle-aged adults and middle-aged adults performed worse than young adults. Age effects were greater in the most difficult condition for middle-aged adults than for young adults. For listeners with hearing loss, we partially restored audibility by providing NAL-RP gains. However, the NAL-RP gains are designed for speech at 65 dB SPL, and are most likely not sufficient to fully restore audibility at 25 or 30 dB SPL. In addition, our study did not account for the impact of age-related declines in central auditory processing (Goossens et al., 2016; Merten et al., 2022). The observed age differences in single-task performance may reflect differences in audibility, peripheral and central auditory processing and/or cognitive control.

In line with previous research, we found poorer postural control for older adults than for middle-aged adults, reflected by a larger path length for older adults (Henry & Baudry, 2019; Ruffieux et al., 2015). Analyses of postural control component processes showed worse short-term processes in the AP and ML directions for middle-aged and older adults. In addition, older adults showed a later critical time interval, a larger critical displacement and fewer error corrections than middle-aged adults in the ML direction. These results suggest that older adults likely use less efficient strategies to provide stability, especially in the ML direction. This is consistent with the notion that correcting ML instability is inherently more challenging than correcting AP sway (Winter et al., 1996).

We predicted that listening performance would suffer from concurrent postural control demands and that this effect would be more pronounced for the older age groups. Furthermore, we expected dual-task interaction to be more pronounced in the more difficult postural control condition. These predictions were partially supported by our findings. Overall, listening performance was poorer for the average of the two dual-task conditions than for the single-task condition. It is worth noting that the dual-task decrements in the speech identification task were small (2.93 RAU). The effect was greater for middle-aged than for younger adults. No further dual-task decrements were observed for the older age group relative to middle-aged adults, suggesting that dual tasking affected middle-aged and older adults more than young adults. The dual-task decrements in listening did not change when the postural control task was more demanding. Surprisingly, postural control was better in the dual-task than in the single-task conditions. This pattern was similar across age groups and could not be attributed to trade-offs with listening performance.

Using our SDA approach, we were able to trace the improvements in postural control to reduced short-term processes during dual tasking for all groups. While this finding was unexpected, we did obtain the hypothesized increase in critical delay, indicating that for all groups error correction set in later in dual- than in single-task conditions. A plausible explanation for this finding is that the demands of the concurrent speech-identification task slowed down the multisensory integration processes necessary to inform optimal error correction. Nevertheless, our study showed improved short-term processes, which indicates that the strategy for maintaining postural control was different during the dual task. Our study is not the first to find improved postural control under dual-task conditions (Huxhold et al., 2006; Jehu et al., 2015; Petrigna et al., 2021; Polskaia & Lajoie, 2016).
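The SDA quantities discussed here (short-term processes, critical time interval, critical displacement) can be sketched as follows. The sketch assumes the classic one-dimensional formulation, in which the mean square displacement of the sway trace is computed as a function of the time interval, straight lines are fitted to a short-term and a long-term region, each diffusion coefficient is half the fitted slope, and the critical point is the intersection of the two lines. The function names and the fitting windows are hypothetical choices for illustration and are not the parameters used in the study.

```python
def mean_square_displacement(x, max_lag):
    """Mean square displacement of a 1-D sway trace for lags 1..max_lag (samples)."""
    if max_lag >= len(x):
        raise ValueError("max_lag must be smaller than the number of samples")
    msd = []
    for lag in range(1, max_lag + 1):
        squared = [(x[i + lag] - x[i]) ** 2 for i in range(len(x) - lag)]
        msd.append(sum(squared) / len(squared))
    return msd

def fit_line(ts, ys):
    """Ordinary least-squares line through (ts, ys); returns (slope, intercept)."""
    n = len(ts)
    mean_t, mean_y = sum(ts) / n, sum(ys) / n
    sxx = sum((t - mean_t) ** 2 for t in ts)
    sxy = sum((t - mean_t) * (y - mean_y) for t, y in zip(ts, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_t

def sda_parameters(x, fs, short_lags, long_lags):
    """Diffusion coefficients and critical point of one sway direction.

    x: positions in one direction (AP or ML); fs: sampling rate in Hz.
    short_lags, long_lags: (first, last) lag in samples, inclusive.
    These windows are assumed fit regions, not values from the study.
    """
    max_lag = long_lags[1]
    msd = mean_square_displacement(x, max_lag)
    times = [lag / fs for lag in range(1, max_lag + 1)]
    s_slope, s_icpt = fit_line(times[short_lags[0] - 1:short_lags[1]],
                               msd[short_lags[0] - 1:short_lags[1]])
    l_slope, l_icpt = fit_line(times[long_lags[0] - 1:long_lags[1]],
                               msd[long_lags[0] - 1:long_lags[1]])
    if s_slope == l_slope:
        raise ValueError("parallel fits: no critical point")
    t_crit = (l_icpt - s_icpt) / (s_slope - l_slope)
    return {
        "D_short": s_slope / 2.0,   # short-term diffusion coefficient
        "D_long": l_slope / 2.0,    # long-term diffusion coefficient
        "critical_time": t_crit,    # time interval where the two fits intersect
        "critical_displacement": s_slope * t_crit + s_icpt,
    }
```

In this reading, "reduced short-term processes" corresponds to a smaller short-term diffusion coefficient, and a later onset of error correction corresponds to a larger critical time interval.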

We consider several accounts of these improvements during dual-task performance. The first account is based on the constrained action hypothesis (Vidal et al., 2018) and was put forth by Huxhold and colleagues (2006) to explain their participants’ improved posture performance under dual-task conditions. According to this hypothesis, focusing on minimizing sway decreases postural control efficiency by interfering with (normally) automatic motor control processes. In other words, performing a concurrent (listening) task shifts attention away from postural control, allowing more effective automatic processes to take over (Lacour et al., 2008) and thus improving stability under dual-task conditions. Secondly, our SDA findings suggest that participants may have relied on co-contraction as a strategy to reduce short-term processes during dual tasking. By co-contraction, we mean the simultaneous contraction of muscles working together to provide stability. Co-contraction itself does not result in any movement, but alters the stiffness of the body. For example, when you are standing on a bus and know it is about to brake for a stop, you pre-plan for this perturbation by contracting your muscles. However, in less challenging contexts, co-contraction can result in a lack of flexibility or an increase in sensory thresholds for sway detection. The better short-term processes in our results correspond to less stiffness (Finley et al., 2012; Laughton et al., 2003; Nagai et al., 2011), which can improve stability in the dual task. Lastly, a review by Campos et al. (2018) showed that stationary sound sources can serve as an ‘auditory anchor’ to improve postural stability by providing spatial mapping of sounds. Given that the single-task postural control condition did not involve any auditory stimuli, the auditory stimuli provided in the dual task could have improved postural control.

For the dual task, the difficulty of the speech-identification task influenced path length, with a shorter path length at 25 dB SPL. In addition, adults exhibited a larger difference between performance at 25 and 30 dB SPL in the speech-identification task when they were on a stable platform than when they were on a moving platform. Following resource allocation theories, a larger decline in listening performance would be expected for more challenging conditions, where limited cognitive resources are assumed to constrain performance of at least one of the component tasks. However, the absence of a larger decline in the most challenging condition (i.e., moving platform and 25 dB SPL) renders this explanation less plausible. Additionally, there was no significant improvement in postural control, as measured by path length, in this condition, which would be expected if adults had directed more resources to the postural control task. Component processes of postural control showed better short-term processes and a smaller displacement when the speech-identification task was more difficult, indicating less stiffness and less displacement. Previous studies characterized the dual-task effects in postural control in terms of listening effort, assuming that increased listening effort is associated with more postural sway (Helfer, Freyman, van Emmerik, et al., 2020). Since participants showed slight declines in listening performance but improved postural control in the dual task, these changes are unlikely to reflect increased listening effort. If participants had experienced more listening effort in the more difficult listening condition, more postural sway should have occurred.

Correlation analyses showed that high-frequency hearing loss was correlated with listening performance and postural control for both single- and dual-task conditions. The greater the degree of hearing loss, the greater the postural sway. These results are consistent with previous research (Agmon et al., 2017; Helfer, Freyman, van Emmerik, et al., 2020; Thomas et al., 2018). The observed correlations underscore the relation of high-frequency hearing loss with listening as well as postural sway. Several hypotheses have been proposed regarding the relationship between postural control and high-frequency hearing loss (for an overview see Agmon et al., 2017; Campos et al., 2018). Some of these hypotheses propose that age-related changes in both hearing and vestibular or neural correlates can explain the link. Some authors hypothesize that hearing impairment may contribute to decreased social interaction, which would restrict physical activity and in turn increase the likelihood of falls. It has also been hypothesized that sensory declines elevate cognitive load, making the processing of sensory information more demanding and reducing the resources available for postural control. The present study does not support this last hypothesis, given that the correlation between high-frequency hearing loss and single-task postural control was significant even though no auditory stimuli were presented in that condition. Nevertheless, the recruitment of additional cognitive resources could result in lasting changes, which could lead to permanently worse postural control.

Conclusions

In summary, the results indicate that listening and postural control interact, even when the cognitive control demands of the speech-identification task are minimal. When performing a dual task, there were decrements in performance of the speech-identification task, especially for middle-aged and older adults. However, postural control improved in the dual-task condition. The correlation analyses showed that greater high-frequency hearing loss (hPTA) was associated with poorer listening and postural control in single- and dual-task conditions.

Supplemental Material

sj-docx-1-tia-10.1177_23312165241260621 - Supplemental material for Speech-Identification During Standing as a Multitasking Challenge for Young, Middle-Aged and Older Adults

Supplemental material, sj-docx-1-tia-10.1177_23312165241260621 for Speech-Identification During Standing as a Multitasking Challenge for Young, Middle-Aged and Older Adults by Mira Van Wilderode, Nathan Van Humbeeck, Ralf Krampe and Astrid van Wieringen in Trends in Hearing

Footnotes

Acknowledgment

This work was supported by a C1 grant from KU Leuven (grant no. C14/19/110). We thank our Master's students Yenthe, Eveline and Sibel for their help with data collection.

Author Contributions

M.V.W., N.V.H., R.K., and A.v.W. contributed to the study conception and design. M.V.W. and N.V.H. prepared materials and collected data. M.V.W. performed data analysis and wrote the manuscript. M.V.W., N.V.H., R.K., and A.v.W. provided critical revision and feedback.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

This work was supported by a C1 grant from KU Leuven (grant no. C14/19/110).
