Abstract

This article presents the ongoing conversation about generative AI guidance and policy in higher education. The article examines syllabus policies, including analyzing sentiment, emotion, and common themes in GenAI policies. Findings show that policies should be audience-focused, clearly written, and grounded in strategies to promote ethical AI use in academia and the workforce. Practical tips for policy writing and sample policies are provided.

The world has recently seen a boom in the use of artificial intelligence (AI) tools (Getchell et al., 2022). Since 2022, generative AI (GenAI) has expanded even more rapidly. Schools and instructors are racing to figure out what that means for learning in the classroom. This rapid change raises questions that range from AI itself (e.g., “What can I do with AI?” “What’s the difference between AI and GenAI?” “What does the future of AI look like for work?”) to AI use and classroom policy (e.g., “Should I ban AI?” “What does AI mean for teaching writing?” “How can I teach my students to use AI responsibly?”).

Technological innovation brings disruption. As Wright and Sarker note in Dwivedi et al. (2023), this has happened before in academia (e.g., calculators, email, search engines). While there is a need for critical consideration and debate on the ethical ramifications of AI, students and businesses already use GenAI tools and will continue to use them at work in the future (Getchell et al., 2022). Instructors must prepare students to use AI thoughtfully and responsibly and understand how to address AI within their courses. However, given corporate bans on GenAI use, educators must also prepare students to be effective communicators without using GenAI. Teaching strategies have evolved and will continue to evolve, and faculty need effective policies, instructions, and general guidance for students.

The business communication field uniquely possesses expertise in communicating about workplace communication and communication technology and tools. The field has managed previous technological disruptions, including email, hybrid work, and remote meetings, and considered how those changes influence workplace culture and the expectations and conventions of business communication. Further, the field has engaged in sensemaking by examining how workplace communicators use tools and responsibly incorporate them into increasingly complicated communication channels. With this in mind, business communicators must consider how to communicate policies and guidance about GenAI to promote effective and ethical communication. In her work on policies related to academic promotion, Mouton (2023) argues that “Based on previous business communication research, [business communication scholars] understand how policies are created, who they primarily serve, challenges with interpretations, and their legal and financial ramifications” (p. 6). Given this expertise, we wanted to see how policies address this disruption. GenAI will evolve, and our policies will need to evolve with it.

In this article, we aim to (1) capture a historical moment of reaction to new technological communication tools, (2) reflect on current policies’ effectiveness and language use, and (3) provide a framework for faculty, administrators, and practitioners to consider how to manage the use of new technological tools like GenAI. Rather than a formal research study, this project is a snapshot of the current state of policy language and suggests the next steps for business communication practitioners and educators. To aid policy authorship and further the conversation, we present the ongoing conversation about GenAI and policy development, examine publisher and course policies, and suggest some strategic guidelines and writing advice for those creating policies.

Further, policies can promote excellence but must be explained appropriately to the audience. While explaining how standards can promote excellence, Larson and Lafasto (1989) write, “Needless to say, the extent to which standards are clearly and concretely articulated determines the eventual likelihood of the standards being met” (p. 102). Clear communication about the professional use of GenAI is vital. Policies and guidance must also match institutional values, field- and course-specific missions, and program- and course-level learning goals. Providing policies and guidelines answers the cry for direction from faculty, students, and support staff. Business communication as a field can uniquely address this need for effective and appropriate communication about GenAI usage. Clearly written, audience-appropriate policies and guidance can set high standards for communication excellence in the classroom and the workforce.

Literature Review

While AI has received much attention since 2022, AI is not new. However, access, availability, and tool reach have changed, particularly for business communication (Getchell et al., 2022). In addition to academic articles and presentations, business communication scholars have engaged in wider, public discourse by commenting for the press and giving webinars. As AI continues to revolutionize business communication, its applications have broadened further into educational environments.

Technological Change in Higher Education

GenAI’s reach has expanded and become available in commonly used products, applications, and browser extensions. Microsoft Copilot incorporates GenAI into Word, PowerPoint, Teams, and Excel. Google offers Duet AI, which is available in Google Workspace. Duet AI includes GenAI for writing, and Google pitches the product as “AI as a real-time collaborator” (Pappu, 2023). In short, students will have GenAI built into traditional tools for writing.

Further, when considering the reach of GenAI, new incoming students will encounter GenAI as a normal tool they may have used during their primary school years. For these new university students, the college experience will have “always had” an AI component. This technology transition mirrors the technological disruptions seen with digitized card catalogs, access to search engines for research, or the advent of slides or email as teaching and learning tools. Considering the advent of email, current students could not conceive of a college environment where professors required only in-person office hours and did not have email. Still, some current faculty had email-free experiences as college students or junior faculty.

Policies have been used to manage technological change in the classroom and provide a point of consideration when developing policies and guidance about GenAI. For example, some faculty used syllabus policies that banned online encyclopedias in the 1990s and early 2000s. Faculty expressed concerns about academic integrity given the new technologies. Some banned Wikipedia and included that ban in their syllabi. At a conference in 2006, the founder of Wikipedia said he had “no sympathy” for students who got into trouble for using the online user-written encyclopedia (Young, 2006). In 2007, Middlebury College made national news by banning Wikipedia in the history department, citing Wikipedia’s inaccuracies despite other scholarly findings that Wikipedia was more accurate than traditional encyclopedias (Jaschik, 2007). Meanwhile, other faculty pointed out the importance of focusing on content to promote student success during the trend to ban Wikipedia (Maehre, 2009). The teacher-scholars who emphasized content focused on postgraduation outcomes rather than classroom-specific artifacts appropriate for assessment. Maehre (2009) argued:

If we consider students to be learners rather than performers, and if we value the learning of thinking skills rather than the carrying out of orders, we won’t be afraid to allow students to explore and to not always get the “right” answer. If we won’t do this, I’m afraid we’re not helping students develop into the increasingly-interactive world they’ll enter after graduation. They will embark on professional careers not just as readers or finders of information, but as collaborators in information creation. (p. 236)

Since that article’s publication, the conversation about Wikipedia has changed (Konieczny, 2016), but the discourse about Wikipedia and learning echoes the current language about GenAI as a teammate or collaborator. For most faculty teaching now, banning Wikipedia would be unlikely, and banning search engines is implausible. Some have compared banning calculators or Wikipedia to banning ChatGPT (Kelly, 2023). In an editorial, a communication researcher who studied Wikipedia calls ChatGPT this generation’s Wikipedia (Geiger, 2023). Similarly, ChatGPT may be this decade’s Wikipedia for faculty.

The anxiety and frustration about students’ Wikipedia use mirror ongoing sentiment about students’ use of GenAI. In 2023, a majority of surveyed college officials “agreed” or “strongly agreed” that GenAI posed “a threat to higher education” (Chronicle of Higher Education, 2023, p. 4). However, of those respondents, only 32% had created a policy, and about half had no conversations or meetings with faculty or students about GenAI (Chronicle of Higher Education, 2023, p. 19). The negative sentiment and lack of direction have led to reports of confusion and stress. Some universities’ restrictive policies reportedly “panicked” students, who wanted more information on using GenAI tools appropriately (Williams, 2024). Lacking a policy or guidelines can create greater confusion.

Responses to AI and Policy Development

Higher education reactions have ranged from not having a written policy about GenAI (Zimmerman, 2023) to allowing students to help write the policy (Brown, 2023). Many universities have posted policies online. In Grammarly’s Fall 2023 “Back to School” webinar on AI policies, a poll captured this shift in GenAI’s presence in academia by asking attendees what their schools were doing in response to the growing popularity of GenAI tools. Of the 313 attendees, 67% (209) reported that in the 2022-2023 school year, their school did not have a formal GenAI policy guiding faculty and students on how to use it in academic settings. By contrast, 10% (32) responded that their institution had such a policy, while 23% (72) were unsure of their school’s policy. When asked about the 2023-2024 school year, of the 318 attendees polled, 45% (142) reported their school did not have a formal GenAI policy, 29% (94) said their school did, and 26% (82) did not know (Moore & Maxwell, 2023). As of 2023, most universities did not have policies (Xiao et al., 2023). Universities with policies follow an interesting pattern in policy adoption. Xiao et al. (2023) found that “of the universities with ChatGPT policies, approximately 67% embraced ChatGPT in teaching and learning, more than twice the number of universities that banned it” (p. 2).
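The year-over-year shift in the webinar poll can be expressed in percentage points. The following is a minimal sketch that uses only the response counts reported above (Moore & Maxwell, 2023); the variable and function names are illustrative, and the unrounded shares it computes can differ slightly from the rounded percentages quoted in the text.

```python
# Response counts as reported in the text (Moore & Maxwell, 2023).
polls = {
    "2022-2023": {"no policy": 209, "has policy": 32, "unsure": 72},
    "2023-2024": {"no policy": 142, "has policy": 94, "unsure": 82},
}


def to_percent(responses):
    """Convert raw response counts to percentages of all respondents."""
    total = sum(responses.values())
    return {answer: 100 * n / total for answer, n in responses.items()}


before = to_percent(polls["2022-2023"])
after = to_percent(polls["2023-2024"])

# Change in percentage points for each answer between the two school years.
shift = {answer: after[answer] - before[answer] for answer in before}

for answer, delta in shift.items():
    print(f"{answer}: {before[answer]:.1f}% -> {after[answer]:.1f}% ({delta:+.1f} points)")
```

Because the two polls had different numbers of respondents (313 and 318), comparing shares rather than raw counts is the safer reading of the change.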

Specific to the field of business communication, faculty views on policies varied. In a 2023 survey, most business communication faculty preferred that policy be developed “primarily” at the school, university, or department level; less than 20% preferred that policies be developed by instructors (Cardon et al., 2023). In that survey, about 12% of faculty wished for a ban; the researchers found those faculty had “strong feelings” and saw “AI-assisted writing as incompatible with the development of writing skills and critical thinking” (Cardon et al., 2023, p. 273).

The policies identify how administrators and faculty perceive and frame GenAI. Luo (2024) argues that policies about GenAI “shape how problems are understood by prioritising certain representations over others” (p. 2). In an analysis of 20 university policies, the problems identified included academic misconduct, a lack of policy, weak assessment design, training, equity, and issues with technology such as hallucinations (Luo, 2024).

Privacy and access are also factors in policy development. Clear policies are needed for student use and data protection (Dwivedi et al., 2023). For example, our university has taken the position that, given FERPA, student work cannot be put into external tools, including GenAI tools such as ChatGPT and external plagiarism detectors. Further, the policies of the tools may limit who can access and use the tool. Age limitations are present. ChatGPT requires users to have parent permission if under the age of 18 years (OpenAI, n.d.). Microsoft Copilot for higher education is limited to student users of age 18 years or older (Welcome to Copilot in Windows, n.d.). About 2.1% of students in postsecondary institutions are under the age of 18 years (Hanson, 2024), but that number is about 20% at a 2-year college (The Hamilton Project, 2023). Given the age restrictions, faculty must consider who will be unable to use GenAI, particularly at the 2-year college level. Further, institutions must act to protect minors and promote equitable use, particularly if students can use GenAI but those under 18 years technically cannot. Policies must consider the changing legal and technological landscape to ensure all students have an equitable academic experience.

Policy language can influence equity and comprehension. One language choice that can influence compliance is the use of the negative. Researchers studying codes and language that prohibited behavior identified issues with how respondents read the codes. George et al. (2014), for example, note, “This result is surprising as it indicates that respondents do not differentiate between ‘prohibition’, ‘should not’ and ‘will not’!” (p. 11). In short, George et al. found that saying “shall” rather than “should” did not make a significant difference. Further, they found that factors like age and race influence perceptions.

Finally, effective policy should operate appropriately for different dimensions: pedagogical, operational, and governance (Chan, 2023). In Chan’s (2023) AI policy framework, the following considerations are proposed for pedagogical policy: “Rethinking assessments and examinations; Developing student holistic competencies/generic skills; Preparing students for the AI-driven workplace; [and] Encouraging a balanced approach to AI adoption” (p. 20). Complicating the considerations, some universities have adopted GenAI tools for classroom use or teaching while adopting policies that ban GenAI to promote academic integrity (Xiao et al., 2023). This article focuses on the pedagogical dimension of policy.

Findings: The Current State of Syllabus AI Policies

To understand the current state of syllabus AI policies, we initially gathered syllabi from business communication courses for Fall 2023 to find sample policies in context. However, the presence of a policy was inconsistent. Instead, we gathered 102 AI-related syllabus policies (all that were available at the time of data collection) from Eaton’s (n.d.) crowdsourced Google Doc resource. Eaton designed an open form where instructors could submit their current syllabus policies or institutional policies. Instructors from a range of areas submitted policies: 40.20% (41) were from arts & humanities, 20.59% (21) from sciences, 8.82% (9) from interdisciplinary studies or miscellaneous, 7.84% (8) from business, 7.84% (8) from education, 5.88% (6) from special population focus, 4.90% (5) from social sciences, and 3.92% (4) from law. We analyzed the policies through sentiment and emotion analysis, studying word use frequency, and exploring common themes and trends among policies. The themes and trends were not quantified but are meant to give “a lay of the land” to the reader.

Sentiment and Emotion Analysis

We conducted a sentiment analysis and an emotion analysis of the policies to gain an overview of the sentiments and emotions embedded in them. The analyses were performed using Qualtrics’ Text IQ AI tool. Researchers have used these methods successfully to examine qualitative data (e.g., Durand et al., 2023; Tiruye et al., 2023; Winkelmann & Games, 2021). Text IQ uses textual analysis to determine a sentiment score for each policy that ranges from −2 (very negative) to 2 (very positive) (Qualtrics, n.d.). Additionally, Text IQ noted whether policies contained mixed sentiments (i.e., both positive and negative sentiments). The researchers also reviewed the sentiments associated with the policies to verify agreement.

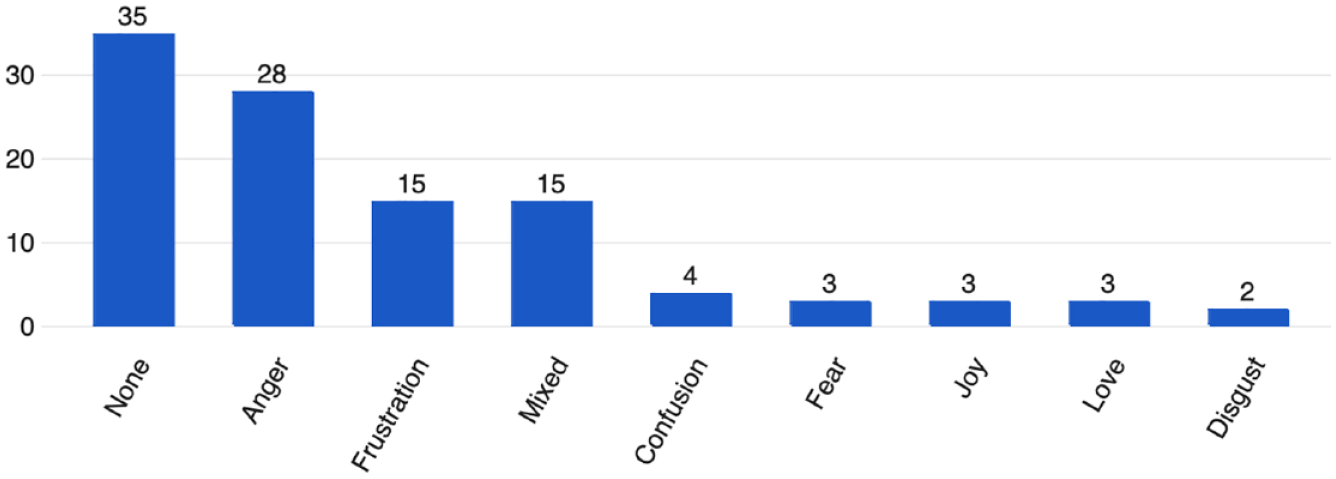

More than 60% of the policies were either negative (30; 29.41%) or very negative (33; 32.35%) regarding AI. Fewer than 5% of policies had positive (3; 2.94%) or very positive (2; 1.96%) sentiments. Fourteen (13.73%) policies were neutral, while 20 (19.61%) were mixed in sentiment. The emotions detected across the 102 policies reflected the negative sentiment. The predominant emotion detected in the policies was anger (28; 27.45%), followed by frustration (15; 14.71%) and mixed emotions (15; 14.71%). Of the policies, 35 (34.31%) were detected as having no emotion. See Figure 1 for details.

Figure 1. Detected emotions in policies about generative AI (N = 102).
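The grouped shares reported for the sentiment results can be recomputed from the category counts. This is a minimal sketch using only the counts given in the text; the names are illustrative, and the emotion dictionary holds only the categories named in the prose, so the remainder is assumed to cover emotions itemized only in Figure 1.

```python
N = 102  # total number of policies analyzed

# Sentiment category counts as reported in the text.
sentiment = {
    "very negative": 33,
    "negative": 30,
    "neutral": 14,
    "mixed": 20,
    "positive": 3,
    "very positive": 2,
}


def pct(count, total=N):
    """Share of the total, as a percentage rounded to two decimals."""
    return round(100 * count / total, 2)


# Combined shares behind the "more than 60%" and "fewer than 5%" statements.
negative_share = pct(sentiment["very negative"] + sentiment["negative"])
positive_share = pct(sentiment["positive"] + sentiment["very positive"])

# Emotion categories named in the prose; others are assumed to appear only in Figure 1.
emotion = {"anger": 28, "frustration": 15, "mixed": 15, "none detected": 35}
unlisted = N - sum(emotion.values())

print(f"negative or very negative: {negative_share}%")
print(f"positive or very positive: {positive_share}%")
print(f"policies with emotions not itemized in the prose: {unlisted}")
```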

Negative and strongly negative sentiments did not necessarily align with negative views of GenAI use. Intent and word choice sometimes revealed complicated positions. Some policies stressed the limitations of GenAI while permitting its use. This sometimes resulted in a very negative sentiment for a policy in which the faculty member may have felt positively about GenAI. For example, in a policy that allowed using GenAI for brainstorming and outlining, the policy stated, “the material generated by these programs may be inaccurate, incomplete, biased or otherwise problematic. Beware that use may also stifle your own independent thinking and creativity.” That policy was coded as very negative. Similarly, another policy that encouraged cited use explained that generated content could be “biased, offensive” and “unethical.” While the policy had positive intent, the sentiment was scored as very negative because of the negative words incorporated.

Similarly, a positive sentiment policy might limit or ban GenAI use. For example, one of the two policies coded as “very positive” banned using GenAI. The policy employed positive language to explain that “AI tools” could be used for spelling and grammar checks, and only for those applications. The policy avoided most negative words and sentence constructions and did not include discussions of violations and punishments. Instead, the policy encouraged students to ask the faculty member questions, with the following explanation: “Scholarly citing is not particularly intuitive, and part of my role is to help you learn the rules for intellectual attribution.” The policy promoted conversations and comprehension without using fear. Similarly, the other policy coded as very positive included limitations on GenAI use. The policy used bulleted items to explain the types of assignments and uses that were not allowed. However, it also explained ethical and appropriate use using positive language and clear explanations free of jargon. For example, the same policy stated, “When you use AI for an assignment, you should screenshot any queries and output from AI and submit it with your work. . . . If you are open with me about your uses of AI, I can help you make the most of it without penalizing you.” The policy did not allow all uses but promoted positive conversations.
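The gap between a policy’s stance and its sentiment score can be illustrated with a toy word-level scorer. This sketch is not the Text IQ model, whose internals are not described here; the lexicon, its weights, and the function name are hypothetical and chosen only to show how word choice, rather than what a policy permits, can drive a score.

```python
import re

# Hypothetical toy lexicon: NOT the Text IQ model, only illustrative word weights.
LEXICON = {
    "inaccurate": -1, "incomplete": -1, "biased": -1, "problematic": -1,
    "stifle": -1, "beware": -1,
    "help": 1, "learn": 1,
}


def lexicon_score(text):
    """Sum the weights of lexicon words in the text; stance and context are ignored."""
    words = re.findall(r"[a-z]+", text.lower())
    return sum(LEXICON.get(word, 0) for word in words)


# Quoted in the text: from a policy that permits brainstorming and outlining.
permissive = (
    "the material generated by these programs may be inaccurate, incomplete, "
    "biased or otherwise problematic. Beware that use may also stifle your "
    "own independent thinking and creativity."
)

# Quoted in the text: from a policy that bans GenAI but invites questions.
restrictive = (
    "Scholarly citing is not particularly intuitive, and part of my role is "
    "to help you learn the rules for intellectual attribution."
)

permissive_score = lexicon_score(permissive)
restrictive_score = lexicon_score(restrictive)
print(permissive_score, restrictive_score)
```

Under these assumed weights, the permissive excerpt scores below zero and the restrictive excerpt scores above zero, which matches the pattern described above: cautionary vocabulary lowers a score even when the policy allows GenAI use.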

Common Policy Themes

While the policies share many common phrases and word choices, they differ significantly. Some are around 100 words or fewer, while others give more than 500 words of guidance, including examples. Some appear to repeat university policy or guidance; some authors note they differ from official guidance and other professors’ policies.

To take a deeper look at the policies themselves, the authors conducted a thematic analysis of the policies. The themes provided insight into how universities and professors defined uses and explained AI.

Specific guidance

Some, but not most, of the policies offer specific guidance on what students should do. One policy sets up use cases in which students can use GenAI (e.g., brainstorming) and when they cannot use GenAI (e.g., an entire essay). One policy links to the American Psychological Association (APA) handbook on how to cite GenAI use. Further, that statement includes a sample disclosure statement for inclusion at the end of an assignment. One policy tells students to cite, screenshot, and save “everything.” Others also include sample statements and details about how and when to cite. Three of the policies include references to transcripts of interactions with an AI tool.

Tool specificity

The word choices vary across the policies. Some policies refer to AI broadly. They do not distinguish between an AI-based tool and a GenAI tool. One policy mentions “alternative generation tool,” a nonstandard phrase for the field of AI. Three of the policies reference “machine learning” with no definition. One policy references “synthetic content.” Many policies talk about ChatGPT. Other tools mentioned include ChatGPT-3, DALL-E 2, Moonbeam, iA Writer, Midjourney, DALL-E, and Copilot. A few policies leave tools unspecified, saying something like ChatGPT and “similar programs,” or “anything after” a list of current tools. Some mention “tools like” or “generative AI and ChatGPT,” but only talk about ChatGPT throughout the rest of the policy. The heavy emphasis on the word “tool” to describe chatbots and generation is worthy of further research.

AI versus GenAI

Some policies address differences between AI tools and GenAI, but many do not. One policy that bans “AI tools” clarifies that “pre-submission editing” such as “spell-check and grammar-check” can be used; the faculty member writes that AI tools cannot be used for “thinking or drafting.” Another policy states that any use of AI tools like a writing assistant should be cited or identified as a “partner or augmentation.” One policy instructs students to identify any AI tool, including Grammarly and ChatGPT.

References and AI as author

Many policies mention citing the use of AI, but few explain how to cite. Further, the treatment of authorship varies in the policies. The varied treatment matches some of the differences in the guidance given to students and authors by major reference guides. For example, APA directs student writers to cite the “author of the algorithm,” such as OpenAI, when referencing ChatGPT (McAdoo, 2024). However, APA Publishing Policies direct authors not to credit AI as an author on a journal article (American Psychological Association [APA], 2023). Two policies address whether or not to list ChatGPT as a coauthor. One policy instructs students to list ChatGPT as a coauthor as students are “sharing co-authorship . . . with them.” One policy cites a journal relevant to the course’s field and explains the journal’s prohibition on listing ChatGPT as a coauthor.

Policy creation and authorship

Authorship also includes the use of GenAI to write or cowrite the policy. Some policies explicitly mention using GenAI in policy development. For instance, one policy explains that GenAI was used for part of the policy creation. Another policy says ChatGPT generated it; the AI-generated policy says not to use ChatGPT as a “replacement” for thinking and analysis, given factors including context and ethics. A third policy notes its development based on ChatGPT, but the faculty member says “critical reflection” and editing occurred. One policy says to limit the amount of generated content and not present generated work as one’s own before acknowledging that ChatGPT wrote part of the policy; however, the policy does not explain what AI-generated is.

Responsibilities and warnings: False information, bias, and privacy concerns

Multiple policies contain cautionary language for students, usually relating to three areas: false information, bias, and to a lesser extent, privacy. Over a third of policies warn about false information. Some policies offer short directives regarding GenAI and accuracy like “don’t believe anything,” “don’t trust anything,” and “ChatGPT is often wrong—Wikipedia is a better resource for [providing background knowledge] right now.” Others explain in more detail how GenAI invents sources (i.e., hallucinations) and information.

In warning users, about one-fourth of policies include concerns about bias. One policy references the number of cisgender White males who likely trained the systems. Others warn of GenAI reiterating bias by including inappropriate, offensive, or unethical content and using “discriminatory, non-inclusive language.” One policy says GenAI might be “inaccurate, incomplete, or otherwise problematic.”

Fewer than 7% of policies warn of privacy concerns. These tend to focus on not putting private information into GenAI. One policy explains, “You can’t control where it ends up. If you wouldn’t post it on the internet, don’t give it to an AI.” One policy suggests that students read the terms and conditions of OpenAI. That syllabus links to further information, as does another when mentioning privacy. One introductory course policy says students should “adhere to privacy laws” without explaining those laws or linking resources.

With all of these warnings, policies consistently explained that the student was responsible for considering these areas of concern and should verify content from AI and protect their personal content.

Academic dishonesty and consequences

Some policies explain plagiarism, cheating, or fabrication in plain language. For example, one policy explained, “The information derived from these tools is based on previously published materials. Therefore, using these tools without proper citation constitutes plagiarism.” Another policy puts a common definition of plagiarism into parentheses after using the term. Many policy authors seem to assume students understand the concepts of plagiarizing and cheating, including in a new AI-related writing environment.

The consequences of using GenAI are sometimes strongly worded. One policy suggests that a cheating student can risk a school’s accreditation and their “future prospects.” A different policy warns students that GenAI tools may “stifle your own independent thinking and creativity.”

AI detector use

Several policies mention AI detection. One policy says faculty “reserve the right to use” AI detection software. Only one policy mentions Turnitin, and it does so regarding plagiarism rather than AI detection. One policy mentions GPTZero. One policy says students and faculty should use “detection tools”; students are told to use them before submission. A few policies include the faculty member as the detector. In one example, the policy says that credit may be reduced if a student’s “writer’s voice” cannot be detected. In another, the faculty member says students would need to meet to discuss the assignment to do “knowledge checks.”

Drawbacks of AI use

Illustrating the focus on the drawbacks of GenAI use, many policies reference GenAI as negatively related to critical thinking. Broadly, 39 policies were coded as referencing critical thinking. The policies position critical thinking as a human activity. The context of critical thinking is often negative, as humans can lose critical thinking opportunities or miss gaining skills when using GenAI. One policy mentions critical thinking several times, including, “While AI can assist, it cannot replicate the nuanced guidance of a human instructor and cannot be used as a replacement for personal effort and critical thinking.” Interestingly, the policy states at the end that it was drafted using Bard for “some of the ideas.” Citing ChatGPT as its author, another policy states, “Students are encouraged to approach AI as a supplementary tool that enhances, rather than replaces, their critical thinking and analytical skills in finance.” The policy, like others, connects critical thinking to core disciplinary skills. Similarly, in a first-year writing course, the policy states, “Writing is a means to critical thinking, and we must do our own writing to cultivate our own true, not artificial, intelligence.” The policies sometimes point to GenAI limitations related to critical thinking: “As human beings, you can do certain things that AI tools cannot—such as thinking creatively and critically, or making judgments based on experience.” Overall, the treatment of critical thinking revealed negative views of GenAI.

Goals and benefits of AI use

Several policies mention learning or broader goals and benefits of AI use. One policy suggests that GenAI could “augment understanding” and encourage critical output evaluation. In another policy statement, the writer uses positive language to focus on what students should do, such as be critical, responsible, and accountable learners. A different faculty member describes AI as a “new and valuable skill,” which can help students “become more productive, efficient, and skilled scholars.” Another policy states the faculty member’s “commitment to promoting the development of 21st-century information literacy skills for learning.” These themes demonstrate the range of views and approaches to GenAI and education.

Policy Creation Considerations

Effectively written policies can prepare students for a workplace where policies will guide AI use for professional communication. While some faculty may not want to create policies, they must still be prepared to offer advice and guidance. For business communication courses, having a policy or teaching how to read a policy could improve appropriate AI tool use. We acknowledge the difficulty of creating a policy in a changing technological environment. However, the following recommendations suggest ways to create a policy that transcends some technological changes and implements guidance promoting student success. In particular, business communication faculty train future communicators and business professionals who likely will work in a corporation with an AI communication policy, and some may need to rewrite or revise communication policies and guidelines for employees.

When considering whether or not to create a policy, give guidance, or set standards, faculty must weigh several factors. We list here some broad considerations, but faculty should further attend to the nuances of their fields, the context of their courses, and the learning outcomes set forth by their programs and institutions. Policies alone will not suffice. The Modern Language Association and Conference on College Composition and Communication (MLA-CCCC) joint statement recommends, “At the institutional level, policy should be accompanied by education about AI; when creating policy, institutional actors must prioritize both ethical conduct and the mission of higher education” (MLA-CCCC Joint Task Force on Writing and AI, 2023, p. 10). The MLA-CCCC statement sets the expectation that no matter the policy, educators must educate. Further, as institutional actors, faculty will face questions from students beyond the scope of a university-wide policy.

Faculty can make a difference as individual actors. Considering supervisor moral talk contagion (Zanin et al., 2016), faculty can promote the appropriate use of GenAI and trust between faculty members and students by discussing moral and ethical behavior in the classroom. There is danger in silence. Lacking a policy is silence. Not talking about GenAI and expectations for integrity and ethics is a dangerous silence. Students must be equipped to evaluate policies given corporate policies governing GenAI and AI tool use. Learning to evaluate and discuss policies may become a significant part of critical AI literacy.

Prior to writing a policy or determining if a policy is wanted, faculty may want to consider the following:

Policy Creation and Implementation Advice

In addition to the above considerations, here are some practical tips to aid AI policy writing in syllabi. Based on the findings, several conclusions are clear. However, those conclusions present challenges. Faculty must explain what AI can and cannot do while considering privacy and responsibility alongside critical thinking and originality. Effective AI policy statements flow from positions taken about teaching and learning. The reviewed policies that seemed very clear made these rhetorical moves to provide direction while clarifying values and purpose.

Broadly, students need to understand what GenAI can and cannot do, while also understanding privacy concerns, professional and academic responsibility, critical thinking, and broader concepts like original voice, personal work, and authorship. Further, professional communicators need to understand the changing legal regulations that will influence decisions about everything from posting generated images on social media to writing performance evaluations with the aid of AI assessment and GenAI to improve writing.

Write the Policy for Your Audience (Your Students)

First, faculty and institutions must have clear policies, written with the audience in mind, that students can understand. Appendix A provides examples of language that might be used. Syllabus language can improve feelings of belonging and inclusion (Sunds et al., 2023). First impressions and language matter.

Set the Tone and Focus for the Course

Second, faculty and institutions must set an appropriate tone and use policies not merely as a tool for legal compliance but as an opportunity to promote appropriate and effective communication. Beyond writing an AI use policy, instructors should:

Finally, beyond the findings in this article, we recognize the potential for continued rapid technological changes and changing institutional policies. We may need to adjust our policies to consider Artificial General Intelligence (AGI) in a few years. Faculty should consider pairing a broad syllabus policy with narrower assignment-level instructions. Clarifying AI tool use assignment by assignment, rather than relying on the broad policy alone, can support teaching different applications and contexts for AI tool use. For example, faculty might want students not to use GenAI on one assignment but allow GenAI on another assignment. This approach mirrors corporate practices, whose sentiment and themes deserve future research.

Conclusion

This article captures the sentiment, tone, and themes of the initial policy response to GenAI. By reflecting on policy effectiveness and language use, the article provides a framework for considerations before, during, and after composing a policy or offering guidance. Effective instruction and clearly communicated expectations promote success. Clear policies can guide expectations and help learners prepare for communicating in workplaces where different companies have potentially radically different GenAI policies. Students will be held accountable for their AI use when entering the workforce, and understanding AI policies and best practices will be part of critical AI literacy. Further, students must be able to clearly communicate how they use AI, why they use AI, and how they remain in compliance with employer, institutional, and legal guidance or regulations.

Educators must help students understand the GenAI tools they are using now (e.g., LLMs or AI writing assistants) and how to work in a changing environment. Professional communicators must operate ethically in a world of ever-changing compliance requirements and technology. Ethics cannot be outsourced, especially to companies profiting off AI tools. Those drafting policies or communicating guidance must remember their audience by implementing best practices for effective and appropriate communication.

Policies are the first step in setting the classroom tone for effective and ethical GenAI use. Faculty must consider how they position GenAI, how they communicate the value of the course content, and how students will learn, whether using GenAI or not. Learning as a priority must be clearly communicated and implemented throughout the course, starting with the policy.

Appendix A: Sample Policies

Note: These policies were not written with GenAI.

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.