Abstract

This study evaluates a “feedback-only,” constrained-generative AI tool designed to support revision without generating or rewriting student text. StoryCoach was developed for a business communication elective and grounded in cognitive apprenticeship with principles of feedback literacy. The tool generated structured feedback: one strength, one opportunity, and one reflective question per submission. Analysis of 57 paired drafts showed significant gains in feature-specific rhetorical execution, with vividness as the primary quantitative indicator (Cohen’s d = 1.39), supported by independent reader judgments and student reflections. Findings demonstrate that constrained-generative AI can function as a pedagogical partner that strengthens rhetorical awareness and preserves authorship integrity.

Keywords

Reframing AI in Writing Instruction

The Problem with Product-Driven AI

When generative artificial intelligence (AI) entered the classroom, the response among writing instructors was immediate and divided. Some viewed tools like ChatGPT as shortcuts that could erode students’ authorship and critical thinking skills, echoing early concerns about automation in education (Kasneci et al., 2023). Others, however, saw potential for individualized coaching and scalable feedback that might enhance reflective learning (Lee & Moore, 2024). Both perspectives share a central concern: how to preserve learning when the machine can so easily produce the product.

In the past 2 years, the adoption of generative AI tools such as ChatGPT, Claude, Copilot, and Gemini has accelerated so quickly that pedagogy often lags behind practice. Faculty worry about plagiarism and the subtle loss of cognitive labor that occurs when students offload early thinking to machines. Yet these same tools offer a possibility that few have tested: an AI tool designed to teach writing without writing for the student.

A Classroom Test of Process-Focused Design

This study emerged from that tension. In a business storytelling course, students used a custom-built AI assistant designed to prompt reflection and revision in their writing. (Figure 1 shows the student-facing interface.) Built using OpenAI’s ChatGPT-4.0 Custom GPT interface, the tool, named StoryCoach to emphasize its instructional role rather than its generative capability, was governed by a detailed system prompt written entirely by the instructor. StoryCoach analyzed student stories for key narrative features and provided feedback through strengths, opportunities, and reflective questions rather than edits or rewrites. Its design premise was simple: if AI could learn to compose, it could also learn to coach, provided it was instructed to focus on process instead of product.

Figure 1. Student-facing view of the custom-built StoryCoach GPT.

The results were striking. Across 57 paired story drafts, students’ writing showed measurable improvement in descriptive precision (Cohen’s d = 1.39), meaning that vividness improved by roughly 1.4 standard deviations after the AI-guided revision. Human readers, authentic proxies for a real audience and blind to which drafts were pre- or post-revision, preferred the revised versions for engagement and descriptive vividness in about two-thirds of their judgments. Additionally, students reported the sense that the AI made them notice their own choices. One student wrote, “I liked how it [StoryCoach] doesn’t give us an example of how to change our story because then we weren’t losing our own creativity and truth behind the story” (Student comment, 2025 course survey).

From Prototype to Pedagogical Framework

This article uses that classroom experiment as a proof of concept for a broader claim: educators can design AI tools to enhance rhetorical awareness without allowing AI to generate text. The discussion that follows situates this approach within existing scholarship on AI-supported writing instruction, cognitive apprenticeship, and feedback literacy, and then offers a practical framework for creating feedback-only AI partners.

Literature Review and Conceptual Framework

Three Strands of Inquiry

The literature informing this study spans three intersecting strands: research on AI feedback in writing pedagogy, theories of reflection and cognitive apprenticeship in learning, and emerging frameworks for ethical AI design. Together, these areas provide a conceptual foundation for examining how a feedback-only AI tool can strengthen writing without actually rewriting. The following review outlines each strand in turn.

AI Feedback and Writing Pedagogy

Recent studies in educational technology have moved beyond viewing generative AI solely as a content producer, examining instead its potential to enhance instruction through feedback. When paired with human guidance, AI tools can improve writing fluency, structure, and clarity by helping students visualize patterns in their own language use (Mahapatra, 2024; Steiss et al., 2024). For example, Steiss et al. (2024) show that ChatGPT can deliver broadly useful formative comments on students’ essays, while also finding that trained human feedback remains stronger for higher-level rhetorical judgment and contextualized pedagogical guidance. Similarly, Mahapatra (2024) found that using ChatGPT as a feedback partner yields significant improvements in students’ academic writing.

While feedback-oriented applications of generative AI show clear pedagogical promise, researchers simultaneously caution against uses that cross the line from feedback to substitution. Tools that generate full-text revisions risk reducing student agency and shifting cognitive effort away from the learner. Instructors, therefore, face a pedagogical challenge: how to use AI to foster writing reflection rather than writing replacement. This gap in practice frames the present study. By designing an AI tool that delivers descriptive, non-compositional feedback, defined as feedback that analyzes rather than generates text, this research extends current conversations on feedback literacy and the responsible integration of generative AI into writing pedagogy.

Learning by Reflection: Cognitive Apprenticeship and Writing

The feedback-only design of StoryCoach aligns closely with the model of cognitive apprenticeship articulated by Collins et al. (1991). In traditional apprenticeships, novices learn visible tasks through observing and imitating experts. Cognitive apprenticeship adapts that model to mental processes, which include writing, reasoning, and problem-solving. In doing so, cognitive apprenticeship makes expert thinking visible through modeling, coaching, and scaffolding. Learners gradually assume greater independence as guidance fades.

In a writing classroom, cognitive apprenticeship unfolds when instructors externalize expert reasoning by talking through decisions about tone, structure, or vivid detail and then inviting students to do the same. Reflection, not correction, becomes the central mechanism for growth. The StoryCoach tool performed this same function algorithmically: It posed targeted questions and highlighted linguistic patterns that prompted students to consider the rationale for their revisions. In this sense, the AI acted as a “coach” within a cognitive apprenticeship framework, guiding attention toward the process of composing rather than supplying the product itself. This process-oriented coaching function also positions the study to examine a second dimension of the framework: understanding how specific linguistic choices shape reader experience.

Narrative engagement depends on both how a story is organized and how it is rendered linguistically. Narrative structure supports comprehension by organizing events into a coherent causal sequence, allowing readers to follow what happens and why. Vivid language, by contrast, supports mental simulation by supplying concrete sensory details and access to a protagonist’s internal experience. Research on narrative transportation suggests that readers are most absorbed when they can both track the narrative logic and mentally experience the unfolding events; vividness contributes to narrative transportation by increasing imagery and felt immediacy (Green & Brock, 2000; Speer et al., 2009).

This reflective emphasis intersects with narrative transportation theory, which explains how attention to vivid, concrete language enables writers to anticipate and shape reader immersion. Within a cognitive apprenticeship model, such awareness is not incidental but essential: when writers understand how specific linguistic choices contribute to reader immersion, they gain a clearer sense of the rhetorical effects they are trying to produce. Measuring vividness features, therefore, provides a quantifiable window into how students are internalizing this awareness. By linking cognitive apprenticeship to narrative transportation, the study demonstrates how AI-guided noticing of rhetorical features can strengthen rhetorical judgment, helping students see both what to revise and why those choices influence audience engagement.

Principles for Designing Ethical Pedagogical AI

Developing AI tools that coach rather than write requires clear design parameters. Recent frameworks propose three guiding principles for educators who adopt AI in language and communication instruction: transparency, non-generation, and iterative measurability (Kazemitabaar et al., 2024; Macias et al., 2024). Transparency ensures that students understand the AI’s purpose. Non-generation defines the pedagogical boundary that keeps AI from composing text to maintain students’ authorship and cognitive investment. Lastly, iterative measurability emphasizes collecting evidence of learning by tracking revision quality, reflection depth, and skill transfer across drafts. The StoryCoach prototype applied these principles by disclosing its non-writing role, providing interpretive rather than prescriptive feedback, and allowing learning gains to be measured quantitatively (vividness per 100 words) and qualitatively (reader-judgment data). Together, these principles frame a replicable model for integrating AI into writing instruction in ways that respect human strategic thinking while leveraging machine-assisted feedback.

Synthesis and Conceptual Framework

Taken together, these three strands of scholarship (AI feedback in writing pedagogy, cognitive apprenticeship and reflection, and ethical design for educational AI) form the conceptual framework for this study. The common thread across them is a focus on process-oriented learning rather than product-oriented automation. Drawing from cognitive apprenticeship, the StoryCoach prototype was designed to make expert thinking visible by prompting reflection rather than producing revisions. From ethical AI design frameworks, it applies transparency, non-generation, and iterative measurability as core design principles. Collectively, these ideas establish a pedagogical model in which AI functions as a coach rather than a composer and supports learning through guided reflection while preserving human authorship and strategic thinking.

The Classroom Prototype: StoryCoach as a Pedagogical Partner

This project was implemented in an upper-level business communication elective taught in a weekend format, Storytelling to Influence and Inspire, at the University of North Carolina’s Kenan-Flagler Business School during the first weekend of the Fall 2025 term. The course teaches students to craft professional and personal narratives that demonstrate leadership, persuasion, and authenticity, qualities essential to managerial and leadership communication (Bostanli & Habisch, 2023). Within that context, StoryCoach was introduced as a classroom experiment designed to extend and validate individualized coaching and feedback beyond traditional peer-to-peer workshops.

Overview of Custom GPT Design

StoryCoach was developed as a custom-built conversational AI assistant using OpenAI’s “Custom GPT” interface, a platform that allows instructors to configure a tailored version of ChatGPT within defined parameters. Figure 2 illustrates the instructor-facing configuration panel in ChatGPT where StoryCoach was created. This setup interface allowed the instructor to script the system prompts, restrict generative capabilities, and define the tool’s feedback behaviors prior to student use.

Figure 2. Backend configuration interface for the custom StoryCoach GPT.

Using OpenAI’s provided template, a detailed system prompt, written by the instructor, governed the model’s behavior, scope, and feedback tone. The model was programmed to provide one strength, one opportunity, and one reflection question for each submission, positioning it as a structured storytelling coach rather than a generative writing tool.

For instance, when a sample section was tested during the prototype phase, the AI responded: You describe what happened clearly in the story’s situation, but you have an opportunity to help your audience see and feel what your protagonist did. What sensory detail—sound, texture, or movement—might help readers experience the moment more vividly?

This response illustrates StoryCoach’s reflective-prompting approach: feedback that guides students to enhance vividness and rhetorical awareness without rewriting their text. The feedback structure aligns with cognitive apprenticeship (Collins et al., 1991) and dialogic feedback models that emphasize reasoning and self-regulation over correction (Carless & Boud, 2018).

Constraints and Ethical Design

A key design constraint prohibited the model from rewriting, rephrasing, or extending any portion of a student’s text. The system prompt instructed the AI to ask thoughtful questions to promote reflection and revision, ensuring that authorship and linguistic agency remained with the student. This approach aligns with current recommendations for the ethical use of generative AI in education, which emphasize functional transparency, learner autonomy, and nonsubstitutive design (Moorhouse & Kohnke, 2024; Weisz et al., 2024). Before engaging with the tool, students were encouraged to regard StoryCoach as a reflective partner rather than a co-author.

Sequential Workflow

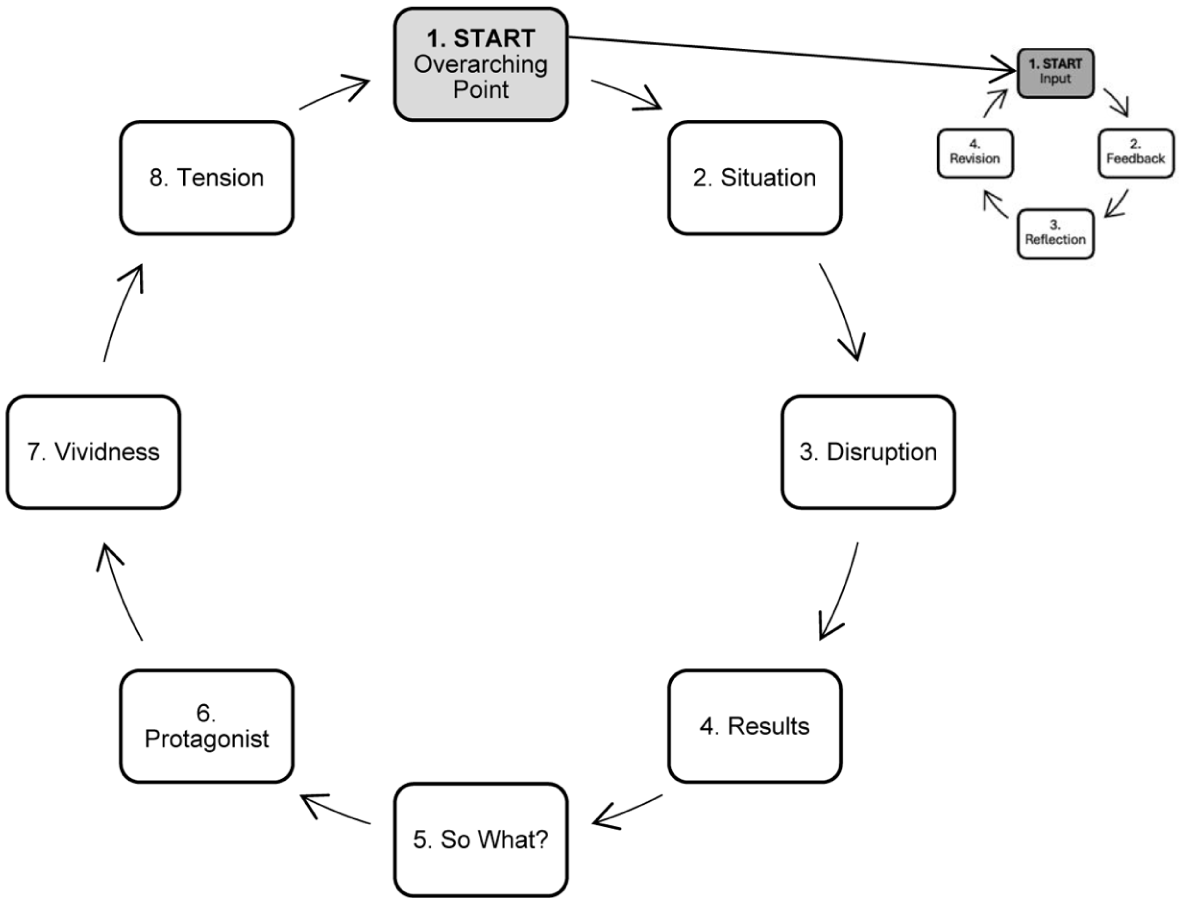

Before engaging with the AI tool, students submitted an initial draft to the instructor to demonstrate substantive effort and establish a baseline for revision. Once the draft was approved, students opened StoryCoach and entered their story section by section in eight iterative cycles designed to slow the composing process and foreground reflection.

For the first five cycles, students labeled and submitted one component of the five-part storytelling framework, which included the Overarching Point, Situation, Disruption, Results, and So What text. After each submission, the AI generated feedback identifying one strength, one opportunity for improvement, and one question for reflective revision. Students reviewed this feedback, reflected on its relevance, and then decided whether and how to revise before advancing.

After completing the five structural sections, students repeated the same process for three enhancement dimensions: Protagonist, Vividness, and Tension. In total, students completed eight discrete feedback–reflection cycles, each representing a short, focused loop of inquiry. This structured sequencing enabled the AI to model the logic of revision without replacing human judgment, aligning with pedagogical calls for dialogic rather than corrective feedback (Carless & Boud, 2018).
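The eight-cycle sequence described above can be sketched as a simple ordered list. This is an illustrative Python sketch only, not the tool’s actual configuration: the constant and function names (`STRUCTURAL_SECTIONS`, `ENHANCEMENT_DIMENSIONS`, `FEEDBACK_PARTS`, `build_cycles`) are hypothetical, and only the section and dimension labels come from the workflow described here.

```python
# Hypothetical representation of the eight feedback-reflection cycles.
# Labels come from the article; all identifiers are illustrative assumptions.
STRUCTURAL_SECTIONS = [
    "Overarching Point",
    "Situation",
    "Disruption",
    "Results",
    "So What",
]
ENHANCEMENT_DIMENSIONS = ["Protagonist", "Vividness", "Tension"]

# Each cycle returns the same three-part feedback structure.
FEEDBACK_PARTS = ("strength", "opportunity", "reflective question")


def build_cycles():
    """Return (cycle_number, focus) pairs: structural sections first."""
    ordered = STRUCTURAL_SECTIONS + ENHANCEMENT_DIMENSIONS
    return list(enumerate(ordered, start=1))


for number, focus in build_cycles():
    print(f"Cycle {number}: {focus} -> {', '.join(FEEDBACK_PARTS)}")
```

Listing the cycles this way makes the ordering explicit: the five structural sections always precede the three enhancement dimensions.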

Implementation and Pedagogical Framing

Students completed the in-class writing sequence, which consisted of eight timed sprints of approximately 10 min each. Figure 3 visualizes the workflow linking structural and stylistic refinement through iterative cycles of feedback, reflection, and revision for each sprint.

Figure 3. Workflow of StoryCoach input, feedback, reflection, and revision cycles (eight 10-min sprints). Students completed eight iterative input-feedback-reflection-revision cycles, each lasting approximately 10 min. The workflow guided them through five structural and three stylistic dimensions of storytelling, emphasizing reflection before revision.

StoryCoach operated exclusively through instructor-authored instructions using ChatGPT 4.0 in its standard configuration with all extended capabilities disabled to maintain privacy compliance and institutional review board approval (IRB No. 25-1850). Each new chat reset automatically, ensuring that feedback remained localized to the section under review. The resulting design was transparent, replicable, and adaptable for other instructors seeking discipline-specific AI tools that preserve learner authorship.

From a pedagogical standpoint, StoryCoach exemplified a feedback model that supports rather than supplants human instruction (Kasneci et al., 2023; Lee & Moore, 2024). Its design operationalized the logic of cognitive apprenticeship by making expert reasoning visible and guiding students through a structured, stepwise process of revision and reflection.

Methods: Measuring the Impact of a Feedback-Only AI Tool

Study Design

This study employed a quasi-experimental, within-subjects design to examine how an AI-enabled feedback tool influenced students’ narrative writing. Each student’s post-intervention draft was compared to their own pre-intervention draft rather than to a separate control group. This quasi-experimental approach allowed for testing the instructional impact of StoryCoach within an authentic classroom environment where random assignment was neither practical nor pedagogically appropriate. Because each student served as their own comparison and all participants experienced the same instructional sequence, the pre/post design controlled for stable differences between writers when assessing changes in rhetorical vividness and engagement, although without a comparison group it cannot separate the tool’s contribution from gains expected through revision alone. The intervention occurred during a 90-minute, in-class session in which students interacted with StoryCoach across eight structured feedback and revision cycles.

Participants and Data

Participants were 29 undergraduate business students enrolled in an upper-level business communication elective, Storytelling to Influence and Inspire, at the University of North Carolina’s Kenan-Flagler Business School. The course emphasizes leadership communication through authentic, audience-centered narratives, aligning with research that positions storytelling as a key mechanism for influence and relational credibility in professional contexts. Each student produced two drafts for each of two required stories (a pre-revision and post-revision version), resulting in narrative submissions of approximately 250–400 words per draft, the equivalent of a 2- to 3-min spoken narrative. The study was reviewed and deemed exempt by the university’s Institutional Review Board under the benign behavioral intervention category (Exempt 07/17/2025, Study No. 25-1850). In total, 114 drafts comprised the quantitative data set.

Quantitative Measure

Vividness was operationalized as the presence of concrete, sensory, and emotionally resonant language that enhances reader engagement. Each draft was analyzed for vividness features per 100 words to control for length variation and included three categories: (1) figurative comparisons such as metaphors and similes (“this is like that”); (2) sensory imagery evoking sight, sound, movement, or texture; and (3) internal monologue or dialogue that reveals a protagonist’s emotion or thought process. Coding followed a rubric adapted from Green and Brock’s (2000) narrative transportation framework, which defines vividness as language that draws the reader into a mental simulation of the story experience. Although students engaged in eight input–feedback–reflection-revision cycles that addressed multiple rhetorical dimensions, including tension, protagonist development, and vividness, vividness was selected as the primary quantitative outcome because it can be reliably operationalized through linguistic features. Measuring vividness provided a concrete, replicable indicator of rhetorical development while other dimensions were examined qualitatively. Because StoryCoach was intentionally designed to prompt attention to vivid language, vividness is treated here as a feature-specific outcome aligned to the instructional target, rather than as a comprehensive proxy for overall writing quality. Accordingly, the analytic focus is on the tool’s capacity to support rhetorical noticing and revision within a constrained domain. Future work should evaluate whether these feature-specific gains generalize to broader measures of writing effectiveness and transfer across genres.

Coding Procedure

Quantitative coding was conducted by the instructor using a predefined rubric adapted from Green and Brock’s (2000) narrative transportation framework and was performed blind to draft order, student identity, and grading outcomes prior to final evaluation. Drafts were anonymized and randomized prior to coding so that vividness features were evaluated independently of draft order. For each draft, the instructor identified and counted instances of figurative comparison, sensory imagery, and internal dialogue or monologue. Raw counts were then divided by each draft’s total word count and multiplied by 100 to generate a standardized “features per 100 words” score.
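The length standardization described above reduces to a single ratio. The following is a minimal Python sketch of that calculation; the function name and the example counts are hypothetical and are not drawn from the study’s data.

```python
def features_per_100_words(feature_count: int, word_count: int) -> float:
    """Standardize a raw vividness count by draft length."""
    if word_count <= 0:
        raise ValueError("word_count must be positive")
    return feature_count / word_count * 100


# Hypothetical draft: 4 coded vividness features in a 320-word story.
score = features_per_100_words(feature_count=4, word_count=320)
print(round(score, 2))  # 1.25
```

Dividing by word count means a longer draft does not score higher simply because it has more room for vivid features.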

Statistical Analysis

Quantitative analyses (descriptive statistics, paired-samples t tests, Cohen’s d, and chi-square tests) were conducted using standard procedures recommended by the Odum Institute for Research in Social Science at the University of North Carolina at Chapel Hill. Analyses were carried out using established formulas and cross-checked for consistency to ensure accurate reporting.

Qualitative Measure

Two independent raters conducted a comparative reader-judgment assessment of pre- and post-intervention drafts, evaluating descriptive richness and overall engagement. The raters read an initial small sample of story pairs together to become familiar with the task, but no formal norming or reconciliation procedures were applied. This approach was intentional: The goal was to preserve authentic, audience-like responses rather than to enforce evaluative consensus. Even without formal calibration, interrater agreement was substantial (Cohen’s κ = .68), indicating a consistent pattern of independent reader judgment across drafts.
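For readers unfamiliar with the statistic, Cohen’s κ compares the observed agreement between two raters with the agreement expected by chance. The sketch below is a minimal Python illustration of that calculation; the function name and the six toy judgments are hypothetical and are not the study’s data.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Compute Cohen's kappa for two equal-length lists of labels."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("raters need the same non-zero number of judgments")
    n = len(rater_a)
    # Proportion of items on which the two raters chose the same label.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement from each rater's marginal label frequencies.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    labels = set(counts_a) | set(counts_b)
    expected = sum(counts_a[label] * counts_b[label] for label in labels) / n**2
    if expected == 1:
        raise ValueError("kappa is undefined when chance agreement is 1")
    return (observed - expected) / (1 - expected)


# Toy example: which draft in each pair did each rater prefer?
rater_1 = ["post", "post", "pre", "post", "pre", "post"]
rater_2 = ["post", "pre", "pre", "post", "pre", "post"]
print(round(cohens_kappa(rater_1, rater_2), 2))  # 0.67
```

With these toy labels, observed agreement is 5/6 and chance agreement is 0.5, so κ is (0.833 - 0.5) / (1 - 0.5), or about .67.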

Perceptual Measure

A short post-use survey captured students’ perceptions of StoryCoach as a learning and writing partner. Items included open-ended responses and Likert-scale ratings focused on perceived usefulness, clarity of feedback, and comparative effectiveness relative to peer feedback in workshops. Two-thirds of respondents characterized their experience as positive, and 88.9% indicated that StoryCoach was more helpful and specific than peer feedback. This pattern aligns with the design intent of StoryCoach as a supplement to classroom instruction, providing immediate, low-stakes feedback during revision rather than replacing instructor guidance.

Importantly, the survey did not include a direct comparison between StoryCoach and instructor feedback; thus, findings should be interpreted as evidence of the tool’s value relative to peer-based input rather than as a substitute for trained human instruction. Prior research comparing AI-generated and human feedback suggests that trained human feedback remains stronger for holistic rhetorical judgment, contextual nuance, and pedagogical decision making, even when AI performs well on specificity and surface-level features (Steiss et al., 2024). Future research should examine how feedback-only AI functions within a three-part feedback ecology involving AI, peers, and instructors. To provide a broader perceptual measure, a separate survey item asked students to judge whether StoryCoach improved their stories, as reported in the Results section.

Analysis

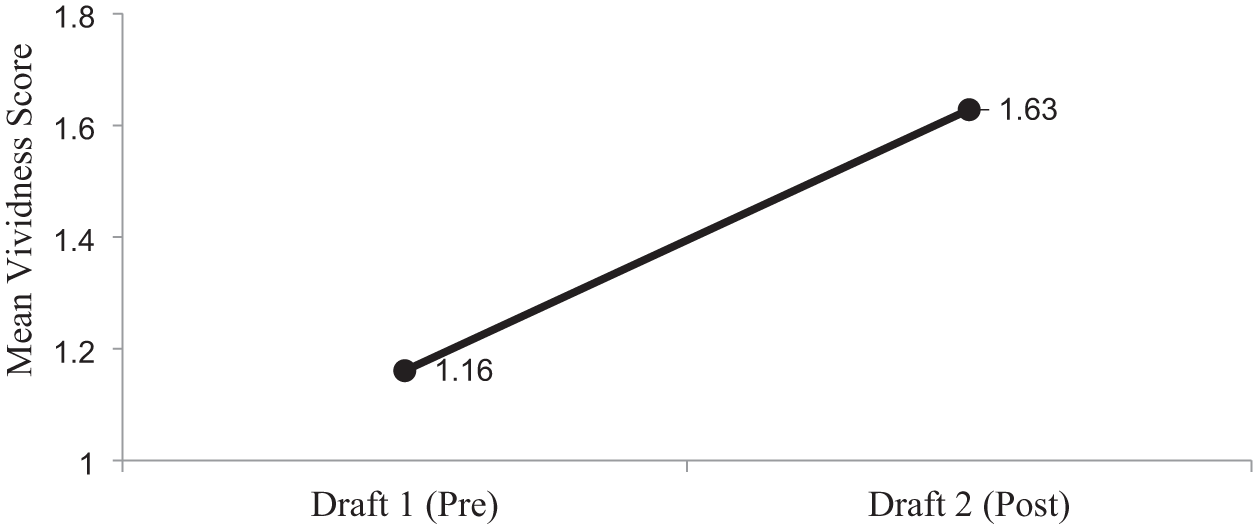

Paired-samples t tests were conducted to examine differences in vividness scores between students’ first and second drafts. The results were significant, t(56) = 10.49, p < .001, d = 1.39, indicating a large effect size and suggesting substantial improvement in linguistic vividness following interaction with StoryCoach. A chi-square test of reader preference also revealed a significant shift toward post-revision drafts, χ²(1, N = 114) = 12.67, p < .001, confirming that independent raters consistently favored the revised versions for engagement and descriptive richness.
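The reported chi-square statistic can be reproduced under one plausible reading of the test. The Python sketch below assumes a goodness-of-fit test against an even 50/50 split, using the 76 post-revision preferences out of 114 judgments reported in the reader-judgment results. The article does not state the exact procedure, so this setup and the function name are assumptions.

```python
import math


def chi_square_equal_split(count_a: int, count_b: int):
    """Goodness-of-fit chi-square (df = 1) against a 50/50 split."""
    total = count_a + count_b
    expected = total / 2
    statistic = (
        (count_a - expected) ** 2 + (count_b - expected) ** 2
    ) / expected
    # With 1 degree of freedom, the upper-tail p-value has a closed form.
    p_value = math.erfc(math.sqrt(statistic / 2))
    return statistic, p_value


# 76 judgments favored the post-revision draft; 38 favored the original.
stat, p = chi_square_equal_split(76, 114 - 76)
print(round(stat, 2))  # 12.67
print(p < 0.001)       # True
```

Under this assumption, each cell deviates from the expected 57 by 19 judgments, giving 2 × 19² / 57 ≈ 12.67, which matches the reported value.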

Qualitative analysis of the reader-judgment data revealed complementary patterns in the kinds of revisions that shaped audience preference. Although readers were not asked to identify specific factors influencing their choices, the rater rubric included a column for optional notes. Within that discretionary space, both readers occasionally remarked that certain drafts, which were later revealed to be post-revision versions, felt more concrete, immersive, and emotionally engaging. In contrast, pre-revision drafts were sometimes described as more abstract or expository, summarizing events rather than unfolding them. These unsolicited impressions aligned with the quantitative gains in vividness coding, indicating that the rhetorical features StoryCoach emphasized (such as sensory specificity, scene construction, and narrative tension) were the same elements readers found most compelling.

Together, these converging results underscore how structured AI feedback can heighten narrative engagement without diminishing student authorship. The next section examines these outcomes in greater detail, focusing on measurable improvements in vividness, alignment between StoryCoach’s feedback emphasis and reader preferences, and emerging implications for feedback literacy and reflective writing pedagogy.

Results: Quantitative, Reader, and Student Perceptions

Quantitative Findings

Quantitative analysis revealed significant improvement in vividness following the StoryCoach intervention. Mean vividness scores increased from M = 1.16 to M = 1.63 features per 100 words, t(56) = 10.49, p < .001, d = 1.39, indicating a large effect size and a substantial improvement in rhetorical vividness. Figure 4 displays the pre/post distribution of vividness features, demonstrating a consistent upward shift across nearly all participants.
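The article does not state which formula was used for Cohen’s d. As a consistency check, one common convention for paired designs divides the t statistic by the square root of the number of pairs. The Python sketch below (function name hypothetical) shows that this convention reproduces the reported value from t(56) = 10.49 and 57 pairs.

```python
import math


def paired_d_from_t(t_statistic: float, n_pairs: int) -> float:
    """Effect size for paired data: d = t / sqrt(n), an assumed convention."""
    if n_pairs <= 0:
        raise ValueError("n_pairs must be positive")
    return t_statistic / math.sqrt(n_pairs)


d = paired_d_from_t(t_statistic=10.49, n_pairs=57)
print(round(d, 2))  # 1.39
```

Under this convention, d expresses the mean pre/post change in units of the standard deviation of the change scores, so the effect size reflects how consistently students improved as well as how much.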

Figure 4. Mean vividness scores per 100 words (95% CI).

Reader Judgments

Rater-judgment data supported these quantitative findings. Two independent raters compared 57 story pairs (114 total judgments) and showed substantial agreement (κ = .68). Across those judgments, post-revision drafts were preferred 76 times (67%), indicating that raters more often favored the revised versions for overall engagement and descriptive richness. This preference is consistent with increased vividness, although it likely reflects multiple co-occurring revisions that include clarity, pacing, and narrative coherence rather than vividness alone. In their qualitative comments, raters sometimes described the revised drafts as more concrete, sensory, and immersive, while also occasionally noting stronger narrative progression, clearer scene development, and deeper scene coherence. Because raters were asked to select the more engaging draft rather than to isolate specific causal features, preference judgments should be interpreted as holistic evaluations of revision quality rather than as direct attributions to vividness alone.

Student Perceptions

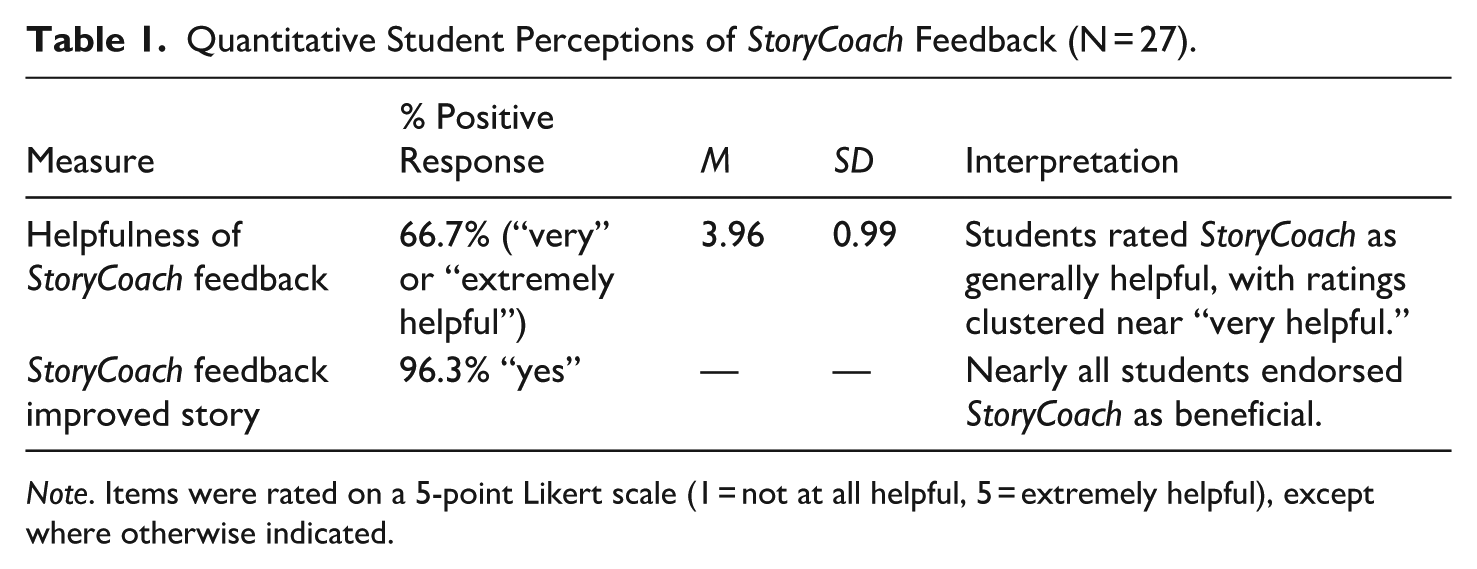

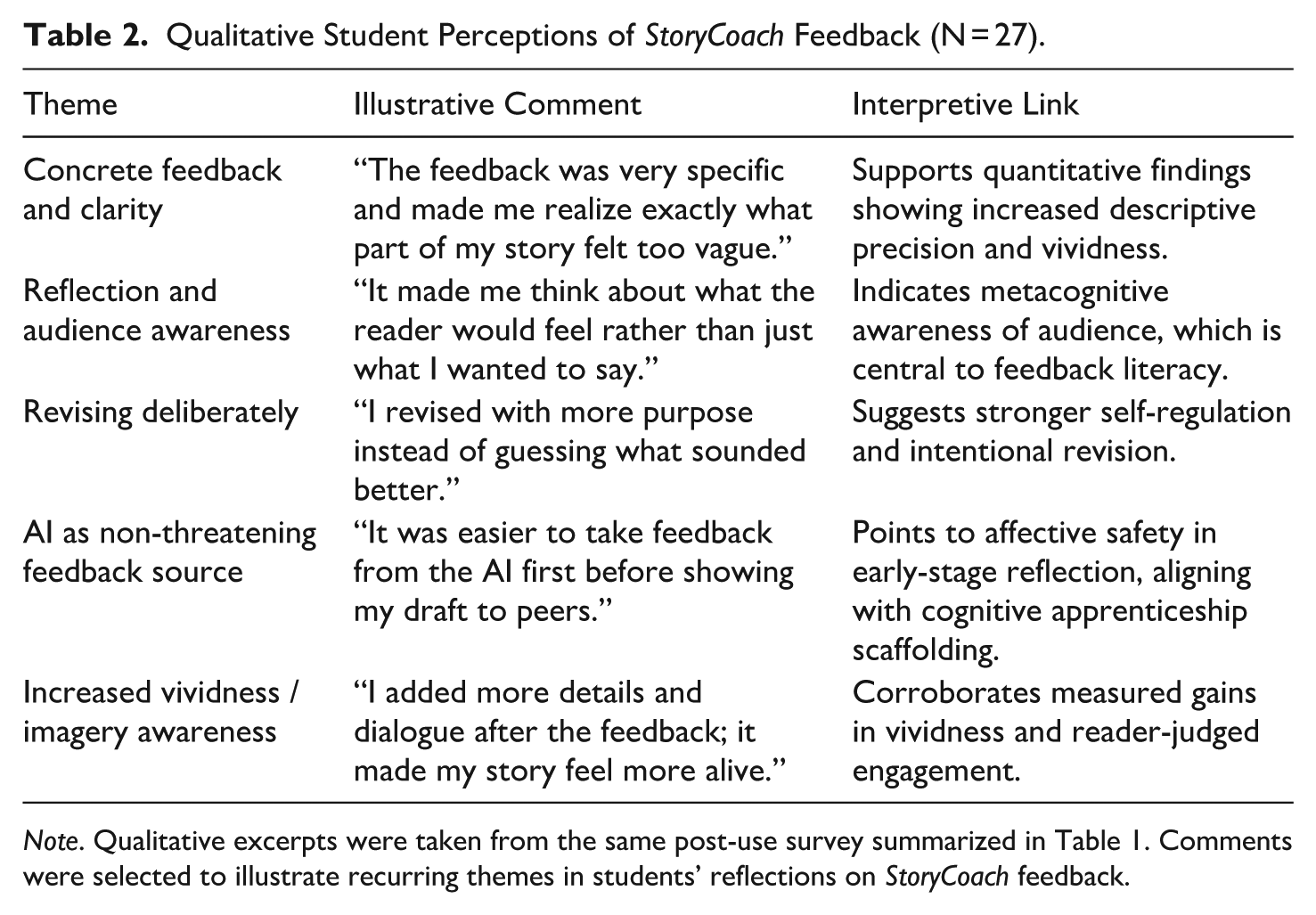

Student perceptions aligned with these results. Post-use survey data indicated that students viewed StoryCoach as an effective reflective tool that improved their ability to revise deliberately and with greater awareness of audience engagement. Nearly all participants (96.3%) reported that they believed the feedback from StoryCoach improved their stories. While self-reported perceptions do not independently confirm performance gains, they parallel the measurable improvements observed in vividness and rater judgments, suggesting that the model’s reflective prompting was both effective and positively received. These responses also point to an affective dimension of feedback literacy: some students described StoryCoach feedback as lower stakes early in the drafting process. Table 1 summarizes the quantitative survey results, while Table 2 provides student comments representative of recurring themes from the post-use survey.

Table 1. Quantitative Student Perceptions of StoryCoach Feedback (N = 27).

Note. Items were rated on a 5-point Likert scale (1 = not at all helpful, 5 = extremely helpful), except where otherwise indicated.

Table 2. Qualitative Student Perceptions of StoryCoach Feedback (N = 27).

Note. Qualitative excerpts were taken from the same post-use survey summarized in Table 1. Comments were selected to illustrate recurring themes in students’ reflections on StoryCoach feedback.

Summary of Findings

Taken together, these results demonstrate that StoryCoach functioned as an effective pedagogical partner for fostering rhetorical vividness and reflective awareness in student writing. The convergence of quantitative gains, rater judgments, and self-reported perceptions indicates that the tool improved the descriptive and narrative quality of student stories while supporting students’ sense of agency during revision.

Students’ qualitative comments also suggested an increased comfort with engaging feedback, particularly in early drafting stages, indicating that StoryCoach’s neutral, process-oriented prompts created a low-pressure space for reflection. These affective shifts parallel the measurable improvements observed in vividness and reader preference, providing a cohesive picture of how a feedback-only AI can enhance student revision practices.

The following discussion interprets these findings in relation to feedback literacy, cognitive apprenticeship, and the broader pedagogical role of generative AI in writing instruction.

Discussion: What “AI That Doesn’t Write” Teaches Us

Pedagogical Meaning

It is important to note that some degree of improvement from an initial draft to a revised draft is expected, particularly among upper-level business communication students with prior writing and revision experience. This study does not claim that all observed gains can be attributed solely to AI feedback. Rather, the findings suggest that, within a typical revision cycle, interaction with feedback-only AI was associated with measurable changes in vividness and reader-perceived quality beyond what might typically be assumed from revision alone. Future research employing control or comparison groups will be necessary to isolate the unique contribution of AI feedback relative to natural document evolution.

To be sure, the results of this study reveal more than a measurable improvement in vividness. They point to a deeper pedagogical shift: feedback-only AI can externalize cognitive processes that are often invisible to writers. Rather than generating new text, the model’s design required students to attend to their own linguistic and rhetorical choices, prompting reflection rather than replacement. In this way, the tool enacted a form of cognitive apprenticeship (Collins et al., 1989), thereby scaffolding the learner’s awareness of strategy use in real time. Students engaged with their own prose as problem-solvers rather than as passive recipients of correction.

The measurable gains in descriptive precision are consistent with theoretical work on cognitive engagement and narrative transportation (Green & Brock, 2000). Vivid writing reflects not only stylistic enhancement but also a writer’s capacity to imagine, simulate, and guide a reader’s experience, which is an inherently rhetorical act. When students revised with AI-generated feedback, their attention was directed toward audience-centered communication, aligning with a central aim of writing instruction: cultivating audience awareness in narrative communication.

Although vividness was the focal quantitative outcome, reader judgments suggest that interaction with StoryCoach was associated with broader revision strategies, including improved coherence and narrative flow, which are outcomes consistent with its design as a reflective rather than prescriptive tool.

Students’ responses also resonate with the affective dimension of feedback literacy (Carless & Boud, 2018). Several students noted that receiving feedback from the AI felt easier or lower-stakes than sharing early drafts with peers, a perception they associated with reduced rhetorical vulnerability. Others noted that the AI’s comments helped them notice vague sections or better understand why certain parts of their stories were less effective. Taken together, these comments reflect student-reported perceptions about the emotional tone of early feedback interactions rather than measured affective change. Within a feedback literacy framework, such perceptions suggest a possible affective dimension of feedback-only AI that warrants direct investigation in future research.

Human Reader Response

The impact of the intervention was not limited to numerical measures or student self-reports. When two human evaluators reviewed the paired story drafts blind, they judged the post-feedback version (Draft 2) to be stronger in 76 of the 114 evaluations (57 paired submissions × 2 evaluators), or about two-thirds of all judgments. Although not unanimous, this proportion indicates that readers often perceived meaningful differences between drafts. When they found Draft 2 more effective, they attributed its success to greater clarity, smoother narrative flow, and a more natural tone. These qualities made the writing feel more immersive, pulling the reader into the scene more fluidly (narrative transportation), and easier to follow (cognitive ease). Evaluators also noted that the revised stories often felt more authentic, like something a real student might write rather than something polished to the point of stiffness.

In a smaller number of cases, Draft 2 did not surpass the original, typically when revisions introduced new complexities or disrupted the story’s natural rhythm. Even so, the overarching pattern across reader feedback underscored that the most effective revisions balanced vivid description with clarity and authenticity, which are hallmarks of rhetorical presence.

These impressions provide external validation of the intervention’s impact. The differences between drafts were perceptible both in linguistic measures and in readers’ experience of the text. Because the AI provided feedback rather than rewriting or suggesting specific revisions, these improvements likely emerged from students’ own interpretive and stylistic decisions rather than algorithmic intervention.
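The reader tallies reported above can be checked with a few lines of arithmetic. The sketch below computes the share of evaluations favoring Draft 2 from the reported counts, and then shows how per-pair agreement between the two evaluators could be tallied separately. The function names and the toy judgments are hypothetical and are not drawn from the study's data; the study reports only the aggregate count.

```python
from collections import Counter

def preference_share(preferred: int, total: int) -> float:
    """Fraction of evaluations in which Draft 2 was judged stronger."""
    return preferred / total

def pair_agreement(judgments):
    """Count pairs where both evaluators chose the same draft.

    judgments: one (evaluator_a, evaluator_b) tuple per paired submission,
    each value being "D1" or "D2". Hypothetical input format.
    """
    return Counter("agree" if a == b else "split" for a, b in judgments)

# Reported counts: 57 paired submissions, 2 evaluators, 76 judgments for Draft 2.
total_evaluations = 57 * 2
share = preference_share(76, total_evaluations)
print(f"Draft 2 preferred in {share:.1%} of evaluations")

# Invented toy judgments, for illustration only.
toy = [("D2", "D2"), ("D2", "D1"), ("D1", "D1"), ("D2", "D2")]
print(pair_agreement(toy))
```

A preference share describes how often Draft 2 was chosen overall; it does not by itself show how often the two evaluators agreed on a given pair, which is why the second function is kept separate.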

A Framework for Educator-Built AI Tools

The effectiveness of feedback-only AI depends on the intentional design choices made by educators. This study suggests a replicable framework that positions instructors as architects rather than adopters of AI feedback systems. The process begins by defining the cognitive or rhetorical skill to be developed so that the AI’s analytical function aligns with a specific learning outcome. Educators then script diagnostic prompts that ask the system to identify patterns, tendencies, or rhetorical effects rather than to supply revised text. In doing so, instructors shape the boundaries of the AI’s role and make its reasoning transparent to learners.

Next, instructors collect data and validate learning, comparing measurable outcomes, such as reduction in error types or gains in rhetorical presence, with qualitative indicators of student awareness. Findings are then used to iterate ethically and transparently, refining prompts, clarifying feedback language, and monitoring how students interpret and apply AI comments. This cyclical process embeds ethical oversight directly into tool design and ensures that AI serves pedagogical aims rather than efficiency alone. The resulting framework offers a practical model for educators seeking to build feedback systems that foreground human judgment, preserve authorship integrity, and align with human-centered AI principles (UNESCO, 2023).
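For the data-collection step, one common way to summarize a pre/post comparison on paired drafts is a standardized mean difference. The sketch below computes a paired-samples effect size (the mean of the draft-to-draft differences divided by the standard deviation of those differences). The scores are invented for illustration, and the function name is hypothetical; the scoring procedure and effect-size formula used in this study are not specified in this section, so this should not be read as the author's method.

```python
from statistics import mean, stdev

def paired_effect_size(draft1_scores, draft2_scores):
    """Paired-samples effect size: mean difference / SD of differences.

    Assumes each position in the two lists refers to the same student.
    """
    if len(draft1_scores) != len(draft2_scores):
        raise ValueError("Each Draft 1 score needs a matching Draft 2 score")
    diffs = [after - before for before, after in zip(draft1_scores, draft2_scores)]
    return mean(diffs) / stdev(diffs)  # stdev uses the sample (n - 1) formula

# Invented vividness scores for four students, for illustration only.
draft1 = [2, 3, 4, 3]
draft2 = [3, 5, 5, 5]
d = paired_effect_size(draft1, draft2)
print(f"Paired effect size: {d:.2f}")
```

Other variants of Cohen's d standardize by the pooled or average standard deviation of the two drafts rather than by the standard deviation of the differences, and the variants can yield noticeably different values, so reports should state which one was used.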

Implications for Writing Pedagogy

Collectively, the quantitative gains, reader evaluations, and student reflections invite a rethinking of AI’s role in writing pedagogy. While generative AI often raises concerns about authorship and authenticity, a feedback-only model reframes the technology as a reflective partner rather than a co-writer. By restricting the model’s generative capacities, instructors can direct student attention to judgment rather than output: to why a sentence does not work rather than how to produce more of the same. Such an approach aligns with research on metacognitive regulation in writing (Zimmerman & Bandura, 1994) and on the value of prompting students to evaluate feedback before acting on it (Nicol, 2021).

In this light, the pedagogical potential of AI lies in simulating how a human reader notices, reflects on, and responds to writing. When used within a transparent, iterative framework, AI can amplify both awareness and agency, which are two of the hallmarks of effective learning.

Limitations and Future Work

As with all classroom-based research, these findings are bounded by context. The present study was conducted within a single business communication elective at one institution with a somewhat homogeneous group of postsecondary learners. Replicating the intervention across multiple courses, instructional settings, and learner populations would help determine the generalizability of these results. Multi-site implementation could also clarify how institutional culture, instructional modality, or disciplinary conventions shape the integration of feedback-only AI.

A second limitation concerns the study’s time frame. Although the immediate post-intervention gains in vividness and rhetorical awareness were substantial, the persistence of those effects remains unknown. Future work should trace whether these skills transfer to new genres and contexts, particularly to workplace writing, where communicative demands are often time-pressured and audience expectations diffuse. Longitudinal follow-up studies could reveal whether AI-facilitated reflection produces durable changes in writers’ habits of mind.

Finally, while this project examined feedback-only AI on its own, an important next step would be to compare different feedback ecologies: AI-only, peer-only, and hybrid formats that blend algorithmic noticing with human response. Such comparisons would illuminate relative efficacy and the complementary strengths of machine and human feedback: speed and consistency on one hand, empathy and nuance on the other. Mapping these intersections can help educators design feedback systems that preserve the best of both worlds: the scalability of technology and the interpretive depth of human mentorship. Such investigations would also clarify how feedback-only AI can complement, rather than compete with, human instruction in developing advanced writing competencies.

Ethical and Practical Implications

Framing AI as a reflective partner rather than a co-writer preserves the ethical core of writing instruction: authorship integrity. The feedback-only model described here keeps students as the agents of revision while making the evaluative process transparent and replicable. In doing so, this design approach operationalizes key principles of human-centered AI (transparency, accountability, and learner agency) as outlined in UNESCO’s Guidance for Generative AI in Education and Research (2023). These principles matter because UNESCO’s framework is currently one of the most authoritative global standards for ethical AI integration in education, emphasizing precisely the values that writing instruction must protect: ownership of ideas, academic integrity, and meaningful human oversight.

Beyond ethics, the model also offers practical transferability. The same feedback-only design framework can be adapted to other rhetorical skills central to business communication, including clarity, concision, organization, style, tone, and even grammar. By combining ethical oversight with pedagogical design, feedback-only AI demonstrates that technological innovation in writing instruction need not compromise educational values. Instead, it can enhance them by modeling how educators might integrate AI responsibly, equitably, and with attention to the communicative judgment that defines professional expertise.

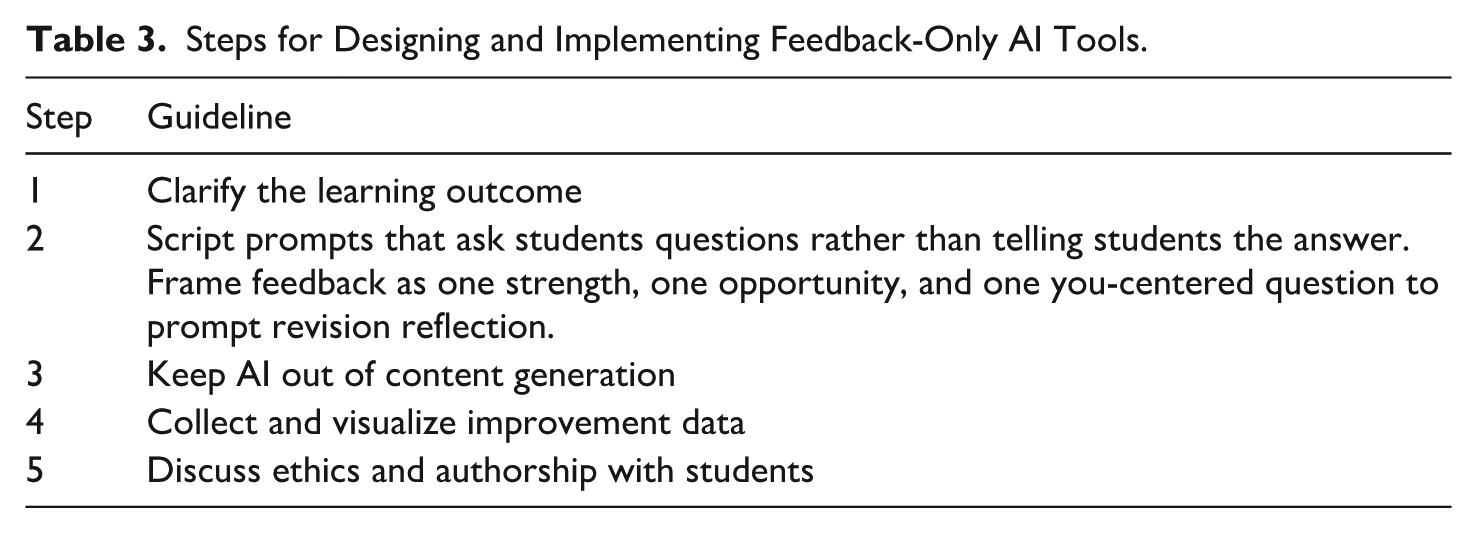

Practical Application: Implementing Feedback-Only AI in the Classroom

The principles of this study can be translated into a replicable classroom practice through a concise, five-step design checklist (see Table 3). The checklist positions instructors as intentional designers of AI feedback systems rather than as passive users of commercial tools. Each step emphasizes reflection, transparency, and alignment with clearly defined learning outcomes.

Table 3. Steps for Designing and Implementing Feedback-Only AI Tools.

By articulating outcomes before integrating AI, educators ensure that feedback targets specific rhetorical or linguistic skills. Prompts that invite inquiry rather than correction maintain student agency in revision, as do guardrails that prevent AI from producing text on students’ behalf. The data collection step allows instructors to monitor progress empirically, linking qualitative reflection to measurable outcomes. Finally, explicit discussion of ethics and authorship reminds students that the goal of AI feedback is awareness, not automation. Together, these practices create a transparent, replicable model for human-centered AI pedagogy that scales across communication courses without compromising the integrity of the writing and thinking processes.
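The prompt and guardrail steps can be made concrete with a small sketch. It assumes the three-part feedback structure described in this study (one strength, one opportunity, one reflective question) and adds a simple check that flags responses that appear to supply replacement text. The guardrail wording, the cue phrases, and all names below are hypothetical; the study's actual system prompt is not reproduced here, and a keyword check of this kind is only a rough first filter rather than a reliable detector.

```python
from dataclasses import dataclass

# Hypothetical guardrail wording, not the system prompt used in the study.
GUARDRAIL = (
    "Do not write, rewrite, or edit any part of the student's story. "
    "Respond with exactly one strength, one opportunity, "
    "and one reflective question."
)

# Hypothetical phrases suggesting the tool has started rewriting.
REWRITE_CUES = ("here is a revised", "rewritten version", "try this sentence:")

@dataclass
class Feedback:
    strength: str
    opportunity: str
    question: str

def build_system_prompt(target_skill: str) -> str:
    """Tie the tool to one learning outcome and attach the guardrail."""
    return f"You are a writing coach focused on {target_skill}. {GUARDRAIL}"

def check_feedback(feedback: Feedback) -> list[str]:
    """Return a list of problems; an empty list means the checks passed."""
    problems = []
    for name in ("strength", "opportunity", "question"):
        text = getattr(feedback, name).strip()
        if not text:
            problems.append(f"{name} is empty")
        if any(cue in text.lower() for cue in REWRITE_CUES):
            problems.append(f"{name} appears to supply replacement text")
    if not feedback.question.strip().endswith("?"):
        problems.append("question is not phrased as a question")
    return problems

ok = Feedback(
    strength="The opening scene names a concrete setting.",
    opportunity="The middle section summarizes the conflict instead of showing it.",
    question="What did the room sound like when the client said no?",
)
print(build_system_prompt("vividness"))
print(check_feedback(ok))
```

Instructors adapting this idea would likely log flagged responses and review them by hand, since the point of the guardrail is to keep revision decisions with the student rather than to automate enforcement.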

Conclusion: Toward a Rhetorical Pedagogy of Human–AI Collaboration

This study demonstrates that effective AI-supported writing instruction begins by defining clear boundaries around what AI may and may not do. When artificial intelligence is prevented from generating text on behalf of students, it redirects attention toward the writer’s own decision-making processes during revision. The feedback-only model described here foregrounds reflection, rhetorical judgment, and authorship integrity, which are core capacities in business and professional communication that are increasingly strained in an era of automated text production.

When educators design AI feedback tools intentionally, they can scale personalized learning without diluting the learner’s voice. The approach illustrated here offers a blueprint for pairing human expertise with machine precision: educators define the skill, frame the prompts, and interpret the data, while students engage actively with feedback that strengthens their rhetorical awareness. Rather than automating writing instruction, this model repositions it as a site of human-centered reasoning, where technology supports discernment rather than substituting for it.

Looking forward, integrating feedback-only AI invites a broader rethinking of the relationship between rhetoric and technology. As AI becomes an enduring feature of professional communication, the field would benefit from a dedicated subdiscipline of rhetorical AI pedagogy that is grounded in authorship ethics, learning psychology, and the evolving literacies of digital discourse. Such a perspective ensures that AI remains a partner in reflection rather than a source of text, and that business communication continues to develop writers who can think critically, adapt strategically, and lead with language.

Funding

The author received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.