Abstract

Businesses increasingly use Artificial Intelligence (AI) Applicant Tracking Systems (ATS) to screen job applicants’ résumés. A summative content analysis auditing how 18 business communication, business English, and technical communication textbooks cover résumés and AI ATS found a lack of consensus. The study identified the challenge of offering specific advice on emerging AI technology in textbooks. The article recommends writing and teaching practice changes when discussing emerging technology and creating or using textbook content.

Instructors may increasingly face students who ask, “Is anyone even seeing my résumé?” With advances in artificial intelligence (AI) applicant tracking systems (ATS), the answer for students applying to major corporations is potentially “no.” AI ATS or AI recruiting can use algorithmic decision making to analyze and reduce a large virtual “stack” of résumés down to a set for screening interviews, which AI can perform and assess. AI can narrow the screened candidate pool to a manageable final group of applicants (Lacroux & Martin-Lacroux, 2022). Higher education institutions must prepare students for an application process that involves AI tools. Textbooks have included references to résumé scanning technology and scannable résumés. However, the ubiquity of AI ATS places new demands on textbook authors, supplemental educational resources, and job search guides to include more up-to-date information about the role of AI in the hiring process.

This study presents the results of an audit of business communication, business English, and technical communication textbooks’ inclusion, discussion, and advice concerning résumé writing and AI ATS. Finally, the study provides recommendations for textbook authors and faculty as they advise students on changing technology and the job market.

Literature Review

An ATS is software that helps recruiters sort and track candidates. Unlike résumé scanners, AI ATS technology uses algorithms to aid decision making by determining skills and experience and ranking applicants (Lacroux & Martin-Lacroux, 2022). Goldman Sachs has used this technology since 2016 (Picker, 2016). As of 2019, 98.8% of Fortune 500 companies used AI screening tools (Beltran, 2022; Hu, 2019). Based on a market share analysis of one AI ATS product, both large and small businesses in all industries use these tools (Enlyft, n.d.). Also, companies use AI for end-to-end recruitment (Barthell, 2021; Buijsrogge, 2021). For example, AI ATS company HireVue (n.d.) engages, screens, assesses, interviews, and provides hiring support and decision making. Companies even use AI to forecast salaries based on résumés (Gong et al., 2022). Further, the trend is not limited to the United States (Biswas, 2021). Globally, the market for ATS is estimated to increase from $2.7 billion in 2022 to $4.1 billion in 2030 (Gale Business, 2023). Thus, all job seekers must have AI ATS-compatible résumés to help them through the screening process.

Résumé optimization for online submission is not a new topic, but résumés ready for AI ATS differ from scannable or online résumés. In the 1970s, companies like IBM and government agencies like the US Pentagon used computers to sort and screen applicant résumés (O’Neil, 2016). A person or computer could read scannable résumés (Krause, 1997). By the late 1990s, far more companies began using computers to scan candidate résumés for keywords (Quible, 1998). Scannable résumés became popular in the 1990s and were included in business communication textbooks in the early 2000s (Schullery et al., 2009). Scannable résumés, sometimes called electronic résumés, were designed for the physical scanning of a paper résumé into a computer system or for electronic screening that focused on keywords, essentially counting occurrences to determine matches; with scannable résumés, software sorted scanned résumés by keyword inclusion (Roever & McGaughey, 1997). Candidates were encouraged to include nouns and reduce the use of adjectives and adverbs (Sorohan, 1994). The trends shifted to include online résumés or eportfolios with résumés. Unlike scanners, where software might process or read a résumé first, human viewers read online résumés, which included electronic files uploaded to job boards (Quible, 1998). Online résumés, set up like a website or eportfolio, might have multiple pages placed online using programming skills (Krause, 1997). For example, a candidate might prepare a blog or website with pages for his or her résumé and work samples to demonstrate technological ability.

In the field of business communication education, the discussion of résumé changes and their influence on résumé writing and instruction increased in the mid-1990s and shifted dramatically in the early 2000s. In 1997, Business Communication Quarterly included articles on preparing scannable résumés and online résumés. However, more than 10 years later, only 3% of employers preferred a scannable résumé (Schullery et al., 2009). Unlike résumé scanners, AI ATS can provide an assessment and can perform everything from screening interviews to in-person interview scheduling and predicting candidate performance and success. AI recruiting dramatically improves hiring efficiency, including the time needed to review résumés and to hire (Black & van Esch, 2020). Further, AI can outperform humans in narrowing the candidate pool (Kuncel et al., 2014). Candidates may no longer need a scannable résumé but must instead be prepared for AI-based recruitment and screening.

Since the late 2010s, business communicators and researchers have been increasingly interested in AI. Advancements in AI have influenced multiple aspects of communication, including students’ internal and external communication. The 2019 Association of Business Communication Annual Conference held a special session for “AI and Team Communication.” From that origination, researchers published a significant article examining the basics of AI and its history (Getchell et al., 2022). The authors identified that AI influences “team communication and meeting tools, text-summarization tools, augmented writing tools, oral communication evaluation, and conversational agents” (Getchell et al., 2022, p. 10). Within that framework, AI ATS can fall under “oral communication evaluation,” but the systems combine multiple tools referenced in that article. Getchell et al. (2022) argued the importance of instructors using frameworks and developing students’ ethical understanding of AI. Several other AI applications exist in the classroom and on many college campuses. Some schools use chatbots for student interaction. As another example, Naidoo and Dulek (2022) examined text summarization’s moderate but limited effectiveness. However, students reported that their business schools were not effectively preparing them for AI in the workplace (Abdelwahab et al., 2022). Little has been studied regarding student perceptions of AI ATS.

Business school students applying to corporations will likely encounter AI ATS. A survey of over 2,250 employers in the United States, United Kingdom, and Germany found that over 90% of employers surveyed use AI ATS to screen and filter candidates (Fuller et al., 2021). When using an AI ATS, recruiters likely only look at top-scoring candidates, but that depends upon the organization. Candidates must learn how they interact with AI during this process, especially as chatbots advance. AI chatbots can provide routine communications to improve engagement and the hiring experience (Anitha & Shanthi, 2021). The human recruiter can see a candidate’s materials submitted to the AI ATS; therefore, the applicant must be sure the submitted materials work for both computer and human readers.

Given these changes, business and technical communication educators must prepare students for a new process of job applications. However, this teaching shift presents challenges. Moshiri and Cardon (2020) noted that business communication instructors are less likely to cover emerging technologies in their classes. Further, the authors found that less technology-confident instructors were less likely to cover newer technology (e.g., professional networking sites and online meetings/video conferencing) than more technology-confident instructors. Moshiri and Cardon (2020) did not explicitly address AI ATS in their study. Without access to peer-reviewed information or content from sources like a career center, students may turn to popular sources for information on emerging technology. For example, on TikTok, categories like CareerTok have multiple creators, including professionals who work in recruiting, posting advice about how to apply for jobs; as of September 2023, CareerTok and CareerAdvice videos had three billion views (DiBenedetto, 2022).

Given publication cycles for traditional textbooks, the inclusion of references to technological changes risks the presence of quickly outdated concepts or websites. For example, textbook authors must consider the challenge of how to define and select terms for AI ATS. In the 1990s, researchers sometimes talked about new developments in scanning as “computer-readable” résumés (Kennedy & Morrow, 1994, para. 14), and textbook authors used the term “résumé scanning” regularly. In the 2010s and 2020s, the technology was called résumé or CV parsing, extraction, recognition, or matching, among other terms (Rojas-Galeano et al., 2022). Another term related to this is algorithmic decision making (Lacroux & Martin-Lacroux, 2022), encompassing AI ATS for recruiting and résumé and interviewing analyses. In algorithmic decision making, AI can make recommendations based on the extracted information from résumés and interviews using predictions about success and position fit, depending on the programming (Hunkenschroer & Luetge, 2022).

Further complicating instruction about AI ATS is rapid technological change and the need for more details about how screening systems work. When extracting information, different systems might pull content into blocks or look for information like name, job title, education, and skills (Palshikar et al., 2023). This information is straightforward and teachable. However, the companies running the systems do not explain how their AI ATS rank résumés or candidates. For example, Oracle Human Capital Management holds a significant market share for AI ATS. Its product, Taleo, is used by organizations ranging from companies like Starbucks to schools like Vanderbilt (Oracle, n.d.-a). Recruitment, including résumé review, is part of Oracle Recruiting’s Talent Management functions. The website explained that the Oracle product can help users “move fast and minimize hiring bias by using AI to find the best prospects and applicants for open positions” (Oracle, n.d.-b, Candidate Engagement section, para. 1). However, the website’s public-facing documentation did not inform applicants about résumé format or best practices. Instead, the public-facing documentation was directed toward employer/corporate purchasers of the recruiting products. The Oracle website displayed the top AI-assessed candidates for a company in a clear view (Oracle, n.d.-b). These systems organize and store identifiable personal information, including candidate names and contact information. Some have called for companies to make candidate data use more transparent to improve equity and privacy understanding (Albassam, 2023). In summary, students must be prepared to use opaque systems.

AI ATS can be inflexible, eliminating candidates based on gaps in work history or recommending a candidate with a college degree as having skills or a positive work ethic based on the degree (Fuller et al., 2021). On perceptions of AI ATS as inflexible, Fuller et al. (2021) found that “a large majority (88%) of employers agree, . . . qualified high skills candidates are vetted out of the process because they do not match the exact criteria. . . . That number rose to 94% in the case of middle-skills workers” (p. 3). However, despite those concerns, AI ATS maximizes efficiencies and has grown in popularity since the publication of that 2021 study. As mentioned, online recruitment with an AI ATS saves companies time and money, even if the systems are sometimes unreliable (Rathee & Bhuntel, 2017).

AI ATS reflect the biases of their creators (Yarger et al., 2020). Some early systems to analyze résumés demonstrated these biases, rejecting candidates based on race, gender, and neighborhood in the 1970s and 1980s (O’Neil, 2016). Yarger et al. (2020) explained, “Historical data used to train machine learning models may reflect longstanding societal biases, which may unintentionally harm marginalized populations” (p. 390). These biases can arise in two ways: first, through the algorithm’s design, depending on who programmed it and how, and second, through the use of the results after the algorithm recommends specific candidates. For example, Yarger et al. (2020) referenced a platform that reduces bias by deemphasizing personally identifiable information. For this study, the researchers reviewed two screenshots of candidate results pages from AI ATS companies. Both results pages led with names, which would potentially reveal gender or hint at nationality, and one showed that when clicking on a name, the user would see a photograph that might reveal age and race.

Fortunately, the résumé review systems appear to share some established practices, including assessing formatting (on which they differ slightly) and looking for soft and technical skills. Based on the researchers’ review of paid résumé review websites and websites that review student résumés as a university tool, some of those best practices mirror advice for scannable résumés. (Note: The authors of this article recognize this as an area for future research and analysis.) Some universities have contracted with AI résumé training companies like Quinncia.io and VMock. Simulation companies like Quinncia.io work with school partners and provide training materials based on their work with AI ATS tools like HireVue, known for its video interviewing capabilities. Quinncia.io lists soft and hard skills keywords and advises users to improve keyword use. Similarly, paid services to review résumés like Rezi, ResumeWorded, JobScan, and Zety emphasize keyword use or soft and hard skills. Textbook authors can and sometimes do incorporate these principles into textbooks, classroom materials, and activities.

In summary, major companies use AI recruiting; however, much is unknown about how these systems work, and business communication researchers have many opportunities to expand on what has been done. AI communication research can be divided into functional, relational, and metaphysical research areas (Guzman & Lewis, 2020). This study takes a functional approach, which views the “dimensions through which people make sense of these devices and applications as communicators” (Guzman & Lewis, 2020, p. 70). This study is less concerned with the technology or programming. Instead, it considers how textbook authors and faculty understand and explain AI ATS and the implications for professional development, communication, and business English pedagogy, specifically using textbooks as a starting point. The researchers are interested in how authors and faculty make sense of AI recruiting.

Given the need for student preparation to use AI recruiting systems, this study explores the approach to AI ATS and the content about résumé optimization in business communication, technical communication, and business English textbooks. This study also examines how current textbooks already support the teaching of résumés ready for AI ATS:

Research Question 1: How do business communication, technical communication, and business English textbooks cover résumés?

Research Question 2: How do business communication, technical communication, and business English textbooks cover AI ATS concerning résumés?

Method

Data Collection

The study’s data collection consisted of selecting business communication, business English, and technical communication textbooks from multiple publishers (e.g., Cengage, Kendall Hunt, Macmillan, McGraw Hill, Oxford, Pearson, Routledge, and Sage). The inclusion criteria were that the textbook (1) be a recent publication (published since 2018) to account for the growth in AI ATS usage in the past 5 years; (2) include résumé writing or job searching in its table of contents; and (3) be generally well-known or assigned (not self-published or school-specific). Initially, the researchers started with 23 textbooks that met the criteria. Researchers removed five books from the analysis, either because the most recent edition was not accessible and the second most recent edition was published before 2018, or because the textbook had a different title from another in the list but had the same authors and text. In total, the researchers evaluated 18 textbooks (see Appendix A for a complete list) from the areas of business communication (10), business English (two), and technical communication (six) published since 2018. The researchers intentionally drew most of their sample from business communication because of the connections between business communication courses and job document preparation.

Data Analysis: Summative Content Analysis

The researchers used summative content analysis to examine how textbooks discuss AI, ATS, and résumés. A summative content analysis approach explores the contextual use of particular words and content in a text (Hsieh & Shannon, 2005). Scholars use summative content analyses to analyze textbooks by assessing content focus and areas of improvement for future publications (e.g., Kelly & Michalek, 2019). This method contains two processes: (1) selecting and quantifying specific words or content in the area of interest; and (2) exploring the contextual meaning of those words and the extent of subject area coverage in the texts (Hsieh & Shannon, 2005). This method differs from other versions of content analyses (e.g., conventional and directed; see Hsieh & Shannon, 2005, for a review of the approaches). The summative method starts with keywords that researchers initially identify from relevant literature reviews on the topic and also emerge in the data analysis process. In contrast, conventional qualitative content analyses define codes during data analysis through observation, and directed content analyses define codes from theory and relevant research before and during analysis.

The researchers used summative content analysis, traditionally used in textbook analyses (Hsieh & Shannon, 2005), to quantify the content, and they gained a deeper understanding of how textbook authors discuss items through contextual analysis.

Researchers evaluated material coverage with specific textbook content keywords (Hsieh & Shannon, 2005). Researchers first reviewed the extent to which résumés were included in textbooks’ tables of contents and how the content was covered (e.g., Is it listed in the table of contents? Is it in a separate chapter, or is it under a subsection of a chapter?). This process allowed researchers to understand the coverage of the general topic of résumés. Next, researchers quantified the use of keywords related to résumés, AI, and ATS in the textbook indexes. Four books did not contain indexes. In those cases, researchers used an electronic search function to review the texts’ ebook versions. Researchers developed a list of keywords and content matter by reading popular business news articles and peer-reviewed research on résumés (e.g., DiMarco & Fasos, 2020; Doan, 2021). A total of five keywords were included in this search (i.e., résumé, computer-read résumé, résumé scanning, AI or AI software related to résumés, and applicant tracking systems).

To ensure potential keywords were sufficiently covered, coders also read the complete indexes of the first five books to confirm they did not miss other keywords that could fit the subject matter’s scope. The researchers independently coded all textbooks in batches of four to five, stopping after each batch to discuss coding and reach a consensus on the quantitative portion of the findings.
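The index-keyword tally described above can be illustrated with a minimal Python sketch. It is not the coders' actual procedure: the regular expressions, the function names (`normalize`, `keywords_in_index`, `tally`), and the sample index excerpts are all hypothetical, and the pattern for "AI" is a simplification of "AI or AI software related to résumés," which in the study required human judgment about context.

```python
import re
import unicodedata

# The five search terms named in the study. The regular expressions are
# illustrative assumptions, not the coders' actual matching rules.
KEYWORD_PATTERNS = {
    "résumé": r"\bresumes?\b",
    "computer-read résumé": r"\bcomputer[- ]read(?:able)?\b",
    "résumé scanning": r"\b(?:resume scanning|scannable resumes?)\b",
    "AI": r"\b(?:ai|artificial intelligence)\b",
    "ATS": r"\b(?:ats|applicant tracking systems?)\b",
}


def normalize(text):
    """Lowercase the text and strip accents so 'Résumé' matches 'resume'."""
    decomposed = unicodedata.normalize("NFKD", text)
    return "".join(ch for ch in decomposed if not unicodedata.combining(ch)).lower()


def keywords_in_index(index_text):
    """Return the set of study keywords that appear at least once in one index."""
    normalized = normalize(index_text)
    return {kw for kw, pattern in KEYWORD_PATTERNS.items() if re.search(pattern, normalized)}


def tally(indexes):
    """Map each keyword to (number of books listing it, percentage of books)."""
    found = [keywords_in_index(text) for text in indexes.values()]
    total = len(found)
    result = {}
    for kw in KEYWORD_PATTERNS:
        count = sum(kw in book_keywords for book_keywords in found)
        result[kw] = (count, round(100 * count / total, 1))
    return result


# Hypothetical index excerpts, invented for illustration only.
sample_indexes = {
    "book_a": "Résumés, 301-315; applicant tracking systems, 310; interviews, 320",
    "book_b": "Resume, 210; scannable résumés, 214; cover letters, 220",
    "book_c": "Interviews, 400; networking, 390",
}

for keyword, (count, pct) in tally(sample_indexes).items():
    print(f"{keyword}: {count} of {len(sample_indexes)} books ({pct}%)")
```

A presence-or-absence tally of this kind covers only the first, quantitative step of a summative content analysis; the contextual reading of how each term is used still has to be done by the researchers.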

After analyzing the extent of concept coverage, researchers examined the contextual meaning and patterns of the résumé content (Hsieh & Shannon, 2005). In that process, each researcher made separate, anonymized notes on each textbook and later combined the notes. In the initial notes review, one researcher searched for themes and concepts without referencing textbook authors, fields, or publication dates to gather a general understanding of the data. The second researcher did so regarding authors and fields. The review then proceeded with both authors by looking at the fields, and the final review considered all information. Each researcher’s detailed notes were summarized for the categories of the research questions. Additionally, the researchers identified strengths and weaknesses of coverage in the different content categories.

The researchers categorized the textbook advice based on their use of an AI ATS simulator built on a real-world AI ATS system. The researchers were trained on the systems and trained the AI tool in 2019, and they have used the systems with more than 13,000 students at their university as of the time of publication. For this analysis, the researchers focused on what has generally been found to be accurate based on user experience, what coincides with using an AI ATS system, and what has been published, as seen in the literature review of this article. An example of a category not included in the analysis is how education is listed since that may differ across AI ATS systems.

Results

This section first presents the analysis of how textbook authors cover résumés and then explains how authors specifically approach AI ATS and résumés. In the qualitative discussion of the textbooks, the researchers deliberately do not cite or name the textbooks or authors and try to make the textbooks challenging to identify. The study intends not to “rank” or “stack” textbooks but to assess the coverage of résumés and AI ATS broadly. The study authors acknowledge the strengths of all books reviewed. Further, the study authors recognize the difficulty for authors in incorporating emerging technology, specifically AI-based technology, and related advice in a traditionally published textbook.

Research Question 1: How Textbooks Cover Résumés

The results of the first research question provide a base understanding of how the sample generally covers résumés. Most textbooks included résumés. Sixteen (88.9%) textbooks listed “résumé” in their index. Of the two textbooks that did not (one business communication and one business English book), the business communication book discussed résumés in the text and had a dedicated subsection. The business English book did not cover résumés; its employment chapter was interview-centric.

Depth of résumé coverage

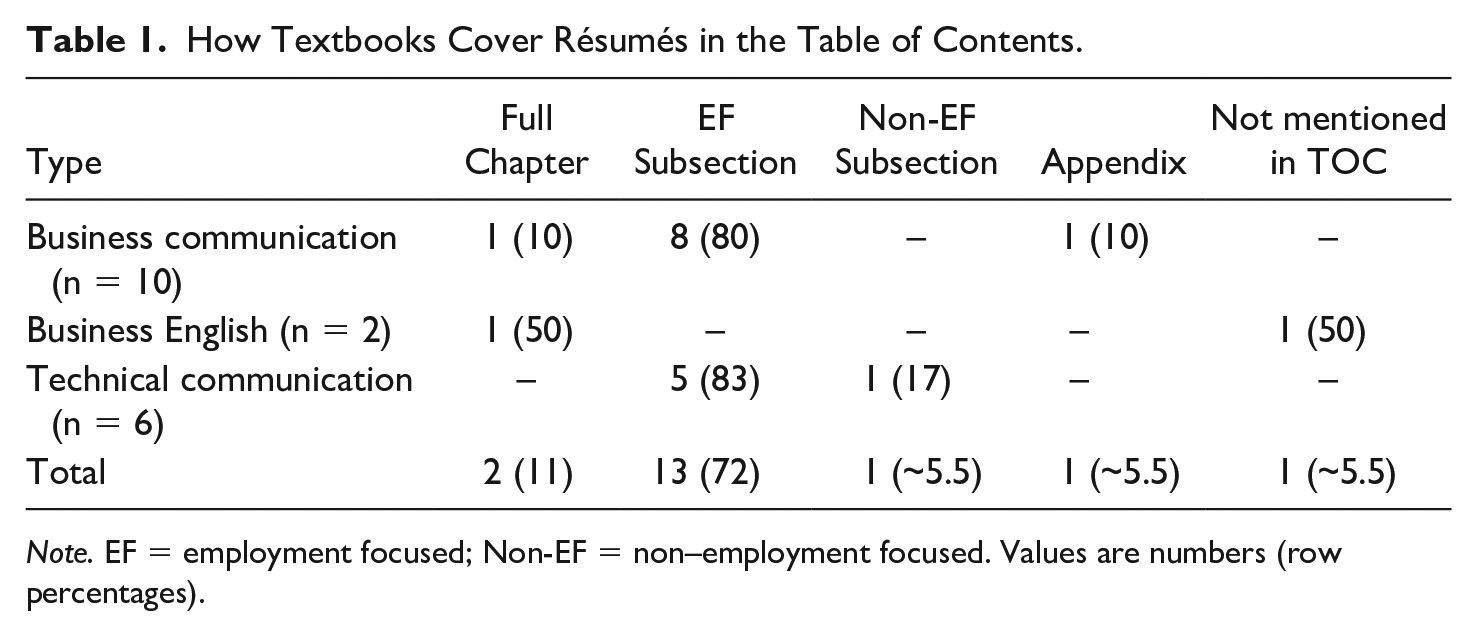

The study considered the depth of coverage in multiple ways, given different textbook audiences, lengths, and approaches. Business communication textbooks primarily featured résumés as part of an employment-focused subsection (8). Some business communication textbooks dedicated a chapter (1) or appendix (1) to the topic. Similarly, technical communication texts exclusively placed résumés in subsections: employment-focused (5) or non–employment-focused (1). One business English text had one entire chapter on résumés, while the other text in that field did not mention résumés in the table of contents. The most frequently seen way to cover résumés was through subsections in employment-focused chapters that also talked about adjacent topics such as cover letters, online profiles, interviewing, or a combination. Following that, some textbooks dedicated an entire chapter to résumés, while others placed résumés in other subsections or appendices.

In assessing the résumé content in context, the researchers noted how résumés were positioned in the textbooks and noted differing levels of résumé emphasis. Additionally, textbooks lacked consensus on writing style, format, and template use. Textbooks typically presented advice content as factual knowledge or established practice, but the advice content differed from book to book.

Positioning résumés

Overall, as seen in Table 1, textbook authors from all three areas of study talked generally about how to do a job search with résumé writing as part of the job search process. Some put networking before résumés, but not all did so, and most included interviewing. While résumés appeared as a document type, they were not typically a primary document type. Instead, authors included résumés with other job materials content or a job materials chapter.

Table 1. How Textbooks Cover Résumés in the Table of Contents.

Note. EF = employment focused; Non-EF = non–employment focused. Values are numbers (row percentages).

The chapter and section titles reflected how authors typically placed résumés within a job search context or in a chapter alongside other job search documents. The researchers found that this supported the idea that the job search process has many components. Full-chapter titles included “Building Careers and Writing résumés,” “Employment Communications,” “Applying for a Job,” “Résumés, Cover Letters, & Interviews,” “Searching for Jobs and Writing Résumés,” “The Job Search, Résumés, and Cover Messages,” and “Communicating Your Professional Brand: Social Media, Résumés, Cover Letters, and Interviews.” Some books with broad employment chapters had subsections like “Writing résumés” or “How to write a résumé.” Frequently, authors placed résumés alongside networking or professional development topics. No book reviewed for this study had a chapter title dedicated only to résumés. Still, multiple books had résumé-focused chapters or chapters that used the genre of job application documents as the focus of an entire chapter.

Résumé Writing Style

Most books had some consistency in writing style, such as using bullet points and beginning those bulleted lines with verbs. However, whether to use a verbal noun, past-tense verb, or present-tense verb differed. Up-to-date or mostly correct advice included detailed information on constructing bullet point phrasing. Of note, a few books recommended using strong action verbs and were more specific by providing sample lists of action verbs, making the advice more actionable and transparent for students. Further, some books detailed how to correctly phrase bullet statements (e.g., use action verbs; quantify information) and provided correct and incorrect phrasing examples. The more straightforward, detailed, and example-based advice provided an excellent starting point for students unfamiliar with résumé writing.

Conversely, some books included vague advice that, while correct, was not actionable enough. For example, some authors did not explain how to write content for a job description bullet point but stated that this phrasing was important. Out-of-date or typically considered incorrect advice included advising readers to write in the first person and in complete sentences or to include numerous details even if it meant the résumé was long.

Résumé types and formatting

Authors often divided résumés into the categories of chronological, functional, and combination, with a side box or secondary section on a scannable or AI ATS résumé. Some books included four categories: chronological, functional, combination, and scannable. AI ATS–compatible résumés were never their own category. While many books aligned in how they presented résumé types, they differed in their advice on how to format résumés. For instance, on résumé length, some advised keeping résumés to a single page, while others encouraged writers to write more than a page. One book suggested using columns, and others did not advise using columns but had sample résumés with columns. Another book used tables in the sample résumé.

Advice on template use varied dramatically. While one book discouraged the use of templates, another book argued for them. While this could be due to regional differences in job markets, this is a broader point of disagreement. Anecdotally, the authors have experienced this argument between the different career centers on their campus; one advises against templates, while the other requires using them despite targeting the same employers. One book explained that templates are too complicated, especially for updating; however, the difficulty of modifying a template depends on the suggested template. The book that said templates were too complicated used the example of a template with varied fonts, graphics, and columns. Templates and samples in the books were sometimes inconsistent with the advice in the textbook. For example, one book advised on how to prepare a scannable résumé, but the sample scannable résumé did not follow the authors’ advice.

Research Question 2: How Textbooks Cover Résumés and AI ATS

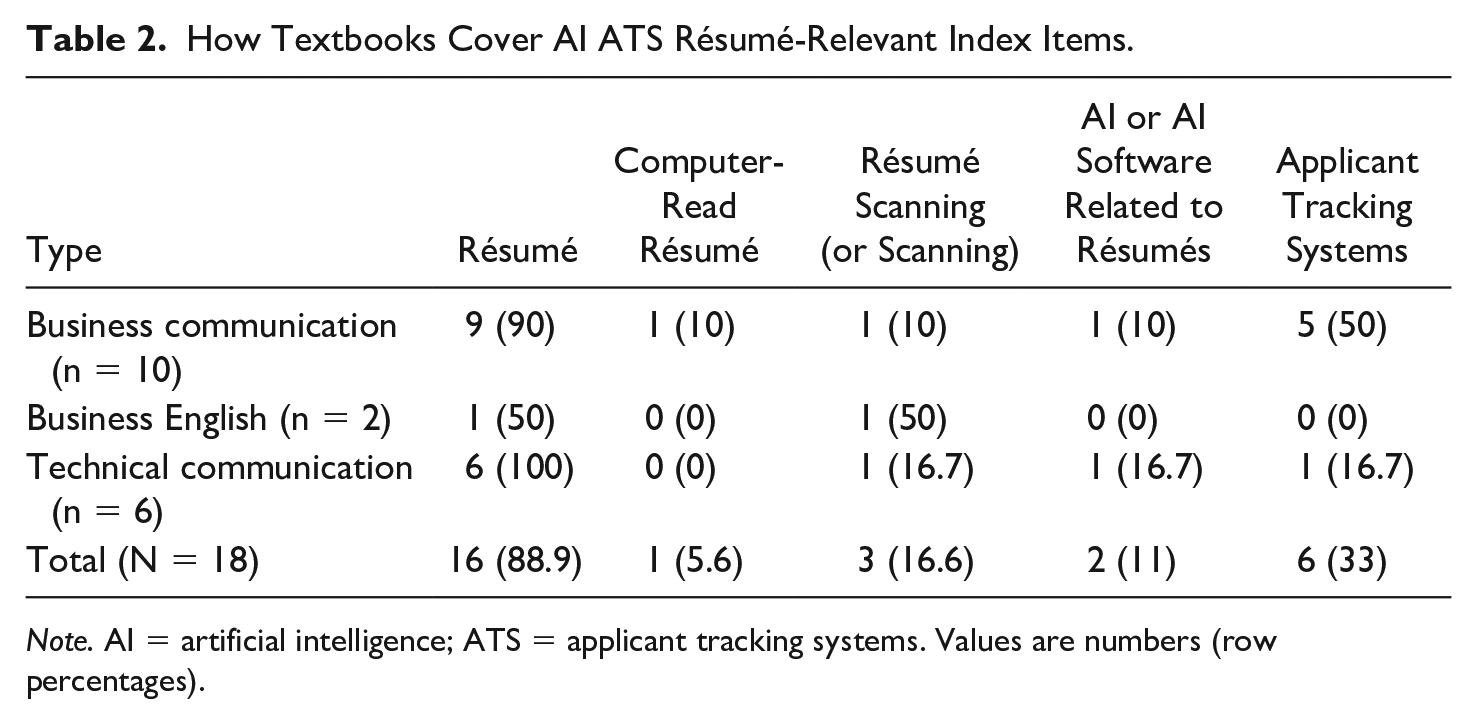

While some textbooks incorporated AI ATS and résumé sorting in their résumé discussion, many did not. As noted in the review of AI ATS résumé-relevant index keywords in Table 2, most textbooks’ indexes do not list AI ATS–related terminology. For instance, 16 (88.9%) books list “résumé” in the indexes; however, AI ATS terms are considerably less prevalent (e.g., the most mentioned term, “ATS,” is covered in only 33% of books). Notably, the coders found no detectable increase in AI ATS–related mentions related to the publication year. One might expect that as AI ATS–based hiring increases in popularity, more recently updated textbooks would include it more in their content. However, the index listings do not validate that assumption.

Table 2. How Textbooks Cover AI ATS Résumé-Relevant Index Items.

Note. AI = artificial intelligence; ATS = applicant tracking systems. Values are numbers (row percentages).

While textbooks generally cover résumés and scanning across types, business English textbooks did not list AI or ATS concerning résumés in their indexes. Interestingly, AI ATS appeared in over half of business communication indexes and only in two technical communication indexes. After analyzing indexes, the authors reviewed the content of textbooks’ résumé sections to uncover more detail on AI ATS usage. While only 2 (11%) texts listed AI and 6 (33%) listed ATS in their indexes, in the textbooks’ contents, 12 (67%) books addressed AI ATS in their résumé sections, while 6 (33%) did not. Again, the publication year did not influence the AI ATS coverage. Additionally, those 12 books were either from technical or business communication fields. One business English book referenced scannable résumés and résumé screening but did not mention AI or ATS.

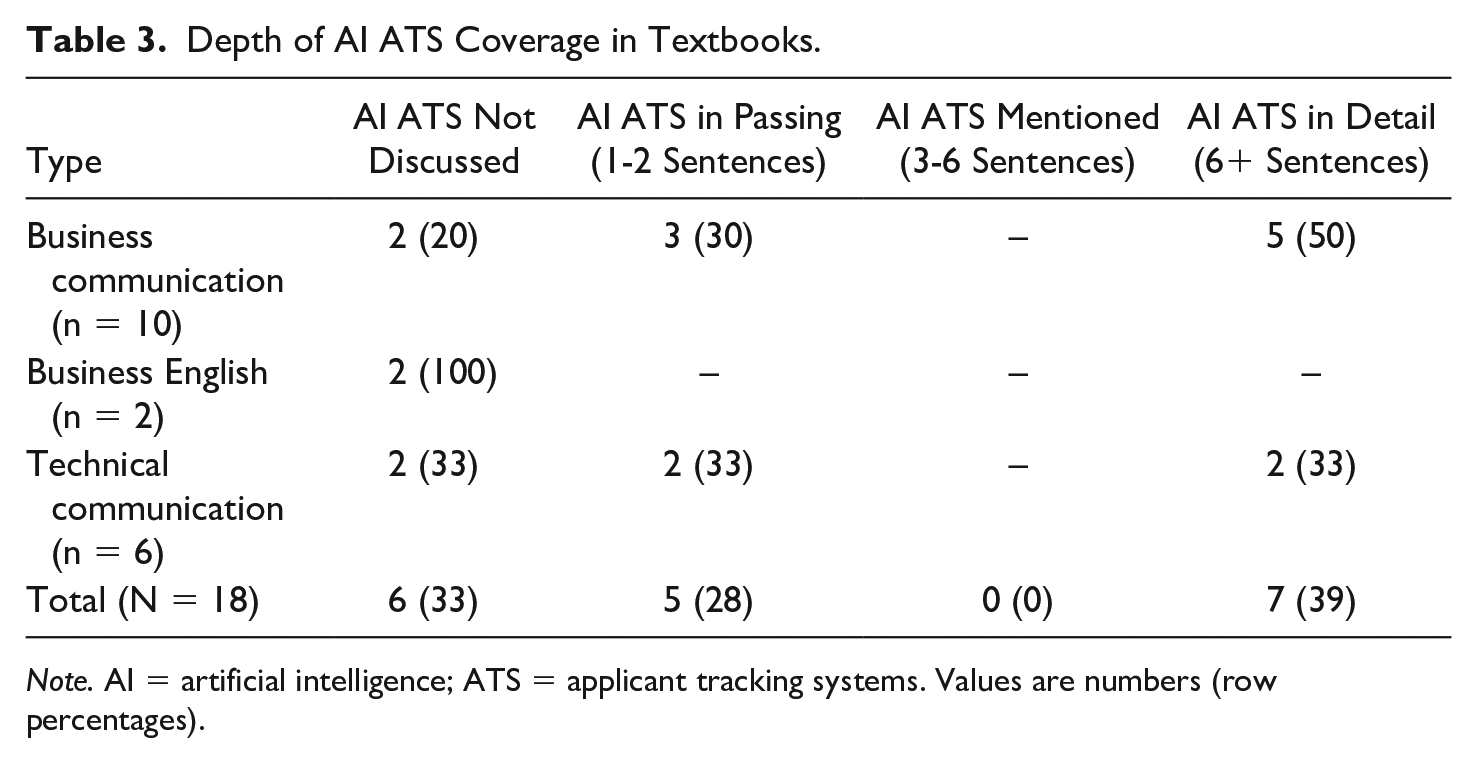

Depth of AI ATS coverage

Of the 12 books covering AI ATS, 5 (28% of all 18 books) mentioned AI ATS in passing, with one to two sentences dedicated to the topic, while 7 (39%) discussed AI ATS in greater detail (i.e., six or more sentences). See Table 3 for more detail. Of note, coders had a category for three to six sentences on the topic, but no books met that criterion. So, textbooks either (1) did not cover AI ATS, (2) briefly mentioned it, or (3) covered the topic in depth. Further, the coders analyzed the depth of AI ATS content coverage to understand the topic better and compared the coverage by field. Of the eight business communication books that covered AI ATS, five (62%) discussed the topic in depth, while three (38%) only mentioned it in passing. Technical communication (with the acknowledgment that it was a smaller sample) was evenly split, with two books covering AI ATS in depth and two mentioning it in passing.

Table 3. Depth of AI ATS Coverage in Textbooks.

Note. AI = artificial intelligence; ATS = applicant tracking systems. Values are numbers (row percentages).
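As a quick arithmetic check on the depth-of-coverage figures reported above, the following minimal Python sketch recomputes the percentages from the counts given in the text. The category labels and variable names are illustrative, and the field-level denominators (8 business communication and 4 technical communication books) are an assumption inferred from the counts in the text rather than values stated in the article.

```python
# Counts reported in the text; the sample contains 18 textbooks.
TOTAL_BOOKS = 18

depth_counts = {
    "no coverage": 6,
    "passing mention (1-2 sentences)": 5,
    "in depth (6+ sentences)": 7,
}


def pct(count: int, total: int) -> float:
    """Return count as a percentage of total, rounded to one decimal place."""
    return round(100 * count / total, 1)


# The three categories should account for every book in the sample.
assert sum(depth_counts.values()) == TOTAL_BOOKS

for label, count in depth_counts.items():
    print(f"{label}: {count} ({pct(count, TOTAL_BOOKS)}%)")

# Field-level split among the 12 books that covered AI ATS.
# Denominators are inferred from the counts in the text (assumption).
by_field = {
    "business communication": {"in depth": 5, "passing": 3},
    "technical communication": {"in depth": 2, "passing": 2},
}

for field, counts in by_field.items():
    field_total = sum(counts.values())
    for label, count in counts.items():
        print(f"{field}, {label}: {count} of {field_total} "
              f"({pct(count, field_total)}%)")
```

Under these assumptions, the recomputed values agree with the rounded percentages in the text.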

As textbook authors have incorporated AI ATS content into new editions of textbooks, the placement of AI ATS has differed. For instance, one book promoted a supplemental website. Another book included AI ATS tips in a pull-out box, but the rest of the chapter focused on the audience as a human recruiter. Authors may have chosen these placements to make the content easier to edit and update.

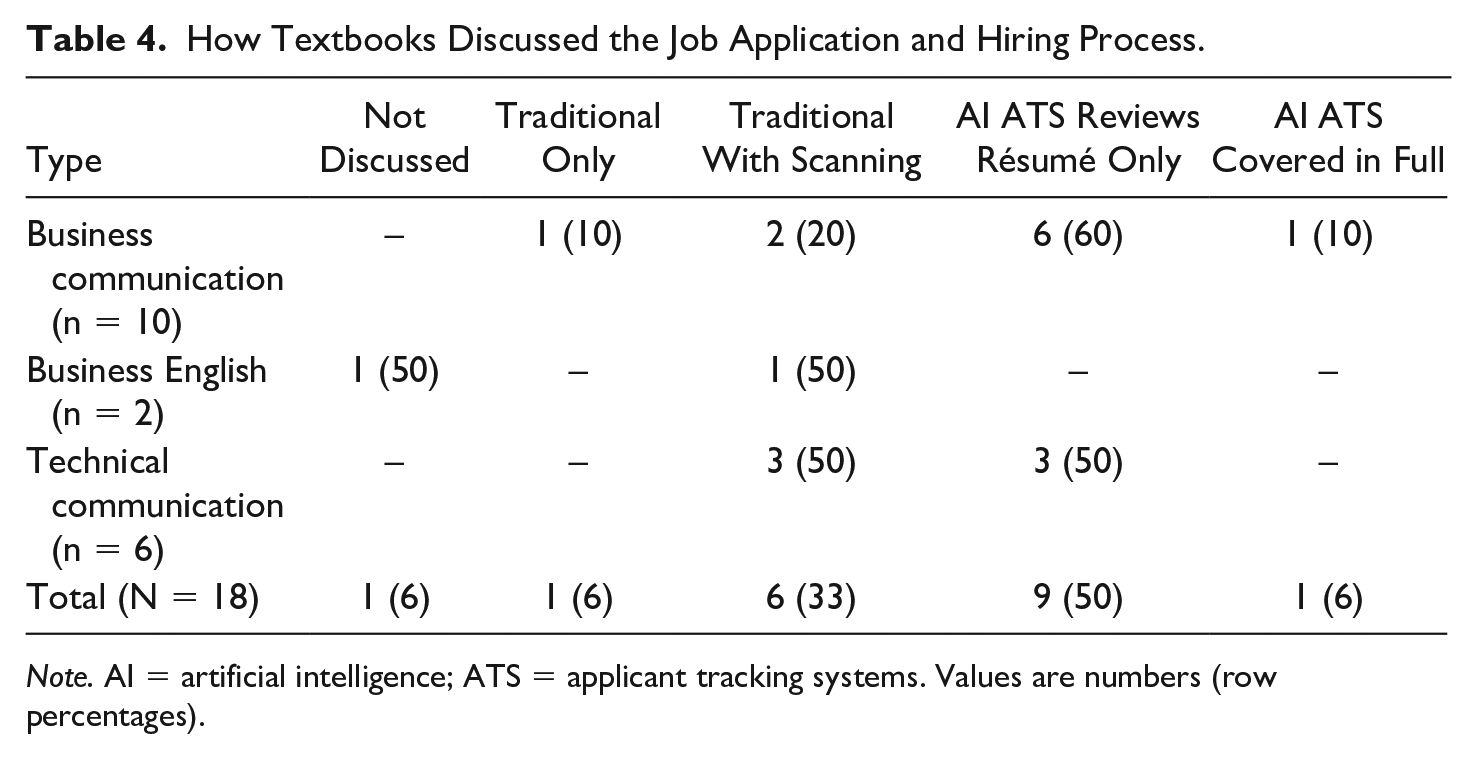

Explanation of the job application and hiring process

AI ATS significantly impacts the job application process for employment seekers because many companies use AI to sort through résumés and select top candidates. Then, the system may have AI-led and AI-analyzed computer interviews for those candidates. The interview would precede a human seeing the résumés (Buijsrogge, 2021). Despite this significant shift in the job application and hiring process, of the 18 textbooks reviewed, only 1 (6%) book talked about the AI ATS process in this way (see Table 4). Instead, the authors described a more traditional process (e.g., submitting a résumé, a hiring manager reviewing it, and then interviewing someone). One (6%) book described the traditional hiring process, while another (6%) did not discuss the process at all. Six (33%) books described a traditional process and added résumé scanning (not AI ATS) to that description, emphasizing the importance of aligning résumé keywords with the job advertisement. Nine (50%) textbooks modified the traditional process to explain that AI ATS would be applied to sort résumés before a human sees them. However, those books did not mention the role of AI ATS in interviewing. Also, they differed in how they talked about AI ATS with résumés. Some books implied that AI ATS would sort résumés, but a human would still see them; others explained that humans would not look at the résumé until the interview. This fundamental difference in the conceptualization of the hiring process seemed to impact how authors structured and discussed AI ATS and résumés. Understanding how textbook authors contextualize the hiring process is critical because of the impact on framing AI ATS.

How Textbooks Discussed the Job Application and Hiring Process.

Note. AI = artificial intelligence; ATS = applicant tracking systems. Values are numbers (row percentages).

In analyzing the texts, the researchers found more pronounced content differences in AI ATS–related résumé discussions than in the texts’ general reviews of résumés and job materials discussed in Research Question 1. These differences were noted regarding audience conceptualization, AI ATS understanding or explanations, and advice on keyword usage, phrasing, and format.

Audience conceptualization

The differing perspectives of the hiring process continued into a critical theme regarding the anticipated audience in résumé writing. The researchers found that most textbooks focused on humans, rather than AI ATS, as the résumé audience. This focus aligns with the depth of coverage of AI ATS (with only seven books covering it in depth) and the differing conceptualizations of AI ATS’s role in the hiring process. Further, multiple authors mentioned that AI ATS might reject a résumé without a human viewing it. However, most of their chapters still focused on résumé advice with humans as the audience.

Not all books treated a résumé submitted to AI ATS as a document that a human would eventually view. A minority of textbooks noted that there would be a combination of readers. As an example of practical advice, one book explicitly explained that résumé writers may have an audience of humans, computers, or a combination. Some books were vague; for example, some did not list computers as the sole audience but included advice that implied only a computer would read the résumé. Some advice seemed incorrect; for example, one said there was no maximum page length for a résumé submitted online. As an example of a balanced treatment, one book noted that a challenge with AI ATS is not knowing how a résumé will be “read” by an AI ATS; further, the résumé writer cannot know if the format is correct for that system. One book did explicitly state that résumés were read by humans only if the AI filters selected the résumé.

AI ATS explanation and understanding

The textbooks’ explanations, advice, and understanding of AI ATS résumés differed widely. For instance, while 12 books mentioned ATS, only 9 (75%) of the 12 explained what ATS was to readers. Additionally, the concept of a scannable résumé influenced many of the 12 textbooks describing the AI ATS process. Several books included sections on scannable résumés. As one author put it, résumés must pass the “scan test” and the “skim test,” advice that works for scannable résumés as well as AI ATS. However, multiple authors conflated AI ATS and scannable résumés. For instance, one book explained AI ATS as a database management system in which résumé content was scanned for keywords. Another book called AI ATS a “web-based database.” These conceptualizations misrepresented the sophistication of AI ATS that has moved beyond simple keyword detection. The misconceptions impacted résumé construction advice.

AI ATS advice

AI ATS résumé advice focused on keyword usage and format. However, similar to advice reviewed in Research Question 1, advice on how to craft a résumé for ATS varied widely. Specifically, advice fell into three categories: (1) correct and detailed, (2) too vague to be actionable, and (3) incorrect or outdated. Sometimes, a book would have a mix of correct and incorrect advice or up-to-date and out-of-date advice. The categories overlapped, and the researchers provide clarification in the qualitative discussion.

Keywords and phrasing

Keyword use and bullet point phrasing in AI ATS résumés were clear areas of conflicting advice. Eight (67%) of the 12 texts that reviewed AI ATS résumés provided advice on phrasing and AI ATS. Of those books, many gave correct advice. Correct advice consistently discussed the importance of aligning wording with the job advertisement and integrating keywords into statements rather than keyword stuffing. For instance, one of the books that included extensive advice on AI ATS discouraged readers from trying to “beat” or “trick” the system; instead, the author encouraged readers to focus on the audience and company needs with AI ATS as a tool used by the companies. Many of those texts highlighted the importance of quantifying results in bullet statements, since AI systems could assess those numbers, along with the dates attached to schooling and jobs, to measure experience levels and career progression.
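To make the alignment principle concrete, the following minimal Python sketch compares the wording of a job advertisement with a single résumé bullet. It is a self-check a writer might run, not a model of how any AI ATS scores résumés, since those systems have moved beyond simple keyword detection. The job advertisement, the bullet, the stop-word list, and the function names are all hypothetical.

```python
import re

# Hypothetical stop-word list, kept short for illustration.
STOP_WORDS = {"a", "an", "and", "the", "of", "to", "in", "for", "with", "on"}


def terms(text: str) -> set[str]:
    """Lowercase a text and return its set of word tokens."""
    return set(re.findall(r"[a-z0-9+#]+", text.lower()))


def alignment(job_ad: str, bullet: str) -> tuple[set[str], set[str]]:
    """Return (job-ad terms found in the bullet, job-ad terms missing)."""
    ad_terms = terms(job_ad) - STOP_WORDS
    bullet_terms = terms(bullet)
    return ad_terms & bullet_terms, ad_terms - bullet_terms


# Hypothetical example texts.
job_ad = "Manage social media campaigns and analyze engagement data"
bullet = "Managed 12 social media campaigns, raising engagement 30%"

found, missing = alignment(job_ad, bullet)
print("Shared with the job ad:", sorted(found))
print("Not yet reflected:", sorted(missing))
```

Because the sketch matches exact word forms only, “managed” does not count as a match for “manage.” That limitation illustrates why the missing terms are better treated as prompts for revising a statement than as a list of words to insert.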

Notably, some of the texts that provided AI ATS phrasing advice gave vague suggestions like “apply the right keywords,” “if your résumé doesn’t have the right keywords, it might be rejected by an applicant tracking system,” or use “strong keywords” for AI ATS without defining “strong keyword.” The keyword usage advice is helpful for AI-read résumés, but the explanations of keywords in these texts were vague, not actionable, nonspecific, or off. For example, one book suggested using a keyword like “willing to travel.” Another said not to go “overboard” with keyword usage but did not offer concrete examples of the difference between acceptable and “overboard.” One book suggested focusing on nouns such as titles. While the researchers did not find incorrect advice on keywords specifically for AI ATS, the most common practice that could be considered incorrect was textbook authors not discussing AI ATS keywords.

Repeatedly, textbook authors assumed that a human reader would use an ATS to search for keywords. In examining the eight books, the types of keywords recommended varied. One book suggested repeating keywords, and another said to use gerunds rather than action verbs for keywords. Because multiple books conflated résumé scanning and AI ATS, authors sometimes treated keyword use as a counting game in which a computer system would look for quantity rather than quality. Similarly, authors assumed that humans would be involved in résumé screening and keyword searching. For instance, one book assumed a recruiter might filter through keywords in an ATS to determine candidates to interview. These authors did not assume that résumés would be scored and ranked, with keywords counting toward the score. The process of searching résumés for keywords did not align with how all AI ATS work.

Format for AI ATS

Of the 12 books with AI ATS advice, most (10; 83%) included suggestions for formatting résumés for AI ATS. The formatting advice ranged from mostly correct or up-to-date, through correct but vague, to mostly wrong or out-of-date. Mostly correct or up-to-date advice aligned with current AI ATS standards, like using a format from which AI can pull information cleanly. For instance, authors would explain that AI ATS favors résumés without columns, lines, color used for meaning, text in the header or footer, or images. Vague advice tended to be too vague to be actionable. For example, a book might say to use a plain text résumé but never explain what plain text means. Like keyword advice, many books provided vague, nonactionable advice like “use simple formatting” without defining “simple” or providing examples. Wrong advice was more out of date than blatantly false, remaining in line with best practices for scannable résumés, including how to mail a résumé for scanning. For example, a book in a paragraph about AI ATS warned readers against using fancy paper or perfume; while correct, the advice was out-of-date. One book stated that résumés for ATS should have no special formatting (e.g., no bold, italics, bullets, tabs), and all uppercase should be used for headings. While an outlier, one book said not to use bullet points for AI ATS. However, AI ATS processes things like bolding and bullets.

In addition to specific format advice, the researchers looked at how books presented résumé templates. While some books provided accurate format advice, their templates did not necessarily align with their format advice. For instance, one book included a sample résumé for online submission but used columns, which are generally not AI ATS compatible. Additionally, one book told readers to create a scannable résumé for AI ATS but provided a sample that was neither scannable nor AI ATS compliant. Another book clearly explained the need for a plain text résumé, but its sample in the book did not include dates. When reviewed by AI ATS, a résumé without dates would have the dateless activities or experiences automatically rejected from consideration. Another book recommended avoiding a “generic” résumé, which the author said would likely be rejected, contrary to how these systems work.

Inherent in the formatting discussion, although not always explicit in the authors’ advice, were assumptions about the AI ATS process and the résumé audience. The assumption was that résumé writers need a résumé for AI ATS and a different résumé for humans. Several books split audiences, encouraging writers to consider one or the other rather than a combined or multifaceted audience. However, AI ATS pushes forward one résumé; the one read by the recruiter is the same one that passed the initial system. With AI ATS systems, an applicant would not typically submit two different résumés or have that second chance to impress the human reader.

Discussion

Textbook authors recognized the importance of résumés, as seen in how often textbooks presented résumés as part of the hiring process and how much detail authors offered. However, variances existed in the advice offered, including bullet point writing style, types of résumés, and formatting, and at times textbooks included conflicting advice and examples. Because of changes in the job search process, AI ATS needs more consideration as its coverage does not reflect its ubiquity in the hiring process, which was often not explained in detail. The audience conceptualization when writing about AI ATS is vital for providing actionable advice. However, the researchers found that the books need more actionable detail, including updating content to distinguish between AI ATS and scanning and expanding and updating examples such as templates.

Résumé audience presents a significant challenge for textbook authors. Traditionally, business communication instruction has focused on the audience as a primary consideration in the communication process. Textbooks often mention known and unknown audiences, primary and secondary audiences, and internal and external audiences. Until recently, textbook authors could assume that the external audiences reading résumés would be human, whether known or unknown. Now, authors must explain to students how to write for a computer and a human, not knowing which would be primary or secondary or if a human would see the résumé at all. Further, the researchers found a lack of transparency in how competing for-profit companies and start-ups run AI ATS to “read” résumés. A detailed, comprehensive set of best practices for each company did not exist during data collection. Some researchers refer to this lack of clarity of AI ATS résumé parsing or extraction as part of the black box (Getchell et al., 2022). Authors must write, and faculty must teach about this aspect of AI without having access to all of the information about how AI ATS works. Finally, this new technology has expanded during the pandemic, when many faculty were balancing significant personal or professional adjustments or challenges. No doubt, technical résumé formatting details for AI ATS were a lower priority in the face of needing to remedy perceived deficiencies in student writing or speaking skills.

Recommendations for Textbook Authorship and Teaching

As communication practices change rapidly, textbook authors face significant challenges. The traditional textbook publication cycle did not support the rapid changes in the job search process, including online job applications, AI ATS, or AI more broadly. This lag was similar to challenges faced with email and messaging in the 1990s or social media in the early 2000s. Specific to AI ATS, as faculty, the researchers understand the difficulty of keeping up to date with what needs to be emphasized to students in terms of format and content for résumés. For authors or faculty who have not applied to corporate jobs in some time, the current job application experience will differ from applications prior to the mid-2010s. Textbook authors need to frame the role of AI ATS in the job search process and how that changes the résumé audience. Students and instructors need to know how ubiquitous AI is in this process and that students will likely encounter it as they apply for jobs and will need a résumé that works for both an AI and human audience.

Further, user experience influences perceptions of AI résumé parsing (Lacroux & Martin-Lacroux, 2022). Lacroux and Martin-Lacroux (2022) found that recruiters with expertise in the traditional process faced difficulties transferring the expertise to AI systems, given systems “based on AI are opaque to their users, for technical (use of unsupervised learning algorithms) and commercial (industrial secret) reasons” (p. 11). The researchers’ university has the advantage of utilizing an AI ATS simulation system that allows résumé instructors to supervise the algorithm and review résumés ranked by the AI. However, the researchers understand that this puts them in a unique position and advantage to advise students, especially when technological changes occur mid-semester. Additionally, they recognize that not all schools and applicants have access to these systems because of their cost, thus creating a disparity. This disparity emphasizes the importance of explanatory content being included in widely available textbooks to prevent further inequities from emerging.

Published advice must be actionable yet broad enough to stay useful as AI ATS and their requirements change. Numerous approaches and models exist to parse résumés and extract information (Palshikar et al., 2023). Instructors with limited machine learning and AI training may benefit from focusing on the aforementioned functional approach. In other words, rather than asking, “How does AI ATS work?” the question should be, “How can we advise students about AI ATS based on what we know?” Actionable advice can give instructors and students an understanding of how to write résumés. One solution may be to move away from adding more technical advice on format and toward including broader principles about effective communication (e.g., use of keyword phrasing and quantification). The choice to place résumés within a job search context does this well. Fortunately, core principles in how résumés have been taught carry into how AI ATS reads résumés. For example, AI ATS typically rejects résumé work experience if the résumé has one or fewer bullet points or assigns a lowered assessment when margin sizes are inconsistent. Textbook authors have long included such advice. However, changing systems demand new practices.

This study has outcomes that can be applied both to textbook authors and to faculty as users of the textbooks. Even with a focus on core concepts, textbook authors face challenges of rooting examples in present practice and providing actionable detail. As part of the initial research for this study, the researchers looked for activities or discussion questions about AI ATS in the textbooks; they found so few that they eliminated the research question. However, with the active teaching groups and assignment roundtables at the Association for Business Communication conferences, hopefully, in the coming years, faculty can collaborate to support each other in this significant change in the field, including at workshops and panels about AI and pedagogy as held at the 2023 conference. That change includes preparing a résumé for AI ATS and teaching about the role of AI broadly.

For textbook authors, updates may be a considerable undertaking. While business communication researchers will likely be at work on this topic of AI ATS résumés for future research articles, students need information now. Business and technical communication textbook authors and faculty must find ways to deliver materials to students dynamically. Beyond author-driven textbook changes, faculty can use shared resources, as happens already with “My Favorite Assignment” and the teaching with technology panels. Newly developed collaborative materials could be rooted in present practices rather than relying on past practices, reducing the pressure to retain existing “wisdom,” “understanding,” or samples in textbooks.

Textbook authors could incorporate AI ATS into discussions of generative AI or sections or chapters on the job search process.

The researchers have several recommendations for faculty and authors of resources or textbooks:

The literature review shows that much of the published research on these systems comes from computer-mediated communication or computer science. Interdisciplinary approaches and collaborations with corporations or simulation systems can support faculty as they work through integrating a wide range of AI tools. Textbook authors can use AI ATS to their benefit by contacting AI recruiting tool companies like HireVue or simulation platforms like Quinnica.io or Interview Stream for up-to-date advice. Some faculty may want to adopt a simulation system. However, access to simulation platforms can be cost-prohibitive for faculty and students. To promote equity and inclusion for job seekers, business communication faculty and researchers must do more to “level the playing field” as students from diverse backgrounds apply for jobs at companies that use AI recruiting tools. Providing clear information can help.

Limitations and Opportunities for Future Research

The study’s sample size is limited to 18 books, and the division between business communication, business English, and technical communication could have been more balanced. Future research studies could refine the sample’s size and division, allowing for more direct comparisons between how the three fields cover AI ATS and résumés. Another limitation of this study is that the researchers only examined the textbooks and their referenced material. The researchers did not collect or analyze content that may be available on a supplemental learning system or platform. Textbook authors and publishers might discuss AI ATS more in those supplemental learning sections (e.g., videos and case examples).

Most importantly, the researchers note a need for additional and more consistent standards for résumé format, writing, and content to create an assessment for the textbook content. Future research could consider essential practices, core knowledge, or general advice specific to certain fields, countries, or companies. This further research could continue into core business communication concepts that transfer across all technologies. Based on the Lacroux and Martin-Lacroux (2022) study, future research should consider whether authors or faculty with extensive expertise in traditional résumé writing may face unique challenges in updating material to include AI recruiting and traditional recruiting advice. Further, future research should consider student and faculty perceptions and understandings of AI, including screening tools like interviews.

Conclusion

Teaching AI and communication presents numerous challenges to faculty and textbook authors. By examining the state of textbooks, this study contributes to understanding innovative business communication instruction by providing insight into résumé instruction related to AI. The study finds inconsistencies and advice that may not help readers understand or faculty teach AI ATS effectively without using supplemental materials. The study provides initial information to support future research on résumé writing and AI ATS by creating a baseline of what has been understood and published. The researchers stress that their analyses should not be taken as a criticism of the books. Textbook authors serve as guides and thought leaders for students and faculty, and textbooks have a significant opportunity to advise students on using AI ATS, just as past textbooks did when scannable résumés were new.

Getchell et al. (2022) wrote, “We look forward to the future where many teacher-scholars add AI technologies to the list of topics that they know and teach to students as part of their courses” (p. 26). For job seekers and those teaching job search readiness, the future is now because AI ATS is happening now. Business students, especially those applying to Fortune 500 companies, must be equipped to understand and navigate AI recruiting. As the systems advance, they likely will become more widespread. The researchers commend the authors who included AI ATS; discussing a new, emerging field or technology is a significant challenge, and some have risen to and met that challenge in exciting ways.

Appendix A: Textbooks Analyzed

Andrews, D., & Tham, J. (2022). Designing technical and professional communication: Strategies for the global community (1st ed.). Routledge. https://www.routledge.com/Designing-Technical-and-Professional-Communication-Strategies-for-the-Global/Andrews-Tham/p/book/9780367549602 [Tech Comm]

Balzotti, J. (2022). Technical communication: A design-centric approach (1st ed.). Routledge. https://www.routledge.com/Technical-Communication-A-Design-Centric-Approach/Balzotti/p/book/9780367438234 [Tech Comm]

Bovee, C. L., & Thill, J. V. (2021). Business communication today (15th ed.). Pearson Education (US). https://www.pearson.com/en-us/subject-catalog/p/business-communication-today/P200000005837/9780136713807 [BCOM]

Camp, S., & Satterwhite, M. (2019). College English and business communication (11th ed.). McGraw Hill. https://www.mheducation.com/highered/product/college-english-business-communication-camp-satterwhite/M9781259911811.html [BCOM]

Cardon, P. (2021). Business communication: Developing leaders for a networked world (4th ed.). McGraw Hill. https://www.mheducation.com/highered/product/9781260088342 [BCOM]

Chan, M. (2020). English for business communication (1st ed.). Routledge. https://www.routledge.com/English-for-Business-Communication/Chan/p/book/9781138481688 [BCOM]

Finkelstein, L., Aune, J. E., & Potter, L. (2023). Technical writing for engineers & scientists (4th ed.). McGraw Hill. https://www.mheducation.com/highered/product/technical-writing-engineers-scientists-finkelstein-aune/M9780073534930.html [Tech Comm]

Guffey, M. E., & Loewy, D. (2018). Essentials of business communication (11th ed.). Cengage. https://www.cengage.com/c/business-communication-process-product-9e-guffey-loewy/9781305957961 [BCOM]

Laplante, P. (2019). Technical writing: A practical guide for engineers, scientists, and nontechnical professionals (2nd ed.). Routledge. https://www.routledge.com/Technical-Writing-A-Practical-Guide-for-Engineers-Scientists-and-Nontechnical/Laplante/p/book/9781138628106 [Tech Comm]

Locker, K., Mackiewicz, J., Aune, J. E., & Kienzler, D. (2023). Business communication (13th ed.). McGraw Hill. https://www.mheducation.com/highered/product/business-communication-locker-mackiewicz/M9781264067510.html [BCOM]

Markel, M., & Selber, S. (2021). Technical communication (13th ed.). Macmillan. https://www.macmillanlearning.com/college/us/product/Technical-Communication/p/1319245005?selected_tab=About [Tech Comm]

Newman, A. (2023). Business communication and character (11th ed.). Cengage. https://faculty.cengage.com/titles/9780357718131 [BCOM]

O’Hair, D., Wiemann, M., Mullin, D., & Teven, J. (2021). Real communication (5th ed.). Macmillan. https://www.macmillanlearning.com/college/us/product/Real-Communication/p/1319201741?selected_tab=About [BCOM]

Patten, K., & Sands, Y. (2020). Business technology communications (1st ed.). Kendall Hunt Publishing. https://he.kendallhunt.com/product/business-technology-communications [BCOM]

Quintanilla, K. M., & Wahl, S. T. (2021). Business and professional communication (4th ed.). Sage (US). https://us.sagepub.com/en-us/nam/business-and-professional-communication/book274401 [BCOM]

Rentz, K., & Lentz, P. (2020). Business communication: A problem-solving approach (2nd ed.). McGraw Hill. https://www.mheducation.com/highered/product/business-communication-problem-solving-approach-rentz-lentz/M9781260088359.html [BCOM]

Shwom, B. G., & Snyder, L. G. (2018). Business communication (4th ed.). Pearson Education. https://bookshelf.vitalsource.com/books/9780134740836 [BCOM]

Tebeaux, E., & Dragga, S. (2021). The essentials of technical communication (5th ed.). Oxford University Press. https://global.oup.com/academic/product/the-essentials-of-technical-communication-9780197539200?cc=us&lang=en&# [Tech Comm]

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.