Abstract

Background:

Shoulder pain and injury are among the most prevalent musculoskeletal presentations in primary care. With the rise of consumer health information on TikTok, it is important to assess whether the information produced by content creators can serve as a supplement for shoulder rehabilitation and injury prevention.

Hypothesis:

It was hypothesized that content creators with professional degrees and extensive knowledge within the realm of shoulder injuries would yield valuable and accurate health information.

Study Design:

Cross-sectional study.

Methods:

On June 18, 2025, #ShoulderInjury was used as the search term in the TikTok search engine. A total of 9286 videos appeared after the initial search, and the first 1000 in TikTok’s default order were reviewed. The inclusion criterion was at least 100 likes. Exclusion criteria removed irrelevant, non-English, and duplicate videos, resulting in 209 eligible videos for further analysis. These were evaluated using the DISCERN questionnaire, an instrument used to assess consumer health information on a 1 to 5 scale. Two independent raters scored the videos, and interrater reliability was calculated using weighted Cohen kappa.

Results:

The 209 analyzed videos garnered 1,408,268 likes and 12,536 comments, with a mean DISCERN score of 2.61. Physicians’ videos (n = 41) had the highest mean score (3.52), significantly outperforming nonprofessionals (2.18), physical therapists (2.87), and other professionals (2.79) in critical DISCERN areas (P < .001). Educational content yielded the highest mean score (3.29), whereas personal story videos had the lowest (1.89). Weighted Cohen kappa showed very good agreement for physician videos (κ = 0.82), moderate for physical therapists (κ = 0.59), good for nonprofessionals (κ = 0.79), and fair for other professionals (κ = 0.40).

Conclusion:

This study highlights the potential of TikTok as an effective educational tool when used by qualified professionals. Professionally produced content consistently scored higher on the DISCERN scale. Although the findings are promising, it is important to note limitations, like potential biases in DISCERN scoring due to nonblinded raters, the influence of TikTok’s algorithm, and the exclusion of videos with <100 likes. Future research should explore social media’s role in medical education and assess how to optimize content delivery and engagement.

Shoulder injuries are prevalent, debilitating musculoskeletal injuries affecting a diverse array of patients, from adolescent athletes to geriatric patients and many populations in between. They result from a multitude of causes, such as overuse, trauma, and arthritis. Shoulder pain has been previously cited as the third most common musculoskeletal presentation in primary care, after low back pain and knee pain.26 With its high prevalence and ability to significantly impair daily function, shoulder injury remains among the most commonly researched and discussed topics in orthopaedics. As with other common injuries, the public understanding of shoulder injury has been increasingly influenced by social media content. TikTok has emerged as a popular and powerful platform for the dissemination of medical information.

Social media use has grown over the past decades to become an exceedingly common part of daily life. With this unprecedented growth, social media platforms now convey an expanding range of information, including medical information.13 TikTok currently has >3 billion downloads and is the fastest growing social media platform.16,21 The popularity of TikTok as a means of health information dissemination has prompted significant research into the platform’s effects on public health literacy for a wide array of medical conditions.22

Although TikTok has shown promise in increasing public health literacy, there is growing concern regarding the prevalence of medical misinformation spread through the platform.2,7,10,23 The prevalence of medical misinformation on TikTok can likely be linked to its open platform design, enabling users to post content freely without any validation of the information being conveyed.13 Recent research in the field of orthopaedics has explored the influence of TikTok and other social media platforms in spreading medical information in the context of anterior cruciate ligament injuries and rehabilitation, sports medicine, ankle sprain care, Achilles tendinopathy, knee osteoarthritis, osteosarcoma, and low back pain, but there is a gap in the literature regarding shoulder injuries.2,3,6,9,11-15,25

The present study aimed to investigate the landscape of medical information regarding shoulder injuries on TikTok and to analyze the accuracy, quality, and validity of the content, as well as the qualifications of video creators. Further, this study sought to identify trends between the quality of information presented and engagement, as gauged by the number of likes on a video. We hypothesized that content creators with professional degrees and extensive knowledge within the realm of shoulder injuries would yield valuable and more accurate health information.

Methods

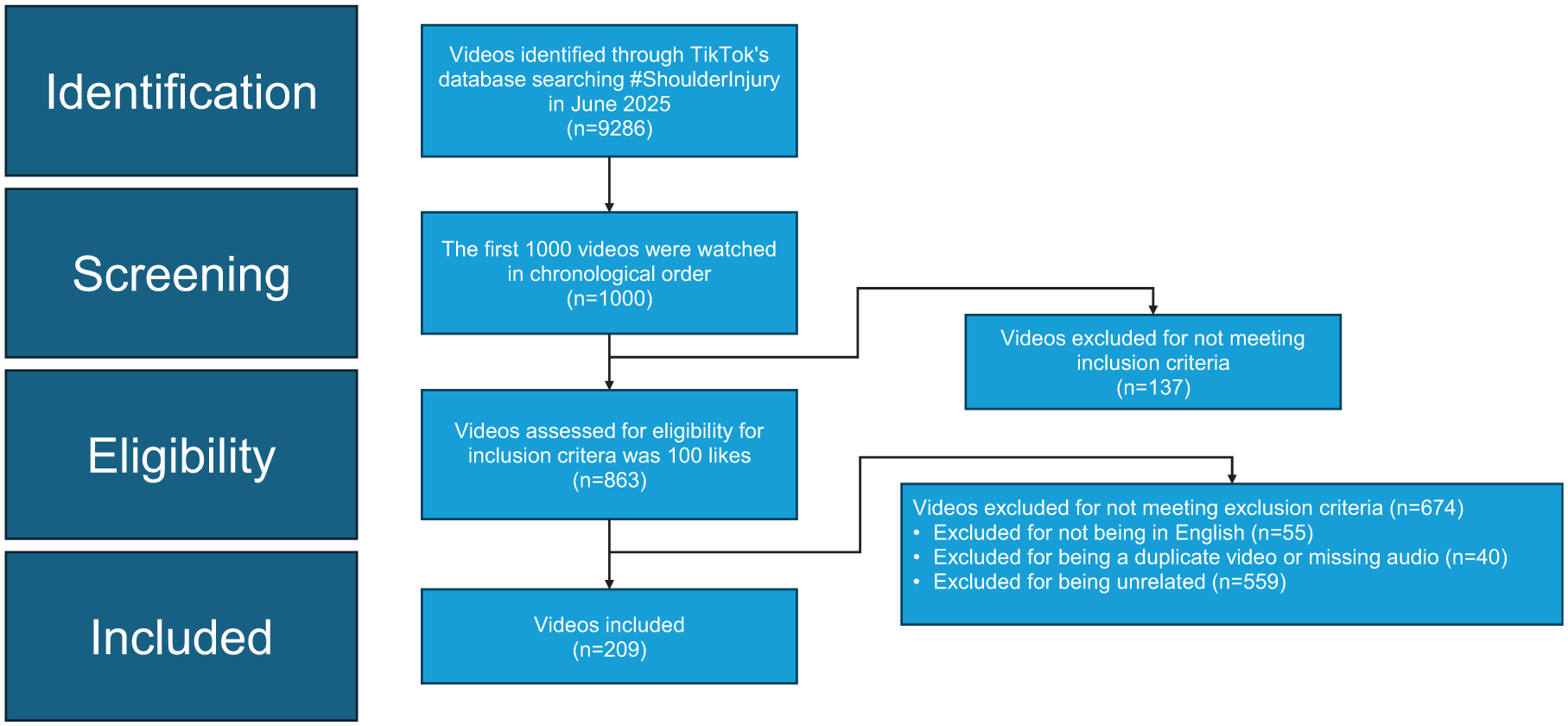

On June 18, 2025, we searched for #ShoulderInjury using the TikTok search engine. A total of 9286 videos appeared under this hashtag. We analyzed the results in TikTok’s default relevance-based order, as determined by the platform algorithm; no manual sorting or filtering was applied. The first 1000 videos appearing in sequence were reviewed. The inclusion criterion was at least 100 likes, which left 863 videos eligible for exclusion screening. The exclusion criteria removed any video that was not actually related to shoulder injury (eg, prevalent dancing trends), videos not in English, and duplicate videos reposted by the same content creator. A detailed breakdown of these steps is shown in Figure 1. After screening, 209 videos remained and were included for further analysis.

Figure 1. Visual representation of inclusion and exclusion criteria.
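The screening sequence described above can be sketched in a few lines of Python. This is only an illustration of the order of operations (review cap, like threshold, then the 3 exclusion rules); the `Video` record, its field names, and the `content_key` used to detect reposts are hypothetical and are not part of the study's actual workflow, which was performed manually.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Video:
    # Every field here is a hypothetical placeholder; the study describes no data schema.
    video_id: str
    creator: str
    likes: int
    is_english: bool
    is_shoulder_related: bool
    content_key: str  # assumed fingerprint for spotting reposts by the same creator


def screen(videos, max_reviewed=1000, min_likes=100):
    """Apply the inclusion threshold, then the exclusion rules, in order."""
    reviewed = videos[:max_reviewed]  # first results in the platform's default order
    liked = [v for v in reviewed if v.likes >= min_likes]  # inclusion: at least 100 likes

    eligible, seen = [], set()
    for v in liked:
        if not v.is_shoulder_related or not v.is_english:
            continue  # exclusion: irrelevant or non-English
        key = (v.creator, v.content_key)
        if key in seen:
            continue  # exclusion: duplicate reposted by the same creator
        seen.add(key)
        eligible.append(v)
    return eligible


# Invented records, for illustration only.
sample = [
    Video("a", "c1", 250, True, True, "k1"),
    Video("b", "c1", 300, True, True, "k1"),   # repost by the same creator
    Video("c", "c2", 99, True, True, "k2"),    # below the like threshold
    Video("d", "c3", 100, False, True, "k3"),  # not in English
    Video("e", "c4", 5000, True, False, "k4"), # unrelated to shoulder injury
    Video("f", "c5", 100, True, True, "k1"),   # same content key, different creator
]
kept = [v.video_id for v in screen(sample)]
```

Keeping each rule as a separate step makes it straightforward to report how many videos were removed at each stage, as a flow diagram would.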

To evaluate the quality of each video, we used DISCERN, a standardized 16-item questionnaire. DISCERN aims to use objective metrics to evaluate the reliability, quality, and delivery of consumer health information across platforms.20 These metrics include the accuracy of information presented, mention of credible sources, meeting the aims of the video or literature, and discussing a range of treatment options (see Appendix A).5 Two independent raters, both third-year medical students who are not on the author list, evaluated each video by scoring each of the first 15 items from 1 to 5. For the 16th and final item, each rater assigned an overall score from 1 to 5 while keeping the previous 15 items in mind. That overall score is the value used in this study. For both the individual items and the overall score, a score of 1 represents a video with serious shortcomings, whereas a score of 5 indicates stellar quality and virtually no shortcomings.17

To further define the data, the 209 videos assessed in this study were stratified into 4 main categories: type of content creator (physician, physical therapist, nonprofessional, or other profession), sex (male, female, or other), physician specialty (orthopaedic surgeon or sports medicine), and video type (personal story, educational content on pain and injury mechanisms, or rehabilitation and prevention advocacy). The “nonprofessional” designation was given to content creators who lacked credentials. The “other profession” designation was assigned to chiropractors or certified athletic trainers. The “other” sex designation was given if the sex identity could not be determined or if the video was generated via artificial intelligence.

To assess interrater reliability, a weighted Cohen kappa statistic was calculated for the 2 raters. Cohen kappa measures agreement between raters while accounting for agreement expected by chance, with values ranging from <0 (no agreement) to 1 (perfect agreement). Agreement strength was interpreted as slight (0.01-0.20), fair (0.21-0.40), moderate (0.41-0.60), good (0.61-0.80), and very good (0.81-0.99). A weighted kappa was selected rather than the standard unweighted kappa because it accounts for the magnitude of disagreement between ordinal ratings, making it particularly appropriate for Likert-type scales like the DISCERN tool.5 Weighted kappa offers a more nuanced and accurate representation of rater agreement when scoring data on an ordered scale.17,27 All statistical analyses and graphical representations were produced using JASP, Microsoft Excel, and Prism.
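To make the statistic concrete, the following is a minimal sketch of a weighted Cohen kappa for 2 raters on the 1 to 5 DISCERN scale. The weighting scheme is an assumption: the text does not state whether linear or quadratic weights were used, so the sketch defaults to linear weights and offers quadratic as an option. The function names are illustrative, and `agreement_label` simply encodes the interpretation bands listed above, with the gaps between bands closed at the upper bound.

```python
def weighted_kappa(rater1, rater2, categories=(1, 2, 3, 4, 5), weighting="linear"):
    """Weighted Cohen kappa: 1 - (weighted observed disagreement / weighted chance disagreement)."""
    if len(rater1) != len(rater2) or not rater1:
        raise ValueError("both raters must score the same, non-empty set of items")
    k, n = len(categories), len(rater1)
    index = {c: i for i, c in enumerate(categories)}

    # Observed joint proportions of (rater 1 score, rater 2 score).
    observed = [[0.0] * k for _ in range(k)]
    for a, b in zip(rater1, rater2):
        observed[index[a]][index[b]] += 1 / n
    row = [sum(observed[i]) for i in range(k)]
    col = [sum(observed[i][j] for i in range(k)) for j in range(k)]

    power = 1 if weighting == "linear" else 2  # assumption: linear unless told otherwise
    num = den = 0.0
    for i in range(k):
        for j in range(k):
            w = (abs(i - j) / (k - 1)) ** power  # disagreement weight, 0 on the diagonal
            num += w * observed[i][j]
            den += w * row[i] * col[j]            # disagreement expected by chance
    if den == 0:
        return float("nan")  # undefined when both raters use one identical category
    return 1 - num / den


def agreement_label(kappa):
    """Map kappa to the interpretation bands used in this study."""
    if kappa <= 0:
        return "no agreement"
    if kappa >= 1:
        return "perfect"
    for upper, label in ((0.20, "slight"), (0.40, "fair"), (0.60, "moderate"), (0.80, "good")):
        if kappa <= upper:
            return label
    return "very good"
```

With linear weights, a 1-point disagreement on a 5-point scale counts one quarter as much as a 4-point disagreement; with quadratic weights it counts one sixteenth as much, so the choice of scheme can change the reported kappa.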

Results

The 209 analyzed videos collectively garnered 1,408,268 likes and 12,536 comments, with a mean DISCERN score of 2.61. Nonprofessionals created the majority of videos (119; 57%) and had the lowest mean DISCERN score (2.18). In contrast, physicians produced 41 videos (20%) with a higher mean score of 3.52. Physical therapists contributed 35 videos (17%) with a mean score of 2.87, whereas other professions accounted for 14 videos (7%) with a mean score of 2.79. Compared with physicians, nonprofessionals generally underperformed in critical DISCERN areas such as referencing credible sources, articulating the video’s purpose, outlining diverse diagnostic or treatment options, and discussing uncertainties or potential risks. Physicians consistently demonstrated higher quality content aligned with these evidence-based communication standards.

Stratifying the data by video type gave a clearer understanding of the DISCERN ratings. Table 1 presents a detailed breakdown of the 4 main categories mentioned previously. Educational videos about pain and injury mechanisms yielded the highest mean DISCERN score of 3.29. This is a stark difference from personal story videos, which yielded a mean DISCERN score of 1.89. Last, videos focusing on rehabilitation and prevention advocacy had a mean DISCERN score of 2.46.

Table 1. Overview of TikTok Video Features and Their Associated DISCERN Scores

Nonprofessional: individuals without credentials. Other professional: chiropractors or certified athletic trainers. DISCERN scores are expressed as mean ± SD.

A Kruskal-Wallis test revealed a significant difference in DISCERN scores among the 4 types of content creators: physician, physical therapist, nonprofessional, and other professional (P < .001). For a more detailed understanding, we conducted a Dunn post hoc comparison. The results demonstrated that physicians had significantly higher DISCERN scores compared with physical therapists, other professionals, and nonprofessionals (P ≤ .007 for each comparison). We found no significant difference between other professionals and physical therapists in terms of their DISCERN scores. Figure 2 illustrates the comparison between content creator types.

Figure 2. Graph of the differing DISCERN scores among content creator types along with their respective significance in comparison with each other.
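For readers unfamiliar with the omnibus test used here, the following is a minimal pure-Python sketch of the Kruskal-Wallis H statistic with the standard tie correction and a chi-square P value. It is not the JASP implementation used in the study, the function names are illustrative, and the example scores are invented for demonstration rather than taken from the study data. The Dunn post hoc step is not implemented in this sketch.

```python
import math


def average_ranks(values):
    """Rank pooled values, giving tied values their average rank; also return tie group sizes."""
    order = sorted(range(len(values)), key=values.__getitem__)
    ranks, tie_sizes, i = [0.0] * len(values), [], 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for pos in range(i, j + 1):
            ranks[order[pos]] = (i + j) / 2 + 1  # mean of the 1-based positions i+1..j+1
        tie_sizes.append(j - i + 1)
        i = j + 1
    return ranks, tie_sizes


def chi2_sf(x, df):
    """Upper-tail probability of a chi-square variable with integer df (closed form)."""
    if x <= 0:
        return 1.0
    half = x / 2
    if df % 2 == 0:
        return math.exp(-half) * sum(half ** i / math.factorial(i) for i in range(df // 2))
    tail = sum(half ** (i - 0.5) / math.gamma(i + 0.5) for i in range(1, (df - 1) // 2 + 1))
    return math.erfc(math.sqrt(half)) + math.exp(-half) * tail


def kruskal_wallis(groups):
    """Return (H, P) for a list of independent groups of ordinal scores."""
    pooled = [x for g in groups for x in g]
    n = len(pooled)
    ranks, tie_sizes = average_ranks(pooled)
    total, start = 0.0, 0
    for g in groups:
        rank_sum = sum(ranks[start:start + len(g)])
        total += rank_sum ** 2 / len(g)
        start += len(g)
    h = 12 / (n * (n + 1)) * total - 3 * (n + 1)
    correction = 1 - sum(t ** 3 - t for t in tie_sizes) / (n ** 3 - n)
    if correction == 0:
        raise ValueError("all observations are identical; H is undefined")
    h /= correction  # ties are common with 1 to 5 ratings, so the correction matters
    return h, chi2_sf(h, len(groups) - 1)


# Invented scores for 4 hypothetical creator groups, for illustration only.
h_stat, p_value = kruskal_wallis([[4, 3, 4, 5], [3, 3, 2, 4], [2, 1, 2, 3], [3, 2, 3, 3]])
```

A rank-based test is a natural fit for DISCERN overall scores because they are ordinal and heavily tied, which makes a comparison of means under a normality assumption questionable.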

An additional Kruskal-Wallis test was conducted to evaluate differences among the more specific video classifications. Growth plate fractures and sternoclavicular joint videos were excluded due to minimal sample size (n = 1 each). Dunn post hoc comparison revealed that videos addressing general shoulder instability, injury, rehabilitation, and prevention had significantly lower DISCERN scores compared with more targeted topics, such as acromioclavicular joint, clavicular fractures, glenohumeral joint/osteoarthritis, rotator cuff tears, shoulder dislocation, and superior labrum anterior-posterior (SLAP) tears (P < .001).

Mean DISCERN scores assigned by rater 1 were 3.46 for physicians, 2.71 for physical therapists, 2.15 for nonprofessionals, and 2.57 for other professions. Rater 2 gave slightly higher mean scores of 3.59, 3.03, 2.22, and 2.79 for the same respective groups. Weighted Cohen kappa analysis showed very good agreement for physicians’ videos (κ = 0.82), moderate agreement for physical therapists’ videos (κ = 0.59), good agreement for nonprofessionals’ videos (κ = 0.79), and fair agreement for other professionals’ videos (κ = 0.40).

Discussion

The major findings of this systematic evaluation of TikTok demonstrated considerable variation in the quality and reliability of consumer health information, as measured by the DISCERN instrument. Dunn post hoc comparisons clarified the degree of statistical significance between groups. Physicians produced significantly higher quality videos than nonprofessionals (z = −9.88; P < .001), physical therapists (z = 3.29; P = .001), and other professionals (z = −2.70; P = .007). Nonprofessionals also performed significantly worse than both physical therapists (z = −5.37; P < .001) and other professionals (z = −3.38; P < .001). Notably, no significant difference was observed between physical therapists and other professionals (z = −0.25; P = .804), suggesting a comparable level of quality between these groups. These results were further supported by rank-biserial correlation effect sizes (rrb), which were strongest in the physician versus nonprofessional comparison (rrb = 0.654), indicating a large effect.
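As a brief illustration of the effect size mentioned above, the rank-biserial correlation for 2 independent groups can be written as the proportion of cross-group pairs in which the first group scores higher minus the proportion in which it scores lower. The sketch below uses that pairwise definition; whether JASP computes its reported values in exactly this way is an assumption, and the function name and example scores are illustrative only.

```python
def rank_biserial(group_a, group_b):
    """Rank-biserial correlation: (pairs where a > b minus pairs where a < b) / all pairs."""
    if not group_a or not group_b:
        raise ValueError("both groups must contain at least one score")
    wins = sum(a > b for a in group_a for b in group_b)
    losses = sum(a < b for a in group_a for b in group_b)
    return (wins - losses) / (len(group_a) * len(group_b))  # tied pairs count as zero


# Invented scores, for illustration only.
effect = rank_biserial([3, 4, 5], [2, 3, 4])
```

The value ranges from −1 (every score in the first group is lower) to 1 (every score in the first group is higher), with 0 indicating no tendency in either direction.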

As shown in Table 2, videos with a more specific focus tended to achieve higher DISCERN scores. This likely reflects the fact that professionals, oftentimes physicians, took the time to provide detailed explanations by including injury mechanisms, surgical procedures, rehabilitation strategies, and references to credible research. The observed discrepancy between targeted and generalized videos (n = 137 vs n = 72) highlights and reinforces the need for more qualified professionals to produce in-depth content, particularly in underrepresented areas such as sternoclavicular joint injuries (n = 1). This aligns with previous research showing that content produced by medically trained professionals tends to adhere more closely to evidence-based standards and consistently incorporates credible sources, treatment risks, and therapeutic options.1,24

Table 2. Classification by Type of Shoulder Injury and Their Associated DISCERN Score

DISCERN Scores are expressed as mean ± SD. SLAP, superior labrum anterior-posterior.

The consistently superior performance of physicians reinforces their potential value in improving health literacy through platforms like TikTok (Table 3). Videos categorized as educational content had the highest mean DISCERN scores, whereas personal stories and anecdotes, largely from nonprofessionals, performed the worst. The low scores in personal story videos likely stem from a lack of structured, evidence-informed communication, a trend echoed in prior studies across various orthopaedic and medical conditions.7,9 From an educational perspective, physician-generated short-form content may function as an accessible adjunct to traditional patient education by reinforcing foundational concepts such as injury mechanisms, treatment pathways, and rehabilitation expectations. Prior literature suggests that well-designed digital health content can improve baseline health literacy, enhance patient preparedness for clinical encounters, and support informed decision making, particularly when aligned with evidence-based messaging delivered by medical professionals.19

Table 3. Median DISCERN Scores and Interquartile Ranges Representing Content Creator Type

IQR, interquartile range.

Total number of ratings between the 2 independent raters.

Engagement metrics also offer important context. Despite their lower frequency, physician-created videos had the highest mean number of likes, suggesting a potential link between content quality and viewer engagement. Prior studies have noted that likes and other engagement metrics may act as proxies for perceived credibility or entertainment value.4,8 However, TikTok’s algorithm likely introduces confounding factors such as trending audio, video aesthetics, and posting frequency, which can amplify or suppress content regardless of its informational accuracy.13 In a clinical context, this finding is notable because engagement metrics directly influence content dissemination on TikTok, shaping which videos patients are most likely to encounter prior to seeking care.18 As social media increasingly informs patient expectations before professional consultations, higher engagement with accurate, physician-led content may help mitigate misinformation and reduce unrealistic expectations, which can facilitate more productive shared decision making and improved clinical outcomes.

Interrater reliability was particularly strong for physician content (κ = 0.82), further validating its consistency and structural integrity. Other groups showed more variability in scoring, possibly due to inconsistent presentation formats, less structured messaging, or informal tone. DISCERN, although robust for assessing structured health information, was originally developed for written materials. Applying it to dynamic, user-generated video content introduces subjectivity, although the use of 2 independent raters mitigated this concern to some extent.5 Additionally, although the use of medical student raters ensured familiarity with evidence-based medicine, their relative lack of independent clinical practice experience may have influenced interpretation of treatment applicability, risk communication, and clinical nuance within the DISCERN framework.

Our study is not without limitations. One is the inclusion threshold of 100 likes. Although necessary to filter out low-engagement or algorithmically suppressed content, this threshold may have excluded newer or underrepresented but potentially high-quality videos. Moreover, the cross-sectional nature of the sample captures only a moment in time and may not reflect longitudinal trends or evolving creator practices.

Although we evaluated interrater reliability, intrarater reliability was not assessed. In this study, intrarater reliability was bypassed to minimize recall bias. Given the relatively short duration and distinctive content of TikTok videos, raters would be likely to recognize previously reviewed material upon reassessment, thereby compromising the validity of intrarater reliability measures. Although interrater agreement reflects consistency across independent evaluators, the absence of intrarater analysis limits conclusions regarding individual rater stability over time and is acknowledged as a methodological consideration.

Despite these limitations, this study underscores a critical insight. Professional content creators, particularly physicians, are best positioned to combat medical misinformation on platforms like TikTok. Their higher DISCERN scores, paired with relatively strong engagement, point to a promising intersection of educational value and viewer interest. Encouraging more healthcare professionals to participate in content creation and providing them with social media communication training could enhance the quality of medical information accessible to the public.10,22

Our study challenges the common misconception that misinformation is inherent to open-platform apps themselves; we argue that it is instead contingent on the qualifications of the content creator. Additional limitations include a potential bias during DISCERN scoring due to the nonblinded nature of the grading, where knowing the profession of a creator may have influenced the rating, and the influence of TikTok’s algorithm on the video collection process. It is possible that accounts regularly engaging with medical and scientific information are shown more accurate results from verified creators, whereas a layperson running the same search might obtain different results. Future research should continue investigating how health information is disseminated on social media. Meanwhile, larger institutions should embrace these platforms as a tool to keep their patients well informed with evidence-based medicine and care.

Conclusion

This study highlights the potential of TikTok as an effective educational tool when used by qualified professionals. Professionally produced content consistently scored higher on the DISCERN scale. Although this study is promising, it is important to note the limitations, like potential biases in DISCERN scoring due to nonblinded raters, the influence of TikTok’s algorithm, and the exclusion of videos with <100 likes. Future research should explore social media’s role in medical education and assess how to optimize content delivery and engagement.

Footnotes

Appendix A

Final revision submitted December 17, 2025; accepted January 4, 2026.

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article. The authors received no financial support for the research, authorship, and/or publication of this article.