Abstract

Background:

In the past few years, the use of artificial intelligence (AI)–based large language models, including ChatGPT, has increased in scientific research. These models have shown promise in drafting high-quality articles; however, there have been concerns regarding their ethical use in generating original research.

Purpose/Hypothesis:

The purpose of this study was to quantify the percentage of AI use in articles that were published in major sports medicine journals before and after the release of ChatGPT. It was hypothesized that AI use has increased over time.

Study Design:

Cross-sectional study.

Methods:

All articles that were published from 2023 to 2024 in the 5 sports medicine journals with the highest impact factors were identified (Arthroscopy: The Journal of Arthroscopic and Related Surgery [Arthroscopy], Orthopaedic Journal of Sports Medicine [OJSM], The American Journal of Sports Medicine [AJSM], British Journal of Sports Medicine [BJSM], and Knee Surgery, Sports Traumatology, Arthroscopy [KSSTA]). After removing tables, figures, and references, full texts were assessed for AI-generated content using ZeroGPT. To establish an AI-generated content threshold, articles published before the release of ChatGPT were also assessed for AI-generated content. A 28.69% threshold was determined from 518 articles published before the release of ChatGPT. Articles published after the release of ChatGPT that exceeded this threshold were analyzed across journals and publication dates using chi-square and regression analyses.

Results:

Among the 3596 articles published after the release of ChatGPT and included in this study, 3.28% exceeded the established threshold. Arthroscopy had the highest percentage of flagged articles among the 5 journals (Arthroscopy = 7.17%; OJSM = 4.01%; AJSM = 3.34%; BJSM = 1.42%; KSSTA = 0.93%; P < .001). Temporal analysis identified a significant rise in the use of AI, from 2.38% during January to March 2023 to 6.25% during October to December 2024 (r² = 0.34; P = .003).

Conclusion:

AI use in sports medicine research remains low but is steadily rising. Editorial policies allowing AI usage may, in turn, perpetuate its use in published sports medicine articles.

Artificial intelligence (AI)–based large language models (LLMs), such as ChatGPT, have influenced all fields of medicine. In cardiology, for instance, they have been used to interpret electrocardiograms and predict the likelihood of cardiac arrhythmias. 34 In radiology, they have assisted with image recognition tasks, such as the detection of pathological lesions on brain scans. 3 Across all fields, these models have helped researchers generate detailed plans for study designs, perform relevant statistical analyses, and overcome “writer’s block” to write original research articles. 4 The last factor can, in part, be attributed to AI’s ability to generate texts that are concise, easy to read, and grammatically correct, thus ensuring an efficient manuscript drafting stage.13,20,23,33 In sports medicine, in particular, AI has also proven to be a valuable tool for conducting preliminary literature searches, assisting in data analysis and interpretation, and facilitating the writing process.6,20,23,30,35,36,41

However, the incorporation of AI in medical research has raised many ethical concerns related to the integrity of the generated content.10,16,19,22 ChatGPT has been reported to provide nonexistent or inaccurate references and to even provide misleading conclusions that are not backed by evidence, which could potentially go undetected during the peer review process.4,15,25 To address these concerns, guidelines have been implemented to regulate the use of AI in research.1,27,29 AI detection tools, such as ZeroGPT, have also been developed to estimate the percentage of AI-generated content in texts.

Previously, Callanan et al 8 investigated the use of AI in the manuscript writing process of major orthopaedic surgery journals and reported that around 17% of recently published articles had significant AI use. The present study aimed to extend their findings by quantifying AI-generated content in articles published in major sports medicine journals before and after the release of ChatGPT. We hypothesized that AI utilization has increased over time.

Methods

Study Design

This was a cross-sectional study investigating the use of AI in all research articles published in leading sports medicine journals between January 1, 2023 (approximately 1 month after the release of ChatGPT on November 30, 2022) and December 31, 2024. Articles were extracted from 5 major sports medicine journals, which were selected for their high impact factors and their recognized role as sources of high-quality research within the field:

(1) Arthroscopy: The Journal of Arthroscopic and Related Surgery (Arthroscopy),

(2) Orthopaedic Journal of Sports Medicine (OJSM),

(3) The American Journal of Sports Medicine (AJSM),

(4) British Journal of Sports Medicine (BJSM), and

(5) Knee Surgery, Sports Traumatology, Arthroscopy (KSSTA).

Nonresearch articles, including letters to the editor, commentaries, and opinion pieces, were excluded to maintain methodological consistency and focus on original research.

Detection Tool for AI-Generated Content

ZeroGPT was used to detect AI-generated content in each article. ZeroGPT was selected because of its user-friendly interface and its use in previous studies.5,9,31 Similar to other AI detection tools, ZeroGPT analyzes linguistic patterns in the provided text, such as limited lexical diversity and uniform syntactic structures, both of which are more characteristic of AI-generated content because of the model’s training on large textual datasets. It then assigns a probability score that indicates the likelihood of AI utilization. The full text of each included article was prepared by removing tables, figures, references, headers, and footnotes. The cleaned text was then manually entered into ZeroGPT to obtain the percentage of AI-generated content.

AI-Generated Content Threshold

A key limitation of AI detection tools is that even human texts, written without the assistance of AI, can be falsely flagged as having an AI-generated content score >0%. As such, a threshold for AI-generated content was established to identify and distinguish articles with significant AI use. To determine this threshold, all research articles published in the 5 sports medicine journals in 2013 were assessed for AI-generated content. The year 2013 was chosen because it represents the first year in which all 5 journals published issues, with OJSM being founded that year. The mean and standard deviation of the AI-generated content score in these articles were calculated.

The threshold was defined as 2 standard deviations above the mean percentage of AI-generated content in the articles published before the release of ChatGPT. Articles that exceeded this threshold were determined to have significant AI use. To establish a reliable threshold, we first confirmed that the scores from articles published before the release of ChatGPT were normally distributed. A bootstrap procedure with 10,000 iterations was then performed on the included articles to evaluate the robustness of the threshold. A narrow 95% confidence interval (CI) suggested a reliable and robust threshold.
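The threshold rule and bootstrap check described above can be sketched in a few lines of Python. This is a minimal illustration and not the authors' SPSS workflow. The function names, the use of the sample standard deviation, the percentile form of the bootstrap interval, and the synthetic placeholder scores are all assumptions made for the example.

```python
import math
import random


def mean_sd(values):
    """Return the mean and sample standard deviation (n - 1 denominator)."""
    n = len(values)
    mean = sum(values) / n
    variance = sum((v - mean) ** 2 for v in values) / (n - 1)
    return mean, math.sqrt(variance)


def threshold(values, k=2.0):
    """Threshold = mean + k * SD of the pre-ChatGPT scores."""
    mean, sd = mean_sd(values)
    return mean + k * sd


def bootstrap_ci(values, n_boot=10_000, alpha=0.05, seed=0):
    """Percentile bootstrap CI for the threshold (articles resampled with replacement)."""
    rng = random.Random(seed)
    n = len(values)
    stats = sorted(threshold(rng.choices(values, k=n)) for _ in range(n_boot))
    lower = stats[int((alpha / 2) * n_boot)]
    upper = stats[int((1 - alpha / 2) * n_boot) - 1]
    return lower, upper


if __name__ == "__main__":
    # Synthetic placeholder scores (percent AI-generated), NOT the study data.
    rng = random.Random(42)
    baseline = [min(100.0, max(0.0, rng.gauss(10, 9))) for _ in range(518)]
    cutoff = threshold(baseline)
    low, high = bootstrap_ci(baseline)
    print(f"threshold = {cutoff:.2f}% (95% CI {low:.2f}% to {high:.2f}%)")
```

With real scores, `baseline` would hold one ZeroGPT percentage per pre-ChatGPT article. A narrow interval from `bootstrap_ci` is what the text above treats as evidence of a stable threshold.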

Statistical Analysis

Each article included in this study published after the release of ChatGPT was assessed for the percentage of AI-generated content using ZeroGPT. Articles that exceeded the established threshold were determined to have significant AI use. The percentage of articles determined to have significant AI use was compared across all 5 sports medicine journals and across months of publication using chi-square analysis. Multivariable logistic regression analysis was then performed to determine the odds of publishing an article with significant AI use across journals while setting the journal with the lowest percentage of AI-generated content as the reference. Finally, linear regression analysis was performed to evaluate the trend in significant AI use over time, and the corresponding linear regression line was plotted. Because all included studies had complete data for the variables of interest (ie, date of publication, journal of publication, and AI-generated content score), missing data were not an issue. All statistical analyses were conducted using SPSS Statistics for Windows (Version 29.0; IBM), with P < .05 defined as indicating statistical significance.

Results

A total of 518 articles published before the release of ChatGPT were assessed to establish the AI-generated content threshold. In these articles, the mean AI-generated content was 10.43% ± 9.13%. The threshold was calculated as the mean plus 2 standard deviations: 10.43% + (2 × 9.13%) = 28.69%. The bootstrap procedure yielded a 95% CI of 27.90% to 29.47% for the threshold, supporting its robustness and reliability.

AI-Generated Content Across Journals

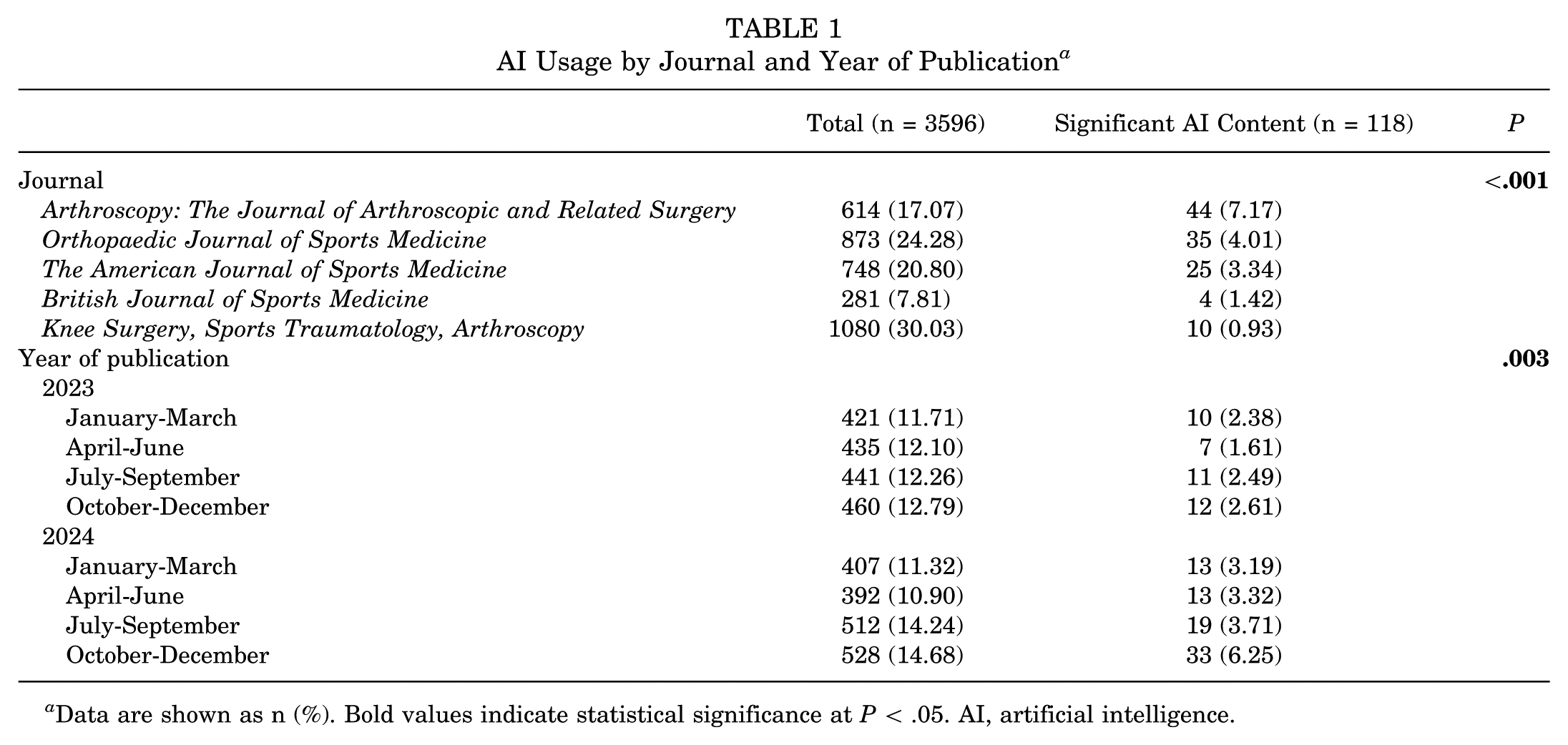

A total of 3596 research articles published across the 5 sports medicine journals after the release of ChatGPT were extracted, cleaned, and analyzed. This included 614 articles (17.07%) from Arthroscopy, 873 (24.28%) from OJSM, 748 (20.80%) from AJSM, 281 (7.81%) from BJSM, and 1080 (30.03%) from KSSTA. Based on the established threshold, 118 (3.28%) of the articles published after the release of ChatGPT were determined to have significant AI-generated content.

The percentage of articles that surpassed the established threshold across all 5 sports medicine journals is plotted in Figure 1. The percentage of articles that surpassed the established threshold was significantly different across the 5 journals, with 7.17% published in Arthroscopy, 4.01% in OJSM, 3.34% in AJSM, 1.42% in BJSM, and 0.93% in KSSTA (P < .001) (Table 1).
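As a rough check on the between-journal comparison, the Pearson chi-square statistic can be recomputed from the journal totals and percentages given in the text. The sketch below is an illustration only. The flagged counts are back-calculated by rounding (total × reported percentage), which is an assumption, and the helper names are hypothetical. The closed-form tail probability used here holds only for an even number of degrees of freedom, which is the case with 5 journals (df = 4).

```python
import math

# Totals and percentages are from the text; flagged counts are back-calculated
# by rounding total * percentage, so they are an assumption, not reported data.
TOTALS = {"Arthroscopy": 614, "OJSM": 873, "AJSM": 748, "BJSM": 281, "KSSTA": 1080}
PERCENT_FLAGGED = {"Arthroscopy": 7.17, "OJSM": 4.01, "AJSM": 3.34, "BJSM": 1.42, "KSSTA": 0.93}
FLAGGED = {j: round(TOTALS[j] * PERCENT_FLAGGED[j] / 100) for j in TOTALS}


def chi_square_2xk(flagged, totals):
    """Pearson chi-square statistic and degrees of freedom for a 2 x k table."""
    n = sum(totals.values())
    overall_rate = sum(flagged.values()) / n
    stat = 0.0
    for journal, total in totals.items():
        exp_yes = total * overall_rate      # expected flagged under independence
        exp_no = total - exp_yes            # expected not flagged
        obs_yes = flagged[journal]
        obs_no = total - obs_yes
        stat += (obs_yes - exp_yes) ** 2 / exp_yes + (obs_no - exp_no) ** 2 / exp_no
    return stat, len(totals) - 1


def chi2_sf_even_df(x, df):
    """Upper-tail chi-square probability; this closed form is valid for even df only."""
    if df % 2 != 0:
        raise ValueError("closed form requires an even number of degrees of freedom")
    half = x / 2.0
    return math.exp(-half) * sum(half ** k / math.factorial(k) for k in range(df // 2))


stat, df = chi_square_2xk(FLAGGED, TOTALS)
p_value = chi2_sf_even_df(stat, df)
print(f"flagged counts: {FLAGGED}")
print(f"chi-square = {stat:.2f}, df = {df}, P = {p_value:.2e}")
```

The back-calculated counts sum to the 118 flagged articles reported above, which suggests the rounding assumption is reasonable.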

Figure 1. Published articles with significant artificial intelligence (AI) use in major sports medicine journals.

Table 1. AI Usage by Journal and Year of Publication^a

^a Data are shown as n (%). Bold values indicate statistical significance at P < .05. AI, artificial intelligence.

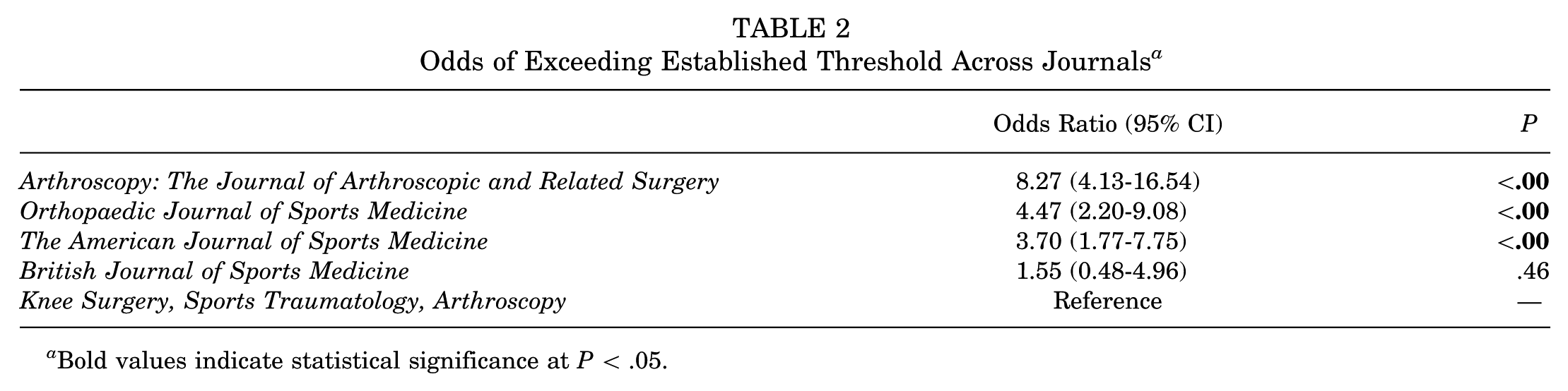

Multivariable logistic regression analysis was performed using KSSTA as the reference journal, given that it had the lowest percentage of AI-generated articles. Arthroscopy (odds ratio [OR], 8.27 [95% CI, 4.13-16.54]; P < .001), OJSM (OR, 4.47 [95% CI, 2.20-9.08]; P < .001), and AJSM (OR, 3.70 [95% CI, 1.77-7.75]; P < .001) had significantly higher odds of publishing articles with significant AI use, whereas BJSM (OR, 1.55 [95% CI, 0.48-4.96]; P = .465) had similar odds of publishing articles with significant AI use compared with KSSTA (Table 2).
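The odds ratios above can be approximated from 2 × 2 counts. The sketch below computes crude odds ratios with Wald 95% CIs against KSSTA. It assumes that the flagged counts can be back-calculated from the reported percentages and that journal was the only predictor in the model; the covariates are not listed in the text, so this is not necessarily the authors' exact model. The function name is hypothetical.

```python
import math

# (flagged, total) per journal. Totals are from the text; flagged counts are
# back-calculated from the reported percentages (an assumption).
COUNTS = {
    "Arthroscopy": (44, 614),
    "OJSM": (35, 873),
    "AJSM": (25, 748),
    "BJSM": (4, 281),
    "KSSTA": (10, 1080),
}


def odds_ratio_wald(flagged, total, ref_flagged, ref_total, z=1.96):
    """Crude odds ratio versus the reference group with a Wald 95% CI."""
    a, b = flagged, total - flagged              # journal: flagged, not flagged
    c, d = ref_flagged, ref_total - ref_flagged  # reference: flagged, not flagged
    odds_ratio = (a * d) / (b * c)
    se_log_or = math.sqrt(1 / a + 1 / b + 1 / c + 1 / d)
    lower = math.exp(math.log(odds_ratio) - z * se_log_or)
    upper = math.exp(math.log(odds_ratio) + z * se_log_or)
    return odds_ratio, lower, upper


ref = COUNTS["KSSTA"]
for journal, (flagged, total) in COUNTS.items():
    if journal == "KSSTA":
        continue  # reference journal, odds ratio is 1 by definition
    or_, lo, hi = odds_ratio_wald(flagged, total, *ref)
    print(f"{journal}: OR {or_:.2f} (95% CI {lo:.2f}-{hi:.2f})")
```

Under these assumptions the crude estimates land close to the reported values, and the interval for BJSM spans 1, in line with the nonsignificant result.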

Table 2. Odds of Exceeding Established Threshold Across Journals^a

^a Bold values indicate statistical significance at P < .05.

Temporal Trend of AI-Generated Content

The percentage of articles determined to have significant AI-generated content increased significantly over time, from 2.38% during January to March 2023 to 6.25% during October to December 2024 (P = .003) (Table 1).

Moreover, linear regression analysis demonstrated a significant upward trend in AI-generated articles across all sports medicine journals of 0.16% per month, which corresponds to an annual increase of 1.94% (r² = 0.34; P = .003) (Figure 2). This trend was driven by a statistically significant increase in AI-generated articles published in Arthroscopy (r² = 0.26; P = .011), with statistically nonsignificant changes in the other journals (Figure 3).
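The trend estimate is an ordinary least squares fit of the monthly percentage of flagged articles on time. The sketch below shows how the slope, the annualized slope, and the coefficient of determination relate to one another. The monthly values are made-up placeholders because the per-month data are not given in the text, and `linear_trend` is a hypothetical helper. Note that 12 × 0.16 = 1.92, slightly below the reported 1.94, presumably because the annual figure was derived from the unrounded slope.

```python
def linear_trend(y):
    """OLS fit of y on month index 0..n-1; returns slope, intercept, r squared.

    Assumes at least two observations and a non-constant series.
    """
    n = len(y)
    x = list(range(n))
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    syy = sum((yi - mean_y) ** 2 for yi in y)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    r_squared = (sxy ** 2) / (sxx * syy)
    return slope, intercept, r_squared


# Made-up monthly percentages for illustration only; NOT the study data.
monthly_pct = [2.0, 3.1, 1.8, 2.9, 3.5, 2.2, 4.0, 3.3, 2.7, 4.4, 3.9, 4.8]
slope, intercept, r2 = linear_trend(monthly_pct)
print(f"slope = {slope:.3f} points/month ({12 * slope:.2f} points/year), r2 = {r2:.2f}")
```

The slope is expressed in percentage points per month, so multiplying by 12 gives the absolute annual change in the share of flagged articles.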

Figure 2. Percentage of all included sports medicine articles exceeding the established artificial intelligence (AI) usage threshold between January 2023 and December 2024. The solid line represents the linear regression line (r² = 0.34).

Figure 3. Journal-specific percentage of articles exceeding the established artificial intelligence (AI) usage threshold between January 2023 and December 2024. The differently colored lines represent the linear regression lines.

Discussion

The most important finding of this study was that only 3.28% of articles published in the 5 most impactful sports medicine journals were determined to have significant AI-generated content. However, AI use rose during the study period, from 2.38% during January to March 2023 to 6.25% during October to December 2024, which corresponds to a monthly increase of 0.16% in AI-generated articles. ChatGPT’s ability to generate texts has been thoroughly discussed in the literature.4,16 However, it is important to acknowledge its limitations, including its inability to reliably generate accurate content, retrieve valid references, or verify the accuracy of the content that it generates.19,32 As such, analyzing the prevalence and trend of AI-generated articles in sports medicine literature is a crucial starting point.

Recent studies that assessed the use of AI in orthopaedic literature have reported that 10% to 17% of published articles had significant AI use.5,8,31 The low but increasing trend of AI-generated articles in sports medicine research may be explained by the field’s gradual adoption and implementation of AI in research practices. Unlike other medical specialties and orthopaedic disciplines that have rapidly integrated AI tools for diagnostics and manuscript writing, studies have shown that sports medicine might be adapting at a slower pace.8,35 Moreover, the significant difference in the percentage of AI-generated articles across the sports medicine journals is intriguing. One might expect that the journal with the highest publication volume would also have the highest percentage of AI-generated articles. However, this was not the case, as KSSTA accounted for the largest percentage of published articles in this study (30.03%) but had the lowest percentage of published articles with significant AI-generated content (0.93%). In contrast, Arthroscopy had the highest percentage of published articles with AI-generated content (7.17%), which can be partially explained by its explicit policies published in 2024 that allow the use of AI in manuscript writing.11,28 The journal permits authors to use AI generative tools such as ChatGPT to improve the readability of their articles, with the acknowledgment that they review, edit, and take full responsibility for the accuracy of their content. 28 Moreover, unlike other journals that could have stricter limitations 40 or lack clear policies, Arthroscopy acknowledges the role of AI in scientific writing and has required structured disclosure statements rather than discouraged its use. These guidelines could be one factor contributing to a higher percentage of published articles with AI-generated content in Arthroscopy compared with other sports medicine journals. 
However, other explanations, such as differences in the authors’ familiarity with AI tools, research focus, or demographic characteristics, could also contribute to this difference. The recent development of these policies further indicates that sports medicine journals have only recently integrated the use of AI in scientific writing.11,28

As AI tools are becoming more advanced and accessible, sports medicine researchers are beginning to acknowledge their potential benefits in improving literature reviews, generating coherent texts, and assisting in data analysis and interpretation.30,35 Moreover, the widespread adoption of AI across other scientific disciplines could be another reason for its growing use in sports medicine research.7,17,18,21,26,39 With researchers becoming more familiar with AI’s role in manuscript preparation, albeit to a varying degree across stages, the upward trend in AI-generated articles is expected to continue. 31 To manage this increase and ensure the integrity of published articles, more sports medicine journals should establish clear policies for the use of AI. For instance, BJSM stated that, as of September 2023, authors are required to declare the use of AI along with a detailed description of how it was used. If they fail to do so, the article in question is subject to rejection or even retraction. KSSTA, as of February 2023, announced that it is against the use of AI and is planning to incorporate AI detection tools in the future. 12 Both AJSM and OJSM stated, as of August 2024, that AI is allowed only for language correction and require authors to declare any AI use. In contrast, Arthroscopy stated that, as of October 2023, it allows the use of AI to improve readability and language, provided that authors take full responsibility for reviewing, editing, and ensuring the accuracy of the content generated by AI. Editorial processes must incorporate structured requirements and ethical oversight while embracing advancements in AI and encouraging its coordinated use.

Detecting fabricated and inaccurate information has always been challenging, and AI further complicates this issue. 6 Studies have shown that even trained human raters were only able to detect 71.4% of AI-generated content, with an interrater agreement of only 59%.12,24 Given these limitations, the traditional peer review process alone is insufficient to detect AI-generated content. To mitigate this challenge, Brameier et al 6 proposed several measures to improve the integrity of scientific publishing. Editorial teams should integrate AI detection tools in their peer review process, verify that references exist by cross-checking them against databases such as PubMed, and ensure that prospective trials are registered on ClinicalTrials.gov. 6 Many journals are now aware of the use of AI in research articles, and some require authors to disclose and describe their use of AI upon submitting their manuscripts.14,37 However, Tang et al 38 reported that only 38% of journals explicitly mention AI use guidelines in their “Instructions to Authors.” Whether the authors accurately report the use of AI in their articles remains uncertain. Some may fear that disclosing the use of AI could result in rejection from the journal, while others may not fully understand what is considered AI use in their work. It is important to note that LLMs such as ChatGPT represent only one aspect of the use of AI in scientific writing. In fact, even common manuscript tools such as grammar checkers and spell checkers are now being powered by LLMs, which could result in the text being flagged as AI generated by tools such as ZeroGPT even when the authors do not actively or knowingly use LLMs for assistance in writing. 
To clarify, AI use itself is not problematic; rather, it is significant AI use, in which content undergoes major alterations or inaccurate or nonexistent references are generated, that would compromise the integrity of the articles.16,19 Therefore, explicit guidelines and their enforcement are necessary to encourage the ethical use of AI in scientific writing. Journals should also state clear consequences for misconduct to improve transparency and compliance. 6 These actions are essential to preserve the credibility of sports medicine literature in the AI era.

This study has several potential limitations. First, AI detection tools, such as ZeroGPT, may not accurately and reliably determine the true percentage of AI-generated content in research articles. In fact, different AI detection tools have been shown to report significantly different percentages of AI-generated content for the same text, which could lead to false positives and false negatives. 2 To mitigate this limitation, a single detection tool was used in this study, and a threshold based on AI-generated content scores before the release of ChatGPT was established and used to assess articles published after the release of ChatGPT. In addition, the methodology used to generate this threshold was kept consistent with previous literature, although there is a concern that this threshold may be too high to capture AI usage. 8 Moreover, ZeroGPT was chosen to allow comparisons with previous studies, including the one by Callanan et al 8 and others from different specialties. However, relying solely on a single AI detection tool is still a limitation, and future studies should aim to validate our findings using other AI detection tools. Second, the threshold for defining “significant AI-generated content” was based on articles published in 2013, which may not adequately account for evolving writing styles and changes in editorial practices. Third, this study only analyzed articles from a select number of high-impact sports medicine journals, which might not fully capture the broader scope of sports medicine literature. Fourth, this study did not assess the references and figures of articles, which are elements in which AI has been known to “hallucinate.” Fifth, this study did not evaluate whether ZeroGPT adequately detected AI usage in articles that specifically acknowledged the use of AI, because such acknowledgment is not consistently reported across all journals and because authors may not always report their AI usage. We also did not record the number of articles that acknowledged the use of AI. Finally, this study did not assess the accuracy of AI-generated content and therefore did not evaluate its true impact on the integrity of sports medicine articles.

Conclusion

AI usage in sports medicine articles remains low (3.28%) but is steadily rising. The variability across journals suggests that editorial policies could play a major role in allowing for the integration of AI in sports medicine research, although other factors such as the authors’ familiarity with AI tools, research focus, and demographic characteristics could also contribute.

Footnotes

Final revision submitted May 16, 2025; accepted June 5, 2025.

One or more of the authors has declared the following potential conflict of interest or source of funding: A.H.D. has received royalties from Spineart, Stryker, and Medicrea; consulting fees from Medtronic; research support from Alphatec, Medtronic, and Orthofix; grant funding from Medtronic; and fellowship support from Medtronic. B.D.O. has received consulting fees from or has an advisory relationship with CONMED and Vericel and has a patent with royalties paid by CONMED; he is also the editor in chief of The American Journal of Sports Medicine. AOSSM checks author disclosures against the Open Payments Database (OPD). AOSSM has not conducted an independent investigation on the OPD and disclaims any liability or responsibility relating thereto.