Abstract

Background:

In professional sports, injuries resulting in loss of playing time have serious implications for both the athlete and the organization. Efforts to quantify injury probability utilizing machine learning have been met with renewed interest, and the development of effective models has the potential to supplement the decision-making process of team physicians.

Purpose/Hypothesis:

The purpose of this study was to (1) characterize the epidemiology of time-loss lower extremity muscle strains (LEMSs) in the National Basketball Association (NBA) from 1999 to 2019 and (2) determine the validity of a machine-learning model in predicting injury risk. It was hypothesized that time-loss LEMSs would be infrequent in this cohort and that a machine-learning model would outperform conventional methods in the prediction of injury risk.

Study Design:

Case-control study; Level of evidence, 3.

Methods:

Performance data and rates of the 4 major muscle strain injury types (hamstring, quadriceps, calf, and groin) were compiled from the 1999 to 2019 NBA seasons. Injuries included all publicly reported injuries that resulted in lost playing time. Models to predict the occurrence of a LEMS were generated using random forest, extreme gradient boosting (XGBoost), neural network, support vector machines, elastic net penalized logistic regression, and generalized logistic regression. Performance was compared utilizing discrimination, calibration, decision curve analysis, and the Brier score.

Results:

A total of 736 LEMSs resulting in lost playing time occurred among 2103 athletes. Important variables for predicting LEMS included previous number of lower extremity injuries; age; recent history of injuries to the ankle, hamstring, or groin; and recent history of concussion as well as 3-point attempt rate and free throw attempt rate. The XGBoost machine achieved the best performance based on discrimination assessed via internal validation (area under the receiver operating characteristic curve, 0.840), calibration, and decision curve analysis.

Conclusion:

Machine learning algorithms such as XGBoost outperformed logistic regression in the prediction of a LEMS that will result in lost time. Several variables increased the risk of LEMS, including a history of various lower extremity injuries, recent concussion, and total number of previous injuries.

Injuries in professional athletes are detrimental to both the team and the sport overall. 33 Time missed from sport can be detrimental from not only a competitive perspective but also a financial one. 33,41 Lower extremity muscle strains (LEMSs) are some of the most common injuries in athletes. One study on gastrocnemius-soleus complex injuries in National Football League (NFL) athletes reported at least 2 weeks of missed playing time on average. 41 In a summative report on time out of play for Major and Minor League Baseball players, the authors reported that the most common injuries were related to muscle strains or tears (30%). In the same study, hamstring strains were the most common injury in approximately 7% of the athletes and resulted in a total of more than 46,000 days missed, with a mean of 14.5 days missed per player. In addition, approximately 3.6% of these injuries were season ending and 2.6% recurred at least once more. 1 Two additional LEMSs were among the top 10 most common injuries in Major League Baseball (MLB) players, resulting in additional missed days. While the combined incidence and outcomes of LEMSs have not been well studied in National Basketball Association (NBA) athletes, in a 17-year overview of injuries in NBA athletes, Drakos et al 6 identified hamstring and adductor strains to be among the top 5 most frequently encountered injuries, with quadriceps and hip flexor strains representing significant proportions.

Machine learning has become increasingly recognized as a useful tool in medicine, including orthopaedic surgery. 19,29,34 By allowing for the creation of predictive models that can improve accuracy, machine learning can help guide decision making for not only physicians but also the patients. In addition, machine learning has the distinct advantage of performing well when handling complex relationships by allowing accurate prediction from many inputs. 12

Because LEMSs are frequent, result in substantial missed time, and commonly recur, it is important to determine the factors that contribute most to them. In addition, no current models exist delineating important risk factors for LEMS in professional NBA athletes. Therefore, the purpose of this study was to (1) create accurate machine learning models for the prediction of LEMS in NBA athletes and (2) compare the predictive performance of these models with that of conventional logistic regression. We hypothesized that time-loss LEMSs would occur infrequently in this elite athlete population and that machine learning would allow for the creation of customized risk-predictive tools with higher discrimination than conventional logistic regression.

Methods

Guidelines

This study was conducted in adherence with the Guidelines for Developing and Reporting Machine Learning Predictive Models in Biomedical Research as well as the Transparent Reporting of a Multivariable Prediction Model for Individual Prognosis or Diagnosis guidelines. 3,25 A detailed modeling workflow is available in the Appendix, and definitions of commonly encountered machine-learning terminology are available in Appendix Table A1. This study was considered exempt from institutional review board approval.

Data Collection

NBA athlete data were publicly sourced from 3 online platforms: www.prosportstransactions.com, www.basketball-reference.com, and www.sportsforecaster.com. Injuries included all publicly reported injuries resulting in loss of playing time. Data were compiled for all players from the 1999 through 2019 NBA seasons (over a 20-year period). Data collected included demographic characteristics, prior injury documentation, and performance metrics.

Variables and Outcomes

The primary outcome of interest was risk of sustaining a major muscle strain, which was defined as any muscle strain that led to loss of playing time based on movement to and from the injury list, as noted by the publicly available compilation of professional basketball transactions. The major muscle strain injury types considered in the model consisted of hamstring, quadriceps, calf, and groin muscle strains. Demographic variables included age, career length, and player position. Clinical variables included recent injury history, defined as one of the following injuries within 8 weeks of the case injury: groin, quadriceps, hamstring, ankle, back injury, or concussion; remote injury history, defined as any history of the injuries before the case injury; and previous total count of lower extremity injuries. Performance metrics, comprising both basic and advanced statistics, were also included. Notable advanced statistics included the 3-point attempt rate (percentage of player field goal attempts that are for 3 points) as well as the free throw attempt rate (the ratio of a player’s free throw attempts to field goal attempts). The full list of variables considered for feature selection is provided in Appendix Table A2. There were no missing data. All variables collected in the final compilation were included in recursive feature elimination (RFE) using a random forest algorithm, a technique demonstrated to effectively isolate features correlated with the desired outcome while eliminating variables with high collinearity within high-dimensional data. 5,30
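The two advanced statistics defined above are simple ratios, and a minimal Python sketch can make the definitions concrete. The function names, the zero-attempt convention, and the season totals below are illustrative assumptions; the study's own analysis was performed in R and its code is not reproduced here.

```python
def three_point_attempt_rate(three_point_attempts, field_goal_attempts):
    """Share of a player's field goal attempts that are 3-point attempts."""
    if field_goal_attempts == 0:
        return 0.0  # assumption: players with no attempts get a rate of 0
    return three_point_attempts / field_goal_attempts


def free_throw_attempt_rate(free_throw_attempts, field_goal_attempts):
    """Ratio of a player's free throw attempts to field goal attempts."""
    if field_goal_attempts == 0:
        return 0.0  # same assumed convention as above
    return free_throw_attempts / field_goal_attempts


# Hypothetical season totals, not taken from the study data.
print(three_point_attempt_rate(300, 1000))  # 0.3
print(free_throw_attempt_rate(250, 1000))   # 0.25
```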

Model Training

After feature selection, modeling was performed using the selected features with each of the following candidate machine learning algorithms: elastic net penalized regression, random forest, extreme gradient boosted (XGBoost) machine, neural network, support vector machines, and logistic regression. Variables significant on logistic regression were entered into a simplified XGBoost model for benchmarking.

Models were trained using 10-fold cross-validation repeated 3 times. The performance of this model was then evaluated on the respective test set, and no data points from the training set were included in the test set. The model was then internally validated via 0.632 bootstrapping with 1000 resample sets, because of this technique’s ability to optimize evaluation of both model bias and variance. 11,38,39 The model was thus tested on all data points available, and evaluation metrics were summarized with standard distributions of values.
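The 0.632 bootstrap described above combines an optimistic estimate (performance on the same data used for fitting) with a pessimistic one (performance on the rows left out of each resample). The following Python sketch illustrates that weighting under stated assumptions: `fit_and_score` is a hypothetical callback that fits a model on the training indices and returns an error on the test indices, and the error scale is arbitrary. It is not the authors' R workflow.

```python
import random


def bootstrap_632(n_rows, fit_and_score, n_resamples=1000, seed=0):
    """Estimate prediction error with the 0.632 bootstrap.

    fit_and_score(train_idx, test_idx) is a hypothetical callback that
    returns an error value for a model fit on train_idx and scored on test_idx.
    """
    rng = random.Random(seed)
    all_idx = list(range(n_rows))

    # Apparent error: fit and evaluate on the full data set.
    apparent = fit_and_score(all_idx, all_idx)

    oob_errors = []
    for _ in range(n_resamples):
        # Sample n_rows indices with replacement.
        train = [rng.randrange(n_rows) for _ in range(n_rows)]
        in_bag = set(train)
        # Out-of-bag rows never appear in this resample.
        oob = [i for i in all_idx if i not in in_bag]
        if oob:
            oob_errors.append(fit_and_score(train, oob))

    if not oob_errors:
        raise ValueError("no out-of-bag rows were produced")
    oob_mean = sum(oob_errors) / len(oob_errors)

    # Weighted combination that gives the estimator its name.
    return 0.368 * apparent + 0.632 * oob_mean


# Toy callback: pretends the error is 0.2 in-sample and 0.4 out-of-bag.
toy = lambda train, test: 0.2 if len(test) == 10 else 0.4
print(round(bootstrap_632(10, toy, n_resamples=50), 4))
```

Roughly 63.2% of distinct rows appear in each resample, which is where the weights come from.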

Model Selection

The optimal model was chosen based on area under the receiver operating characteristic curve (AUC). Models were compared by discrimination, calibration, and Brier score values (Figure 1A). An AUC of 0.70 to 0.80 was considered acceptable, and an AUC of 0.80 to 0.90 was considered excellent. 13 The Brier score, defined as the mean squared difference between a model's predicted probabilities and the observed outcomes, was calculated for each candidate model. The Brier scores of candidate algorithms were then compared with the Brier score of the null model, which is a model that assigns a class probability equal to the sample prevalence of the outcome for every prediction.
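The selection metrics above can be written out in a few lines. The sketch below computes the AUC as the probability that a randomly chosen injured case receives a higher predicted probability than a randomly chosen uninjured case, along with the Brier score and its null-model reference. The toy predictions and outcomes are invented for illustration and are unrelated to the study data.

```python
def brier_score(probs, outcomes):
    """Mean squared difference between predicted probabilities and 0/1 outcomes."""
    return sum((p - y) ** 2 for p, y in zip(probs, outcomes)) / len(outcomes)


def null_brier_score(outcomes):
    """Brier score of a model that always predicts the sample prevalence."""
    prevalence = sum(outcomes) / len(outcomes)
    return brier_score([prevalence] * len(outcomes), outcomes)


def auc(probs, outcomes):
    """AUC via pairwise comparison of positive and negative cases (ties count 0.5)."""
    pos = [p for p, y in zip(probs, outcomes) if y == 1]
    neg = [p for p, y in zip(probs, outcomes) if y == 0]
    wins = 0.0
    for p_pos in pos:
        for p_neg in neg:
            if p_pos > p_neg:
                wins += 1.0
            elif p_pos == p_neg:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Invented toy data: 1 = injury, 0 = no injury.
outcomes = [0, 0, 1, 1]
probs = [0.1, 0.4, 0.35, 0.8]
print(auc(probs, outcomes))                    # 0.75
print(round(brier_score(probs, outcomes), 4))  # 0.1581
print(null_brier_score(outcomes))              # 0.25
```

For a binary outcome with prevalence p, the null Brier score reduces to p(1 − p), so a candidate model is informative only if its Brier score falls below that value.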

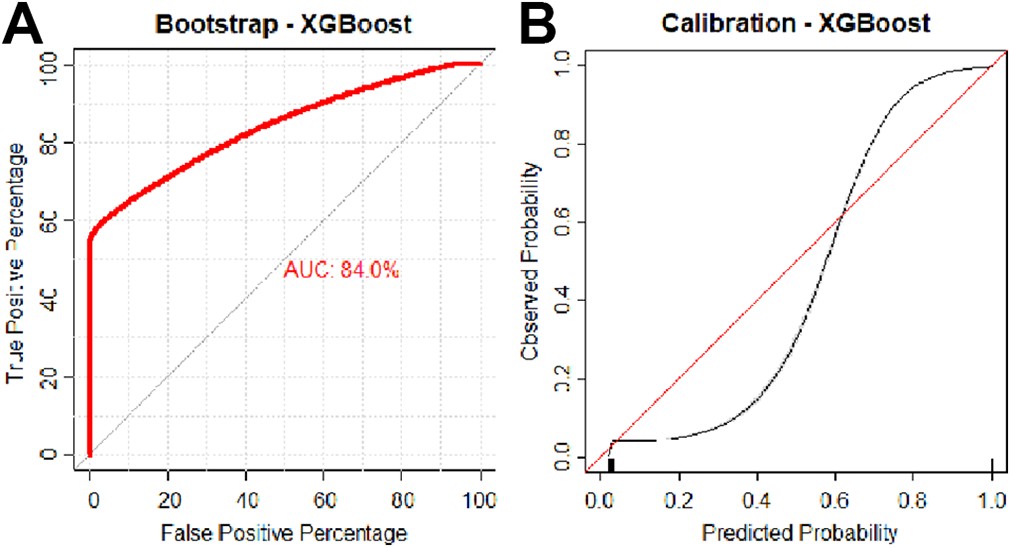

(A) Discrimination and (B) calibration of the extreme gradient boosted machine. AUC, area under the receiver operating characteristic curve.

The final model was calibrated with the observed frequencies within the test population and summarized in a calibration plot (Figure 1B). Ideally, the model is calibrated to a straight line, with an intercept of 0 and slope of 1 corresponding to perfect concordance of model predictions to observed frequencies in the data.
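A calibration plot of this kind is typically built by grouping predictions and comparing the mean predicted probability in each group with the observed event frequency. The sketch below produces those points using equal-width bins, which is an assumption made for illustration; the binning scheme and the slope and intercept estimation used in the study are not specified here.

```python
def calibration_bins(probs, outcomes, n_bins=10):
    """Return (mean predicted probability, observed frequency, count) per bin.

    Equal-width bins on [0, 1] are an illustrative assumption.
    """
    bins = [[] for _ in range(n_bins)]
    for p, y in zip(probs, outcomes):
        # Clamp so that p == 1.0 falls into the last bin.
        index = min(int(p * n_bins), n_bins - 1)
        bins[index].append((p, y))

    rows = []
    for members in bins:
        if not members:
            continue  # skip empty bins rather than report undefined rates
        mean_pred = sum(p for p, _ in members) / len(members)
        observed = sum(y for _, y in members) / len(members)
        rows.append((mean_pred, observed, len(members)))
    return rows


# Invented toy data: points on the diagonal indicate good calibration.
for mean_pred, observed, count in calibration_bins(
    [0.1, 0.1, 0.9, 0.9], [0, 0, 1, 1], n_bins=2
):
    print(round(mean_pred, 2), observed, count)
```

Points lying on the 45-degree line correspond to the intercept of 0 and slope of 1 described above.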

Model Implementation

The benefit of implementing the predictive algorithm into practice was assessed via decision curve analysis. These curves plot the net benefit against the predicted probabilities of each outcome, providing the cost-benefit ratio for every probability threshold of classifying a prediction as high risk. Additionally, curves demonstrating default strategies of changing management for all or no patients are included for comparative purposes.
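The net benefit plotted in a decision curve follows a standard formula: true positives per patient minus false positives per patient, with false positives weighted by the odds of the probability threshold. The Python sketch below applies that formula to a model and to the default strategy of changing management for all patients; the toy inputs are invented for illustration.

```python
def net_benefit(probs, outcomes, threshold):
    """Net benefit of flagging every case with predicted risk >= threshold."""
    n = len(outcomes)
    true_pos = sum(1 for p, y in zip(probs, outcomes) if p >= threshold and y == 1)
    false_pos = sum(1 for p, y in zip(probs, outcomes) if p >= threshold and y == 0)
    weight = threshold / (1 - threshold)  # threshold must be below 1
    return true_pos / n - (false_pos / n) * weight


def net_benefit_treat_all(outcomes, threshold):
    """Net benefit of the default strategy of changing management for everyone."""
    prevalence = sum(outcomes) / len(outcomes)
    return prevalence - (1 - prevalence) * threshold / (1 - threshold)


# Invented toy data: one injury among four athletes.
outcomes = [1, 0, 0, 0]
probs = [0.9, 0.6, 0.2, 0.1]
print(net_benefit(probs, outcomes, 0.5))        # 0.0
print(net_benefit_treat_all(outcomes, 0.5))     # -0.5
print(net_benefit_treat_all(outcomes, 0.0))     # 0.25
```

The strategy of changing management for no patients has a net benefit of zero at every threshold, so a model is useful at a given threshold only if its net benefit exceeds both zero and the treat-all value.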

Model Interpretability

Both global and local model interpretability and explanations were provided. Global model interpretability is provided as a plot of the model’s input variables normalized against the input considered to have the most contribution to the model prediction and Shapley Additive Explanations (SHAP), demonstrating how much each predictor contributes, either positively or negatively, to the model output. 24 Local explanations are provided using local-interpretable model-agnostic explanations, in which variable contributions for individual model predictions are visually depicted. 8,35
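To illustrate what a Shapley value measures, the sketch below computes exact Shapley values for a small toy model by averaging each feature's marginal contribution over every ordering of the features. This brute-force approach is practical only for a handful of inputs; SHAP software relies on faster algorithms, and the single reference ("baseline") input used here to represent an absent feature is an assumption made for illustration. The toy model and its three inputs are hypothetical.

```python
from itertools import permutations


def shapley_values(predict, x, baseline):
    """Exact Shapley values for one prediction.

    predict: function mapping a list of feature values to a number.
    x: feature values for the case being explained.
    baseline: reference values standing in for "feature absent" (assumption).
    """
    n = len(x)
    phi = [0.0] * n
    orderings = list(permutations(range(n)))
    for order in orderings:
        current = list(baseline)
        previous = predict(current)
        for i in order:
            # Switch feature i from its baseline value to its actual value
            # and record how much the prediction moves.
            current[i] = x[i]
            updated = predict(current)
            phi[i] += updated - previous
            previous = updated
    return [value / len(orderings) for value in phi]


# Hypothetical linear risk score with three inputs.
toy_model = lambda f: 2 * f[0] + 3 * f[1] - f[2]
print(shapley_values(toy_model, [1, 1, 1], [0, 0, 0]))  # [2.0, 3.0, -1.0]
```

The values sum to the difference between the prediction for the case and the prediction at the baseline, which is why positive and negative contributions can be read directly off a summary plot.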

Digital Application

The final model was incorporated into a web-based application to illustrate possible future model integration. It should be noted that this digital application remains exclusively for research and educational purposes until rigorous external validation has been conducted. In the digital application, athlete demographic and performance data are entered to generate outcome predictions with accompanying explanations. All data analysis was performed in R Version 4.0.2 using RStudio Version 1.2.5001.

Results

Patient Characteristics

A total of 2103 NBA athletes were included in the study over a 20-year period. The median career length was 6 years (interquartile range, 2-9 years), with an almost even breakdown between designated positions (Table 1). Hamstring (36.4%) and calf (36.1%) injuries were more prevalent compared with quadriceps (11.5%) and groin (15.9%) injuries. The incidence rate of LEMSs per athlete per season was 5.83%.

Baseline Characteristics of the Study Population (N = 2103) a

a Values are presented as n (%) or median (interquartile range). BMI, body mass index.

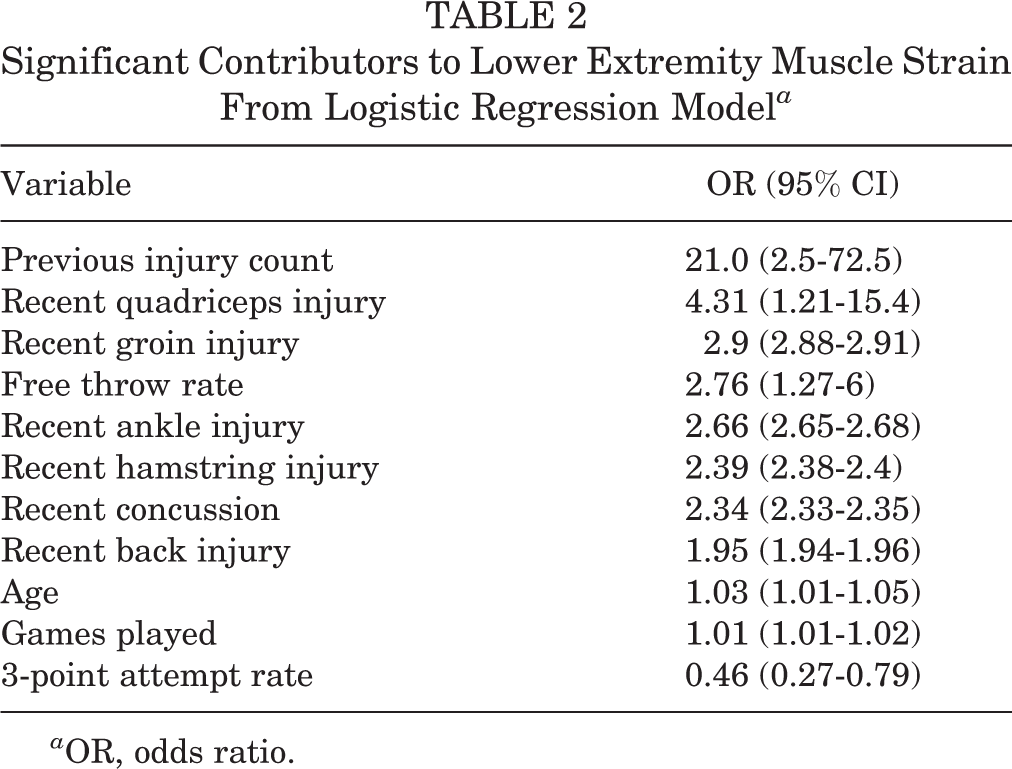

Multivariate Logistic Regression

After feature selection, multivariate logistic regression was used to generate models from the selected features and to estimate odds ratios (ORs) for statistically significant contributors to LEMS. The most important risk factor for LEMS was previous injury count (OR, 21.0; 95% CI, 2.5-72.5) (Table 2). The next 5 most important risk factors for LEMS, in order from most to least contributory, were recent quadriceps injury (OR, 4.31; 95% CI, 1.21-15.4), recent groin injury (OR, 2.9; 95% CI, 2.88-2.91), free throw attempt rate (OR, 2.76; 95% CI, 1.27-6), recent ankle injury (OR, 2.66; 95% CI, 2.65-2.68), and recent hamstring injury (OR, 2.39; 95% CI, 2.38-2.4) (Table 2). While significant, age and games played had a negligible effect in contributing to LEMS in the logistic regression (OR, 1.01-1.03), and 3-point attempt rate was protective against LEMS (OR, 0.46; 95% CI, 0.27-0.97).
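For readers less familiar with how odds ratios arise from a logistic regression, the sketch below shows the standard conversion: the OR is the exponential of a fitted coefficient, and a Wald 95% CI is obtained by exponentiating the coefficient plus or minus 1.96 standard errors. The coefficient and standard error are hypothetical and do not correspond to any variable in Table 2; the interval method used in the study is not stated here.

```python
import math


def odds_ratio_with_ci(beta, standard_error, z=1.96):
    """Convert a logistic regression coefficient to (OR, lower, upper).

    Uses a Wald interval; z = 1.96 gives an approximate 95% CI.
    """
    odds_ratio = math.exp(beta)
    lower = math.exp(beta - z * standard_error)
    upper = math.exp(beta + z * standard_error)
    return odds_ratio, lower, upper


# Hypothetical coefficient and standard error, for illustration only.
or_value, lower, upper = odds_ratio_with_ci(beta=1.0, standard_error=0.4)
print(round(or_value, 2), round(lower, 2), round(upper, 2))
```

An OR above 1 with an interval that excludes 1 indicates a risk factor, whereas an OR below 1 with an interval that excludes 1 indicates a protective association.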

Significant Contributors to Lower Extremity Muscle Strain From Logistic Regression Model a

a OR, odds ratio.

Model Creation and Performance

After multivariate logistic regression, machine-learning models were trained using the same variables identified from RFE. After model optimization, candidate model performances on internal validation were compared (Table 3). The random forest and XGBoost models had higher AUCs on internal validation data, 0.830 (95% CI, 0.829-0.831) and 0.840 (95% CI, 0.831-0.845), respectively, although XGBoost had a nonsignificantly higher Brier score. In this data set, conventional logistic regression had a significantly lower AUC on internal validation (0.818; 95% CI, 0.817-0.819) compared with the aforementioned models; similarly, this was lower than the AUC yielded by the simplified XGBoost model (0.832; 95% CI, 0.818-0.838). The calibration slope of models ranged from 0.997 for neural network to 1.003 for XGBoost, suggesting excellent estimation for all models (Table 3). The Brier score of models ranged from 0.029 for random forest to 0.31 for multiple models, indicating excellent accuracy. The XGBoost model had the highest overall AUC, with comparable calibration and Brier scores, and was therefore chosen as the best-performing candidate algorithm (Figure 1).

Model Assessment on Internal Validation Using 0.632 Bootstrapping With 1000 Resampled Data Sets (N = 2103) a

a Null model Brier score = 0.063. AUC, area under the receiver operating characteristic curve; SVM, support vector machine; XGBoost, extreme gradient boosted. Data in parentheses are 95% confidence intervals.

Variable Importance

The global importance of input variables used for XGBoost was assessed with previous lower extremity injury having a near 100% relative influence, followed by games played, free throw attempt rate and percentage, 3-point attempt rate, and assist percentage (Figure 2A). SHAP values (Figure 2B) are average marginal contributions of selected features across all possible coalitions and indicate the 3 most common features to be total rebound percentage, previous lower extremity injury count, and games played. As can be interpreted, age and games played affect the model in a positive direction. While there are a number of outliers in which a high injury count does not contribute positively to an increased probability of LEMS, the overall contribution of previous injury count as indicated by the mean SHAP value remains positive.

(A) Variable importance plot of the extreme gradient boosted (XGBoost) machine model. (B) Summary plot of Shapley (SHAP) values of the XGBoost model. Specifically, the global SHAP values are plotted on the x-axis with variable contributions on the y-axis. Numbers next to each input name indicate the mean global SHAP value, and gradient color indicates feature value. Each point represents a row in the original data set. Three-point attempt rate = percentage of player field goals that are for 3 points; free throw attempt rate = ratio of free throw attempts to field goal attempts. LE, lower extremity.

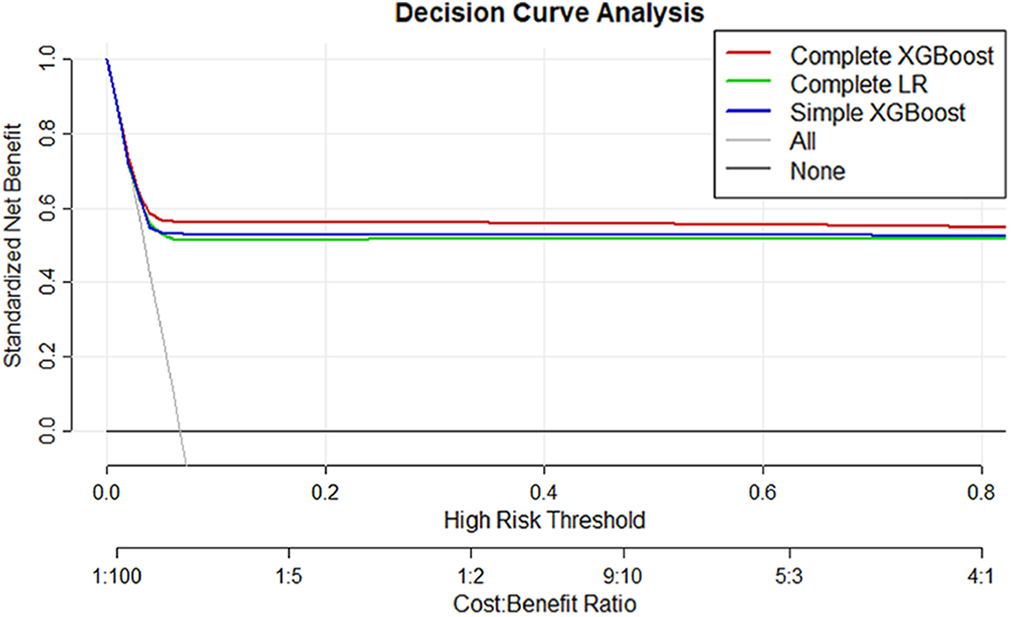

Decision Curve Analysis

Decision curve analysis was used to compare the net benefit derived from the trained XGBoost model. For comparison purposes, a decision curve was also plotted for a learned multivariate logistic regression model trained using the same parameters and inputs. The XGBoost model trained on the complete feature set demonstrated greater net benefit compared with logistic regression and other alternatives (Figure 3).

Decision curve analysis comparing the complete extreme gradient boosted (XGBoost) machine algorithm with the complete logistic regression as well as a simplified model utilizing select parameters. The downsloping line marked by “All” plots the net benefit from the default strategy of changing management for all patients, while the horizontal line marked “none” represents the strategy of changing management for none of the patients (net benefit is zero at all thresholds). The “All” line slopes down because at a threshold of zero, false positives are given no weight relative to true positives; as the threshold increases, false positives gain increased weight relative to true positives and the net benefit for the default strategy of changing management for all patients decreases. LR, logistic regression.

Interpretation

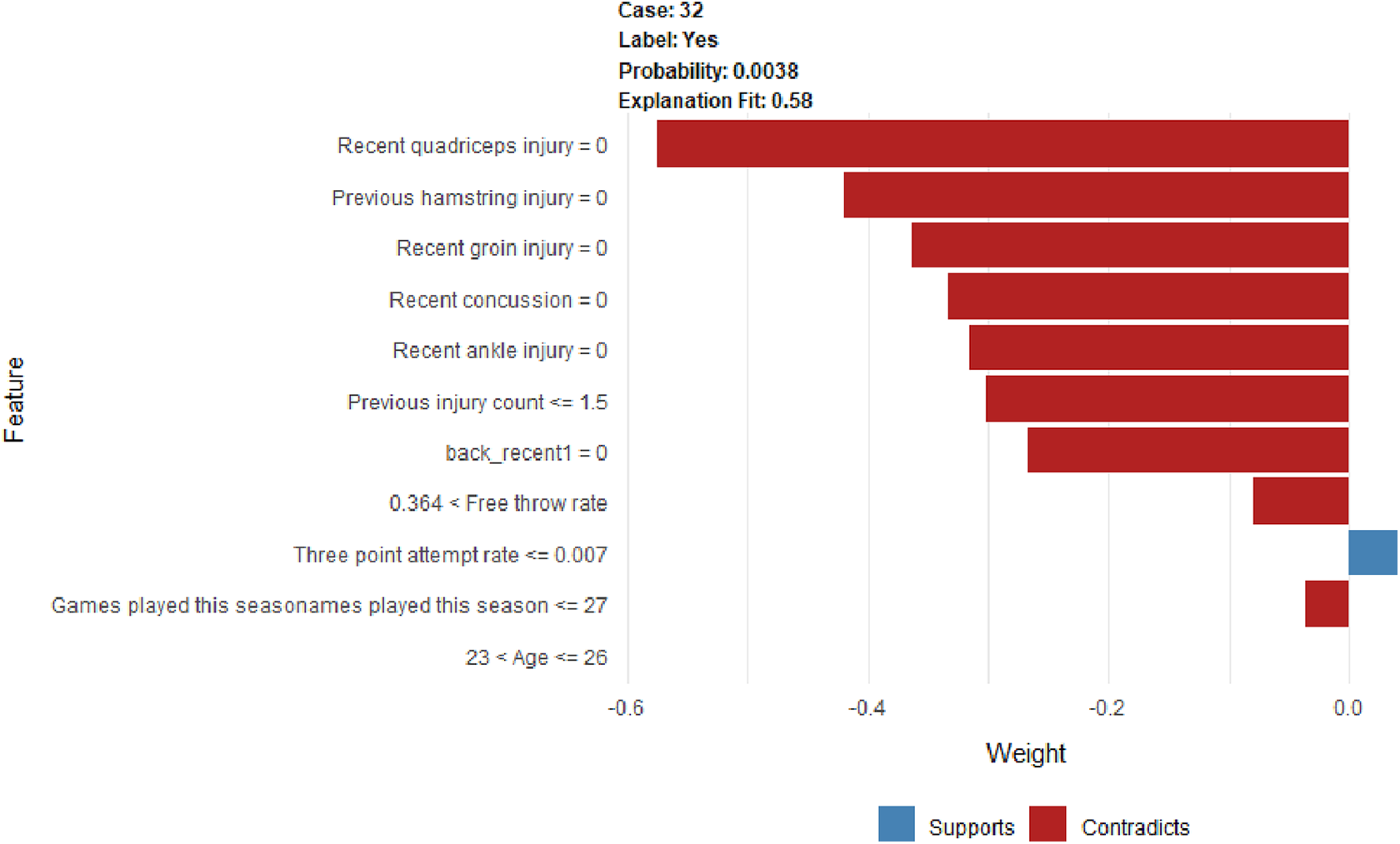

An example of a patient-level evaluation and variable importance explanation is provided in Figure 4. This patient was assigned a probability of 0.007 (approximately 1%) for sustaining LEMS. Features that decreased the patient’s risk for LEMS included lack of recent ankle injury; concussion; or groin, hamstring, quadriceps, or back injury (among others). Features that increased the patient’s risk of injury were a recent back injury in addition to 3-point shot percentage.

Example of individual patient-level explanation for the simplified extreme gradient boosted machine algorithm predictions. This athlete had a predicted injury risk of 0.77% at this point during the season. The only feature to support the likelihood of injury was a recent back injury.

For each patient or professional basketball athlete, baseline parameters can be collected or examined during the encounter to generate predictions regarding risk of LEMS in the athlete. These predictions can be utilized to inform counseling, modify exercise regimens, or dictate rest periods for athletes at high risk for LEMS. The final model was incorporated into a web-based application that generated predictions for probabilities of LEMS. The application (available at https://sportsmed.shinyapps.io/NBA_LE) is accessible from desktop computers, tablet computers, and smartphones. Default values are provided as placeholders in the interface, and the model requires complete cases to generate predictions and explanations.

Discussion

The principal findings of our study are that (1) the incidence of LEMSs in the NBA over the study period was 5.83%, and a number of significant features in the prediction of these injuries were identified; (2) the XGBoost algorithms outperformed logistic regression with regard to discrimination, calibration, and overall performance; and (3) the clinical model was incorporated into an open-access injury risk calculator for education and demonstration purposes.

Despite a number of studies evaluating injuries in the NBA, 20,36,37 there is a paucity of evidence regarding the rate of LEMS. A study by Drakos et al 6 found that hamstring strains (n = 413; 3.3%), defined as any that required missed time, physician referral, or emergency care, were among the most frequently encountered orthopaedic injuries in the NBA across a 17-year study period; our study reports a lower incidence (n = 268) over 20 years, likely given our accounting of only time-loss injuries. This likely reflects a similar overall deflation of injury incidence in our cohort among other body parts.

While similar investigations have been carried out in professional soccer, baseball, and American football athletes, 21,28,32 few studies have investigated the risk factors for LEMSs or characterized their epidemiology in professional basketball athletes. The input features that were significant on both multivariate logistic regression and global variable importance assessment included the following: previous injury count; games played; history of recent ankle, groin, hamstring, or back injury; history of a concussion; free throw percentage; and 3-point shot percentage. The investigation by Orchard et al 32 identified a strong relationship between LEMS and a recent history of same-site muscle strain. We similarly utilized the definition of recent recurrence as within 8 weeks of the index injury as described by Fuller et al, 7 because of the absence of such a consensus definition in the NBA, and corroborated this finding among basketball athletes. This relationship is intuitive, as premature return to play can predispose athletes to reinjury. However, we did not identify a relationship between nonrecent history of any injuries and the risk of an index injury, which suggests that injury risk for LEMSs may be equivalent between controls and injured athletes beyond the 8-week window within this cohort of elite athletes.

A relationship between ankle injuries and lower extremity biomechanics and postural stability has long been recognized. 2,22,23 However, whether this relationship is causative or correlative is more ambiguous. While a motion analysis study by Leanderson et al 22 identified a significantly increased range of postural sway among Swedish Division II basketball players with a history of lateral ankle sprains compared with controls, a more recent investigation by Lopezosa-Reca et al 23 found that athletes who had certain foot postures as described by the Foot Posture Index were more likely to experience lower extremity injuries such as lateral ankle sprains and patellar tendinopathy. Our findings suggest that altered foot biomechanics secondary to a recent ankle injury contribute at least partially to the increased risk of lower extremity muscle injuries, as nonrecent ankle injury was not found to be a significant risk factor.

The relationship between concussion and lower extremity injury has been extensively studied in the sports medicine literature, 10,15,16 with a number of hypotheses for the underlying mechanism, including resultant deficiencies in dynamic balance, neuromuscular control, cognitive accuracy, and gait performance. 4,14,27,31,40 This observation is corroborated by our study, which identified a concussion history as a significant risk factor in both the multivariate logistic regression and the machine learning models. Finally, an interesting protective correlation was identified between 3-point attempt rate and risk of lower extremity injury. One possible reason for this observation may lie in the role of the 3-point attempt rate as a proxy of playing style, as players who take a greater number of 3-point attempts usually play a less physical perimeter game. Conversely, the free throw rate represents the ability of a player to draw personal fouls from opponents and therefore is a measure of physical play and a strong predictor of injury risk.

On evaluation of the predictive models, both the complete and simple XGBoost models outperformed the logistic regression on both discrimination and the Brier score. Investigators have previously developed machine learning injury-prediction models for recreational athletes 17 as well as in professional sports, including the NFL, 42 National Hockey League, 26 and MLB. 18 These models utilized a range of inputs, from performance metrics to video recordings and motion kinematics. The present study evaluated a number of performance metrics as well as clinical injury history, which may present more actionable findings for the team physician. After external validation, prospective deployment of the model can integrate the athlete’s injury history in an 8-week window to provide a real-time snapshot of the athlete’s risk for experiencing a LEMS with excellent fidelity and reliability. Additionally, with contemporary improvements in computational and sensor technology, there has also been an increase in focus on the potential of global positioning system tracking data in real-time injury forecasting and prevention, 9 and machine learning technology is uniquely equipped to handle the sheer overabundance of data available through the automation of structure and pattern recognition. 12

Strengths and Limitations

The strengths of this study must be interpreted concurrently with a number of limitations. The first concerns the quality of the data source. While we were able to capture injury history and performance metrics that can serve as proxies for playing style, data extracted from publicly available sources do not offer insight into postinjury rehabilitation protocol or long-term management strategies for recurrent injuries. Additionally, detailed clinical data including physical examination and imaging findings were unavailable. Within these limitations, it is notable that the current machine learning algorithm reached an excellent level of concordance and calibration and that a simplified algorithm performed similarly to the complete logistic regression model; it should be within expectation that prospective incorporation of granular characteristics of the injury and the return-to-play protocol can continue to augment the performance of the algorithm. Second, the sampling remains limited to the population of elite athletes, and generalizability to those competing at the recreational or semiprofessional levels may remain questionable until further external validation; as such, use of the digital application is for education and demonstration only. In this context, an interesting future extension of this study may be a matched-cohort comparison of LEMS injury risk between professional and amateur athletes. Finally, the black box phenomenon is an inherent flaw of certain machine learning algorithms wherein transparency into model behavior is insufficient. For example, the complete model utilizes 25 inputs and can become extremely cumbersome for the user, especially for physicians to whom the effects of specific performance variables may not be clear from a clinical perspective. We have attempted to mitigate this by reducing the dimensions of the training data to produce a simplified model that is both clinically sound and easily deployable without significantly sacrificing its effectiveness. In addition, the application features a built-in local agnostic model explanation algorithm that can approximate the model dependence on each input for a given prediction.

Conclusion

Machine-learning algorithms such as XGBoost outperformed logistic regression in the effective and reliable prediction of a LEMS that will result in lost time. Factors resulting in an increased risk of LEMS included history of a back, quadriceps, hamstring, groin, or ankle injury; concussion within the previous 8 weeks; and total count of previous injuries.

Footnotes

Final revision submitted February 16, 2022; accepted May 11, 2022.

One or more of the authors has declared the following potential conflict of interest or source of funding: Support was received from the Foderaro-Quattrone Musculoskeletal-Orthopaedic Surgery Research Innovation Fund. A.P. has received consulting fees from Moximed. B.F. has received research support from Arthrex and Smith & Nephew, education payments from Medwest, consulting fees from Smith & Nephew and Stryker, royalties from Elsevier, and stock/stock options from iBrainTech, Jace Medical, and Sparta Biopharma. C.L.C. has received nonconsulting fees from Arthrex. AOSSM checks author disclosures against the Open Payments Database (OPD). AOSSM has not conducted an independent investigation on the OPD and disclaims any liability or responsibility relating thereto.

Ethical approval for this study was waived by Mayo Clinic.