Abstract

Background:

Produce prescription projects (PPRs) allow healthcare professionals to “prescribe” fruits and vegetables for patients experiencing food insecurity and a diet-related chronic disease. Evaluation of healthcare outcomes, utilization, and costs data is prudent to understand the impact of PPRs. However, substantial challenges exist. The objective of this study is to understand facilitators, barriers, lessons learned, and emergent best practices for data derived from electronic health records (EHR) among PPRs.

Design and methods:

A multiple methods case study was conducted with four PPRs funded through a pilot grant to use EHR-derived data to measure healthcare outcomes, utilization, and costs of health care. Data sources included grant applications (n = 4), data use agreements (DUAs; n = 4), memoranda of understanding (MOUs; n = 4), pre/post healthcare outcomes and utilization data, and qualitative interviews/focus groups (n = 10). For analysis, we used descriptive statistics; paired t-tests for changes in values pre/post PPR; and thematic qualitative analysis to construct themes.

Results:

The four cases shared varied healthcare outcomes and utilization measures and submitted less data than was outlined in their respective DUAs. Three salient themes emerged. PPR projects need (1) sufficient time and resources to develop procedures to collect and share healthcare data; (2) healthcare outcome measures tailored to PPR design, outcomes of interest, and EHR capabilities; and (3) technical support related to technology, data security, and data sharing.

Conclusions:

EHR data can provide insight on the impact of PPRs and related healthcare interventions on health outcomes and cost-effectiveness. Evaluation efforts must consider project capacity and ensure adequate resources to collect and securely share healthcare data.

Keywords

Significance for public health

Prevention and management of diet-related chronic disease is complex for households that experience food and nutrition insecurity. Many public health, clinical, and community organizations are working together to mitigate food and nutrition insecurity through interventions such as Food is Medicine (FIM). The suite of FIM interventions includes produce prescriptions, which allow healthcare professionals to “prescribe” fruits and vegetables for patients experiencing food insecurity and a diet-related chronic disease diagnosis. Rigorous evaluation of produce prescription projects (PPRs) is lacking. Given the intersection with healthcare, evaluation of healthcare outcomes, utilization, and cost data through the electronic health record (EHR) appears to be practical, yet challenges exist. This multiple case study evaluation elucidates facilitators, barriers, lessons learned, and emergent best practices among active PPRs collecting, sharing, and evaluating EHR-derived data.

Introduction/background

Food is medicine to promote food security and chronic disease prevention and management

Fruits and vegetables (FVs) are critical to prevention and management of diet-related chronic conditions, such as type 2 diabetes and cardiovascular disease.1–3 Adequate intake of FVs can be challenging for those residing in the United States (US) with low income and food or nutrition insecurity. 4 Food Is Medicine (FIM) approaches are being integrated into healthcare systems in the US to address chronic disease management, recognizing the critical role nutrition plays in health outcomes. FIM programs pair improved access to healthful foods, such as FVs, with intervention strategies (e.g., nutrition education) for eligible patients in the healthcare setting. They involve partnerships between healthcare organizations and other groups, such as community-based organizations and local food growers/producers.5,6

Produce prescription projects (PPRs) are an increasingly common type of FIM intervention focused on increasing FV intake. 7 These projects allow healthcare professionals to “prescribe” FVs for patients experiencing food insecurity and a diet-related chronic disease diagnosis. Since 2010, hundreds of PPRs have been launched throughout the US.7,8 These programs vary widely in priority audience, screening and eligibility procedures, prescription value, delivery mechanism (e.g., produce box, voucher), funders, implementation approaches, and evaluation components. Growing evidence suggests PPRs may increase FV purchasing 9 and consumption 10; reduce household food insecurity11,12; improve clinical health outcomes including hemoglobin A1c,13,14 diastolic blood pressure, 15 and body mass index 16; decrease cost to the healthcare system related to providing care 17; and improve patient experiences.18,19

USDA Gus Schumacher Nutrition Incentive Program (GusNIP) overview

The United States Department of Agriculture (USDA) National Institute of Food and Agriculture (NIFA) funds PPRs through the Gus Schumacher Nutrition Incentive Program (GusNIP). GusNIP is part of the USDA’s four-pillar approach to nutrition security 20 that contributes to the White House Conference goals of “ending hunger and reducing diet-related diseases and disparities” through the National Strategy on Hunger, Nutrition, and Health. 21 Since it was established in 2019, the GusNIP family of funds has provided $60,189,825.92 in funding to 126 PPR projects across the US. Additionally, GusNIP funds a Nutrition Incentive Program Training, Technical Assistance, Evaluation, and Information Center (GusNIP NTAE Center) through a cooperative agreement with NIFA. The GusNIP NTAE Center (“NTAE”) primary awardee is the Gretchen Swanson Center for Nutrition, in partnership with Fair Food Network and University of California San Francisco. The NTAE created and leads the Nutrition Incentive Hub, a coalition of specialists who provide expertise about GusNIP programs.

GusNIP PPR projects are required to include a healthcare partner or be a healthcare entity themselves (e.g., hospital, federally qualified health center). GusNIP PPR projects are required to enroll individuals who are (1) eligible for income-qualifying benefits like Supplemental Nutrition Assistance Program (SNAP) or enrolled in Medicaid and (2) a member of a low-income household who has or is at risk of developing a diet-related health condition. 22 PPR projects provide eligible participants with prescriptions for fresh FVs, which are typically redeemed at collaborating farmers markets, grocery and corner stores, and in healthcare settings (e.g., one morning per week food box distribution at a clinic). 7 Finally, nutrition education and/or other supporting services, such as transportation, are commonly added to augment programs.23,24

Evaluation of produce prescription projects with electronic health record data

The 2018 Farm Bill that established GusNIP requires that the NTAE conduct an overarching evaluation of GusNIP projects. 25 PPR evaluation includes health outcomes, utilization, and cost to the healthcare system related to providing care, among others. 26 Upon funding, all GusNIP PPR grantees agree to report on these outcomes. In addition to a survey that all GusNIP PPR grantees are required to collect with a subset of participants, 27 one potential source for these data is the electronic health record (EHR). EHRs can provide relevant data documented within the clinical care process. Several studies have leveraged EHRs to evaluate FIM interventions’ effects on biomarkers or healthcare utilization.9,14,16,28,29

Many GusNIP grantees leverage EHR data to meet requirements for reporting on health outcomes, utilization, and cost to the healthcare system related to providing care. However, EHR data can be difficult for PPR projects to access and evaluate for myriad reasons. Notably, some GusNIP grantees are clinical organizations that have easier access to EHR data, while others are community-based organizations that must work with their clinical partners to obtain this access. GusNIP grantees have both formally and informally reported to the NTAE the following challenges in collecting, accessing, and/or sharing EHR-derived healthcare data:

PPR project is not connected to EHR data and/or it is difficult to access within clinical workflows.

Partners do not have the time or capacity to perform additional tasks related to evaluation requirements (e.g., EHR programming, EHR data extraction).

Staff are unsure how to derive data from EHRs.

Uncertainty exists on how to initiate and implement data sharing agreements between community-based organizations and healthcare entities.

Institutional Review Board (IRB) processes to seek approval to conduct human subjects research (e.g., accessing EHR data) are difficult and time-consuming to navigate.

In 2022, 86% of PPR grantees reported plans to access EHR data for their project evaluation. By the end of these grantees’ funding cycles, almost none had successfully accessed or utilized EHR data. 30 Due to these challenges, the NTAE offered all active PPR grantees the opportunity to apply for a small grant to participate in a case study about deriving EHR data for their PPR project evaluation. The small grant mechanism offered financial support to access health outcomes, utilization, and cost data from the EHR; specialized technical assistance from the NTAE; and occasions to share qualitative data about the facilitators and barriers faced when collecting, extracting, and sharing these EHR data. Therefore, the purpose of this case study was to answer the following research questions:

What is the quality (e.g., completeness) of data derived from EHRs, as originally planned through a data use agreement (DUA) and memorandum of understanding (MOU)?

What is the experience of GusNIP PPR grantees who aim to access healthcare outcomes, utilization, and cost data through EHR?

What barriers and facilitators exist for GusNIP PPR grantees to collect and share healthcare outcomes, utilization, and cost data derived from EHRs?

How can the NTAE support GusNIP PPR grantees in the processes involved in EHR-derived healthcare outcomes, utilization, and cost data?

Methods

Conceptual framework

Constructivism, which is built on the premise of a social construction of reality, was used to frame this project. A key advantage of this approach is close collaboration between the researcher and the participant while enabling participants to talk about their experiences. It is through this discourse that participants are able to describe their views of reality, and this enables the researcher to better understand the participants’ actions and experiences. 31

Research design and methods

An instrumental, multiple methods, multiple case-study design was employed for this project, drawing upon quantitative and qualitative data. Due to its flexibility and rigor, this approach is valuable in understanding approaches to PPR evaluation in healthcare. 32 The unit of analysis is defined as each GusNIP PPR grantee (n = 4) who participated. An instrumental approach is often used in situations where the case itself is of secondary interest; for instance, the case plays a supportive role in facilitating understanding of a broader question. Findings include a variety of perspectives from participating grantees; however, individual stories are not the project’s focus. Case study methodology allows researchers to view problems from multiple perspectives, thereby enriching the meaning of a singular perspective. 32 Engaging multiple cases allows researchers to triangulate findings across different contexts to better understand similarities and differences. 33

For the quantitative component of the case study, each program had the flexibility to submit its own chosen data elements that aligned with health outcomes, utilization, and cost to the healthcare system, which were outlined in a DUA and MOU. To better understand the process experienced by these partners, qualitative interview and focus group data provided a richer, deeper level of insight.34–37 Since GusNIP PPRs vary in terms of partnerships, capacity, staff expertise, and other factors, it is important to understand the experience of multiple types of projects. This study was approved by the University of Nebraska Medical Center Institutional Review Board (#829-20-EX) as exempt.

Project selection

This case study is bounded by both the geographic location of each participating GusNIP grantee and the 14-month small grants funding cycle (May 2022–August 2023). PPR grantees were selected to participate based on their application to a Request for Applications to a Small Grants Case Study Project. Applicants (i.e., GusNIP PPR grantees) that were selected for a small grant demonstrated that they, in collaboration with their healthcare partner, were able to (1) extract healthcare outcomes, utilization, and cost data for individuals enrolled in the PPR before, during, and after participation and (2) link cost and utilization data with clinical metrics from EHRs and GusNIP surveys. As outlined in the Request for Applications, these applicants also needed to be able to access similar healthcare outcomes, utilization, and cost data from a sample of non-PPR participants to serve as a matched comparison group (e.g., control). Awardees agreed to provide de-identified data to the NTAE. In all, five grantees applied and were selected for funding, though only four ultimately participated. To distinguish the four participating grantees from all other GusNIP PPR grantees, they will be called “Cases” throughout the remainder of this paper.

Data collection procedures

Multiple methods of data collection were employed to triangulate findings for a rigorous, complete picture of each Case experience. 33 First, the NTAE and each Case set up a DUA and MOU to partner in the research project. The MOUs were established to formally outline the specific roles and responsibilities to be undertaken by each party (NTAE and Case), while the DUAs clearly delineated which data were to be collected and securely shared between partners as part of this research project. DUAs delineated that data to be shared would include: healthcare outcomes (HbA1c; blood pressure; anxiety severity 38; depression severity 39), utilization measures (cost of health care provided, charged or billed amounts; attended, no show, and canceled appointments), number of PPR prescriptions redeemed, pre/post survey data, and associated timeframe to submit data. Of note, no protected health information (PHI; e.g., identifying information) was shared between the Cases and NTAE for this study; thus, obtaining patient-level consent was not required.

Researchers quantified the number of exchanges needed between the NTAE and Cases to secure DUAs and MOUs. To do so, all correspondence between Cases, affiliated partners, and the NTAE regarding the execution of the required DUAs and MOUs was comprehensively reviewed and systematically categorized, including: dates contracts were initiated and finalized, number of individuals involved and their roles, number of email interactions, number of contractual revisions needed before a final version was signed and fully executed, and key challenges and facilitators observed throughout the process. Additionally, NTAE-based researchers conducted a comprehensive document analysis of each Case’s small grants application narrative in comparison with its fully executed DUA and MOU with the NTAE to compare data submitted versus proposed in the DUA/MOU (of note, in all Cases, less data was submitted than was approved in the DUA/MOU).
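The comparison of data proposed in a DUA with data actually submitted can be pictured as a simple set comparison. The following Python sketch is illustrative only: the function name `dua_gaps` and the data element labels are hypothetical and do not reflect the NTAE's actual procedure or the contents of any Case's DUA.

```python
def dua_gaps(planned, submitted):
    """Compare data elements listed in a DUA with those actually submitted."""
    planned_set, submitted_set = set(planned), set(submitted)
    return {
        # Elements that were both planned and delivered.
        "delivered": sorted(planned_set & submitted_set),
        # Elements named in the DUA but absent from the shared dataset.
        "missing": sorted(planned_set - submitted_set),
        # Elements shared that were not named in the DUA.
        "unplanned": sorted(submitted_set - planned_set),
    }


# Hypothetical element labels for one illustrative Case (assumption).
planned = ["hba1c", "blood_pressure", "billed_amounts", "no_show_appointments"]
submitted = ["hba1c", "blood_pressure"]
gaps = dua_gaps(planned, submitted)
```

In this illustration, `gaps["missing"]` lists the planned elements that never arrived, which mirrors the pattern reported here of less data being submitted than was approved.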

For healthcare utilization and outcomes, selected measures were collected and recorded by healthcare providers per standard of clinical care and extracted from the EHR. Additionally, corresponding dates were provided for some health values to align the measures with the baseline or post timeframes of the PPR intervention period. Each Case submitted their EHR-derived data to private folders using a secure SharePoint site set up by the NTAE.

Semi-structured qualitative interviews were conducted with each Case at the start of the small grants funding cycle (October 2022–January 2023). At the funding cycle mid-point (February–April 2023), grantees participated in one of two focus groups to discuss their progress. After the full funding cycle and data transfer to the NTAE were completed, each Case participated in a close out interview (August–October 2023). The same lead qualitative researcher conducted all interviews and focus groups to provide continuity and fidelity in the data collection methods. 40 Moderator guides and types of data collection (i.e., focus group versus interview) for each data collection point can be found in Table 1. Each Case had the option to invite more than one representative to their pre and post interview—which is why some were focus groups and some were interviews. The mid-point focus group was selected as the data collection method because collectively the Cases wanted the opportunity to have a structured conversation amongst themselves to learn from one another at this mid-point.

Table 1. Moderator guide and data collection methods used for qualitative data collection.

EHR: electronic health record; NTAE: Nutrition Incentive Program Training, Technical Assistance, Evaluation, and Information Center; PPR: produce prescription program; USDA NIFA: United States Department of Agriculture, National Institute of Food and Agriculture (NIFA).

Analysis

EHR-derived data that were obtained from each Case were compared to the DUAs that were submitted to identify alignments and gaps. Missingness in healthcare utilization data (clinic visits, no show appointments, appointments canceled) and outcome measures (HbA1c, blood pressure, anxiety severity, depression severity) was compared for the pre and post periods within participants using descriptive statistics (frequencies, percentages). Additionally, descriptive statistics were used to quantify the number of exchanges needed between the NTAE and Cases to secure DUAs and MOUs. Following data review, we identified that control group data were missing for the majority of Cases. Therefore, for the impact analysis, a one-group, pre/post design was used to compare means (using paired t-tests) for continuous, normally distributed outcomes and medians (using the Wilcoxon signed-rank test) for continuous outcomes that were not normally distributed. Analyses were conducted using Stata (version 18).
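As a rough illustration of the two paired tests named above, the following Python sketch computes a paired t statistic and a Wilcoxon signed-rank statistic for a single outcome. It is not the authors' Stata code. The function names and the HbA1c-like values are hypothetical, the Wilcoxon p-value relies on a large-sample normal approximation without tie or continuity correction, and the t statistic would still need to be referred to a t distribution with n − 1 degrees of freedom to obtain a p-value.

```python
import math
from statistics import NormalDist, mean, stdev


def paired_t_statistic(pre, post):
    """Return (t, degrees of freedom) for paired pre/post values."""
    if len(pre) != len(post):
        raise ValueError("pre and post must have the same length")
    diffs = [b - a for a, b in zip(pre, post)]
    n = len(diffs)
    t = mean(diffs) / (stdev(diffs) / math.sqrt(n))
    return t, n - 1


def wilcoxon_signed_rank(pre, post):
    """Return (W+, two-sided p) via a normal approximation (assumption)."""
    if len(pre) != len(post):
        raise ValueError("pre and post must have the same length")
    # Zero differences carry no sign information and are dropped.
    diffs = [b - a for a, b in zip(pre, post) if b != a]
    n = len(diffs)
    if n == 0:
        raise ValueError("all paired differences are zero")
    # Rank absolute differences, giving tied values their average rank.
    order = sorted(range(n), key=lambda i: abs(diffs[i]))
    ranks = [0.0] * n
    i = 0
    while i < n:
        j = i
        while j + 1 < n and abs(diffs[order[j + 1]]) == abs(diffs[order[i]]):
            j += 1
        avg_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = avg_rank
        i = j + 1
    # W+ is the sum of ranks belonging to positive differences.
    w_plus = sum(r for r, d in zip(ranks, diffs) if d > 0)
    mu = n * (n + 1) / 4
    sigma = math.sqrt(n * (n + 1) * (2 * n + 1) / 24)
    z = (w_plus - mu) / sigma
    p = 2 * (1 - NormalDist().cdf(abs(z)))
    return w_plus, p


# Hypothetical baseline and post values for five participants (assumption).
pre = [8.1, 7.4, 9.0, 6.8, 7.9]
post = [7.6, 7.5, 8.2, 6.1, 7.0]
t_stat, df = paired_t_statistic(pre, post)
w_plus, p_value = wilcoxon_signed_rank(pre, post)
```

With samples as small as those in this study, an exact signed-rank distribution would generally be preferable to the normal approximation used in this sketch.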

All interview audio recordings were professionally transcribed verbatim. The lead qualitative researcher checked each transcript for accuracy against the audio recordings. Next, researchers used ATLAS.ti (Version 24.0.1) as a digital qualitative management tool to facilitate organization and analysis. 41 The interview transcriptions were coded using qualitative content analysis methods,42,43 which helped generate comparisons across Cases to understand salient cross-case themes. The data were coded in various quotation increments depending on the context of the quotation. 44 The first pass of coding involved inductive free coding. During the second pass, codes were narrowed by collapsing and integrating them to remove redundancy, and each code was described and defined. To enhance rigor, a sample of the transcripts was double coded, with the lead qualitative researcher (who collected all data) coding 100% of the transcripts and a second coder independently coding 35% of the transcripts. The two coders met weekly to review discrepancies and resolved them with members of the larger research team. Code and concept maps 45 were developed to serve as visual network representations of the coded data and to facilitate identification of emergent categories across the different transcripts, eventually leading to three main themes. Documents (e.g., proposals and MOUs) were analyzed using similar methods and coded separately from the transcribed interviews.

Results

Overview of cases (n = 4)

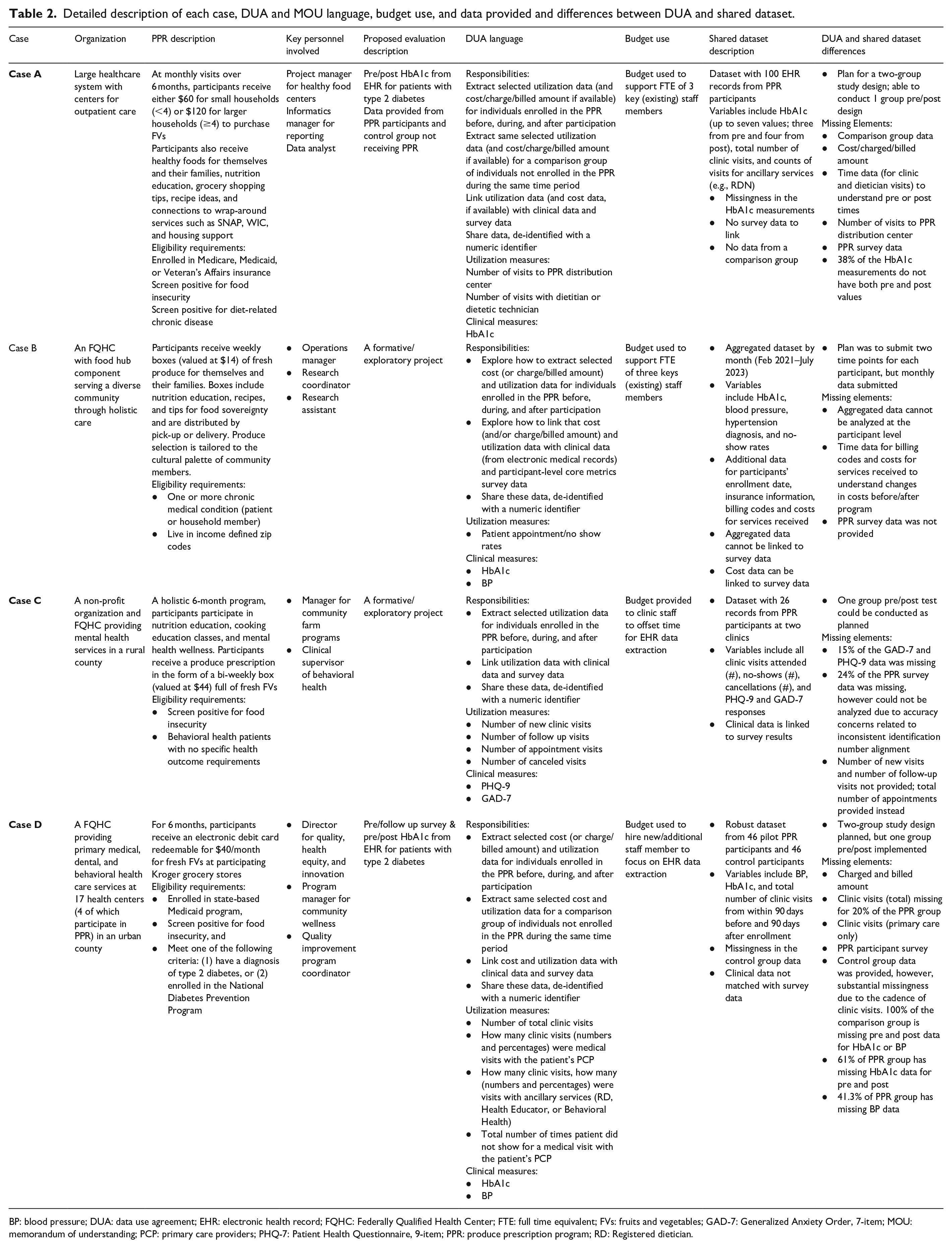

Table 2 presents a summary of the four Cases that participated in this project. Case A is a large healthcare organization located in a large metropolitan area (Central US), Case B is a single federally qualified health center (FQHC) located in a moderately sized metropolitan area (West US), Case C is a community-based organization that partners with an FQHC in a rural area (Southwest US), and Case D is a large network of FQHCs located in a large metropolitan area (West US). Grantees served populations ranging from approximately 300 to over 3000 annually across one or more clinics. Often, the EHR projects focused on a single clinic or a specific subpopulation, resulting in different numbers of participants than the overall project.

Table 2. Detailed description of each case, DUA and MOU language, budget use, and data provided and differences between DUA and shared dataset.

BP: blood pressure; DUA: data use agreement; EHR: electronic health record; FQHC: Federally Qualified Health Center; FTE: full time equivalent; FVs: fruits and vegetables; GAD-7: Generalized Anxiety Disorder, 7-item; MOU: memorandum of understanding; PCP: primary care providers; PHQ-9: Patient Health Questionnaire, 9-item; PPR: produce prescription program; RD: registered dietitian.

Of note, a fifth grantee applied and engaged in a “pre” interview with the research team. However, shortly after that interview, the staff member of that healthcare organization who was leading the effort resigned from their position. Subsequently, the organization did not have sufficient staff capacity to engage in the project and withdrew its application. No DUA, MOU, funding distribution, or data transfer occurred. Therefore, the fifth grantee was not included in any tables or results in this paper.

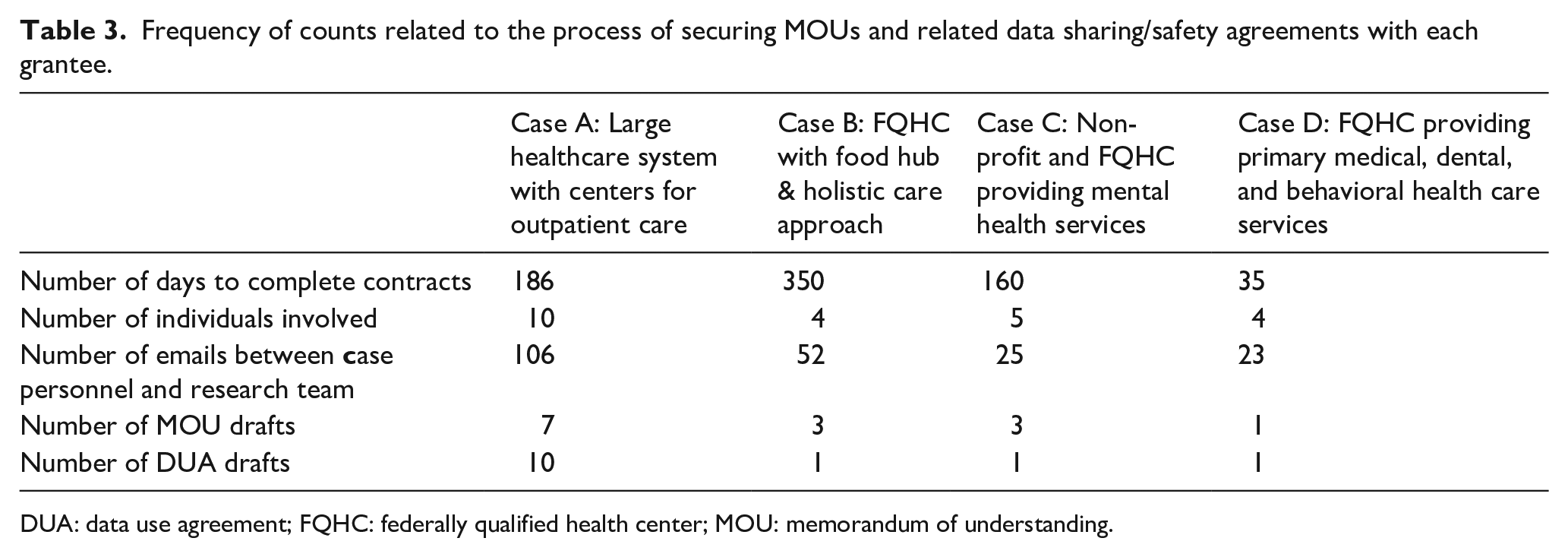

Establishing DUAs and MOUs and submitting EHR data

Establishing DUAs and MOUs proved to be time and resource intensive among all four cases. Challenges centered around identifying the “right” person at any given organization to execute and sign the documents and identifying the type of data (e.g., metrics) to collect and share. Table 3 provides a quantified representation to understand the processes, time/resources and expertise needed, and experience with executing DUAs and MOUs with each grantee.

Table 3. Frequency of counts related to the process of securing MOUs and related data sharing/safety agreements with each grantee.

DUA: data use agreement; FQHC: federally qualified health center; MOU: memorandum of understanding.

Descriptions of each Case and MOU/DUA language, budget use, and EHR data provided are shown in Table 2. The four Cases varied in priority populations, program delivery strategies, and the outcomes that were submitted from their EHR. Therefore, NTAE researchers chose not to combine data across these programs, and instead analyzed individually for each case as the data allowed. All four of the Cases submitted data that had missing elements including comparison data (e.g., control group), utilization data (e.g., missed versus attended appointments; charged or billed amounts), time data to compare outcomes during the baseline and post period, number of visits to the PPR distribution center to pick up fresh FV, and PPR survey data. For Case B, aggregated monthly data was submitted instead of participant-level data that could be used to analyze participant outcomes. This was related to limitations in the EHR technology available to Case B. As delineated in Table 2, only one Case (B) shared cost data in the form of billing charges. The other three Cases had no access to cost data.

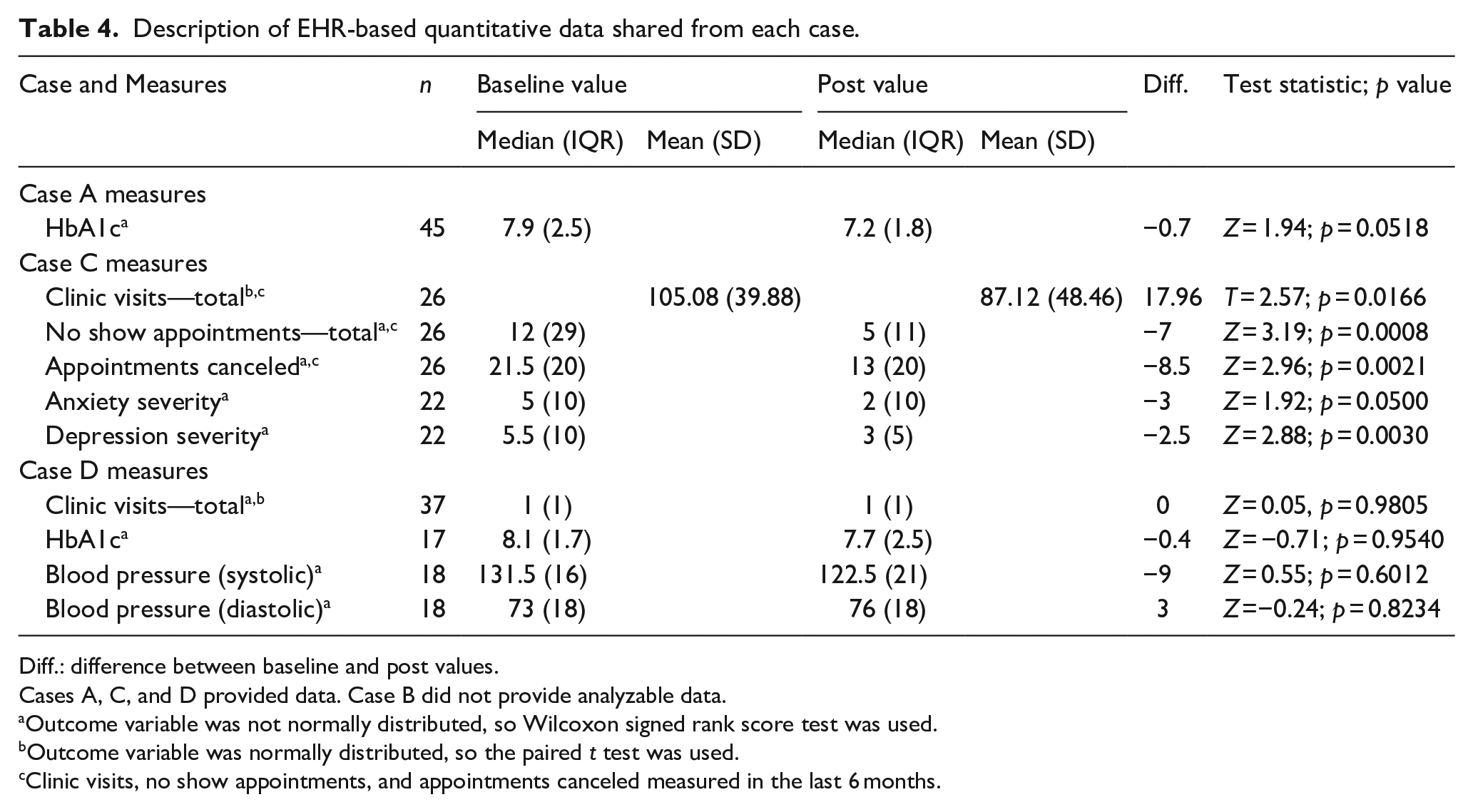

For the health outcomes that were submitted, there was missingness when baseline and post data were matched for each participant; this missingness ranged from 0% to 100%, depending on the specific outcome. A description of the quantitative data shared is found in Table 4.
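To make the notion of matched missingness concrete, the short Python sketch below computes the percentage of participants who lack either a baseline or a post value for a given outcome. The function name, the field naming pattern (`<outcome>_pre`, `<outcome>_post`), and the records are hypothetical and are not drawn from any Case's dataset.

```python
def matched_missingness(records, outcome):
    """Percent of participants lacking a baseline or a post value."""
    total = len(records)
    if total == 0:
        return None  # No participants, so missingness is undefined.
    missing = sum(
        1
        for r in records
        if r.get(f"{outcome}_pre") is None or r.get(f"{outcome}_post") is None
    )
    return 100.0 * missing / total


# Hypothetical participant-level records (assumption).
records = [
    {"id": 1, "hba1c_pre": 8.1, "hba1c_post": 7.6},
    {"id": 2, "hba1c_pre": 7.4, "hba1c_post": None},
    {"id": 3, "hba1c_pre": None, "hba1c_post": 8.2},
    {"id": 4, "hba1c_pre": 6.8, "hba1c_post": 6.1},
]
hba1c_missing = matched_missingness(records, "hba1c")
sbp_missing = matched_missingness(records, "sbp")
```

An outcome that was never recorded for any participant yields 100% under this definition, which corresponds to the upper end of the range reported here.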

Table 4. Description of EHR-based quantitative data shared from each case.

Diff.: difference between baseline and post values.

Cases A, C, and D provided data. Case B did not provide analyzable data.

Outcome variable was not normally distributed, so Wilcoxon signed rank score test was used.

Outcome variable was normally distributed, so the paired t test was used.

Clinic visits, no show appointments, and appointments canceled measured in the last 6 months.

Changes in health outcomes

For health outcome variables, Case A (n = 45) used HbA1c and observed a decline in median values that was not statistically significant (p = 0.052). Case B provided no health outcome data. Case C measured anxiety severity and depression severity as its main health outcomes. Among the 22 participants, median anxiety severity (p = 0.05) and depression severity (p = 0.003) scores significantly decreased. Case D used HbA1c and systolic and diastolic blood pressure (SBP and DBP) values. Median HbA1c values (n = 17) declined after the program, but this difference was not statistically significant (p = 0.95). SBP values (n = 18) improved slightly, but the change was not statistically significant (p = 0.60). DBP values increased slightly, but the change was not statistically significant (p = 0.82).

Changes in healthcare utilization

Healthcare utilization was analyzed for Case A using total clinic visits over the 6-month PPR participation period, and no statistically significant changes were observed after the program (p = 0.98). The median number of visits both at baseline and after the program was one visit (IQR = 1). Case B provided no healthcare utilization data. Case C provided the total number of clinic visits (attended), the total number of no-show appointments, and the total number of appointments canceled for 26 participants. The average number of clinic visits decreased significantly (95% CI: 3.55, 32.37; p = 0.017); the median number of no-show appointments (p < 0.001) and the median number of canceled appointments (p = 0.002) also decreased. Of note, Case D did not provide data to analyze for healthcare utilization.

Qualitative findings

Three salient themes emerged from the qualitative dataset: (1) facilitators to collecting and sharing EHR-derived healthcare outcomes, utilization, and cost data; (2) challenges encountered with collecting and sharing EHR-derived healthcare outcomes, utilization, and cost data; and (3) capacity building and future directions in collecting and sharing EHR-derived healthcare outcomes, utilization, and cost data. Categories that support each theme, organized by Case, are illustrated in Table 5.

Table 5. Qualitative themes delineated by each case.

DUA: data use agreement; EHR: electronic health record; MOU: memorandum of understanding; PHI: protected health information; PPR: produce prescription program.

Theme #1: Facilitators to collecting and sharing EHR-derived healthcare outcomes, utilization, and cost data

High motivation to learn how to and to “practice” collecting and sharing EHR-derived healthcare outcomes, utilization, and cost data was a key facilitator across Cases. Participants expressed the desire to better evaluate their programs, expand opportunities to receive funding, and share findings with participating parties, such as healthcare providers and local growers/food suppliers who provide fresh FV for the PPR projects. One grantee shared:

And so, I’m really proud of what we found. I think this is the first time we’re able to do it and the results are super exciting to share back with our providers. We did it at the provider meeting last week and then tomorrow, we’re going to get some feedback on what does this data mean to you? So really creating a culture of using data to affect services and care. And the other exciting thing on the other spectrum is tomorrow we’re having a farmer’s dinner with the farmers who (. . .) created or grew the produce, and they get to see these graphs, like how did their produce affect people’s health? And I think that’s really exciting for us. Case B

Motivation also stemmed from grantees striving to be compliant with GusNIP PPR grant funding requirements. Other key facilitators included strong leadership support, a dedicated (paid) staff member to extract the EHR data, and in two of the four Cases, a strong internal evaluation and quality improvement team.

Theme #2: Challenges encountered with collecting and sharing EHR-derived healthcare outcomes, utilization, and cost data

Participants also shared key challenges in collecting and sharing these data. Three of the four Cases discussed time as a key challenge, given the need to manually extract data from their EHRs. One Case explained:

I can’t just punch in names and then pull a data and then get a compile list. I had to go individually into somebody’s [chart], then I had to punch in the data for them, and then. . . I had to put in the particular dates, and then I had to pull the data and then transfer it [to the spreadsheet]. I had to go in individually for each client to do that (. . .) and sometimes the system isn’t always cooperating, so you have to finagle it a little bit. Case C

Participants from three of the four Cases also noted this was their first time working within their EHRs to extract healthcare outcomes, utilization, or cost data and discovered profound limitations with the technology. These challenges included an inability to automate longitudinal data extraction (e.g., software will only report most recent lab value) and to extract disaggregated data, and an inability for multiple programs and software to “talk to” one another. Participants also discussed concerns with missingness in their datasets and went to great lengths to triple check data for protected health information (PHI) prior to sharing with the NTAE. Another challenge (in two of four cases) was determining what type of healthcare outcome, utilization, and cost data would best answer their research question as tailored to their program implementation protocols, such as inclusion criteria for eligible patients.

Theme #3: Capacity building and future directions in collecting and sharing EHR-derived healthcare outcomes, utilization, and cost data

Participants acknowledged their naiveté in using EHR data for evaluation, described this project as a “steep learning curve,” and conveyed feeling far more prepared should they engage in such an endeavor again. Clear communication, knowing what resources (e.g., technology and staff expertise) were available, and understanding limitations (e.g., technology) from the start of the project were discussed as helpful learnings for when they work with EHRs in the future. Participants discussed challenges with securing MOU, DUA, and IRB approvals and indicated the capacity needed for securing these agreements and approvals, especially given that this was done as a part of a small (e.g., $10,000) grant. All participants suggested needing flexibility and the ability to tailor their evaluations, which were permitted within this project. They suggested that the ability to choose what metrics “make sense” for their program, technology, and staff capacity was imperative to their success, self-efficacy, and ultimately their willingness to continue this type of evaluation. One participant shared:

So, what I’ve been enjoying, and it goes along with some of the advice, is with this small grant, I like the flexibility that we had to customize our searches based on what’s realistic and feasible for us. So, we did appreciate that because we were able to be creative and just really look into our systems and see, okay, this is possible. This is not possible. So, I think if they were to do something like this, I think giving organizations this flexibility for them to kind of provide this information based on what is feasible for them. Because every healthcare center has a different platform. Some may use Epic, a whole different, we use NextGen. They may use a different population database than we do. So, there’s different limitations and capacities with all these different platforms. So, I think we can have the same flexibility. I think it’s possible. And that’s how we were able to complete this program was because of how [the NTAE] were and us customizing the searches. Case D

Most participants were not aware of what types of data they had access to (or did not have access to, in the case of cost data) and expressed needing an opportunity such as this small grant project to familiarize themselves with data availability. Participants discussed the need for a dedicated, paid staff member to conduct the EHR data extraction and emphasized the time it takes, even for skilled employees. The following participant, a quality improvement staff member, suggested:

I mean, funding plays a big role. I mean, in reality, we only had 46 patients, which maybe to some, it’s just considered a little bit of patients. It’s definitely less than a hundred, but these 46 patients just took up a lot of time. And also because of that manual work, and we didn’t have the capacity to pull data so easily from even our own platforms. So, definitely having a staff member that just is dedicated to doing this manual work and this data would be a good thing to invest in. And then we also have to keep in mind, we also have probably other deliverables and other work tasks. So, definitely a staff member to just be dedicated on this project and pulling data. And yeah, I think that’s my number one. Case D

Participants were overwhelmingly grateful for this opportunity and the ability to “practice” without punitive consequences should they be unable to collect data they originally planned to collect (e.g., their DUA did not match the final shared dataset and less data than was proposed in the DUA was shared). When asked how national evaluators (e.g., NTAE) could best support GusNIP PPR grantees in collecting and sharing EHR-derived datasets, participants from one focus group shared:

I think just stay flexible. (. . .) I really appreciate (that) we’re on this journey together it’s exploration and that is helpful (. . .) That’s the most helpful.

Yes, I completely agree. I like that we were able to kind of customize this project to fit our agency in our community.

Same. I want to echo what was just said. I think it’s been really great having that reassurance from your team [NTAE] that everything will be okay and that this is a learning process for all of us. Cases A and B

Discussion

Together, these findings tell a story of each grantee’s experience with collecting, extracting, organizing, and sharing EHR-derived data for their healthcare outcomes, utilization, and cost evaluation. Community-based organizations and non-research medical institutions (e.g., FQHCs) are increasingly engaged in addressing social determinants of health under a clinical care model, such as FIM interventions. These projects require increasing engagement in clinical evaluation processes, despite well-established challenges to using EHR-based data for research.46 Findings from this case study analysis can be used as an example of how EHR-based evaluations are operationalized within these non-research institutions.

A key facilitator for all four Cases in this study was high motivation to engage in this project. With a relatively small amount of funding (e.g., $10,000), these four grantees willingly applied to participate in this project, suggesting that they were motivated to engage from the outset. Motivation also proved to be key throughout the duration of the small grant funding cycle. To further unpack motivation, we can draw on the Theory of Acceptability in Healthcare Interventions, which posits that acceptability is a multi-faceted construct with seven components: affective attitude, burden, perceived effectiveness, ethicality, intervention coherence, opportunity costs, and self-efficacy.47 Participants verbalized their improved self-efficacy, perceived effectiveness (e.g., need), and burden (e.g., time) throughout the interviews and focus groups. Since motivation was such a key driver of each grantee’s willingness to participate and persevere despite the challenges in this project, it is prudent to consider how to capitalize on the intrinsic motivation of key parties (e.g., CBO leadership and staff, clinical staff) to engage in such evaluation processes.

There are well-established challenges with data sharing within public health research; one literature review summarizes them as technical, motivational, economic, political, legal, or ethical challenges.48 Findings from this study can be mapped onto these challenges,48 in that technical and economic challenges persisted across three of the four grantee cases. The only Case that did not struggle with technical and economic challenges was Case A, as this large medical institution had well-built infrastructure, expertise, and resources to collect such EHR-based data. Of note, executing the DUA and MOU documents with this Case was arguably the most difficult legal process and required the most time, personnel, and “back and forth” between the NTAE and grantee. The only Case that was not a healthcare organization, but rather a CBO (Case C), experienced challenges related to data access. This Case relied on an external evaluator and experienced challenges related to new partnerships and a period of growth in collaborative efforts across entities (e.g., clinic, CBO, external evaluator). Across all Cases, the small grant budget was used to offset the FTE of a clinical staff member who extracted the EHR-derived data, and it was strongly emphasized that one dedicated paid staff member (versus several different staff contributing) was the most effective way to extract these data. Those who extracted EHR data for this project said they had intended to share the task across several colleagues, but in the end, the learning curve was so steep that it was more effective to just “do it myself.” However, they also noted that if the sample size were to increase, manual data extraction by just one person would not be sustainable. Finally, though not overtly stated as a barrier, the legal and ethical challenges related to sharing PHI required grantees to spend an inordinate amount of time manually checking each dataset prior to uploading and sharing it with the NTAE. This manual “triple check,” described by three of the four Cases, likely contributed to overextension of the FTE committed to the project.

In all Cases, different entities (e.g., evaluators, clinic staff) and/or technologies (e.g., Qualtrics, Epic) were used to collect and extract data. This approach required a tremendous amount of time to harmonize, especially when data were de-identified and individual-level records (e.g., survey and EHR data) needed to be linked. In only one Case (C) were survey data linked to clinical data. Of note, this Case requested a 4-month extension to conduct this lengthy process. Case D suggested they could have logistically connected these datasets, but they lacked the time and personnel to do so. Two of the Cases (A and B) did not have the ability to connect survey-based data with EHR-extracted data for various reasons. Even within clinical organizations, data were often housed on different platforms or programs. Linking de-identified data was not a concern in this setting, because all clinic staff had access to identifiable data on all platforms and software programs within their organization; however, manually “searching” different programs and platforms for data was very time-consuming and, at times, prohibitive.

All Cases, as well as the NTAE, recognized the need to build capacity for evaluations featuring EHR-derived data in the future. For three of the four Cases, this was the first time they had ever participated in an EHR-derived data extraction and sharing project. For the NTAE, this project provided an opportunity to establish DUA and MOU templates, a secure site for data transfer, and an understanding of how to best support GusNIP PPR grantees with evaluations in healthcare settings (e.g., provide flexible advice on suggested data to extract). Additionally, all grantees needed to establish or amend IRB approvals to access and share human subjects data (e.g., EHR data), as required for all research involving humans in the United States. Some grantees had straightforward access to an IRB (e.g., the healthcare organization itself has its own IRB), while others had to work with university-based collaborators to route the IRB protocol through an external university-based IRB.

The challenge of working across sectors and linking de-identified EHR datasets is documented in the literature.49 These findings align with published literature regarding the need for improvements in technology, technical skills, and innovative approaches to integrating datasets.49 In addition, there are opportunities for technical assistance and support from funders and evaluators that can mitigate the aforementioned challenges. First, enhancing intrinsic motivation among the key players involved (e.g., CBO, clinical organization) and harmonizing motivational factors and goals across parties from the project’s inception would improve communication and mitigate competing priorities. Second, it is prudent to underscore flexibility and tailoring of which metrics should be collected, given differences in PPR protocols, grantee data access and infrastructure, and types of data available. Providing support to decide what metrics adequately answer the research and evaluation questions specific to any given PPR requires those giving advice to understand the PPR in its entirety (e.g., patient inclusion criteria, relationship with clinical site, leadership support, technical infrastructure). Third, templates for DUAs and MOUs, scripts for email communication with leadership, opportunities for peer-to-peer learning, and examples of metrics (e.g., healthcare outcomes) can be helpful tools for organizations for whom these processes are new. Additionally, suggestions on what “type” of expertise should be on the evaluation team (e.g., quality improvement or data management specialists who can extract data or who have specific expertise in the EHR technology) would be helpful. It is noteworthy that some of these highly specialized roles may not be available to smaller organizations, as we observed with Cases B, C, and to some extent, Case D.
Strengths of this study include the use of multiple methods of data collection to build a full picture of each Case’s experience in collecting data derived from an EHR. The collaborative nature of the partnership between each Case and the NTAE allowed for bidirectional learning in a trusted and supportive space. A key limitation of this project is limited generalizability to other PPR grantees, in that the selected Cases were highly motivated to participate in this evaluation and may not represent the level of motivation of other PPR grantees (i.e., selection bias). Another limitation is that none of these Cases opted to collect data on emergency department utilization, hospital admissions, or readmissions. Much “food is medicine” literature focuses on these types of programs as potentially effective in decreasing “big ticket” healthcare costs as a means to bolster support from payers.50,51 The paucity of this type of data collected by the Cases in this study is largely because, as FQHCs, they do not have access to the hospital-based EHR where these data would be tracked.

EHR data holds much promise but presents hurdles within FIM. Drawing upon the facilitators and overcoming the challenges to EHR data extraction outlined in this case study will help to strengthen the evidence base for FIM interventions and build capacity for healthcare delivery models to demonstrate public health impact.

Footnotes

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The GusNIP NTAE Center is funded through a cooperative agreement and is supported by Gus Schumacher Nutrition Incentive Program grant no. 2019-70030-30415/project accession no. 1020863, grant no. 2023-70435-38766/project accession no. 1029638, and grant no. 2023-70414-40461/project accession no. 1031111 from the USDA National Institute of Food and Agriculture.