Abstract

This paper evaluates the effectiveness of timeouts in the EuroLeague using Play-by-Play data to estimate short-run within-game effects and Box-Score data to assess season-level outcomes over the 2021–22 to 2023–24 regular seasons. Within games, timeout effectiveness is identified using an event-study framework comparing team performance in fixed possession windows immediately before and after each timeout, complemented by a difference-in-differences strategy exploiting situations in which teams are unable to call additional timeouts. Performance is measured by the point differential (points scored minus points conceded) over these windows. Under the identifying assumption that pre-timeout trends are comparable across treated and control situations, timeouts are associated with a statistically significant short-run improvement: the average point differential increases from

Keywords

Introduction

Timeouts are a central coaching instrument in basketball, used to interrupt play, adjust tactics, and potentially influence short-run performance dynamics. Despite their strategic importance, empirical evidence on the effectiveness of timeouts remains mixed, particularly regarding whether they can alter in-game momentum or generate persistent performance gains. This paper evaluates the effectiveness of timeouts along two dimensions: their short-run impact on within-game performance and their longer-run implications for team success over an entire season. We define momentum as a short-run deviation in the scoring process from a team’s recent scoring baseline. Operationally, momentum is captured by sustained positive or negative scoring differentials over consecutive possessions, which we measure through (i) uncontested scoring runs and (ii) changes in the local point differential within fixed one-minute windows. Under this definition, a team is said to experience negative momentum when it concedes a sequence of points without response, generating a locally negative scoring differential relative to its recent performance.

This definition allows us to treat momentum as an observable feature of the scoring process rather than as a purely psychological construct. Timeouts are therefore interpreted as interventions aimed at altering the short-run conditional expectation of the point differential. The empirical question becomes whether, conditional on pre-timeout dynamics, the scoring process exhibits a statistically significant shift in its conditional mean following a timeout call. 1

From a theoretical perspective, timeouts may affect short-run performance through several non-mutually exclusive channels: (i) tactical adjustments that alter shot selection or defensive schemes, (ii) substitution patterns that modify lineup efficiency, and (iii) psychological interruption of opponent scoring sequences. In reduced-form terms, these mechanisms imply a shift in the conditional mean of the scoring differential immediately after the timeout. Our baseline empirical strategy does not separately identify these channels; instead, it estimates their combined effect on the local scoring process. 2 This conceptual framework aligns directly with our empirical design, which focuses on local changes in scoring performance in narrowly defined windows around timeout events.

A joint analysis of the immediate effectiveness of timeouts and their season-level implications requires combining two complementary data sources. Play-by-play (PbP) data provide high-frequency information on all in-game events and allow for the identification of short-run performance changes around timeout calls, while Box-Score data summarize player and team outcomes and permit an assessment of cumulative performance over the season. Such detailed data have been extensively exploited in NBA research, but comparable evidence for the EuroLeague remains limited.

The main contribution of this paper is a comprehensive evaluation of EuroLeague coaches’ timeout decisions. By focusing on the EuroLeague, we extend the timeout literature beyond the NBA and provide evidence from a competition characterized by different rules, playing styles, and institutional features. In particular, only coaches are allowed to call timeouts in the EuroLeague, which allows for a cleaner interpretation of timeout decisions as coaching interventions rather than shared player–coach choices. This institutional setting strengthens the external validity of our findings for evaluating coaching behavior.

Using detailed EuroLeague PbP data, we examine whether timeouts effectively interrupt opponents’ momentum. We measure timeout effectiveness using the Score Differential Impact (SDI), defined as the difference between points scored and points conceded in the minute following a timeout relative to the preceding minute. We find that timeouts are associated with a statistically significant improvement in short-run performance: on average, they reduce the magnitude of negative scoring differentials but rarely reverse them. This suggests that timeouts primarily function as a stabilization mechanism rather than a tool for shifting game trajectories.

A key methodological contribution of this paper is the explicit treatment of the selection problem inherent in timeout decisions. We address this issue using two complementary strategies. First, we estimate the average treatment effect on the treated by comparing team performance immediately before and after a timeout within the same team, thereby controlling for time-invariant team characteristics. Second, we implement a difference-in-differences approach that contrasts post-timeout performance changes with comparable situations in which a timeout cannot be called.

We further assess whether timeout effectiveness has implications for long-run outcomes by extending the standard Four-Factor Model of Wins to include a measure of team-specific timeout effectiveness. While the traditional four factors continue to robustly explain team performance in the EuroLeague, the timeout variable adds no incremental explanatory power. This finding indicates that, despite their relevance in specific game situations, timeouts do not systematically affect season-long team success.

The remainder of the paper is organized as follows. Section “Placing our contribution in the literature” situates our contribution within the existing literature. Section “Methodology” describes the data and methodology. Section “Results using Play-by-Play data” presents the Play-by-Play evidence, while Section “Results using Box-Score data” extends the analysis to Box-Score data. Section “Conclusion” concludes.

Placing our contribution in the literature

The effectiveness of timeouts has long been debated in basketball analytics. Existing studies primarily focus on NBA data and reach divergent conclusions, reflecting differences in definitions of momentum, identification strategies, and performance measures. This paper concentrates on timeouts outside end-of-game situations and therefore complements analyses of timeout usage in critical late-game contexts (Allgrunn et al., 2024; Vázquez-Estévez et al., 2025).

Early empirical work examines whether timeouts can halt negative scoring runs. Using NBA data, Vergara (2025) defines runs as uncontested scoring stretches and finds that timeouts can be effective, with timing and game context playing a crucial role. In contrast, an analysis based on over 380,000 events from the 3stepsbasket analytics platform (Eurohoops, 2021) defines runs using fixed possession sequences and reports evidence favoring non-intervention strategies. These contrasting results highlight the sensitivity of findings to operational definitions of momentum.

More recent contributions address selection bias by applying causal inference techniques. Assis et al. (2020) and Gibbs et al. (2022) estimate the causal impact of coach-called timeouts in the NBA and find little evidence of positive effects; if anything, the estimated effects are close to zero or slightly negative. These studies rely on rich sets of observable covariates, including score margin, run length, and game timing, but may still conflate heterogeneous team and coaching strategies.

A different approach is proposed by Weimer et al. (2023), who exploit the quasi-random timing of television timeouts as a natural experiment. They document a significant decline in subsequent scoring by teams previously experiencing positive momentum, providing compelling evidence that game stoppages can disrupt momentum. However, television timeouts combine exogenous stoppages with potential strategic adjustments, making it difficult to fully disentangle momentum interruption from coaching responses.

Our contribution differs from existing NBA-focused studies in two key respects. First, we provide new evidence from the EuroLeague, extending the external validity of the timeout literature to a distinct competitive environment. Second, rather than matching across heterogeneous teams and contexts, we explicitly compare pre- and post-timeout performance within teams, allowing for a more homogeneous assessment of timeout effectiveness. Our findings contribute to the ongoing debate by showing that timeouts in the EuroLeague generate statistically significant short-run improvements but do not reverse scoring dynamics or translate into sustained season-level success. Taken together, the existing evidence suggests that the impact of timeouts remains an open empirical question, shaped by context, definitions, and institutional features.

Methodology

To analyze the efficiency of timeouts and their empirical relationship with a team's wins in a season, we use two sets of data: (i) Play-by-Play data, to gauge the immediate effectiveness of a timeout call; and (ii) game Box-Score data, to evaluate the impact of timeouts on team performance over the course of a season by extending the traditional four-factor model of wins with a timeout efficiency factor. Our sample includes all regular-season games from 2021–22 to 2023–24. Both sets of data are retrieved from the official Euroleague GameCenter API. 3

Play-by-Play data

Play-by-Play data is a detailed log of all the events that occur during a basketball game. This data is recorded in real-time and includes information about every play, organized as follows:

Our use of Play-by-Play data

To assess timeout efficiency, we use PbP data to compute functions of a team's game score, both in general and around the timeout, that allow us to gauge statistically the effectiveness of the coach's call. We construct two new variables. (i) Runs, as in Vergara (2025), namely uncontested scoring stretches by one team. In this case, we label a timeout called by the coach of the team that is falling behind as successful if it halts the ongoing run 4 in the first possession after the timeout. (ii) Score Differential Impact (SDI), which is the point differential (points scored by a team minus points conceded) in the minute before a timeout minus the same differential in the minute after it. In this case, we label a timeout as successful if this difference is negative, that is, if the point differential improves after the timeout.
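The two variables can be sketched in a few lines of Python. This is a minimal illustration and not the authors' code: the event layout `(seconds elapsed, team, points)`, the function names, and the toy sequence are all assumptions made here, and the sign convention (differential before minus differential after, so that negative values mark success) is inferred from the text.

```python
from typing import List, Tuple

# Hypothetical event layout: (seconds elapsed in the game, scoring team, points).
Event = Tuple[float, str, int]


def point_differential(events: List[Event], team: str, start: float, end: float) -> int:
    """Points scored minus points conceded by `team` in the window [start, end)."""
    diff = 0
    for t, scorer, pts in events:
        if start <= t < end:
            diff += pts if scorer == team else -pts
    return diff


def sdi(events: List[Event], team: str, timeout_t: float, window: float = 60.0) -> int:
    """Differential in the window before the timeout minus the differential after it.

    Assumed sign convention: a negative value marks a successful timeout.
    """
    before = point_differential(events, team, timeout_t - window, timeout_t)
    after = point_differential(events, team, timeout_t, timeout_t + window)
    return before - after


def opponent_run(events: List[Event], team: str, timeout_t: float) -> int:
    """Consecutive unanswered points conceded by `team` just before the timeout."""
    run = 0
    for t, scorer, pts in sorted(events, reverse=True):  # latest event first
        if t >= timeout_t:
            continue
        if scorer == team:
            break
        run += pts
    return run


# Made-up toy sequence: team "A" calls a timeout at t = 300 s.
toy = [(250.0, "B", 2), (270.0, "B", 3), (290.0, "B", 2), (320.0, "A", 2), (340.0, "B", 2)]
print(sdi(toy, "A", 300.0))           # (-7) - 0 = -7
print(opponent_run(toy, "A", 300.0))  # 7
```

In the toy sequence the team concedes seven unanswered points before the timeout and breaks even afterwards, so the timeout would count as successful under the SDI criterion.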

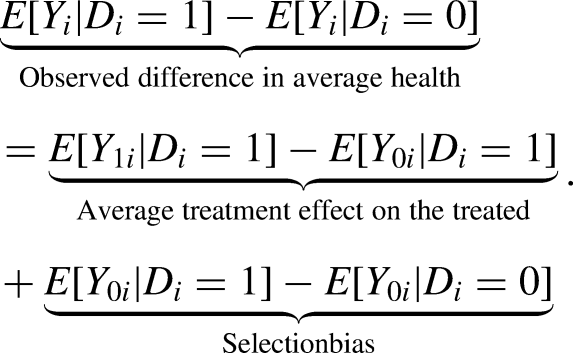

Measuring the impact of timeouts using PbP data helps overcome one of the most fundamental challenges in econometrics: selection bias (Angrist and Pischke, 2009).

Consider the case where we aim to measure the impact of a treatment, represented by a binary variable that takes the value of one for the generic individual

Now, consider a timeout as a treatment. The equivalent of an individual in our case is a team, and we consider teams in different years as different individuals. 5 Simply comparing performance after a timeout with average performance in general would conflate the effect of the timeout with the deteriorating trend that prompted it. This is a textbook case of selection on unobservables that vary over time.
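The selection problem can be written compactly using the decomposition in Angrist and Pischke (2009). The notation is assumed here for illustration and need not match the paper's own: $D_i$ is the timeout indicator, $Y_i$ the observed outcome, and $Y_i(1)$, $Y_i(0)$ the potential outcomes with and without a timeout.

$$
E[Y_i \mid D_i = 1] - E[Y_i \mid D_i = 0]
= \underbrace{E[Y_i(1) - Y_i(0) \mid D_i = 1]}_{\text{effect on the treated}}
+ \underbrace{E[Y_i(0) \mid D_i = 1] - E[Y_i(0) \mid D_i = 0]}_{\text{selection bias}}
$$

Because timeouts tend to be called while a team is conceding a run, the second term is plausibly negative, so a naive comparison would understate the effect of the timeout.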

To overcome this issue, we follow two strategies. First, we exploit the panel structure of PbP data, and treat team-season combinations as individual observational units to consider direct measures of

Second, we use a difference-in-differences (DiD) strategy. The idea is to compare the change in performance of teams following a timeout (treated group) with the change in performance of teams in similar situations where no timeout is called (control group). Specifically, we examine runs conceded by teams, comparing situations with and without a timeout. This method allows us to isolate the causal effect of the timeout from other confounding factors that might be influencing performance.
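The logic of the comparison can be illustrated with a minimal sketch. The function name, the data layout, and the numbers are hypothetical; the sketch only shows the arithmetic of a two-group, two-period difference-in-differences, not the paper's estimation code.

```python
from statistics import mean
from typing import List, Tuple

# Each observation: (outcome before, outcome after), e.g. the point
# differential in the window before and after a reference moment.
Pair = Tuple[float, float]


def did_estimate(treated: List[Pair], control: List[Pair]) -> float:
    """Mean before-to-after change among treated minus the same change among controls."""
    treated_change = mean(after - before for before, after in treated)
    control_change = mean(after - before for before, after in control)
    return treated_change - control_change


# Made-up numbers, for illustration only.
with_timeout = [(-5.0, -2.0), (-4.0, -3.0), (-6.0, -2.0)]
without_timeout = [(-5.0, -4.0), (-4.0, -4.0), (-6.0, -5.0)]
print(did_estimate(with_timeout, without_timeout))
```

Subtracting the control change removes any recovery that would have occurred without a timeout, which is exactly the component a simple before-and-after comparison cannot separate out.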

Formally, let

Equivalently, outcome trends are assumed to be the same for both groups in the counterfactual scenario without treatment. Formally, this condition can be expressed as the standard unconfoundedness assumption on potential outcomes:
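In generic notation (assumed here for illustration, not necessarily the paper's own), with $D_i$ the timeout indicator and $Y_{i,\text{pre}}(0)$, $Y_{i,\text{post}}(0)$ the untreated potential outcomes before and after the reference moment, the textbook form of the parallel trends condition is:

$$
E[Y_{i,\text{post}}(0) - Y_{i,\text{pre}}(0) \mid D_i = 1]
= E[Y_{i,\text{post}}(0) - Y_{i,\text{pre}}(0) \mid D_i = 0]
$$

Under this condition, the difference-in-differences contrast recovers the average effect on the treated:

$$
\tau_{\text{DiD}}
= \big(E[Y_{i,\text{post}} \mid D_i = 1] - E[Y_{i,\text{pre}} \mid D_i = 1]\big)
- \big(E[Y_{i,\text{post}} \mid D_i = 0] - E[Y_{i,\text{pre}} \mid D_i = 0]\big)
$$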

Possible violations of this assumption may arise if only one of the groups experiences a change in outcome trends for reasons unrelated to the treatment, or if there are differential changes in the composition of the treated and control groups. In the context of basketball games, such violations could occur if teams that call timeouts differ systematically from teams that do not in terms of coaching strategy, lineup quality, or other situational factors that independently affect subsequent performance. To increase the plausibility of the parallel trends assumption, we introduce a novel exercise that, to our knowledge, has not yet been explored. Specifically, we compute the difference-in-differences estimator comparing situations in which a team suffering a certain run calls a timeout with situations where the same team suffering a run has no timeouts left and is therefore unable to call one. This additional analysis further supports our main findings, as the results remain consistent even in this restricted setting. In this specific context, the parallel trends assumption is strengthened by the fact that both treated and control observations refer to the same team, coach, and roster, thereby addressing concerns about differential group composition. A potential concern with the comparison between timeout calls and situations in which no timeouts are left is that the latter may itself be endogenous to coaching strategy. We discuss further and show that our specification is robust to this possibility in Appendix B.2.

Moreover, our causal inference framework also relies on the Stable Unit Treatment Value Assumption (SUTVA). In this context, SUTVA requires that the effect of a timeout on a given run is not influenced by other nearby timeouts, called either by the same team or by the opponent. That is, each timeout constitutes a well-defined treatment whose effect is independent of the timing or occurrence of other timeouts. This assumption rules out interference across treatments and ensures that the potential outcomes associated with a given timeout are uniquely defined. We come back to the practical implementation of this assumption in Section “Direct tests”.

Returning to the comparison with situations in which no timeouts are left: because the inability to call a timeout is mechanically determined by prior timeout usage rather than by contemporaneous confounders, this comparison reduces the scope for differential changes in outcome trends unrelated to the treatment. As a result, the treated and control situations differ only in the availability of a timeout, lending additional credibility to the causal interpretation of the DiD estimates.

Alternative approaches in the literature have relied on propensity score matching to address selection bias. For example, Assis et al. (2020), Gibbs et al. (2022), and Weimer et al. (2023) apply propensity score matching on a range of observable factors, including team identities, betting lines, game time, run duration, estimated win probability, and player substitutions. These methods aim to balance observed covariates between treated and control groups drawn from different teams and game contexts.

However, we consider it very difficult to fully disentangle the effect of a game stoppage from coaches’ strategic changes and other team-level unobserved factors that could influence the outcome. Therefore, we match observations within the same team to account for team fixed effects, which holds the team’s characteristics and context constant; this is a standard application of the difference-in-differences approach and of the parallel trends assumption. As a result, we interpret our estimate as reflecting the effect of the timeout in altering the course of the game.

The only remaining challenge is to define appropriate performance indicators. Building on the concept of runs and score differential impact we construct and analyze three indicators.

Game Box-Score data

We retrieve a rich set of game Box-Score variables through the API. This allows us to obtain a comprehensive list of elements, capturing the names of the teams and their respective coaches, individual player statistics (labeled

Our use of Box-Score data

Since Play-by-Play data only allow for a local measure of the impact of timeouts, obtained by evaluating team performance before and after a timeout, we complement this with an analysis of their global effect on performance. To do so, we use Box-Score data to estimate the standard four-factor model of wins, introduced by Oliver (2004), described in Winston (2009) and Zuccolotto and Manisera (2020), and further developed in Migliorati et al. (2023) and Cecchin (2022). We then assess whether adding a fifth factor, capturing timeout efficiency, provides additional predictive power for wins beyond the standard four factors.
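One way to make "additional predictive power" concrete is a nested-model comparison: fit the regression with and without the extra factor and compute the F statistic for the added regressor. The sketch below is an assumption-laden illustration and not the paper's specification: the helper names are hypothetical, the toy design uses two baseline regressors instead of four to stay short, and all numbers are made up.

```python
from typing import List


def solve(a: List[List[float]], b: List[float]) -> List[float]:
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            f = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= f * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def ols_rss(X: List[List[float]], y: List[float]) -> float:
    """Residual sum of squares from regressing y on a constant and the columns of X."""
    Z = [[1.0] + row for row in X]
    k = len(Z[0])
    xtx = [[sum(z[i] * z[j] for z in Z) for j in range(k)] for i in range(k)]
    xty = [sum(z[i] * yi for z, yi in zip(Z, y)) for i in range(k)]
    beta = solve(xtx, xty)
    return sum((yi - sum(b * zi for b, zi in zip(beta, z))) ** 2 for z, yi in zip(Z, y))


def f_stat_added(X_base: List[List[float]], extra: List[float], y: List[float]) -> float:
    """F statistic for adding the single regressor `extra` to the baseline design."""
    X_full = [row + [e] for row, e in zip(X_base, extra)]
    rss0, rss1 = ols_rss(X_base, y), ols_rss(X_full, y)
    df1 = len(y) - (len(X_full[0]) + 1)  # observations minus parameters in the full model
    return (rss0 - rss1) / (rss1 / df1)


# Made-up data: two baseline factors, one candidate extra factor, eight team-seasons.
X_base = [[2.0, 1.0], [-1.0, 3.0], [3.0, -2.0], [-2.0, -1.0],
          [0.0, 2.0], [1.0, -3.0], [1.5, 0.5], [-0.5, -1.5]]
extra = [0.5, 0.3, 0.6, 0.4, 0.2, 0.7, 0.1, 0.8]
y = [19.0, 16.0, 21.0, 13.0, 17.0, 16.0, 18.0, 14.0]
f = f_stat_added(X_base, extra, y)
print(f)
```

With a single added regressor, this F statistic equals the square of the t statistic on its coefficient, so a small value corresponds to a factor that adds little once the baseline factors are included.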

To define a season-wide indicator of timeout success, we rely on the SDI. Formally, for team

In our baseline specification, we model performance using a one-minute window before and after each timeout. To ensure that our findings are not driven by the arbitrary choice of this window, we conduct a robustness analysis considering alternative time windows previously employed in the literature. For example, Gibbs et al. (2022) examine performance in the two minutes preceding a timeout and the one minute following it, while Weimer et al. (2023), within a different identification framework, evaluate performance over a three-minute interval after the timeout call. Across all these alternative specifications, our main results remain qualitatively and quantitatively unchanged, indicating that the estimated effects are not sensitive to the particular choice of the time window. 6

Results using Play-by-Play data

We use PbP data to analyze the general features of timeout calls. We analyze regular-season data from three seasons, excluding the final minute of each of the first three quarters and the final two minutes of the fourth quarter. 7

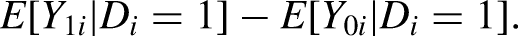

Figure 1 reports the share of total timeouts called in the three seasons (4300) when the team was home or away, or on the run or off the run. The evidence shows that being home or away makes very little difference in determining the coach’s attitude toward timeouts, while being off the run is a crucial factor as over 95 per cent of timeouts are called when the team is off the run. Figure 2 offers a more granular perspective by reporting, for home and away games, the counts of timeouts as a function of opponents’ scoring runs, defined as consecutive points conceded to the opponent.

When are timeouts called?

Home vs. Away Timeouts by opponents’ run.

The distribution exhibits a clear mode at five points, with the majority of timeouts occurring during runs of four to six points. Notably, a secondary local peak emerges at runs of two points, which may indicate a propensity among coaches to call a timeout immediately after their team relinquishes the lead. The distribution is homogeneous across home and away games. 8
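A tabulation of this kind takes only a few lines to produce. The sketch below assumes the opponent's run at each timeout has already been extracted as a list of integers; the function name and the numbers are made up for illustration and are not the EuroLeague counts.

```python
from collections import Counter
from typing import Dict, List


def run_distribution(runs_at_timeout: List[int]) -> Dict[int, float]:
    """Share of timeouts called at each opponent-run length (in points)."""
    counts = Counter(runs_at_timeout)
    total = len(runs_at_timeout)
    return {run: counts[run] / total for run in sorted(counts)}


# Made-up run lengths at the moment of each timeout, for illustration only.
toy_runs = [5, 4, 5, 6, 2, 5, 7, 2, 4, 5]
shares = run_distribution(toy_runs)
modal_run = max(shares, key=shares.get)
print(modal_run, shares[modal_run])
```

Running the same tabulation separately on home and away timeouts gives the two distributions being compared.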

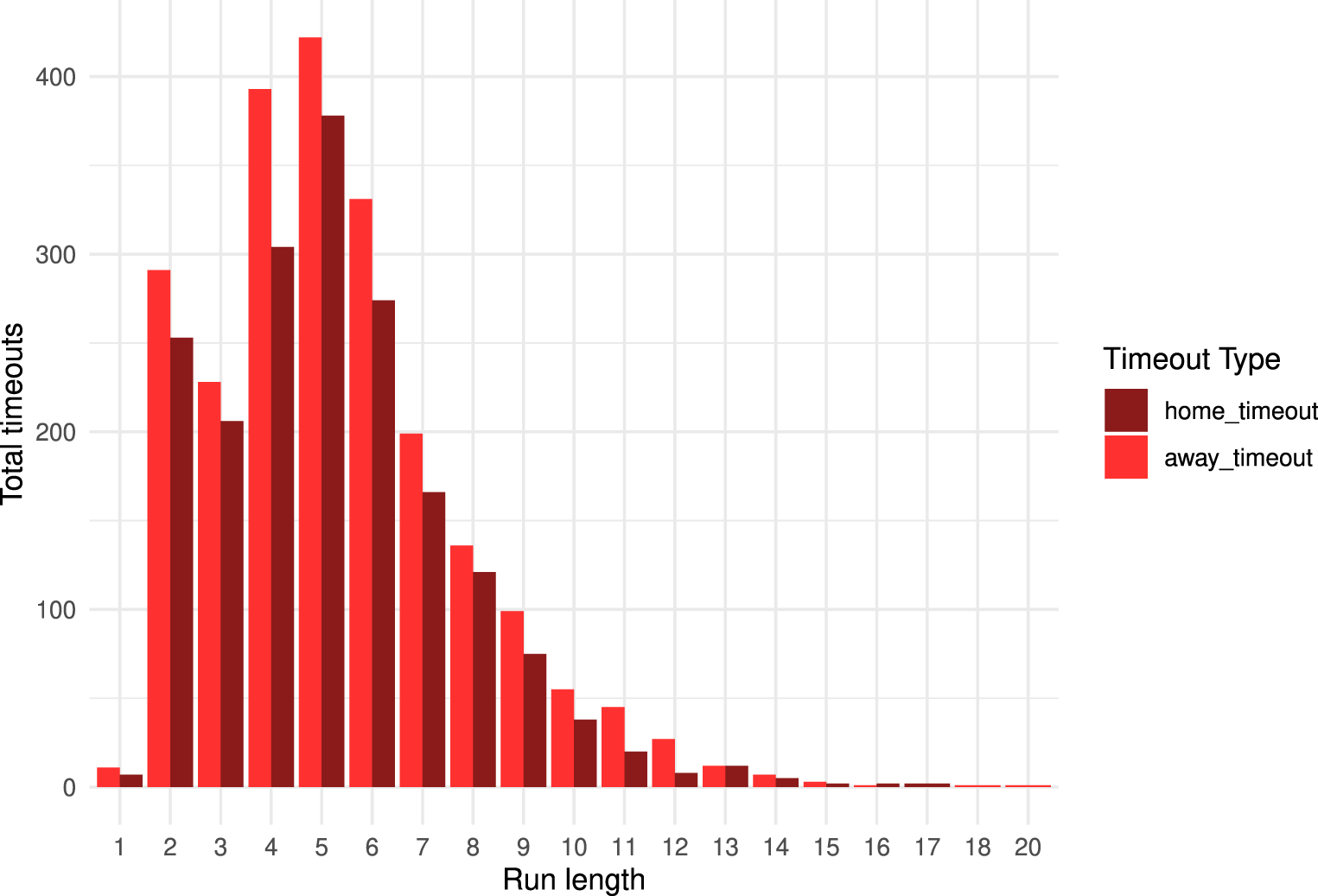

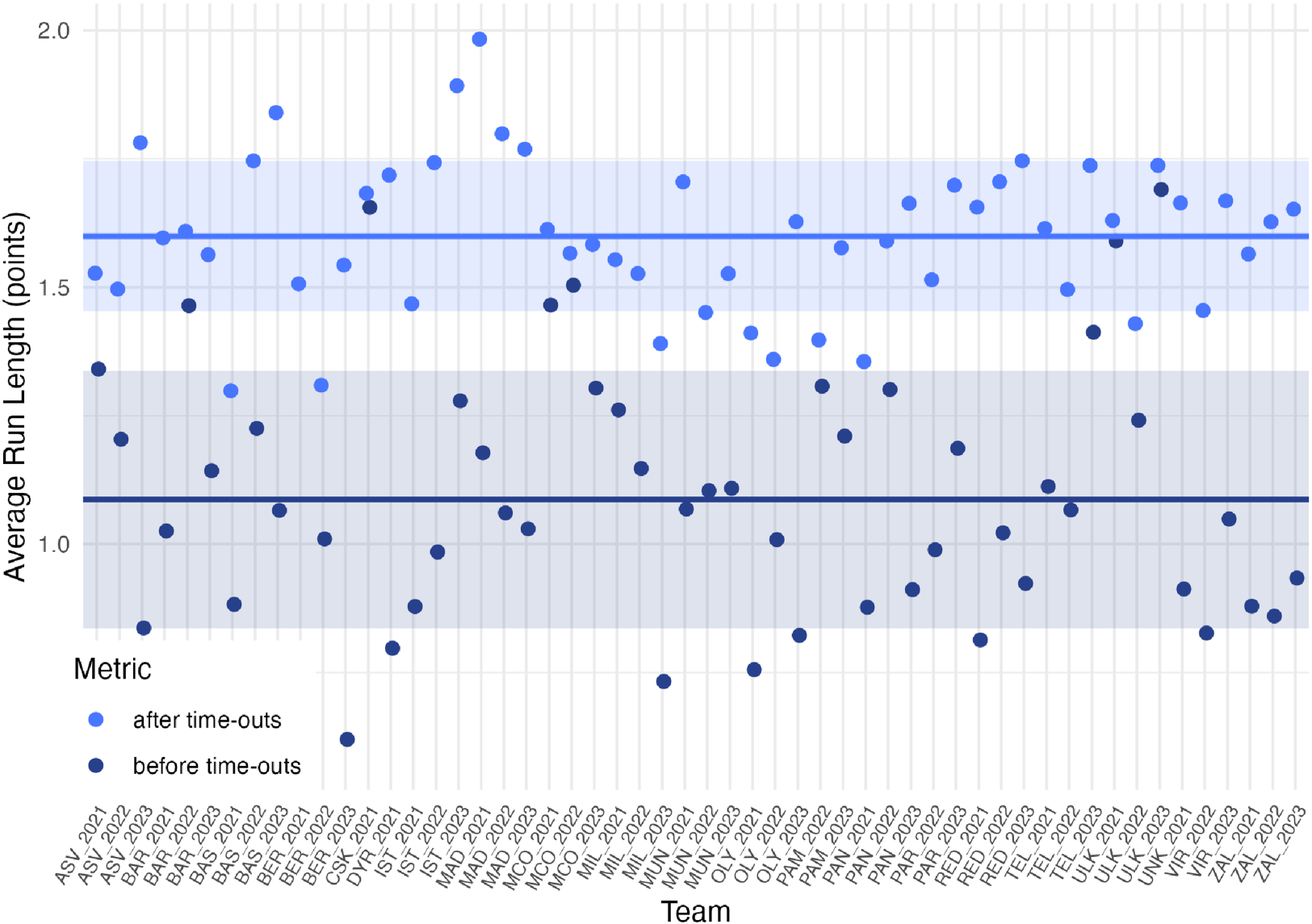

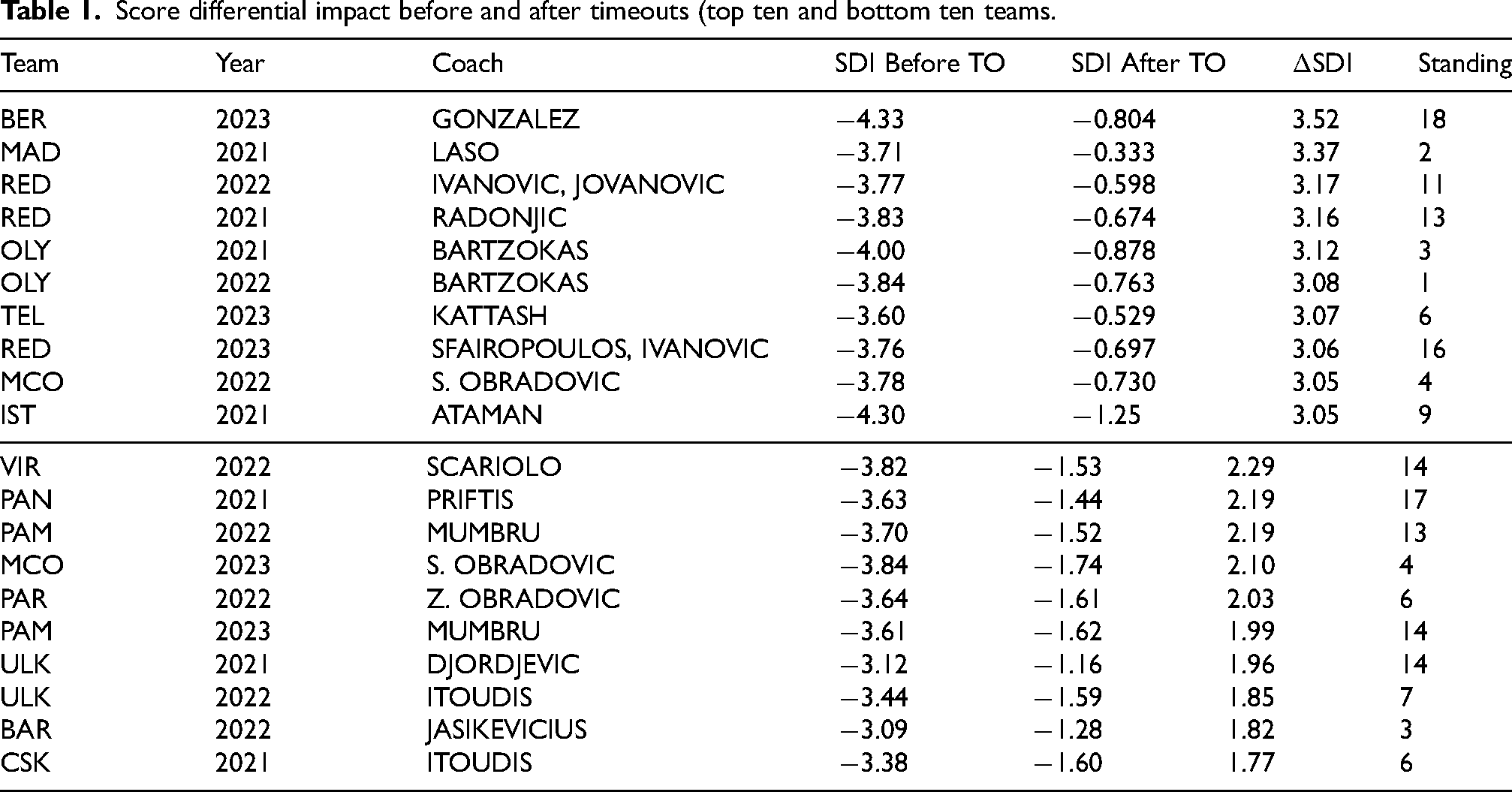

Figures 3–5 present graphical evidence on the effects of timeouts on our three performance indicators, which are computed by taking the averages for each team in each season for a total of 54 observations (18 teams over 3 seasons). Each figure reports the individual observations for the indicator, together with the corresponding sample mean and a confidence interval defined as

Average length of off-the-run streaks in the minute before and after a timeout.

Average length of on-the-run streaks in the minute before and after a timeout.

SDI: difference between points scored by a team and points conceded in the minute following a timeout, compared to the minute preceding the timeout.

Figures 3–4 indicate that timeouts exert some influence on the average lengths of both off-the-run and on-the-run streaks. While the figures provide a visual impression of timeout effectiveness as captured by these two indicators, the considerable width of the confidence intervals highlights the substantial uncertainty surrounding these estimates. The most informative evidence on the impact of timeouts on the score is provided by the Score Differential Impact reported in Figure 5. The figure shows that the gap between points scored by a team and points conceded is narrower in the minute following a timeout than in the minute preceding it. However, the score differential never turns positive on average for any team, indicating that timeouts help limit losses but do not turn games around.

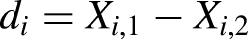

Table 1 complements the evidence by reporting the SDI for the top ten and the bottom ten teams in the league according to this criterion of timeout performance. Although the negative score differential is always smaller in magnitude after timeouts than before them, it never turns positive, not even for Israel Gonzalez, who is the most efficient coach in calling timeouts. It is also interesting to note that there is no clear pattern of association between timeout effectiveness and a team's standing in the league at the end of the season. Indeed, Alba Berlin, the best-performing team around timeouts, came last in the 2022–23 regular season.

Score differential impact before and after timeouts (top ten and bottom ten teams).

Direct tests

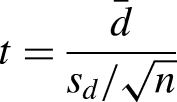

Our first strategy to gather statistical evidence on the causal effect of timeouts is to test directly the significance of the average treatment effect on the treated. In particular, we apply to the difference in team performance after and before timeouts the statistical tests used to measure performance differences in the same subjects under different conditions in medical research (pre/post treatment effects), finance (comparing investment returns), and psychology (effect of interventions). We implement two tests: the paired t-test, originally introduced by Gosset (1908), 9 and the Wilcoxon test (Wilcoxon, 1945). 10
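Both test statistics are simple to compute from paired observations. The sketch below uses only the Python standard library; the function names and the toy numbers are assumptions made for illustration. It returns the statistics only, and p-values would still have to be read from the Student t and signed-rank reference distributions.

```python
import math
from statistics import mean, stdev
from typing import List, Tuple


def paired_t(before: List[float], after: List[float]) -> Tuple[float, int]:
    """Paired t statistic for the mean of (before - after), with its degrees of freedom."""
    d = [b - a for b, a in zip(before, after)]
    n = len(d)
    return mean(d) / (stdev(d) / math.sqrt(n)), n - 1


def wilcoxon_w_plus(before: List[float], after: List[float]) -> float:
    """Sum of the ranks of positive differences (zeros dropped, ties get average ranks)."""
    d = [b - a for b, a in zip(before, after) if b != a]
    order = sorted(range(len(d)), key=lambda i: abs(d[i]))
    ranks = [0.0] * len(d)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and abs(d[order[j + 1]]) == abs(d[order[i]]):
            j += 1
        avg_rank = (i + j) / 2 + 1  # average of the 1-based ranks i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = avg_rank
        i = j + 1
    return sum(r for r, x in zip(ranks, d) if x > 0)


# Made-up paired averages (before, after) for five hypothetical team-seasons.
before = [-4.0, -3.0, -5.0, -2.0, -6.0]
after = [-1.0, -2.0, -1.5, -2.5, -2.0]
t_stat, dof = paired_t(before, after)
w_plus = wilcoxon_w_plus(before, after)
print(t_stat, dof, w_plus)
```

With 54 team-season pairs, as in the paper, the same function would return 53 degrees of freedom. The Wilcoxon statistic uses only the ranks of the differences, which makes it less sensitive to a few extreme team-seasons than the t statistic.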

As pointed out in Gibbs et al. (2022), a necessary assumption for our causal inference framework is the Stable Unit Treatment Value Assumption (SUTVA): a timeout’s effect on a given run should not be influenced by other nearby timeouts. To limit potential interference, we retain only timeout observations that are isolated: specifically, we exclude any timeout for which another timeout occurs within a

The

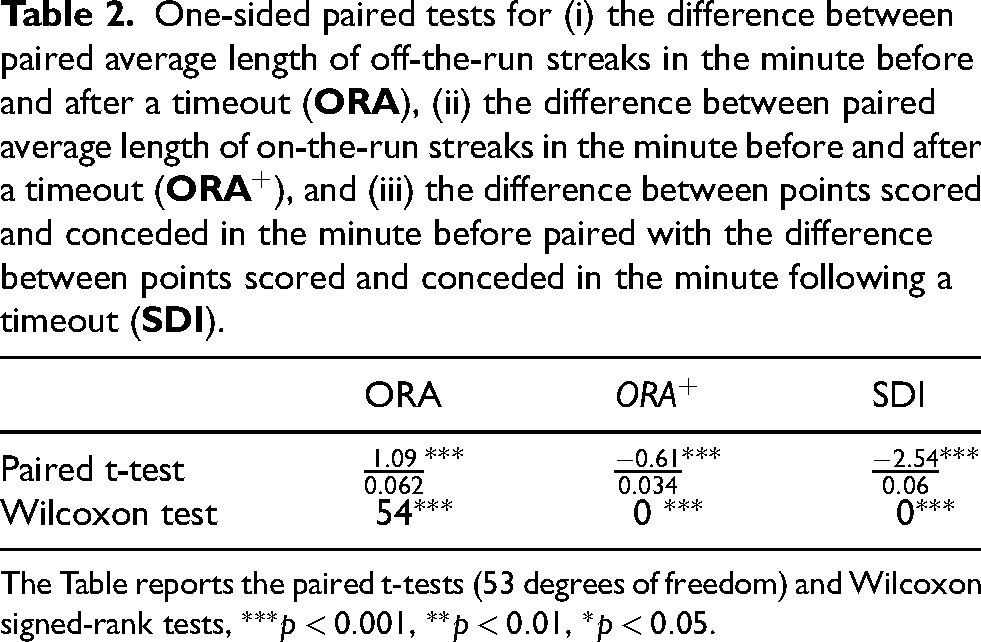

Table 2 reports the results of the implementation of the two tests to (i) the difference between paired Average length of off-the-run streaks in the minute before and after a timeout (

One-sided paired tests for (i) the difference between paired average length of off-the-run streaks in the minute before and after a timeout (

The Table reports the paired t-tests (53 degrees of freedom) and Wilcoxon signed-rank tests,

The statistical results indicate that the null hypothesis is consistently rejected, implying that timeouts have a statistically significant effect in limiting losses. To assess the magnitude of this effect, consider the case of the SDI. The estimated average difference between the point differential (points scored minus points conceded) in the minute before the timeout and in the minute after is –2.54. This implies that timeouts locally improve team performance by 2.54 points on average, and the null hypothesis that this effect equals zero is rejected at the 1 per cent significance level. However, timeouts do not reverse the overall scoring imbalance. Teams calling a timeout continue to concede more points than they score: although the point differential in the minute after the timeout is less negative than in the minute before, it remains negative, indicating that timeouts mitigate losses but do not fully eliminate them.
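As a worked check on the sign convention, write $\Delta_{\text{pre}}$ and $\Delta_{\text{post}}$ (symbols introduced here for illustration) for the average point differential in the minute before and the minute after the timeout. The reported estimate then reads:

$$
\text{SDI} = \Delta_{\text{pre}} - \Delta_{\text{post}} = -2.54
\quad\Longrightarrow\quad
\Delta_{\text{post}} = \Delta_{\text{pre}} + 2.54
$$

The post-timeout differential therefore stays below zero whenever $\Delta_{\text{pre}} < -2.54$, which corresponds to a smaller deficit after the timeout rather than a reversal.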

This evidence holds up under a series of checks designed to assess the stability of our results. Across all specifications, the sign, magnitude, and statistical significance of the estimated effects remain in line with our main findings.

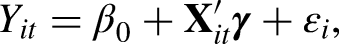

Our robustness analysis is well illustrated by a model representation of our approach for the SDI index of performance. We consider a linear model for the conditional expectation of SDI that incorporates both the timeout indicator and a vector of relevant covariates. Let

The coefficient of interest is

The results from the estimation of the linear model are reported in Table 3. The model is estimated on all data points (without averaging across teams and seasons), for a total of 2879 observations.

Linear models for SDI.

Notes: Standard errors in parentheses.

The intercept of the model can be interpreted as the expected value of the Score Differential Impact (SDI) and it matches the

The evidence from Diff-in-Diff test

Our second empirical strategy is based on a setting that approximates a quasi-natural experiment: we compare runs conceded by a team to runs conceded when no timeouts are left, i.e., when the coach cannot halt momentum to reorganize the team or implement strategic adjustments. This comparison provides a plausibly exogenous counterfactual for the effect of calling a timeout. As shown in Figure 6 and Table 4, the results align with our main finding, both graphically and statistically. Figure 6 shows that timeouts have some impact on the average length of off-the-run streaks: these streaks are considerably longer when the coach cannot call a timeout than when timeouts are available.

Difference between off-the-run streaks conceded by a team and off-the-run streaks conceded when no timeouts are left for the coach.

One-sided paired tests for the difference between paired averages:

The table reports the paired t-test (53 degrees of freedom) and Wilcoxon signed-rank test;

Table 4 reports the results of the implementation of the two tests of the previous section to the difference between paired Average length of off-the-run streaks conceded by a team during the whole game vs off-the-run streaks conceded when no timeouts are left.

Both tests point in the same direction: the paired mean difference is negative (

Limitations

Although our results are robust across multiple empirical approaches, they should be interpreted in light of their limitations. First, our analysis focuses on short-run outcomes measured in narrowly defined post-timeout windows, which limits the potential impact of confounding factors but may not fully capture longer-lasting tactical or psychological effects that unfold over extended stretches of the game.

Second, although we control for a rich set of contextual variables, in the presence of relevant omitted variables even combined non-parametric and parametric approaches may not fully isolate causal effects. A related limitation concerns the potential endogeneity of timeout usage to gameplay dynamics. In particular, timeouts may not be entirely exogenous to performance changes, especially during the regular season. Early in the season, timeout usage may reflect coaching experimentation with plays, lineups, or tactical adjustments rather than efforts to interrupt opponent momentum. Consequently, the absence of remaining timeouts, while central to our identification strategy, could partly capture earlier strategic choices or learning processes that vary over the course of the season. We address this concern by explicitly examining later-season matchups, as detailed in Appendix B.2, where incentives are clearer and strategic behavior is more stable. Nonetheless, further strengthening the exogeneity of timeout availability remains an important avenue for future research. In particular, focusing on playoff games or on high-stakes regular-season matchups, such as contests involving teams at the top of the standings or well-established rivalries, could further limit the role of experimentation in timeout usage and provide an even cleaner setting for causal identification.

Third, our analysis relies exclusively on regular-season EuroLeague data, which complements the evidence for the NBA but may limit the generalization of the findings to lower-tier leagues or to other stages of the competition.

Results using Box-Score data

The evidence from Play-by-Play data suggests that timeouts have a statistically significant, yet modest, local effect. This finding naturally raises the question of their impact on overall team performance. Although timeouts appear to mitigate losses during critical phases of the game, they do not necessarily alter its eventual course. The relevant issue, then, is whether one can identify any systematic influence of timeouts on team performance over the regular season, as measured by Box-Score data.

To address this question, we first specify a standard model that links basketball performance, measured by wins in a regular season, 11 to a few key factors, and then investigate whether a factor measuring timeout efficiency adds explanatory power.

Our benchmark model is Oliver’s four-factor model of wins (Oliver, 2004), which models teams’ wins in the regular season as a linear function of four indicators. These indicators are used to parsimoniously aggregate team statistics across different performance dimensions. The dimensions considered are Shooting, Turnovers, Rebounding, and Free Throws and Fouls.

The four factors are constructed for each team

– EFG =
– OEFG =

– TPP =
– OTPP =
– Employed Possession =
– Acquired Possession = Opponent Turnovers + Defensive Rebounds + Team Rebounds + Opponent Field Goal Attempts + 0.45 × Opponent Free Throws

– ORP =
– DRP =

– FTR =
– OFTR =

The first factor captures the relative shooting efficiency, measured via the Effective Field Goal Percentage (EFG), compared to the Opponents’ Effective Field Goal Percentage (OEFG). EFG is calculated by appropriately weighting two-point and three-point shots made.

The second factor measures the relative efficiency of teams and their opponents in utilizing possessions, using Turnover Per Possession (TPP) as the efficiency indicator. Employed and Acquired Possessions are nearly equal by construction. A possession refers to the period during which one team controls the ball and attempts to score. It starts when a team gains the ball and ends when the team either scores or turns it over. A possession may also include multiple plays, and offensive rebounds can extend the same possession. Free throws are weighted at 0.45 in the definition of possession due to the occurrence of “and-one” situations.

The third factor accounts for the relative rebounding abilities by combining Offensive Rebound Percentage (ORP) and Defensive Rebound Percentage (DRP).

Finally, the fourth factor focuses on free throws and fouls, using the Free Throw Ratio (FTR), which is the ratio of Foul Shots Made to Field Goal Attempts. This factor captures both the effectiveness of drawing fouls on shooters and capitalizing on free throws.
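As an illustration, the eight indicators can be computed from season totals along the following lines. This is a minimal sketch, not the paper's exact construction: the `BoxScore` field names are hypothetical, EFG and the rebound percentages follow the conventional four-factor definitions, and the possession count uses a common box-score approximation that keeps only the 0.45 free-throw weight from the text. FTR follows the definition given above (free throws made over field goal attempts).

```python
from dataclasses import dataclass


@dataclass
class BoxScore:
    """Season totals for one side (a team or its opponents); names are hypothetical."""
    fgm: float   # field goals made (two- and three-pointers)
    fg3m: float  # three-point field goals made
    fga: float   # field goal attempts
    ftm: float   # free throws made
    fta: float   # free throw attempts
    tov: float   # turnovers
    oreb: float  # offensive rebounds
    dreb: float  # defensive rebounds


def possessions(side: BoxScore) -> float:
    # Assumed approximation: shot attempts not recovered by an offensive
    # rebound, plus turnovers, plus free-throw attempts weighted at 0.45.
    return side.fga - side.oreb + side.tov + 0.45 * side.fta


def four_factor_inputs(team: BoxScore, opp: BoxScore) -> dict:
    """Return the eight indicators that feed the four factors."""
    return {
        # Shooting: a made three counts as 1.5 made field goals.
        "EFG": (team.fgm + 0.5 * team.fg3m) / team.fga,
        "OEFG": (opp.fgm + 0.5 * opp.fg3m) / opp.fga,
        # Turnovers per possession.
        "TPP": team.tov / possessions(team),
        "OTPP": opp.tov / possessions(opp),
        # Share of available rebounds collected at each end of the floor.
        "ORP": team.oreb / (team.oreb + opp.dreb),
        "DRP": team.dreb / (team.dreb + opp.oreb),
        # Free throws made relative to field goal attempts.
        "FTR": team.ftm / team.fga,
        "OFTR": opp.ftm / opp.fga,
    }


# Made-up totals, for illustration only.
team = BoxScore(fgm=900, fg3m=300, fga=2000, ftm=450, fta=600, tov=400, oreb=350, dreb=800)
opp = BoxScore(fgm=880, fg3m=280, fga=2000, ftm=420, fta=560, tov=420, oreb=300, dreb=750)
print(four_factor_inputs(team, opp))
```

Each factor would then be formed by comparing a team's indicator with the corresponding opponent indicator.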

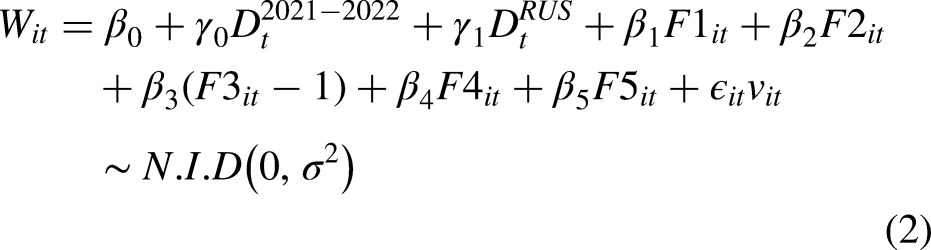

To assess the explanatory power of the four factors in accounting for team performance, we begin with the standard approach and estimate the following regression using pooled cross-sectional data from all available seasons. We later relax the pooling assumption as part of our robustness analysis.

The following model, where the total number of wins for each team in each season

Note that in the 2021–2022 EuroLeague season, the expulsion of the Russian teams (CSKA Moscow, Zenit Saint Petersburg, and UNICS Kazan) resulted in a reduced number of regular-season games. The reduction in the number of games was different for the Russian teams and the other teams. Therefore, we introduce two dummies in our specification:

The model has a very natural interpretation: all factors take the value of zero for the average team in the league, because the average team in the league performs exactly as the average opponent.
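This interpretation can be checked numerically. The sketch below fits a pooled least-squares regression on synthetic team-season data in which each factor is centred on zero. The sample size, coefficient values, and noise level are made up for illustration, and the season dummies are omitted. With an intercept and zero-mean regressors, the fitted constant equals the sample mean of wins.

```python
import numpy as np


def pooled_ols(factors: np.ndarray, wins: np.ndarray) -> np.ndarray:
    """Return [constant, b_1, ..., b_k] from a pooled least-squares fit."""
    X = np.column_stack([np.ones(len(wins)), factors])
    coef, *_ = np.linalg.lstsq(X, wins, rcond=None)
    return coef


# Synthetic data: 54 team-season rows and four factors (all values made up).
rng = np.random.default_rng(0)
factors = rng.normal(scale=0.03, size=(54, 4))
factors -= factors.mean(axis=0)  # the average team sits at zero on every factor
true_beta = np.array([150.0, 100.0, 40.0, 30.0])
wins = 17.0 + factors @ true_beta + rng.normal(scale=1.0, size=54)

coef = pooled_ols(factors, wins)
# The first number is the fitted constant, the second the average number of wins.
print(coef[0], wins.mean())
```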


The constant in the regression captures the number of wins in the season of the average team, which is half of the games played, while the coefficient on each factor captures that factor’s contribution to explaining, for each team and each season, a higher number of wins relative to the average team. The expected signs of the coefficients on the factors are positive for

– SDIA = the average number of successful timeouts per game SDI for team i in season t

We then estimate the following augmented model:

In this specification the significance of the coefficient on

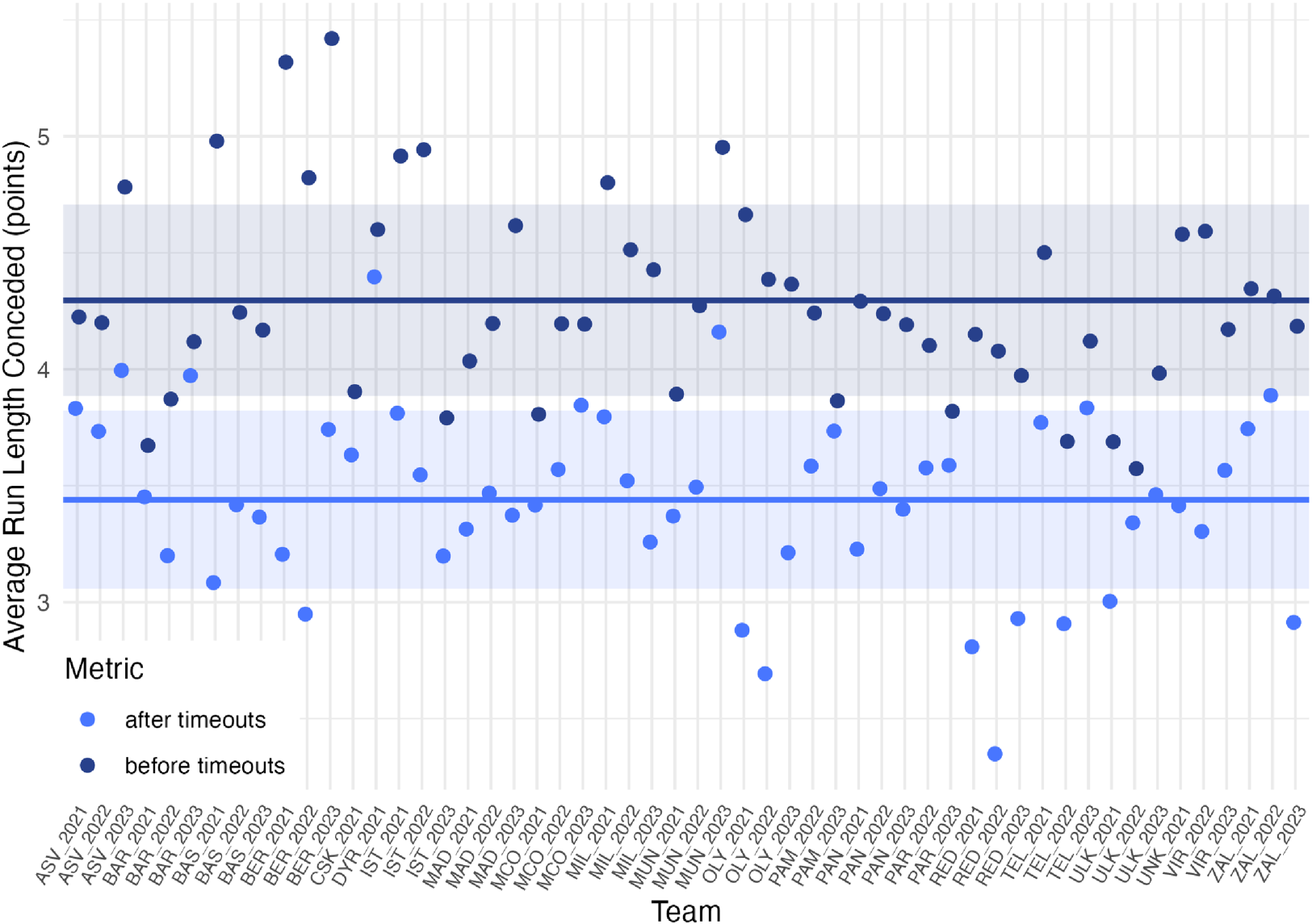

The four-factor model and the five-factor model with a timeout efficiency factor.

The estimation of the four-factor models reveals that all coefficients are statistically significant with positive values for the coefficients on

The fifth factor (timeouts) and the residuals from the standard four-factor model for wins.

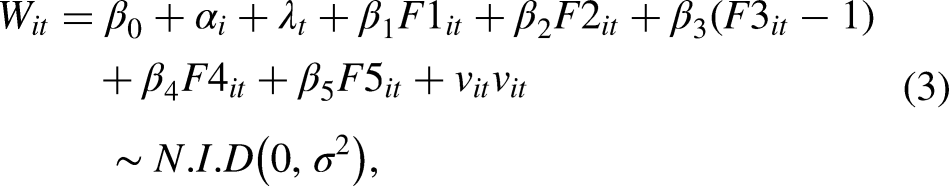
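A residual comparison of this kind can be reproduced as in the sketch below, which fits the four-factor regression, extracts its residuals, and correlates them with a candidate fifth factor. All data here are synthetic, and the function name `residual_check` is hypothetical. A flat relationship is only suggestive, so the augmented regression remains the formal test.

```python
import numpy as np


def residual_check(factors4: np.ndarray, wins: np.ndarray, fifth: np.ndarray):
    """Residuals of the four-factor fit and their correlation with a fifth factor."""
    X = np.column_stack([np.ones(len(wins)), factors4])
    coef, *_ = np.linalg.lstsq(X, wins, rcond=None)
    resid = wins - X @ coef
    return resid, float(np.corrcoef(resid, fifth)[0, 1])


# Synthetic data (all values made up); the fifth factor is drawn independently of wins.
rng = np.random.default_rng(2)
factors4 = rng.normal(scale=0.03, size=(54, 4))
fifth = rng.normal(size=54)
wins = 17.0 + factors4 @ np.array([150.0, 100.0, 40.0, 30.0]) + rng.normal(size=54)

resid, corr = residual_check(factors4, wins, fifth)
print(round(corr, 3))
```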

Despite the intuitive structure and interpretability of the pooled cross-sectional specification, by stacking team–year observations and treating them as independent, the model implicitly assumes the absence of unobserved team-specific heterogeneity, independence across repeated observations for the same team, and stability of the relationships between performance factors and wins across seasons. For this reason, we additionally estimate a fixed-effects model that includes both team fixed effects (capturing all time-invariant unobserved characteristics) and year fixed effects (capturing league-wide shocks, rule changes, or seasonal disruptions such as the 2021–2022 Russian team expulsions). This panel-data specification relaxes the strong independence assumptions of the pooled model and allows the estimated coefficients to be interpreted as within-team effects over time. We also report standard errors clustered at the team level. The fixed-effects estimates serve as a robustness check and provide a more credible assessment of the marginal contribution of the four traditional factors and the proposed timeout efficiency factor to team performance. The model specification becomes the following:

Five-factor model with team and year fixed effects.

Team and year fixed effects included. Clustered SEs at team level.

The fixed-effects regression delivers results that are remarkably similar to those obtained from expanding the standard four-factor model (Oliver, 2004). The signs, magnitudes, and statistical significance of the first four coefficients remain essentially unchanged, and the estimated coefficient on the timeout efficiency factor continues to be statistically indistinguishable from zero. This robustness to the inclusion of team and year fixed effects confirms that the earlier findings are not driven by omitted, time-invariant structural characteristics of teams or by season-level common shocks.
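For readers who wish to replicate this type of robustness check, the following is a minimal sketch of a two-way fixed-effects regression with standard errors clustered by team. It is an assumption-laden illustration rather than the estimation code used in the paper: the function name `two_way_fe` is hypothetical, the data are synthetic, the fixed effects enter as explicit dummies, and the only small-sample correction applied is G/(G-1), where G is the number of clusters. Statistical packages differ in their corrections, so their standard errors may not match exactly.

```python
import numpy as np


def two_way_fe(y, X, team, year):
    """OLS with team and year dummies; returns slopes and team-clustered SEs."""
    teams, t_idx = np.unique(team, return_inverse=True)
    years, y_idx = np.unique(year, return_inverse=True)
    team_d = np.eye(len(teams))[t_idx]          # full set of team dummies (absorbs the constant)
    year_d = np.eye(len(years))[y_idx][:, 1:]   # drop one year to avoid collinearity
    Z = np.column_stack([X, team_d, year_d])

    beta, *_ = np.linalg.lstsq(Z, y, rcond=None)
    resid = y - Z @ beta

    # Sandwich estimator: scores are summed within each team before squaring.
    bread = np.linalg.pinv(Z.T @ Z)
    meat = np.zeros((Z.shape[1], Z.shape[1]))
    for g in range(len(teams)):
        rows = t_idx == g
        score = Z[rows].T @ resid[rows]
        meat += np.outer(score, score)
    G = len(teams)
    V = (G / (G - 1)) * bread @ meat @ bread

    k = X.shape[1]
    return beta[:k], np.sqrt(np.diag(V)[:k])


# Synthetic balanced panel: 18 teams, 3 seasons, 5 factors (all values made up).
rng = np.random.default_rng(1)
team = np.repeat(np.arange(18), 3)
year = np.tile(np.arange(3), 18)
X = rng.normal(size=(54, 5))
true_beta = np.array([2.0, 1.5, 1.0, 0.5, 0.0])  # fifth slope set to zero
y = (X @ true_beta
     + rng.normal(size=18)[team]       # team effects
     + rng.normal(size=3)[year]        # year effects
     + rng.normal(scale=0.01, size=54))

beta_hat, se = two_way_fe(y, X, team, year)
print(np.round(beta_hat, 3), np.round(se, 4))
```

Entering the dummies explicitly keeps the example transparent. With many teams, demeaning within team and year would give the same slopes at lower cost.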

Conclusion

This paper evaluated EuroLeague coaches’ timeout strategies, focusing on their local impact during games and their broader effect on team performance over a season. Using Play-by-Play data, we find that timeouts help reduce scoring deficits but do not, on average, reverse the score differential in favor of the calling team. In other words, timeouts mitigate losses but do not turn games around. To assess whether timeouts contribute to long-term team success, we extend the standard four-factor model of wins, which captures the drivers of team performance over the regular season, by incorporating an SDI-based factor. The results show that the four standard factors have strong explanatory power for team performance and that the timeout factor provides no additional predictive power. So, do timeouts matter in the EuroLeague? Yes, but only in a limited sense. Timeouts are moderately useful for slowing opponents’ momentum, but they do not significantly alter game outcomes or contribute to long-term team success. Two practical implications for coaches follow from our evidence: substitutions during a timeout do not increase its local impact on the game, and the local effect of a timeout is larger when it is called after an opposing scoring run of at least six points.

This study provides a foundation for several extensions in future work. First, future research may examine the external validity of our evidence by assessing whether timeouts matter more in different contexts. In particular, playoff games, with their higher stakes, tighter rotations, and slower pace, may offer a setting where timeouts have a stronger or more strategic impact than in the regular season. Moreover, focusing on playoff games or on high-stakes regular-season matchups, such as games involving teams at the top of the standings or well-established rivalries, could further limit the role of experimentation in timeout usage and provide an even cleaner setting for causal identification. Second, future work could explore alternative statistical models, such as in-game win probability models, state-dependent scoring models, and regression discontinuity approaches, that may capture more complex ways in which timeouts influence performance. Third, and more importantly from an identification perspective, future research could go beyond estimating the reduced-form effect of a timeout being called and aim to disentangle the effect of the timeout itself from the strategic adjustments coaches make during the timeout. Timeouts simultaneously interrupt momentum and provide an opportunity for tactical changes, such as calling specific offensive schemes or altering defensive strategies (for example, switching from man-to-man to zone defense). Isolating these channels would require more granular Play-by-Play and tactical data. Leveraging such information would allow researchers to separate momentum-stopping effects from strategic coaching decisions, thereby providing a deeper understanding of the mechanisms through which timeouts affect game outcomes.

Footnotes

Funding

The author(s) received no financial support for the research, authorship, and/or publication of this article.

Declaration of conflicting interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and publication of this article.