Abstract

Central to diving competitions is the diver's “dive list”, the list of dives an athlete will perform during a competition. Coaches and divers work together to balance dive list difficulty so that a diver remains competitive without exceeding his or her capabilities. In this work, we examine the discrepancy between a diver's ability and judges’ scores in springboard diving meets, with the purpose of discovering scoring biases that might allow a diver to construct an advantageous dive list. A diver's ability, called the competency score, is estimated by the mean score over all dives and all meets in which the diver has participated. We use the term “discrepancy” for the difference between a judge's score in each meet and the diver's competency score; discrepancy therefore reflects the gap between the judges’ assessment of a diver's performance and his or her estimated ability. The notions of competency and discrepancy are applied to a data set of US high school one-meter springboard diving competitions from 2017 to 2022. Using visualizations and a mixed-effects model, we show that a large part of the discrepancy in scores can be explained by the position, direction, and difficulty of the dive.

Keywords

Introduction

Springboard diving presents significant technical challenges due to the complex interplay of biomechanical factors. Divers must manage board clearance, vertical and angular velocity, and in-flight adjustments (Miller and Sprigings, 2001), among other considerations. Balancing these factors requires significant strength and agility. When done well, diving is entertaining, beautiful, and awe-inspiring to watch.

Research on judging bias in subjectively scored sports has gained importance due to increased media scrutiny and financial stakes (Heiniger and Mercier, 2021). Studies have examined various aspects of judge performance, including nationalistic bias in diving (Emerson and Meredith, 2011), scoring patterns in pairs ice skating (Looney, 2004), and the relationship between judges’ scores and objective measurements in competitive diving (Yung and Reinkensmeyer, 2014). Scoring patterns from judges in a national sport dance competition were examined within the Many-Facet Rasch Measurement framework to assess potential inconsistencies in the ratings (Anderlucci et al., 2021). These studies highlight the importance of monitoring judge performance and developing methods to enhance the fairness and accuracy of scoring in subjectively judged sports.

Diving, swimming, synchronized swimming, and water polo are collectively known as ‘aquatics’. Aquatics is one of the top three most-searched events during the Olympics and has the second highest number of newspaper articles published during the Games (Wood, 2015). Diving is also a popular high school and collegiate sport, and aquatics as a whole is ranked as the 9th easiest scholarship to get for both men and women, behind men's and women's ice hockey, men's and women's lacrosse, football, women's field hockey, and baseball (Cajic, 2024). Typically, of the NCAA Division I scholarships earmarked for swimming and diving, 85% go to swimmers and only 15% are reserved for divers (NCSA, 2023). In the 2023–24 school year, the latest year for which numbers are available, swimming and diving was the 9th most popular high school sport for girls, with 138,174 participants, and the 10th most popular for boys, with 116,799 participants (National Federation of State High School Associations, 2023). For all its popularity and beauty, aquatics performance in general, and diving performance in particular, have received relatively little data analysis.

Some of the lack of data analysis for the sport of diving might be due to a perceived lack of access to diving scores. While not all scores are easily accessible, numerous sources provide detailed diving results; for example, several websites are devoted to recording scores from diving meets. DiveMeets.com (Meet Control, LLC, 2020) is one of the most comprehensive. It has scores for all dives, all judges, and all meets in the US, including collegiate, high school, and youth diving meets. Registration is required to access the scores. The World Aquatics website (World Aquatics, 2021) has scores broken down by round, diver, and dive for elite diving meets around the world. The USA Diving website is another source of results on meets worldwide, including national Olympic trials (USA Diving, 2023). Swimming.org (Swim England, 2025), a website run by Swim England, has results from world and British diving championships, including the British National Cup from 2010–2023 and the British Elite Junior Diving Championships from 2006–2023. SwimSwam (Swim Swam Partners, LLC, 2025) maintains a scoring archive of results for swimming and diving at all levels, including Olympic, international, and all three divisions of US collegiate athletics.

Most diving literature has focused on nationalistic bias in judging at international competitions. Notable judging scandals in sports like ice skating (Brennan, 2018) and gymnastics (Armour, 2024; Zaccardi, 2012) have heightened public concern about fairness in all subjectively judged sports. Evidence of nationalistic favoritism was discovered using data from diving competitions in the 2000 Summer Olympic Games (Emerson and Meredith, 2011; Emerson et al., 2009). The data considered for this paper are from high school competitions and contain no information on judges' affiliations; therefore, it is not possible to spin juicy tales about bias for judges from rival high schools.

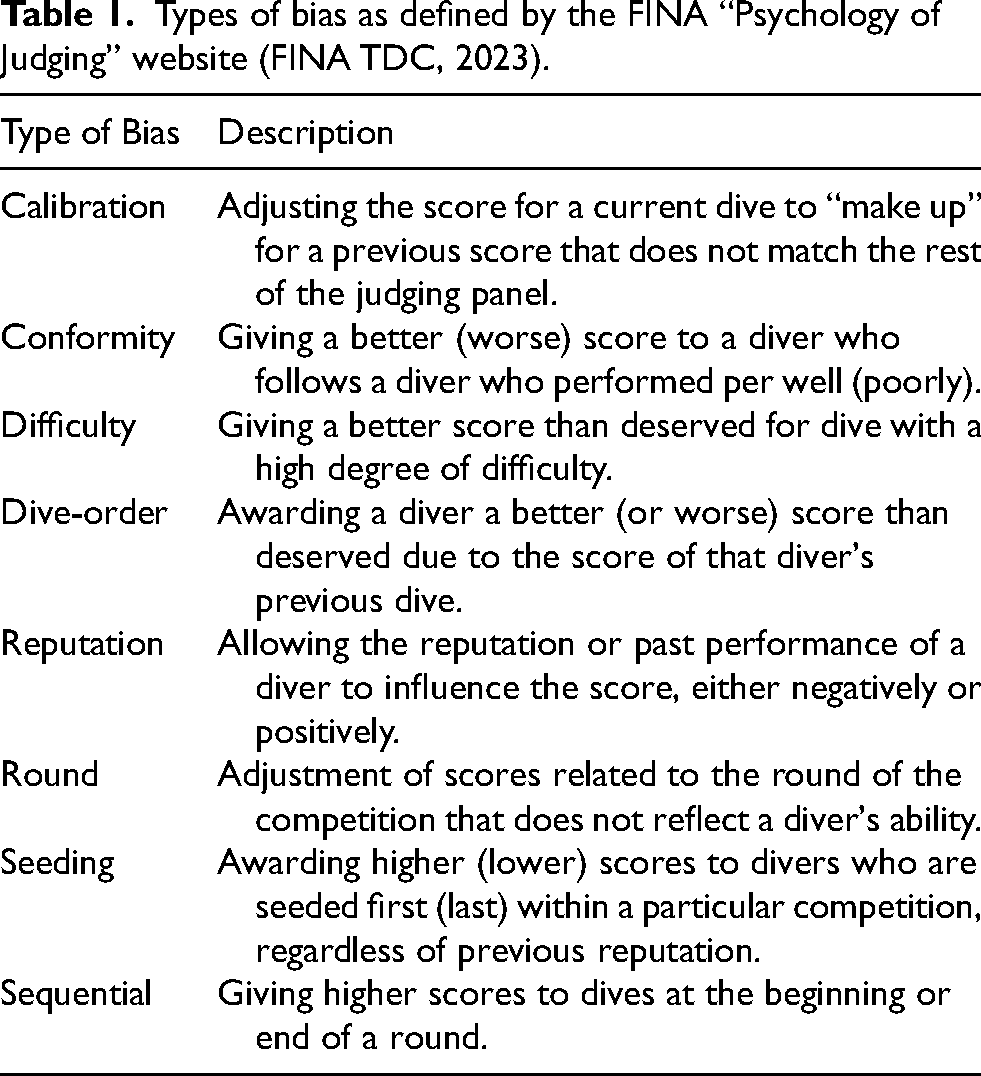

Although nationalistic bias receives the most scrutiny, it is not the only type of judging bias. World Aquatics (WA) warns against reputation bias, round bias, dive-order bias, difficulty bias, seeding bias, and conformity bias (FINA TDC, 2023). Table 1 explains the different types of bias. Waguespack and Salomon (2016) found reputation bias, a strong association between past performance and current performance, in Olympic sports, particularly those in which the winner is determined by subjective scores. Bruno (1986) used data from the 1983 USA Indoor Diving Nationals to examine the relationship between judges’ scores and the degree of difficulty (DD) of a dive. He found that judges tended not to penalize difficult dives as much as expected and suggested a revision of the DD tables. Other articles have investigated sequential bias (Kramer, 2017), where the score of the current athlete under consideration is affected (positively or negatively) by the score of the previous athlete in the sequence. One paper measured the width, height, and size of the splash to examine the effect of such measurements on the judges’ score (Driscoll et al., 2014).

Table 1. Types of bias as defined by the FINA “Psychology of Judging” website (FINA TDC, 2023).

Previous work has found evidence of difficulty bias in gymnastics, and furthermore that the difficulty bias is strong for female gymnasts and weak for male gymnasts. However, order bias does not seem to be present in the gymnastics data examined thus far (Morgan and Rotthoff, 2014; Rotthoff, 2020). The data set that we have does not have information on the order of the divers within a given competition; nor do we have information on a diver's seed for a given competition. Therefore, we cannot comment on conformity bias, order bias, or seeding bias. This paper focuses on discrepancy, the difference between the judges’ assessment of a diver's performance and a diver's ability (Bruno, 1986; Emerson and Meredith, 2011). The notion of discrepancy is important for athletes when creating their dive lists (explained in the next section) for a meet. For example, if there is a systematic tendency for judges to give larger scores than expected at the beginning of a meet, then the diver can plan for more difficult dives at the beginning of a meet. If there is a tendency for certain dive positions (e.g., tuck, pike, etc.) or directions (e.g., forward, backward, reverse, inward, twist) to be judged more favorably, the diver can plan to perform dives in those positions or directions within the confines of meet rules. Positions and directions are discussed in more detail in the section on scoring high school diving competitions.

The data for this analysis are from one-meter springboard diving competitions at the high school level in the United States. The data set contains competitions from 25 of the 50 states, with California, North Carolina, and Georgia hosting the most meets. There are two basic types of meets in high school diving: dual meets and competition meets. In dual meets, a small number of schools compete against one another, with one school hosting in its home natatorium. At these meets, qualified swimming officials are usually present to check strokes and touches for relay teams; however, the diving judges are typically the swimming and diving coaches of the participating teams, and the divers typically perform six dives. At competition meets, which can take place at any time during the season, divers perform 11 dives. For these more prestigious meets, judges must have the appropriate certification. There is no mandatory nationwide certification for high school diving judges; however, most states require prospective judges to complete a training course on diving rules, judging criteria, and scoring systems (National Federation of State High School Associations, 2024). For example, in Texas, the governing body for high school athletic and academic competitions is the University Interscholastic League (UIL). The UIL requires that diving judges for UIL-sponsored meets be certified through a course administered by the Texas Interscholastic Swimming and Diving Officials (TISDO) organization (University Interscholastic League, 2024). Other states have similar certifying bodies, such as the North Carolina High School Athletic Association, the California Interscholastic Federation, and the Georgia High School Association. The details of the certification are beyond the scope of this paper. Suffice it to say that judges at more prestigious diving meets typically receive formal training in the sport's rules, scoring systems, and judging criteria.
Therefore, to account for potential differences in the level of judge training, we restrict our analysis to more prestigious meets in which each diver must perform 11 dives.

First, we explain the intricacies of diving as a sport and the logistics of scoring a diving competition in terms of difficulty and execution. Next, we explain the variables in the data set. We then examine competency and discrepancy, terms formally defined in Equations (1) and (2), in the context of gender, age, and dive difficulty. After that, we examine difficulty bias both overall and in the context of dive order, which is synonymous with the round of the competition. The analysis culminates in a mixed model with random effects for diver and meet, and fixed effects for round, position, direction, age, gender, and DD. Finally, we conclude with a discussion and future directions for research.

High school diving and its scoring

Regardless of the competition level, every dive has three elements: direction, position, and rotation. The five directions are forward, backward, inward, reverse, and twist. Although a twist can be performed in any of the other four directions, it has historically been considered a fifth direction (Franklin, 2018). The four positions are tuck, pike, straight, and free; the free position can be any position. Rotation refers to the number of half-rotations that a diver performs in one of the four positions and directions. For example, a forward 1.5 flip in the tuck position is a dive in which the diver faces the pool on leaving the board, completes one and a half somersaults in a tuck position (thus 3 half-rotations), and enters the pool headfirst. Dives performed in the same direction with the same number of rotations but in a different body position are considered the same dive. For example, a forward 1.5 in a tuck position and a forward 1.5 in a pike position are the same dive. However, a backward 1.5 somersault in the tuck position and a forward 1.5 somersault in the tuck position are different dives. The more prestigious high school diving competitions typically have 11 rounds, and in each round every diver in the competition performs one dive from his or her list. Girls and boys participate in separate competitions in the sense that places are awarded to boys and girls separately, but both genders are scored by the same judging panel.
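To make the three elements concrete, the following minimal sketch decodes dive numbers such as 103B, the forward 1.5 in a pike position mentioned later in this section. It assumes the standard World Aquatics numbering scheme: a first digit for the direction group (1 forward, 2 backward, 3 reverse, 4 inward, 5 twist), a digit counting half-somersaults, an extra final digit counting half-twists for twisting dives, and a position letter (A straight, B pike, C tuck, D free). The helpers `parse_dive` and `same_dive` are hypothetical names for illustration, and armstand (platform-only) dives are not handled.

```python
from dataclasses import dataclass

# Assumed World Aquatics numbering: first digit = direction group, final letter = position.
DIRECTIONS = {"1": "forward", "2": "backward", "3": "reverse", "4": "inward", "5": "twist"}
POSITIONS = {"A": "straight", "B": "pike", "C": "tuck", "D": "free"}


@dataclass(frozen=True)
class DiveCode:
    direction: str
    half_somersaults: int
    half_twists: int
    position: str


def parse_dive(code: str) -> DiveCode:
    """Decode a springboard dive number such as '103B' or '5132D'."""
    digits, letter = code[:-1], code[-1].upper()
    direction = DIRECTIONS[digits[0]]
    if direction == "twist":
        # 5 + take-off group + half-somersaults + half-twists, e.g. 5132D
        half_somersaults, half_twists = int(digits[2]), int(digits[3])
    else:
        # group + flying digit (0 or 1) + half-somersaults, e.g. 103B
        half_somersaults, half_twists = int(digits[2]), 0
    return DiveCode(direction, half_somersaults, half_twists, POSITIONS[letter])


def same_dive(a: str, b: str) -> bool:
    """Dives that differ only in body position count as the same dive."""
    return a[:-1] == b[:-1]


print(parse_dive("103C"))  # forward, 3 half-somersaults (a 1.5), tuck
print(same_dive("103B", "103C"), same_dive("103C", "203C"))  # True False
```

Dropping the position letter is what makes the forward 1.5 tuck and the forward 1.5 pike compare as the same dive, while the backward and forward 1.5 tucks remain different.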

Critical to each diver is his or her dive list, the list of dives that a diver plans to perform in each meet. A dive list must be created prior to a meet and signed by the diver and his or her coach before it is given to the judges. Once the list is finalized, it cannot be changed, and the dives must be performed in the order in which they are given on the list. However, divers cannot perform just any dives they wish. For an 11-dive competition, every diver must perform 2 dives in each direction (forward, backward, reverse, inward, and twist) and an 11th dive of the diver's choice (Franklin, 2018). More precisely, the diver performs a series of voluntary dives and optional dives. The five voluntary dives consist of one dive from each of the 5 diving directions. The six optional dives consist of one dive from each of the 5 diving directions, with one direction represented twice. There is no standard set of voluntary or optional dives; in other words, a dive counted as voluntary for one diver might be counted as optional for another. Furthermore, the voluntary and optional dives can be performed in any order. The main limitations are that the total DD for the voluntary dives must be ≤ 9.0 and the total DD for the optional dives must be at least 11.5 for girls and 12.0 for boys. In addition, all five directions must be represented in the first 8 rounds of diving, and all 11 dives must be different dives (Franklin, 2018).
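The dive-list rules above lend themselves to an automated check. The sketch below encodes them only as stated in this section (Franklin, 2018) and is not an official NFHS tool. The `Dive` record, its `voluntary` flag, and `check_dive_list` are hypothetical names; direction and dive identity are read from the dive number under the assumed World Aquatics numbering (first digit gives the direction; dropping the position letter makes dives that differ only in position count as the same dive). Real DD values should come from the official tables.

```python
from dataclasses import dataclass

# Assumed WA numbering: the first digit of a dive number gives its direction group.
DIRECTIONS = {"1": "forward", "2": "backward", "3": "reverse", "4": "inward", "5": "twist"}


@dataclass(frozen=True)
class Dive:
    code: str        # dive number, e.g. "103C"
    dd: float        # degree of difficulty from the official table
    voluntary: bool  # True for a voluntary dive, False for an optional dive

    @property
    def direction(self) -> str:
        return DIRECTIONS[self.code[0]]

    @property
    def identity(self) -> str:
        # Same direction and rotations, ignoring position, is the same dive.
        return self.code[:-1]


def check_dive_list(dives: list, gender: str) -> list:
    """Return rule violations for an 11-dive list in performance order (empty = valid)."""
    all_dirs = set(DIRECTIONS.values())
    vol = [d for d in dives if d.voluntary]
    opt = [d for d in dives if not d.voluntary]
    problems = []
    if len(dives) != 11:
        problems.append("list must contain 11 dives")
    if len(vol) != 5 or {d.direction for d in vol} != all_dirs:
        problems.append("voluntaries must be one dive from each direction")
    if len(opt) != 6 or {d.direction for d in opt} != all_dirs:
        problems.append("optionals must be six dives covering all five directions")
    # Round the sums to avoid floating-point artifacts such as 11.999999999.
    if round(sum(d.dd for d in vol), 2) > 9.0:
        problems.append("total voluntary DD exceeds 9.0")
    min_optional_dd = 11.5 if gender == "F" else 12.0
    if round(sum(d.dd for d in opt), 2) < min_optional_dd:
        problems.append(f"total optional DD below {min_optional_dd}")
    if {d.direction for d in dives[:8]} != all_dirs:
        problems.append("all five directions must appear in the first 8 rounds")
    if len({d.identity for d in dives}) != len(dives):
        problems.append("all dives must be distinct")
    return problems
```

The list is passed in performance order, so `dives[:8]` covers the first eight rounds, and `gender` is assumed to be "F" or "M". Returning a list of messages rather than a single boolean lets a coach see every violated rule at once.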

Unlike gymnastics or ice skating, where the difficulty of a routine is judged separately from its execution, diving uses a degree of difficulty (DD) for each dive that is fixed in advance by World Aquatics (WA), the international governing body for aquatic sports, previously known as Fédération Internationale de Natation (FINA). The DD for a given dive is the same regardless of the age, gender, or level of the diver. For example, dive 103B (forward 1.5 in a pike position) is assigned a DD of 1.6, whether the diver is male or female, 13 or 22 years old, or a high school or Olympic diver. Regardless of competition level, dives are scored on a scale of 0 to 10 in increments of 0.5, with 0 being a failed dive and 10 being a perfect dive. Just as with the DD, there are no adjustments to the execution score for the level, gender, or age of the diver. As a result, most high school scores are in the range of 4–7, with a score of 8 being quite rare, whereas most Olympic diving scores are in the range of 8–10, with scores of 6–7 quite rare.

Diving, like gymnastics, ice skating and ski-jumping, is scored by a panel of judges; therefore, there is a subjective element to a diving score. Each panel consists of an odd number of judges, usually 5 although sometimes 3, 7, or 9, who give their scores independently for each diver for each round of a competition. The exception to independent scoring is a failed dive. To fail a dive, which results in a score of 0 for the diver, the entire panel of judges must agree to do so. The

A description of the DiveMeets data set

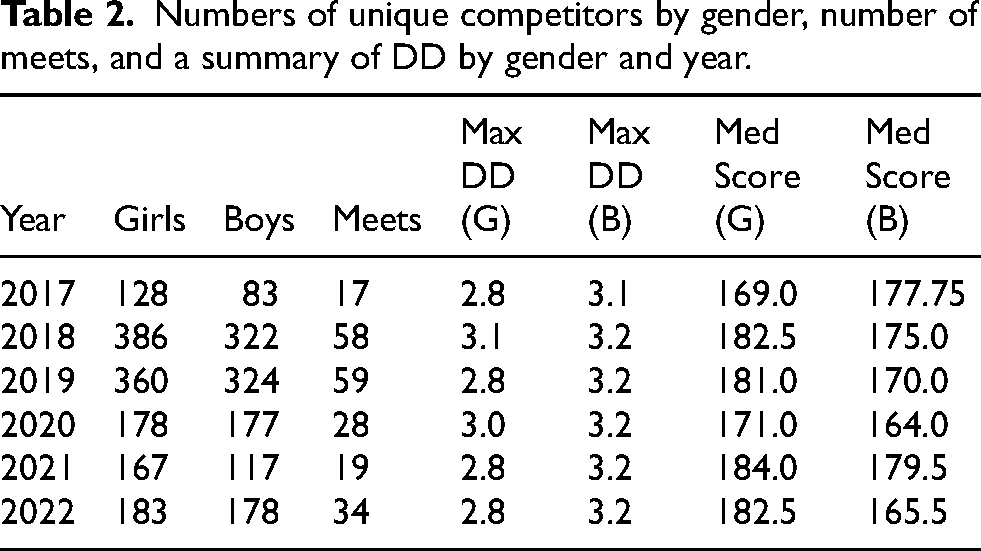

The data set includes approximately 38,000 entries for high school divers aged 14 to 19 who competed in meets held between 2017 and 2022. All data were scraped from divemeets.com and pertain to one-meter springboard events from meets across the United States during that five-year period. Each diver has a unique diver ID, and each meet is assigned a unique meet ID. For each diver, we have gender, team membership, age at the time of competition, and the name of the competition. For each dive, we have the degree of difficulty (1.2 to 3.2 for these data), the round in which the dive was performed (1 to 11), the dive direction, position, and number of rotations, the individual score of each judge, and the aggregate score for each round (award). From these data, we calculated the net score (the trimmed mean of the judges' scores, without the multiplier for degree of difficulty). We also have the date of each meet, from which we extracted the year. Table 2 gives the number of unique meets, the number of unique divers, and the maximum DD for the meets with 11 dives for each year of the data set, with the number of divers broken down by gender. The maximum DD is shown because it was the only statistic from a 5-number summary of the DD for each year that differed substantially between males and females. For both genders, the minimum DD is 1.2, the first quartile is 1.6, the median is 1.7, and the third quartile is either 2.1 or 2.2, depending on the year. The median net score for each year and gender is also shown.

Table 2. Numbers of unique competitors by gender, number of meets, and a summary of DD by gender and year.

Some divers are counted more than once in Table 2 because they participated in multiple years. There are a total of 1872 unique divers in the data set: 1301 participated for only 1 year, 439 for 2 years, 108 for 3 years, 20 for 4 years, and 4 for 5 years. Table 2 shows that more females than males participate in diving in each year, and that the maximum DD for females tends to be less than the maximum DD for males. Further, the median judges’ score appears to be larger for girls than for boys. However, this score is the net score, which does not include the DD multiplier, and it is aggregated over all dives. A more nuanced analysis is needed to determine whether there is positive or negative gender bias in judging.
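Because the net score and the award are easy to conflate, the sketch below computes both from one round of judges' scores. It follows the net-score definition used here (trimmed mean of the judges' scores, no DD multiplier). For the award it assumes, since the data description does not spell it out, the common convention that the extreme scores are dropped so that three remain (the highest and lowest on a five-judge panel, two from each end on a seven-judge panel) and that the sum of those three is multiplied by DD. The function names are hypothetical.

```python
from statistics import fmean


def counted_scores(scores: list) -> list:
    """Drop extreme scores so three remain (assumed 3-, 5-, or 7-judge panel)."""
    if len(scores) not in (3, 5, 7):
        raise ValueError("this sketch only handles panels of 3, 5, or 7 judges")
    # Scores run from 0 to 10 in increments of 0.5.
    if any(not (0 <= s <= 10) or (2 * s) % 1 for s in scores):
        raise ValueError("scores must lie in [0, 10] in steps of 0.5")
    k = (len(scores) - 3) // 2  # number of scores dropped at each end
    ordered = sorted(scores)
    return ordered[k:len(ordered) - k]


def net_score(scores: list) -> float:
    """Trimmed mean of the judges' scores, with no DD multiplier."""
    return fmean(counted_scores(scores))


def award(scores: list, dd: float) -> float:
    """Round score under the assumed sum-of-counted-scores-times-DD convention."""
    return round(sum(counted_scores(scores)) * dd, 2)


panel = [5.0, 5.5, 6.0, 6.0, 7.0]
print(net_score(panel))   # mean of 5.5, 6.0, and 6.0
print(award(panel, 1.6))  # (5.5 + 6.0 + 6.0) * 1.6
```

The net score stays on the 0 to 10 judging scale, which is why it can be compared across dives of different difficulty, whereas the award grows with DD.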

Measuring diver ability

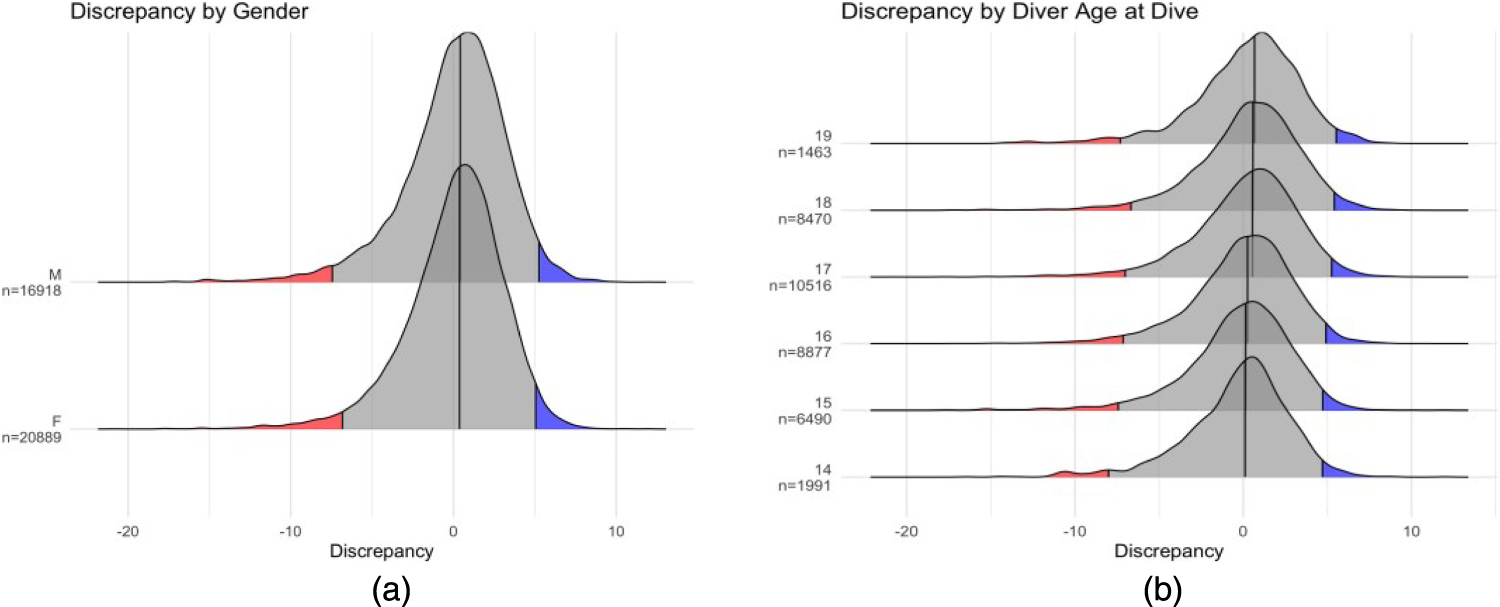

We now turn to the difference between a diver's ability and his or her scores, which we call the “discrepancy”. Discrepancy is defined in terms of a judge's mark that does not reflect a diver's ability. In other words, the definition of statistical bias applies, as given by

Measuring overall athlete ability in the absence of an objective measure, such as a clock or a tape measure, is a tricky thing. Diving, especially high school diving, does not have a technical judging panel, as World Championship ice skating and gymnastics do, to ensure that the athletes complete the rotations and twists that a given dive demands. In high school competitions, there are no announcers examining every frame of an instant replay (nor are there instant replays, for that matter). However, there is research using videos from a diving competition to measure characteristics that typically differentiate a good dive from a bad one, such as height of splash, distance of entry from the end of the board, and angle of entry (Luedeker and McGee, 2022). That work showed that the judges’ scores are closely aligned with quantitative values measured from the video evidence. In only one instance did the difference between the video-based measurements and the judges’ scores lead to a meaningful change in standings: it would have altered the order of the third- and fourth-place divers, affecting which athlete advanced to the next level of competition. In other words, trained diving judges are generally quite accurate when it comes to judging dive quality within a diving competition. The same work also showed that untrained judges are unreliable, in that their qualitative scores do not consistently match the quantitative measurements (Luedeker and McGee, 2022).

In the absence of video evidence and objective measurements to define diver ability, we compute the mean net score (not including the multiplier for DD) over all dives and all meets in which a diver has participated. There is a precedent in previous papers on diving, ice skating, and gymnastics, for using the median score as a measure of athlete ability (Emerson and Meredith, 2011; Emerson et al., 2009; Heiniger and Mercier, 2021); however,

For each meet, dive, and diver, we compute a discrepancy score, D_dmr, which is the difference between the judges’ combined net score for a particular dive and meet and the diver's estimated ability (competency). A positive discrepancy therefore means that the dive was scored above the diver's estimated ability.
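A minimal sketch of the two quantities, assuming dive-level records with hypothetical fields `diver`, `meet`, `round`, and `net` (the net score). Writing S_dmr for the net score of diver d in meet m and round r, the competency C_d is taken as the mean of S_dmr over all of the diver's dives and meets, and the discrepancy as D_dmr = S_dmr − C_d. This sign convention is the one implied by the results that follow, where positive discrepancies correspond to lenient judging; the code is illustrative and is not the authors' implementation.

```python
from collections import defaultdict
from statistics import fmean


def add_discrepancy(dives: list) -> list:
    """Attach competency (diver's mean net score) and discrepancy to each dive."""
    nets_by_diver = defaultdict(list)
    for dive in dives:
        nets_by_diver[dive["diver"]].append(dive["net"])
    # Competency: mean net score over all dives and all meets for the diver.
    competency = {diver: fmean(nets) for diver, nets in nets_by_diver.items()}
    return [
        {**dive,
         "competency": competency[dive["diver"]],
         # Positive values: dive scored above the diver's estimated ability.
         "discrepancy": dive["net"] - competency[dive["diver"]]}
        for dive in dives
    ]


example = [
    {"diver": "A", "meet": 1, "round": 1, "net": 5.0},
    {"diver": "A", "meet": 2, "round": 1, "net": 7.0},
    {"diver": "B", "meet": 1, "round": 1, "net": 4.5},
]
for row in add_discrepancy(example):
    print(row["diver"], row["meet"], row["discrepancy"])
# A 1 -1.0
# A 2 1.0
# B 1 0.0
```

Because competency pools every dive a diver has performed, a diver with a single recorded dive always has a discrepancy of 0, which is one reason very sparse divers carry little information about judging.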

Results for an analysis of discrepancy

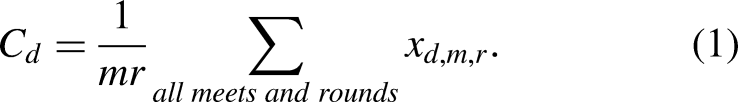

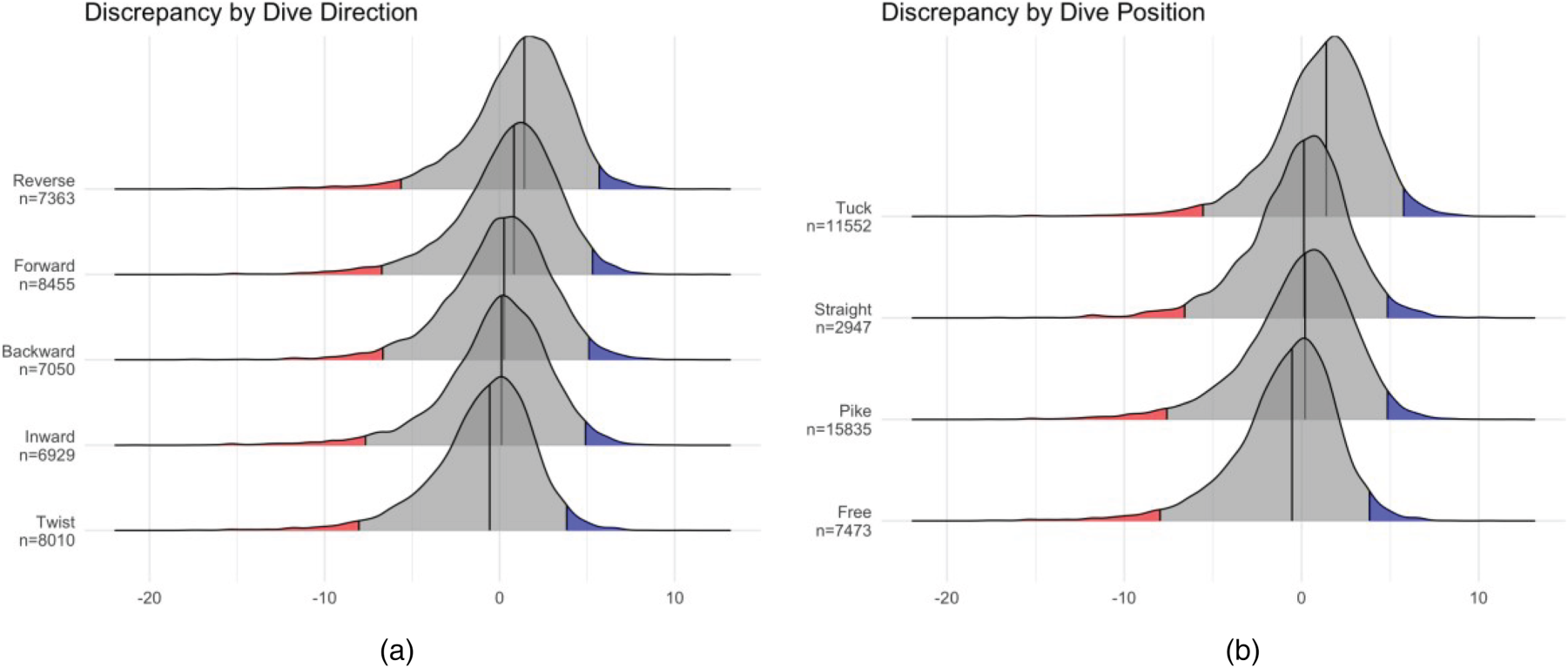

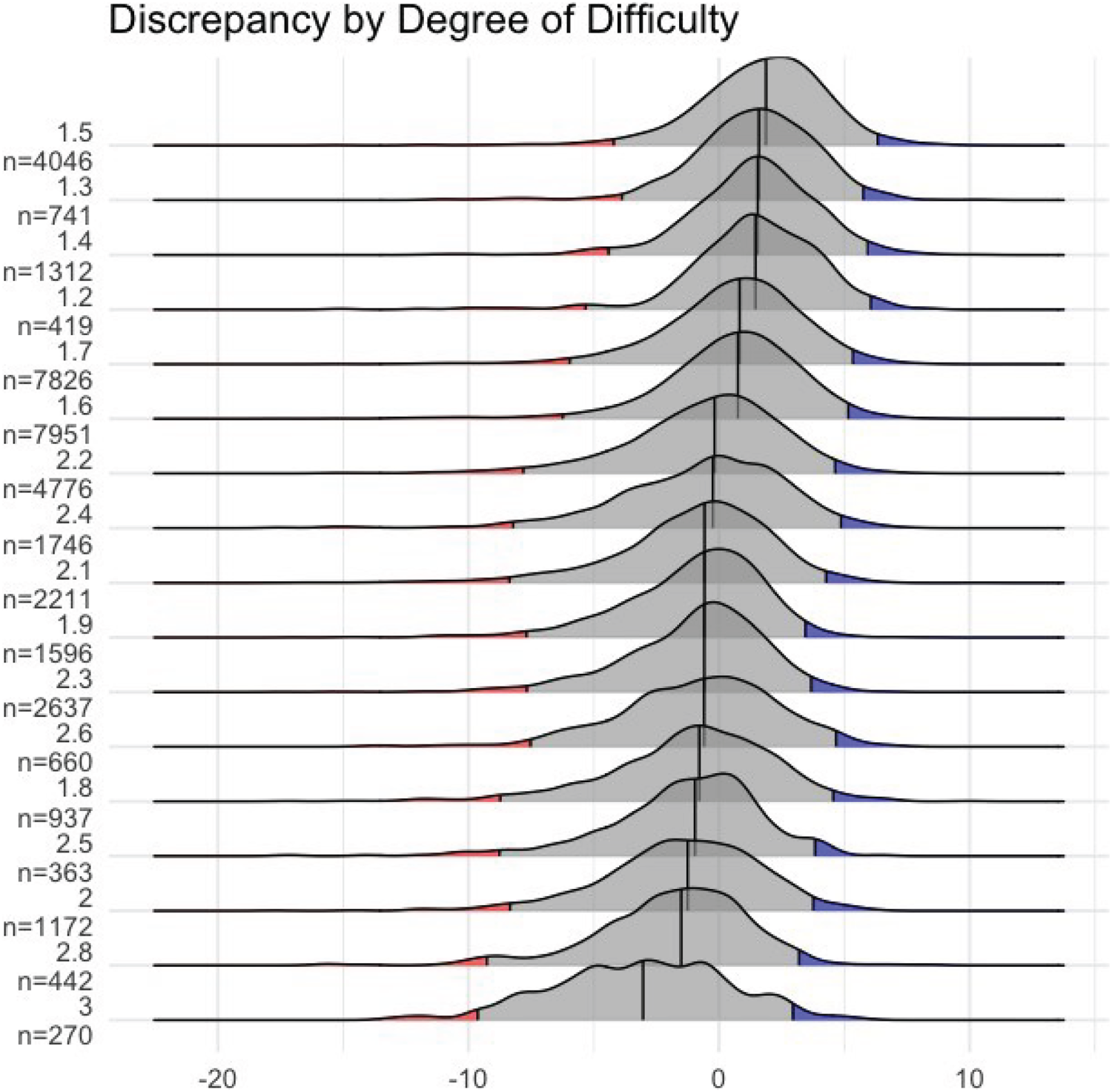

We used ridgeline plots (Wilke, 2020) to show the distribution of the discrepancy by gender, age, dive position, dive direction, and DD for all meets, divers, and dives (Figures 1–3). The y-axis of each plot lists the categories of an explanatory variable. The x-axis is the discrepancy, which typically ranges from −25 to 15. The black line in the middle of each curve is the median discrepancy, the red area on the left of each curve marks discrepancies below the 2.5th percentile, and the blue area on the right marks discrepancies above the 97.5th percentile. In all ridgeline plots, the categories are ordered by median discrepancy, and the total number of dives in each category is given under the category name on the vertical axis.

Figure 1. (a) Discrepancy by gender (gender on the vertical axis). (b) Discrepancy by age at dive (age on the vertical axis). Each curve is a density estimate of the discrepancy. The black line in the middle of each density curve is the median discrepancy, and the shaded areas in the left and right tails mark discrepancies beyond the 2.5% and 97.5% quantiles, respectively.

Figure 2. Discrepancy ridgeline plots. (a) Discrepancy by dive direction (from top: reverse, forward, backward, inward, and twist). (b) Discrepancy by dive position (from top: tuck, straight, pike, and free).

Figure 3. Discrepancy plotted against the degree of difficulty assigned by WA for a given dive. The number of dives performed at each difficulty is given on the vertical axis under each assigned DD.
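The numbers read off the ridgeline plots (dives per category, median, and the 2.5th and 97.5th percentiles of the discrepancy) can be tabulated with a short function such as the hypothetical `ridgeline_summary` below. It assumes dive-level records carrying a `discrepancy` field and uses the standard library's `statistics.quantiles` with the inclusive method; the plotting software behind Figures 1–3 may compute quantiles slightly differently.

```python
import statistics
from collections import defaultdict


def ridgeline_summary(rows: list, key: str) -> list:
    """Per-category dive count, median, and 2.5th/97.5th percentiles of discrepancy."""
    groups = defaultdict(list)
    for row in rows:
        groups[row[key]].append(row["discrepancy"])
    summary = []
    for category, values in groups.items():
        if len(values) < 2:  # quantiles need at least two observations
            continue
        # n=40 yields cut points at 2.5%, 5%, ..., 97.5%
        cuts = statistics.quantiles(values, n=40, method="inclusive")
        summary.append({
            "category": category,
            "n_dives": len(values),
            "q025": cuts[0],
            "median": statistics.median(values),
            "q975": cuts[-1],
        })
    # Order categories by median discrepancy, largest first.
    summary.sort(key=lambda s: s["median"], reverse=True)
    return summary
```

Calling `ridgeline_summary(dives, "direction")` or `ridgeline_summary(dives, "position")` on records that carry those fields gives the per-category medians quoted in the discussion of Figure 2.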

Figure 1(a) shows the distribution of the discrepancy for male and female divers. Visually, the black median lines are aligned at 0 for both genders; numerically, the median for females is 0.36 and the median for males is 0.40, an absolute difference of 0.04. This suggests that judges tend to give slightly higher scores overall than are deserved; however, the effect is the same regardless of the diver's gender. Figure 1(b) shows the discrepancy for each age. There is a slight shift to the right as divers age (the medians for 14- to 19-year-olds are 0.11, 0.14, 0.25, 0.55, 0.57, and 0.67, respectively). For 14- and 15-year-old divers (who have nearly the same median discrepancy), the discrepancies are quite close to unbiased. The mean and standard deviation of DD at each age show that both male and female divers increase their DD as they age: the mean DD rises from 1.75 (1.80) for female (male) divers at age 14 to 1.86 (1.91) for female (male) divers at age 19. The standard deviations for both genders also increase slightly, from 0.2 to 0.4, as divers age. An analysis by the number of years a diver has participated in diving competitions (1 to 5 in our data set) shows means and standard deviations of DD similar to those for age. These descriptive statistics suggest that the change in median discrepancy with age might reflect improvement in diver ability with experience as much as a tendency for judges to score older divers more leniently.

Figures 2(a) and 2(b) show the distribution of discrepancy scores for dive directions and dive positions, respectively. The five directions are forward, backward, inward, reverse, and twist. To perform a forward dive, the diver faces the pool at takeoff and rotates forward. For a reverse dive, the diver faces the pool at takeoff and rotates backward toward the board (the opposite direction of a forward dive). To perform a backward dive, the diver turns his or her back to the pool and rotates backward. An inward dive is performed by turning one's back to the pool and rotating toward the board after takeoff. Generally, reverse and inward dives are considered more difficult than forward and backward dives, in the sense that a dive in a forward direction has a lower degree of difficulty than the same dive in a reverse or inward direction. For example, a forward 1.5 in a tuck position has a DD of 1.6, a backward 1.5 tuck has a DD of 2.0, a reverse 1.5 tuck has a DD of 2.1, and an inward 1.5 tuck has a DD of 2.2. The median discrepancies in Figure 2(a) appear close to 0; numerically, however, they are 1.41, 0.82, 0.26, 0.11, and −0.57 for the reverse, forward, backward, inward, and twist directions, respectively. Reverse dives tend to be scored more leniently, which is some indication of a “bump” in score for performing a more difficult dive; however, divers do not receive a similar bump for backward and inward dives, which also tend to be more difficult. Twisting dives tend to receive lower scores than the diver's ability would suggest. The discrepancies for reverse, forward, and twisting dives are large enough to change a diver's score substantially.

The positions are tuck, straight, pike, and free. Free is most often reserved for twisting dives, in which the diver is free to twist in any position. Generally, a dive in a pike or straight position has a greater DD than the same dive in a tuck position. Numerically, the median discrepancy is −0.53 for the free position, 0.2 for pike, 0.14 for straight, and 1.39 for tuck. The median discrepancies for the free and tuck positions are large enough to affect a diver's final score, and there is clear evidence of judges scoring dives in the tuck position more leniently.

Discrepancy vs. Degree of Difficulty

To examine the impact of DD further, the DD values assigned by WA were plotted on the vertical axis against the discrepancy on the horizontal axis (Figure 3). Because few dives at this level have a DD greater than 2.7, any dive with a DD between 2.7 and 2.9 was assigned a DD of 2.8, and any dive with a DD of 3.0 or greater was assigned a DD of 3.0. Easier dives, with DDs between 1.2 and 1.6, are judged more leniently, while more difficult dives, with DDs of 2.1 or greater, are judged more harshly. For example, the largest positive median discrepancy is 1.87, for dives with a DD of 1.5, and the largest negative median discrepancy is −3.04, for dives with a DD of 3.0 or more. It is not strictly true that more difficult dives are judged more harshly: dives with a DD of 1.8 (median discrepancy of −0.82), for example, are judged more harshly than dives with a DD of 2.3 or 2.6 (median discrepancies of −0.59 and −0.55, respectively). Interestingly, 86% of the dives with a DD of 1.8 or 1.9 are twisting dives. This explains the negative discrepancies for these purportedly easier dives, as judges tend to judge twisting dives more harshly (Figure 2(a)).
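The DD grouping used for Figure 3, and the share of twisting dives among dives of a given DD, can be sketched as follows. The function names and the `dd` and `direction` record fields are hypothetical. DDs are rounded to tenths before grouping because they are published to one decimal place and floating-point arithmetic can perturb them slightly.

```python
from typing import Optional


def bin_dd(dd: float) -> float:
    """Group DDs as in Figure 3: 2.7 to 2.9 becomes 2.8, and 3.0 or more becomes 3.0."""
    tenths = round(dd * 10)
    if tenths >= 30:
        return 3.0
    if 27 <= tenths <= 29:
        return 2.8
    return tenths / 10


def twist_share(dives: list, dd_values=(1.8, 1.9)) -> Optional[float]:
    """Fraction of dives in the given (binned) DD classes that are twisting dives."""
    selected = [d for d in dives if bin_dd(d["dd"]) in dd_values]
    if not selected:
        return None  # no dives at these difficulties
    return sum(d["direction"] == "twist" for d in selected) / len(selected)
```

Applied to the full data set, `twist_share` with its default arguments is the kind of calculation behind the 86% figure quoted above.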

This finding would seem to conflict with the direction of difficulty bias reported previously, in which more difficult dives are judged more leniently (Bruno, 1986; Morgan and Rotthoff, 2014). However, we are not measuring difficulty bias in the same way as previous investigations of difficulty bias in judged sports. Difficulty bias is a tendency for judges to award higher scores to more difficult routines (Morgan and Rotthoff, 2014), whereas discrepancy measures the distance of a judge's score from the diver's ability. The expected direction of “difficulty bias” may be reversed because a diver is attempting a dive that is beyond his or her ability and thus receives a smaller score than expected. Positive discrepancies likely arise because divers perform the easier dives quite well, having had them in their repertoire longer than the more difficult dives. Anecdotally, divers tend to have less practice with the more difficult dives because they must achieve a certain level of ability before attempting them. In addition, more difficult dives might not have been performed in a previous competition. Therefore, the harsher judging of more difficult dives in these data is likely due to divers overreaching their ability and/or having less practice with more difficult dives in competition. Other factors might also be at play, as we examine in the next section.

Discrepancy by round of competition

Another potential factor affecting discrepancy is the round within a diving meet. Recall that each meet has 11 rounds and, depending on the number of divers, the judges could be judging for several hours. For the analysis that follows, we have excluded meets in which fewer than 5 divers participated.

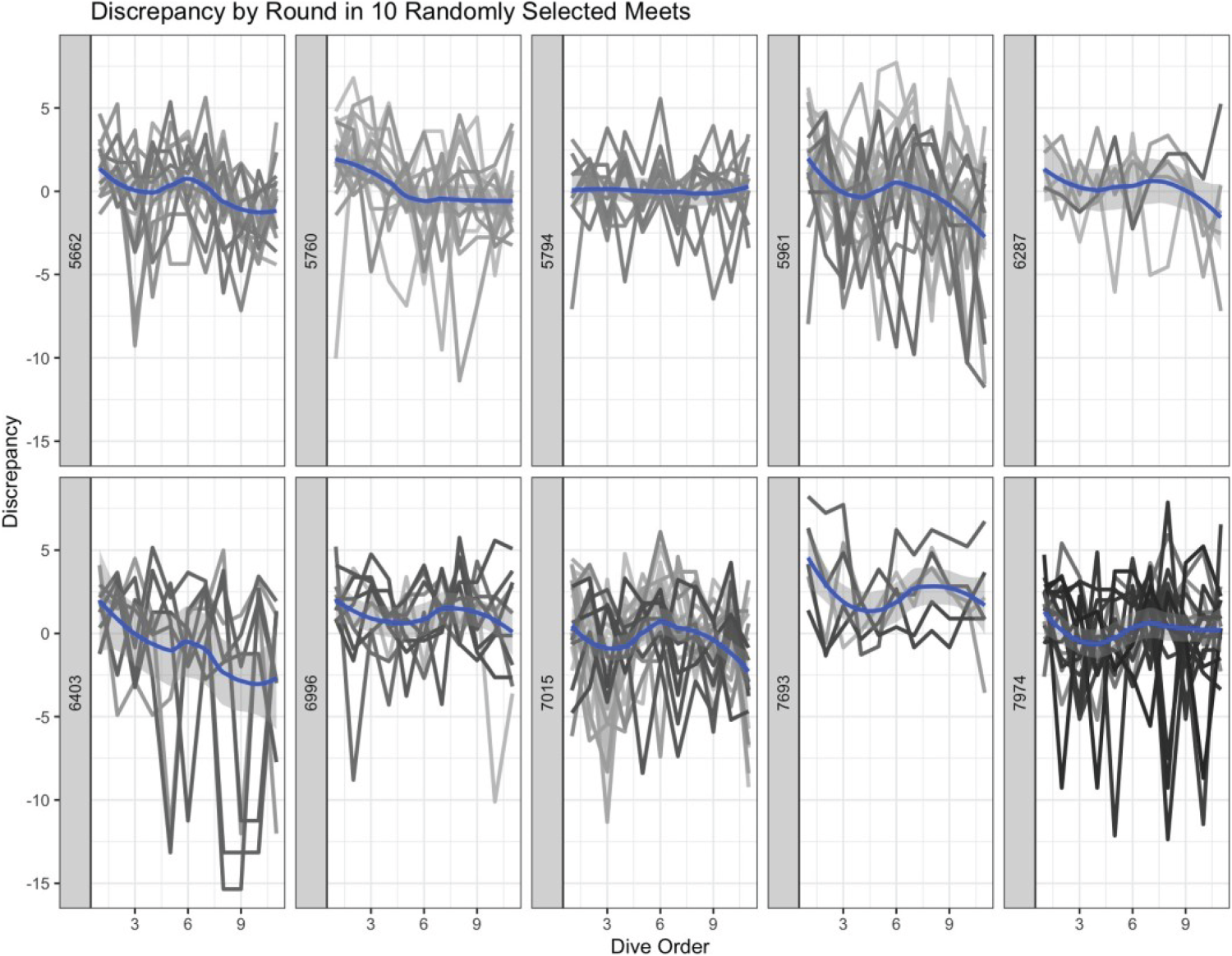

Figure 4 shows discrepancies for all divers in ten randomly selected meets. The number of divers in these meets ranges from 5 to 21 (in the full data set, the maximum number of divers in a meet is 45). Each frame represents a single meet, and each dark gray or black line represents the discrepancies for one diver. The label on the left-hand side of each frame gives the unique meet ID. In all frames, the discrepancy is plotted on the vertical axis and the round in which a dive was performed on the horizontal axis. The blue curve within each frame is a loess smoother. Except for meet 5794, where the loess line is flat, the loess lines generally indicate a positive discrepancy at the beginning of a meet, a discrepancy near 0 in the middle of the meet, and a negative discrepancy at the end of the meet. Interestingly, the effect appears regardless of the meet, which is confounded with the judging panel. We also see evidence of much more variability in judging for some meets, as in meets 5961, 6403, and 7974. Because the data do not contain information on the identity or affiliation of the judges, we cannot determine the level of training of these judges or examine their tendency to be harsh or lenient across multiple meets they might have judged.

Figure 4. Discrepancies (vertical axis) plotted as a line for each diver in 10 randomly selected meets. The horizontal axis is the round of the meet, from 1 to 11. The blue line is a loess smoother. Each meet shows evidence of harsher judging (negative discrepancies) as the meet wears on.

Each of the 10 randomly selected meets in Figure 4 shows negative trends of varying strength. To examine whether the negative trend in discrepancy was common throughout the data set, we calculated the slope of discrepancy versus round for each of the 215 meets in the data set. The mean slope is −0.145 (median = −0.147), with a standard deviation of 0.128. Of these slopes, 89% are negative, indicating that a decline in discrepancy over the course of a meet is common in these 11-round meets. Therefore, what is seen in Figure 4 holds for most of the meets: judges tend to judge more harshly as a meet enters its final rounds.
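The slope summary above can be reproduced in outline as follows. The sketch assumes that each meet's slope comes from an ordinary least-squares fit of discrepancy on round, pooling all dives in the meet, and it applies the exclusion of meets with fewer than 5 divers described earlier. The text does not state exactly how the slopes were fitted, and the record fields (`meet`, `diver`, `round`, `discrepancy`) and function names are hypothetical.

```python
from collections import defaultdict
from statistics import fmean, median, stdev


def ols_slope(x: list, y: list) -> float:
    """Ordinary least-squares slope of y on x."""
    mx, my = fmean(x), fmean(y)
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    return sxy / sxx


def meet_slopes(dives: list, min_divers: int = 5) -> dict:
    """Slope of discrepancy versus round for each meet with enough divers."""
    rounds, discs, divers = defaultdict(list), defaultdict(list), defaultdict(set)
    for d in dives:
        rounds[d["meet"]].append(d["round"])
        discs[d["meet"]].append(d["discrepancy"])
        divers[d["meet"]].add(d["diver"])
    return {
        meet: ols_slope(rounds[meet], discs[meet])
        for meet in rounds
        if len(divers[meet]) >= min_divers  # drop meets with too few divers
    }


def summarize_slopes(slopes: dict) -> dict:
    """Mean, median, SD, and share of negative slopes (needs at least 2 meets)."""
    values = list(slopes.values())
    return {
        "mean": fmean(values),
        "median": median(values),
        "sd": stdev(values),
        "share_negative": sum(v < 0 for v in values) / len(values),
    }
```

Pooling all divers in a meet treats the meet as one regression; fitting a slope per diver and then averaging within each meet would be an equally reasonable alternative that weights divers rather than dives.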

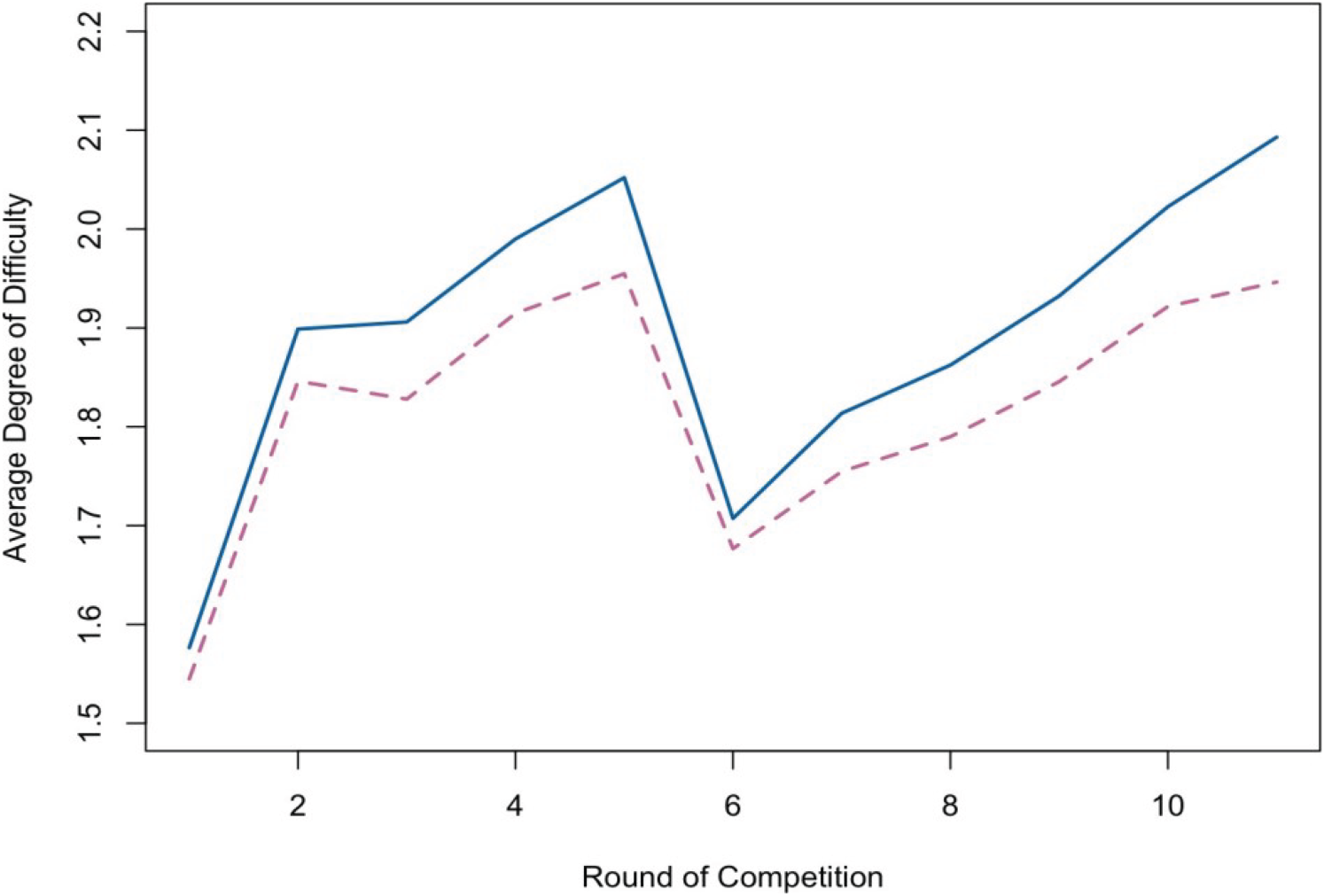

Recall that divers are free to perform dives in almost any order for a given meet, subject to the stipulations on voluntary vs. optional dives mentioned previously. Round and dive are therefore confounded, and dives have different degrees of difficulty. It is possible that the negative discrepancy seen in the final rounds of a meet is due to divers reserving more difficult dives for later rounds (to finish with a splash, so to speak). Figure 5 shows a line plot of the average DD for each round for girls (magenta dashed line) and boys (blue solid line).

DD (on the vertical axis) plotted versus the round of competition (on the horizontal axis). The magenta dashed line shows the fluctuation in DD for girls, and the blue solid line shows the fluctuation for the boys. There is a similar pattern of DD by round for each gender.

Regardless of gender, on average, divers start with less difficult dives (the most popular starting dive is a forward 1.5 in a tuck position, DD = 1.6), work up to more difficult dives by round 5, then start again with less difficult dives and finish with a more difficult dive. This overall pattern is consistent with the pattern of discrepancy in Figure 4: divers perform above their ability at the beginning of the competition with easier dives, take more risks in the middle, and then finish with a difficult dive that is perhaps beyond their ability (or that they are perhaps too tired to perform well). Starting with a dive that matches a diver's ability also plays into performance bias: if a diver starts with a good score, the judges might reward that diver with higher-than-expected scores in subsequent rounds. Performance bias is another possible explanation for the tendency for discrepancies to be close to 0 in the middle of a meet.

The data used for this research give the round in which each dive was performed for each diver; however, the data do not designate which dives are voluntary and which are optional. It might be possible to distinguish voluntary from optional dives in a reasonable fashion and examine the discrepancies between them, but this is left for future research. Other types of bias, such as sequential bias and conformity bias (see Table 1), might also be in play. Unfortunately, we do not have access to the sequential diver order for these data; that is, the data do not indicate which diver went first, which went second, etc., in each meet. Therefore, we cannot examine sequential bias or conformity bias.

In summary, visual exploration of the ridgeline plots and numerical summaries for each explanatory variable indicates that round and DD are associated with the largest absolute discrepancies. Most discrepancy values are quite small; however, recall that scores are given in increments of 0.5, so a discrepancy of 0.5 or more could have a beneficial (or deleterious) effect on the diver's overall placement within the competition.

Mixed effects model

Figures 1(a) to 4 show the main effect of each of several variables on discrepancy. In this section, we describe the results of a mixed-effects model that includes all variables simultaneously, so that each effect is adjusted for the others; the fixed effects are round, age, gender, position, direction, and DD. The reference category for gender was female. Position was entered as a factor with the straight position as the reference, and direction was entered as a factor with the forward direction as the reference.

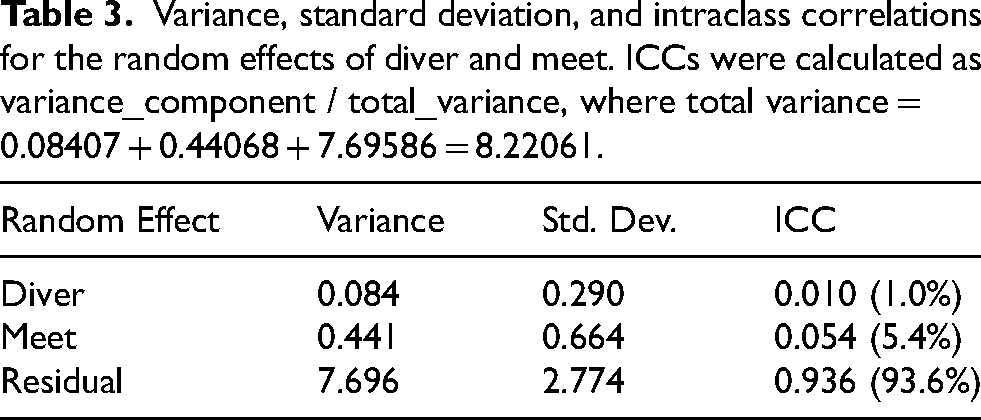

To evaluate the appropriate random-effects structure, we fit models with (a) a random intercept for diver only, (b) a random intercept for meet only, and (c) random intercepts for both. All models were fit using the lmer function in the R package lme4 (Bates et al., 2015). The model including both random effects provided a significantly better fit (χ² = 31.48, df = 1, p ≈ 2 × 10⁻⁸) than models with either effect alone. Consequently, we report only the results for the model that includes random intercepts for both meet and diver (Table 3). This model makes sense because each diver starts at a different ability level (competency), and every meet has a different panel of judges; the random effect for meet therefore serves as a surrogate for the effect of the judging panel. The mixed-effects model was estimated using restricted maximum likelihood (REML) because our primary interest lies in obtaining unbiased estimates of the variance component associated with meet-to-meet variability rather than in comparing alternative fixed-effect structures. REML is preferred in this setting because it adjusts for the loss of degrees of freedom due to estimating fixed effects, yielding less biased estimates of variance parameters than full maximum likelihood (ML) (Papageorgiou, 2012). For our final model, the REML criterion is 185,318.7 and the residual standard deviation is 2.77.

Variance, standard deviation, and intraclass correlation (ICC) for the random effects of diver and meet. ICCs were calculated as (variance component) / (total variance), where total variance = 0.08407 + 0.44068 + 7.69586 = 8.22061.
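The χ² statistic reported for the random-effects comparison comes from a likelihood-ratio test with one degree of freedom. As a rough check, the p-value can be recomputed from the reported statistic with Python's standard library. The helper name `lrt_pvalue_df1` is hypothetical, and the sketch does not refit any models.

```python
import math


def lrt_pvalue_df1(chi_sq: float, boundary: bool = False) -> float:
    """Upper-tail p-value for a likelihood-ratio statistic with df = 1.

    For a chi-square(1) variable X, P(X > x) = erfc(sqrt(x / 2)).
    With boundary=True, use the 50:50 mixture of chi-square(0) and
    chi-square(1), i.e. half the naive p-value, which is often used when
    the null hypothesis sets a variance component to zero.
    """
    p = math.erfc(math.sqrt(chi_sq / 2.0))
    return p / 2.0 if boundary else p


p_naive = lrt_pvalue_df1(31.48)  # roughly 2e-8, as reported in the text
p_mixture = lrt_pvalue_df1(31.48, boundary=True)
print(f"naive p = {p_naive:.1e}, mixture p = {p_mixture:.1e}")
```

The naive χ²(1) reference gives p of about 2 × 10⁻⁸, matching the reported value. Because the null hypothesis places a variance at zero, on the boundary of its parameter space, the χ²(1) reference tends to be conservative; the optional 50:50 mixture halves the p-value and does not change the conclusion here.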

Model residuals showed mild right skewness and a few extreme values, reflecting occasional large discrepancies between judges’ scores and diver ability. Because mixed-effects models are robust to moderate departures from normality, particularly with large sample sizes, no transformation was applied. Residual diagnostics and sensitivity checks (not shown) confirmed that model conclusions were not driven by extreme observations. As a further check, we re-estimated the model using robust mixed-effects estimation (robustlmm::rlmer) to evaluate the influence of outliers and non-normal residuals (Koller, 2016). The robust model produced nearly identical fixed-effect estimates to the classical REML fit, with only minor reductions in residual and meet-level variance components. These results indicate that the observed relationships, particularly the stricter judging on later and higher-difficulty dives and variation across dive positions and directions, are robust to outliers and distributional assumptions.

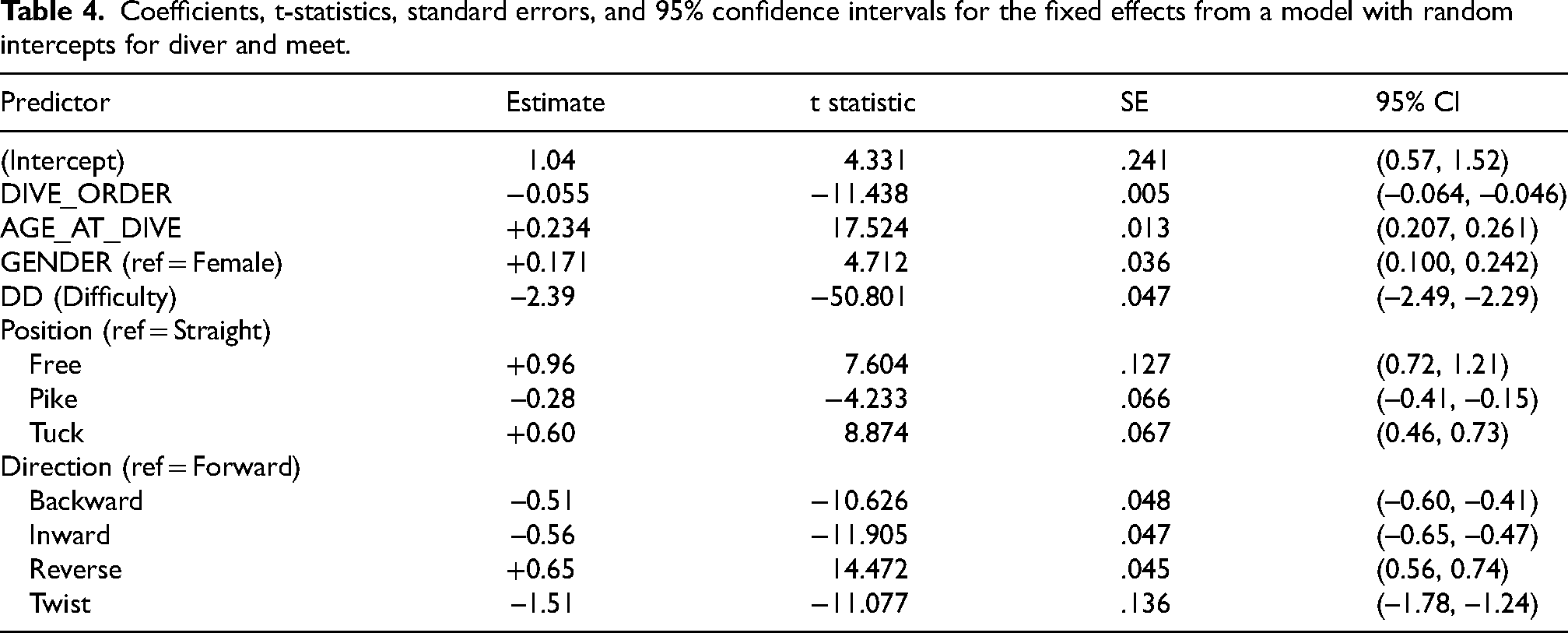

Table 4 shows the parameter estimates, t-statistics, and 95% confidence intervals for each of the fixed effects from the classical REML model fit. We explain the results for each effect in the following paragraphs.

Coefficients, t-statistics, standard errors, and 95% confidence intervals for the fixed effects from a model with random intercepts for diver and meet.

The intercept can be interpreted as the “baseline leniency” for a dive by a female diver of average age, performed in the straight position and forward direction, with average DD, as the first dive in the dive order. Overall, judging is slightly lenient on average. The effect of round, represented by the coefficient for dive order, is small and negative: for each additional round, the discrepancy decreases by a small amount (−0.055), indicating that judges tend to judge more harshly (net scores are less than the diver's competency) as a competition continues, adjusted for age, gender, position, direction, and DD. This finding matches the visual evidence in Figure 4. The coefficient for age is 0.234 (95% CI: 0.207, 0.261), indicating that judges tend to judge more favorably with each one-year increase in the diver's age. The coefficient for gender is 0.171 (95% CI: 0.100, 0.242): male divers are judged slightly more leniently, though the effect is small. The findings for age and gender are consistent with the ridgeline plots in Figure 1. Overall, the coefficients for round, age, and gender are small; however, none of their 95% confidence intervals contain 0, indicating that all effects are statistically discernible when the other effects are held constant. Later, we examine whether these effects are large enough to make a practical difference in the outcome of a competition (Table 5).

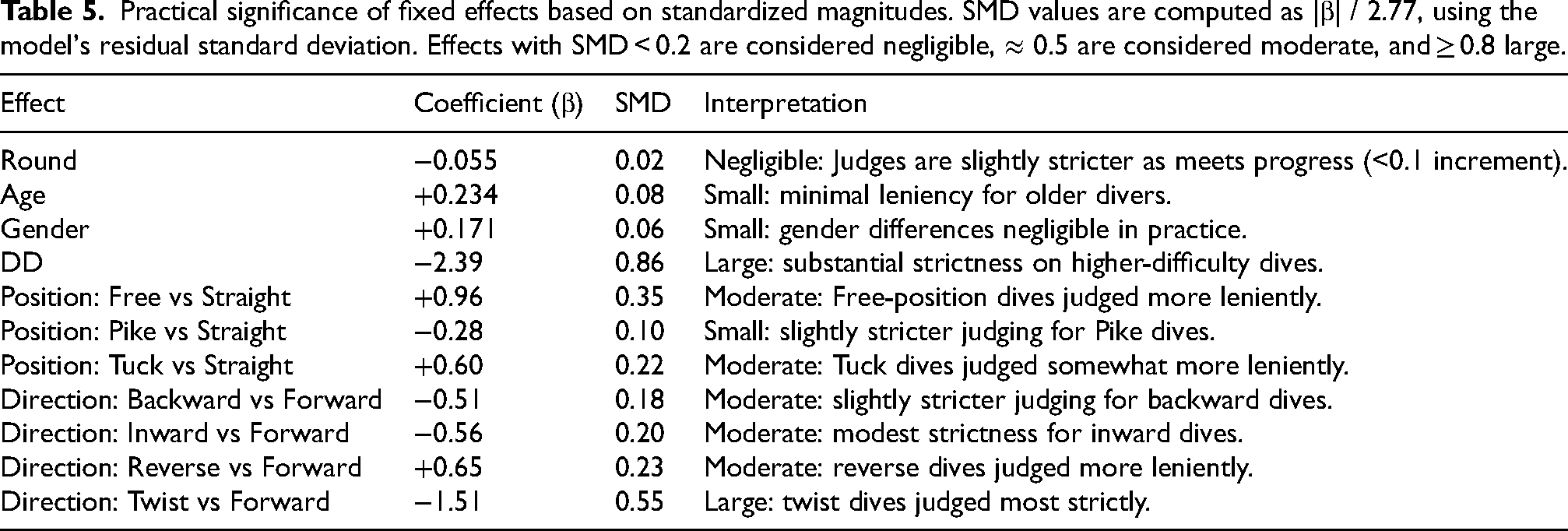

Practical significance of fixed effects based on standardized magnitudes. SMD values are computed as |β| / 2.77, using the model's residual standard deviation. Effects with SMD < 0.2 are considered negligible, ≈ 0.5 are considered moderate, and ≥ 0.8 large.

Next, we examine the effects of DD, position, and direction. The coefficient for DD is −2.39 (95% CI: −2.49, −2.29): higher-difficulty dives are judged much more strictly, implying either that judges apply tighter standards to complex dives or that divers are attempting dives beyond their ability. Figures 4 and 5 also point to a potential fatigue effect: on average, the DD of dives increases in the final rounds, where discrepancies are most negative. For position, the free and tuck positions are judged more leniently than the straight position, and the pike position is judged more strictly. The coefficients for the free and tuck positions are larger than that for the pike position. Regarding direction, twist dives have the largest negative effect (−1.51) relative to forward dives, while reverse dives have a positive coefficient. According to these results, judges tend to favor reverse dives over forward dives and generally do not favor twisting dives.

The variance, standard deviation, and intraclass correlation for the random effects of diver and meet are given in Table 3. ICCs were calculated by dividing the variance of each component by the total variance, where total variance = 0.08 + 0.44 + 7.70 = 8.22. Approximately 1% of the variance in discrepancy is attributable to diver-level differences, 5% to meet-level differences, and 94% to within-diver and within-meet variability. This suggests that most variability in judging stringency arises from dive-specific factors rather than consistent patterns across divers or meets. In plain language, the contribution of an individual diver or an individual judging panel to discrepancy is small compared with the dive-to-dive variability that remains after accounting for the fixed effects. This contrasts with a similar analysis of track and field data, in which the random effect for individual athletes accounted for more than 75% of the variation (McGee et al., 2023).
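The ICC arithmetic can be reproduced directly from the variance components in Table 3. A short Python sketch (the variable names are illustrative):

```python
import math

# Variance components as reported in Table 3.
components = {"diver": 0.08407, "meet": 0.44068, "residual": 7.69586}

total = sum(components.values())  # 8.22061
icc = {name: var / total for name, var in components.items()}
residual_sd = math.sqrt(components["residual"])  # about 2.77

for name, share in icc.items():
    print(f"{name:>8}: {share:.1%}")
print(f"total variance = {total:.5f}, residual SD = {residual_sd:.2f}")
```

The diver, meet, and residual shares print as 1.0%, 5.4%, and 93.6%, which round to the 1%, 5%, and 94% quoted above; the square root of the residual variance recovers the residual standard deviation of 2.77.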

Practical significance of effects

Although the 95% confidence intervals for the fixed-effect coefficients do not contain 0, some coefficients are small relative to the 0.5-point scoring increment used by judges. Practically speaking, a 0.2-unit difference in predicted discrepancy, as seen for diver age, corresponds to only a 0.4–0.6-point change in the final dive score for typical degrees of difficulty (DD 2.0–3.0). Coefficients for dive order and gender are even smaller and of less practical significance. In contrast, effects associated with dive position and direction (0.6–1.0 units) can alter final scores by 1–3 points, large enough to affect diver rankings.
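The conversion from discrepancy units to dive-score points above appears to multiply the discrepancy shift by the dive's DD. The sketch below reproduces that arithmetic under exactly that assumption; `score_change` is a hypothetical helper, and the shifts are the 0.2 and 0.6–1.0 values quoted in the text.

```python
def score_change(discrepancy_shift: float, dd: float) -> float:
    """Dive-score change implied by a discrepancy shift, assuming it scales with DD."""
    return discrepancy_shift * dd


# Effect sizes quoted in the text, evaluated at the ends of the typical DD range.
effects = {
    "age (0.2)": 0.2,
    "position/direction (0.6)": 0.6,
    "position/direction (1.0)": 1.0,
}
for label, shift in effects.items():
    low, high = score_change(shift, 2.0), score_change(shift, 3.0)
    print(f"{label:<26} {low:.1f} to {high:.1f} points")
```

The position and direction rows span 1.2 to 3.0 points, which the text summarizes as 1–3 points.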

To further assess practical importance, we standardized all fixed-effect coefficients by dividing them by the residual standard deviation of the model (σ = 2.77). This expresses each effect as a proportion of the typical within-meet variability in discrepancy. Following accepted conventions, standardized effects < 0.2 were considered small (negligible), ≈ 0.5 medium, and ≥ 0.8 large (Cohen, 1988). Table 5 displays the coefficient, standardized effect, and an interpretation for each fixed effect in the model. Although the p-values for many effects were quite small because of the large sample size, most of the corresponding effects were small in magnitude relative to the variability in judging scores.
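The standardization step can be sketched as follows, using only the coefficients quoted in the text (round, age, gender, DD, and the twist contrast) and the residual standard deviation of 2.77; it is not a reproduction of Table 5. The bin edges between Cohen's 0.2, 0.5, and 0.8 benchmarks, including the label “small” for values from 0.2 up to 0.5, are assumptions made for illustration.

```python
RESIDUAL_SD = 2.77  # residual standard deviation of the REML fit

# Fixed-effect coefficients quoted in the text (a subset of Table 4).
coefficients = {
    "round (dive order)": -0.055,
    "age": 0.234,
    "gender (male)": 0.171,
    "twist vs. forward": -1.51,
    "DD": -2.39,
}


def magnitude(smd: float) -> str:
    """Bin a standardized effect using Cohen's 0.2 / 0.5 / 0.8 benchmarks."""
    if smd < 0.2:
        return "negligible"
    if smd < 0.5:
        return "small"
    if smd < 0.8:
        return "moderate"
    return "large"


standardized = {name: abs(b) / RESIDUAL_SD for name, b in coefficients.items()}
for name, smd in standardized.items():
    print(f"{name:<20} SMD = {smd:.3f}  ({magnitude(smd)})")
```

Under these bins, round, age, and gender fall in the negligible range (SMD below 0.1), the twist contrast is moderate (about 0.55), and DD is large (about 0.86).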

In summary, while confidence intervals for the coefficients of all fixed effects do not contain 0, only dive difficulty and certain position or direction contrasts have effects large enough to influence competition outcomes meaningfully. The remaining coefficients are statistically detectable but practically negligible relative to the 0.5-point scoring increment and the overall residual variability in judging.

Discussion and conclusion

The purpose of this analysis is to determine how a diver can use information about the discrepancy between judges’ scores and diver ability to maximize their score in a meet. Specifically, we wanted to determine whether there was a difference between judges’ scores and divers’ ability based on the position, direction, and difficulty of dives, accounting for random effects of the diver and the judging panel. To accomplish this, we defined two measures: competency and discrepancy. Competency is defined as the mean score for a particular diver over all meets in which the diver participated, regardless of the characteristics of the dives performed. A similar measure has been used to describe athlete ability in previous work on ice skating and gymnastics (Heiniger and Mercier, 2021; Morgan and Rotthoff, 2014; Rotthoff, 2020). Discrepancy is defined as the difference between the judges’ score for each dive within a meet and the diver's competency.
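The two definitions translate into a few lines of code. The sketch below assumes a hypothetical record layout of `(diver_id, meet_id, score)` tuples held in a list, and takes discrepancy as score minus competency so that positive values indicate scores above the diver's ability. It illustrates the definitions only and is not the author's code.

```python
from collections import defaultdict
from statistics import mean


def competency(records):
    """Mean score for each diver over every dive in every meet.

    records: list of (diver_id, meet_id, score) tuples (hypothetical layout).
    """
    scores = defaultdict(list)
    for diver, _meet, score in records:
        scores[diver].append(score)
    return {diver: mean(vals) for diver, vals in scores.items()}


def discrepancy(records):
    """Score minus competency; records must be a list because it is read twice."""
    comp = competency(records)
    return [(diver, meet, score - comp[diver]) for diver, meet, score in records]


# Toy scores for two hypothetical divers (not from the study).
toy = [("d1", "m1", 5.0), ("d1", "m1", 6.0), ("d1", "m2", 7.0),
       ("d2", "m1", 4.5), ("d2", "m2", 5.5)]
print(competency(toy))
print(discrepancy(toy))
```

For diver d1, the competency is 6.0, so the three dives have discrepancies of −1.0, 0.0, and 1.0.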

This study demonstrates that, while judges’ scoring in high school springboard diving is largely consistent with diver ability, certain dive characteristics are associated with predictable differences in scores. Some positions, particularly the tuck and free positions, tend to receive higher marks relative to the straight position, whereas others, such as the pike position, are judged more harshly. Likewise, the backward, inward, and twist directions are associated with less favorable scoring compared to forward dives, whereas reverse dives tend to be scored more favorably, after adjusting for dive difficulty. These differences are not explained by individual diver effects or by the effects of a particular meet, suggesting that judging patterns are more strongly related to dive-specific factors than to diver-specific bias.

Results from the mixed-model analysis show that the effects of age, gender, and round (dive order) are negligible compared to the fixed effects of degree of difficulty and certain dive characteristics. The coefficient for DD is large and negative, indicating that judges score more difficult dives more harshly relative to the diver's competency. While this may seem to contradict other findings of a positive difficulty bias, recall that the response variable is discrepancy, which is the difference between a judge's score and a diver's overall ability. The negative effect of DD might indicate that a diver is attempting a dive beyond his or her ability and therefore might not execute the dive well. The mixed-effects analysis also indicates a positive relationship between discrepancy and age, the tuck position (relative to straight), and the reverse direction (relative to forward). Because the residual variance accounts for about 94% of the total variance (Table 3), we have certainly not accounted for all variability. One issue is that the choice of dive is confounded with round, because each diver can choose his or her own dive order within certain parameters.

There are several limitations to this study. Each meet has a different number of divers, as seen in Figure 4, and not every diver participates in every meet. This creates an unbalanced design for modeling, and we did not attempt to address the imbalance in this analysis. Eleven of the correlations among the fixed-effect estimates are large (|r| > 0.2), and we did not attempt to accommodate different correlation structures. However, most correlations are small (|r| < 0.1), indicating low multicollinearity among the fixed effects. The strongest dependencies occur among the dummy variables for position and direction (e.g., 0.84 between the tuck and pike contrasts), which is expected because these represent related categorical contrasts. The correlation between the intercept and the age of the diver is −0.87, reflecting centering and scaling: as diver age increases, the intercept baseline shifts. None of these correlations suggest problematic multicollinearity beyond what is expected for factor contrasts.

As with all observational data, the diving data contain confounding factors, and we do not have the information needed to account for several of these factors within a statistical model. The data used in this study do not record the order in which each diver competed within each meet (sequential order). This is a major limitation because sequential bias and conformity bias cannot be tested, and the presence of these biases is potentially confounded with the effect of the round of the competition. Furthermore, we do not have information on the seed of each diver within a meet or on their reputation prior to each meet. In the future, we hope to obtain information on the order of the divers within a meet and to examine sequential bias and conformity bias (Table 1).

For gymnastics and ice skating, technical panels calculate the difficulty of a routine, and the difficulty score can be challenged within certain limits. For diving, the DD of a dive is set by WA and is not open to discussion during a meet. Furthermore, the DD for a dive is the same regardless of the gender, age, or level of the diver. For the meets we examined, there are 11 rounds consisting of one dive each, and no two divers will perform the same dives in the same order in the same meet. This makes comparisons among divers performing the same dive difficult. For example, it is quite possible that all divers within a meet will perform the same dive; however, they will do so at different points in the competition, which means that any differences could be due to conformity bias or sequential bias (see Table 1). We restricted the current study to examining discrepancy given age, gender, round, DD, position, and direction to identify ways a diver can optimize a dive list to maximize his or her score. The practical implication is that coaches and divers seeking a competitive advantage may benefit from considering systematic judging patterns, such as those identified in this research, when constructing dive lists. Strategic selection of dive positions and directions, particularly in borderline qualification scenarios, could modestly influence competition outcomes without necessarily altering the difficulty of the dives attempted.

So, what does the analysis of discrepancy indicate for a diver's dive list? From the above analysis, we offer the following advice. On average, older divers have larger positive discrepancies; therefore, stay in the sport! The degree of difficulty has a large negative effect; therefore, divers should not increase difficulty at the expense of practicing the less difficult dives. According to competition rules, the dive list must contain inward and backward dives. It is advisable for divers to choose inward and backward dives that they can perform well, even if doing so means giving up a greater DD. All divers must also perform twisting dives, which are judged more harshly according to this analysis; however, the tuck position and reverse direction are generally more favorable, so a reverse twist in the tuck position might earn a better score than the diver's ability would merit (and would look quite impressive). In general, the pike position does not seem to result in more favorable scores, even though it generally increases the DD for a given dive. For beginning and advanced dives, one should consider performing the dive in the pike position if (1) the diver is in danger of over-rotating the dive in the tuck position or (2) the diver is flexible enough to perform the pike in a closed position (with no space between the torso and thighs). This latter finding was supported by video evidence (Luedeker and McGee, 2022). Finally, because the 11th, optional dive is the diver's choice, choose something other than a twist. The best bet would be a dive in the reverse direction and the tuck position.

Footnotes

Acknowledgements

The author thanks Gabe Downey, University of Kansas, and Zachary Berg, Pembroke School of Kansas, for providing the data for this paper. The author also thanks two anonymous reviewers for helpful and substantive comments on a previous version of this manuscript.

Ethical approval and informed consent statements

Not applicable

Author contributions

MM contributed the vision, coding, data analysis, and writing of this article.

Funding

The author received no financial support for the research, authorship, and/or publication of this article.

Declaration of Conflicting Interests

The author declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.