Abstract

Study Design

Global cross-sectional survey

Objective

To develop and refine the techniques for web-based international validation of fracture classification systems.

Methods

A live webinar was organized in 2018 for validation of the AO Spine Subaxial Injury Classification System, consisting of 35 unique computed tomography (CT) scans and key images with subaxial spine injuries. Interobserver reliability and intraobserver reproducibility were calculated for injury morphology, subtype, and facet injury according to the classification system. Based on the experiences from this webinar and incorporating rater feedback, adjustments were made to the organization and techniques used, and in 2020 a repeat validation webinar was performed, evaluating images of 41 unique subaxial spine injuries.

Results

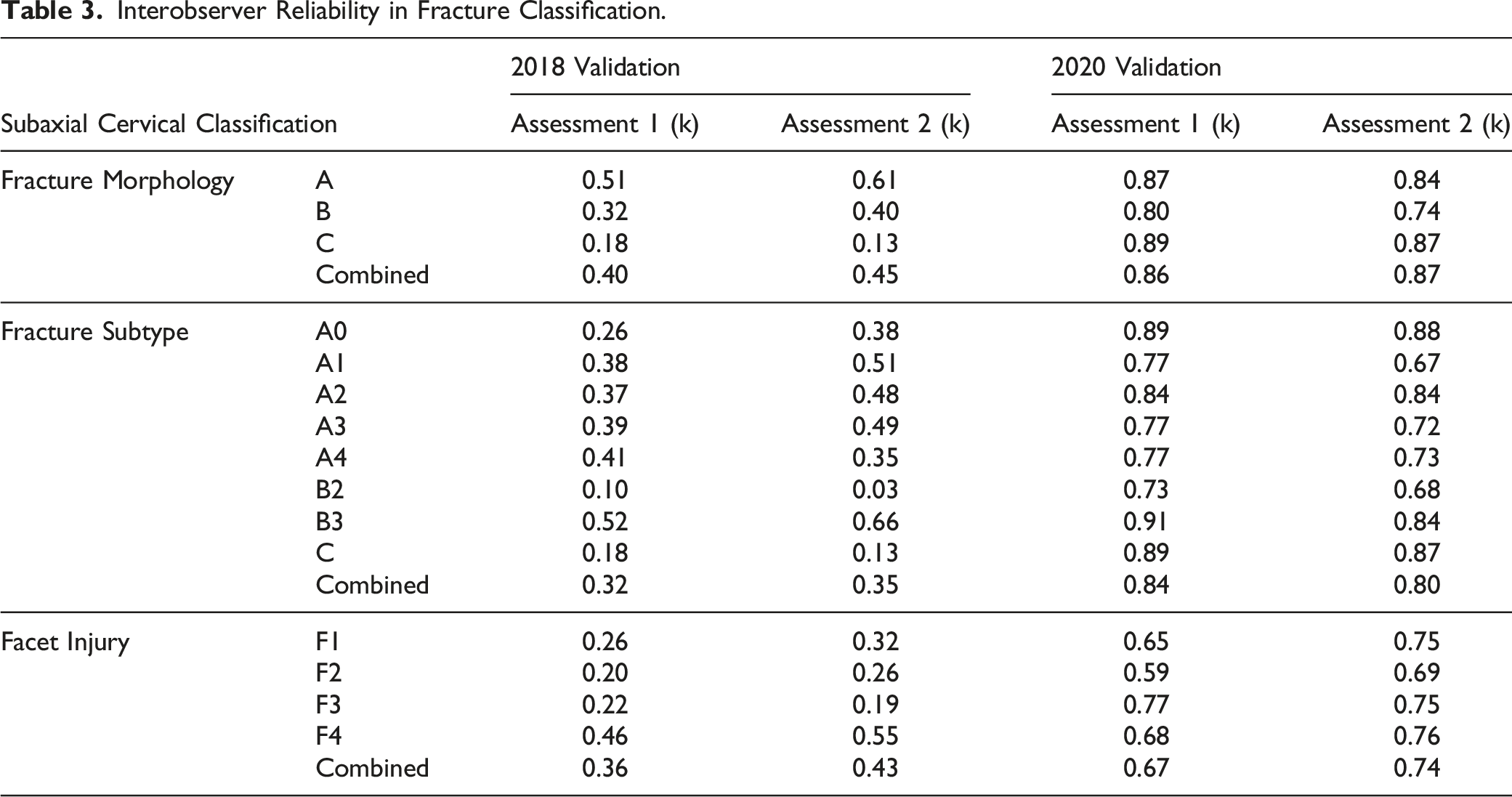

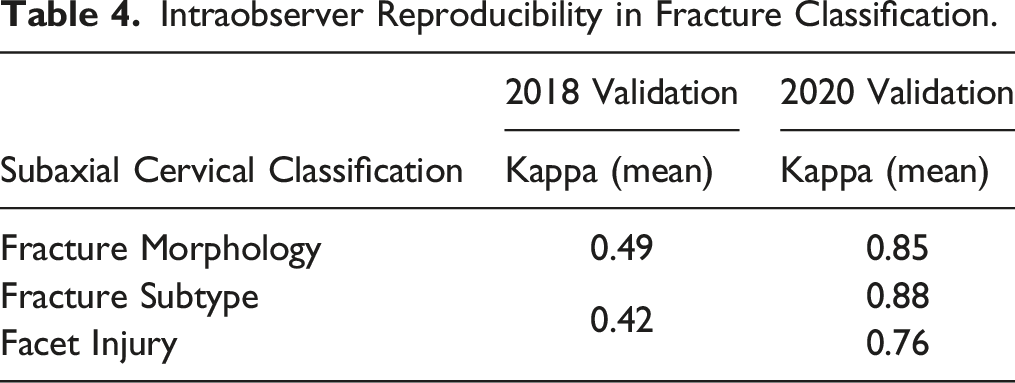

In the 2018 session, the AO Spine Subaxial Injury Classification System demonstrated fair interobserver reliability for fracture subtype (κ = 0.35) and moderate reliability for fracture morphology and facet injury (κ = 0.45 and 0.43, respectively). However, in 2020, the interobserver reliability for fracture morphology (κ = 0.87) and fracture subtype (κ = 0.80) was excellent, while facet injury was substantial (κ = 0.74). Intraobserver reproducibility in 2018 was moderate for injury morphology (κ = 0.49) and for injury subtype/facet injury (κ = 0.42). In 2020, fracture morphology and subtype reproducibility were excellent (κ = 0.85 and 0.88, respectively), while reproducibility for facet injuries was substantial (κ = 0.76).

Conclusion

With optimized webinar-based validation techniques, the AO Spine Subaxial Injury Classification System demonstrated vast improvements in intraobserver reproducibility and interobserver reliability. Stringent fracture classification methodology is integral to obtaining accurate classification results.

Introduction

Classification systems are communication instruments designed to characterize injuries and simplify the transmission of information between providers. These systems are also used as tools to help guide clinical management. Ideal classification systems are both concise and comprehensive, and must be reliably applied by all care providers. A classification system that lacks reliability or has poor validity will have minimal clinical value as it may serve as a biased predictor of patient outcomes. 1 At best, this may confound scientific research and at worst, it may negatively influence patient management. 2

Historically, validation of classification systems has occurred during national or international conferences; however, the coronavirus disease-2019 (COVID-19) pandemic precipitated utilization of online platforms to communicate ideas. 3 Although videoconference platforms have potential drawbacks, including quickened mental fatigue and information overload, they can also provide unparalleled opportunity for global communication and inclusion of additional participants. 4 Previous attempts at validating the AO Spine Subaxial Injury Classification have been based on the reliability and reproducibility of only a few physicians, bringing into question the generalizability of these injury classification systems. 5-7 Therefore, a protocol that facilitates identifying the reliability and reproducibility of an injury classification system internationally with the inclusion of hundreds of participants may elucidate pitfalls of the current AO Spine Subaxial Injury Classification.

The value of evaluating a classification system in this manner lies in its ability to include a variety of surgeon characteristics that can determine if the classification system can be globally implemented, throughout levels of training, and across multiple specialties. Accordingly, the aim of this study was to perform an advanced validation of the AO Spine Subaxial Injury Classification System using surgeons from around the world through various years of practice. We have therefore developed an online validation technique, which can be used for these kinds of studies.

Methods

The AO Foundation has relationships with multiple institutions internationally. In order to receive anonymous injury films, IRB approval is required from each of the centers providing the images. Additionally, AO has research imaging associates who confirm image anonymity to ensure there is no breach of patient confidentiality. Once anonymity of the films is confirmed, injury CT scans and radiographs are deposited in a secured database for future validation studies.

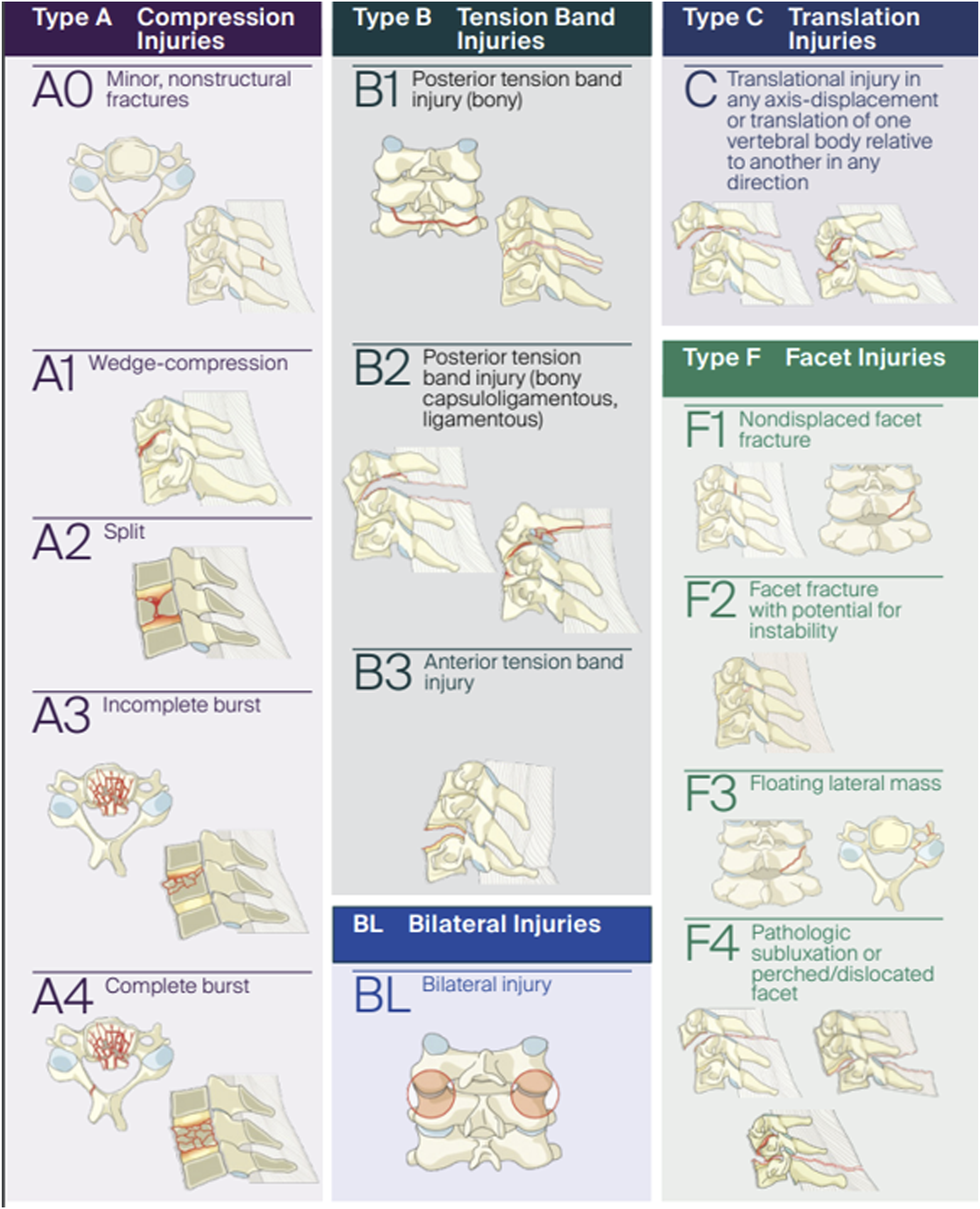

An online live webinar format was utilized to maximize the inclusion of AO Spine Subaxial Injury Classification System validation participants with an emphasis on inclusion of participants from different parts of the world with varying degrees of experience. The first of these webinars was conducted in 2018. All AO Spine members were invited to participate in the webinar. A total of 264 AO Spine members joined the session (assessment 1) which was repeated after 3 weeks (assessment 2). The webinar session was conducted in English. Prior to assessment of the injury classification, all participants watched a live tutorial video by one of the developers of the classification system during a training session. Radiographic images for 35 distinct injuries were then presented for review (all injury types had 3 distinct injury CT videos reviewed except for F4 injuries, which only had two distinct injuries, and B1 injuries, which were underrepresented in the database and were thus chosen not to be included in the validation). The case order was randomized during both case assessments. Computed tomography (CT) scans of the entire cervical spine including high-resolution axial, sagittal, and coronal videos were viewed by each participant at a rate of 10 frames/second. Additionally, key images (reported by participants to be difficult to visualize due to their small size) were also available for viewing simultaneously. Respondents were asked to classify fractures based on the AO Spine Subaxial Injury Classification System, which included injury location (C3-C7), morphology (A, B, C), subtype (A1, A2, B2, etc.), and presence of a facet injury (Figure 1). The validation members were also asked if the facet injury was unilateral or bilateral and a primary or secondary injury. All answers were recorded in an online survey using Research Electronic Data Capture (REDCap) that required a classification be recorded for each injury without the ability for the participant to skip cases. 
Therefore, if a participant had internet connection issues and was unable to view the CT scan of an injury, they were required to enter random answers to resume the presentation (e.g., if five CT scans were missed due to connection issues, five random classifications had to be entered into the survey). It should also be noted that if the online survey was closed prior to completion of the validation, the answers were not saved and random answers were required as input prior to resuming the validation.

Figure 1. Pictorial demonstration of the AO Spine Subaxial Injury Classification. Permission to use this image granted by the AO Foundation©, AO Spine, Switzerland.

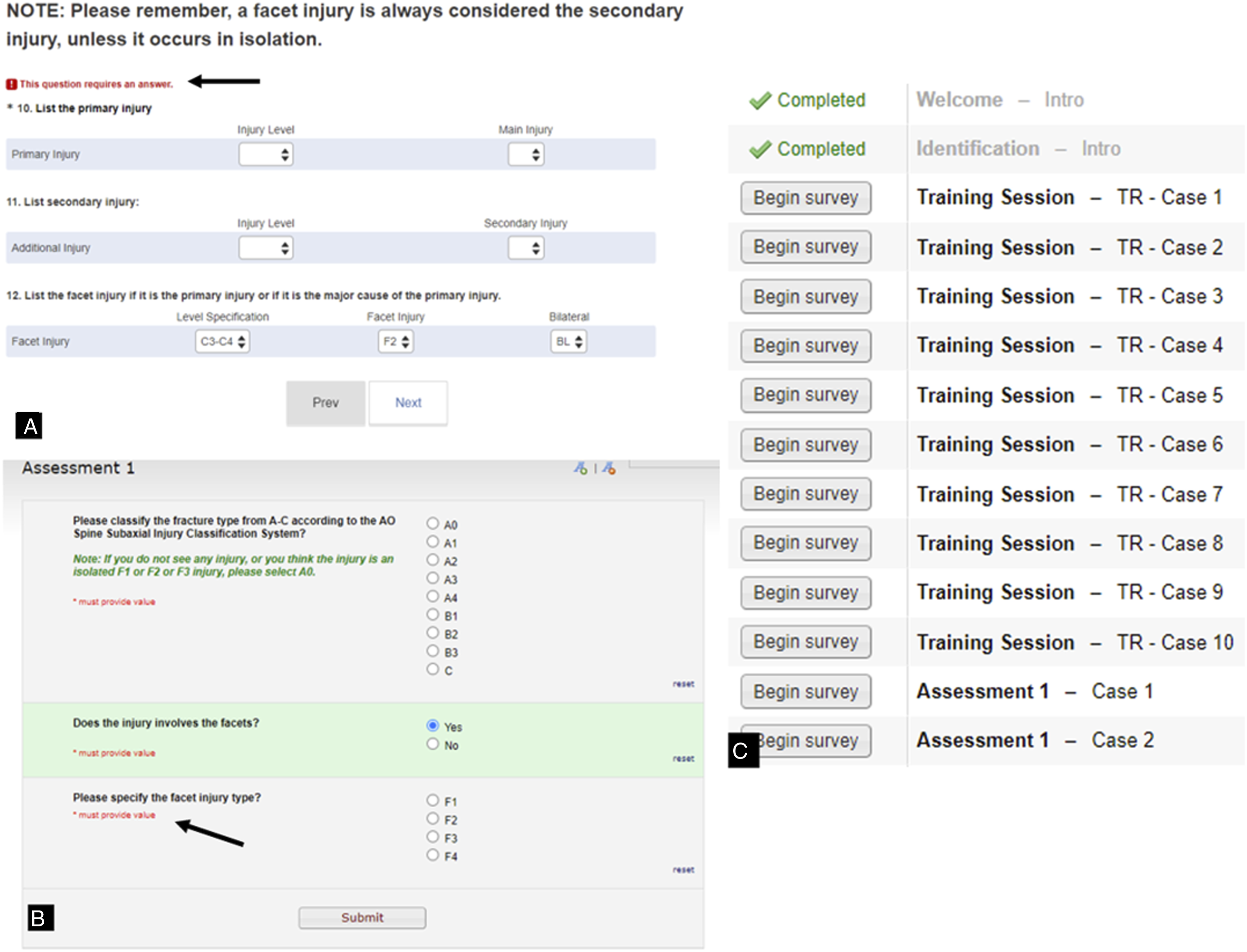

Because this was a novel way of conducting a fracture classification validation, we asked the participants to provide feedback and make suggestions for improvement. All the comments from the validation members were reviewed. Based on the comments, changes in the construction of the webinar were implemented, including reducing the frame rate of all CT videos and limiting the CT video to the segment of injury. Additionally, we decided to focus the classification on injury type (A, B, C) and fracture subtype with separate facet injury scoring. We also modified the survey to tolerate missed questions due to internet connection issues (Figure 2).

Figure 2. (A) Example of the 2018 survey, which requires a primary injury selection in order to proceed to the next case. The black arrow demonstrates that facet injury selection alone will not let the rater proceed to the next question. (B) The 2020 survey does not require entry of a primary or secondary injury and allows for easier selection of facet injuries. The black arrow indicates that this question only populates with selection of “yes” to a facet injury. (C) The 2020 survey did not require completing surveys on all previous cases before proceeding to a specific case assessment.

With this modified approach, we repeated the study in 2020. In this live webinar, 203 AO Spine members from six different geographic regions participated. Similar to the previous iteration, all participants attended the live tutorial video and training session. However, CT images presented during this assessment were trimmed to the region of interest and were played once at a rate of 2 frames/second. Additionally, key images were presented both before and after CT images, and were standardized in size and quality. In this validation, cases with facet injuries were presented separately from morphologic A, B, C classification and were not required to be indicated as primary vs secondary injuries or distinguished as unilateral vs bilateral injuries. Additionally, validation members were no longer required to identify the level of injury. Forty-one cases were included in these 2020 sessions.

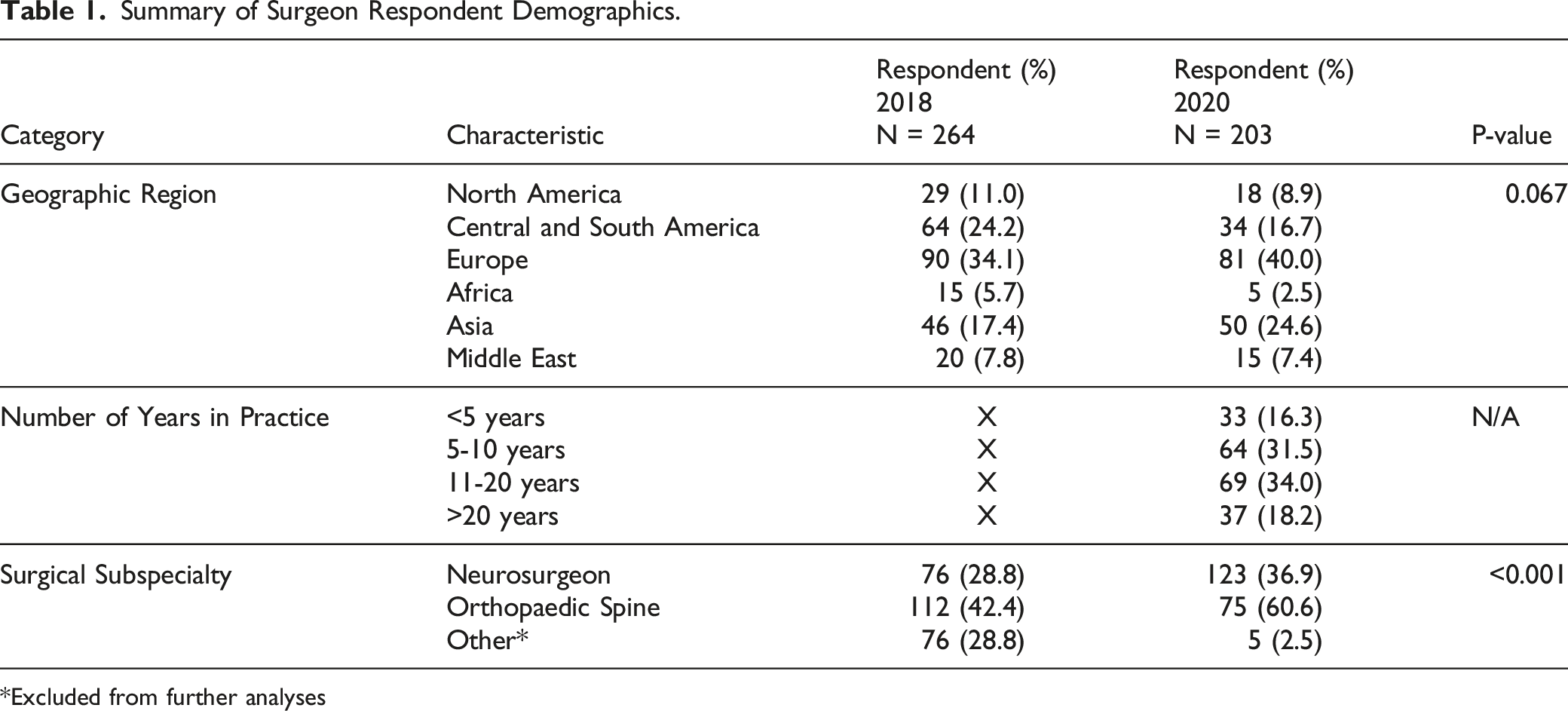

Demographic data for all validation members was recorded in both 2018 and 2020 based on geographic region (North America, Central and South America, Europe, Africa, Middle East, and Asia and the Pacific) and surgical subspecialty (orthopaedic spine, neurosurgery, or other), but number of years in practice (<5, 5-10, 11-20, and >20) was only recorded in 2020.

AO Spine Knowledge Forum Trauma

Members of the AO Spine Knowledge Forum Trauma committee selected the images for inclusion in the validation. Each image was selected based on whether it was representative of the classification description. For example, does an A4 injury accurately represent a complete burst fracture, or is it difficult to tell whether it is an incomplete burst fracture with questionable extension into the inferior endplate? Such ambiguity might be difficult to resolve given the time constraints of the validation, since each injury is viewed live without the ability to review the image at a later time.

Gold Standard Committee

The gold standard committee assigned the AO Spine Subaxial Injury Classification System grade to each distinct injury. The committee comprised five surgeons who are members of the AO Spine Knowledge Forum Trauma committee, which also included the original creators of the classification schema. Each gold standard committee member rated each injury individually. Any image with disagreement then required a formal discussion to resolve the dispute. Each injury film selected for validation inclusion ultimately achieved unanimous agreement by the committee, and this was deemed the final gold-standard classification against which the validation participants would be graded.

Statistical Analysis

A chi-square test was used to evaluate the significance of change in demographic data between the 2018 and 2020 webinar cohorts. Agreement percentages were used to compare the validation members’ classification grades to the “gold standard”, defined by a panel of expert spine surgeons and traumatologists who came to unanimous agreement on the classification of the injury.
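The two calculations described above can be sketched in a few lines of Python. This is a minimal illustration, not the analysis code used in the study: the function names and the example data are hypothetical, and the sketch reports only the Pearson chi-square statistic and its degrees of freedom (a p-value would require a chi-square distribution function, for example from a statistics package).

```python
from typing import Sequence, Tuple


def percent_agreement(ratings: Sequence[str], gold: Sequence[str]) -> float:
    """Percentage of cases where a rater's grade equals the gold-standard grade."""
    if len(ratings) != len(gold) or not gold:
        raise ValueError("ratings and gold must be non-empty and equal in length")
    matches = sum(r == g for r, g in zip(ratings, gold))
    return 100.0 * matches / len(gold)


def chi_square_statistic(table: Sequence[Sequence[int]]) -> Tuple[float, int]:
    """Pearson chi-square statistic and degrees of freedom for a count table."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    total = sum(row_totals)
    stat = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            # Expected count under independence of row and column.
            expected = row_totals[i] * col_totals[j] / total
            stat += (observed - expected) ** 2 / expected
    dof = (len(table) - 1) * (len(col_totals) - 1)
    return stat, dof


# Hypothetical grades for five cases (not data from the study).
gold = ["A1", "A4", "B2", "C", "F3"]
rater = ["A1", "A3", "B2", "C", "F3"]
print(percent_agreement(rater, gold))  # 80.0

# Hypothetical counts: rows are two cohorts, columns are two demographic groups.
counts = [[10, 20], [20, 10]]
stat, dof = chi_square_statistic(counts)
print(round(stat, 2), dof)  # 6.67 1
```

In this made-up example the rater matches the gold standard on four of five cases, and the 2 x 2 table has an expected count of 15 in every cell.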

Per consultation with our statistician, a minimum of three cases was required to achieve adequate power to compare intraobserver reproducibility and interobserver reliability ratings based on each injury. Cohen’s Kappa (κ) statistic was used to assess the reproducibility and reliability of the injury morphology (A, B, or C), injury subtype (A1, A2, A3, etc.), and facet injury (F1, F2, F3, or F4) classification between independent observers (interobserver reliability) and the reproducibility of the injury classification over two assessments (intraobserver reproducibility). The κ coefficients were interpreted using the Landis and Koch grading system. 8 A κ coefficient of less than 0.2 was defined as slight, between 0.2 and 0.4 as fair, between 0.4 and 0.6 as moderate, between 0.6 and 0.8 as substantial, and greater than 0.8 as excellent reliability or reproducibility.
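As a hedged illustration of the statistic named above, the sketch below computes Cohen's κ for two sequences of grades and maps the result to the grading labels given in the text. The same function applies to two different raters (interobserver) or to one rater's two assessments (intraobserver). The function names and example grades are hypothetical, and the treatment of values falling exactly on a cut-off (0.2, 0.4, 0.6, 0.8) is an assumption, since the text does not specify it.

```python
from collections import Counter
from typing import Sequence


def cohen_kappa(a: Sequence[str], b: Sequence[str]) -> float:
    """Cohen's kappa: chance-corrected agreement between two rating sequences."""
    if len(a) != len(b) or not a:
        raise ValueError("rating sequences must be non-empty and equal in length")
    n = len(a)
    p_observed = sum(x == y for x, y in zip(a, b)) / n
    freq_a, freq_b = Counter(a), Counter(b)
    # Agreement expected by chance from each sequence's category frequencies.
    p_expected = sum(freq_a[c] * freq_b[c] for c in freq_a) / (n * n)
    if p_expected == 1.0:
        return 1.0  # both sequences contain one identical category only
    return (p_observed - p_expected) / (1.0 - p_expected)


def kappa_label(kappa: float) -> str:
    """Label a kappa value using the cut-offs stated in the text.

    Assumption: a value exactly on a cut-off falls into the higher band,
    except 0.8, which is kept as 'substantial' ("greater than 0.8" is excellent).
    """
    if kappa < 0.2:
        return "slight"
    if kappa < 0.4:
        return "fair"
    if kappa < 0.6:
        return "moderate"
    if kappa <= 0.8:
        return "substantial"
    return "excellent"


# Hypothetical morphology grades for six cases (not data from the study).
first = ["A", "A", "B", "B", "C", "C"]
second = ["A", "A", "B", "C", "C", "C"]
k = cohen_kappa(first, second)
print(round(k, 2), kappa_label(k))  # 0.75 substantial
```

Here the observed agreement is 5/6 and the chance agreement is 1/3, so κ = (5/6 - 1/3) / (1 - 1/3) = 0.75.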

Results

Summary of Surgeon Respondent Demographics.

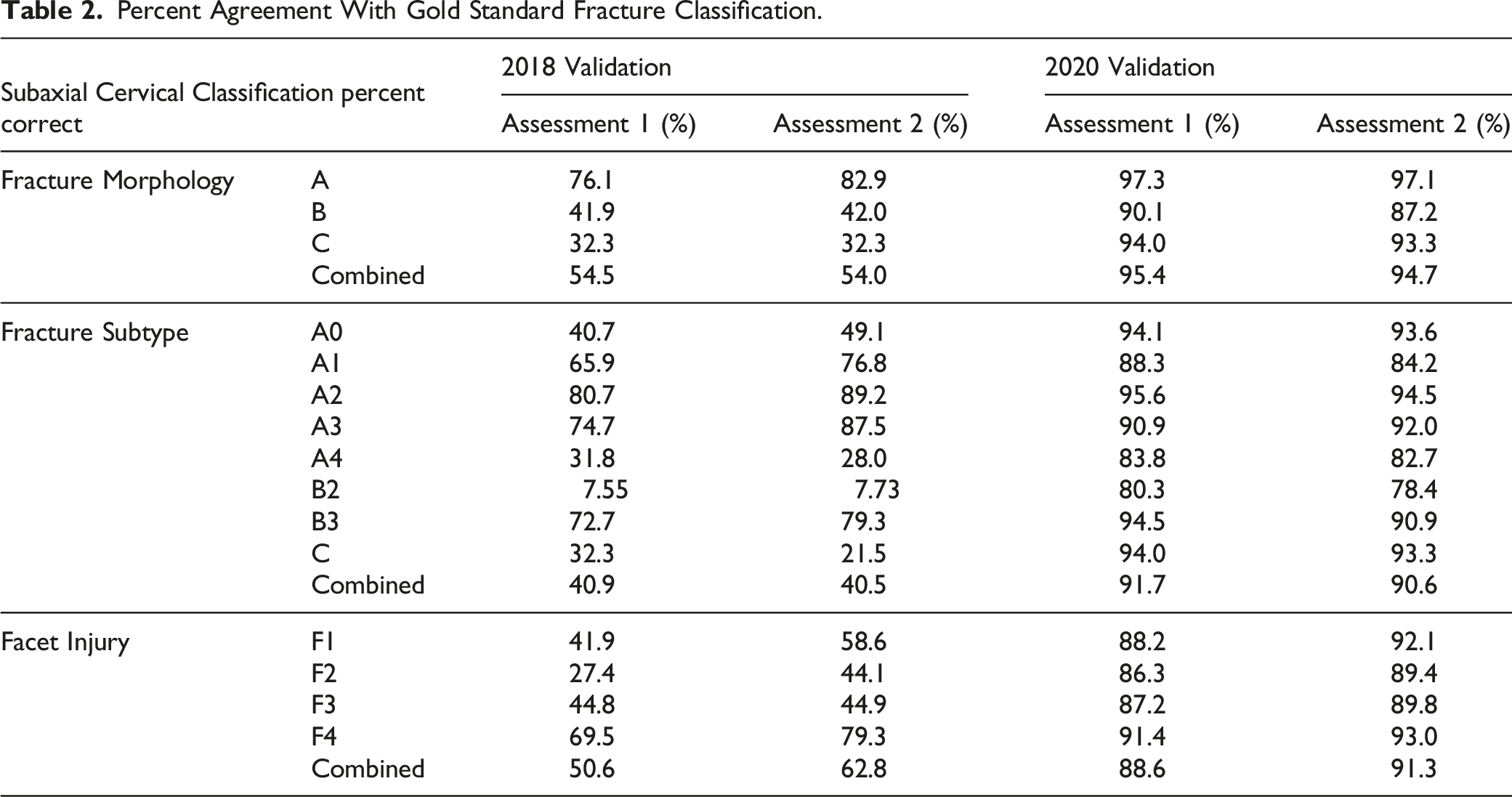

Percent Agreement with Gold Standard

Percent Agreement With Gold Standard Fracture Classification.

Interobserver Reliability

Interobserver Reliability in Fracture Classification.

Intraobserver Reproducibility

Intraobserver Reproducibility in Fracture Classification.

Discussion

To involve surgeons from around the world with varying degrees of experience, the AO Spine Knowledge Forum Trauma attempted a new technique to validate the AO Spine Subaxial Injury Classification System. In our first trial in 2018 we came across some technical and organizational problems. The results were disappointing and the feedback from the participants was overwhelmingly negative. While the initial results demonstrated lower than expected reliability, user comments indicated that this was likely a technical issue with the validation and not an issue with the classification itself. The results from the 2018 validation demonstrated “fair” interobserver reliability and “moderate” intraobserver reproducibility. This was much lower than the AO Spine preliminary subaxial validation by the Knowledge Forum members. 9 Based on the participants’ responses, this lower reliability was largely attributed to the difficulty in fracture visualization during the live conference format and to the survey question structure. After internal discussions we modified our approach. The frame rate was reduced five-fold in the 2020 validation, and CT scans were trimmed to the area of injury rather than displaying the entire cervical spine to minimize rater fatigue during the assessment. 10 Additionally, raters were not required to determine the vertebral injury level or to distinguish between primary and secondary injuries for fractures with both A, B, C and facet components. As a result, the 2020 validation demonstrated excellent interobserver reliability and intraobserver reproducibility for fracture morphology and subtype, with substantial interobserver reliability and intraobserver reproducibility for facet injuries.

In an attempt to limit validation member recall of each injury, two separate live webinar events were held three weeks apart in 2018. This ensured all validation members would have an equal length of time (three weeks) between each subaxial cervical spine injury evaluation. However, the frame rate (10 frames/second) during the 2018 validation likely contributed to study bias due to differences in validation members’ injury identification and classification processing speeds, which may be affected by age, experience level, or English language proficiency. 11-13 Therefore, when performing radiographic validation studies with a large number of participants in a live webinar format, our study identified that a slow frame rate is imperative to allow adequate time for imaging review, as the live format does not allow raters to view the scan in multiple passes. This may be especially important when relying on international validation team members who have variable broadband internet speeds and variable English proficiency. 14 Additionally, validation participants should be given adequate time between cases to record the injury classification so as not to interrupt viewing of subsequent injury films.

While the validation of any classification system is essential to establish its clinical utility, the literature is currently bereft of recommendations for large scale advanced validation testing. The results of this study highlight the importance of proper methodology for international classification validation. Although both validation studies were performed by AO members using the exact same classification system, disparate validation results were obtained. This ultimately highlights how suboptimal methodology can serve as a biased predictor of outcomes in research. Part of the importance of this study is building the foundation of the methodology required to obtain accurate fracture classification validations. We recommend displaying standardized high-resolution key images before and after viewing CT imaging. CT images may be played through once only to limit time spent to one minute on each injury evaluation, but they should be trimmed to the region of injury and played at a rate of no faster than 2 frames/second. Ideally, surveys should also have at minimum 15 seconds to allow for answer selection before displaying the subsequent injury for rating, and survey questions should not have mandatory answers before proceeding to the next injury due to potential internet-connectivity issues. Live technical support familiar with the webinar software should be present throughout the validation to assist when necessary. Finally, a trial tutorial of selected cases should be instituted prior to the official validation to help guide the rater in the workflow necessary for validation completion.
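The numeric recommendations above can be written down as a simple checklist. The sketch below is illustrative only: the class, field, and function names are hypothetical, and the `draft` settings are placeholders apart from the 10 frames/second rate, mandatory answers, and untrimmed CT described for the 2018 session. It also shows the arithmetic implied by the recommendations: at 2 frames/second and one minute per injury, a single play-through can show at most 2 × 60 = 120 frames.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class WebinarProtocol:
    """Hypothetical settings for one validation session (names are illustrative)."""
    frames_per_second: float
    viewing_seconds: float        # time allotted to one CT play-through
    answer_window_seconds: float  # pause before the next case is shown
    answers_mandatory: bool       # must every question be answered to proceed?
    trimmed_to_injury: bool       # CT clip limited to the region of injury?


def max_frames(protocol: WebinarProtocol) -> int:
    """Largest number of CT frames that fits in a single play-through."""
    return int(protocol.frames_per_second * protocol.viewing_seconds)


def check_recommendations(protocol: WebinarProtocol) -> List[str]:
    """List the recommendations from the text that a protocol does not meet."""
    problems = []
    if protocol.frames_per_second > 2:
        problems.append("frame rate above 2 frames/second")
    if protocol.viewing_seconds > 60:
        problems.append("more than one minute per injury")
    if protocol.answer_window_seconds < 15:
        problems.append("answer window shorter than 15 seconds")
    if protocol.answers_mandatory:
        problems.append("answers are mandatory")
    if not protocol.trimmed_to_injury:
        problems.append("CT not trimmed to the region of injury")
    return problems


recommended = WebinarProtocol(2, 60, 15, False, True)
# Placeholder values for illustration; the viewing time and answer window
# of the 2018 session are not reported in the text.
draft = WebinarProtocol(10, 60, 5, True, False)

print(max_frames(recommended))             # 120
print(check_recommendations(recommended))  # []
print(len(check_recommendations(draft)))   # 4
```

The non-numeric recommendations (standardized key images before and after the CT, live technical support, and a trial tutorial) are not modeled here.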

To establish whether methodological issues alone caused the lower classification accuracy of the 2018 validation, we examined the effect of regional participation between the 2018 and 2020 webinars. Although there was a slight increase in participants from Asia and Europe in 2020, this did not reach statistical significance. Further, previous literature evaluating AO Spine fracture classification systems has not demonstrated that geographic region or surgeon experience accounts for differences in radiographic classification of thoracolumbar fractures. Therefore, we believe it unlikely that surgeon demographics alone accounted for the large variability in the subaxial cervical spine classification accuracy. 15

To further identify the role of methodology in variations between the 2018 and 2020 iterations of the fracture classification, we compared both groups to an established baseline as determined by the AO Spine Knowledge Forum Trauma. The Knowledge Forum Trauma previously demonstrated an interobserver reliability for injury subtype of 0.64 and an intraobserver reproducibility of 0.75, indicating substantial reliability and reproducibility of the AO Spine Subaxial Injury Classification System. 9 It should be noted that only 10 participants were included in that study, so poor reliability or reproducibility from a single member would have had large effects on the results of their validation. 9 Although the 2018 validation demonstrated fair to moderate injury subtype and morphology reliability and reproducibility, the 2020 iteration demonstrated excellent intraobserver reproducibility and interobserver reliability, thus comparing favorably to the results obtained by the Knowledge Forum Trauma. 9 Although it is unclear why the 2020 classification results actually had improved reliability and reproducibility compared to the Knowledge Forum Trauma, this may be a result of having over 200 participants, which allowed for some variability in incorrectly classifying injuries. Additionally, in 2020 the classification system had been available for 5 years prior to the validation, giving participants the opportunity to practice classifying subaxial cervical spine fractures prior to the study.

There were multiple limitations present in the design of our study. Although a side-to-side comparison of the 2018 and 2020 interobserver reliability and intraobserver reproducibility appears to indicate study design alteration after 2018 led to improved interobserver reliability and intraobserver reproducibility, this may be partly due to confounding on multiple levels. First, although regional demographics were not significantly different between the two validation groups, we were unable to collect surgeon experience during the 2018 validation. Additionally, since there was a significantly larger group of non-spine surgeons in 2018, this may have led to worse interobserver reliability and intraobserver reproducibility in the 2018 participation as opposed to true differences due to validation methodology alone. Second, if validation members had internet connection problems, inadequate time to classify injuries between cases, or they did not visualize the fracture during the first viewing of the CT scan, they were unable to review the CT scan for either the 2018 or 2020 iteration of the validation study. In clinical practice, there is no limitation to the time you are able to view a CT scan and subsequently classify the injury. This may have artificially suppressed the percent correct compared to the gold standard and the interobserver reliability and intraobserver reproducibility for both validation studies. Third, the study did not use identical injury films during the 2018 and 2020 validation periods since the initial primary goal of the study was to obtain an accurate depiction of the interobserver reliability and intraobserver reproducibility of the AO Spine Subaxial Injury Classification System, not to validate if the 2020 methodology was superior to the 2018 version. Finally, injury films evaluated by validation participants were limited to what was available in the AO Spine repository. 
Due to the limited number of F4 injuries available (N = 2), the interobserver reliability and intraobserver reproducibility may have been underpowered. Further, there were no B1 injuries evaluated. Given the lower reliability and reproducibility of B type injuries, this may have artificially raised the reliability and reproducibility of our results in both 2018 and 2020. It is worth noting that B1 injuries were not evaluated in either version of the validation, so this would not affect the comparison of the reliability and reproducibility results between the 2018 and 2020 validations.

Conclusion

The AO Spine Subaxial Injury Classification System demonstrated substantial reliability for facet injury, and excellent reliability for fracture morphology and subtype among surgeons worldwide based on the most recent 2020 validation assessment. The results highlight the importance of survey methodology in classification validation. Imaging speed, video length, survey construction, and web conference interface had a significant impact on the dissimilar results found between the 2018 and 2020 validation assessments.

Footnotes

Acknowledgments

The authors of the manuscript would like to thank Christian Knoll and Janik Hilse from the AO Innovation Translation Center for their support with the statistical analysis, developing the methodology, and database structure.

AO Spine Subaxial Classification Group Members: ABM Morshed Goni, Jason Cheung, Jose Arturo Rodriguez, Abduljabbar Alhammoud, Chloe Curri, Khalid Saeed, Mauro Fernandes, Alessandro Ramieri, Bjorn Biera, Jeevan Sharma, David Wamae, Luis Muniz Luna, Crag Steiner, Itati Romero, Mohamed Khattab, Daniel Ricciardi, Syed Sultan, Sotiris Plakoutsis, Sandeep Gidvani, Richard Avero, Akinwande Akinjolire, Assem Sultan, Catalin Mejer, Mahmoud Alkharsawi, Guillermo Ricciardi, Aikaterini Karagianni, Phedy Lim, Ahmad Atan, Andrea Veroni, Richard Lindtner, Paloma Bas, Daniel Lupinucci, Emilija Pemovska, Vivek Tadav, Austin Enright, Juan Delgado-Fernandez, Yohan Robinson, Ahmed Ibrahim, Marcelo Valacco, Fabricio Medina, David Orosco, Haifeng Yuan, CC Wong, Miguel Angel Luna Ortiz, Nicola Montemurro, Zdenek Klezl, John Chen, Ripul Panchal, Ignacio Garfinkel, Mohamed El Salamouny, Darko Perovic, Olga Marillo Acosta, Alejandro Audiffred, Alexander Weening, Andrew James, Vito Fiorena, Eugen Cezar Popescu, Jayakumar Subbiah, Desai Ankit, Rafael da Mota Moraes, Ronald Rioja Rosas, Thami Benzakour, Ricardo Rodrigues-Pinto, Paulo Pereira, Francisco Villarreal Garcia, Mauro Pluderi, Chun Kee Chung, Osama Aldahamsheh, Robert Lucaciu, Maria Garcia Pallero, Baron Zarate Kalfopulos, Mohammed El-Sharkawi, Shardul Soman, James Harrop, Brett Freedman, Drew Bednar, Wael Alsammak, Hussain Alhamal, David Ruiz, Raphael Lotan, Konstantinos Paterakis, Ricardo Velasco, Claudio Bernucci, Amin Henine, Gunaseelan Ponnusamy, Laura Ganau, Jose Alfredo Corredor, Johnny Duerinck, Neelakanta Buchireddygrai, Jeronimo Milano, Arun Viswandaha, Flynn Rowan, Oscar Gonzalez Guerra, Yunus Akman, Lara Prisco, Guillermo Espinosa, Daniela Linhares, Sara Diniz, Selvaraj Ramakrishnan, Hugo Aleixo, Mario Ganau, Brandon Rebholz, Horatiu Moisa, Maria Gandia, Barani Rathinavelu, Reza Yassari, Sean Barry, Aleksei Ziabrov, Khalid Almadni, Benzarti Sofien, Bruno Saciloto, John Koerner, Andrey Grin, O. 
Clark West, Nitesh Gahlot, Nuno Neves, Tarek El Hewala, Alkinoos Althanasiou, Joost Rutges, Insafe Mezjan, Scott Wagner, Jibin Francis, Yoni Goldenberg, Mark Bernstein, Devi Tokala, Ahmed Dawoud, Yuvaraja Murugan, Fabian Hinojosa, Tijjani Magashi, Jose Arbatin, Felipe de Negreiros Nanni, Seibert Franz, Joaquim Teixeria, Colin Harris, Simon Weidert, Salvatore Russo, Simon Weidert, Juan Montoya, Rafael Llombart-Blanco, Bruno Direito-Santos, Rodolfo Paez, Shilabant Sribastav, Adetunji Toluse, Mahamoud Elshamly, Kwok Tsz Teung, Victor Ungurean, Anand Segar, Andrey Pershin, Quynh Nguyen, Asmatullah Abdulsalam, Adetunji Toluse, William Lopez, Bhavuk Garg, Mahmoud Elshamly, Babak Shariati, Fernando Oviedo Pitti, Cristian Valdez, Taolin Fang, Maximo-Alberto Ulloa, Uwe Platz, Mohamad Zaki Mohd Amin, Cristina Mihoc, Hamish Alexander, Raghuraj Kundangar, Meng-Huang Wu, Aju Bosco, Bruno Costa, Kubilay Ozdener, Patrick Pritchard, Yasunori Sorimachi, Amauri Godinho Jr., Panchu Subramanian, Naohisa Mihakoshi, Pragnesh Bhatt, Myriham Murguia, Ali Alrubaye, Chadi Tannoury, Sung-Joo Yuh, Nianhu Li, Lingjie Fu, Nissim Ackshota, Nanette Maue, Arturo Cabrera de la Cruz, Christan Cheng, Jose Perozo, Tarun Suri, Vasili Hurinovich, Luis Marques, Nestor Taboada, Chrisitan Konrads, Mahmoud Shoaib, Balgopal Karmacharya, Andrey Leontev, Hugo Arturo Gonzalez, Francisco Mannara, Cumhur Kilincer, Ala' Marji, Chumpon Jetjumnong, Robert Mulbah, Stipe Corluka, Wen-Tien Wu, Ahmed Abdelgawaad, Valentine Mandizvidza, Akbar Zubairi, Konstantinos Margetis, Thomas Niemeier, Alfredoi Guiroy, Juan Lourido, Marcu de Oliveira Ferreira, Mauro Medina, Zachary Hickman, Rian Vieriera, Sean Smith, Joana Guasque, Hassane Amadou, Alberto Bogna, Jagannath Palaniappan, Alejo Vernengo-Lezica, Derek Cawley, Alfredo Figueiredo, Noe Dimas, Federico Landriel, Ariel Kaen, Fernando Krywinski, Robin Pillay, Federico Sartor, Nicola Nicassio, Joachim Vahl, Jose-Carlos Sauri-Barraza, Johanna Valdeblanquez Atencio, Andrew 
O'Brien, Joseph Butler, Nader Amini, Marcelo Gruenberg, Ferdinand Krappel, Norberto Fernandez, Alejandro Castillo, Sebastian Cruz Morande, Dilip Gopalakrishnan, Segundo Fuego, Luis Miguel Duchen Rodriguez, Miguel Martinez Guillermo, Cristina Igualada, Gbolabo Idowo-Deifa, Joao Morais, Shafiq Hackla, Guilherme Ramos, Salim Msuya, Emanuele Bavaresco, Ankit Patel, Samuel Grozman, Waheed Abdul, Alon Grundshtein, Hugo Samano, Lady Yemira Cari, Pedro Bazan, Andrea Redaelli, Konstantinos Starantzis, Wesley Bronson, Serdar Demiroz, Moyo Kruyt, Mansouri Nacer, Martin Estefan, Mbarak Abeid, Rui Manilha

Declaration of Conflicting Interests

The author(s) declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The author(s) disclosed receipt of the following financial support for the research, authorship, and/or publication of this article: The AO Spine Injury Classification Systems were developed and funded by AO Spine through the AO Spine Knowledge Forum Trauma, a focused group of international spine trauma experts. AO Spine is a clinical division of the AO Foundation, which is an independent medically-guided not-for-profit organization. Study support was provided directly through the AO Spine Research Department.