Abstract

Cognitive assessment and training are needed to avoid accelerated cognitive decline. We have developed BrainTagger, a suite of serious games that evaluate and potentially train cognitive abilities such as inhibitory control, processing speed, cognitive flexibility, and working memory. Ideally, cognitive assessments can be used as sentinels to detect problems that might damage brain functions (e.g., dehydration, poor nutrition, depression, delirium, inappropriate medication). In this paper, we report on a multi-year effort to develop hardware and software solutions that support effective implementation of cognitive assessment games (CAGs) in long-term care. Issues and constraints addressed include accommodating physical disabilities; making cognitive assessment enjoyable and engaging; providing scientific evidence that each game is measuring the intended construct; and reporting game results in a meaningful way. This article describes how we addressed some of these issues and demonstrates the sustained effort required to make this type of functionality work in practice. We also include some preliminary design guidelines, based on our experience, that may be useful in guiding future work on developing CAGs.

Introduction

As of July 2022, over seven million people living in Canada were 65 years of age or older (Statistics Canada, 2023a). Within this demographic, over 172,000 people were living in long-term care (LTC) homes in 2021 (Statistics Canada, 2023b). Given that cognitive impairment is more common among older adults than in younger demographics (Salthouse, 2009), and given the 87% prevalence rate reported between 2015 and 2016 in Canadian LTC facilities (Canadian Institute for Health Information, n.d.), there is a need for cognitive assessment and training in these settings. To address this need, the Interactive Media Lab at the University of Toronto, in collaboration with its spinoff company, Centivizer Inc., is developing a suite of cognitive assessment games (CAGs) through a project whose first publication appeared a decade ago (Tong & Chignell, 2013) (for explanations and demos, see intro.braintagger.com). In this article, we describe lessons learned in trying to create an effective system of game-based cognitive assessment and the redesigns that resulted from those lessons.

BrainTagger: An Overview

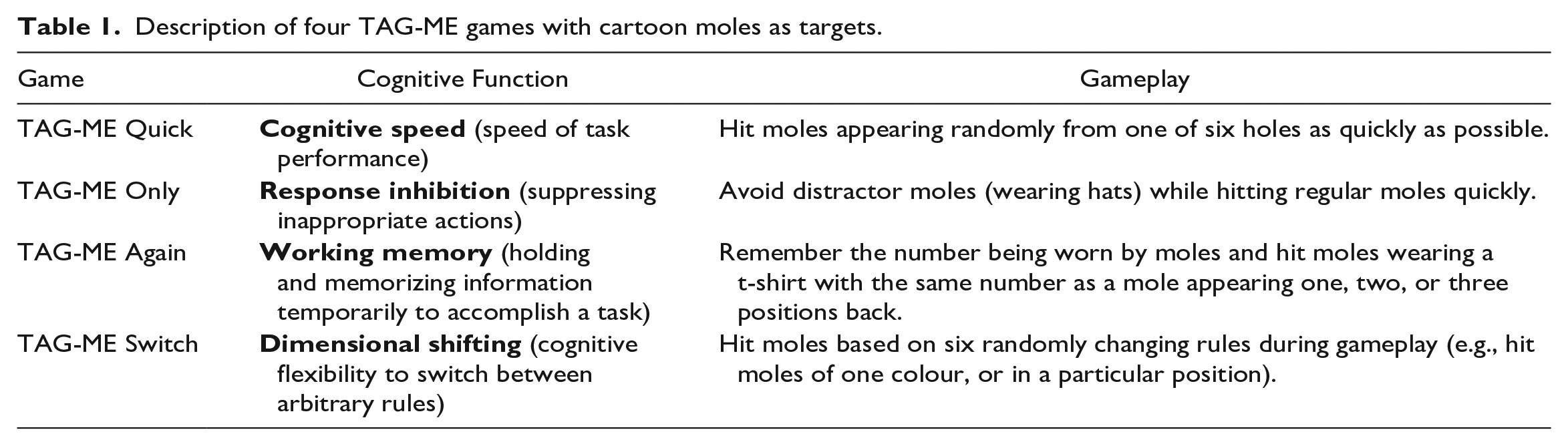

BrainTagger is a suite of Target Acquisition Games for Measurement and Evaluation (TAG-ME). These serious games assess a variety of cognitive abilities and initially used a “Whac-A-Mole” design that involved “hitting” targets on a screen in alignment with each game’s objective while avoiding distractor items. Four of these games are described in Table 1.

Description of four TAG-ME games with cartoon moles as targets.

Some TAG-ME games have been scientifically demonstrated to serve as analogs of well-established psychological tasks. For example, TAG-ME Only is an analog of the Go/No-Go task, a standard response inhibition task, and its convergent validity with the Go/No-Go task shows that it assesses response inhibition ability (Tong et al., 2021). Tong et al. (2020) also found that performance on an earlier version of TAG-ME Only was significantly correlated with scores on the Mini-Mental State Examination (MMSE). Additionally, TAG-ME Again has been shown to assess working memory updating through its convergent validity with the N-back task (Hu et al., 2023).

Figure 1 shows BrainTagger’s gameplay interface, which consists of six positions from which targets can appear. Players have the option of using an accompanying button box, also shown in Figure 1, with six buttons, one for each position where a target can appear, to accommodate players with fine motor impairments (Tong et al., 2020). The button box is one of the early accommodations that we made to enable a wider range of people to play the games. Alternatively, a keyboard can be used, although it requires more precise key presses, as can a computer connected to a large display or a tablet without a button box (the original form factor that we tried).

BrainTagger being played with a button box.

Methodology

Since the work carried out was design research, our methodology employed a combination of iterative design, interviews, and anecdotal observations. We triangulated where possible to ensure that different methods were giving us similar answers, and we looked for consistency across anecdotal observations made at different locations before acting on them. The main field testing with residents of LTC was carried out at York Care Centre in Fredericton, New Brunswick, by Grace Steeves, assisted by Debbie Barton, in the summer of 2022. Additional feedback was provided by Christine Cormier (CEO of Faubourg du Mascaret in Moncton, New Brunswick) during an informal interview. Feedback was also solicited at Deer Park Library in Toronto, Ontario, during four sessions held in the fall of 2019, and at a number of conferences, including most recently Together We Care (Toronto, Ontario, April 2022), the AGE-WELL Annual Conference (Regina, Saskatchewan, October 2022), and the Therapeutic Recreation Ontario Conference (Toronto, Ontario, June 2023).

Iterative Redesign of BrainTagger

The question of what form factor to use with BrainTagger was salient from the outset of the project. The first application of BrainTagger games was in screening for delirium in the emergency department (Tong et al., 2016a, 2016b; Lee et al., 2019). The original games ran as native apps on Android tablets. When we found that older players used a sliding gesture instead of tapping, we revised the software to recognize finger slides as well as pure taps. We later decided to implement the BrainTagger games as web apps so that they could run across multiple platforms. Without a native app, however, we could no longer detect different kinds of input gestures, and so we developed the button box so that older people could provide reliable inputs to the web app versions of the games.

Some people with visual disabilities wanted to have a bigger screen. Thus we experimented with a Chromebook paired with the button box so that the user could play the games by hitting the buttons, but also see the game display on the larger Chromebook screen. We have also used keyboard or button box input to a computer connected to a large display as another type of form factor. Since the button presses are output as alphabetical letters by the Arduino microprocessor controlling the button box, the button box can be connected to any computer and treated as a keyboard.
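Because the button box presents itself to the host computer as a keyboard, the game software only needs a lookup from the received letter to a screen position. The following Python sketch illustrates the idea. The letters `a` to `f`, the position indices 0 to 5, and the function names are assumptions made for illustration; the source does not specify which letters the Arduino emits or how the game maps them.

```python
from typing import Optional

# Hypothetical mapping from the letter emitted by each button to one of the
# six target positions. The actual letters used by the button box are not
# given in this article.
KEY_TO_POSITION = {"a": 0, "b": 1, "c": 2, "d": 3, "e": 4, "f": 5}


def position_for_key(key: str) -> Optional[int]:
    """Return the target position (0-5) for a key press, or None if unmapped."""
    return KEY_TO_POSITION.get(key.lower())


def is_hit(key: str, active_position: int) -> bool:
    """True when the pressed key maps to the position where a target is showing."""
    return position_for_key(key) == active_position
```

Treating the input as ordinary key events means the same lookup would serve a physical keyboard, the button box, or any other device that emits the same letters.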

Another problem noted by users is that the button box keys and the tablet screen are somewhat displaced from each other, which makes the games harder to play. This observation, along with a wish to reduce the manufacturing costs associated with the button box, has led us to create a specialized sleeve that is placed over the tablet (Figure 2) to give an all-in-one solution. This design places the buttons as close as possible to the screen.

A rendering of the tablet sleeve.

Our main reason for preferring button-based input is that the game can then be played even if a person lacks manual dexterity. It is much easier to hit a button than it is to choose the right key to press on a keyboard. For people who are blind, an auditory version of BrainTagger will be needed, but this version has yet to be developed.

Hand Strap for Button Box

To enhance comfort while using the button box during gameplay, one LTC home used duct tape and Styrofoam to create a pad on which a player’s hand could be secured while playing BrainTagger games. This solution was designed to assist residents whose hand weakness or tremors made it difficult to press buttons on the button box consistently and quickly, as required when targets appear in each BrainTagger game. Staff at this LTC home noticed that TAG-ME Where gameplay was particularly affected when residents could not keep their hand resting on the button box while waiting for the next mole to appear.

Different Game Skins

While the Whac-A-Mole games were well received in the emergency department, where they were likely seen as part of medical care, some LTC residents found the Whac-A-Mole theme “childish”. After consulting with stakeholders in care homes, we settled on a new gardening theme. Figure 3 shows the gardening version of TAG-ME Quick, where the player needs to dig up the weeds as quickly as possible. Similarly, in the gardening version of TAG-ME Only, the objective is to dig up weeds as quickly as possible without digging up the flowers that occasionally appear. We have recently started working with a third game skin/motif involving playing cards. In addition to evoking happy memories for many older adults, playing cards, with their various combinations of suits, colours, numbers, and face cards, provide a rich foundation for developing new games. We are exploring a levels-based approach to assessing cognitive abilities, in which response time and accuracy measures are supplemented with progression through levels and capacity is assessed in terms of the highest level the player is able to reach. This is somewhat analogous to the digit span task, where working memory capacity is assessed as the number of randomly presented digits that can be recalled.

In the gardening version, users dig up weeds instead of tagging moles.

Adjustable Game Speed

One of the hardest things about designing for older people is that individual differences, and disabilities, tend to increase with age. When a person is responding slowly, is she cognitively slow, or does she have a movement disability (e.g., caused by a stroke)? In order to deal with this problem we use the TAG-ME Quick game to assess movement speed and adjust the appearance time of moles up or down depending on how fast the player is responding. We can then look at the difference between response times for a cognitively complex task, and corresponding times for the simple TAG-ME Quick task to remove the movement speed effect and derive an estimate of cognitive processing time. This subtractive approach has a long history in psychology (Donders, 1868; Posner, 2008). Eventually, after sufficient gameplay data is available, we plan to use machine learning to separate out the effects of physical disabilities when measuring cognitive abilities.
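The two ideas in this section, subtracting simple-task response time from complex-task response time and adapting target appearance time to the player, can be sketched as follows. This is a minimal illustration rather than the BrainTagger implementation: the use of medians, the `margin` multiplier, and the `floor_ms` and `ceiling_ms` bounds are all assumed values chosen for the example.

```python
from statistics import median


def cognitive_time_estimate(complex_rts_ms, quick_rts_ms):
    """Subtractive estimate: median response time on a cognitively complex
    game minus median response time on the simple TAG-ME Quick game."""
    return median(complex_rts_ms) - median(quick_rts_ms)


def adjust_appearance_time(recent_rts_ms, margin=1.5, floor_ms=500, ceiling_ms=5000):
    """Set how long a target stays visible to a multiple of the player's
    recent median response time, clamped to assumed lower and upper bounds."""
    target_ms = margin * median(recent_rts_ms)
    return max(floor_ms, min(ceiling_ms, target_ms))
```

Medians are used here because response time distributions are typically skewed by occasional very slow responses; a mean or a trimmed mean would be an equally reasonable choice.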

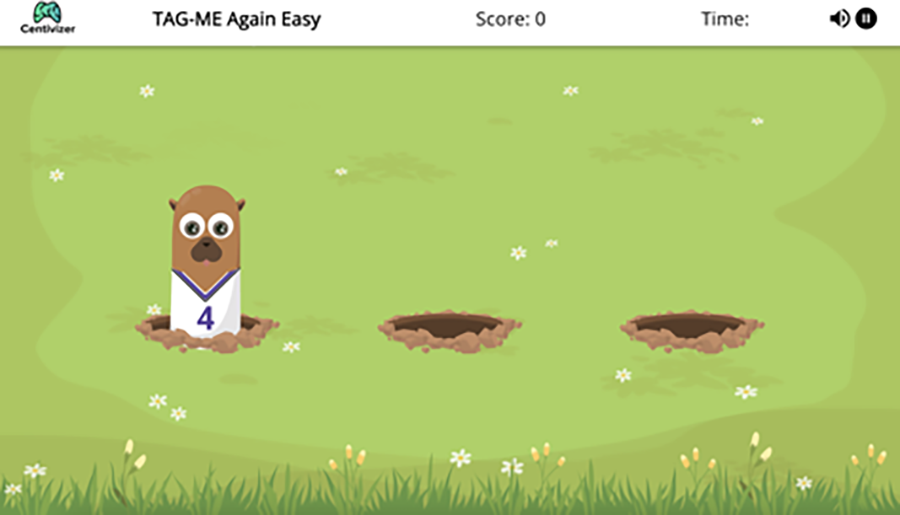

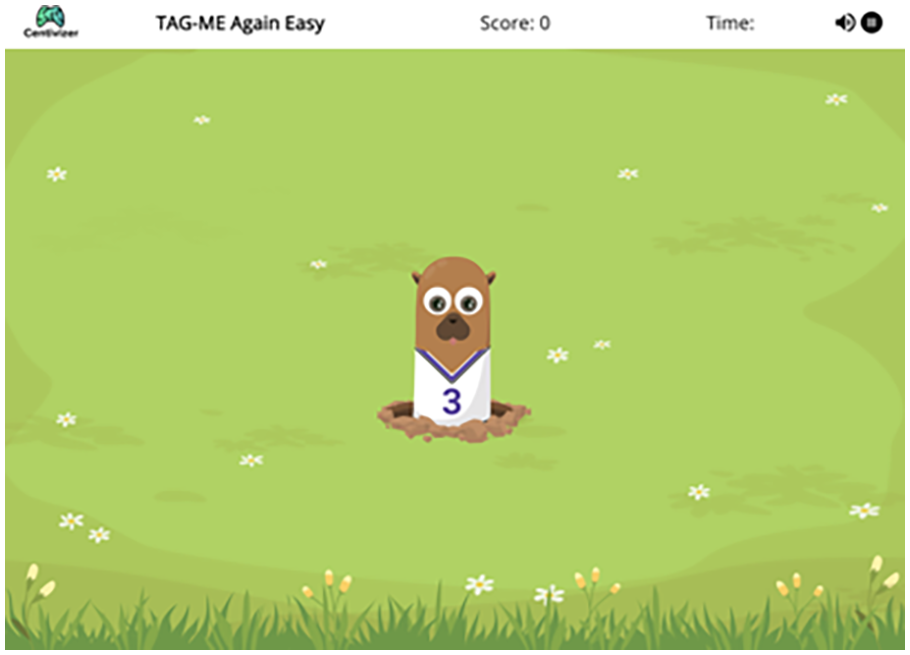

Improving Game Task Purity

Problems also arise when trying to validate CAGs scientifically. Game developers often try to validate their games by showing that game performance differs significantly between two populations (e.g., cognitively normal vs. impaired, or those with mild cognitive impairment vs. those with dementia). However, this approach is flawed, as these populations can often be distinguished just as well by non-cognitive factors such as walking speed or propensity to fall. Ideally, CAGs should be convergently validated (e.g., via statistical correlation) with psychological tasks that are known to measure the cognitive ability targeted by the game, which is the approach that we have taken in our research (Zhang & Chignell, 2020). However, care must be taken to make the game comparable to the task, and sometimes there is a tradeoff in which features designed to make a game more enjoyable introduce task impurities. For instance, if TAG-ME Again uses moles as the items to be matched in memory, and the moles pop out of multiple holes, then the game is actually a target acquisition task superimposed on an N-back (working memory updating) task. Ideally, when validating a game against an equivalent psychological task, stimulus-response mappings in the game should be equivalent to those in the psychological task. Hu et al. (2023) addressed this problem in a validation of the TAG-ME Again game by making stimulus-response mappings equivalent between the game and the N-back task, which improved correlations between TAG-ME Again and N-back task performance for the 2-back and 3-back conditions.

The TAG-ME Again gameplay interface in its original three-hole configuration.

The TAG-ME Again gameplay interface with one hole.
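Convergent validation by statistical correlation, as discussed in this section, can be illustrated with a short Python sketch. Pearson's r is used here as one common choice; the article does not state which correlation statistic was used in the cited studies, and the function and argument names are assumptions for illustration.

```python
from math import sqrt


def pearson_r(game_scores, task_scores):
    """Pearson correlation between per-player scores on a game and
    per-player scores on the reference psychological task."""
    n = len(game_scores)
    if n != len(task_scores) or n < 2:
        raise ValueError("need two equal-length samples with at least 2 players")
    mean_g = sum(game_scores) / n
    mean_t = sum(task_scores) / n
    # Sum of cross-products and sums of squares around each mean.
    cov = sum((g - mean_g) * (t - mean_t) for g, t in zip(game_scores, task_scores))
    var_g = sum((g - mean_g) ** 2 for g in game_scores)
    var_t = sum((t - mean_t) ** 2 for t in task_scores)
    if var_g == 0 or var_t == 0:
        raise ValueError("correlation is undefined when a sample has no variance")
    return cov / sqrt(var_g * var_t)
```

Each index in the two lists must refer to the same player. A high positive value would be consistent with the game and the task measuring the same construct, although in practice a significance test and an adequate sample size are also needed.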

Performance Reports

In our research with LTC homes we have found that it is very important that proposed interventions do not increase staff workload. Where some additional effort is required there needs to be a very clear value proposition justifying that effort. One example of a value proposition (paraphrased) stated by the CEO of a care setting, representing a continuum of care from independent living apartments to LTC, was the following: “I would like to have a cognitive assessment report that I can use to show families that their loved one is no longer capable of living independently, when that time comes”. Furthermore, it is important to consider the usability of interventions for family members, students, and volunteers trained to use BrainTagger in support of residents engaging with the platform.

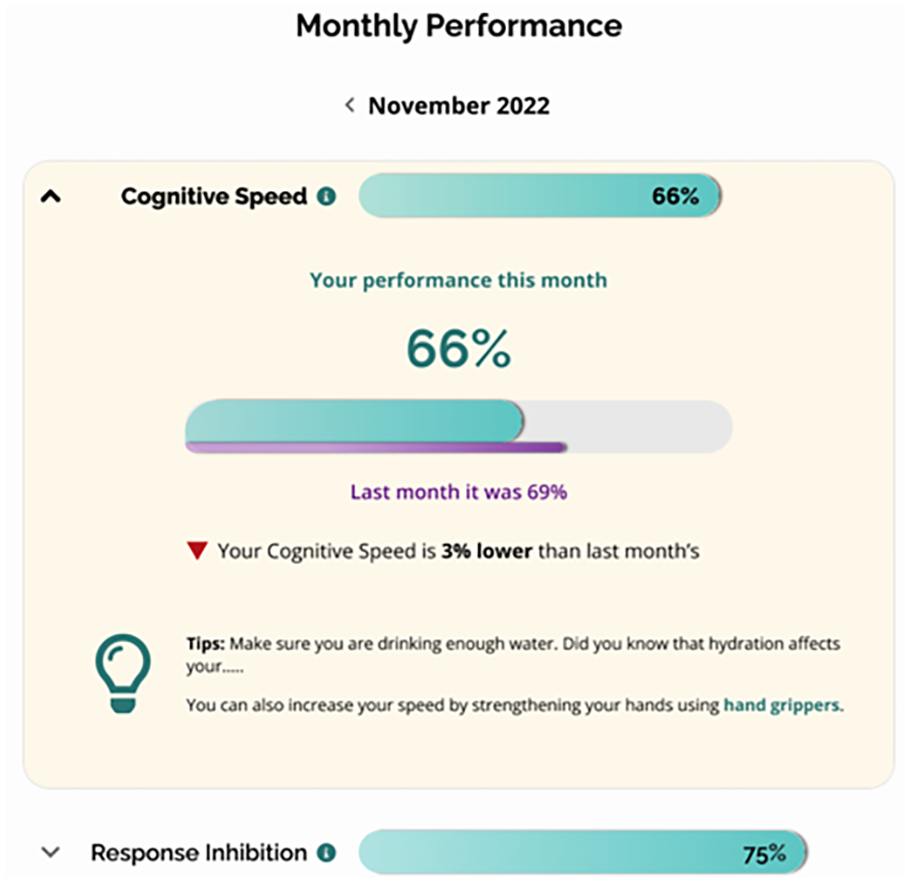

Since percentages are easy for most people to understand, we have been developing reports (e.g., Figure 6) for each cognitive ability based on percentiles representing the level of performance achieved by the player. For instance, a person at the median level of performance would receive a 50% score. Percentiles can be normed by age, whether a person has dementia, and possibly other factors.

Performance report on cognitive speed showing the percentile of the current user in the user group.
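The percentile scores used in these reports can be computed in several ways. The sketch below shows one conventional definition of percentile rank, in which ties count as half. It is an illustration under stated assumptions, not the reporting code used in BrainTagger: the function name and the `norm_scores` argument (scores from whatever norm group is chosen, e.g., players of similar age) are hypothetical.

```python
def percentile_rank(score, norm_scores):
    """Percentage of the norm group scoring below `score`, counting ties as
    half. Assumes higher scores mean better performance."""
    if not norm_scores:
        raise ValueError("norm group must contain at least one score")
    below = sum(1 for s in norm_scores if s < score)
    ties = sum(1 for s in norm_scores if s == score)
    return 100.0 * (below + 0.5 * ties) / len(norm_scores)
```

With this definition, a player whose score sits at the middle of the norm group receives 50. For measures where lower is better, such as response time, the scores would need to be negated or the comparison reversed before calling the function.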

Design Guidelines

Based on the described improvements that have been made to BrainTagger, and on general psychometric principles, we propose several design guidelines that can be applied to future endeavors in CAG design.

Design guideline 1

CAGs should be sufficiently enjoyable that target users are willing to play the game with the targeted frequency (e.g., once or twice a week).

Design guideline 2

Notwithstanding guideline 1, features of a CAG that lead to task impurity (e.g., irrelevant but amusing visual search) should be minimized.

Design guideline 3

CAGs should either represent single cognitive functions/abilities that are measurable by standard psychological tasks, or there should be a process (e.g., machine learning) that allows levels of specific cognitive abilities to be inferred from performance on a suite of games.

Design guideline 4

CAGs should minimize the effects of players’ potential physical limitations on their performance.

Design guideline 5

The form factor of CAGs should meet the requirements of the context of use (e.g., a kiosk may be most suitable for a game to be used by a number of people in a public setting).

Design guideline 6

Feedback on cognitive abilities provided in a performance report should be tailored to the needs and expectations of people reading the report (e.g., care staff, family, residents).

Design guideline 7

Design of CAGs should be principled, based on an underlying theory (e.g., target acquisition games) and with well-defined rules for mapping game skins and mechanics to the underlying theoretical task/function (e.g., a target acquisition game where distinguishing between target and distractor involves response competition).

Design guideline 8

CAGs should have multiple game skins so that a player can select the skin that matches their preferences.

Design guideline 9

CAGs should avoid linguistic and other prerequisites that artificially lower the performance for some users and thus create biased cognitive assessments.

Design guideline 10

CAGs should adapt to the player’s skill and abilities. For instance, timing of gameplay should reflect the player’s ability to respond quickly and should be adjusted to keep the game enjoyable without making it too demanding.

Conclusions

We have been developing a game-based method of cognitive assessment for over a decade. We have shown that a CAG can be used to screen for delirium in hospital emergency departments, and can also be used to assess the cognitive functioning of university students. However, the implementation of cognitive assessment in LTC settings has proven to be challenging. We have had to rethink the way in which games are presented (game skins), the way in which games adapt to user abilities, the form factors that govern how users interact with the games, and the way that game results are reported. This research has required a great deal of patience. However, the ability to track cognitive status over time, and at scale, could revolutionize how care is managed in LTC. CAGs that are designed specifically for LTC could lead to interventions that slow the relatively rapid cognitive decline that is all too often assumed to be an inevitable part of current LTC. One final note is that CAGs might also be used for cognitive training. We recommend that future work compare changes in performance on CAGs, such as BrainTagger’s TAG-ME games, with changes in performance on other tasks that assess similar cognitive functions. Presumably, if performance improves not only on the CAG but also on other tasks that target the same cognitive function, then the cognitive ability has in fact been strengthened. This would show that the game is serving both assessment and training functions, and that the improved performance is not due to game-specific learning that does not transfer to other tasks.