Abstract

Open science is challenging and frequently time-consuming work, but the payoff is greater assurance that published research is transparent, conducted rigorously, and protected against some forms of researcher bias. In this editorial, we reflect on progress made toward the integration of open-science practices at Clinical Psychological Science (CPS) 7 years after badges were introduced in the journal and 3 years after open science was initiated as an editorial priority at CPS. Along with establishing open science as an editorial priority, the first team of Open Science Advisors was established to oversee and facilitate preregistration, open materials, and open data badge applications. Here, we discuss how these practices have evolved over time, highlight best practices and common challenges in this work, and emphasize next steps for the future of open science in clinical-psychology research.

Clinical psychology has long been a fringe participant in the open-science movement, content to allow the concerns of social and cognitive psychology to shape standards for practices such as sharing data, materials, and preregistration. As a result, clinical psychology has been slow to adopt and implement open-science practices, limiting opportunities to realize their many benefits (see, e.g., Burke et al., 2021; Carpenter & Law, 2021; Dora et al., 2023; Kirtley et al., 2022; Tackett, Brandes, King, & Markon, 2019; Tackett, Brandes, & Reardon, 2019; Tackett et al., 2017). Open-science practices contribute to reproducibility and replicability of research and mitigate biases (Munafò et al., 2017), which are fundamental to building a cumulative science of clinical psychology. Their use should increase transparency, which, in turn, facilitates the evaluation and recognition of studies’ credibility (Vazire, 2019). Psychologists involved in clinical trials were acutely aware of the problems of publication bias and the need for trial registration years before the contemporary replication crisis (Dickersin & Rennie, 2003), and rightly so: The stakes for clinical-psychological research are high, with topics and results poised to advance the understanding of psychopathology and its amelioration (Carpenter & Law, 2021; Kirtley et al., 2022; Tackett et al., 2017). Research that lacks methodological rigor and transparency is costly for funders, researchers, policymakers, and ultimately, people at risk of and affected by mental-health problems, all of whom are depending on research to deliver societal and scientific value (Schleider, 2022; Schneider et al., 2022). Rigorous clinical-psychological science is therefore essential for good clinical practice (McFall, 1991).

In this editorial, we reflect on progress toward the integration of open-science practices at Clinical Psychological Science (CPS) and discuss advantages, challenges, and next steps for the future of open science in clinical psychology. We discuss in detail the new Open Science Advisor (OSA) role implemented at CPS in 2021, reporting on the nature of the role, our experiences about how things have evolved, and lessons learned after 3 years of overseeing and facilitating preregistration, open materials, and open-data inclusions at CPS. We conclude with suggestions for continued open-science improvement in clinical-psychology research.

Timeline of Open-Science Implementation at CPS

Researchers, academic publishers, policymakers, and funding agencies are increasingly investing in open-science practices, such as preregistering study designs and methods, publicly sharing data and materials, and publishing replication studies (see, e.g., The White House, 2023). Guidelines for transparency and openness promotion (TOP) were released in 2015 (Nosek et al., 2015), and CPS, along with the other journals in the Association for Psychological Science portfolio, signed on to TOP Level II guidelines in 2022. Compared with other subdisciplines in psychology, clinical science has been slow to recognize open science, and adoption has been limited (Tackett, Brandes, King, & Markon, 2019). Initially, this contrast was a research question in its own right: Was there a substantive reason why clinical psychologists were slow to adopt open-science practices? Working with hard-to-reach populations, collecting multiyear and resource-intensive data, and managing sensitive interview data are barriers that make it practically difficult and professionally costly to embrace open science (Tackett et al., 2017). The current and previous editors-in-chief of CPS, with a larger author team of clinical scientists, prepared a call meant to serve two functions: (a) persuade clinical scientists that they should be part of the open-science conversation and (b) persuade open-science advocates that conceptualization of the problems and creation of solutions were inadequate if all areas of psychological science were not considered (Tackett et al., 2017).

Following this call to action, CPS began offering open-science badges to articles submitted after July 1, 2016 (Lilienfeld, 2017), emulating Psychological Science, the first field-wide journal to introduce a badge system (Eich, 2014) to incentivize authors to use open-science practices in their research. Authors can request badges for preregistration, open data, or open materials, and badge icons appear on the title page of articles that earn them. Open-science practices and principles were thus encouraged but not required for article submission.

In January 2021, Tackett established open-science practices and principles as a primary priority area for submissions to CPS under the new editorial team (Tackett, 2020). Expanding on the infrastructure built by Eich, Lindsay, Lilienfeld, and others, one consideration was how to systematically operationalize this priority area while still being flexible and encouraging of clinical scientists who may be new to these methods and approaches. Another editorial consideration was how to increase open-science expectations and adjudication without further burdening the editorial team beyond their standard responsibilities. Changes to the CPS submission portal drew authors’ attention to the potential gatekeeping function of open-science priorities while also creating space for authors to explain their own implementation of open-science principles and practices. TOP Level II requirements, implemented across the journal in 2022, obligated submitting authors to either include open data, materials, and preregistration or to actively disclose which practices were not implemented in their research. CPS continued to encourage authors to apply to receive badges for their accepted articles that acknowledge open-science efforts.

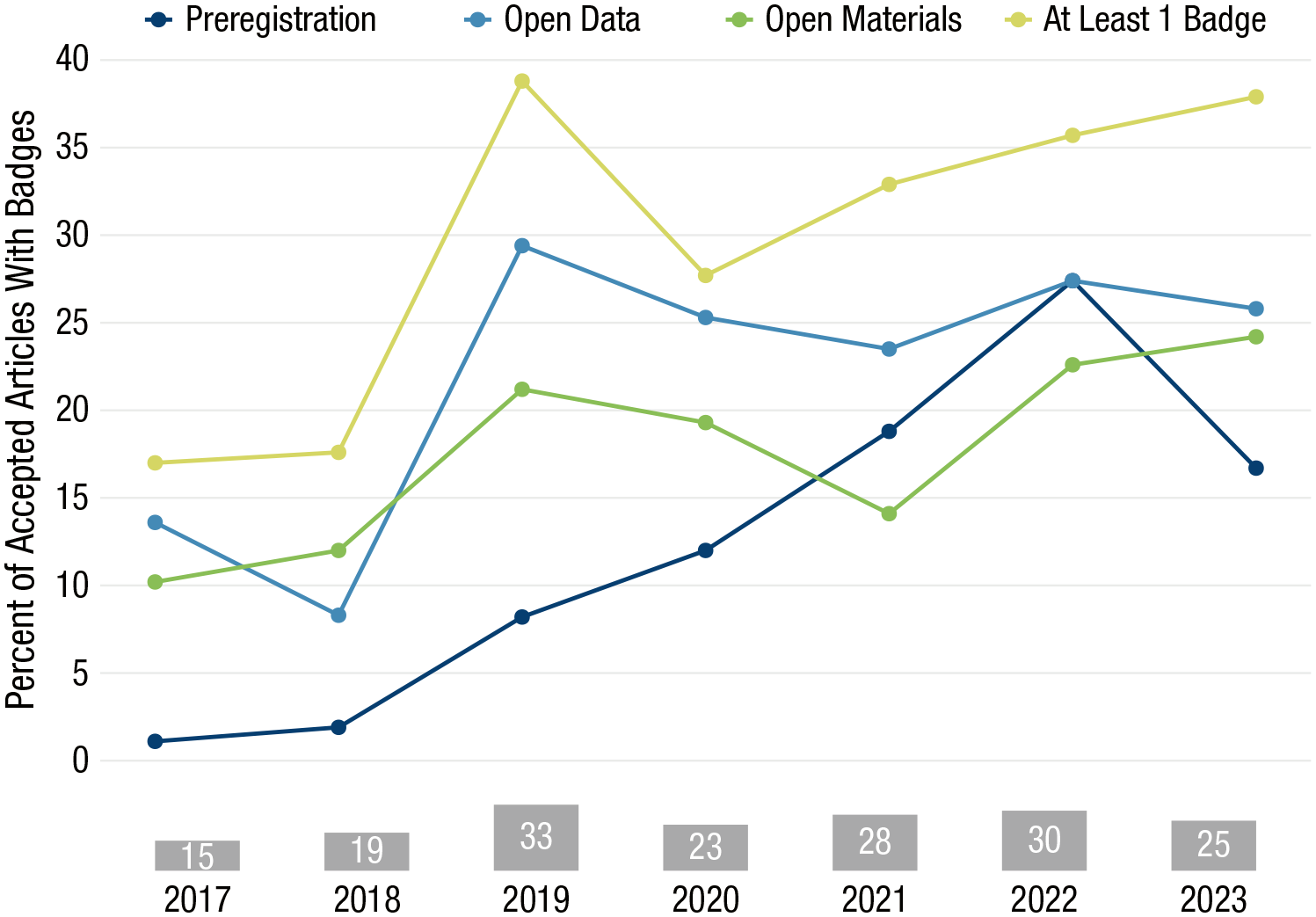

Figure 1 shows how badge uptake at CPS has changed since 2017, when badges were first introduced. In 2023, 38% of accepted articles were awarded at least one badge. Although badges were conceived as a tool to incentivize authors to engage in open science, they do not guarantee high-quality scientific work. Mounting evidence suggests that badges are becoming their own end goal as signals of credibility, making them subject to the same kinds of questionable research practices behind the replication crisis (Klonsky, 2024). In the April 2019 issue of Psychological Science, just one of the 14 articles awarded an Open Data badge was fully reproducible, and six were mostly or not at all reproducible (Crüwell et al., 2023).

Percentage of accepted articles published in Clinical Psychological Science between 2017 and 2023 that received open-science badges. Gray bars at the bottom show numbers of articles that earned badges each year. Badges awarded in 2021 to 2023 inclusive were subject to structured Open Science Advisor badge adjudication.

Earlier studies similarly found that a minority of articles were fully reproducible (Hardwicke, Thibault, et al., 2021; Obels et al., 2020). Articles with badges are perceived as more trustworthy than articles without badges (Schneider et al., 2022), potentially attaching unearned credibility to articles whose badges were awarded without verification. It is unclear how standards for badge adjudication were applied at CPS before 2021, which left room for variability across handling editors. Clear and consistent standards for adjudication are necessary to properly evaluate adherence, particularly given evidence that without such systems, many of these practices remain unchecked even when reported by authors (Syed, 2024). January 2021 saw the launch of an OSA team tasked with implementing badge-adjudication standards.

Innovating a New OSA Team as Part of the Editorial Board

Editorial teams are universally underresourced and overburdened (Severin & Chataway, 2021). Implementation and adjudication of open-science practices add an additional layer of burden for authors, reviewers, and editors (e.g., Romero, 2018). With the goal of increasing the capacity of the journal to support open-science practices, a new type of editorial role was created at CPS: the OSA. Monitoring and evaluating open-science practices in accepted articles became the task of the newly created team of OSAs beginning in 2021. Three OSAs began their terms with the new editorial team at that time, with the mission to engage in metawork with the editor and publisher to conceptualize the OSA role, develop and test workflows to support open-science engagement, and work with the journal to verify open-science practices and adjudicate badge applications. This model was later adopted at Psychological Science in 2023, serving as a precursor to the expanded system of checks on all articles that will be undertaken by the incoming editorial team (Hardwicke & Vazire, 2023). At CPS, the OSAs helped the editor and publisher craft a workflow for communicating the importance of open-science practices to submitting authors. We meet and collaborate to guide each other in determining whether a particular manuscript meets or can meet criteria to earn a badge or badges. Through 2023, the OSAs (past and present) adjudicated 89 badge applications.

Lessons Learned From Adjudicating Open-Science Practices in CPS Articles

OSAs at CPS perform a light-duty verification of every article requesting badges. Each accepted article requesting at least one open-science badge is assigned to one member on the OSA team, who then attempts to verify the existence of data or materials and verifies that any preregistration document reflects the work described in the article. Under TOP Level II guidelines, data and materials are not verified for reproducibility, although these are often spot-checked at the discretion of the assigned OSA to help catch overt discrepancies. In nearly all cases, the OSA elects to follow up with the corresponding author for clarification or to request changes before awarding a badge. In our experience, the biggest challenge in implementing badge adjudication is navigating each author’s unique interpretations of the criteria for receiving a badge. The challenges regularly encountered in this process underscore the importance of a systematic and transparent adjudication process for badges to have meaning. In the following sections, we provide background to open-science practices for which we offer badges and highlight common problems that arise during adjudication.

Preregistration: Deviations are common but require transparent documentation

Preregistration is uniquely challenging for clinical scientists and others who routinely collect large or longitudinal data sets meant to inform multiple publications. Some researchers worry that preregistration is not possible if the data are already collected, but guidelines for preregistering secondary data-analysis plans exist (Kirtley et al., 2021; van den Akker et al., 2021), and many teams are choosing to adopt this approach (e.g., Samimy et al., 2022; Weick et al., 2022). Even when preregistration is possible, researchers worry that a preregistration locks them into a specific analysis plan. On the contrary, deviating from preregistrations is common (Claesen et al., 2021; Willroth & Atherton, 2024), and all the authors of this editorial have deviated from preregistrations in their own work (e.g., Howard et al., 2022; Janssens et al., 2023; Line & Neal, 2020; Shields et al., 2024; Wolfe et al., 2023). In fact, 42% of the submissions to CPS that applied for a preregistration badge in the first 5 years of badge availability endorsed deviations from their preregistration.

Preregistration obligates the research team to transparently document their changes, in either a dedicated “Transparency and Openness” section, elsewhere in the main text, or a footnote, as appropriate (Lakens, 2023; Willroth & Atherton, 2024). The authors of one recent article in CPS (Fuhrmann et al., 2022) nicely documented deviations from their preregistration. These authors used an unplanned data transformation, made changes in model specifications, and used a different modeling technique than planned. By reporting these deviations transparently, the authors gave readers the information they need to evaluate and potentially build on their hard work.

Although many templates exist to facilitate preregistration, it is ultimately up to each research team to decide what content to include in a preregistration of their study and how to make it publicly available. At CPS, authors occasionally request a preregistration badge when they have posted a link to a protocol paper, grant application, ClinicalTrials.gov or PROSPERO registration (occasionally, these documents will suffice), or document explaining general procedures for a multistudy program of research. Such documents typically lack the specificity required to account for study design and analytic decision-making in a single manuscript. Sometimes, an appropriate, study-specific registration document exists, but the authors failed to create a time-stamped, immutable, publicly available copy. Often, the information reported in the preregistration does not match what appears in the final article (e.g., sample-size discrepancies, undisclosed deviations from the protocol, failure to acknowledge that supplemental or exploratory analyses were not preregistered). In studies involving complex analyses of large or longitudinal data sets, authors often fail to document their analysis plan in sufficient detail, exposing studies to considerable decision-making subjectivity (for suggested strategies, see Kirtley, 2022). Our experiences suggest that in the absence of standard and systematic adjudication (e.g., Syed, 2024), many reported preregistrations may not meet basic thresholds for transparent and open practices.

Open data: Share what you can

Open data might be the most intractable barrier for clinical researchers and is a less common practice than open materials or open code at CPS. In many cases, clinical-psychological-science data are highly sensitive and subject to external ethical or confidentiality agreements. As of January 2023, however, research funded by the National Institutes of Health is subject to its data management and sharing policy mandating the dissemination of sufficient data to reproduce the findings reported in a publication (National Institutes of Health, 2023). For cases in which confidentiality is a concern, HIPAA-compliant guidance on de-identifying data is extensive (Walsh et al., 2018). Data can also be shared via secure databases when necessary; open data need not be fully unrestricted (Joel et al., 2018). A more personal concern is that once the data are shared, someone else might publish with them before you get the chance (on perceived barriers to data sharing, see Houtkoop et al., 2018). Fortunately, “open data” does not have to mean “share everything you collected,” at least not before key work on the project is complete. For an open-data badge at CPS, authors need make accessible only the data and code required to reproduce analyses reported in their manuscripts.

Our experience reviewing articles for open-data badges aligns with findings from studies whose authors had difficulty reproducing results in published articles that had been awarded badges (Crüwell et al., 2023; Hardwicke, Bohn, et al., 2021). Authors sometimes include only part of the data needed to reproduce their work or provide data with file names that do not correspond to the file names referenced in their analysis code. In contrast, authors of multistudy articles or articles with complex analyses sometimes provide dozens of files with no documentation indicating how to navigate the project and match data to subsections of the article; documentation and code are often not produced with readers unfamiliar with the project in mind. During badge adjudication, members of the OSA team routinely ask authors to add files, documents, and instructions to their online repositories to enhance the reproducibility of their work.
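One of the mismatches described above, analysis code that refers to data files absent from the shared repository, can be screened for before submission. The following is a minimal Python sketch and not a tool used in CPS badge adjudication. The function names, the list of data-file extensions, and the list of script suffixes are all hypothetical choices for illustration. It only detects file names that appear as quoted strings in the code, so it complements rather than replaces rerunning the analysis from a clean copy of the repository.

```python
import re
from pathlib import Path

# Hypothetical list of data-file extensions to look for in analysis code.
DATA_EXTENSIONS = ("csv", "tsv", "sav", "dta", "rds", "xlsx")

# Matches quoted strings that end in one of the extensions, e.g. "data/wave1.csv".
_PATTERN = re.compile(
    r"""["']([^"']+\.(?:%s))["']""" % "|".join(DATA_EXTENSIONS),
    re.IGNORECASE,
)


def referenced_data_files(code_text):
    """Return the base names of data files mentioned in quoted strings."""
    matches = _PATTERN.findall(code_text)
    # Normalize Windows-style separators before taking the base name.
    return {Path(m.replace("\\", "/")).name for m in matches}


def missing_data_files(repo_dir, code_suffixes=(".r", ".py", ".sps", ".do")):
    """Map each analysis script to the referenced data files not found in repo_dir."""
    root = Path(repo_dir)
    # Compare names case-insensitively, because file systems differ on this point.
    present = {p.name.lower() for p in root.rglob("*") if p.is_file()}
    report = {}
    for script in sorted(root.rglob("*")):
        if script.is_file() and script.suffix.lower() in code_suffixes:
            text = script.read_text(encoding="utf-8", errors="replace")
            missing = sorted(
                name for name in referenced_data_files(text)
                if name.lower() not in present
            )
            if missing:
                report[str(script.relative_to(root))] = missing
    return report
```

Calling `missing_data_files("path/to/repository")` returns an empty dictionary when every quoted data file name is present somewhere in the folder. Any entries it does return point to files that need to be added or renamed.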

Open materials: Accessible entry-level practices

Instructions to participants, sample stimuli, equipment technical details, and survey questions are easily stored in an online repository such as OSF (Kathawalla et al., 2021). In cases in which survey instruments are protected by copyright or studies involve diagnostic assessments by trained professionals, the research team can still share details such as how to purchase the copyrighted instrument or links to diagnostic manuals and information about required training (e.g., www.scid5.org/training). In short, researchers should share what they can and explain what they cannot.

Although typically adjudicated as part of an Open Data badge, open code (with or without data) is easy to share, and barriers to implementing this practice are largely self-imposed. Researchers who conduct all their analyses using drop-down menus without a written record of the steps involved face the biggest learning curve, but most commercial software allows users to create text-based syntax files (and SPSS analyses performed through the graphical user interface can be “pasted” to syntax). Researchers may be hesitant to share their code because it is messy or inelegant or might contain errors. The idea of other researchers finding mistakes is unsettling, and such discoveries may even lead to article retractions. The good news is that learning of one’s mistakes makes it possible to correct them, and what matters at the end of the day is the integrity of the scientific record. Respectfully, we encourage our colleagues to set pride aside and be more like a vacuum cleaner. We applaud the commitment of scholars such as Julia Strand (2020), who found and disclosed a major error that could have had career-damaging impact and went on to receive a commendation from the Society for the Improvement of Psychological Science for her efforts.

When badge reviews are unsuccessful

Although the open-science movement has grown to a point at which scientists and funding agencies are broadly aware of its advantages, most practices remain optional and are not formally attached to any career-advancing incentives. We believe that authors requesting badges disproportionately represent research teams who believe in open-science values and are proactive about incorporating these practices into their work. Yet even in this group of early adopters, we see problems that lead us to worry about the state of the remaining non-badge-seeking literature. Although most authors comply with our requests for clarification, changes, or additional documentation, sometimes the changes requested are substantial enough that the authors elect not to proceed with a badge because of time and resource barriers. One author withdrew a request for an open-data badge after an OSA conducted a spot-check of analysis code and brought to the author’s attention problems related to data formatting and software versioning that prevented others from reproducing the work. In another case, an author withdrew a request for a preregistration badge after an OSA noted several discrepancies between the registration and accepted article and asked the author to transparently document how the study deviated from the registration. In these and other cases, articles proceeded to publication as is despite documented (but undisclosed) transparency and reproducibility shortcomings. Those of us concerned about clinical psychology’s sparser involvement in open science were right to be worried.

Suggestions for Continued Open-Science Improvement in Clinical Psychology

On balance, we believe the issues we have come across to date reflect growing pains in clinical psychology’s adoption of open-science practices and not fatal flaws in how the field conducts its research. Although there is a risk that badges as open-science tools may be corrupted to serve as “aesthetic markers of strong science” (Klonsky, 2024), we see the steady pace of badge requests at CPS as an encouraging sign of continuing interest in open-science implementation. The additional layer of arm’s-length scrutiny introduced by OSA adjudication strengthens the credibility of the badge-bearing published research at CPS, and most authors work with us to meet badge criteria when discrepancies or omissions are pointed out and corrections requested. As of 2024, Psychological Science performs checks on all accepted articles (Hardwicke & Vazire, 2023), a move by a flagship journal that signals broader discipline-wide readiness to mandate transparent and open reporting in peer-reviewed research. To further stimulate the interest and intent of clinical scientists to engage in open-science practices, we summarize some forward-looking suggestions for the field.

Better planned work with secondary data sets

Preregistration can help to manage researchers’ expectations about what is possible with sometimes limited clinical data sets. Sample-size justifications, anticipated effect sizes, and precision in estimates are critical issues for clinical science that are harder to neglect when preparing a public-facing data-analysis plan. Advance planning forces researchers to think deeply and carefully about the decisions they are making in a study and why they are making them. Such work can also help avoid costly mistakes in data management (Kovacs et al., 2021) that are harder to miss when data are being prepared with other scientists’ later use in mind (Perkel, 2023). Broadening conceptualizations of preregistration to include registration following data collection but before data access/analysis (Benning et al., 2019) and the use of data checkout systems (Kirtley, 2022; Scott & Kline, 2019), specialist registration templates (van den Akker et al., 2021), and robustness checks (Weston et al., 2019) open up exciting opportunities to practice open science in studies using preexisting data.
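As one illustration of the sample-size justification mentioned above, the sketch below computes an approximate per-group sample size for a two-sided comparison of two independent group means. The editorial does not prescribe any particular method. The function name, the choice of a standardized mean difference `d` as the effect size, and the default values for `alpha` and `power` are assumptions made for this example. The calculation uses the normal approximation n = 2((z_{1-alpha/2} + z_{power}) / d)^2, which gives slightly smaller values than an exact calculation based on the t distribution.

```python
from math import ceil
from statistics import NormalDist


def n_per_group(d, alpha=0.05, power=0.80):
    """Approximate n per group for a two-sided, two-sample comparison of means.

    d is the anticipated standardized mean difference (an assumption the
    research team must justify); alpha and power are conventional defaults.
    """
    if d == 0:
        raise ValueError("effect size d must be nonzero")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for the test
    z_power = NormalDist().inv_cdf(power)          # quantile for the target power
    return ceil(2 * ((z_alpha + z_power) / d) ** 2)
```

With the defaults, `n_per_group(0.5)` returns 63 participants per group. Because halving the anticipated effect size roughly quadruples the required sample, the assumed effect size is worth stating and justifying in the preregistration itself.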

Overcome open science barriers one step at a time

Open science takes time and effort, an investment that may not be offset by creating savings elsewhere in the research workflow (Hostler, 2023). Because work hours are finite, the time and effort put toward open science necessarily reduces the time and effort available for other research, teaching, and clinical pursuits. However, open-science practices are not all or nothing. Researchers can each decide which practices to adopt and when. If you are new to open science, consider choosing a project for which it is practical to make the data open or to share materials and focus on that goal. Sharing comprehensive analysis code, even without accompanying data, is an excellent entry-level step that most researchers should be able to take (as of 2024, analysis code is required of articles submitted to the Journal of Psychopathology and Clinical Science; Wright, 2024; although our experiences suggest that code without stringent adjudication practices can be of limited utility).

Publish null results

When rigorous study methods and transparent reporting receive higher priority than the novelty of a study’s results, barriers to publication ease, and there are fewer undue incentives to engage in the kinds of questionable research practices that ensure a study “works out.” This is most demonstrably true for Registered Reports: studies whose methodology is peer reviewed in advance and for which conditional acceptance decisions are made before the results are known (Chambers & Tzavella, 2022). Although the current editorial team has been open to and encouraging of Registered Reports submissions at the journal, CPS receives very few. Those that are submitted are further affected by the lack of familiarity in the field with this mechanism, which can make identification of well-versed reviewers challenging. We view this as an open-science practice lagging even further behind in the field and an area ripe for opportunity. Whether submitted as a Registered Report or a regular article, null results from high-quality studies that preregister study designs and analyses, share data, and transparently document the research workflow are valuable, and CPS encourages these contributions (e.g., Gallyer et al., 2023; Rogers et al., 2024; Vuorre et al., 2021).

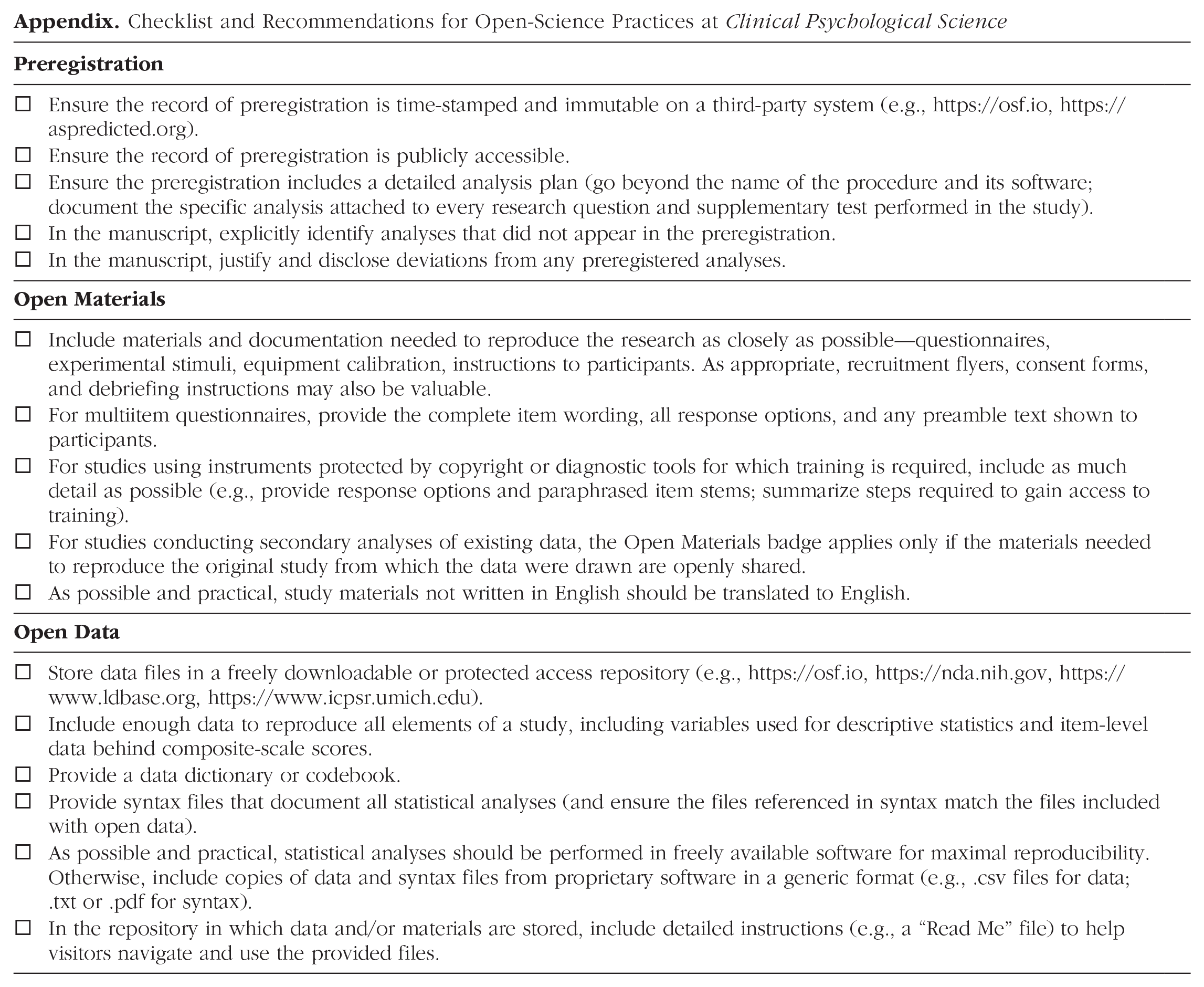

Ultimately, we believe the time and effort put into open science is worthwhile and increasingly necessary. Open-science practices support our goal as clinical-psychological scientists to advance understanding of psychopathology in ways that promote well-being and alleviate suffering. In the Appendix to this editorial, readers can find a checklist of steps to maximize transparency and openness in their work that will also ensure a seamless badge-review process at CPS.

Conclusion and Next Steps

Our hope is that the work involved in conceptualizing a role for OSAs at CPS and sharing our collective experiences spanning the past 3 years will reduce hesitation in clinical researchers’ efforts to be more open and transparent in their research practices. The OSA team at CPS are scholars familiar with open-science practices and clinical-psychological research; they are responsible for all badge adjudication and facilitate ongoing conversations about implementing openness and transparency at the journal. One of the primary lessons we have learned is that transparency is paramount: Strict adherence to a preregistered plan is less valuable (and realistic) than transparency through the entire research workflow. We understand how difficult it is to anticipate everything you will need to know to create ironclad plans, especially for the complex study designs and analyses that we routinely see in clinical science. The trade-off is that researchers must be prepared to disclose their decision-making in manuscripts and supplemental materials.

As open-science enthusiasts, we see the benefits of transparency as outweighing its associated costs, but we are also attuned to the need to manage weaknesses in the badge system that may incentivize “openwashing” (Helmstädt, 2017) and related practices to achieve unearned scientific credibility. For the majority of clinical researchers interested in good-faith efforts to increase the openness of their work, we see tremendous value in creating lab standard operating procedures that can be adapted to serve as preregistration-type templates describing the kinds of procedures and measures a given lab uses with regularity. Such standards aid in open-science practices and also mitigate error in workflow (see, e.g., Strand, 2023). We look forward to continuing to learn from our peers and colleagues about innovative ideas for improving our science.

Appendix

Checklist and Recommendations for Open-Science Practices at Clinical Psychological Science

| Preregistration |
|---|
| □ Ensure the record of preregistration is time-stamped and immutable on a third-party system (e.g., https://osf.io, https://aspredicted.org). |
| □ Ensure the record of preregistration is publicly accessible. |
| □ Ensure the preregistration includes a detailed analysis plan (go beyond the name of the procedure and its software; document the specific analysis attached to every research question and supplementary test performed in the study). |
| □ In the manuscript, explicitly identify analyses that did not appear in the preregistration. |
| □ In the manuscript, justify and disclose deviations from any preregistered analyses. |

| Open Materials |
|---|
| □ Include materials and documentation needed to reproduce the research as closely as possible: questionnaires, experimental stimuli, equipment calibration, instructions to participants. As appropriate, recruitment flyers, consent forms, and debriefing instructions may also be valuable. |
| □ For multiitem questionnaires, provide the complete item wording, all response options, and any preamble text shown to participants. |
| □ For studies using instruments protected by copyright or diagnostic tools for which training is required, include as much detail as possible (e.g., provide response options and paraphrased item stems; summarize steps required to gain access to training). |
| □ For studies conducting secondary analyses of existing data, the Open Materials badge applies only if the materials needed to reproduce the original study from which the data were drawn are openly shared. |
| □ As possible and practical, study materials not written in English should be translated to English. |

| Open Data |
|---|
| □ Store data files in a freely downloadable or protected access repository (e.g., https://osf.io, https://nda.nih.gov, https://www.ldbase.org, https://www.icpsr.umich.edu). |
| □ Include enough data to reproduce all elements of a study, including variables used for descriptive statistics and item-level data behind composite-scale scores. |
| □ Provide a data dictionary or codebook. |
| □ Provide syntax files that document all statistical analyses (and ensure the files referenced in syntax match the files included with open data). |
| □ As possible and practical, statistical analyses should be performed in freely available software for maximal reproducibility. Otherwise, include copies of data and syntax files from proprietary software in a generic format (e.g., .csv files for data; .txt or .pdf for syntax). |
| □ In the repository in which data and/or materials are stored, include detailed instructions (e.g., a “Read Me” file) to help visitors navigate and use the provided files. |
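For the checklist item on providing a data dictionary or codebook, a skeleton can be generated from the shared data file and then completed by hand. The Python sketch below is one possible starting point, assuming the data are stored as a .csv file with variable names in the first row. The function name and the output column names are hypothetical, and CPS does not require any particular codebook format. Because the `example_values` column copies observed values from the data, it should be reviewed, and removed if necessary, before a codebook for sensitive data is shared.

```python
import csv


def codebook_skeleton(data_csv, out_csv, max_examples=5):
    """Write one codebook row per variable in data_csv and return the rows."""
    with open(data_csv, newline="", encoding="utf-8") as f:
        reader = csv.DictReader(f)
        variables = reader.fieldnames or []
        values = {name: [] for name in variables}
        n_rows = 0
        for row in reader:
            n_rows += 1
            for name in variables:
                # Short rows yield None for absent fields; treat those as empty.
                values[name].append((row.get(name) or "").strip())

    rows = []
    for name in variables:
        observed = [v for v in values[name] if v != ""]
        distinct = sorted(set(observed))
        rows.append({
            "variable": name,
            "n_missing": n_rows - len(observed),  # empty cells counted as missing
            "n_distinct": len(distinct),
            "example_values": "; ".join(distinct[:max_examples]),
            "description": "",      # to be written by the research team
            "units_or_coding": "",  # e.g., response options, reverse scoring
        })

    fieldnames = list(rows[0].keys()) if rows else ["variable"]
    with open(out_csv, "w", newline="", encoding="utf-8") as f:
        writer = csv.DictWriter(f, fieldnames=fieldnames)
        writer.writeheader()
        writer.writerows(rows)
    return rows
```

The two blank columns are the part that matters to a reader unfamiliar with the project. The script only guarantees that no variable in the shared file is left out of the codebook.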

Acknowledgements

We are grateful to David Sbarra and Simine Vazire for their comments on an earlier version of this manuscript. Amy Drew and Becca White at APS were instrumental in helping to establish the Open Science Advisor role and positions at CPS and to set up the OSA workflow and communication.

Transparency

Author Contributions